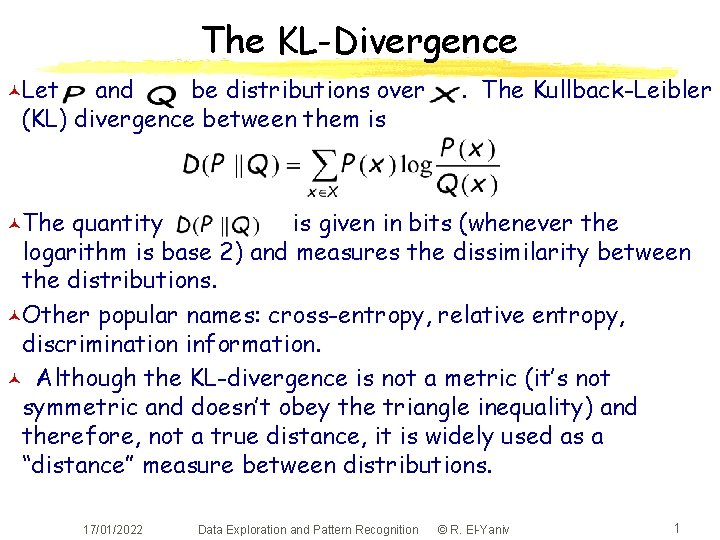

The KL-Divergence

- Let $p$ and $q$ be distributions over $\mathcal{X}$. The Kullback-Leibler (KL) divergence between them is

  $$D(p \,\|\, q) = \sum_{x \in \mathcal{X}} p(x) \log \frac{p(x)}{q(x)}.$$

- The quantity is given in bits (whenever the logarithm is base 2) and measures the dissimilarity between the distributions.
- Other popular names: relative entropy, discrimination information and, in some texts, cross-entropy (a term that now more often denotes $H(p) + D(p \,\|\, q)$).
- Although the KL-divergence is not a metric (it is not symmetric and does not obey the triangle inequality), and is therefore not a true distance, it is widely used as a “distance” measure between distributions.

17/01/2022 Data Exploration and Pattern Recognition © R. El-Yaniv
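As a minimal sketch of the definition, the snippet below computes the divergence in bits for two discrete distributions given as lists. The function name `kl_divergence` and the example distributions are illustrative assumptions, not part of the slides; the conventions $0 \log 0 = 0$ and $D = \infty$ when $q(x) = 0 < p(x)$ are the usual ones.

```python
import math


def kl_divergence(p, q, base=2.0):
    """D(p || q) for discrete distributions given as equal-length sequences."""
    if len(p) != len(q):
        raise ValueError("p and q must be defined over the same outcomes")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # convention: 0 * log(0 / q) = 0
        if qi == 0.0:
            return math.inf  # p puts mass on an outcome that q rules out
        total += pi * math.log(pi / qi, base)
    return total


# Illustrative (made-up) distributions over two outcomes.
p = [0.5, 0.5]
q = [0.9, 0.1]
d_pq = kl_divergence(p, q)
d_qp = kl_divergence(q, p)
print(f"D(p||q) = {d_pq:.4f} bits, D(q||p) = {d_qp:.4f} bits")
```

The two printed values differ, which illustrates the lack of symmetry noted above.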

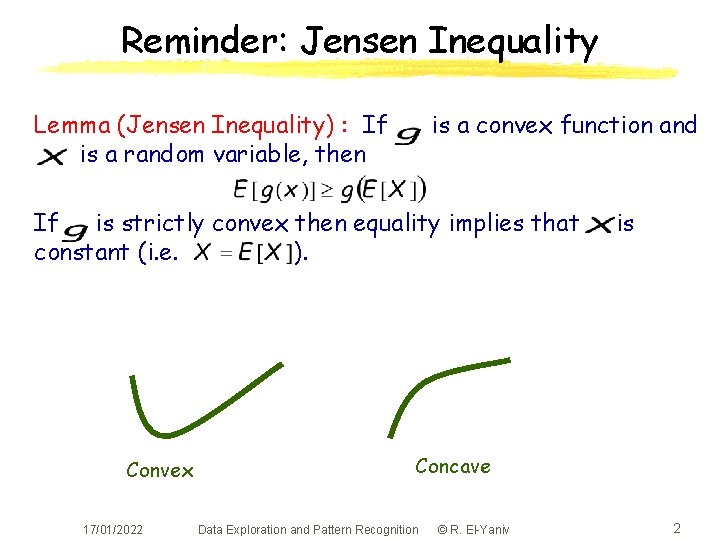

Reminder: Jensen Inequality

Lemma (Jensen Inequality): If $f$ is a convex function and $X$ is a random variable, then

$$E[f(X)] \ge f(E[X]).$$

If $f$ is strictly convex, then equality implies that $X$ is constant (i.e. $X = E[X]$ with probability 1).

For a concave function (for example $\log$) the inequality is reversed.
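A quick numerical check of the inequality, as a sketch only: the discrete distribution below is made up, $f(x) = x^2$ stands in for a convex function and $\log$ for a concave one.

```python
import math


def expectation(f, values, probs):
    """E[f(X)] for a discrete random variable X."""
    return sum(p * f(x) for x, p in zip(values, probs))


# Made-up discrete random variable.
values = [1.0, 2.0, 4.0, 8.0]
probs = [0.1, 0.2, 0.3, 0.4]

mean = expectation(lambda x: x, values, probs)

# Convex f(x) = x^2: E[f(X)] >= f(E[X]).
convex_lhs = expectation(lambda x: x * x, values, probs)
convex_rhs = mean * mean

# Concave log: the inequality is reversed, E[log X] <= log E[X].
concave_lhs = expectation(math.log, values, probs)
concave_rhs = math.log(mean)

print(convex_lhs >= convex_rhs, concave_lhs <= concave_rhs)
```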

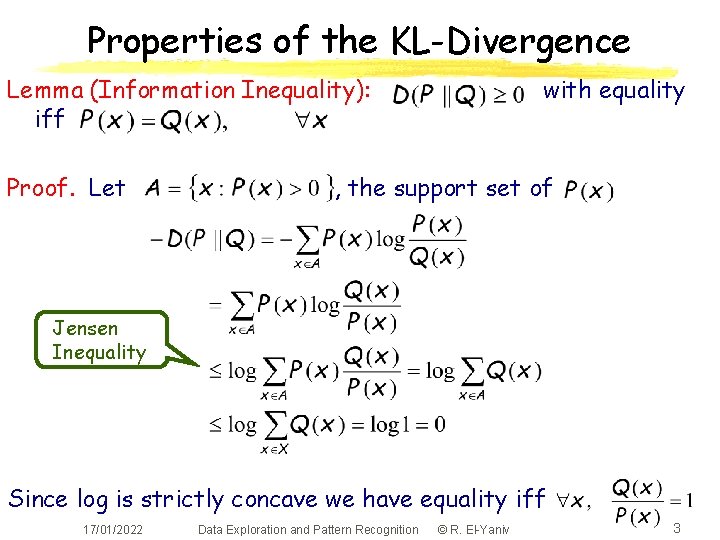

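Properties of the KL-Divergence

Lemma (Information Inequality): $D(p \,\|\, q) \ge 0$, with equality iff $p = q$.

Proof. Let $A = \{x : p(x) > 0\}$ be the support set of $p$. Then

$$-D(p \,\|\, q) = \sum_{x \in A} p(x) \log \frac{q(x)}{p(x)} \le \log \sum_{x \in A} p(x) \frac{q(x)}{p(x)} = \log \sum_{x \in A} q(x) \le \log 1 = 0,$$

where the first inequality is the Jensen Inequality applied to the concave function $\log$. Since $\log$ is strictly concave, we have equality iff $q(x)/p(x)$ is constant on $A$ and $\sum_{x \in A} q(x) = 1$; that is, iff $p = q$.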

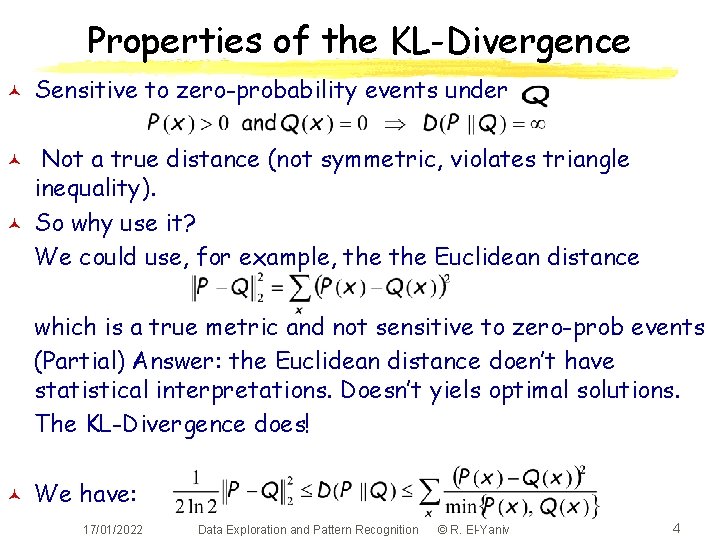

Properties of the KL-Divergence

- Sensitive to zero-probability events under $q$: if $q(x) = 0$ for some $x$ with $p(x) > 0$, then $D(p \,\|\, q) = \infty$.
- Not a true distance (not symmetric, violates the triangle inequality).
- So why use it? We could use, for example, the Euclidean distance, which is a true metric and is not sensitive to zero-probability events.
- (Partial) answer: the Euclidean distance doesn't have a statistical interpretation and doesn't yield optimal solutions. The KL-divergence does!
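The sensitivity to zero-probability events can be seen on a small made-up example. This is a sketch under the usual conventions ($0 \log 0 = 0$, and an infinite divergence when the second argument assigns zero probability to an outcome the first one allows); the helper names are illustrative.

```python
import math


def kl_bits(p, q):
    """D(p || q) in bits for discrete distributions given as lists."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # 0 * log(0 / q) is taken to be 0
        if qi == 0.0:
            return math.inf  # zero-probability event under q
        total += pi * math.log2(pi / qi)
    return total


def euclidean(p, q):
    """Euclidean distance between the two probability vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


# Made-up distributions: q assigns probability 0 to the third outcome.
p = [0.5, 0.4, 0.1]
q = [0.6, 0.4, 0.0]

print("D(p||q)   =", kl_bits(p, q))    # infinite
print("D(q||p)   =", kl_bits(q, p))    # finite
print("Euclidean =", euclidean(p, q))  # small and finite
```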

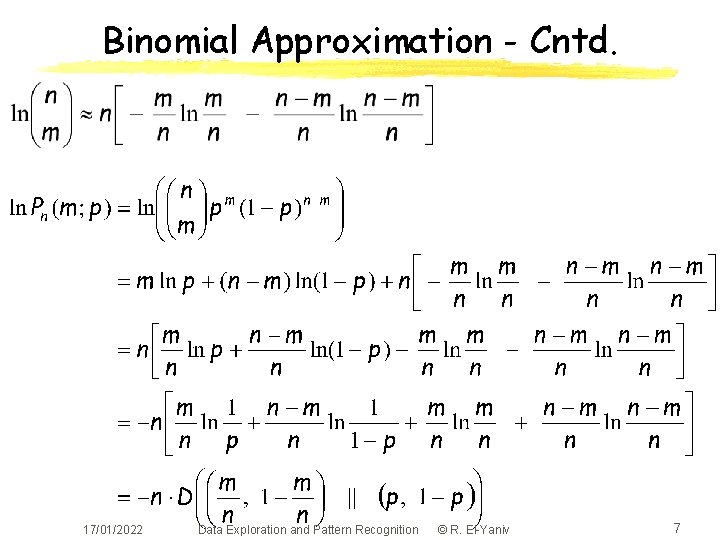

Binomial Approximation With KL-Div.

- Let $X \sim \mathrm{Bin}(n, p)$, so that $P(X = k) = \binom{n}{k} p^k (1-p)^{n-k}$.
- One method to compute $P(X = k)$ for large $n$ is to use the Stirling approximation, $n! \approx \sqrt{2\pi n}\,(n/e)^n$.

Binomial Approximation - Cntd.

Binomial Approximation - Cntd.

Example: What is the probability of getting 30 heads when tossing an unbiased coin 100 times?
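Carrying the Stirling approximation through the binomial coefficient gives the standard large-$n$ estimate $P(X = k) \approx 2^{-n D(k/n \,\|\, p)} / \sqrt{2\pi n\,(k/n)(1 - k/n)}$, where the divergence is between Bernoulli distributions and is measured in bits. The sketch below assumes this is the form intended here (the derivation itself is not recoverable from the text) and compares it with the exact value for the coin example; the function names are illustrative.

```python
import math


def binary_kl_bits(q, p):
    """D(q || p) in bits between Bernoulli(q) and Bernoulli(p), 0 < p < 1."""
    total = 0.0
    for a, b in ((q, p), (1.0 - q, 1.0 - p)):
        if a > 0.0:
            total += a * math.log2(a / b)
    return total


def binomial_pmf_exact(n, k, p):
    """Exact P(X = k) for X ~ Bin(n, p)."""
    return math.comb(n, k) * p**k * (1.0 - p) ** (n - k)


def binomial_pmf_kl_approx(n, k, p):
    """Stirling-based approximation of P(X = k); requires 0 < k < n."""
    q = k / n
    scale = math.sqrt(2.0 * math.pi * n * q * (1.0 - q))
    return 2.0 ** (-n * binary_kl_bits(q, p)) / scale


exact = binomial_pmf_exact(100, 30, 0.5)
approx = binomial_pmf_kl_approx(100, 30, 0.5)
print(f"exact  = {exact:.3e}")
print(f"approx = {approx:.3e}")
print(f"relative error = {abs(approx - exact) / exact:.2%}")
```

For these values the two numbers agree to within about one percent, and both are of order $10^{-5}$.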

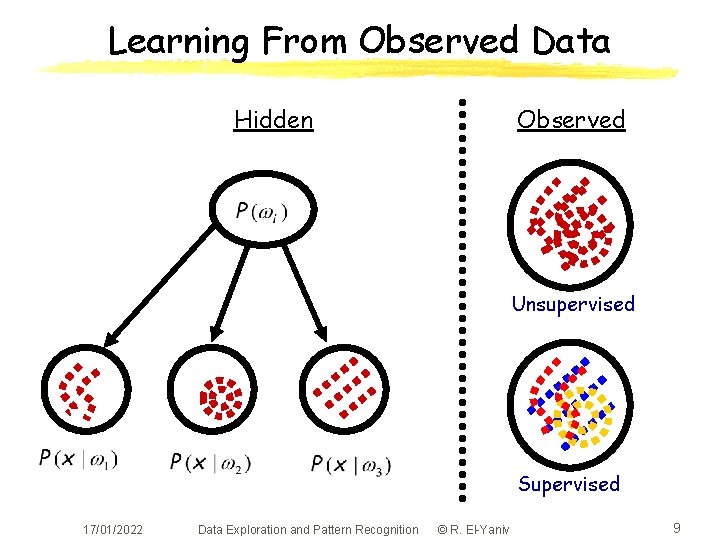

Learning From Observed Data

[Figure: diagram with the labels Hidden, Observed, Unsupervised, Supervised]

Density Estimation

- The Bayesian method is optimal (for classification and decision making) but requires that all relevant distributions (prior and class-conditional) are known. Unfortunately, this is rarely the case: we only see data, not distributions.
- Therefore, in order to use Bayesian classification, we want to learn these distributions from the data (called training data).
- Supervised learning: we get to see samples from each of the classes “separately” (called tagged samples).
- Tagged samples are “expensive”, so we need to learn the distributions as efficiently as possible.
- Two methods: parametric (easier) and nonparametric (harder).

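Parameter Estimation

- Suppose we can assume that the relevant densities are of some parametric form. For example, suppose we are pretty sure that $p(x \mid \omega_j)$ is normal, $N(\mu, \sigma^2)$, without knowing $\mu$ and $\sigma$.
- It remains to estimate the parameters $\mu$ and $\sigma$ from the data.
- Examples of parameterized densities:
  - Binomial: the data has $m$ 1's and $n - m$ 0's, and the number of 1's is distributed according to

    $$P(m \mid \theta) = \binom{n}{m} \theta^m (1 - \theta)^{n - m}.$$

  - Normal: each data point $x$ is distributed according to

    $$p(x \mid \theta) = \frac{1}{\sqrt{2\pi}\,\sigma} \exp\left(-\frac{(x - \mu)^2}{2\sigma^2}\right).$$

    Here, $\theta = (\mu, \sigma)$.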

Two Methods for Parameter Estimation

- We'll study two methods for parameter estimation: Bayesian estimation and maximum likelihood.
- The two methods are conceptually different:
  - Maximum likelihood: the unknown parameters are fixed; pick the parameters that best “explain” the data.
  - Bayesian estimation: the unknown parameters are random variables sampled from some prior; we use the observed data to revise the prior (obtaining a “sharper” posterior) and choose the best parameters using the standard (and optimal) Bayesian method.
- But asymptotically they yield the same results.

Isolating the Problem

- We get to see a training set $\mathcal{D}$ of data points.
- Each point in $\mathcal{D}$ belongs to one of $c$ different classes.
- Suppose the subset $\mathcal{D}_j$ of i.i.d. points is in class $\omega_j$, so that each $x \in \mathcal{D}_j$ is drawn according to the class-conditional $p(x \mid \omega_j)$.
- We assume that $p(x \mid \omega_j)$ has some known parametric form given by $p(x \mid \omega_j, \theta_j)$ for some parameter vector $\theta_j$.
- Thus, we have $c$ separate parameter estimation problems.

Maximum Likelihood Estimation

- Recall that the likelihood of $\theta$ with respect to $\mathcal{D} = \{x_1, \dots, x_n\}$ is

  $$p(\mathcal{D} \mid \theta) = \prod_{k=1}^{n} p(x_k \mid \theta).$$

- The maximum likelihood parameter vector $\hat{\theta}$ is the one that best supports the data; that is, $\hat{\theta} = \arg\max_{\theta} p(\mathcal{D} \mid \theta)$.
- Analytically, it is often easier to consider the log of the likelihood function, $l(\theta) = \ln p(\mathcal{D} \mid \theta)$ (since the log is monotone, the maximizer of the log-likelihood is the same as the maximizer of the likelihood).
- Example: assume that all the points in $\mathcal{D}$ are drawn from some (one-dimensional) normal distribution with some particular variance (and unknown mean).

Maximum Likelihood - Illustration

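Maximum Likelihood - Cntd.

- If $p(\mathcal{D} \mid \theta)$ is “well-behaved” and, in particular, differentiable, we can find $\hat{\theta}$ using standard differential calculus.
- Suppose $\theta = (\theta_1, \dots, \theta_d)^T$ and let $l(\theta) = \ln p(\mathcal{D} \mid \theta)$ be the log-likelihood. Then $\hat{\theta}$ satisfies

  $$\nabla_{\theta}\, l(\theta) = \sum_{k=1}^{n} \nabla_{\theta} \ln p(x_k \mid \theta) = 0,$$

  and we must verify that this is a global maximum.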

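Example - Maximum Likelihood

- Suppose we know that each data point $x_k$ is distributed according to a normal distribution with known standard deviation 1 but with unknown mean $\mu$:

  $$\ln p(x_k \mid \mu) = -\tfrac{1}{2} \ln(2\pi) - \tfrac{1}{2}(x_k - \mu)^2.$$

- Differentiating, we have

  $$\frac{d}{d\mu} \ln p(x_k \mid \mu) = x_k - \mu.$$

- So $\sum_{k=1}^{n} (x_k - \hat{\mu}) = 0$, which gives $\hat{\mu} = \frac{1}{n} \sum_{k=1}^{n} x_k$.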
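As a sanity check on this example, the sketch below evaluates the log-likelihood of a small made-up sample on a grid of candidate means and confirms that the grid maximizer coincides with the sample mean. The data values, the grid and the function name are illustrative assumptions.

```python
import math


def log_likelihood(mu, data, sigma=1.0):
    """Log-likelihood of i.i.d. N(mu, sigma^2) data."""
    n = len(data)
    const = -0.5 * n * math.log(2.0 * math.pi * sigma**2)
    return const - sum((x - mu) ** 2 for x in data) / (2.0 * sigma**2)


# Made-up sample; the known standard deviation is 1, as in the example.
data = [2.1, 1.7, 3.0, 2.4, 1.8]

# Closed-form ML estimate: the sample mean.
mu_hat = sum(data) / len(data)

# Brute-force check on a grid of candidate means in [0, 5], step 0.01.
grid = [i / 100.0 for i in range(0, 501)]
best_on_grid = max(grid, key=lambda mu: log_likelihood(mu, data))

print(f"sample mean = {mu_hat:.3f}, grid maximizer = {best_on_grid:.2f}")
```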

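Example: Normal, Unknown $\mu$ and $\sigma^2$

- Suppose each data point $x_k$ is distributed $N(\mu, \sigma^2)$, and write $\theta = (\theta_1, \theta_2) = (\mu, \sigma^2)$.
- We have

  $$\ln p(x_k \mid \theta) = -\tfrac{1}{2} \ln(2\pi\theta_2) - \frac{(x_k - \theta_1)^2}{2\theta_2}$$

  and

  $$\nabla_{\theta} \ln p(x_k \mid \theta) = \begin{pmatrix} \dfrac{x_k - \theta_1}{\theta_2} \\[2mm] -\dfrac{1}{2\theta_2} + \dfrac{(x_k - \theta_1)^2}{2\theta_2^2} \end{pmatrix},$$

- so

  $$\hat{\mu} = \frac{1}{n} \sum_{k=1}^{n} x_k, \qquad \hat{\sigma}^2 = \frac{1}{n} \sum_{k=1}^{n} (x_k - \hat{\mu})^2.$$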

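Biased Estimators

- In the last example the ML estimator of $\sigma^2$ was $\hat{\sigma}^2 = \frac{1}{n} \sum_{k=1}^{n} (x_k - \hat{\mu})^2$.
- This estimate is biased; that is, $E[\hat{\sigma}^2] \ne \sigma^2$.
- Claim: $E[\hat{\sigma}^2] = \frac{n-1}{n}\, \sigma^2$.
- This estimator is asymptotically unbiased (it approaches an unbiased estimate as $n \to \infty$).
- To see the bias it is sufficient to consider one data point (that is, $n = 1$): then $\hat{\mu} = x_1$ and $\hat{\sigma}^2 = 0$.
- Unbiased estimates for $\mu$ and $\sigma^2$ are

  $$\hat{\mu} = \frac{1}{n} \sum_{k=1}^{n} x_k \quad \text{and} \quad s^2 = \frac{1}{n-1} \sum_{k=1}^{n} (x_k - \hat{\mu})^2.$$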
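The bias can also be observed empirically. The following is a small simulation sketch, assuming normal data with made-up parameters ($\sigma^2 = 4$, $n = 5$) and a fixed random seed; it averages the $1/n$ estimator and the $1/(n-1)$ estimator over many samples.

```python
import random


def ml_variance(sample):
    """ML estimate of the variance: divides by n."""
    n = len(sample)
    mean = sum(sample) / n
    return sum((x - mean) ** 2 for x in sample) / n


def unbiased_variance(sample):
    """Unbiased estimate of the variance: divides by n - 1."""
    n = len(sample)
    mean = sum(sample) / n
    return sum((x - mean) ** 2 for x in sample) / (n - 1)


rng = random.Random(0)  # fixed seed so the run is reproducible
n, trials, true_var = 5, 20000, 4.0

ml_total = 0.0
unbiased_total = 0.0
for _ in range(trials):
    sample = [rng.gauss(0.0, true_var**0.5) for _ in range(n)]
    ml_total += ml_variance(sample)
    unbiased_total += unbiased_variance(sample)

ml_avg = ml_total / trials
unbiased_avg = unbiased_total / trials
print(f"average ML estimate       = {ml_avg:.3f}")
print(f"average unbiased estimate = {unbiased_avg:.3f}")
print(f"(n - 1) / n * sigma^2     = {(n - 1) / n * true_var:.3f}")
```

The average of the ML estimate should land near $\frac{n-1}{n}\sigma^2$ rather than $\sigma^2$, while the $1/(n-1)$ version should land near $\sigma^2$.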

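ML Estimators for Multivariate Normal PDF

- A similar (but much more involved) calculation yields the following ML estimators for the multivariate normal density with unknown mean vector $\boldsymbol{\mu}$ and unknown covariance matrix $\Sigma$:

  $$\hat{\boldsymbol{\mu}} = \frac{1}{n} \sum_{k=1}^{n} \mathbf{x}_k, \qquad \hat{\Sigma} = \frac{1}{n} \sum_{k=1}^{n} (\mathbf{x}_k - \hat{\boldsymbol{\mu}})(\mathbf{x}_k - \hat{\boldsymbol{\mu}})^T.$$

- The estimator for the mean is unbiased and the estimator for the covariance matrix is biased. An unbiased estimator is

  $$C = \frac{1}{n-1} \sum_{k=1}^{n} (\mathbf{x}_k - \hat{\boldsymbol{\mu}})(\mathbf{x}_k - \hat{\boldsymbol{\mu}})^T.$$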

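Bayesian Parameter Estimation

- Here again, the form of the source density $p(x \mid \theta)$ is assumed to be known, but the parameter $\theta$ is unknown.
- We assume that $\theta$ is a random variable. Our initial knowledge (guess) about $\theta$, before observing the data $\mathcal{D}$, is given by the prior $p(\theta)$.
- We use the sample data $\mathcal{D}$ (drawn independently according to $p(x \mid \theta)$) to compute the posterior

  $$p(\theta \mid \mathcal{D}) = \frac{p(\mathcal{D} \mid \theta)\, p(\theta)}{p(\mathcal{D})}.$$

- Since $\mathcal{D}$ is drawn i.i.d., $p(\mathcal{D} \mid \theta) = \prod_{k=1}^{n} p(x_k \mid \theta)$.
- Recall that $p(\mathcal{D}) = \int p(\mathcal{D} \mid \theta)\, p(\theta)\, d\theta$ is a normalizing factor.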

Bayesian Estimation

- The prior is typically “broad” or “flat”, reflecting the fact that we don't know a lot about the parameter values.
- The data we see is more consistent with some values of the parameters, and therefore we expect the posterior to peak sharply around the more likely values.

Bayesian Estimation

- Recall that our goal (in the isolated problem) is to estimate the class-conditional density $p(x \mid \omega_j)$ of the $j$th class, given the labeled data $\mathcal{D}$ of that class.
- Using the posterior we compute the class-conditional

  $$p(x \mid \mathcal{D}) = \int p(x \mid \theta)\, p(\theta \mid \mathcal{D})\, d\theta,$$

  the weighted average of $p(x \mid \theta)$ over all possible values of $\theta$.

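Bayesian Estimation - Example

- Suppose we know that the class-conditional p.d.f. is normal with unknown mean $\mu$ (and known variance $\sigma^2$): $p(x \mid \mu) \sim N(\mu, \sigma^2)$.
- Also, suppose $p(\mu) \sim N(\mu_0, \sigma_0^2)$ with both $\mu_0$ and $\sigma_0^2$ known.
- We imagine that Nature draws a value for $\mu$ using $p(\mu)$ and then chooses the data $\mathcal{D} = \{x_1, \dots, x_n\}$ i.i.d. using $p(x \mid \mu)$.
- We now calculate the posterior

  $$p(\mu \mid \mathcal{D}) = \frac{p(\mathcal{D} \mid \mu)\, p(\mu)}{\int p(\mathcal{D} \mid \mu)\, p(\mu)\, d\mu} = \alpha \prod_{k=1}^{n} p(x_k \mid \mu)\, p(\mu),$$

  where $\alpha$ is a normalizing factor that does not depend on $\mu$.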

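Bayesian Estimation - Example cntd.

- The answer (exercise): letting $\hat{\mu}_n = \frac{1}{n} \sum_{k=1}^{n} x_k$, the posterior $p(\mu \mid \mathcal{D})$ is normal, $N(\mu_n, \sigma_n^2)$, with

  $$\mu_n = \frac{n\sigma_0^2}{n\sigma_0^2 + \sigma^2}\, \hat{\mu}_n + \frac{\sigma^2}{n\sigma_0^2 + \sigma^2}\, \mu_0, \qquad \sigma_n^2 = \frac{\sigma_0^2 \sigma^2}{n\sigma_0^2 + \sigma^2}.$$

- Hint: to save algebraic manipulations, note that any p.d.f. of the form $p(\mu) \propto \exp\left(-\tfrac{1}{2}(a\mu^2 - 2b\mu)\right)$ with $a > 0$ is normal (with mean $b/a$ and variance $1/a$).
- Notice that $\mu_n$ is a convex combination of $\hat{\mu}_n$ and $\mu_0$. It always lies between them and, after observing $n$ samples, $\mu_n$ is our “best guess” for $\mu$.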

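Bayesian Estimation - Example cntd.

- After determining the posterior $p(\mu \mid \mathcal{D})$ it remains to calculate the class-conditional

  $$p(x \mid \mathcal{D}) = \int p(x \mid \mu)\, p(\mu \mid \mathcal{D})\, d\mu \sim N(\mu_n, \sigma^2 + \sigma_n^2),$$

  where $\mu_n$ and $\sigma_n^2$ are the mean and variance of the posterior.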
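For the normal example with known variance, the standard closed form of the posterior is $N(\mu_n, \sigma_n^2)$ with $\mu_n = \frac{n\sigma_0^2}{n\sigma_0^2 + \sigma^2}\hat{\mu}_n + \frac{\sigma^2}{n\sigma_0^2 + \sigma^2}\mu_0$ and $\sigma_n^2 = \frac{\sigma_0^2\sigma^2}{n\sigma_0^2 + \sigma^2}$, and the resulting class-conditional is $N(\mu_n, \sigma^2 + \sigma_n^2)$. The sketch below simply evaluates these formulas; the function names, argument names and data are illustrative assumptions.

```python
def posterior_normal_mean(data, sigma2, mu0, sigma0_2):
    """Posterior N(mu_n, sigma_n^2) of the mean of N(mu, sigma2) data
    under the prior mu ~ N(mu0, sigma0_2), with sigma2 known."""
    n = len(data)
    sample_mean = sum(data) / n
    denom = n * sigma0_2 + sigma2
    # Convex combination of the sample mean and the prior mean.
    mu_n = (n * sigma0_2 / denom) * sample_mean + (sigma2 / denom) * mu0
    sigma_n2 = sigma0_2 * sigma2 / denom
    return mu_n, sigma_n2


def predictive_normal(data, sigma2, mu0, sigma0_2):
    """Mean and variance of the class-conditional p(x | D)."""
    mu_n, sigma_n2 = posterior_normal_mean(data, sigma2, mu0, sigma0_2)
    return mu_n, sigma2 + sigma_n2


# Made-up data and prior: sigma^2 = 1, prior N(0, 1).
data = [1.0, 2.0, 3.0]
mu_n, sigma_n2 = posterior_normal_mean(data, sigma2=1.0, mu0=0.0, sigma0_2=1.0)
pred_mean, pred_var = predictive_normal(data, sigma2=1.0, mu0=0.0, sigma0_2=1.0)
print(f"posterior:  mean = {mu_n}, variance = {sigma_n2}")
print(f"predictive: mean = {pred_mean}, variance = {pred_var}")
```

With these inputs the posterior mean lies between the prior mean 0 and the sample mean 2, and the posterior variance is smaller than the prior variance.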

Another Example: Prob. of Sun Rising

- Question: What is the probability that the sun will rise tomorrow?
- Laplace's (Bayesian) answer: Assume that each day the sun rises with probability $\theta$ (a Bernoulli process) and that $\theta$ is distributed uniformly in $[0, 1]$.
- Suppose there were $n$ sun rises so far. What is the probability of an $(n+1)$st rise?
- Denote the data set by $\mathcal{D} = \{x_1, \dots, x_n\}$, where $x_i = 1$.

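Prob. of Sun Rising - Cntd.

- We have

  $$p(\theta \mid \mathcal{D}) = \frac{p(\mathcal{D} \mid \theta)\, p(\theta)}{\int_0^1 p(\mathcal{D} \mid \theta)\, p(\theta)\, d\theta} = \frac{\theta^n}{\int_0^1 \theta^n\, d\theta} = (n+1)\, \theta^n.$$

- Therefore,

  $$P(x_{n+1} = 1 \mid \mathcal{D}) = \int_0^1 \theta\, p(\theta \mid \mathcal{D})\, d\theta = \int_0^1 (n+1)\, \theta^{n+1}\, d\theta = \frac{n+1}{n+2}.$$

- This is called Laplace's law of succession.
- Notice that ML gives $\hat{\theta} = 1$.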
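The law of succession follows from two elementary integrals, since $\int_0^1 \theta^k\, d\theta = 1/(k+1)$. As a sketch, the snippet below evaluates the ratio of integrals exactly with rational arithmetic; the function names are illustrative.

```python
from fractions import Fraction


def integral_theta_power(k):
    """Exact value of the integral of theta**k over [0, 1], for k >= 0."""
    return Fraction(1, k + 1)


def prob_next_sunrise(n):
    """P(x_{n+1} = 1 | n sunrises so far) under a uniform prior on theta.

    The posterior is proportional to theta**n, so the predictive probability
    is (integral of theta**(n + 1)) / (integral of theta**n).
    """
    return integral_theta_power(n + 1) / integral_theta_power(n)


def ml_estimate(n):
    """ML estimate of theta after n sunrises out of n days (n >= 1)."""
    return Fraction(n, n)


for n in (1, 10, 1000):
    print(n, prob_next_sunrise(n), ml_estimate(n))
```

The Bayesian answer stays strictly below 1 for every finite $n$, whereas the ML estimate is exactly 1.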

Maximum Likelihood vs. Bayesian

- ML and Bayesian estimation are asymptotically equivalent: they yield the same class-conditional densities when the size of the training data grows to infinity.
- ML is typically computationally easier. E.g., consider the case where the p.d.f. is “nice” (i.e. differentiable): in ML we need to do (multidimensional) differentiation, and in Bayesian estimation (multidimensional) integration.
- ML is often easier to interpret: it returns the single best model (parameter), whereas Bayesian estimation gives a weighted average of models.
- But for finite training data (and given a reliable prior), Bayesian estimation is more accurate (it uses more of the information).
- Bayesian estimation with a “flat” prior is essentially ML. With asymmetric and broad priors the methods lead to different solutions.
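The asymptotic agreement can be illustrated on the Bernoulli model with a uniform prior, where the ML estimate after $m$ successes in $n$ trials is $m/n$ and the Bayesian predictive probability is $(m+1)/(n+2)$ (the general form of Laplace's law of succession). The sketch below uses made-up counts with a fixed 70% success rate and shows the gap shrinking as $n$ grows.

```python
from fractions import Fraction


def ml_estimate(m, n):
    """ML estimate of the success probability: m successes in n trials."""
    return Fraction(m, n)


def bayes_predictive(m, n):
    """Predictive probability of a success under a uniform prior on theta."""
    return Fraction(m + 1, n + 2)


sizes = [10, 100, 1000]
gaps = []
for n in sizes:
    m = 7 * n // 10  # made-up data: 70% of the trials are successes
    gap = abs(ml_estimate(m, n) - bayes_predictive(m, n))
    gaps.append(gap)
    print(f"n = {n:5d}  ML = {float(ml_estimate(m, n)):.4f}  "
          f"Bayes = {float(bayes_predictive(m, n)):.4f}  gap = {float(gap):.5f}")
```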

- Slides: 29