The Grid: Opportunities, Achievements, and Challenges for (Computer) Science

- Slides: 47

The Grid: Opportunities, Achievements, and Challenges for (Computer) Science

Ian Foster, Argonne National Laboratory, University of Chicago, Globus Alliance

www.mcs.anl.gov/~foster

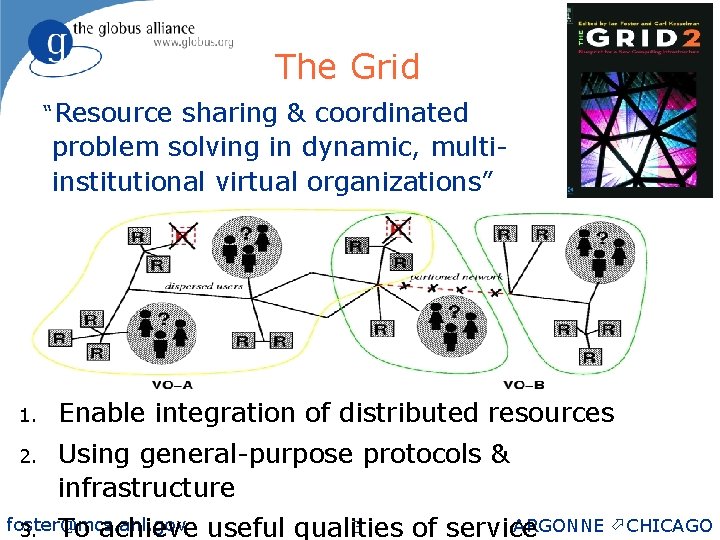

The Grid

“Resource sharing & coordinated problem solving in dynamic, multi-institutional virtual organizations”

1. Enable integration of distributed resources
2. Using general-purpose protocols & infrastructure
3. To achieve useful qualities of service

foster@mcs.anl.gov · ARGONNE · CHICAGO

The Grid Phenomenon: An Abbreviated History

- Early 90s
  - Gigabit testbeds, metacomputing
- Mid to late 90s
  - Early experiments (e.g., I-WAY), academic software (Globus, Condor, Legion), experiments
- 2003
  - Hundreds of application communities & projects in scientific and technical computing
  - Major infrastructure deployments
  - Open source technology: Globus Toolkit®, etc.
  - Global Grid Forum: ~2000 people, 30+ countries
  - Growing industrial adoption

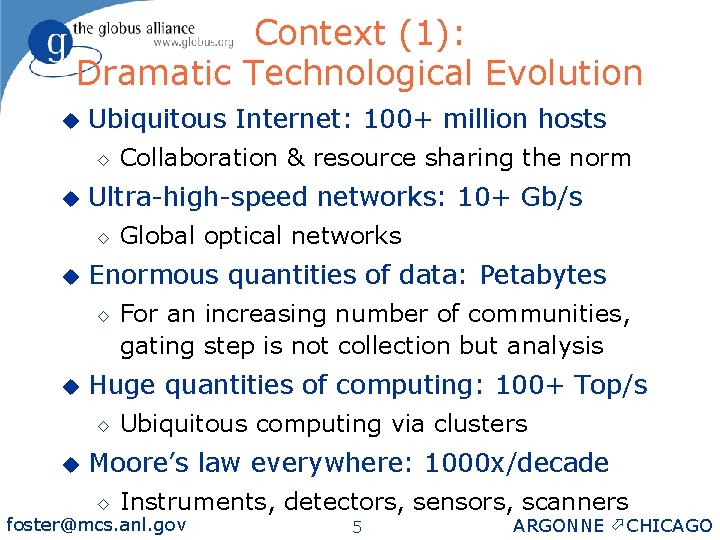

Context (1): Dramatic Technological Evolution

- Ubiquitous Internet: 100+ million hosts
  - Collaboration & resource sharing the norm
- Ultra-high-speed networks: 10+ Gb/s
  - Global optical networks
- Enormous quantities of data: Petabytes
  - For an increasing number of communities, gating step is not collection but analysis
- Huge quantities of computing: 100+ Top/s
  - Ubiquitous computing via clusters
- Moore’s law everywhere: 1000x/decade
  - Instruments, detectors, sensors, scanners

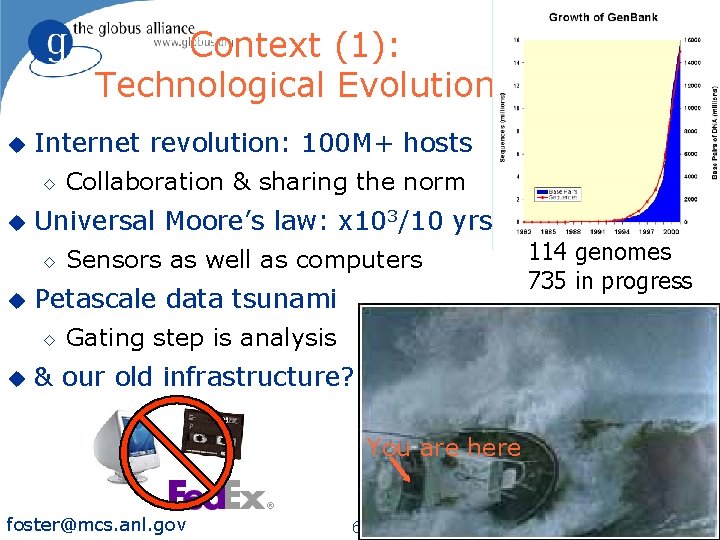

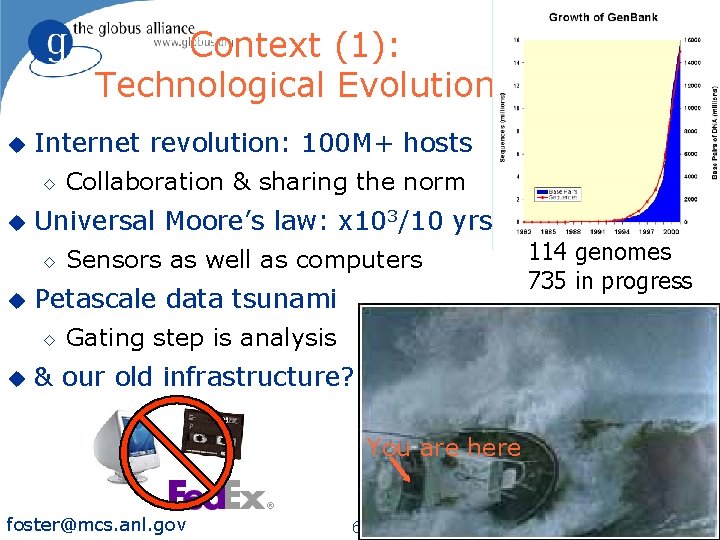

Context (1): Technological Evolution

- Internet revolution: 100M+ hosts
  - Collaboration & sharing the norm
- Universal Moore’s law: ×10³/10 yrs
  - Sensors as well as computers
- Petascale data tsunami
  - Gating step is analysis
- & our old infrastructure?

Figure annotations: “114 genomes, 735 in progress”; “You are here”

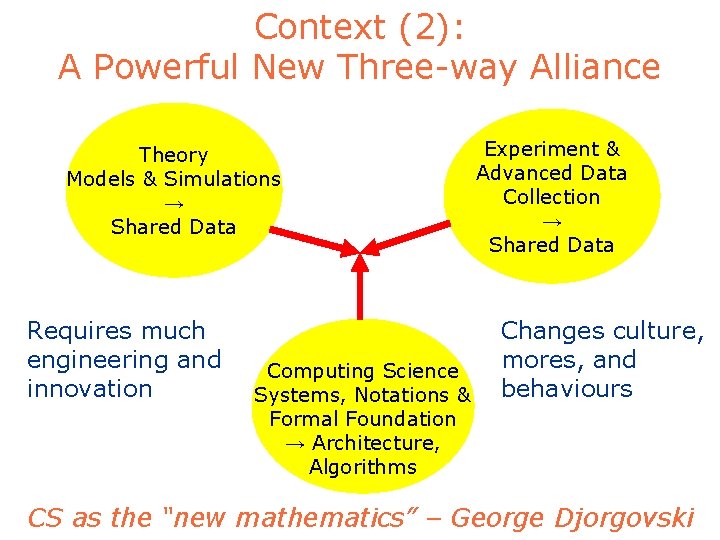

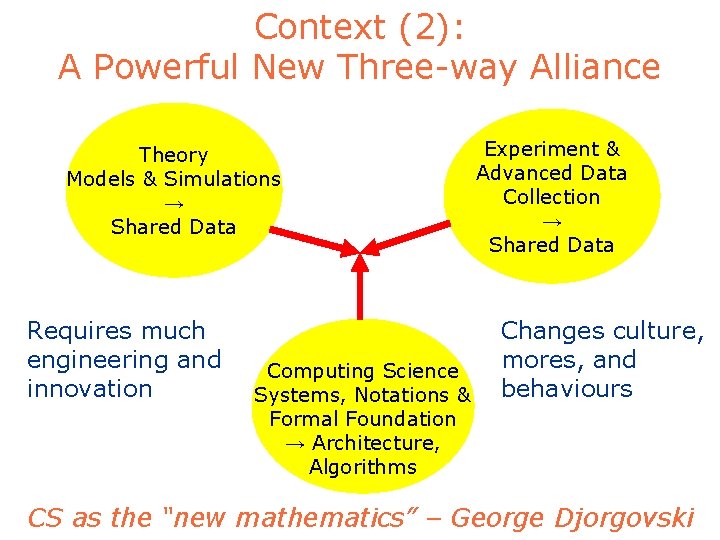

Context (2): A Powerful New Three-way Alliance

- Theory: Models & Simulations → Shared Data
- Computing Science: Systems, Notations & Formal Foundation → Architecture, Algorithms
- Experiment & Advanced Data Collection → Shared Data
- Requires much engineering and innovation
- Changes culture, mores, and behaviours
- CS as the “new mathematics” (George Djorgovski)

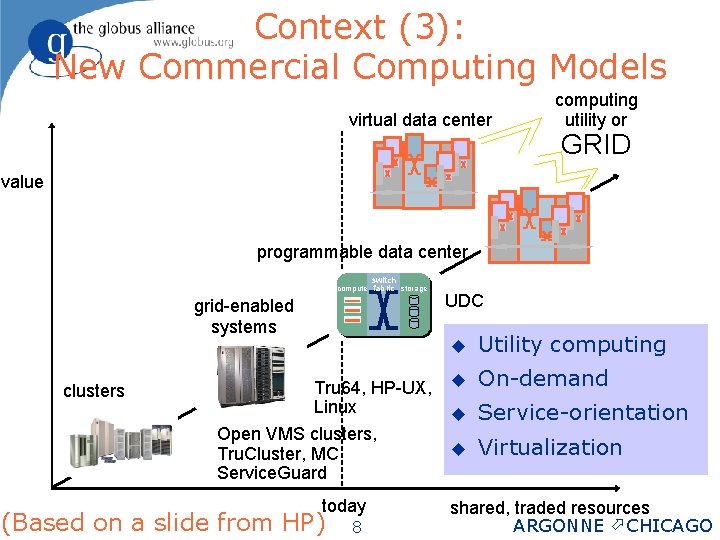

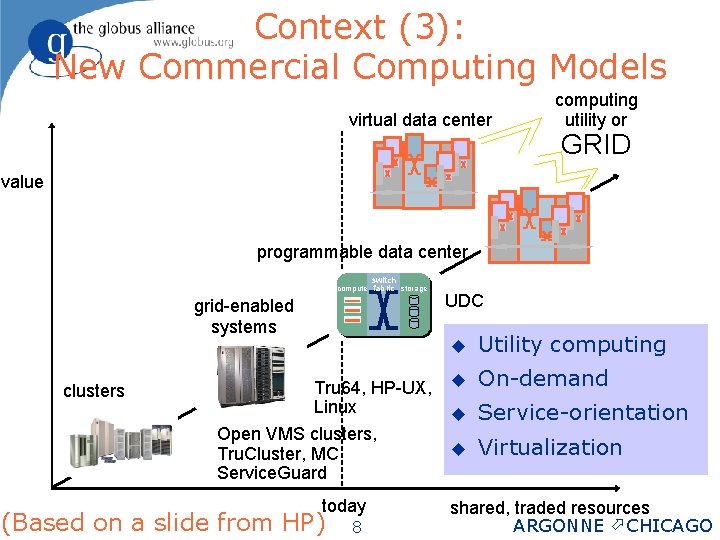

Context (3): New Commercial Computing Models

- Utility computing
- On-demand
- Service-orientation
- Virtualization

Diagram (based on a slide from HP), axes “value” and “shared, traded resources”. Labels: clusters (today: Tru64, HP-UX, Linux; OpenVMS clusters, TruCluster, MC ServiceGuard); grid-enabled systems; switch fabric, compute, storage; programmable data center; UDC; virtual data center; computing utility or GRID.

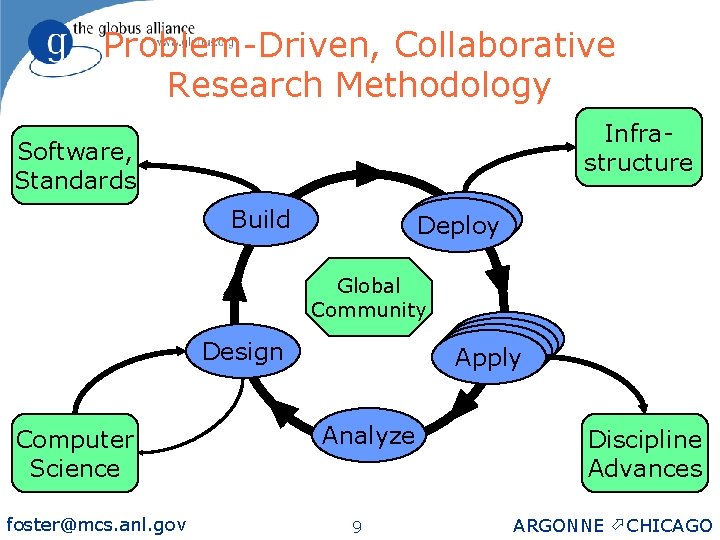

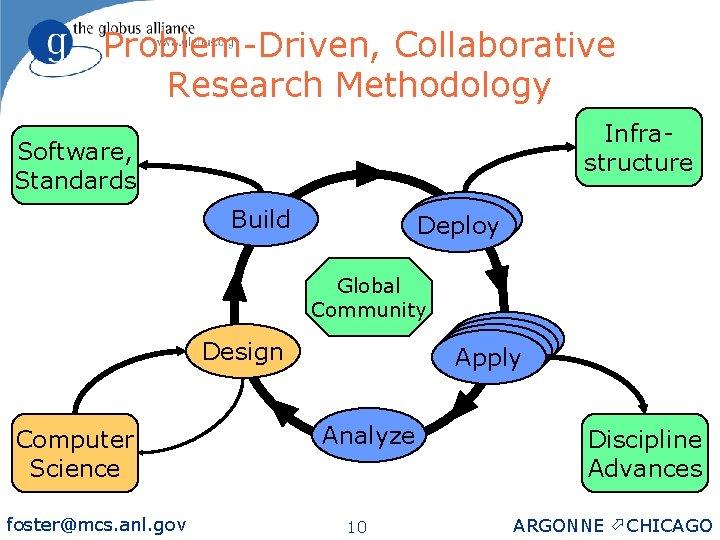

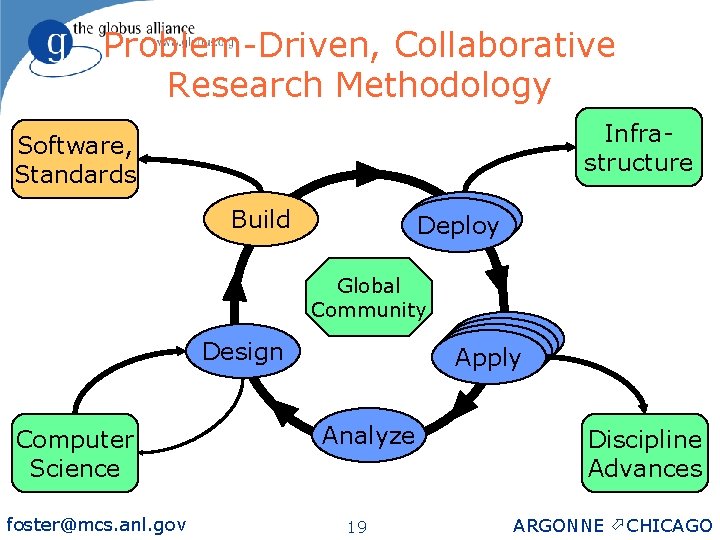

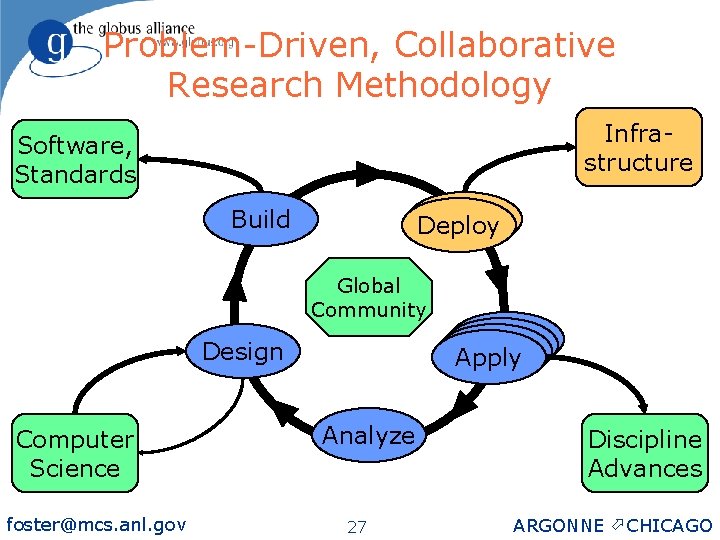

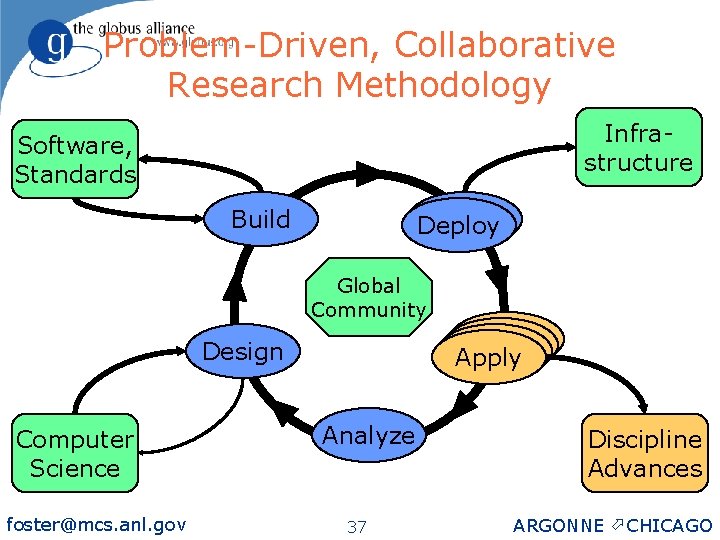

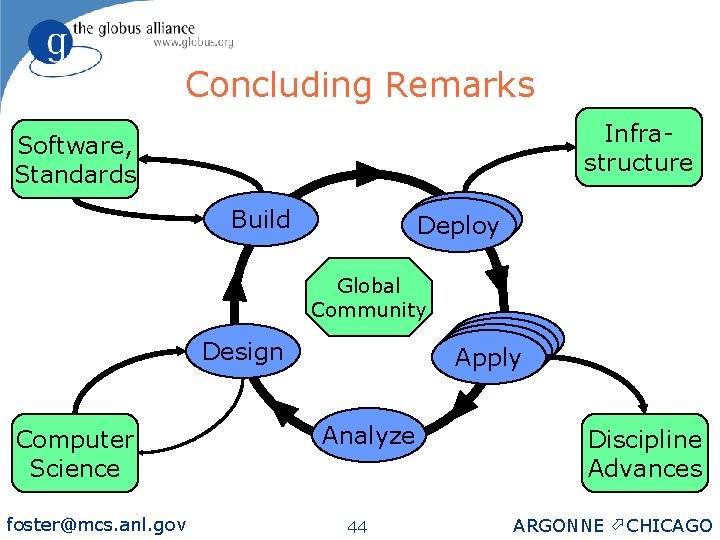

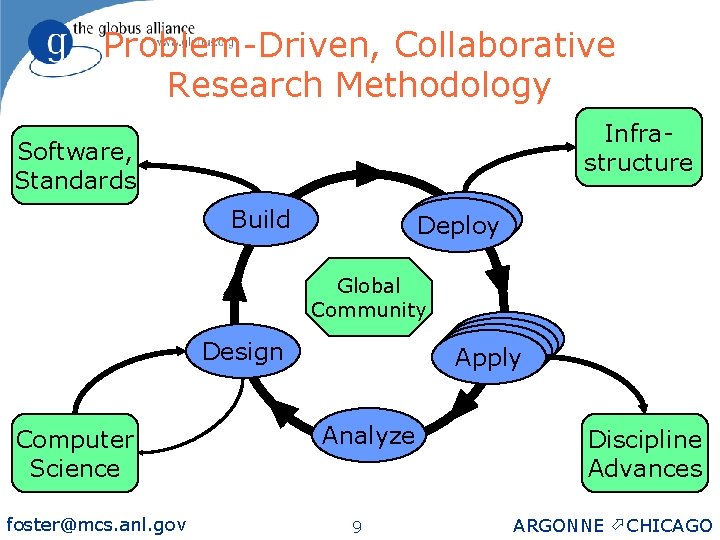

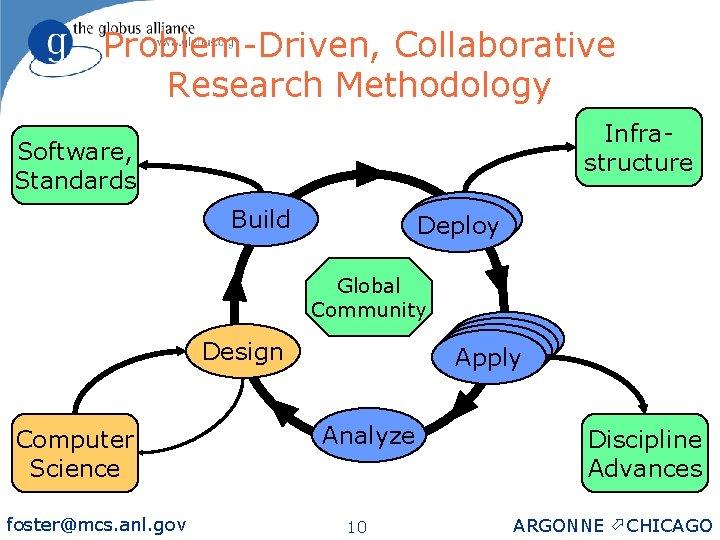

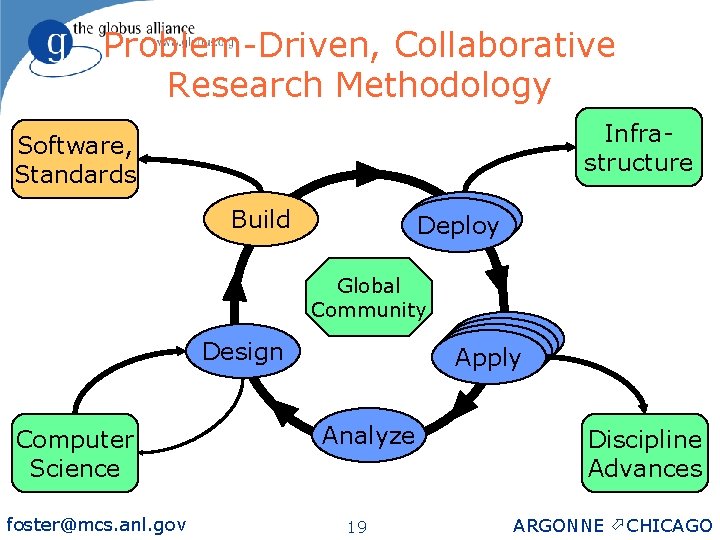

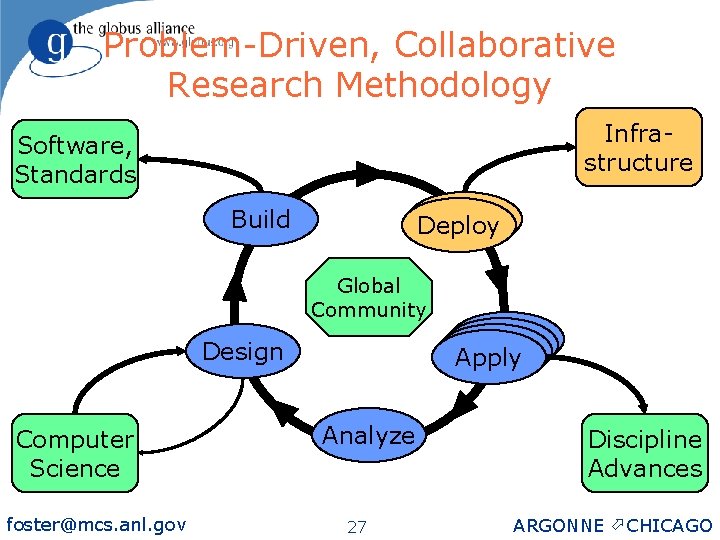

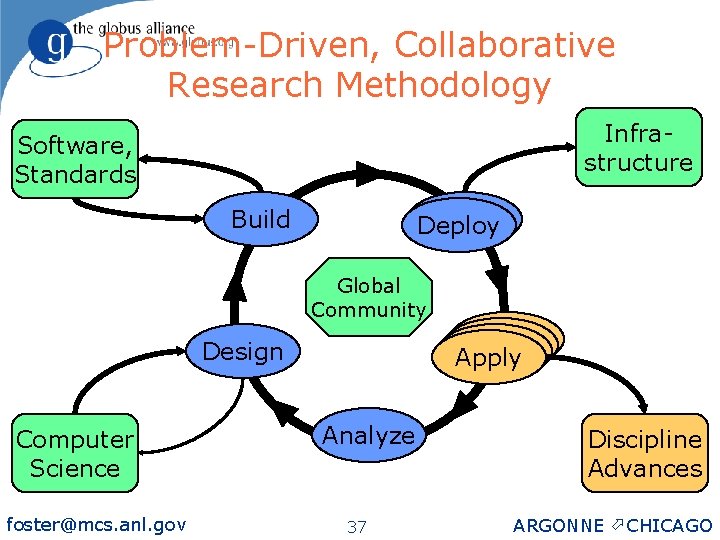

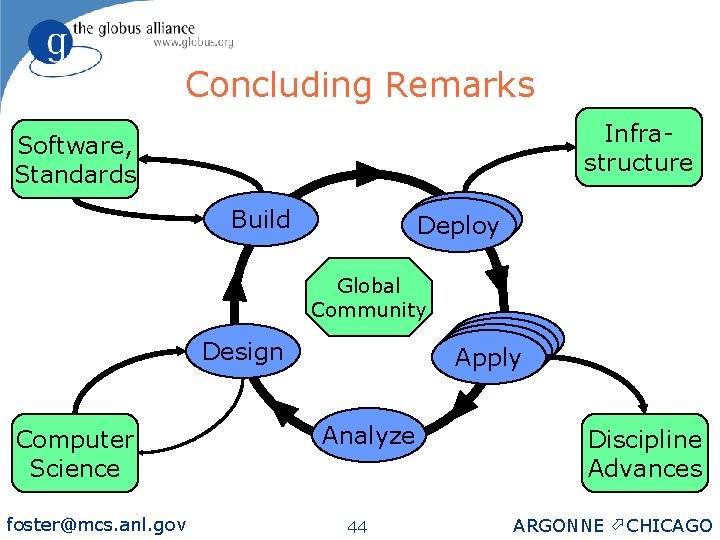

Problem-Driven, Collaborative Research Methodology

Diagram labels: Computer Science; Software, Standards; Infrastructure; Discipline Advances; Global Community. Cycle steps: Design, Build, Deploy, Apply, Analyze.


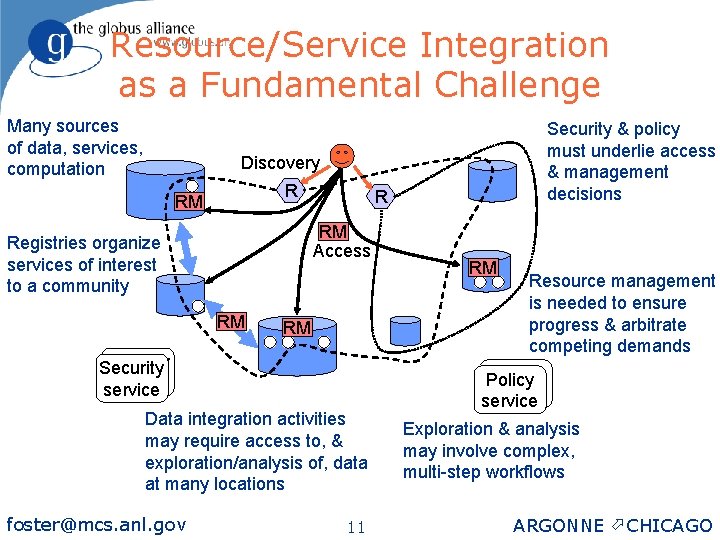

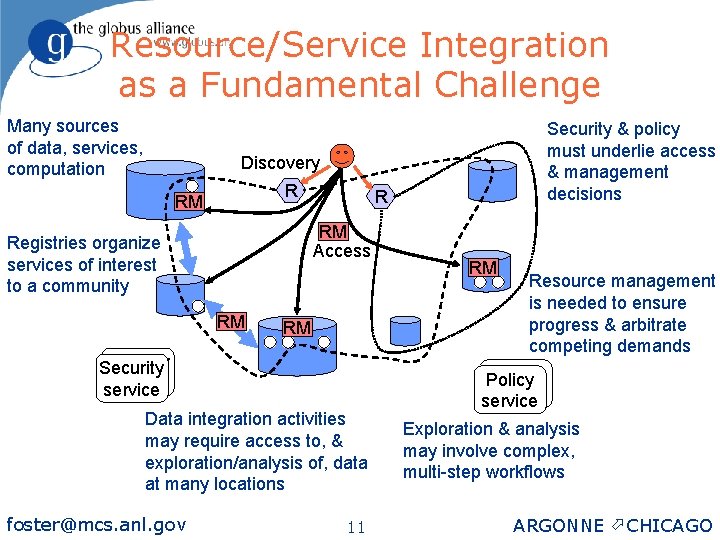

Resource/Service Integration as a Fundamental Challenge

- Many sources of data, services, computation
- Security & policy must underlie access & management decisions
- Registries organize services of interest to a community
- Data integration activities may require access to, & exploration/analysis of, data at many locations
- Resource management is needed to ensure progress & arbitrate competing demands
- Exploration & analysis may involve complex, multi-step workflows

Diagram labels: Discovery, Access, R, RM, Security service, Policy service.
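The interplay of registries and policy on this slide can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `ServiceEntry`, `CommunityRegistry`, `PolicyService`, `accessible_services`, and the site and service names are all hypothetical and do not correspond to any Globus Toolkit interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceEntry:
    """Hypothetical description of one advertised service."""
    name: str
    kind: str   # e.g. "data" or "compute"
    site: str


class CommunityRegistry:
    """Hypothetical registry of services of interest to one community."""

    def __init__(self):
        self._entries = []

    def register(self, entry):
        self._entries.append(entry)

    def discover(self, kind):
        # Discovery alone says nothing about whether access is allowed.
        return [e for e in self._entries if e.kind == kind]


class PolicyService:
    """Hypothetical policy store: (user, site, kind) triples that are allowed."""

    def __init__(self):
        self._allowed = set()

    def allow(self, user, site, kind):
        self._allowed.add((user, site, kind))

    def permits(self, user, entry):
        return (user, entry.site, entry.kind) in self._allowed


def accessible_services(registry, policy, user, kind):
    """Discovery followed by a policy check on every candidate."""
    return [e for e in registry.discover(kind) if policy.permits(user, e)]


registry = CommunityRegistry()
registry.register(ServiceEntry("sky-survey-archive", "data", "site-a"))
registry.register(ServiceEntry("climate-archive", "data", "site-b"))
registry.register(ServiceEntry("batch-cluster", "compute", "site-a"))

policy = PolicyService()
policy.allow("alice", "site-a", "data")

visible = accessible_services(registry, policy, "alice", "data")
```

Keeping discovery and policy as separate services mirrors the slide's separation of registries from the security and policy services; a real deployment would also need authentication and resource management, which this sketch omits.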

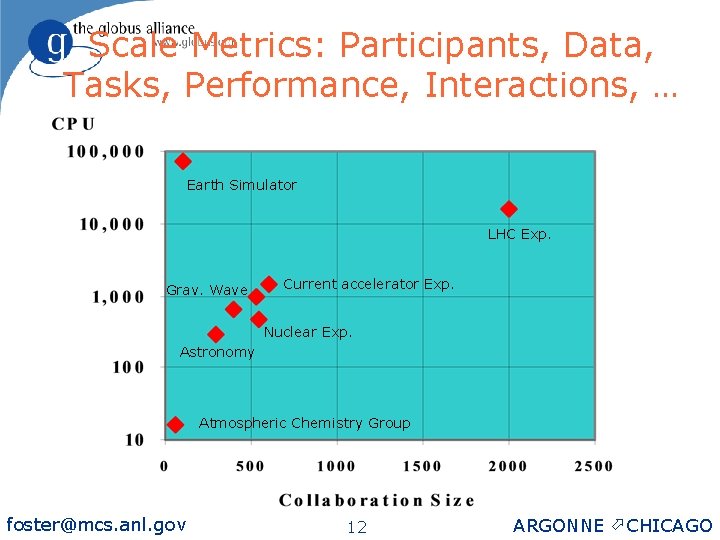

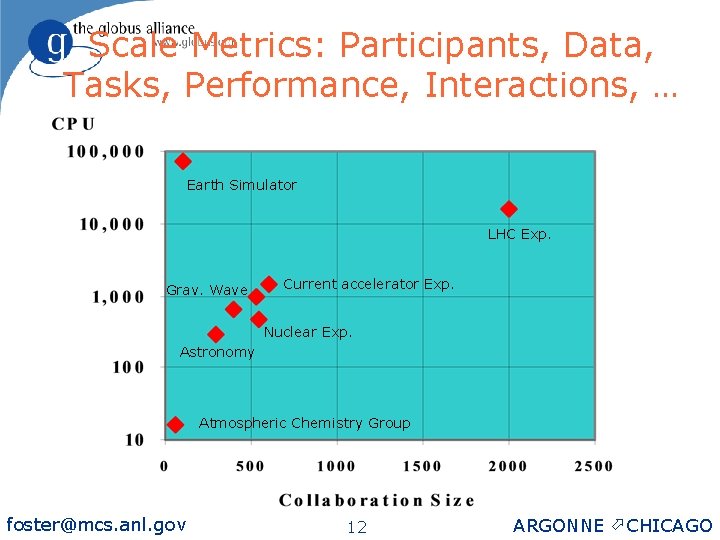

Scale Metrics: Participants, Data, Tasks, Performance, Interactions, …

Figure labels: Earth Simulator; LHC Exp.; Grav. Wave; Current accelerator Exp.; Nuclear Exp.; Astronomy; Atmospheric Chemistry Group.

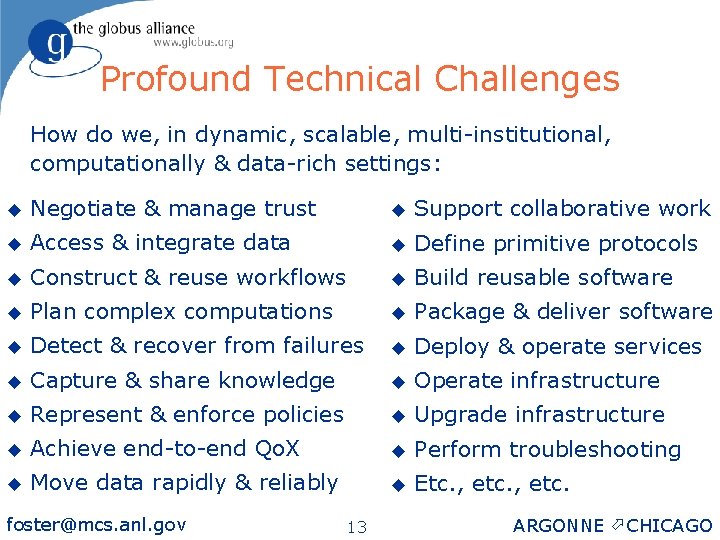

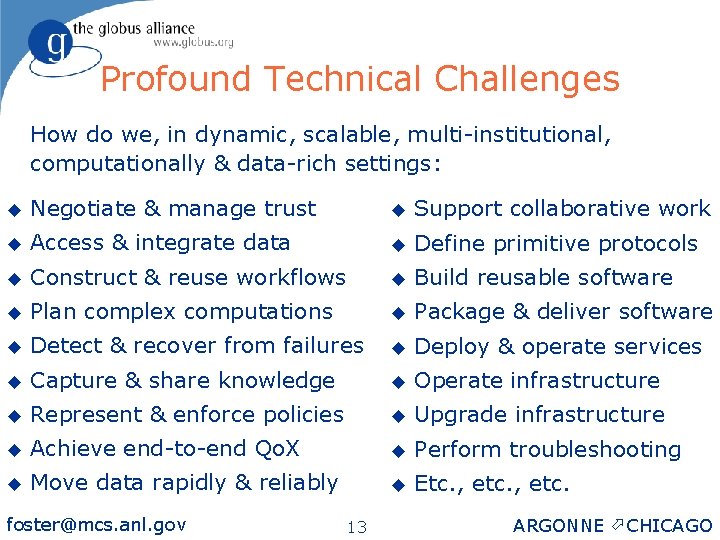

Profound Technical Challenges

How do we, in dynamic, scalable, multi-institutional, computationally & data-rich settings:

- Negotiate & manage trust
- Access & integrate data
- Construct & reuse workflows
- Plan complex computations
- Detect & recover from failures
- Capture & share knowledge
- Represent & enforce policies
- Achieve end-to-end QoX
- Move data rapidly & reliably
- Support collaborative work
- Define primitive protocols
- Build reusable software
- Package & deliver software
- Deploy & operate services
- Operate infrastructure
- Upgrade infrastructure
- Perform troubleshooting
- Etc., etc.

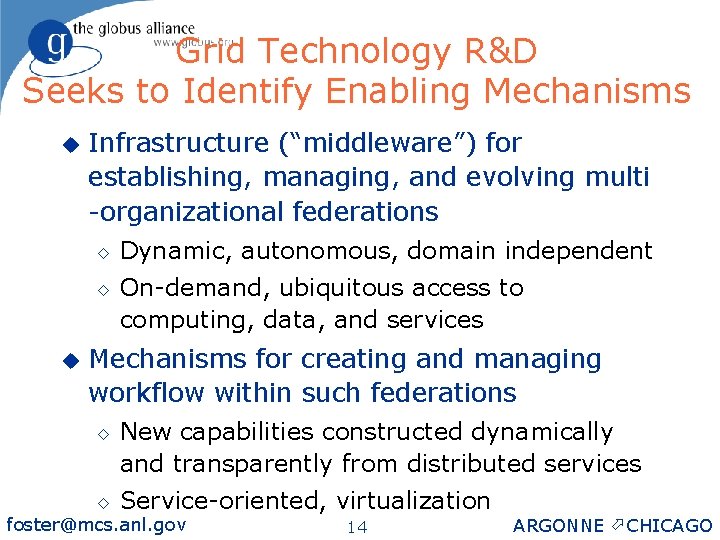

Grid Technology R&D Seeks to Identify Enabling Mechanisms

- Infrastructure (“middleware”) for establishing, managing, and evolving multi-organizational federations
  - Dynamic, autonomous, domain independent
  - On-demand, ubiquitous access to computing, data, and services
- Mechanisms for creating and managing workflow within such federations
  - New capabilities constructed dynamically and transparently from distributed services
  - Service-oriented, virtualization

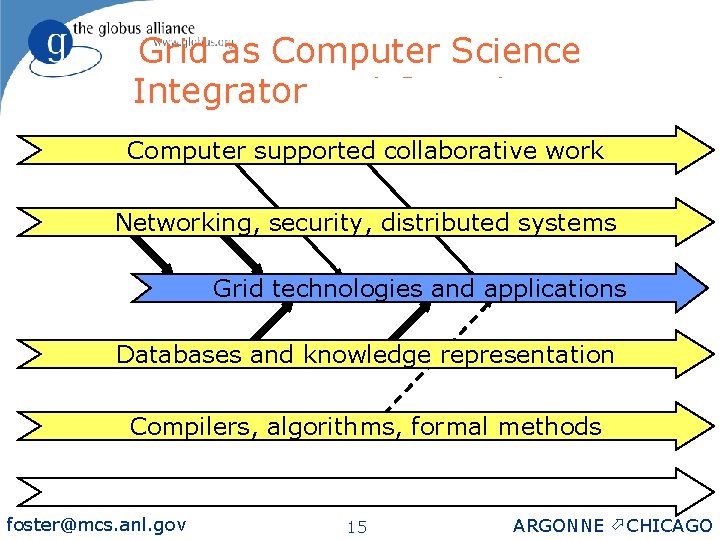

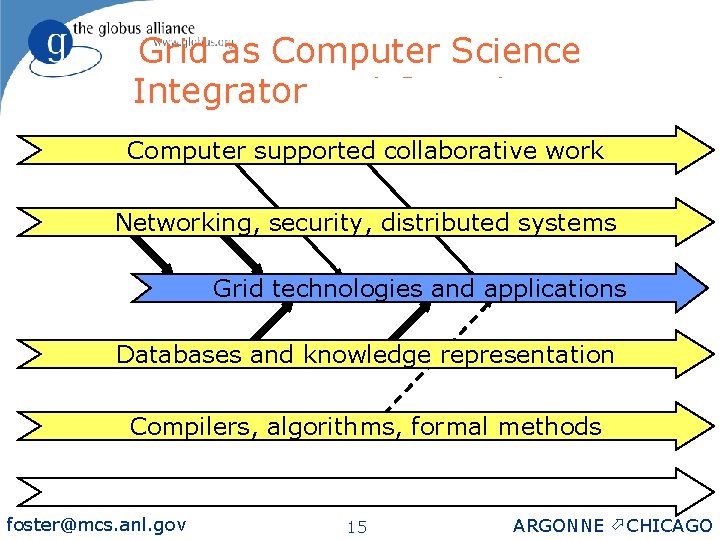

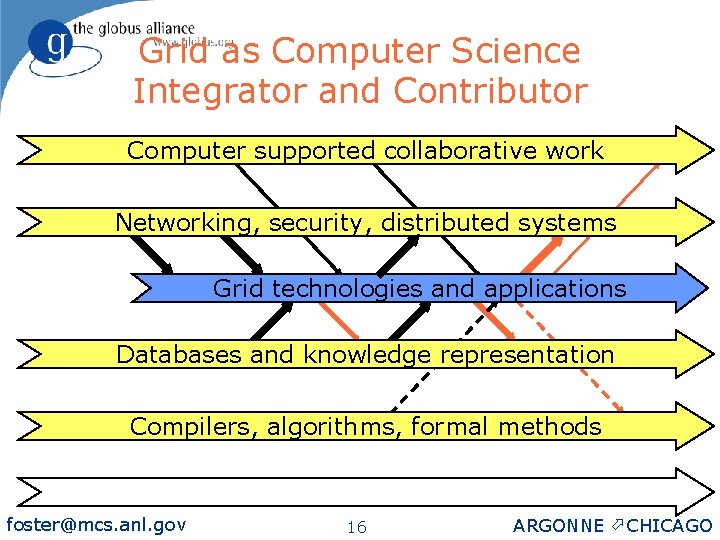

Grid as Computer Science Integrator and Contributor

- Computer supported collaborative work
- Networking, security, distributed systems
- Grid technologies and applications
- Databases and knowledge representation
- Compilers, algorithms, formal methods

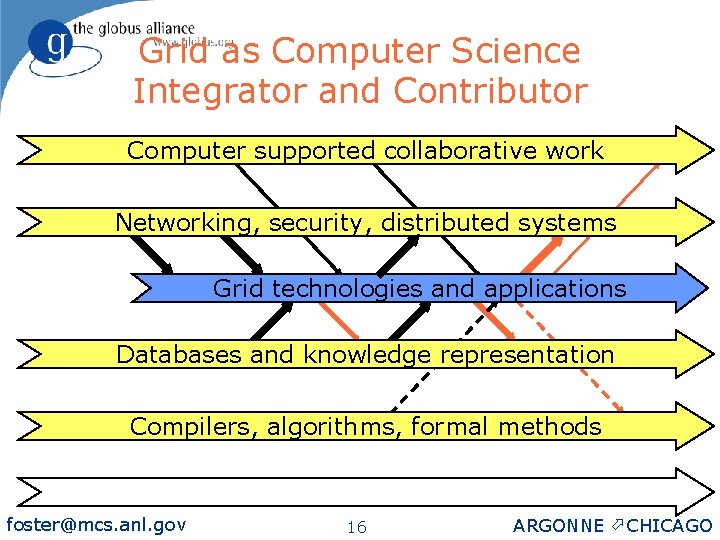


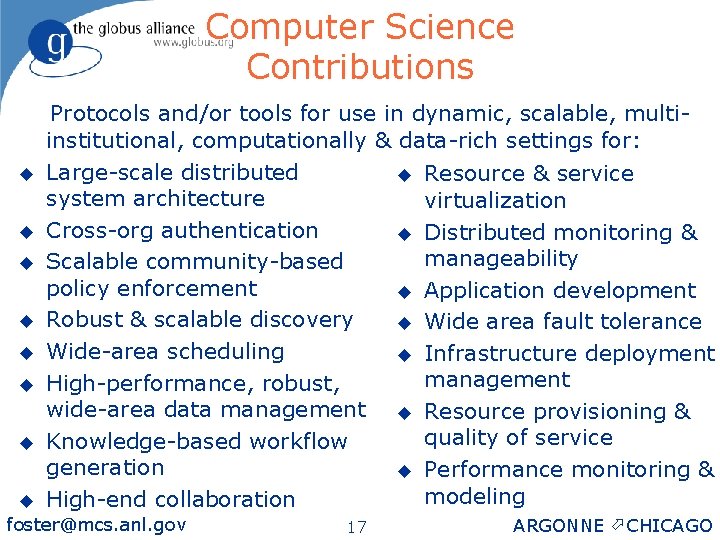

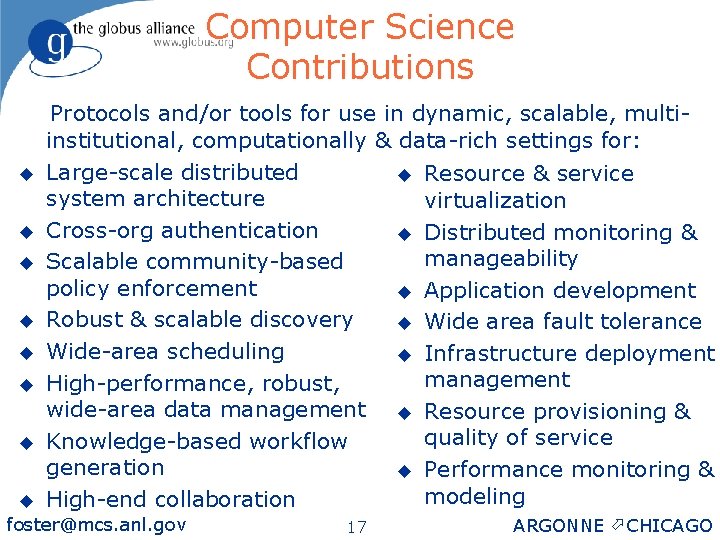

Computer Science Contributions

Protocols and/or tools for use in dynamic, scalable, multi-institutional, computationally & data-rich settings for:

- Large-scale distributed system architecture
- Cross-org authentication
- Scalable community-based policy enforcement
- Robust & scalable discovery
- Wide-area scheduling
- High-performance, robust, wide-area data management
- Knowledge-based workflow generation
- High-end collaboration
- Resource & service virtualization
- Distributed monitoring & manageability
- Application development
- Wide area fault tolerance
- Infrastructure deployment management
- Resource provisioning & quality of service
- Performance monitoring & modeling

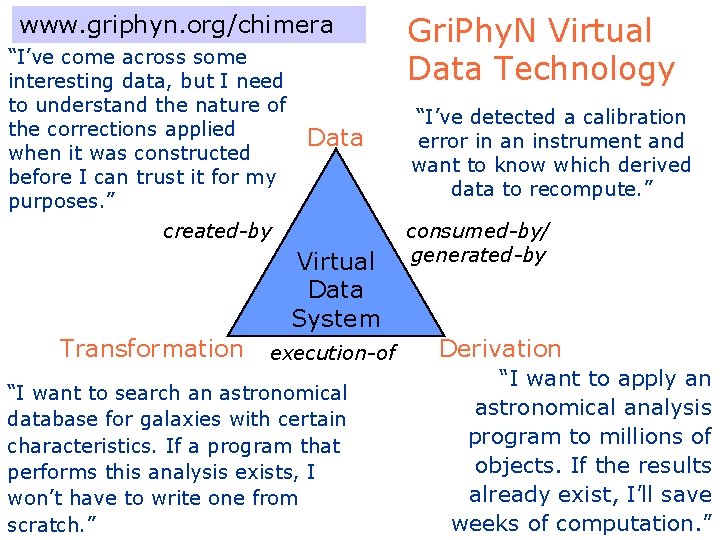

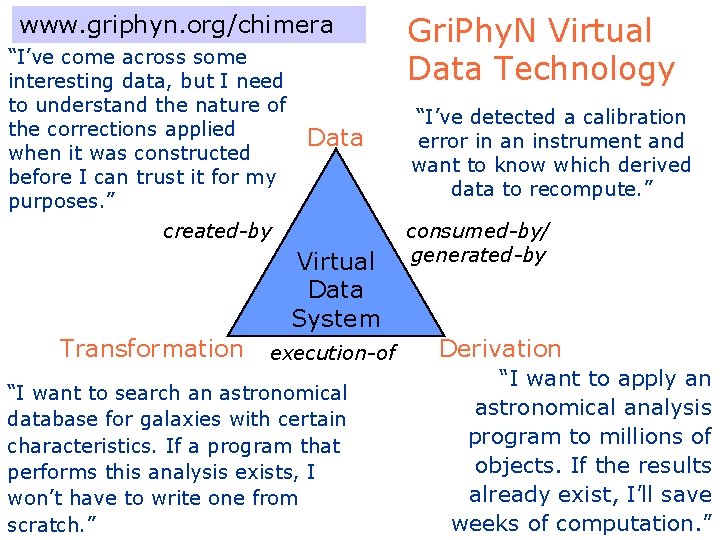

GriPhyN Virtual Data Technology (www.griphyn.org/chimera)

Diagram: a Virtual Data System relating Data, Transformation, and Derivation through the relationships created-by, execution-of, and consumed-by/generated-by.

- “I’ve come across some interesting data, but I need to understand the nature of the corrections applied when it was constructed before I can trust it for my purposes.”
- “I want to search an astronomical database for galaxies with certain characteristics. If a program that performs this analysis exists, I won’t have to write one from scratch.”
- “I’ve detected a calibration error in an instrument and want to know which derived data to recompute.”
- “I want to apply an astronomical analysis program to millions of objects. If the results already exist, I’ll save weeks of computation.”
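The user questions quoted on this slide can all be read as queries over recorded derivations. A minimal Python sketch, assuming a hypothetical in-memory catalog: the names `Derivation`, `VirtualDataCatalog`, `find_existing`, and `downstream_of`, and the dataset names, are illustrative only and are not the Chimera interface.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Derivation:
    """One recorded execution of a transformation (hypothetical record layout)."""
    transformation: str   # name of the program that was run
    inputs: frozenset     # names of datasets consumed
    outputs: frozenset    # names of datasets generated


@dataclass
class VirtualDataCatalog:
    derivations: list = field(default_factory=list)

    def record(self, transformation, inputs, outputs):
        d = Derivation(transformation, frozenset(inputs), frozenset(outputs))
        self.derivations.append(d)
        return d

    def find_existing(self, transformation, inputs):
        """Return outputs of a matching earlier run, or None if none is recorded."""
        wanted = frozenset(inputs)
        for d in self.derivations:
            if d.transformation == transformation and d.inputs == wanted:
                return d.outputs
        return None

    def downstream_of(self, dataset):
        """All datasets derived, directly or indirectly, from `dataset`."""
        affected, frontier = set(), [dataset]
        while frontier:
            current = frontier.pop()
            for d in self.derivations:
                if current in d.inputs:
                    new = d.outputs - affected   # skip outputs already visited
                    affected |= new
                    frontier.extend(new)
        return affected


catalog = VirtualDataCatalog()
catalog.record("calibrate", ["raw_image"], ["calibrated_image"])
catalog.record("find_galaxies", ["calibrated_image"], ["galaxy_list"])
catalog.record("cluster_sizes", ["galaxy_list"], ["size_distribution"])

# "Which derived data must I recompute after a calibration error?"
to_recompute = catalog.downstream_of("raw_image")

# "If the results already exist, I'll save weeks of computation."
cached = catalog.find_existing("find_galaxies", ["calibrated_image"])
```

Here `downstream_of` answers the recomputation question by walking the consumed-by/generated-by links, and `find_existing` answers the reuse question by matching a transformation and its inputs against earlier runs.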

Problem-Driven, Collaborative Research Methodology

Diagram labels: Computer Science; Software, Standards; Infrastructure; Discipline Advances; Global Community. Cycle steps: Design, Build, Deploy, Apply, Analyze.

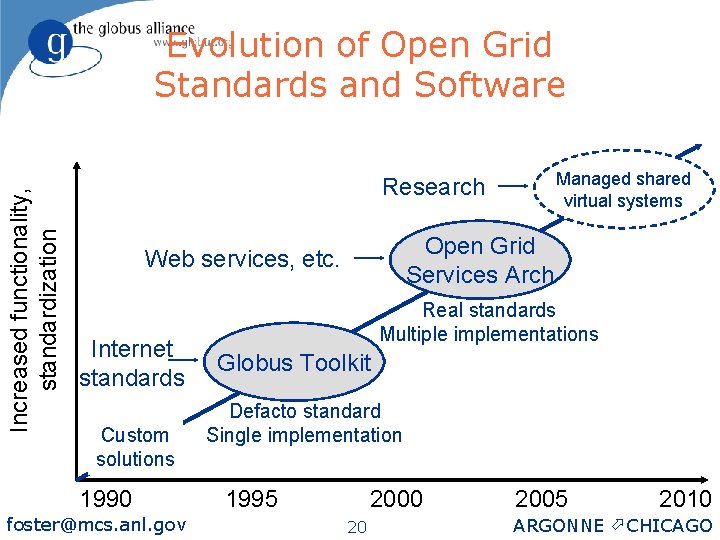

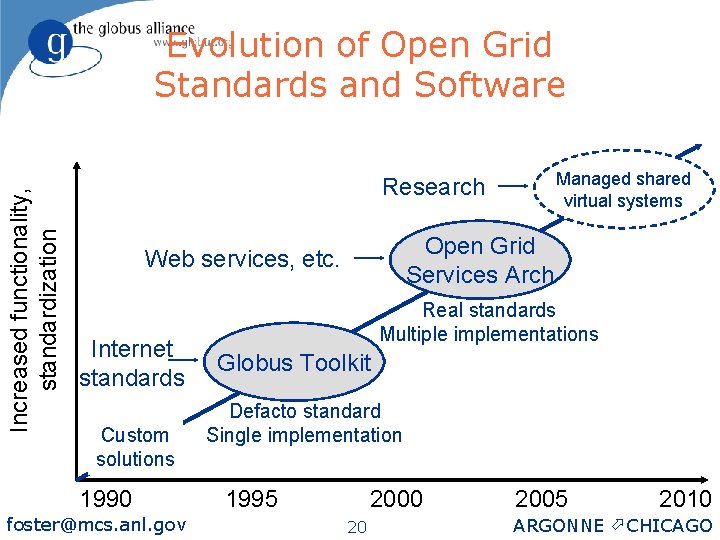

Evolution of Open Grid Standards and Software

Chart: increased functionality and standardization over time (1990, 1995, 2000, 2005, 2010).

- Custom solutions
- Globus Toolkit, built on Internet standards: de facto standard, single implementation
- Open Grid Services Arch, built on Web services, etc.: real standards, multiple implementations
- Managed shared virtual systems: research

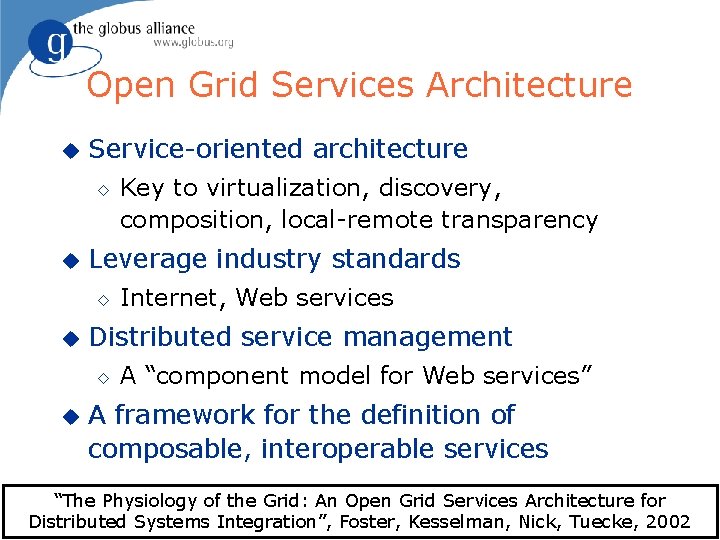

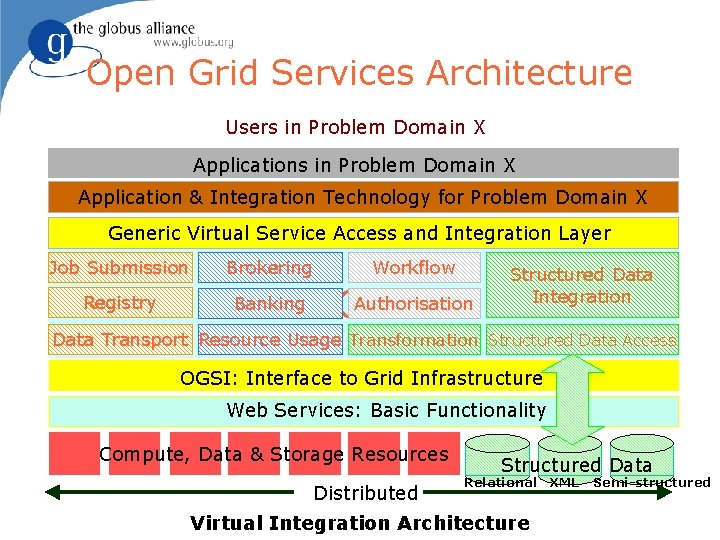

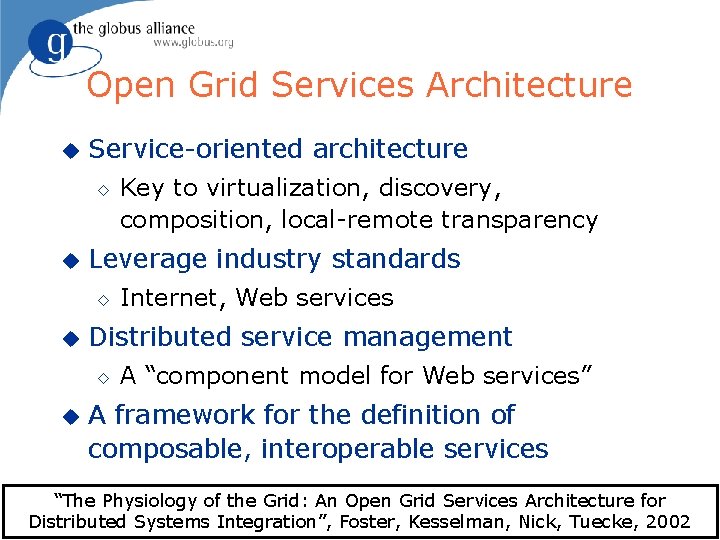

Open Grid Services Architecture

- Service-oriented architecture
  - Key to virtualization, discovery, composition, local-remote transparency
- Leverage industry standards
  - Internet, Web services
- Distributed service management
  - A “component model for Web services”
- A framework for the definition of composable, interoperable services

“The Physiology of the Grid: An Open Grid Services Architecture for Distributed Systems Integration”, Foster, Kesselman, Nick, Tuecke, 2002

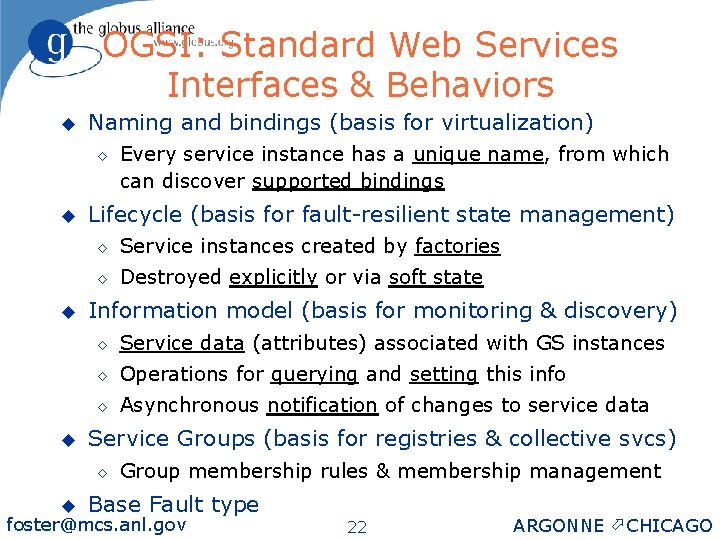

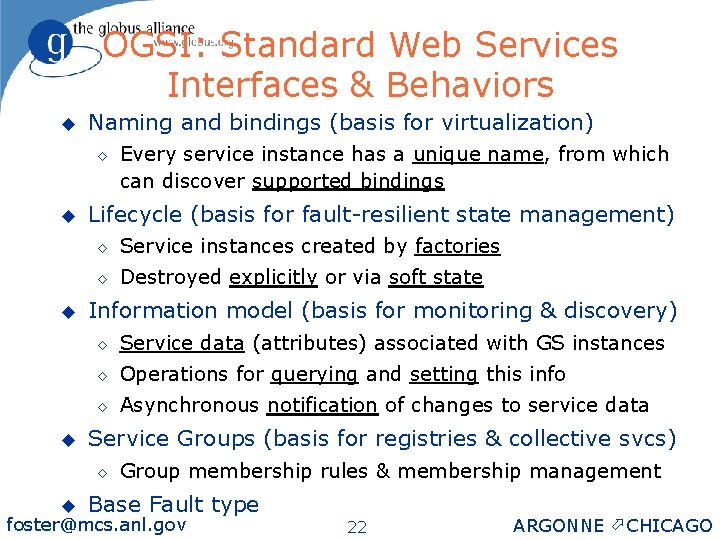

OGSI: Standard Web Services Interfaces & Behaviors

- Naming and bindings (basis for virtualization)
  - Every service instance has a unique name, from which can discover supported bindings
- Lifecycle (basis for fault-resilient state management)
  - Service instances created by factories
  - Destroyed explicitly or via soft state
- Information model (basis for monitoring & discovery)
  - Service data (attributes) associated with GS instances
  - Operations for querying and setting this info
  - Asynchronous notification of changes to service data
- Service Groups (basis for registries & collective svcs)
  - Group membership rules & membership management
- Base Fault type
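The lifecycle and information-model behaviors listed for OGSI can be illustrated with a small Python sketch. It is a toy model under stated assumptions, not the OGSI interfaces themselves: the class and method names (`Factory`, `ServiceInstance`, `keep_alive`, `reap_expired`, and so on) are hypothetical, and time is injected through a fake clock so the example is deterministic.

```python
import time


class ServiceInstance:
    """Hypothetical stand-in for a Grid service instance; not the OGSI API."""

    def __init__(self, handle, lifetime_s, now):
        self.handle = handle                 # unique name for this instance
        self.expires_at = now + lifetime_s   # soft-state termination time
        self.service_data = {}               # queryable attributes
        self._subscribers = []

    def keep_alive(self, lifetime_s, now):
        """Extend the lifetime; an instance nobody refreshes simply lapses."""
        self.expires_at = now + lifetime_s

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def set_service_data(self, name, value):
        self.service_data[name] = value
        for notify in self._subscribers:     # notify subscribers of the change
            notify(self.handle, name, value)

    def find_service_data(self, name):
        return self.service_data.get(name)


class Factory:
    """Creates instances and reclaims those whose lifetime has run out."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._instances = {}
        self._counter = 0

    def create_service(self, lifetime_s):
        self._counter += 1
        handle = f"instance-{self._counter}"
        instance = ServiceInstance(handle, lifetime_s, self._clock())
        self._instances[handle] = instance
        return instance

    def destroy(self, handle):
        """Explicit destruction."""
        self._instances.pop(handle, None)

    def reap_expired(self):
        """Soft-state destruction: drop instances past their termination time."""
        now = self._clock()
        expired = [h for h, i in self._instances.items() if i.expires_at <= now]
        for handle in expired:
            del self._instances[handle]
        return expired

    def live_handles(self):
        return sorted(self._instances)


fake_now = [0.0]                              # injected clock for the demo
factory = Factory(clock=lambda: fake_now[0])
job_a = factory.create_service(lifetime_s=30)
job_b = factory.create_service(lifetime_s=30)

events = []
job_a.subscribe(lambda handle, name, value: events.append((handle, name, value)))
job_a.set_service_data("status", "running")

fake_now[0] = 20.0
job_b.keep_alive(lifetime_s=30, now=fake_now[0])   # only job_b is refreshed

fake_now[0] = 31.0
reaped = factory.reap_expired()
```

In this sketch the instance that is never refreshed is reclaimed without any explicit destroy call, which is the fault-resilience argument for soft state: a client that crashes cannot leak service instances indefinitely.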

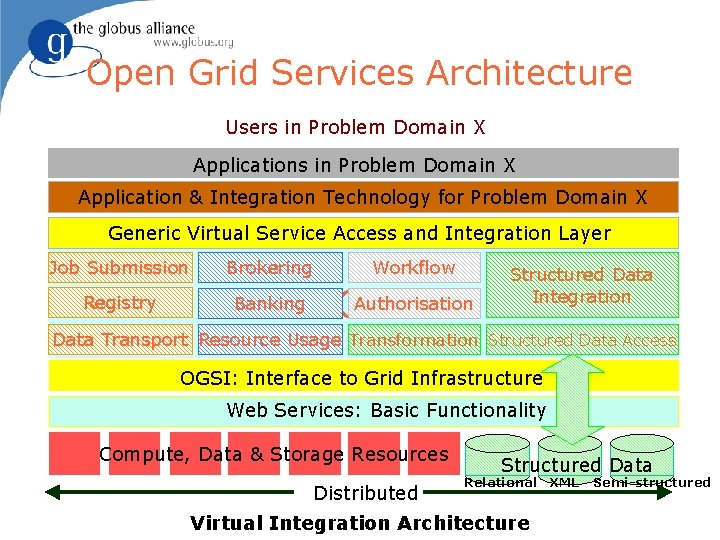

Open Grid Services Architecture

Layer diagram (Virtual Integration Architecture), top to bottom:

- Users in Problem Domain X
- Application & Integration Technology for Problem Domain X
- Generic Virtual Service Access and Integration Layer
- OGSA: Job Submission, Brokering, Registry, Banking, Workflow, Authorisation, Structured Data Integration, Data Transport, Resource Usage, Transformation, Structured Data Access
- OGSI: Interface to Grid Infrastructure
- Web Services: Basic Functionality
- Compute, Data & Storage Resources; Distributed Structured Data (Relational, XML, Semi-structured)

The Globus Alliance & Toolkit (Argonne, USC/ISI, Edinburgh, PDC)

- An international partnership dedicated to creating & disseminating high-quality open source Grid technology: the Globus Toolkit
  - Design, engineering, support, governance
- Academic Affiliates make major contributions
  - EU: CERN, Imperial, MPI, Poznan
  - AP: AIST, TIT, Monash
  - US: NCSA, SDSC, TACC, UCSB, UW, etc.
- Significant industrial contributions
- 1000s of users worldwide, many contribute

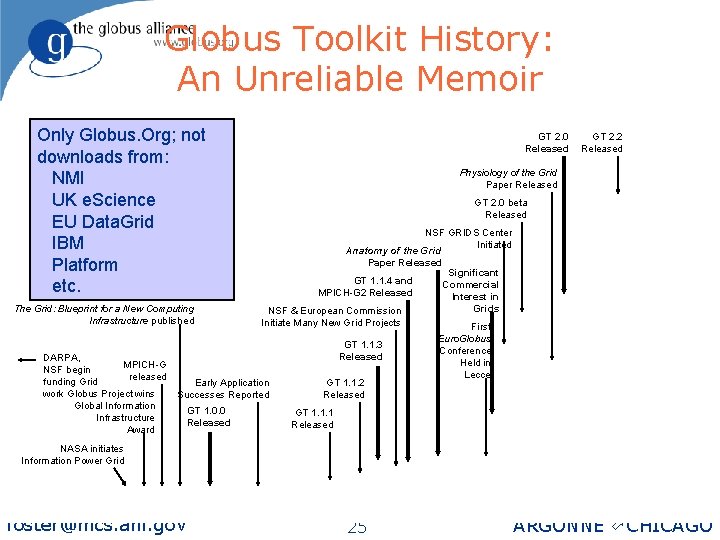

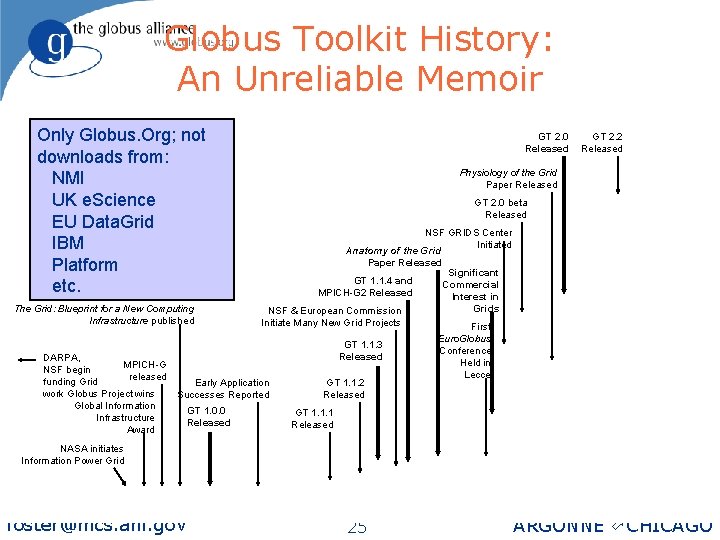

Globus Toolkit History: An Unreliable Memoir

Chart note: Only Globus.Org; not downloads from NMI, UK eScience, EU DataGrid, IBM, Platform, etc.

Timeline labels:

- The Grid: Blueprint for a New Computing Infrastructure published
- DARPA, NSF begin funding Grid work
- MPICH-G released
- Globus Project wins Global Information Infrastructure Award
- NASA initiates Information Power Grid
- GT 1.0.0 Released
- Early Application Successes Reported
- GT 1.1.1 Released
- GT 1.1.2 Released
- GT 1.1.3 Released
- NSF & European Commission Initiate Many New Grid Projects
- First EuroGlobus Conference Held in Lecce
- GT 1.1.4 and MPICH-G2 Released
- Significant Commercial Interest in Grids
- Anatomy of the Grid Paper Released
- NSF GRIDS Center Initiated
- GT 2.0 beta Released
- Physiology of the Grid Paper Released
- GT 2.0 Released
- GT 2.2 Released

Globus Toolkit Contributors Include

- Grid Packaging Technology (GPT): NCSA
- Persistent GRAM Jobmanager: Condor
- GSI/Kerberos interchangeability: Sandia
- Documentation: NASA, NCSA
- Ports: IBM, HP, Sun, SDSC, …
- MDS stress testing: EU DataGrid
- Support: IBM, Platform, UK eScience
- Testing and patches: Many
- Interoperable tools: Many
- Replica location service: EU DataGrid
- Python hosting environment: LBNL
- Data access & integration: UK eScience
- Data mediation services: SDSC
- Tooling, Xindice, JMS: IBM
- Brokering framework: Platform
- Management framework: HP
- $$: DARPA, DOE, NSF, NASA, Microsoft, EU

Problem-Driven, Collaborative Research Methodology

Diagram labels: Computer Science; Software, Standards; Infrastructure; Discipline Advances; Global Community. Cycle steps: Design, Build, Deploy, Apply, Analyze.

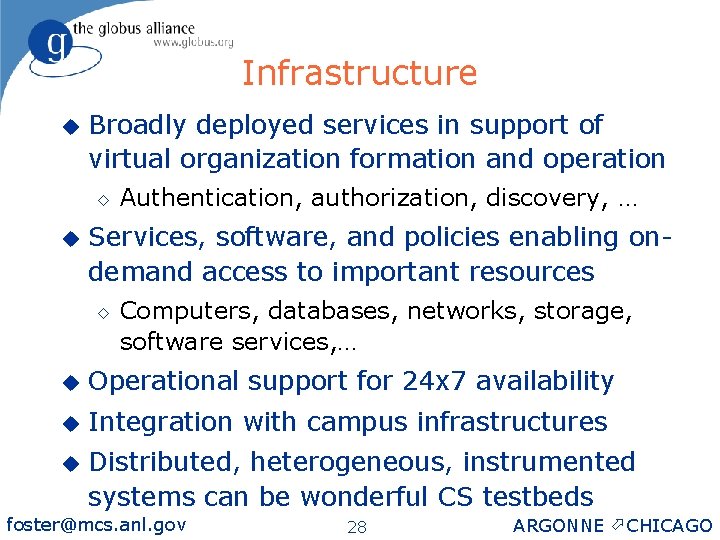

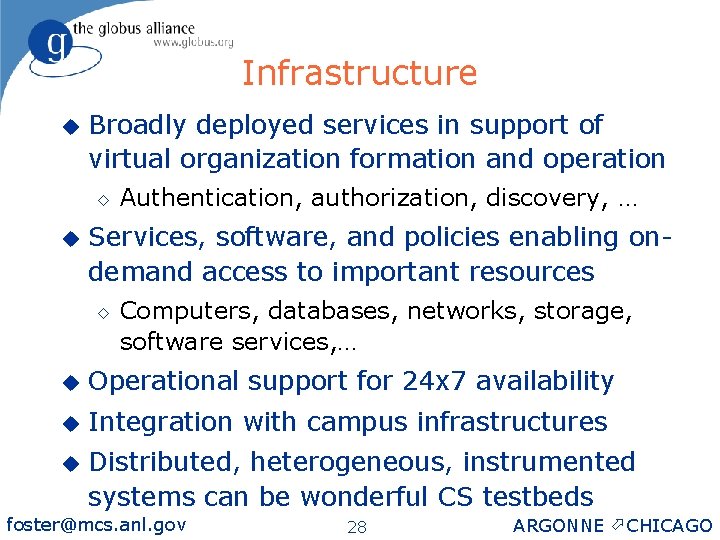

Infrastructure

- Broadly deployed services in support of virtual organization formation and operation
  - Authentication, authorization, discovery, …
- Services, software, and policies enabling on-demand access to important resources
  - Computers, databases, networks, storage, software services, …
- Operational support for 24x7 availability
- Integration with campus infrastructures
- Distributed, heterogeneous, instrumented systems can be wonderful CS testbeds

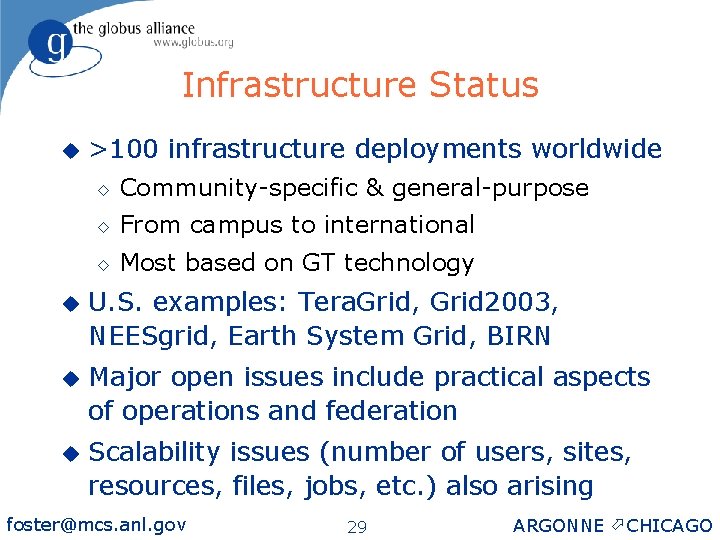

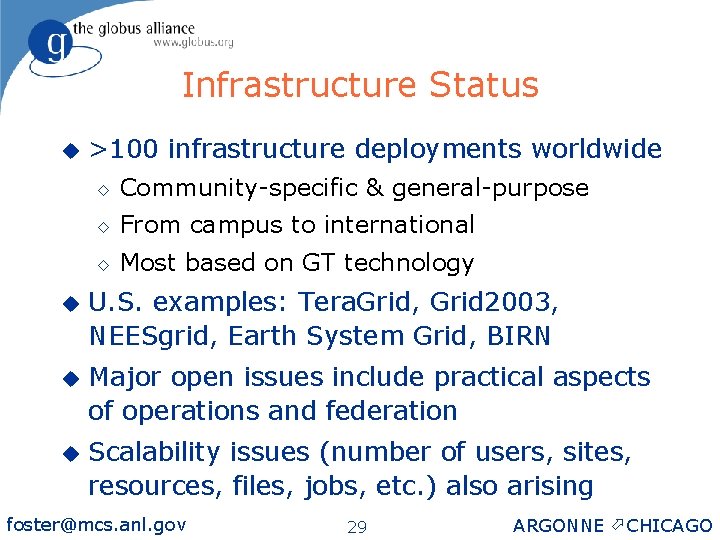

Infrastructure Status

- >100 infrastructure deployments worldwide
  - Community-specific & general-purpose
  - From campus to international
  - Most based on GT technology
- U.S. examples: TeraGrid, Grid2003, NEESgrid, Earth System Grid, BIRN
- Major open issues include practical aspects of operations and federation
- Scalability issues (number of users, sites, resources, files, jobs, etc.) also arising

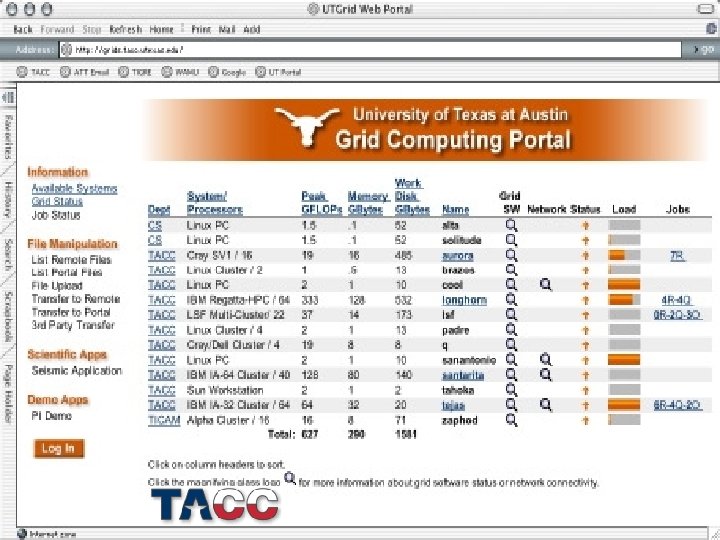

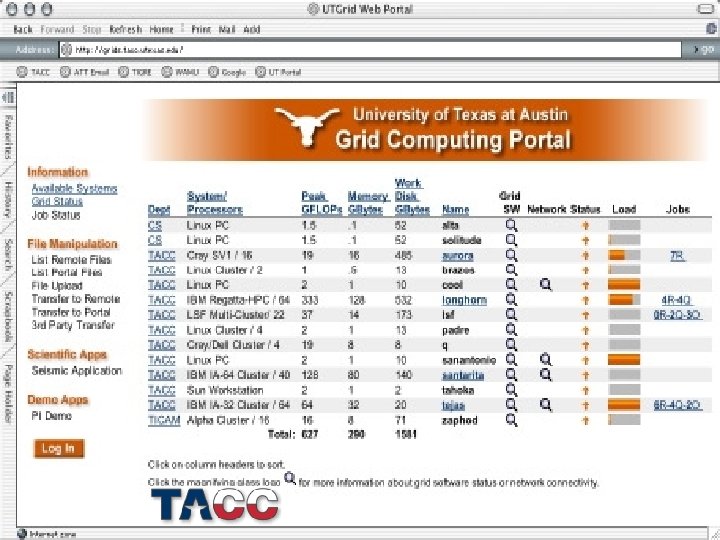


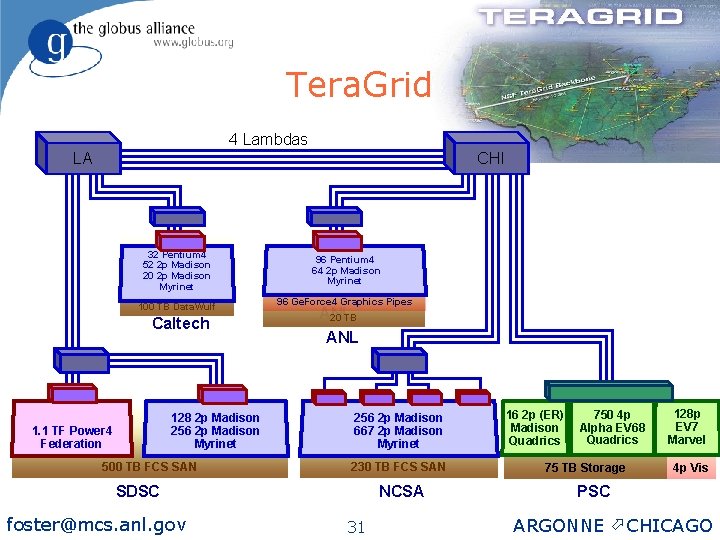

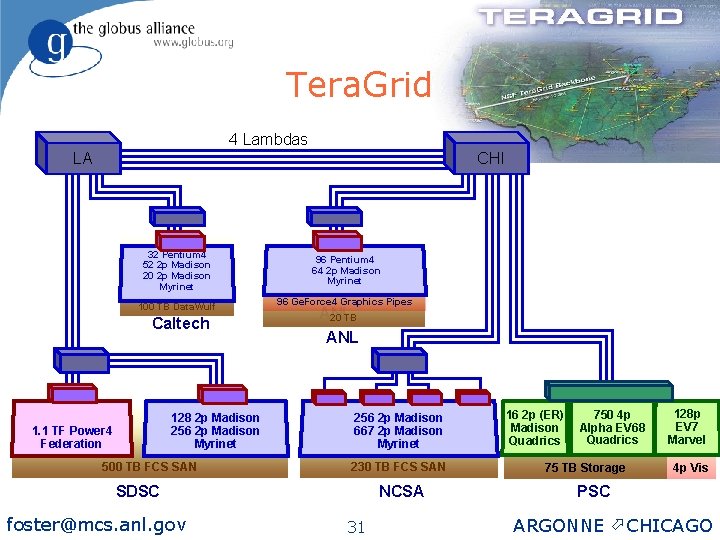

TeraGrid

Diagram: sites Caltech, ANL, SDSC, NCSA, and PSC, connected through LA and CHI hubs by 4 lambdas.

Hardware labels: 32 Pentium 4; 52 2p Madison; 20 2p Madison, Myrinet; 96 Pentium 4; 64 2p Madison, Myrinet; 100 TB DataWulf; 96 GeForce4 Graphics Pipes; 128 2p Madison; 256 2p Madison, Myrinet; 1.1 TF Power4 Federation; 500 TB FCS SAN; 20 TB; 256 2p Madison; 667 2p Madison, Myrinet; 230 TB FCS SAN; 16 2p (ER) Madison, Quadrics; 750 4p Alpha EV68, Quadrics; 75 TB Storage; 128p EV7 Marvel; 4p Vis.

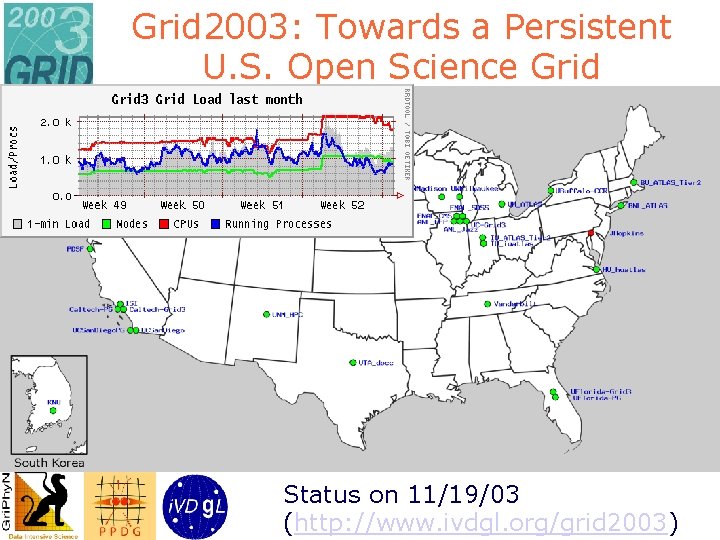

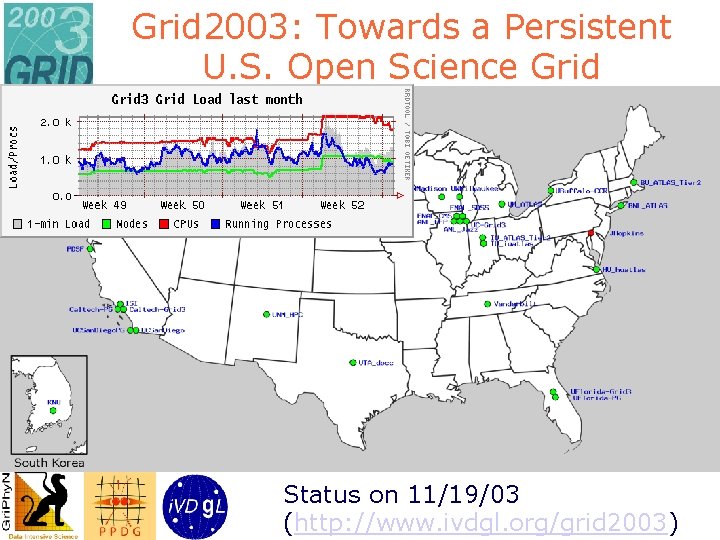

Grid2003: Towards a Persistent U.S. Open Science Grid

Status on 11/19/03 (http://www.ivdgl.org/grid2003)

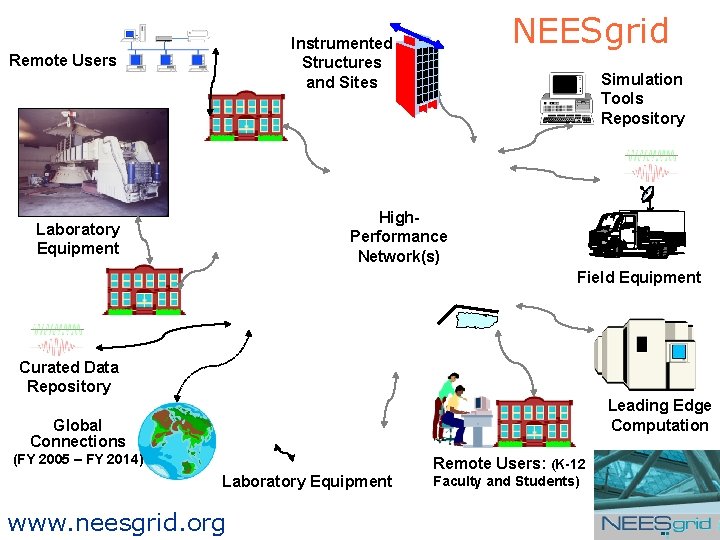

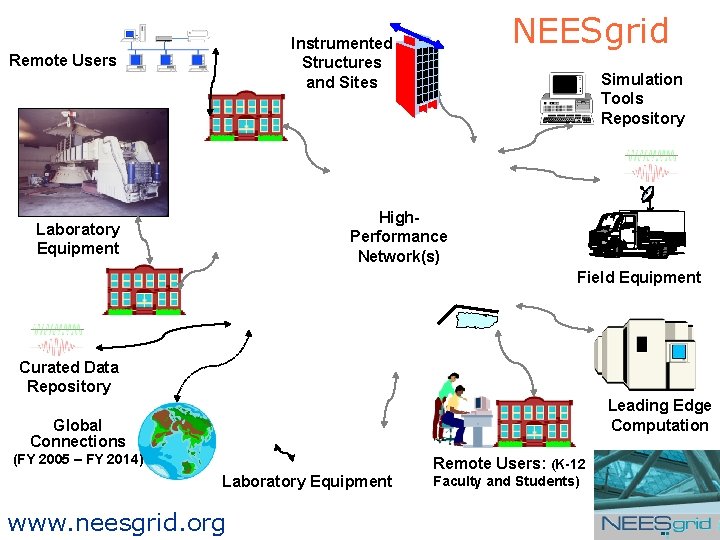

NEESgrid (www.neesgrid.org)

Figure labels: Instrumented Structures and Sites; Remote Users; Remote Users (K-12 Faculty and Students); Simulation Tools Repository; High-Performance Network(s); Laboratory Equipment; Field Equipment; Curated Data Repository; Leading Edge Computation; Global Connections (FY 2005 – FY 2014).

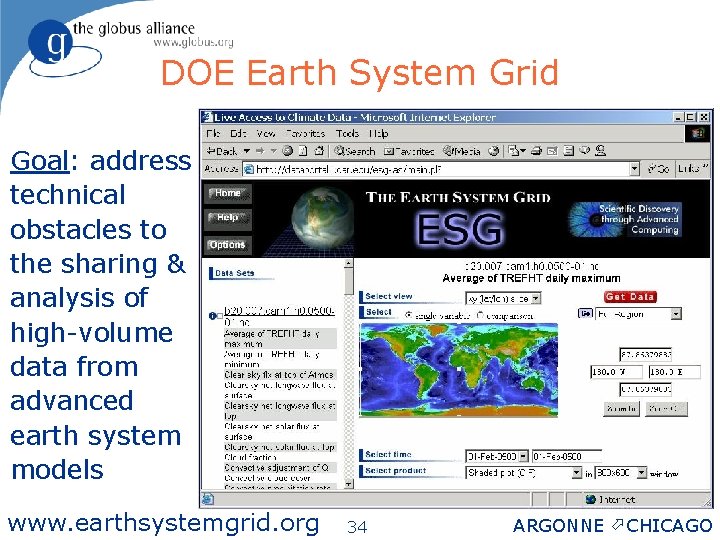

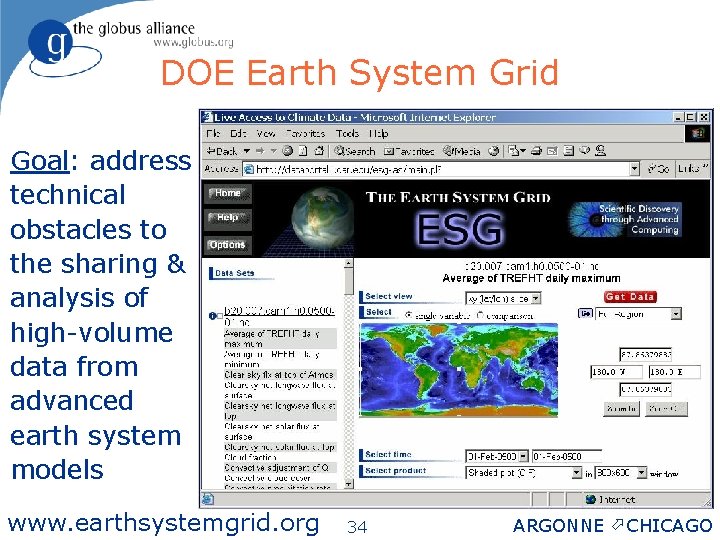

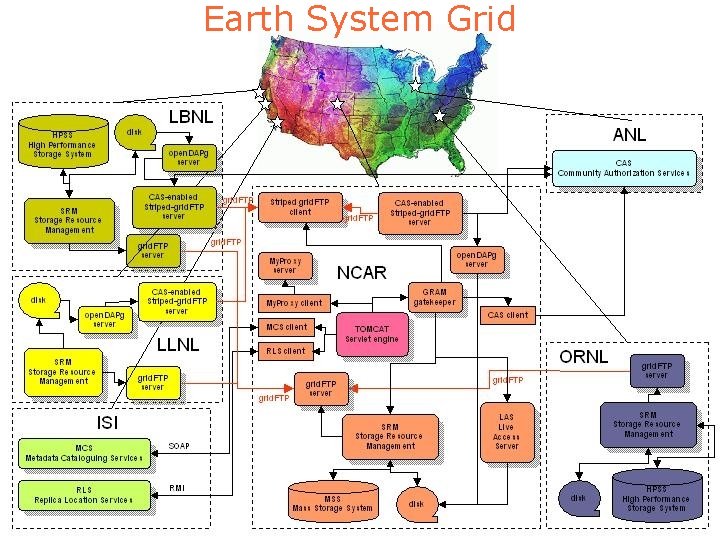

DOE Earth System Grid

Goal: address technical obstacles to the sharing & analysis of high-volume data from advanced earth system models

www.earthsystemgrid.org

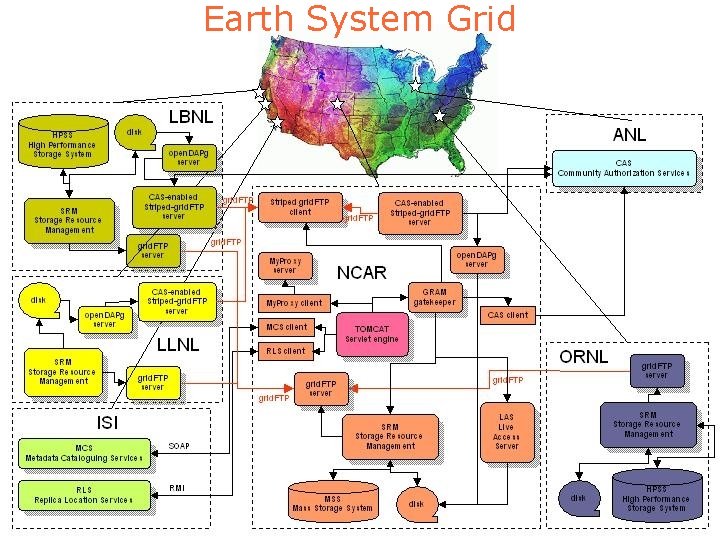

Earth System Grid

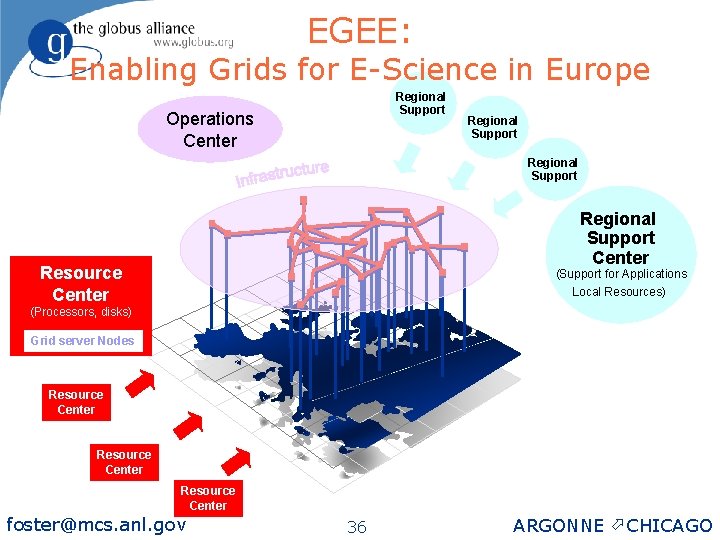

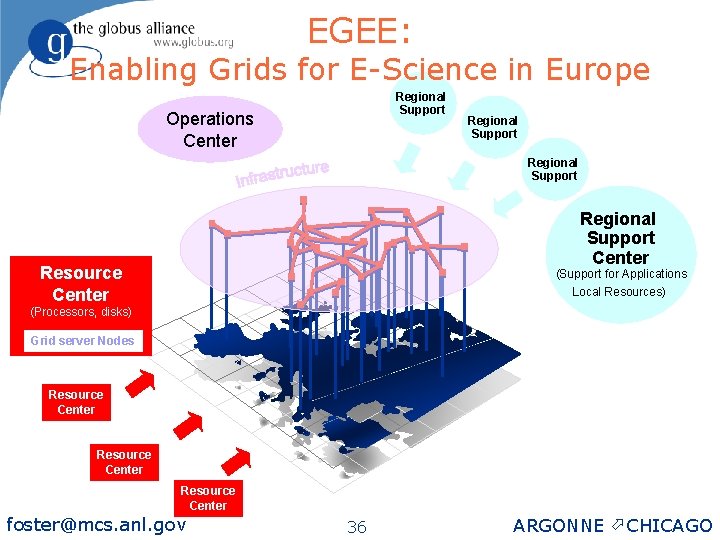

EGEE: Enabling Grids for E-Science in Europe

Figure labels: Operations Center; Regional Support Center (Support for Applications, Local Resources); Resource Center (Processors, disks; Grid server Nodes).

Problem-Driven, Collaborative Research Methodology

Diagram labels: Computer Science; Software, Standards; Infrastructure; Discipline Advances; Global Community. Cycle steps: Design, Build, Deploy, Apply, Analyze.

Applications

- 100s of projects applying Grid technologies in science, engineering, and industry
- Many are exploratory but a significant number are delivering real value, in such areas as
  - Remote access to computers, data, services, instrumentation
  - Federation of computers, data, instruments
  - Collaborative environments
- No single recipe for success, but well-defined goals, modest ambition, & skilled staff help

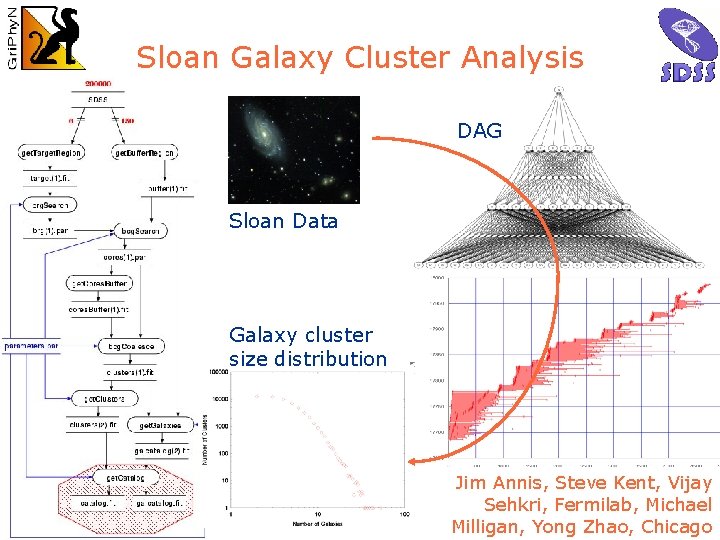

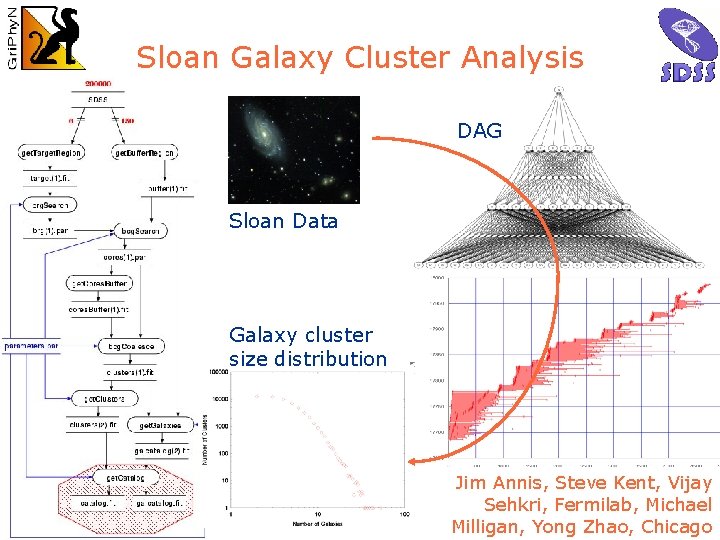

Sloan Galaxy Cluster Analysis

Figure labels: DAG; Sloan Data; Galaxy cluster size distribution.

Jim Annis, Steve Kent, Vijay Sehkri, Fermilab; Michael Milligan, Yong Zhao, Chicago

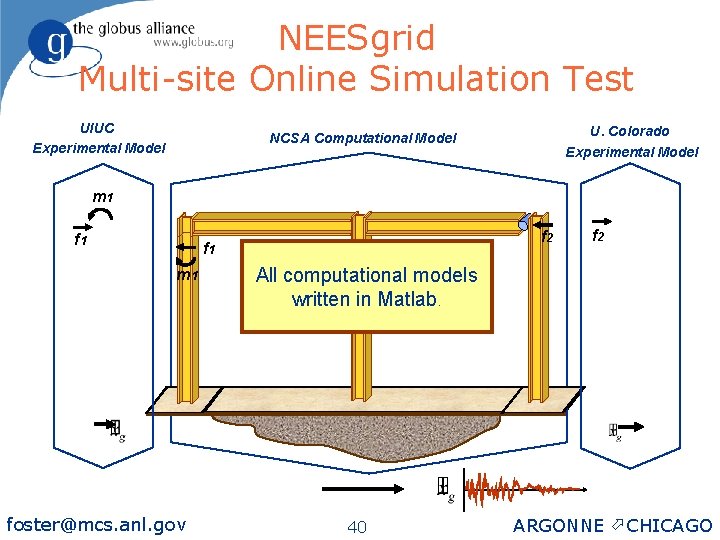

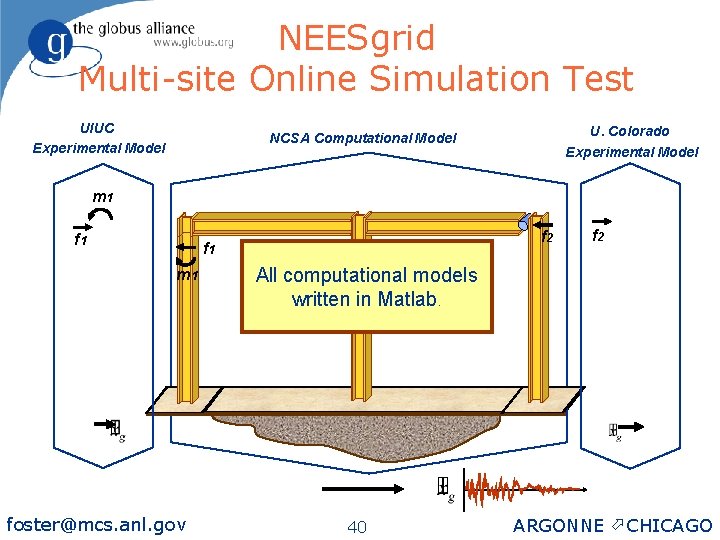

NEESgrid Multi-site Online Simulation Test

Figure labels: UIUC Experimental Model; U. Colorado Experimental Model; NCSA Computational Model; m1, f1, f2.

All computational models written in Matlab.

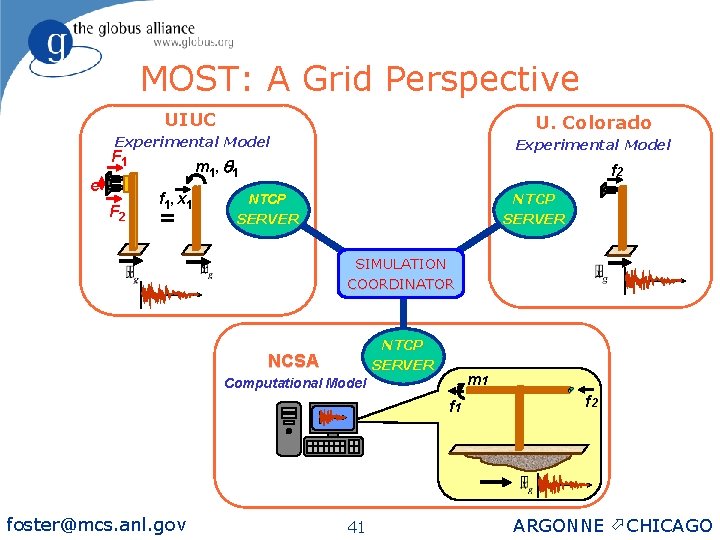

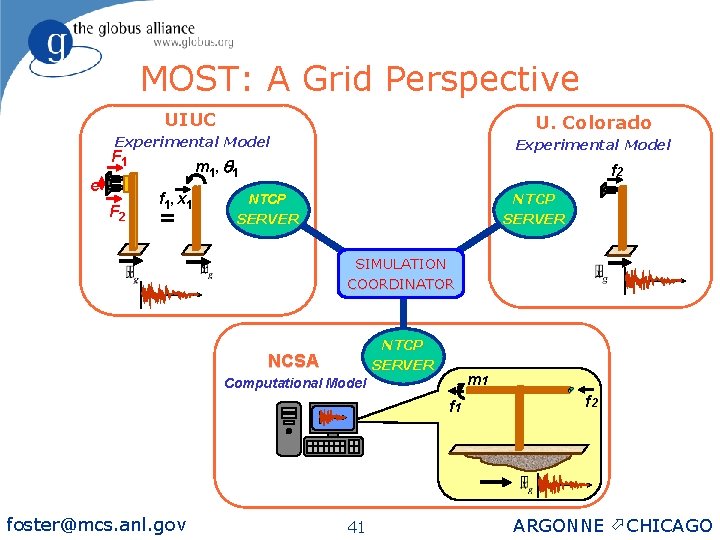

MOST: A Grid Perspective

Figure labels: UIUC Experimental Model; U. Colorado Experimental Model; NCSA Computational Model; NTCP SERVER (two instances); SIMULATION COORDINATOR; quantities F1, F2, e, m1, q1, f1, x1, f2.

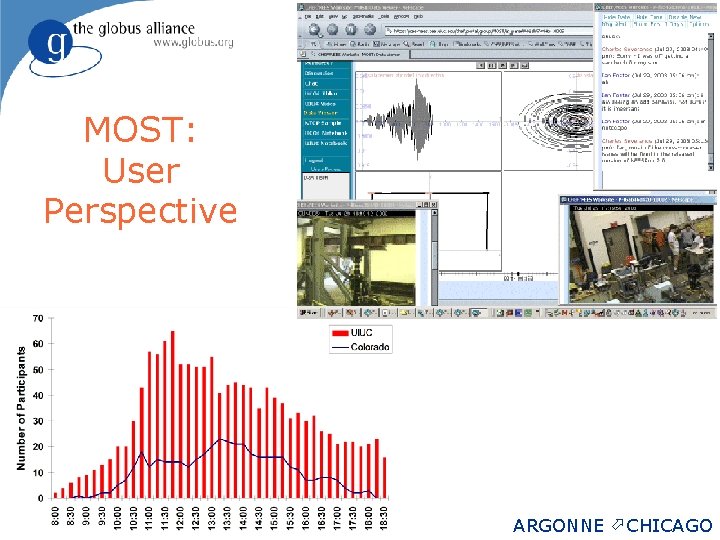

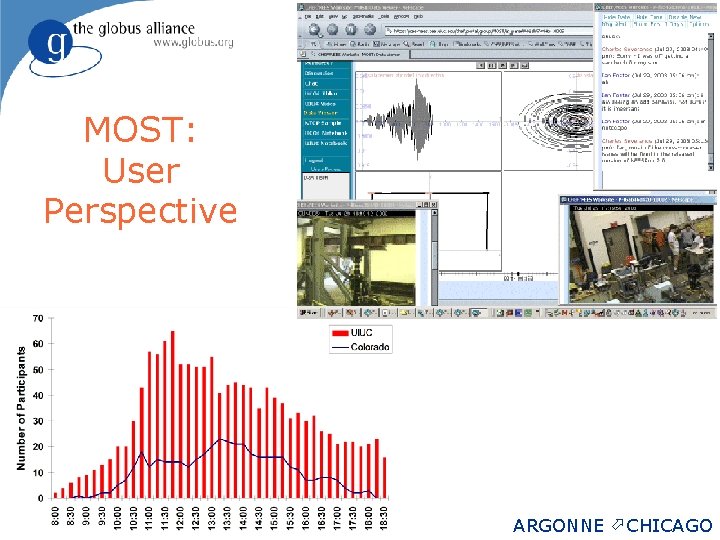

MOST: User Perspective

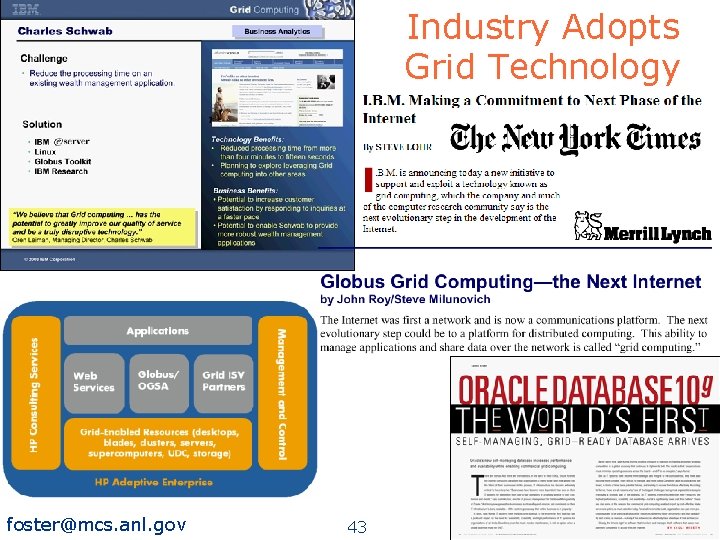

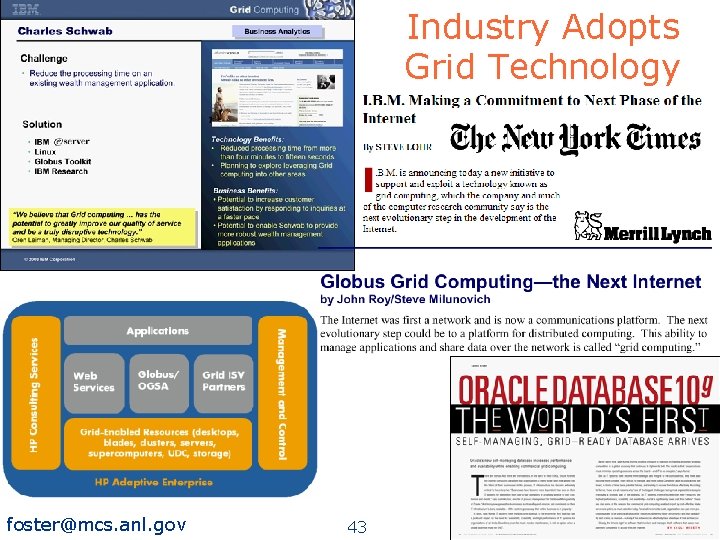

Industry Adopts Grid Technology

Concluding Remarks

Diagram labels: Computer Science; Software, Standards; Infrastructure; Discipline Advances; Global Community. Cycle steps: Design, Build, Deploy, Apply, Analyze.

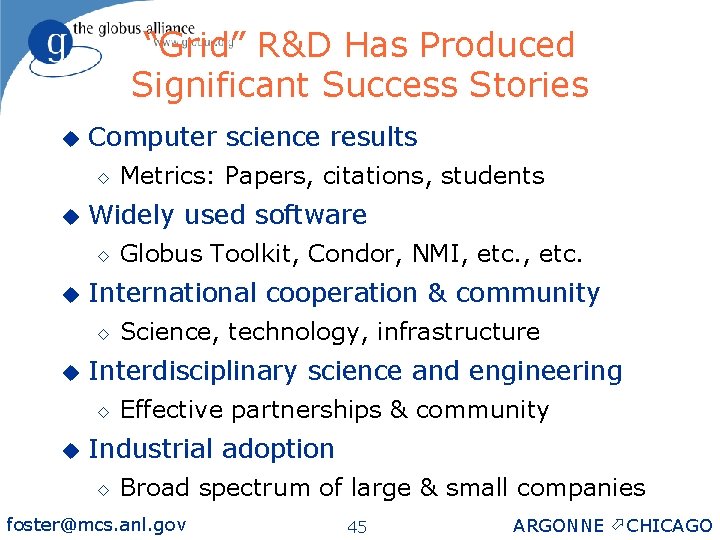

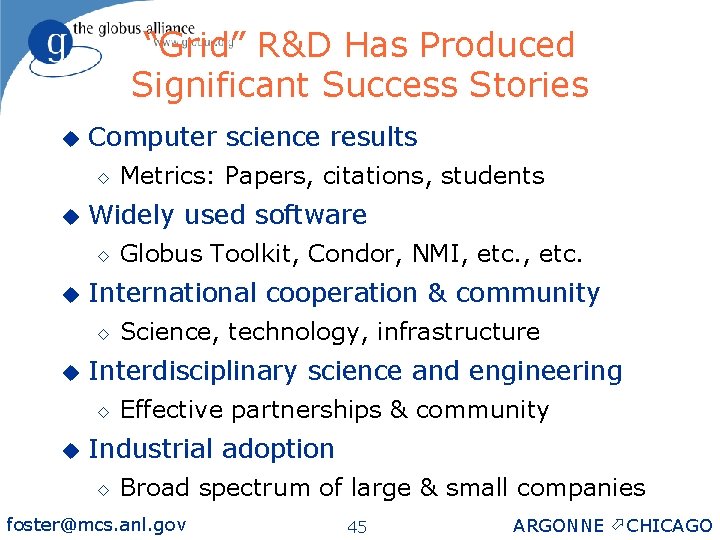

“Grid” R&D Has Produced Significant Success Stories

- Computer science results
  - Metrics: Papers, citations, students
- Widely used software
  - Globus Toolkit, Condor, NMI, etc.
- Interdisciplinary science and engineering
  - Effective partnerships & community
- International cooperation & community
  - Science, technology, infrastructure
- Industrial adoption
  - Broad spectrum of large & small companies

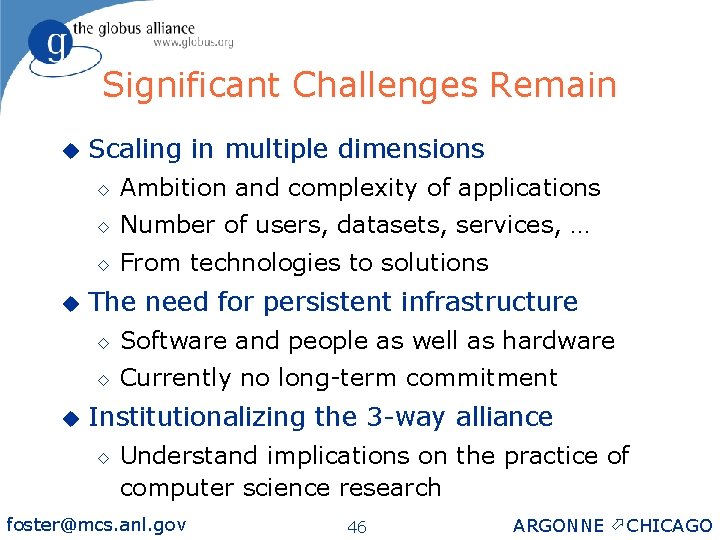

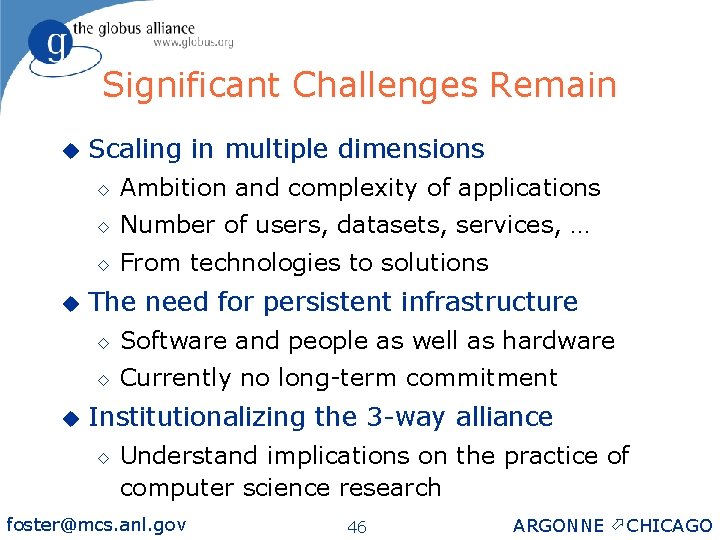

Significant Challenges Remain

- Scaling in multiple dimensions
  - Ambition and complexity of applications
  - Number of users, datasets, services, …
  - From technologies to solutions
- The need for persistent infrastructure
  - Software and people as well as hardware
  - Currently no long-term commitment
- Institutionalizing the 3-way alliance
  - Understand implications on the practice of computer science research

Thanks, in particular, to:

- Carl Kesselman and Steve Tuecke, my long-time Globus co-conspirators
- Gregor von Laszewski, Kate Keahey, Jennifer Schopf, Mike Wilde, Argonne colleagues
- Globus Alliance members at Argonne, U.Chicago, USC/ISI, Edinburgh, PDC
- Miron Livny, U.Wisconsin Condor project; Rick Stevens, Argonne & U.Chicago
- Other partners in Grid technology, application, & infrastructure projects
- DOE, NSF, NASA, IBM for generous support

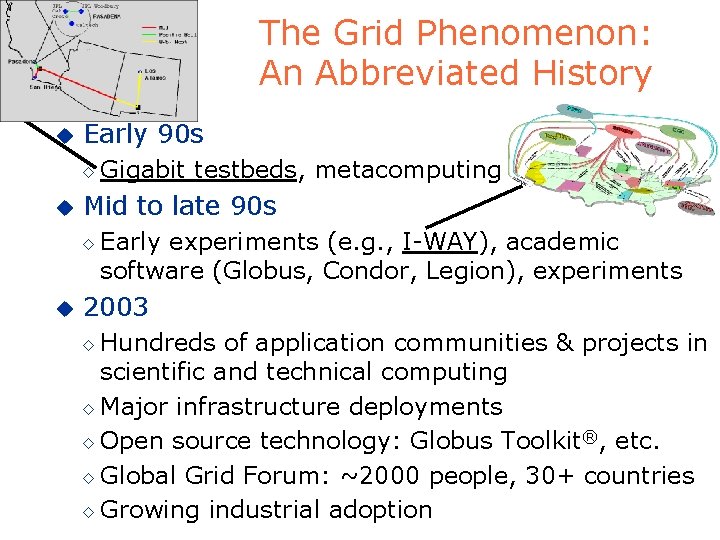

For More Information

- The Globus Alliance®
  - www.globus.org
- Global Grid Forum
  - www.ggf.org
- Background information
  - www.mcs.anl.gov/~foster
- 2nd Edition: Just Out