The Greedy Technique (Method)

Problems explored:

- The coin changing problem
- Activity selection (interval scheduling)
- Interval partitioning

Slides for this unit are based on the text slides and on the instructor's slides based on Cormen.

Optimization problems

An optimization problem: given a problem instance, a set of constraints, and an objective function, find a feasible solution for the instance for which the objective function has an optimal value (a maximum or a minimum, depending on the problem being solved).

- A feasible solution satisfies the problem's constraints.
- The constraints specify the limitations on the required solutions. For example, in the knapsack problem we require that the total weight of the items in the knapsack not exceed a given weight.

The Greedy Technique (Method)

Greedy algorithms make good local choices in the hope that they result in an optimal solution.

- They result in feasible solutions, but not necessarily optimal ones.
- A proof is needed to show that the algorithm finds an optimal solution.
- A counterexample shows that the greedy algorithm does not provide an optimal solution.

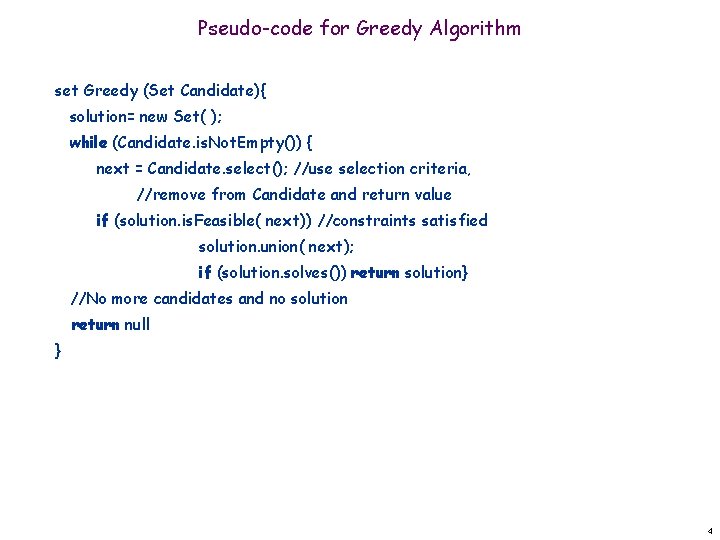

Pseudo-code for Greedy Algorithm

    Set Greedy(Set Candidate) {
        solution = new Set();
        while (Candidate.isNotEmpty()) {
            next = Candidate.select();        // use selection criterion,
                                              // remove from Candidate and return value
            if (solution.isFeasible(next))    // constraints satisfied
                solution.union(next);
            if (solution.solves())
                return solution;
        }
        // No more candidates and no solution
        return null;
    }

Pseudo-code for greedy (cont.)

- select() chooses a candidate based on a local selection criterion, removes it from Candidate, and returns its value.
- isFeasible() checks whether adding the selected value to the current solution can result in a feasible solution (no constraints are violated).
- solves() checks whether the problem is solved.
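The template above can be sketched in Python. This is only an illustration under stated assumptions: the function name `greedy` and its parameters `priority`, `is_feasible`, and `solves` are hypothetical stand-ins for select(), isFeasible(), and solves(), and the toy problem (pick numbers that sum exactly to a target) is not from the slides.

```python
def greedy(candidates, priority, is_feasible, solves):
    """Generic greedy loop; all parameter names are illustrative assumptions.

    priority(c)            -> sort key, smallest key is selected first
    is_feasible(sol, c)    -> True if c can be added without violating constraints
    solves(sol)            -> True if sol is a complete solution
    """
    remaining = sorted(candidates, key=priority)
    solution = []
    while remaining:
        nxt = remaining.pop(0)          # select(): best remaining candidate
        if is_feasible(solution, nxt):  # constraints satisfied
            solution.append(nxt)        # union
        if solves(solution):
            return solution
    return None                         # no more candidates and no solution


# Toy usage: pick the largest numbers first without exceeding the target.
target = 10
picked = greedy(
    [5, 3, 7, 2],
    priority=lambda x: -x,
    is_feasible=lambda sol, x: sum(sol) + x <= target,
    solves=lambda sol: sum(sol) == target,
)
print(picked)  # [7, 3]
```

Passing the three operations as functions keeps the loop itself problem-independent, which is the point of the template.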

Coin Changing

"Greed is good. Greed is right. Greed works. Greed clarifies, cuts through, and captures the essence of the evolutionary spirit." - Gordon Gekko (Michael Douglas)

Coin Changing

Goal. Given currency denominations 1, 5, 10, 25, 100, devise a method to pay an amount to a customer using the fewest number of coins. Ex: 34¢.

Cashier's algorithm. At each iteration, add a coin of the largest value that does not take us past the amount to be paid. Ex: $2.89.

Coin changing problem

Problem: Return correct change using a minimum number of coins.

Greedy choice: the coin with the highest value that still fits.

A greedy solution (pseudo-code on the next slide) with American money: the amount owed is 37 cents. The change is 1 quarter, 1 dime, and 2 pennies. This solution is optimal.

- Is greedy optimal for all sets of coin sizes? (Consider coin sizes 12, D, N, P and an amount of 15.)
- Is there a solution for all sets of coin sizes?

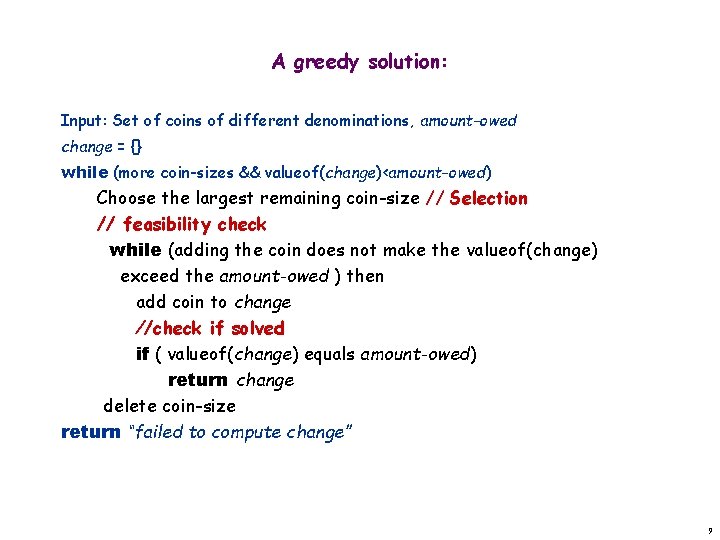

A greedy solution

Input: a set of coins of different denominations, and amount-owed.

    change = {}
    while (more coin-sizes && valueof(change) < amount-owed)
        choose the largest remaining coin-size        // selection
        // feasibility check
        while (adding the coin does not make valueof(change) exceed amount-owed)
            add coin to change
        // check if solved
        if (valueof(change) equals amount-owed)
            return change
        delete coin-size
    return "failed to compute change"
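A minimal Python sketch of the greedy change-making loop above. The function name `cashier_change` is an assumption made for illustration; amounts are in cents.

```python
def cashier_change(denominations, amount):
    """Greedy change-making: always use the largest coin that still fits.

    Returns the list of coins used, or None if the greedy choice
    cannot reach the amount exactly.
    """
    change = []
    remaining = amount
    for coin in sorted(denominations, reverse=True):  # largest coin-size first
        while coin <= remaining:                      # feasibility check
            change.append(coin)
            remaining -= coin
        if remaining == 0:                            # solved
            return change
    return None                                       # failed to compute change


us_coins = [1, 5, 10, 25, 100]
print(cashier_change(us_coins, 37))   # [25, 10, 1, 1]
print(cashier_change([5, 10], 3))     # None
```

With a 1-cent coin in the set the loop always finds some solution; without it, the second call shows that greedy can fail to make change at all.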

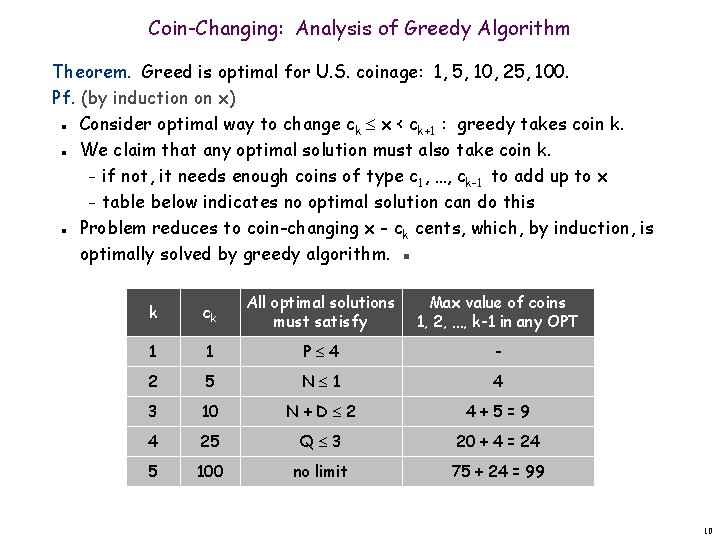

Coin-Changing: Analysis of Greedy Algorithm

Theorem. Greed is optimal for U.S. coinage: 1, 5, 10, 25, 100.

Pf. (by induction on $x$)

- Consider the optimal way to change $c_k \le x < c_{k+1}$: greedy takes coin $k$.
- We claim that any optimal solution must also take coin $k$.
  - If not, it needs enough coins of type $c_1, \dots, c_{k-1}$ to add up to $x$.
  - The table below indicates that no optimal solution can do this.
- The problem reduces to coin-changing $x - c_k$ cents, which, by induction, is optimally solved by the greedy algorithm. ▪

| $k$ | $c_k$ | All optimal solutions must satisfy | Max value of coins $1, 2, \dots, k-1$ in any OPT |
|---|---|---|---|
| 1 | 1 | $P \le 4$ | - |
| 2 | 5 | $N \le 1$ | 4 |
| 3 | 10 | $N + D \le 2$ | 4 + 5 = 9 |
| 4 | 25 | $Q \le 3$ | 20 + 4 = 24 |
| 5 | 100 | no limit | 75 + 24 = 99 |

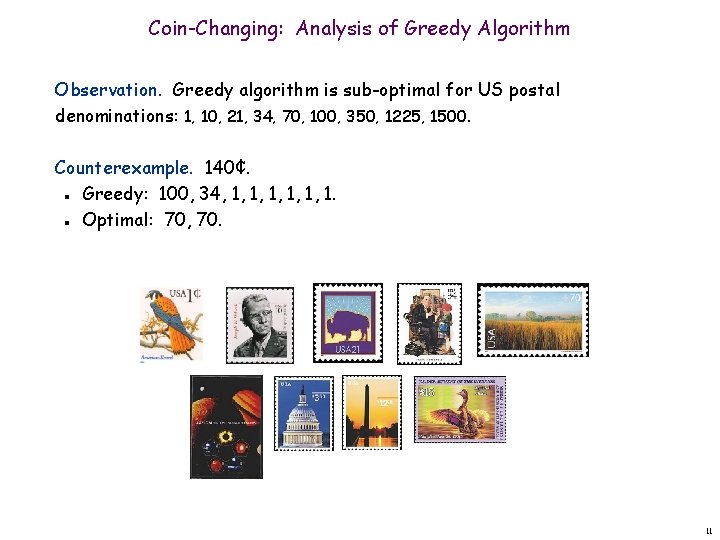

Coin-Changing: Analysis of Greedy Algorithm

Observation. The greedy algorithm is sub-optimal for US postal denominations: 1, 10, 21, 34, 70, 100, 350, 1225, 1500.

Counterexample. 140¢.

- Greedy: 100, 34, 1, 1, 1, 1, 1, 1 (8 stamps).
- Optimal: 70, 70 (2 stamps).
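One way to check a counterexample like this is to compare the greedy count with the true minimum. The sketch below uses a small dynamic-programming table for the minimum, which is a technique swapped in here for verification and is not part of these slides; the function names are assumptions.

```python
def greedy_coin_count(denominations, amount):
    """Number of coins the greedy (largest-first) rule uses, or None on failure."""
    count = 0
    for coin in sorted(denominations, reverse=True):
        count += amount // coin
        amount %= coin
    return count if amount == 0 else None


def min_coin_count(denominations, amount):
    """True minimum number of coins, by dynamic programming over 0..amount."""
    INF = float("inf")
    best = [0] + [INF] * amount          # best[x] = fewest coins that sum to x
    for x in range(1, amount + 1):
        for coin in denominations:
            if coin <= x and best[x - coin] + 1 < best[x]:
                best[x] = best[x - coin] + 1
    return best[amount] if best[amount] != INF else None


postal = [1, 10, 21, 34, 70, 100, 350, 1225, 1500]
print(greedy_coin_count(postal, 140))  # 8
print(min_coin_count(postal, 140))     # 2
```

The same pair of functions can be pointed at any other coin set, for example the U.S. denominations, to look for amounts where the two counts differ.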

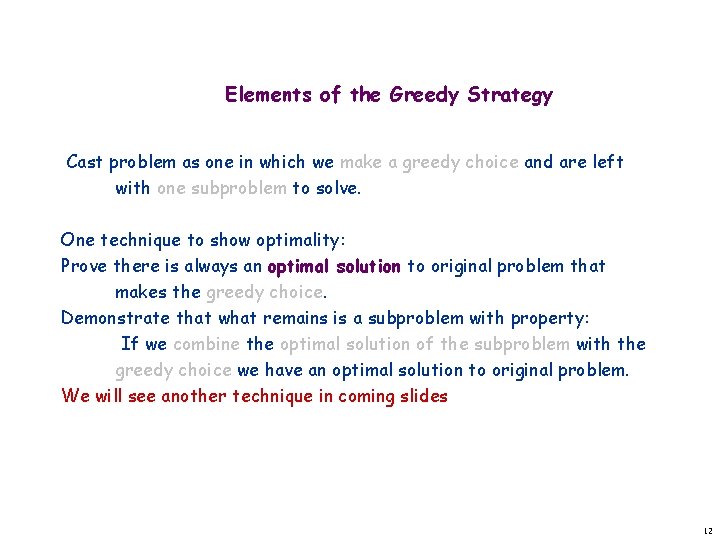

Elements of the Greedy Strategy

Cast the problem as one in which we make a greedy choice and are left with one subproblem to solve.

One technique to show optimality:

- Prove there is always an optimal solution to the original problem that makes the greedy choice.
- Demonstrate that what remains is a subproblem with this property: if we combine the optimal solution of the subproblem with the greedy choice, we have an optimal solution to the original problem.

We will see another technique in the coming slides.

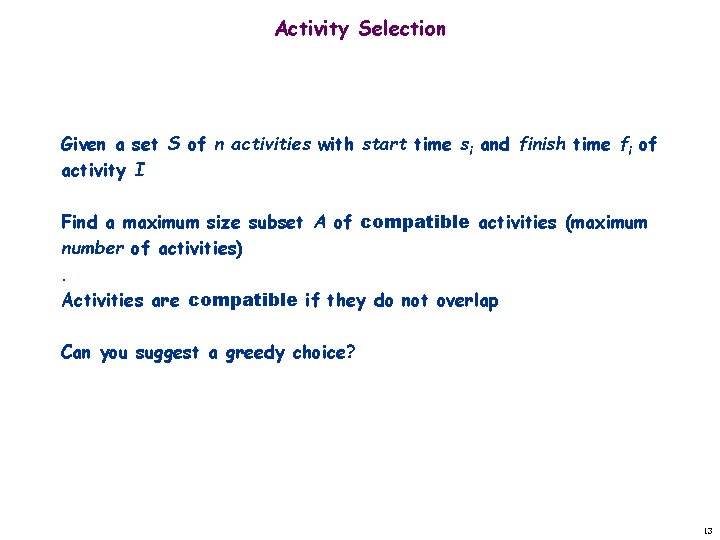

Activity Selection

Given a set $S$ of $n$ activities, with start time $s_i$ and finish time $f_i$ for activity $i$, find a maximum-size subset $A$ of compatible activities (the maximum number of activities).

Activities are compatible if they do not overlap.

Can you suggest a greedy choice?

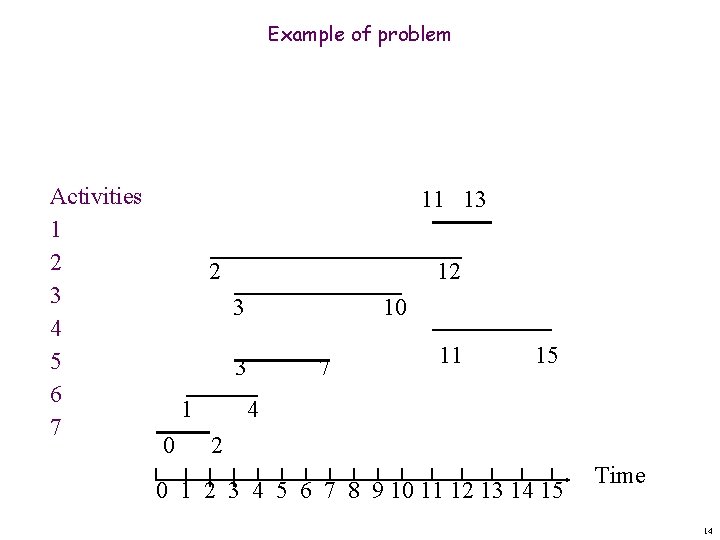

Example of problem

[Figure: seven activities, numbered 1 to 7, drawn as intervals on a time axis from 0 to 15.]

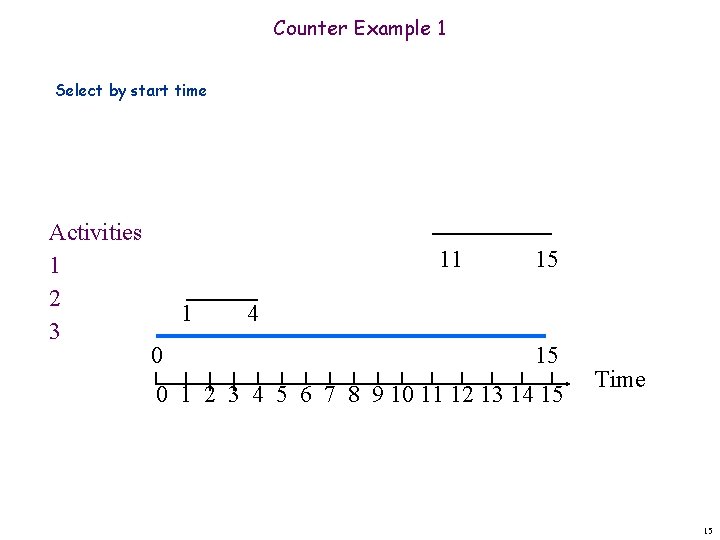

Counter Example 1: Select by start time

[Figure: three activities drawn as intervals on a time axis from 0 to 15.]

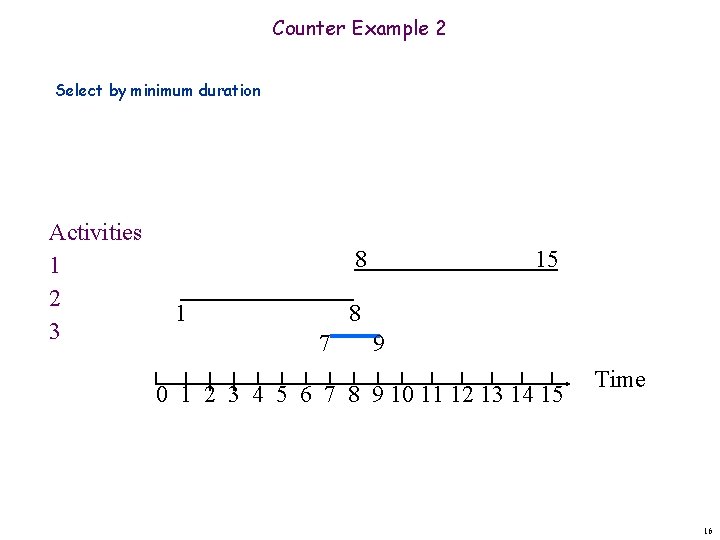

Counter Example 2: Select by minimum duration

[Figure: three activities drawn as intervals on a time axis from 0 to 15.]

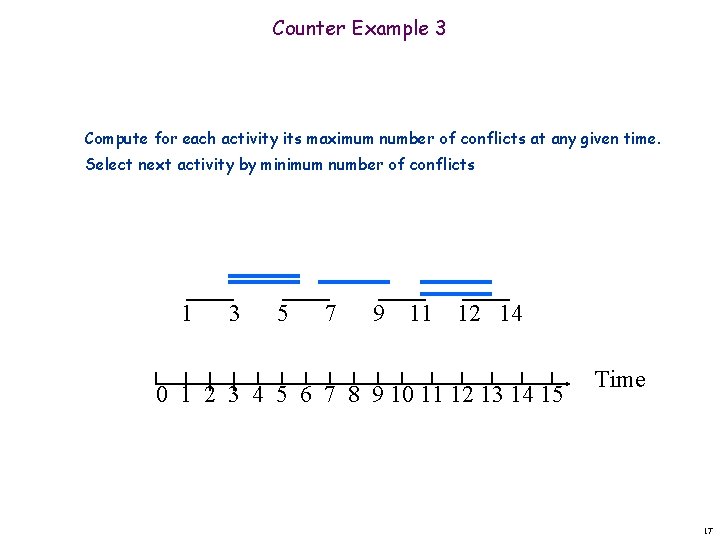

Counter Example 3

Compute for each activity its maximum number of conflicts at any given time. Select the next activity by minimum number of conflicts.

[Figure: activities drawn as intervals on a time axis from 0 to 15.]

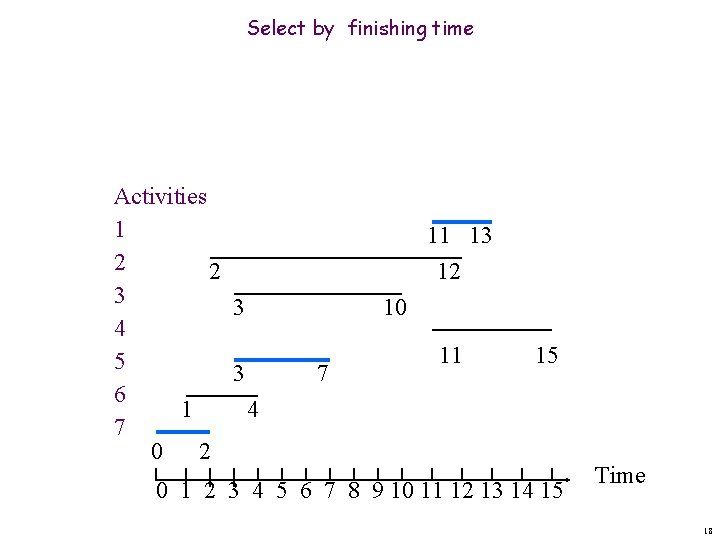

Select by finishing time

[Figure: activities drawn as intervals on a time axis from 0 to 15, selected in order of finishing time.]

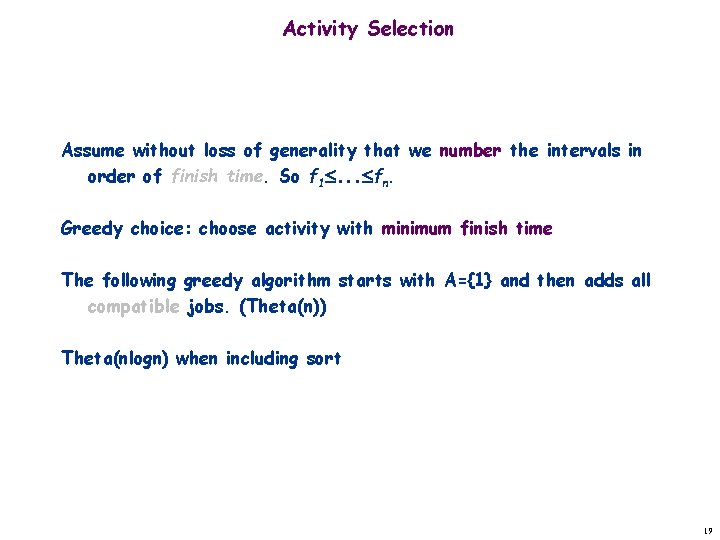

Activity Selection

Assume without loss of generality that we number the intervals in order of finish time, so $f_1 \le f_2 \le \dots \le f_n$.

Greedy choice: choose the activity with minimum finish time.

The following greedy algorithm starts with $A = \{1\}$ and then adds each activity that is compatible with the last one added. It runs in $\Theta(n)$ time, or $\Theta(n \log n)$ when the sort is included.


    Greedy-Activity-Selector(s, f)
        n <- length[s]                        // number of activities
        A <- {1}
        lastActivity <- 1                     // last activity added
        for i <- 2 to n                       // select
            if s[i] >= f[lastActivity] then   // compatible (feasible)
                add {i} to A
                lastActivity <- i             // save new last activity
        return A
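A possible Python rendering of the selector, assuming activities are given as (start, finish) pairs and that an activity may start exactly when the previous one finishes. The function name and the sample data are illustrative assumptions; the sort is done inside the function rather than assumed.

```python
def select_activities(activities):
    """Earliest-finish-time greedy for activity selection.

    activities: iterable of (start, finish) pairs.
    Returns the selected activities in order of finish time.
    """
    selected = []
    last_finish = None
    for start, finish in sorted(activities, key=lambda a: a[1]):
        # Compatible if it starts no earlier than the last selected finish.
        if last_finish is None or start >= last_finish:
            selected.append((start, finish))
            last_finish = finish
    return selected


# Hypothetical sample data, not the activities drawn on the slides.
sample = [(1, 4), (3, 5), (0, 6), (5, 7), (3, 9), (5, 9),
          (6, 10), (8, 11), (8, 12), (2, 14), (12, 16)]
print(select_activities(sample))  # [(1, 4), (5, 7), (8, 11), (12, 16)]
```

Only the finish time of the last selected activity needs to be remembered, which is why the scan after sorting is linear.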

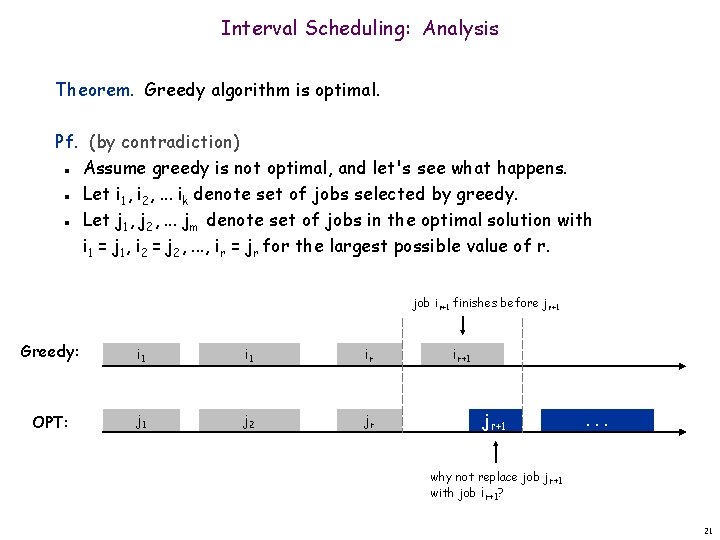

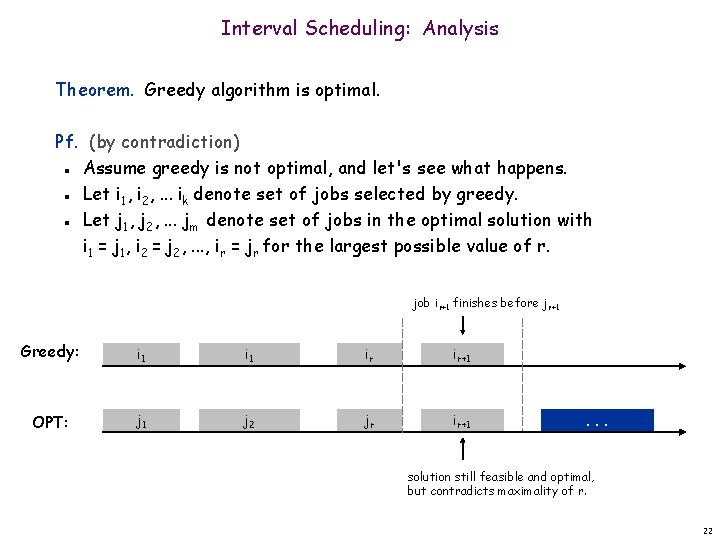

Interval Scheduling: Analysis

Theorem. The greedy algorithm is optimal.

Pf. (by contradiction)

- Assume greedy is not optimal, and let's see what happens.
- Let $i_1, i_2, \dots, i_k$ denote the set of jobs selected by greedy.
- Let $j_1, j_2, \dots, j_m$ denote the set of jobs in an optimal solution with $i_1 = j_1, i_2 = j_2, \dots, i_r = j_r$ for the largest possible value of $r$.
- Job $i_{r+1}$ finishes no later than $j_{r+1}$, because greedy picks the compatible job with the earliest finish time. So why not replace job $j_{r+1}$ with job $i_{r+1}$ in the optimal solution?
- The resulting solution is still feasible and optimal, but it agrees with greedy on $r + 1$ jobs, which contradicts the maximality of $r$. ▪

[Figure: the greedy jobs $i_1, \dots, i_r, i_{r+1}$ drawn above the optimal jobs $j_1, \dots, j_r, j_{r+1}$; after the exchange, OPT contains $i_{r+1}$ in place of $j_{r+1}$.]

4.1 Interval Partitioning

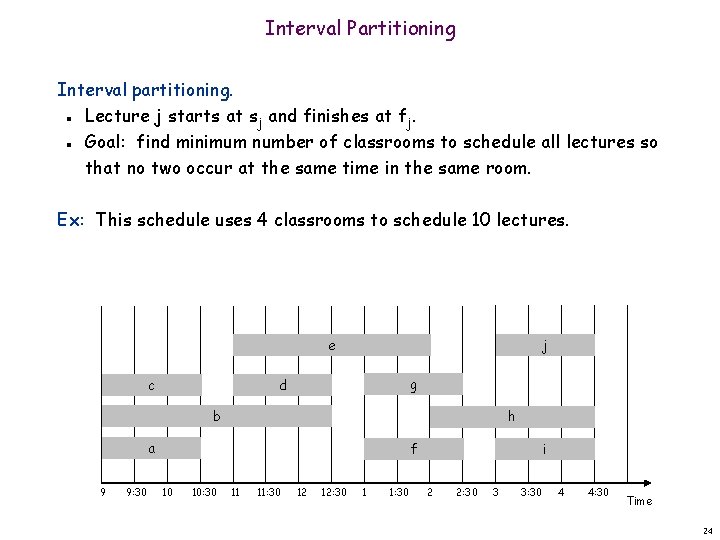

Interval Partitioning

Interval partitioning.

- Lecture $j$ starts at $s_j$ and finishes at $f_j$.
- Goal: find the minimum number of classrooms to schedule all lectures so that no two occur at the same time in the same room.

Ex: This schedule uses 4 classrooms to schedule 10 lectures.

[Figure: lectures a to j placed in 4 classrooms on a time axis from 9:00 to 4:30.]

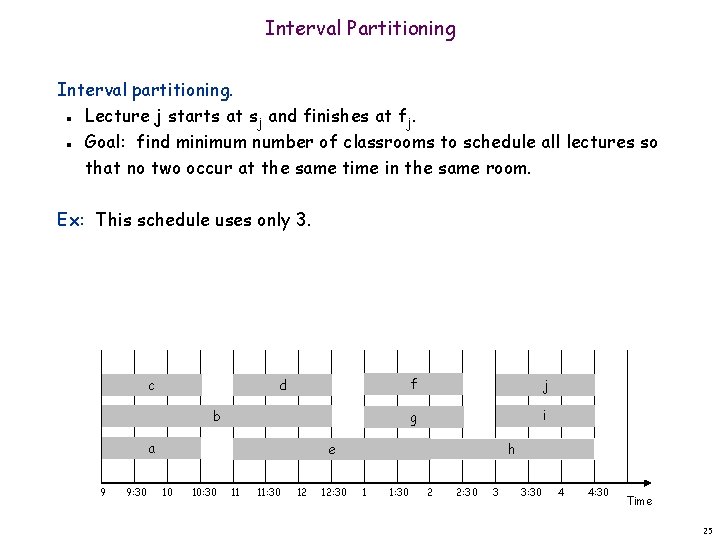

Interval Partitioning

Ex: This schedule uses only 3 classrooms for the same lectures.

[Figure: lectures a to j placed in 3 classrooms on a time axis from 9:00 to 4:30.]

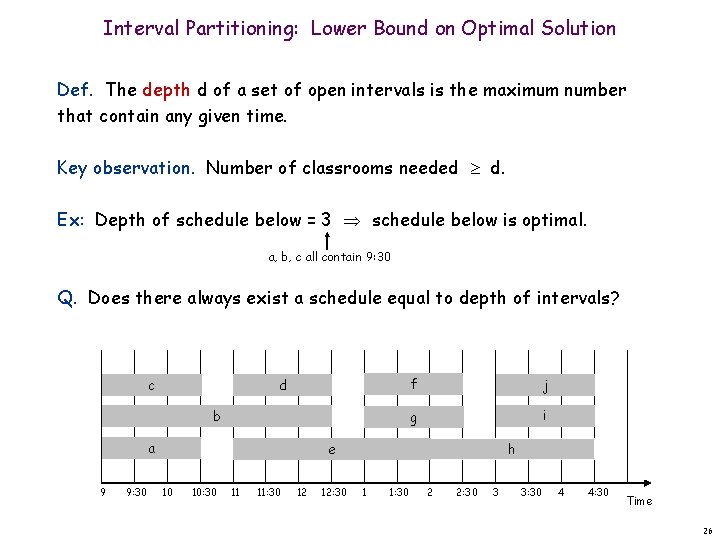

Interval Partitioning: Lower Bound on Optimal Solution

Def. The depth $d$ of a set of open intervals is the maximum number of intervals that contain any given time.

Key observation. Number of classrooms needed $\ge$ depth.

Ex: The depth of the schedule below is 3 (a, b, c all contain 9:30), so the schedule below is optimal.

Q. Does there always exist a schedule that uses a number of classrooms equal to the depth of the intervals?

[Figure: lectures a to j placed in 3 classrooms on a time axis from 9:00 to 4:30.]
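The depth can be computed with a sweep over start and finish events. The sketch below is one way to do it, with a hypothetical function name and made-up sample times; because the intervals are open, an interval that ends at time t does not overlap one that starts at t.

```python
def depth(intervals):
    """Maximum number of open intervals (start, finish) containing a common time."""
    events = []
    for start, finish in intervals:
        events.append((start, +1))   # interval opens
        events.append((finish, -1))  # interval closes
    # Tuples sort by time first; at equal times -1 sorts before +1, so an
    # interval ending at t is closed before one starting at t is opened.
    events.sort()
    current = best = 0
    for _, delta in events:
        current += delta
        best = max(best, current)
    return best


# Hypothetical lectures as (start, finish) in hours.
print(depth([(9, 10.5), (9, 12.5), (9, 10.5), (11, 12.5)]))  # 3
print(depth([(0, 1), (1, 2)]))                               # 1
```

Any schedule needs at least this many classrooms, so the value is a lower bound to compare a concrete schedule against.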

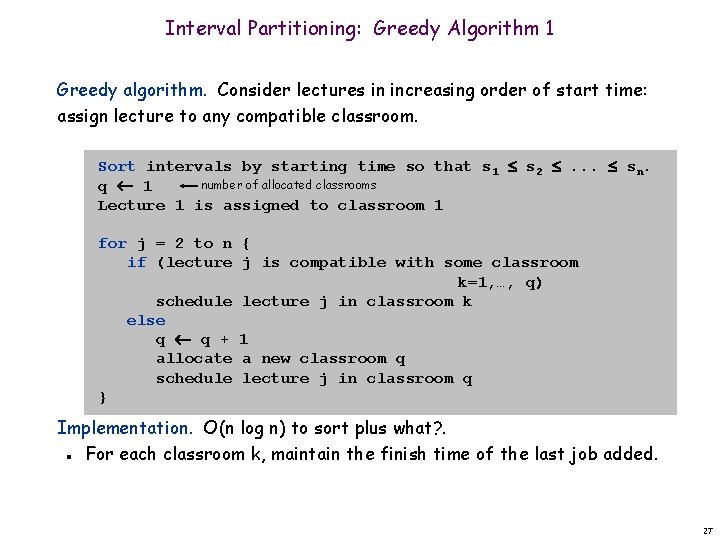

Interval Partitioning: Greedy Algorithm 1

Greedy algorithm. Consider lectures in increasing order of start time; assign each lecture to any compatible classroom.

    Sort intervals by starting time so that s_1 <= s_2 <= ... <= s_n.
    q <- 1                             // number of allocated classrooms
    assign lecture 1 to classroom 1
    for j = 2 to n {
        if (lecture j is compatible with some classroom k = 1, ..., q)
            schedule lecture j in classroom k
        else {
            q <- q + 1                 // allocate a new classroom q
            schedule lecture j in classroom q
        }
    }

Implementation. O(n log n) to sort, plus what? For each classroom k, maintain the finish time of the last job added.

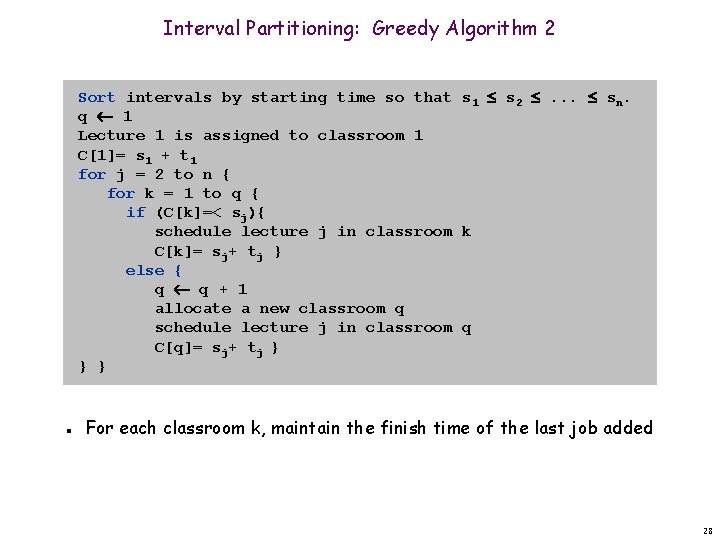

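Interval Partitioning: Greedy Algorithm 2

For each classroom k, maintain C[k], the finish time of the last job added. Here t_j = f_j - s_j is the duration of lecture j, so s_j + t_j is its finish time.

    Sort intervals by starting time so that s_1 <= s_2 <= ... <= s_n.
    q <- 1
    assign lecture 1 to classroom 1
    C[1] <- s_1 + t_1
    for j = 2 to n {
        placed <- false
        for k = 1 to q {
            if (C[k] <= s_j) {         // classroom k is free at time s_j
                schedule lecture j in classroom k
                C[k] <- s_j + t_j
                placed <- true
                break
            }
        }
        if (not placed) {              // no compatible classroom was found
            q <- q + 1                 // allocate a new classroom q
            schedule lecture j in classroom q
            C[q] <- s_j + t_j
        }
    }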

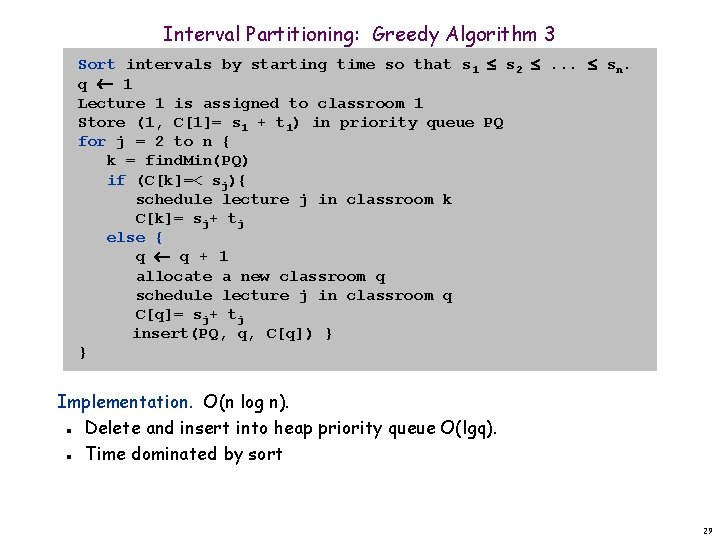

Interval Partitioning: Greedy Algorithm 3

    Sort intervals by starting time so that s_1 <= s_2 <= ... <= s_n.
    q <- 1
    assign lecture 1 to classroom 1
    C[1] <- s_1 + t_1
    insert(PQ, 1, C[1])                // priority queue keyed on finish time
    for j = 2 to n {
        k <- findMin(PQ)               // classroom that becomes free earliest
        if (C[k] <= s_j) {
            schedule lecture j in classroom k
            deleteMin(PQ)
            C[k] <- s_j + t_j
            insert(PQ, k, C[k])
        } else {
            q <- q + 1                 // allocate a new classroom q
            schedule lecture j in classroom q
            C[q] <- s_j + t_j
            insert(PQ, q, C[q])
        }
    }

Implementation. O(n log n). Each delete and insert on the heap-based priority queue takes O(lg q), so the loop costs O(n lg q) and the total time is dominated by the sort.
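The priority-queue version can be sketched with Python's standard `heapq` module. The function name, the (start, finish) input format, and the sample lectures are assumptions for illustration; classrooms are numbered from 1 as on the slides.

```python
import heapq


def partition_intervals(lectures):
    """Assign lectures (start, finish) to classrooms, earliest start first.

    Returns (number_of_classrooms, assignment), where assignment[i] is the
    1-based classroom of lectures[i].
    """
    order = sorted(range(len(lectures)), key=lambda i: lectures[i][0])
    heap = []                          # entries: (finish time of last lecture, classroom)
    assignment = [0] * len(lectures)
    rooms = 0
    for i in order:
        start, finish = lectures[i]
        if heap and heap[0][0] <= start:
            # The classroom that frees up earliest is compatible: reuse it.
            _, room = heapq.heappop(heap)
        else:
            rooms += 1                 # allocate a new classroom
            room = rooms
        assignment[i] = room
        heapq.heappush(heap, (finish, room))
    return rooms, assignment


# Hypothetical lectures, times in hours on a 24-hour clock.
lectures = [(9, 10.5), (9, 12.5), (9, 10.5), (11, 12.5), (11, 14),
            (13, 14.5), (13, 14.5), (14, 16.5), (15, 16.5), (15, 16.5)]
rooms, assignment = partition_intervals(lectures)
print(rooms)       # 3
print(assignment)  # [1, 2, 3, 1, 3, 1, 2, 3, 1, 2]
```

Checking only the heap minimum is enough: if the classroom that frees up earliest is still busy at the lecture's start, every other classroom is busy too.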

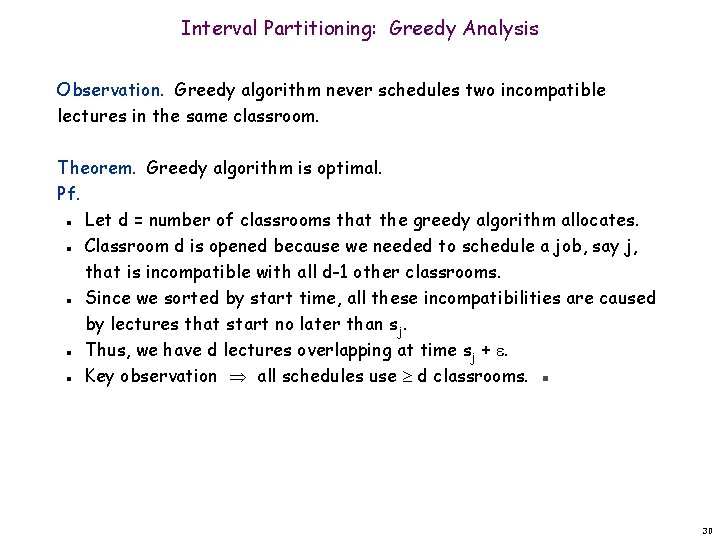

Interval Partitioning: Greedy Analysis

Observation. The greedy algorithm never schedules two incompatible lectures in the same classroom.

Theorem. The greedy algorithm is optimal.

Pf.

- Let $d$ = number of classrooms that the greedy algorithm allocates.
- Classroom $d$ is opened because we needed to schedule a job, say $j$, that is incompatible with all $d - 1$ other classrooms.
- Since we sorted by start time, all these incompatibilities are caused by lectures that start no later than $s_j$.
- Thus, we have $d$ lectures overlapping at time $s_j + \varepsilon$.
- Key observation $\Rightarrow$ all schedules use $\ge d$ classrooms. ▪

4.2 Scheduling to Minimize Lateness

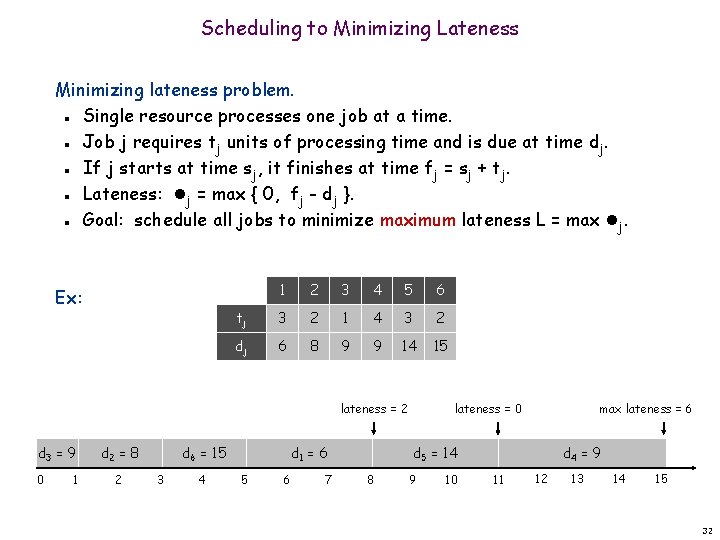

Scheduling to Minimize Lateness

Minimizing lateness problem.

- A single resource processes one job at a time.
- Job $j$ requires $t_j$ units of processing time and is due at time $d_j$.
- If $j$ starts at time $s_j$, it finishes at time $f_j = s_j + t_j$.
- Lateness: $\ell_j = \max\{0, f_j - d_j\}$.
- Goal: schedule all jobs to minimize the maximum lateness $L = \max_j \ell_j$.

Ex:

| $j$ | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| $t_j$ | 3 | 2 | 1 | 4 | 3 | 2 |
| $d_j$ | 6 | 8 | 9 | 9 | 14 | 15 |

[Figure: the jobs scheduled in the order 3, 2, 6, 1, 5, 4 on a time axis from 0 to 15. Job 1 has lateness 2, job 5 has lateness 0, and job 4 has lateness 6, so the max lateness is 6.]
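To make the definitions concrete, the following sketch evaluates the maximum lateness of a given job order, assuming the resource starts at time 0 and runs the jobs back to back. The function name and the dictionary layout are assumptions; the job data and the order 3, 2, 6, 1, 5, 4 are the ones in the example above.

```python
def max_lateness(jobs, order):
    """Maximum lateness of running `order` back to back from time 0.

    jobs:  dict mapping job id -> (processing time t_j, deadline d_j)
    order: sequence of job ids
    """
    t = 0
    worst = 0                            # lateness is never below 0
    for j in order:
        proc, due = jobs[j]
        t += proc                        # finish time f_j = s_j + t_j
        worst = max(worst, t - due)      # l_j = max(0, f_j - d_j)
    return worst


jobs = {1: (3, 6), 2: (2, 8), 3: (1, 9), 4: (4, 9), 5: (3, 14), 6: (2, 15)}
print(max_lateness(jobs, [3, 2, 6, 1, 5, 4]))  # 6
```

Job 4 finishes at time 15 against a deadline of 9, which is where the value 6 comes from.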

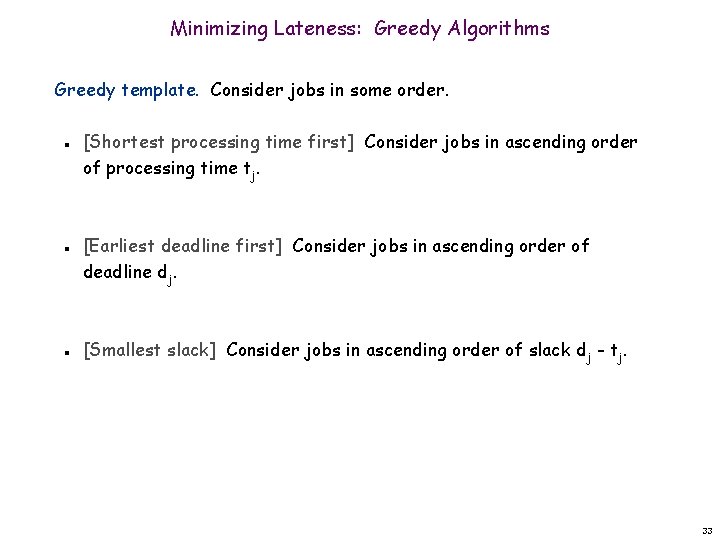

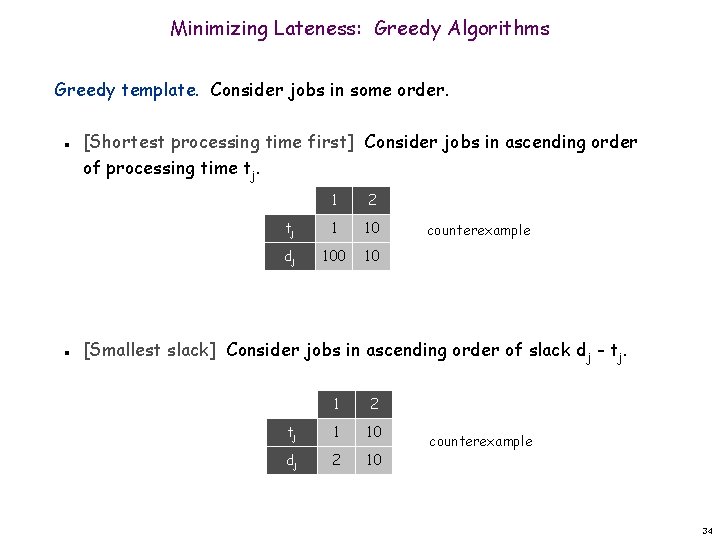

Minimizing Lateness: Greedy Algorithms

Greedy template. Consider jobs in some order.

- [Shortest processing time first] Consider jobs in ascending order of processing time $t_j$.
- [Earliest deadline first] Consider jobs in ascending order of deadline $d_j$.
- [Smallest slack] Consider jobs in ascending order of slack $d_j - t_j$.

Minimizing Lateness: Greedy Algorithms

Greedy template. Consider jobs in some order.

[Shortest processing time first] Consider jobs in ascending order of processing time $t_j$. Counterexample:

| $j$ | 1 | 2 |
|---|---|---|
| $t_j$ | 1 | 10 |
| $d_j$ | 100 | 10 |

[Smallest slack] Consider jobs in ascending order of slack $d_j - t_j$. Counterexample:

| $j$ | 1 | 2 |
|---|---|---|
| $t_j$ | 1 | 10 |
| $d_j$ | 2 | 10 |
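The two counterexamples can be checked numerically. In this sketch each job is a (t_j, d_j) pair and each ordering rule is a sort key; the names `max_lateness_by`, `spt`, `edf`, and `slack` are assumptions chosen for illustration.

```python
def max_lateness_by(jobs, key):
    """Max lateness when jobs (t_j, d_j) run back to back in ascending `key` order."""
    t = 0
    worst = 0
    for proc, due in sorted(jobs, key=key):
        t += proc
        worst = max(worst, t - due)
    return worst


def spt(job):      # shortest processing time first
    return job[0]


def edf(job):      # earliest deadline first
    return job[1]


def slack(job):    # smallest slack d_j - t_j first
    return job[1] - job[0]


first = [(1, 100), (10, 10)]    # counterexample for shortest processing time
second = [(1, 2), (10, 10)]     # counterexample for smallest slack

print(max_lateness_by(first, spt), max_lateness_by(first, edf))      # 1 0
print(max_lateness_by(second, slack), max_lateness_by(second, edf))  # 9 1
```

In both instances ordering by deadline gives a strictly smaller maximum lateness than the rule being tested.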

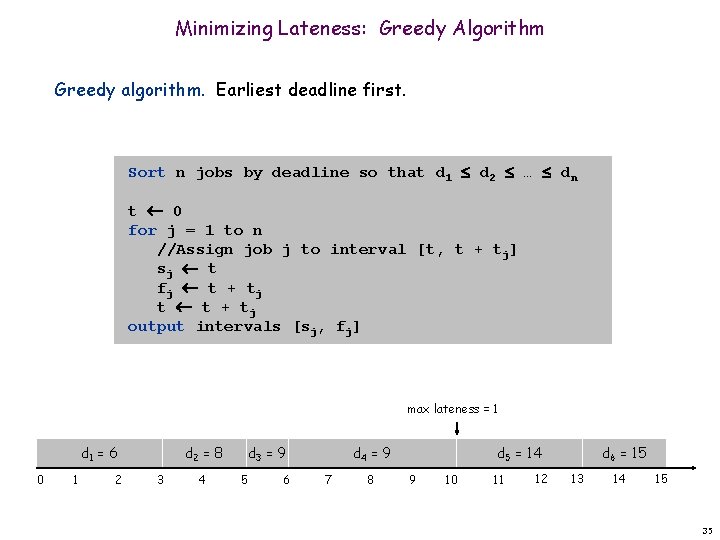

Minimizing Lateness: Greedy Algorithm

Greedy algorithm. Earliest deadline first.

    Sort n jobs by deadline so that d_1 <= d_2 <= ... <= d_n
    t <- 0
    for j = 1 to n
        // assign job j to interval [t, t + t_j]
        s_j <- t
        f_j <- t + t_j
        t <- t + t_j
    output intervals [s_j, f_j]

[Figure: the six jobs of the earlier example scheduled in deadline order 1, 2, 3, 4, 5, 6 on a time axis from 0 to 15; max lateness = 1.]
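A short Python sketch of earliest deadline first, assuming jobs are given as (t_j, d_j) pairs indexed from 0 and the resource starts at time 0. The function name and return format are assumptions; the data is the six-job example from the earlier slide.

```python
def edf_schedule(jobs):
    """Earliest-deadline-first schedule with no idle time.

    jobs: list of (processing time, deadline) pairs.
    Returns (intervals, max_lateness), where intervals is a list of
    (job index, start, finish) in scheduled order.
    """
    order = sorted(range(len(jobs)), key=lambda j: jobs[j][1])  # by deadline
    t = 0
    intervals = []
    worst = 0
    for j in order:
        proc, due = jobs[j]
        start, finish = t, t + proc      # assign job j to [t, t + t_j]
        intervals.append((j, start, finish))
        worst = max(worst, finish - due)
        t = finish
    return intervals, worst


jobs = [(3, 6), (2, 8), (1, 9), (4, 9), (3, 14), (2, 15)]
intervals, worst = edf_schedule(jobs)
print(worst)  # 1
```

Python's sort is stable, so jobs with equal deadlines keep their input order; any tie-breaking gives the same maximum lateness.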

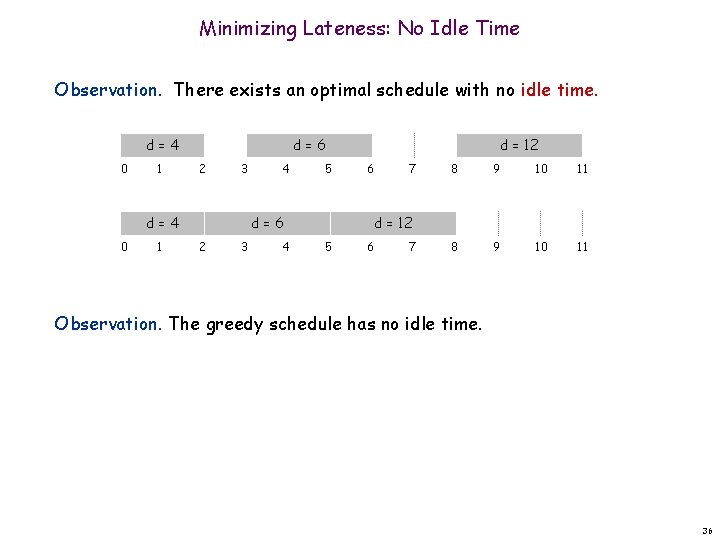

Minimizing Lateness: No Idle Time

Observation. There exists an optimal schedule with no idle time.

[Figure: two schedules of three jobs with deadlines d = 4, d = 6, and d = 12; the first contains idle time, the second runs the same jobs back to back with no idle time.]

Observation. The greedy schedule has no idle time.

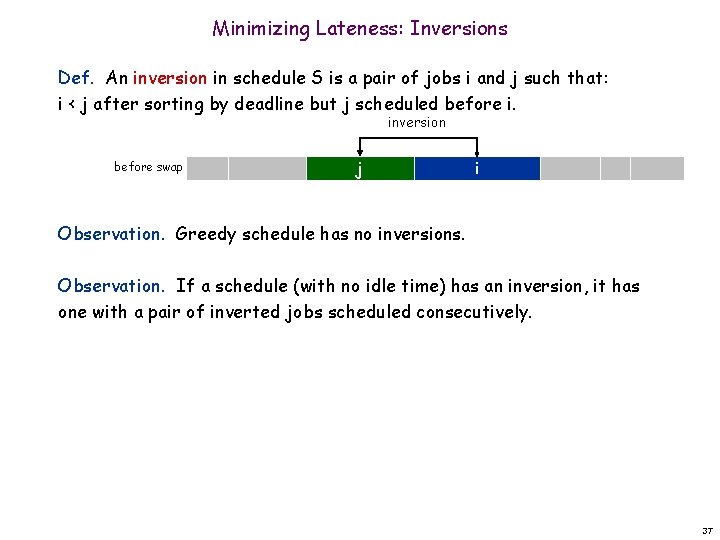

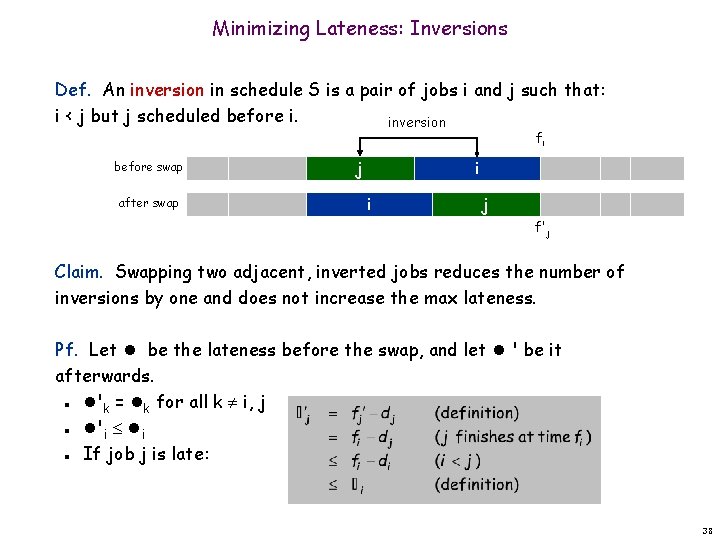

Minimizing Lateness: Inversions

Def. An inversion in schedule $S$ is a pair of jobs $i$ and $j$ such that $i < j$ (after sorting by deadline, so $d_i \le d_j$) but $j$ is scheduled before $i$.

[Figure: an inversion, with job j scheduled before job i.]

Observation. The greedy schedule has no inversions.

Observation. If a schedule (with no idle time) has an inversion, it has one with a pair of inverted jobs scheduled consecutively.

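Minimizing Lateness: Inversions

Def. An inversion in schedule $S$ is a pair of jobs $i$ and $j$ such that $i < j$ but $j$ is scheduled before $i$.

Claim. Swapping two adjacent, inverted jobs reduces the number of inversions by one and does not increase the max lateness.

Pf. Let $\ell$ be the lateness before the swap, and let $\ell'$ be the lateness afterwards.

- $\ell'_k = \ell_k$ for all $k \ne i, j$.
- $\ell'_i \le \ell_i$.
- If job $j$ is late:

$$\ell'_j = f'_j - d_j = f_i - d_j \le f_i - d_i \le \ell_i$$

The first equality is the definition of lateness, the second holds because after the swap job $j$ finishes at time $f_i$, and the first inequality uses $d_i \le d_j$ (since $i < j$). ▪

[Figure: before the swap the order is j, i and job i finishes at $f_i$; after the swap the order is i, j and job j finishes at $f'_j = f_i$.]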

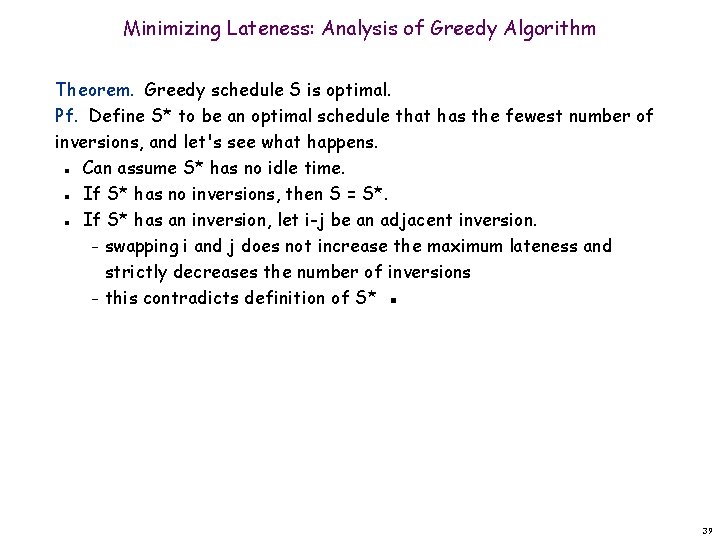

Minimizing Lateness: Analysis of Greedy Algorithm

Theorem. The greedy schedule $S$ is optimal.

Pf. Define $S^*$ to be an optimal schedule that has the fewest number of inversions, and let's see what happens.

- Can assume $S^*$ has no idle time.
- If $S^*$ has no inversions, then $S = S^*$ (up to the order of jobs with equal deadlines, which does not change the maximum lateness).
- If $S^*$ has an inversion, let $i$-$j$ be an adjacent inversion.
  - Swapping $i$ and $j$ does not increase the maximum lateness and strictly decreases the number of inversions.
  - This contradicts the definition of $S^*$. ▪
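The theorem can be sanity-checked on a small instance by comparing earliest deadline first against every possible order. The brute-force search below is only a verification aid for tiny inputs, not part of the slides' method; the function names are assumptions, and the job data is the six-job example from the earlier slide.

```python
from itertools import permutations


def max_lateness(jobs, order):
    """Max lateness of running jobs (t_j, d_j) in `order`, back to back from time 0."""
    t = 0
    worst = 0
    for j in order:
        proc, due = jobs[j]
        t += proc
        worst = max(worst, t - due)
    return worst


def edf_order(jobs):
    """Job indices sorted by deadline (the greedy schedule)."""
    return sorted(range(len(jobs)), key=lambda j: jobs[j][1])


def brute_force_best(jobs):
    """Smallest max lateness over all n! orders; only sensible for small n."""
    return min(max_lateness(jobs, p) for p in permutations(range(len(jobs))))


jobs = [(3, 6), (2, 8), (1, 9), (4, 9), (3, 14), (2, 15)]
print(max_lateness(jobs, edf_order(jobs)), brute_force_best(jobs))  # 1 1
```

Restricting the search to schedules without idle time loses nothing, since an optimal schedule with no idle time always exists.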

Greedy Analysis Strategies

Greedy algorithm stays ahead. Show that after each step of the greedy algorithm, its solution is at least as good as any other algorithm's.

Exchange argument. Gradually transform any optimal solution to the one found by the greedy algorithm without hurting its quality.

Structural. Discover a simple "structural" bound asserting that every possible solution must have a certain value. Then show that your algorithm always achieves this bound.
