The Geometry of IR Keith van Rijsbergen lost

The Geometry of IR Keith van Rijsbergen (lost in Hilbert space!) Tampere 15 th August, 2002 © Cv. R 1

“Unscripted” comments I States Observables Measurement => Reality? Projection Postulates Cognitive State Changes © Cv. R 2

“Unscripted” comments II (quoting John von Neumann) However, all quantum mechanical probabilities are defined by inner products of vectors. Essentially if a state of a system is given by one vector, the transition probability in another state is the inner product of the two which is the square of the angle between them. In other words, probability correspond precisely to introducing the angles geometrically. Furthermore, there is only one way to introduce it. The more so because in the quantum mechanical machinery the negation of a statement, so the negation of a statement which represented by a linear set of vectors, correponds to the orthogonal complement of this linear space. Unsolved problems in mathematics, typescript, September, 1954 © Cv. R 3

What is this talk about? Not about quantum computation. see Nielsen and Chuang, CUP, 2000 Not about Logic see Engesser and Gabbay, AI, 2002 • History (von Neumann, Dirac, Schroedinger) • Motivation (complementarity) • Duality (Syntax/Semantics) • Measurement (Incompatibility) • Projections (subspaces) • Probability (inner products) • IR application (feedback, clusters, ostension) © Cv. R 4

© Cv. R 5

© Cv. R 6

Images not Text: how might that make a difference? no visual keywords (yet) - tf/idf issue aboutness revisable (eg Maron) relevance revisable (eg Goffman) feedback requires salience aboutness -> relevance -> aboutness © Cv. R 7

This is not new! Goffman, 1969: ‘. . that the relevance of the information from one document depends upon what is already known about the subject, and in turn affects the relevance of other documents subsequently examined. ’ Maron, : ‘Just because a document is about the subject sought by a patron, that fact does not imply that he would judge it relevant. ’ © Cv. R 8

Maron’s theory of indexing …. . in the case where the query consists of single term, call it B, the probability that a given document will be judged relevant by a patron submitting B is simply the ratio of the number of patrons who submit B as their query and judge that document as relevant, to the number of patrons, who submit B as their search query © Cv. R 9

In 1949 D. M Mackay wrote a paper ‘Quantal aspects of scientific information’, SER, vol 41, no. 314, in which he alluded to using the quantum mechanics paradigm to IR © Cv. R 10

Expectation Catalogue It (y-function) is now the means for predicting probability of measurement results. In it is embodied the momentarily-attained sum of theoretically based future expectation, somewhat as laid down in a catalogue. It is the relation-and-determinacy-bridge between measurements and measurements. . . It is, in principle, determined by a finite number of suitably chosen measurement on the object. . . Thus the catalogue of expectations is initially compiled. Schrödinger, 1935 &1980 © Cv. R 11

Hypotheses Cluster Hypothesis: closely associated documents tend to be relevant to the same requests. (1971) [co-ordination is positively correlated with external relevance, Jackson, 1969] Association Hypothesis: If an index term is good at discriminating relevant from non-relevant documents then any closely associated index term is also likely to be good at this. (1979) [co-occurrence of terms within documents is a suitable measure of similarity between terms, Jackson, 1971] © Cv. R 12

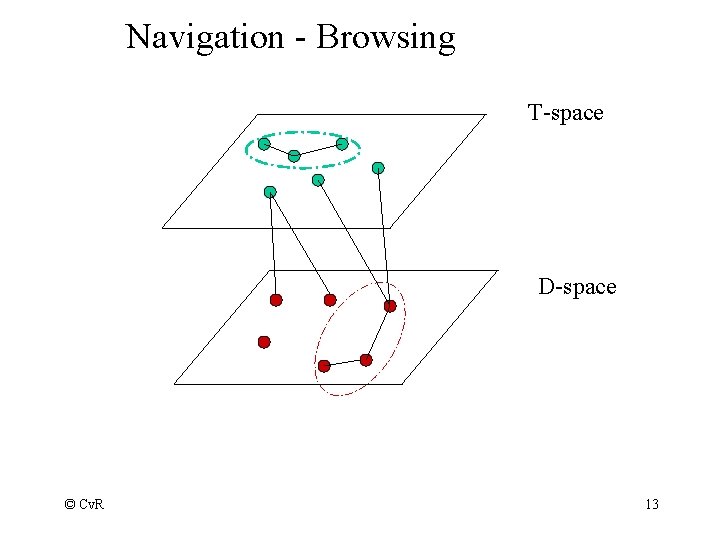

Navigation - Browsing T-space D-space © Cv. R 13

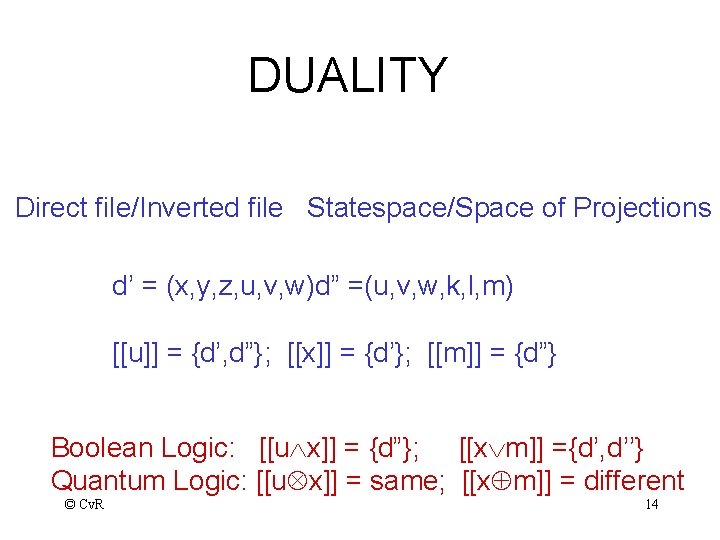

DUALITY Direct file/Inverted file Statespace/Space of Projections d’ = (x, y, z, u, v, w)d” =(u, v, w, k, l, m) [[u]] = {d’, d”}; [[x]] = {d’}; [[m]] = {d”} Boolean Logic: [[u x]] = {d”}; [[x m]] ={d’, d’’} Quantum Logic: [[u x]] = same; [[x m]] = different © Cv. R 14

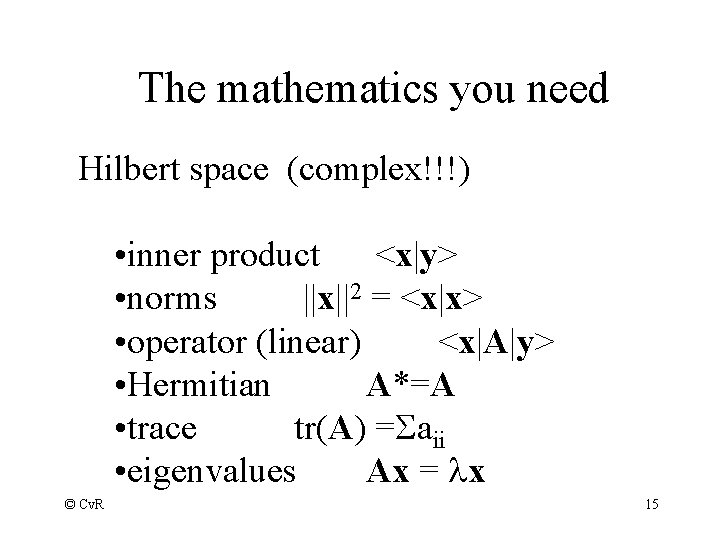

The mathematics you need Hilbert space (complex!!!) • inner product <x|y> • norms ||x||2 = <x|x> • operator (linear) <x|A|y> • Hermitian A*=A • trace tr(A) = aii • eigenvalues Ax = lx © Cv. R 15

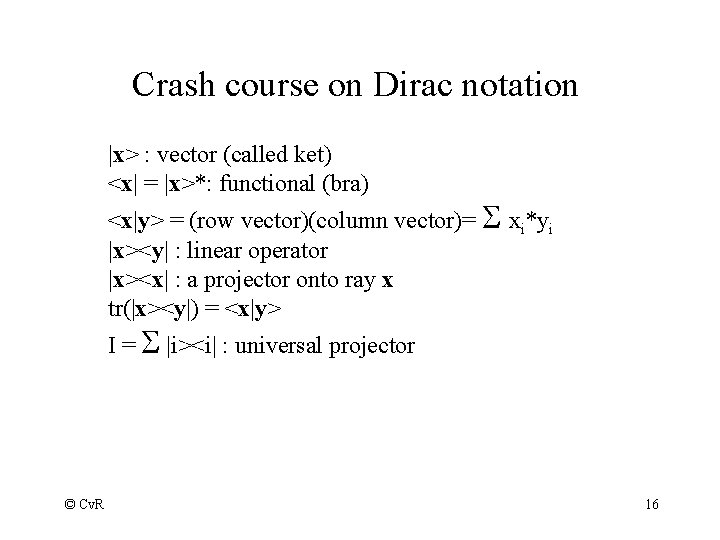

Crash course on Dirac notation |x> : vector (called ket) <x| = |x>*: functional (bra) <x|y> = (row vector)(column vector)= xi*yi |x><y| : linear operator |x><x| : a projector onto ray x tr(|x><y|) = <x|y> I = |i><i| : universal projector © Cv. R 16

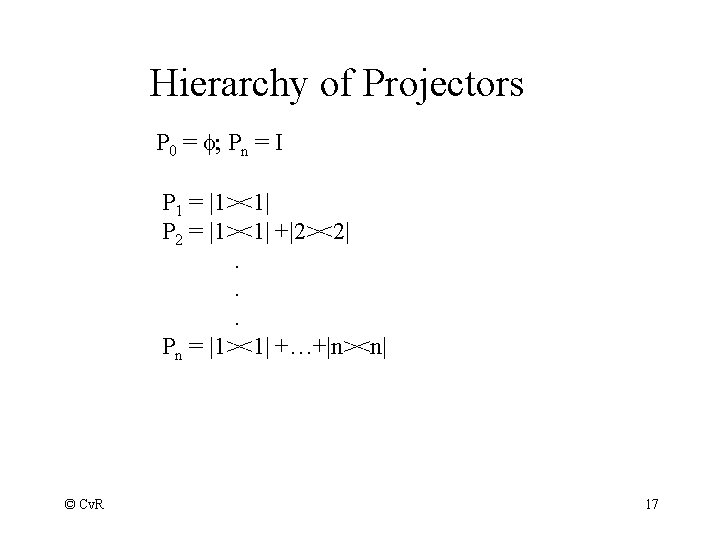

Hierarchy of Projectors P 0 = f; Pn = I P 1 = |1><1| P 2 = |1><1| +|2><2|. . . Pn = |1><1| +…+|n><n| © Cv. R 17

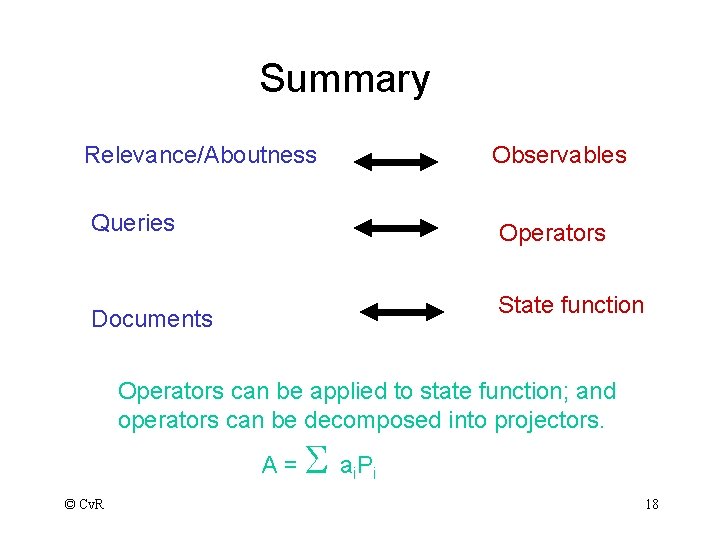

Summary Relevance/Aboutness Observables Queries Operators State function Documents Operators can be applied to state function; and operators can be decomposed into projectors. A= © Cv. R a. P i i 18

‘That is the relevance or irrelevance of a given retrieved document may affect the user’s current state of knowledge resulting in a change of the user’s information need, which may lead to a change of the user’s perception/ interpretation of the subsequent retrieved documents…. ’ Borlund, 2000 © Cv. R 19

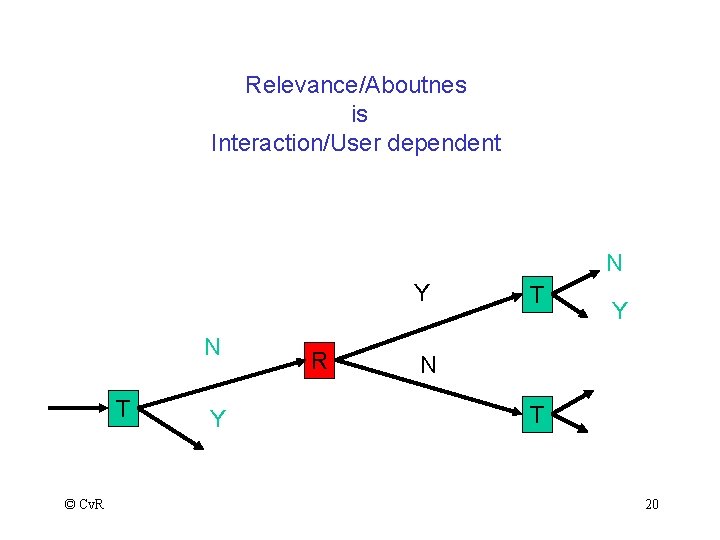

Relevance/Aboutnes is Interaction/User dependent N Y N T © Cv. R Y R T Y N T 20

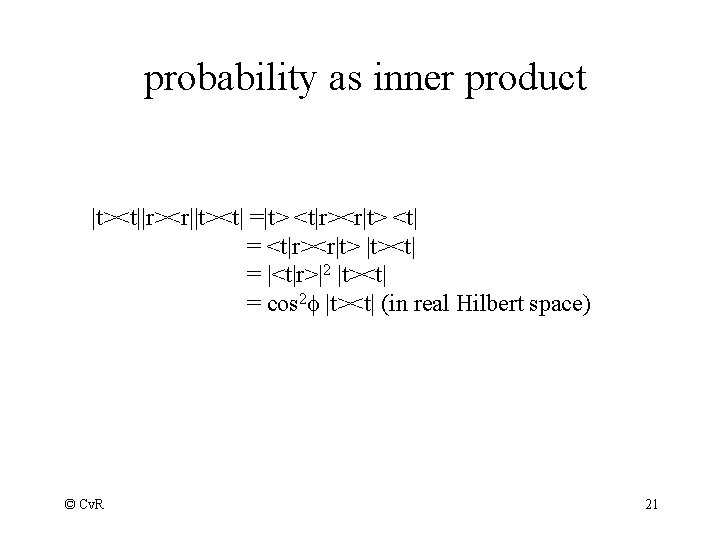

probability as inner product |t><t||r><r||t><t| =|t> <t|r><r|t> <t| = <t|r><r|t> |t><t| = |<t|r>|2 |t><t| = cos 2 f |t><t| (in real Hilbert space) © Cv. R 21

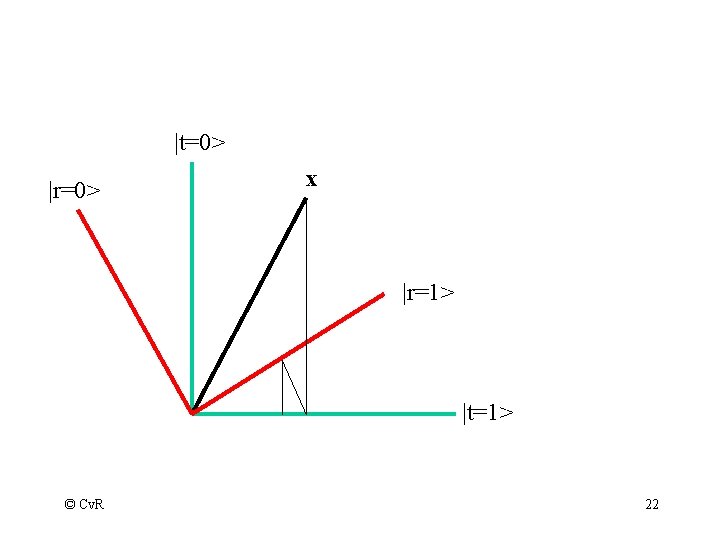

|t=0> |r=0> x |r=1> |t=1> © Cv. R 22

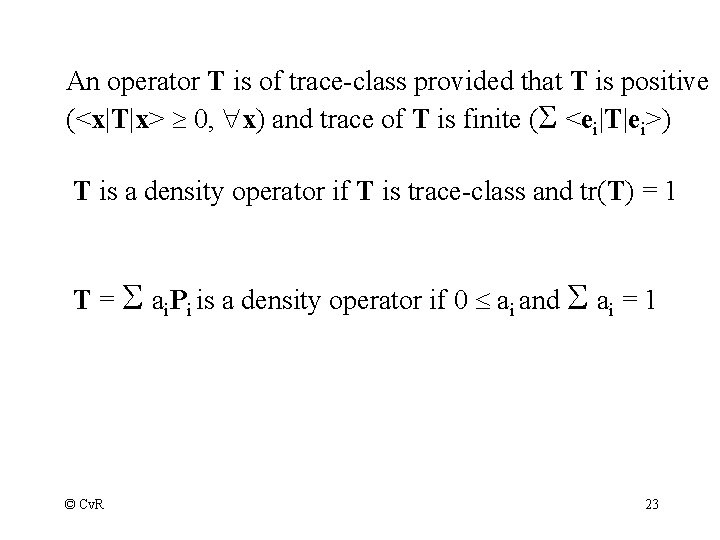

An operator T is of trace-class provided that T is positive (<x|T|x> 0, x) and trace of T is finite ( <ei|T|ei>) T is a density operator if T is trace-class and tr(T) = 1 T = ai. Pi is a density operator if 0 ai and ai = 1 © Cv. R 23

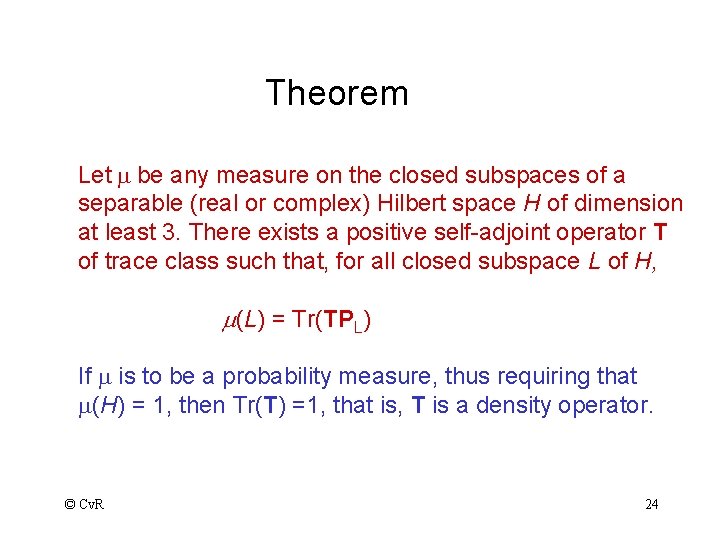

Theorem Let m be any measure on the closed subspaces of a separable (real or complex) Hilbert space H of dimension at least 3. There exists a positive self-adjoint operator T of trace class such that, for all closed subspace L of H, m(L) = Tr(TPL) If m is to be a probability measure, thus requiring that m(H) = 1, then Tr(T) =1, that is, T is a density operator. © Cv. R 24

Conditional Probability P(LA|LB) = tr(PBDPBPA) / tr(DPB) Note that PA could be E -> F © Cv. R 25

What is T? – without blinding you with science -Relevance Feedback ( a mixture with log weights) -Pseudo relevance feedback (a mixture with similarity weights) -Clustering (superposition of members? ) -Ostension (a history) © Cv. R 26

Conclusions? Is it worth it? Does it matter? - images - logic/probability/information/vectors - language © Cv. R 27

Useful References Readings in Information Retrieval, Morgan Kaufman, Edited by Sparck Jones and Willett Advances in Information Retrieval: Recent Research from CIIR, Edited by Bruce Croft. Information Retrieval: Uncertainty and Logics, Advanced Models for the Representation and Retrieval of Information, Edited by Crestani, Lalmas, Van Rijsbergen. Finding out about, Richard Belew. © Cv. R 28

- Slides: 28