The Dynamics of Learning Vector Quantization

Barbara Hammer
TU Clausthal-Zellerfeld, Institute of Computing Science

Michael Biehl, Anarta Ghosh
Rijksuniversiteit Groningen, Mathematics and Computing Science

The Dynamics of Learning Vector Quantization, RUG, 10.01.2005

Introduction
- prototype-based learning from example data: representation, classification
- Vector Quantization (VQ)
- Learning Vector Quantization (LVQ)

The dynamics of learning
- a model situation: randomized data
- learning algorithms for VQ and LVQ
- analysis and comparison: dynamics, success of learning

Summary

Outlook

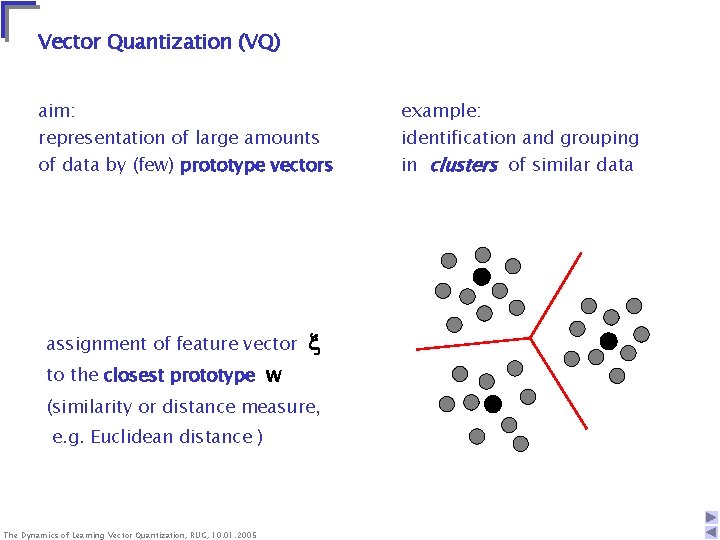

Vector Quantization (VQ)

aim: representation of large amounts of data by (few) prototype vectors

example: identification and grouping in clusters of similar data

assignment of a feature vector to the closest prototype w (similarity or distance measure, e.g. Euclidean distance)

unsupervised competitive learning
• initialize K prototype vectors
• present a single example
• identify the closest prototype, i.e. the so-called winner
• move the winner even closer towards the example

intuitively clear, plausible procedure
- places prototypes in areas with high density of data
- identifies the most relevant combinations of features
- (stochastic) on-line gradient descent with respect to the cost function ...
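The four steps above can be sketched as follows. This is a minimal illustration, not the speakers' implementation: the function name `vq_step`, the learning rate `eta`, and the toy data are assumptions introduced here.

```python
import numpy as np

def vq_step(prototypes, example, eta):
    """One on-line competitive-learning step; updates `prototypes` in place."""
    # squared Euclidean distance from the example to every prototype
    dists = np.sum((prototypes - example) ** 2, axis=1)
    winner = int(np.argmin(dists))  # index of the closest prototype
    # move the winner a fraction eta of the way towards the example
    prototypes[winner] += eta * (example - prototypes[winner])
    return winner

# toy usage: K = 3 prototypes, initialized on the first three data points
rng = np.random.default_rng(0)
data = rng.normal(size=(500, 2))
W = data[:3].copy()
for xi in data:  # present one example at a time
    vq_step(W, xi, eta=0.05)
```

Only the winner moves in each step, so prototypes drift towards regions where many examples fall.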

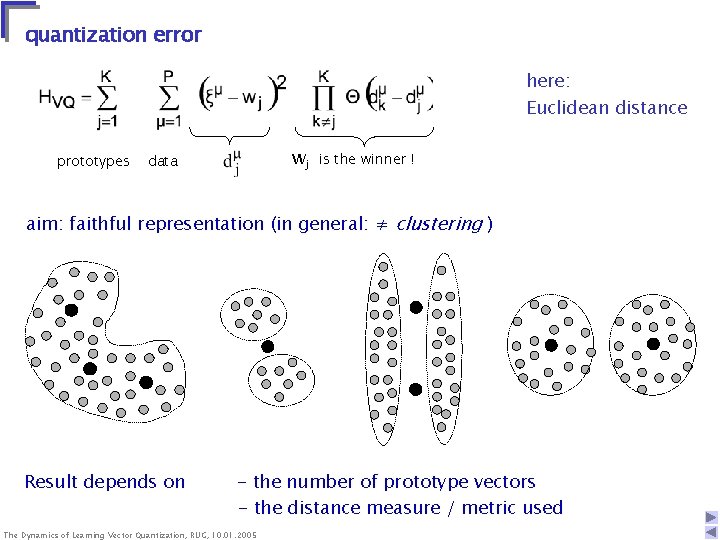

quantization error

(here: Euclidean distance; the sum runs over prototypes $w_j$ and data $\xi^\mu$, and a term contributes only if $w_j$ is the winner)

aim: faithful representation (in general: ≠ clustering)

Result depends on
- the number of prototype vectors
- the distance measure / metric used
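For reference, the quantization error for the Euclidean case is commonly written in the form below. This is the standard textbook expression and only an assumption about the formula shown on the slide; $K$ (number of prototypes) and $P$ (number of examples) are symbols introduced here for illustration.

```latex
H_{\mathrm{VQ}} \;=\; \sum_{\mu=1}^{P} \frac{1}{2}\, \min_{j=1,\dots,K} \left( \xi^{\mu} - w_j \right)^2
```

For each example only the winner contributes, so a stochastic gradient step on a single term moves the winner towards the example: $w_j \leftarrow w_j + \eta\,(\xi^{\mu} - w_j)$.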

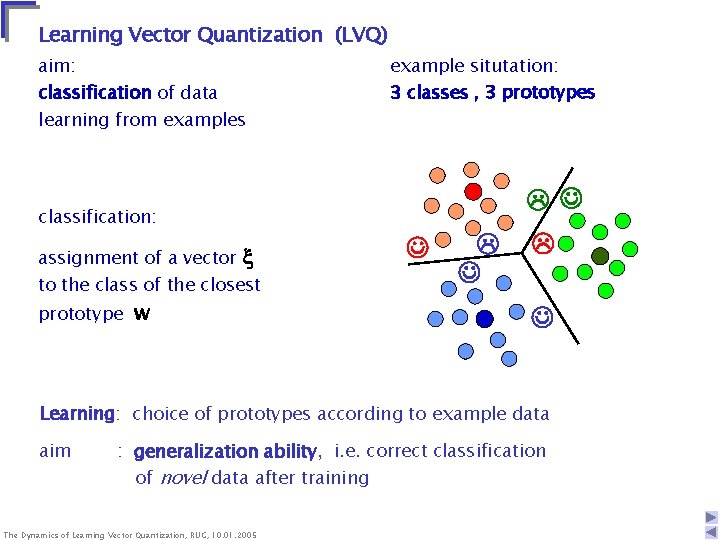

Learning Vector Quantization (LVQ)

aim: classification of data, learning from examples

example situation: 3 classes, 3 prototypes

classification: assignment of a vector to the class of the closest prototype w

Learning: choice of prototypes according to example data

aim: generalization ability, i.e. correct classification of novel data after training
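The classification rule (class of the closest prototype) can be sketched as below. The function name `classify` and the example prototypes are hypothetical, chosen to mirror the "3 classes, 3 prototypes" situation.

```python
import numpy as np

def classify(prototypes, proto_labels, x):
    """Return the class label of the prototype closest to x (Euclidean distance)."""
    dists = np.sum((prototypes - x) ** 2, axis=1)
    return proto_labels[int(np.argmin(dists))]

# three classes, one prototype each (made-up positions)
W = np.array([[0.0, 0.0], [4.0, 0.0], [0.0, 4.0]])
labels = np.array([0, 1, 2])
novel = np.array([3.5, 0.2])
predicted = classify(W, labels, novel)  # closest prototype is the second one
```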


mostly: heuristically motivated variations of competitive learning

prominent example [Kohonen]: "LVQ 2.1"
• initialize prototype vectors (for different classes)
• present a single example
• identify the closest correct and the closest wrong prototype
• move the corresponding winner towards / away from the example

known convergence / stability problems, e.g. for infrequent classes
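A minimal sketch of one such step is given below. It follows the four bullets literally and leaves out the window rule that Kohonen's original LVQ 2.1 applies; the name `lvq21_step` and the single learning rate `eta` for both moves are assumptions made for illustration.

```python
import numpy as np

def lvq21_step(prototypes, proto_labels, x, y, eta):
    """One LVQ 2.1-style step (no window rule); updates `prototypes` in place.

    Assumes at least one prototype carries label y and at least one does not.
    """
    dists = np.sum((prototypes - x) ** 2, axis=1)
    correct = proto_labels == y
    # closest prototype with the correct label, closest with a wrong label
    j_c = int(np.flatnonzero(correct)[np.argmin(dists[correct])])
    j_w = int(np.flatnonzero(~correct)[np.argmin(dists[~correct])])
    prototypes[j_c] += eta * (x - prototypes[j_c])  # attract the correct winner
    prototypes[j_w] -= eta * (x - prototypes[j_w])  # repel the wrong winner
    return j_c, j_w
```

The repulsive move has no counterweight here, which is one way to see where stability problems can come from.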

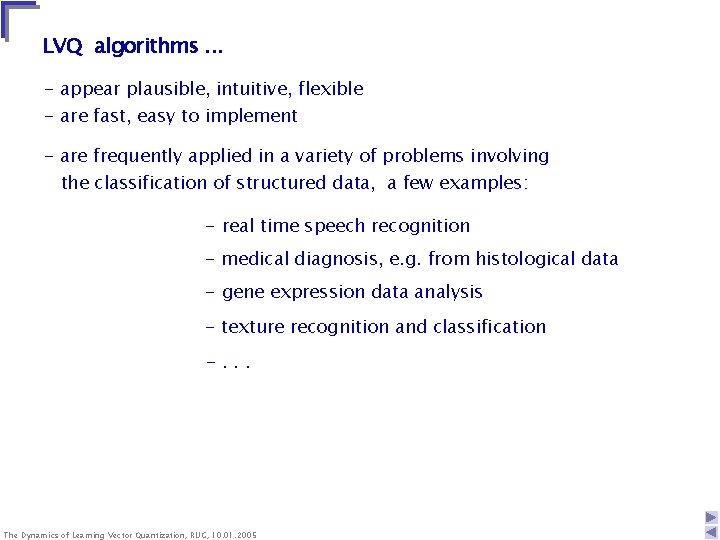

LVQ algorithms ...
- appear plausible, intuitive, flexible
- are fast, easy to implement
- are frequently applied in a variety of problems involving the classification of structured data, a few examples:
  - real time speech recognition
  - medical diagnosis, e.g. from histological data
  - gene expression data analysis
  - texture recognition and classification
  - ...

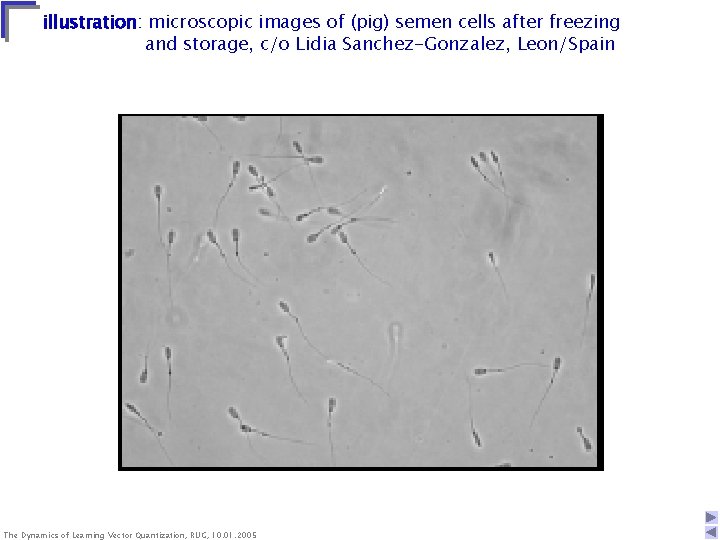

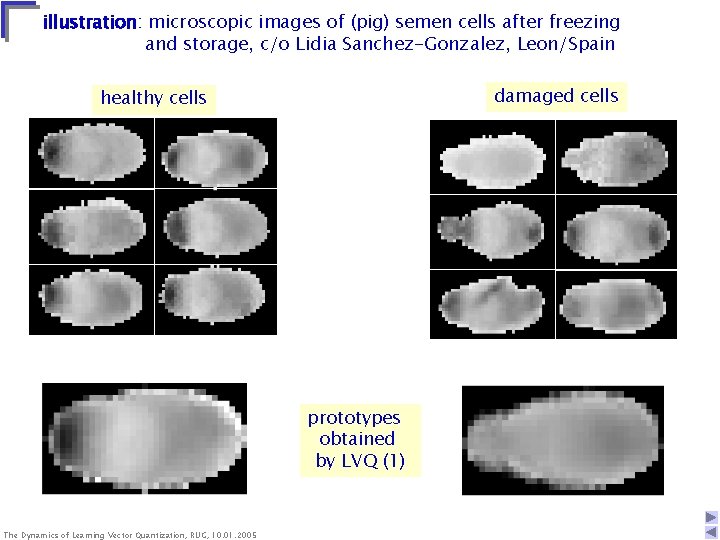

illustration: microscopic images of (pig) semen cells after freezing and storage, c/o Lidia Sanchez-Gonzalez, Leon/Spain

damaged cells, healthy cells: prototypes obtained by LVQ (1)

LVQ algorithms ...
- are often based on purely heuristic arguments, or derived from a cost function with unclear relation to the generalization ability
- almost exclusively use the Euclidean distance measure, inappropriate for heterogeneous data
- lack, in general, a thorough theoretical understanding of dynamics, convergence properties, performance w.r.t. generalization, etc.

In the following:

analysis of LVQ algorithms w.r.t.
- dynamics of the learning process
- performance, i.e. generalization ability
- asymptotic behavior in the limit of many examples

typical behavior in a model situation
- randomized, high-dimensional data
- essential features of LVQ learning

aim:
- contribute to theoretical understanding
- develop efficient LVQ schemes
- test in applications

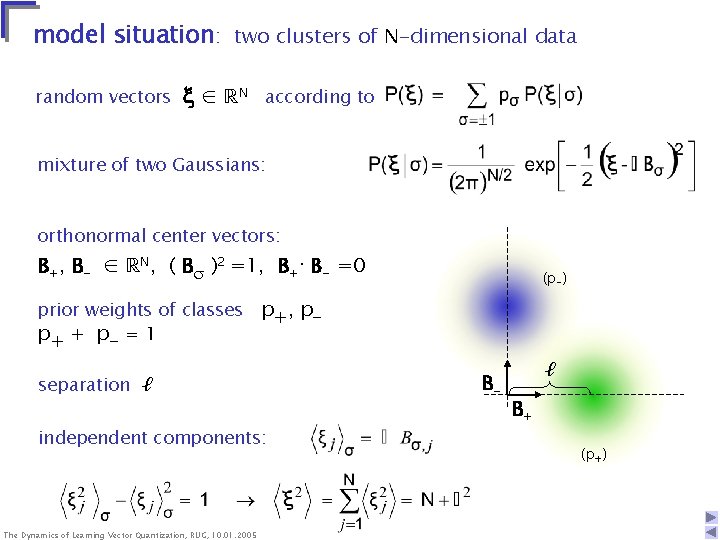

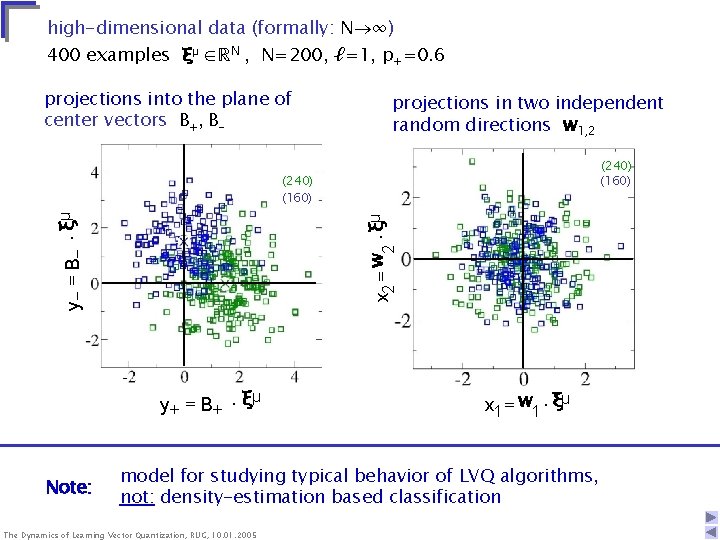

model situation: two clusters of N-dimensional data

random vectors $\xi \in \mathbb{R}^N$ according to a mixture of two Gaussians
- orthonormal center vectors: $B_+, B_- \in \mathbb{R}^N$, $(B_\pm)^2 = 1$, $B_+ \cdot B_- = 0$
- prior weights of classes: $p_+, p_-$ with $p_+ + p_- = 1$
- separation $\ell$
- independent components

(figure: two clusters centered at $\ell B_+$ and $\ell B_-$, with weights $p_+$ and $p_-$)

high-dimensional data (formally: $N \to \infty$)

400 examples $\xi^\mu \in \mathbb{R}^N$, $N = 200$, $\ell = 1$, $p_+ = 0.6$ (240 and 160 examples in the two classes)
- projections into the plane of center vectors $B_+, B_-$: $y_+ = B_+ \cdot \xi^\mu$, $y_- = B_- \cdot \xi^\mu$
- projections in two independent random directions $w_1, w_2$: $x_1 = w_1 \cdot \xi^\mu$, $x_2 = w_2 \cdot \xi^\mu$

Note: model for studying typical behavior of LVQ algorithms, not: density-estimation based classification
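The data set described above can be generated along the following lines. This sketch assumes unit variance for every component (the slide only says "independent components") and picks two coordinate axes as the orthonormal center vectors; `sample_model` and all variable names are introduced here for illustration.

```python
import numpy as np

def sample_model(n_examples, N, ell, p_plus, rng):
    """Draw labelled examples from the two-cluster model (unit variance assumed)."""
    B = np.zeros((2, N))
    B[0, 0] = 1.0  # B_+ ; orthonormal to B_- by construction
    B[1, 1] = 1.0  # B_-
    # class label sigma = +1 with probability p_plus, else -1
    sigma = np.where(rng.random(n_examples) < p_plus, 1, -1)
    # cluster center ell * B_sigma, broadcast to shape (n_examples, N)
    centers = np.where(sigma[:, None] == 1, ell * B[0], ell * B[1])
    xi = centers + rng.normal(size=(n_examples, N))
    return xi, sigma, B

rng = np.random.default_rng(1)
xi, sigma, B = sample_model(400, N=200, ell=1.0, p_plus=0.6, rng=rng)

# projections into the plane of the center vectors
y_plus, y_minus = xi @ B[0], xi @ B[1]

# projections in two random unit directions
w = rng.normal(size=(2, 200))
w /= np.linalg.norm(w, axis=1, keepdims=True)
x1, x2 = xi @ w[0], xi @ w[1]
```

Plotting `y_plus` against `y_minus` shows the cluster structure, whereas `x1` against `x2` typically shows no visible separation, since a random direction in 200 dimensions has almost no overlap with the center vectors.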

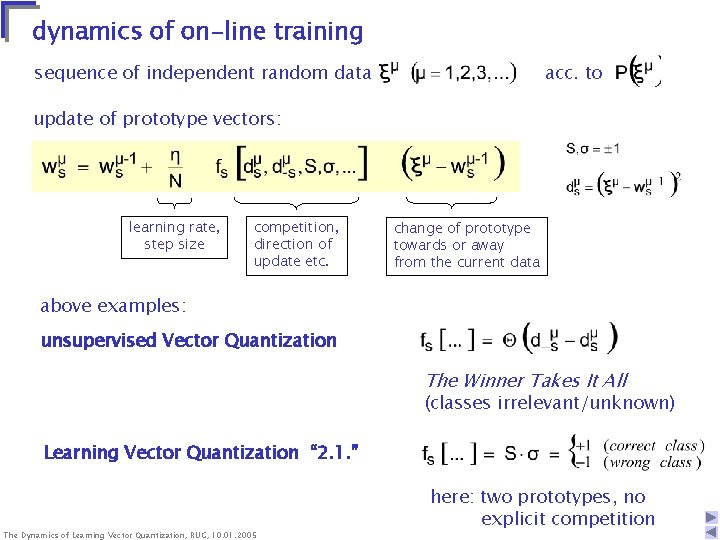

dynamics of on-line training

sequence of independent random data $\xi^\mu$, drawn according to the model density

update of prototype vectors: each step changes a prototype towards or away from the current data; its size is set by the learning rate (step size) $\eta$, and a modulation function $f_s[\ldots]$ encodes competition, direction of update, etc.

examples:
- unsupervised Vector Quantization: "The Winner Takes It All" (classes irrelevant/unknown)
- Learning Vector Quantization "2.1": here two prototypes, no explicit competition
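One way to write such an update for two prototypes is sketched below. The scaling of the step by $\eta/N$ and the concrete form of the two modulation functions are assumptions chosen to match the verbal description; the names `online_step`, `f_vq` and `f_lvq21` are hypothetical.

```python
import numpy as np

def f_vq(d, s, sigma):
    """Winner takes it all, label ignored: 1 for the closer prototype, else 0."""
    return 1.0 if d[s] < d[-s] else 0.0

def f_lvq21(d, s, sigma):
    """LVQ 2.1 with two prototypes: +1 if labels agree, -1 otherwise."""
    return float(s * sigma)

def online_step(w, xi, sigma, eta, f):
    """Update both prototypes; w maps the prototype label s (+1 or -1) to a vector."""
    N = xi.size
    # squared distances to both prototypes, evaluated before the update
    d = {s: float(np.sum((xi - w[s]) ** 2)) for s in (+1, -1)}
    for s in (+1, -1):
        # f > 0 moves w_s towards xi, f < 0 moves it away, f = 0 leaves it unchanged
        w[s] = w[s] + (eta / N) * f(d, s, sigma) * (xi - w[s])
    return w
```

With `f_lvq21` both prototypes move at every step, which is the sense in which there is no explicit competition; with `f_vq` only the closer prototype moves.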

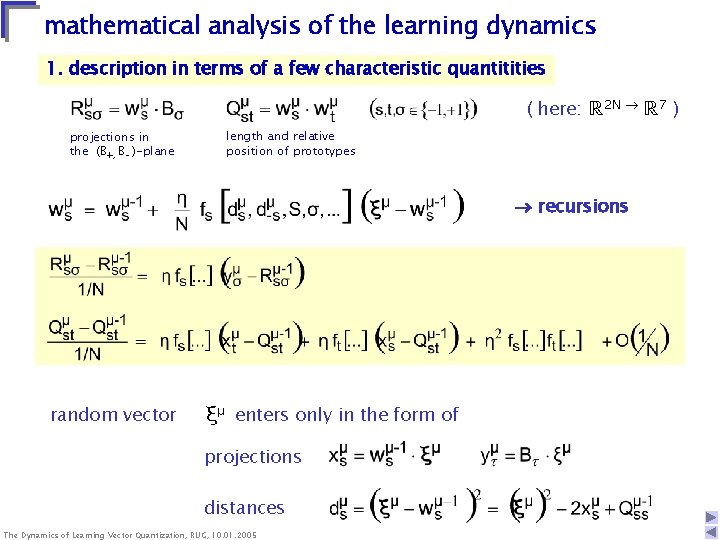

mathematical analysis of the learning dynamics

1. description in terms of a few characteristic quantities (here: $\mathbb{R}^{2N} \to \mathbb{R}^{7}$)
- $R_{s\sigma} = w_s \cdot B_\sigma$: projections in the $(B_+, B_-)$-plane
- $Q_{st} = w_s \cdot w_t$: length and relative position of prototypes

recursions: the random vector $\xi^\mu$ enters only in the form of projections and distances
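The reduction from $2N$ prototype components to seven numbers can be made concrete with a short sketch. It assumes the characteristic quantities are the dot products $R_{s\sigma} = w_s \cdot B_\sigma$ and $Q_{st} = w_s \cdot w_t$; the function name `order_parameters` is introduced here.

```python
import numpy as np

def order_parameters(w, B):
    """w: array (2, N) holding (w_+, w_-); B: array (2, N) holding (B_+, B_-)."""
    R = w @ B.T  # R[s, sigma] = w_s . B_sigma, four numbers
    Q = w @ w.T  # Q[s, t] = w_s . w_t, symmetric, three independent numbers
    return R, Q

N = 5
B = np.eye(N)[:2]  # two orthonormal center vectors
w = np.array([[1.0, 2.0, 0.0, 0.0, 0.0],
              [0.0, 3.0, 4.0, 0.0, 0.0]])
R, Q = order_parameters(w, B)
# the seven characteristic quantities
seven = [R[0, 0], R[0, 1], R[1, 0], R[1, 1], Q[0, 0], Q[0, 1], Q[1, 1]]
```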

2. average over the current example

the random vector $\xi^\mu$ is drawn according to the model density; its projections are correlated Gaussian random quantities in the thermodynamic limit $N \to \infty$, completely specified in terms of first and second moments (w/o indices $\mu$)

averaged recursions: closed in $\{R_{s\sigma}, Q_{st}\}$

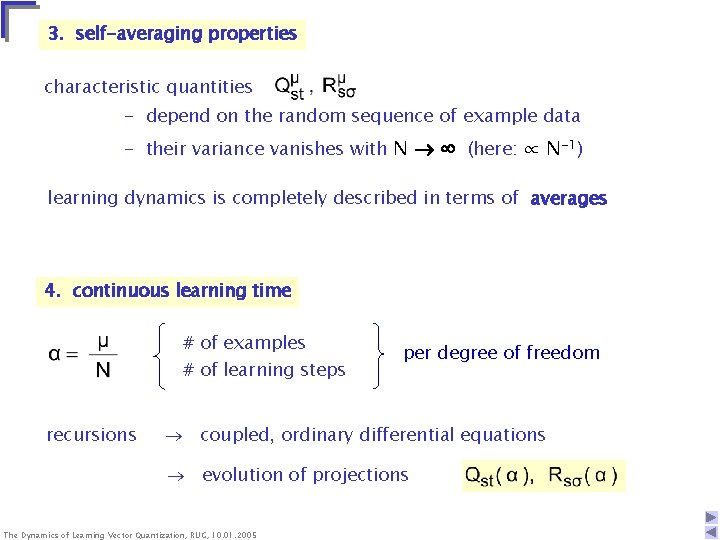

3. self-averaging properties

characteristic quantities
- depend on the random sequence of example data
- their variance vanishes with $N \to \infty$ (here: $\propto N^{-1}$)

learning dynamics is completely described in terms of averages

4. continuous learning time

$\alpha = \mu / N$: # of examples (# of learning steps) per degree of freedom

recursions → coupled, ordinary differential equations: evolution of projections

5. learning curve

probability for misclassification of a novel example: generalization error $\varepsilon_g(\alpha)$ after training with $\alpha N$ examples

investigation and comparison of given algorithms
- repulsive/attractive fixed points of the dynamics
- asymptotic behavior for $\alpha \to \infty$
- dependence on learning rate, separation, initialization
- ...

optimization and development of new prescriptions (maximize ...)
- time-dependent learning rate $\eta(\alpha)$
- variational optimization w.r.t. $f_s[\ldots]$
- ...
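For a given pair of prototypes, the generalization error can be estimated numerically by classifying fresh examples. The sketch below is a Monte Carlo estimate, not the analytical expression used in the talk; it assumes unit-variance clusters centered at $\ell B_\pm$, and the function name and defaults are hypothetical.

```python
import numpy as np

def generalization_error_mc(w_plus, w_minus, B_plus, B_minus, ell, p_plus,
                            n_test=20000, seed=0):
    """Fraction of novel examples assigned to the wrong class by (w_+, w_-)."""
    rng = np.random.default_rng(seed)
    N = w_plus.size
    sigma = np.where(rng.random(n_test) < p_plus, 1, -1)  # true labels
    centers = np.where(sigma[:, None] == 1, ell * B_plus, ell * B_minus)
    xi = centers + rng.normal(size=(n_test, N))  # unit variance: an assumption
    d_plus = np.sum((xi - w_plus) ** 2, axis=1)
    d_minus = np.sum((xi - w_minus) ** 2, axis=1)
    predicted = np.where(d_plus < d_minus, 1, -1)  # class of the closer prototype
    return float(np.mean(predicted != sigma))

# usage: prototypes placed exactly on the cluster centers, equal priors
N = 10
Bp, Bm = np.eye(N)[0], np.eye(N)[1]
eps = generalization_error_mc(1.0 * Bp, 1.0 * Bm, Bp, Bm, ell=1.0, p_plus=0.5)
```

Evaluating this after every block of training steps gives an empirical learning curve as a function of the number of examples.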

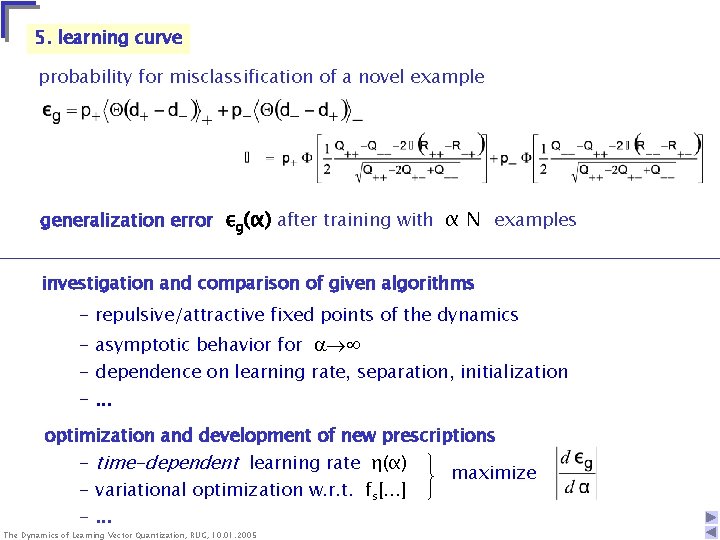

optimal classification with minimal generalization error

in the model situation (equal variances of clusters): separation of classes by a plane

(figure: clusters at $\ell B_+$ with weight $p_+$ and at $\ell B_-$ with weight $p_- > p_+$; the excess error is marked)

(plot: minimal $\varepsilon_g$ as a function of the prior weight $p_+$, from 0 to 1.0, for $\ell = 0, 1, 2$; $\varepsilon_g$ axis from 0 to 0.50)
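The minimal error can be evaluated in closed form if one assumes unit-variance Gaussian clusters centered at $\ell B_\pm$ (the slide states only that the variances are equal). The sketch below uses the standard Bayes decision rule for two equal-covariance Gaussians; the function names are introduced here.

```python
import math

def phi(z):
    """Cumulative distribution function of the standard normal."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def min_generalization_error(p_plus, ell):
    """Bayes error for unit-variance clusters at ell*B_+ and ell*B_- (assumption)."""
    p_minus = 1.0 - p_plus
    if p_plus <= 0.0 or p_minus <= 0.0:
        return 0.0  # only one class present
    if ell == 0.0:
        return min(p_plus, p_minus)  # clusters coincide: pick the frequent class
    D = math.sqrt(2.0) * ell  # distance between the two centers
    # threshold along (B_+ - B_-)/sqrt(2); shifted towards the rarer class
    t = -math.log(p_plus / p_minus) / D
    return p_plus * phi(t - D / 2.0) + p_minus * phi(-t - D / 2.0)

curve = [min_generalization_error(p / 10.0, 1.0) for p in range(11)]
```

The resulting curve is symmetric in $p_+ \leftrightarrow p_-$, peaks at $p_+ = 1/2$, and lies below $\min\{p_+, p_-\}$ for any $\ell > 0$.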


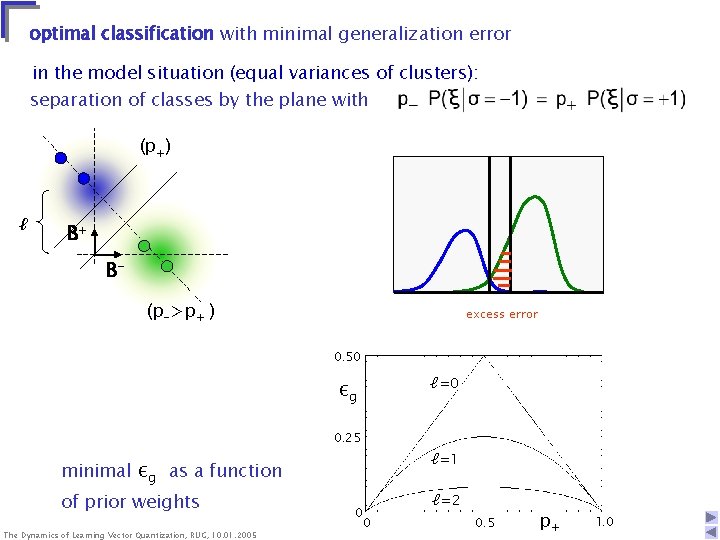

"LVQ 2.1": update the correct and wrong winner

[Seo, Obermayer]: LVQ 2.1 ↔ cost function (likelihood ratios)

$p_\pm = (1 \pm m)/2$ $(m > 0)$

(analytical) integration for $w_s(0) = 0$

theory and simulation ($N = 100$): $p_+ = 0.8$, $\ell = 1$, $\eta = 0.5$, averages over 100 independent runs

problem: instability of the algorithm due to repulsion of wrong prototypes ($p_+ > p_-$)

trivial classification for $\alpha \to \infty$: $\varepsilon_g = \min\{p_+, p_-\}$

strategies:
- selection of data in a window close to the current decision boundary: slows down the repulsion, system remains unstable
- Robust Soft Learning Vector Quantization [Seo & Obermayer]: density-estimation based cost function; limiting case "Learning from mistakes": LVQ 2.1 step only if the example is currently misclassified; slow learning, poor generalization


"The winner takes it all"

I) LVQ 1 [Kohonen]: only the winner $w_s$ is updated, according to the class membership

numerical integration for $w_s(0) = 0$

theory and simulation ($N = 200$): $p_+ = 0.2$, $\ell = 1.2$, $\eta = 1.2$, averaged over 100 indep. runs

(plot: characteristic quantities $R_{s\sigma}$ and $Q_{st}$ versus $\alpha$)

(plot: trajectories of $w_+$ and $w_-$ in the $(B_+, B_-)$-plane, $R_{S+}$ versus $R_{S-}$; (•) $\alpha = 20, 40, \ldots, 140$; (....) optimal decision boundary; (____) asymptotic position)
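A small simulation in the spirit of this slide is sketched below: LVQ 1 with two prototypes, trained on-line on data from the two-cluster model with the parameter values quoted above. Unit-variance clusters, the $\eta/N$ step scaling, coordinate axes as center vectors, and all names are assumptions of this sketch, and it performs a single run rather than an average over 100.

```python
import numpy as np

def lvq1_step(w, xi, sigma, eta):
    """w: dict {+1: vector, -1: vector}; sigma: class label of xi (+1 or -1)."""
    N = xi.size
    d = {s: float(np.sum((xi - w[s]) ** 2)) for s in (+1, -1)}
    s = +1 if d[+1] < d[-1] else -1  # the winner
    # towards the example if the winner's label matches, away from it otherwise
    w[s] = w[s] + (eta / N) * (s * sigma) * (xi - w[s])
    return s

rng = np.random.default_rng(0)
N, ell, p_plus, eta = 200, 1.2, 0.2, 1.2
B = {+1: np.eye(N)[0], -1: np.eye(N)[1]}  # orthonormal center vectors
w = {+1: np.zeros(N), -1: np.zeros(N)}    # w_s(0) = 0
for mu in range(20 * N):                  # learning time alpha = mu / N up to 20
    sigma = +1 if rng.random() < p_plus else -1
    xi = ell * B[sigma] + rng.normal(size=N)
    lvq1_step(w, xi, sigma, eta)

# projections of the prototypes onto the center vectors after training
R = {(s, t): float(w[s] @ B[t]) for s in (+1, -1) for t in (+1, -1)}
```

Recording `R` at regular intervals of $\alpha$ gives the kind of trajectory in the $(B_+, B_-)$-plane that the slide refers to.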

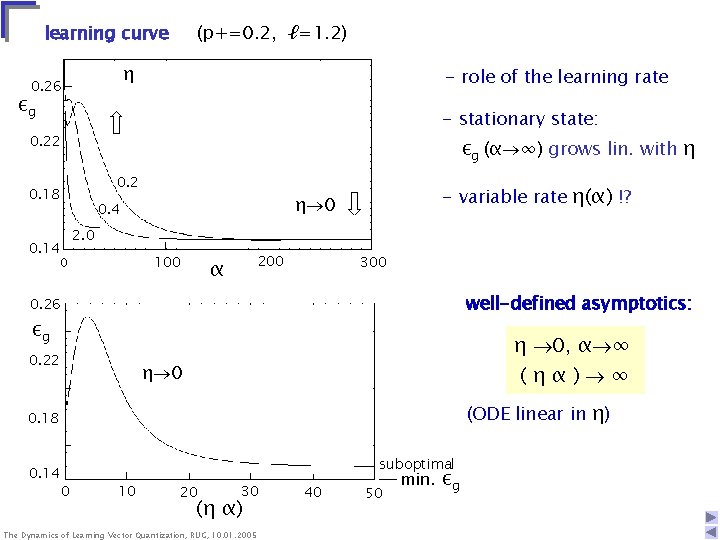

learning curve ($p_+ = 0.2$, $\ell = 1.2$)

(plot: $\varepsilon_g$ versus $\alpha$, from 0 to 300, for several values of the learning rate $\eta$)

- role of the learning rate
- stationary state: $\varepsilon_g(\alpha \to \infty)$ grows lin. with $\eta$
- variable rate $\eta(\alpha)$!?
- well-defined asymptotics: $\eta \to 0$, $\alpha \to \infty$, $(\eta\alpha) \to \infty$ (ODE linear in $\eta$)

(plot: $\varepsilon_g$ versus $(\eta\alpha)$, from 0 to 50, for $\eta \to 0$; the asymptotic value is suboptimal compared with min. $\varepsilon_g$)

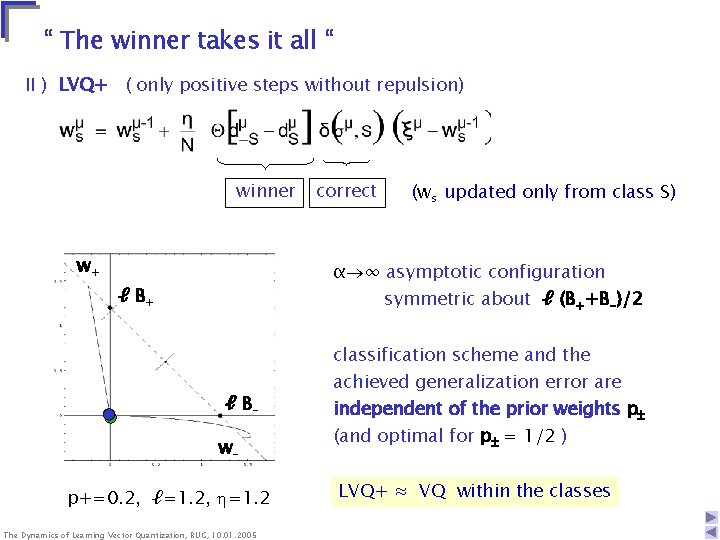

"The winner takes it all"

II) LVQ+ (only positive steps without repulsion): the winner is updated only if it is correct ($w_s$ updated only from class $S$)

$\alpha \to \infty$: asymptotic configuration symmetric about $\ell (B_+ + B_-)/2$

the classification scheme and the achieved generalization error are independent of the prior weights $p_\pm$ (and optimal for $p_\pm = 1/2$)

LVQ+ ≈ VQ within the classes

(figure: asymptotic configuration of $w_+$, $w_-$ relative to $\ell B_+$, $\ell B_-$ for $p_+ = 0.2$, $\ell = 1.2$, $\eta = 1.2$)
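The LVQ+ prescription differs from LVQ 1 only in that a wrong winner is left untouched instead of being repelled. A minimal sketch for two prototypes, with the $\eta/N$ scaling and the name `lvq_plus_step` as assumptions:

```python
import numpy as np

def lvq_plus_step(w, xi, sigma, eta):
    """w: dict {+1: vector, -1: vector}; sigma: class label of xi (+1 or -1)."""
    N = xi.size
    d = {s: float(np.sum((xi - w[s]) ** 2)) for s in (+1, -1)}
    s = +1 if d[+1] < d[-1] else -1  # the winner
    if s == sigma:
        # correct winner: attractive step only
        w[s] = w[s] + (eta / N) * (xi - w[s])
    # wrong winner: no update at all (no repulsion)
    return s
```

Each prototype therefore only ever moves towards examples of its own class.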

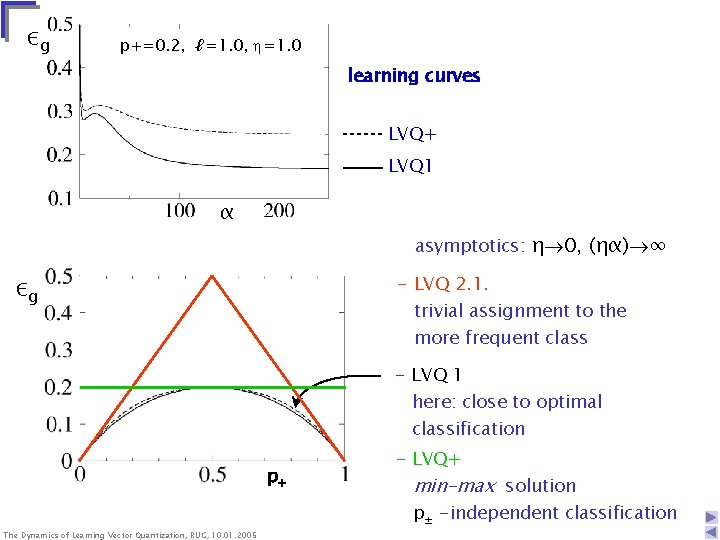

learning curves $\varepsilon_g(\alpha)$ for LVQ+ and LVQ 1 ($p_+ = 0.2$, $\ell = 1.0$, $\eta = 1.0$)

asymptotics ($\eta \to 0$, $(\eta\alpha) \to \infty$): $\varepsilon_g$ as a function of $p_+$
- LVQ 2.1: trivial assignment to the more frequent class, $\varepsilon_g = \min\{p_+, p_-\}$
- optimal classification
- LVQ 1: here close to optimal classification
- LVQ+: min-max solution, $p_\pm$-independent classification

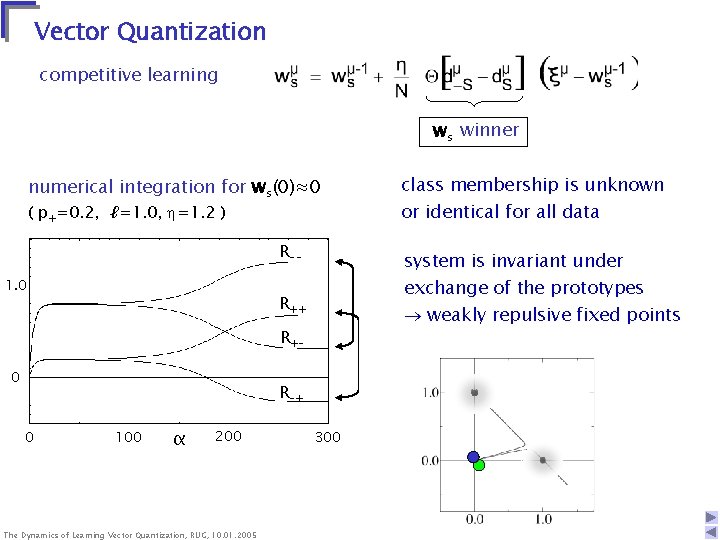

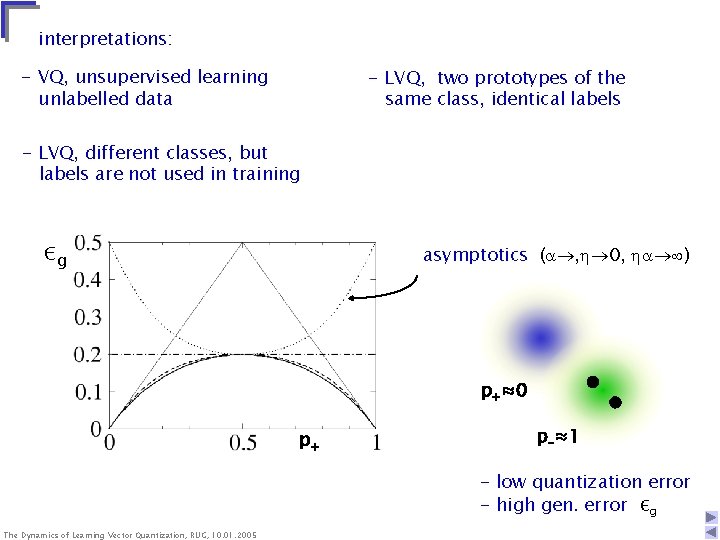

Vector Quantization

competitive learning: the winner $w_s$ is updated; class membership is unknown or identical for all data

numerical integration for $w_s(0) \approx 0$ ($p_+ = 0.2$, $\ell = 1.0$, $\eta = 1.2$)

system is invariant under exchange of the prototypes → weakly repulsive fixed points

(plot: $R_{s\sigma}$ versus $\alpha$)

(plot: $\varepsilon_g$ versus $\alpha$, from 0 to 300, for VQ, LVQ+ and LVQ 1)

interpretations:
- VQ, unsupervised learning, unlabelled data
- LVQ, two prototypes of the same class, identical labels
- LVQ, different classes, but labels are not used in training

asymptotics of $\varepsilon_g$ as a function of $p_+$ ($\alpha \to \infty$, $\eta \to 0$, $\eta\alpha \to \infty$):
- low quantization error
- high gen. error $\varepsilon_g$

(plot annotation: $p_+ \approx 0$, $p_- \approx 1$)

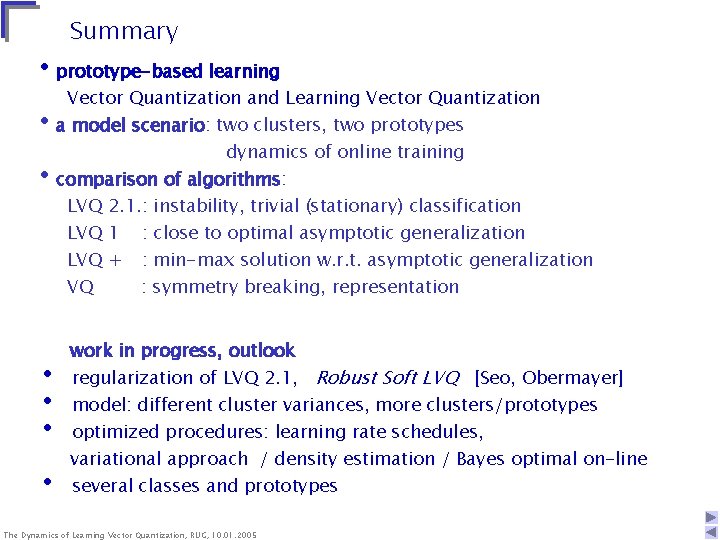

Summary
• prototype-based learning: Vector Quantization and Learning Vector Quantization
• a model scenario: two clusters, two prototypes; dynamics of online training
• comparison of algorithms:
  - LVQ 2.1: instability, trivial (stationary) classification
  - LVQ 1: close to optimal asymptotic generalization
  - LVQ+: min-max solution w.r.t. asymptotic generalization
  - VQ: symmetry breaking, representation
• work in progress, outlook:
  - regularization of LVQ 2.1, Robust Soft LVQ [Seo, Obermayer]
  - model: different cluster variances, more clusters/prototypes
  - optimized procedures: learning rate schedules, variational approach / density estimation / Bayes optimal on-line
  - several classes and prototypes


Perspectives
• Generalized Relevance LVQ [Hammer & Villmann]: adaptive metrics, e.g. distance measure
• Self-Organizing Maps (SOM) training: (many) N-dim. prototypes form a (low) d-dimensional grid; representation of data in a topology preserving map
• neighborhood preserving SOM; Neural Gas (distance based)
• applications
