The Complexity of Massive Data Set Computations Ziv

![Data Stream Computations [HRR 98, AMS 96, FKSV 99] x 1 x 2 x Data Stream Computations [HRR 98, AMS 96, FKSV 99] x 1 x 2 x](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-7.jpg)

![Sketch Computations [GM 98, BCFM 98, FKSV 99] x 11 … x 1 k Sketch Computations [GM 98, BCFM 98, FKSV 99] x 11 … x 1 k](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-8.jpg)

![Thesis Blueprint lower bounds for general functions [BKS 01, B 02] Statistical Decision Theory Thesis Blueprint lower bounds for general functions [BKS 01, B 02] Statistical Decision Theory](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-10.jpg)

![Example: Mean Theorem (originally, [CEG 95]) Approximating the mean of n numbers in [0, Example: Mean Theorem (originally, [CEG 95]) Approximating the mean of n numbers in [0,](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-14.jpg)

![Communication Complexity [Yao 79] f: X Y Z $$ Alice x X $$ f(x, Communication Complexity [Yao 79] f: X Y Z $$ Alice x X $$ f(x,](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-19.jpg)

![Example: Set-disjointness t-party set-disjointness Pi gets Si [n], Theorem [KS 87, R 90]: Rd(Disj Example: Set-disjointness t-party set-disjointness Pi gets Si [n], Theorem [KS 87, R 90]: Rd(Disj](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-21.jpg)

![Restricted Communication Models One-Way Communication [PS 84, Ablayev 93, KNR 95] P 1 P Restricted Communication Models One-Way Communication [PS 84, Ablayev 93, KNR 95] P 1 P](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-22.jpg)

![Example: Disjointness Frequency Moments k-th frequency moment Input stream: a 1, …, am [n], Example: Disjointness Frequency Moments k-th frequency moment Input stream: a 1, …, am [n],](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-23.jpg)

![Information Cost [Ablayev 93, Chakrabarti et al. 01, Saks-Sun 02] For a protocol P Information Cost [Ablayev 93, Chakrabarti et al. 01, Saks-Sun 02] For a protocol P](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-26.jpg)

![Yao’s Lemma [Yao 83] Definition: m-distributional CC (Dm, d(f)) Complexity of best deterministic protocol Yao’s Lemma [Yao 83] Definition: m-distributional CC (Dm, d(f)) Complexity of best deterministic protocol](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-33.jpg)

- Slides: 38

The Complexity of Massive Data Set Computations Ziv Bar-Yossef Computer Science Division U. C. Berkeley Ph. D. Dissertation Talk May 6, 2002 1

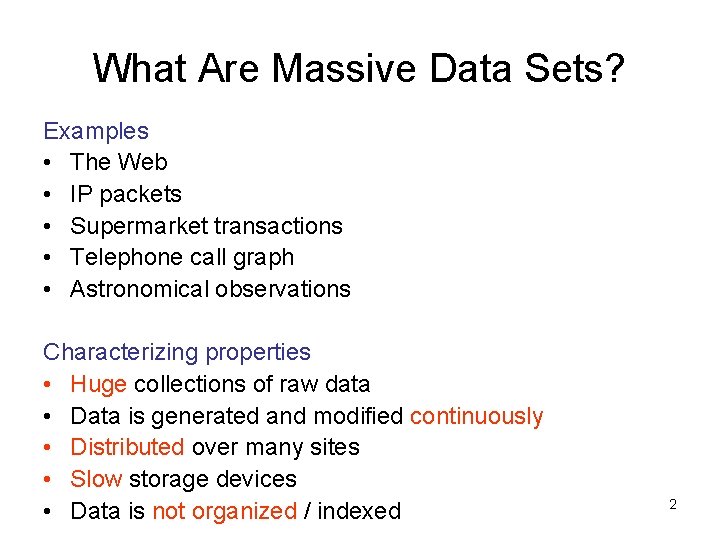

What Are Massive Data Sets? Examples • The Web • IP packets • Supermarket transactions • Telephone call graph • Astronomical observations Characterizing properties • Huge collections of raw data • Data is generated and modified continuously • Distributed over many sites • Slow storage devices • Data is not organized / indexed 2

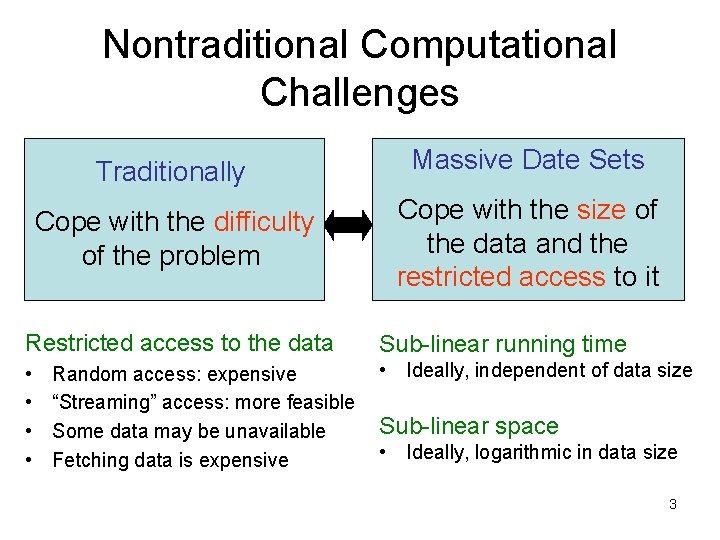

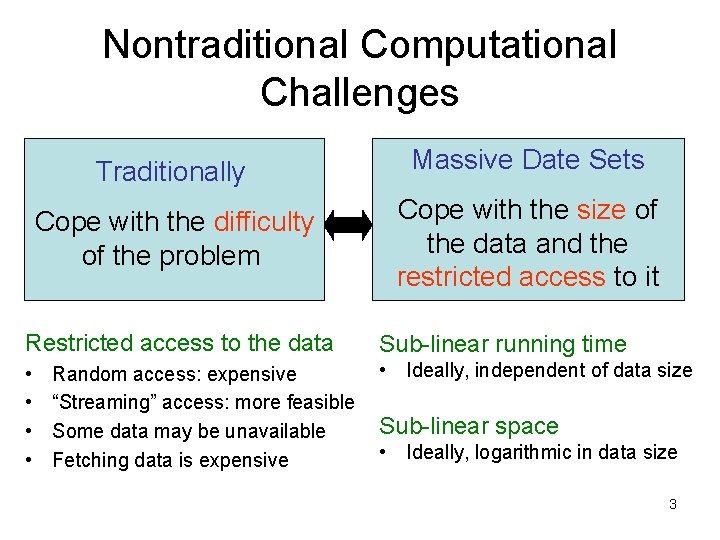

Nontraditional Computational Challenges Traditionally Massive Date Sets Cope with the difficulty of the problem Cope with the size of the data and the restricted access to it Restricted access to the data Sub-linear running time • • • Ideally, independent of data size Random access: expensive “Streaming” access: more feasible Some data may be unavailable Fetching data is expensive Sub-linear space • Ideally, logarithmic in data size 3

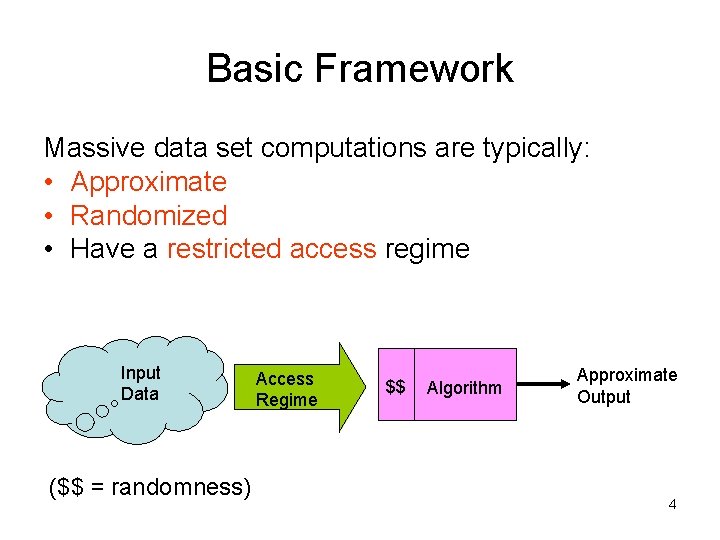

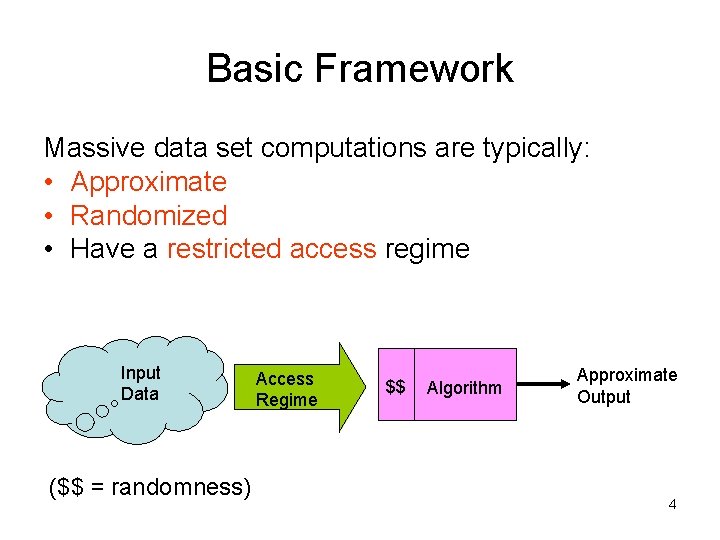

Basic Framework Massive data set computations are typically: • Approximate • Randomized • Have a restricted access regime Input Data ($$ = randomness) Access Regime $$ Algorithm Approximate Output 4

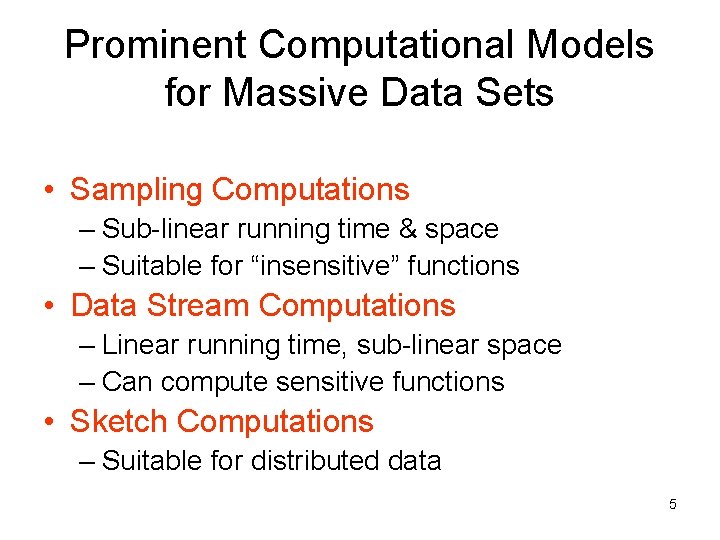

Prominent Computational Models for Massive Data Sets • Sampling Computations – Sub-linear running time & space – Suitable for “insensitive” functions • Data Stream Computations – Linear running time, sub-linear space – Can compute sensitive functions • Sketch Computations – Suitable for distributed data 5

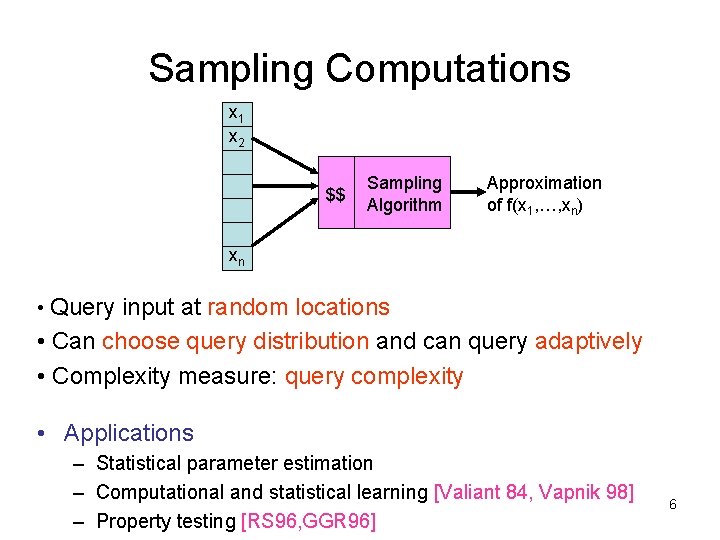

Sampling Computations x 1 x 2 $$ Sampling Algorithm Approximation of f(x 1, …, xn) xn • Query input at random locations • Can choose query distribution and can query adaptively • Complexity measure: query complexity • Applications – Statistical parameter estimation – Computational and statistical learning [Valiant 84, Vapnik 98] – Property testing [RS 96, GGR 96] 6

![Data Stream Computations HRR 98 AMS 96 FKSV 99 x 1 x 2 x Data Stream Computations [HRR 98, AMS 96, FKSV 99] x 1 x 2 x](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-7.jpg)

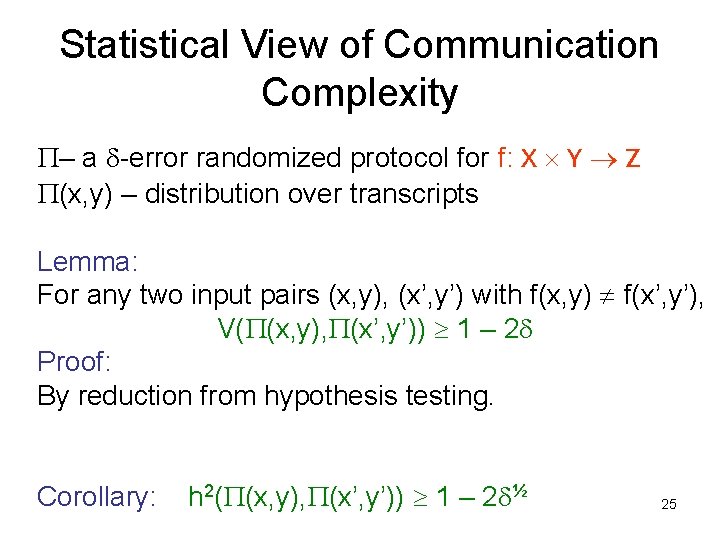

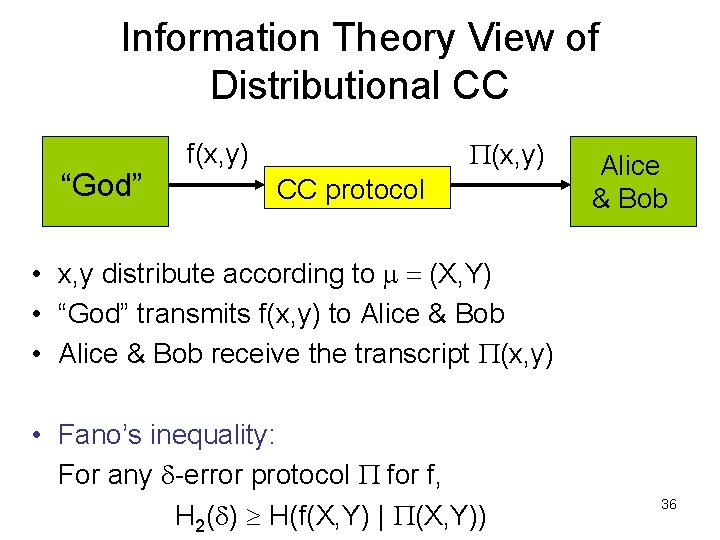

Data Stream Computations [HRR 98, AMS 96, FKSV 99] x 1 x 2 x 3 $$ Data Stream memory Algorithm xn Approximation of f(x 1, …, xn) • Input arrives in a one-way stream in arbitrary order • Complexity measures: space and time per data item • Applications – Database (Frequency moments [AMS 96]) – Networking (Lp distance [AMS 96, FKSV 99, FS 00, Indyk 00]) 7 – Web Information Retrieval (Web crawling, Google query logs [CCF 02])

![Sketch Computations GM 98 BCFM 98 FKSV 99 x 11 x 1 k Sketch Computations [GM 98, BCFM 98, FKSV 99] x 11 … x 1 k](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-8.jpg)

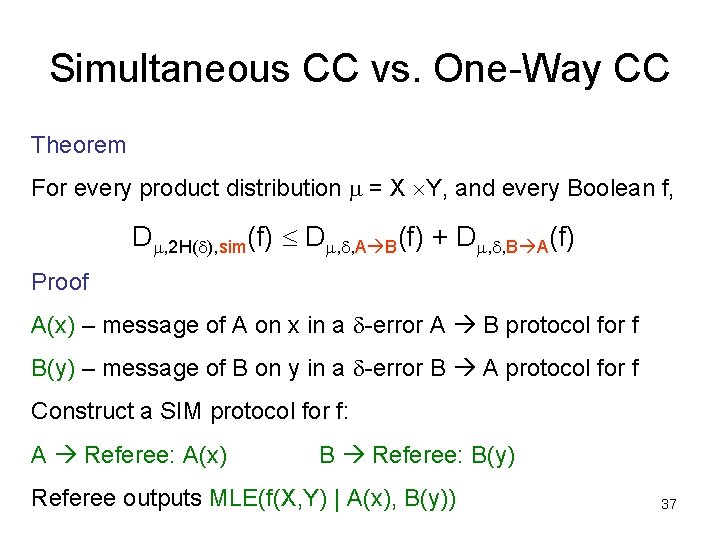

Sketch Computations [GM 98, BCFM 98, FKSV 99] x 11 … x 1 k x 21 … x 2 k compression $$ Sketch Algorithm xt 1 … xtk $$ compression Approximation of f(x 11, …, xtk) • Algorithm computes from data “sketches” sent from sites • Complexity measure: sketch lengths • Applications – Web Information Retrieval (Identifying document similarities [BCFM 98]) – Networking (Lp distance [FKSV 99]) – Lossy compression, approximate nearest neighbor 8

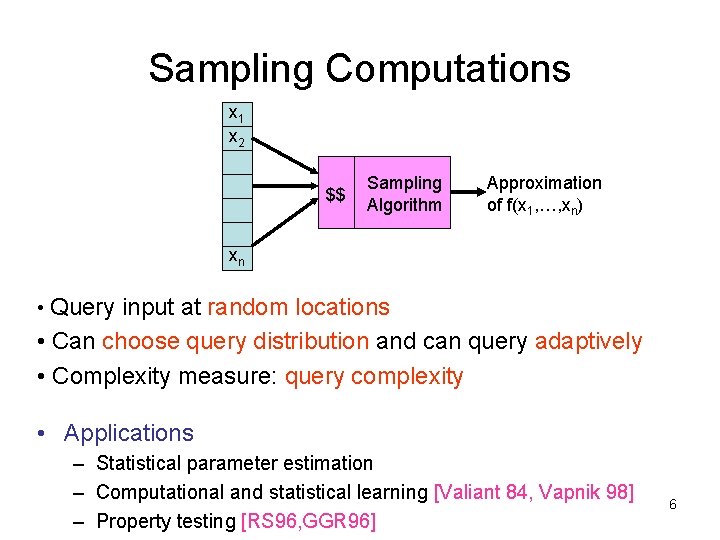

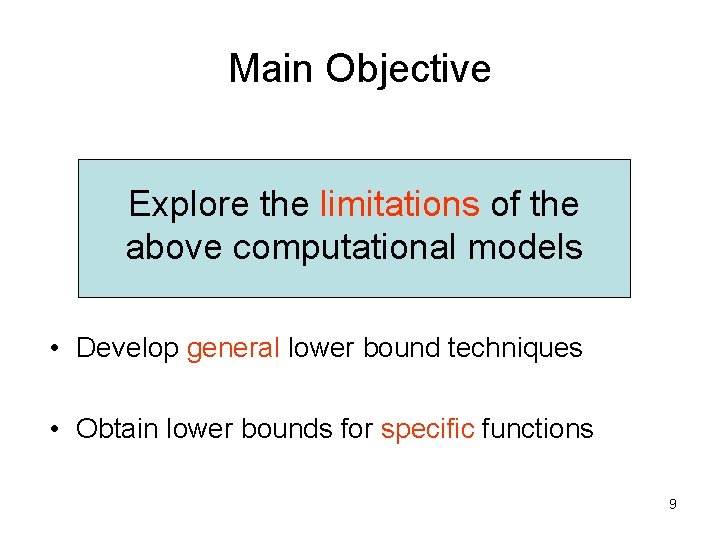

Main Objective Explore the limitations of the above computational models • Develop general lower bound techniques • Obtain lower bounds for specific functions 9

![Thesis Blueprint lower bounds for general functions BKS 01 B 02 Statistical Decision Theory Thesis Blueprint lower bounds for general functions [BKS 01, B 02] Statistical Decision Theory](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-10.jpg)

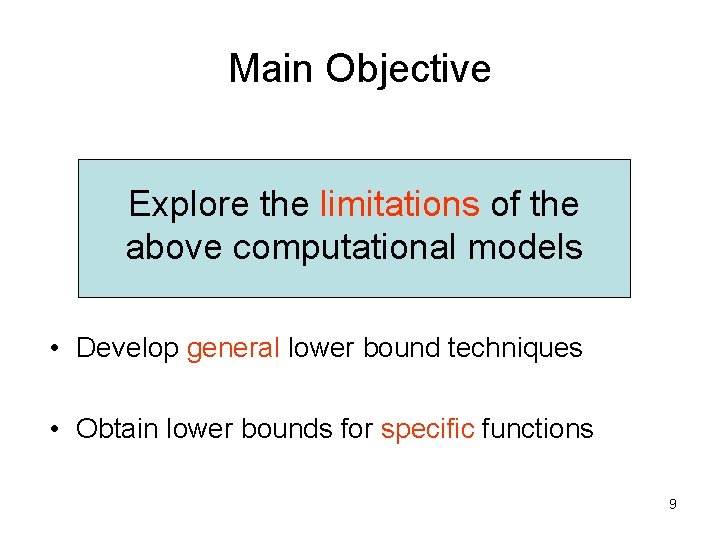

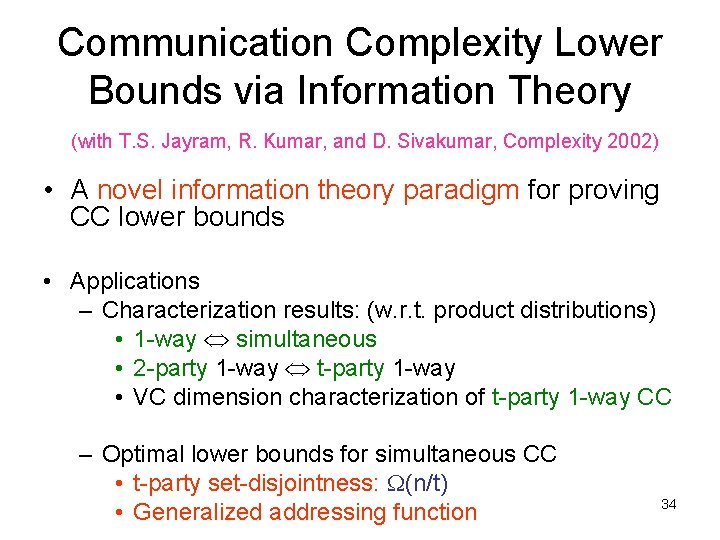

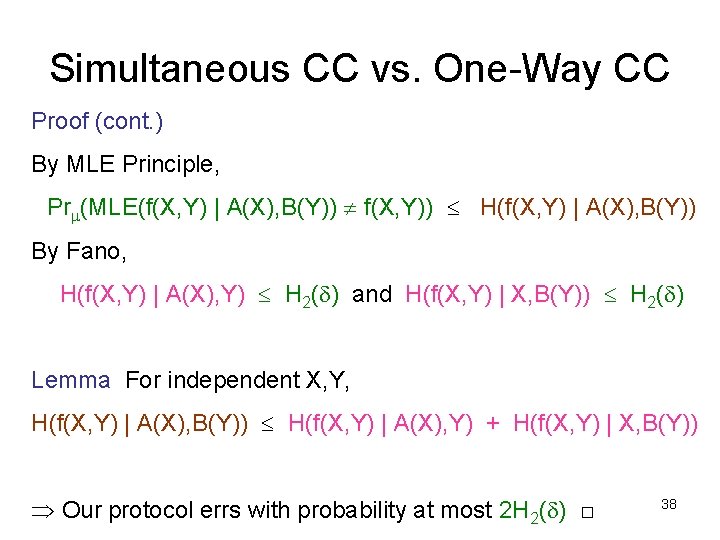

Thesis Blueprint lower bounds for general functions [BKS 01, B 02] Statistical Decision Theory General CC lower bounds [BJKS 02 b] Reduction from simultaneous CC Communication Complexity Sampling Computations Sketch Computations Reduction from one-way CC Information Theory One-way and simultaneous CC lower bounds [BJKS 02 a] Data Stream Computations 10

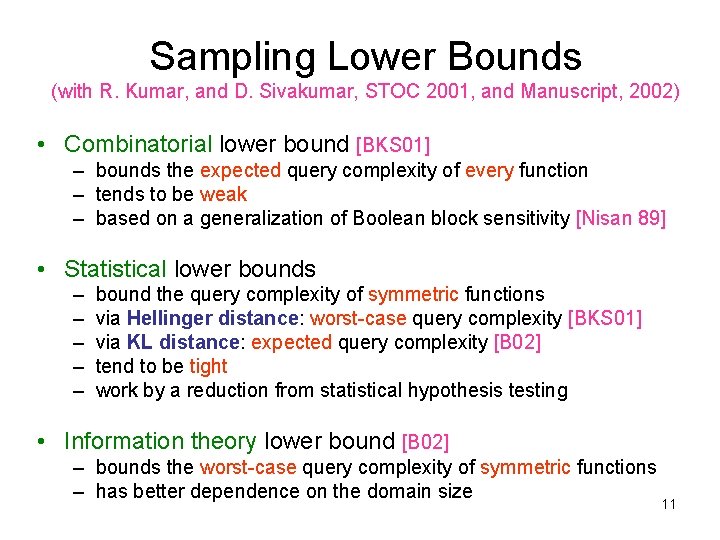

Sampling Lower Bounds (with R. Kumar, and D. Sivakumar, STOC 2001, and Manuscript, 2002) • Combinatorial lower bound [BKS 01] – bounds the expected query complexity of every function – tends to be weak – based on a generalization of Boolean block sensitivity [Nisan 89] • Statistical lower bounds – – – bound the query complexity of symmetric functions via Hellinger distance: worst-case query complexity [BKS 01] via KL distance: expected query complexity [B 02] tend to be tight work by a reduction from statistical hypothesis testing • Information theory lower bound [B 02] – bounds the worst-case query complexity of symmetric functions – has better dependence on the domain size 11

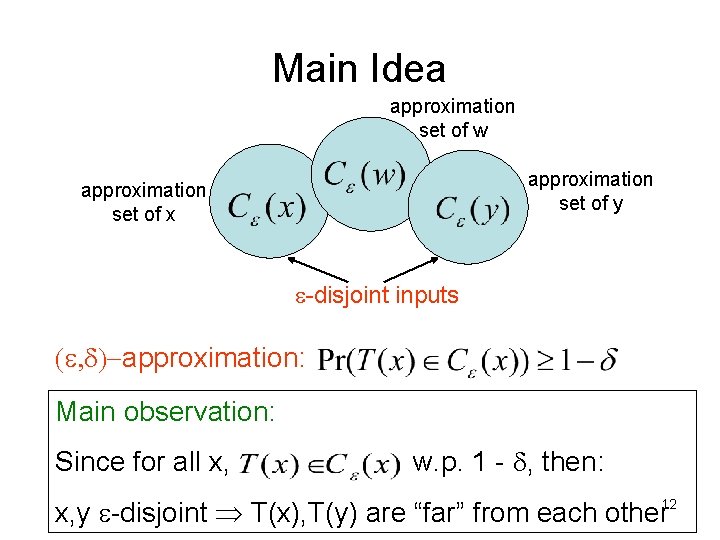

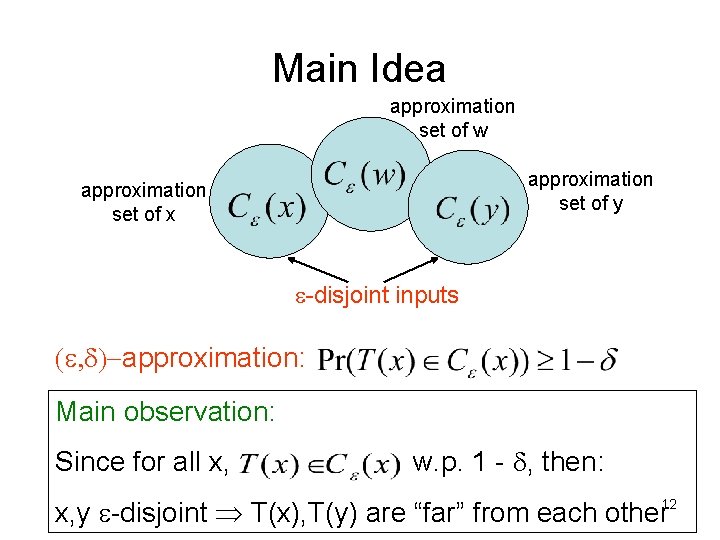

Main Idea approximation set of w approximation set of y approximation set of x e-disjoint inputs (e, d)-approximation: Main observation: Since for all x, w. p. 1 - d, then: x, y e-disjoint T(x), T(y) are “far” from each other 12

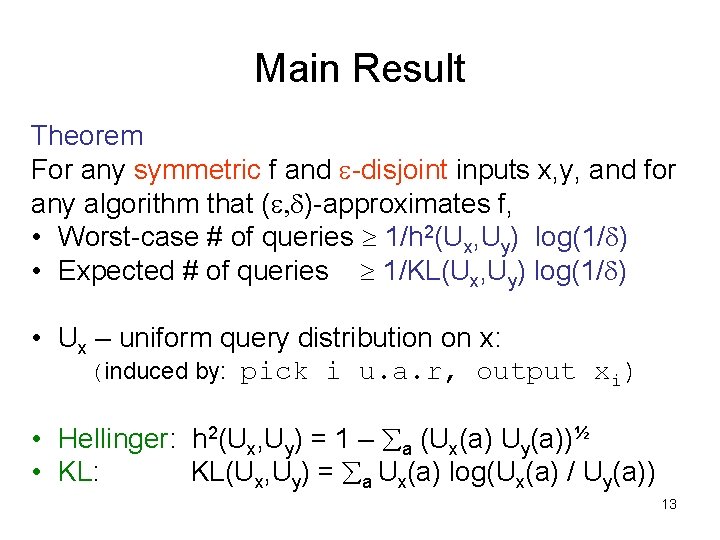

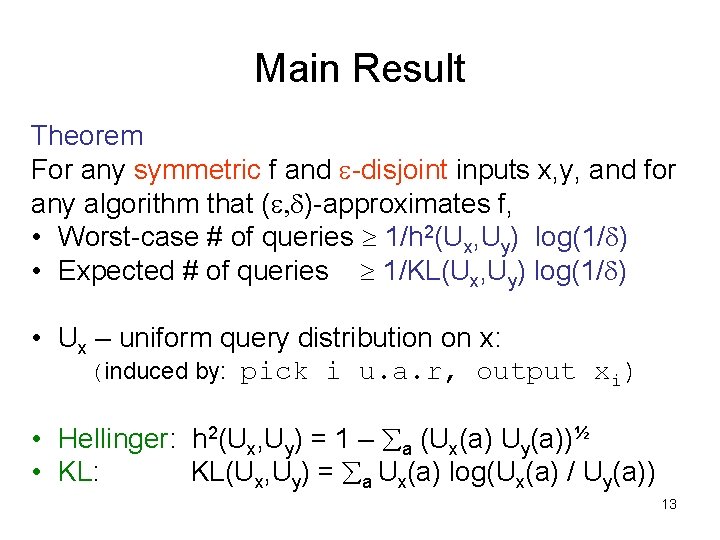

Main Result Theorem For any symmetric f and e-disjoint inputs x, y, and for any algorithm that (e, d)-approximates f, • Worst-case # of queries 1/h 2(Ux, Uy) log(1/d) • Expected # of queries 1/KL(Ux, Uy) log(1/d) • Ux – uniform query distribution on x: (induced by: pick i u. a. r, output xi) • Hellinger: h 2(Ux, Uy) = 1 – a (Ux(a) Uy(a))½ • KL: KL(Ux, Uy) = a Ux(a) log(Ux(a) / Uy(a)) 13

![Example Mean Theorem originally CEG 95 Approximating the mean of n numbers in 0 Example: Mean Theorem (originally, [CEG 95]) Approximating the mean of n numbers in [0,](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-14.jpg)

Example: Mean Theorem (originally, [CEG 95]) Approximating the mean of n numbers in [0, 1] to within e additive error requires W(1/e 2 log(1/d)) queries. X: ½+e 1 ½-e 0 y: ½-e 1 ½+e 0 h 2(Ux, Uy) = KL(Ux, Uy) = O(e 2) Other applications: Selection functions, frequency 14 moments, extractors and dispersers

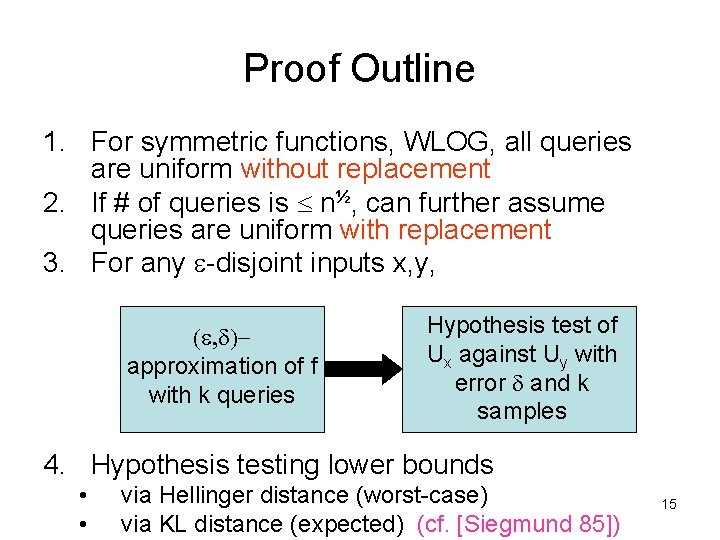

Proof Outline 1. For symmetric functions, WLOG, all queries are uniform without replacement 2. If # of queries is n½, can further assume queries are uniform with replacement 3. For any e-disjoint inputs x, y, (e, d)approximation of f with k queries Hypothesis test of Ux against Uy with error d and k samples 4. Hypothesis testing lower bounds • • via Hellinger distance (worst-case) via KL distance (expected) (cf. [Siegmund 85]) 15

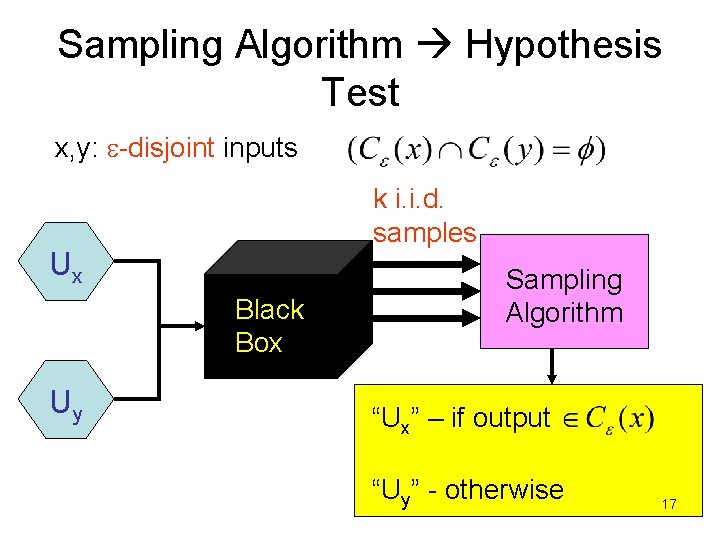

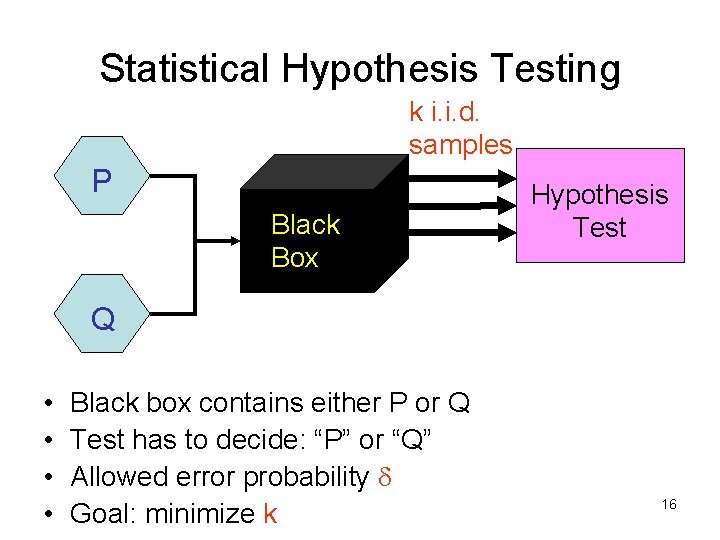

Statistical Hypothesis Testing k i. i. d. samples P Black Box Hypothesis Test Q • • Black box contains either P or Q Test has to decide: “P” or “Q” Allowed error probability d Goal: minimize k 16

Sampling Algorithm Hypothesis Test x, y: e-disjoint inputs k i. i. d. samples Ux Black Box Uy Sampling Algorithm “Ux” – if output “Uy” - otherwise 17

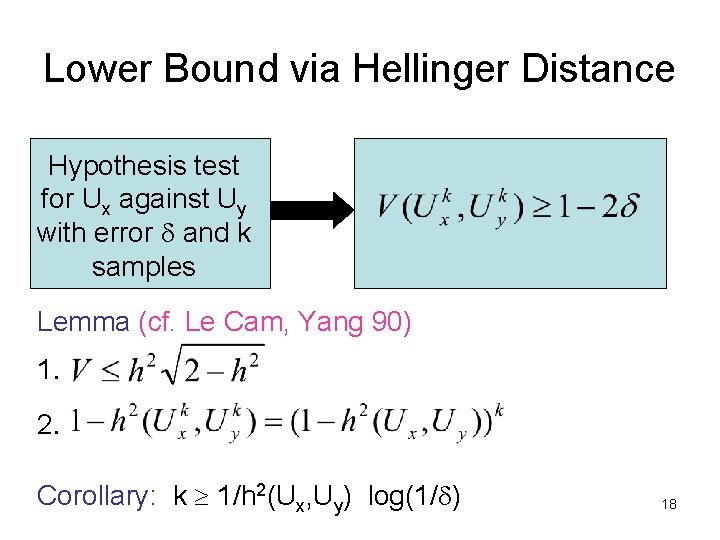

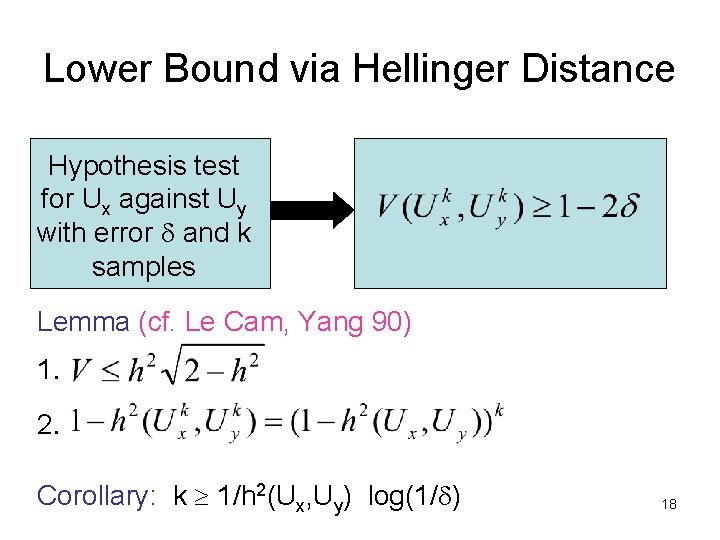

Lower Bound via Hellinger Distance Hypothesis test for Ux against Uy with error d and k samples Lemma (cf. Le Cam, Yang 90) 1. 2. Corollary: k 1/h 2(Ux, Uy) log(1/d) 18

![Communication Complexity Yao 79 f X Y Z Alice x X fx Communication Complexity [Yao 79] f: X Y Z $$ Alice x X $$ f(x,](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-19.jpg)

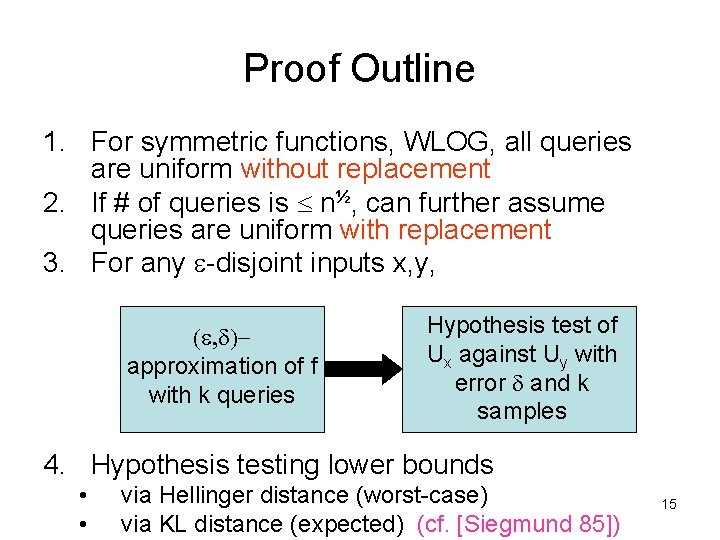

Communication Complexity [Yao 79] f: X Y Z $$ Alice x X $$ f(x, y) Bob y Y Rd(f) = randomized CC of f with error d 19

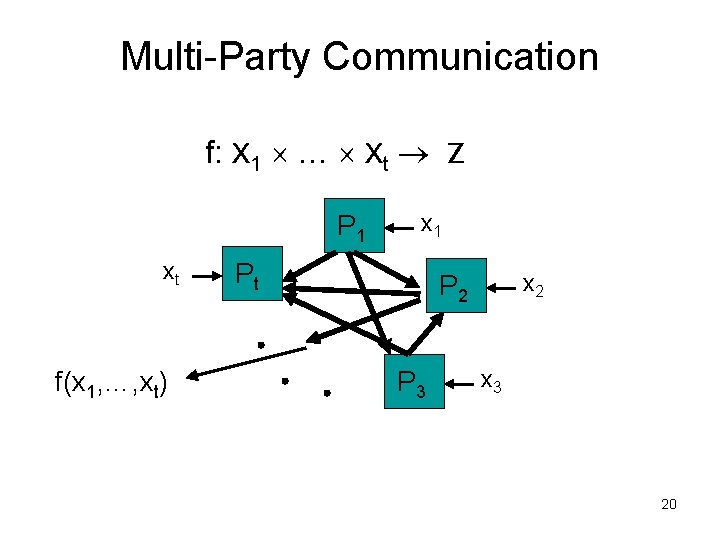

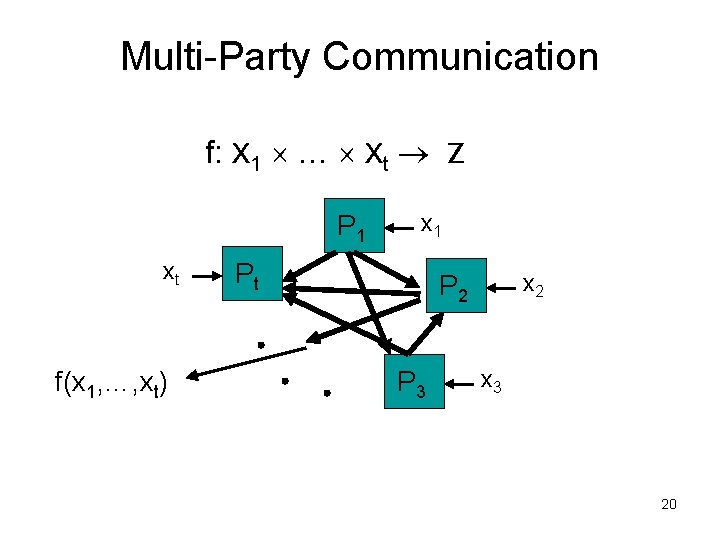

Multi-Party Communication f: X 1 … Xt Z P 1 xt f(x 1, …, xt) x 1 Pt x 2 P 3 x 3 20

![Example Setdisjointness tparty setdisjointness Pi gets Si n Theorem KS 87 R 90 RdDisj Example: Set-disjointness t-party set-disjointness Pi gets Si [n], Theorem [KS 87, R 90]: Rd(Disj](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-21.jpg)

Example: Set-disjointness t-party set-disjointness Pi gets Si [n], Theorem [KS 87, R 90]: Rd(Disj 2) = W(n) Theorem [AMS 96]: Rd(Disjt) = W(n/t 4) Best upper bound: Rd(Disjt) = O(n/t) 21

![Restricted Communication Models OneWay Communication PS 84 Ablayev 93 KNR 95 P 1 P Restricted Communication Models One-Way Communication [PS 84, Ablayev 93, KNR 95] P 1 P](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-22.jpg)

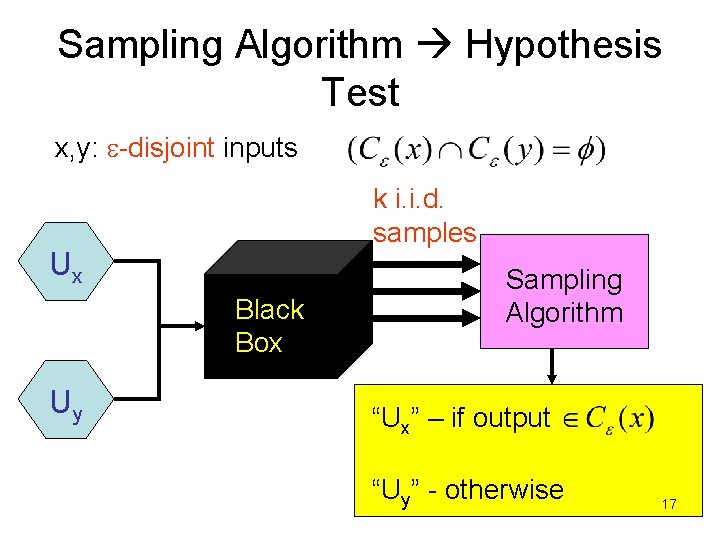

Restricted Communication Models One-Way Communication [PS 84, Ablayev 93, KNR 95] P 1 P 2 Pt f(x 1, …, xt) • Reduces to data stream computations Simultaneous Communication [Yao 79] P 1 P 2 Pt Referee f(x 1, …, xt) • Reduces to sketch computations 22

![Example Disjointness Frequency Moments kth frequency moment Input stream a 1 am n Example: Disjointness Frequency Moments k-th frequency moment Input stream: a 1, …, am [n],](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-23.jpg)

Example: Disjointness Frequency Moments k-th frequency moment Input stream: a 1, …, am [n], For j [n], fj = # of occurrences of j in a 1, …, am Fk(a 1, …, am) = j [n] (fj)k Theorem [AMS 96]: Corollary: DS(Fk) = n. W(1), k > 5 Best upper bounds: DS(Fk) = n. O(1), k > 2 DS(Fk) = O(log n), k=0, 1, 2 23

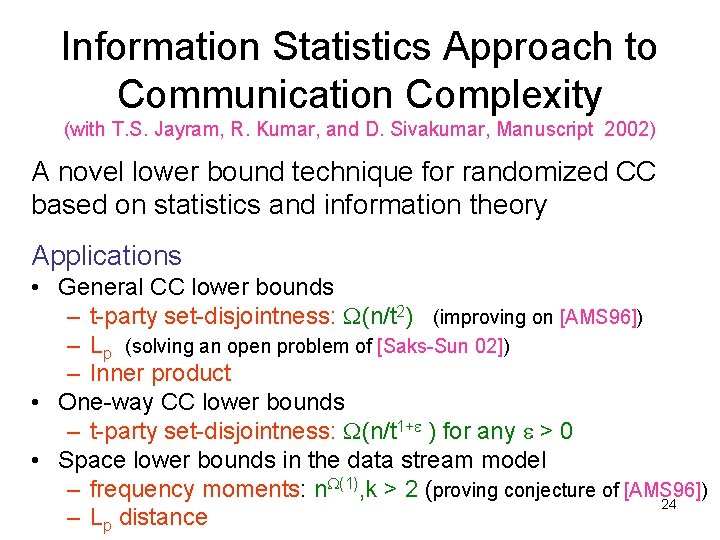

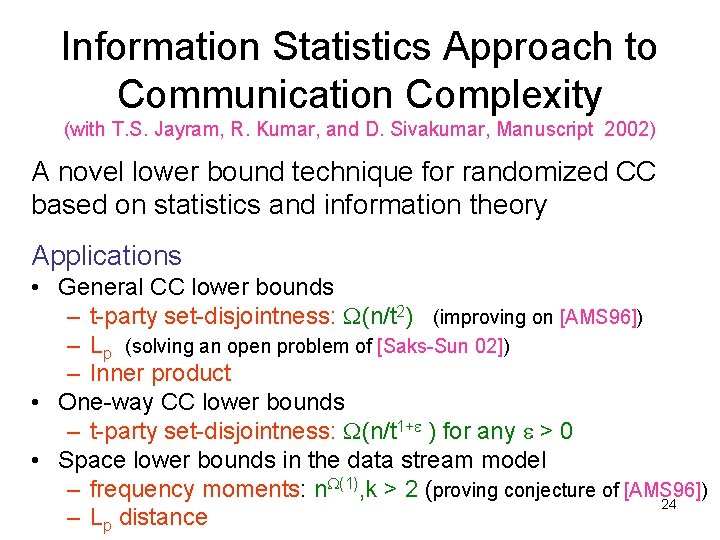

Information Statistics Approach to Communication Complexity (with T. S. Jayram, R. Kumar, and D. Sivakumar, Manuscript 2002) A novel lower bound technique for randomized CC based on statistics and information theory Applications • General CC lower bounds – t-party set-disjointness: W(n/t 2) (improving on [AMS 96]) – Lp (solving an open problem of [Saks-Sun 02]) – Inner product • One-way CC lower bounds – t-party set-disjointness: W(n/t 1+e ) for any e > 0 • Space lower bounds in the data stream model – frequency moments: n. W(1), k > 2 (proving conjecture of [AMS 96]) 24 – Lp distance

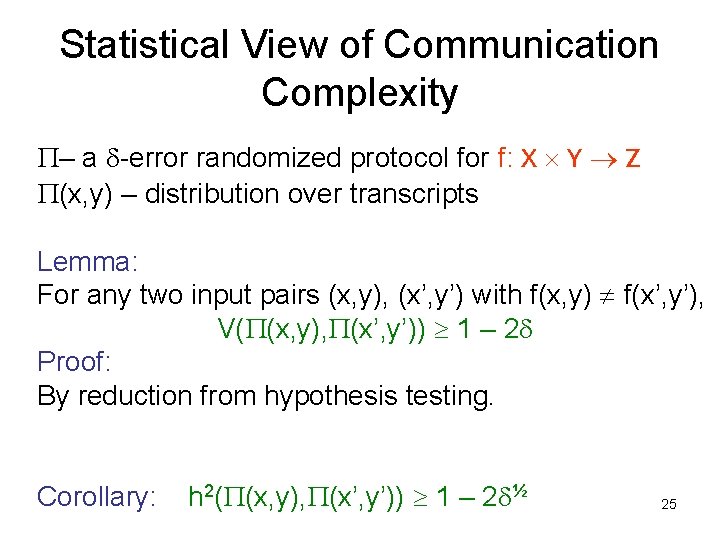

Statistical View of Communication Complexity P– a d-error randomized protocol for f: X Y Z P(x, y) – distribution over transcripts Lemma: For any two input pairs (x, y), (x’, y’) with f(x, y) f(x’, y’), V(P(x, y), P(x’, y’)) 1 – 2 d Proof: By reduction from hypothesis testing. Corollary: h 2(P(x, y), P(x’, y’)) 1 – 2 d½ 25

![Information Cost Ablayev 93 Chakrabarti et al 01 SaksSun 02 For a protocol P Information Cost [Ablayev 93, Chakrabarti et al. 01, Saks-Sun 02] For a protocol P](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-26.jpg)

Information Cost [Ablayev 93, Chakrabarti et al. 01, Saks-Sun 02] For a protocol P that computes f, how much information does P(x, y) have to reveal about (x, y)? m = (X, Y) – a distribution over inputs of f Definition: m-information cost icostm(P) = I(X, Y ; P(X, Y)) icostm, d(f) = min. P{icostm(P)} I(X, Y ; P(X, Y)) H(P(X, Y)) |P(X, Y)| Information cost lower bound CC lower bound 26

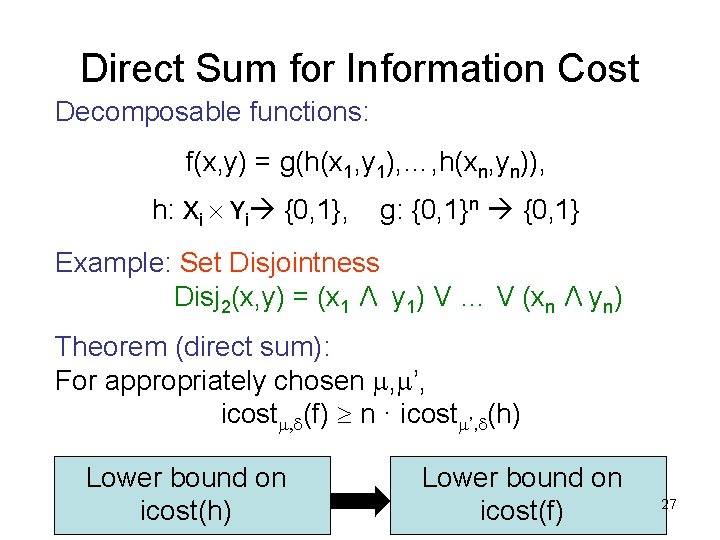

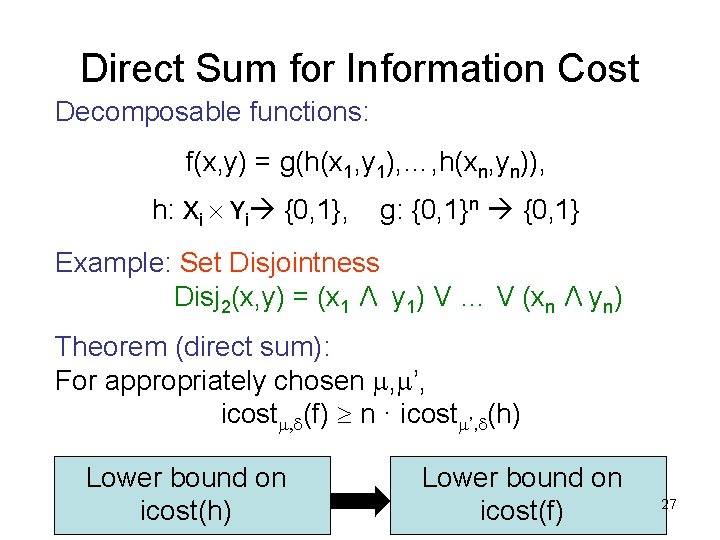

Direct Sum for Information Cost Decomposable functions: f(x, y) = g(h(x 1, y 1), …, h(xn, yn)), h: Xi Yi {0, 1}, g: {0, 1}n {0, 1} Example: Set Disjointness Disj 2(x, y) = (x 1 Λ y 1) V … V (xn Λ yn) Theorem (direct sum): For appropriately chosen m, m’, icostm, d(f) n · icostm’, d(h) Lower bound on icost(f) 27

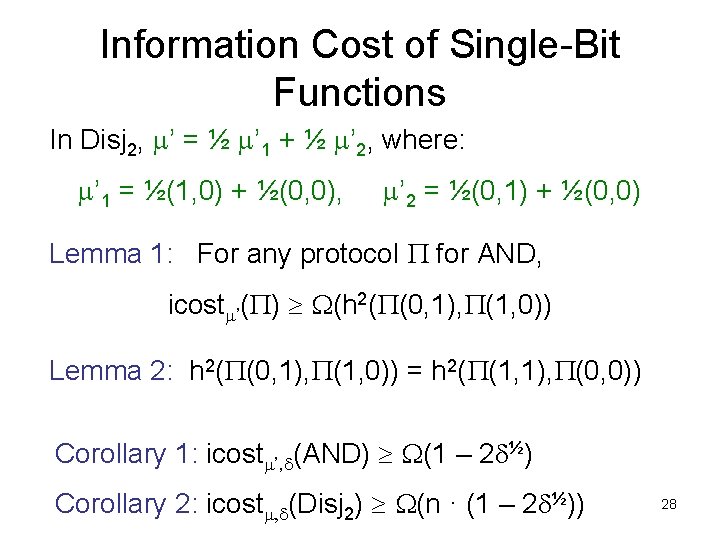

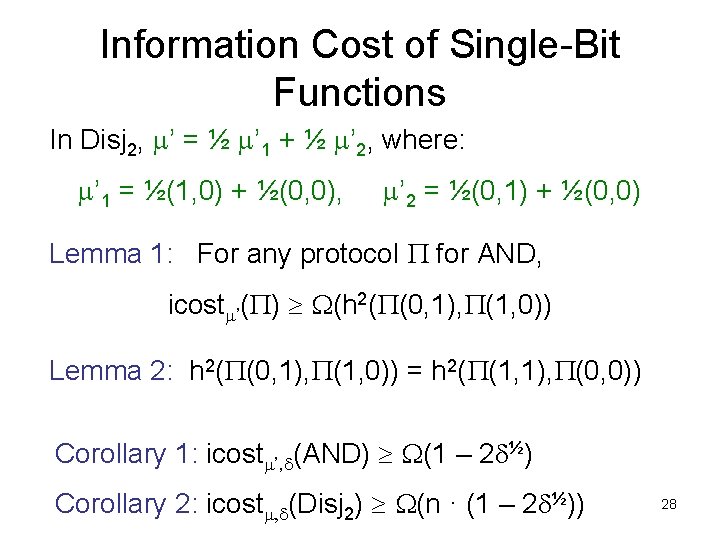

Information Cost of Single-Bit Functions In Disj 2, m’ = ½ m’ 1 + ½ m’ 2, where: m’ 1 = ½(1, 0) + ½(0, 0), m’ 2 = ½(0, 1) + ½(0, 0) Lemma 1: For any protocol P for AND, icostm’(P) W(h 2(P(0, 1), P(1, 0)) Lemma 2: h 2(P(0, 1), P(1, 0)) = h 2(P(1, 1), P(0, 0)) Corollary 1: icostm’, d(AND) W(1 – 2 d½) Corollary 2: icostm, d(Disj 2) W(n · (1 – 2 d½)) 28

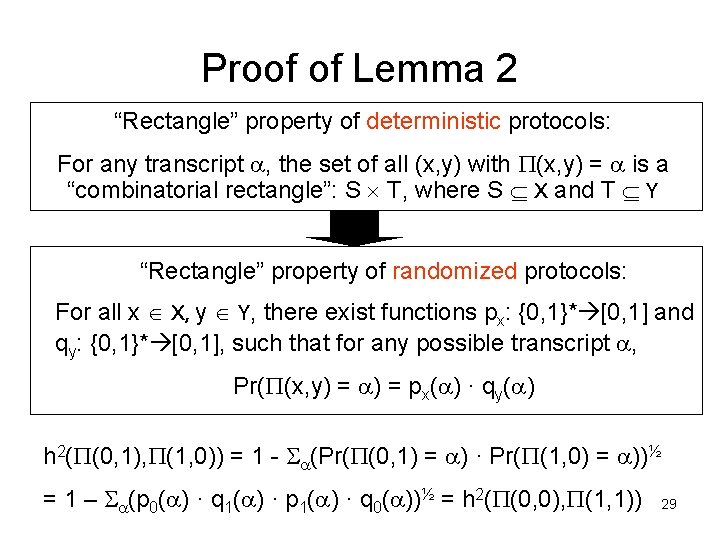

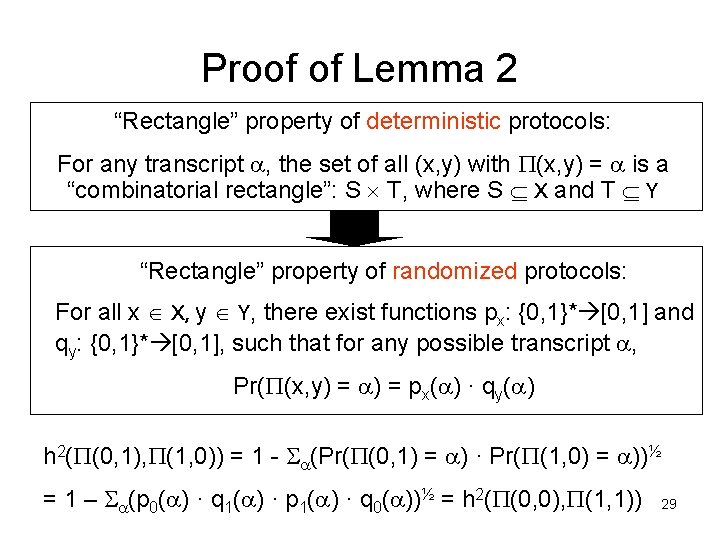

Proof of Lemma 2 “Rectangle” property of deterministic protocols: For any transcript a, the set of all (x, y) with P(x, y) = a is a “combinatorial rectangle”: S T, where S X and T Y “Rectangle” property of randomized protocols: For all x X, y Y, there exist functions px: {0, 1}* [0, 1] and qy: {0, 1}* [0, 1], such that for any possible transcript a, Pr(P(x, y) = a) = px(a) · qy(a) h 2(P(0, 1), P(1, 0)) = 1 - Sa(Pr(P(0, 1) = a) · Pr(P(1, 0) = a))½ = 1 – Sa(p 0(a) · q 1(a) · p 1(a) · q 0(a))½ = h 2(P(0, 0), P(1, 1)) 29

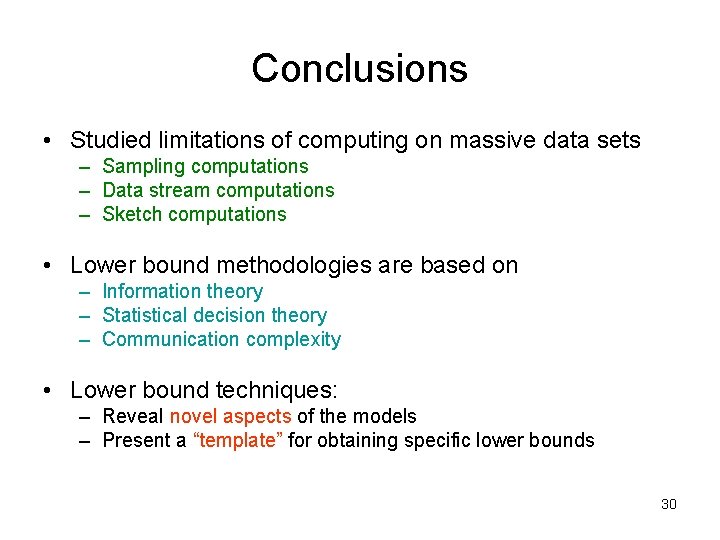

Conclusions • Studied limitations of computing on massive data sets – Sampling computations – Data stream computations – Sketch computations • Lower bound methodologies are based on – Information theory – Statistical decision theory – Communication complexity • Lower bound techniques: – Reveal novel aspects of the models – Present a “template” for obtaining specific lower bounds 30

Open Problems • Sampling – Lower bounds for non-symmetric functions – Property testing lower bounds • Communication complexity – Study the communication complexity of approximations – Tight lower bound for t-party set disjointness – Under what circumstances are one-way and simultaneous communication equivalent? 31

Thank You! 32

![Yaos Lemma Yao 83 Definition mdistributional CC Dm df Complexity of best deterministic protocol Yao’s Lemma [Yao 83] Definition: m-distributional CC (Dm, d(f)) Complexity of best deterministic protocol](https://slidetodoc.com/presentation_image/b6113f0278bac24b7beb01be716ef473/image-33.jpg)

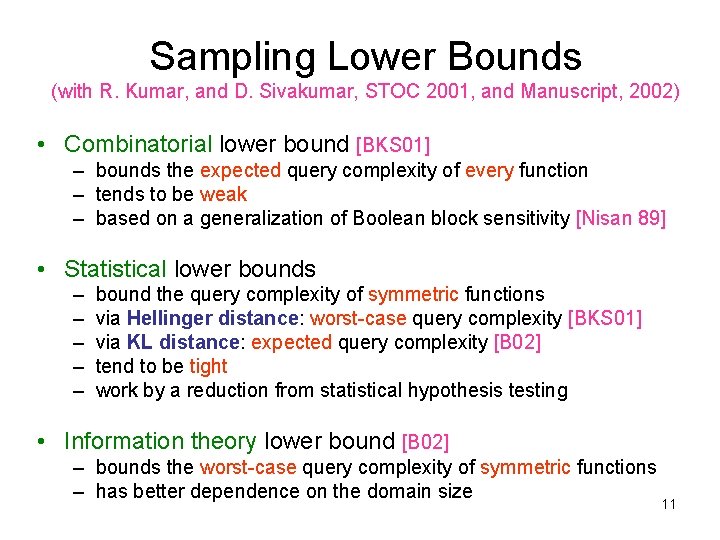

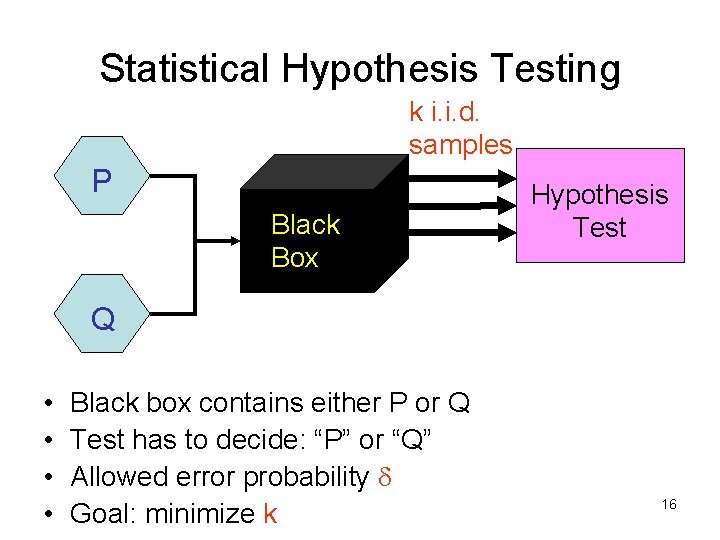

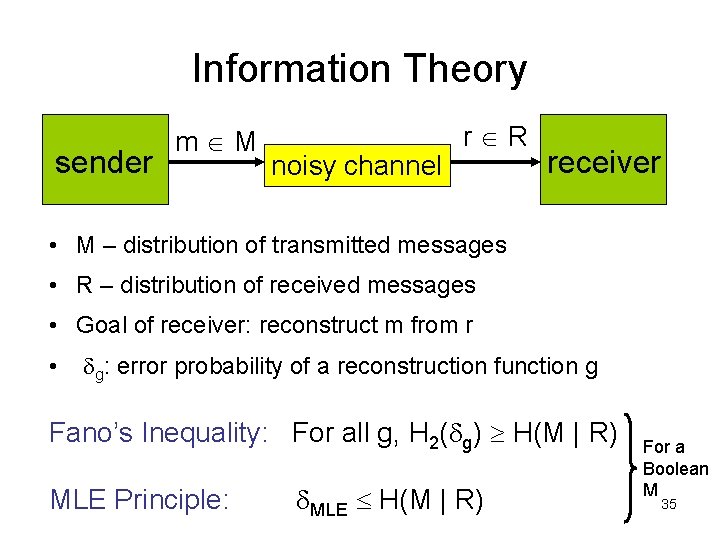

Yao’s Lemma [Yao 83] Definition: m-distributional CC (Dm, d(f)) Complexity of best deterministic protocol that computes f with error d on inputs drawn according to m Yao’s Lemma: Rd(f) maxm. Dm, d(f) • Convenient technique to prove randomized CC lower bounds 33

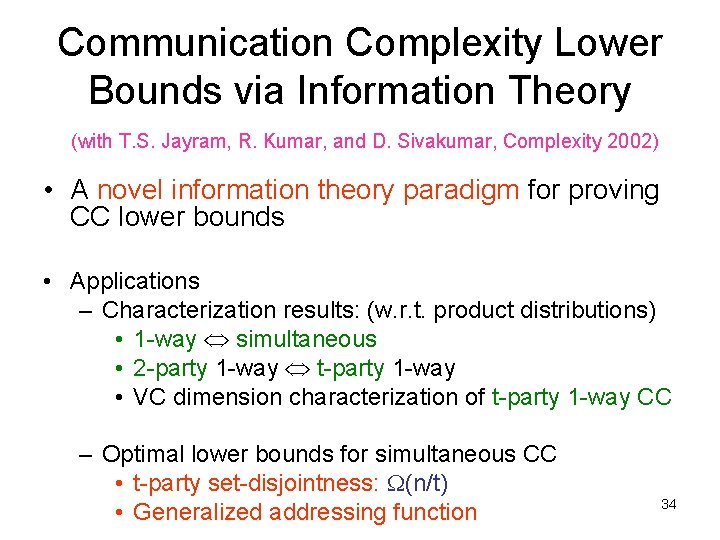

Communication Complexity Lower Bounds via Information Theory (with T. S. Jayram, R. Kumar, and D. Sivakumar, Complexity 2002) • A novel information theory paradigm for proving CC lower bounds • Applications – Characterization results: (w. r. t. product distributions) • 1 -way simultaneous • 2 -party 1 -way t-party 1 -way • VC dimension characterization of t-party 1 -way CC – Optimal lower bounds for simultaneous CC • t-party set-disjointness: W(n/t) • Generalized addressing function 34

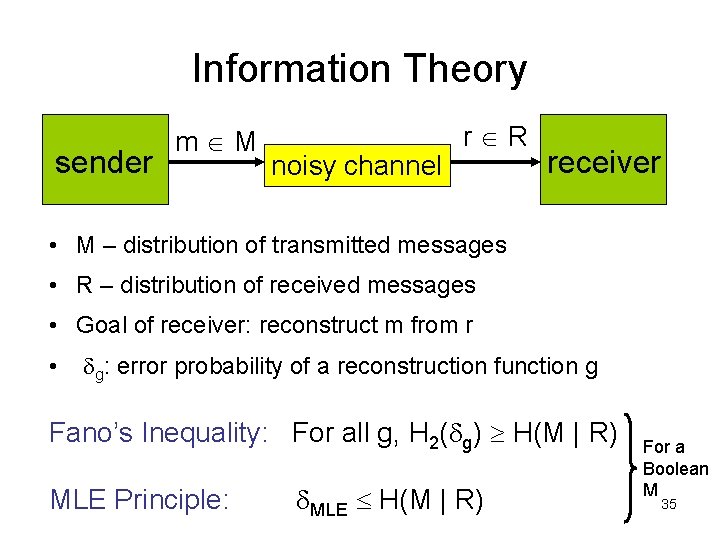

Information Theory sender m M noisy channel r R receiver • M – distribution of transmitted messages • R – distribution of received messages • Goal of receiver: reconstruct m from r • dg: error probability of a reconstruction function g Fano’s Inequality: For all g, H 2(dg) H(M | R) MLE Principle: d. MLE H(M | R) For a Boolean M 35

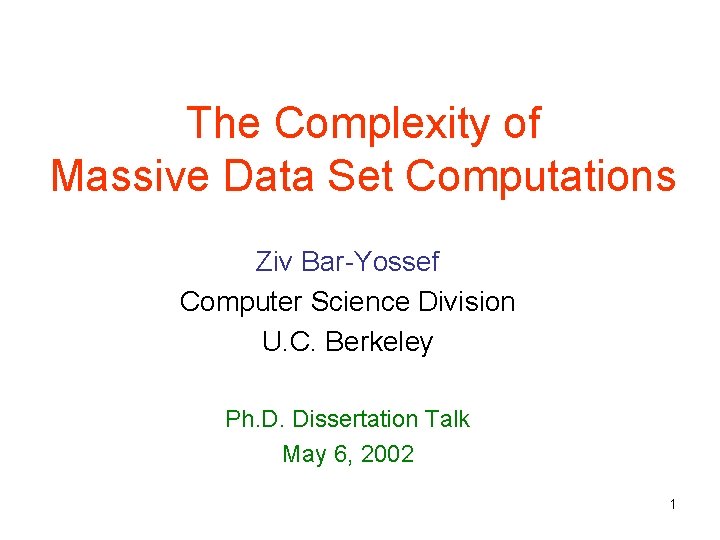

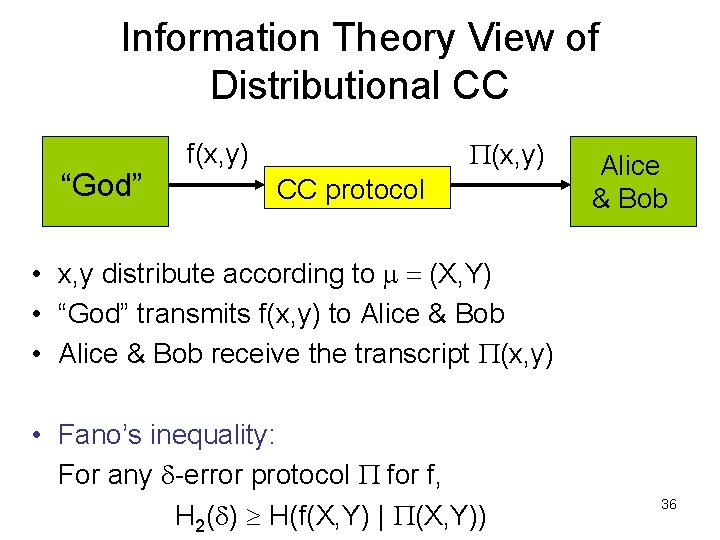

Information Theory View of Distributional CC “God” P(x, y) f(x, y) CC protocol Alice & Bob • x, y distribute according to m = (X, Y) • “God” transmits f(x, y) to Alice & Bob • Alice & Bob receive the transcript P(x, y) • Fano’s inequality: For any d-error protocol P for f, H 2(d) H(f(X, Y) | P(X, Y)) 36

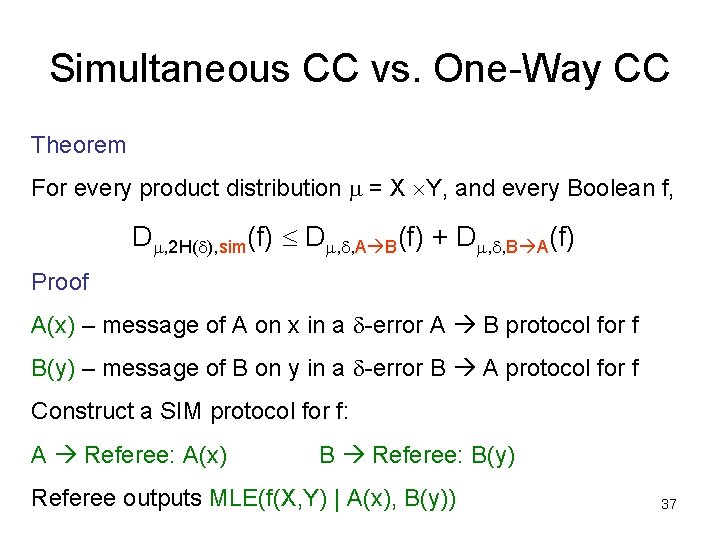

Simultaneous CC vs. One-Way CC Theorem For every product distribution m = X Y, and every Boolean f, Dm, 2 H(d), sim(f) Dm, d, A B(f) + Dm, d, B A(f) Proof A(x) – message of A on x in a d-error A B protocol for f B(y) – message of B on y in a d-error B A protocol for f Construct a SIM protocol for f: A Referee: A(x) B Referee: B(y) Referee outputs MLE(f(X, Y) | A(x), B(y)) 37

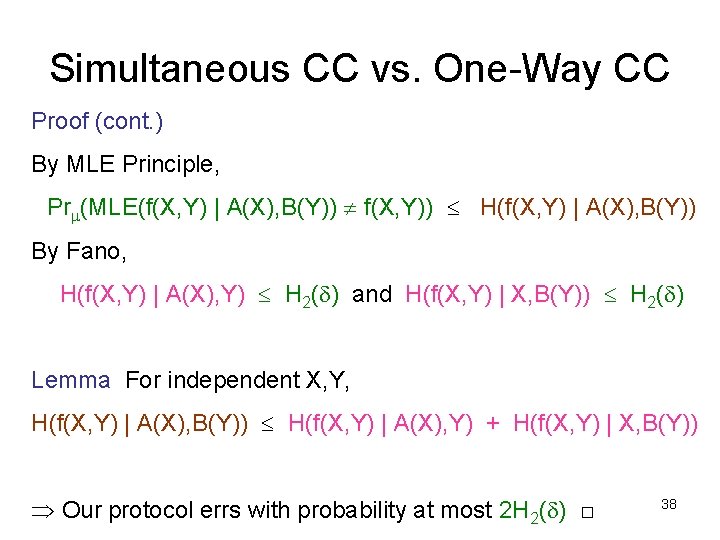

Simultaneous CC vs. One-Way CC Proof (cont. ) By MLE Principle, Prm(MLE(f(X, Y) | A(X), B(Y)) f(X, Y)) H(f(X, Y) | A(X), B(Y)) By Fano, H(f(X, Y) | A(X), Y) H 2(d) and H(f(X, Y) | X, B(Y)) H 2(d) Lemma For independent X, Y, H(f(X, Y) | A(X), B(Y)) H(f(X, Y) | A(X), Y) + H(f(X, Y) | X, B(Y)) Our protocol errs with probability at most 2 H 2(d) □ 38