The Check-Pointed and Error-Recoverable MPI Java of AgentTeamwork Grid Computing Middleware

Munehiro Fukuda and Zhiji Huang
Computing & Software Systems, University of Washington, Bothell
8/25/2005, IEEE PacRim 2005

Background

- Target applications in most grid-computing systems
  - Communication takes place at the beginning and the end of each sub task.
  - A crashed sub task is simply repeated.
  - Example: master-worker and parameter-sweep models
- Fault tolerance
  - FT-MPI or MPI in Legion/Avaki
    - The system will not recover lost messages.
    - Users must specify variables to save and add a function called from MPI.Init( ) upon a job resumption.
  - Condor MW
    - Messages between the master and each slave will be saved.
    - No inter-slave communication will be check-pointed.
  - Rock/Rack
    - Socket buffers will be saved at application level.
    - A process must be mobile-aware to keep track of its communication counterpart.

Objective

- More programming models
  - Not restricted to master-slave or parameter sweep
  - Targeting heartbeat, pipeline, and collective-communication-oriented applications
- Process resumption in its middle
  - Resuming a process from the last checkpoint
  - Allowing process migration for performance improvement
- Error-recovery support from sockets to MPI
  - Facilitating a check-pointed, error-recoverable Java socket
  - Implementing the mpiJava API with our fault-tolerant socket

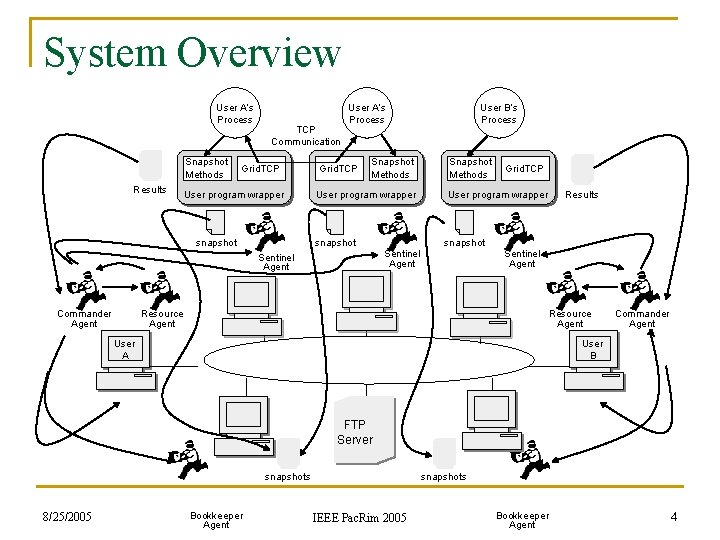

System Overview

(Figure labels: User A's and User B's processes, each with a user program wrapper, snapshot methods, and GridTcp; TCP communication between processes; sentinel agents; commander agents for User A and User B; a resource agent; bookkeeper agents holding snapshots; an FTP server; snapshots and results.)

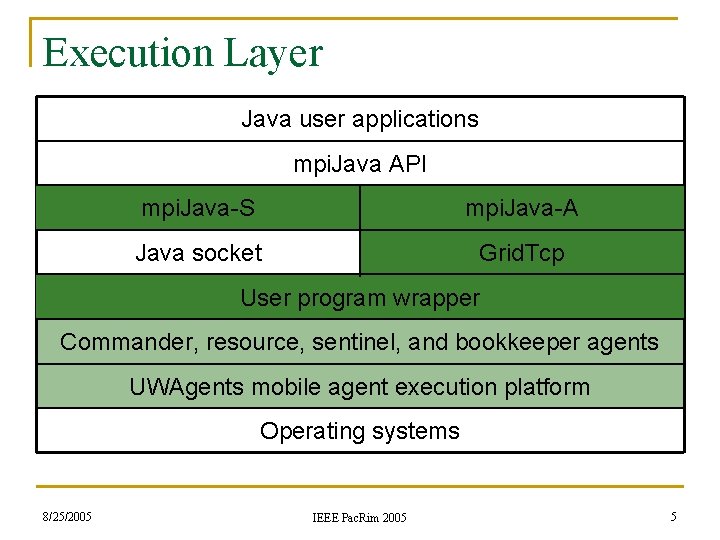

Execution Layer

Layers, from top to bottom:

- Java user applications
- mpiJava API
- mpiJava-S and mpiJava-A
- Java socket (under mpiJava-S) and GridTcp (under mpiJava-A)
- User program wrapper
- Commander, resource, sentinel, and bookkeeper agents
- UWAgents mobile agent execution platform
- Operating systems

Programming Interface

    public class MyApplication {
        public GridIpEntry ipEntry[];           // used by the GridTcp socket library
        public int funcId;                      // used by the user program wrapper
        public GridTcp tcp;                     // the GridTcp error-recoverable socket
        public int nprocess;                    // #processors
        public int myRank;                      // processor id (or mpi rank)

        public int func_0( String args[] ) {    // constructor
            MPJ.Init( args, ipEntry, tcp );     // invoke mpiJava-A
            . . . ;                             // more statements to be inserted
            return 1;                           // calls func_1( )
        }
        public int func_1( ) {                  // called from func_0
            if ( MPJ.COMM_WORLD.Rank( ) == 0 )
                MPJ.COMM_WORLD.Send( . . . );
            else
                MPJ.COMM_WORLD.Recv( . . . );
            . . . ;                             // more statements to be inserted
            return 2;                           // calls func_2( )
        }
        public int func_2( ) {                  // called from func_1, the last function
            . . . ;                             // more statements to be inserted
            MPJ.finalize( );                    // stops mpiJava-A
            return -2;                          // application terminated
        }
    }

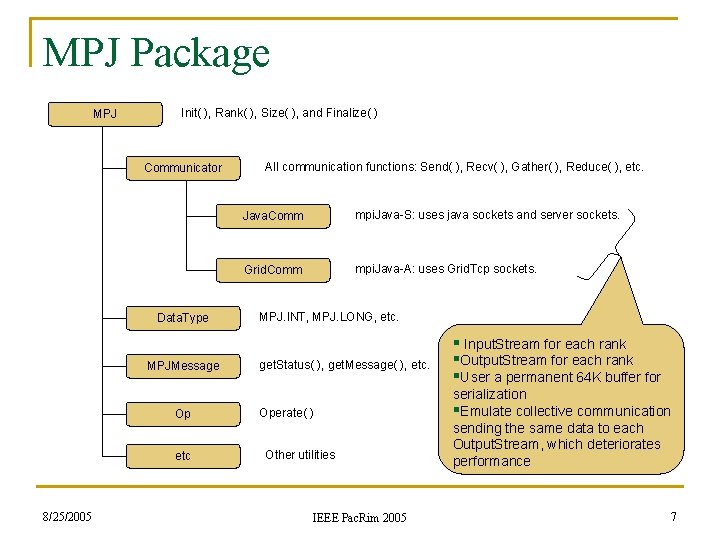

MPJ Package

- MPJ: Init( ), Rank( ), Size( ), and Finalize( )
- Communicator: all communication functions: Send( ), Recv( ), Gather( ), Reduce( ), etc.
  - JavaComm: mpiJava-S, uses Java sockets and server sockets.
  - GridComm: mpiJava-A, uses GridTcp sockets.
- DataType: MPJ.INT, MPJ.LONG, etc.
- MPJMessage: getStatus( ), getMessage( ), etc.
- Op: Operate( )
- etc: other utilities

Implementation notes:

- InputStream for each rank
- OutputStream for each rank
- Use a permanent 64 K buffer for serialization
- Emulate collective communication by sending the same data to each OutputStream, which deteriorates performance
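
The last note above, emulating a collective operation by writing the same data to every rank's OutputStream, can be pictured with the minimal sketch below. It is not the mpiJava-S or mpiJava-A code: the class name `BcastSketch`, the method `bcast`, and the choice to skip the root's own stream are assumptions made for illustration.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.util.List;

// Hypothetical illustration; names and behavior are assumptions, not the MPJ package API.
class BcastSketch {
    // Emulates a broadcast: the root writes the same bytes to the stream of every other rank.
    // The index of a stream in the list is taken to be the rank it leads to.
    static void bcast(byte[] data, int root, List<? extends OutputStream> streams) {
        for (int rank = 0; rank < streams.size(); rank++) {
            if (rank == root) {
                continue; // assumption: the root does not send to itself
            }
            try {
                OutputStream out = streams.get(rank);
                out.write(data);
                out.flush();
            } catch (IOException e) {
                throw new UncheckedIOException(e);
            }
        }
    }
}
```

With this scheme the root performs one write per destination rank, so the cost of a broadcast grows linearly with the number of processes, which is consistent with the performance remark on the slide.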

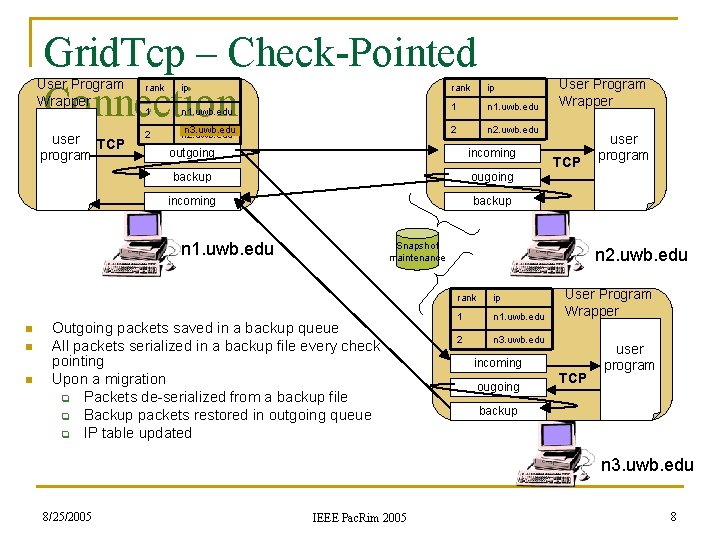

GridTcp – Check-Pointed Connection

- Snapshot maintenance
  - Outgoing packets saved in a backup queue
  - All packets serialized in a backup file at every check-pointing
- Upon a migration
  - Packets de-serialized from a backup file
  - Backup packets restored in outgoing queue
  - IP table updated

(Figure labels: user program wrappers on n1.uwb.edu, n2.uwb.edu, and n3.uwb.edu, each holding a user program and TCP incoming, outgoing, and backup queues; rank/ip tables listing rank 1 at n1.uwb.edu and rank 2 at n2.uwb.edu or n3.uwb.edu.)
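
The snapshot-maintenance and migration steps listed on this slide can be sketched as follows. This is only an illustration under stated assumptions, not the GridTcp implementation: the class `BackupQueueSketch` and its methods are hypothetical, a byte array stands in for the backup file, and the incoming queue, the IP-table update, and the point at which backup packets may be discarded are not modeled.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.UncheckedIOException;
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Hypothetical illustration; not the actual GridTcp classes.
class BackupQueueSketch {
    private final Deque<byte[]> outgoing = new ArrayDeque<>(); // packets not yet sent
    private final Deque<byte[]> backup = new ArrayDeque<>();   // copies of packets already sent

    void enqueue(byte[] packet) {
        outgoing.addLast(packet);
    }

    // "Sends" the next packet (returns it to the caller) and keeps a copy in the backup queue.
    byte[] sendNext() {
        byte[] packet = outgoing.pollFirst();
        if (packet != null) {
            backup.addLast(packet);
        }
        return packet;
    }

    // Check-pointing: serialize backup and outgoing packets (a byte array replaces the backup file).
    byte[] checkpoint() {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(new ArrayList<>(backup));
            out.writeObject(new ArrayList<>(outgoing));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    // Migration: de-serialize the packets and put the backup packets back in the outgoing queue,
    // ahead of the packets that had not been sent yet.
    @SuppressWarnings("unchecked")
    static BackupQueueSketch restore(byte[] snapshot) {
        BackupQueueSketch queue = new BackupQueueSketch();
        try (ObjectInputStream in = new ObjectInputStream(new ByteArrayInputStream(snapshot))) {
            List<byte[]> saved = (List<byte[]>) in.readObject();
            List<byte[]> pending = (List<byte[]>) in.readObject();
            queue.outgoing.addAll(saved);
            queue.outgoing.addAll(pending);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (ClassNotFoundException e) {
            throw new IllegalStateException(e);
        }
        return queue;
    }
}
```

After `restore`, calling `sendNext()` first re-sends the packets that had already gone out before the snapshot, then continues with the pending ones, so a receiver that lost data can be brought up to date.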

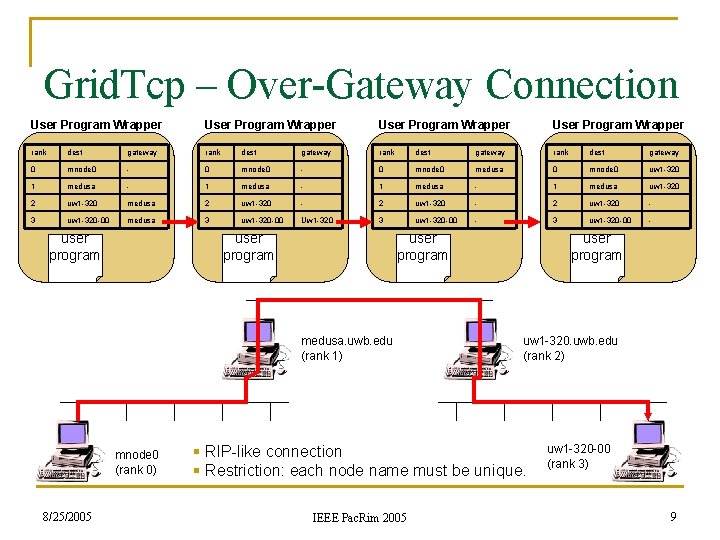

GridTcp – Over-Gateway Connection

- RIP-like connection
- Restriction: each node name must be unique.

(Figure labels: nodes mnode0 (rank 0), medusa.uwb.edu (rank 1), uw1-320.uwb.edu (rank 2), and uw1-320-00 (rank 3), with user programs in user program wrappers and rank/dest/gateway tables. The table rows recovered from the figure are listed below; their grouping into per-node tables is not recoverable.)

| rank | dest | gateway |
|------|------|---------|
| 0 | mnode0 | - |
| 0 | mnode0 | medusa |
| 0 | mnode0 | uw1-320 |
| 1 | medusa | - |
| 1 | medusa | uw1-320 |
| 2 | uw1-320 | medusa |
| 2 | uw1-320 | - |
| 3 | uw1-320-00 | medusa |
| 3 | uw1-320-00 | uw1-320 |
| 3 | uw1-320-00 | - |
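
One way to read a rank/dest/gateway table of this kind is as a next-hop lookup: a message goes to the gateway if one is listed and directly to the destination otherwise. The sketch below illustrates that reading only. The class `GatewayTableSketch`, its methods, and the example table (assumed here to be the one held by medusa) are hypothetical and are not taken from the GridTcp source.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical illustration of a rank/dest/gateway lookup; not the GridTcp API.
class GatewayTableSketch {
    // One table row; a null gateway plays the role of "-" (direct connection).
    static final class Route {
        final String dest;
        final String gateway;

        Route(String dest, String gateway) {
            this.dest = dest;
            this.gateway = gateway;
        }
    }

    private final Map<Integer, Route> routes = new HashMap<>();

    void add(int rank, String dest, String gateway) {
        routes.put(rank, new Route(dest, gateway));
    }

    // Returns the node to which a message for the given rank is handed next.
    String nextHop(int rank) {
        Route route = routes.get(rank);
        if (route == null) {
            throw new IllegalArgumentException("unknown rank " + rank);
        }
        return route.gateway != null ? route.gateway : route.dest;
    }

    // Assumed table for one node (medusa): rank 3 is reachable only through uw1-320.
    static GatewayTableSketch exampleTable() {
        GatewayTableSketch table = new GatewayTableSketch();
        table.add(0, "mnode0", null);
        table.add(1, "medusa", null);
        table.add(2, "uw1-320", null);
        table.add(3, "uw1-320-00", "uw1-320");
        return table;
    }
}
```

Because rows are looked up by node name, two nodes with the same name would be indistinguishable, which matches the uniqueness restriction stated on the slide.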

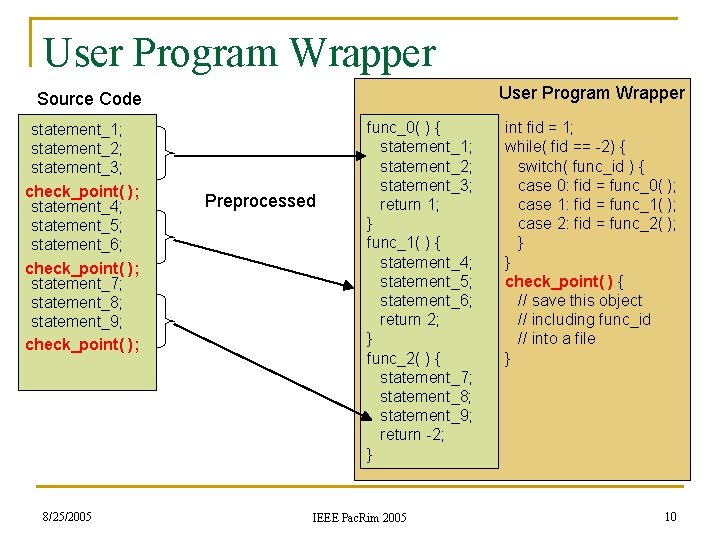

User Program Wrapper

Source Code

    statement_1;
    statement_2;
    statement_3;
    check_point( );
    statement_4;
    statement_5;
    statement_6;
    check_point( );
    statement_7;
    statement_8;
    statement_9;
    check_point( );

Preprocessed

    func_0( ) {
        statement_1;
        statement_2;
        statement_3;
        return 1;
    }
    func_1( ) {
        statement_4;
        statement_5;
        statement_6;
        return 2;
    }
    func_2( ) {
        statement_7;
        statement_8;
        statement_9;
        return -2;
    }

User program wrapper

    int func_id = 0;
    while ( func_id != -2 ) {
        switch ( func_id ) {
        case 0: func_id = func_0( ); break;
        case 1: func_id = func_1( ); break;
        case 2: func_id = func_2( ); break;
        }
    }

    check_point( ) {
        // save this object
        // including func_id
        // into a file
    }
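
The dispatch loop and check_point( ) above can be tried out with the self-contained sketch below. It is an illustration under assumptions rather than the AgentTeamwork wrapper: the class `WrapperSketch`, the `step`, `run`, and `resume` methods, and the `log` list are hypothetical, the snapshot is kept in a byte array instead of a file, and a checkpoint is taken after every function returns.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

// Hypothetical illustration of the wrapper loop; not the actual user program wrapper.
class WrapperSketch implements Serializable {
    private static final long serialVersionUID = 1L;

    int funcId = 0;                               // next function to run; -2 means terminated
    final List<String> log = new ArrayList<>();   // stands in for the application state
    transient byte[] lastSnapshot;                // most recent checkpoint (not part of the snapshot)

    int func_0() { log.add("statement_1..3"); return 1; }
    int func_1() { log.add("statement_4..6"); return 2; }
    int func_2() { log.add("statement_7..9"); return -2; }

    // Runs the function selected by funcId, then checkpoints the updated object.
    void step() {
        switch (funcId) {
            case 0: funcId = func_0(); break;
            case 1: funcId = func_1(); break;
            case 2: funcId = func_2(); break;
            default: throw new IllegalStateException("bad function id " + funcId);
        }
        lastSnapshot = checkPoint();
    }

    void run() {
        while (funcId != -2) {
            step();
        }
    }

    // Saves this object, including funcId, as a byte array.
    byte[] checkPoint() {
        try (ByteArrayOutputStream bytes = new ByteArrayOutputStream();
             ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(this);
            out.flush();
            return bytes.toByteArray();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Rebuilds the object from a snapshot so that run( ) continues at the saved funcId.
    static WrapperSketch resume(byte[] snapshot) {
        try (ObjectInputStream in = new ObjectInputStream(new ByteArrayInputStream(snapshot))) {
            return (WrapperSketch) in.readObject();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (ClassNotFoundException e) {
            throw new IllegalStateException(e);
        }
    }
}
```

A process that crashes after `func_0` can be rebuilt with `WrapperSketch.resume(snapshot)`; calling `run()` on the result continues with `func_1` instead of repeating `func_0`.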

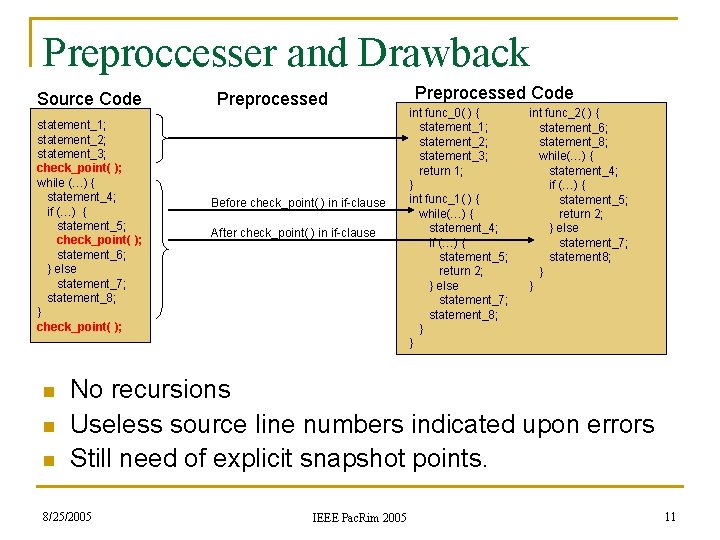

Preprocessor and Drawback

Source Code

    statement_1;
    statement_2;
    statement_3;
    check_point( );
    while (…) {
        statement_4;
        if (…) {
            statement_5;
            check_point( );
            statement_6;
        }
        else
            statement_7;
        statement_8;
    }
    check_point( );

Preprocessed Code

    int func_0( ) {                     // preprocessed
        statement_1;
        statement_2;
        statement_3;
        return 1;
    }
    int func_1( ) {                     // before check_point( ) in if-clause
        while (…) {
            statement_4;
            if (…) {
                statement_5;
                return 2;
            }
            else
                statement_7;
            statement_8;
        }
    }
    int func_2( ) {                     // after check_point( ) in if-clause
        statement_6;
        statement_8;
        while (…) {
            statement_4;
            if (…) {
                statement_5;
                return 2;
            }
            else
                statement_7;
            statement_8;
        }
    }

Drawbacks:

- No recursions
- Useless source line numbers indicated upon errors
- Explicit snapshot points are still needed.

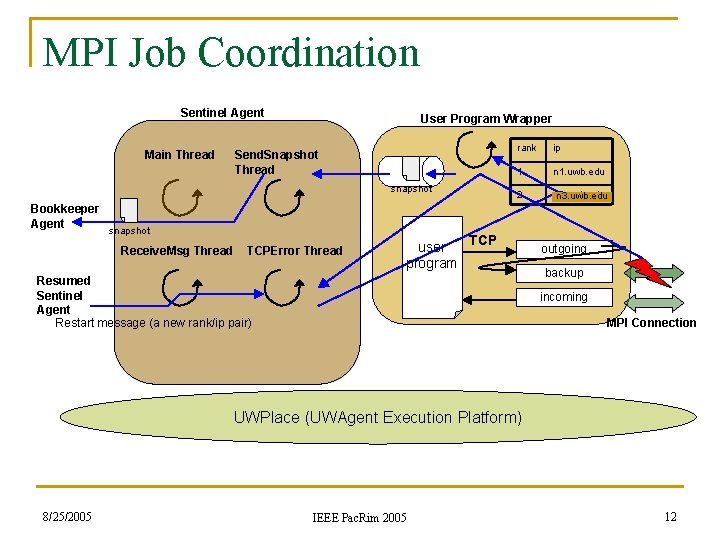

MPI Job Coordination

(Figure labels: a sentinel agent with a main thread, a SendSnapshot thread, a ReceiveMsg thread, and a TCPError thread; a user program wrapper with a user program and TCP outgoing, incoming, and backup queues; snapshots sent to a bookkeeper agent; a rank/ip table listing rank 1 at n1.uwb.edu and rank 2 at n2.uwb.edu or n3.uwb.edu; a resumed sentinel agent; a restart message carrying a new rank/ip pair; the MPI connection; UWPlace, the UWAgent execution platform.)

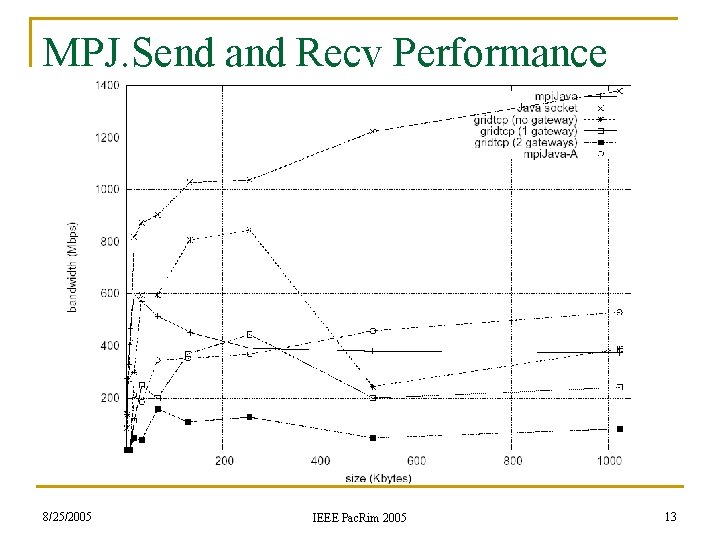

MPJ.Send and Recv Performance

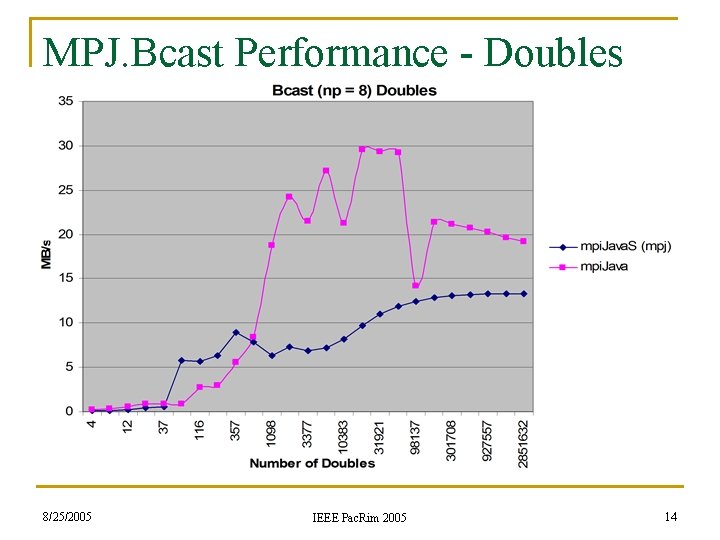

MPJ.Bcast Performance - Doubles

Conclusions

- Raw bandwidth
  - mpiJava-S comes to about 95-100% of maximum Java performance.
  - mpiJava-A (with check-pointing and error recovery) incurs 20-60% overhead, but still overtakes mpiJava with bigger data segments.
- Serialization
  - When dealing with primitives or objects that need serialization, a 25-50% overhead is incurred.
- Memory issues related to mpiJava-A
  - Due to snapshots created at every func_n call.
- Next work
  - Performance and memory-usage improvement
  - Preprocessor implementation
