The Case For Prediction-based Best-effort Real-time

Peter A. Dinda, Bruce Lowekamp, Loukas F. Kallivokas, David R. O’Hallaron
Carnegie Mellon University

Overview

• Distributed interactive applications
  • Could benefit from best-effort real-time
  • Example: QuakeViz (Earthquake Visualization) and the DV (Distributed Visualization) framework
• Evidence for feasibility of prediction-based best-effort RT service for these applications
  • Mapping algorithms
  • Execution time model
  • Host load prediction

Application Characteristics

• Interactivity
  • Users initiate tasks with deadlines
  • Timely, consistent, and predictable feedback
• Resilience
  • Missed deadlines are acceptable
• Distributability
  • Tasks can be initiated on any host
• Adaptability
  • Task computation and communication can be adjusted

Shared, unreserved computing environments

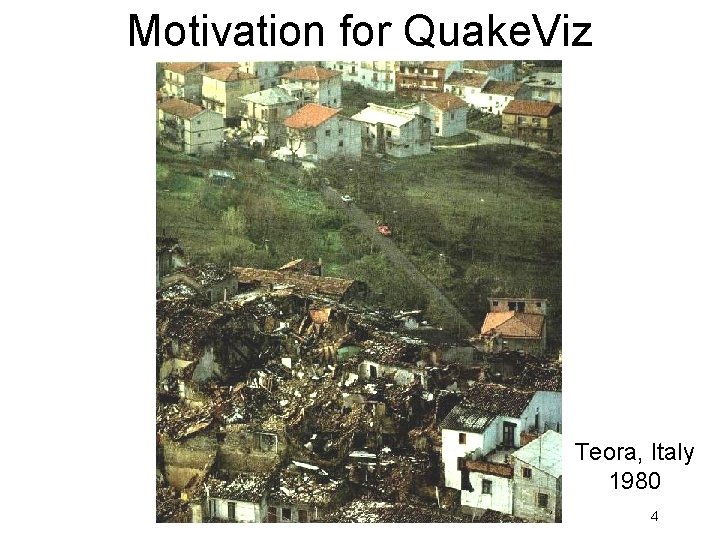

Motivation for QuakeViz

Teora, Italy 1980

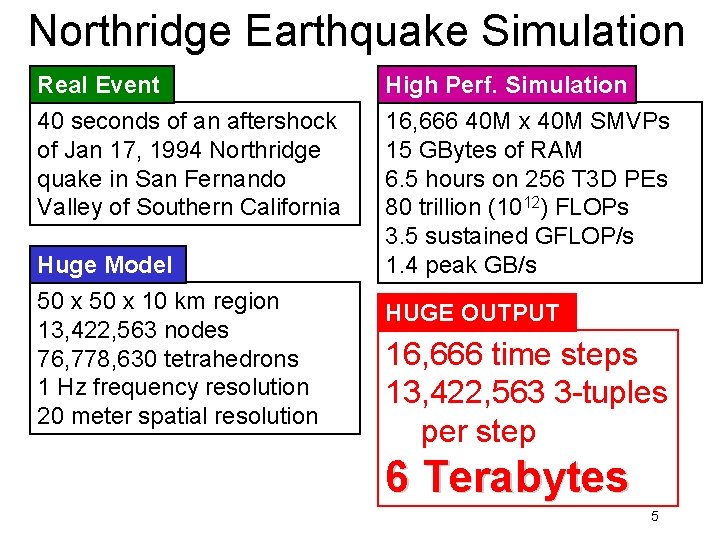

Northridge Earthquake Simulation

Real Event
• 40 seconds of an aftershock of the Jan 17, 1994 Northridge quake in the San Fernando Valley of Southern California

Huge Model
• 50 x 10 km region
• 13,422,563 nodes
• 76,778,630 tetrahedrons
• 1 Hz frequency resolution
• 20 meter spatial resolution

High Perf. Simulation
• 16,666 40M x 40M SMVPs
• 15 GBytes of RAM
• 6.5 hours on 256 T3D PEs
• 80 trillion (10^12) FLOPs
• 3.5 sustained GFLOP/s
• 1.4 peak GB/s

Huge Output
• 16,666 time steps
• 13,422,563 3-tuples per step
• 6 Terabytes
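As a rough cross-check of the figures on this slide, the following sketch recomputes the output size and the sustained rate. The 8-byte size per tuple component is an assumption, not something the slide states; under it the output comes to about 5.4 TB, the same order as the slide's rounded 6 Terabytes, and the rate comes to about 3.4 GFLOP/s, close to the quoted 3.5.

```python
# Figures taken from the slide.
TIME_STEPS = 16_666
NODES = 13_422_563        # one 3-tuple per node per time step
TOTAL_FLOP = 80e12        # 80 trillion floating-point operations
RUN_HOURS = 6.5

# Assumption (not on the slide): each tuple component is an 8-byte double.
BYTES_PER_VALUE = 8


def output_bytes(steps=TIME_STEPS, nodes=NODES, bytes_per_value=BYTES_PER_VALUE):
    """Total size of the simulation output in bytes."""
    return steps * nodes * 3 * bytes_per_value


def sustained_gflops(total_flop=TOTAL_FLOP, hours=RUN_HOURS):
    """Average floating-point rate over the whole run, in GFLOP/s."""
    return total_flop / (hours * 3600) / 1e9


if __name__ == "__main__":
    print(f"output size: {output_bytes() / 1e12:.2f} TB")
    print(f"sustained rate: {sustained_gflops():.2f} GFLOP/s")
```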

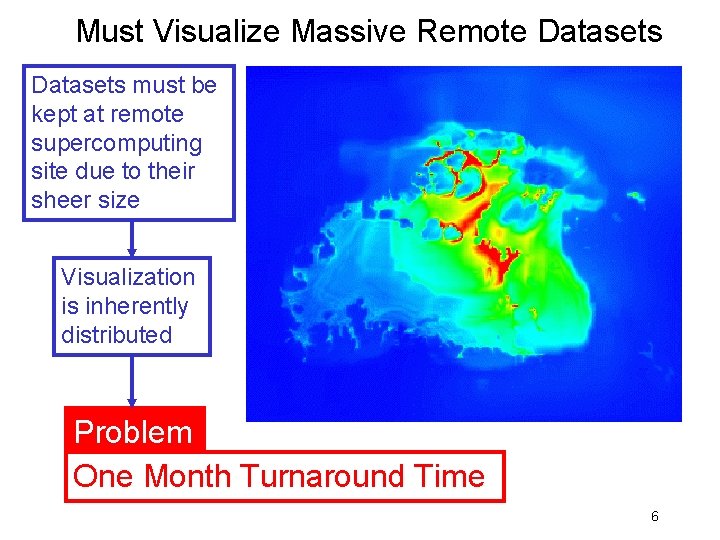

Must Visualize Massive Remote Datasets

• Datasets must be kept at the remote supercomputing site due to their sheer size
• Visualization is inherently distributed
• Problem: one month turnaround time

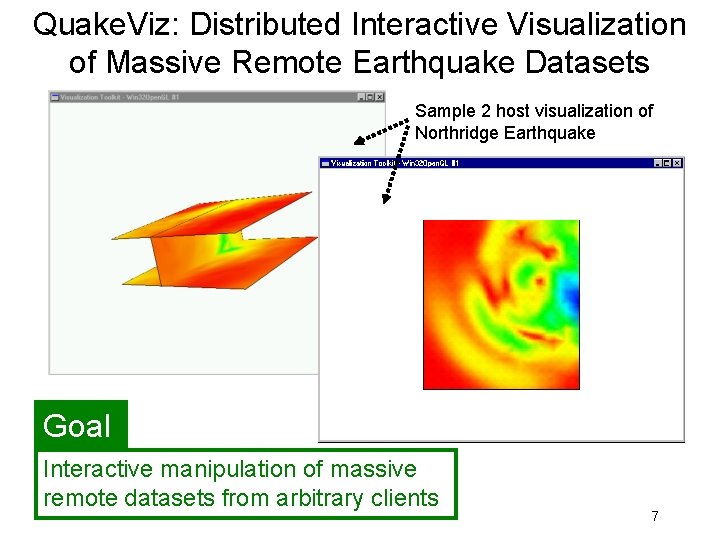

QuakeViz: Distributed Interactive Visualization of Massive Remote Earthquake Datasets

Sample 2 host visualization of Northridge Earthquake

Goal: Interactive manipulation of massive remote datasets from arbitrary clients

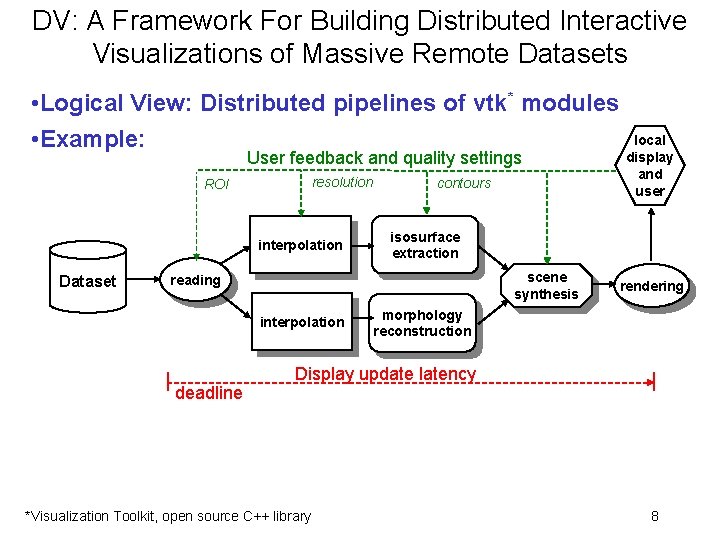

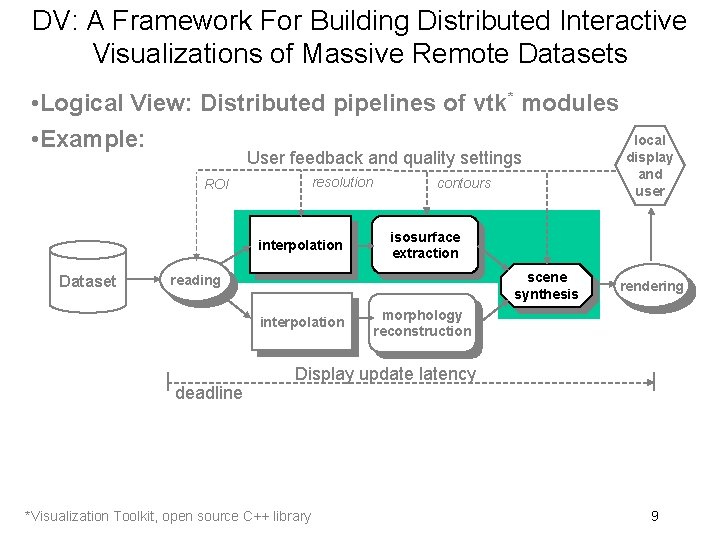

DV: A Framework For Building Distributed Interactive Visualizations of Massive Remote Datasets

• Logical View: Distributed pipelines of vtk* modules
• Example: [pipeline diagram; recovered labels: Dataset reading, interpolation, morphology reconstruction, isosurface extraction, scene synthesis, rendering, local display and user; user feedback and quality settings (resolution, ROI, contours); deadline; display update latency]

*Visualization Toolkit, open source C++ library

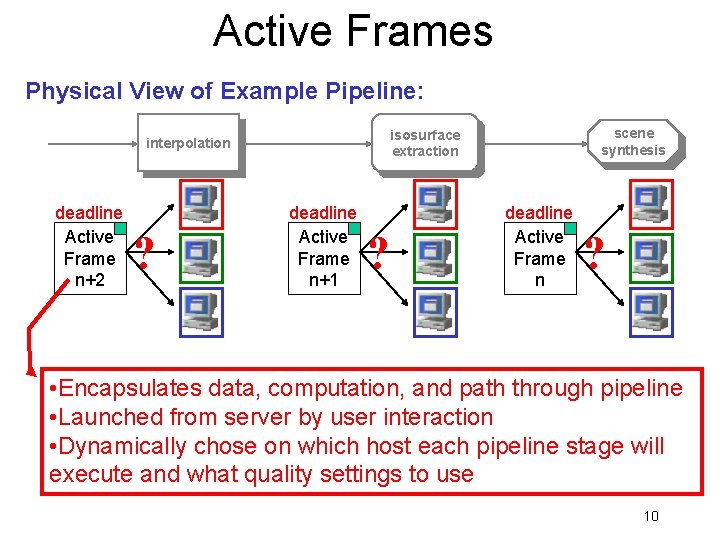

Active Frames

Physical View of Example Pipeline: [diagram; Active Frames n, n+1, and n+2, each with its own deadline, moving through the interpolation, isosurface extraction, and scene synthesis stages, with the host for each stage still undecided ("?")]

• Encapsulates data, computation, and path through pipeline
• Launched from server by user interaction
• Dynamically chooses on which host each pipeline stage will execute and what quality settings to use

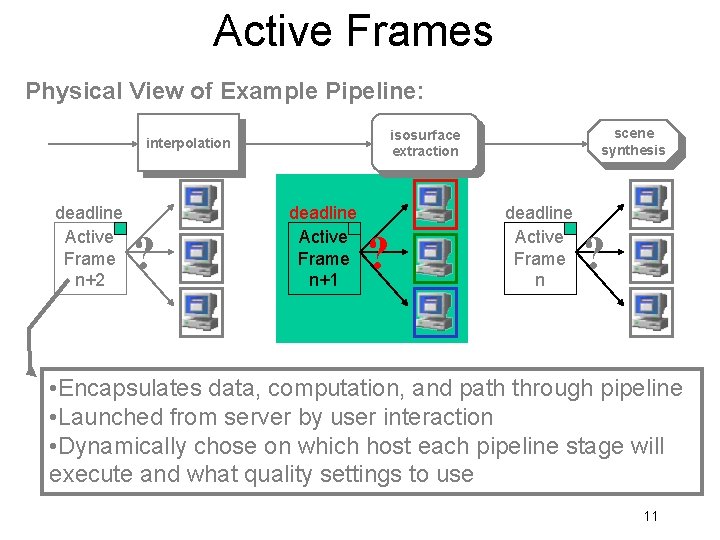

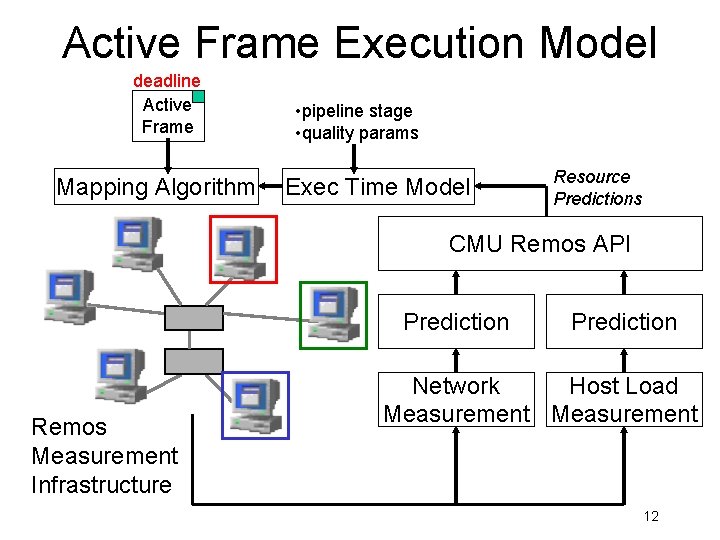

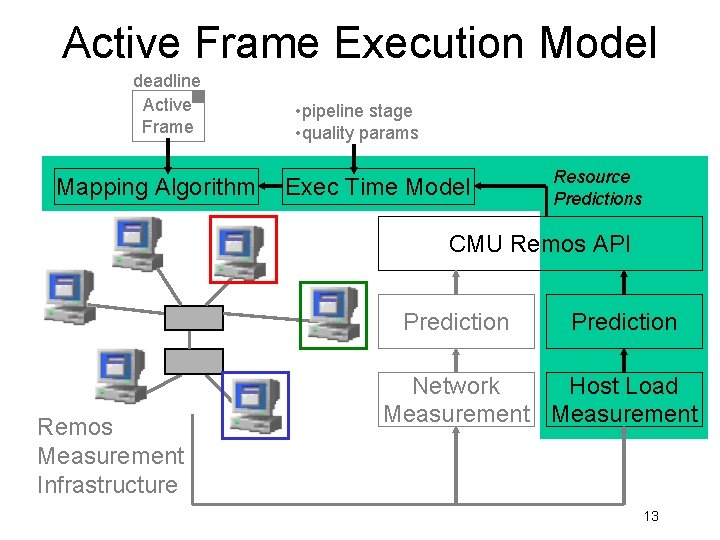

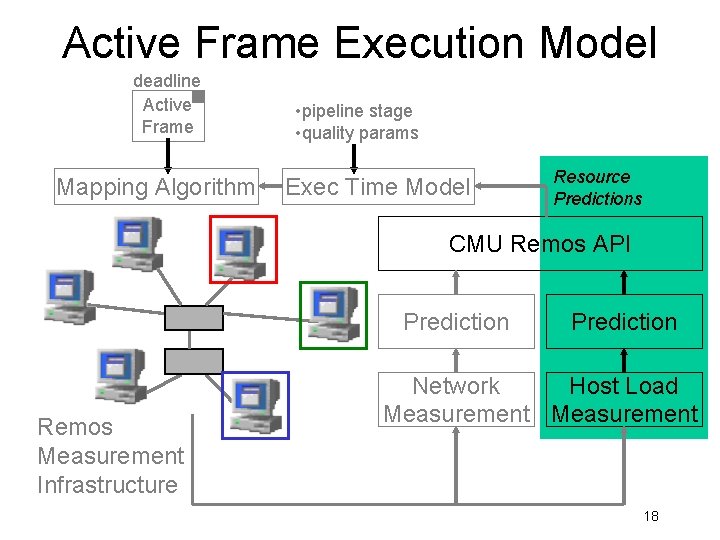

Active Frame Execution Model

[Diagram; recovered labels: Active Frame (pipeline stage, quality params) with deadline; Mapping Algorithm; Exec Time Model; Resource Predictions; CMU Remos API; Prediction (network and host load); Remos Measurement Infrastructure; Network Measurement; Host Load Measurement]
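The way these components could fit together can be sketched as follows. This is a minimal illustration, not the DV or Remos implementation: the `Host` class, the function names, and in particular the execution time model (nominal time scaled by one plus the predicted load) are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    predicted_load: float  # hypothetical: predicted average number of competing runnable tasks


def predicted_exec_time(nominal_seconds, host):
    # Assumed model: the stage shares the CPU equally with the predicted load.
    return nominal_seconds * (1.0 + host.predicted_load)


def map_stage(nominal_seconds, deadline_seconds, hosts):
    """Return (host, predicted_time) for the fastest host predicted to meet the
    deadline, or None if no host is predicted to meet it."""
    feasible = []
    for host in hosts:
        t = predicted_exec_time(nominal_seconds, host)
        if t <= deadline_seconds:
            feasible.append((t, host))
    if not feasible:
        return None
    t, host = min(feasible, key=lambda pair: pair[0])
    return host, t


def choose_quality_and_host(quality_levels, deadline_seconds, hosts):
    """quality_levels: list of (label, nominal_seconds), best quality first.
    Returns (label, host, predicted_time) for the best quality that fits, or None."""
    for label, nominal_seconds in quality_levels:
        mapping = map_stage(nominal_seconds, deadline_seconds, hosts)
        if mapping is not None:
            host, t = mapping
            return label, host, t
    return None  # best effort: the deadline is predicted to be missed at every quality


if __name__ == "__main__":
    hosts = [Host("a", 2.0), Host("b", 0.5)]
    levels = [("high", 2.0), ("low", 0.5)]
    print(choose_quality_and_host(levels, 1.0, hosts))
```

Trying quality levels from best to worst reflects the adaptability property: when no host is predicted to meet the deadline, the frame degrades its quality parameters rather than failing.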

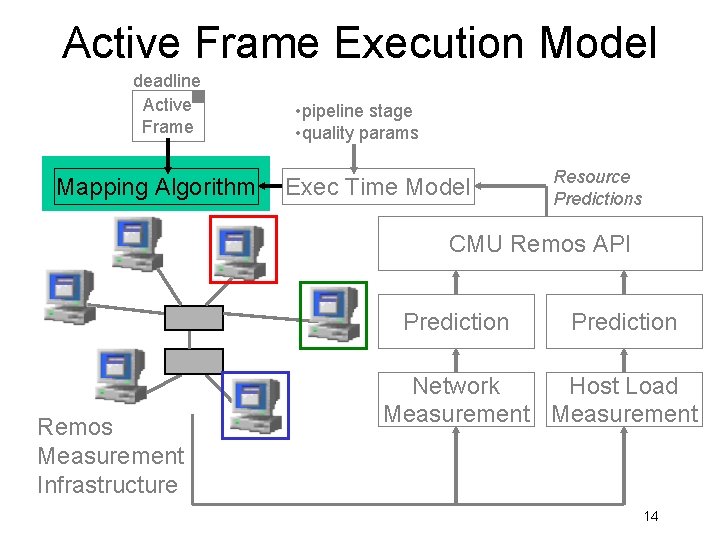

Feasibility of Best-effort Mapping Algorithms

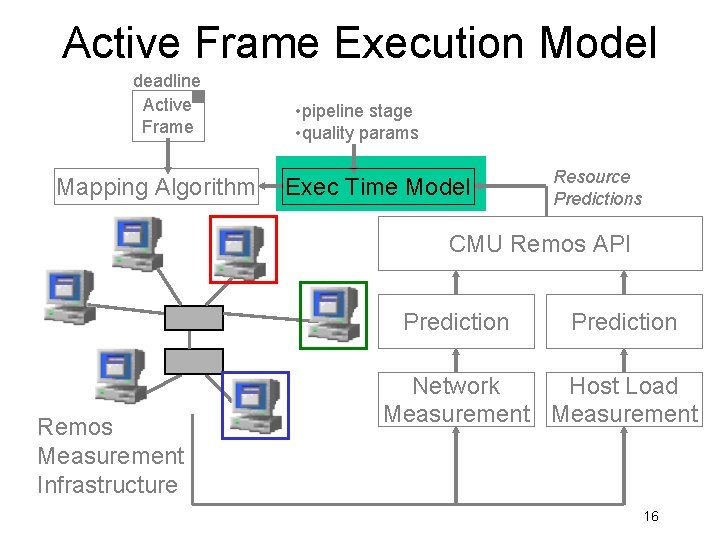

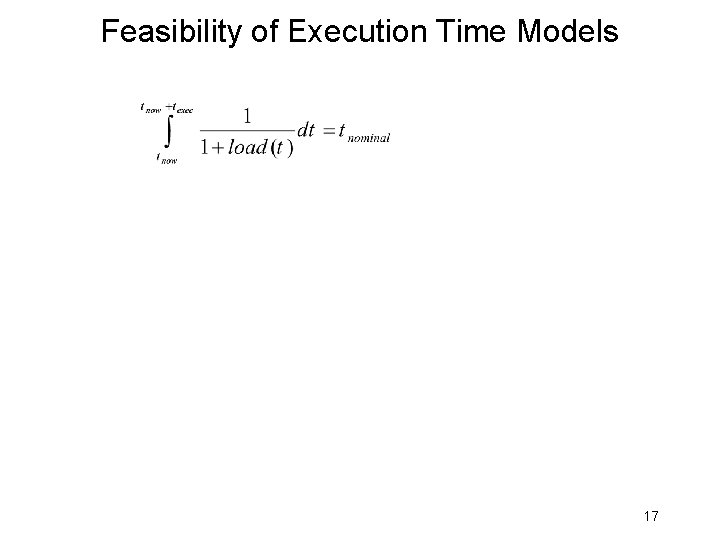

Feasibility of Execution Time Models

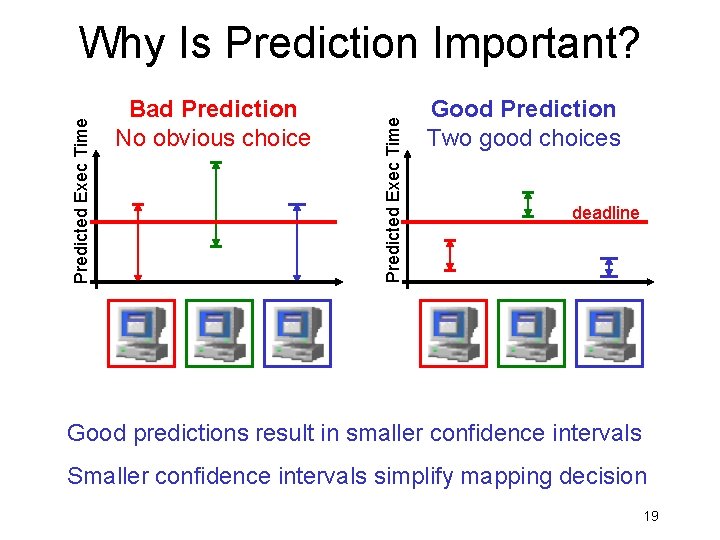

Why Is Prediction Important?

[Figure: predicted exec time, with confidence intervals, plotted against the deadline. Bad prediction: no obvious choice. Good prediction: two good choices.]

• Good predictions result in smaller confidence intervals
• Smaller confidence intervals simplify the mapping decision
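The effect of interval width on the mapping decision can be illustrated with a small sketch. The host names, times, and interval widths below are made up for the example, and the three-way classification is one possible rule rather than the one used by the authors.

```python
def classify_hosts(predictions, deadline):
    """predictions maps host name -> (expected_time, ci_half_width).

    A host "meets" the deadline if its whole interval lies at or below it,
    "misses" if its whole interval lies above it, and is "uncertain" otherwise.
    """
    result = {}
    for host, (expected, half_width) in predictions.items():
        lower = expected - half_width
        upper = expected + half_width
        if upper <= deadline:
            result[host] = "meets"
        elif lower > deadline:
            result[host] = "misses"
        else:
            result[host] = "uncertain"
    return result


if __name__ == "__main__":
    deadline = 10.0
    expected = {"A": 8.0, "B": 9.0, "C": 12.0}  # hypothetical predicted times in seconds

    # Bad prediction: wide intervals, every host straddles the deadline.
    wide = {h: (t, 4.0) for h, t in expected.items()}
    # Good prediction: narrow intervals, the choice becomes clear.
    narrow = {h: (t, 0.5) for h, t in expected.items()}

    print("wide:  ", classify_hosts(wide, deadline))
    print("narrow:", classify_hosts(narrow, deadline))
```

With the wide intervals all three hosts are uncertain, so there is no obvious choice; with the narrow intervals two hosts clearly meet the deadline and one clearly misses it.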

Feasibility of Host Load Prediction

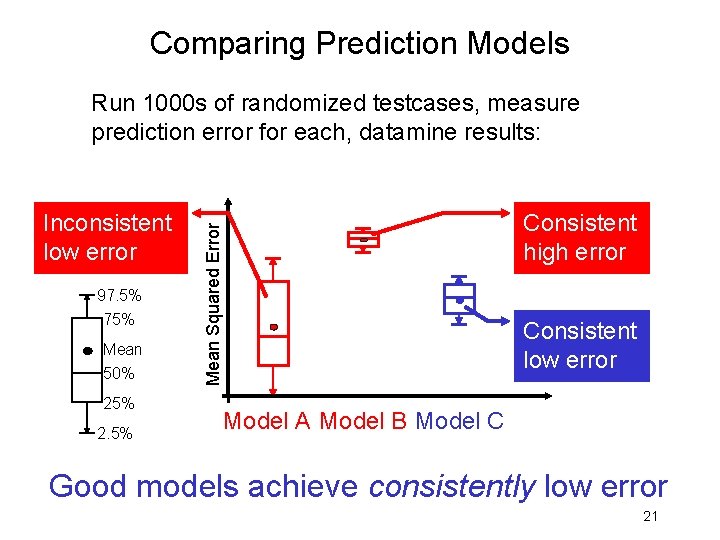

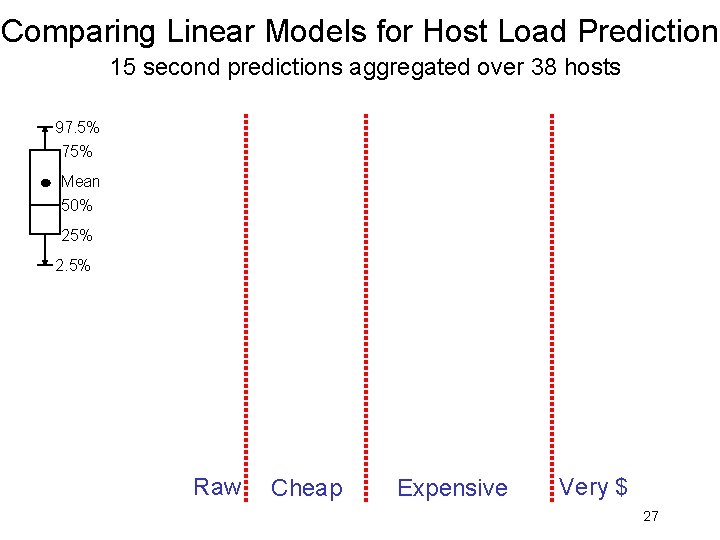

Comparing Prediction Models

Run 1000s of randomized testcases, measure prediction error for each, datamine results:

[Figure: box plots of mean squared error for Model A, Model B, and Model C, each showing the 2.5%, 25%, 50%, mean, 75%, and 97.5% points; annotations: consistent high error, inconsistent low error, consistent low error]

Good models achieve consistently low error
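The evaluation procedure described on this slide can be sketched as below. The trace is synthetic, and the two toy models, the function names, and the testcase parameters are assumptions chosen only to make the example runnable; they are not the models or traces used in the study.

```python
import random
import statistics


def mean_squared_error(predicted, actual):
    return statistics.fmean([(p - a) ** 2 for p, a in zip(predicted, actual)])


def summarize(errors):
    """Summary points shown in the box plots: selected percentiles plus the mean."""
    cuts = statistics.quantiles(errors, n=40)  # cut points every 2.5%
    return {
        "2.5%": cuts[0], "25%": cuts[9], "50%": cuts[19],
        "mean": statistics.fmean(errors),
        "75%": cuts[29], "97.5%": cuts[38],
    }


def evaluate(model, trace, num_cases, history, horizon, rng):
    """Run randomized testcases: pick a random window of the trace, let the
    model predict `horizon` samples from `history` samples, record the MSE."""
    errors = []
    for _ in range(num_cases):
        start = rng.randrange(0, len(trace) - history - horizon + 1)
        past = trace[start:start + history]
        future = trace[start + history:start + history + horizon]
        errors.append(mean_squared_error(model(past, horizon), future))
    return summarize(errors)


# Two toy models (hypothetical stand-ins for Models A, B, C).
def last_value_model(past, horizon):
    return [past[-1]] * horizon


def window_mean_model(past, horizon):
    return [statistics.fmean(past)] * horizon


def synthetic_trace(n, rng):
    """Made-up load trace: a random walk clipped at zero."""
    level, trace = 1.0, []
    for _ in range(n):
        level = max(0.0, level + rng.gauss(0.0, 0.1))
        trace.append(level)
    return trace


if __name__ == "__main__":
    rng = random.Random(0)
    trace = synthetic_trace(5000, rng)
    for model in (last_value_model, window_mean_model):
        print(model.__name__, evaluate(model, trace, 1000, 60, 15, rng))
```

Comparing the spread between the 2.5% and 97.5% points, not only the mean, is what separates a consistently accurate model from one that is accurate only on average.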

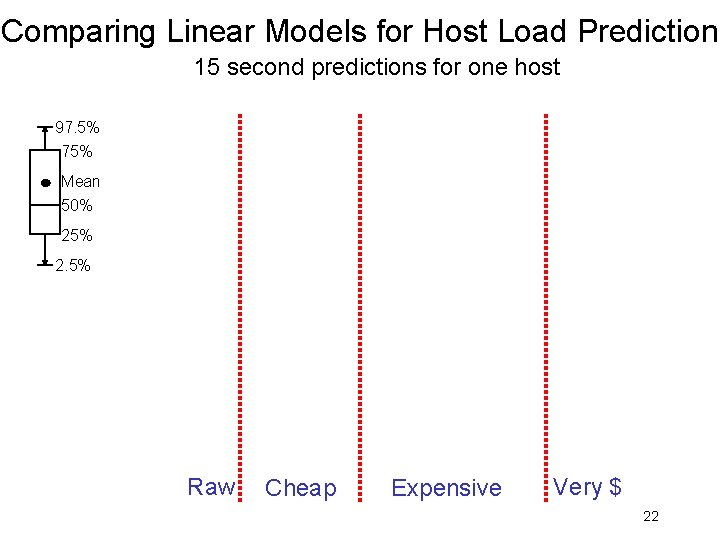

Comparing Linear Models for Host Load Prediction

15 second predictions for one host

[Figure: box plots showing the 2.5%, 25%, 50%, mean, 75%, and 97.5% points for model groups labeled Raw, Cheap, Expensive, and Very $]
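To make "linear model" concrete, here is a minimal sketch of one of the simplest such predictors, a first-order autoregressive model, AR(1). The slide does not say which models were compared, so the choice of AR(1), the function names, and the assumption of one load sample per second (so that 15 steps correspond to a 15 second prediction) are all illustrative.

```python
import statistics


def fit_ar1(history):
    """Fit x[t] - mu = phi * (x[t-1] - mu) by least squares.
    Returns (mu, phi)."""
    mu = statistics.fmean(history)
    num = sum((history[t] - mu) * (history[t - 1] - mu)
              for t in range(1, len(history)))
    den = sum((x - mu) ** 2 for x in history[:-1])
    phi = num / den if den > 0 else 0.0
    # Clamp so multi-step predictions cannot grow without bound.
    phi = max(-1.0, min(1.0, phi))
    return mu, phi


def predict_ar1(history, steps):
    """Predict the next `steps` samples; the k-step-ahead prediction is
    mu + phi**k * (last - mu), decaying toward the mean when |phi| < 1."""
    mu, phi = fit_ar1(history)
    deviation = history[-1] - mu
    return [mu + (phi ** k) * deviation for k in range(1, steps + 1)]


if __name__ == "__main__":
    # Made-up load samples, assumed to be taken once per second.
    load = [0.9, 1.1, 1.3, 1.2, 1.0, 0.8, 0.9, 1.2, 1.4, 1.3]
    print(predict_ar1(load, 15))
```

Higher-order or more elaborate linear models follow the same pattern, trading more fitting cost for (potentially) lower prediction error.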

Conclusions

• Identified and described a class of applications that benefit from best-effort real-time
  • Distributed interactive applications
  • Example: QuakeViz / DV
• Showed feasibility of prediction-based best-effort real-time systems
  • Mapping algorithms, execution time model, host load prediction

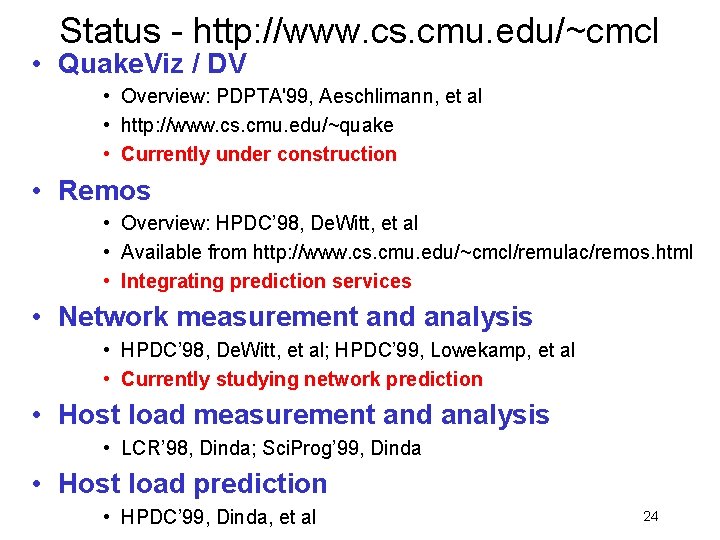

Status - http://www.cs.cmu.edu/~cmcl

• QuakeViz / DV
  • Overview: PDPTA’99, Aeschlimann, et al
  • http://www.cs.cmu.edu/~quake
  • Currently under construction
• Remos
  • Overview: HPDC’98, DeWitt, et al
  • Available from http://www.cs.cmu.edu/~cmcl/remulac/remos.html
  • Integrating prediction services
• Network measurement and analysis
  • HPDC’98, DeWitt, et al; HPDC’99, Lowekamp, et al
  • Currently studying network prediction
• Host load measurement and analysis
  • LCR’98, Dinda; SciProg’99, Dinda
• Host load prediction
  • HPDC’99, Dinda, et al

Feasibility of Host Load Prediction

Comparing Linear Models for Host Load Prediction

15 second predictions aggregated over 38 hosts

[Figure: box plots showing the 2.5%, 25%, 50%, mean, 75%, and 97.5% points for model groups labeled Raw, Cheap, Expensive, and Very $]
