The Cambridge Research Computing Service A brief overview

Dr Paul Calleja, Director of Research Computing, University of Cambridge

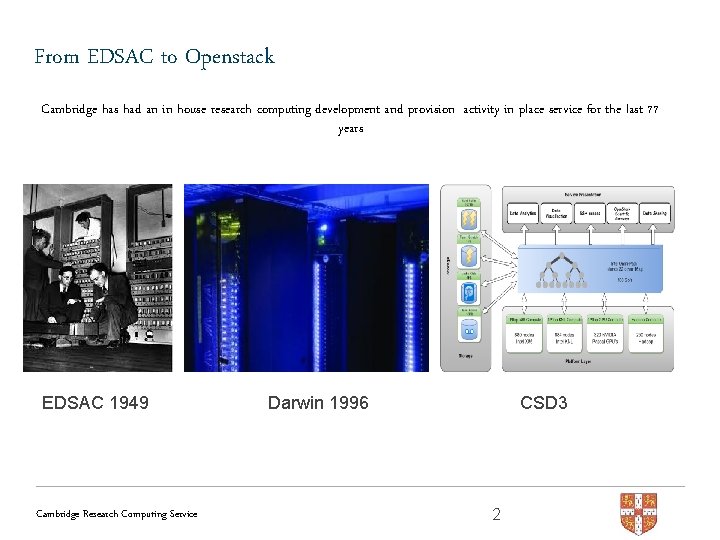

From EDSAC to OpenStack
Cambridge has had an in-house research computing development and provision service in place for the last 77 years.
EDSAC (1949), Darwin (1996), CSD3

Strategy – what are we trying to achieve
• Deliver cutting-edge research capability using state-of-the-art technologies within the broad area of data-centric high-performance computing, driving research discovery and innovation.
• Strategic outcomes
  – World-class, innovative, data-centric research computing provision
  – Diversity of high-value, user-driven services
  – Drive research discovery and innovation within Cambridge and the national science communities that we serve
  – Deliver economic impact within the UK economy

Strategy – how do we get there
• Continue in-house technology innovation, currently focused on:
  – Convergence of HPC and OpenStack technologies
  – Next-generation tiered storage, with a strong focus on parallel file systems and NVMe
  – Large-scale genomics analysis software
  – Hospital clinical informatics platforms
  – Data analytics and machine learning platforms
  – Data visualisation platforms
• Continue to build best-in-class, in-house capability in:
  – System design, integration and solution support
  – User support
  – Scientific support: RSE (6 FTE)

Delivery focus
Driving discovery, innovation and impact

Team structure
28 FTE across 6 groups

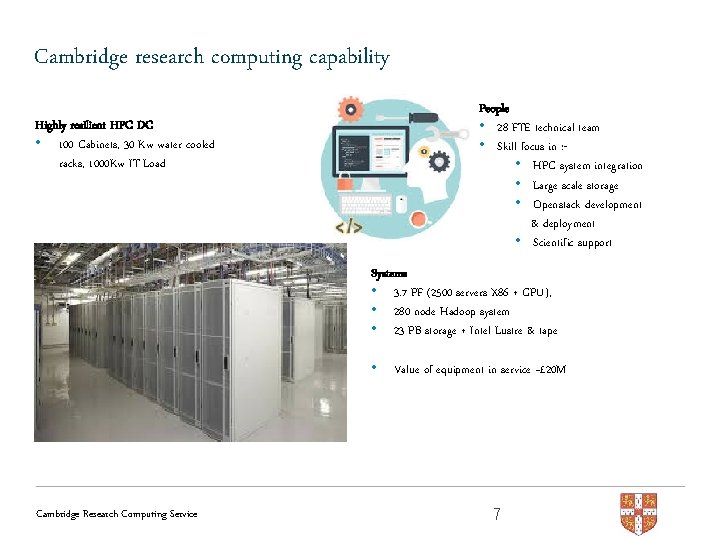

Cambridge research computing capability
• People
  – 28 FTE technical team
  – Skill focus in: HPC system integration, large-scale storage, OpenStack development and deployment, scientific support
• Highly resilient HPC data centre
  – 100 cabinets, 30 kW water-cooled racks, 1000 kW IT load
• Systems
  – 3.7 PF (2500 servers, x86 + GPU)
  – 280-node Hadoop system
  – 23 PB storage + Intel Lustre and tape
• Value of equipment in service: ~£20M

Research computing usage and outputs
• 1600 active users from 387 research groups in 42 University departments, plus national HPC users
• Usage has grown at 28% CAGR over the last 9 years; the growth rate is expected to increase with OpenStack usage models
• Research computing services support a current active grant portfolio of £120M, which represents 8% of the University's annual grant income
• Underpinning 2000 publications over the last 9 years; current output is ~300 per year
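The 28% CAGR quoted above compounds quickly. As a rough illustration (a sketch, not part of the original slides), the snippet below computes the cumulative growth multiple and the implied doubling time, assuming the rate was constant in each of the 9 years. The function names are hypothetical.

```python
import math


def growth_multiple(rate: float, years: int) -> float:
    """Cumulative growth factor for a constant annual growth rate."""
    return (1.0 + rate) ** years


def doubling_time(rate: float) -> float:
    """Years needed to double at a constant annual growth rate."""
    return math.log(2.0) / math.log(1.0 + rate)


# Figures from the slide: 28% CAGR sustained for 9 years.
# Assumption: the rate was the same in every year.
multiple = growth_multiple(0.28, 9)
years_to_double = doubling_time(0.28)

print(f"Usage multiple after 9 years: {multiple:.1f}x")
print(f"Implied doubling time: {years_to_double:.1f} years")
```

Under that assumption, usage ends the period roughly nine times higher than it started, doubling a little under every three years.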

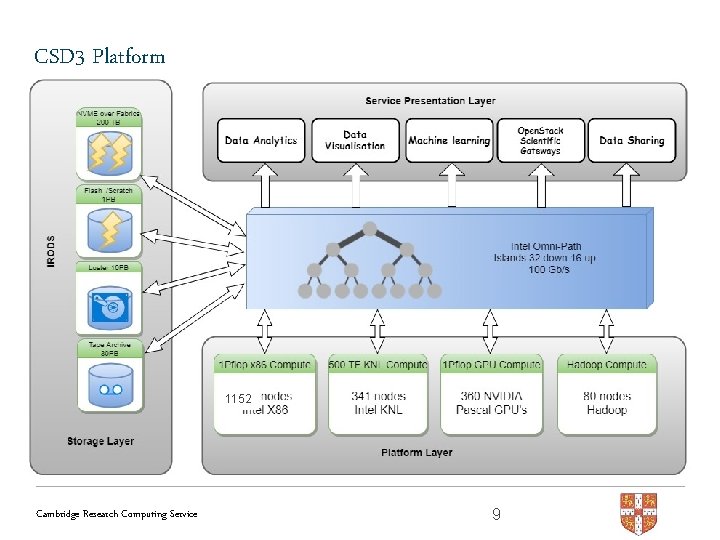

CSD3 platform

Peta-4 & Wilkes: leading UK academic systems
• Peta-4
  – Largest open-access academic x86 system in the UK
  – 1152 32-core Skylake nodes: 36,000 cores, 2.0 PF
  – Fastest academic supercomputer in the UK
  – KNL: 341 nodes, 0.5 PF
  – We can run this as a single heterogeneous system yielding 2.4 PF
• Wilkes-2
  – Largest open-access academic GPU system in the UK
  – 1.2 PF (20% over design performance)
  – 360 P100 GPUs
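The Peta-4 figures can be cross-checked with simple arithmetic. The sketch below uses only numbers quoted on the slide; the variable names are illustrative, and the source does not explain the gap between the combined figure and the sum of the two partitions.

```python
# Figures quoted on the slide.
SKYLAKE_NODES = 1152
CORES_PER_NODE = 32
SKYLAKE_PF = 2.0   # Skylake partition
KNL_PF = 0.5       # KNL partition
COMBINED_PF = 2.4  # quoted for the single heterogeneous system

# Exact core count implied by the node count.
skylake_cores = SKYLAKE_NODES * CORES_PER_NODE

# Compare the quoted combined figure with the simple sum of the partitions.
sum_of_partitions = SKYLAKE_PF + KNL_PF
combined_fraction = COMBINED_PF / sum_of_partitions

print(f"Skylake cores: {skylake_cores}")
print(f"Sum of partitions: {sum_of_partitions} PF")
print(f"Combined system as a fraction of the sum: {combined_fraction:.0%}")
```

The exact product is 36,864 cores, which the slide rounds to 36,000.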

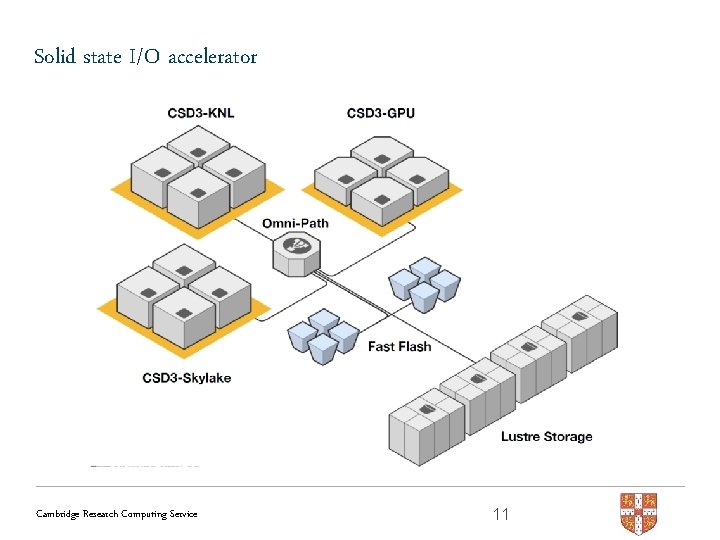

Solid state I/O accelerator

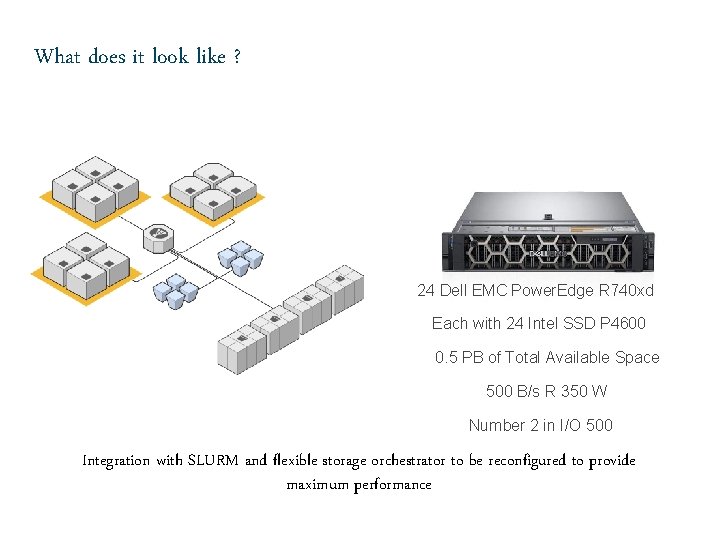

What does it look like?
• 24 Dell EMC PowerEdge R740xd servers
• Each with 24 Intel SSD P4600 drives
• 0.5 PB of total available space
• 500 GB/s read, 350 GB/s write
• Number 2 in the IO500
• Integration with SLURM and a flexible storage orchestrator, so it can be reconfigured to provide maximum performance

Cambridge HPC services
• Central HPC and data analytics service
  – Pay-per-use service on large central HPC and storage systems
  – x86, KNL, GPU
• Research computing cloud (new in 2018)
  – Infrastructure as a service
  – Clinical cloud VM service
  – Scientific OpenStack cloud for IRIS
• Secure data storage and archive service (new in 2019)
  – NHS IGT
  – ISO 27001
• Data Analytics Service (beta)
  – Hadoop / Spark

Cambridge HPC services
• Bio-lab
  – Develop, deploy and support the Open-CB next-generation genomics analysis platform
  – Deploy and support biocomputing scientific gateways
  – Deploy and support a wide range of medical imaging, microscopy and structure determination platforms
• Scientific computing support
  – Team of scientific programming experts who provide in-depth application development support to users
  – Very flexible support model
  – Able to pool fractional FTE funds from grants to fund part-time FTEs on a long-term basis

Cambridge HPC services
• HPC and Big Data innovation lab
  – Holds a large range of test/dev HPC and data analytics hardware
  – Specific lab engineering resource
  – Open to third-party use
  – Used to drive HPC and Big Data R&D for RCS and our customers
  – Strong industrial supply chain collaboration
  – Strong user-driven inputs
  – Outputs PoCs, case studies and white papers
  – Drives innovation in research computing solution development and usage for both the University and the wider community
• System design, procurement and managed hosting service for group-owned resources

IRIS at Cambridge
• 36 32-core Skylake nodes, 384 GB RAM, dual low-latency 25 GbE, OPA
• 1 PB Lustre
• Provisioned as core hours: 2,270,592 per quarter
• Bare metal, OpenStack VMs, OpenStack Slurm as a service
• Available in the next few weeks for onboarding IRIS users
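The quarterly core-hour figure can be reproduced from the node count. The sketch below is one possible derivation, not the service's stated method: it assumes a quarter is one fourth of a 365-day year and that 90% of the raw core hours are made allocatable. That 90% factor is an assumption chosen because it matches the quoted number; the slide does not say how the figure was derived.

```python
# Figures from the slide.
NODES = 36
CORES_PER_NODE = 32
QUOTED_CORE_HOURS = 2_270_592

# Assumptions (not stated in the source).
HOURS_PER_QUARTER = 365 * 24 // 4  # 2190 hours, a quarter of a 365-day year
ALLOCATABLE_PERCENT = 90           # hypothetical share of raw hours offered

cores = NODES * CORES_PER_NODE
raw_core_hours = cores * HOURS_PER_QUARTER
# Integer arithmetic avoids floating-point rounding in the comparison.
allocatable_core_hours = raw_core_hours * ALLOCATABLE_PERCENT // 100

print(f"Cores: {cores}")
print(f"Raw core hours per quarter: {raw_core_hours}")
print(f"Allocatable core hours per quarter: {allocatable_core_hours}")
print(f"Matches quoted figure: {allocatable_core_hours == QUOTED_CORE_HOURS}")
```

Changing `ALLOCATABLE_PERCENT` shows how sensitive the allocation is to the share of time assumed to be available to users.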

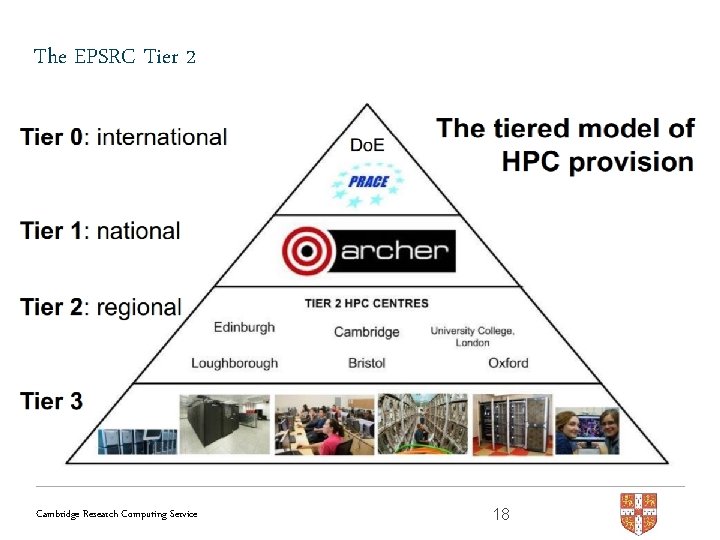

The EPSRC Tier 2

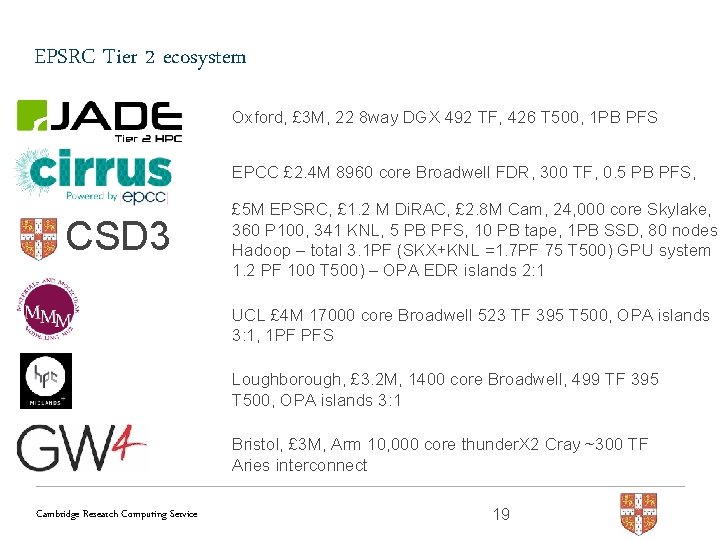

EPSRC Tier 2 ecosystem
• Oxford: £3M, 22 8-way DGX, 492 TF, Top500 #426, 1 PB PFS
• EPCC: £2.4M, 8960-core Broadwell, FDR, 300 TF, 0.5 PB PFS
• CSD3: £5M EPSRC, £1.2M DiRAC, £2.8M Cambridge
  – 24,000-core Skylake, 360 P100, 341 KNL
  – 5 PB PFS, 10 PB tape, 1 PB SSD, 80 nodes Hadoop
  – Total 3.1 PF (SKX + KNL = 1.7 PF, Top500 #75; GPU system 1.2 PF, Top500 #100)
  – OPA and EDR islands, 2:1
• UCL: £4M, 17,000-core Broadwell, 523 TF, Top500 #395, OPA islands 3:1, 1 PB PFS
• Loughborough: £3.2M, 1400-core Broadwell, 499 TF, Top500 #395, OPA islands 3:1
• Bristol: £3M, Arm, 10,000-core ThunderX2, Cray, ~300 TF, Aries interconnect

DiRAC national HPC service
• Cambridge is a long-standing DiRAC delivery partner
• ~500 Skylake nodes + 13% of our KNL and GPU systems, 3 PB Lustre
• Co-development partner in DAC and OpenStack

- Slides: 20