The Alpha 21264 Microprocessor: Out-of-Order Execution at 600 MHz

- Slides: 30

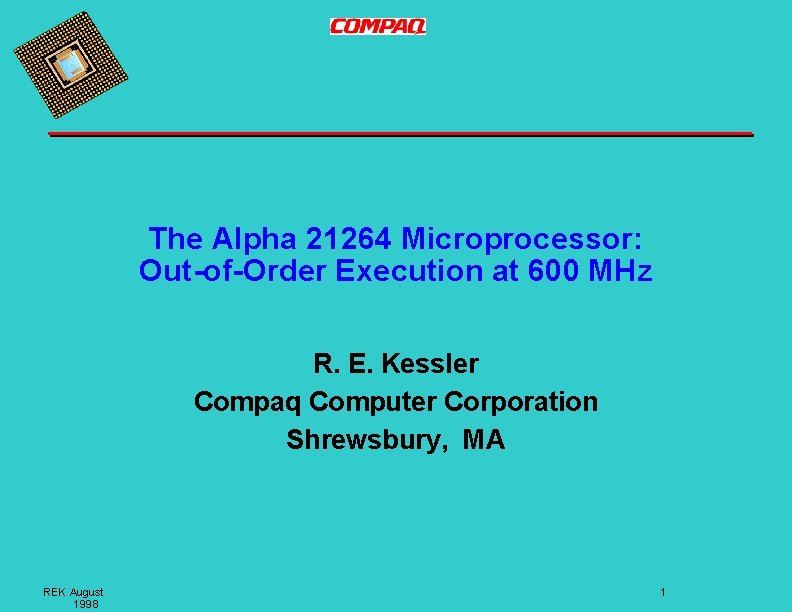

The Alpha 21264 Microprocessor: Out-of-Order Execution at 600 MHz

R. E. Kessler, Compaq Computer Corporation, Shrewsbury, MA

REK August 1998

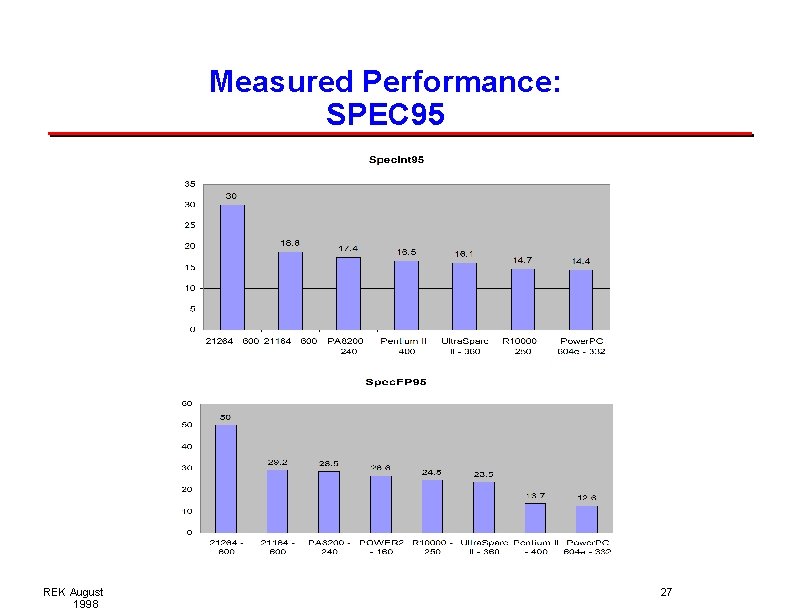

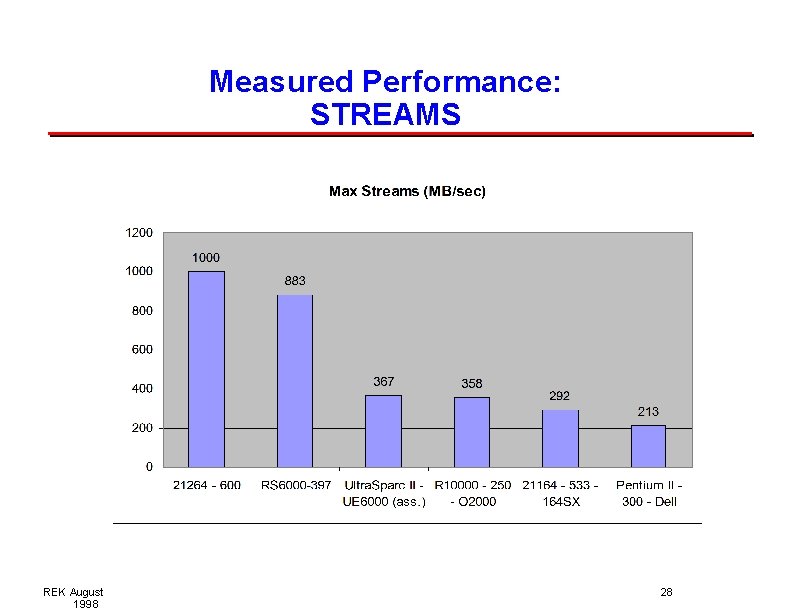

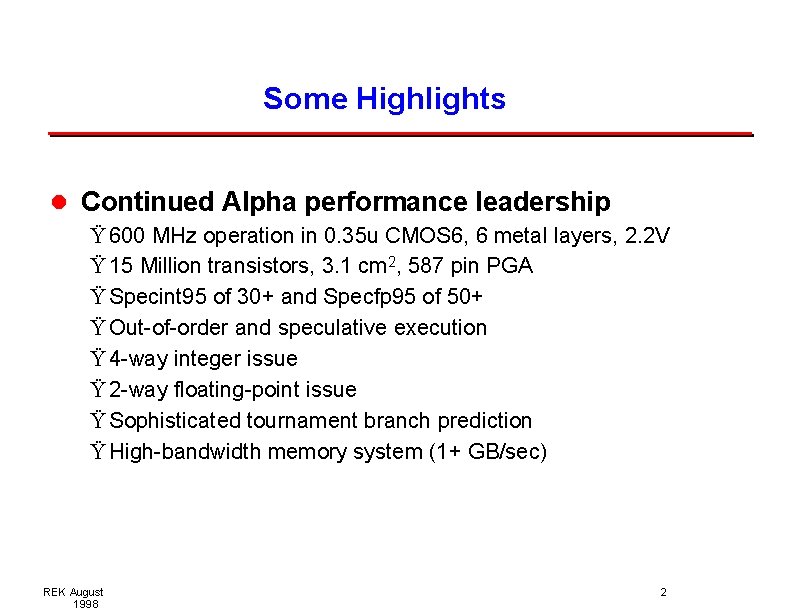

Some Highlights

- Continued Alpha performance leadership
  - 600 MHz operation in 0.35 µm CMOS6, 6 metal layers, 2.2 V
  - 15 million transistors, 3.1 cm², 587-pin PGA
  - SPECint95 of 30+ and SPECfp95 of 50+
  - Out-of-order and speculative execution
  - 4-way integer issue
  - 2-way floating-point issue
  - Sophisticated tournament branch prediction
  - High-bandwidth memory system (1+ GB/sec)

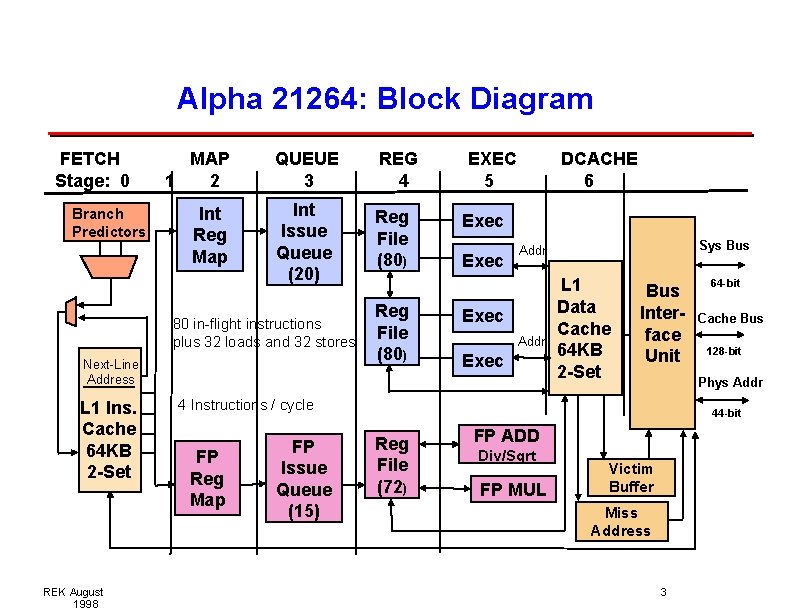

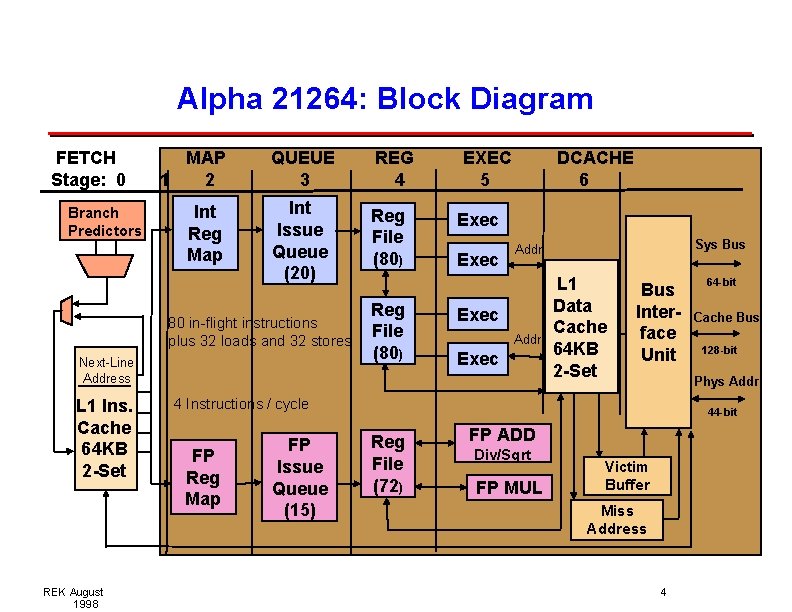

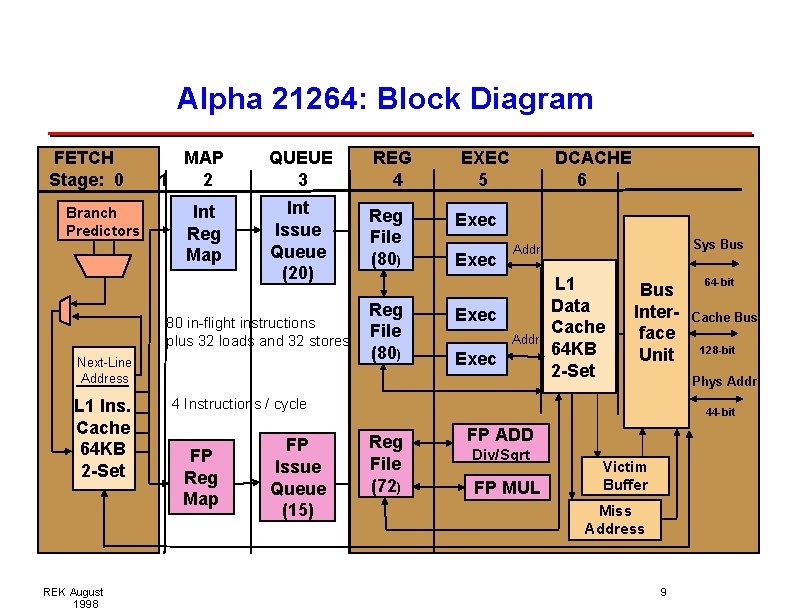

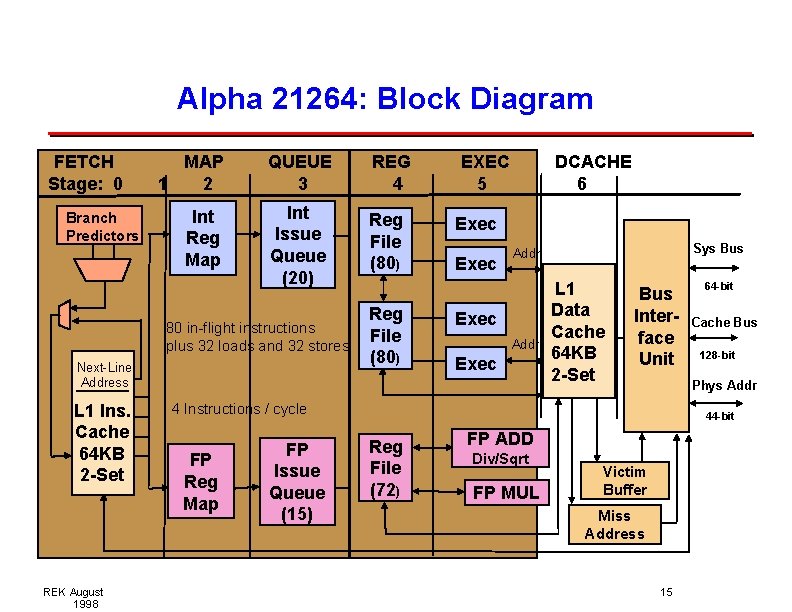

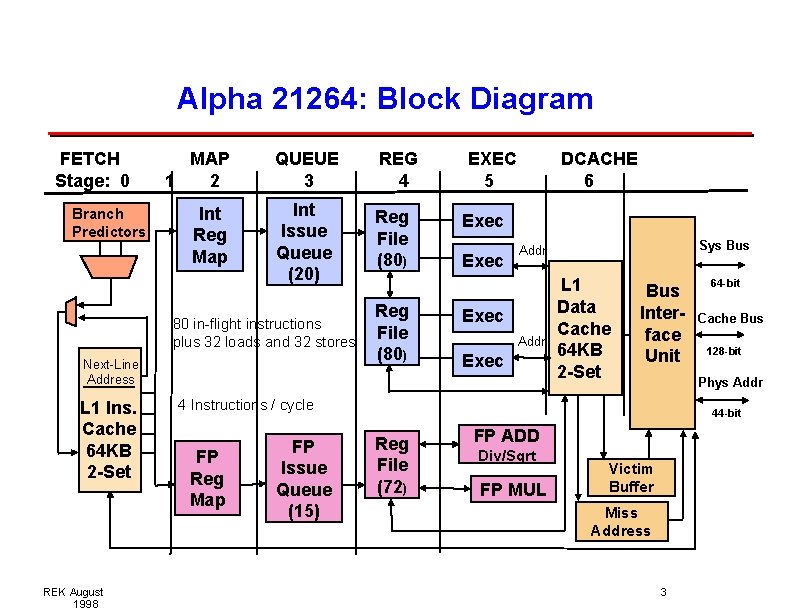

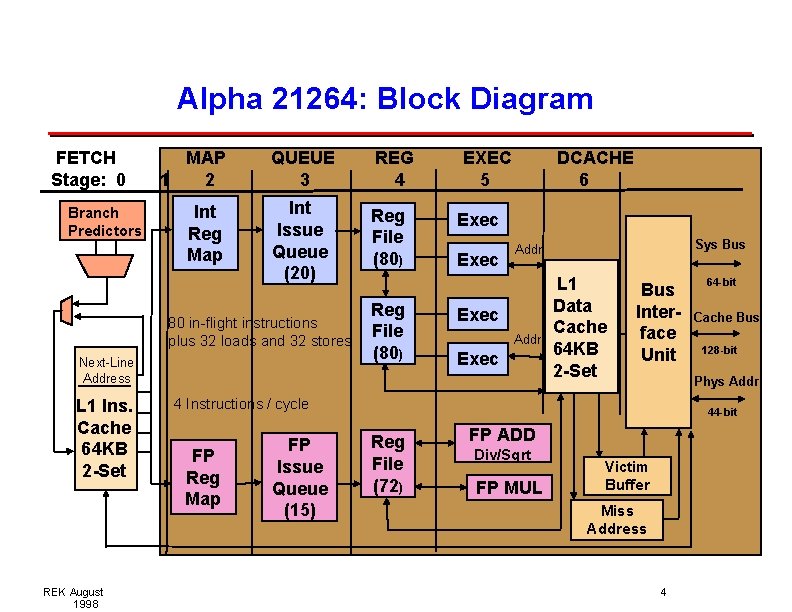

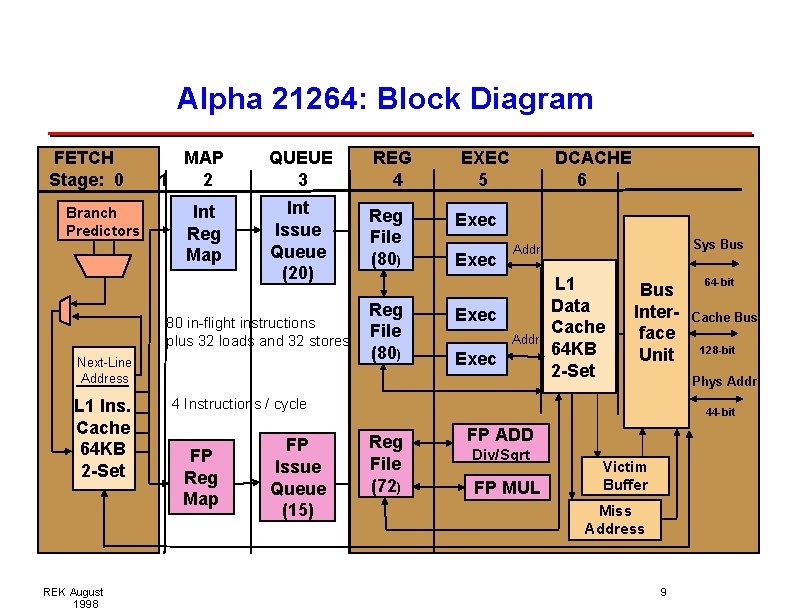

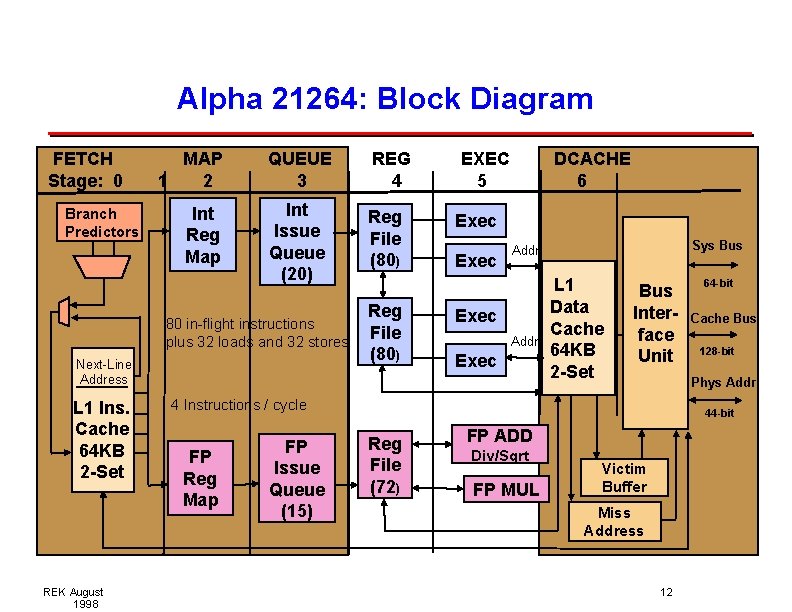

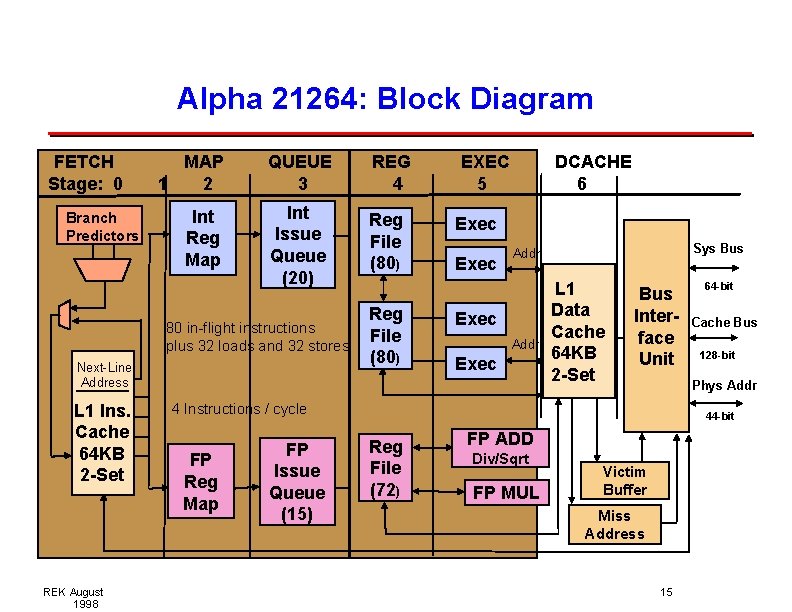

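Alpha 21264: Block Diagram

- Pipeline stages: Fetch (0), Slot (1), Map (2), Queue (3), Reg (4), Exec (5), Dcache (6)
- Fetch: branch predictors, next-line address prediction, and a 64 KB two-way set-associative L1 instruction cache delivering 4 instructions per cycle
- Integer: register map, 20-entry issue queue, two copies of the 80-entry register file, and four execution pipes (two of which also generate memory addresses)
- Floating point: register map, 15-entry issue queue, 72-entry register file, FP add, FP multiply, and divide/square-root units
- Memory: 64 KB two-way set-associative L1 data cache; bus interface unit with victim buffer and miss addresses; 128-bit cache bus, 64-bit system bus, 44-bit physical addresses
- Up to 80 in-flight instructions, plus 32 loads and 32 stores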


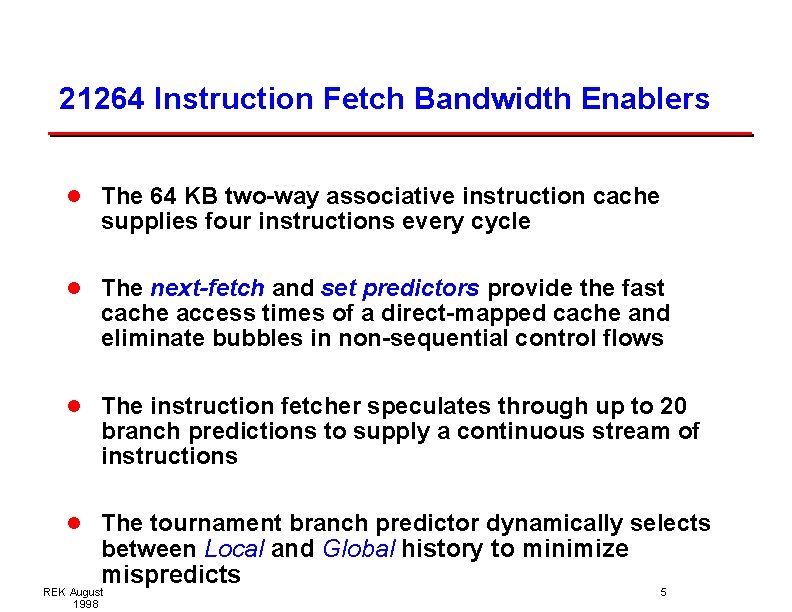

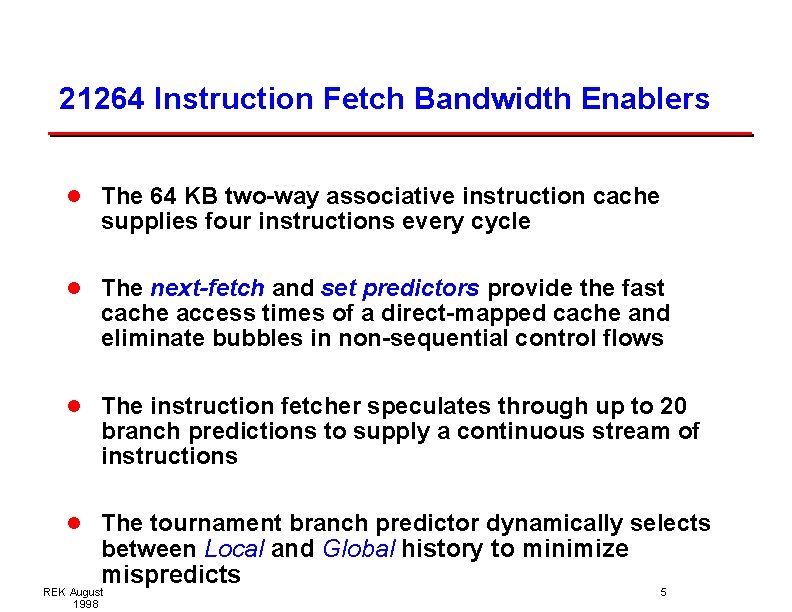

21264 Instruction Fetch Bandwidth Enablers

- The 64 KB two-way set-associative instruction cache supplies four instructions every cycle.
- The next-fetch and set predictors provide the fast access time of a direct-mapped cache and eliminate bubbles in non-sequential control flow.
- The instruction fetcher speculates through up to 20 branch predictions to supply a continuous stream of instructions.
- The tournament branch predictor dynamically selects between local and global history to minimize mispredictions.

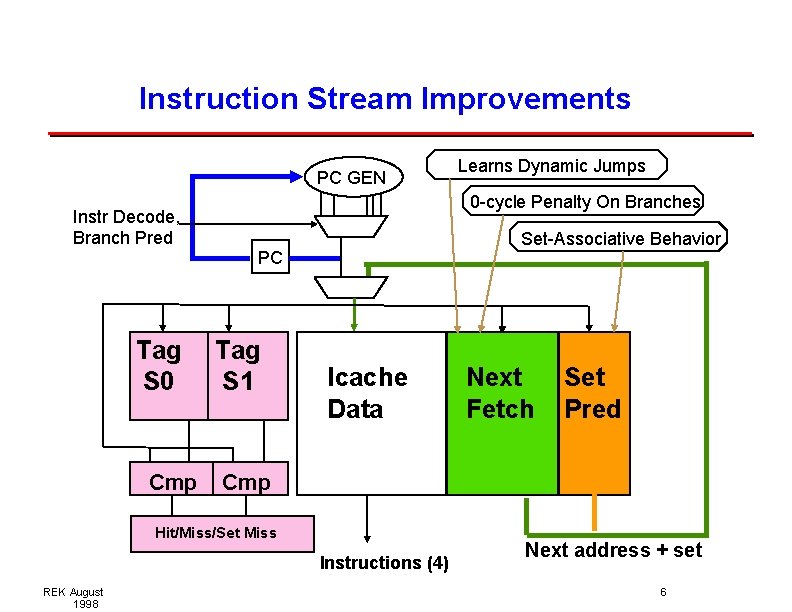

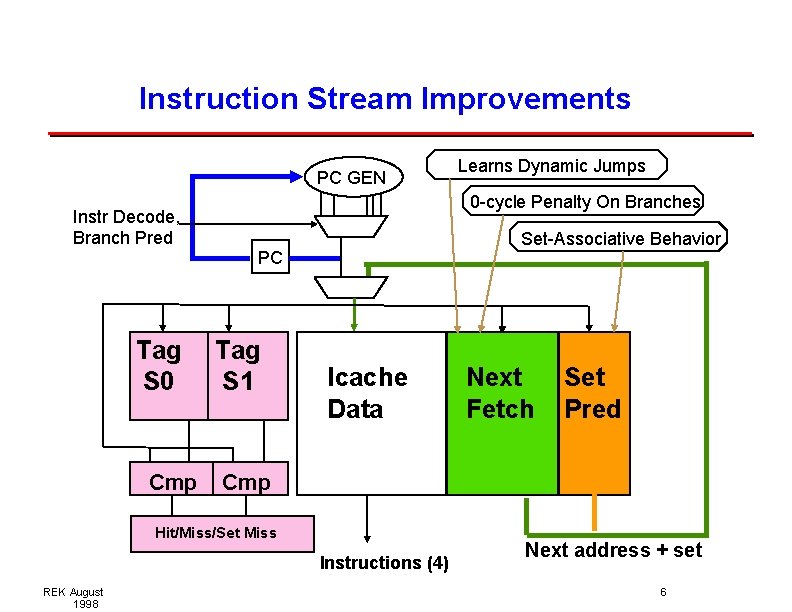

Instruction Stream Improvements

Diagram labels: PC generation; instruction cache tag compares for both sets (Tag S0, Tag S1); Icache data; next-fetch and set predictor (next address + set); hit/miss/set-miss result; 4 instructions to instruction decode and branch prediction.

- 0-cycle penalty on branches
- Set-associative behavior
- Learns dynamic jumps
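The idea behind the next-fetch and set predictor can be sketched as a small table that remembers, for each fetch block, where fetch went next and which set of the two-way cache held it. This is a minimal Python sketch under stated assumptions: `LineSetPredictor`, `Entry`, the 1024-entry size, the index function, and the "sequential, set 0" default are all hypothetical, not the 21264's actual organization.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    next_addr: int  # predicted address of the next fetch block
    way: int        # predicted set (0 or 1) of the two-way instruction cache


class LineSetPredictor:
    """Hypothetical next-fetch (line) and set predictor; sizes are illustrative."""

    def __init__(self, entries: int = 1024, block_bytes: int = 16):
        self.entries = entries
        self.block_bytes = block_bytes  # one fetch block = 4 instructions x 4 bytes
        self.table: dict[int, Entry] = {}

    def _index(self, pc: int) -> int:
        return (pc // self.block_bytes) % self.entries

    def predict(self, pc: int) -> Entry:
        # Untrained entries guess the sequential next block in set 0.
        return self.table.get(self._index(pc), Entry(pc + self.block_bytes, 0))

    def train(self, pc: int, actual_next: int, actual_way: int) -> bool:
        """Store the resolved next address and set; return True if the guess was right."""
        guess = self.predict(pc)
        correct = guess.next_addr == actual_next and guess.way == actual_way
        self.table[self._index(pc)] = Entry(actual_next, actual_way)
        return correct


# A loop-closing branch in the block at 0x1030 jumps back to 0x1000 (set 1).
p = LineSetPredictor()
print(p.train(0x1030, 0x1000, 1))  # False: first encounter, sequential guess
print(p.train(0x1030, 0x1000, 1))  # True: the jump target and set were learned
```

Because the prediction is a single table read, the next fetch can start without waiting for tag compares or branch decode, which is the "direct-mapped speed with set-associative behavior" trade the slide describes. The slower tag-compare result only trains the table.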

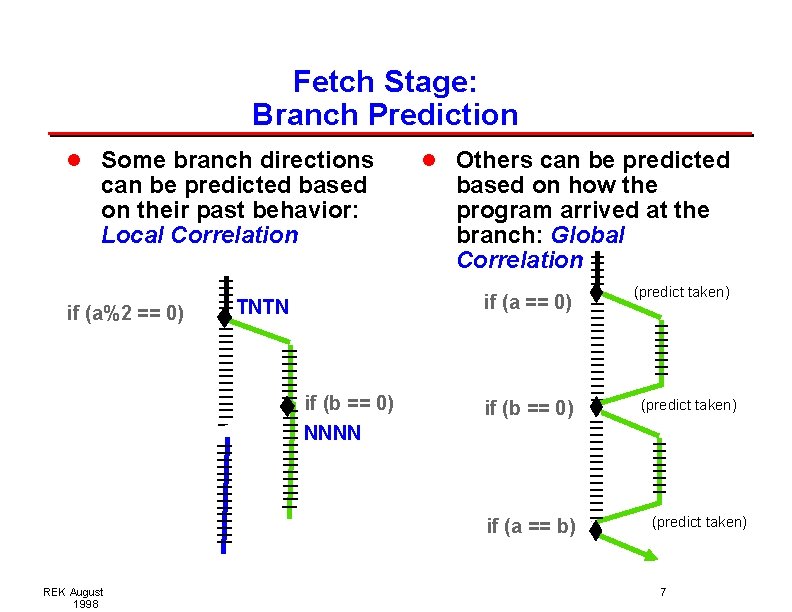

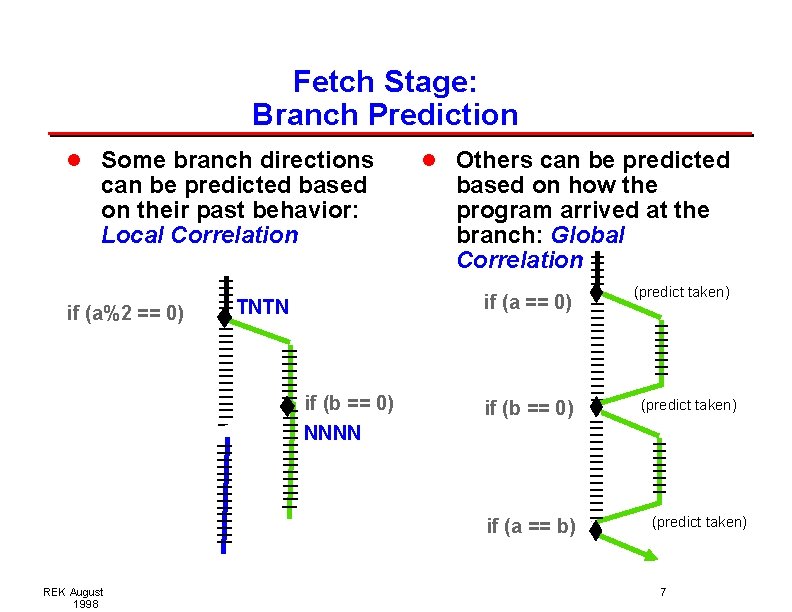

Fetch Stage: Branch Prediction

- Some branch directions can be predicted from their own past behavior (local correlation):
  - `if (a%2 == 0)`: TNTN (alternating taken/not-taken)
  - `if (b == 0)`: NNNN (never taken)
- Others can be predicted from how the program arrived at the branch (global correlation):
  - `if (a == 0)`, then `if (b == 0)`, then `if (a == b)`: when the first two conditions held (a and b both zero), the third must hold too, so predict taken
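Local correlation can be demonstrated with a toy predictor in which a branch's own recent outcomes select a 2-bit saturating counter. This Python sketch is illustrative only: `count_mispredicts`, the 2-bit counters, and the tiny history lengths are assumptions, and the 21264's local predictor is larger (10-bit histories, 3-bit counters).

```python
def count_mispredicts(outcomes, hist_bits):
    """Count mispredictions for one branch predicted from its own (local) history.

    The branch's last `hist_bits` outcomes select a 2-bit saturating counter;
    counters >= 2 predict taken. hist_bits=0 degenerates to a single counter.
    """
    mask = (1 << hist_bits) - 1
    counters = [1] * (1 << hist_bits)  # all start weakly not-taken
    history = 0
    misses = 0
    for taken in outcomes:
        if (counters[history] >= 2) != taken:
            misses += 1
        if taken:
            counters[history] = min(3, counters[history] + 1)
        else:
            counters[history] = max(0, counters[history] - 1)
        history = ((history << 1) | int(taken)) & mask
    return misses


alternating = [True, False] * 50   # if (a%2 == 0): T N T N ...
never = [False] * 100              # if (b == 0): N N N N ...
print(count_mispredicts(alternating, hist_bits=0))  # 100: one counter cannot follow TNTN
print(count_mispredicts(alternating, hist_bits=2))  # 2: local history learns the pattern
print(count_mispredicts(never, hist_bits=0))        # 0
```

Even two bits of local history turn the alternating branch from always mispredicted into almost always correct. The never-taken branch needs no history at all.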

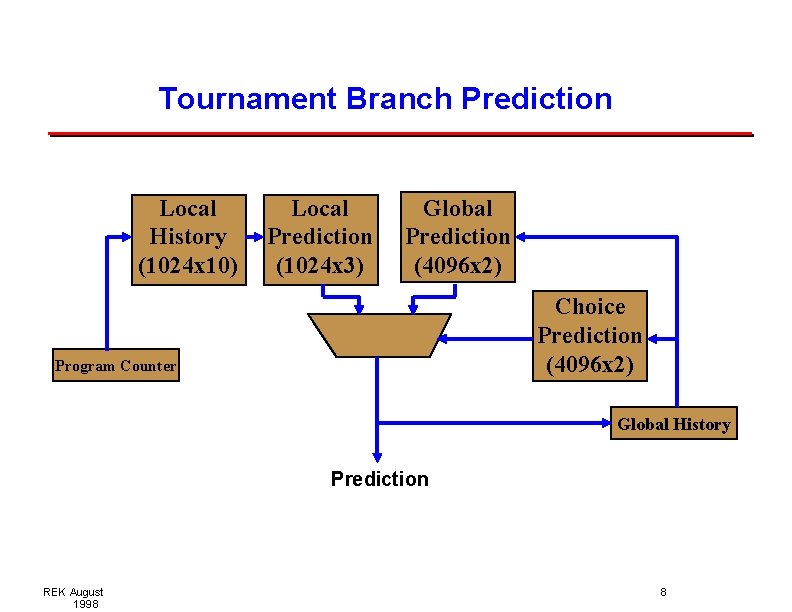

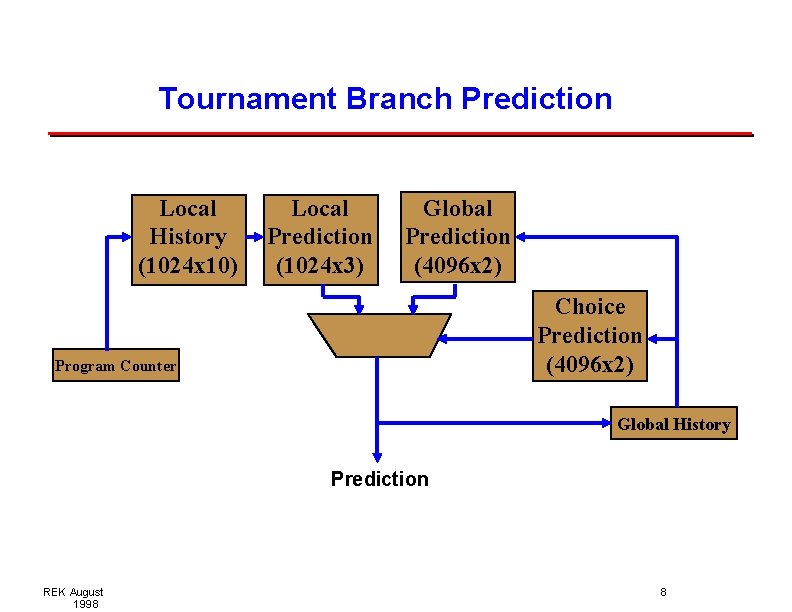

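Tournament Branch Prediction

Diagram: the program counter indexes the local history table (1024 × 10 bits), whose entry indexes the local prediction table (1024 × 3 bits). The global history indexes the global prediction table (4096 × 2 bits) and the choice prediction table (4096 × 2 bits). The choice prediction selects either the local or the global prediction as the final prediction.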
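The organization in the diagram can be sketched in Python using the slide's table sizes. Several details are assumptions and are not taken from the slide: the PC hashing `(pc >> 2) % 1024`, the initial counter values, and training the chooser only when the two components disagree. `TournamentPredictor` is a hypothetical name.

```python
class TournamentPredictor:
    """Tournament predictor sketch using the table sizes from the slide.

    Assumptions (not on the slide): the PC indexes the local history table as
    (pc >> 2) % 1024, counters start near their midpoints, and the chooser is
    trained only when the local and global predictions disagree.
    """

    def __init__(self):
        self.local_history = [0] * 1024  # 10-bit histories, indexed by PC
        self.local_pred = [3] * 1024     # 3-bit counters, indexed by local history
        self.global_pred = [1] * 4096    # 2-bit counters, indexed by global history
        self.choice = [1] * 4096         # 2-bit counters; >= 2 means "use global"
        self.ghist = 0                   # 12-bit global history of outcomes

    def _components(self, pc):
        i = (pc >> 2) % 1024
        lh = self.local_history[i]
        return i, lh, self.local_pred[lh] >= 4, self.global_pred[self.ghist] >= 2

    def predict(self, pc):
        _, _, local_taken, global_taken = self._components(pc)
        return global_taken if self.choice[self.ghist] >= 2 else local_taken

    def update(self, pc, taken):
        i, lh, local_taken, global_taken = self._components(pc)
        g = self.ghist
        if local_taken != global_taken:
            # Move the chooser toward whichever component was right.
            step = 1 if global_taken == taken else -1
            self.choice[g] = min(3, max(0, self.choice[g] + step))
        step = 1 if taken else -1
        self.local_pred[lh] = min(7, max(0, self.local_pred[lh] + step))
        self.global_pred[g] = min(3, max(0, self.global_pred[g] + step))
        self.local_history[i] = ((lh << 1) | int(taken)) & 0x3FF
        self.ghist = ((g << 1) | int(taken)) & 0xFFF


bp = TournamentPredictor()
hits = []
for taken in [True, False] * 100:     # one alternating branch at a made-up PC
    hits.append(bp.predict(0x120) == taken)
    bp.update(0x120, taken)
print(all(hits[-100:]))  # True once both histories have warmed up
```

The chooser is indexed by global history rather than by PC. As a result, the same branch can favor local prediction in one program context and global prediction in another.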

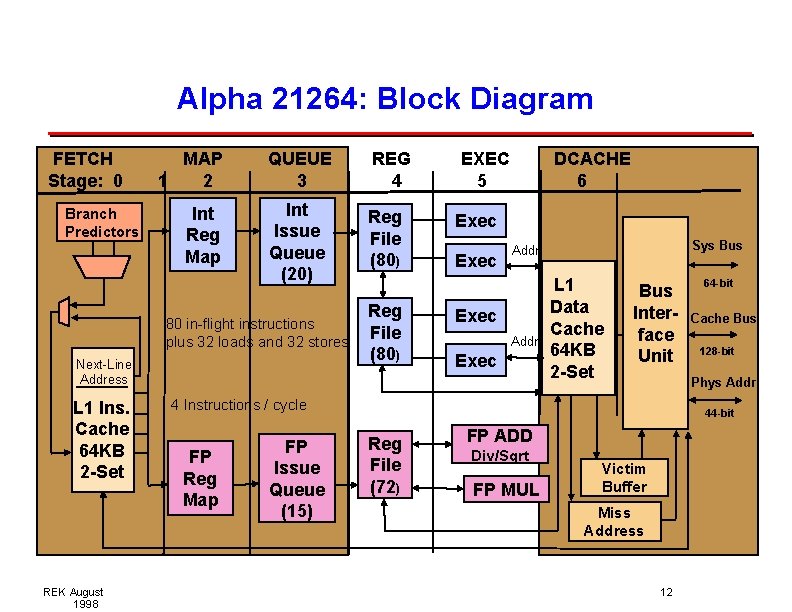


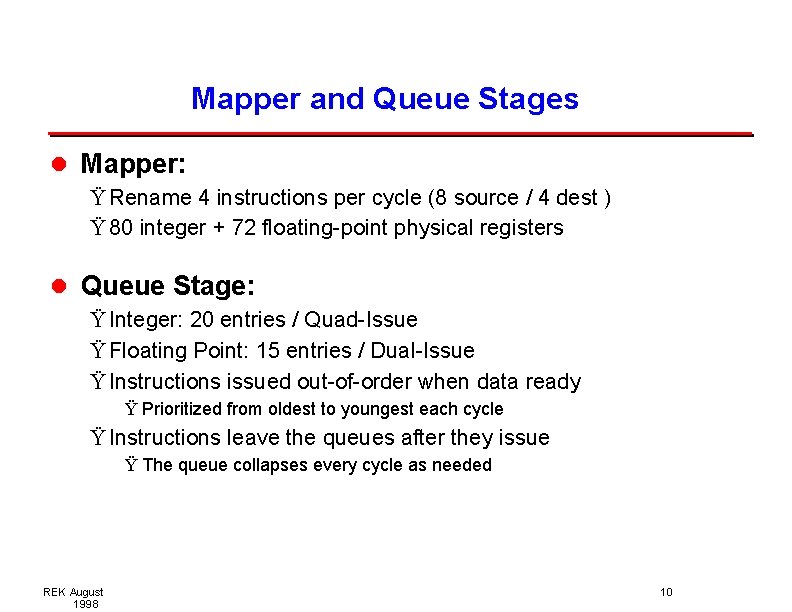

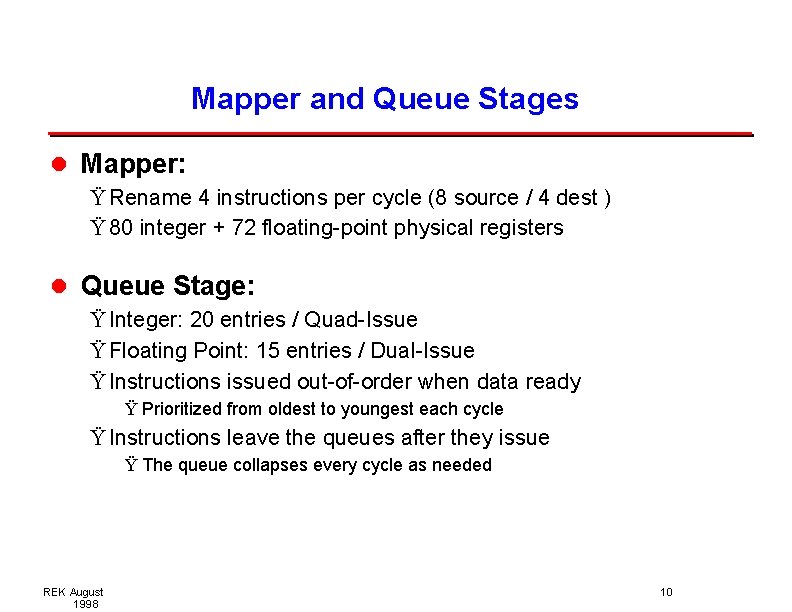

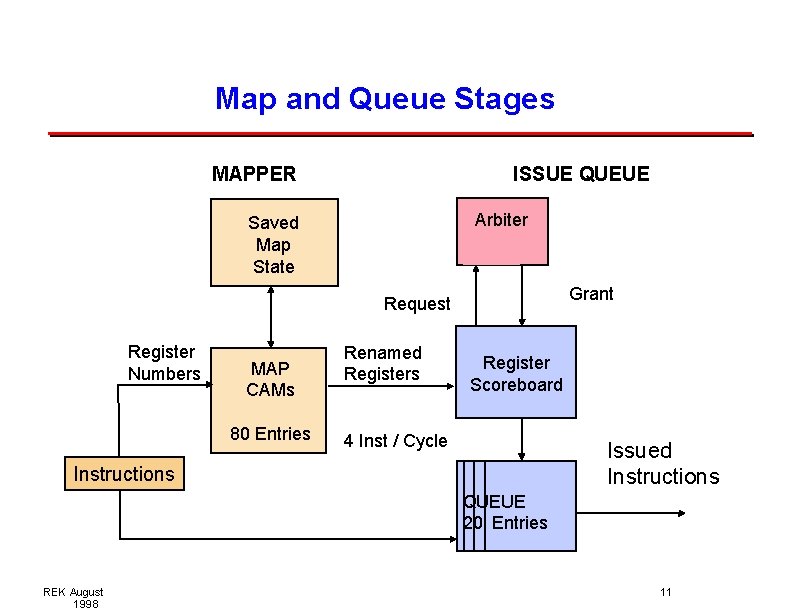

Mapper and Queue Stages

- Mapper:
  - Renames 4 instructions per cycle (8 source / 4 destination registers)
  - 80 integer + 72 floating-point physical registers
- Queue stage:
  - Integer: 20 entries, quad issue
  - Floating point: 15 entries, dual issue
  - Instructions issue out of order when their data is ready
  - Ready instructions are prioritized from oldest to youngest each cycle
  - Instructions leave the queues after they issue
  - The queue collapses every cycle as needed
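Renaming a group of four instructions can be sketched as a map table plus a free list. In this minimal Python sketch, `RenameMap`, the identity starting map, and the free-list order are assumptions. The sketch also renames R31 (Alpha's hard-wired zero register) like any other register, for simplicity.

```python
from collections import deque


class RenameMap:
    """Toy integer renamer: 32 architectural -> 80 physical registers.

    Assumptions for this sketch: r0..r31 start mapped to p0..p31, the other
    48 physical registers start free, and R31 (hard-wired zero on Alpha) is
    renamed like any other register for simplicity.
    """

    def __init__(self, arch_regs=32, phys_regs=80):
        self.map = list(range(arch_regs))
        self.free = deque(range(arch_regs, phys_regs))

    def rename_group(self, group):
        """Rename up to 4 (dest, src1, src2) instructions in program order.

        Returns the renamed (dest, src1, src2) physical-register tuples and the
        previous physical register of each destination, which can be freed
        once the renaming instruction retires (not modeled here).
        """
        assert len(group) <= 4, "the mapper renames at most 4 instructions per cycle"
        renamed, previous = [], []
        for dest, src1, src2 in group:
            # Sources see renames made by older instructions in the same group.
            p1, p2 = self.map[src1], self.map[src2]
            new = self.free.popleft()  # a real mapper would stall when empty
            previous.append(self.map[dest])
            self.map[dest] = new
            renamed.append((new, p1, p2))
        return renamed, previous


rm = RenameMap()
# r1 <- r2 + r3 ; r4 <- r1 + r1 ; r1 <- r4 + r2  (note the reuse of r1)
ops, prev = rm.rename_group([(1, 2, 3), (4, 1, 1), (1, 4, 2)])
print(ops)   # [(32, 2, 3), (33, 32, 32), (34, 33, 2)]
print(prev)  # [1, 4, 32]
```

The two writes to r1 receive different physical registers (32 and 34), which removes the write-after-write dependence. The second instruction still reads p32, preserving the true dependence on the first.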

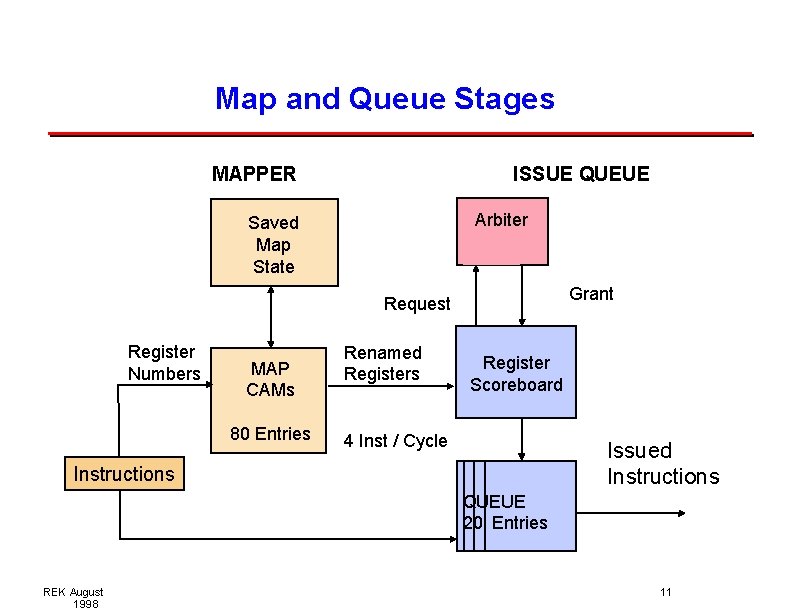

Map and Queue Stages

Diagram labels: in the mapper, register numbers are looked up in the map CAMs (80 entries) to produce renamed registers, with saved map state kept alongside. The issue queue (20 entries) has a register scoreboard and a request/grant handshake with the arbiter, and issues up to 4 instructions per cycle.
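One issue-queue cycle can be sketched as follows: scan entries oldest first, grant up to four whose sources are marked ready in the scoreboard, then drop the issued entries so the queue collapses. In this Python sketch, `issue_cycle` and the one-cycle result latency are simplifying assumptions, not the 21264's actual timing.

```python
def issue_cycle(queue, ready, width=4):
    """Issue up to `width` data-ready entries, oldest first, then collapse the queue.

    queue: list of (name, dest, srcs) in program order (index 0 is oldest).
    ready: set of physical registers whose values are available (the scoreboard).
    Simplifying assumption: every result is available one cycle after issue.
    """
    issued, waiting = [], []
    for entry in queue:
        _, _, srcs = entry
        if len(issued) < width and all(s in ready for s in srcs):
            issued.append(entry)   # arbiter grants oldest requesters first
        else:
            waiting.append(entry)
    queue[:] = waiting             # issued entries leave; the queue collapses
    for _, dest, _ in issued:
        ready.add(dest)            # dependents may issue next cycle
    return [name for name, _, _ in issued]


q = [("ldq", 40, [1]), ("addq", 41, [40, 2]), ("subq", 42, [3, 4]), ("mulq", 43, [41, 42])]
ready = {1, 2, 3, 4}
while q:
    print(issue_cycle(q, ready))
# ['ldq', 'subq'] then ['addq'] then ['mulq']
```

The independent `subq` issues ahead of the older, still-waiting `addq`. That reordering is out-of-order issue in its simplest form.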


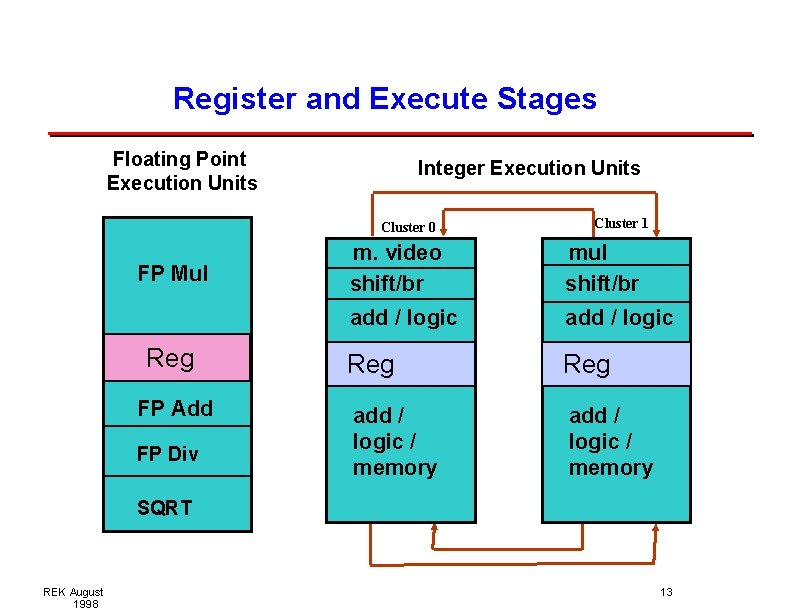

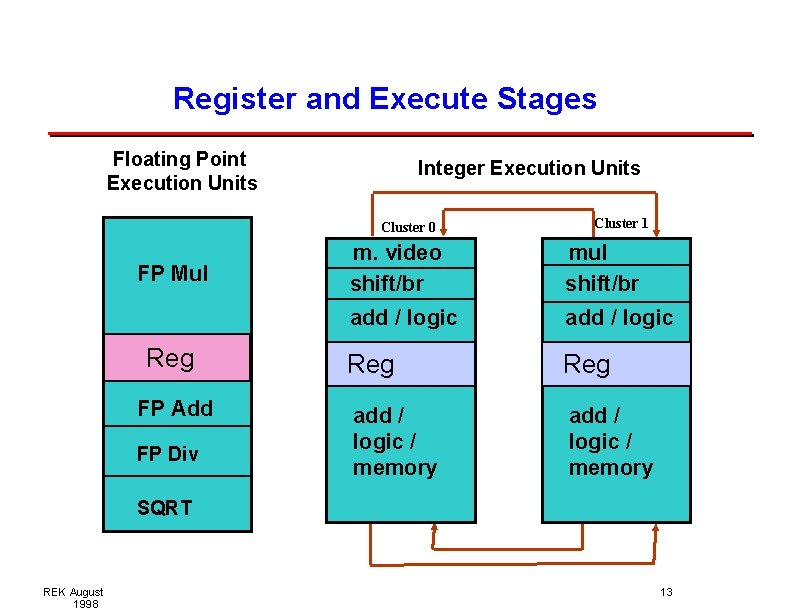

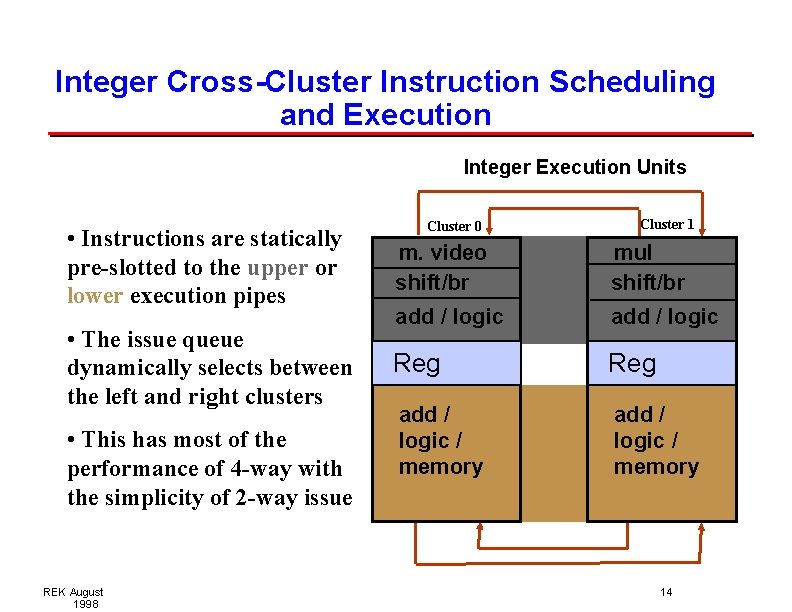

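Register and Execute Stages

- Integer execution units in two clusters (Cluster 0 and Cluster 1), each with its own copy of the register file. The units include shift/branch, add/logic, and add/logic/memory pipes, plus motion video (m. video) in one cluster and integer multiply (mul) in the other.
- Floating-point execution units: FP multiply, FP add, FP divide, and square root (SQRT)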

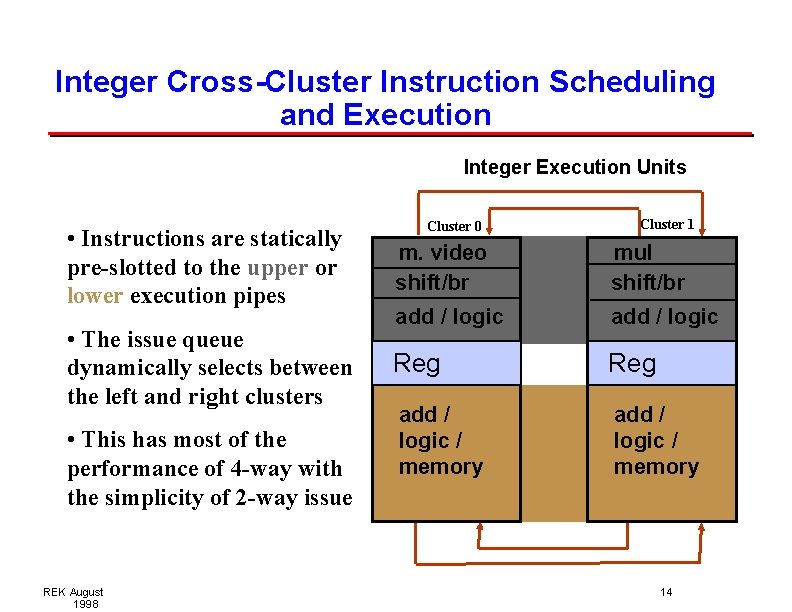

Integer Cross-Cluster Instruction Scheduling and Execution

(Diagram: integer execution units of Cluster 0 and Cluster 1: m. video, mul, shift/br, add/logic, add/logic/memory.)

- Instructions are statically pre-slotted to the upper or lower execution pipes.
- The issue queue dynamically selects between the left and right clusters.
- This provides most of the performance of 4-way issue with the simplicity of 2-way issue.
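The split between static slotting and dynamic cluster choice can be sketched as pipe assignment at issue time. In this Python sketch, `assign_pipes`, the pipe labels U0/U1/L0/L1, and the "try cluster 0 first" tie-break are hypothetical.

```python
def assign_pipes(ready_insts):
    """Map ready integer instructions (oldest first) onto the four integer pipes.

    Each instruction is (name, slot), where slot ("upper" or "lower") was fixed
    earlier by static pre-slotting. Issue only chooses the cluster: pipe names
    U0/L0 (cluster 0) and U1/L1 (cluster 1) are hypothetical labels, and trying
    cluster 0 first is an arbitrary tie-break for this sketch.
    """
    free = {"upper": ["U0", "U1"], "lower": ["L0", "L1"]}
    assignment = {}
    for name, slot in ready_insts:
        if free[slot]:
            assignment[name] = free[slot].pop(0)
    return assignment


ready = [("a", "upper"), ("b", "lower"), ("c", "upper"), ("d", "upper"), ("e", "lower")]
print(assign_pipes(ready))
# {'a': 'U0', 'b': 'L0', 'c': 'U1', 'e': 'L1'}  ('d' waits: both upper pipes are taken)
```

Instruction `d` cannot use an idle pipe of the wrong slot. That is the small performance cost the slide trades for the simpler two-way choice per slot.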


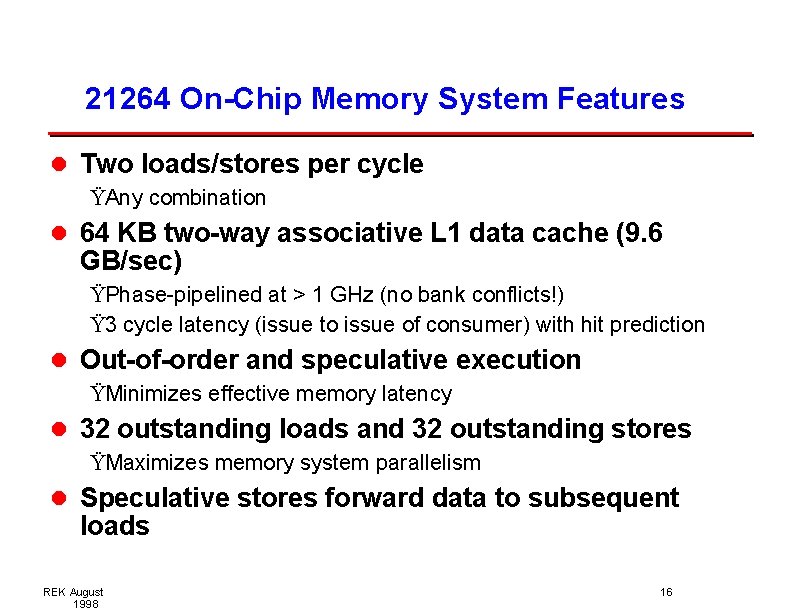

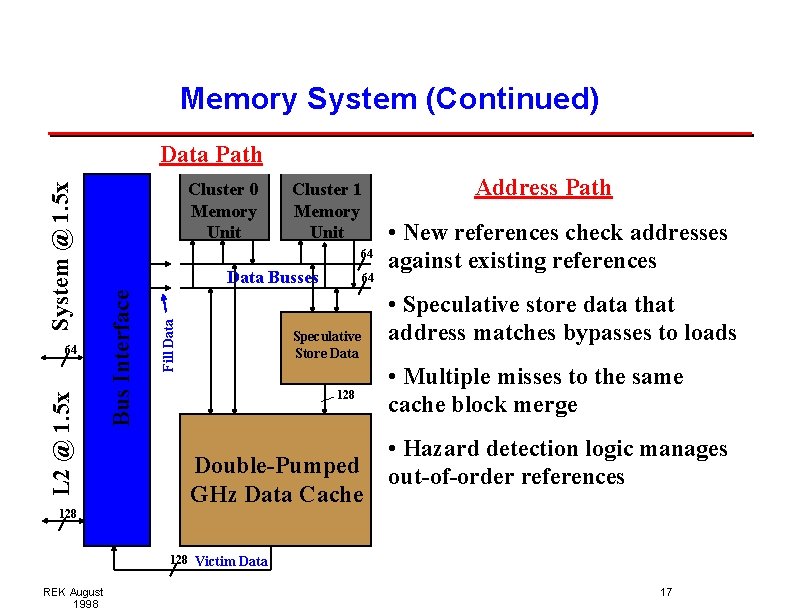

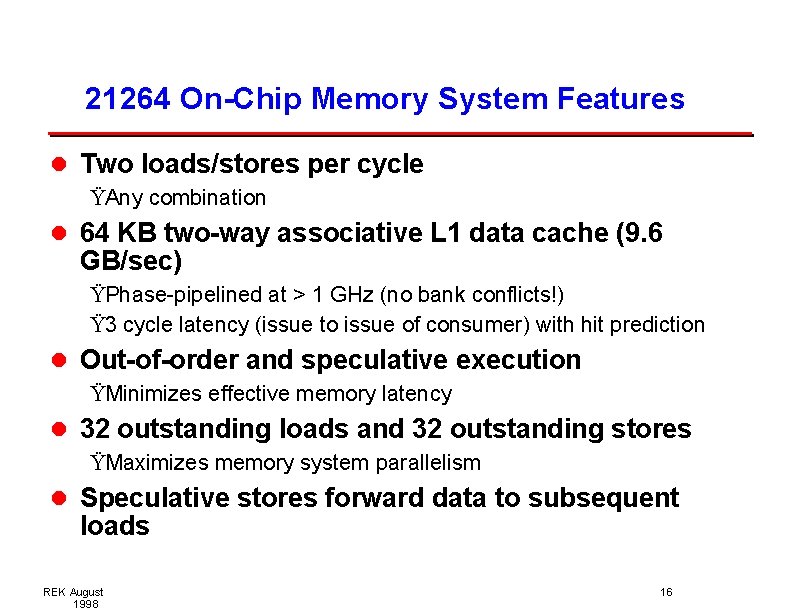

21264 On-Chip Memory System Features

- Two loads/stores per cycle
  - Any combination
- 64 KB two-way set-associative L1 data cache (9.6 GB/sec)
  - Phase-pipelined at > 1 GHz (no bank conflicts!)
  - 3-cycle latency (issue to issue of consumer) with hit prediction
- Out-of-order and speculative execution
  - Minimizes effective memory latency
- 32 outstanding loads and 32 outstanding stores
  - Maximizes memory system parallelism
- Speculative stores forward data to subsequent loads
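The 9.6 GB/sec figure is consistent with two accesses per cycle at the 600 MHz core clock. This assumes each access moves one 8-byte (64-bit) quadword, which is an assumption of this check rather than a statement from the slide:

```latex
B_{\text{L1D}} = 2\,\frac{\text{accesses}}{\text{cycle}} \times 8\,\frac{\text{bytes}}{\text{access}} \times 600 \times 10^{6}\,\frac{\text{cycles}}{\text{s}} = 9.6 \times 10^{9}\ \text{bytes/s} = 9.6\ \text{GB/sec}
```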

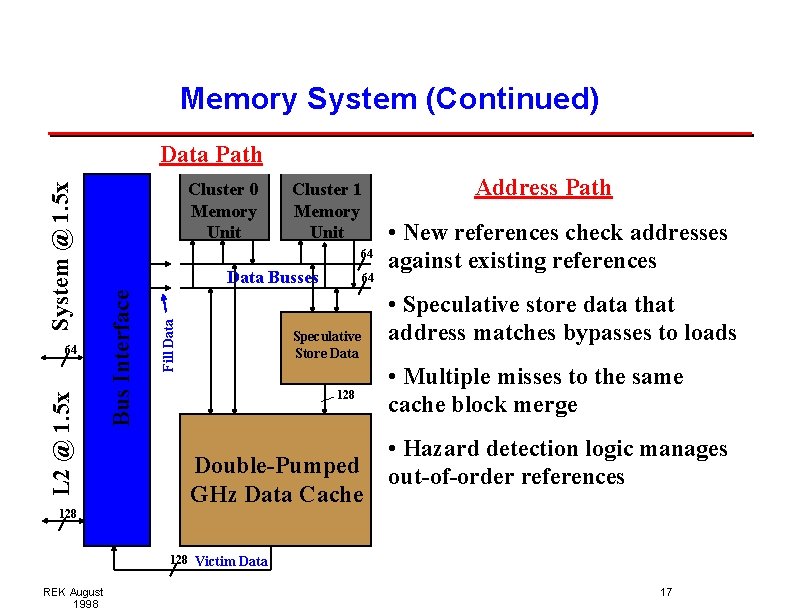

Memory System (Continued)

Diagram labels: Cluster 0 and Cluster 1 memory units; 64-bit data buses; address and data paths; speculative store data; double-pumped GHz data cache; fill data and 128-bit victim data to and from the bus interface; L2 @ 1.5×; system @ 1.5×.

- New references check their addresses against existing references.
- Speculative store data whose address matches bypasses to loads.
- Multiple misses to the same cache block merge.
- Hazard detection logic manages out-of-order references.
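Two of the address checks listed above, store-to-load bypass and miss merging, can be sketched as a small model. This is a Python sketch under assumptions: `InFlightMemory`, exact-address matching (ignoring access sizes), sequence numbers for age, and the 64-byte block size are all illustrative.

```python
from dataclasses import dataclass, field

BLOCK_BYTES = 64  # cache block size assumed for this sketch


@dataclass
class InFlightMemory:
    """Toy model of two address checks: store-to-load bypass and miss merging.

    stores: (seq, addr, data) for older stores still in flight.
    misses: block address -> sequence numbers of loads waiting on that block.
    """
    stores: list = field(default_factory=list)
    misses: dict = field(default_factory=dict)

    def load(self, seq, addr, cache):
        # The youngest older store to the same address bypasses its data.
        older = [s for s in self.stores if s[0] < seq and s[1] == addr]
        if older:
            return ("bypass", max(older)[2])
        if addr in cache:
            return ("hit", cache[addr])
        # A miss to a block with an outstanding miss merges into it.
        block = addr - addr % BLOCK_BYTES
        status = "merged-miss" if block in self.misses else "new-miss"
        self.misses.setdefault(block, []).append(seq)
        return (status, None)


mem = InFlightMemory(stores=[(5, 0x1000, 42)])
cache = {}
print(mem.load(7, 0x1000, cache))  # ('bypass', 42): older store, same address
print(mem.load(8, 0x2000, cache))  # ('new-miss', None)
print(mem.load(9, 0x2008, cache))  # ('merged-miss', None): same 64-byte block
```

Merging means the second load to block 0x2000 waits on the fill already in progress instead of issuing a second off-chip request.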

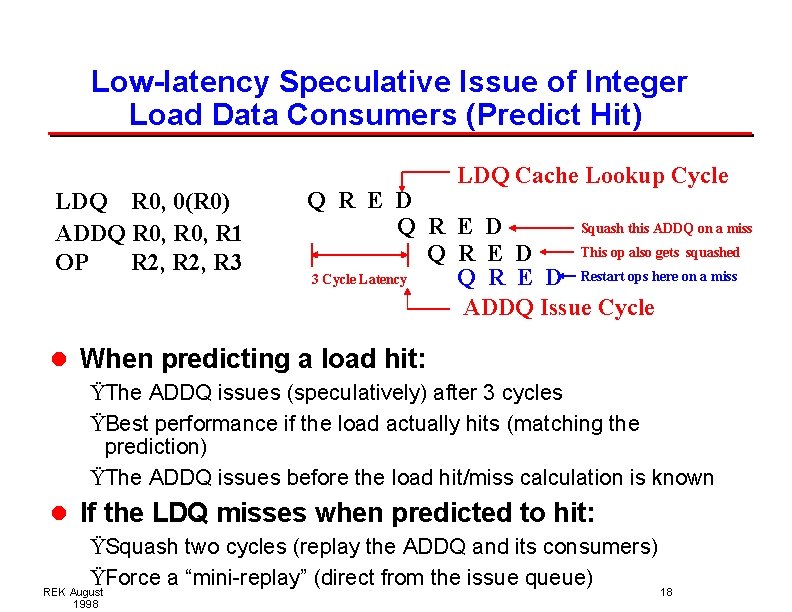

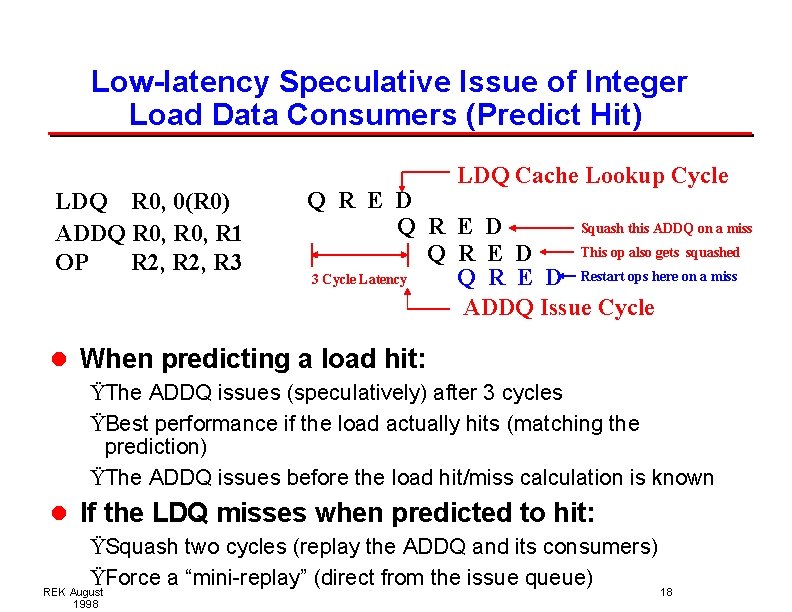

Low-Latency Speculative Issue of Integer Load Data Consumers (Predict Hit)

Example sequence: `LDQ R0, 0(R0)`, then `ADDQ R0, R1` (which consumes the load result), then `OP R2, R3`. In the pipeline diagram (Q R E D = queue/issue, register read, execute, data cache), the ADDQ issues 3 cycles after the LDQ, during the LDQ's cache-lookup cycle. On a miss, that ADDQ and the op issued in the following cycle are squashed, and ops restart after them.

- When predicting a load hit:
  - The ADDQ issues (speculatively) after 3 cycles.
  - Performance is best if the load actually hits (matching the prediction).
  - The ADDQ issues before the load's hit/miss result is known.
- If the LDQ misses when predicted to hit:
  - Squash two cycles (replay the ADDQ and its consumers).
  - Force a "mini-replay" (directly from the issue queue).
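The timing on this slide reduces to a small calculation: the consumer issues 3 cycles after the load, and a miss squashes the two issue cycles that began before the miss was known. The function name `predicted_hit_timeline` is hypothetical. The 3-cycle latency and the two squashed cycles come from the slide.

```python
def predicted_hit_timeline(load_issue, hit, latency=3, shadow=2):
    """Consumer issue cycle and squashed issue cycles for a load predicted to hit.

    latency: issue-to-issue load latency with hit prediction (3 on the slide).
    shadow: cycles issued before the miss is known (the slide squashes two).
    """
    consumer_issue = load_issue + latency
    if hit:
        return consumer_issue, []
    # On a miss, everything issued in the shadow cycles is squashed and
    # replayed from the issue queue (the "mini-replay").
    return consumer_issue, list(range(consumer_issue, consumer_issue + shadow))


print(predicted_hit_timeline(0, hit=True))   # (3, [])
print(predicted_hit_timeline(0, hit=False))  # (3, [3, 4])
```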

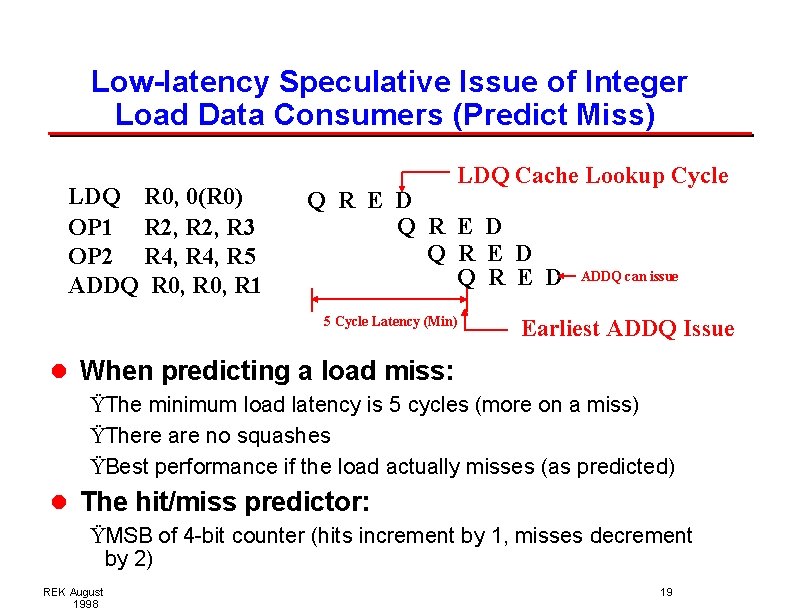

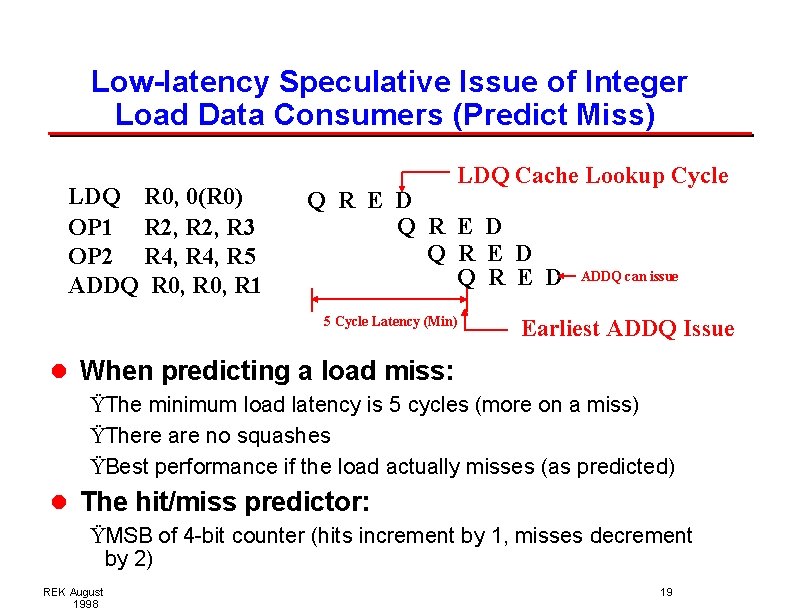

Low-Latency Speculative Issue of Integer Load Data Consumers (Predict Miss)

Example sequence: `LDQ R0, 0(R0)`, `OP1 R2, R3`, `OP2 R4, R5`, `ADDQ R0, R1`. In the pipeline diagram, OP1 and OP2 do not depend on the load, and the earliest ADDQ issue is 5 cycles after the LDQ (minimum latency).

- When predicting a load miss:
  - The minimum load latency is 5 cycles (more on a miss).
  - There are no squashes.
  - Performance is best if the load actually misses (as predicted).
- The hit/miss predictor:
  - The MSB of a 4-bit counter (hits increment by 1, misses decrement by 2)
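The hit/miss predictor described above can be sketched as a 4-bit counter whose MSB is the prediction. In this Python sketch, `LoadHitPredictor`, the saturation at 0 and 15, and the starting value of 15 are assumptions. The +1/-2 update rule is from the slide.

```python
class LoadHitPredictor:
    """Load hit/miss predictor: the MSB of a 4-bit counter.

    From the slide: hits add 1, misses subtract 2. Saturating at 0 and 15 and
    starting at 15 (predict hit) are assumptions for this sketch.
    """

    def __init__(self, value=15):
        self.value = value

    def predict_hit(self):
        return bool(self.value & 0b1000)  # MSB of the 4-bit counter

    def update(self, hit):
        if hit:
            self.value = min(15, self.value + 1)
        else:
            self.value = max(0, self.value - 2)


p = LoadHitPredictor()
for _ in range(4):
    p.update(False)
print(p.value, p.predict_hit())  # 7 False: four misses flip it to "predict miss"
p.update(True)
print(p.value, p.predict_hit())  # 8 True
```

A miss costs twice what a hit earns. The counter therefore drifts toward "predict hit" only when the hit rate h satisfies h − 2(1 − h) > 0, that is, h > 2/3.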

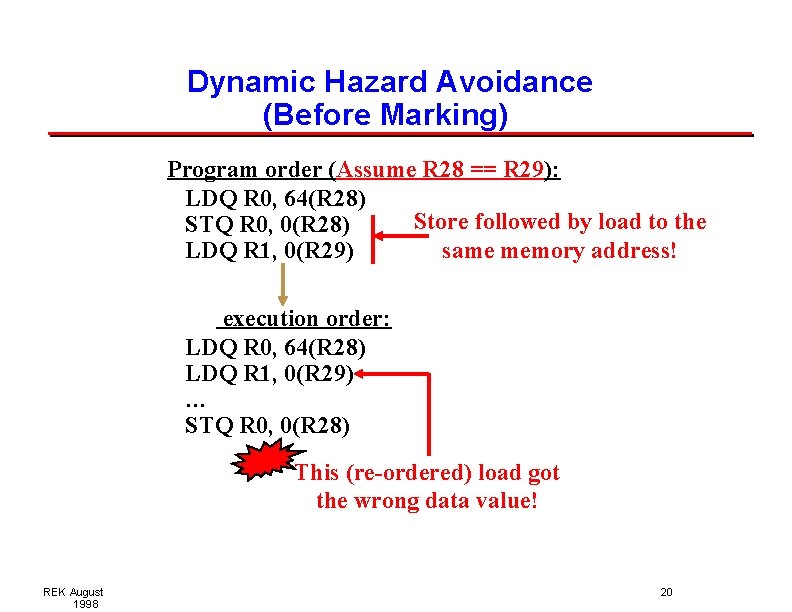

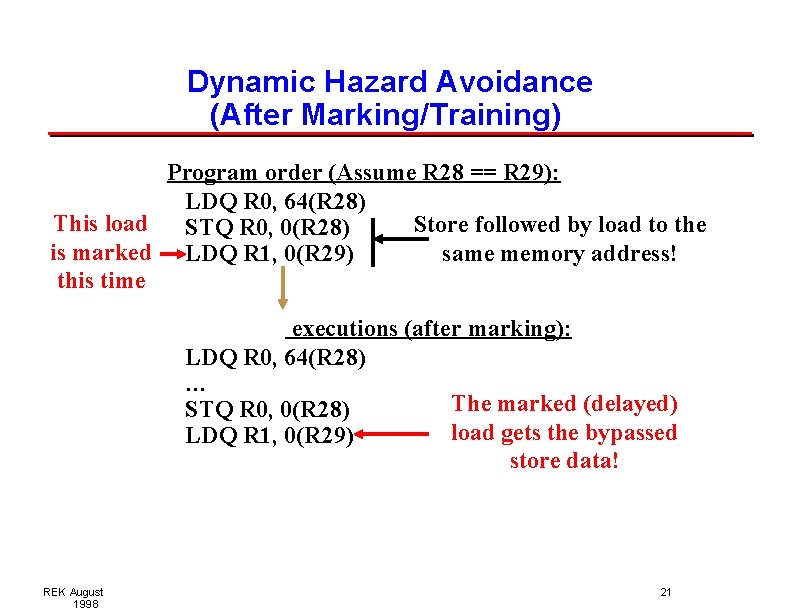

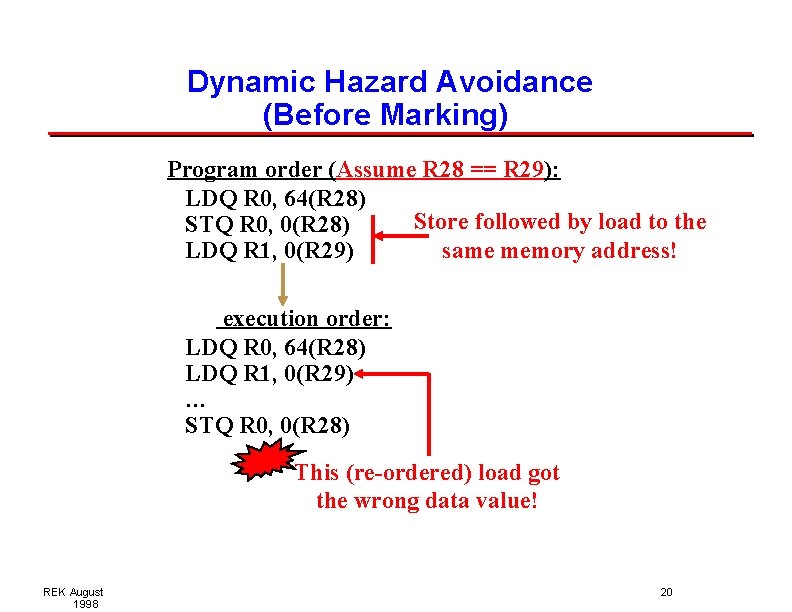

Dynamic Hazard Avoidance (Before Marking)

Program order (assume R28 == R29):

- `LDQ R0, 64(R28)`
- `STQ R0, 0(R28)`
- `LDQ R1, 0(R29)`

The store is followed by a load to the same memory address!

First execution order:

- `LDQ R0, 64(R28)`
- `LDQ R1, 0(R29)`
- …
- `STQ R0, 0(R28)`

This (re-ordered) load got the wrong data value!

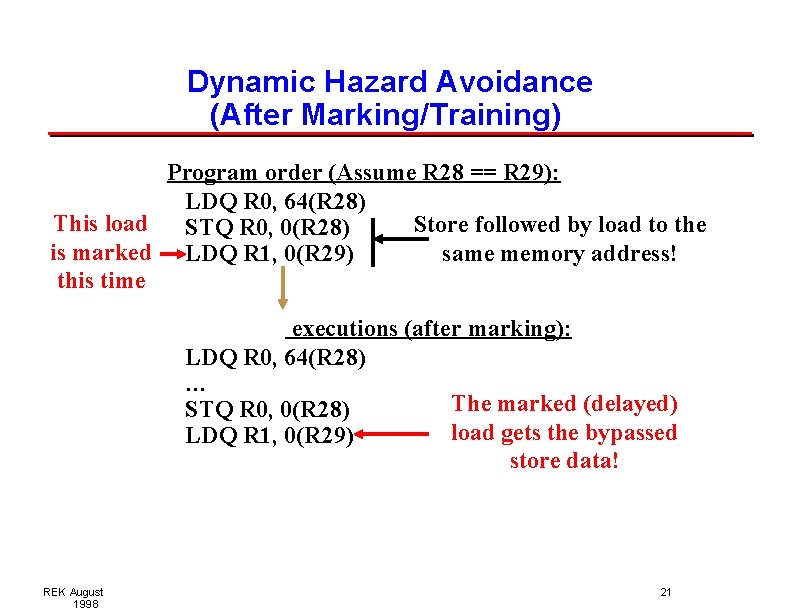

Dynamic Hazard Avoidance (After Marking/Training)

Program order (assume R28 == R29):

- `LDQ R0, 64(R28)`
- `STQ R0, 0(R28)`
- `LDQ R1, 0(R29)` (this load is marked this time)

The store is followed by a load to the same memory address!

Subsequent executions (after marking):

- `LDQ R0, 64(R28)`
- …
- `STQ R0, 0(R28)`
- `LDQ R1, 0(R29)`

The marked (delayed) load gets the bypassed store data!
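The marking scheme shown on these two slides can be sketched as a table of mark bits keyed by load PC. In this Python sketch, `StoreWaitTable`, the 1024-entry size, the PC index function, and the load PC value are hypothetical. The sketch also never clears marks.

```python
class StoreWaitTable:
    """Sketch of load marking for dynamic hazard avoidance.

    Assumptions (not on the slide): one mark bit per entry, indexed by the
    load's PC as (pc >> 2) % size; a marked load waits until every older
    store has issued. This sketch never clears marks.
    """

    def __init__(self, size=1024):
        self.size = size
        self.marked = [False] * size

    def _index(self, pc):
        return (pc >> 2) % self.size

    def may_issue(self, load_pc, older_stores_pending):
        # Unmarked loads issue as soon as they are ready; marked loads
        # additionally wait for all older stores.
        return not (self.marked[self._index(load_pc)] and older_stores_pending)

    def report_violation(self, load_pc):
        # A store found that a younger load to its address already executed:
        # the load is re-executed (not modeled) and marked for next time.
        self.marked[self._index(load_pc)] = True


swt = StoreWaitTable()
LOAD_PC = 0x2008  # hypothetical PC of "LDQ R1, 0(R29)"
print(swt.may_issue(LOAD_PC, older_stores_pending=True))   # True: first run issues early
swt.report_violation(LOAD_PC)                               # it read stale data
print(swt.may_issue(LOAD_PC, older_stores_pending=True))   # False: now it waits
print(swt.may_issue(LOAD_PC, older_stores_pending=False))  # True: store done, gets bypass
```

Only loads that have actually caused a violation are delayed. All other loads keep issuing ahead of older stores.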

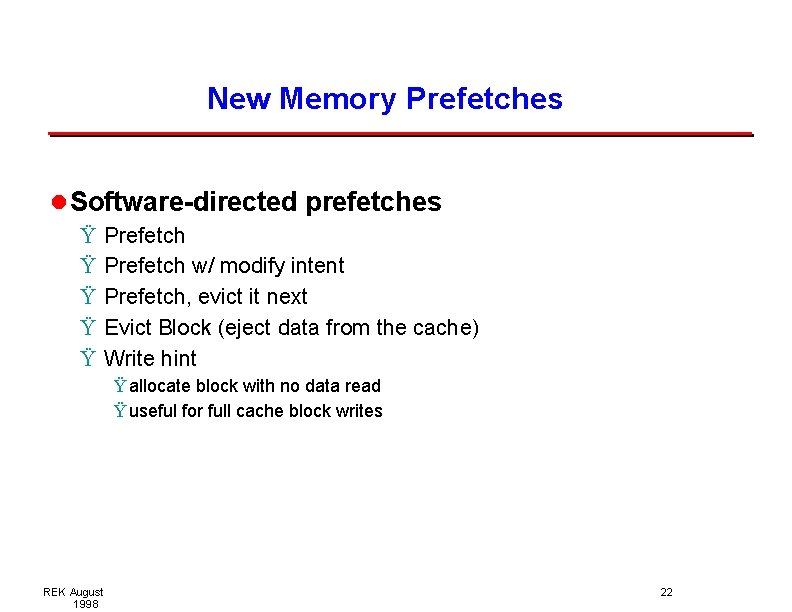

New Memory Prefetches

- Software-directed prefetches
  - Prefetch with modify intent
  - Prefetch, evict it next
  - Evict block (eject data from the cache)
- Write hint
  - Allocate block with no data read
  - Useful for full cache-block writes

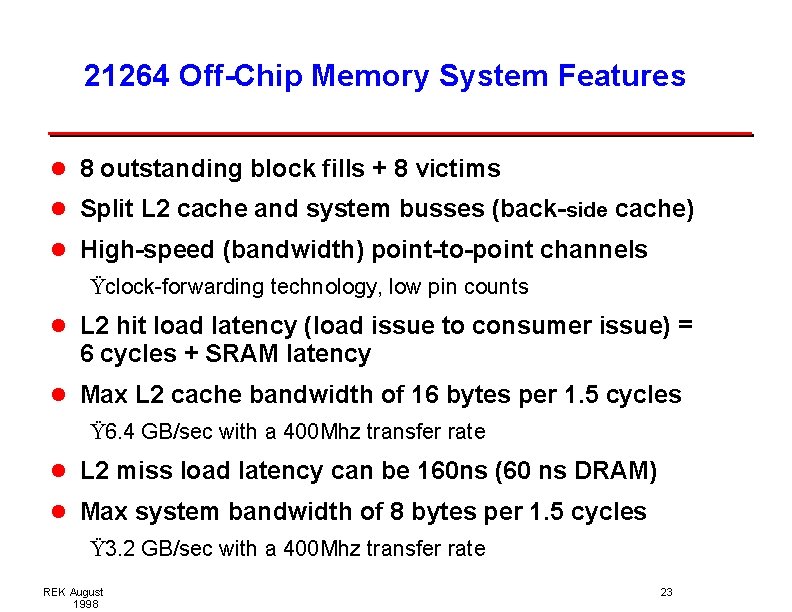

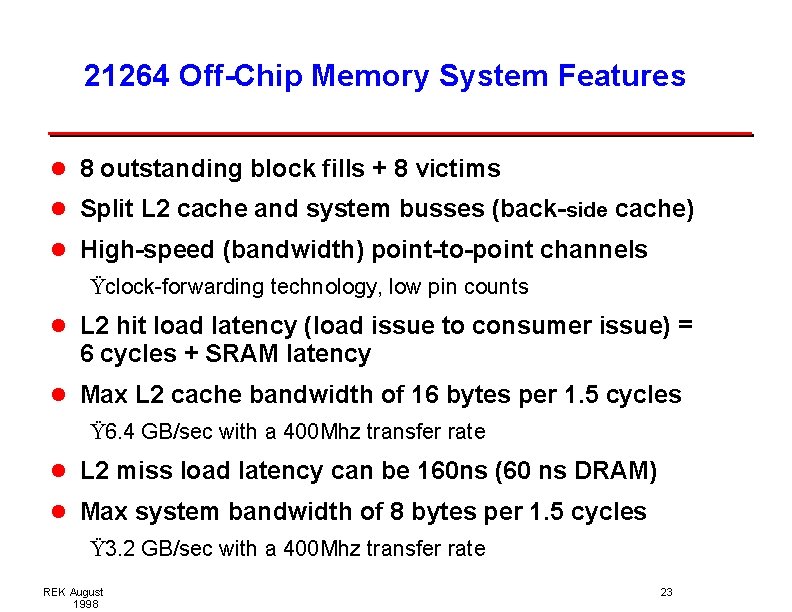

21264 Off-Chip Memory System Features

- 8 outstanding block fills + 8 victims
- Split L2 cache and system buses (back-side cache)
- High-speed (high-bandwidth) point-to-point channels
  - Clock-forwarding technology, low pin counts
- L2 hit load latency (load issue to consumer issue) = 6 cycles + SRAM latency
- Max L2 cache bandwidth of 16 bytes per 1.5 cycles
  - 6.4 GB/sec with a 400 MHz transfer rate
- L2 miss load latency can be 160 ns (60 ns DRAM)
- Max system bandwidth of 8 bytes per 1.5 cycles
  - 3.2 GB/sec with a 400 MHz transfer rate
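The two bandwidth figures follow from the transfer widths and the 400 MHz transfer rate. Assuming the 600 MHz core clock, one transfer every 1.5 core cycles is exactly that rate. GB here means 10^9 bytes.

```latex
\begin{aligned}
f_{\text{transfer}} &= \frac{600\ \text{MHz}}{1.5} = 400\ \text{MHz} \\
B_{\text{L2}} &= 16\ \text{bytes} \times 400 \times 10^{6}\ \text{s}^{-1} = 6.4\ \text{GB/sec} \\
B_{\text{system}} &= 8\ \text{bytes} \times 400 \times 10^{6}\ \text{s}^{-1} = 3.2\ \text{GB/sec}
\end{aligned}
```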

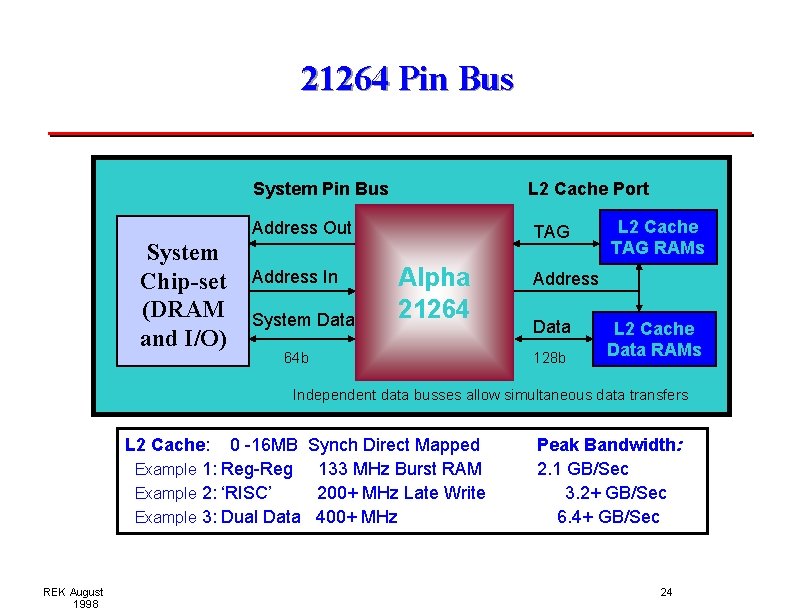

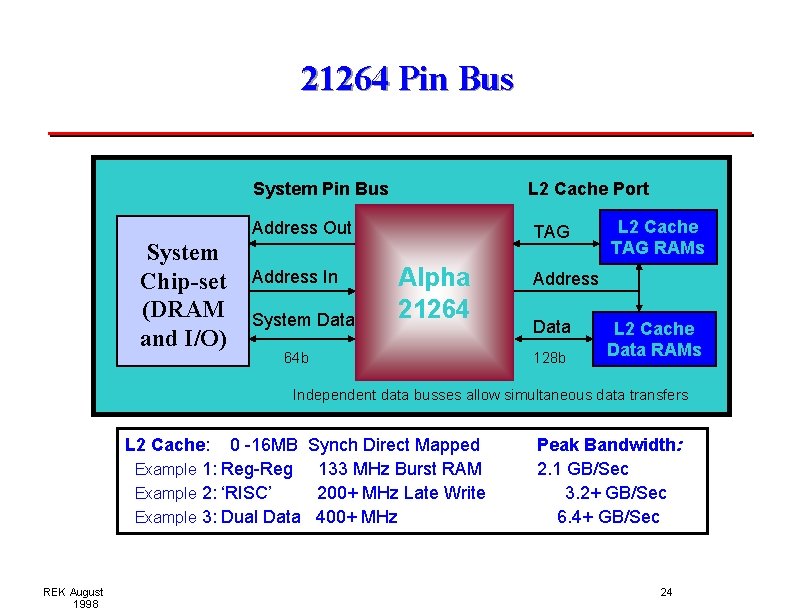

21264 Pin Bus

Diagram: the Alpha 21264 connects to the system chipset (DRAM and I/O) over the system pin bus (address out, address in, 64-bit system data). Through a separate L2 cache port, it connects to the L2 cache tag RAMs and to the L2 cache data RAMs (128-bit data). Independent data buses allow simultaneous data transfers.

L2 cache: 0-16 MB, synchronous, direct mapped.

| Example | L2 cache RAM | Peak bandwidth |
|---|---|---|
| 1: Reg-Reg | 133 MHz burst RAM | 2.1 GB/sec |
| 2: "RISC" | 200+ MHz late write | 3.2+ GB/sec |
| 3: Dual Data | 400+ MHz | 6.4+ GB/sec |

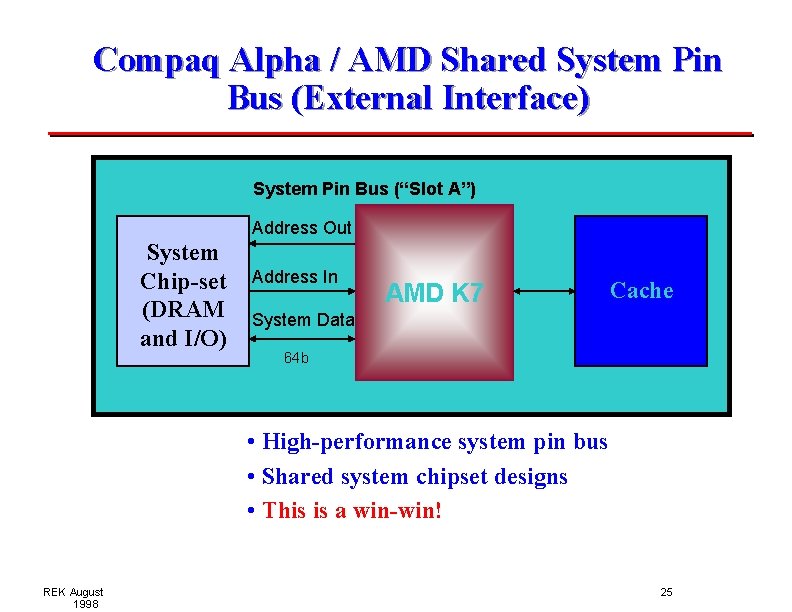

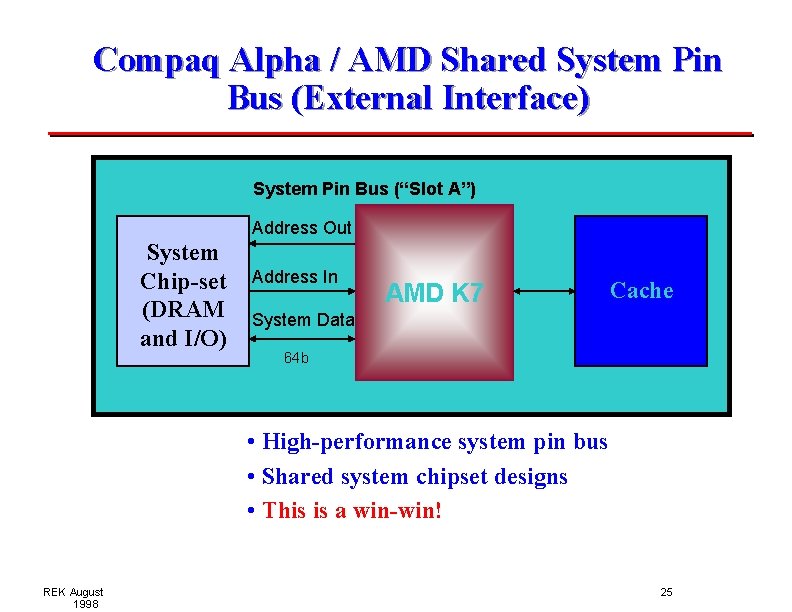

Compaq Alpha / AMD Shared System Pin Bus (External Interface)

Diagram: the AMD K7 (with its cache) connects to the system chipset (DRAM and I/O) over the shared system pin bus ("Slot A": address out, address in, 64-bit system data).

- High-performance system pin bus
- Shared system chipset designs
- This is a win-win!

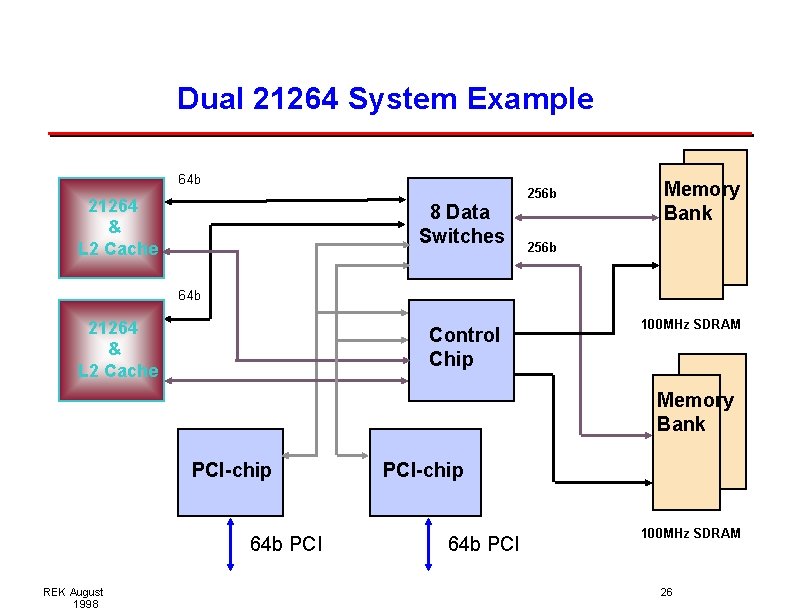

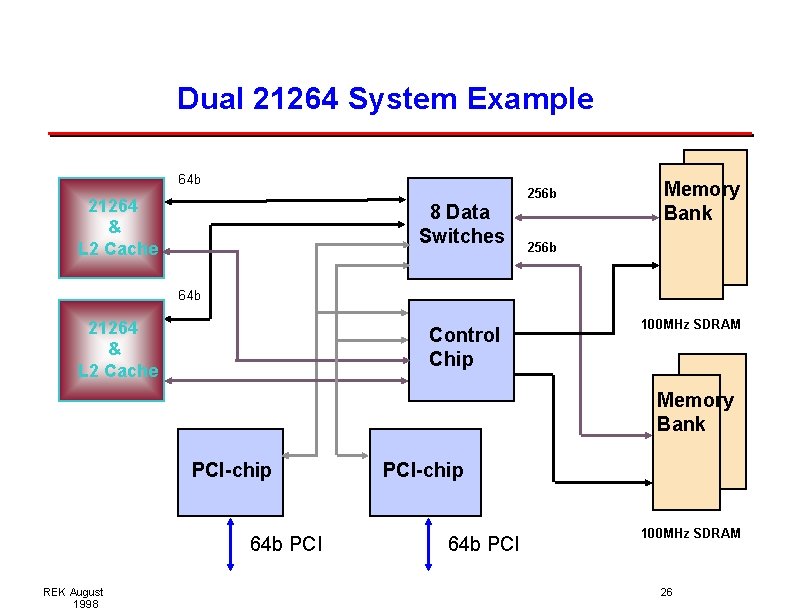

Dual 21264 System Example

Diagram: two 21264 + L2 cache modules, each with a 64-bit data bus, connect through 8 data switches to two 256-bit memory banks of 100 MHz SDRAM. The system also includes a control chip and two PCI chips, each providing a 64-bit PCI bus.

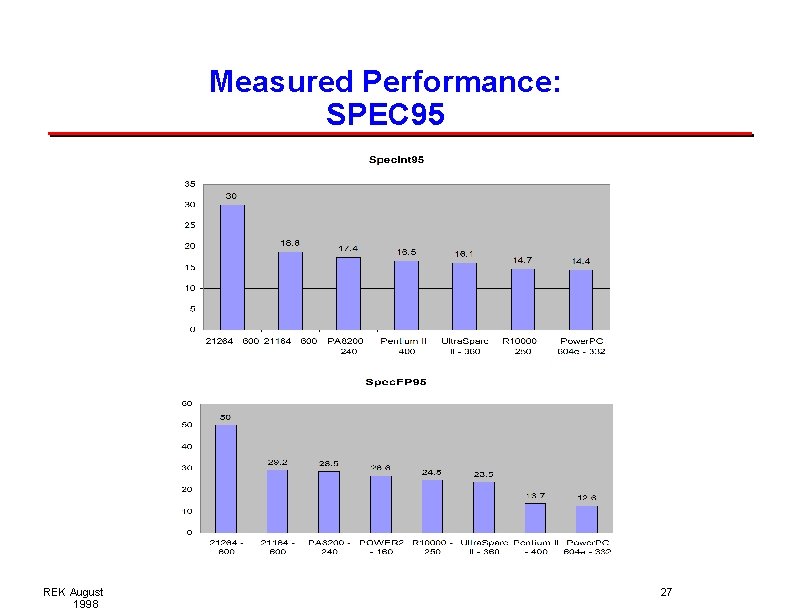

Measured Performance: SPEC95

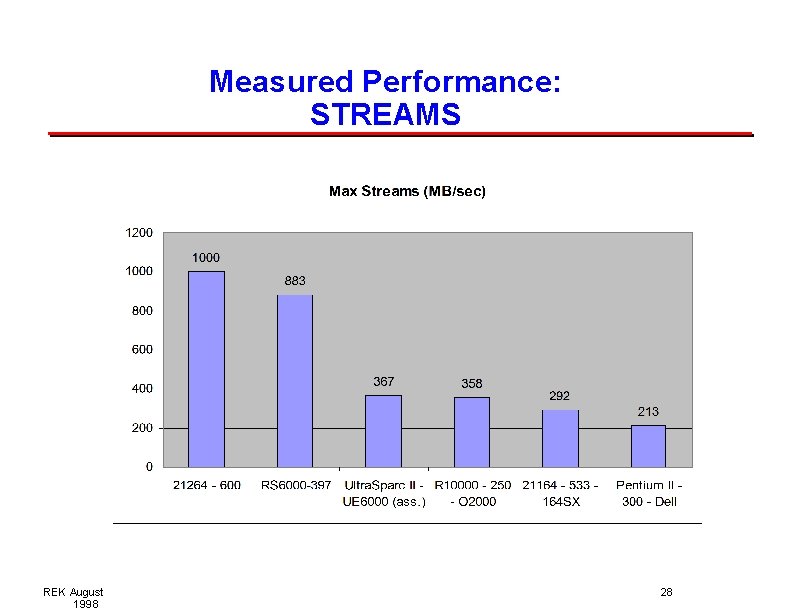

Measured Performance: STREAMS

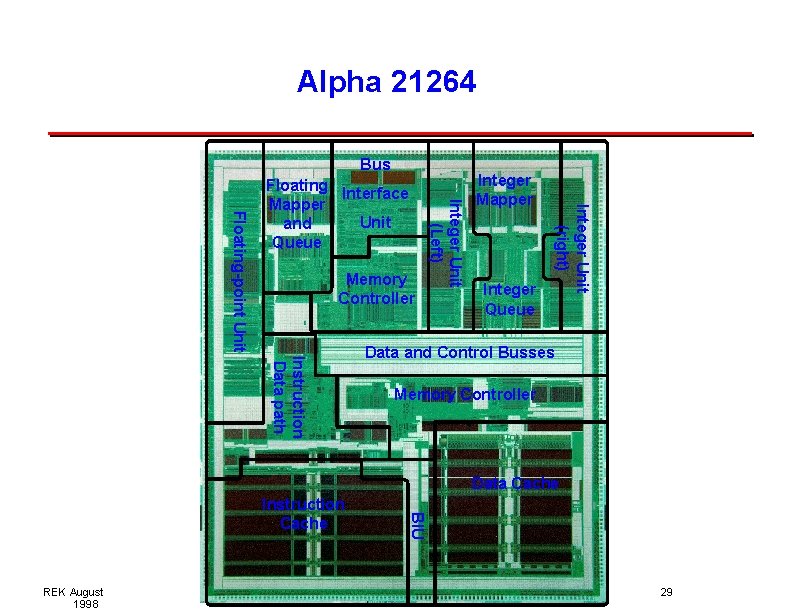

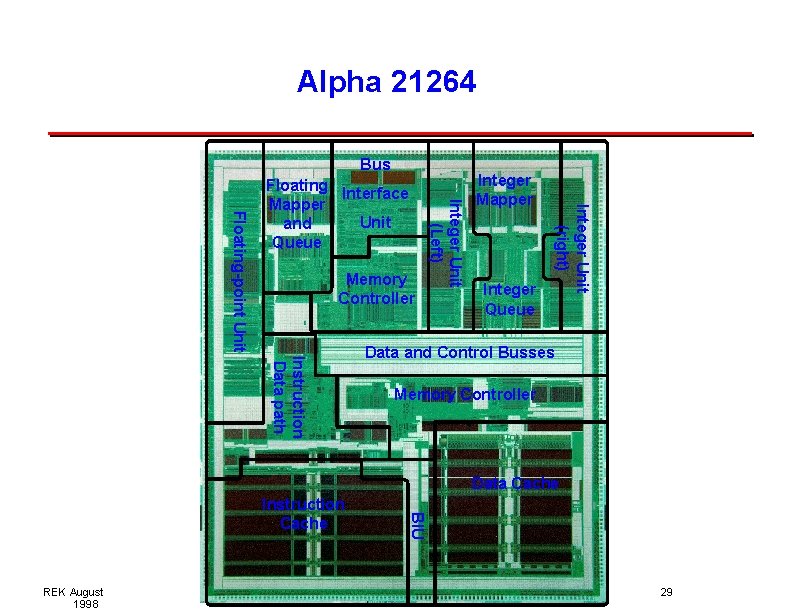

Alpha 21264 chip layout, labeled regions: Bus Interface Unit (BIU), Integer Mapper, Integer Queue, Integer Unit (Left), Integer Unit (Right), Floating-Point Unit, Floating-Point Mapper and Queue, Memory Controller, Data and Control Busses, Instruction Data path, Instruction Cache, Data Cache

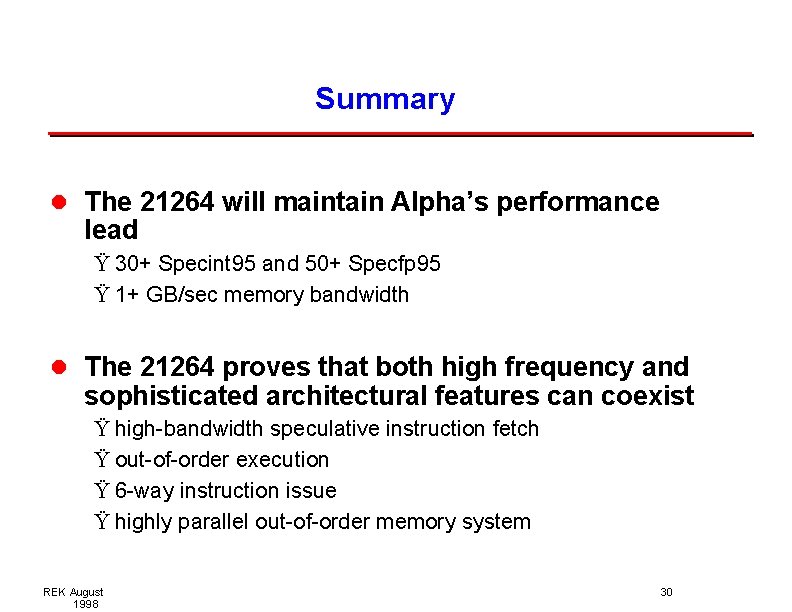

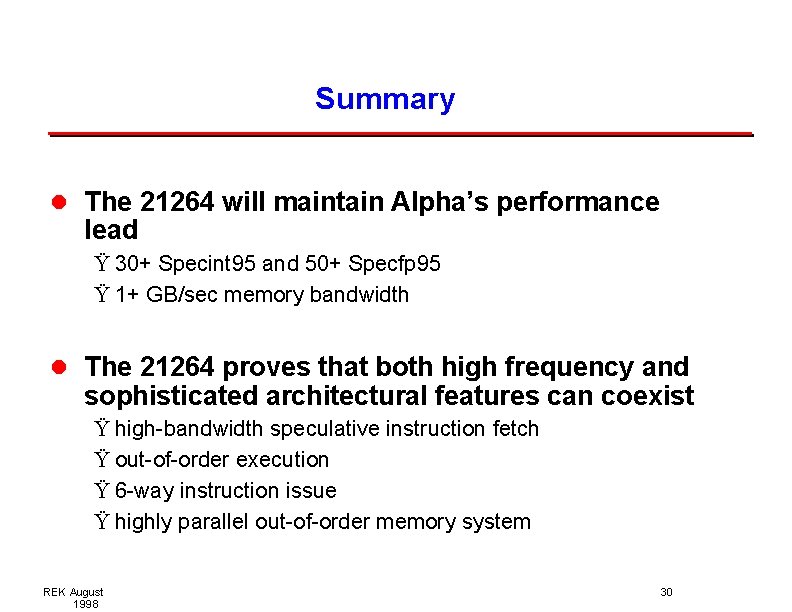

Summary

- The 21264 will maintain Alpha's performance lead
  - 30+ SPECint95 and 50+ SPECfp95
  - 1+ GB/sec memory bandwidth
- The 21264 proves that high frequency and sophisticated architectural features can coexist
  - High-bandwidth speculative instruction fetch
  - Out-of-order execution
  - 6-way instruction issue
  - Highly parallel out-of-order memory system