
Text Preprocessing

Steps in text preprocessing:

• Obtaining text
• Cleaning up the text
• Sentence segmentation
• Tokenization
• Grammatical tagging
• Then comes processing: entity extraction, frequency counting, etc.

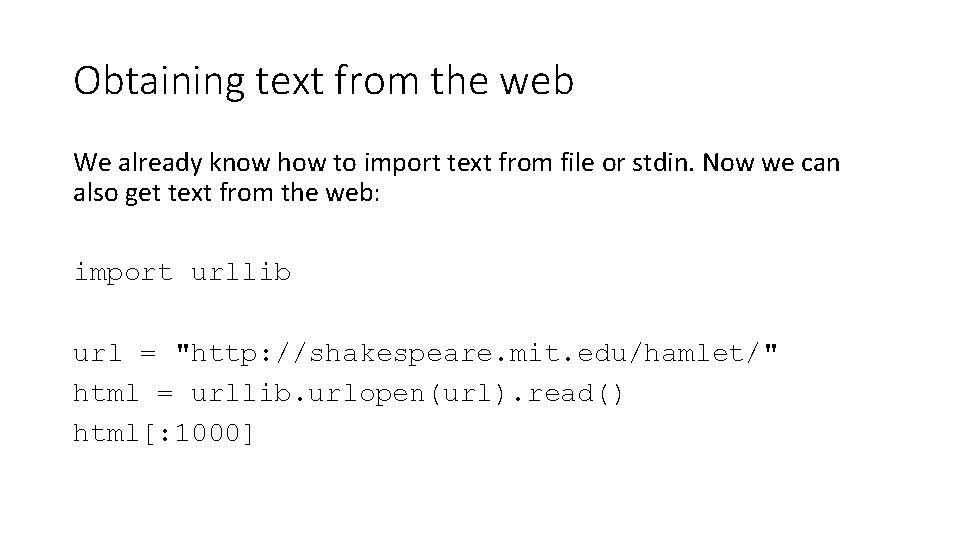

Obtaining text from the web

We already know how to import text from a file or stdin. Now we can also get text from the web:

    import urllib.request  # Python 3; in Python 2 the call was urllib.urlopen
    url = "http://shakespeare.mit.edu/hamlet/"
    html = urllib.request.urlopen(url).read()
    html[:1000]
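In Python 3, `urlopen` lives in `urllib.request` and `read()` returns bytes rather than a string, so the page usually needs decoding before further processing. The sketch below shows one way to wrap this up. The helper name `fetch_html`, the timeout, and the fallback encoding are illustrative assumptions, not part of the original slides.

```python
import urllib.request


def fetch_html(url, timeout=10, fallback_encoding="utf-8"):
    """Download a page and return its contents as a str (hypothetical helper)."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        raw = response.read()  # bytes, not str, in Python 3
        # Use the charset declared in the response headers, if there is one.
        charset = response.headers.get_content_charset() or fallback_encoding
    # Replace undecodable bytes rather than failing on a stray character.
    return raw.decode(charset, errors="replace")


# Example (needs network access):
# html = fetch_html("http://shakespeare.mit.edu/hamlet/")
# print(html[:1000])
```

Returning a decoded string keeps later steps such as tokenization from having to deal with bytes.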

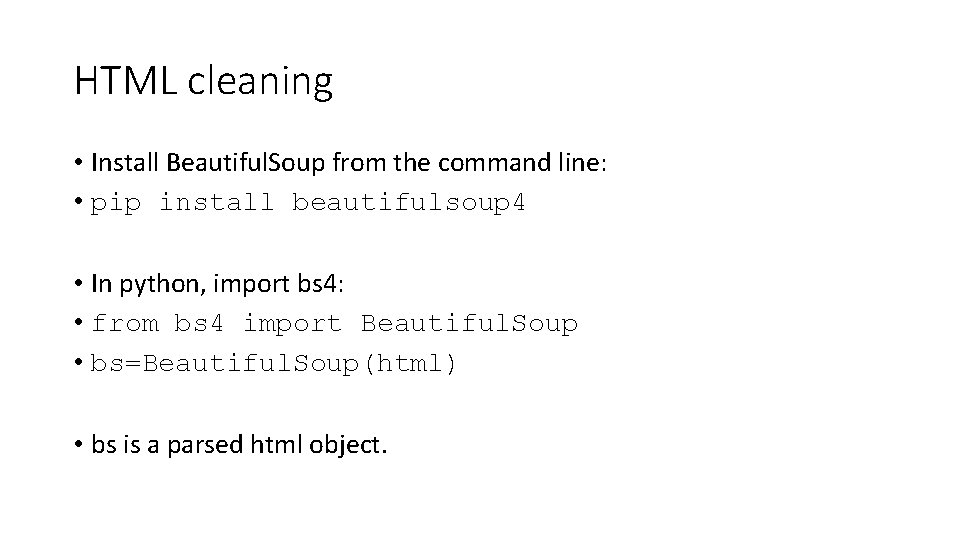

HTML cleaning

• Install BeautifulSoup from the command line:
  • pip install beautifulsoup4
• In Python, import it from the bs4 package and parse the page:
  • from bs4 import BeautifulSoup
  • bs = BeautifulSoup(html, "html.parser")
• bs is a parsed HTML object.

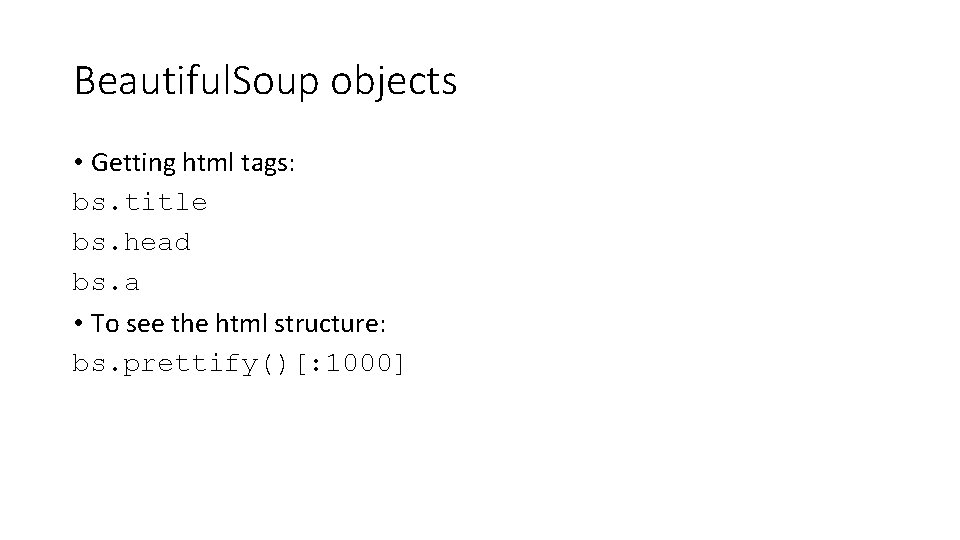

BeautifulSoup objects

• Getting HTML tags: bs.title, bs.head, bs.a
• To see the HTML structure: bs.prettify()[:1000]

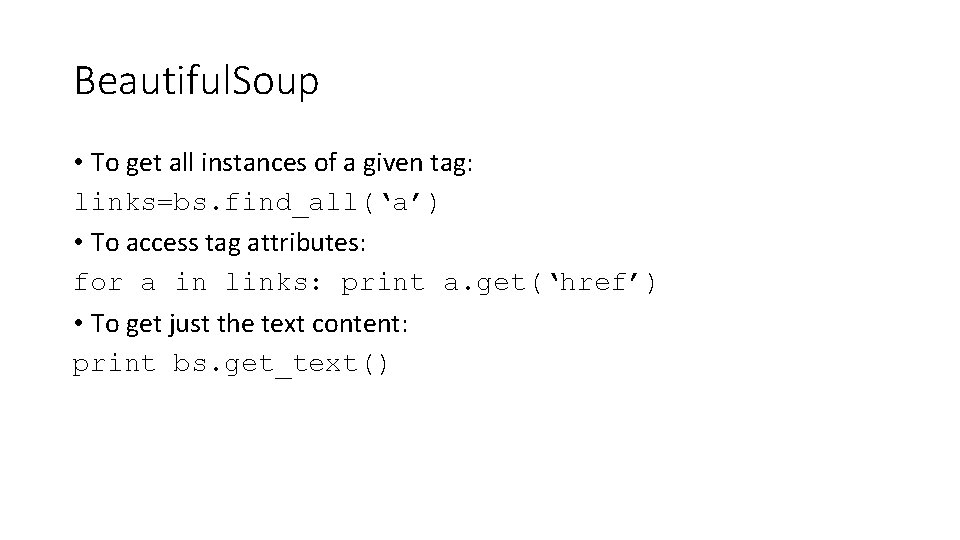

BeautifulSoup

• To get all instances of a given tag: links = bs.find_all('a')
• To access tag attributes: for a in links: print(a.get('href'))
• To get just the text content: print(bs.get_text())
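The calls above can be tried without network access on a small inline page. The following is a minimal sketch assuming Python 3 with `beautifulsoup4` installed. The HTML string is made up for illustration and is not the real site's markup.

```python
from bs4 import BeautifulSoup

# A tiny stand-in page (invented for illustration, not the real site).
html = """
<html><head><title>Demo plays</title></head>
<body>
<p>Plays: <a href="hamlet/index.html">Hamlet</a>
and <a href="macbeth/index.html">Macbeth</a></p>
</body></html>
"""

# Naming the parser explicitly avoids a warning about parser guessing.
bs = BeautifulSoup(html, "html.parser")

# Text inside the <title> tag.
print(bs.title.string)

# The href attribute of every <a> tag.
hrefs = [a.get("href") for a in bs.find_all("a")]
print(hrefs)

# All text with tags removed; pieces are stripped and joined by single spaces.
text = bs.get_text(" ", strip=True)
print(text)
```

Using `a.get("href")` rather than `a["href"]` returns `None` for anchors without an `href` instead of raising an error, which is convenient when looping over every link on a page.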

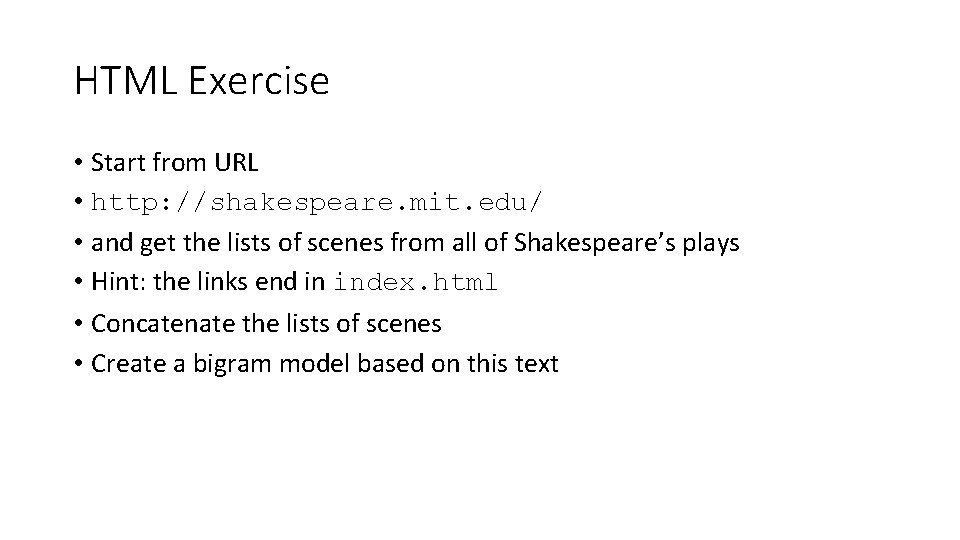

HTML exercise

• Start from the URL http://shakespeare.mit.edu/ and get the lists of scenes from all of Shakespeare’s plays
• Hint: the links end in index.html
• Concatenate the lists of scenes
• Create a bigram model based on this text
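For the last step, one possible shape for a bigram model is sketched below using only the standard library. It is a starting point under stated assumptions, not the intended solution: the function names are hypothetical, and the token list is a made-up stand-in for the concatenated scene lists.

```python
from collections import Counter, defaultdict


def bigram_model(tokens):
    """Map each word to a Counter of the words that follow it."""
    model = defaultdict(Counter)
    for first, second in zip(tokens, tokens[1:]):
        model[first][second] += 1
    return model


def next_word_probability(model, first, second):
    """Maximum-likelihood estimate of P(second | first); 0.0 if first is unseen."""
    total = sum(model[first].values()) if first in model else 0
    return model[first][second] / total if total else 0.0


# Made-up token list standing in for the concatenated scene lists.
tokens = "act 1 scene 1 act 1 scene 2 act 2 scene 1".split()
model = bigram_model(tokens)

# Which words follow "act", and how often?
print(model["act"].most_common())
```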

HTML exercise

• Now collect the full texts of all of Shakespeare’s plays from the website
• Hint: the relevant pages are called full.html
• Save this corpus to a file.

Steps we have already seen

• Word tokenization: nltk.word_tokenize(text)
• A similar function for splitting sentences: nltk.sent_tokenize(text)
• Need to tokenize in a different language? For many languages there are pre-built tokenizers in NLTK:
  • it_tokenizer = 'tokenizers/punkt/italian.pickle'
  • tokenizer = nltk.data.load(it_tokenizer)

Grammatical Tagging

• t = nltk.word_tokenize("To be or not to be")
• tgd = nltk.pos_tag(t)
• Exercise: extract all adjective+noun combinations from the tagged text of the first 1K sentences of Shakespeare’s plays. What are the most frequent ones?
• Caution: NLTK’s POS tagger is very slow!
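`nltk.pos_tag` returns a list of (word, tag) tuples, by default with Penn Treebank tags. Once the text is in that form, the adjective+noun search can be done without calling NLTK again. The sketch below works on a small hand-tagged sample, so it does not need the tagger or its data. The function name and the sample are illustrative assumptions.

```python
from collections import Counter


def adjective_noun_pairs(tagged):
    """Return (adjective, noun) word pairs from a list of (word, tag) tuples."""
    pairs = []
    for (w1, t1), (w2, t2) in zip(tagged, tagged[1:]):
        # Penn Treebank: JJ, JJR, JJS are adjectives; NN, NNS, NNP, NNPS are nouns.
        if t1.startswith("JJ") and t2.startswith("NN"):
            pairs.append((w1.lower(), w2.lower()))
    return pairs


# Hand-tagged sample in the (word, tag) format that nltk.pos_tag returns.
tagged = [("My", "PRP$"), ("good", "JJ"), ("lord", "NN"), (",", ","),
          ("the", "DT"), ("good", "JJ"), ("lord", "NN"), ("and", "CC"),
          ("sweet", "JJ"), ("prince", "NN")]

counts = Counter(adjective_noun_pairs(tagged))
print(counts.most_common(2))
```

Because tagging is the slow part, it may be worth tagging the sentences once, saving the result, and running counts like this on the saved output.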
