Testing on a Large Scale: Running the ATLAS Data Acquisition and High Level Trigger Software on 700 PC Nodes

CHEP 06, 13-17 February 2006, Mumbai, India

ATLAS TDAQ Large Scale Tests 2005 - CHEP 2006 Mumbai India - Doris Burckhart-Chromek, on behalf of the ATLAS DAQ/HLT Community

Content
- Scope and aims of the Large Scale Test 2005 (LST 05)
- Control infrastructure tests
- Component tests
- Sub-system tests
- Experience
- DAQ/HLT integrated tests
- Conclusions

See also: "The ATLAS Data Acquisition and High-Level Trigger: concept, design and status", B. Gorini, 291, track OC.

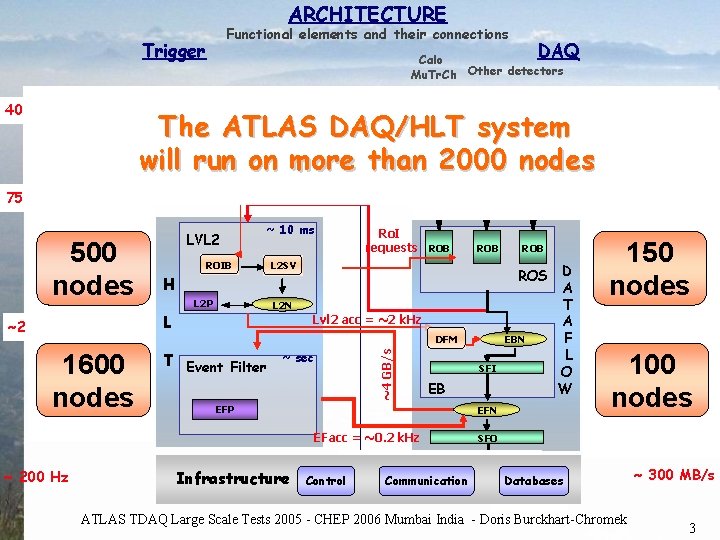

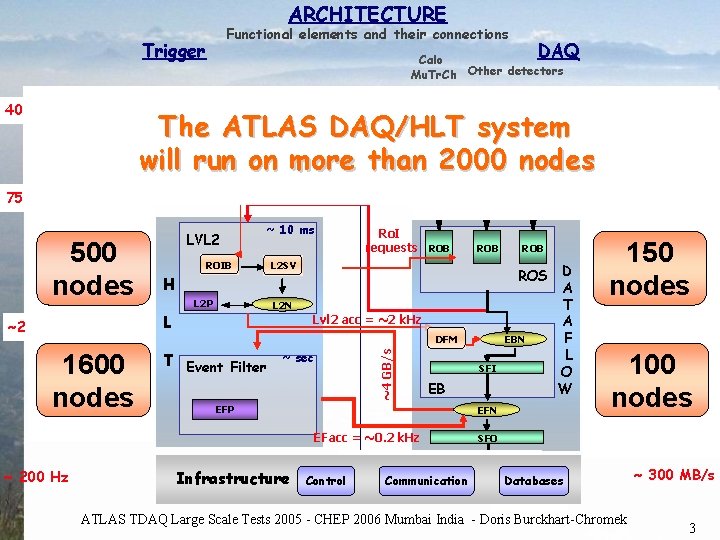

Architecture: functional elements and their connections
The ATLAS DAQ/HLT system will run on more than 2000 nodes.
- LVL1 trigger (calorimeter, muon trigger chambers, other detectors): 40 MHz input, front-end (FE) pipelines, LVL1 latency 2.5 µs; LVL1 accept rate 75 kHz.
- Readout: Read-Out Drivers (ROD) -> Read-Out Links -> Read-Out Buffers (ROB) in the Read-Out Sub-systems (ROS, 150 nodes); 120 GB/s.
- LVL2: RoI Builder (ROIB), L2 Supervisor (L2SV), L2 network (L2N) and L2 Processing Units (L2P), 500 nodes; ~10 ms per event; the Region of Interest (RoI) data are 1-2% of the event data; LVL2 accept rate ~2 kHz.
- Event Building: Dataflow Manager (DFM), Event Building network (EBN) and Sub-Farm Input (SFI), 100 nodes; ~4 GB/s. The ROS deliver ~2+4 GB/s in total (LVL2 RoI requests plus event building).
- Event Filter: Event Filter network (EFN) and Event Filter processors (EFP), 1600 nodes; ~1 s per event; EF accept rate ~0.2 kHz (~200 Hz); Sub-Farm Output (SFO) at ~300 MB/s.
- Infrastructure: control, communication, databases.
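The nominal rates in the diagram fix the rejection each trigger level must provide and, together with the quoted bandwidths, the average event size. The short Python check below uses only the slide's figures (the "~" values taken at face value); the dictionary and function names are illustrative and not part of the TDAQ software.

```python
# Nominal rates from the architecture slide, in Hz.
RATES_HZ = {
    "bunch crossing": 40_000_000,
    "LVL1 accept": 75_000,
    "LVL2 accept": 2_000,
    "EF accept": 200,
}

def rejection_factors(rates: dict[str, int]) -> dict[str, float]:
    """Rejection of each level = its input rate / its output rate."""
    names = list(rates)
    return {
        names[i]: rates[names[i - 1]] / rates[names[i]]
        for i in range(1, len(names))
    }

def mean_event_size_mb(bandwidth_mb_s: float, rate_hz: float) -> float:
    """Average event size implied by a bandwidth at a given event rate."""
    return bandwidth_mb_s / rate_hz

factors = rejection_factors(RATES_HZ)
overall = RATES_HZ["bunch crossing"] / RATES_HZ["EF accept"]
for level, factor in factors.items():
    print(f"{level:12s} rejection ~ {factor:7.1f}")
print(f"overall rejection ~ {overall:,.0f}")
print(f"event size at SFO  ~ {mean_event_size_mb(300, 200):.2f} MB")       # 300 MB/s at 200 Hz
print(f"event size into ROB ~ {mean_event_size_mb(120_000, 75_000):.2f} MB")  # 120 GB/s at 75 kHz
```

With these numbers LVL1 rejects about 530:1, LVL2 37.5:1 and the EF 10:1, an overall 200 000:1, and both bandwidth figures point to events of roughly 1.5 to 1.6 MB.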

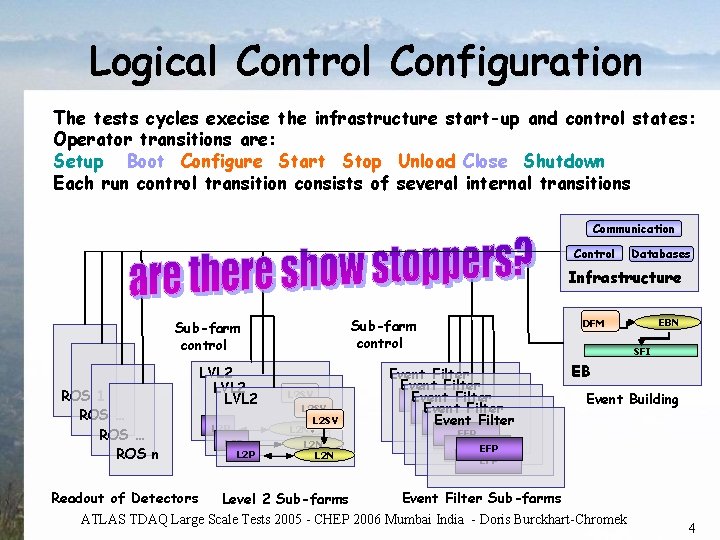

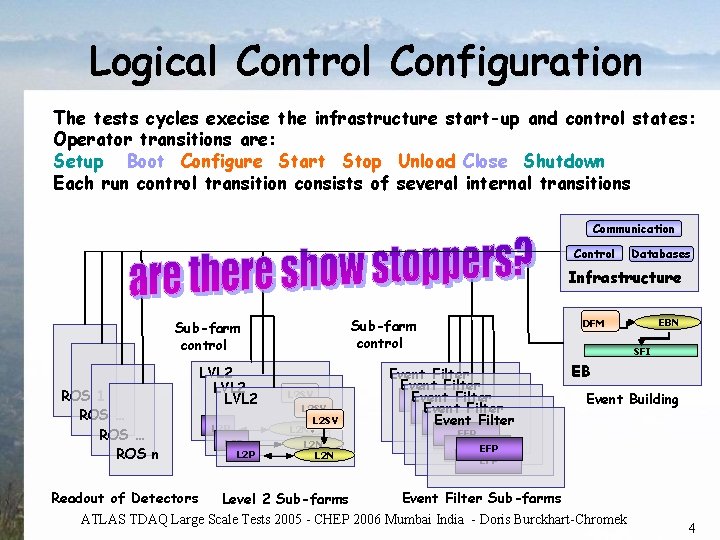

Logical Control Configuration
The test cycles exercise the infrastructure start-up and the control states.
- Operator transitions: Setup, Boot, Configure, Start, Stop, Unload, Close, Shutdown.
- Each run control transition consists of several internal transitions.
(Diagram: control tree with the infrastructure (communication, control, databases) and sub-farm control for the readout of detectors (ROS 1 … ROS n), the Level 2 sub-farms (L2P, L2SV, L2N), event building (EBN, DFM, SFI) and the Event Filter sub-farms (EFP).)
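A minimal sketch of the operator-level state machine exercised by the test cycles. The slide names only the transitions; the state names and the pairing of each transition with its inverse (Setup/Shutdown, Boot/Close, Configure/Unload, Start/Stop) are assumptions made for illustration, and the internal sub-transitions are only marked by a comment.

```python
from enum import Enum

class State(Enum):
    # Hypothetical state names: the slide lists only the operator transitions.
    NONE = 0
    INFRASTRUCTURE_UP = 1
    BOOTED = 2
    CONFIGURED = 3
    RUNNING = 4

# Assumed pairing: Setup/Shutdown, Boot/Close, Configure/Unload, Start/Stop.
# Each command maps to (required state, resulting state).
TRANSITIONS = {
    "Setup": (State.NONE, State.INFRASTRUCTURE_UP),
    "Boot": (State.INFRASTRUCTURE_UP, State.BOOTED),
    "Configure": (State.BOOTED, State.CONFIGURED),
    "Start": (State.CONFIGURED, State.RUNNING),
    "Stop": (State.RUNNING, State.CONFIGURED),
    "Unload": (State.CONFIGURED, State.BOOTED),
    "Close": (State.BOOTED, State.INFRASTRUCTURE_UP),
    "Shutdown": (State.INFRASTRUCTURE_UP, State.NONE),
}

# One test cycle, in the order the slide lists the transitions.
TEST_CYCLE = ["Setup", "Boot", "Configure", "Start", "Stop", "Unload", "Close", "Shutdown"]

class RunControl:
    def __init__(self) -> None:
        self.state = State.NONE
        self.history: list[str] = []

    def apply(self, command: str) -> None:
        src, dst = TRANSITIONS[command]
        if self.state is not src:
            raise RuntimeError(f"{command} not allowed in state {self.state.name}")
        # A real controller would run several internal sub-transitions here.
        self.state = dst
        self.history.append(command)

def run_test_cycles(rc: RunControl, cycles: int) -> State:
    """Repeat the full operator cycle; a correct machine ends where it began."""
    for _ in range(cycles):
        for command in TEST_CYCLE:
            rc.apply(command)
    return rc.state

rc = RunControl()
print(run_test_cycles(rc, cycles=3).name)
```

Rejecting out-of-order commands is what lets an automated 24/7 test loop detect a controller that did not reach the expected state.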

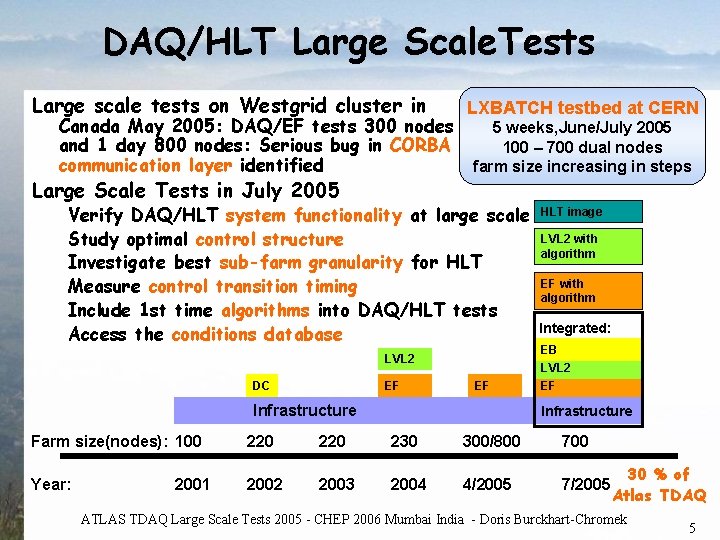

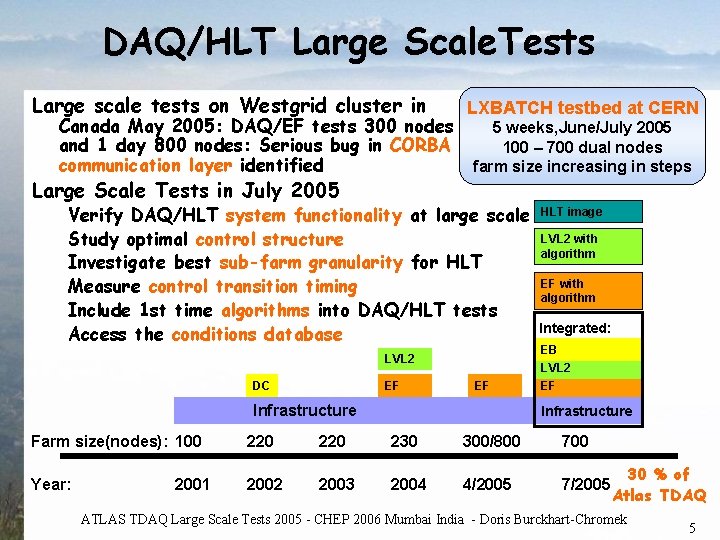

DAQ/HLT Large Scale Tests
- Large scale tests on the WestGrid cluster in Canada, May 2005: DAQ/EF tests on 300 nodes, and 1 day on 800 nodes; a serious bug in the CORBA communication layer was identified.
- Large scale tests on the LXBATCH testbed at CERN: 5 weeks in June/July 2005, 100 to 700 dual nodes, farm size increasing in steps.

Aims of the Large Scale Tests in July 2005:
- Verify DAQ/HLT system functionality at large scale
- Study the optimal control structure
- Investigate the best sub-farm granularity for the HLT
- Measure control transition timing
- Include algorithms in the DAQ/HLT tests for the first time
- Access the conditions database

(Chart: scope of the large scale tests since 2001, from LVL2, DC and EF tests to infrastructure, HLT image, LVL2 and EF with algorithms, and integrated EB/LVL2/EF tests; farm sizes of 100, 220, 230, 300/800 (4/2005) and 700 (7/2005) nodes; the 2005 test reached about 30% of ATLAS TDAQ.)

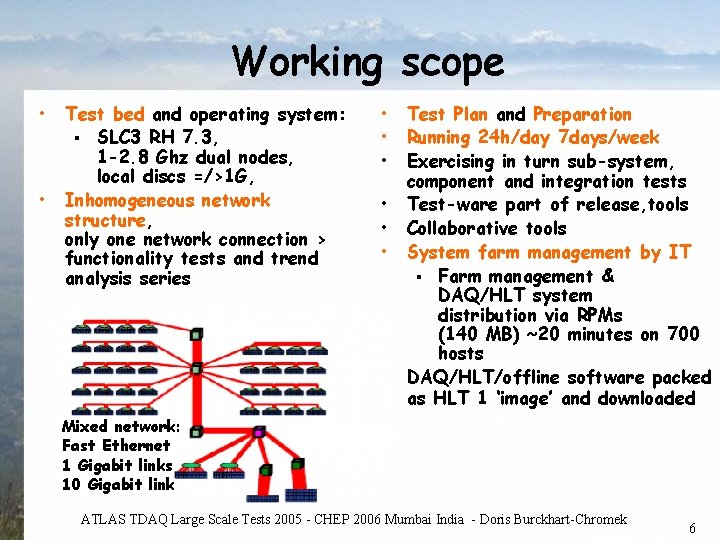

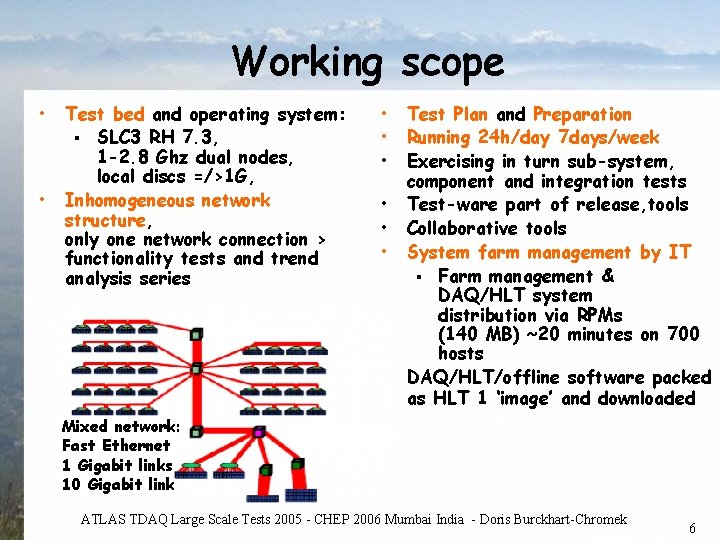

Working scope
- Test bed and operating system: SLC3 / RH 7.3, 1 to 2.8 GHz dual nodes, local discs of at least 1 GB. Inhomogeneous network structure with only one network connection per node -> functionality tests and trend analysis series. Mixed network: Fast Ethernet, 1 Gigabit links, 10 Gigabit link.
- Test plan and preparation: running 24 h/day, 7 days/week; exercising in turn sub-system, component and integration tests; test-ware part of the release; tools; collaborative tools.
- System farm management by IT: farm management and DAQ/HLT system distribution via RPMs (140 MB) took ~20 minutes on 700 hosts. The DAQ/HLT/offline software was packed as an HLT 'image' and downloaded.
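To put the RPM distribution figure in perspective, the aggregate throughput can be estimated, assuming every one of the 700 hosts received the full 140 MB and that ~20 minutes is the total wall-clock time. The function name is illustrative.

```python
def aggregate_rate_mb_s(package_mb: float, hosts: int, minutes: float) -> float:
    """Average total throughput when every host receives the full package."""
    return package_mb * hosts / (minutes * 60)

# Figures from the slide: 140 MB of RPMs to 700 hosts in ~20 minutes.
rpm_rate = aggregate_rate_mb_s(package_mb=140, hosts=700, minutes=20)
print(f"RPM distribution: ~{rpm_rate:.0f} MB/s aggregate, "
      f"{140 * 700 / 1000:.0f} GB in total")
```

That is about 98 GB delivered at an average of roughly 82 MB/s.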

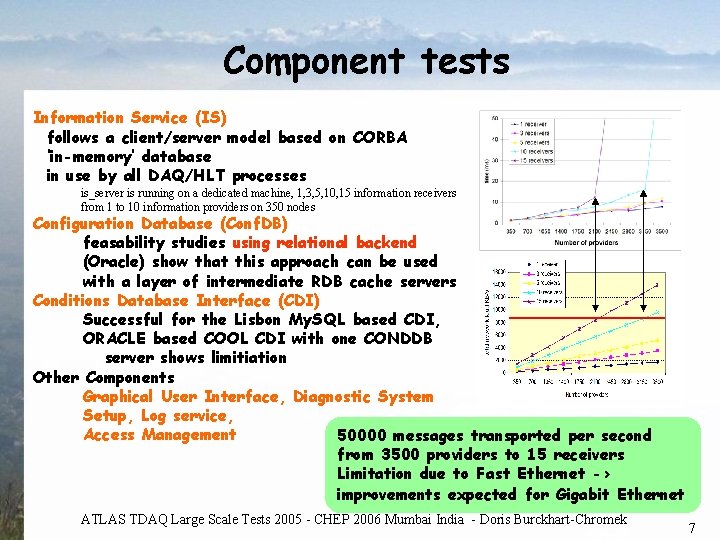

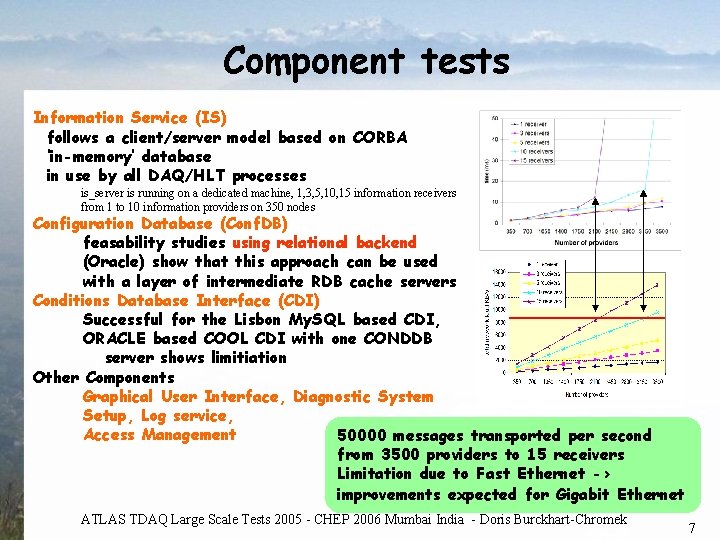

Component tests
- Information Service (IS): follows a client/server model based on CORBA; an 'in-memory' database in use by all DAQ/HLT processes. Test setup: is_server running on a dedicated machine, with 1, 3, 5, 10 and 15 information receivers and 1 to 10 information providers per node on 350 nodes. Result: 50000 messages transported per second from 3500 providers to 15 receivers; limitation due to Fast Ethernet -> improvements expected with Gigabit Ethernet.
- Configuration Database (ConfDB): feasibility studies using a relational back end (Oracle) show that this approach can be used with a layer of intermediate RDB cache servers.
- Conditions Database Interface (CDI): successful for the Lisbon MySQL-based CDI; the Oracle-based COOL CDI with one CONDDB server shows limitations.
- Other components: Graphical User Interface, Diagnostic System, Setup, Log service, Access Management.
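The Information Service pattern, providers publishing named values into an in-memory server that notifies subscribed receivers, can be mimicked in plain Python without CORBA or networking. The class `InfoServer` and its `publish`/`subscribe`/`get` methods are hypothetical and do not reproduce the real IS API.

```python
from collections import defaultdict
from typing import Any, Callable

Callback = Callable[[str, Any], None]

class InfoServer:
    """Hypothetical in-memory information server (not the real IS API)."""

    def __init__(self) -> None:
        self._values: dict[str, Any] = {}
        self._subscribers: dict[str, list[Callback]] = defaultdict(list)

    def subscribe(self, prefix: str, callback: Callback) -> None:
        """Register a receiver for every object whose name starts with prefix."""
        self._subscribers[prefix].append(callback)

    def publish(self, name: str, value: Any) -> None:
        """Store the latest value and notify every matching receiver."""
        self._values[name] = value
        for prefix, callbacks in list(self._subscribers.items()):
            if name.startswith(prefix):
                for callback in callbacks:
                    callback(name, value)

    def get(self, name: str) -> Any:
        return self._values[name]

# Usage: 15 receivers, 10 providers each publishing one update.
server = InfoServer()
received: list[tuple[int, str]] = []
for r in range(15):
    server.subscribe("DF.", lambda name, value, r=r: received.append((r, name)))
for p in range(10):
    server.publish(f"DF.provider{p}.rate", p)
print(len(received), "notifications delivered")
```

Each update is fanned out to every matching receiver, so the number of delivered notifications is the number of updates times the number of receivers.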

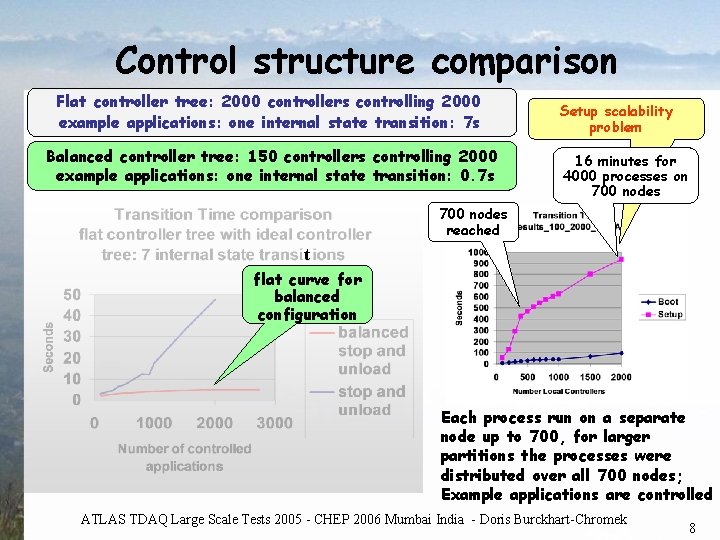

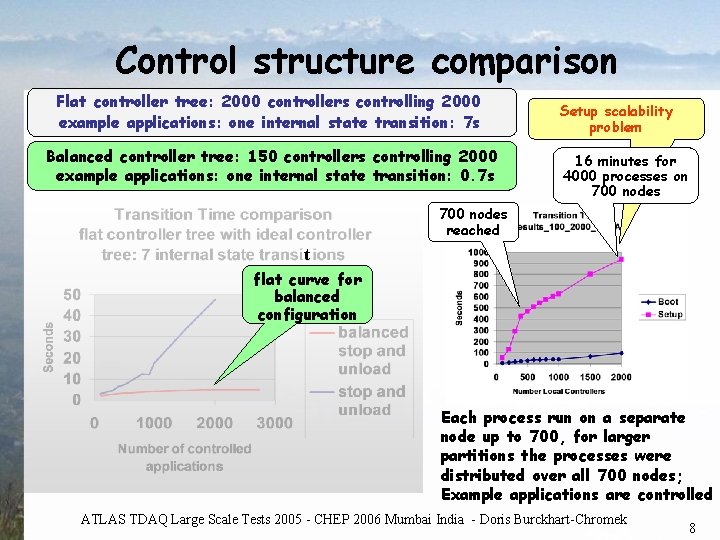

Control structure comparison
- Flat controller tree: 2000 controllers controlling 2000 example applications; one internal state transition takes 7 s.
- Balanced controller tree: 150 controllers controlling 2000 example applications; one internal state transition takes 0.7 s. A flat curve was reached for the balanced configuration.
- Setup scalability problem: 16 minutes for 4000 processes on 700 nodes.

Each process ran on a separate node up to 700 processes; for larger partitions the processes were distributed over all 700 nodes. The controlled processes are example applications.
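Why a balanced tree helps can be seen with a simple model, which is an assumption and not a description of the run control implementation: each controller sends a transition command to its children one after another, while controllers at the same level work in parallel. The critical path is then the sum, over levels, of the largest number of children per controller. The fanout of 14 below is hypothetical; it happens to give about 155 controllers for 2000 applications, close to the 150 of the balanced tree.

```python
import math

def control_tree(n_apps: int, fanouts: list[int]) -> tuple[int, int]:
    """Return (sequential commands on the critical path, number of controllers).

    fanouts[i] is the maximum number of children per controller at level i,
    leaf level first; the last level must collapse to a single root.
    Model assumption: a controller commands its children serially, while
    controllers at the same level work in parallel.
    """
    remaining = n_apps  # nodes to be controlled at the current level
    critical_path = 0
    controllers = 0
    for fanout in fanouts:
        critical_path += min(fanout, remaining)     # busiest controller's serial work
        remaining = math.ceil(remaining / fanout)   # controllers needed at this level
        controllers += remaining
    if remaining != 1:
        raise ValueError("fanouts must end in a single root controller")
    return critical_path, controllers

flat = control_tree(2000, [1, 2000])        # one controller per application, all under the root
balanced = control_tree(2000, [14, 14, 14]) # hypothetical fanout of 14
print("flat (commands, controllers):", flat)
print("balanced (commands, controllers):", balanced)
```

The flat tree needs 2001 sequential commands against 39 for the balanced one. The measured gain (7 s to 0.7 s) is smaller than this idealized ratio; the model ignores fixed per-transition costs and network effects, so it only indicates the trend.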

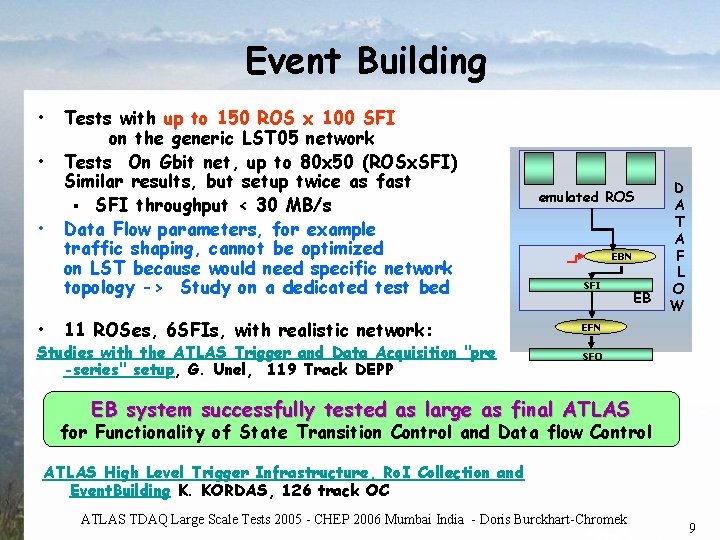

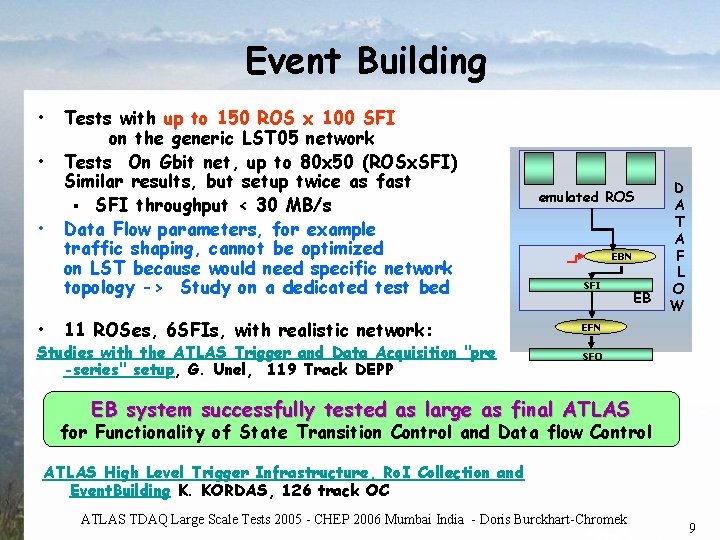

Event Building
- Tests with up to 150 ROS x 100 SFI on the generic LST 05 network.
- Tests on the Gbit network with up to 80 x 50 (ROS x SFI): similar results, but setup twice as fast.
- SFI throughput < 30 MB/s.
- Data flow parameters, for example traffic shaping, cannot be optimized on the LST because this would need a specific network topology -> study on a dedicated test bed with 11 ROSes and 6 SFIs and a realistic network: "Studies with the ATLAS Trigger and Data Acquisition 'pre-series' setup", G. Unel, 119, track DEPP.

(Diagram: data flow from the emulated ROS through the EBN to the SFIs (event building), then over the EFN to the SFO.)

The EB system was successfully tested as large as the final ATLAS system for the functionality of state transition control and data flow control. See also "ATLAS High Level Trigger Infrastructure, RoI Collection and Event Building", K. Kordas, 126, track OC.
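In event building, each SFI must collect one fragment from every ROS before an event is complete. The sketch below shows that bookkeeping; the round-robin assignment of events to SFIs stands in for the Dataflow Manager and is an assumption, as are all class and function names. Traffic shaping, which the slide notes could not be optimized on the LST network, is not modelled.

```python
from dataclasses import dataclass, field

@dataclass
class Fragment:
    event_id: int
    ros_id: int
    payload: bytes

@dataclass
class SFI:
    """Hypothetical Sub-Farm Input: assembles full events from ROS fragments."""
    n_ros: int
    pending: dict[int, dict[int, bytes]] = field(default_factory=dict)
    complete: list[int] = field(default_factory=list)

    def receive(self, frag: Fragment) -> None:
        parts = self.pending.setdefault(frag.event_id, {})
        parts[frag.ros_id] = frag.payload      # a duplicate fragment just overwrites
        if len(parts) == self.n_ros:           # one fragment from every ROS
            self.complete.append(frag.event_id)
            del self.pending[frag.event_id]

def assign_sfi(event_id: int, n_sfi: int) -> int:
    # Stand-in for the Dataflow Manager: simple round-robin (assumption).
    return event_id % n_sfi

def build_events(n_events: int, n_ros: int, n_sfi: int) -> list[SFI]:
    sfis = [SFI(n_ros) for _ in range(n_sfi)]
    for event_id in range(n_events):
        target = sfis[assign_sfi(event_id, n_sfi)]
        for ros_id in range(n_ros):
            target.receive(Fragment(event_id, ros_id, payload=b"\x00" * 16))
    return sfis

# Same shape as the largest LST configuration: 150 ROS x 100 SFI.
sfis = build_events(n_events=1000, n_ros=150, n_sfi=100)
print(sum(len(s.complete) for s in sfis), "events built")
```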

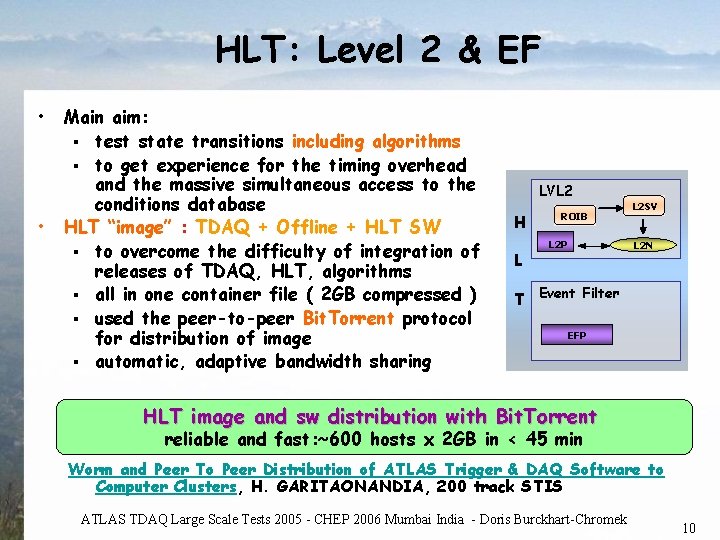

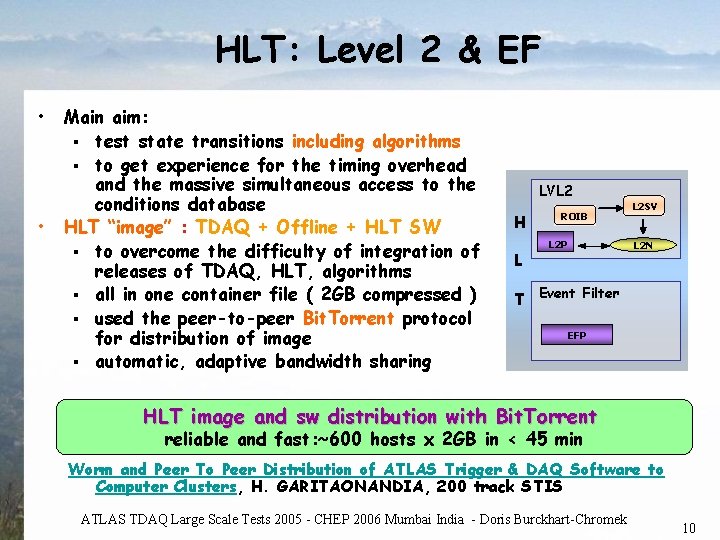

HLT: Level 2 & EF
- Main aim: test state transitions including algorithms, to gain experience with the timing overhead and the massive simultaneous access to the conditions database.
- HLT "image": TDAQ + offline + HLT software
  - to overcome the difficulty of integrating the releases of TDAQ, HLT and algorithms
  - all in one container file (2 GB compressed)
  - distributed with the peer-to-peer BitTorrent protocol, with automatic, adaptive bandwidth sharing
- HLT image and software distribution with BitTorrent was reliable and fast: ~600 hosts x 2 GB in < 45 min. See "Worm and Peer To Peer Distribution of ATLAS Trigger & DAQ Software to Computer Clusters", H. Garitaonandia, 200, track STIS.

(Diagram: LVL2 (ROIB, L2P, L2SV, L2N) and Event Filter (EFP).)
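The benefit of peer-to-peer distribution can be estimated with the idealized fluid model common in networking textbooks: a single server must upload N copies, whereas in a swarm every receiving host also uploads. The bandwidths below (a 1 Gbit/s server, Fast Ethernet hosts) are assumptions chosen to resemble the mixed LST network, not measured values, and both results are lower bounds rather than predictions.

```python
def client_server_time(n: int, file_mb: float, server_up: float, peer_down: float) -> float:
    """Lower bound (s) when one server uploads a full copy to each of n hosts."""
    return max(n * file_mb / server_up, file_mb / peer_down)

def p2p_time(n: int, file_mb: float, server_up: float,
             peer_up: float, peer_down: float) -> float:
    """Lower bound (s) when the n receiving hosts also upload to each other."""
    return max(file_mb / server_up,                      # server sends at least one copy
               file_mb / peer_down,                      # each host downloads it all
               n * file_mb / (server_up + n * peer_up))  # total upload capacity

# Assumptions: 2 GB taken as 2000 MB, 600 hosts, 1 Gbit/s server (125 MB/s),
# Fast Ethernet hosts (12.5 MB/s up and down).
N, FILE_MB, SERVER_UP, PEER = 600, 2000.0, 125.0, 12.5
cs = client_server_time(N, FILE_MB, SERVER_UP, PEER)
p2p = p2p_time(N, FILE_MB, SERVER_UP, PEER, PEER)
measured_rate = N * FILE_MB / (45 * 60)  # "< 45 min" gives a lower bound on the rate
print(f"client/server bound: {cs / 60:.0f} min; peer-to-peer bound: {p2p / 60:.1f} min")
print(f"measured: at least {measured_rate:.0f} MB/s aggregate")
```

Under these assumptions a lone server would need about 160 min while the swarm bound is under 3 min; the measured < 45 min sits between the two, as expected for a bound that ignores protocol, disk and topology overheads.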

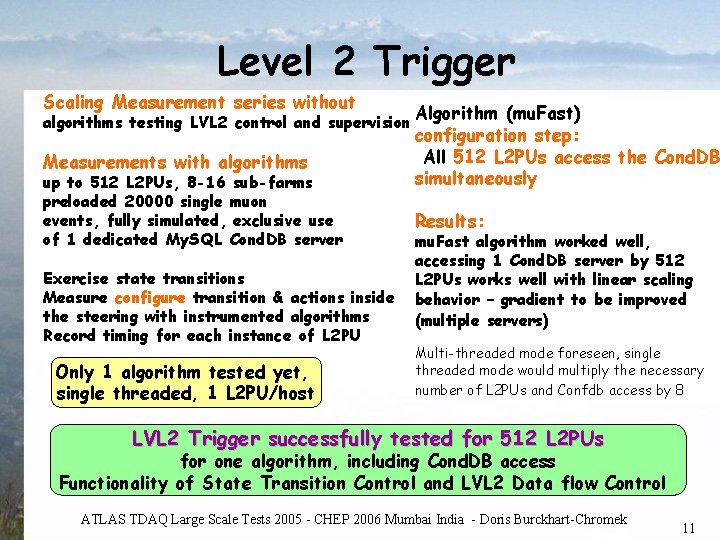

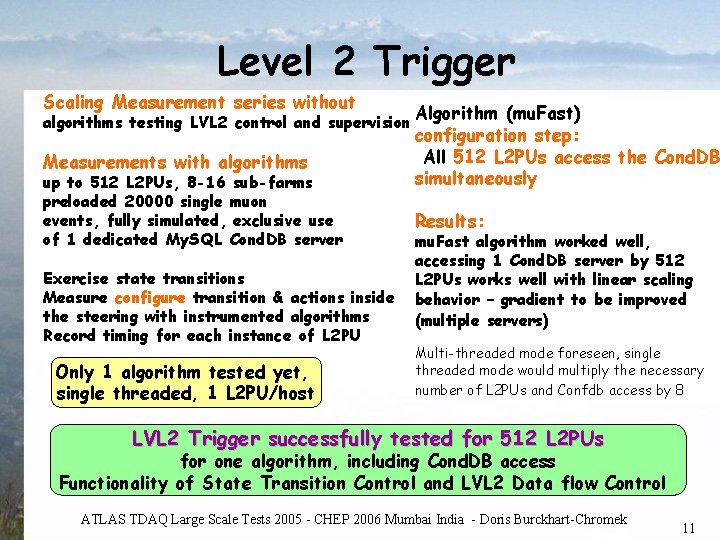

Level 2 Trigger Scaling
- Measurement series without algorithms, testing LVL2 control and supervision.
- Measurements with algorithms: up to 512 L2PUs in 8 to 16 sub-farms, preloaded with 20000 fully simulated single-muon events, with exclusive use of one dedicated MySQL CondDB server.
  - Exercise state transitions.
  - Measure the configure transition and the actions inside the steering with instrumented algorithms.
  - Record the timing for each L2PU instance.
  - Only one algorithm tested so far: single-threaded, 1 L2PU per host.
  - Algorithm (muFast) configuration step: all 512 L2PUs access the CondDB simultaneously.
- Results: the muFast algorithm worked well; access to one CondDB server by 512 L2PUs works, with linear scaling behaviour; the gradient is to be improved (multiple servers).
- Multi-threaded mode is foreseen; single-threaded mode would multiply the necessary number of L2PUs and ConfDB accesses by 8.

The LVL2 trigger was successfully tested with 512 L2PUs for one algorithm, including CondDB access. Functionality of state transition control and LVL2 data flow control was verified.
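The linear dependence of the configure step on the number of L2PUs, and the hope of improving its gradient with multiple servers, can be illustrated with a simple serialization model: each CondDB server answers its clients one after another, and the clients are spread evenly over the servers. The fixed cost and per-query cost are placeholders, not measured values.

```python
import math

def configure_time_ms(n_clients: int, n_servers: int,
                      t_fixed_ms: int = 5_000, t_per_query_ms: int = 100) -> int:
    """Configure-step duration if the busiest server serves its clients serially.

    t_fixed_ms and t_per_query_ms are hypothetical placeholders.
    """
    clients_per_server = math.ceil(n_clients / n_servers)
    return t_fixed_ms + clients_per_server * t_per_query_ms

for servers in (1, 2, 4, 8):
    print(f"512 L2PUs, {servers} server(s): {configure_time_ms(512, servers) / 1000:.1f} s")

# Single-threaded running would need 8 times as many L2PUs (slide), hence 8x the queries.
print(f"4096 L2PUs, 1 server: {configure_time_ms(8 * 512, 1) / 1000:.1f} s")
```

In this model the gradient (time per additional L2PU) is divided by the number of servers, which is the kind of improvement the slide anticipates.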

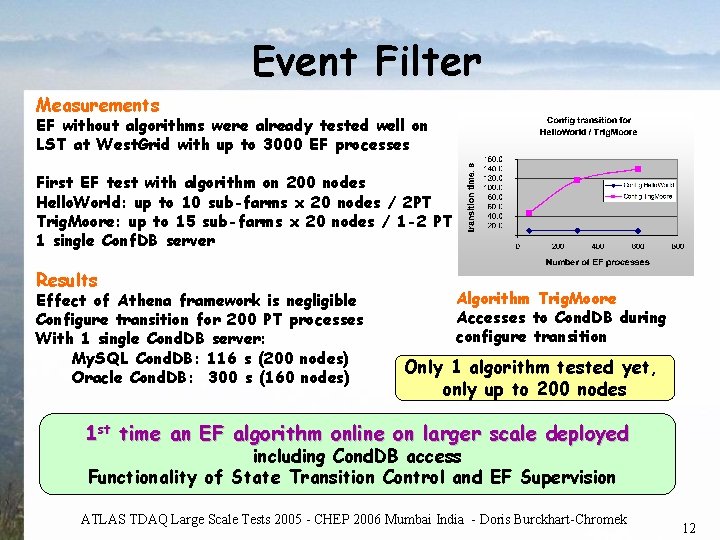

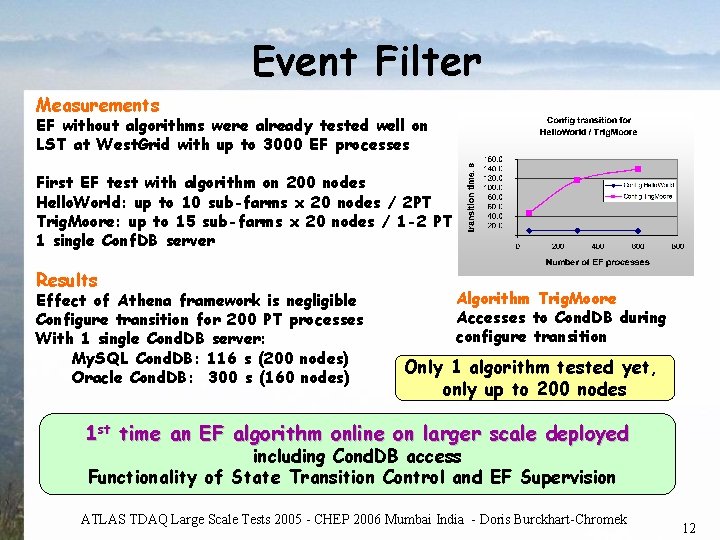

Event Filter Measurements
- EF without algorithms was already well tested on the LST at WestGrid with up to 3000 EF processes.
- First EF test with algorithms on 200 nodes:
  - HelloWorld: up to 10 sub-farms x 20 nodes / 2 PT
  - TrigMoore: up to 15 sub-farms x 20 nodes / 1-2 PT
  - 1 single ConfDB server
- Results:
  - The effect of the Athena framework is negligible.
  - Configure transition for 200 PT processes with one single CondDB server: MySQL CondDB 116 s (200 nodes), Oracle CondDB 300 s (160 nodes).
  - The TrigMoore algorithm accesses the CondDB during the configure transition.
  - Only one algorithm tested so far, only up to 200 nodes.

For the first time an EF algorithm was deployed online on a larger scale, including CondDB access. Functionality of state transition control and EF supervision was verified.
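The two configure-transition timings were taken on different farm sizes, so a rough per-node normalization helps compare them. It assumes the time scales linearly with the number of nodes, which the slide does not establish.

```python
# (configure time in s, number of nodes) as quoted on the slide
MEASUREMENTS = {
    "MySQL CondDB": (116, 200),
    "Oracle CondDB": (300, 160),
}

def per_node_seconds(time_s: float, nodes: int) -> float:
    # Linear-scaling assumption: each node adds the same cost.
    return time_s / nodes

per_node = {name: per_node_seconds(*m) for name, m in MEASUREMENTS.items()}
for name, value in per_node.items():
    print(f"{name}: {value:.2f} s per node")
ratio = per_node["Oracle CondDB"] / per_node["MySQL CondDB"]
print(f"Oracle/MySQL ratio ~ {ratio:.1f}")
```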

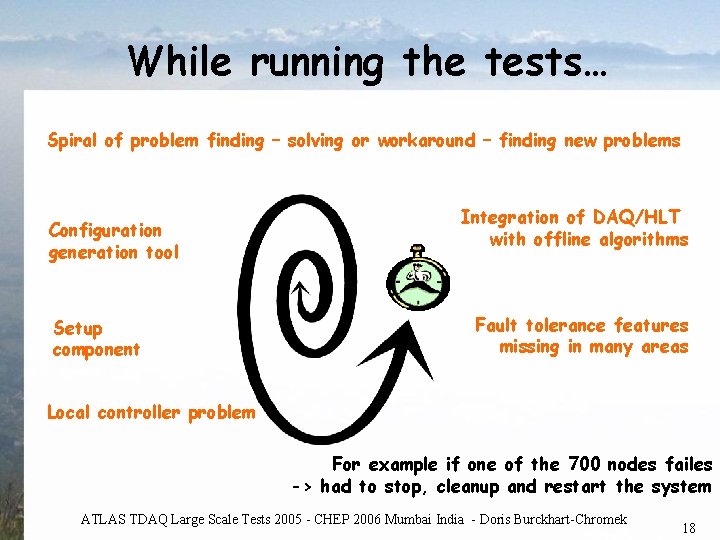

While running the tests…
Spiral of problem finding, solving or working around, and finding new problems. The stepwise increase of the farm size (100, 200, 300, 500 and 700 nodes) allowed for successive improvements.

- Configuration generation tool: a vital tool, since the configuration contains all knowledge of the TDAQ system; complex; the tool was not designed for the LST.

- Setup component: new implementation, several patches; obligatory for each run. Serious scalability problem: 16 minutes to set up the largest configuration with 4000 processes on 700 nodes.

- Leaf controller problem: it crashed once every 10,000 operations, so it was not visible at small scale; at large scale it occurred at one of the first transitions, which made the problem reproducible.

- Integration of DAQ/HLT with offline algorithms: software release compatibility problems; synchronisation of releases and run-time environment.

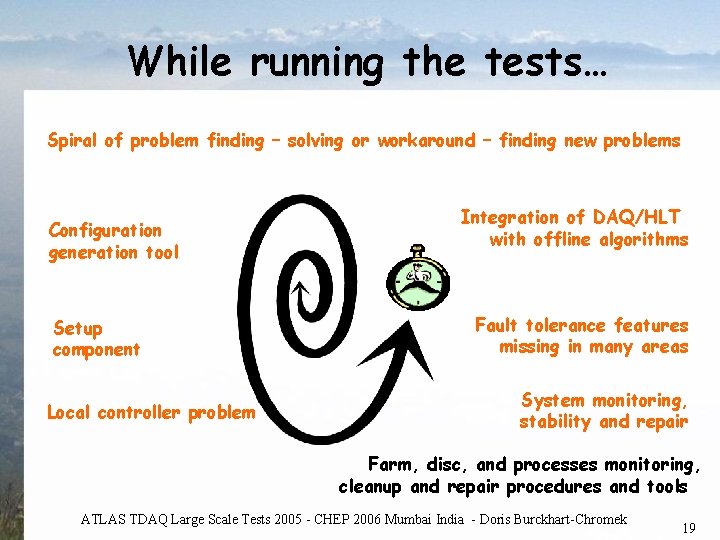

- Fault tolerance features missing in many areas: for example, if one of the 700 nodes failed, the system had to be stopped, cleaned up and restarted.

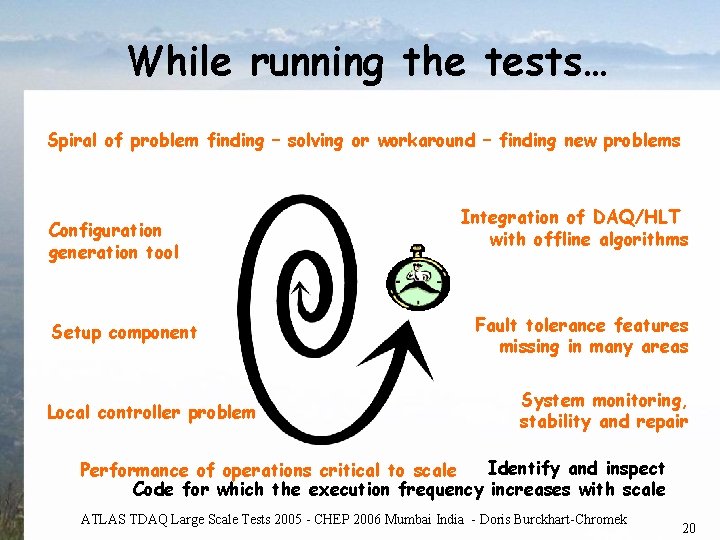

- System monitoring, stability and repair: farm, disc and process monitoring, cleanup and repair procedures and tools.

- Identify and inspect the performance of operations critical to scale: code whose execution frequency increases with scale.
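The leaf controller item shows why rare faults surface only at scale. Assuming independent operations that each fail with the quoted probability of 1/10 000, the chance of at least one crash grows quickly with the number of operations; the operation counts used here are hypothetical, chosen to resemble a small test bed, one transition of the 4000-process configuration, and five such transitions.

```python
def p_at_least_one_failure(p_per_op: float, n_ops: int) -> float:
    """Probability that at least one of n independent operations fails."""
    return 1.0 - (1.0 - p_per_op) ** n_ops

P_CRASH = 1 / 10_000  # failure rate quoted for the leaf controller

# Hypothetical operation counts: small test bed, one transition of the
# 4000-process configuration, and five such transitions.
for n_ops in (100, 4_000, 20_000):
    print(f"{n_ops:>6} operations: P(at least one crash) = "
          f"{p_at_least_one_failure(P_CRASH, n_ops):.3f}")
```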

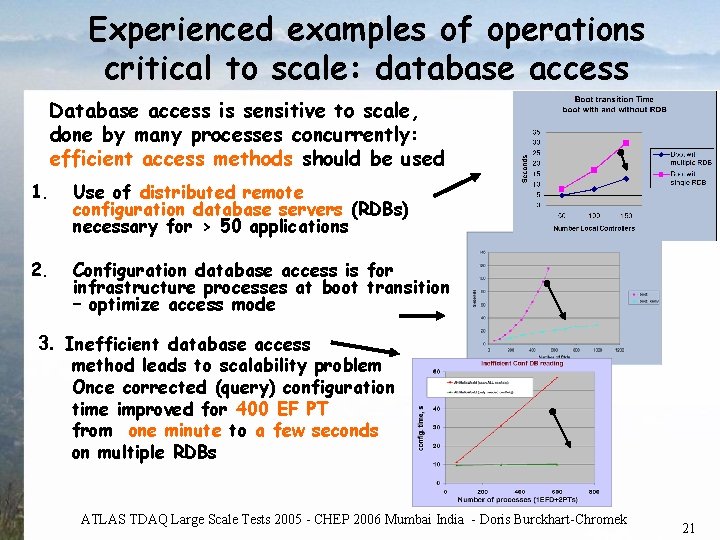

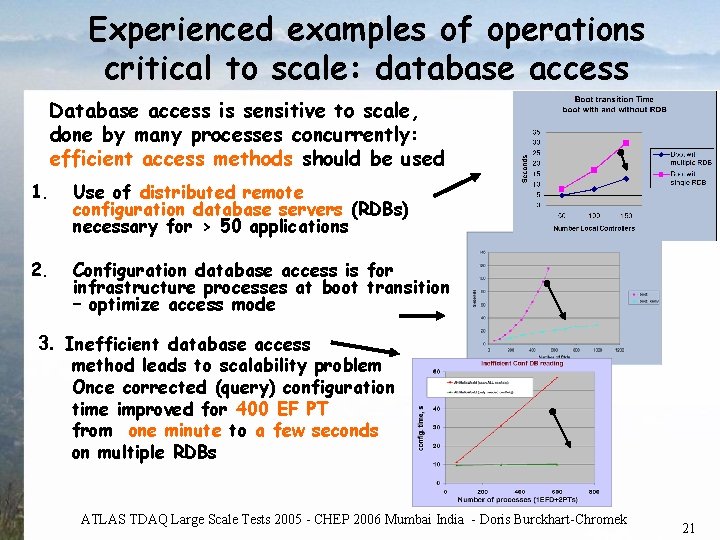

Experienced examples of operations critical to scale: database access
Database access is sensitive to scale because it is done by many processes concurrently, so efficient access methods should be used:
1. Distributed remote configuration database servers (RDBs) are necessary for more than 50 applications.
2. Infrastructure processes access the configuration database at the boot transition: optimise the access mode.
3. An inefficient database access method leads to a scalability problem. Once the query was corrected, the configuration time for 400 EF PTs improved from one minute to a few seconds on multiple RDBs.
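The effect of intermediate RDB servers and a better query can be illustrated by counting round trips to the back-end database. In the sketch, `Backend` stands in for the relational database and `RDBCache` for an intermediate server that fetches all missing objects in one bulk query and then serves them from memory; both classes and the 50-object configuration are hypothetical.

```python
class Backend:
    """Stand-in for the relational configuration database; counts round trips."""
    def __init__(self, objects: dict[str, str]) -> None:
        self.objects = objects
        self.round_trips = 0

    def get_one(self, key: str) -> str:
        self.round_trips += 1
        return self.objects[key]

    def get_many(self, keys: list[str]) -> dict[str, str]:
        self.round_trips += 1  # one bulk query
        return {k: self.objects[k] for k in keys}

class RDBCache:
    """Hypothetical intermediate server: bulk-loads misses, then serves from memory."""
    def __init__(self, backend: Backend) -> None:
        self.backend = backend
        self.cache: dict[str, str] = {}

    def load(self, keys: list[str]) -> dict[str, str]:
        missing = [k for k in keys if k not in self.cache]
        if missing:
            self.cache.update(self.backend.get_many(missing))
        return {k: self.cache[k] for k in keys}

def naive_clients(n_clients: int, keys: list[str], backend: Backend) -> int:
    # Every client queries the back end object by object.
    for _ in range(n_clients):
        for key in keys:
            backend.get_one(key)
    return backend.round_trips

def cached_clients(n_clients: int, keys: list[str], backend: Backend, n_caches: int) -> int:
    # Clients are spread over several intermediate servers.
    caches = [RDBCache(backend) for _ in range(n_caches)]
    for client in range(n_clients):
        caches[client % n_caches].load(keys)
    return backend.round_trips

KEYS = [f"object{i}" for i in range(50)]
OBJECTS = {k: f"value of {k}" for k in KEYS}
print("naive :", naive_clients(400, KEYS, Backend(OBJECTS)), "back-end round trips")
print("cached:", cached_clients(400, KEYS, Backend(OBJECTS), n_caches=4), "back-end round trips")
```

For 400 clients the back-end load drops from one query per object per client to one bulk query per intermediate server.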

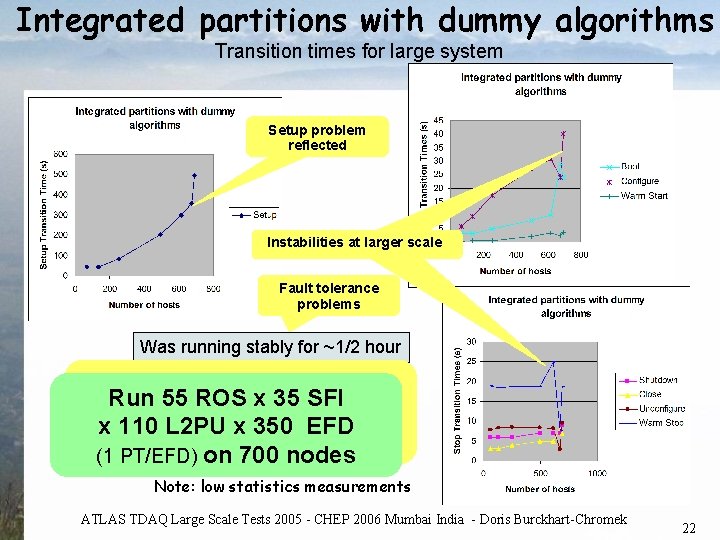

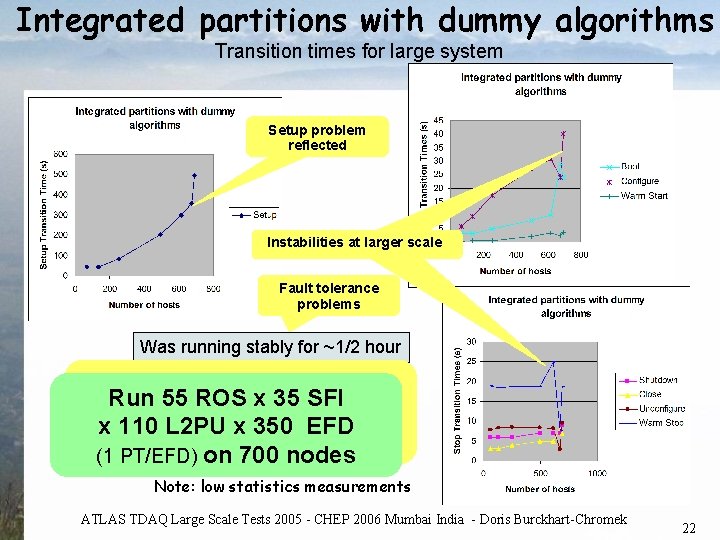

Integrated partitions with dummy algorithms
- Transition times for a large system: the setup problem is reflected.
- Instabilities at larger scale; fault tolerance problems.
- Run with 55 ROS x 35 SFI x 110 L2PU x 350 EFD (1 PT/EFD) on 700 nodes: running stably for ~1/2 hour.
- We experienced a big difference when increasing the scale from 500 to 700 nodes.

Note: low-statistics measurements.

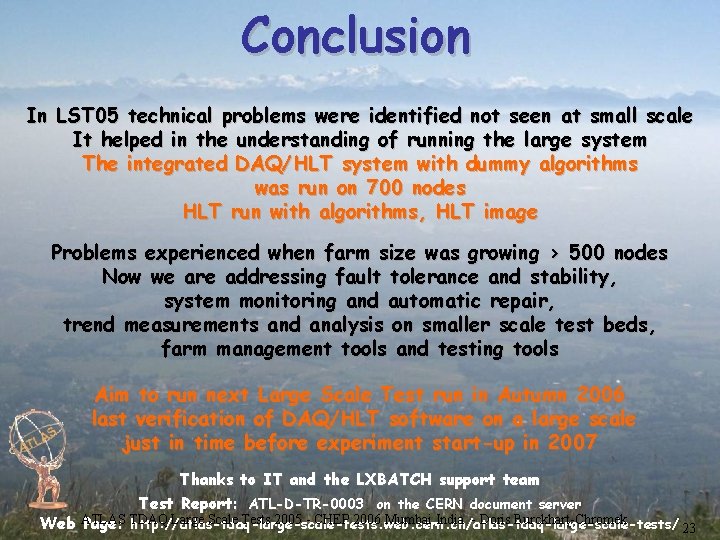

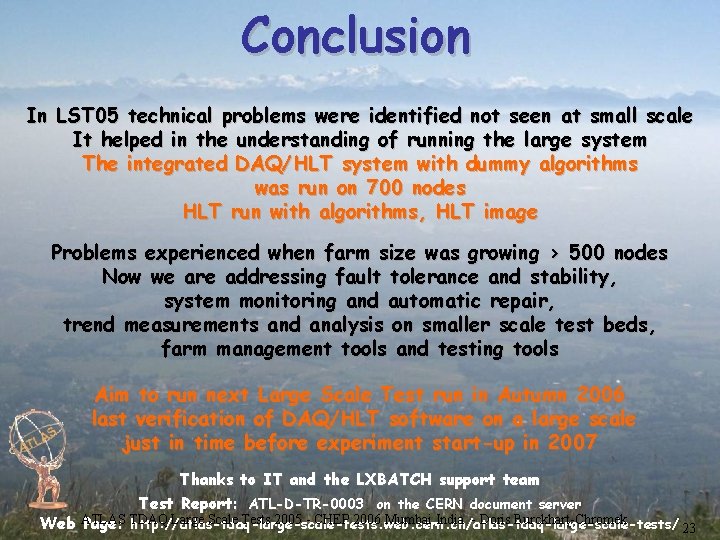

Conclusion
- In LST 05, technical problems not seen at small scale were identified.
- It helped in understanding how to run the large system.
- The integrated DAQ/HLT system with dummy algorithms was run on 700 nodes.
- The HLT was run with algorithms, using the HLT image.
- Problems were experienced when the farm size grew beyond 500 nodes.
- Now we are addressing fault tolerance and stability, system monitoring and automatic repair, trend measurements and analysis on smaller-scale test beds, farm management tools and testing tools.
- The aim is to run the next Large Scale Test in autumn 2006 as the last verification of the DAQ/HLT software on a large scale, just in time before experiment start-up in 2007.

Thanks to IT and the LXBATCH support team.

Test report: ATL-D-TR-0003 on the CERN document server.
ATLAS TDAQ Large Scale Tests web page: http://atlas-tdaq-large-scale-tests.web.cern.ch/atlas-tdaq-large-scale-tests/