
Terabit VNF Kyle Larose & Nicolas St-Pierre

Agenda
• Introduction to Sandvine
• Sandvine Policy Control, SandScript and Platform
• The Policy Traffic Switch hardware appliance
• The Virtual Network Function sizing and positioning
• Sandvine, Dell and Intel® break the VNF Terabit/s barrier
• The Sandvine PTS appliance legacy hardware & software
• Porting HW platform to a new OS, COTS, DPDK, and futures
• Life Pro Tips on using DPDK!
• A look at Sandvine Virtual Series PTS software and packet path
• Why Sandvine adopts DPDK

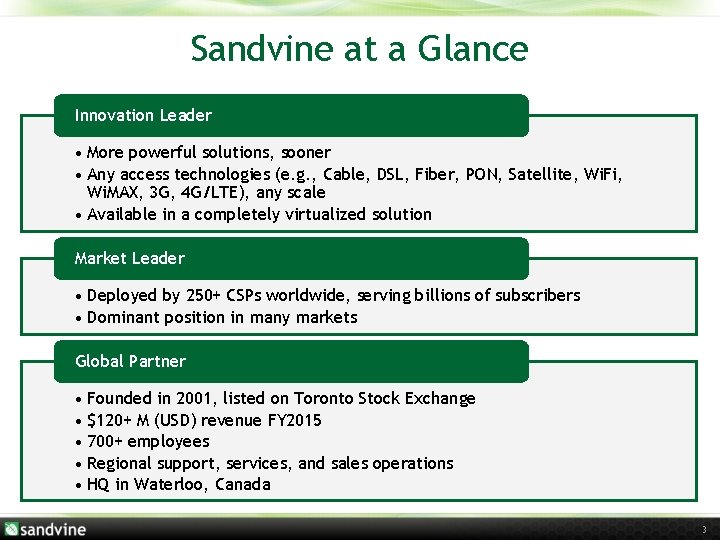

Sandvine at a Glance
Innovation Leader
• More powerful solutions, sooner
• Any access technologies (e.g., Cable, DSL, Fiber, PON, Satellite, WiFi, WiMAX, 3G, 4G/LTE), any scale
• Available in a completely virtualized solution
Market Leader
• Deployed by 250+ CSPs worldwide, serving billions of subscribers
• Dominant position in many markets
Global Partner
• Founded in 2001, listed on Toronto Stock Exchange
• $120M+ (USD) revenue, FY 2015
• 700+ employees
• Regional support, services, and sales operations
• HQ in Waterloo, Canada

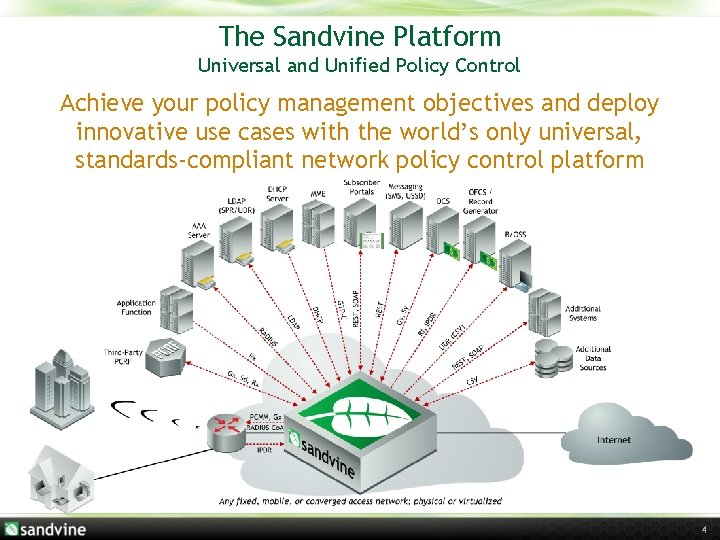

The Sandvine Platform
Universal and Unified Policy Control
Achieve your policy management objectives and deploy innovative use cases with the world’s only universal, standards-compliant network policy control platform.

What is Sandvine policy control?
• The Sandvine Policy Engine translates business rules into network policy control
› Links any condition to any action
› SandScript does the translation
› Embedded in our PCEF/PTS and PCRF/SDE
Inputs: conditions and entitlements
Outputs: charging updates and policy actions

Virtualization Objectives
Can I greenfield deploy highly scalable NFV within my network?
What is the true cost or penalty of doing so?
Compute penalty?
Density & efficiency penalty?
Data center power and footprint?
Demonstrate an orchestrated Virtual Network Function rollout of Network Policy Control on an established COTS platform, including all compute & network nodes within a fixed enclosure footprint, and establish a new benchmark for Policy Control VNF network throughput, compute density and power efficiency.

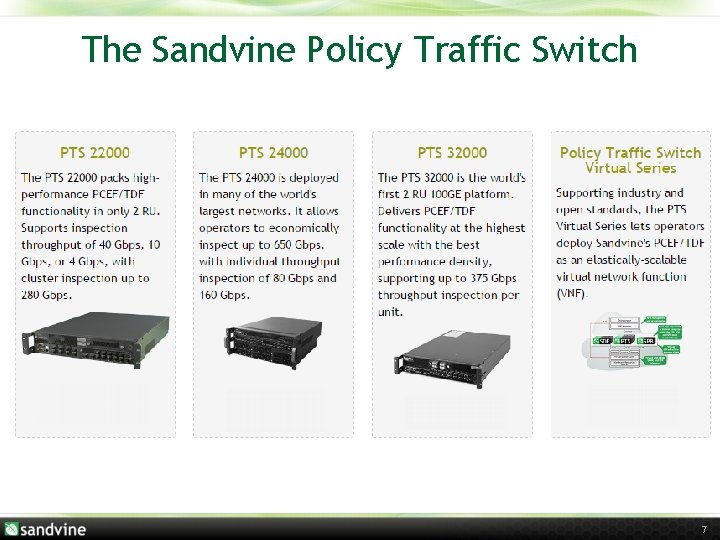

The Sandvine Policy Traffic Switch

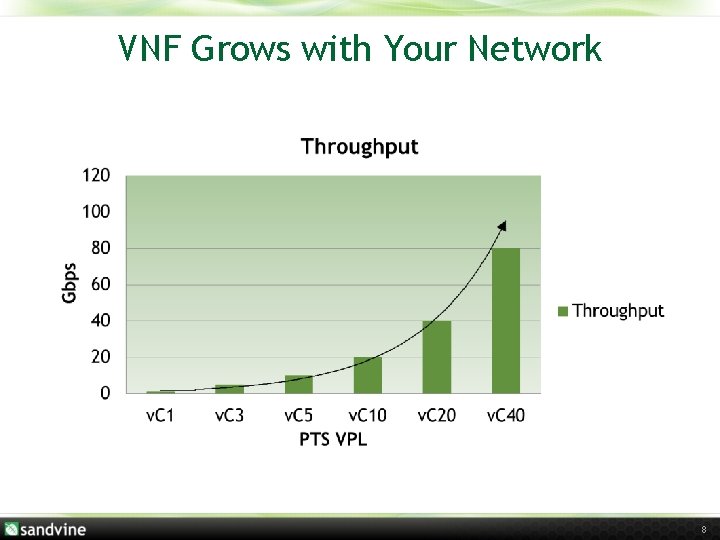

VNF Grows with Your Network

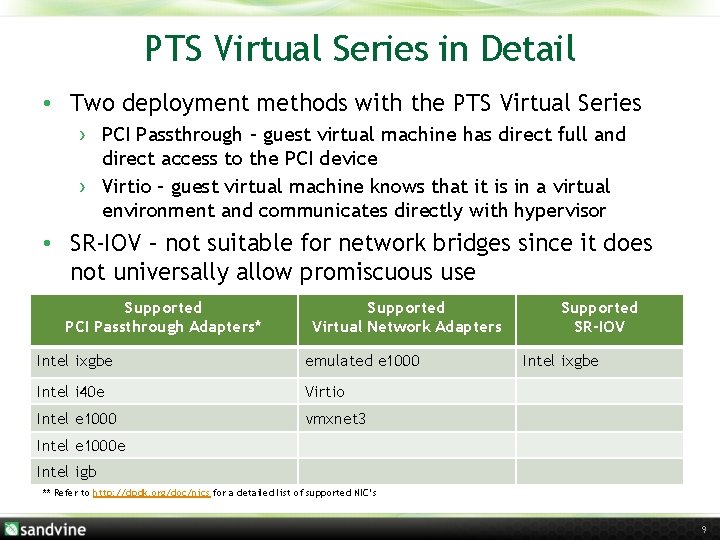

PTS Virtual Series in Detail
• Two deployment methods with the PTS Virtual Series
› PCI Passthrough – guest virtual machine has full, direct access to the PCI device
› Virtio – guest virtual machine knows that it is in a virtual environment and communicates directly with the hypervisor
• SR-IOV – not suitable for network bridges since it does not universally allow promiscuous use
Supported PCI Passthrough Adapters*: Intel ixgbe, Intel i40e, Intel e1000
Supported Virtual Network Adapters: emulated e1000, Virtio, vmxnet3
Supported SR-IOV: Intel ixgbe, Intel e1000e, Intel igb
* Refer to http://dpdk.org/doc/nics for a detailed list of supported NICs

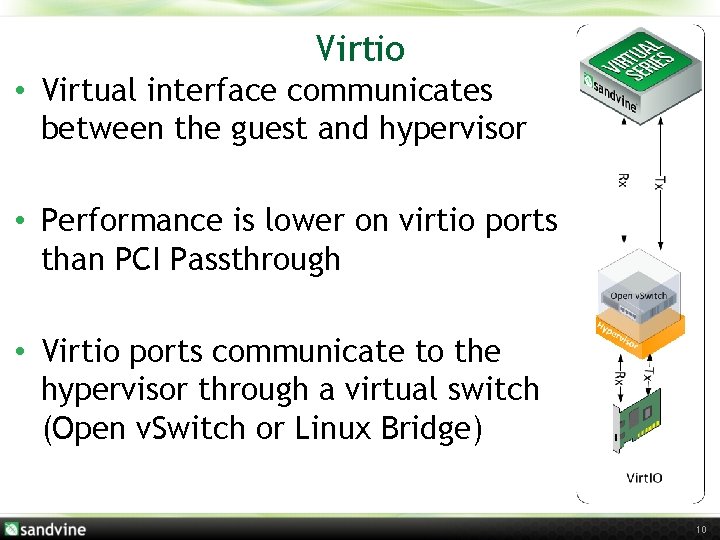

Virtio
• Virtual interface communicates between the guest and hypervisor
• Performance is lower on virtio ports than PCI Passthrough
• Virtio ports communicate to the hypervisor through a virtual switch (Open vSwitch or Linux Bridge)

Sandvine and Intel® Partnership
Demonstrate an orchestrated Virtual Network Function rollout of Network Policy Control on an established COTS platform, including all compute & network nodes within a fixed enclosure footprint, and establish a new benchmark for Policy Control VNF network throughput, compute density and power efficiency.

Sandvine Partnerships and Platforms
Established COTS vendor with a proven server platform

Terabit VNF Platform
Dell M1000e enclosure: 10 Rack Units, 17.5”
Dell Networking Force10 MXL (6x): 192 x 10GbE, 36 x 40GbE
13th Generation blade server M630 (14x)
Intel® Xeon E5-2699 v3 (Haswell, 18 cores) (2x)
Intel® X710 – Quad 10GbE (1x)
Intel® X520 – Dual 10GbE (2x)
Dataplane connectivity: 8 x 10GbE/blade → 80 Gbps x 14 = 1120 Gbps
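The dataplane figure on this slide follows directly from the NIC counts per blade. A minimal sketch of that arithmetic, using only the numbers quoted on the slide (the function and constant names are illustrative, not Sandvine's):

```python
# Port counts per adapter, as listed on the slide.
PORTS_PER_X710 = 4  # Intel X710, quad 10GbE
PORTS_PER_X520 = 2  # Intel X520, dual 10GbE


def enclosure_dataplane_gbps(blades=14, x710_per_blade=1, x520_per_blade=2, port_gbps=10):
    """Aggregate dataplane capacity of the enclosure in Gbps."""
    ports_per_blade = x710_per_blade * PORTS_PER_X710 + x520_per_blade * PORTS_PER_X520
    return blades * ports_per_blade * port_gbps


total = enclosure_dataplane_gbps()
print(total)        # 1120 Gbps for 14 blades with 8 x 10GbE each
print(total / 10)   # 112.0 Gbps per rack unit in the 10 RU enclosure
```

The per-rack-unit number is derived here for illustration; the slide itself only states the 1120 Gbps total.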

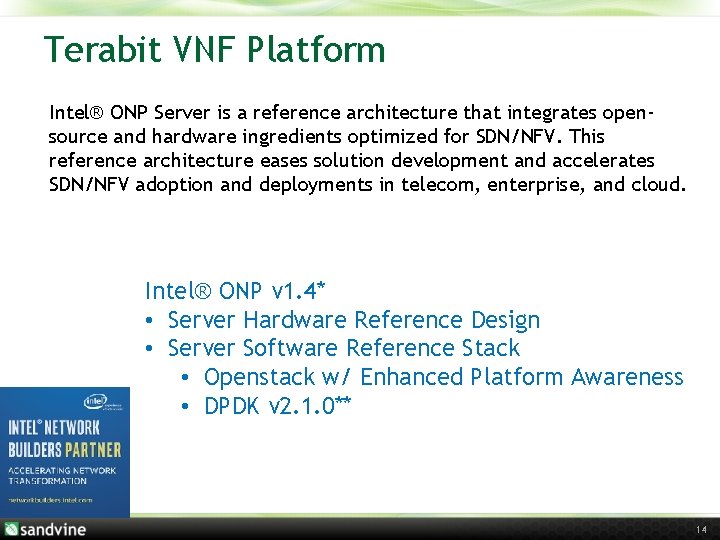

Terabit VNF Platform
Intel® ONP Server is a reference architecture that integrates open-source and hardware ingredients optimized for SDN/NFV. This reference architecture eases solution development and accelerates SDN/NFV adoption and deployments in telecom, enterprise, and cloud.
Intel® ONP v1.4*
• Server Hardware Reference Design
• Server Software Reference Stack
• OpenStack w/ Enhanced Platform Awareness
• DPDK v2.1.0**

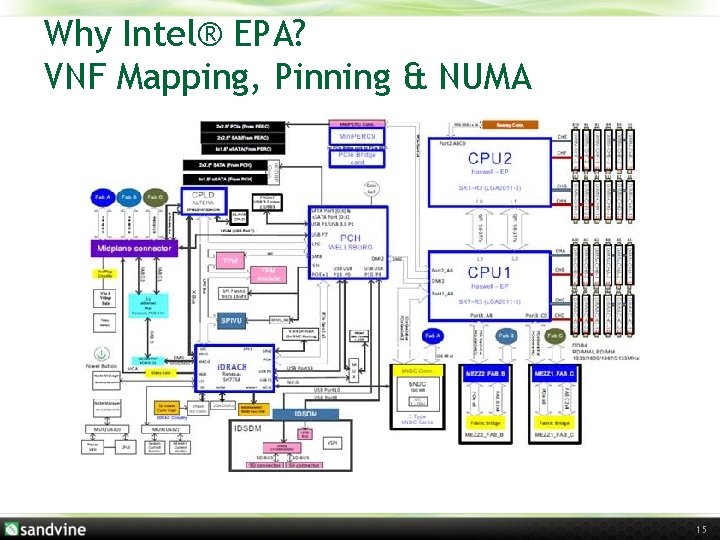

Why Intel® EPA?
VNF Mapping, Pinning & NUMA

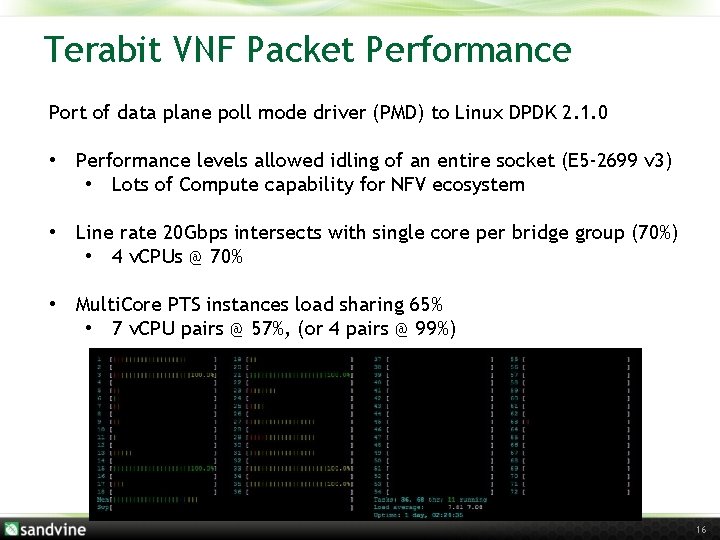

Terabit VNF Packet Performance
Port of data plane poll mode driver (PMD) to Linux DPDK 2.1.0
• Performance levels allowed idling of an entire socket (E5-2699 v3)
• Lots of compute capability for NFV ecosystem
• Line rate 20 Gbps intersects with single core per bridge group (70%)
• 4 vCPUs @ 70%
• Multi-core PTS instances load sharing 65%
• 7 vCPU pairs @ 57% (or 4 pairs @ 99%)
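One possible reading of the last bullet, which the slides do not state explicitly, is that the same aggregate load can be spread over more vCPU pairs at lower utilization or packed onto fewer pairs near saturation. A small sketch of that check, with a hypothetical helper name:

```python
def pair_equivalents(pairs, utilization_pct):
    """Total load expressed as fully busy vCPU pairs (assumes load adds linearly)."""
    return pairs * utilization_pct / 100


spread = pair_equivalents(7, 57)  # seven pairs at 57%
packed = pair_equivalents(4, 99)  # four pairs at 99%
print(spread, packed)
```

Both configurations come out at roughly four fully busy pairs, which is consistent with the two options on the slide describing the same workload. Linear scaling across pairs is an assumption of this sketch.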

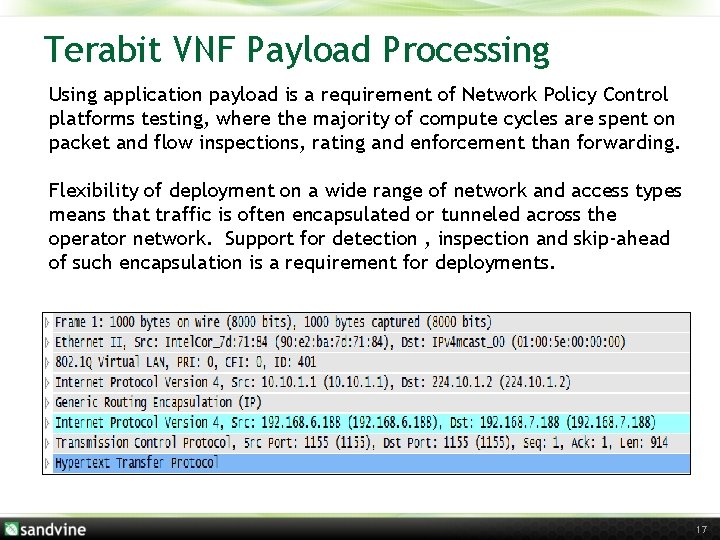

Terabit VNF Payload Processing
Using application payload is a requirement when testing Network Policy Control platforms, where the majority of compute cycles are spent on packet and flow inspection, rating and enforcement rather than on forwarding. Flexibility of deployment on a wide range of network and access types means that traffic is often encapsulated or tunneled across the operator network. Support for detection, inspection and skip-ahead of such encapsulation is a requirement for deployments.
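To make "skip-ahead" concrete, here is a minimal Python sketch that walks past stacked VLAN tags and an MPLS label stack to find the first IP header. The slides do not say which encapsulations the PTS handles or how; the choice of 802.1Q/802.1ad and MPLS, and the function name, are assumptions for illustration only.

```python
import struct

ETH_HLEN = 14
ETHERTYPE_IPV4 = 0x0800
ETHERTYPE_IPV6 = 0x86DD
ETHERTYPE_MPLS = 0x8847
VLAN_ETHERTYPES = {0x8100, 0x88A8}  # 802.1Q and 802.1ad (QinQ)


def skip_encapsulation(frame: bytes):
    """Return (l3_protocol, offset) of the first IP header, or None if not found."""
    if len(frame) < ETH_HLEN:
        return None
    (ethertype,) = struct.unpack_from("!H", frame, 12)
    offset = ETH_HLEN
    # Each VLAN tag is 4 bytes: a 2-byte TCI followed by the next EtherType.
    while ethertype in VLAN_ETHERTYPES:
        if len(frame) < offset + 4:
            return None
        (ethertype,) = struct.unpack_from("!H", frame, offset + 2)
        offset += 4
    if ethertype == ETHERTYPE_MPLS:
        # Walk 4-byte label entries until the bottom-of-stack bit is set.
        while True:
            if len(frame) < offset + 4:
                return None
            (entry,) = struct.unpack_from("!I", frame, offset)
            offset += 4
            if entry & 0x100:
                break
        if len(frame) <= offset:
            return None
        # MPLS carries no protocol field; guess from the IP version nibble.
        version = frame[offset] >> 4
        ethertype = {4: ETHERTYPE_IPV4, 6: ETHERTYPE_IPV6}.get(version)
    if ethertype == ETHERTYPE_IPV4:
        return ("ipv4", offset)
    if ethertype == ETHERTYPE_IPV6:
        return ("ipv6", offset)
    return None
```

The returned offset is where inspection or load-balancing logic would start reading the IP header, regardless of how many tags preceded it.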

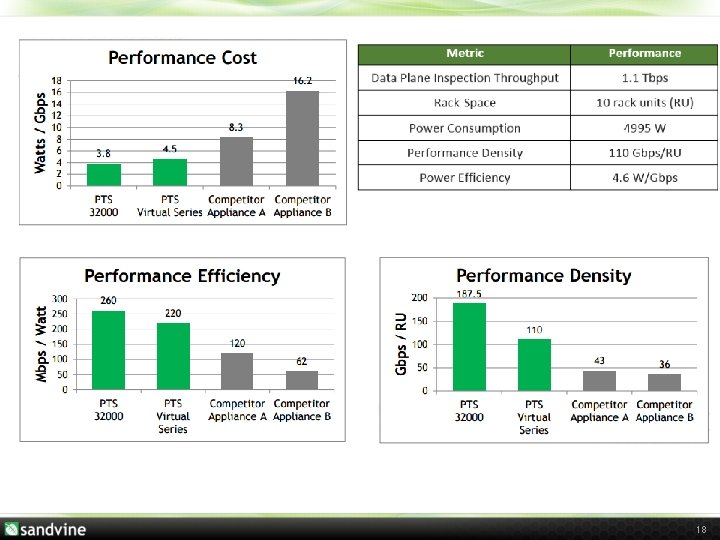


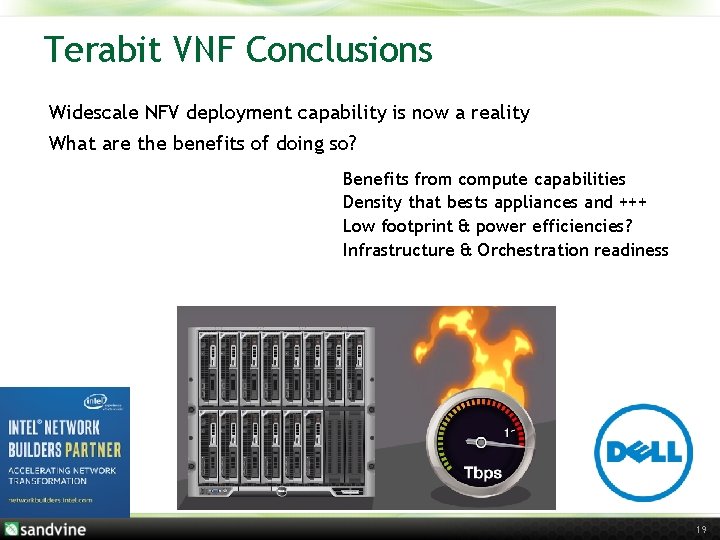

Terabit VNF Conclusions
Widescale NFV deployment capability is now a reality
What are the benefits of doing so?
Benefits from compute capabilities
Density that bests appliances and +++
Low footprint & power efficiencies?
Infrastructure & Orchestration readiness

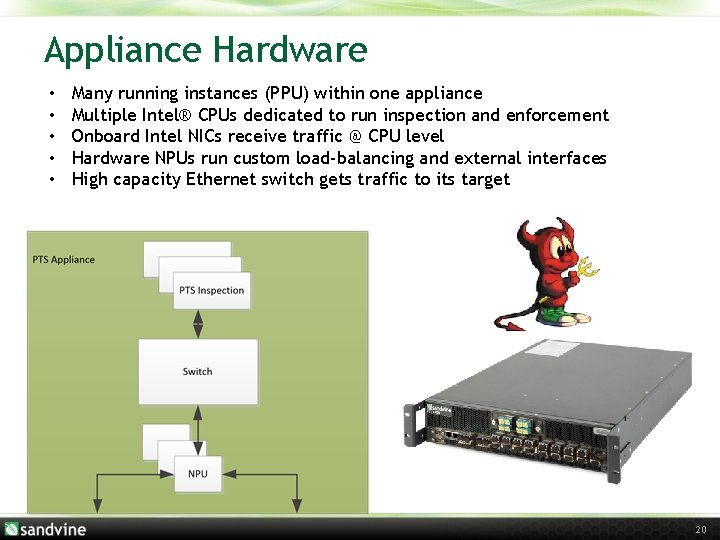

Appliance Hardware
• Many running instances (PPU) within one appliance
• Multiple Intel® CPUs dedicated to run inspection and enforcement
• Onboard Intel NICs receive traffic @ CPU level
• Hardware NPUs run custom load-balancing and external interfaces
• High capacity Ethernet switch gets traffic to its target

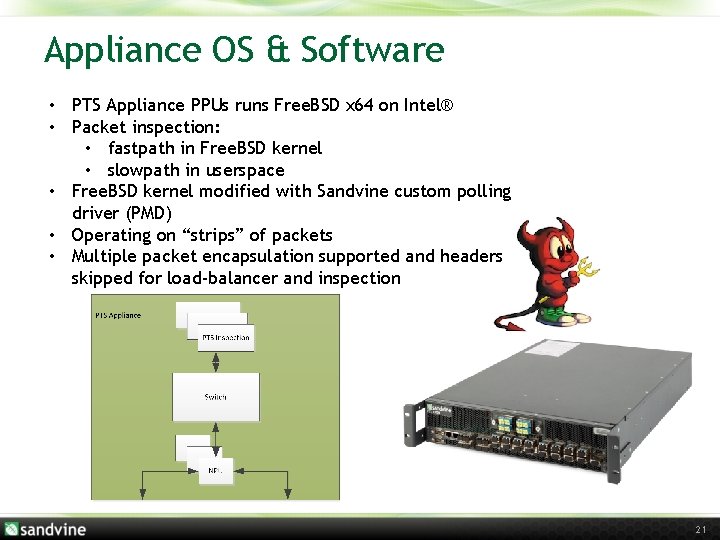

Appliance OS & Software
• PTS Appliance PPUs run FreeBSD x64 on Intel®
• Packet inspection:
› fastpath in FreeBSD kernel
› slowpath in userspace
• FreeBSD kernel modified with Sandvine custom polling driver (PMD)
• Operating on “strips” of packets
• Multiple packet encapsulation supported and headers skipped for load-balancer and inspection

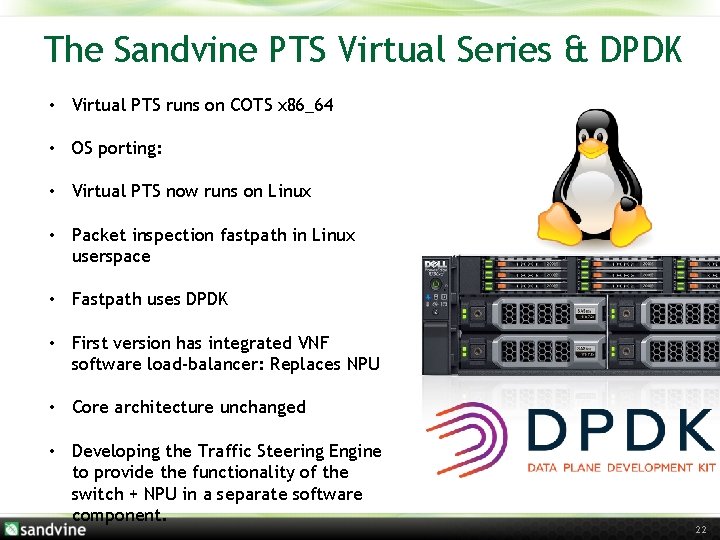

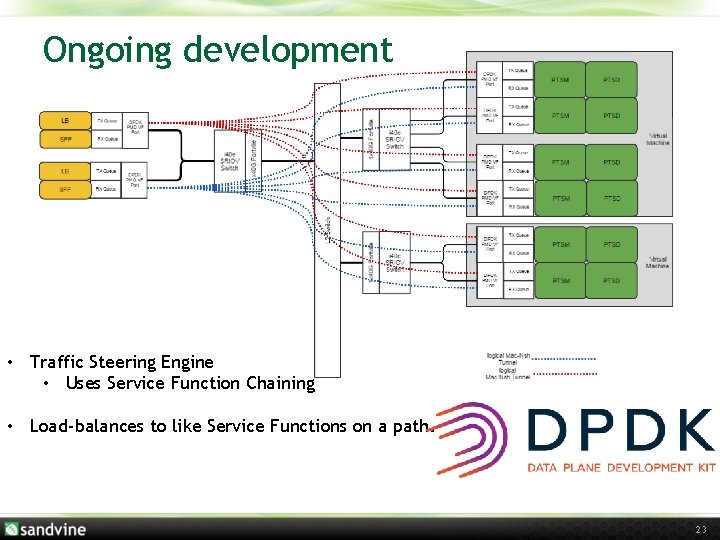

The Sandvine PTS Virtual Series & DPDK
• Virtual PTS runs on COTS x86_64
• OS porting:
› Virtual PTS now runs on Linux
› Packet inspection fastpath in Linux userspace
› Fastpath uses DPDK
• First version has integrated VNF software load-balancer: replaces NPU
• Core architecture unchanged
• Developing the Traffic Steering Engine to provide the functionality of the switch + NPU in a separate software component

Ongoing development
• Traffic Steering Engine
• Uses Service Function Chaining
• Load-balances to like Service Functions on a path

Sandvine LPT with DPDK
• Tx Queues are your friend!
• Challenging to integrate stats with our network-appliance-focused UI
• Lack of tcpdump/reason for packet discards causes challenges in the field, and with support procedures
• Need to stay up to date for latest drivers (i.e., i40e had major issues with release 1.8 in ONP 1.4 during terabit test, addressed in 2.0+)
• Major DPDK release API change was minimal effort
• Losing the interfaces from the kernel confuses many
• DPDK is only part of the performance story: so much on the host/BIOS/etc. as well
• When a HW device does not play well with IOMMU, be afraid!
• Integrating a git-based source tree with non-git can be painful

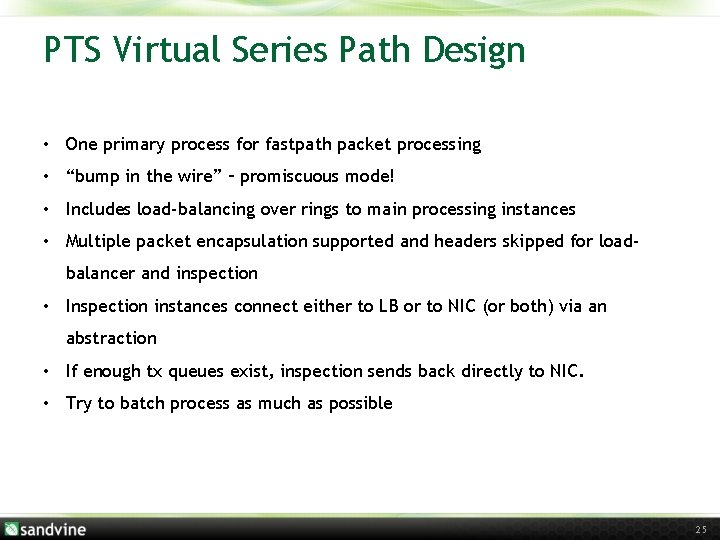

PTS Virtual Series Path Design
• One primary process for fastpath packet processing
• “bump in the wire” – promiscuous mode!
• Includes load-balancing over rings to main processing instances
• Multiple packet encapsulation supported and headers skipped for load-balancer and inspection
• Inspection instances connect either to LB or to NIC (or both) via an abstraction
• If enough tx queues exist, inspection sends back directly to NIC
• Try to batch process as much as possible
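The load-balancing and batching bullets can be modelled in a few lines of Python. This is a conceptual sketch only, not DPDK code and not Sandvine's implementation: plain deques stand in for rings, the CRC-based symmetric flow hash and the burst size of 32 are assumptions, and all names are hypothetical.

```python
import zlib
from collections import deque

BURST = 32  # hypothetical batch size


def flow_hash(src_ip, dst_ip, src_port, dst_port, proto):
    """Direction-independent hash, so both halves of a flow reach one instance."""
    a, b = sorted([(src_ip, src_port), (dst_ip, dst_port)])
    return zlib.crc32(f"{a}|{b}|{proto}".encode())


def distribute(burst, rings):
    """Load-balancer step: place each packet of a received burst on a ring."""
    for pkt in burst:
        rings[flow_hash(*pkt["flow"]) % len(rings)].append(pkt)


def drain(ring, burst=BURST):
    """Inspection-instance step: dequeue up to one batch of packets at a time."""
    batch = []
    while ring and len(batch) < burst:
        batch.append(ring.popleft())
    return batch


rings = [deque() for _ in range(4)]
upstream = {"flow": ("10.0.0.1", "192.0.2.7", 40000, 443, 6)}
downstream = {"flow": ("192.0.2.7", "10.0.0.1", 443, 40000, 6)}
distribute([upstream, downstream], rings)
```

Hashing on a sorted endpoint pair keeps per-flow state on a single instance, which matters for a "bump in the wire" that sees both directions of a connection. Working on whole bursts rather than single packets amortizes per-call overhead, which is the point of the final bullet.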

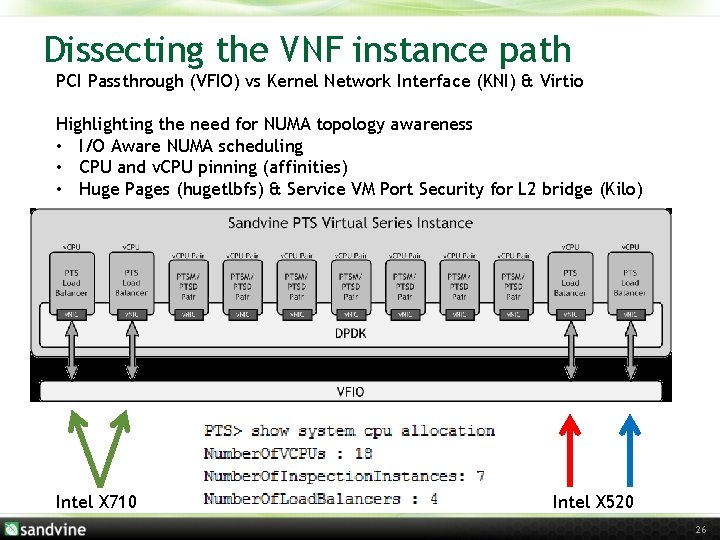

Dissecting the VNF instance path
PCI Passthrough (VFIO) vs Kernel Network Interface (KNI) & Virtio
Highlighting the need for NUMA topology awareness
• I/O Aware NUMA scheduling
• CPU and vCPU pinning (affinities)
• Huge Pages (hugetlbfs)
& Service VM Port Security for L2 bridge (Kilo)
Intel X710, Intel X520
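NUMA awareness here means keeping the cores that poll a NIC on the same socket as that NIC. A minimal Python sketch of the selection step, assuming a hypothetical two-socket layout with 18 cores per socket numbered contiguously (real CPU numbering varies by host); the helper names are illustrative:

```python
from pathlib import Path


def read_nic_numa_node(pci_addr: str) -> int:
    """Read the NUMA node Linux reports for a PCI device (-1 if unknown)."""
    return int(Path(f"/sys/bus/pci/devices/{pci_addr}/numa_node").read_text())


def pick_local_cpus(nic_node, cpu_to_node, count):
    """Choose `count` CPUs on the same NUMA node as the NIC."""
    local = sorted(cpu for cpu, node in cpu_to_node.items() if node == nic_node)
    if len(local) < count:
        raise ValueError(f"only {len(local)} CPUs on NUMA node {nic_node}, need {count}")
    return local[:count]


# Hypothetical topology: CPUs 0-17 on node 0, CPUs 18-35 on node 1.
TOPOLOGY = {cpu: (0 if cpu < 18 else 1) for cpu in range(36)}

cpus = pick_local_cpus(1, TOPOLOGY, 4)
print(cpus)  # [18, 19, 20, 21]
```

On a Linux host the chosen set could then be applied to the current process with `os.sched_setaffinity(0, cpus)`. In an OpenStack deployment the equivalent placement is handled by the scheduler and hypervisor rather than by the guest.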

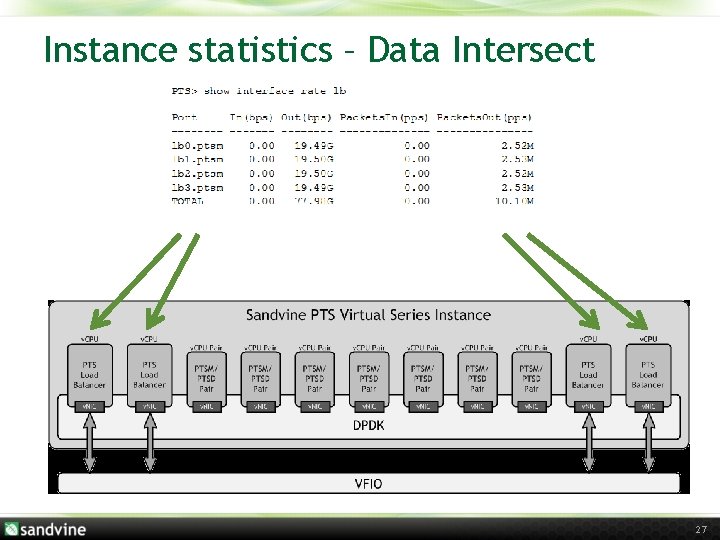

Instance statistics – Data Intersect

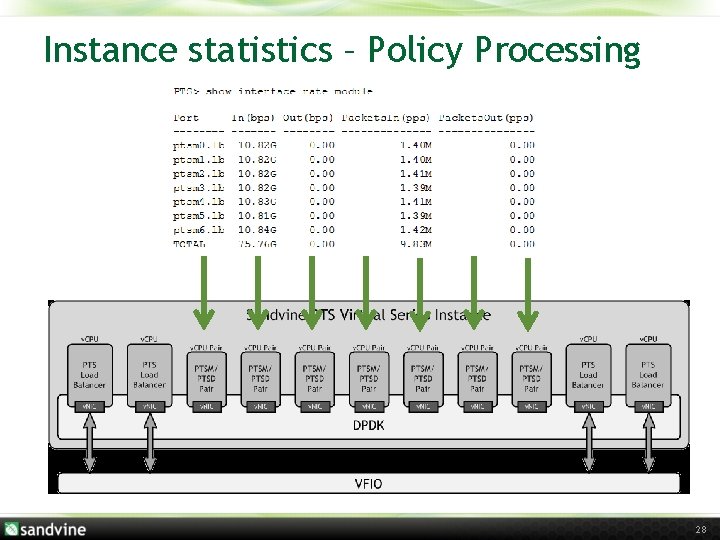

Instance statistics – Policy Processing

Why Sandvine adopts DPDK
• Good architecture helps
• Plan to continuously take upgrades
• Don’t be afraid to become involved in the community (dpdk.org, 01.org)
• Performance was great and increases with microarchitecture progress

Thank you!
http://www.sandvine.com/terabit-nfv
