TeraGrid and OptiGrid, Charlie Catlett

- Slides: 9

TeraGrid and “OptiGrid”. Charlie Catlett, TeraGrid Director, University of Chicago and Argonne National Laboratory. CalIT2 - Optiputer Open House, January 20, 2006. www.teragrid.org. Charlie Catlett (cec@uchicago.edu)

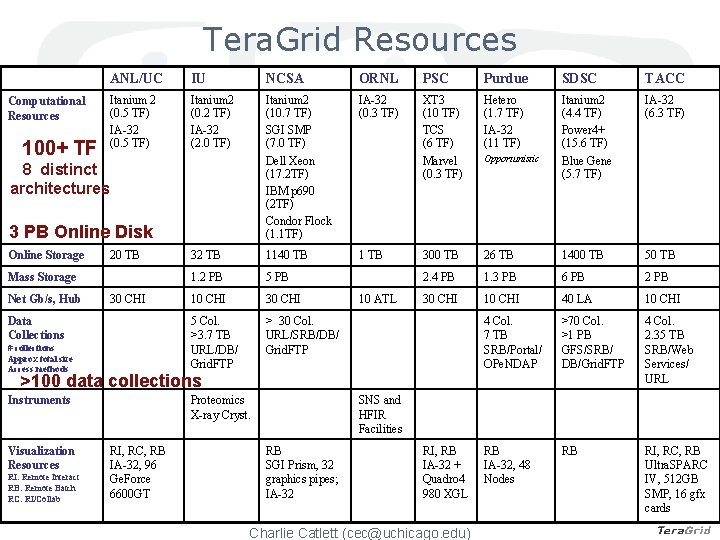

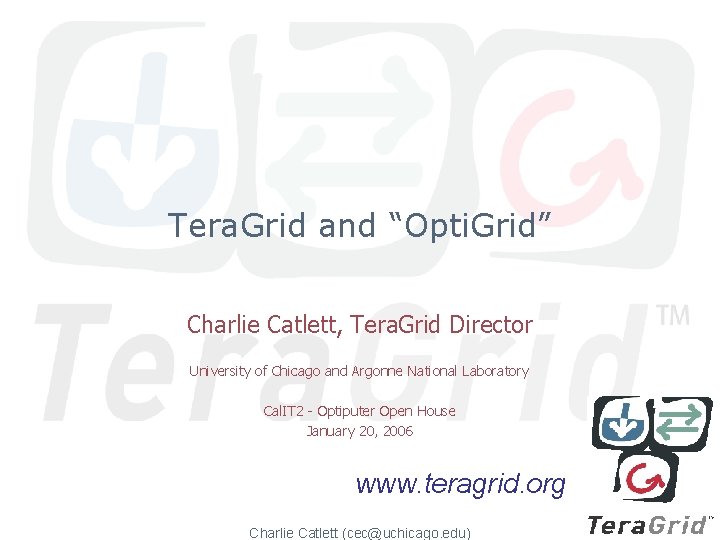

TeraGrid Resources

Sites: ANL/UC, IU, NCSA, ORNL, PSC, Purdue, SDSC, TACC

Computational Resources (100+ TF, 8 distinct architectures): Itanium 2 (0.5 TF), IA-32 (0.5 TF), Itanium 2 (0.2 TF), IA-32 (2.0 TF), Itanium 2 (10.7 TF), SGI SMP (7.0 TF), Dell Xeon (17.2 TF), IBM p690 (2 TF), Condor Flock (1.1 TF), IA-32 (0.3 TF), XT3 (10 TF), TCS (6 TF), Marvel (0.3 TF), Hetero (1.7 TF), IA-32 (11 TF), Itanium 2 (4.4 TF), Power4+ (15.6 TF), Blue Gene (5.7 TF), IA-32 (6.3 TF), Opportunistic

Online Storage (3 PB Online Disk): 32 TB, 1140 TB, 1 TB, 300 TB, 26 TB, 1400 TB, 50 TB, 20 TB

Mass Storage: 1.2 PB, 5 PB, 2.4 PB, 1.3 PB, 6 PB, 2 PB

Net (Gb/s, Hub): 10 CHI, 30 CHI, 10 CHI, 40 LA, 10 CHI, 30 CHI, 10 ATL

Data Collections (# collections, approx. total size, access methods); >100 data collections:

- 5 Col., >3.7 TB, URL/DB/GridFTP
- >30 Col., URL/SRB/DB/GridFTP
- 4 Col., 7 TB, SRB/Portal/OPeNDAP
- >70 Col., >1 PB, GFS/SRB/DB/GridFTP
- 4 Col., 2.35 TB, SRB/Web Services/URL

Instruments: Proteomics X-ray Cryst.; SNS and HFIR Facilities

Visualization Resources (RI: Remote Interact, RB: Remote Batch, RC: RI/Collab):

- RB IA-32, 48 Nodes
- RB
- RI, RC, RB UltraSPARC IV, 512 GB SMP, 16 gfx cards
- RI, RC, RB IA-32, 96 GeForce 6600 GT
- RB SGI Prism, 32 graphics pipes; IA-32
- RI, RB IA-32 + Quadro4 980 XGL
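The headline figures on this slide ("100+ TF", "3 PB Online Disk") can be cross-checked against the per-system numbers it lists. The following is a minimal Python sketch of that arithmetic. The variable names are illustrative only, decimal units (1 PB = 1000 TB) are an assumption, and the 20 TB figure that follows the "Online Storage" label is assumed to be an online-disk entry.

```python
# Per-system peak compute figures (TF), in the order they appear on the slide.
compute_tf = [0.5, 0.5, 0.2, 2.0, 10.7, 7.0, 17.2, 2.0, 1.1, 0.3,
              10.0, 6.0, 0.3, 1.7, 11.0, 4.4, 15.6, 5.7, 6.3]

# Online disk figures (TB). Assumption: the 20 TB value printed next to the
# "Online Storage" label belongs to this row.
online_disk_tb = [32, 1140, 1, 300, 26, 1400, 50, 20]

# Mass storage figures (PB) as listed on the slide.
mass_storage_pb = [1.2, 5, 2.4, 1.3, 6, 2]

TB_PER_PB = 1000  # assumption: decimal units


def totals():
    """Return aggregate compute, online disk, and mass storage capacity."""
    return {
        "compute_tf": round(sum(compute_tf), 1),
        "online_disk_pb": round(sum(online_disk_tb) / TB_PER_PB, 2),
        "mass_storage_pb": round(sum(mass_storage_pb), 1),
    }


if __name__ == "__main__":
    for name, value in totals().items():
        print(f"{name}: {value}")
```

Under these assumptions the listed systems sum to about 102.5 TF and about 2.97 PB of online disk, which is consistent with the slide's rounded headline numbers.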

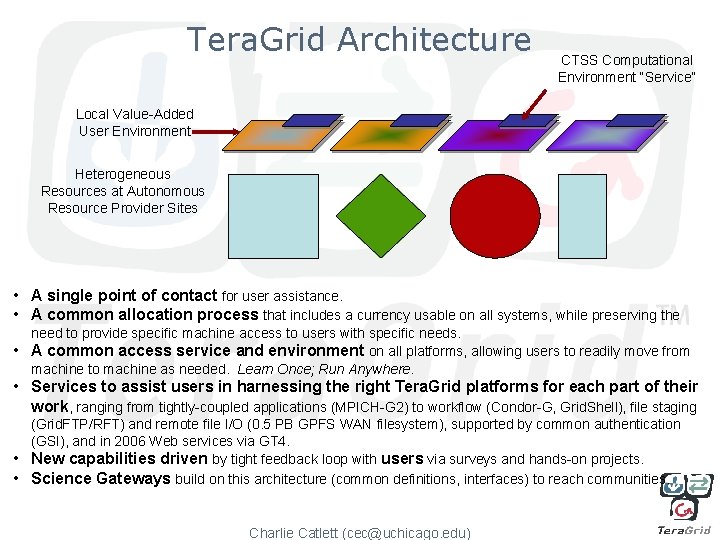

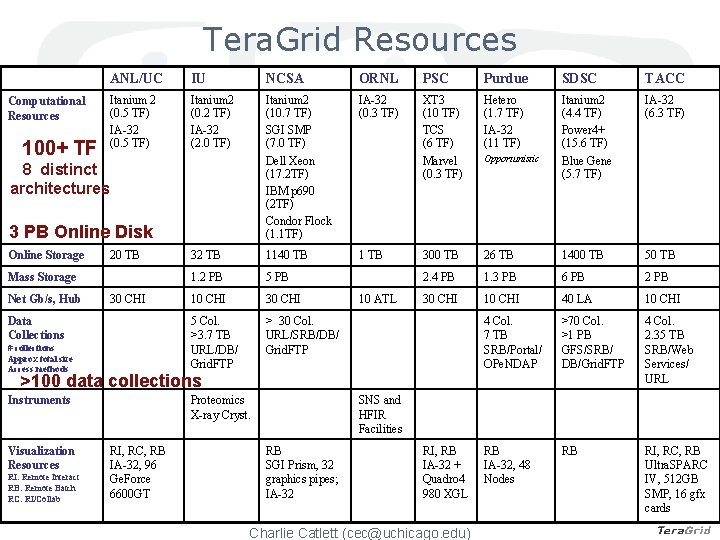

TeraGrid Architecture

CTSS Computational Environment “Service”; Local Value-Added User Environment; Heterogeneous Resources at Autonomous Resource Provider Sites

- A single point of contact for user assistance.
- A common allocation process that includes a currency usable on all systems, while still providing specific machine access to users with specific needs.
- A common access service and environment on all platforms, allowing users to readily move from machine to machine as needed. Learn Once; Run Anywhere.
- Services to assist users in harnessing the right TeraGrid platforms for each part of their work, ranging from tightly-coupled applications (MPICH-G2) to workflow (Condor-G, GridShell), file staging (GridFTP/RFT) and remote file I/O (0.5 PB GPFS WAN filesystem), supported by common authentication (GSI), and in 2006 Web services via GT4.
- New capabilities driven by a tight feedback loop with users via surveys and hands-on projects.
- Science Gateways build on this architecture (common definitions, interfaces) to reach communities.
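The pairing of work types with services on this slide can be read as a simple lookup. The sketch below restates it in Python for illustration only. The dictionary keys and the `services_for` helper are hypothetical names, not part of any TeraGrid or Globus API, and the code only returns service names rather than invoking anything.

```python
# Work type -> services, as named on the architecture slide.
# The key names are hypothetical labels chosen for this sketch.
SERVICES = {
    "tightly_coupled": ["MPICH-G2"],
    "workflow": ["Condor-G", "GridShell"],
    "file_staging": ["GridFTP", "RFT"],
    "remote_file_io": ["GPFS WAN filesystem"],
}

# The slide lists GSI as the common authentication layer under all of them.
COMMON_AUTH = ["GSI"]


def services_for(work_type):
    """Return the services the slide associates with a kind of work,
    followed by the common authentication layer."""
    if work_type not in SERVICES:
        raise ValueError(f"unknown work type: {work_type!r}")
    return SERVICES[work_type] + COMMON_AUTH


if __name__ == "__main__":
    for kind in SERVICES:
        print(kind, "->", ", ".join(services_for(kind)))
```

Keeping authentication separate from the per-task services mirrors the slide's wording, where GSI supports every service rather than belonging to one work type.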

TeraGrid Use: 1600 users

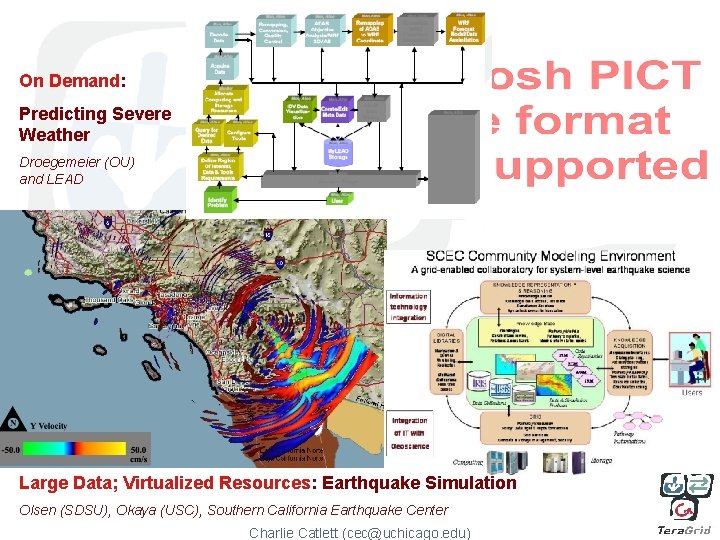

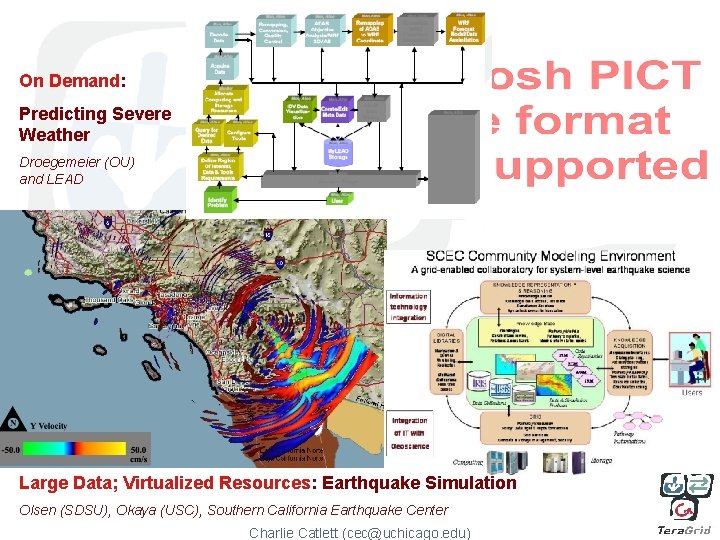

- On Demand: Predicting Severe Weather. Droegemeier (OU) and LEAD
- Large Data; Virtualized Resources: Earthquake Simulation. Olsen (SDSU), Okaya (USC), Southern California Earthquake Center

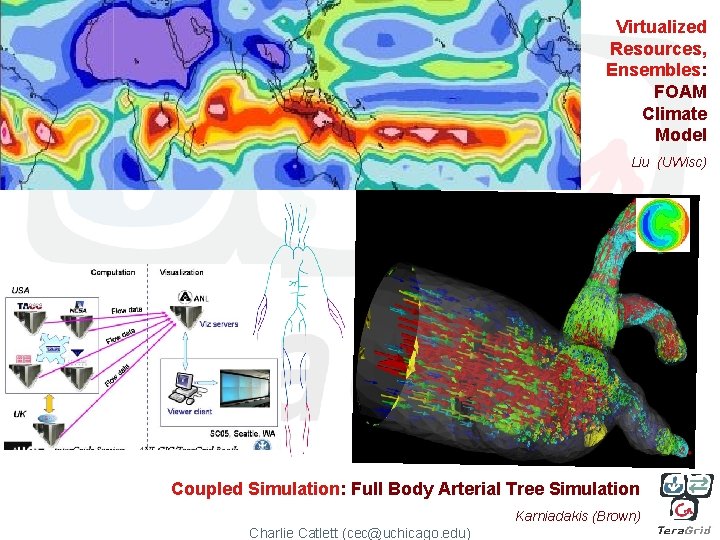

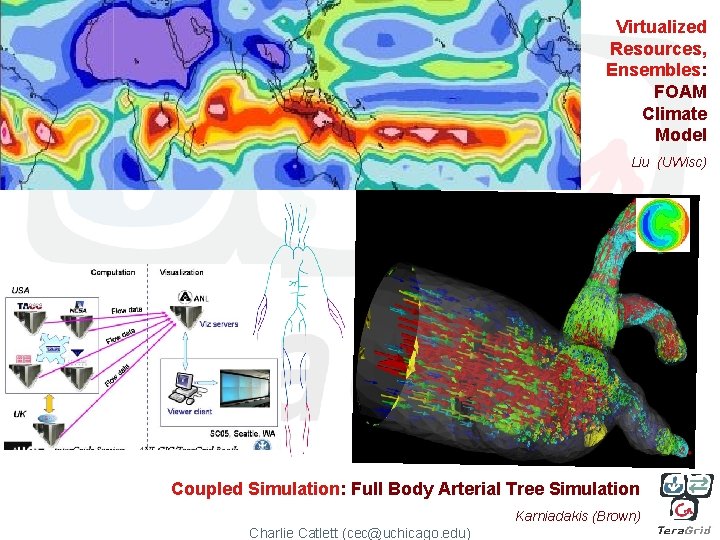

- Virtualized Resources, Ensembles: FOAM Climate Model. Liu (UWisc)
- Coupled Simulation: Full Body Arterial Tree Simulation. Karniadakis (Brown)

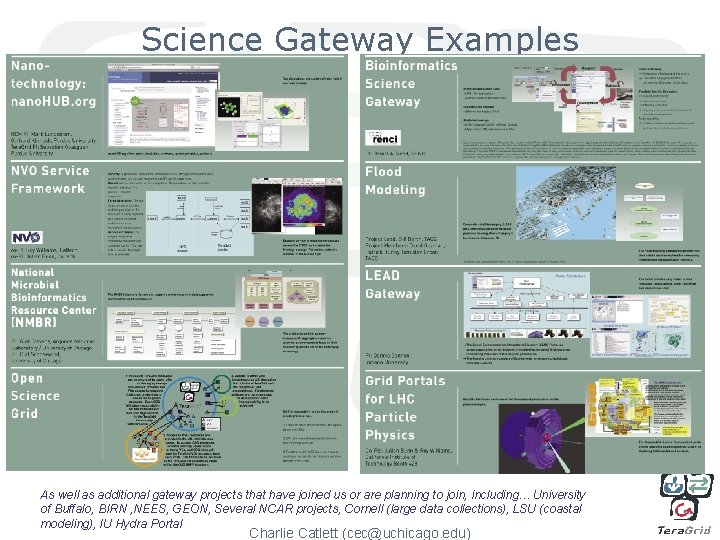

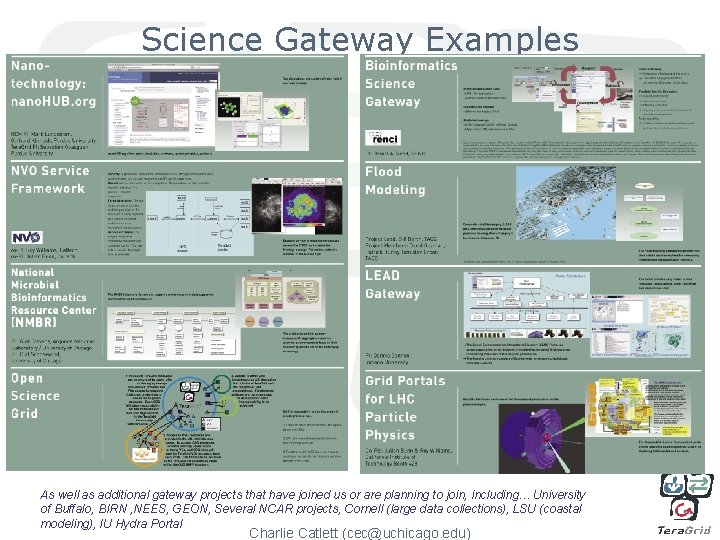

Science Gateway Examples

As well as additional gateway projects that have joined us or are planning to join, including… University of Buffalo, BIRN, NEES, GEON, several NCAR projects, Cornell (large data collections), LSU (coastal modeling), IU Hydra Portal

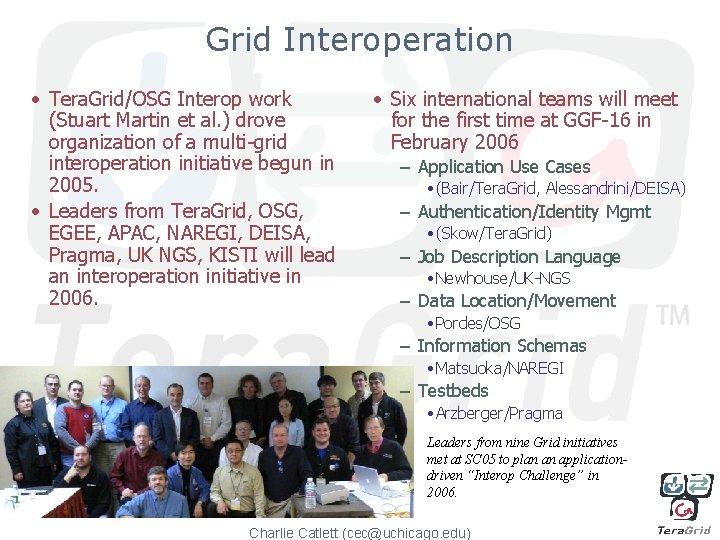

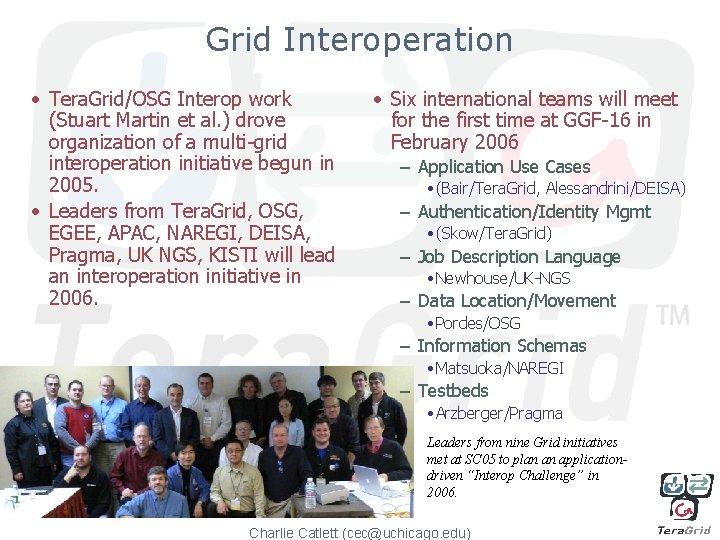

Grid Interoperation

- TeraGrid/OSG Interop work (Stuart Martin et al.) drove organization of a multi-grid interoperation initiative begun in 2005.
- Leaders from TeraGrid, OSG, EGEE, APAC, NAREGI, DEISA, Pragma, UK NGS, KISTI will lead an interoperation initiative in 2006.
- Six international teams will meet for the first time at GGF-16 in February 2006:
  - Application Use Cases (Bair/TeraGrid, Alessandrini/DEISA)
  - Authentication/Identity Mgmt (Skow/TeraGrid)
  - Job Description Language (Newhouse/UK-NGS)
  - Data Location/Movement (Pordes/OSG)
  - Information Schemas (Matsuoka/NAREGI)
  - Testbeds (Arzberger/Pragma)

Leaders from nine Grid initiatives met at SC05 to plan an application-driven “Interop Challenge” in 2006.

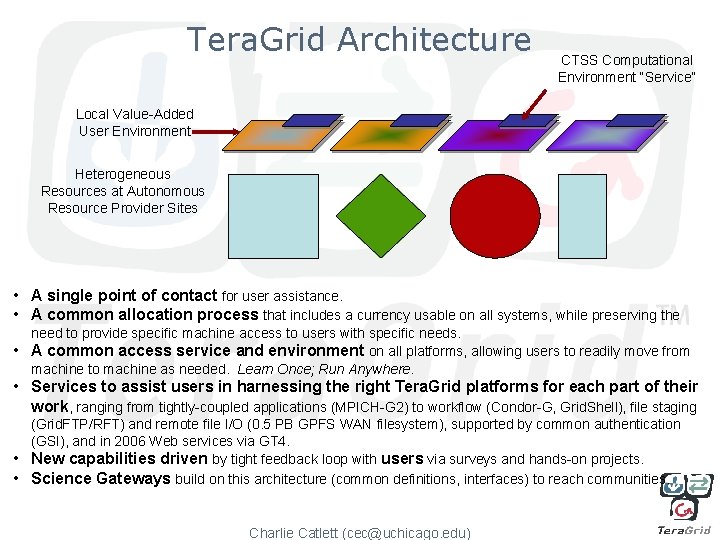

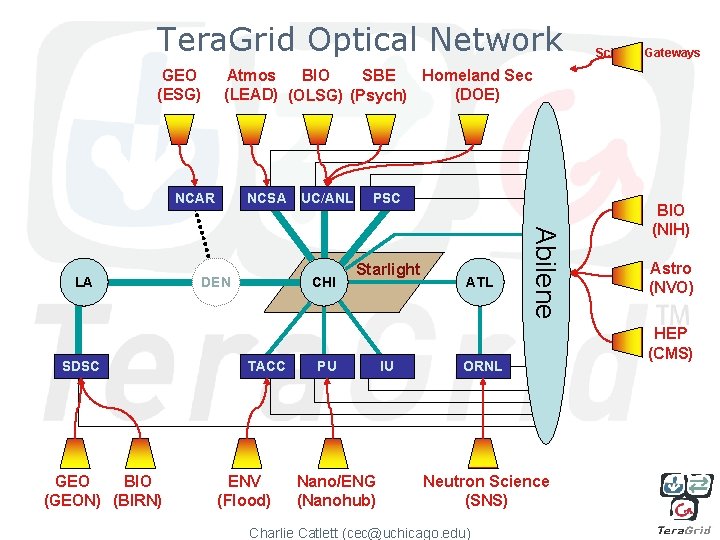

TeraGrid Optical Network (diagram)

Sites: NCAR, SDSC, NCSA, UC/ANL, TACC, PSC, PU, IU, ORNL

Network hubs and links: DEN, CHI, ATL, LA, Starlight, Abilene

Science Gateways: GEO (ESG), Atmos (LEAD), BIO (OLSG), SBE (Psych), Homeland Sec (DOE), GEO (GEON), BIO (BIRN), ENV (Flood), Nano/ENG (Nanohub), Neutron Science (SNS), BIO (NIH), Astro (NVO), HEP (CMS)