Team Exploration vs Exploitation with Finite Budgets
David Castañón
Boston University Center for Information and Systems Engineering

Introduction
• Exploration vs exploitation is a classic tradeoff in decision problems with uncertainty
  - Exploit available information vs improve information
  - Numerous applications in finance, adaptive control, machine learning, …
• Interested in paradigms for teams of agents
  - Search and exploit information with limited resources
  - Task partitioning, motivated by applications (e.g. surveillance)
• Objective: techniques for team control of activities
  - Improve coordination among team members

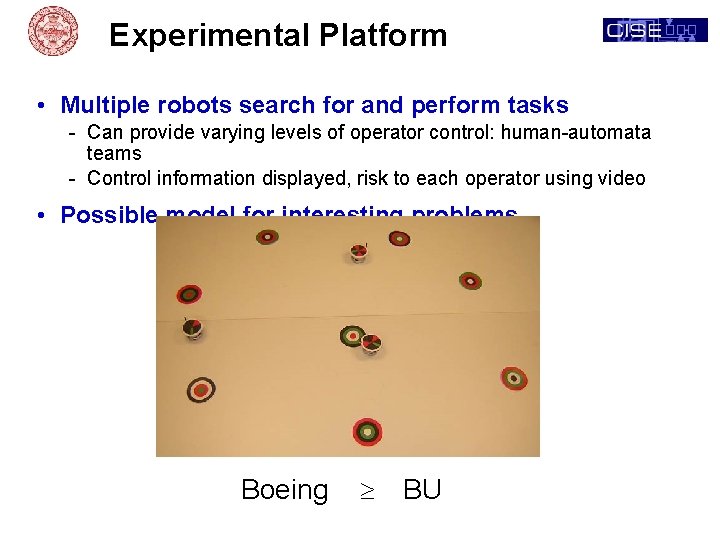

Experimental Platform
• Multiple robots search for and perform tasks
  - Can provide varying levels of operator control: human-automata teams
  - Control information displayed, risk to each operator using video
• Possible model for interesting problems
Boeing ³ BU

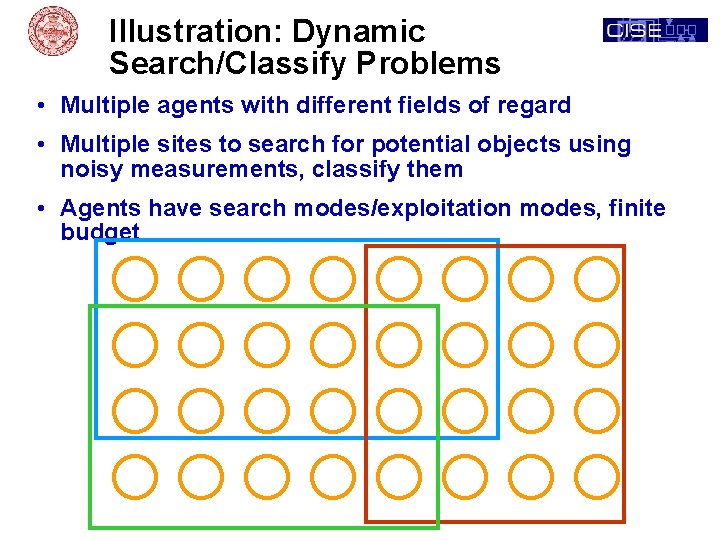

Illustration: Dynamic Search/Classify Problems
• Multiple agents with different fields of regard
• Multiple sites to search for potential objects using noisy measurements, then classify them
• Agents have search modes and exploitation modes, with a finite budget

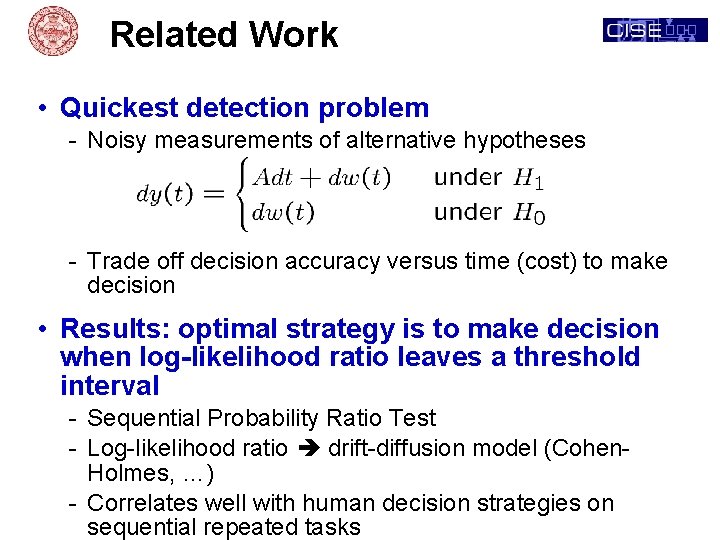

Related Work
• Quickest detection problem
  - Noisy measurements of alternative hypotheses
  - Trade off decision accuracy versus time (cost) to make decision
• Results: optimal strategy is to make a decision when the log-likelihood ratio leaves a threshold interval
  - Sequential Probability Ratio Test
  - Log-likelihood ratio drift-diffusion model (Cohen, Holmes, …)
  - Correlates well with human decision strategies on sequential repeated tasks
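The threshold rule on this slide can be sketched in a few lines. This is a minimal illustration, assuming two simple hypotheses H0 and H1 and independent observations; the function names, the Gaussian measurement model, and the error-rate parameters `alpha` and `beta` are assumptions made for the example, not details from the slides.

```python
import math


def wald_thresholds(alpha, beta):
    """Wald's approximate thresholds for target error rates.

    alpha: probability of deciding H1 when H0 is true.
    beta:  probability of deciding H0 when H1 is true.
    """
    upper = math.log((1.0 - beta) / alpha)
    lower = math.log(beta / (1.0 - alpha))
    return lower, upper


def gaussian_llr(mu0, mu1, sigma):
    """Return a function giving log p(y | H1) / p(y | H0) for Gaussian noise."""
    def llr(y):
        return ((mu1 - mu0) * y - 0.5 * (mu1 ** 2 - mu0 ** 2)) / sigma ** 2
    return llr


def sprt(samples, llr, lower, upper):
    """Accumulate the log-likelihood ratio until it leaves (lower, upper).

    Returns (decision, n_used); decision is "H1", "H0", or None when the
    samples run out before a threshold is crossed.
    """
    total = 0.0
    n = 0
    for y in samples:
        n += 1
        total += llr(y)
        if total >= upper:
            return "H1", n
        if total <= lower:
            return "H0", n
    return None, n
```

Tighter error targets widen the threshold interval, so the test takes more samples on average; this is the accuracy versus time tradeoff named above.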

Multi-Armed Bandits
• Robbins (50s), Gittins (70s), many others
• Independent machines
  - Each machine has individual state with random dynamics
  - State evolves when machine is played, stationary otherwise
  - Random payoff depending on state of machine
• Objective: infinite horizon sum of discounted rewards
  - Repeated decisions among finite alternatives
• Result: optimal policy based on Gittins indices, computed independently for each machine based on current state
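For reference, the discounted objective and the index named on this slide are usually written as below. The symbols (discount factor β, reward r_i, machine state x_i, stopping time τ, index ν_i) are notation assumed here; the slides do not define them.

```latex
% Objective: expected discounted reward over an infinite horizon,
% where i(t) is the machine played at time t and 0 < \beta < 1.
\max_{\text{policy}} \; \mathbb{E}\left[ \sum_{t=0}^{\infty} \beta^{t}\,
    r_{i(t)}\bigl(x_{i(t)}(t)\bigr) \right]

% Gittins index of machine i in state x: the best ratio of discounted
% reward to discounted time over stopping times \tau \ge 1,
% with machine i played continuously up to \tau.
\nu_i(x) \;=\; \sup_{\tau \ge 1}
  \frac{\mathbb{E}\left[ \sum_{t=0}^{\tau-1} \beta^{t}\, r_i\bigl(x_i(t)\bigr)
        \,\middle|\, x_i(0) = x \right]}
       {\mathbb{E}\left[ \sum_{t=0}^{\tau-1} \beta^{t}
        \,\middle|\, x_i(0) = x \right]}
```

The index policy plays, at each time, a machine whose current index is largest; each index depends only on that machine's own state, which is why the indices can be computed independently.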

Human Exploration/Exploitation in Multi-armed Bandit Problems
• Multi-armed bandit paradigm
  - Complex choice task with simple structure to normative solution
  - Can model human choice with heuristics/simple strategies that approximate Gittins indices and include parameters for human variability
• Daw et al (05, 06): Time varying environments, propose alternative models for exploration/exploitation using soft-max and other random decision rules
• Steyvers et al (09), Zhang et al (09): Finite horizon total reward paradigm, with models using a latent variable to encourage exploration vs exploitation
• Yu-Dayan (05), Aston-Jones & Cohen (05), others: Propose mechanisms underlying brain activity in aspects of exploration vs exploitation
• Cohen et al (07): Highlight limitations of the multi-armed
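The soft-max decision rule mentioned above can be sketched as follows. This is a generic illustration, not the specific model of any cited paper; the function names and the `temperature` parameter are assumptions made for the example.

```python
import math
import random


def softmax_probs(values, temperature=1.0):
    """Map estimated option values to choice probabilities.

    Higher temperature gives more uniform (exploratory) choices;
    lower temperature concentrates on the best-valued option.
    """
    m = max(values)  # subtract the max for numerical stability
    exps = [math.exp((v - m) / temperature) for v in values]
    z = sum(exps)
    return [e / z for e in exps]


def softmax_choice(values, temperature=1.0, rng=random):
    """Sample the index of one option according to the soft-max probabilities."""
    probs = softmax_probs(values, temperature)
    return rng.choices(range(len(values)), weights=probs, k=1)[0]
```

A single temperature parameter moves the rule between near-random exploration and near-greedy exploitation, which is one way such models capture variability in human choices.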

Limits of Bandit Paradigm
• Stationary environments
  - Tasks do not evolve unless acted on
  - Likelihood of success at task plus follow-on task is time-invariant
  - Set of possible tasks is time-invariant
• Single action per time
  - Not geared to team activities
• Infinite horizon objective
  - Unbounded resources
• Single type of action per bandit
  - Can’t vary choice
Interested in other paradigms that remove these limitations

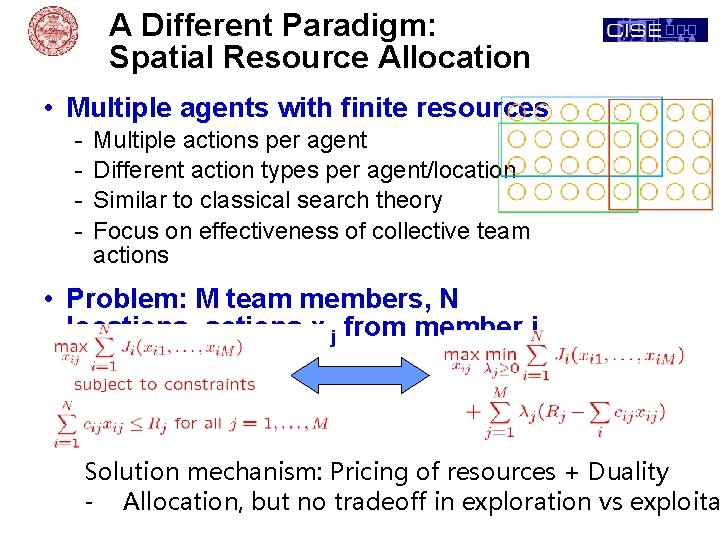

A Different Paradigm: Spatial Resource Allocation
• Multiple agents with finite resources
  - Multiple actions per agent
  - Different action types per agent/location
  - Similar to classical search theory
  - Focus on effectiveness of collective team actions
• Problem: M team members, N locations, actions xij from member j to location i
• Solution mechanism: Pricing of resources + Duality
  - Allocation, but no tradeoff in exploration vs exploitation

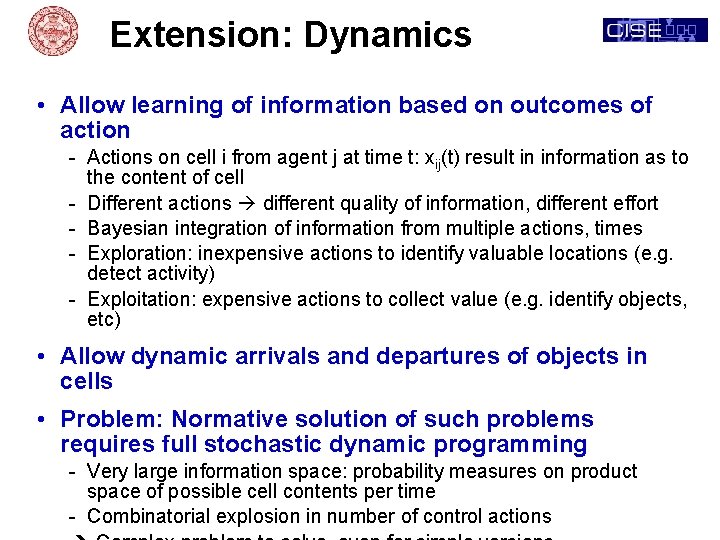

Extension: Dynamics
• Allow learning of information based on outcomes of action
  - Actions on cell i from agent j at time t: xij(t) result in information as to the content of cell
  - Different actions yield different quality of information and require different effort
  - Bayesian integration of information from multiple actions, times
  - Exploration: inexpensive actions to identify valuable locations (e.g. detect activity)
  - Exploitation: expensive actions to collect value (e.g. identify objects, etc.)
• Allow dynamic arrivals and departures of objects in cells
• Problem: Normative solution of such problems requires full stochastic dynamic programming
  - Very large information space: probability measures on product space of possible cell contents per time
  - Combinatorial explosion in number of control actions

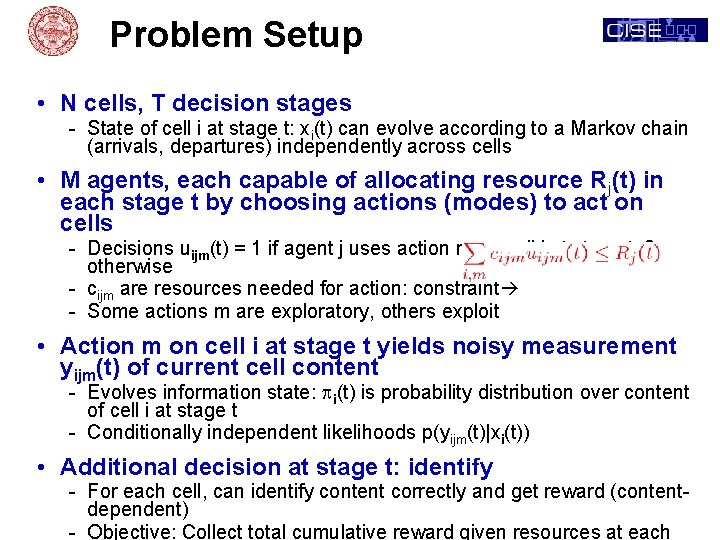
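The Bayesian integration of measurements and the arrival/departure dynamics described above can be sketched for a single cell. This is a minimal illustration with a finite set of cell contents; the function names and the numbers in the usage note are assumptions for the example, not values from the slides.

```python
def bayes_update(prior, likelihood):
    """Fold one measurement into the belief over a cell's content.

    prior[x]      = P(content = x) before the measurement.
    likelihood[x] = p(observed y | content = x) for the action used.
    """
    unnorm = [p * l for p, l in zip(prior, likelihood)]
    z = sum(unnorm)
    if z == 0.0:
        raise ValueError("observation has zero probability under the prior")
    return [u / z for u in unnorm]


def predict(belief, transition):
    """Propagate the belief one stage through a Markov chain.

    transition[x][x2] = P(next content = x2 | current content = x),
    which is where arrivals and departures would enter.
    """
    n_next = len(transition[0])
    return [
        sum(belief[x] * transition[x][x2] for x in range(len(belief)))
        for x2 in range(n_next)
    ]
```

For example, with a prior of `[0.9, 0.1]` over (empty, occupied) and a cheap detector that fires with likelihoods `[0.1, 0.8]`, one detection raises the occupied probability to `0.08 / 0.17`, roughly 0.47. A more expensive action would have sharper likelihoods and move the belief further per use.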

Problem Setup
• N cells, T decision stages
  - State of cell i at stage t: xi(t) can evolve according to a Markov chain (arrivals, departures) independently across cells
• M agents, each capable of allocating resource Rj(t) in each stage t by choosing actions (modes) to act on cells
  - Decisions uijm(t) = 1 if agent j uses action m on cell i at stage t, 0 otherwise
  - cijm are the resources needed for the action, which enter the resource constraint
  - Some actions m are exploratory, others exploit
• Action m on cell i at stage t yields noisy measurement yijm(t) of current cell content
  - Evolves information state: pi(t) is probability distribution over content of cell i at stage t
  - Conditionally independent likelihoods p(yijm(t)|xi(t))
• Additional decision at stage t: identify
  - For each cell, can identify content correctly and get reward (content-dependent)
  - Objective: Collect total cumulative reward given resources at each

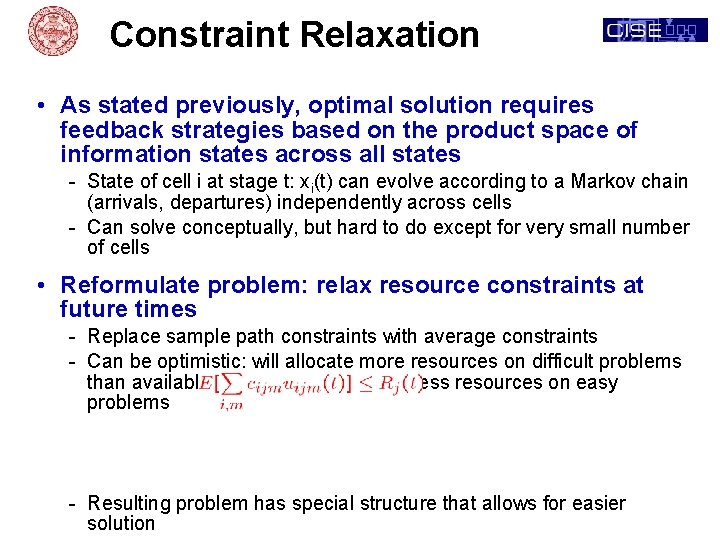
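The resource constraint and the information-state update referred to on this slide are not legible in the extracted text. One plausible way to write them with the slide's symbols is shown below; the exact form, the index ranges, and the posterior notation p_i(t | y) are assumptions.

```latex
% Per-agent, per-stage resource constraint (assumed form):
\sum_{i=1}^{N} \sum_{m} c_{ijm}\, u_{ijm}(t) \;\le\; R_j(t),
\qquad j = 1,\dots,M, \quad t = 1,\dots,T

% Bayes update of the information state of cell i after observing
% y = y_{ijm}(t); p_i(t)(x) denotes the probability that x_i(t) = x:
p_i(t \mid y)(x) \;=\;
  \frac{p\bigl(y \mid x_i(t) = x\bigr)\, p_i(t)(x)}
       {\sum_{x'} p\bigl(y \mid x_i(t) = x'\bigr)\, p_i(t)(x')}
```

Because the likelihoods are conditionally independent, several measurements of the same cell in one stage can be folded in one at a time with the same update.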

Constraint Relaxation
• As stated previously, optimal solution requires feedback strategies based on the product space of information states across all states
  - State of cell i at stage t: xi(t) can evolve according to a Markov chain (arrivals, departures) independently across cells
  - Can solve conceptually, but hard to do except for very small number of cells
• Reformulate problem: relax resource constraints at future times
  - Replace sample path constraints with average constraints
  - Can be optimistic: will allocate more resources on difficult problems than available, balanced by allocating fewer resources on easy problems
  - Resulting problem has special structure that allows for easier solution
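The replacement of sample-path constraints by average constraints can be written out as follows. This is a sketch using the symbols of the problem setup; the specific form of the constraint is an assumption, since the slides do not show it in the extracted text.

```latex
% Sample-path constraint: must hold for every realization of the measurements.
\sum_{i=1}^{N} \sum_{m} c_{ijm}\, u_{ijm}(t) \;\le\; R_j(t)
\quad \text{for every sample path}

% Relaxed constraint at future stages: must hold only in expectation.
\mathbb{E}\left[ \sum_{i=1}^{N} \sum_{m} c_{ijm}\, u_{ijm}(t) \right] \;\le\; R_j(t)
```

Every strategy that satisfies the first constraint also satisfies the second, so the relaxed problem's optimal value is an upper bound on the original one; this is the sense in which the relaxation can be optimistic.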

Results: Duality
• Theorem:
  - Given resource prices λj(t) for agents at each stage, stochastic optimization problem decouples into N independent cell problems
  - Optimal solution can be found in terms of feedback strategies that use only information on the current information state of a cell to select actions for that cell
  - Overall information state is product of marginal information state for each cell
• Implication: efficient solution algorithms
  - Merge pricing approaches from resource allocation with single cell subproblem solutions that use stochastic dynamic optimization
  - Reduce joint N-cell optimization problem into N decoupled problems, coordinated by prices
  - Replaces combinatorial optimization across cells by pricing mechanisms
• May provide tractable models for human choice in resource allocation and optional stopping
  - Replace detailed enumeration of outcomes with price estimates

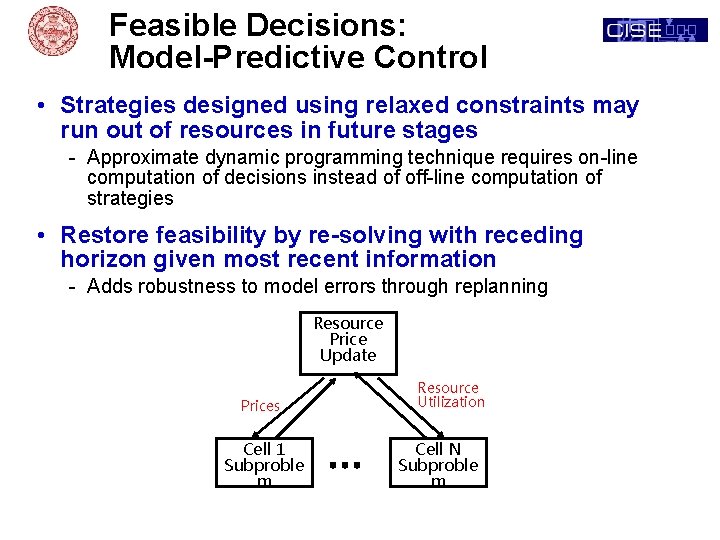
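The decoupling stated in the theorem can be seen by attaching prices to the averaged resource constraints. The derivation below is a sketch under assumptions: V_i stands for the total reward collected from cell i, u_i for the strategy acting on cell i, and the constraints are taken in their expected-value form; none of these symbols appear in the slides.

```latex
% Lagrangian of the relaxed problem with prices \lambda_j(t) \ge 0:
L(\lambda)
  \;=\; \max_{u} \; \mathbb{E}\Bigl[ \sum_{i=1}^{N} V_i \Bigr]
  + \sum_{t} \sum_{j} \lambda_j(t)
    \Bigl( R_j(t) - \mathbb{E}\Bigl[ \sum_{i} \sum_{m} c_{ijm}\, u_{ijm}(t) \Bigr] \Bigr)

% Regrouping the terms by cell gives N independent subproblems:
L(\lambda)
  \;=\; \sum_{t} \sum_{j} \lambda_j(t)\, R_j(t)
  + \sum_{i=1}^{N} \max_{u_i} \;
    \mathbb{E}\Bigl[ V_i - \sum_{t} \sum_{j} \sum_{m}
      \lambda_j(t)\, c_{ijm}\, u_{ijm}(t) \Bigr]
```

Each inner maximization involves one cell only, so it can be solved by stochastic dynamic programming over that cell's own information state, with the prices acting as per-unit charges for agent resources.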

Feasible Decisions: Model-Predictive Control
• Strategies designed using relaxed constraints may run out of resources in future stages
  - Approximate dynamic programming technique requires on-line computation of decisions instead of off-line computation of strategies
• Restore feasibility by re-solving with receding horizon given most recent information
  - Adds robustness to model errors through replanning
[Diagram labels: Resource Price Update; Prices; Cell 1 Subproblem … Cell N Subproblem; Resource Utilization]
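The receding-horizon idea above can be sketched as a generic loop: plan with a possibly optimistic planner, apply only the current-stage decisions that the actual resources allow, then replan from the updated state. The function name and the `plan` and `step` callbacks are hypothetical placeholders for illustration, not the presenter's implementation.

```python
def receding_horizon_control(state, resources, horizon, plan, step):
    """Run a receding-horizon loop over len(resources) stages.

    resources: list giving the resource actually available at each stage.
    plan(state, future_resources, h) -> list of (action, cost) proposed for
        the current stage by a planner that looks h stages ahead (for
        example, a relaxed-constraint solver). Hypothetical callback.
    step(state, action) -> state after applying the action and folding in
        whatever was observed. Hypothetical callback.
    """
    n_stages = len(resources)
    history = []
    for t in range(n_stages):
        h = min(horizon, n_stages - t)       # shrink the horizon near the end
        available = resources[t]
        applied = []
        # The plan is computed once per stage from the latest state.
        for action, cost in plan(state, resources[t:t + h], h):
            if cost <= available:            # enforce the hard constraint now
                available -= cost
                state = step(state, action)
                applied.append(action)
        history.append(applied)
    return state, history
```

Only the first stage of each plan is ever executed, so an over-optimistic plan for later stages is corrected at the next replanning step rather than causing an infeasible action.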

Team Computation
• Interesting problem: agents negotiate based on local problems to agree on prices
  - Concave maximization problem, but non-differentiable: challenging to establish convergent algorithms
  - Can truncate negotiation with heuristics (current approach)
  - Topic of current Ph.D. effort
• Mixed Initiative Variations
  - Human team members as equals: control subset of resources, negotiate on prices with automata
  - Human team members as leaders: select actions for own resources, automata select complementary actions for others
  - Humans as controllers: impose constraints (e.g. cell responsibility allocations) on automata
• Algorithms have been developed to implement above variations and explore potential experiments

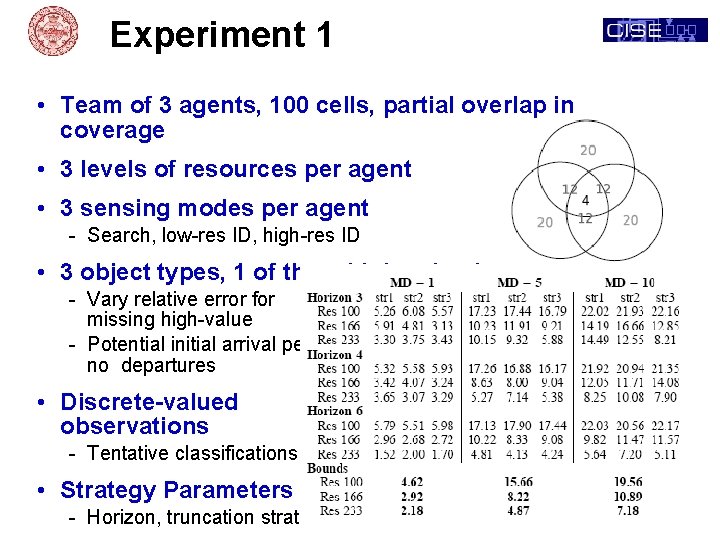
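One standard way to adjust prices for a non-differentiable dual problem is a projected subgradient iteration: raise an agent's price when its resources are oversubscribed, lower it otherwise, and never go below zero. The sketch below uses that generic technique on a deterministic toy problem; it is an assumption for illustration, not the negotiation algorithm used in this work, and the function names and data layout are hypothetical.

```python
def best_action(actions, prices):
    """Pick the action with the highest price-adjusted value for one cell.

    actions: list of (value, costs), where costs[j] is the resource drawn
    from agent j. Include (0.0, [0, ...]) to allow doing nothing.
    """
    def net(action):
        value, costs = action
        return value - sum(p * c for p, c in zip(prices, costs))
    return max(actions, key=net)


def negotiate_prices(cells, budgets, steps=200, step0=0.5):
    """Projected subgradient updates on per-agent resource prices.

    cells: one action list per cell; budgets[j]: resource of agent j.
    Returns the final prices and the per-cell choices under those prices.
    """
    prices = [0.0] * len(budgets)
    for k in range(1, steps + 1):
        choices = [best_action(actions, prices) for actions in cells]
        usage = [sum(costs[j] for _, costs in choices)
                 for j in range(len(budgets))]
        step = step0 / k                      # diminishing step size
        prices = [max(0.0, p + step * (u - r))
                  for p, u, r in zip(prices, usage, budgets)]
    choices = [best_action(actions, prices) for actions in cells]
    return prices, choices
```

Stopping after a fixed number of steps does not guarantee that the resulting choices respect the budgets, which is consistent with the need for a truncation heuristic and a feasibility-restoring step noted on the slides.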

Experiment 1
• Team of 3 agents, 100 cells, partial overlap in coverage
• 3 levels of resources per agent
• 3 sensing modes per agent
  - Search, low-res ID, high-res ID
• 3 object types, 1 of them high-valued
  - Vary relative error for missing high-value
  - Potential initial arrival per cell, no departures
• Discrete-valued observations
  - Tentative classifications
• Strategy Parameters
  - Horizon, truncation strategy

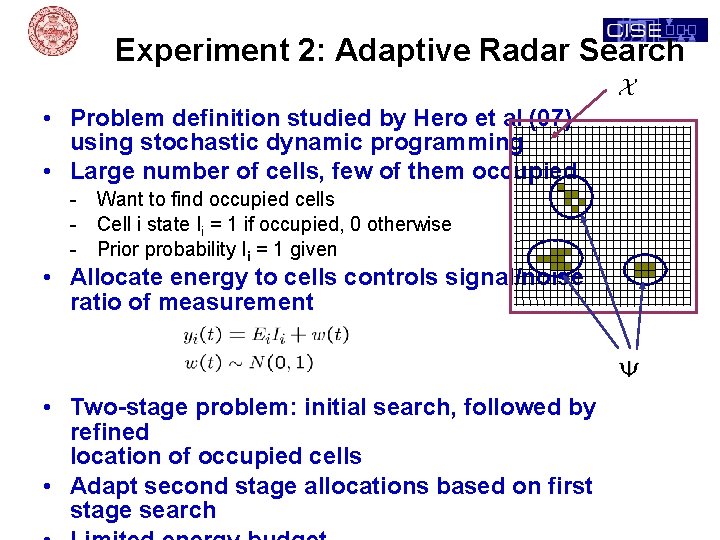

Experiment 2: Adaptive Radar Search
• Problem definition studied by Hero et al (07) using stochastic dynamic programming
• Large number of cells, few of them occupied
  - Want to find occupied cells
  - Cell i state Ii = 1 if occupied, 0 otherwise
  - Prior probability Ii = 1 given
• Energy allocated to a cell controls the signal/noise ratio of its measurement
• Two-stage problem: initial search, followed by refined location of occupied cells
• Adapt second stage allocations based on first stage search

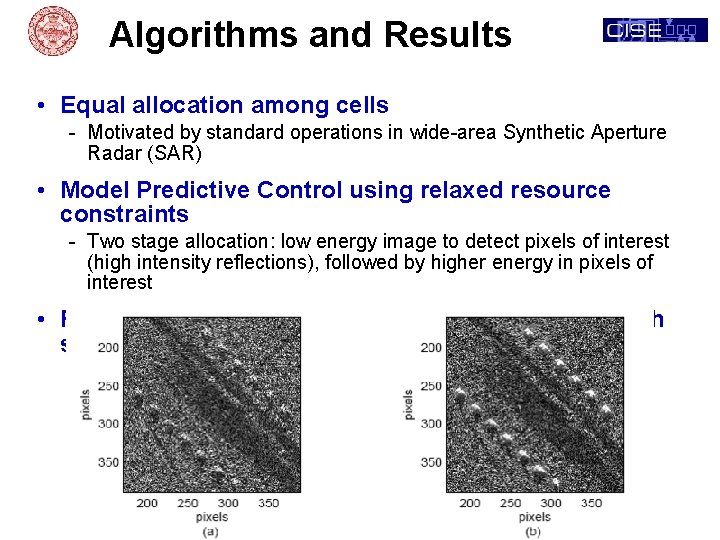
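The two-stage structure can be illustrated with a toy model. The sketch assumes a measurement y = sqrt(energy) * Ii + unit-variance Gaussian noise and a second stage that splits the remaining energy in proportion to each cell's posterior occupancy probability. Both the measurement model and the proportional rule are assumptions chosen for illustration; they are not the policy of Hero et al or the relaxed-constraint algorithm discussed here.

```python
import math


def posterior_occupied(y, energy, prior):
    """P(I = 1 | y) when y = sqrt(energy) * I + standard Gaussian noise."""
    amp = math.sqrt(energy)
    llr = amp * y - 0.5 * amp * amp      # log N(y; amp, 1) / N(y; 0, 1)
    odds = prior / (1.0 - prior) * math.exp(llr)
    return odds / (1.0 + odds)


def second_stage_allocation(posteriors, energy_budget):
    """Split the remaining energy in proportion to posterior occupancy."""
    total = sum(posteriors)
    if total == 0.0:
        # No cell looks occupied: fall back to an equal split.
        return [energy_budget / len(posteriors)] * len(posteriors)
    return [energy_budget * p / total for p in posteriors]
```

With this rule, cells whose first-stage measurement looks like noise receive little second-stage energy, and the few cells that look occupied are measured at a much higher signal/noise ratio than an equal split would allow.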

Algorithms and Results
• Equal allocation among cells
  - Motivated by standard operations in wide-area Synthetic Aperture Radar (SAR)
• Model Predictive Control using relaxed resource constraints
  - Two stage allocation: low energy image to detect pixels of interest (high intensity reflections), followed by higher energy in pixels of interest
• Results similar to prior work by Hero et al (2007), with a simpler algorithm that is 2 orders of magnitude faster

Paradigm Extension: Mobile Agents
• Viewable sites depend on agent positions
  - Slower time scale control
  - Focus on trajectory selection and mode
  - Sequencing of sites critical to set up future sites
• Mobile agents: trajectory and focus of attention control
  - Models where electronic steering is not feasible
  - Sequence-dependent setup cost for activities
• Additional uncertainty: risk of travel
  - Visiting a site accomplishes task that gains task value
  - Traversing among sites can result in vehicle failure and loss

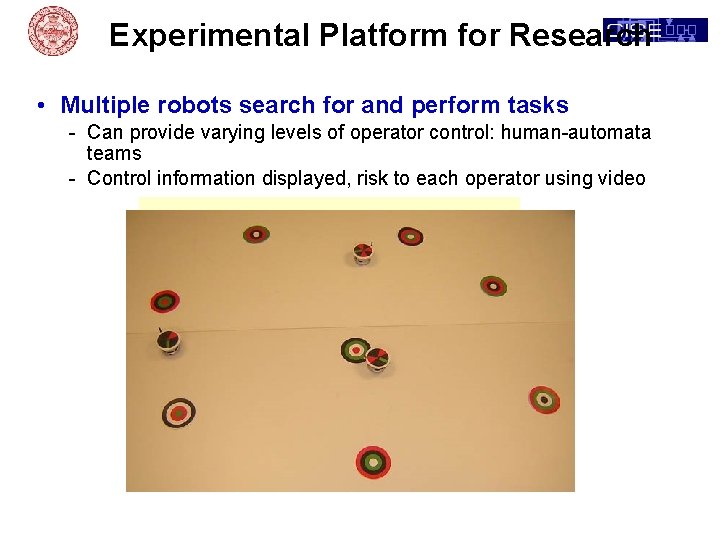

Experimental Platform for Research
• Multiple robots search for and perform tasks
  - Can provide varying levels of operator control: human-automata teams
  - Control information displayed, risk to each operator using video

Future Activities
• Evaluate algorithms on experiments with dynamic arrivals/departures
• Develop algorithms for motion-constrained mobile agents
• Implement experiments involving tasks with performance uncertainty in robot test facility
  - Vary tempo, size, uncertainty, information
• Implement autonomous team control algorithms to interact with humans in alternative roles
  - Supervisory control, team partners, others
• Extend existing algorithms to different classes of tasks
  - Area search, task discovery, risk to platforms
• Collaborate with MURI team to design and analyze experiments involving alternative structures for human-automata teams
