TCP/IP Masterclass
or So TCP works … but still the users ask: Where is my throughput?
Richard Hughes-Jones, The University of Manchester
www.hep.man.ac.uk/~rich/ then “Talks”
GEANT2 Network Performance Workshop, 11-12 Jan 200, R. Hughes-Jones, Manchester

Layers & IP

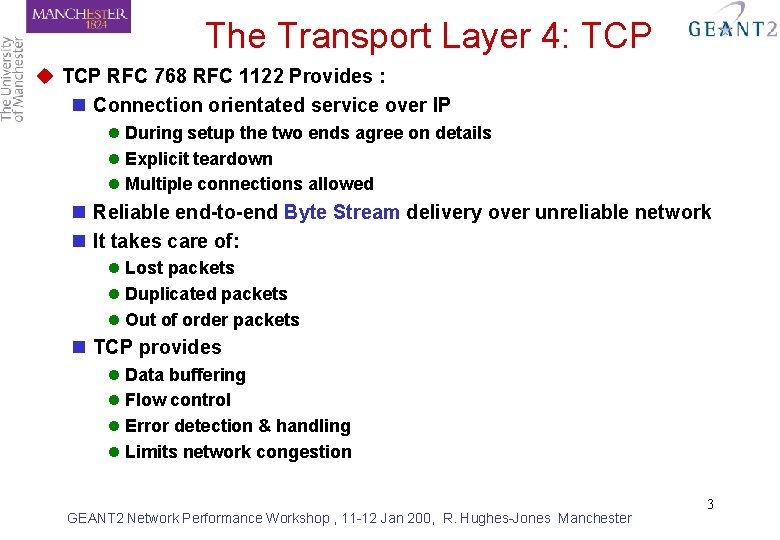

The Transport Layer 4: TCP
- TCP (RFC 793, RFC 1122) provides:
  - Connection-oriented service over IP
    - During setup the two ends agree on details
    - Explicit teardown
    - Multiple connections allowed
  - Reliable end-to-end byte stream delivery over an unreliable network
  - It takes care of:
    - Lost packets
    - Duplicated packets
    - Out-of-order packets
  - TCP provides:
    - Data buffering
    - Flow control
    - Error detection & handling
    - Limits network congestion

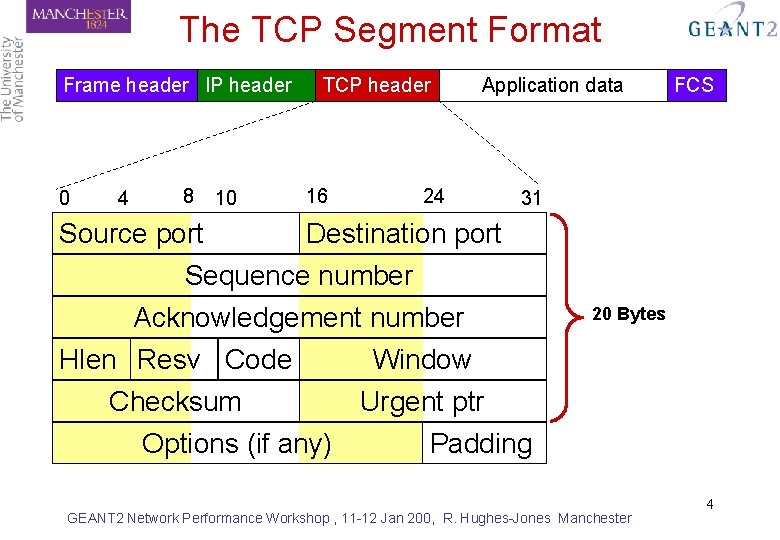

The TCP Segment Format
Frame layout: Frame header | IP header | TCP header | Application data | FCS
TCP header fields (bit positions 0 to 31, 20 bytes without options):
- Source port, Destination port
- Sequence number
- Acknowledgement number
- Hlen, Resv, Code, Window
- Checksum, Urgent ptr
- Options (if any), Padding

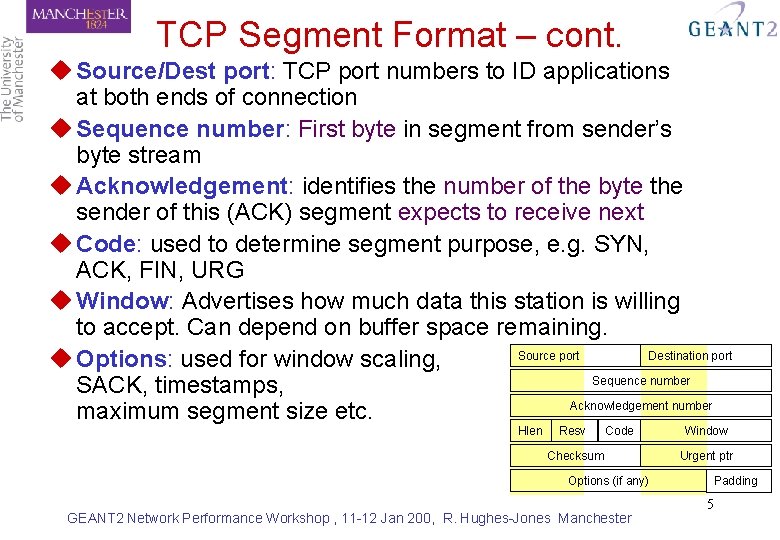

TCP Segment Format – cont.
- Source/Dest port: TCP port numbers to ID applications at both ends of connection
- Sequence number: first byte in segment from sender’s byte stream
- Acknowledgement: identifies the number of the byte the sender of this (ACK) segment expects to receive next
- Code: used to determine segment purpose, e.g. SYN, ACK, FIN, URG
- Window: advertises how much data this station is willing to accept. Can depend on buffer space remaining.
- Options: used for window scaling, SACK, timestamps, maximum segment size etc.
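
The field layout above can be decoded with a few lines of Python. This is a minimal sketch, assuming the classic 20-byte fixed header with a 4-bit header length and six code bits; the function name `parse_tcp_header` and the dictionary keys are hypothetical, chosen only for illustration.

```python
import struct

# Code bits in the order they are tested below (classic six flags).
FLAG_BITS = [("FIN", 0x01), ("SYN", 0x02), ("RST", 0x04),
             ("PSH", 0x08), ("ACK", 0x10), ("URG", 0x20)]


def parse_tcp_header(segment: bytes) -> dict:
    """Decode the fixed 20-byte TCP header at the start of `segment`."""
    if len(segment) < 20:
        raise ValueError("need at least 20 bytes for a TCP header")
    # Network byte order: 2 ports, seq, ack, hlen/resv/code, window,
    # checksum, urgent pointer.
    (src, dst, seq, ack, hlen_code,
     window, checksum, urgent) = struct.unpack("!HHIIHHHH", segment[:20])
    hlen_words = hlen_code >> 12          # header length in 32-bit words
    code = hlen_code & 0x3F               # low six bits carry the flags
    return {
        "src_port": src,
        "dst_port": dst,
        "seq": seq,
        "ack": ack,
        "header_len_bytes": hlen_words * 4,
        "flags": [name for name, bit in FLAG_BITS if code & bit],
        "window": window,
        "checksum": checksum,
        "urgent_ptr": urgent,
    }
```

Any options (window scaling, SACK, timestamps, maximum segment size) would sit between byte 20 and `header_len_bytes`; the sketch does not decode them.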

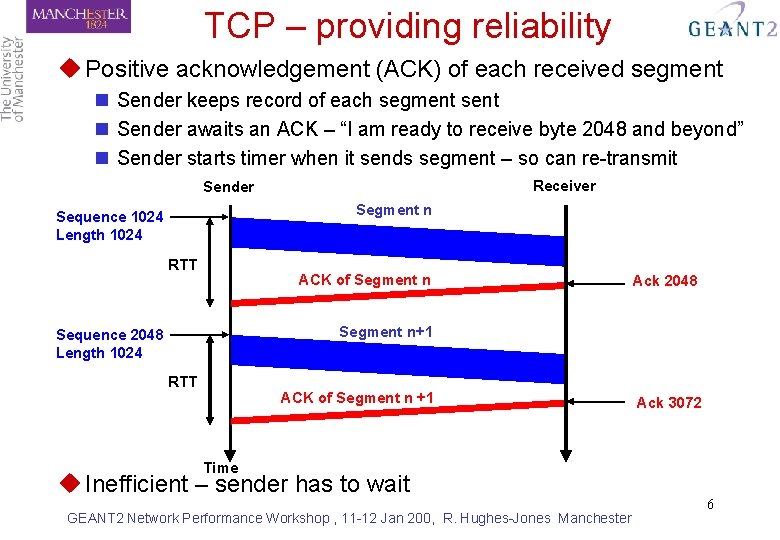

TCP – providing reliability
- Positive acknowledgement (ACK) of each received segment
  - Sender keeps record of each segment sent
  - Sender awaits an ACK – “I am ready to receive byte 2048 and beyond”
  - Sender starts timer when it sends segment – so can re-transmit
- Inefficient – sender has to wait
[Figure: time sequence between Sender and Receiver. Segment n (Sequence 1024, Length 1024) is followed one RTT later by the ACK of Segment n (Ack 2048); Segment n+1 (Sequence 2048, Length 1024) is followed one RTT later by the ACK of Segment n+1 (Ack 3072).]

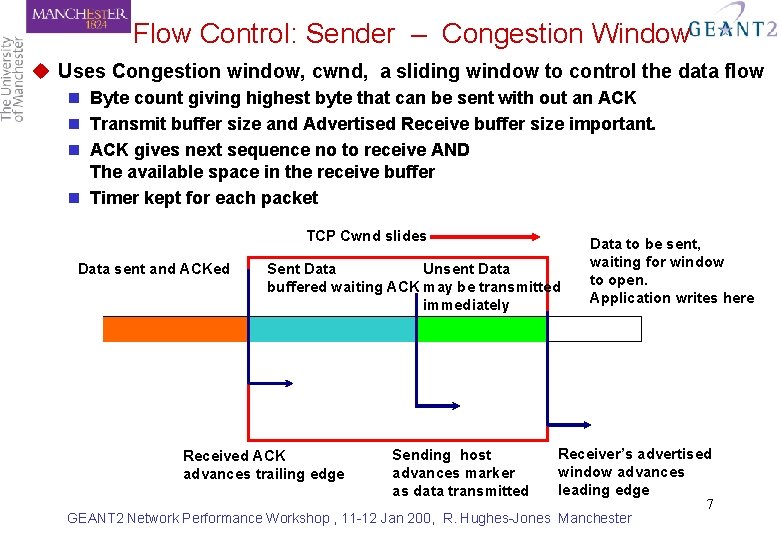

Flow Control: Sender – Congestion Window
- Uses Congestion window, cwnd, a sliding window to control the data flow
  - Byte count giving highest byte that can be sent without an ACK
  - Transmit buffer size and advertised receive buffer size important
  - ACK gives next sequence no. to receive AND the available space in the receive buffer
  - Timer kept for each packet
[Figure: TCP cwnd slides over the send buffer. Regions: data sent and ACKed; sent data buffered waiting ACK; unsent data that may be transmitted immediately; data to be sent, waiting for window to open (application writes here). Received ACK advances trailing edge; receiver’s advertised window advances leading edge; sending host advances marker as data transmitted.]

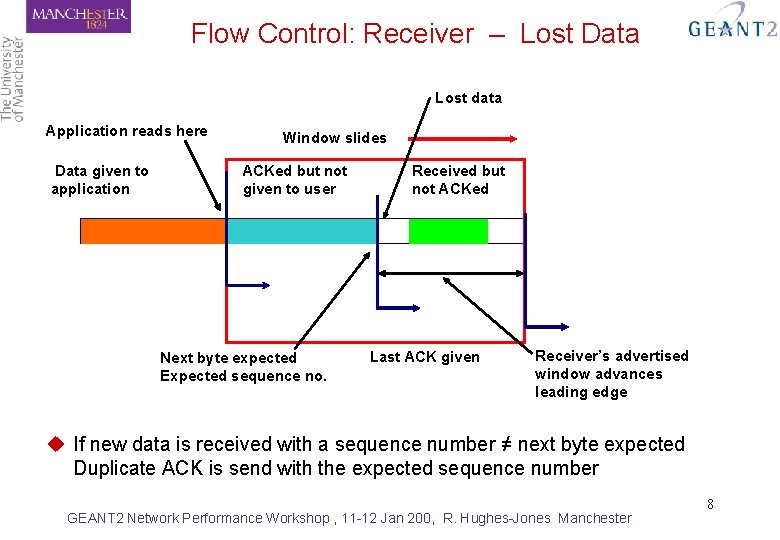

Flow Control: Receiver – Lost Data
- If new data is received with a sequence number ≠ next byte expected, a duplicate ACK is sent with the expected sequence number
[Figure: receive buffer with sliding window. Regions: data given to application (application reads here); ACKed but not given to user; lost data; received but not ACKed. Markers: last ACK given; next byte expected (expected sequence no.). Receiver’s advertised window advances leading edge.]

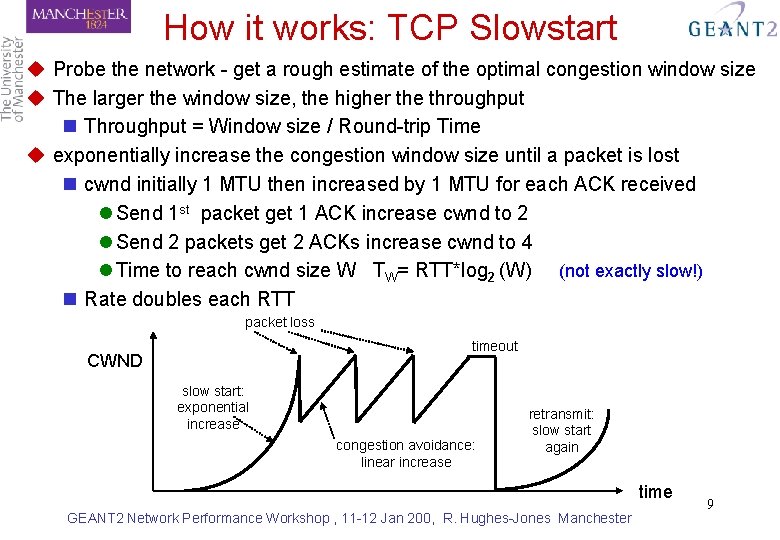

How it works: TCP Slowstart
- Probe the network - get a rough estimate of the optimal congestion window size
- The larger the window size, the higher the throughput
  - Throughput = Window size / Round-trip Time
- Exponentially increase the congestion window size until a packet is lost
  - cwnd initially 1 MTU then increased by 1 MTU for each ACK received
    - Send 1st packet, get 1 ACK, increase cwnd to 2
    - Send 2 packets, get 2 ACKs, increase cwnd to 4
    - Time to reach cwnd size W: $T_W = RTT \cdot \log_2(W)$ (not exactly slow!)
  - Rate doubles each RTT
[Figure: CWND vs time. Slow start: exponential increase; congestion avoidance: linear increase; packet loss; timeout; retransmit: slow start again.]
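
The two relations on this slide are easy to check numerically. The following is a minimal sketch, assuming an idealised slow start in which cwnd (counted in segments) exactly doubles every round trip with no loss and no delayed ACKs; the function names are hypothetical.

```python
import math


def throughput_bps(window_bytes: float, rtt_s: float) -> float:
    """Throughput = window size / round-trip time, in bits per second."""
    return window_bytes * 8 / rtt_s


def slow_start_rtts(target_cwnd: int, initial_cwnd: int = 1) -> int:
    """Count the round trips needed for cwnd to reach `target_cwnd` segments
    when it doubles once per RTT (idealised slow start)."""
    cwnd, rtts = initial_cwnd, 0
    while cwnd < target_cwnd:
        cwnd *= 2          # one ACK per segment, +1 segment per ACK
        rtts += 1
    return rtts


if __name__ == "__main__":
    w = 1024                                   # target window in segments
    print(slow_start_rtts(w), math.log2(w))    # loop count vs log2(W)
    print(throughput_bps(64 * 1024, 0.125))    # 64 kbyte window, 125 ms rtt
```

For a window that is a power of two the loop count equals $\log_2(W)$, so the time to open the window is that many round trips.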

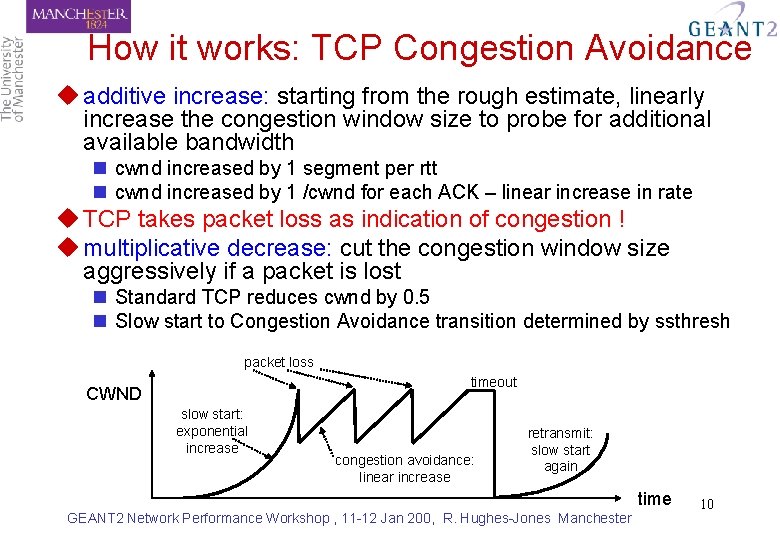

How it works: TCP Congestion Avoidance
- Additive increase: starting from the rough estimate, linearly increase the congestion window size to probe for additional available bandwidth
  - cwnd increased by 1 segment per rtt
  - cwnd increased by 1/cwnd for each ACK – linear increase in rate
- TCP takes packet loss as indication of congestion!
- Multiplicative decrease: cut the congestion window size aggressively if a packet is lost
  - Standard TCP reduces cwnd by a factor of 0.5
  - Slow start to Congestion Avoidance transition determined by ssthresh
[Figure: CWND vs time. Slow start: exponential increase; congestion avoidance: linear increase; packet loss; timeout; retransmit: slow start again.]
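
The sawtooth that results from these rules can be reproduced with a toy model. This is a minimal sketch, not a TCP implementation: it steps once per RTT, counts cwnd in segments, and treats every loss as a halving of cwnd (no timeout, so no return to slow start). The function name `aimd_trace` and its parameters are assumptions for illustration.

```python
def aimd_trace(rtts, loss_at, cwnd=1.0, ssthresh=64.0, a=1.0, b=0.5):
    """Return cwnd (in segments) at the start of each RTT.

    rtts     -- number of round trips to simulate
    loss_at  -- set of RTT indices in which a loss is detected
    a, b     -- additive increase per RTT and multiplicative decrease factor
    """
    trace = []
    for t in range(rtts):
        trace.append(cwnd)
        if t in loss_at:
            # Multiplicative decrease: cwnd -> cwnd - b*cwnd.
            ssthresh = max(cwnd * (1 - b), 2.0)
            cwnd = ssthresh
        elif cwnd < ssthresh:
            # Slow start: double per RTT, but do not overshoot ssthresh.
            cwnd = min(cwnd * 2, ssthresh)
        else:
            # Congestion avoidance: +a segments per RTT.
            cwnd += a
    return trace


if __name__ == "__main__":
    print(aimd_trace(8, loss_at={5}, ssthresh=8.0))
```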

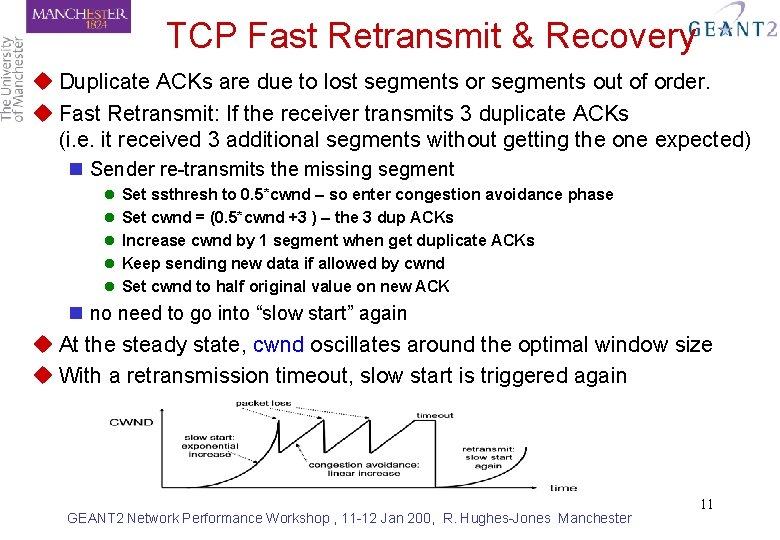

TCP Fast Retransmit & Recovery
- Duplicate ACKs are due to lost segments or segments out of order.
- Fast Retransmit: if the receiver transmits 3 duplicate ACKs (i.e. it received 3 additional segments without getting the one expected)
  - Sender re-transmits the missing segment
    - Set ssthresh to 0.5*cwnd – so enter congestion avoidance phase
    - Set cwnd = (0.5*cwnd + 3) – the 3 dup ACKs
    - Increase cwnd by 1 segment when get duplicate ACKs
    - Keep sending new data if allowed by cwnd
    - Set cwnd to half original value on new ACK
  - No need to go into “slow start” again
- At the steady state, cwnd oscillates around the optimal window size
- With a retransmission timeout, slow start is triggered again
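
The steps above can be sketched as a small sender-side state machine. This is an illustrative model only, assuming cwnd is counted in segments; the class name `RenoSender` and its attributes are hypothetical, and normal window growth on new ACKs and the retransmission timer are deliberately left out.

```python
class RenoSender:
    """Toy model of fast retransmit / fast recovery on the sender side."""

    def __init__(self, cwnd: float, ssthresh: float):
        self.cwnd = cwnd
        self.ssthresh = ssthresh
        self.last_ack = None
        self.dup_acks = 0
        self.in_recovery = False
        self.retransmitted = []      # sequence numbers re-sent so far

    def on_ack(self, ack_no: int) -> None:
        if ack_no == self.last_ack:
            self.dup_acks += 1
            if self.dup_acks == 3 and not self.in_recovery:
                # Third duplicate ACK: re-send the missing segment,
                # halve the window and inflate it by the 3 dup ACKs.
                self.ssthresh = max(self.cwnd / 2, 2)
                self.cwnd = self.ssthresh + 3
                self.in_recovery = True
                self.retransmitted.append(ack_no)
            elif self.in_recovery:
                # Each further duplicate ACK means a segment left the network.
                self.cwnd += 1
        else:
            if self.in_recovery:
                # New ACK: deflate to half the original window, no slow start.
                self.cwnd = self.ssthresh
                self.in_recovery = False
            self.last_ack = ack_no
            self.dup_acks = 0
```

Feeding the object one new ACK followed by three duplicates triggers the retransmission; the next new ACK leaves cwnd at half its original value.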

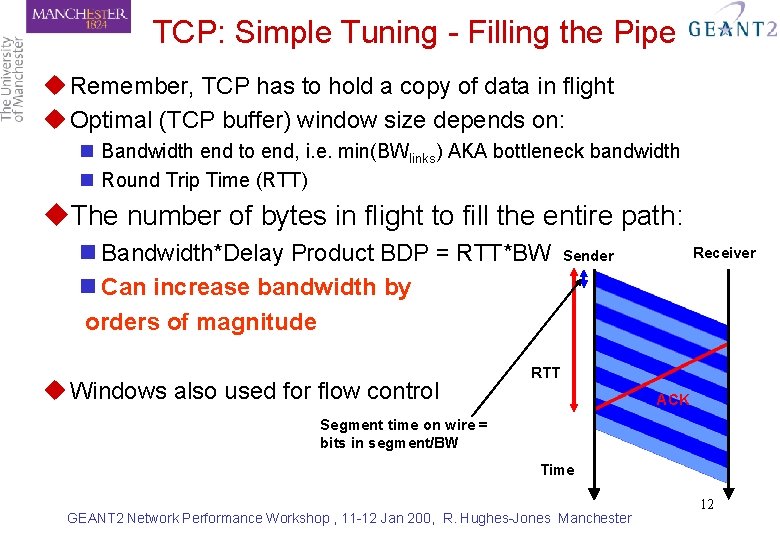

TCP: Simple Tuning - Filling the Pipe
- Remember, TCP has to hold a copy of data in flight
- Optimal (TCP buffer) window size depends on:
  - Bandwidth end to end, i.e. min(BW_links), AKA bottleneck bandwidth
  - Round Trip Time (RTT)
- The number of bytes in flight to fill the entire path:
  - Bandwidth*Delay Product BDP = RTT*BW
  - Can increase bandwidth by orders of magnitude
- Windows also used for flow control
[Figure: time sequence between Sender and Receiver showing segments, ACK and RTT; segment time on wire = bits in segment/BW.]
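
As a worked example of BDP = RTT*BW, the sketch below converts a bottleneck bandwidth and round trip time into the buffer size needed to keep the path full. The function name is hypothetical, and the round trip times in the example are ones quoted elsewhere in this talk.

```python
def bdp_bytes(bandwidth_bps: float, rtt_s: float) -> float:
    """Bandwidth*delay product in bytes: the data in flight needed to fill the path."""
    return bandwidth_bps * rtt_s / 8


if __name__ == "__main__":
    for rtt_ms in (6.2, 128, 177):
        size = bdp_bytes(1e9, rtt_ms / 1000)
        print(f"1 Gbit/s, rtt {rtt_ms} ms -> {size / 1e6:.2f} Mbytes")
```

At 1 Gbit/s and rtt 177 ms the product is about 22 Mbytes, in line with the 22 Mbyte socket size quoted later for the UKLight London-Chicago-London path.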

Standard TCP (Reno) – What’s the problem?
- TCP has 2 phases:
  - Slowstart: probe the network to estimate the available BW; exponential growth
  - Congestion Avoidance: main data transfer phase – transfer rate grows “slowly”
- AIMD and High Bandwidth – Long Distance networks: poor performance of TCP in high bandwidth wide area networks is due in part to the TCP congestion control algorithm.
  - For each ack in a RTT without loss: cwnd -> cwnd + a / cwnd - Additive Increase, a=1
  - For each window experiencing loss: cwnd -> cwnd – b (cwnd) - Multiplicative Decrease, b= ½
- Packet loss is a killer !!

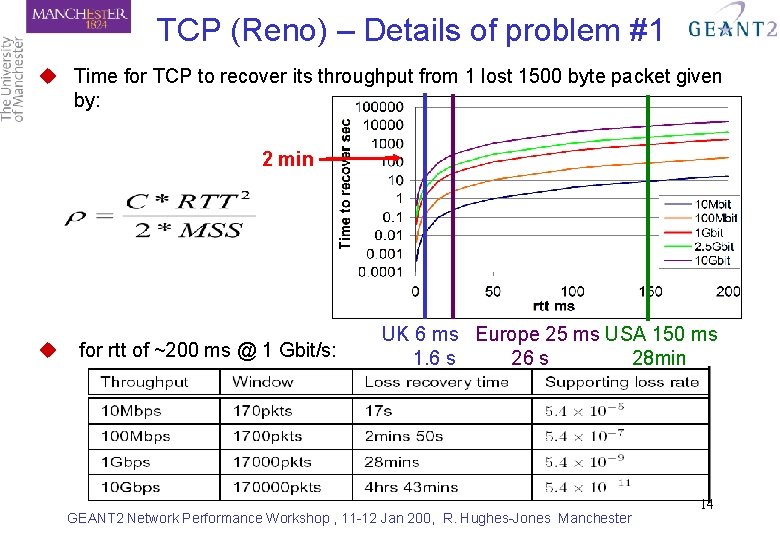

TCP (Reno) – Details of problem #1
- Time for TCP to recover its throughput from 1 lost 1500 byte packet, for rtt of ~200 ms @ 1 Gbit/s
[Figure: recovery time vs rtt; the governing equation was an image and is not recoverable from the text. rtt markers: UK 6 ms, Europe 25 ms, USA 150 ms. Time annotations: 1.6 s, 2 min, 28 min.]
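
The recovery time can be estimated from the AIMD rules given earlier in this talk. After a single loss cwnd halves from W to W/2 segments and then grows by one segment per RTT, so recovery takes about W/2 round trips, where W = C·RTT/MSS fills a path of capacity C. That gives $\tau \approx C \cdot RTT^2 / (2 \cdot MSS)$. The sketch below evaluates this back-of-envelope estimate; it is a standard approximation, not a formula recovered from the slide, and the function name and the default MSS of 1500 bytes are assumptions for illustration.

```python
def reno_recovery_time_s(capacity_bps: float, rtt_s: float,
                         mss_bytes: int = 1500) -> float:
    """Estimated time for standard TCP to regain full rate after one loss."""
    # Window (in segments) needed to fill the path.
    window_segments = capacity_bps * rtt_s / (8 * mss_bytes)
    # cwnd halves, then regains one segment per RTT.
    return (window_segments / 2) * rtt_s


if __name__ == "__main__":
    for rtt_ms in (6.2, 25, 150, 200):
        t = reno_recovery_time_s(1e9, rtt_ms / 1000)
        print(f"rtt {rtt_ms} ms @ 1 Gbit/s: {t:.1f} s ({t / 60:.1f} min)")
```

Under these assumptions 1 Gbit/s at rtt 6.2 ms gives roughly 1.6 s, and rtt 200 ms gives roughly 28 min.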

Investigation of new TCP Stacks
- The AIMD Algorithm – Standard TCP (Reno)
  - For each ack in a RTT without loss: cwnd -> cwnd + a / cwnd - Additive Increase, a=1
  - For each window experiencing loss: cwnd -> cwnd – b (cwnd) - Multiplicative Decrease, b= ½
- High Speed TCP: a and b vary depending on current cwnd using a table
  - a increases more rapidly with larger cwnd – returns to the ‘optimal’ cwnd size sooner for the network path
  - b decreases less aggressively and, as a consequence, so does the cwnd. The effect is that there is not such a decrease in throughput.
- Scalable TCP: a and b are fixed adjustments for the increase and decrease of cwnd
  - a = 1/100 – the increase is greater than TCP Reno
  - b = 1/8 – the decrease on loss is less than TCP Reno
  - Scalable over any link speed.
- Fast TCP: uses round trip time as well as packet loss to indicate congestion, with rapid convergence to fair equilibrium for throughput.
- HSTCP-LP, H-TCP, BIC-TCP
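
To see why fixed adjustments make Scalable TCP "scalable over any link speed", compare the number of round trips each stack needs to return to its pre-loss window. This is a minimal sketch under stated assumptions: Reno adds a segments per RTT; Scalable TCP is taken to add a to cwnd for every ACK, so cwnd grows by a factor (1 + a) per RTT. The function names are hypothetical.

```python
import math


def reno_recovery_rtts(cwnd_segments: float, a: float = 1.0, b: float = 0.5) -> float:
    """RTTs for Reno to regain the b*cwnd segments lost, at a segments per RTT."""
    return b * cwnd_segments / a


def scalable_recovery_rtts(a: float = 0.01, b: float = 0.125) -> float:
    """RTTs for Scalable TCP to grow from (1-b)*cwnd back to cwnd
    when cwnd is multiplied by (1+a) every RTT; independent of cwnd."""
    return math.log(1 / (1 - b)) / math.log(1 + a)


if __name__ == "__main__":
    for cwnd in (100, 1_000, 10_000):
        print(cwnd, reno_recovery_rtts(cwnd), round(scalable_recovery_rtts(), 1))
```

Reno's recovery time grows linearly with the window, and hence with link speed and RTT, while the Scalable TCP figure stays at about 13 round trips whatever the window.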

Let’s check out this theory about new TCP stacks. Does it matter? Does it work?

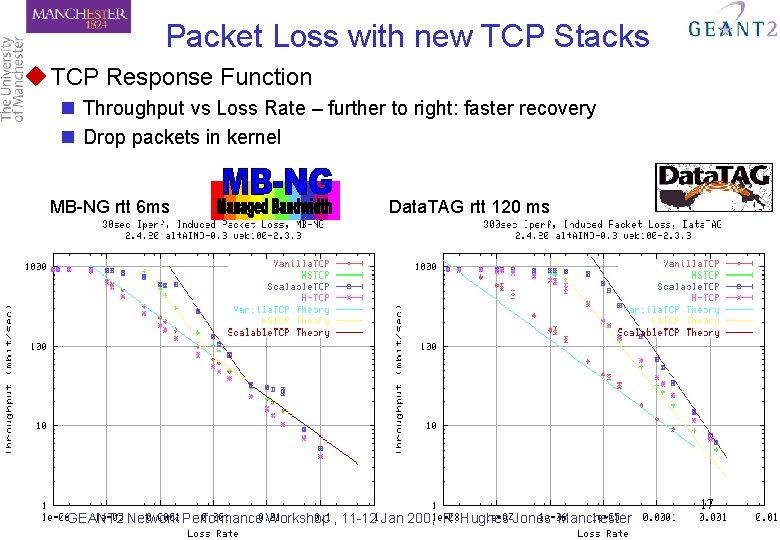

Packet Loss with new TCP Stacks
- TCP Response Function
  - Throughput vs Loss Rate – further to right: faster recovery
  - Drop packets in kernel
[Figure: two plots, MB-NG rtt 6 ms and DataTAG rtt 120 ms.]

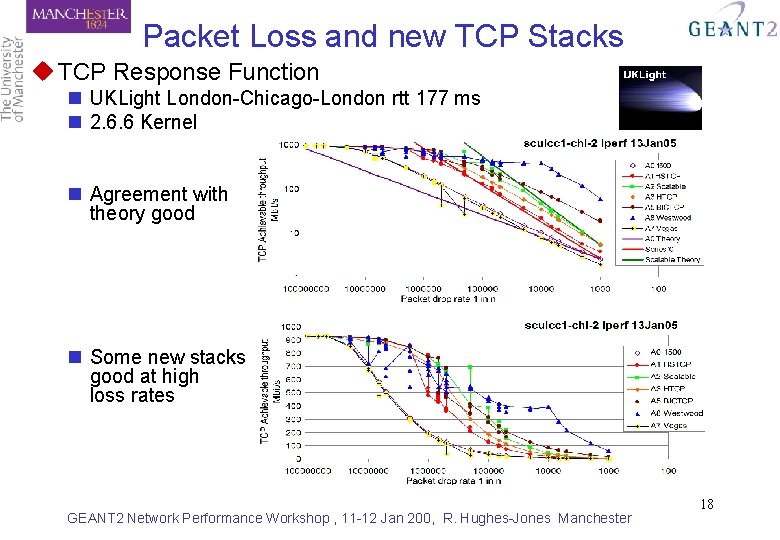

Packet Loss and new TCP Stacks
- TCP Response Function
  - UKLight London-Chicago-London, rtt 177 ms
  - 2.6.6 Kernel
  - Agreement with theory good
  - Some new stacks good at high loss rates

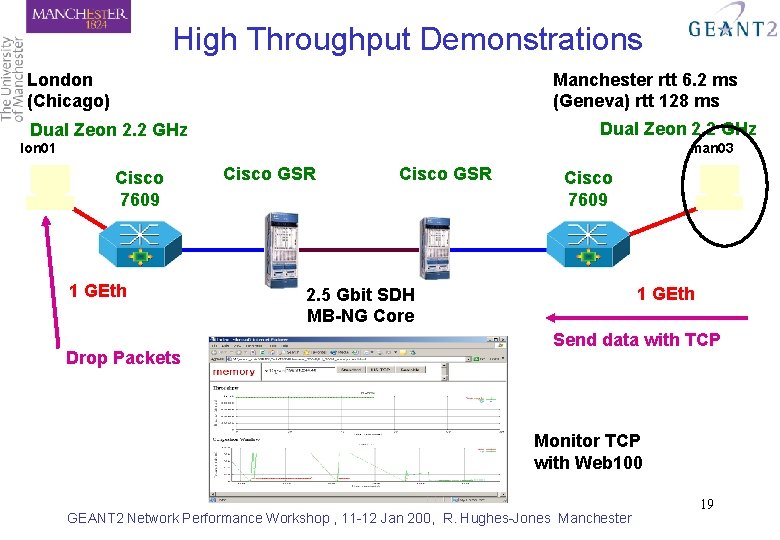

High Throughput Demonstrations
- Send data with TCP
- Drop packets
- Monitor TCP with Web100
[Figure: test topology. End points Manchester (Geneva) and London (Chicago); hosts man03 and lon01, dual Xeon 2.2 GHz, on 1 GEth; Cisco 7609 routers and a Cisco GSR; 2.5 Gbit SDH MB-NG core. Labels: rtt 6.2 ms; rtt 128 ms.]

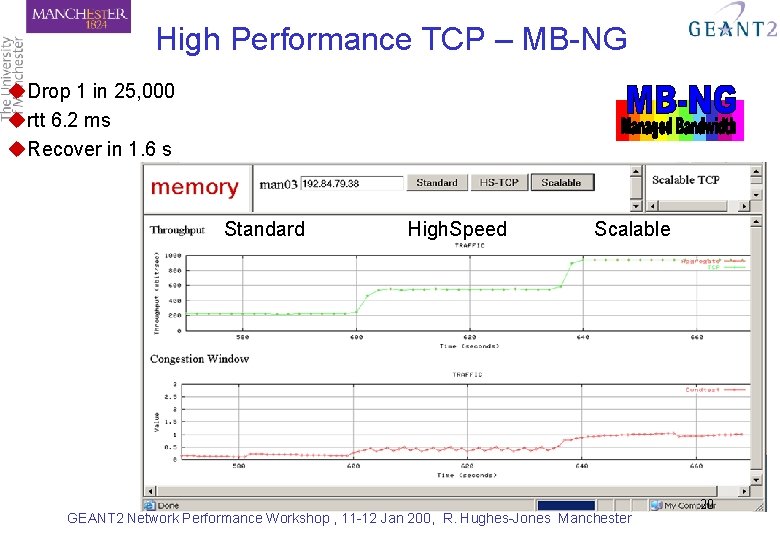

High Performance TCP – MB-NG
- Drop 1 in 25,000
- rtt 6.2 ms
- Recover in 1.6 s
[Figure: panels for Standard, HighSpeed and Scalable TCP.]

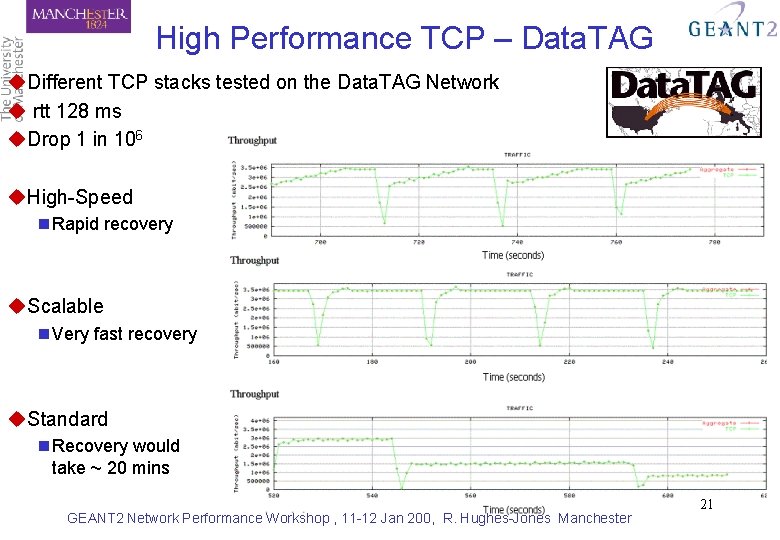

High Performance TCP – DataTAG
- Different TCP stacks tested on the DataTAG Network
- rtt 128 ms
- Drop 1 in $10^6$
- High-Speed
  - Rapid recovery
- Scalable
  - Very fast recovery
- Standard
  - Recovery would take ~20 mins

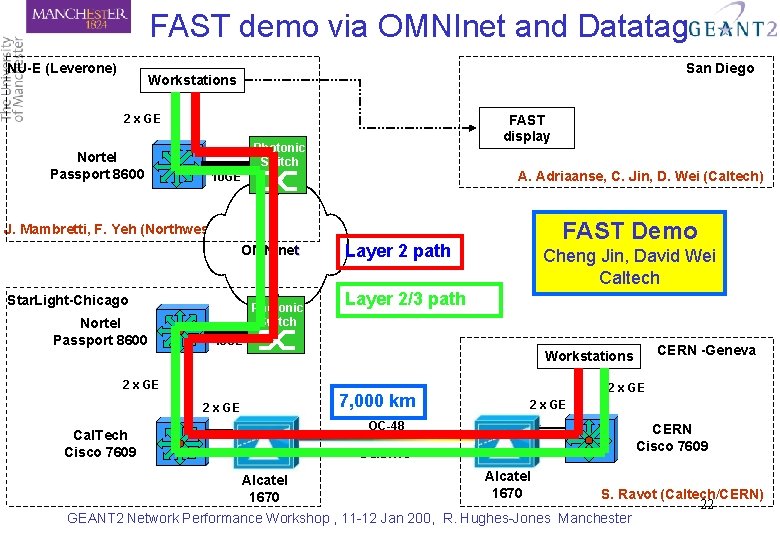

FAST demo via OMNInet and Datatag
[Figure: network diagram. Labels: workstations at NU-E (Leverone) and San Diego with FAST display, 2 x GE; Nortel Passport 8600 and photonic switches; OMNInet; StarLight-Chicago; layer 2 path; layer 2/3 path; 10 GE; CalTech Cisco 7609; OC-48 DataTAG link, 7,000 km; Alcatel 1670; CERN Cisco 7609; CERN-Geneva workstations, 2 x GE.]
Credits: FAST Demo: Cheng Jin, David Wei (Caltech); A. Adriaanse, C. Jin, D. Wei (Caltech); J. Mambretti, F. Yeh (Northwestern); S. Ravot (Caltech/CERN)

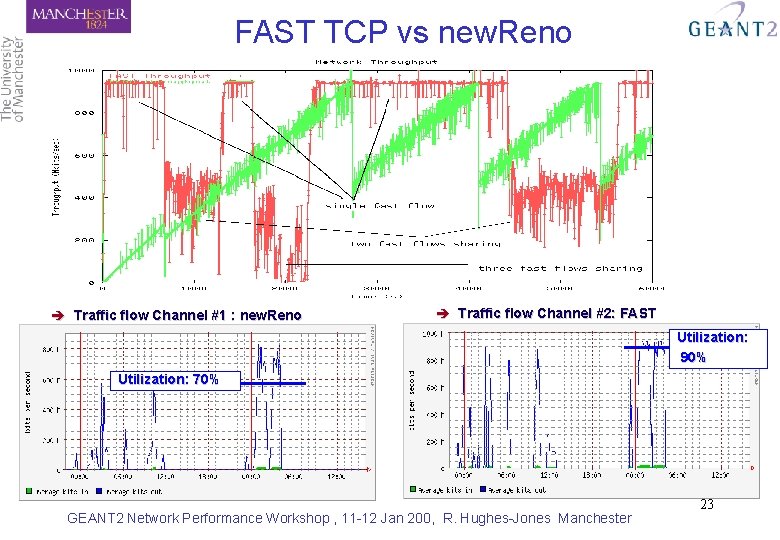

FAST TCP vs NewReno
- Traffic flow Channel #1: NewReno
- Traffic flow Channel #2: FAST
[Figure: throughput traces for the two channels, annotated “Utilization: 90%” and “Utilization: 70%”.]

Problem #2: Is TCP fair? Look at Round Trip Times & Max Transfer Unit

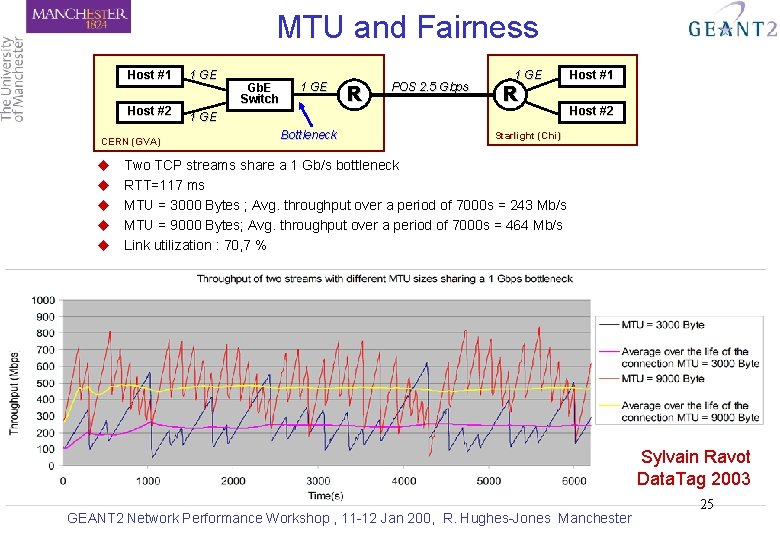

MTU and Fairness
- Two TCP streams share a 1 Gb/s bottleneck
- RTT = 117 ms
- MTU = 3000 bytes; avg. throughput over a period of 7000 s = 243 Mb/s
- MTU = 9000 bytes; avg. throughput over a period of 7000 s = 464 Mb/s
- Link utilization: 70.7 %
[Figure: Host #1 and Host #2 at CERN (GVA) on 1 GE to a GbE switch; 1 GE bottleneck to router R; POS 2.5 Gbps between routers; 1 GE to Host #1 and Host #2 at Starlight (Chi).]
Sylvain Ravot, DataTag 2003
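
The utilization figure follows directly from the two average throughputs. The short sketch below reproduces the arithmetic; the function name is hypothetical and the numbers are the ones quoted on this slide.

```python
def link_utilization(throughputs_mbps, capacity_mbps: float) -> float:
    """Fraction of the bottleneck capacity used by the flows together."""
    return sum(throughputs_mbps) / capacity_mbps


if __name__ == "__main__":
    flows = {"MTU 3000": 243, "MTU 9000": 464}      # Mb/s, from the slide
    util = link_utilization(flows.values(), 1000)   # 1 Gb/s bottleneck
    ratio = flows["MTU 9000"] / flows["MTU 3000"]
    print(f"utilization {util:.1%}, large-MTU flow gets {ratio:.2f}x the bandwidth")
```

The flow with the larger MTU obtains nearly twice the bandwidth of the other, while almost 30% of the bottleneck stays unused.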

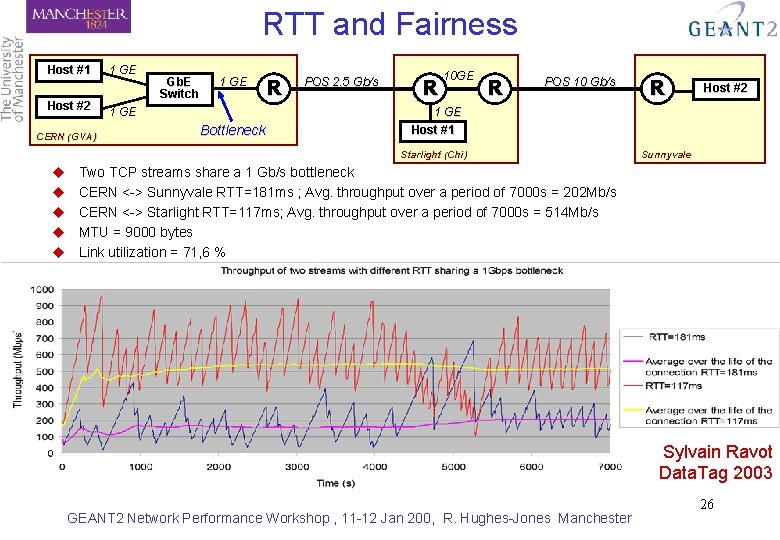

RTT and Fairness
- Two TCP streams share a 1 Gb/s bottleneck
- CERN <-> Sunnyvale: RTT = 181 ms; avg. throughput over a period of 7000 s = 202 Mb/s
- CERN <-> Starlight: RTT = 117 ms; avg. throughput over a period of 7000 s = 514 Mb/s
- MTU = 9000 bytes
- Link utilization = 71.6 %
[Figure: Host #1 and Host #2 at CERN (GVA) on 1 GE to a GbE switch; 1 GE bottleneck to router R; POS 2.5 Gb/s to router R at Starlight (Chi) with Host #1 on 1 GE; 10 GE and POS 10 Gb/s on to router R at Sunnyvale with Host #2 on 1 GE.]
Sylvain Ravot, DataTag 2003

Problem #n: Do TCP Flows Share the Bandwidth?

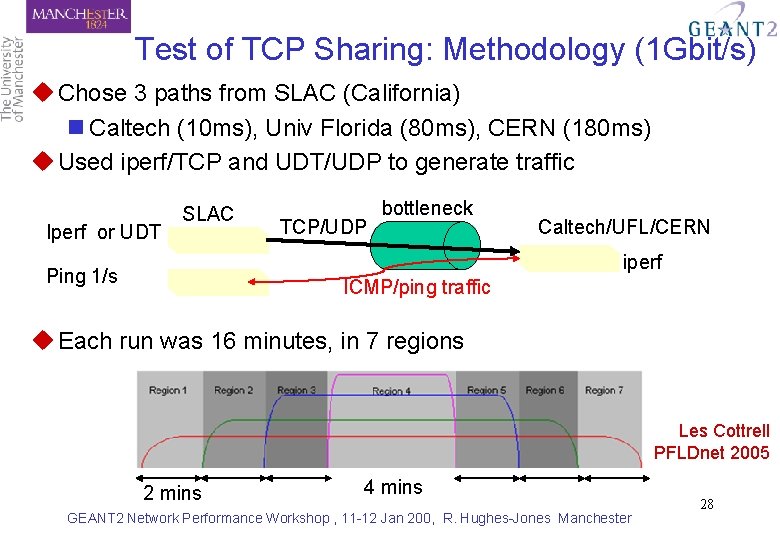

Test of TCP Sharing: Methodology (1 Gbit/s)
- Chose 3 paths from SLAC (California)
  - Caltech (10 ms), Univ Florida (80 ms), CERN (180 ms)
- Used iperf/TCP and UDT/UDP to generate traffic
- Each run was 16 minutes, in 7 regions
[Figure: iperf or UDT at SLAC sends TCP/UDP traffic through the bottleneck to iperf at Caltech/UFL/CERN, alongside ICMP/ping traffic at 1/s; run timeline with regions marked 2 mins and 4 mins.]
Les Cottrell, PFLDnet 2005

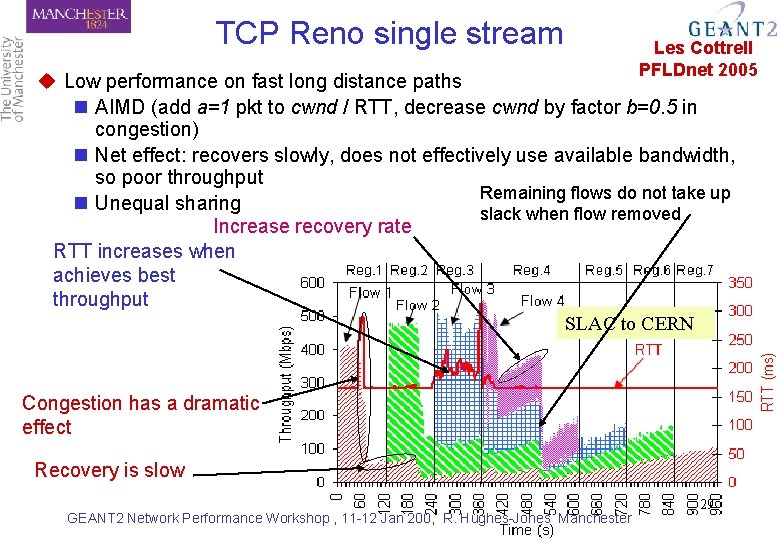

TCP Reno single stream
- Low performance on fast long distance paths
  - AIMD (add a=1 pkt to cwnd / RTT, decrease cwnd by factor b=0.5 in congestion)
  - Net effect: recovers slowly, does not effectively use available bandwidth, so poor throughput
  - Unequal sharing
[Figure: SLAC to CERN traces, annotated: congestion has a dramatic effect; recovery is slow; increase recovery rate; RTT increases when achieves best throughput; remaining flows do not take up slack when flow removed.]
Les Cottrell, PFLDnet 2005

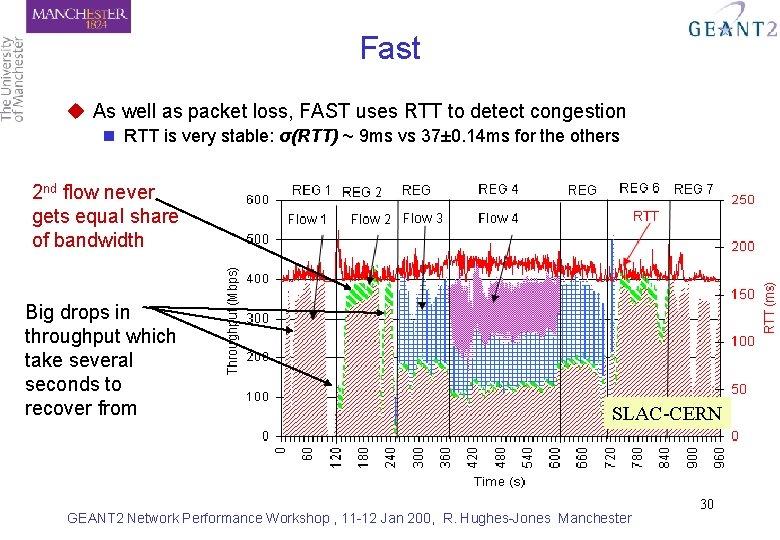

Fast
- As well as packet loss, FAST uses RTT to detect congestion
  - RTT is very stable: σ(RTT) ~ 9 ms vs 37 ± 0.14 ms for the others
[Figure: SLAC-CERN traces, annotated: 2nd flow never gets equal share of bandwidth; big drops in throughput which take several seconds to recover from.]

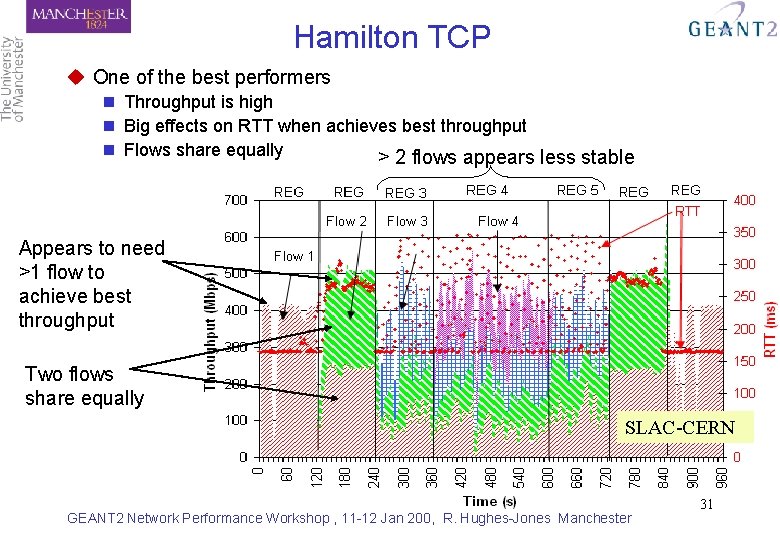

Hamilton TCP
- One of the best performers
  - Throughput is high
  - Big effects on RTT when achieves best throughput
  - Flows share equally
[Figure: SLAC-CERN traces, annotated: two flows share equally; appears to need >1 flow to achieve best throughput; > 2 flows appears less stable.]

Problem #n+1: To SACK or not to SACK?

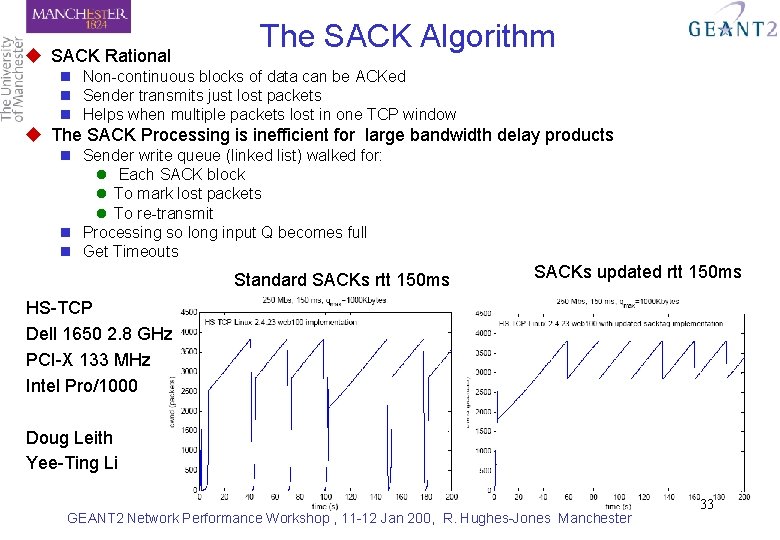

The SACK Algorithm
- SACK Rationale
  - Non-contiguous blocks of data can be ACKed
  - Sender transmits just lost packets
  - Helps when multiple packets lost in one TCP window
- The SACK processing is inefficient for large bandwidth delay products
  - Sender write queue (linked list) walked for:
    - Each SACK block
    - To mark lost packets
    - To re-transmit
  - Processing so long that input queue becomes full
  - Get timeouts
[Figure: HS-TCP traces at rtt 150 ms with standard SACKs and with updated SACKs. Hosts: Dell 1650 2.8 GHz, PCI-X 133 MHz, Intel Pro/1000.]
Doug Leith, Yee-Ting Li

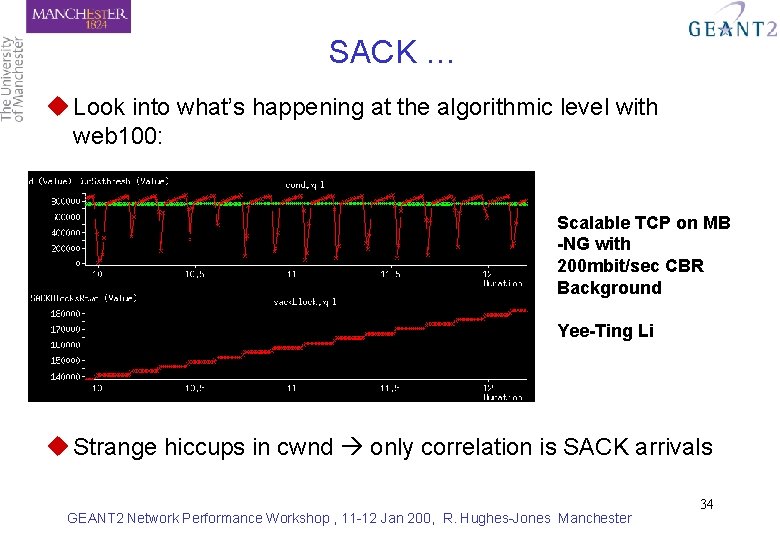

SACK …
- Look into what’s happening at the algorithmic level with Web100
- Strange hiccups in cwnd: only correlation is SACK arrivals
[Figure: Scalable TCP on MB-NG with 200 Mbit/s CBR background. Yee-Ting Li]

Real Applications on Real Networks
- Disk-2-disk applications on real networks
  - Memory-2-memory tests
  - Transatlantic disk-2-disk at Gigabit speeds
- Remote Computing Farms
  - The effect of TCP
  - The effect of distance
- Radio Astronomy e-VLBI
  - Leave for Ralph’s talk

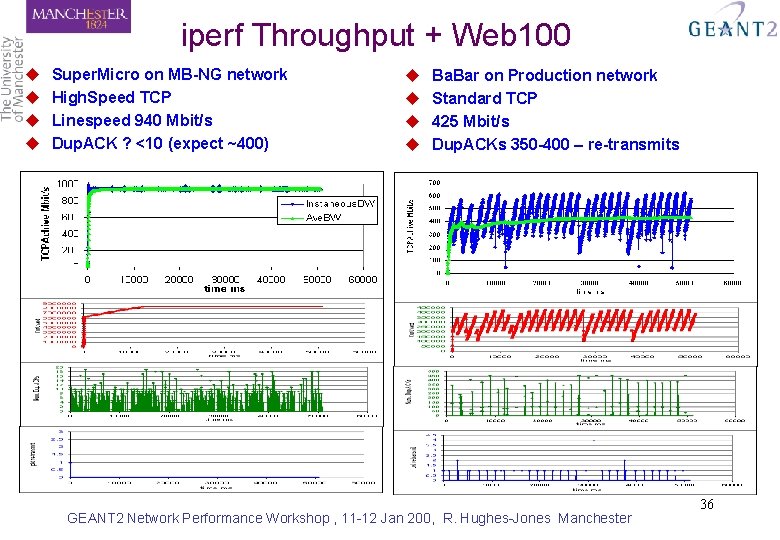

iperf Throughput + Web100
- SuperMicro on MB-NG network
  - HighSpeed TCP
  - Linespeed 940 Mbit/s
  - DupACK? <10 (expect ~400)
- BaBar on Production network
  - Standard TCP
  - 425 Mbit/s
  - DupACKs 350-400 – re-transmits

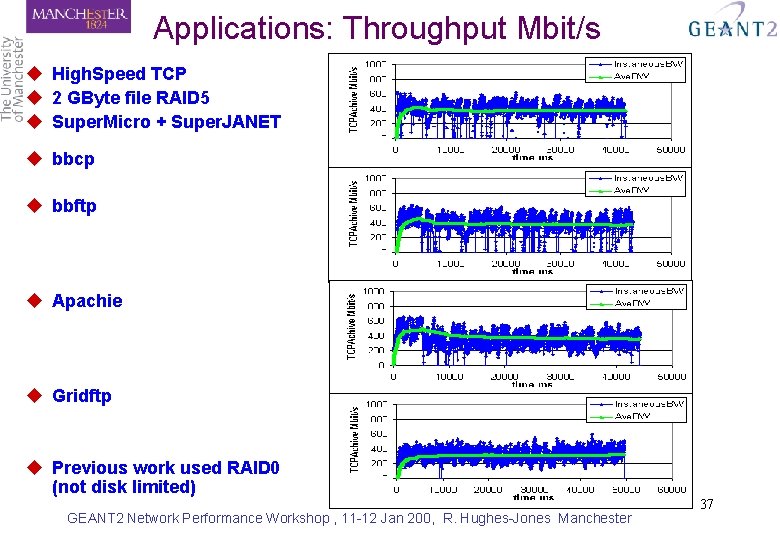

Applications: Throughput Mbit/s
- HighSpeed TCP
- 2 GByte file, RAID5
- SuperMicro + SuperJANET
- bbcp
- bbftp
- Apache
- Gridftp
- Previous work used RAID0 (not disk limited)

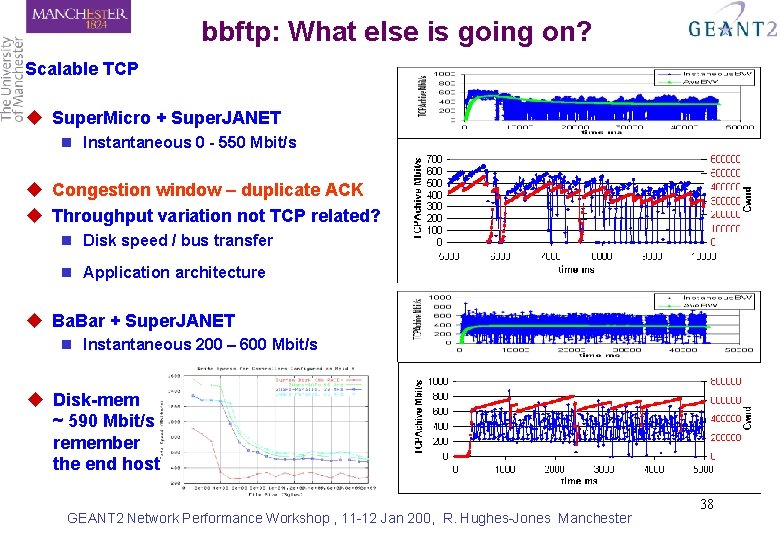

bbftp: What else is going on?
Scalable TCP
- SuperMicro + SuperJANET
  - Instantaneous 0 - 550 Mbit/s
- Congestion window – duplicate ACK
- Throughput variation not TCP related?
  - Disk speed / bus transfer
  - Application architecture
- BaBar + SuperJANET
  - Instantaneous 200 – 600 Mbit/s
- Disk-mem ~ 590 Mbit/s
  - Remember the end host

Transatlantic Disk to Disk Transfers With UKLight, SuperComputing 2004

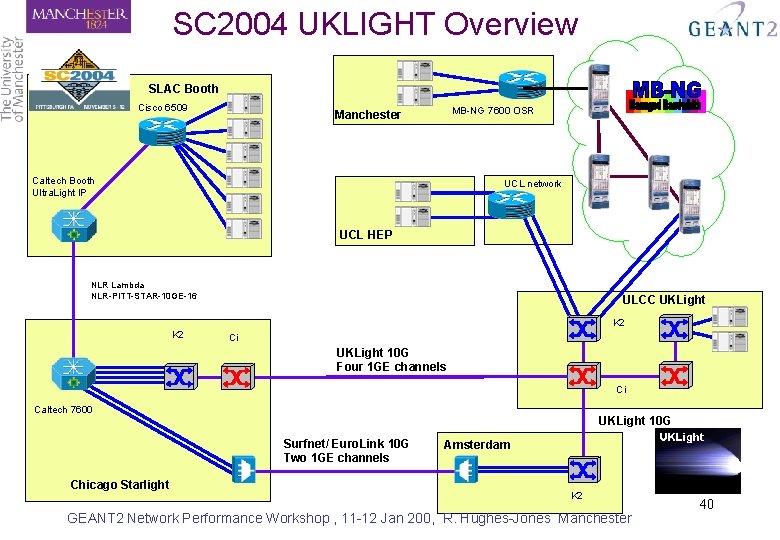

SC2004 UKLIGHT Overview
[Figure: network diagram. Labels: SLAC Booth; Caltech Booth; SC2004 Cisco 6509; UltraLight IP; NLR Lambda NLR-PITT-STAR-10GE-16; Caltech 7600; Chicago Starlight; UKLight 10G, four 1GE channels; Surfnet/EuroLink 10G, two 1GE channels; Amsterdam; ULCC UKLight; K2 and Ci switches; UCL network; UCL HEP; MB-NG 7600 OSR; Manchester.]

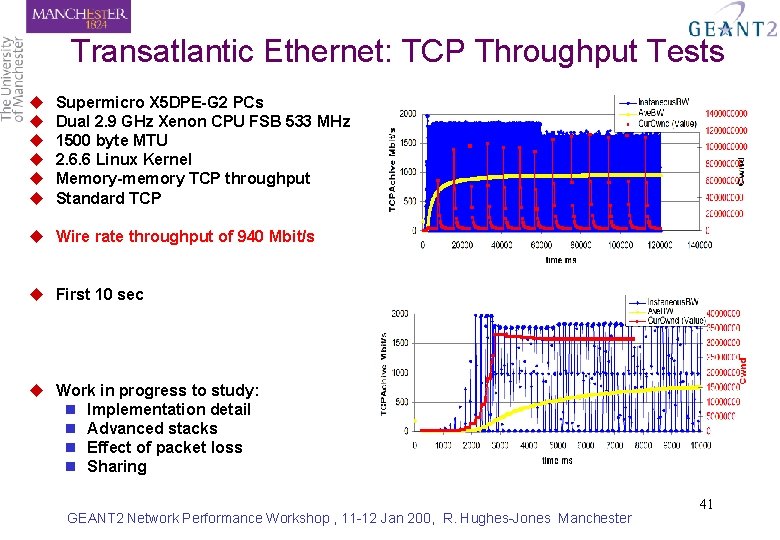

Transatlantic Ethernet: TCP Throughput Tests
- Supermicro X5DPE-G2 PCs
- Dual 2.9 GHz Xeon CPU, FSB 533 MHz
- 1500 byte MTU
- 2.6.6 Linux Kernel
- Memory-memory TCP throughput
- Standard TCP
- Wire rate throughput of 940 Mbit/s
- First 10 sec
- Work in progress to study:
  - Implementation detail
  - Advanced stacks
  - Effect of packet loss
  - Sharing

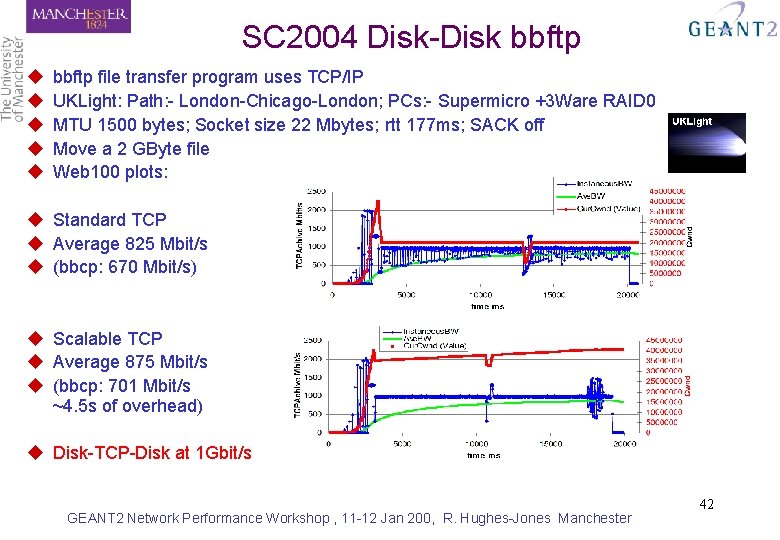

SC2004 Disk-Disk bbftp
- bbftp file transfer program uses TCP/IP
- UKLight path: London-Chicago-London; PCs: Supermicro + 3Ware RAID0
- MTU 1500 bytes; socket size 22 Mbytes; rtt 177 ms; SACK off
- Move a 2 GByte file
- Web100 plots:
  - Standard TCP: average 825 Mbit/s (bbcp: 670 Mbit/s)
  - Scalable TCP: average 875 Mbit/s (bbcp: 701 Mbit/s, ~4.5 s of overhead)
- Disk-TCP-Disk at 1 Gbit/s

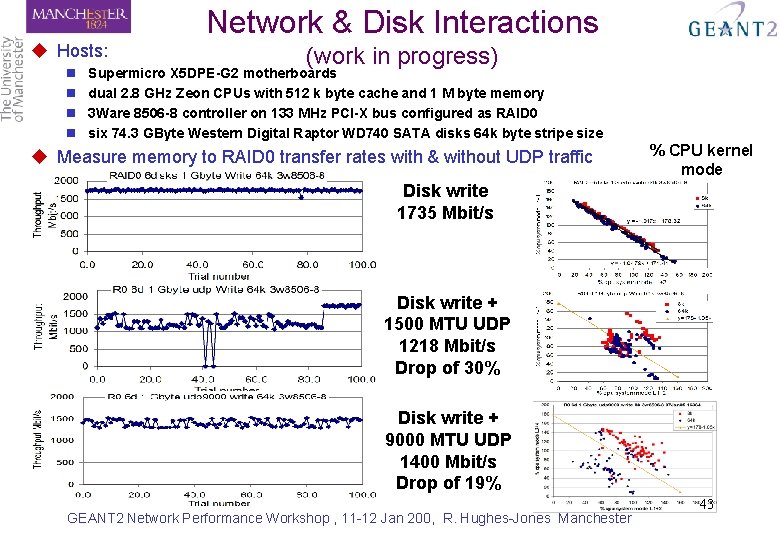

Network & Disk Interactions (work in progress)
- Hosts:
  - Supermicro X5DPE-G2 motherboards
  - dual 2.8 GHz Xeon CPUs with 512 kbyte cache and 1 Mbyte memory
  - 3Ware 8506-8 controller on 133 MHz PCI-X bus configured as RAID0
  - six 74.3 GByte Western Digital Raptor WD740 SATA disks, 64 kbyte stripe size
- Measure memory to RAID0 transfer rates with & without UDP traffic
  - Disk write: 1735 Mbit/s
  - Disk write + 1500 MTU UDP: 1218 Mbit/s, drop of 30%
  - Disk write + 9000 MTU UDP: 1400 Mbit/s, drop of 19%
[Figure: plots of % CPU kernel mode.]

Remote Computing Farms in the ATLAS TDAQ Experiment

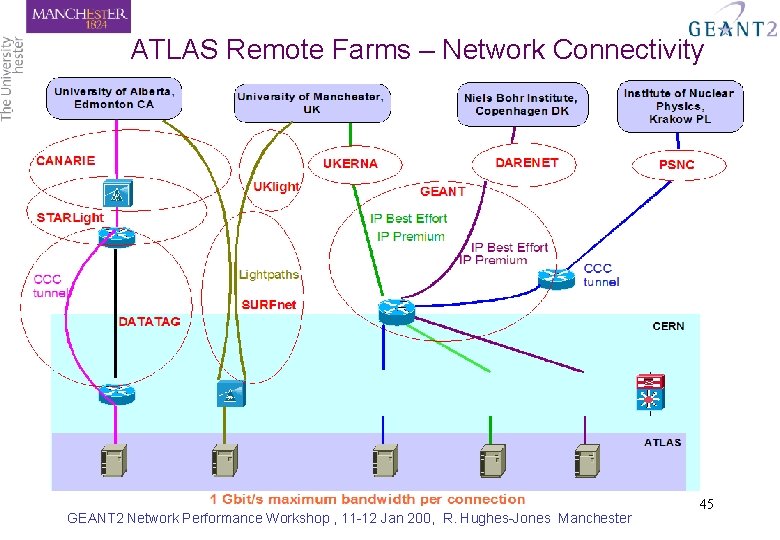

ATLAS Remote Farms – Network Connectivity

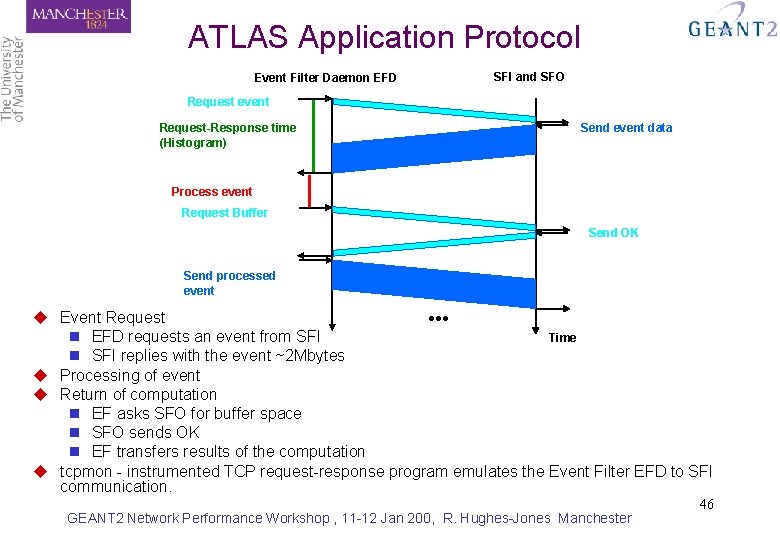

ATLAS Application Protocol
- Event Request
  - EFD requests an event from SFI
  - SFI replies with the event, ~2 Mbytes
- Processing of event
- Return of computation
  - EF asks SFO for buffer space
  - SFO sends OK
  - EF transfers results of the computation
- tcpmon - instrumented TCP request-response program emulates the Event Filter EFD to SFI communication.
[Figure: time sequence between the Event Filter Daemon (EFD) and SFI and SFO: request event, send event data, process event, request buffer, send OK, send processed event; request-response time (histogram).]

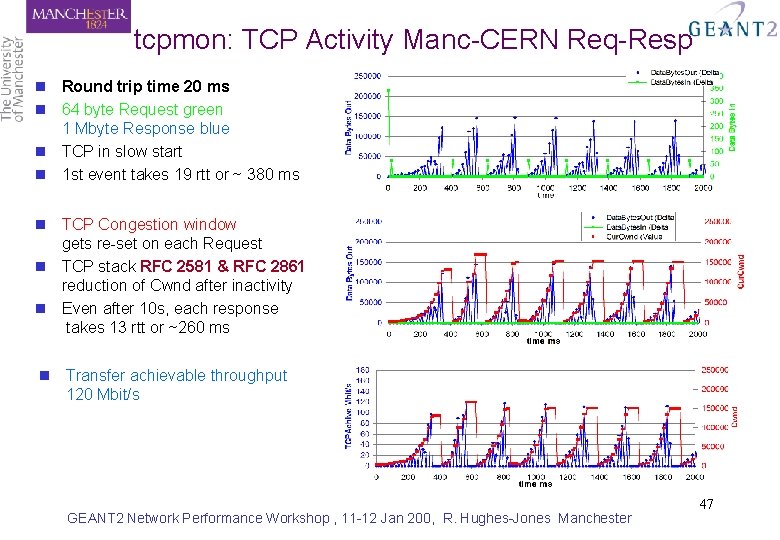

tcpmon: TCP Activity Manc-CERN Req-Resp
- Round trip time 20 ms
- 64 byte Request (green), 1 Mbyte Response (blue)
- TCP in slow start
- 1st event takes 19 rtt or ~380 ms
- TCP Congestion window gets re-set on each Request
  - TCP stack RFC 2581 & RFC 2861 reduction of cwnd after inactivity
- Even after 10 s, each response takes 13 rtt or ~260 ms
- Transfer achievable throughput 120 Mbit/s

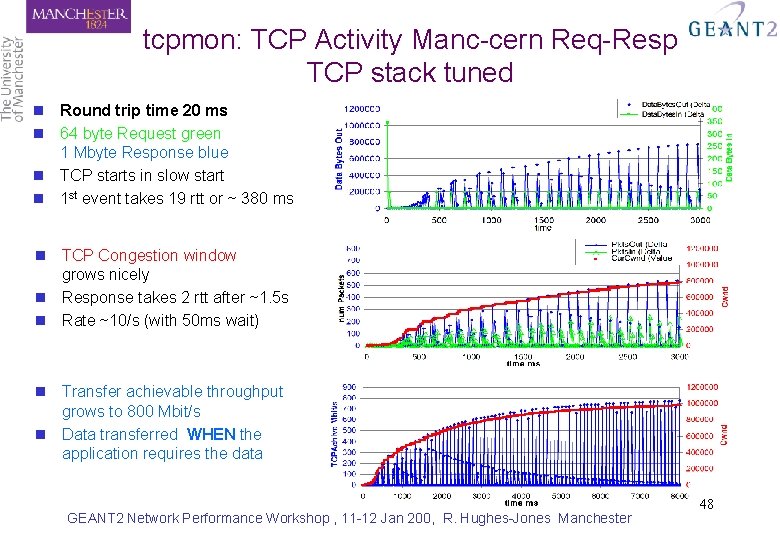

tcpmon: TCP Activity Manc-CERN Req-Resp, TCP stack tuned
- Round trip time 20 ms
- 64 byte Request (green), 1 Mbyte Response (blue)
- TCP starts in slow start
- 1st event takes 19 rtt or ~380 ms
- TCP Congestion window grows nicely
- Response takes 2 rtt after ~1.5 s
- Rate ~10/s (with 50 ms wait)
- Transfer achievable throughput grows to 800 Mbit/s
- Data transferred WHEN the application requires the data

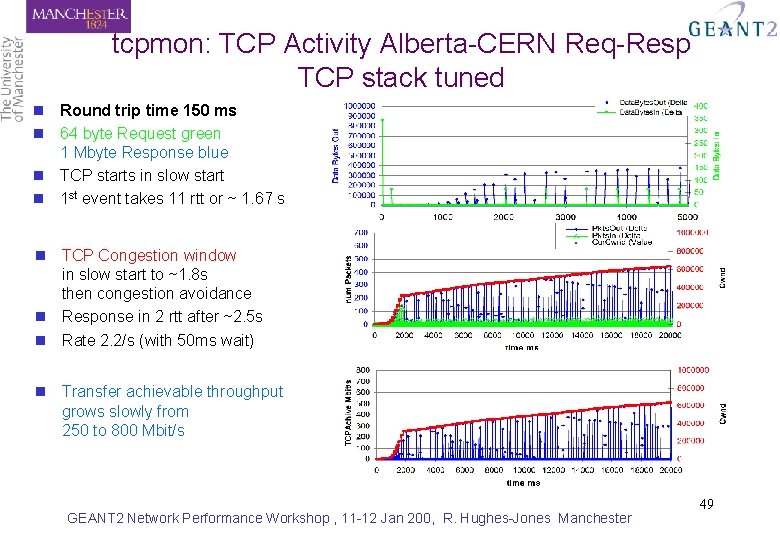

tcpmon: TCP Activity Alberta-CERN Req-Resp, TCP stack tuned
- Round trip time 150 ms
- 64 byte Request (green), 1 Mbyte Response (blue)
- TCP starts in slow start
- 1st event takes 11 rtt or ~1.67 s
- TCP Congestion window in slow start to ~1.8 s, then congestion avoidance
- Response in 2 rtt after ~2.5 s
- Rate 2.2/s (with 50 ms wait)
- Transfer achievable throughput grows slowly from 250 to 800 Mbit/s

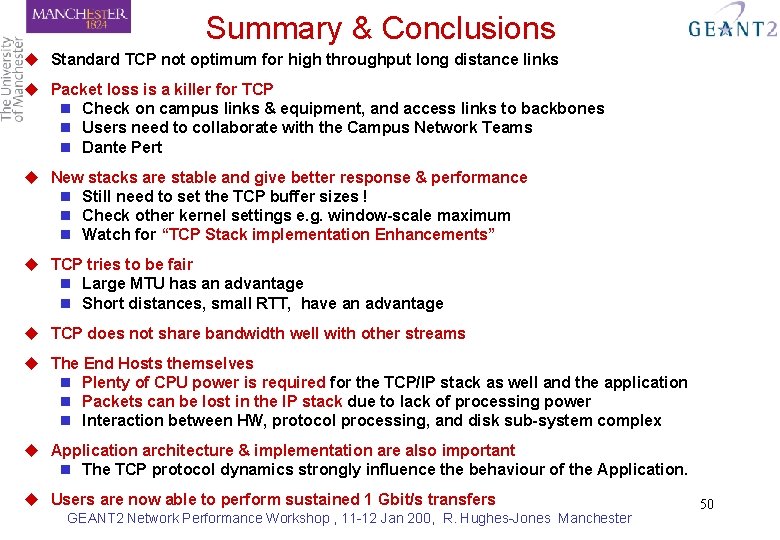

Summary & Conclusions
- Standard TCP not optimum for high throughput long distance links
- Packet loss is a killer for TCP
  - Check on campus links & equipment, and access links to backbones
  - Users need to collaborate with the Campus Network Teams
  - Dante PERT
- New stacks are stable and give better response & performance
  - Still need to set the TCP buffer sizes!
  - Check other kernel settings, e.g. window-scale maximum
  - Watch for “TCP Stack implementation Enhancements”
- TCP tries to be fair
  - Large MTU has an advantage
  - Short distances, small RTT, have an advantage
- TCP does not share bandwidth well with other streams
- The End Hosts themselves
  - Plenty of CPU power is required for the TCP/IP stack as well as the application
  - Packets can be lost in the IP stack due to lack of processing power
  - Interaction between HW, protocol processing, and disk sub-system is complex
- Application architecture & implementation are also important
  - The TCP protocol dynamics strongly influence the behaviour of the application.
- Users are now able to perform sustained 1 Gbit/s transfers

More Information: Some URLs 1
- UKLight web site: http://www.uklight.ac.uk
- MB-NG project web site: http://www.mb-ng.net/
- DataTAG project web site: http://www.datatag.org/
- UDPmon / TCPmon kit + writeup: http://www.hep.man.ac.uk/~rich/net
- Motherboard and NIC Tests: http://www.hep.man.ac.uk/~rich/net/nic/GigEth_tests_Boston.ppt & http://datatag.web.cern.ch/datatag/pfldnet2003/
  - “Performance of 1 and 10 Gigabit Ethernet Cards with Server Quality Motherboards”, FGCS Special issue 2004, http://www.hep.man.ac.uk/~rich/
- TCP tuning information may be found at: http://www.ncne.nlanr.net/documentation/faq/performance.html & http://www.psc.edu/networking/perf_tune.html
- TCP stack comparisons: “Evaluation of Advanced TCP Stacks on Fast Long-Distance Production Networks”, Journal of Grid Computing 2004
- PFLDnet: http://www.ens-lyon.fr/LIP/RESO/pfldnet2005/
- Dante PERT: http://www.geant2.net/server/show/nav.00d00h002

More Information: Some URLs 2
- Lectures, tutorials etc. on TCP/IP:
  - www.nv.cc.va.us/home/joney/tcp_ip.htm
  - www.cs.pdx.edu/~jrb/tcpip.lectures.html
  - www.raleigh.ibm.com/cgi-bin/bookmgr/BOOKS/EZ306200/CCONTENTS
  - www.cisco.com/univercd/cc/td/doc/product/iaabu/centri4/user/scf4ap1.htm
  - www.cis.ohio-state.edu/htbin/rfc1180.html
  - www.jbmelectronics.com/tcp.htm
- Encyclopaedia
  - http://www.freesoft.org/CIE/index.htm
- TCP/IP Resources
  - www.private.org.il/tcpip_rl.html
- Understanding IP addresses
  - http://www.3com.com/solutions/en_US/ncs/501302.html
- Configuring TCP (RFC 1122)
  - ftp://nic.merit.edu/internet/documents/rfc1122.txt
- Assigned protocols, ports etc. (RFC 1010)
  - http://www.es.net/pub/rfcs/rfc1010.txt & /etc/protocols

Any Questions?

Backup Slides

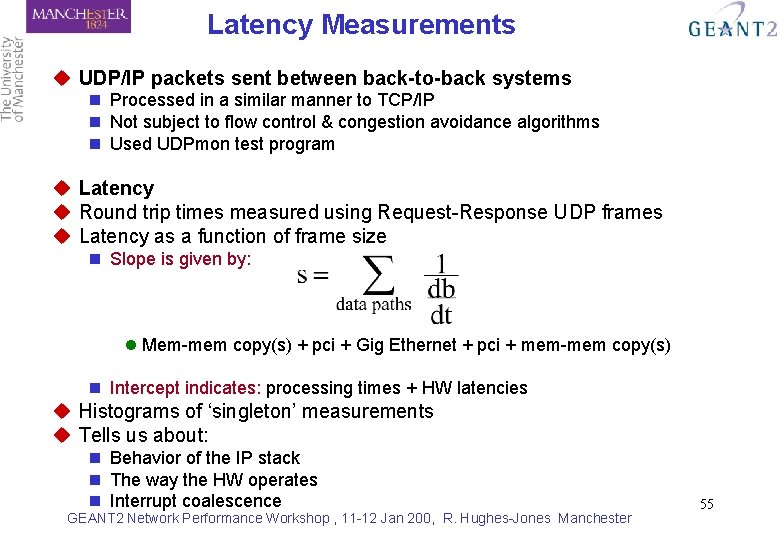

Latency Measurements
- UDP/IP packets sent between back-to-back systems
  - Processed in a similar manner to TCP/IP
  - Not subject to flow control & congestion avoidance algorithms
  - Used UDPmon test program
- Latency
- Round trip times measured using Request-Response UDP frames
- Latency as a function of frame size
  - Slope is given by: mem-mem copy(s) + pci + Gig Ethernet + pci + mem-mem copy(s)
  - Intercept indicates: processing times + HW latencies
- Histograms of ‘singleton’ measurements
- Tells us about:
  - Behavior of the IP stack
  - The way the HW operates
  - Interrupt coalescence

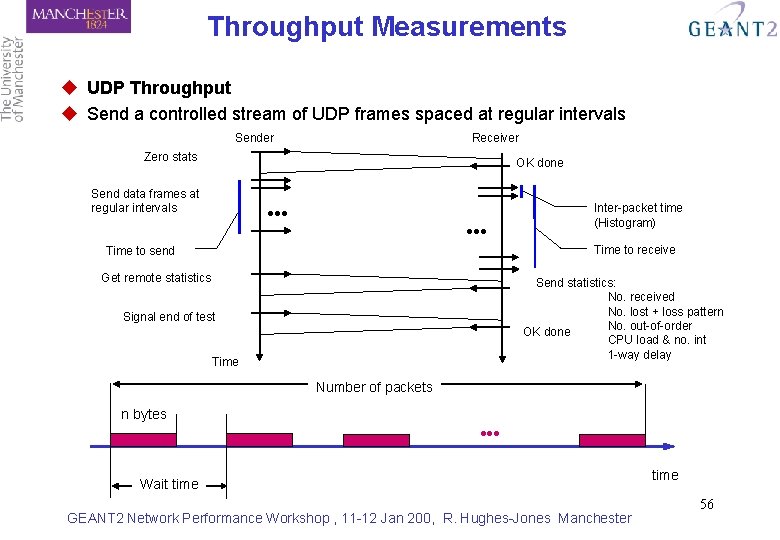

Throughput Measurements
- UDP Throughput
- Send a controlled stream of UDP frames spaced at regular intervals
[Figure: time sequence between Sender and Receiver: zero stats (OK done); send data frames at regular intervals (number of packets, n bytes, wait time), recording time to send, time to receive and inter-packet time (histogram); signal end of test (OK done); get remote statistics; receiver sends statistics: no. received, no. lost + loss pattern, no. out-of-order, CPU load & no. int, 1-way delay.]

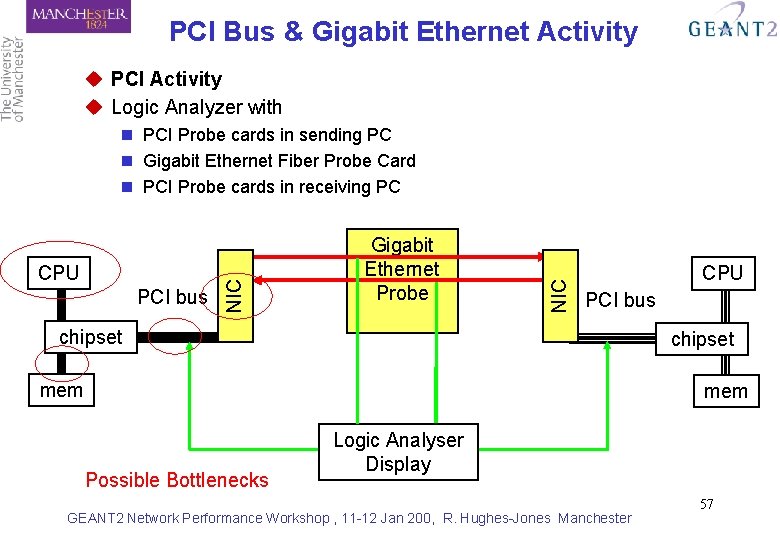

PCI Bus & Gigabit Ethernet Activity
- PCI Activity
- Logic Analyzer with:
  - PCI Probe cards in sending PC
  - Gigabit Ethernet Fiber Probe Card
  - PCI Probe cards in receiving PC
[Figure: two PCs, each with CPU, chipset, mem, PCI bus and NIC, joined by Gigabit Ethernet with a probe; possible bottlenecks marked; logic analyser display.]

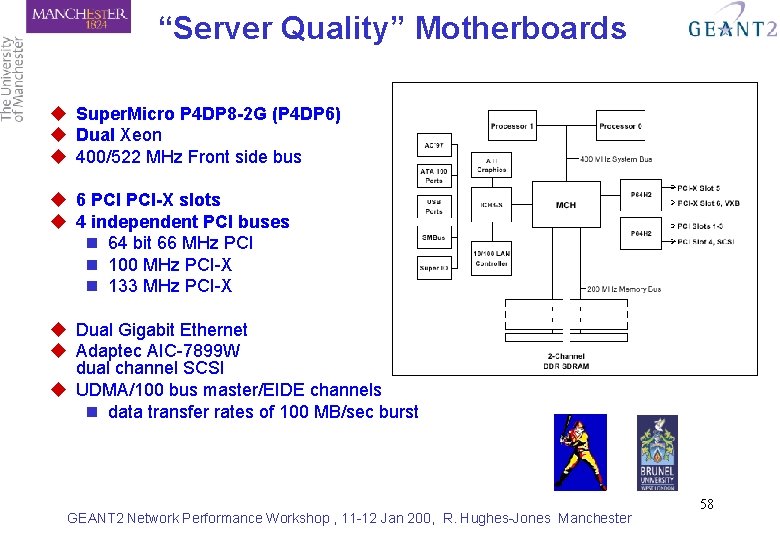

“Server Quality” Motherboards
- SuperMicro P4DP8-2G (P4DP6)
- Dual Xeon
- 400/522 MHz Front side bus
- 6 PCI-X slots
- 4 independent PCI buses
  - 64 bit 66 MHz PCI
  - 100 MHz PCI-X
  - 133 MHz PCI-X
- Dual Gigabit Ethernet
- Adaptec AIC-7899W dual channel SCSI
- UDMA/100 bus master/EIDE channels
  - data transfer rates of 100 MB/sec burst

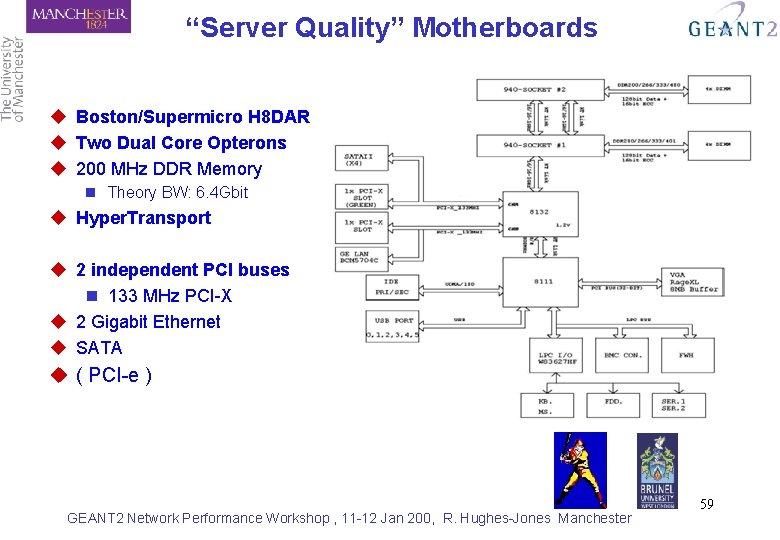

“Server Quality” Motherboards
- Boston/Supermicro H8DAR
- Two Dual Core Opterons
- 200 MHz DDR Memory
  - Theory BW: 6.4 Gbit
- HyperTransport
- 2 independent PCI buses
  - 133 MHz PCI-X
- 2 Gigabit Ethernet
- SATA
- (PCI-e)

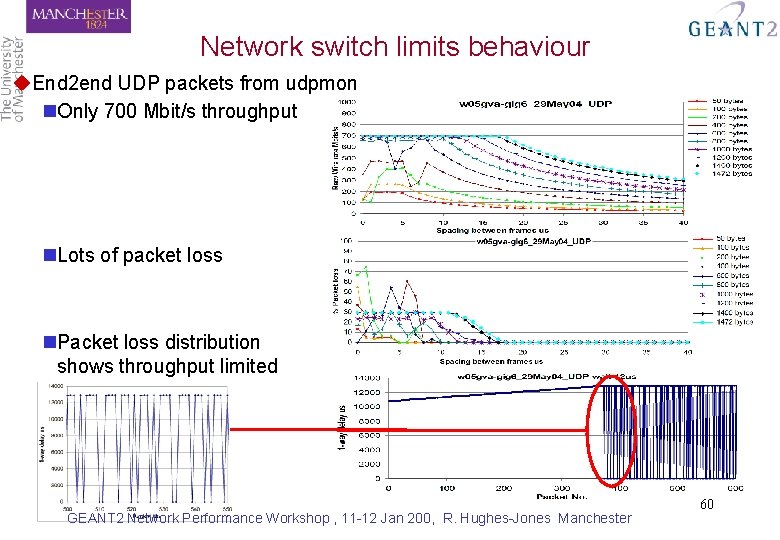

Network switch limits behaviour
- End2end UDP packets from udpmon
  - Only 700 Mbit/s throughput
  - Lots of packet loss
  - Packet loss distribution shows throughput limited

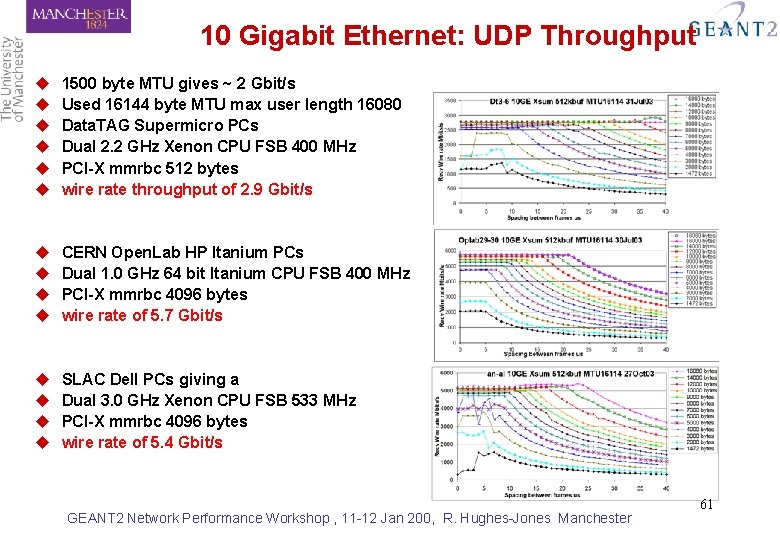

10 Gigabit Ethernet: UDP Throughput
- 1500 byte MTU gives ~2 Gbit/s
- Used 16144 byte MTU, max user length 16080
- DataTAG Supermicro PCs
  - Dual 2.2 GHz Xeon CPU, FSB 400 MHz
  - PCI-X mmrbc 512 bytes
  - wire rate throughput of 2.9 Gbit/s
- CERN OpenLab HP Itanium PCs
  - Dual 1.0 GHz 64 bit Itanium CPU, FSB 400 MHz
  - PCI-X mmrbc 4096 bytes
  - wire rate of 5.7 Gbit/s
- SLAC Dell PCs
  - Dual 3.0 GHz Xeon CPU, FSB 533 MHz
  - PCI-X mmrbc 4096 bytes
  - giving a wire rate of 5.4 Gbit/s

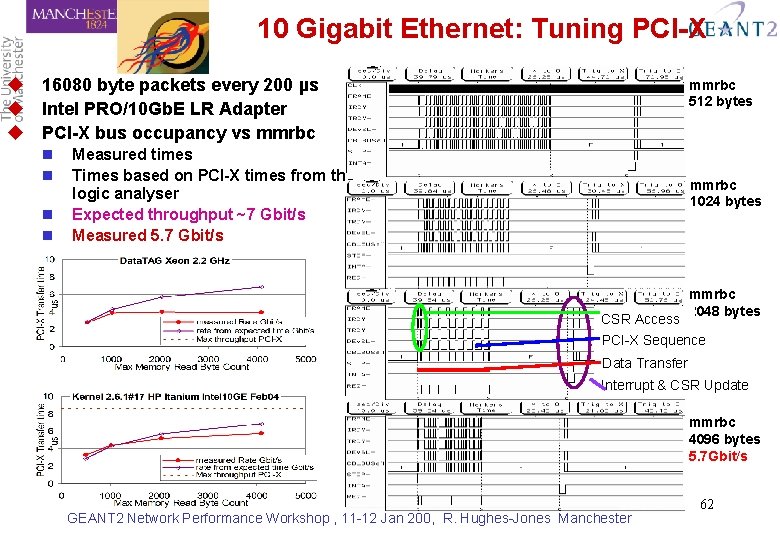

10 Gigabit Ethernet: Tuning PCI-X
- 16080 byte packets every 200 µs
- Intel PRO/10GbE LR Adapter
- PCI-X bus occupancy vs mmrbc
  - Measured times
  - Times based on PCI-X times from the logic analyser
  - Expected throughput ~7 Gbit/s
  - Measured 5.7 Gbit/s
[Figure: logic analyser traces for mmrbc 512, 1024, 2048 and 4096 bytes (5.7 Gbit/s at 4096 bytes), showing the PCI-X sequence: CSR access, data transfer, interrupt & CSR update.]

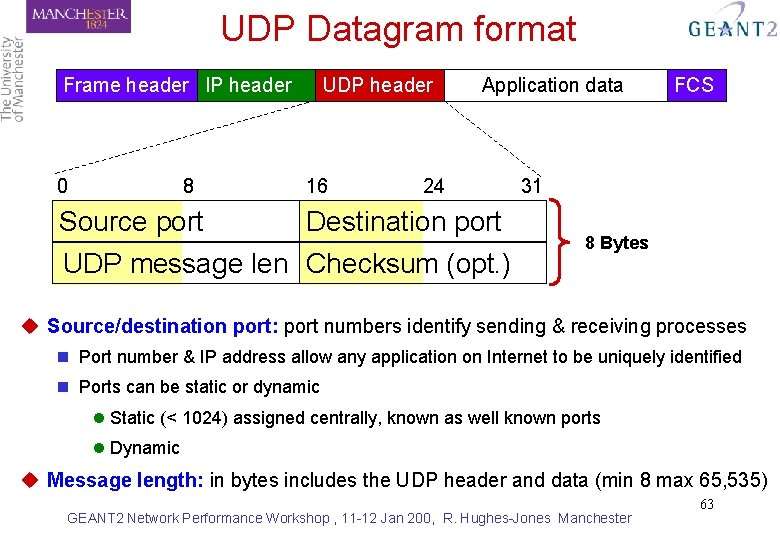

UDP Datagram format
Frame layout: Frame header | IP header | UDP header | Application data | FCS
UDP header fields (bit positions 0 to 31, 8 bytes):
- Source port, Destination port
- UDP message len, Checksum (opt.)
Field descriptions:
- Source/destination port: port numbers identify sending & receiving processes
  - Port number & IP address allow any application on Internet to be uniquely identified
  - Ports can be static or dynamic
    - Static (< 1024) assigned centrally, known as well known ports
    - Dynamic
- Message length: in bytes, includes the UDP header and data (min 8, max 65,535)
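
The 8-byte header described above maps directly onto four 16-bit fields. The following is a minimal sketch of packing and unpacking it; the function names are hypothetical, and the checksum is simply left at zero, which UDP over IPv4 permits as "not computed".

```python
import struct

# source port, destination port, message length, checksum (network byte order)
UDP_HEADER = struct.Struct("!HHHH")


def build_udp_datagram(src_port: int, dst_port: int, payload: bytes) -> bytes:
    """Prepend an 8-byte UDP header to `payload` (checksum left at 0)."""
    length = UDP_HEADER.size + len(payload)   # header + data, min 8
    if length > 65535:
        raise ValueError("UDP message length is limited to 65,535 bytes")
    return UDP_HEADER.pack(src_port, dst_port, length, 0) + payload


def parse_udp_datagram(datagram: bytes) -> dict:
    """Split a UDP datagram into its header fields and payload."""
    if len(datagram) < UDP_HEADER.size:
        raise ValueError("need at least 8 bytes for a UDP header")
    src, dst, length, checksum = UDP_HEADER.unpack_from(datagram)
    return {
        "src_port": src,
        "dst_port": dst,
        "length": length,
        "checksum": checksum,
        "payload": datagram[UDP_HEADER.size:length],
    }
```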

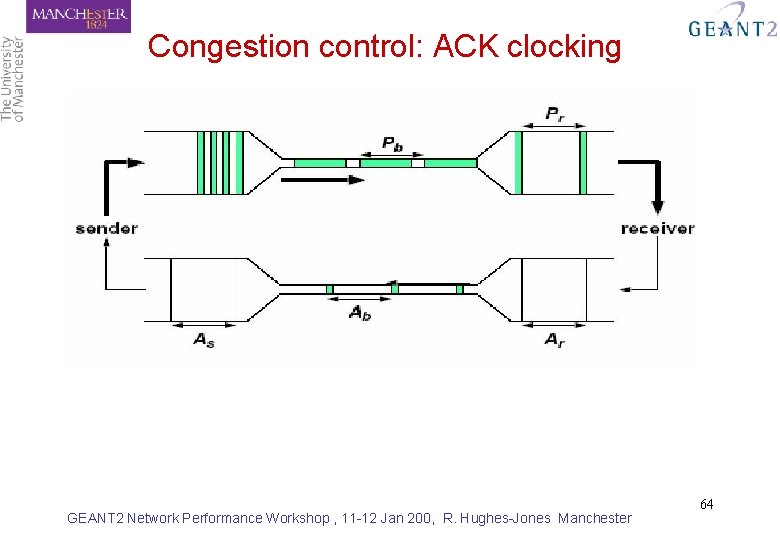

Congestion control: ACK clocking

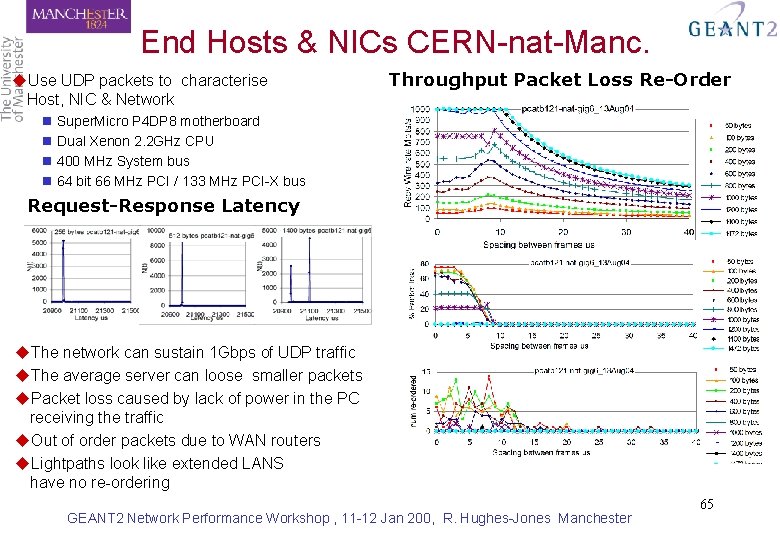

End Hosts & NICs: CERN-nat-Manc.
- Use UDP packets to characterise Host, NIC & Network
  - SuperMicro P4DP8 motherboard
  - Dual Xeon 2.2 GHz CPU
  - 400 MHz System bus
  - 64 bit 66 MHz PCI / 133 MHz PCI-X bus
- The network can sustain 1 Gbps of UDP traffic
- The average server can lose smaller packets
- Packet loss caused by lack of power in the PC receiving the traffic
- Out of order packets due to WAN routers
- Lightpaths look like extended LANs: have no re-ordering
[Figure: plots of throughput, packet loss, re-order and request-response latency.]

tcpdump / tcptrace
- tcpdump: dump all TCP header information for a specified source/destination
  - ftp://ftp.ee.lbl.gov/
- tcptrace: format tcpdump output for analysis using xplot
  - http://www.tcptrace.org/
  - NLANR TCP Testrig: nice wrapper for tcpdump and tcptrace tools
    - http://www.ncne.nlanr.net/TCP/testrig/
- Sample use:
    tcpdump -s 100 -w /tmp/tcpdump.out hostname
    tcptrace -Sl /tmp/tcpdump.out
    xplot /tmp/a2b_tsg.xpl

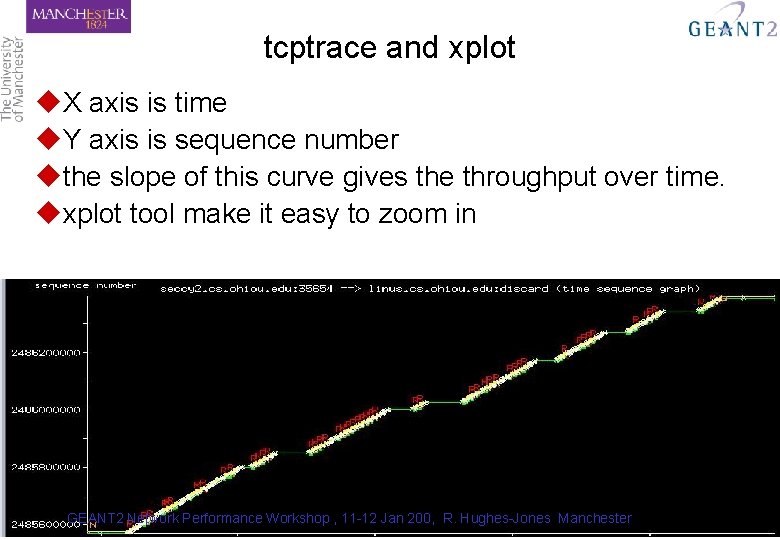

tcptrace and xplot
- X axis is time
- Y axis is sequence number
- The slope of this curve gives the throughput over time.
- The xplot tool makes it easy to zoom in
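
Reading throughput off the slope is a one-line calculation once the points are available. The sketch below assumes the time-sequence data has already been extracted as (time in seconds, sequence number in bytes) pairs; the function name and the sample values are hypothetical, not output from a real capture.

```python
def throughput_from_trace(samples) -> float:
    """Average throughput in bit/s between the first and last sample.

    samples -- sequence of (time_s, sequence_number_bytes) pairs, in time order
    """
    (t0, seq0), (t1, seq1) = samples[0], samples[-1]
    if t1 <= t0:
        raise ValueError("samples must span a positive time interval")
    # Slope of the time-sequence curve: bytes per second, converted to bits.
    return (seq1 - seq0) * 8 / (t1 - t0)


if __name__ == "__main__":
    # Illustrative points only.
    trace = [(0.0, 0), (1.0, 125_000), (2.0, 250_000)]
    print(throughput_from_trace(trace) / 1e6, "Mbit/s")
```

Applying the same function to a short window of samples gives the instantaneous rate, which is what zooming in with xplot shows graphically.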

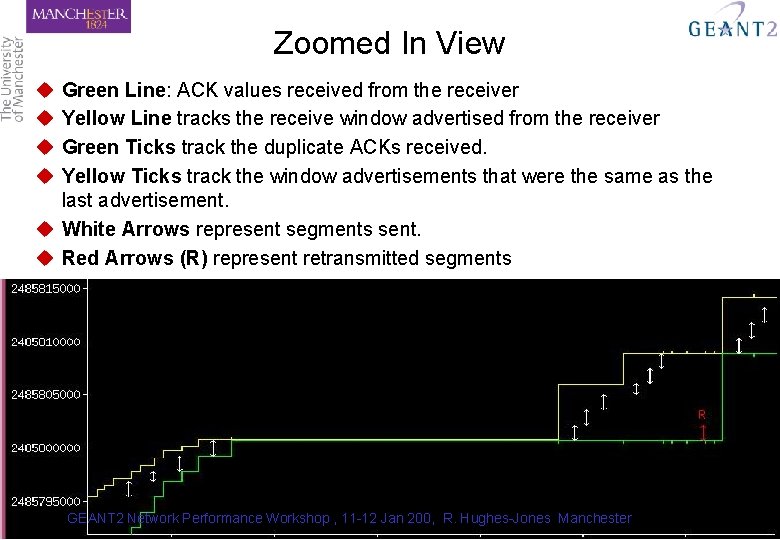

Zoomed In View
- Green Line: ACK values received from the receiver
- Yellow Line tracks the receive window advertised from the receiver
- Green Ticks track the duplicate ACKs received.
- Yellow Ticks track the window advertisements that were the same as the last advertisement.
- White Arrows represent segments sent.
- Red Arrows (R) represent retransmitted segments

TCP Slow Start
