TCAM Ternary Content Addressable Memory 張燕光 成大資

Introduction – TCAM
- Content-addressable memories (CAMs) complete a search operation in a single clock cycle.
- TCAM adds a third state, "*" or "don't care", which makes the search more flexible.
- The TCAM array stores rules in decreasing order of priority, which simplifies the priority encoder.

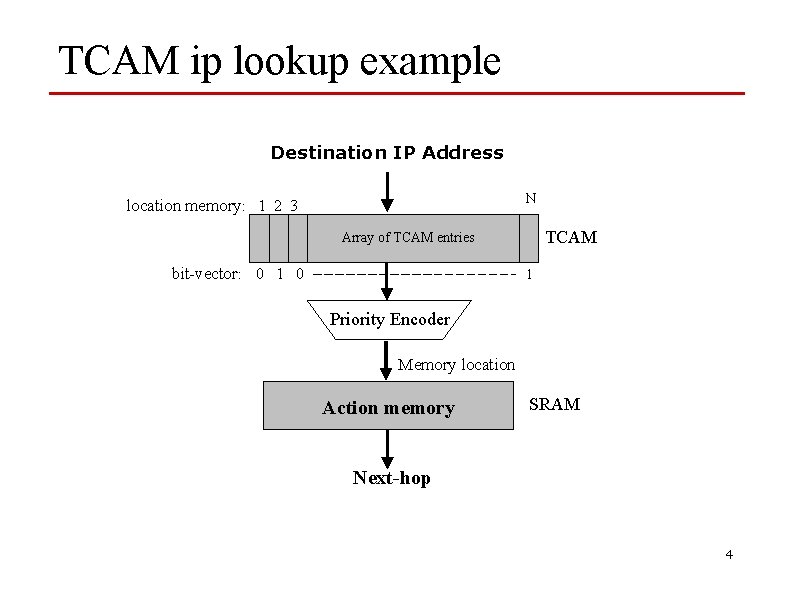

Introduction – TCAM
- The input key is compared against all TCAM entries in parallel.
  - For IP lookups, each TCAM entry is a prefix.
  - In general, each entry is a ternary string.
- The N-bit bit-vector indicates which rules match.
- The N-bit priority encoder outputs the address of the matching entry with the highest priority. That address is used as an index into an array in SRAM to find the action associated with the matching entry.
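The lookup path described above can be sketched in software. This is only an illustrative model, not the hardware: the names `ternary_match` and `tcam_lookup` and the sample entries are assumptions made for the example, and the parallel compare is simulated by a loop.

```python
def ternary_match(entry: str, key: str) -> bool:
    """True if every entry symbol is '*' or equals the corresponding key bit."""
    return len(entry) == len(key) and all(e in ("*", k) for e, k in zip(entry, key))


def tcam_lookup(entries, actions, key):
    """Return (match bit-vector, matched address, action) for a search key.

    entries: ternary strings stored in decreasing priority (index 0 is highest).
    actions: the SRAM array, indexed by TCAM address.
    """
    match_vector = [int(ternary_match(e, key)) for e in entries]
    if 1 not in match_vector:
        return match_vector, None, None
    address = match_vector.index(1)  # priority encoder: lowest matching address wins
    return match_vector, address, actions[address]


# Hypothetical 4-bit table, longest prefixes first.
entries = ["1001", "011*", "01**", "1***"]
actions = ["port A", "port B", "port C", "port D"]
print(tcam_lookup(entries, actions, "0110"))
```

Storing the entries in decreasing priority is what lets the priority encoder reduce to "first set bit in the match vector".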

TCAM IP lookup example
- (Figure: the destination IP address is searched against the array of TCAM entries; the resulting bit-vector feeds the priority encoder, whose output memory location indexes the action memory in SRAM to give the next hop.)

Types of CAMs
- Binary CAM (BCAM or CAM) stores only 0s and 1s.
  - Applications: MAC table lookup; Layer 2 security-related VPN segregation.
- Ternary CAM (TCAM) stores 0, 1, and *.
  - Applications: cases that need wildcards, such as Layer 3 and 4 classification for QoS and CoS purposes and IP routing (longest prefix matching).
- Available sizes: 1 Mb, 2 Mb, 4.7 Mb, 9.4 Mb, 18.8 Mb, 20 Mb, 36 Mb, and 40 Mb.
- 50, 100, 360 MPP
- CAM entries are structured as multiples of 36 to 40 bits rather than 32 bits.

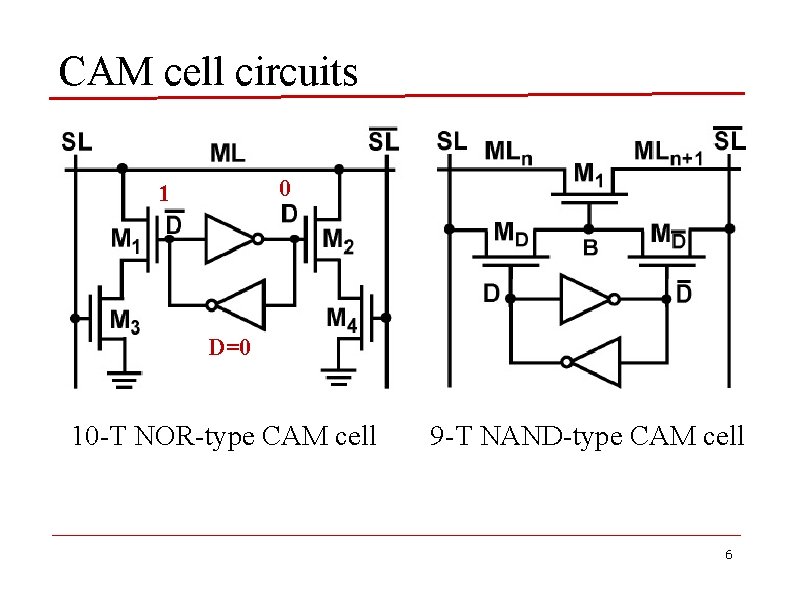

CAM cell circuits
- (Figure: 10-T NOR-type CAM cell and 9-T NAND-type CAM cell.)

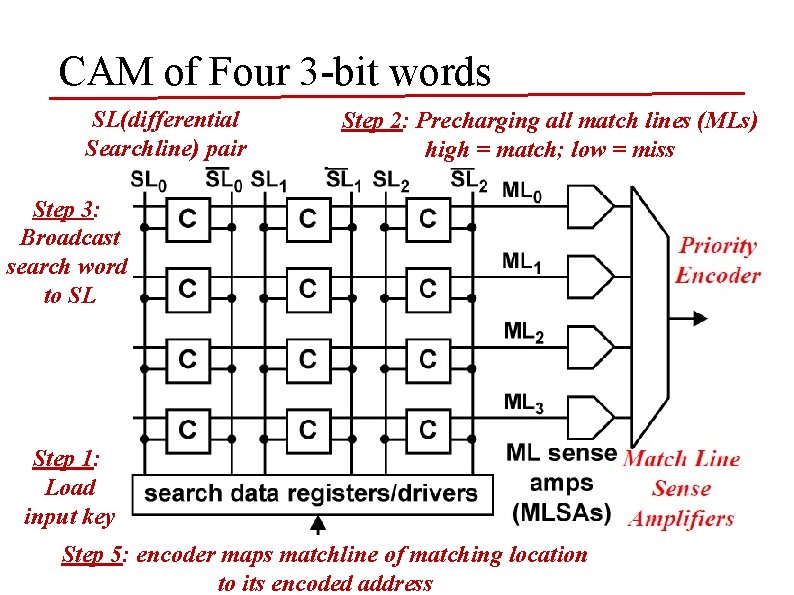

CAM of four 3-bit words
- Each bit column has a differential searchline (SL) pair. On a matchline (ML), high = match and low = miss.
- Step 1: Load the input key.
- Step 2: Precharge all matchlines (MLs) high.
- Step 3: Broadcast the search word onto the SLs.
- Step 4: Perform the cell comparisons.
- Step 5: The encoder maps the matchline of the matching location to its encoded address.

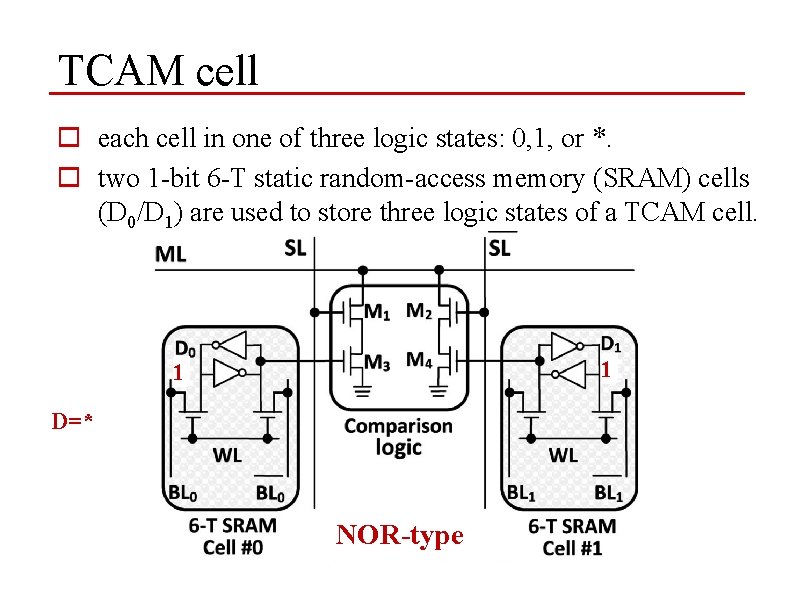

TCAM cell
- Each cell is in one of three logic states: 0, 1, or *.
- Two 1-bit 6-T static random-access memory (SRAM) cells (D0/D1) store the three logic states of a TCAM cell.
- (Figure: NOR-type TCAM cell.)

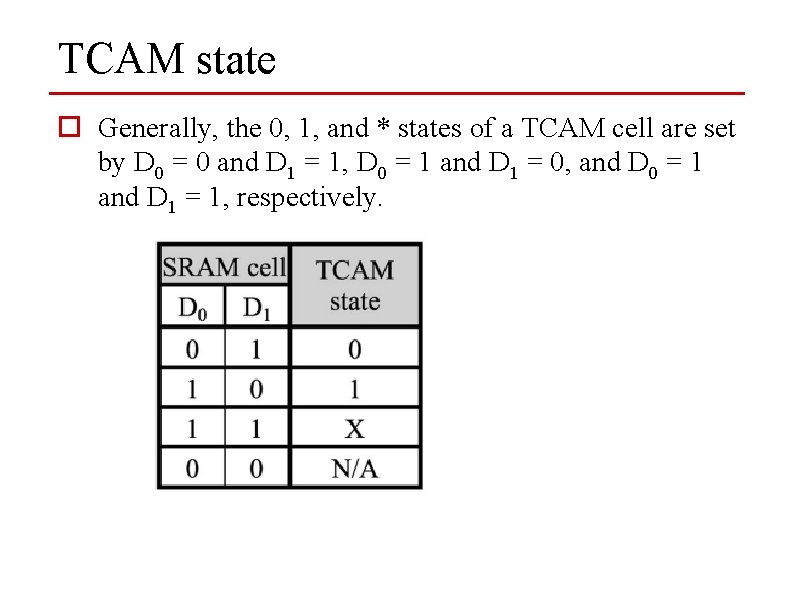

TCAM state
- Generally, the 0, 1, and * states of a TCAM cell are set by D0 = 0 and D1 = 1, D0 = 1 and D1 = 0, and D0 = 1 and D1 = 1, respectively.

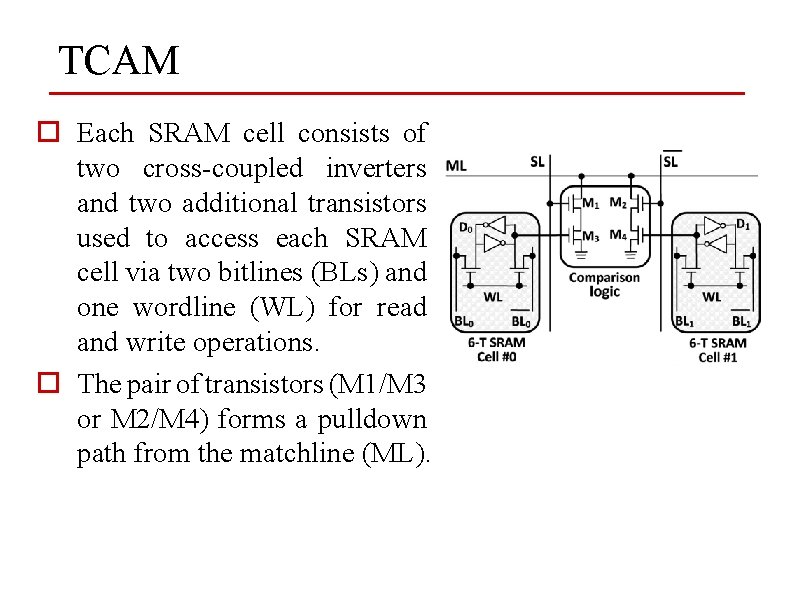

TCAM
- Each SRAM cell consists of two cross-coupled inverters and two additional transistors that give access to the cell via two bitlines (BLs) and one wordline (WL) for read and write operations.
- Each pair of transistors (M1/M3 or M2/M4) forms a pulldown path from the matchline (ML).

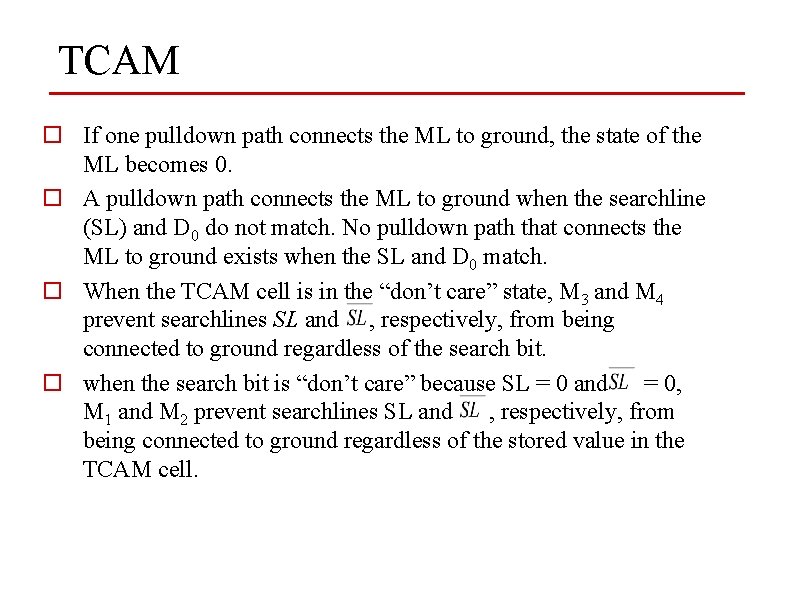

TCAM
- If any pulldown path connects the ML to ground, the state of the ML becomes 0.
- A pulldown path connects the ML to ground when the searchline (SL) and D0 do not match. No pulldown path to ground exists when the SL and D0 match.
- When the TCAM cell is in the "don't care" state, M3 and M4 prevent searchlines SL and $\overline{SL}$, respectively, from being connected to ground regardless of the search bit.
- When the search bit is "don't care" (SL = 0 and $\overline{SL}$ = 0), M1 and M2 prevent searchlines SL and $\overline{SL}$, respectively, from being connected to ground regardless of the value stored in the TCAM cell.

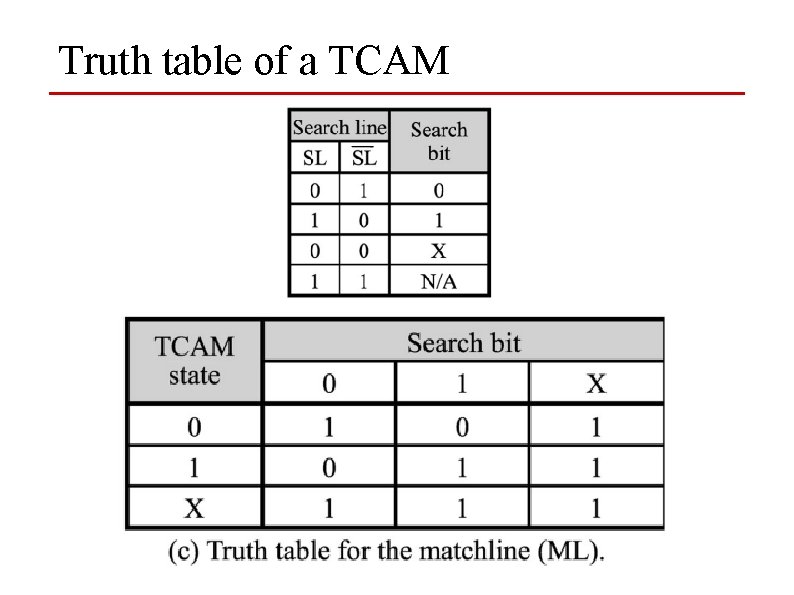

Truth table of a TCAM

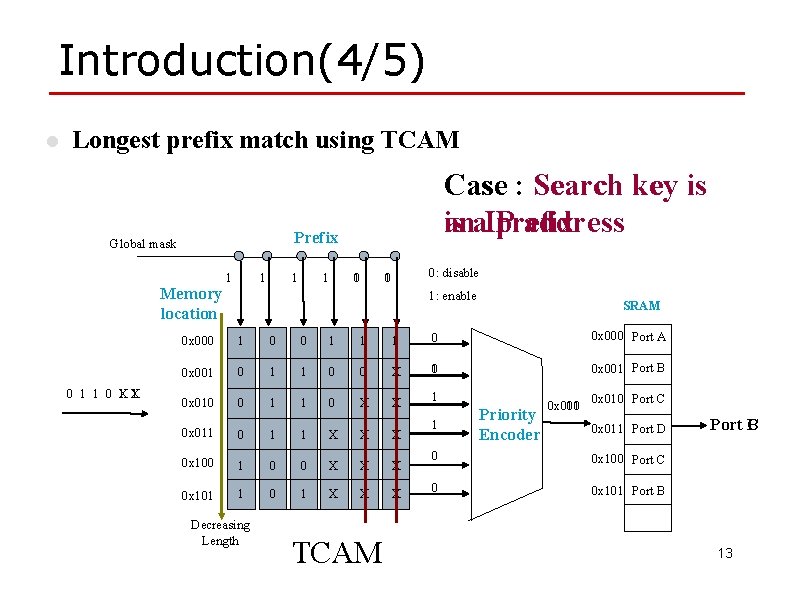

Introduction (4/5)
- Longest prefix match using TCAM: the search key is an IP address. Prefixes are stored in order of decreasing length, a global mask (0: disable, 1: enable) selects the compared bits, and the priority encoder passes the first matching memory location to the SRAM, which returns the output port.

| Memory location | TCAM prefix | SRAM entry |
|---|---|---|
| 0x000 | 100111 | Port A |
| 0x001 | 01100X | Port B |
| 0x010 | 0110XX | Port C |
| 0x011 | 011XXX | Port D |
| 0x100 | 100XXX | Port C |
| 0x101 | 101XXX | Port B |

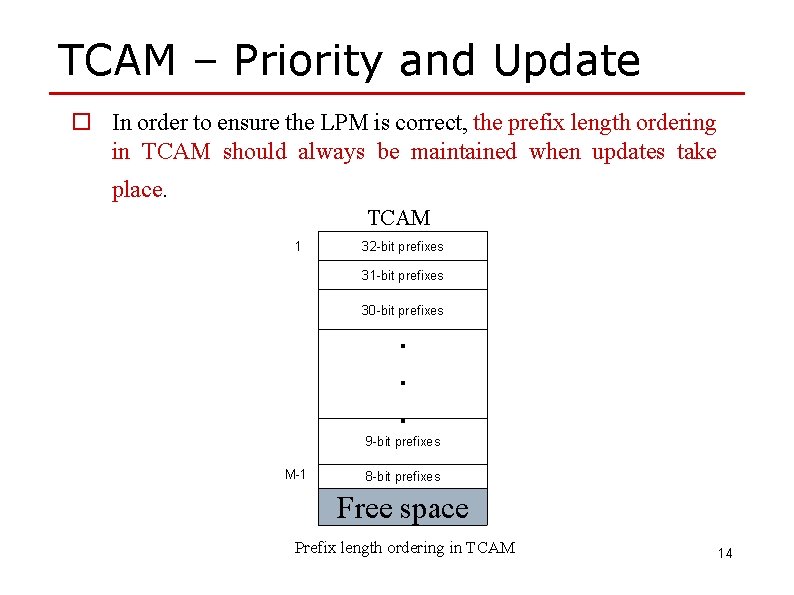

TCAM – Priority and Update
- To keep longest prefix matching (LPM) correct, the prefix-length ordering in the TCAM must be maintained whenever updates take place.
- (Figure: prefix-length ordering in TCAM. Locations 1 to M-1 hold the 32-bit prefixes, 31-bit prefixes, 30-bit prefixes, ..., 9-bit prefixes, and 8-bit prefixes, followed by free space.)
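One common way to preserve this ordering on insertion is to bubble a free slot upward, moving one entry per length group. The sketch below is a simplified illustration under stated assumptions: prefixes are bit strings whose length is the prefix length, free slots are `None` at the bottom of the array, and the helper name `plo_insert` is hypothetical.

```python
def plo_insert(tcam, prefix):
    """Insert `prefix` while keeping entries sorted by decreasing length.

    tcam: list of bit-string prefixes (longest first) followed by None free slots.
    Returns the number of existing entries that had to be moved.
    """
    hole = tcam.index(None)  # first free slot; raises ValueError if the TCAM is full
    moves = 0
    # For each group of prefixes shorter than the new one, move the group's
    # first entry into the hole, so the hole climps to the top of that group.
    while hole > 0 and len(tcam[hole - 1]) < len(prefix):
        group_len = len(tcam[hole - 1])
        first = hole - 1
        while first > 0 and len(tcam[first - 1]) == group_len:
            first -= 1
        tcam[hole] = tcam[first]
        hole = first
        moves += 1
    tcam[hole] = prefix
    return moves


tcam = ["1010", "0110", "101", "011", "10", "01", "1", None, None]
moved = plo_insert(tcam, "110")
print(moved, tcam)
```

Because entries of equal length may appear in any order, only one entry per shorter length group moves, rather than every entry below the insertion point.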

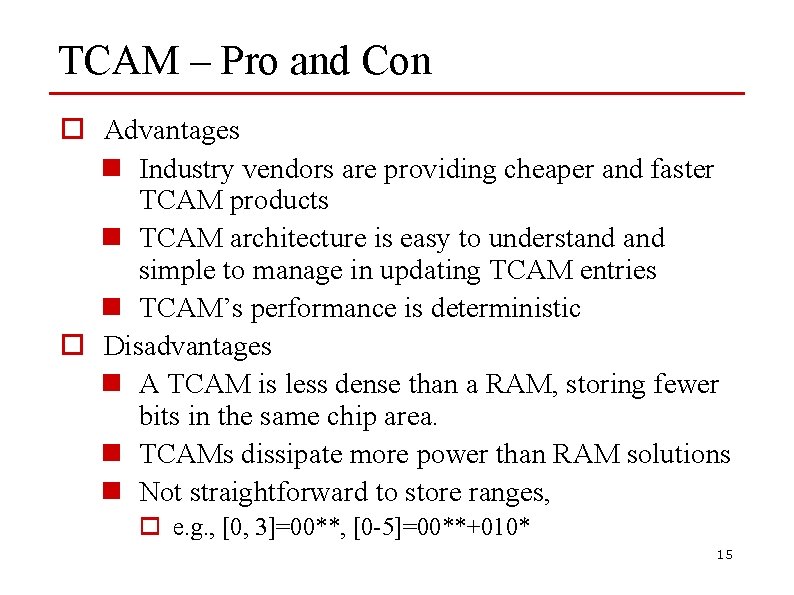

TCAM – Pro and Con
- Advantages
  - Industry vendors are providing cheaper and faster TCAM products.
  - The TCAM architecture is easy to understand, and updating TCAM entries is simple to manage.
  - TCAM performance is deterministic.
- Disadvantages
  - A TCAM is less dense than a RAM, storing fewer bits in the same chip area.
  - TCAMs dissipate more power than RAM-based solutions.
  - Ranges are not straightforward to store, e.g., [0, 3] = 00** and [0, 5] = 00** + 010*.

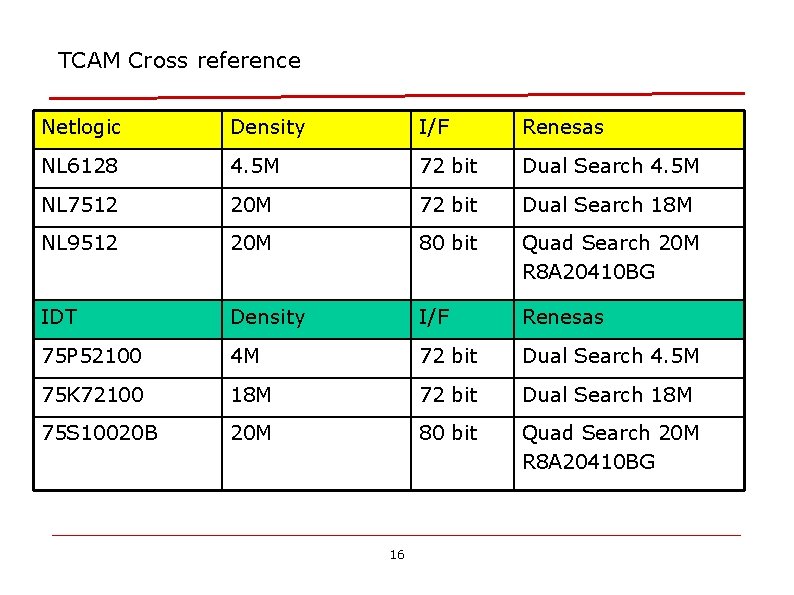

TCAM cross reference

| Netlogic | Density | I/F | Renesas |
|---|---|---|---|
| NL6128 | 4.5M | 72 bit Dual Search | 4.5M |
| NL7512 | 20M | 72 bit Dual Search | 18M |
| NL9512 | 20M | 80 bit Quad Search | 20M R8A20410BG |

| IDT | Density | I/F | Renesas |
|---|---|---|---|
| 75P52100 | 4M | 72 bit Dual Search | 4.5M |
| 75K72100 | 18M | 72 bit Dual Search | 18M |
| 75S10020B | 20M | 80 bit Quad Search | 20M R8A20410BG |

CoolCAMs
- CoolCAM: architectures and algorithms for making TCAM-based routing tables more power-efficient.
- TCAM vendors provide a mechanism to reduce power by selectively addressing smaller portions of the TCAM.
  - The TCAM is divided into a set of blocks; each block is a contiguous, fixed-size chunk of TCAM entries.
  - For example, a 512k-entry TCAM could be divided into 64 blocks of 8k entries each.
- When a search command is issued, it is possible to specify which block(s) to use in the search.
- This saves power, since TCAM power consumption is proportional to the number of entries searched.

CoolCAMs
- Observation: most prefixes in core routing tables are between 16 and 24 bits long.
- The very short (<16 bit) and very long (>24 bit) prefixes are put in a set of TCAM blocks that is searched on every lookup.
- The remaining prefixes are partitioned into "buckets", one of which is selected by hashing for each lookup.
  - Each bucket is laid out over one or more TCAM blocks.
- The hashing function is restricted to using a selected set of input bits as an index.

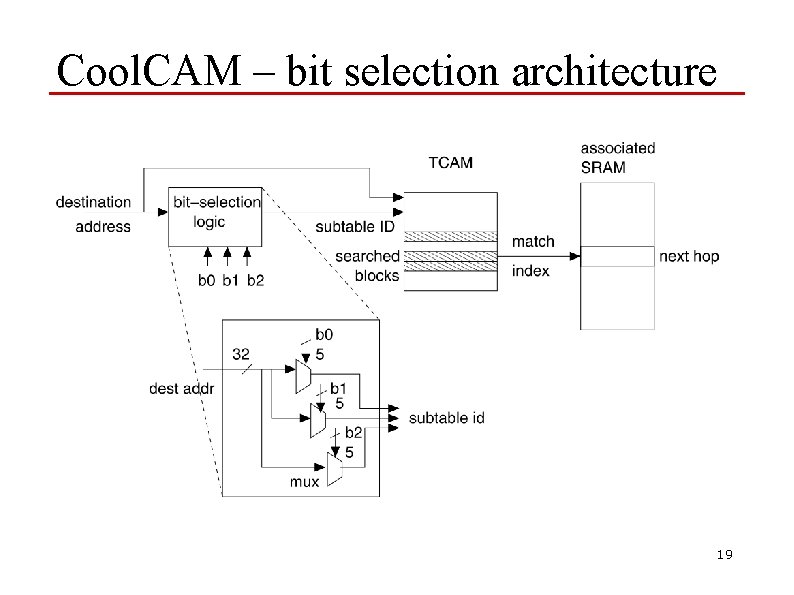

CoolCAM – bit selection architecture

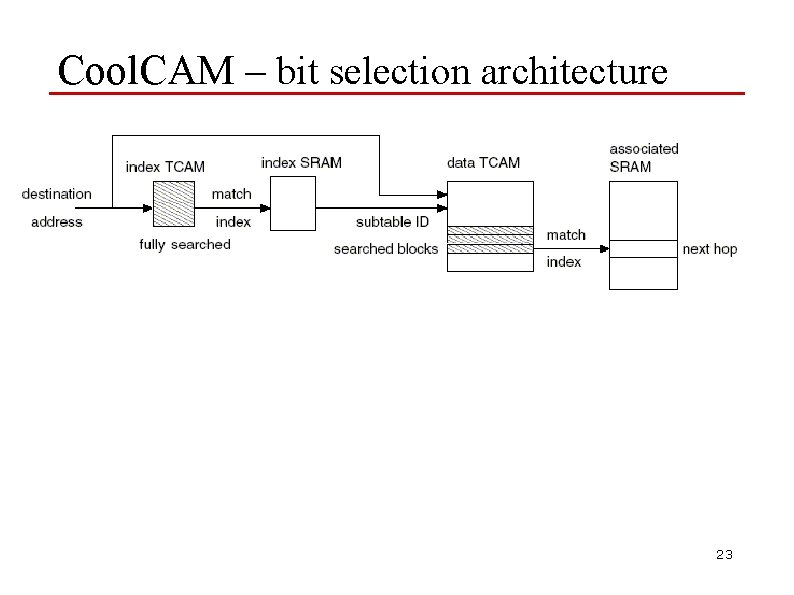

CoolCAM – bit selection architecture
- A route lookup, then, involves the following:
  - The hashing function (bit selection logic, really) selects k hashing bits from the destination address, which identify the bucket to be searched.
  - The blocks holding the very long and very short prefixes are also searched.
- The worst-case input may not arise in practice, but it gives designers a power budget.
- Given such a power budget and a routing table, it is sufficient to find a set of hashing bits that produces a split that does not exceed the power budget (a satisfying split).

CoolCAM – bit selection architecture
Three heuristics:
- First heuristic (simple): use the rightmost k bits of the first 16 bits. This works well in almost all routing traces studied.
- Second heuristic: brute-force search over all possible subsets of k bits from the first 16. It is guaranteed to find a satisfying split when one exists.
- Third heuristic: a greedy algorithm. It falls between the simple heuristic and the brute-force one in terms of complexity and accuracy.
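A minimal sketch of the first heuristic and of the check for a satisfying split follows. It assumes prefixes are bit strings of at least 16 bits and that the power budget is expressed as a maximum bucket size; the function names and the toy prefixes are hypothetical.

```python
from collections import Counter


def bucket_of(prefix: str, bit_positions) -> int:
    """Bucket index built from the selected bit positions (0 = leftmost bit)."""
    return int("".join(prefix[i] for i in bit_positions), 2)


def bucket_sizes(prefixes, bit_positions):
    """Number of prefixes that land in each bucket."""
    return Counter(bucket_of(p, bit_positions) for p in prefixes)


def simple_heuristic(k: int):
    """First heuristic: the rightmost k of the first 16 bits."""
    return list(range(16 - k, 16))


def is_satisfying_split(prefixes, bit_positions, budget: int) -> bool:
    """True if no bucket holds more than `budget` prefixes."""
    return max(bucket_sizes(prefixes, bit_positions).values()) <= budget


# Toy table of prefixes that are 16 to 24 bits long.
prefixes = ["0" * 14 + "00", "0" * 14 + "011", "1" * 14 + "01", "0" * 14 + "100000"]
bits = simple_heuristic(2)
print(bucket_sizes(prefixes, bits))
```

The brute-force heuristic would run the same check over every k-subset of the first 16 bit positions, for example with `itertools.combinations(range(16), k)`.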

CoolCAM – bit selection architecture
- An alternative is a partitioning scheme that uses a routing trie data structure.
- It eliminates two drawbacks of the bit selection architecture:
  - worst-case bounds on power consumption do not match well with power consumption in practice;
  - the assumption that most prefixes are 16 to 24 bits long.
- There are two trie-based schemes (subtree-split and postorder-split). Both involve two steps and differ only in the mechanism for performing the first-stage lookup:
  - construct a binary routing trie from the routing table;
  - partitioning step: carve out subtrees from the trie and place them into buckets.


Trie-based Table Partitioning
- Partitioning is based on the binary trie data structure.
- It eliminates two drawbacks of the bit selection architecture:
  - worst-case bounds on power consumption do not match well with power consumption in practice;
  - the assumption that most prefixes are 16 to 24 bits long.
- There are two trie-based schemes (subtree-split and postorder-split), both involving two steps:
  - construct a binary trie from the routing table;
  - partitioning step: carve out subtrees from the trie and place them into buckets.
- The two schemes differ in their partitioning step.

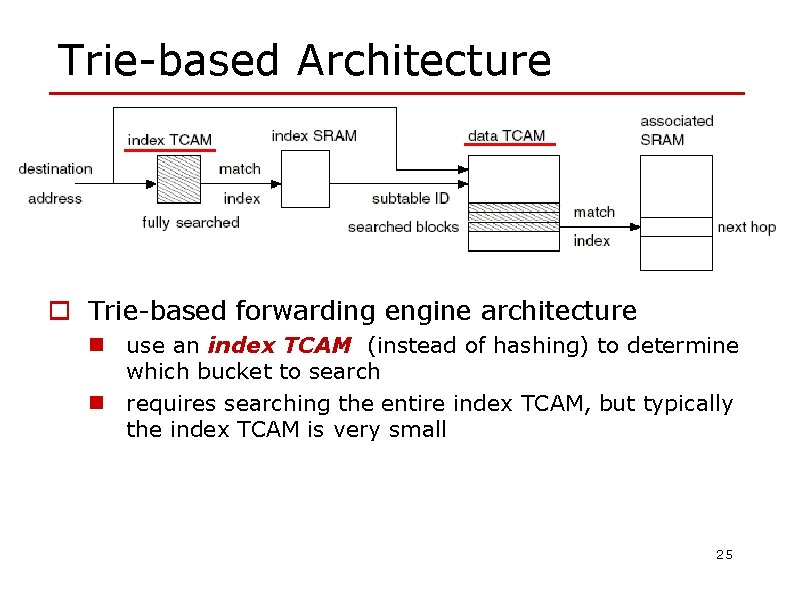

Trie-based Architecture
- Trie-based forwarding engine architecture:
  - An index TCAM (instead of hashing) determines which bucket to search.
  - This requires searching the entire index TCAM, but the index TCAM is typically very small.

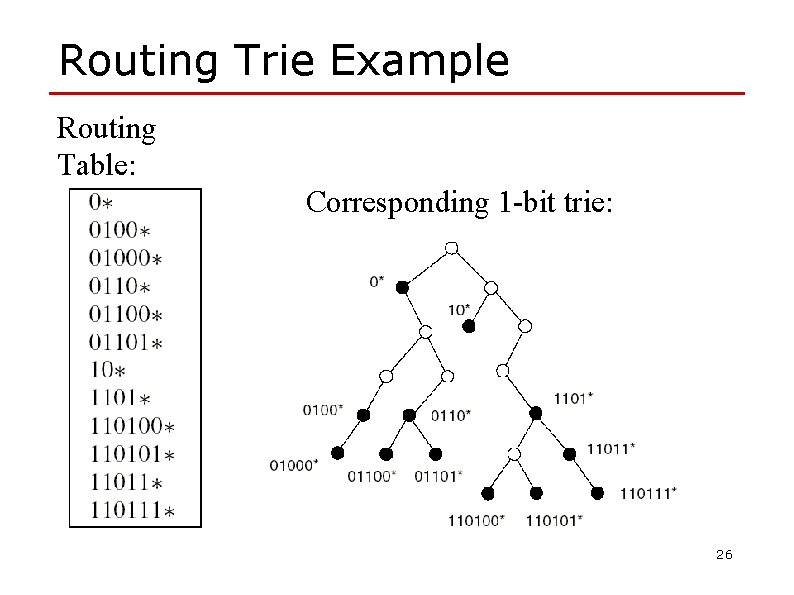

Routing Trie Example
- (Figure: a routing table and its corresponding 1-bit trie.)

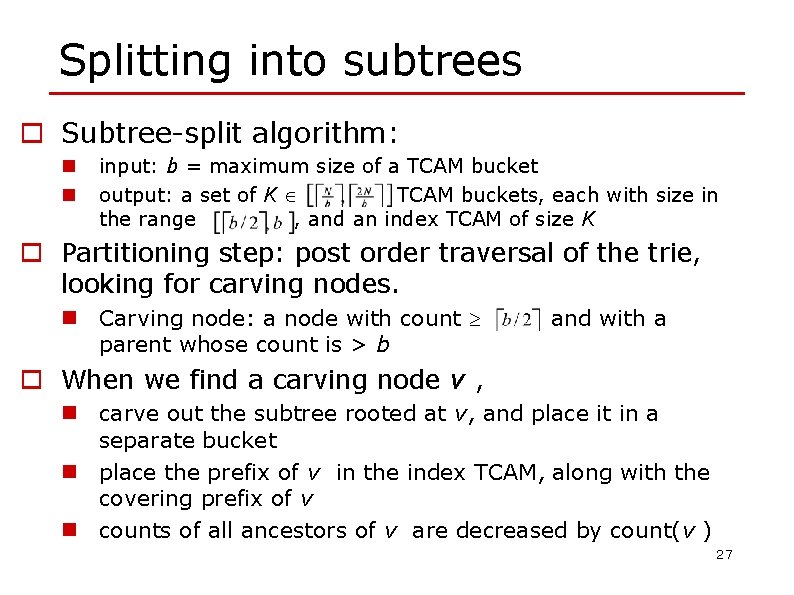

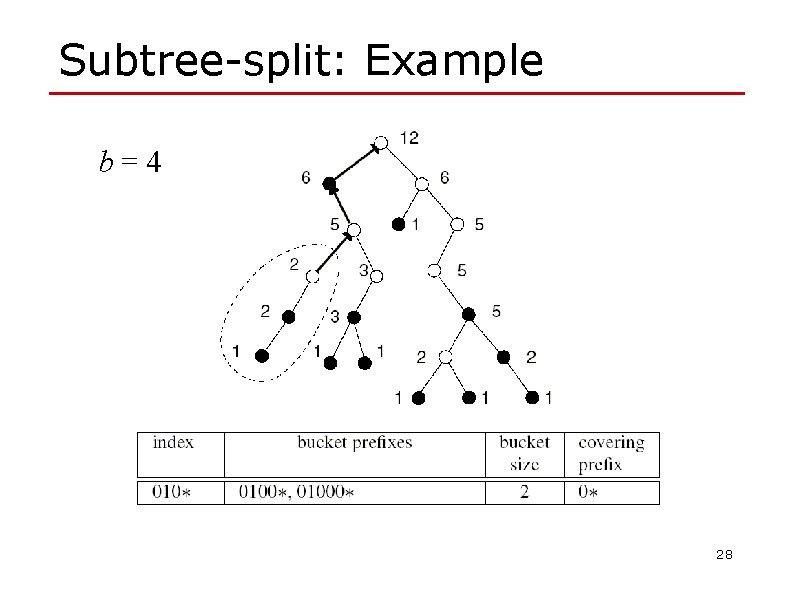

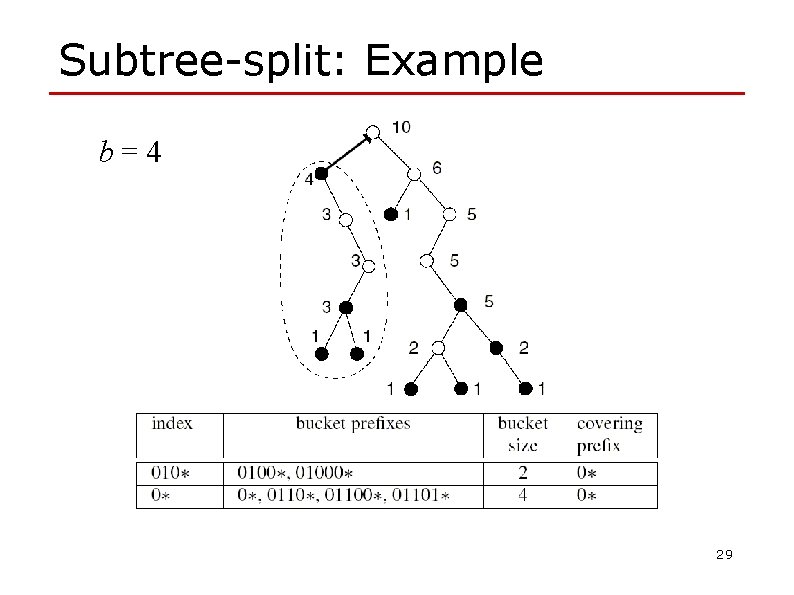

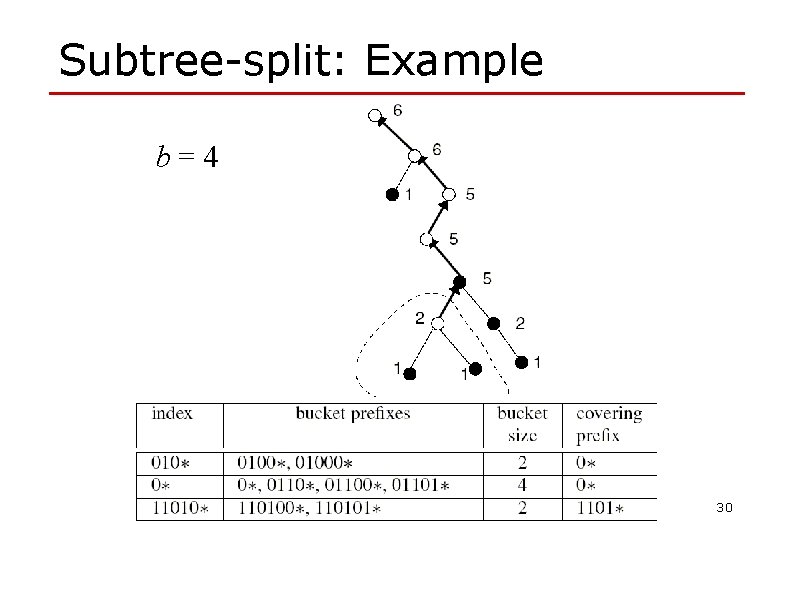

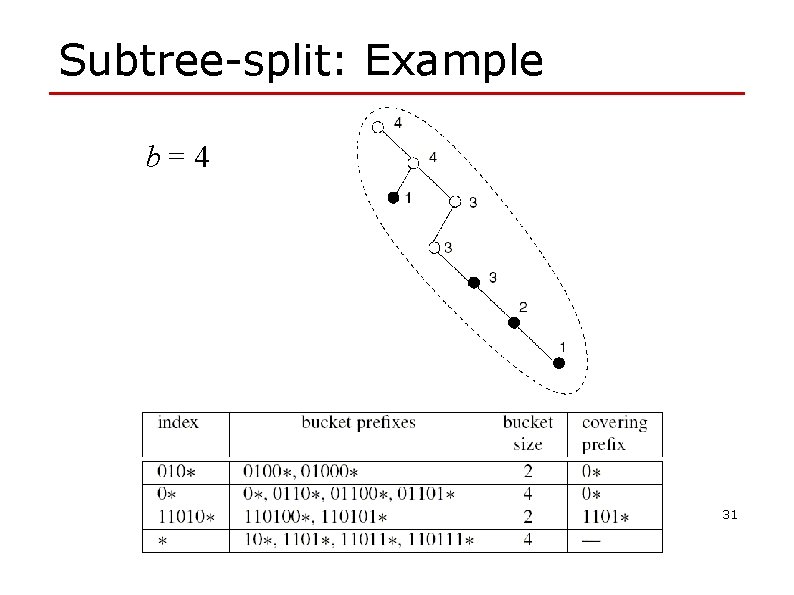

Splitting into subtrees
- Subtree-split algorithm:
  - Input: b = maximum size of a TCAM bucket.
  - Output: a set of K TCAM buckets, each with size in the range $[\lceil b/2 \rceil, b]$, and an index TCAM of size K.
- Partitioning step: post-order traversal of the trie, looking for carving nodes.
  - Carving node: a node with count $\ge \lceil b/2 \rceil$ and with a parent whose count is $> b$.
- When we find a carving node v:
  - carve out the subtree rooted at v and place it in a separate bucket;
  - place the prefix of v in the index TCAM, along with the covering prefix of v;
  - decrease the counts of all ancestors of v by count(v).
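The carving procedure can be sketched as follows. This is an illustrative reading of the steps above, not a reference implementation: prefixes are bit strings, the `Node` class and helper names are assumptions, and whatever remains at the root after the traversal is treated as the last bucket.

```python
from math import ceil


class Node:
    def __init__(self, parent=None, bits=""):
        self.parent, self.bits = parent, bits  # bits: path from the root
        self.child = {}                        # "0" / "1" -> Node
        self.is_prefix = False
        self.count = 0                         # prefixes left in this subtree


def build_trie(prefixes):
    root = Node()
    for p in prefixes:
        node = root
        for bit in p:
            if bit not in node.child:
                node.child[bit] = Node(node, node.bits + bit)
            node = node.child[bit]
        node.is_prefix = True
    return root


def set_counts(node):
    node.count = int(node.is_prefix) + sum(set_counts(c) for c in node.child.values())
    return node.count


def collect(node):
    out = [node.bits] if node.is_prefix else []
    for bit in "01":
        if bit in node.child:
            out += collect(node.child[bit])
    return out


def covering_prefix(node):
    """Longest prefix on the path above `node`, or None if there is none."""
    a = node.parent
    while a is not None and not a.is_prefix:
        a = a.parent
    return None if a is None else a.bits


def carve(node, buckets, index):
    cover = None if node.is_prefix else covering_prefix(node)
    buckets.append((collect(node), cover))
    index.append(node.bits)                    # entry for the index TCAM
    removed, a = node.count, node
    while a is not None:                       # node itself and all ancestors
        a.count -= removed
        a = a.parent
    if node.parent is not None:
        del node.parent.child[node.bits[-1]]


def subtree_split(prefixes, b):
    root = build_trie(prefixes)
    set_counts(root)
    buckets, index = [], []

    def visit(node):                           # post-order traversal
        for bit in "01":
            c = node.child.get(bit)
            if c is not None:
                visit(c)
        par = node.parent
        if par is not None and node.count >= ceil(b / 2) and par.count > b:
            carve(node, buckets, index)

    visit(root)
    if root.count > 0:                         # leftovers form the last bucket
        carve(root, buckets, index)
    return buckets, index


prefixes = ["0", "00", "000", "0001", "01", "010", "0101",
            "1", "10", "100", "11", "110", "1101"]
buckets, index = subtree_split(prefixes, 4)
print(index, [bk for bk, _ in buckets])
```

Each bucket is returned as a pair (prefixes, covering prefix); the covering prefix is only needed when the carved root is not itself a prefix.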

Subtree-split: Example b=4

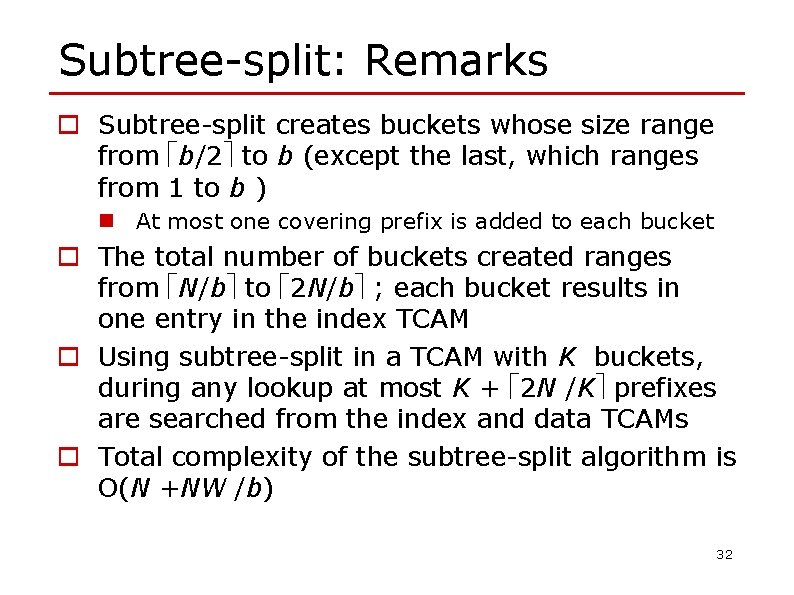

Subtree-split: Remarks
- Subtree-split creates buckets whose sizes range from b/2 to b (except the last, which ranges from 1 to b).
  - At most one covering prefix is added to each bucket.
- The total number of buckets created ranges from N/b to 2N/b; each bucket results in one entry in the index TCAM.
- Using subtree-split in a TCAM with K buckets, at most K + 2N/K prefixes are searched from the index and data TCAMs during any lookup.
- The total complexity of the subtree-split algorithm is O(N + NW/b).

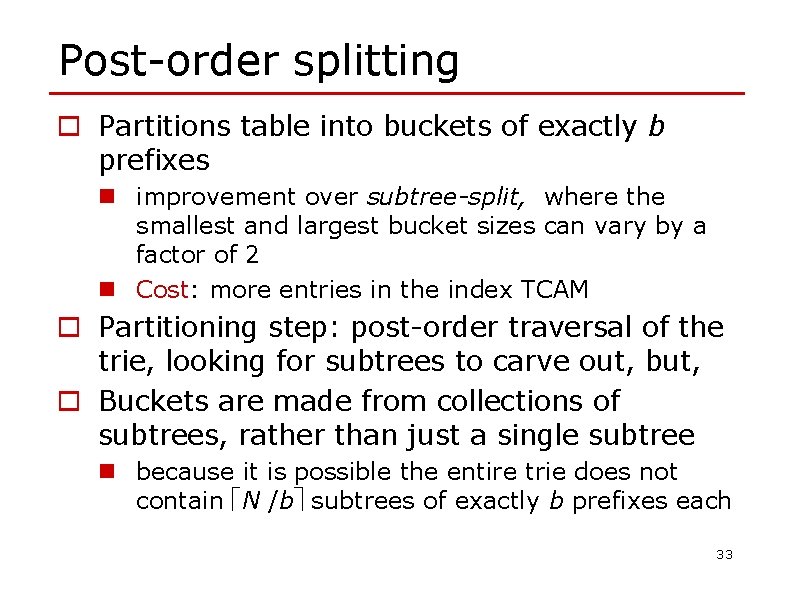

Post-order splitting
- Partitions the table into buckets of exactly b prefixes.
  - This improves on subtree-split, where the smallest and largest bucket sizes can differ by a factor of 2.
  - Cost: more entries in the index TCAM.
- Partitioning step: post-order traversal of the trie, looking for subtrees to carve out.
- Buckets are made from collections of subtrees, rather than just a single subtree.
  - This is because the entire trie may not contain N/b subtrees of exactly b prefixes each.

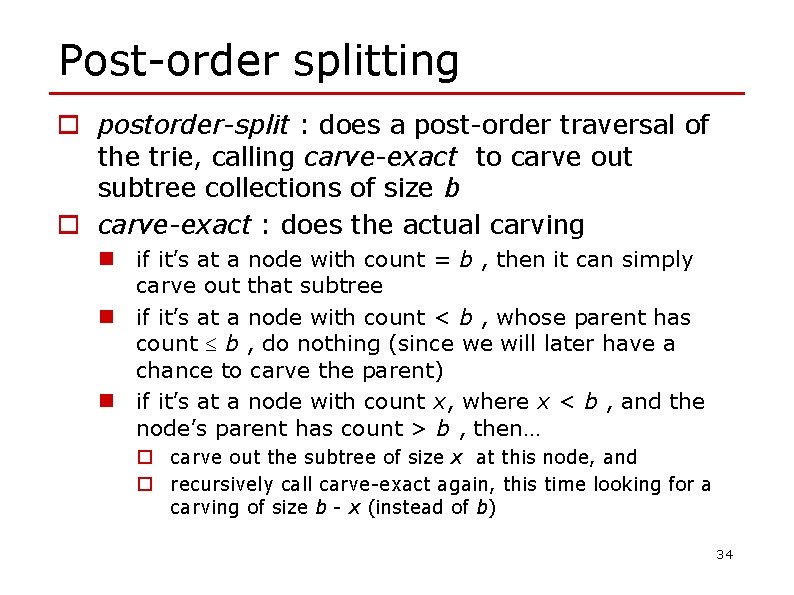

Post-order splitting
- postorder-split: does a post-order traversal of the trie, calling carve-exact to carve out subtree collections of size b.
- carve-exact: does the actual carving.
  - If it is at a node with count = b, it can simply carve out that subtree.
  - If it is at a node with count < b whose parent has count ≤ b, it does nothing (since there will be a later chance to carve the parent).
  - If it is at a node with count x, where x < b, and the node's parent has count > b, then it:
    - carves out the subtree of size x at this node, and
    - recursively calls carve-exact again, this time looking for a carving of size b - x (instead of b).

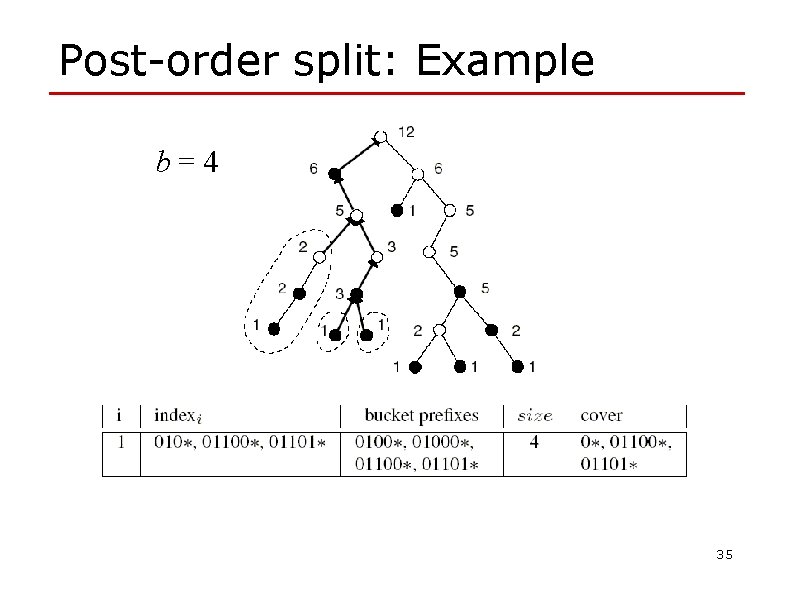

Post-order split: Example b=4 35

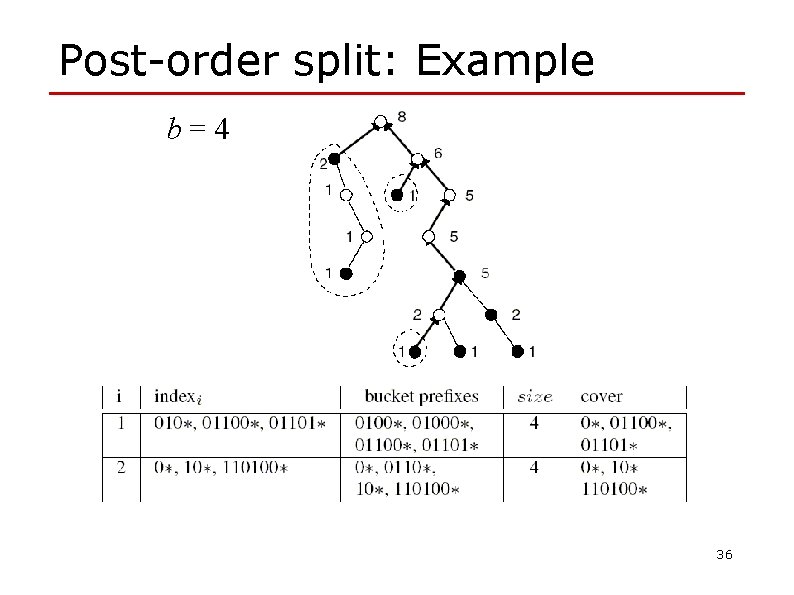

Post-order split: Example b=4 36

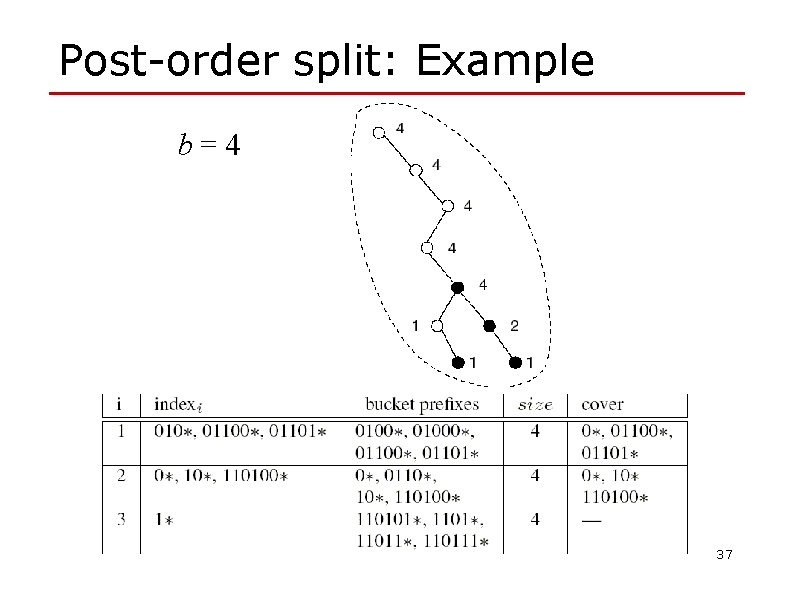

Post-order split: Example b=4 37

Postorder-split: Remarks
- Postorder-split creates buckets of size b (except the last, which ranges from 1 to b).
  - At most W covering prefixes are added to each bucket, where W is the length of the longest prefix in the table.
- The total number of buckets created is exactly N/b. Each bucket results in at most W + 1 entries in the index TCAM.
- Using postorder-split in a TCAM with K buckets, at most (W + 1)K + N/K + W prefixes are searched from the index and data TCAMs during any lookup.
- The total complexity of the postorder-split algorithm is O(N + NW/b).

TCAM update
- PLO_OPT (prefix-length ordering)
- CAO_OPT (chain-ancestor ordering)

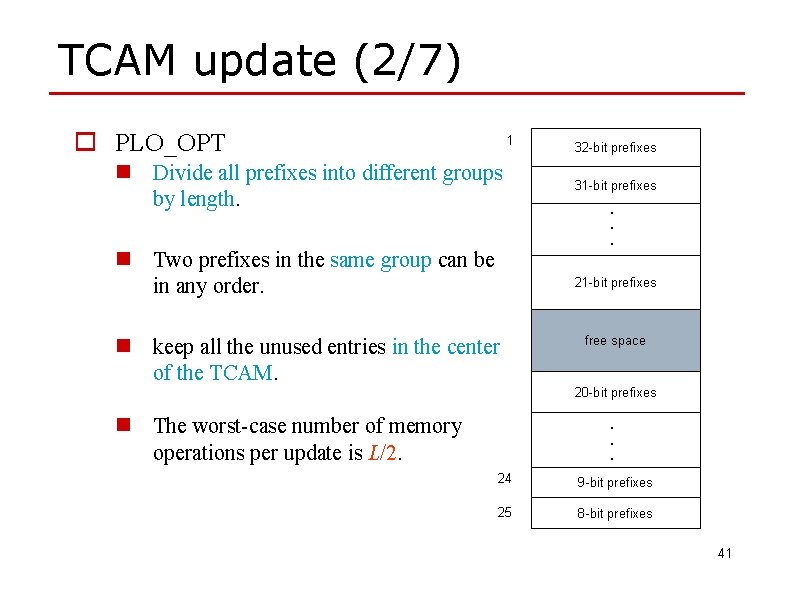

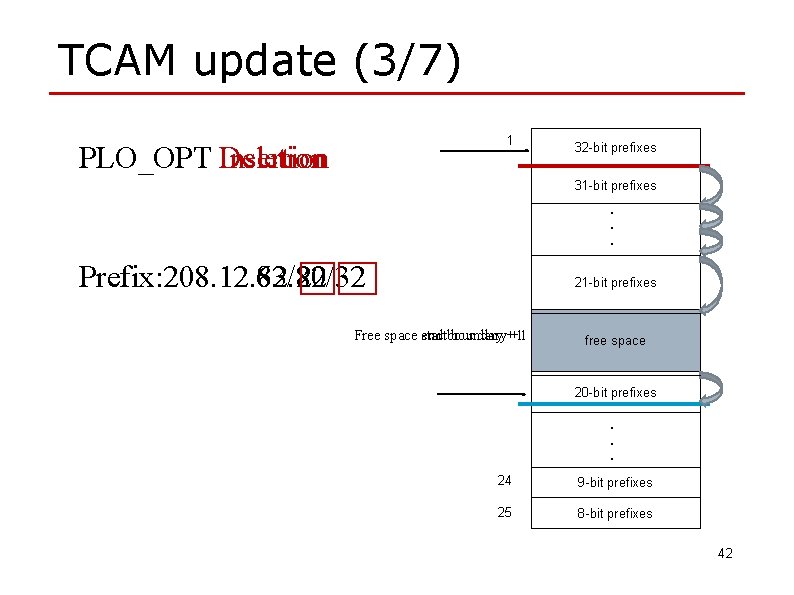

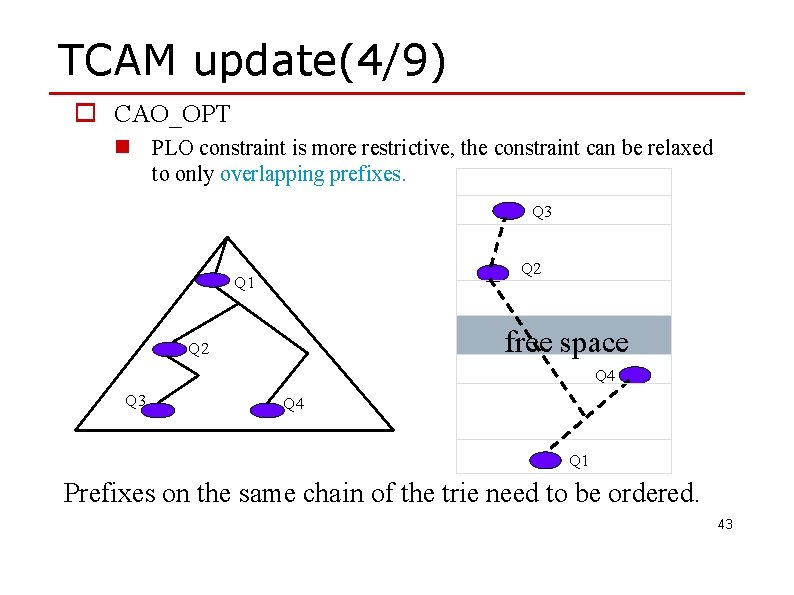

TCAM update (1/7)
- Update schemes
  - Prefix-length ordering constraint (PLO_OPT): two prefixes of the same length do not need to be in any specific order.
  - Chain-ancestor ordering constraint (CAO_OPT): there is an ordering constraint between two prefixes if and only if one is a prefix of the other.

TCAM update (2/7)
- PLO_OPT
  - Divide all prefixes into groups by length.
  - Two prefixes in the same group can be in any order.
  - Keep all the unused entries in the center of the TCAM.
  - The worst-case number of memory operations per update is L/2.
- (Figure: TCAM layout with the 32-bit, 31-bit, ..., 21-bit prefixes above the free space and the 20-bit, ..., 9-bit, 8-bit prefixes below it.)

TCAM update (3/7)
- PLO_OPT insertion and deletion.
- (Figure: the same layout with the free space in the center, between the 21-bit and 20-bit prefix groups; the example prefixes are 208.12.82/20 and 208.12.63.82/32.)

TCAM update (4/9)
- CAO_OPT
  - The PLO constraint is more restrictive than necessary; it can be relaxed so that it applies only to overlapping prefixes.
  - Prefixes on the same chain of the trie need to be ordered.
- (Figure: prefixes Q1 to Q4 placed around the free space.)

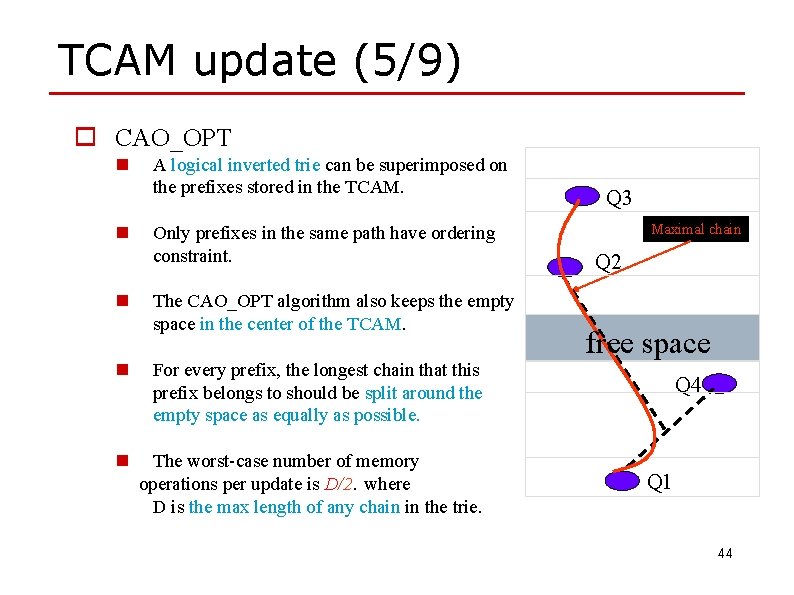

TCAM update (5/9)
- CAO_OPT
  - A logical inverted trie can be superimposed on the prefixes stored in the TCAM.
  - Only prefixes on the same path have an ordering constraint.
  - The CAO_OPT algorithm also keeps the empty space in the center of the TCAM.
  - For every prefix, the longest chain that the prefix belongs to should be split around the empty space as equally as possible.
  - The worst-case number of memory operations per update is D/2, where D is the maximum length of any chain in the trie.
- (Figure: maximal chain Q1 to Q4 split around the free space.)

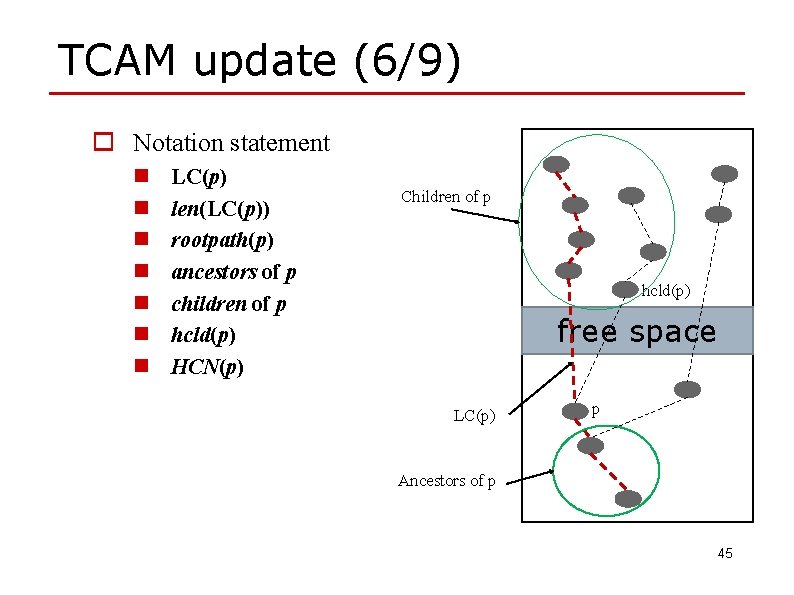

TCAM update (6/9)
- Notation: LC(p), len(LC(p)), rootpath(p), ancestors of p, children of p, hcld(p), HCN(p).
- (Figure: the children of p, hcld(p), the free space, LC(p), p, and the ancestors of p laid out in the TCAM.)

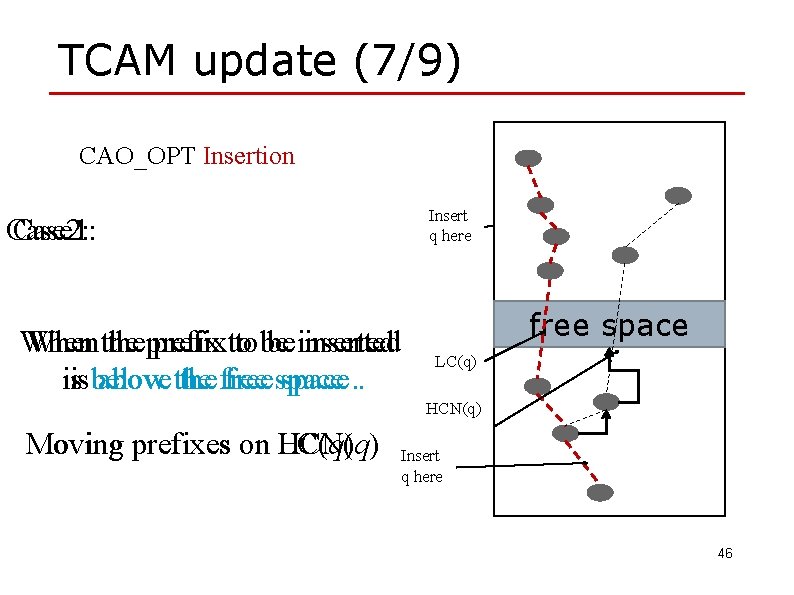

TCAM update (7/9)
- CAO_OPT insertion has two cases: the prefix q to be inserted is above the free space, or it is below the free space.
- (Figure: for each case, LC(q) and HCN(q) are shown, the prefixes on HCN(q) or LC(q) are moved, and the slot where q is inserted is marked.)

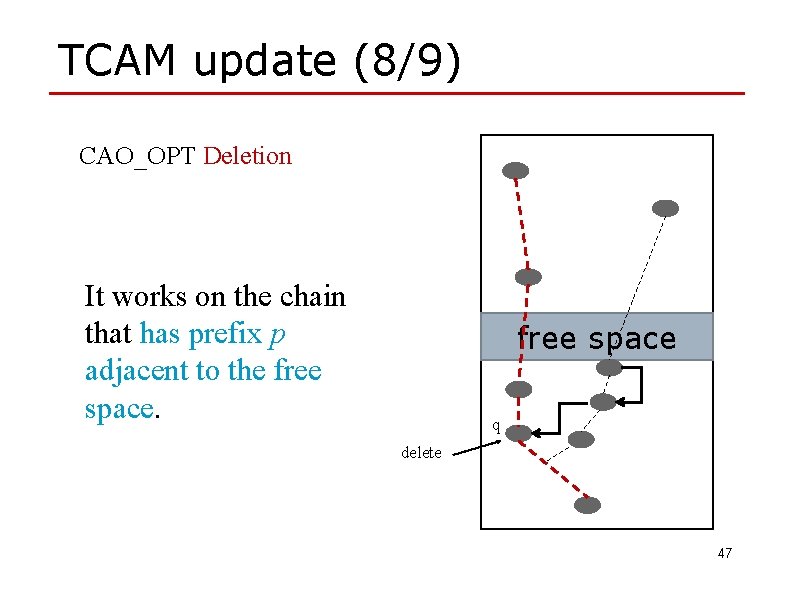

TCAM update (8/9)
- CAO_OPT deletion works on the chain that has prefix p adjacent to the free space.
- (Figure: prefix q being deleted.)

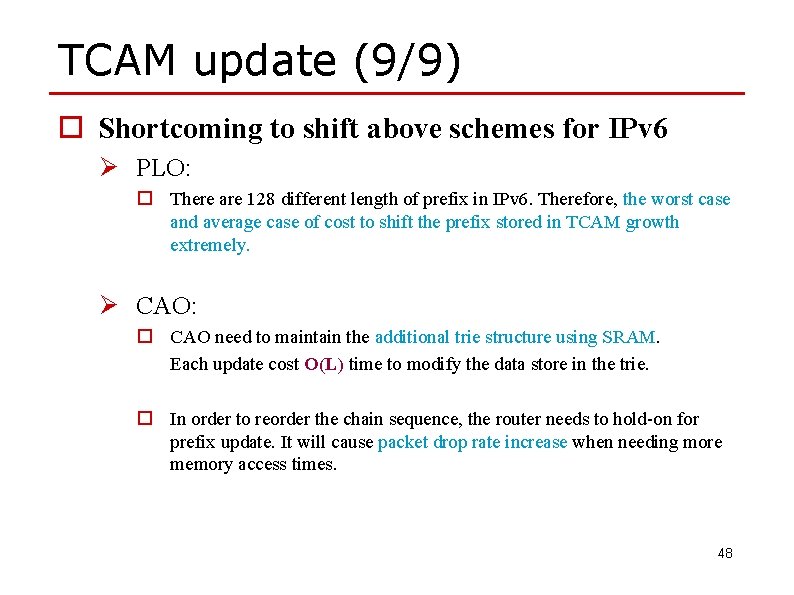

TCAM update (9/9)
- Shortcomings of the above shifting schemes for IPv6
  - PLO: there are 128 different prefix lengths in IPv6, so both the worst-case and the average-case cost of shifting the prefixes stored in the TCAM grow considerably.
  - CAO: the scheme needs to maintain an additional trie structure in SRAM. Each update costs O(L) time to modify the data stored in the trie.
  - To reorder the chain sequence, the router has to hold lookups while a prefix update is in progress. The packet drop rate therefore increases as updates need more memory accesses.

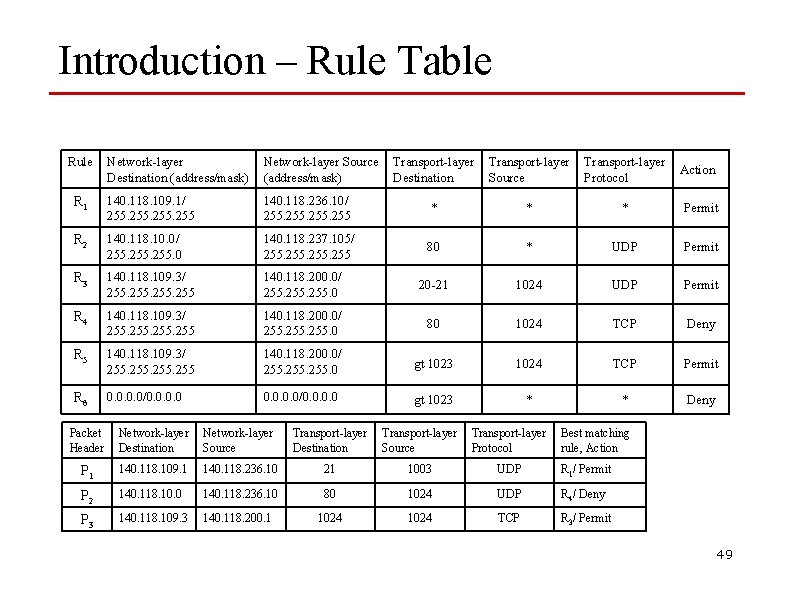

Introduction – Rule Table
Rule columns: Rule; Network-layer Destination (address/mask); Network-layer Source (address/mask); Transport-layer Destination; Transport-layer Source; Transport-layer Protocol; Action.
- R1: 140.118.109.1/255; 140.118.236.10/255; *; *; *; Permit
- R2: 140.118.10.0/255.0; 140.118.237.105/255; 80; *; UDP; Permit
- R3: 140.118.109.3/255; 140.118.200.0/255.0; 20-21; 1024; UDP; Permit
- R4: 140.118.109.3/255; 140.118.200.0/255.0; 80; 1024; TCP; Deny
- R5: 140.118.109.3/255; 140.118.200.0/255.0; gt 1023; 1024; TCP; Permit
- R6: 0.0/0.0; gt 1023; *; *; Deny

Packet header columns: Packet; Network-layer Destination; Network-layer Source; Transport-layer Destination; Transport-layer Source; Transport-layer Protocol; Best matching rule, Action.
- P1: 140.118.109.1; 140.118.236.10; 21; 1003; UDP; R1/Permit
- P2: 140.118.10.0; 140.118.236.10; 80; 1024; UDP; R4/Deny
- P3: 140.118.109.3; 140.118.200.1; 1024; TCP; R3/Permit

TCAM range encoding
- Direct range-to-prefix conversion is the traditional database-independent scheme.
- The primary advantage of database-independent schemes is their fast update operations.
- However, database-independent schemes suffer from large TCAM memory consumption.

TCAM range encoding
- Database-dependent range encoding schemes reduce the TCAM requirement by exploiting the dependency among rules.
- Each field value is individually converted into one or more field ternary strings.
- The field values in input packet headers must be translated into intermediate results, which in turn are used as search keys in the TCAM.

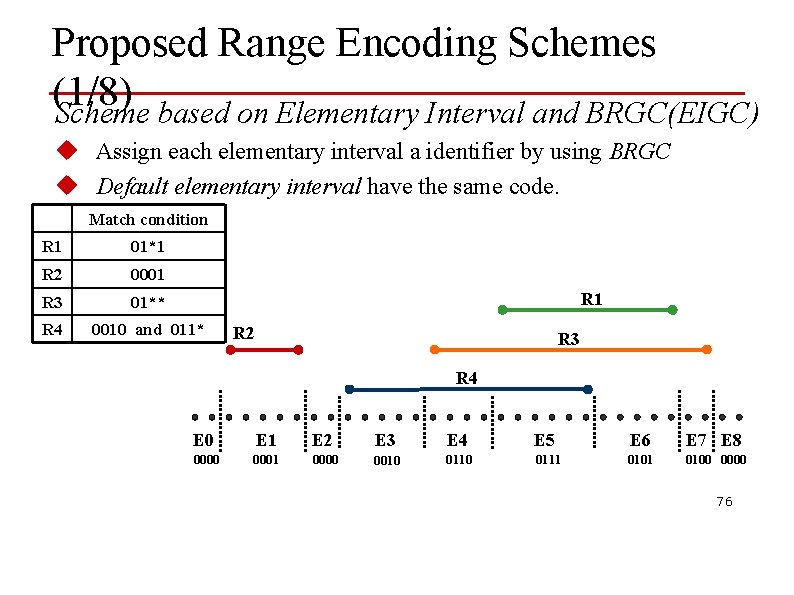

TCAM range encoding
- The first scheme, called EIGC, encodes ranges by using the BRGC identifiers of elementary intervals and converting each range to a number of ternary strings.
- The second scheme, called perfect BRGC (P-BRGC), groups ranges into perfect BRGC range sets that can be encoded by a minimal number of ternary values.

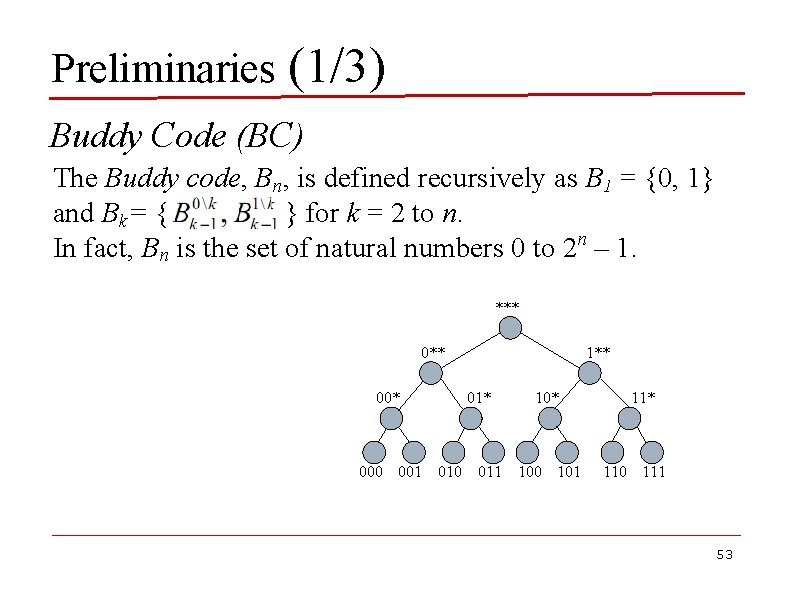

Preliminaries (1/3)
Buddy Code (BC)
- The Buddy code, $B_n$, is defined recursively as $B_1 = \{0, 1\}$ and $B_k = \{0B_{k-1}, 1B_{k-1}\}$ for $k = 2$ to $n$. In fact, $B_n$ is the set of natural numbers 0 to $2^n - 1$.
- (Figure: 3-bit Buddy code tree from the root *** down to the leaves 000, 001, 010, 011, 100, 101, 110, 111.)

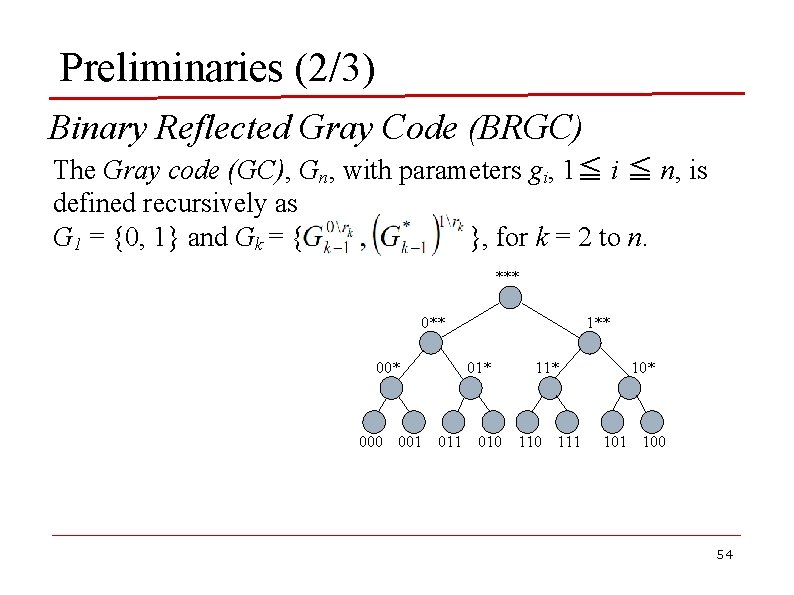

Preliminaries (2/3)
Binary Reflected Gray Code (BRGC)
- The Gray code (GC), $G_n$, with parameters $g_i$, $1 \le i \le n$, is defined recursively as $G_1 = \{0, 1\}$ and $G_k$ = { }, for $k = 2$ to $n$.
- (Figure: 3-bit BRGC tree with root ***.)
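For reference, the standard binary reflected construction (prefix the previous code with 0, then prefix its reversal with 1) can be sketched as below. The parameterized definition with $g_i$ on the slide is not reproduced here; `brgc` and `b2g` are names chosen for this example, with `b2g` matching the binary-to-Gray mapping used later in the deck.

```python
def brgc(n: int):
    """n-bit binary reflected Gray code as a list of bit strings."""
    codes = ["0", "1"]
    for _ in range(n - 1):
        # Reflect: 0 + previous code, then 1 + previous code in reverse order.
        codes = ["0" + c for c in codes] + ["1" + c for c in reversed(codes)]
    return codes


def b2g(i: int) -> int:
    """Binary-to-Gray conversion: position i in the BRGC sequence."""
    return i ^ (i >> 1)


print(brgc(3))
print(format(b2g(14), "04b"))
```

Consecutive codewords differ in exactly one bit, which is what allows neighbouring blocks of codes to merge into a single ternary string.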

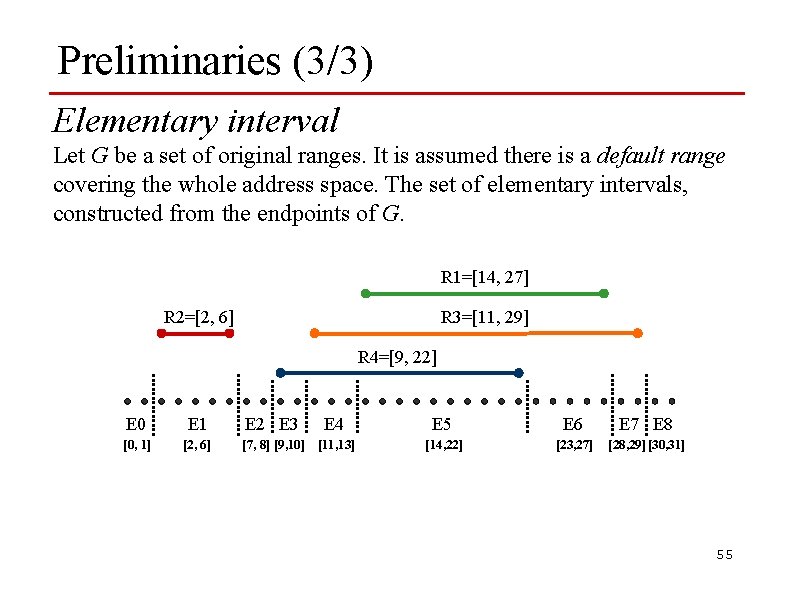

Preliminaries (3/3)
Elementary interval
- Let G be a set of original ranges. A default range is assumed to cover the whole address space. The set of elementary intervals is constructed from the endpoints of the ranges in G.
- Example ranges: R1 = [14, 27], R2 = [2, 6], R3 = [11, 29], R4 = [9, 22].
- Elementary intervals: E0 = [0, 1], E1 = [2, 6], E2 = [7, 8], E3 = [9, 10], E4 = [11, 13], E5 = [14, 22], E6 = [23, 27], E7 = [28, 29], E8 = [30, 31].
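A small sketch of this construction, assuming closed integer ranges and a default range [0, 31] as in the example (the function name is hypothetical): every range start, and every value just past a range end, begins a new elementary interval.

```python
def elementary_intervals(ranges, lo=0, hi=31):
    """Split [lo, hi] into elementary intervals induced by the range endpoints."""
    starts = {lo}
    for a, b in ranges:
        starts.add(a)          # a range begins here
        if b + 1 <= hi:
            starts.add(b + 1)  # the value after a range end begins a new interval
    starts = sorted(starts)
    ends = [s - 1 for s in starts[1:]] + [hi]
    return list(zip(starts, ends))


ranges = [(14, 27), (2, 6), (11, 29), (9, 22)]  # R1..R4 from the slide
for i, interval in enumerate(elementary_intervals(ranges)):
    print(f"E{i} = {interval}")
```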

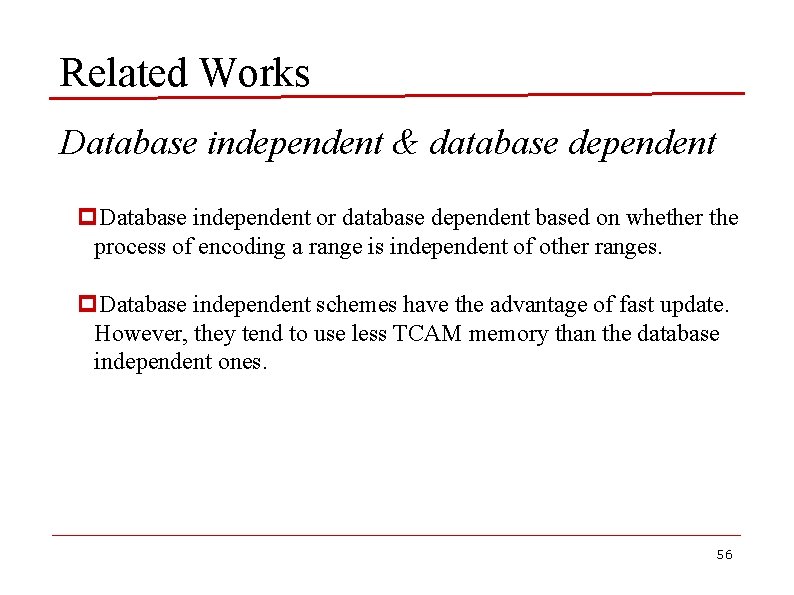

Related Works
Database independent and database dependent
- A scheme is database independent or database dependent according to whether the process of encoding a range is independent of the other ranges.
- Database-independent schemes have the advantage of fast updates. Database-dependent schemes, however, tend to use less TCAM memory than the database-independent ones.

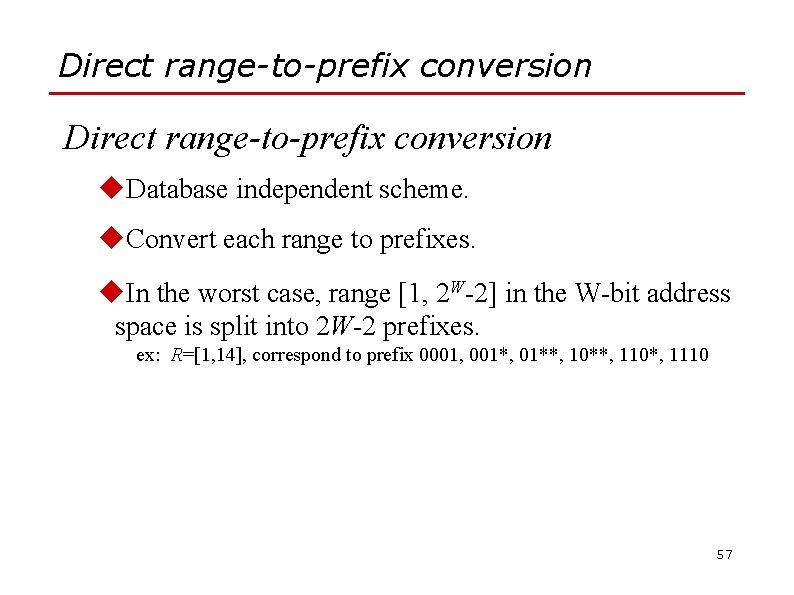

Direct range-to-prefix conversion
- Database-independent scheme.
- Converts each range to prefixes.
- In the worst case, the range $[1, 2^W - 2]$ in the W-bit address space is split into $2W - 2$ prefixes.
- Example: R = [1, 14] corresponds to the prefixes 0001, 001*, 01**, 10**, 110*, 1110.
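A minimal sketch of the conversion, assuming closed integer ranges and a greedy split into the largest aligned power-of-two blocks (the function name is hypothetical):

```python
def range_to_prefixes(lo: int, hi: int, width: int):
    """Cover the closed range [lo, hi] with prefixes over `width`-bit values."""
    out = []
    while lo <= hi:
        # Largest block that is aligned at lo ...
        size = lo & -lo if lo else 1 << width
        # ... and still fits inside the remaining range.
        while size > hi - lo + 1:
            size >>= 1
        stars = size.bit_length() - 1  # number of trailing don't-care bits
        head = format(lo >> stars, "b").zfill(width - stars) if stars < width else ""
        out.append(head + "*" * stars)
        lo += size
    return out


print(range_to_prefixes(1, 14, 4))
print(range_to_prefixes(0, 5, 4))
```

For W = 4 the range [1, 14] yields 2W - 2 = 6 prefixes, which matches the worst-case count stated above.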

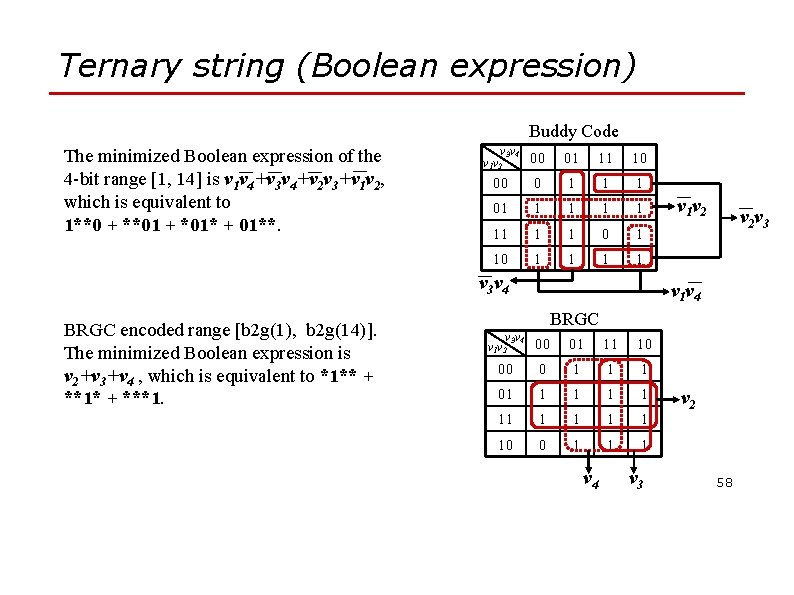

Ternary string (Boolean expression)
- Buddy code: the minimized Boolean expression of the 4-bit range [1, 14] is $v_1\bar{v}_4 + \bar{v}_3 v_4 + \bar{v}_2 v_3 + \bar{v}_1 v_2$, which is equivalent to 1**0 + **01 + *01* + 01**.
- BRGC: for the BRGC-encoded range [b2g(1), b2g(14)], the minimized Boolean expression is $v_2 + v_3 + v_4$, which is equivalent to *1** + **1* + ***1.
- (Figure: Karnaugh maps over $v_1 v_2$ and $v_3 v_4$ for the Buddy code and the BRGC encodings.)
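Both covers can be checked by brute force over the 16 four-bit values. The helper names below are assumptions for this sketch; `b2g` is the usual binary-to-Gray mapping.

```python
def matches(ternary: str, value: int, width: int = 4) -> bool:
    """True if `value` matches the ternary string bit by bit."""
    bits = format(value, f"0{width}b")
    return all(t in ("*", b) for t, b in zip(ternary, bits))


def covered(ternary_strings, width: int = 4):
    """Set of values matched by at least one ternary string."""
    return {v for v in range(1 << width)
            if any(matches(t, v, width) for t in ternary_strings)}


def b2g(i: int) -> int:
    return i ^ (i >> 1)


buddy = ["1**0", "**01", "*01*", "01**"]  # four strings for [1, 14]
gray = ["*1**", "**1*", "***1"]           # three strings for [b2g(1), b2g(14)]

print(covered(buddy) == set(range(1, 15)))
print(covered(gray) == {b2g(i) for i in range(1, 15)})
```

The Gray-coded range needs one string fewer because the two excluded codewords, b2g(0) = 0000 and b2g(15) = 1000, differ only in the leading bit.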

Ternary string (Boolean expression)
- Boolean expression minimization is an NP-complete problem, for which an efficient exact algorithm is difficult to find.
- However, Espresso-II [1][11], a fast heuristic algorithm, may be used in practice.

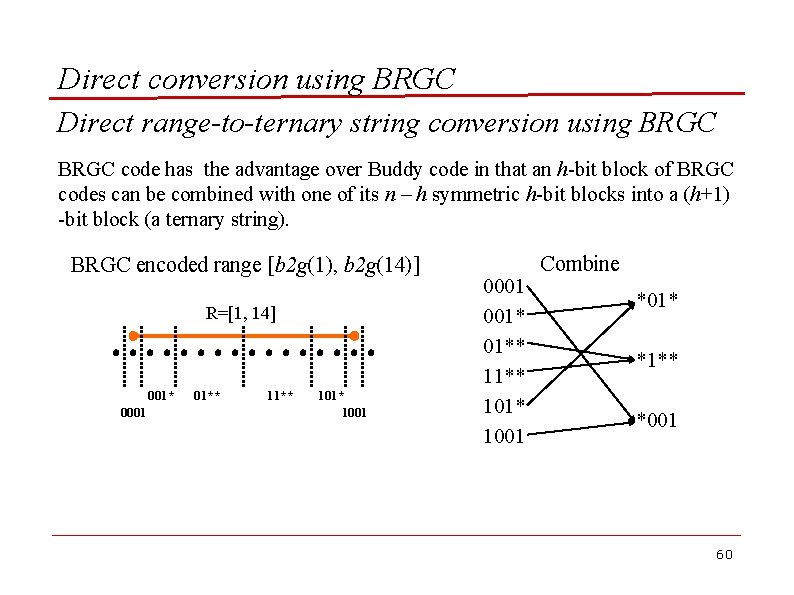

Direct conversion using BRGC
- Direct range-to-ternary-string conversion using the BRGC code has an advantage over the Buddy code: an h-bit block of BRGC codes can be combined with one of its n – h symmetric h-bit blocks into an (h+1)-bit block (a ternary string).
- Example: for R = [1, 14], the BRGC-encoded range [b2g(1), b2g(14)] is first split into 0001, 001*, 01**, 101*, and 1001; combining symmetric blocks gives *01*, *1**, and *001.

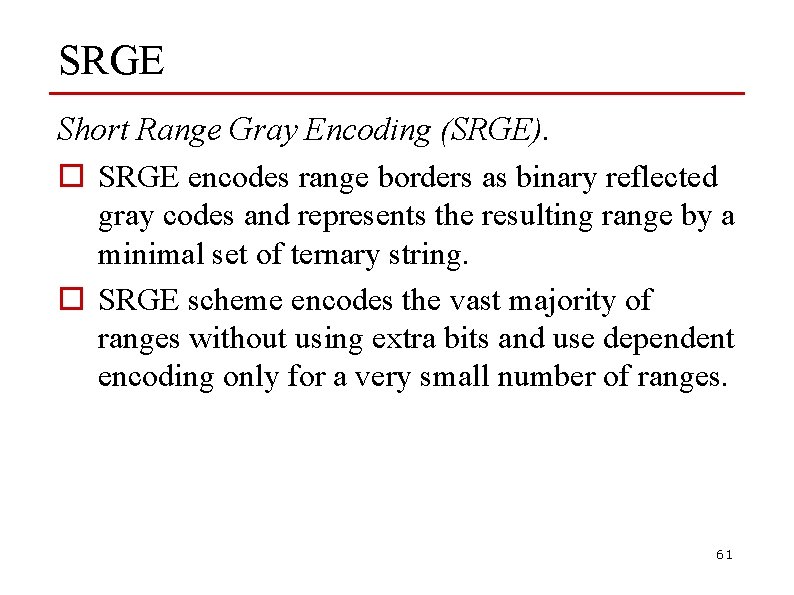

SRGE
Short Range Gray Encoding (SRGE)
- SRGE encodes range borders as binary reflected Gray codes and represents the resulting range by a minimal set of ternary strings.
- The SRGE scheme encodes the vast majority of ranges without using extra bits and uses dependent encoding only for a very small number of ranges.

SRGE Short Range Gray Encoding (SRGE) 0101 1011 p 6 14 SRGE example: range [6 - 14] 62

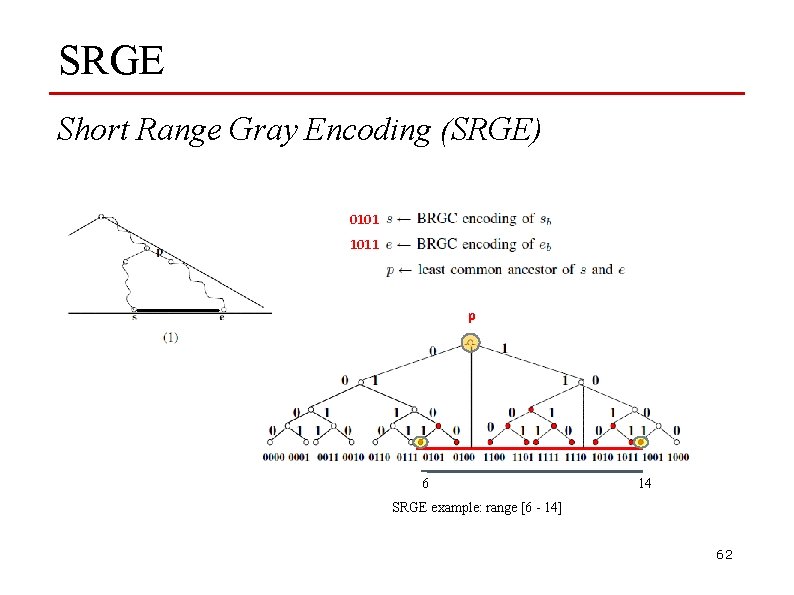

SRGE Short Range Gray Encoding (SRGE) 0100 1100 p pl pr 6 14 SRGE example: range [6 - 14] 63

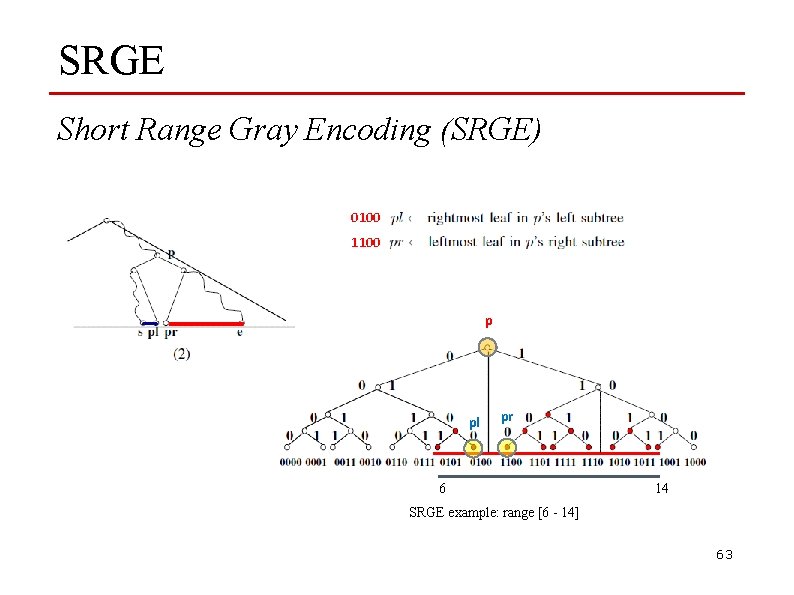

SRGE Short Range Gray Encoding (SRGE) Prefixes 1 = {010*} Prefixes 1 = {*10*} S’ SRGE example: range [6 - 14] 64

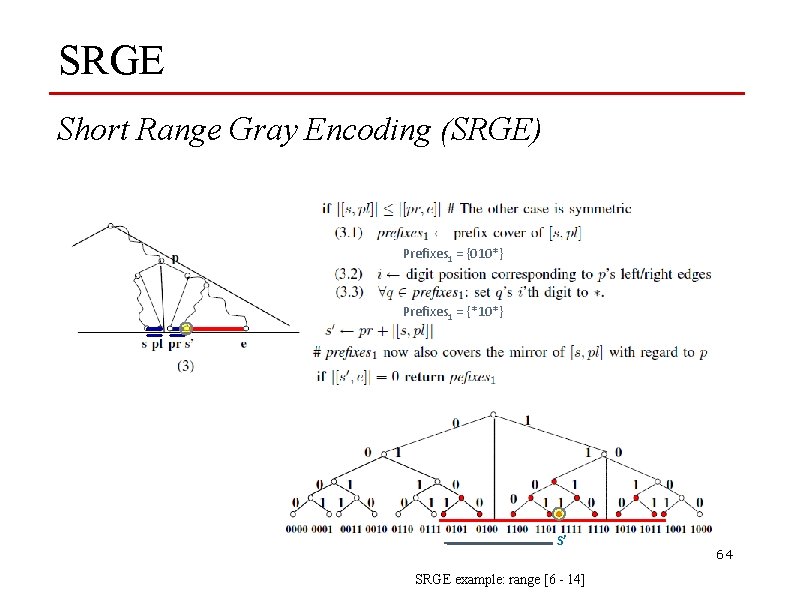

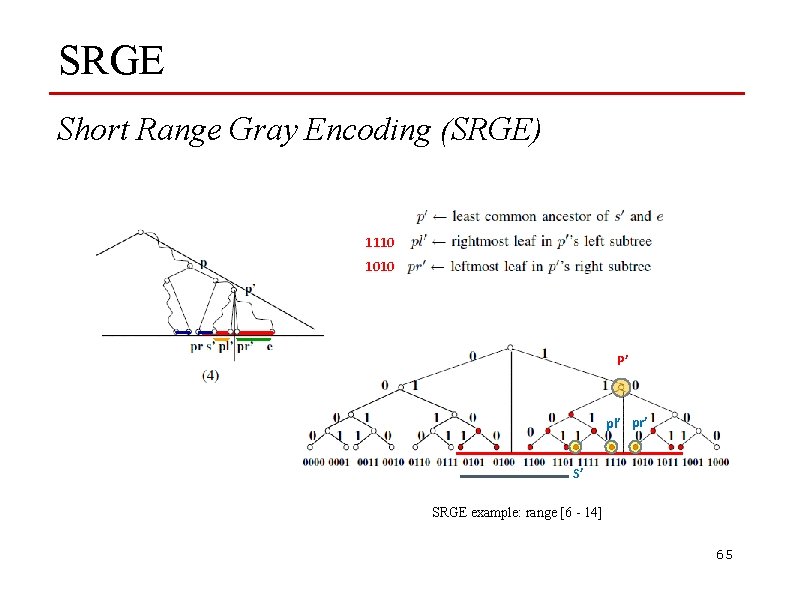

SRGE Short Range Gray Encoding (SRGE) 1110 1010 P’ pl’ pr’ S’ SRGE example: range [6 - 14] 65

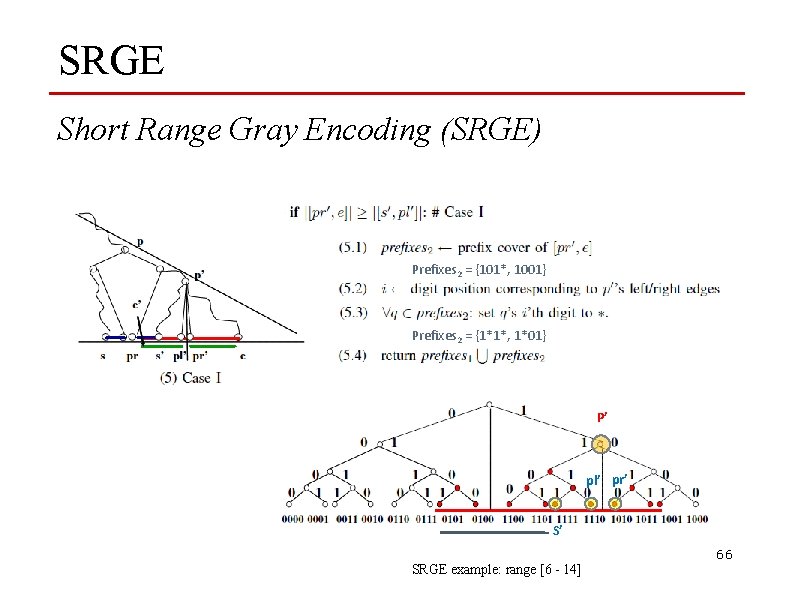

SRGE Short Range Gray Encoding (SRGE) Prefixes 2 = {101*, 1001} Prefixes 2 = {1*1*, 1*01} P’ pl’ pr’ S’ 66 SRGE example: range [6 - 14]

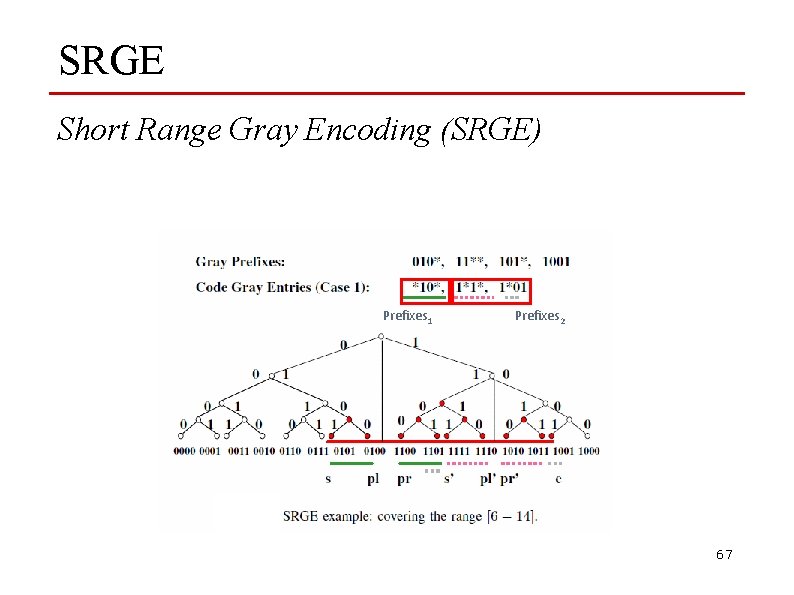

SRGE Short Range Gray Encoding (SRGE) Prefixes 1 Prefixes 2 67

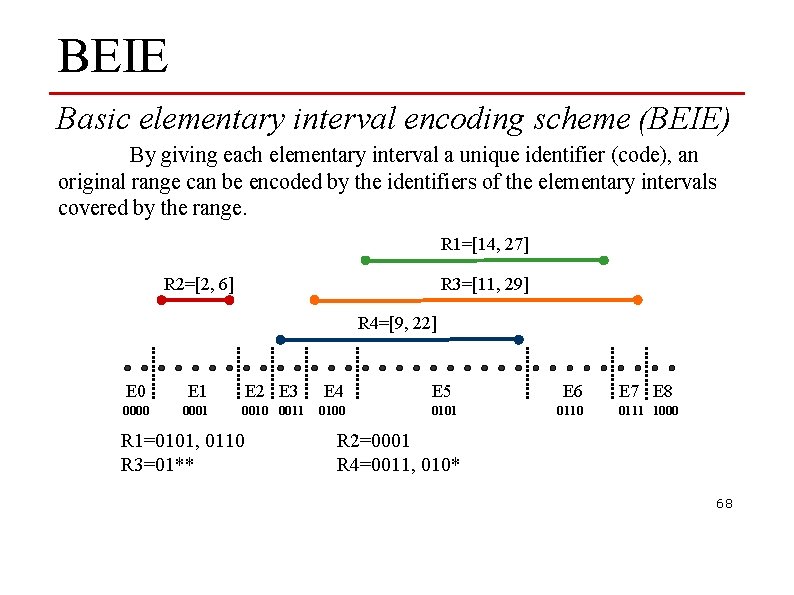

BEIE
Basic elementary interval encoding scheme (BEIE)
- By giving each elementary interval a unique identifier (code), an original range can be encoded by the identifiers of the elementary intervals covered by the range.
- Ranges: R1 = [14, 27], R2 = [2, 6], R3 = [11, 29], R4 = [9, 22].
- Identifiers: E0 = 0000, E1 = 0001, E2 = 0010, E3 = 0011, E4 = 0100, E5 = 0101, E6 = 0110, E7 = 0111, E8 = 1000.
- Encoded ranges: R1 = 0101, 0110; R2 = 0001; R3 = 01**; R4 = 0011, 010*.
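The example can be reproduced with a short sketch: number the elementary intervals in order, map each range to the span of interval identifiers it covers, and convert that span to prefixes. The function names and the greedy prefix split are assumptions made for illustration.

```python
from bisect import bisect_right


def interval_starts(ranges, lo=0, hi=31):
    """Sorted start points of the elementary intervals of [lo, hi]."""
    return sorted({lo} | {a for a, _ in ranges} | {b + 1 for _, b in ranges if b < hi})


def span_to_prefixes(lo, hi, width):
    """Cover the identifier span [lo, hi] with prefixes of `width` bits."""
    out = []
    while lo <= hi:
        size = lo & -lo if lo else 1 << width
        while size > hi - lo + 1:
            size >>= 1
        stars = size.bit_length() - 1
        head = format(lo >> stars, "b").zfill(width - stars) if stars < width else ""
        out.append(head + "*" * stars)
        lo += size
    return out


def beie(ranges, width=4, lo=0, hi=31):
    starts = interval_starts(ranges, lo, hi)
    encoded = []
    for a, b in ranges:
        first = bisect_right(starts, a) - 1  # interval containing the left end
        last = bisect_right(starts, b) - 1   # interval containing the right end
        encoded.append(span_to_prefixes(first, last, width))
    return encoded


ranges = [(14, 27), (2, 6), (11, 29), (9, 22)]  # R1, R2, R3, R4
print(beie(ranges))
```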

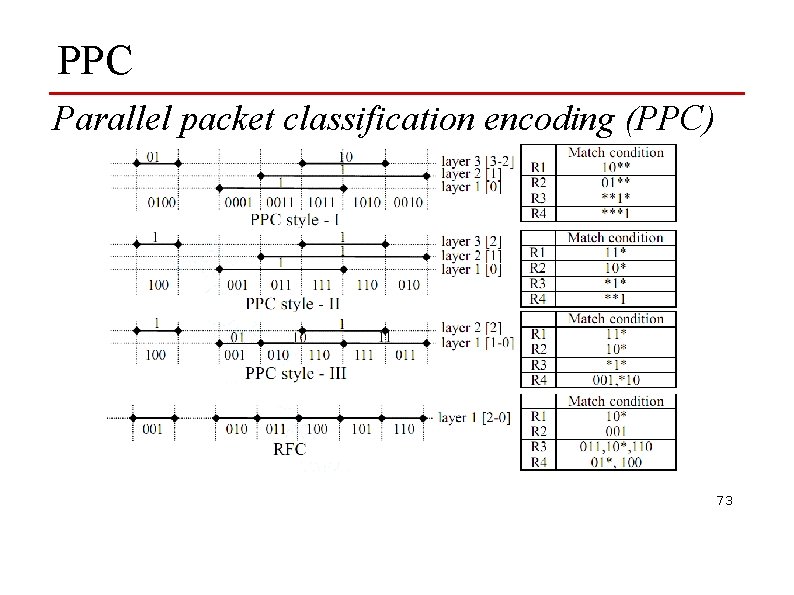

PPC
Parallel packet classification encoding (PPC)
- The PPC encoding scheme is also based on the concept of elementary intervals.
- PPC divides the original primitive ranges into multiple groups (called layers).
- Depending on the encoding style, the code assignments in one layer may be:
  - I. independent of the other layers;
  - II. partially dependent on other layers;
  - III. completely dependent on other layers.

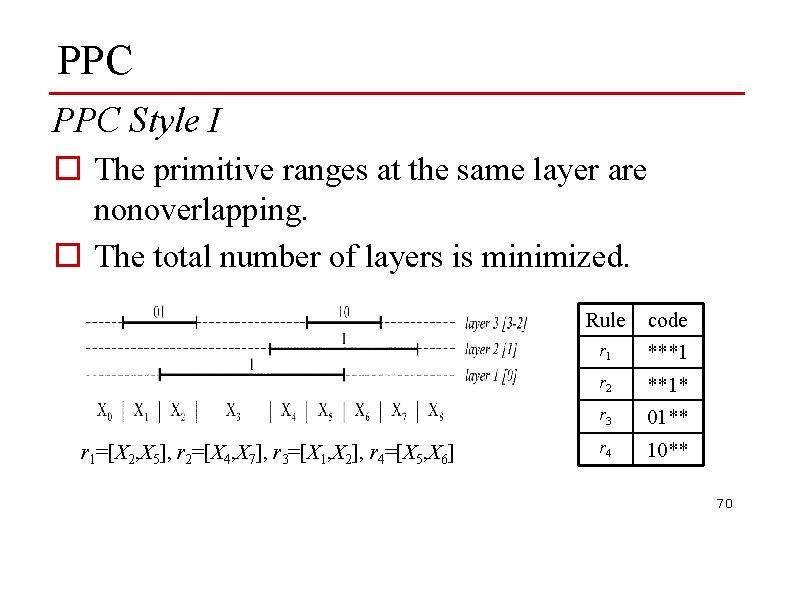

PPC Style I
- The primitive ranges at the same layer are nonoverlapping.
- The total number of layers is minimized.
- Ranges: r1 = [X2, X5], r2 = [X4, X7], r3 = [X1, X2], r4 = [X5, X6].
- Rule codes: r1 = ***1, r2 = **1*, r3 = 01**, r4 = 10**.

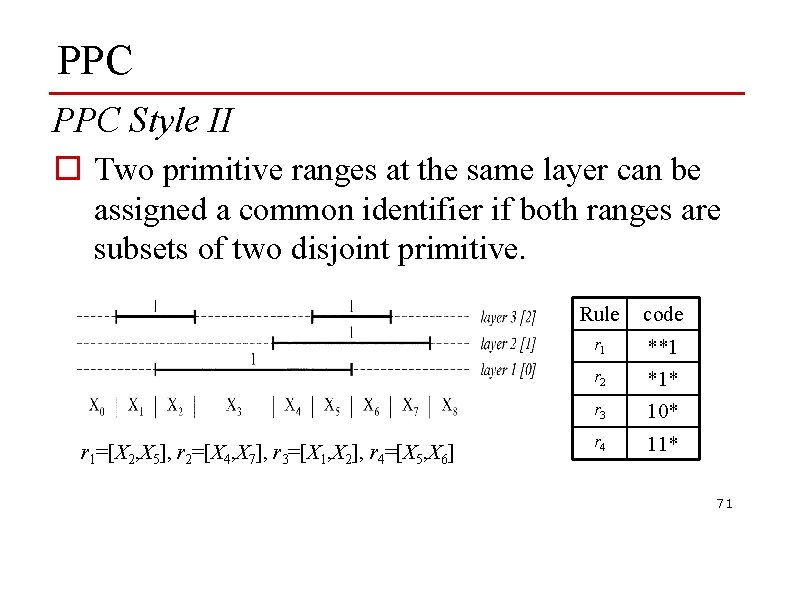

PPC Style II
- Two primitive ranges at the same layer can be assigned a common identifier if both ranges are subsets of two disjoint primitive ranges.
- Ranges: r1 = [X2, X5], r2 = [X4, X7], r3 = [X1, X2], r4 = [X5, X6].
- Rule codes: r1 = **1, r2 = *1*, r3 = 10*, r4 = 11*.

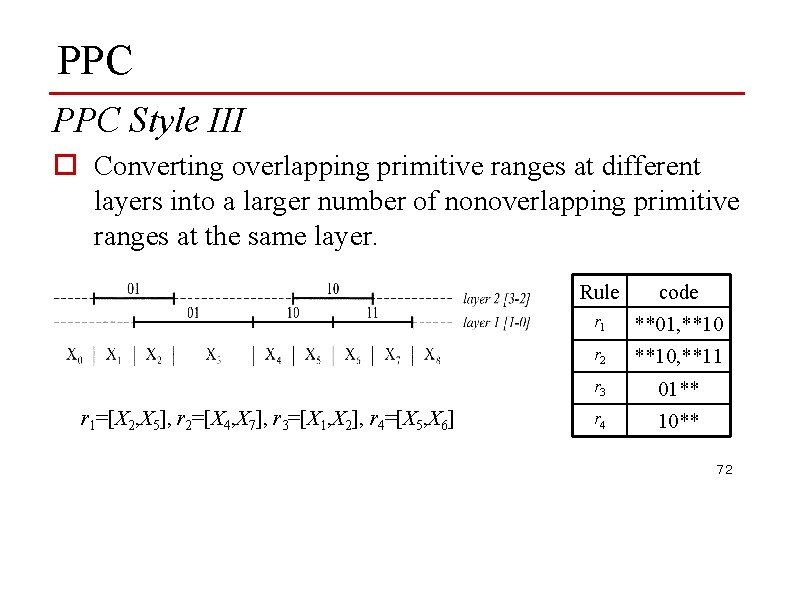

PPC Style III
- Overlapping primitive ranges at different layers are converted into a larger number of nonoverlapping primitive ranges at the same layer.
- Ranges: r1 = [X2, X5], r2 = [X4, X7], r3 = [X1, X2], r4 = [X5, X6].
- Rule codes: r1 = **01, **10; r2 = **10, **11; r3 = 01**; r4 = 10**.

PPC
Parallel packet classification encoding (PPC)

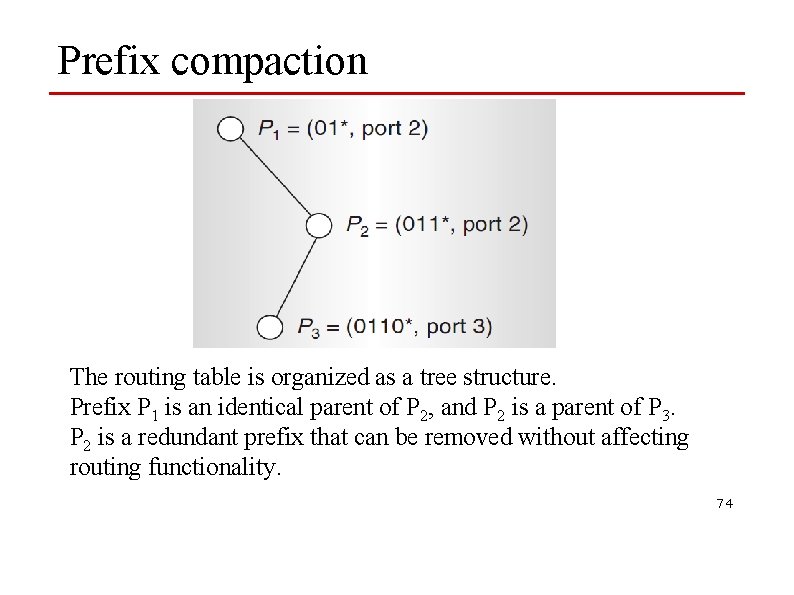

Prefix compaction
- The routing table is organized as a tree structure.
- Prefix P1 is an identical parent of P2, and P2 is a parent of P3. P2 is a redundant prefix that can be removed without affecting routing functionality.

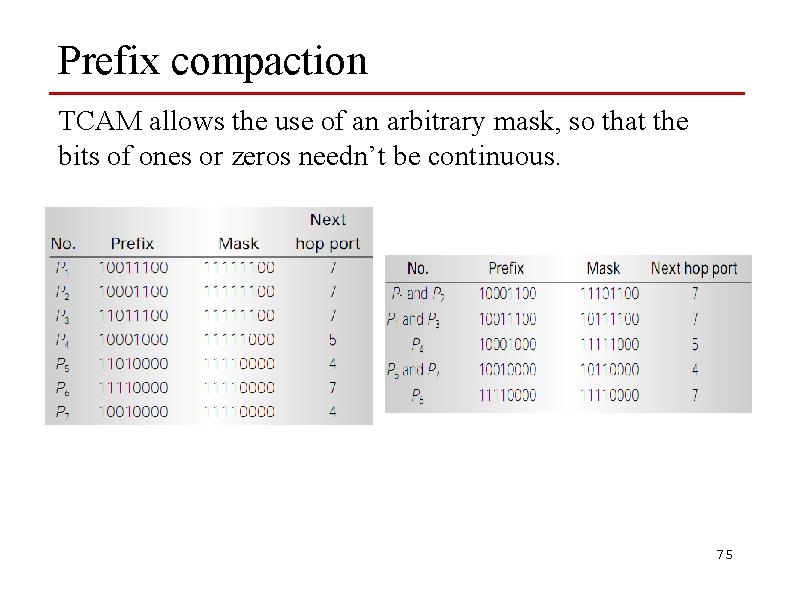

Prefix compaction
- TCAM allows the use of an arbitrary mask, so the ones or zeros in the mask need not be contiguous.

Proposed Range Encoding Schemes (1/8)
Scheme based on Elementary Interval and BRGC (EIGC)
- Assign each elementary interval an identifier by using BRGC.
- The default elementary intervals share the same code.
- Match conditions: R1 = 01*1; R2 = 0001; R3 = 01**; R4 = 0010 and 011*.
- (Figure: ranges R1 to R4 over elementary intervals E0 to E8; codes shown: 0000, 0001, 0000, 0010, 0111, 0100, 0000.)

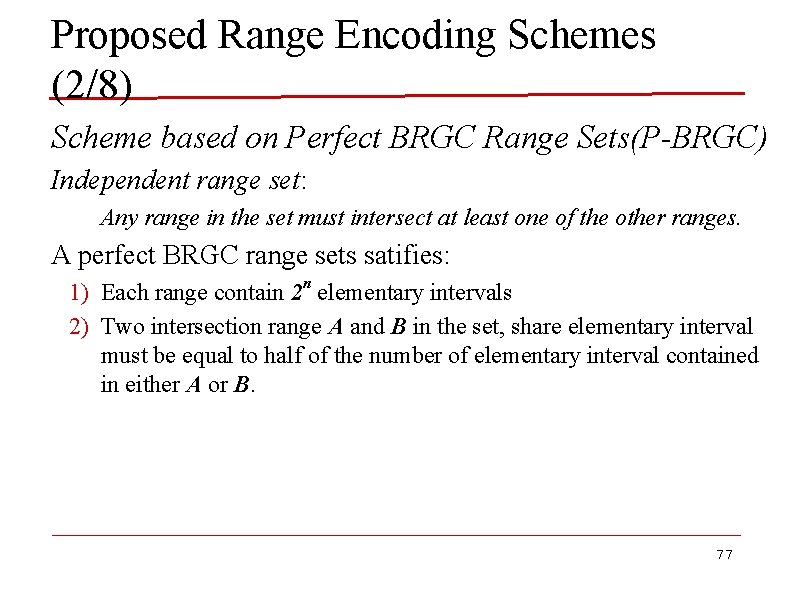

Proposed Range Encoding Schemes (2/8)
Scheme based on Perfect BRGC Range Sets (P-BRGC)
- Independent range set: any range in the set must intersect at least one of the other ranges.
- A perfect BRGC range set satisfies:
  - 1) each range contains $2^n$ elementary intervals;
  - 2) for any two intersecting ranges A and B in the set, the number of elementary intervals they share must equal half of the number of elementary intervals contained in either A or B.

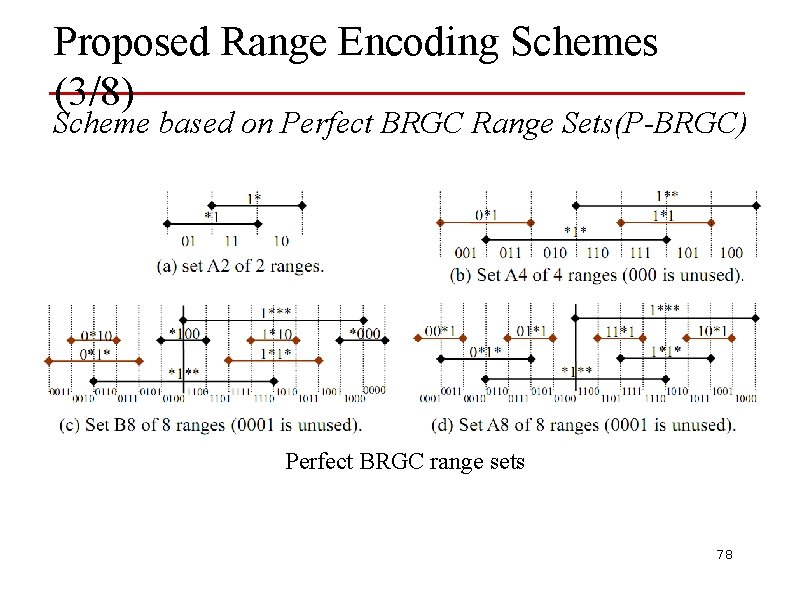

Proposed Range Encoding Schemes (3/8)
Scheme based on Perfect BRGC Range Sets (P-BRGC)
- Perfect BRGC range sets.

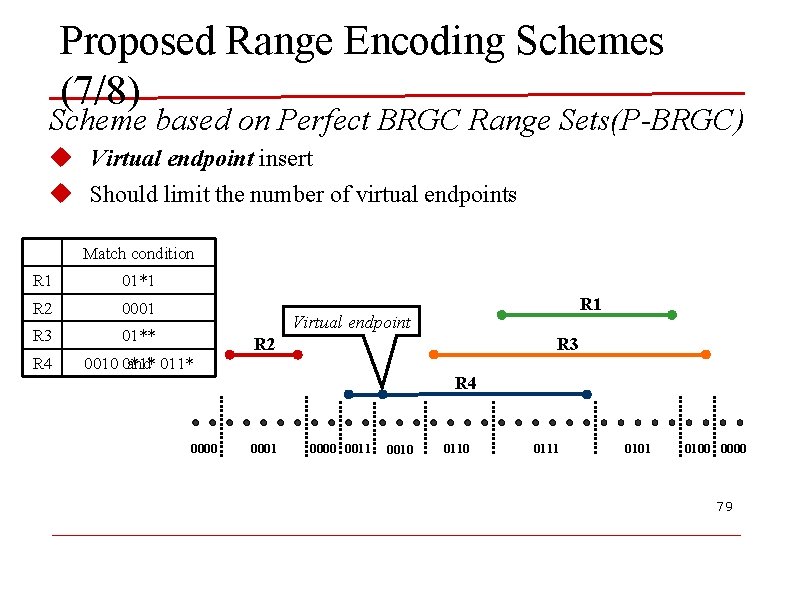

Proposed Range Encoding Schemes (7/8)
Scheme based on Perfect BRGC Range Sets (P-BRGC)
- Virtual endpoints are inserted.
- The number of virtual endpoints should be limited.
- Match conditions: R1 = 01*1; R2 = 0001; R3 = 01**; R4 = 0010 0*1* and 011*.
- (Figure: ranges R1 to R4 with a virtual endpoint; codes shown: 0000, 0001, 0000, 0011, 0010, 0111, 0100, 0000.)

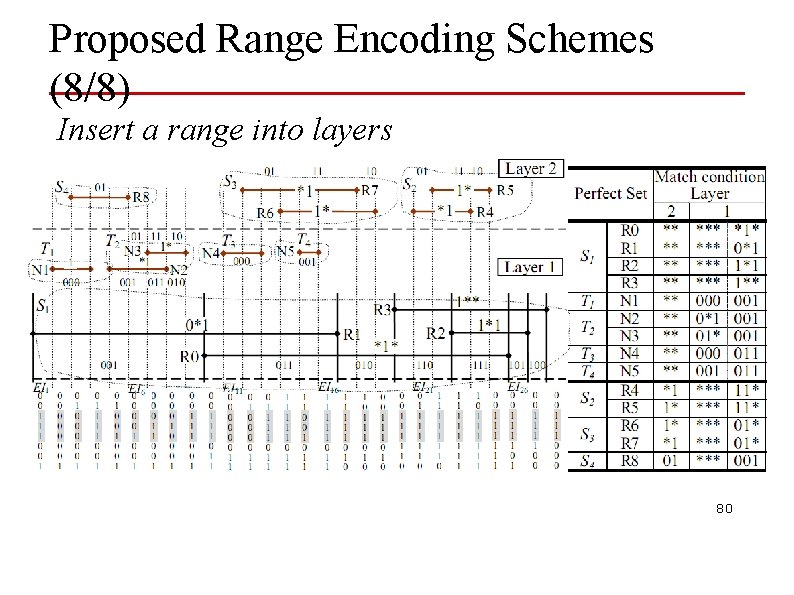

Proposed Range Encoding Schemes (8/8)
- Insert a range into layers.

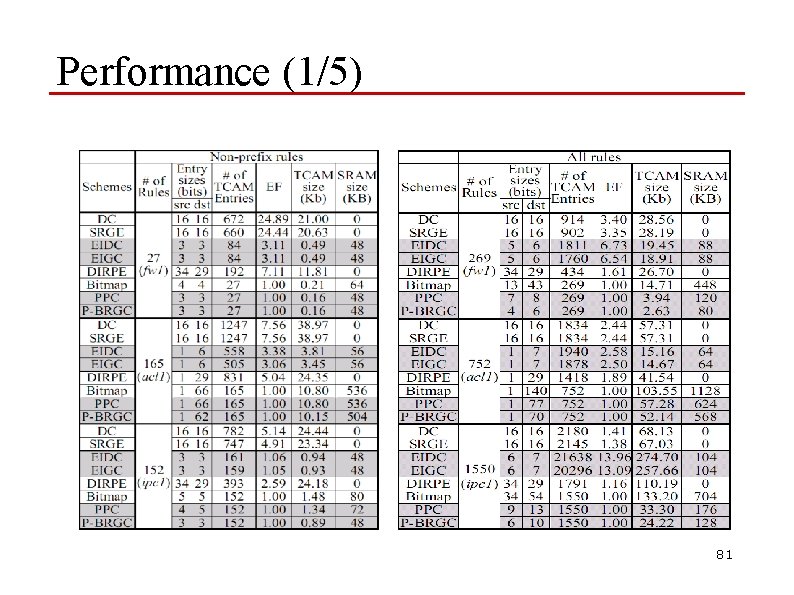

Performance (1/5)

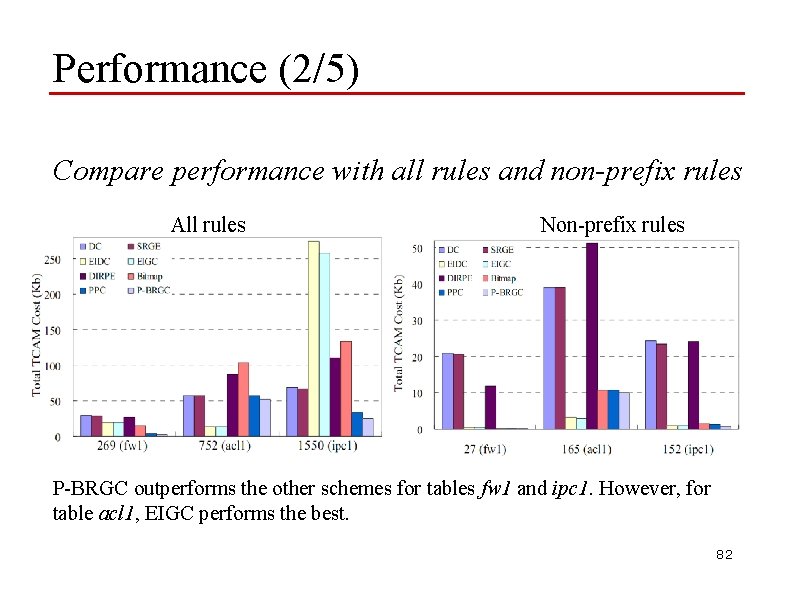

Performance (2/5)
- Performance is compared for all rules and for non-prefix rules only.
- P-BRGC outperforms the other schemes for tables fw1 and ipc1. However, for table acl1, EIGC performs the best.

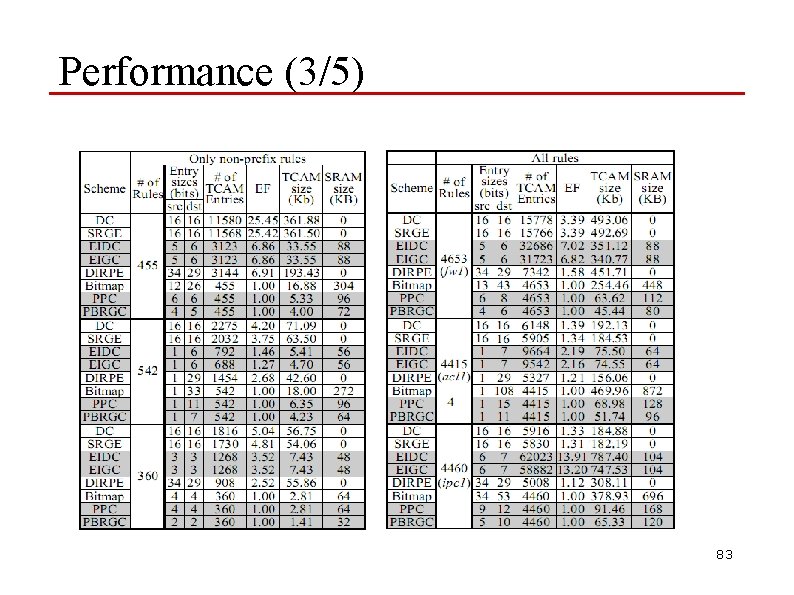

Performance (3/5)

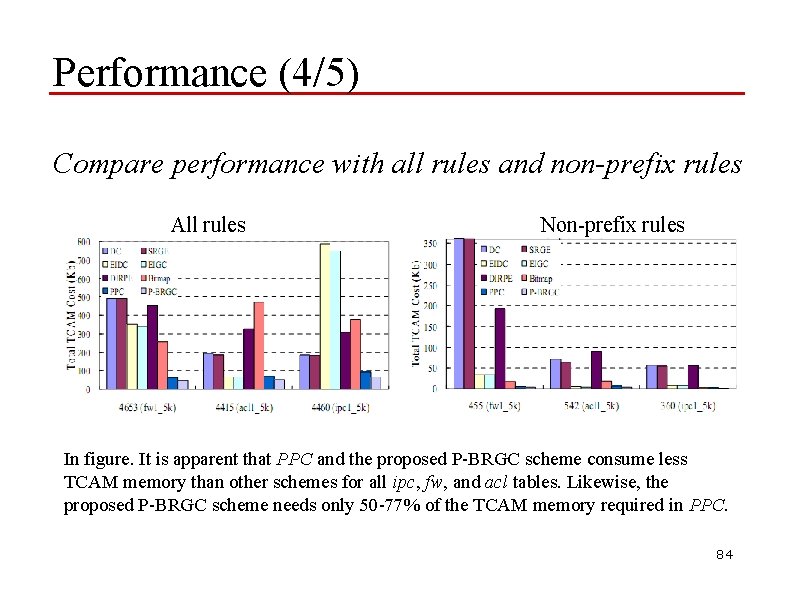

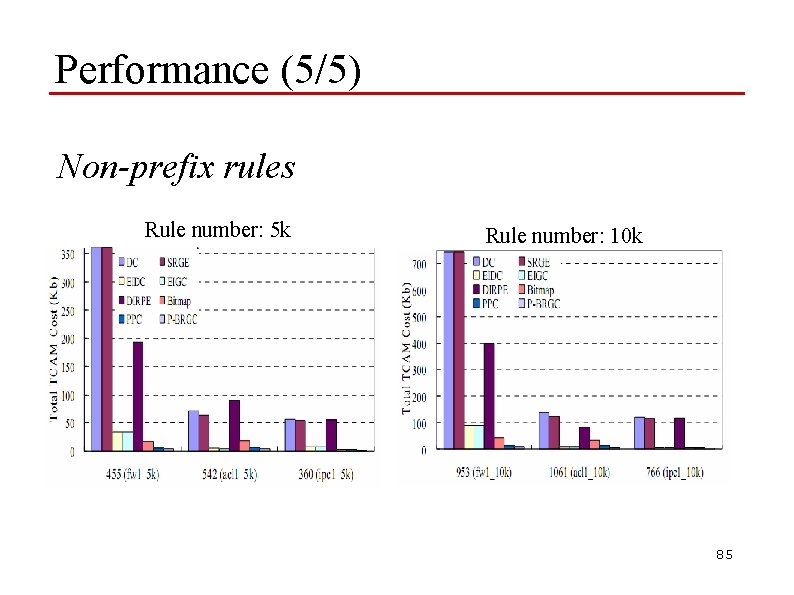

Performance (4/5)
- Performance is compared for all rules and for non-prefix rules only.
- The figure shows that PPC and the proposed P-BRGC scheme consume less TCAM memory than the other schemes for all ipc, fw, and acl tables. Moreover, the proposed P-BRGC scheme needs only 50-77% of the TCAM memory required by PPC.

Performance (5/5)
- Non-prefix rules, for rule numbers 5k and 10k.

Conclusions
- TCAM introduction
- Power reduction
- Range encoding
