
System installation & updates
A. Manabe (KEK)
23 May 2001, LSCCW

Installation & update
- System (software) installation and updating is boring, hard work for me.
- Question: How do you install or update the system on a cluster of more than 100 nodes?
- Question: Have you ever postponed a system upgrade because it was too much work?

Installation & update methods
1. Pre-installed, pre-configured system
   - You can postpone your work, but sooner or later...
2. Manual installation, one PC at a time
   - Many operators work in parallel with many duplicated installation CDs.
   - It requires many CRTs, days of work, and money (to hire operators).
3. Network installation
   - With an NFS/FTP server and an automated 'batch' installation.
   - 'Server too busy' when installing to many nodes.
   - A lot of work still remains (utility software installation...).

Installation & update methods
4. Duplicate disk image
   - Attach many disks to one PC, duplicate the installed disk, then distribute the duplicated disks to the nodes.
   - The hardware work is hard (attaching and detaching removable disk units).
5. Diskless PC
   - Local disks are used only for swap and the /var directory; other directories come from an NFS server.
   - A powerful server is necessary.
   - A node can do nothing alone (troubleshooting may become difficult).

An Idea
- Make one installed host, then clone its disk image to the nodes via the network.
- 100 PC installation in 10 min. (target value)
- Keep the necessary operator intervention as small as possible.

Our planned method (1)
- Network disk cloning software
  - dolly+
  - For cloning the disk image.
- Network booting
  - PXE (Preboot Execution Environment) with an Intel NIC
  - For starting an installer.
- Batch installer
  - Modified Red Hat kickstart
  - For disk formatting, network setup, and starting the cloning software; it makes a private /etc/fstab, /etc/sysconfig/network, ...

Our method (2)
- Remote power controller
  - Network-controlled power tap (hardware)
  - For remote system reset (replaces pushing the reset buttons one by one).
- Console server, using the serial console feature of Linux
  - For checking that everything went well.

Dolly+
100 PC installation in 10 min.
- Software to copy/clone files and/or disk images among many PCs through a network.
- Runs on Linux as a user program.
- Free software.
- Dolly was developed by the CoPs project at ETH (Switzerland).

Dolly+
- Sequential file and block file transfer.
- RING network connection topology.
- Pipeline mechanism.
- Failure recovery mechanism.

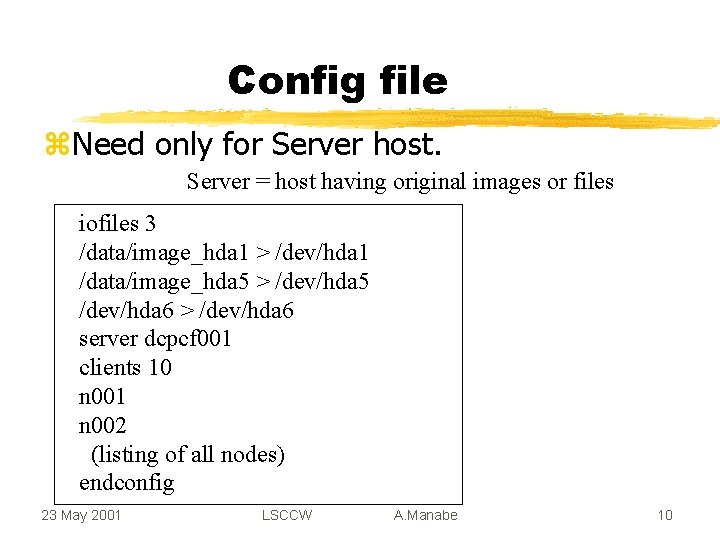

Config file
- Needed only on the server host.

Server = host having the original images or files

    iofiles 3
    /data/image_hda1 > /dev/hda1
    /data/image_hda5 > /dev/hda5
    /dev/hda6 > /dev/hda6
    server dcpcf001
    clients 10
    n001
    n002
    (listing of all nodes)
    endconfig
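For a cluster with many nodes, a config file like the one on this slide could be generated rather than typed by hand. The following is a minimal sketch that reproduces only the keywords visible on the slide (`iofiles`, `server`, `clients`, `endconfig`); the function name `make_dolly_config` is hypothetical, the node names `n001` to `n010` are extrapolated from the two names shown, and the real dolly+ format may accept or require other entries.

```python
def make_dolly_config(iofiles, server, clients):
    """Build config text in the layout shown on the slide.

    iofiles: list of (source, destination) pairs
    server:  name of the host holding the original images
    clients: list of node names, in ring order
    """
    lines = [f"iofiles {len(iofiles)}"]
    lines += [f"{src} > {dst}" for src, dst in iofiles]
    lines.append(f"server {server}")
    lines.append(f"clients {len(clients)}")
    lines += clients
    lines.append("endconfig")
    return "\n".join(lines) + "\n"


# Values taken from the slide; the client naming pattern is an assumption.
iofiles = [
    ("/data/image_hda1", "/dev/hda1"),
    ("/data/image_hda5", "/dev/hda5"),
    ("/dev/hda6", "/dev/hda6"),
]
clients = [f"n{i:03d}" for i in range(1, 11)]
config_text = make_dolly_config(iofiles, "dcpcf001", clients)
print(config_text)
```

Keeping the counts (`iofiles 3`, `clients 10`) derived from the list lengths avoids a mismatch between the declared number and the entries that follow.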

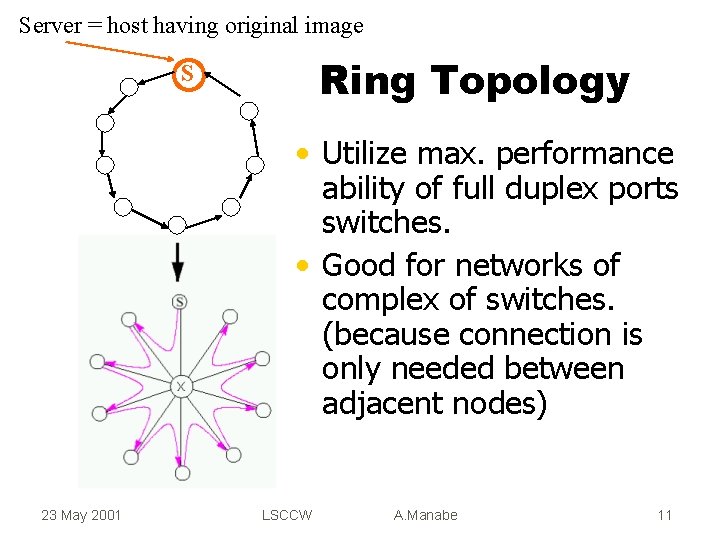

Ring topology
Server (S) = host having the original image
- Makes full use of the performance of switches with full-duplex ports.
- Good for networks built from a complex of switches (because connections are needed only between adjacent nodes).

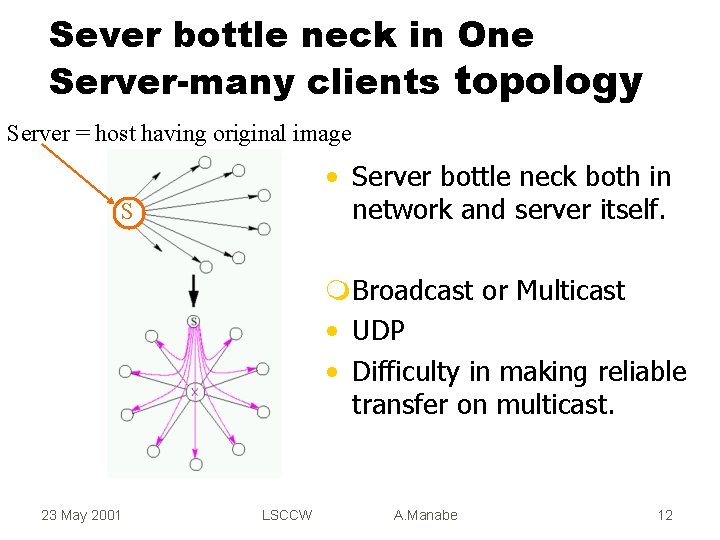

Server bottleneck in the one-server, many-clients topology
Server (S) = host having the original image
- The bottleneck is both in the network and in the server itself.
- Broadcast or multicast
  - UDP
  - It is difficult to make a reliable transfer over multicast.

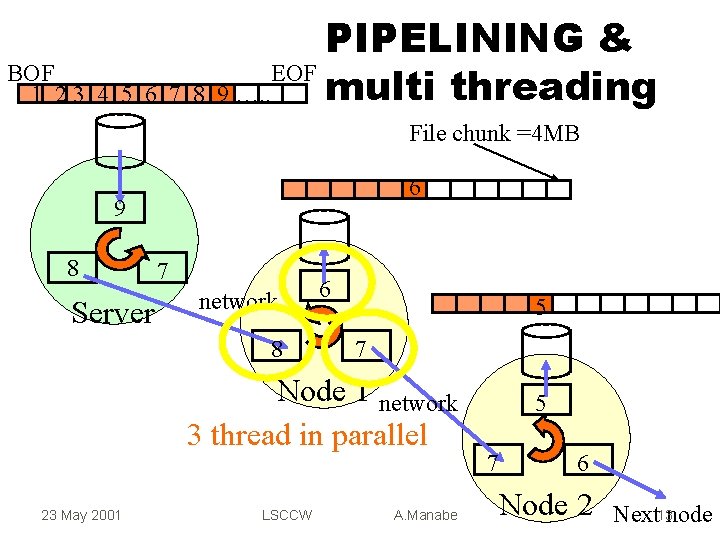

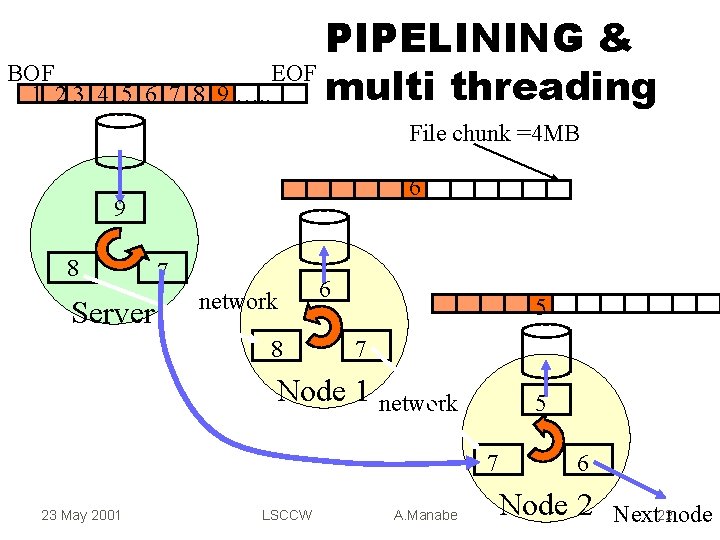

PIPELINING & multi threading
[Figure: the file, from BOF to EOF, is split into numbered chunks (file chunk = 4 MB). The chunks travel from the server over the network to Node 1, then to Node 2, then to the next node, with 3 threads in parallel.]
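The pipelining idea on this slide can be illustrated with a small in-memory simulation. This is only a sketch under stated assumptions: the slide says 3 threads run in parallel but does not say what each one does, so the split into receive, write, and forward threads is a guess; Python queues stand in for network sockets; and the chunk size is 4 bytes instead of 4 MB to keep the demo small. The class `Node` and the helper `split_chunks` are hypothetical names, not part of dolly+.

```python
import queue
import threading

CHUNK_SIZE = 4  # bytes for this demo; the slide uses 4 MB chunks


def split_chunks(data, size=CHUNK_SIZE):
    """Cut the image into fixed-size chunks (the last one may be shorter)."""
    return [data[i:i + size] for i in range(0, len(data), size)]


class Node:
    """One node in the chain, with three threads working in parallel."""

    def __init__(self, name, downstream=None):
        self.name = name
        self.downstream = downstream   # next Node in the chain, or None
        self.inbox = queue.Queue()     # stands in for the incoming socket
        self.disk = bytearray()        # stands in for the local disk
        self._to_disk = queue.Queue()
        self._to_next = queue.Queue()
        self.threads = [
            threading.Thread(target=self._receive),
            threading.Thread(target=self._write),
            threading.Thread(target=self._forward),
        ]

    def start(self):
        for t in self.threads:
            t.start()

    def join(self):
        for t in self.threads:
            t.join()

    def _receive(self):
        # Hand every incoming chunk to both the writer and the forwarder.
        while True:
            chunk = self.inbox.get()
            self._to_disk.put(chunk)
            self._to_next.put(chunk)
            if chunk is None:          # None marks end of file
                return

    def _write(self):
        while True:
            chunk = self._to_disk.get()
            if chunk is None:
                return
            self.disk.extend(chunk)

    def _forward(self):
        # Pass chunks (and the end marker) on to the next node, if any.
        while True:
            chunk = self._to_next.get()
            if self.downstream is not None:
                self.downstream.inbox.put(chunk)
            if chunk is None:
                return


# Build the chain server -> n001 -> n002 -> n003 (names are illustrative).
n003 = Node("n003")
n002 = Node("n002", downstream=n003)
n001 = Node("n001", downstream=n002)
nodes = [n001, n002, n003]
for n in nodes:
    n.start()

image = b"0123456789abcdefghij"        # stand-in for a disk image
for chunk in split_chunks(image):      # the server feeds the first node
    n001.inbox.put(chunk)
n001.inbox.put(None)

for n in nodes:
    n.join()
print(all(bytes(n.disk) == image for n in nodes))
```

Because each node forwards a chunk as soon as it has received it, a node does not wait for the whole image before its successor starts receiving.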

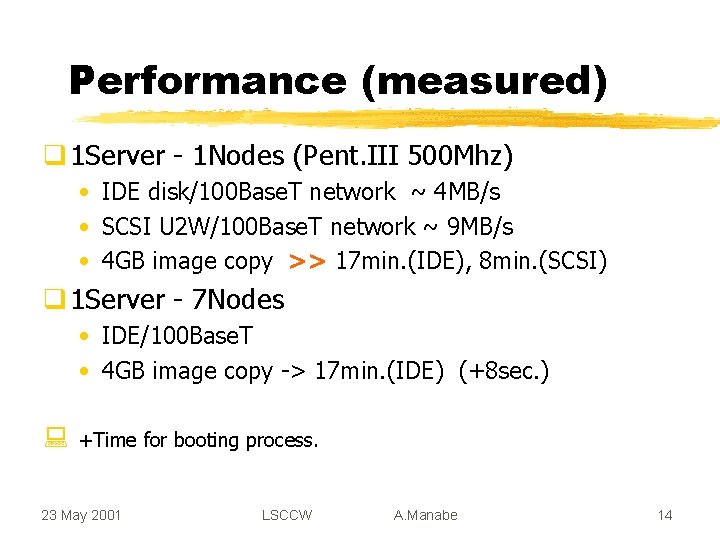

Performance (measured)
- 1 server - 1 node (Pentium III 500 MHz)
  - IDE disk / 100Base-T network: ~4 MB/s
  - SCSI U2W / 100Base-T network: ~9 MB/s
  - 4 GB image copy: 17 min. (IDE), 8 min. (SCSI)
- 1 server - 7 nodes
  - IDE / 100Base-T
  - 4 GB image copy: 17 min. (IDE) (+8 sec.)
- Note: plus the time for the booting process.
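The copy times on this slide follow from the measured transfer rates. As a quick check, assuming 4 GB means 4096 MB and the rate stays constant for the whole copy (the helper name `copy_minutes` is hypothetical):

```python
def copy_minutes(image_mb, rate_mb_per_s):
    """Minutes needed to copy image_mb megabytes at a constant rate."""
    return image_mb / rate_mb_per_s / 60.0


IMAGE_MB = 4 * 1024                      # 4 GB image, taken as 4096 MB

ide_min = copy_minutes(IMAGE_MB, 4.0)    # IDE disk, ~4 MB/s
scsi_min = copy_minutes(IMAGE_MB, 9.0)   # SCSI U2W, ~9 MB/s

print(f"IDE:  {ide_min:.1f} min")        # 17.1 min
print(f"SCSI: {scsi_min:.1f} min")       # 7.6 min
```

Both results agree with the 17 min. and 8 min. quoted on the slide after rounding.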

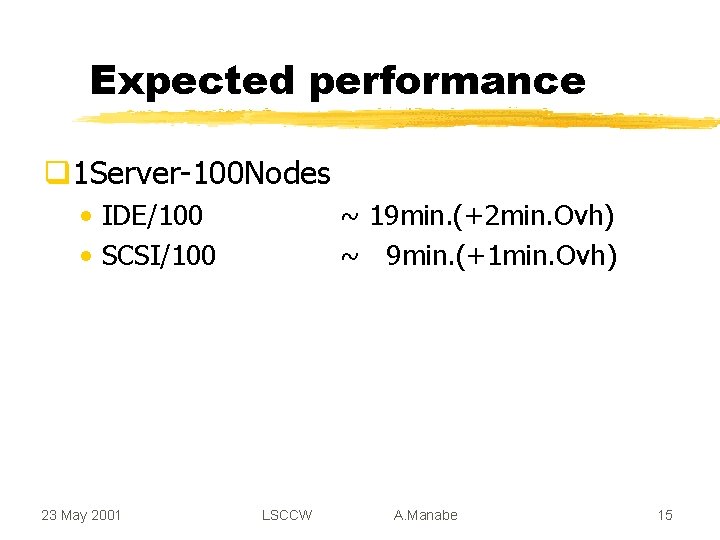

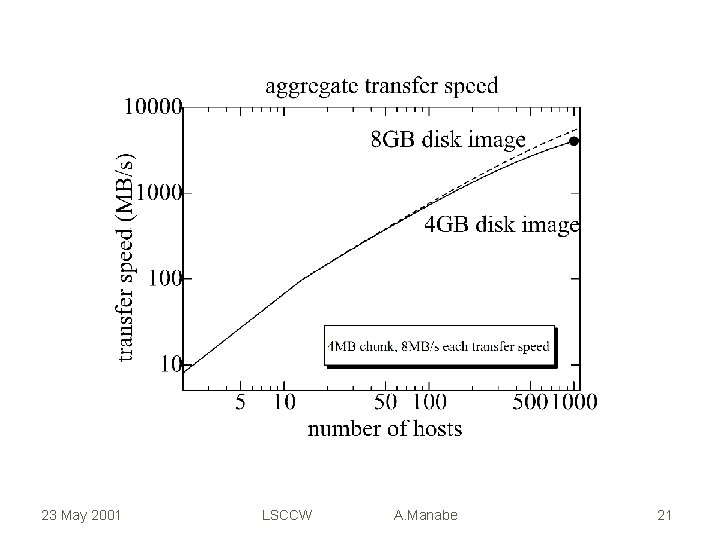

Expected performance
- 1 server - 100 nodes
  - IDE / 100Base-T: ~19 min. (+2 min. overhead)
  - SCSI / 100Base-T: ~9 min. (+1 min. overhead)
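The slides do not say how the overhead figures were estimated. One simple model that gives numbers of the same order is to assume that every node in the chain delays the data by the time needed to transfer one 4 MB chunk, so the last node starts receiving after roughly (number of nodes) x (chunk time). This model and the helper name `pipeline_overhead_s` are assumptions for illustration, not the author's stated method.

```python
def pipeline_overhead_s(nodes, chunk_mb, rate_mb_per_s):
    """Seconds of extra delay for the last node in the chain.

    Assumes each hop adds the time to transfer one chunk.
    """
    return nodes * chunk_mb / rate_mb_per_s


CHUNK_MB = 4  # file chunk size from the pipelining slide

ide_overhead = pipeline_overhead_s(100, CHUNK_MB, 4.0)   # IDE, ~4 MB/s
scsi_overhead = pipeline_overhead_s(100, CHUNK_MB, 9.0)  # SCSI, ~9 MB/s
seven_nodes = pipeline_overhead_s(7, CHUNK_MB, 4.0)      # measured case

print(f"IDE,  100 nodes: {ide_overhead / 60:.1f} min")
print(f"SCSI, 100 nodes: {scsi_overhead / 60:.1f} min")
print(f"IDE,    7 nodes: {seven_nodes:.0f} s")
```

Under this assumption the overhead is about 1.7 min. for IDE and 0.7 min. for SCSI with 100 nodes, and about 7 s for the 7-node case, which is close to the +2 min., +1 min., and +8 sec. on the slides. The important property is that the overhead grows with the number of nodes while the main copy time does not.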

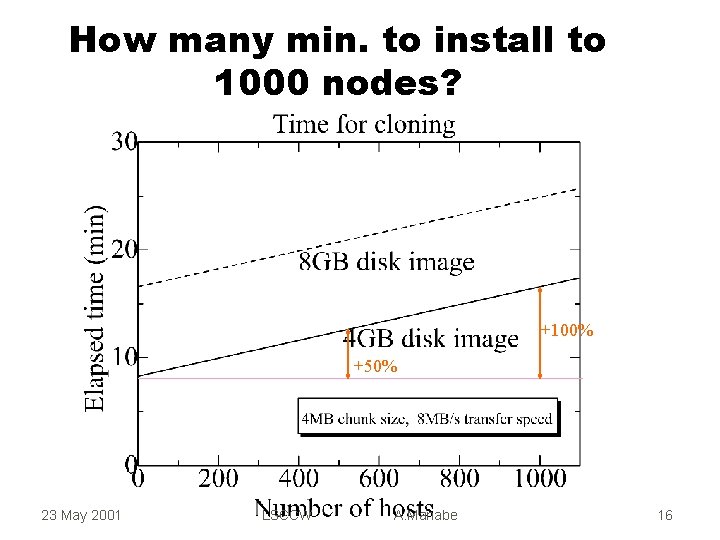

How many min. to install to 1000 nodes?
[Figure annotations: +100%, +50%]

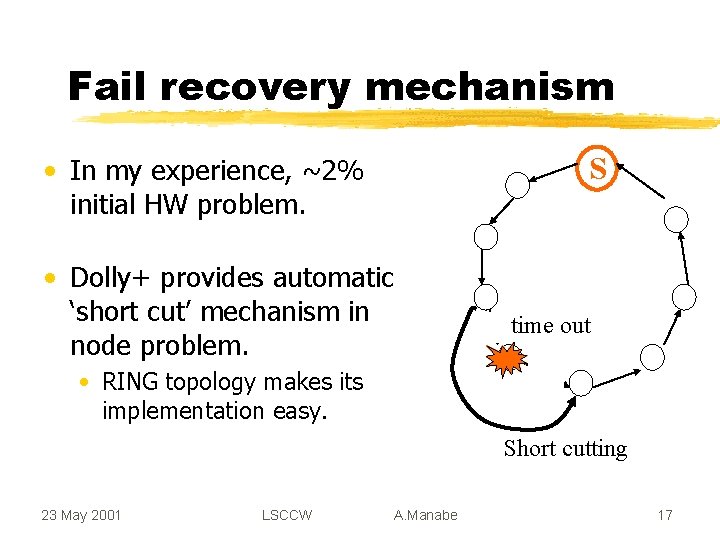

Failure recovery mechanism
- In my experience, about 2% of nodes have an initial hardware problem.
- Dolly+ provides an automatic 'short cut' mechanism for node problems.
- The RING topology makes its implementation easy.
[Figure labels: S (server), time out, short cutting]
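The short-cut idea can be pictured as rebuilding the list of links so that the neighbour before a failed node connects directly to the neighbour after it. The sketch below shows only that re-linking step; how dolly+ actually detects a failure (the figure mentions a time-out) and resumes the transfer is not described on the slide and is not modelled here. The function name `links_with_shortcuts` and the host names are illustrative.

```python
def links_with_shortcuts(server, clients, failed):
    """Return (sender, receiver) links, skipping clients listed in failed."""
    chain = [server] + [c for c in clients if c not in failed]
    return list(zip(chain, chain[1:]))


clients = ["n001", "n002", "n003", "n004", "n005"]

# All nodes healthy: each node receives from its predecessor.
print(links_with_shortcuts("dcpcf001", clients, failed=set()))

# n003 does not respond: n002 short-cuts to n004.
links = links_with_shortcuts("dcpcf001", clients, failed={"n003"})
print(links)
```

Only the two neighbours of the failed node are affected, which is why a chain or ring layout keeps the recovery logic simple.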

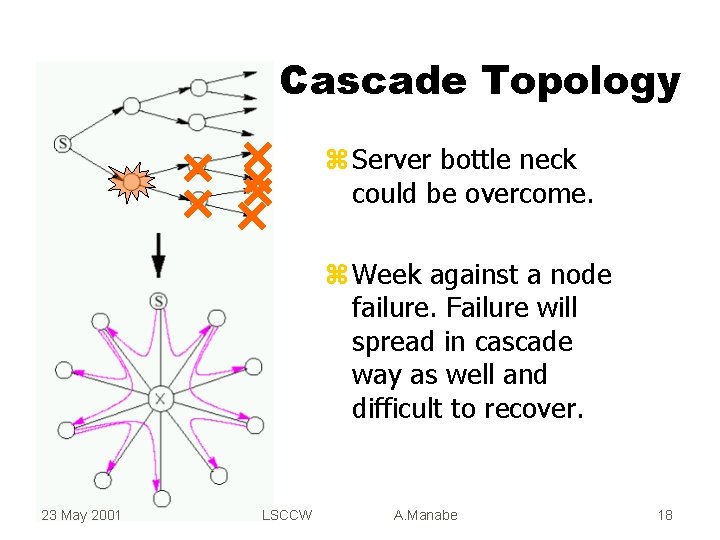

Cascade topology
- The server bottleneck could be overcome.
- Weak against a node failure: the failure also spreads in a cascade and is difficult to recover from.

- A beta version will be available from corvus.kek.jp/~manabe/pcf/dolly after this workshop.


- Slides: 22