Synthesis For Finite State Machines

- Slides: 35

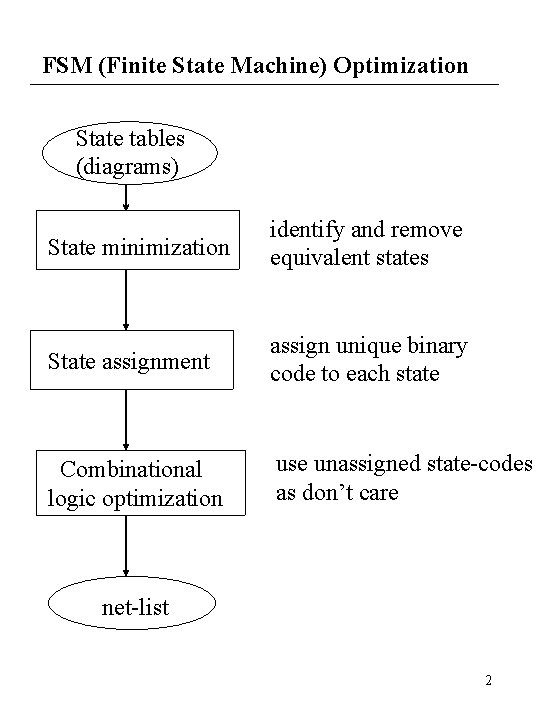

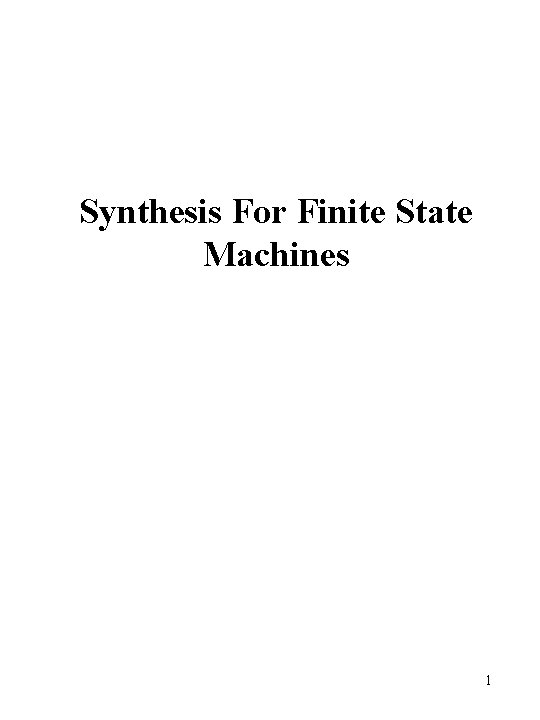

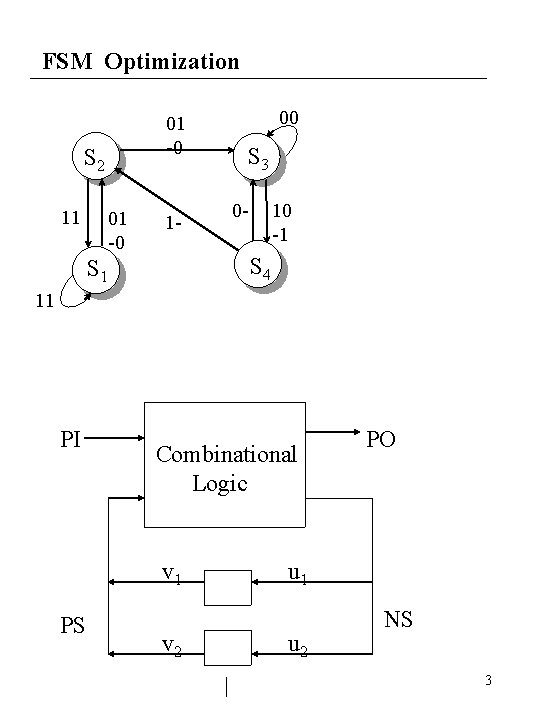

FSM (Finite State Machine) Optimization

Optimization flow, from state table to net-list:

- State tables (diagrams)
- State minimization: identify and remove equivalent states
- State assignment: assign a unique binary code to each state
- Combinational logic optimization: use unassigned state codes as don't cares
- Net-list

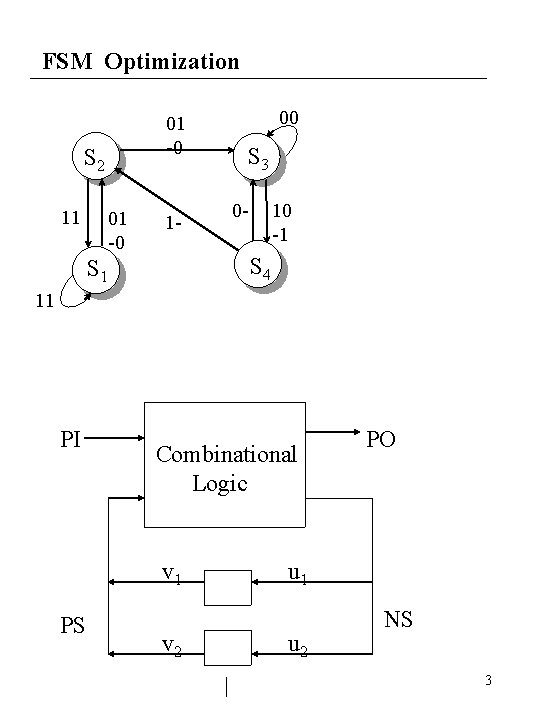

FSM Optimization

[Figure: state transition graph over states S1 to S4, next to a block diagram of the combinational logic with labels PI, PO, PS, NS and signals v1, v2, u1, u2.]

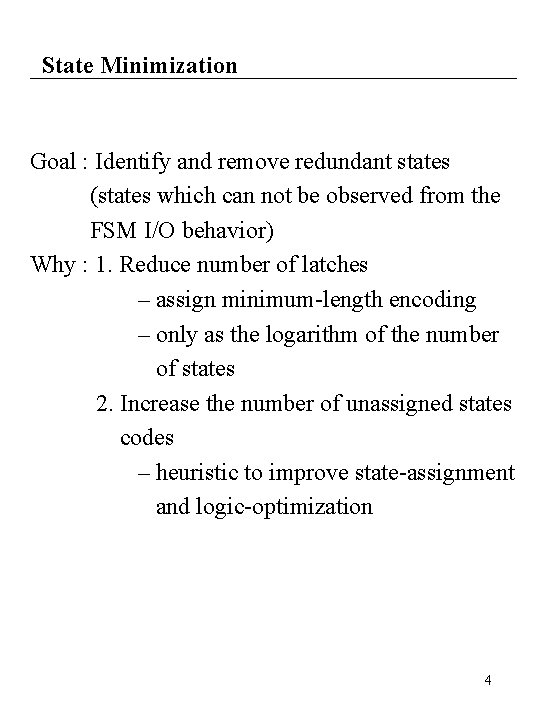

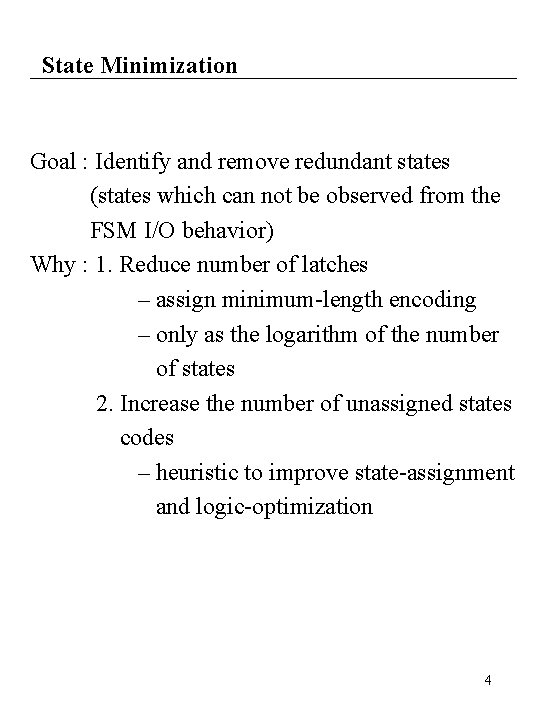

State Minimization

Goal: identify and remove redundant states (states whose presence cannot be observed from the FSM's I/O behavior).

Why:

1. Reduce the number of latches: a minimum-length encoding needs only about log2 of the number of states in bits.
2. Increase the number of unassigned state codes: a heuristic to improve state assignment and logic optimization.

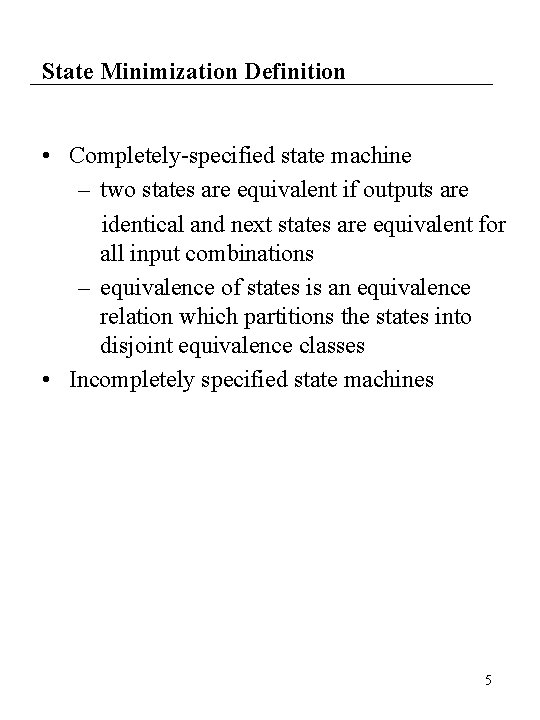

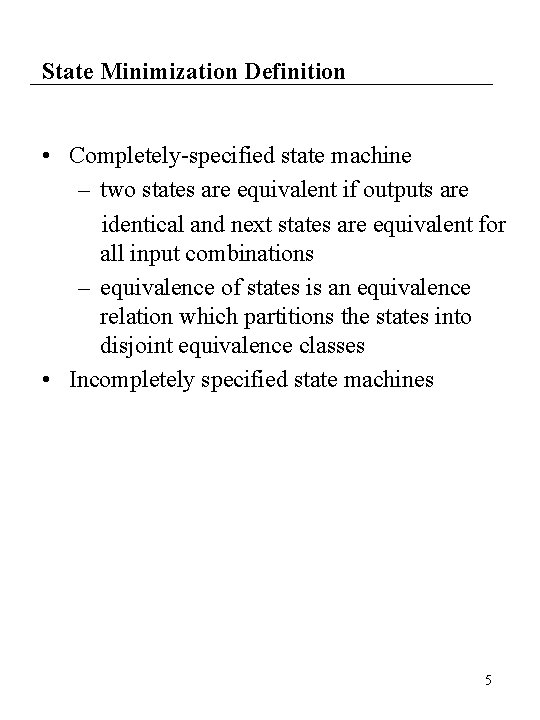

State Minimization Definition

- Completely specified state machine
  - Two states are equivalent if their outputs are identical and their next states are equivalent for all input combinations.
  - Equivalence of states is an equivalence relation, which partitions the states into disjoint equivalence classes.
- Incompletely specified state machines

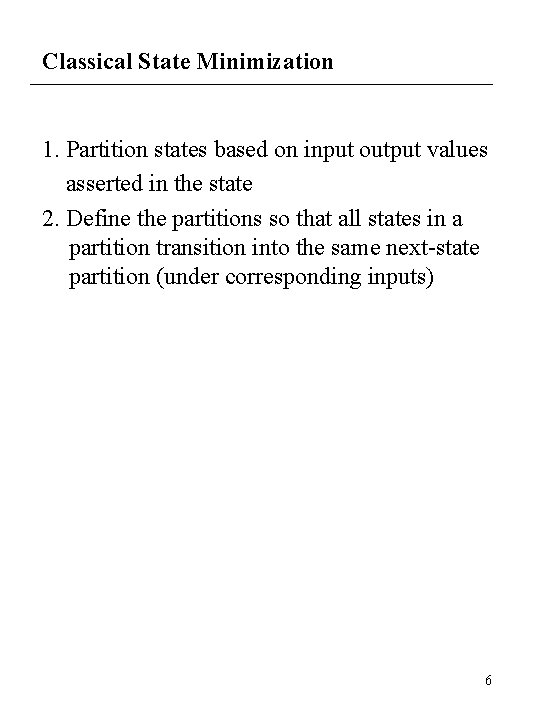

Classical State Minimization

1. Partition the states based on the input/output values asserted in each state.
2. Refine the partitions so that all states in a partition transition into the same next-state partition (under corresponding inputs).

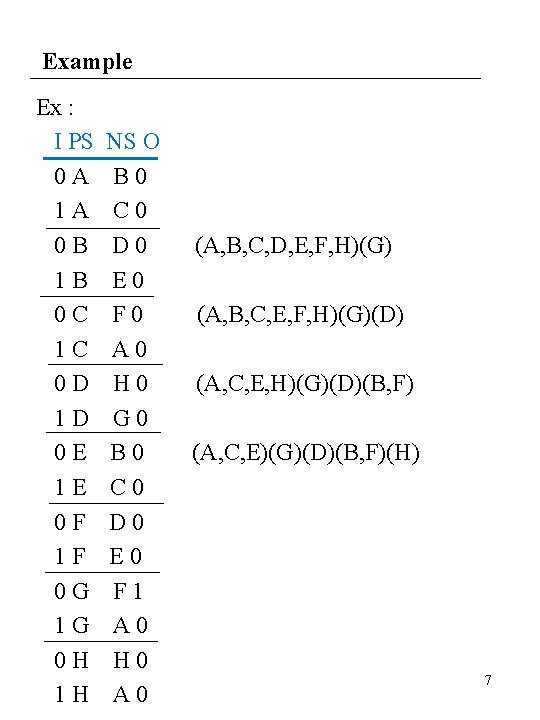

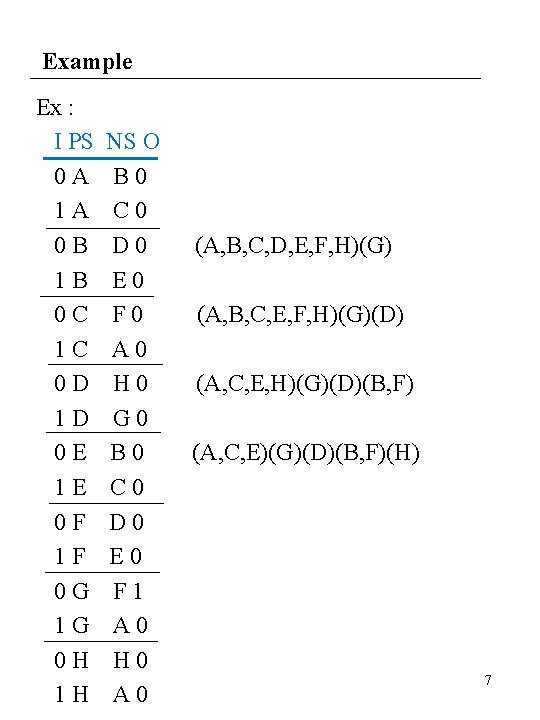

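Example

| I | PS | NS | O |
|---|----|----|---|
| 0 | A | B | 0 |
| 1 | A | C | 0 |
| 0 | B | D | 0 |
| 1 | B | E | 0 |
| 0 | C | F | 0 |
| 1 | C | A | 0 |
| 0 | D | H | 0 |
| 1 | D | G | 0 |
| 0 | E | B | 0 |
| 1 | E | C | 0 |
| 0 | F | D | 0 |
| 1 | F | E | 0 |
| 0 | G | F | 1 |
| 1 | G | A | 0 |
| 0 | H | H | 0 |
| 1 | H | A | 0 |

Successive partitions:

1. (A, B, C, D, E, F, H)(G)
2. (A, B, C, E, F, H)(G)(D)
3. (A, C, E, H)(G)(D)(B, F)
4. (A, C, E)(G)(D)(B, F)(H)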
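The refinement in this example can be reproduced with a short sketch. The function name `minimize` and the dictionary layout are illustrative choices, not part of the slides; the table itself is copied from the example, and the loop is the classical two-step procedure (split by outputs, then split by next-state block) under the assumption of a completely specified machine.

```python
from typing import Dict, List, Tuple

# (present state, input) -> (next state, output), copied from the example table.
TABLE: Dict[Tuple[str, int], Tuple[str, int]] = {
    ("A", 0): ("B", 0), ("A", 1): ("C", 0),
    ("B", 0): ("D", 0), ("B", 1): ("E", 0),
    ("C", 0): ("F", 0), ("C", 1): ("A", 0),
    ("D", 0): ("H", 0), ("D", 1): ("G", 0),
    ("E", 0): ("B", 0), ("E", 1): ("C", 0),
    ("F", 0): ("D", 0), ("F", 1): ("E", 0),
    ("G", 0): ("F", 1), ("G", 1): ("A", 0),
    ("H", 0): ("H", 0), ("H", 1): ("A", 0),
}
INPUTS = (0, 1)


def minimize(table, inputs) -> List[List[str]]:
    """Return the equivalence classes of a completely specified FSM."""
    states = sorted({s for s, _ in table})

    # Step 1: states asserting the same outputs start in the same block.
    ids: dict = {}
    block = {
        s: ids.setdefault(tuple(table[(s, i)][1] for i in inputs), len(ids))
        for s in states
    }

    # Step 2: split blocks until every state in a block moves to the same
    # next-state block under each input.
    while True:
        ids = {}
        new_block = {}
        for s in states:
            signature = (block[s],) + tuple(block[table[(s, i)][0]] for i in inputs)
            new_block[s] = ids.setdefault(signature, len(ids))
        if len(ids) == len(set(block.values())):
            break  # no block was split, so the partition is stable
        block = new_block

    classes: Dict[int, List[str]] = {}
    for s in states:
        classes.setdefault(block[s], []).append(s)
    return sorted(classes.values())


print(minimize(TABLE, INPUTS))
```

Each pass of the loop corresponds to one line of the partition sequence above, so the eight states reduce to five classes.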

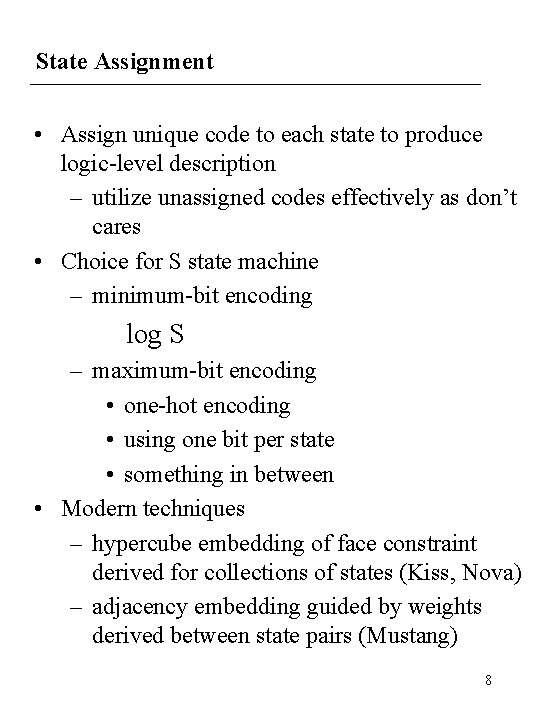

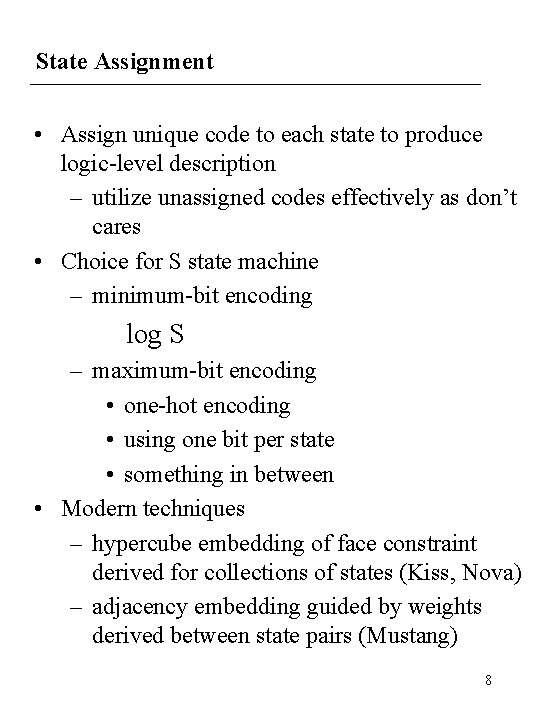

State Assignment

- Assign a unique code to each state to produce a logic-level description
  - utilize unassigned codes effectively as don't cares
- Choices for a machine with S states
  - minimum-bit encoding: log2 S bits
  - maximum-bit encoding: one-hot encoding, using one bit per state
  - something in between
- Modern techniques
  - hypercube embedding of face constraints derived for collections of states (Kiss, Nova)
  - adjacency embedding guided by weights derived between state pairs (Mustang)

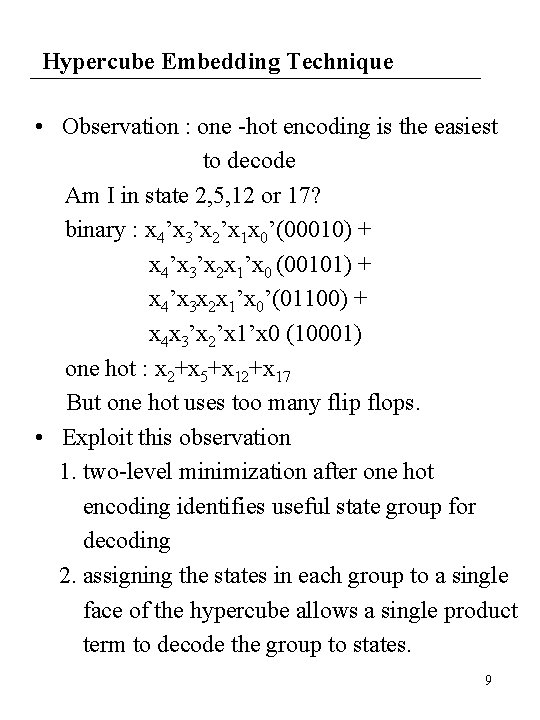

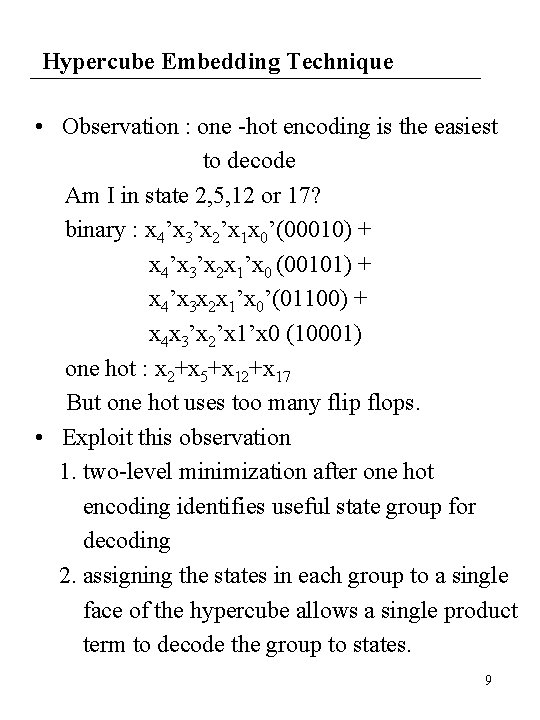

Hypercube Embedding Technique

- Observation: one-hot encoding is the easiest to decode. Example: "Am I in state 2, 5, 12 or 17?"
  - binary: x4'x3'x2'x1x0' (00010) + x4'x3'x2x1'x0 (00101) + x4'x3x2x1'x0' (01100) + x4x3'x2'x1'x0 (10001)
  - one-hot: x2 + x5 + x12 + x17
  - But one-hot uses too many flip-flops.
- Exploit this observation:
  1. Two-level minimization after one-hot encoding identifies useful state groups for decoding.
  2. Assigning the states in each group to a single face of the hypercube allows a single product term to decode the group of states.

Hypercube Embedding Based State Assignment

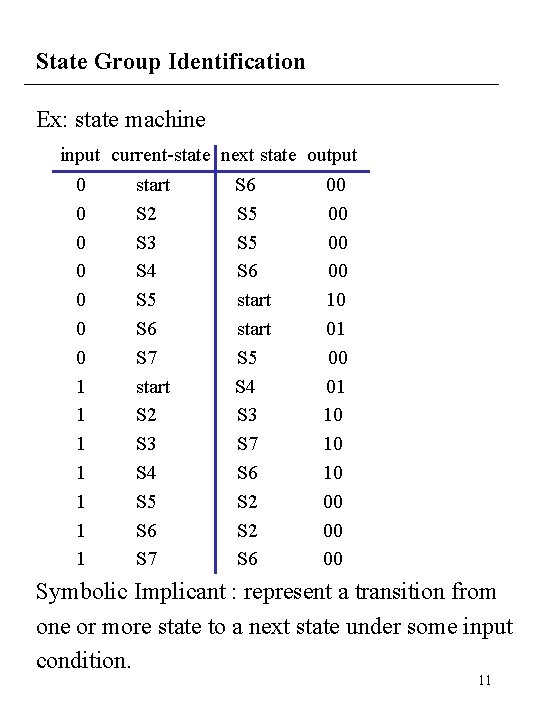

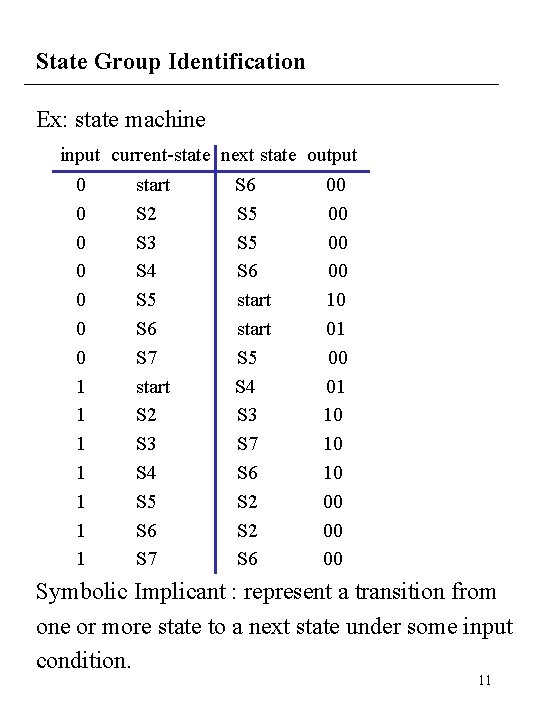

State Group Identification

Ex: state machine

| Input | Current state | Next state | Output |
|-------|---------------|------------|--------|
| 0 | start | S6 | 00 |
| 0 | S2 | S5 | 00 |
| 0 | S3 | S5 | 00 |
| 0 | S4 | S6 | 00 |
| 0 | S5 | start | 10 |
| 0 | S6 | start | 01 |
| 0 | S7 | S5 | 00 |
| 1 | start | S4 | 01 |
| 1 | S2 | S3 | 10 |
| 1 | S3 | S7 | 10 |
| 1 | S4 | S6 | 10 |
| 1 | S5 | S2 | 00 |
| 1 | S6 | S2 | 00 |
| 1 | S7 | S6 | 00 |

Symbolic implicant: represents a transition from one or more states to a next state under some input condition.

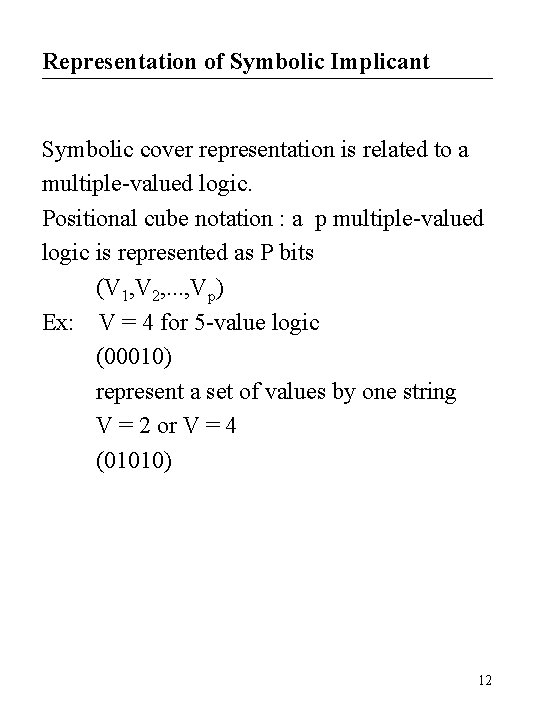

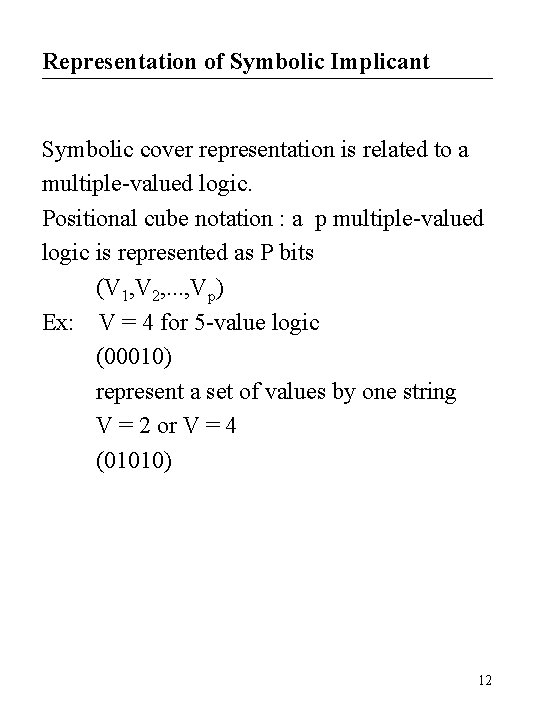

Representation of Symbolic Implicant

The symbolic cover representation is related to multiple-valued logic.

Positional cube notation: a p-valued variable is represented as p bits (V1, V2, ..., Vp), one bit per value.

Ex: for a 5-valued variable, V = 4 is represented as 00010. A set of values is represented by one string: V = 2 or V = 4 is 01010.
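Positional cube notation is easy to check mechanically. The sketch below is only an illustration; the helper names `positional_cube` and `decode` are made up here, and values are numbered from 1 as on the slide.

```python
from typing import Iterable, Set


def positional_cube(values: Iterable[int], p: int) -> str:
    """Encode a set of values of a p-valued variable as a p-bit string.

    Bit k (counting from 1, left to right) is 1 when value k is in the set.
    """
    chosen = set(values)
    return "".join("1" if v in chosen else "0" for v in range(1, p + 1))


def decode(cube: str) -> Set[int]:
    """Recover the set of values represented by a positional cube."""
    return {k + 1 for k, bit in enumerate(cube) if bit == "1"}


print(positional_cube({4}, 5))        # 00010
print(positional_cube({2, 4}, 5))     # 01010
print(positional_cube({2, 3, 7}, 7))  # 0110001
```

The last call encodes a set of three out of seven symbolic states, which is the form the present-state field of a symbolic implicant takes.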

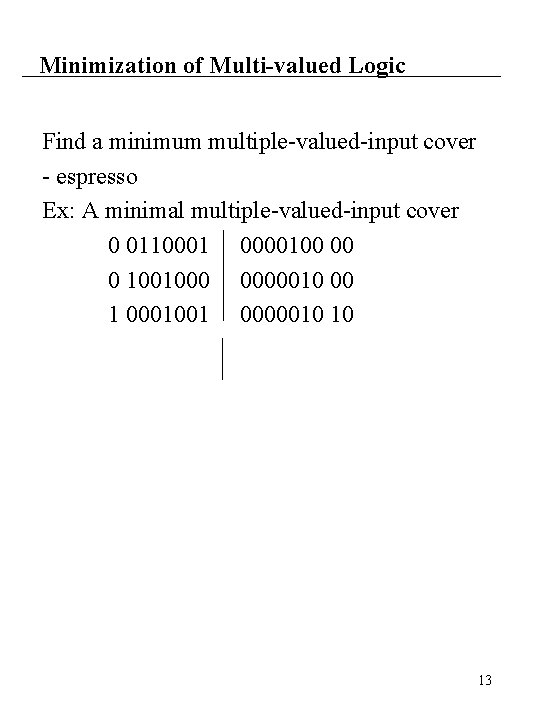

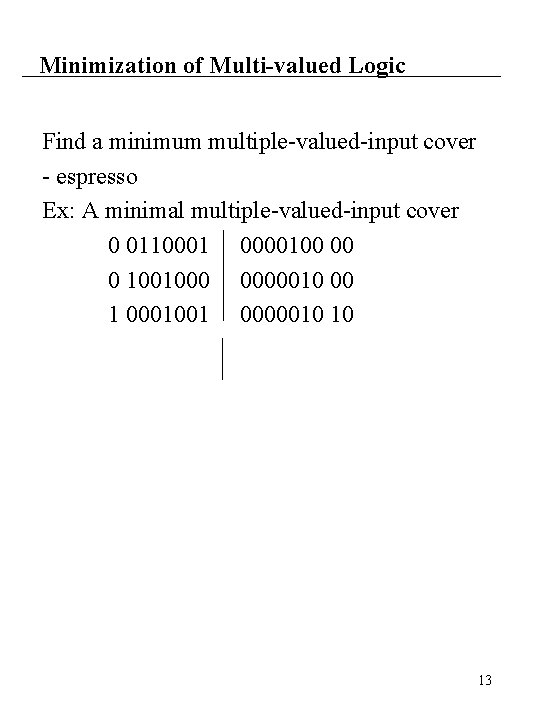

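Minimization of Multi-valued Logic

Find a minimum multiple-valued-input cover (espresso).

Ex: a minimal multiple-valued-input cover

| Input | Present states | Next state | Output |
|-------|----------------|------------|--------|
| 0 | 0110001 | 0000100 | 00 |
| 0 | 1001000 | 0000010 | 00 |
| 1 | 0001001 | 0000010 | 10 |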

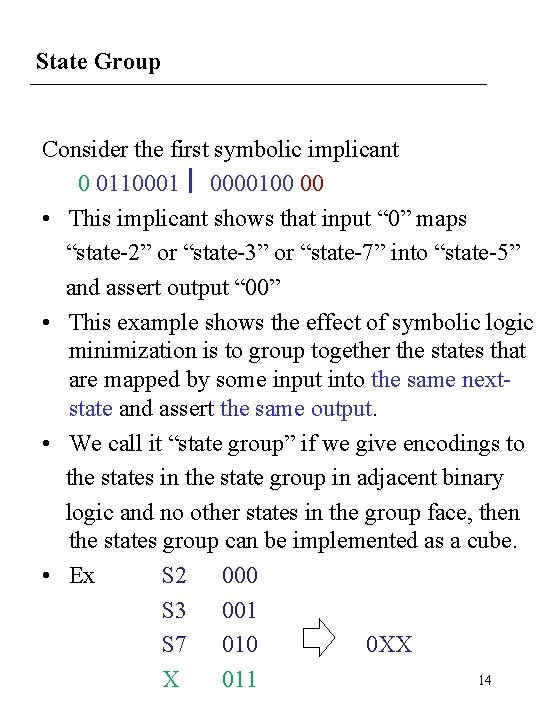

State Group

Consider the first symbolic implicant: 0 0110001 0000100 00

- This implicant shows that input "0" maps "state-2", "state-3", or "state-7" into "state-5" and asserts output "00".
- The example shows that the effect of symbolic logic minimization is to group together the states that are mapped by some input into the same next state and assert the same output.
- We call such a set a "state group". If the states in a state group are given adjacent binary encodings, and no state outside the group is encoded on the group face, then the state group can be implemented as a single cube.
- Ex: S2 = 000, S3 = 001, S7 = 010 lie on the face 0XX; the remaining code on that face, 011, is marked X (not used by any other state).

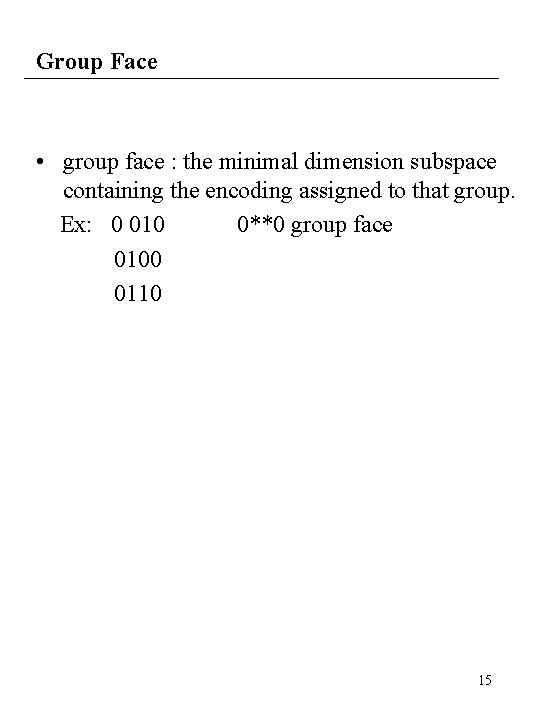

Group Face

- Group face: the minimal-dimension subspace containing the encodings assigned to that group.

Ex: the encodings 0010, 0100, 0110 have group face 0**0.
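The group face can be computed column by column: a bit position where all encodings agree keeps that value, and any other position becomes `*`. The function name `group_face` is an illustrative choice, not something named in the slides.

```python
from typing import Sequence


def group_face(codes: Sequence[str]) -> str:
    """Smallest cube (face) containing every encoding in `codes`."""
    return "".join(
        column[0] if len(set(column)) == 1 else "*"
        for column in zip(*codes)  # iterate over bit positions
    )


print(group_face(["0010", "0100", "0110"]))  # 0**0
```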

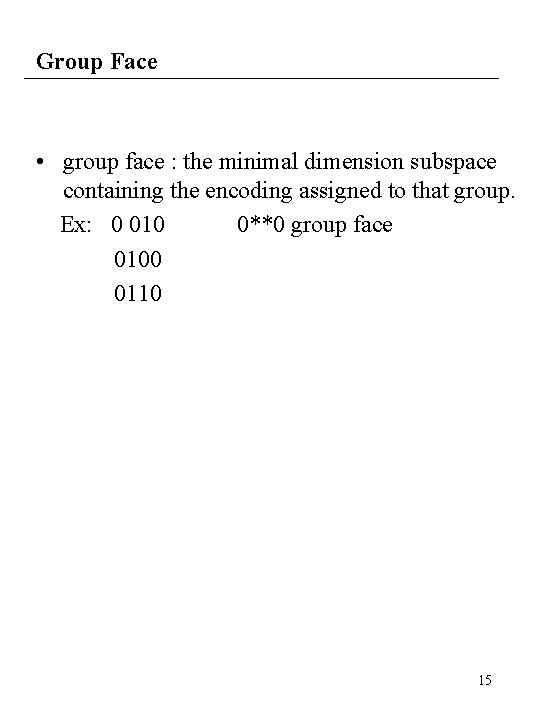

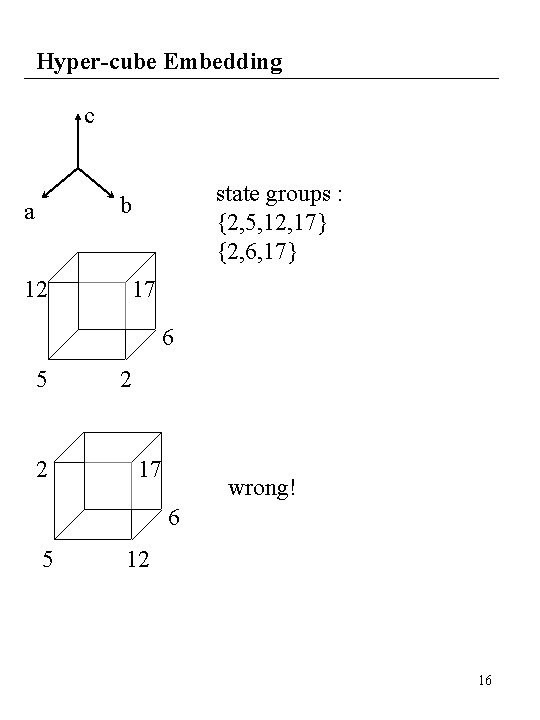

Hyper-cube Embedding

State groups: {2, 5, 12, 17}, {2, 6, 17}

[Figure: two placements of states 2, 5, 6, 12, 17 on a cube with axes a, b, c; the second placement is marked "wrong!".]

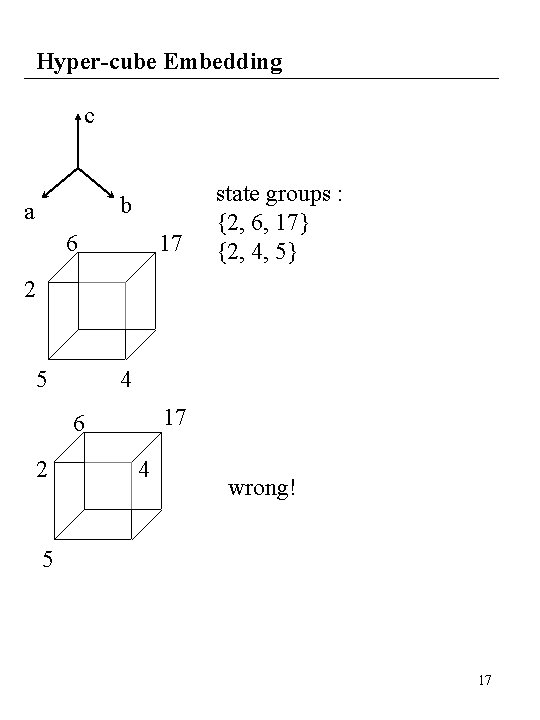

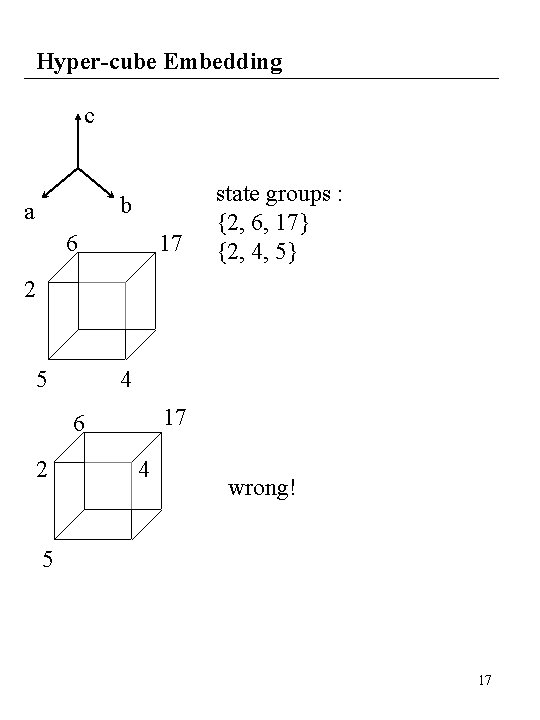

Hyper-cube Embedding

State groups: {2, 6, 17}, {2, 4, 5}

[Figure: two placements of states 2, 4, 5, 6, 17 on a cube with axes a, b, c; the second placement is marked "wrong!".]

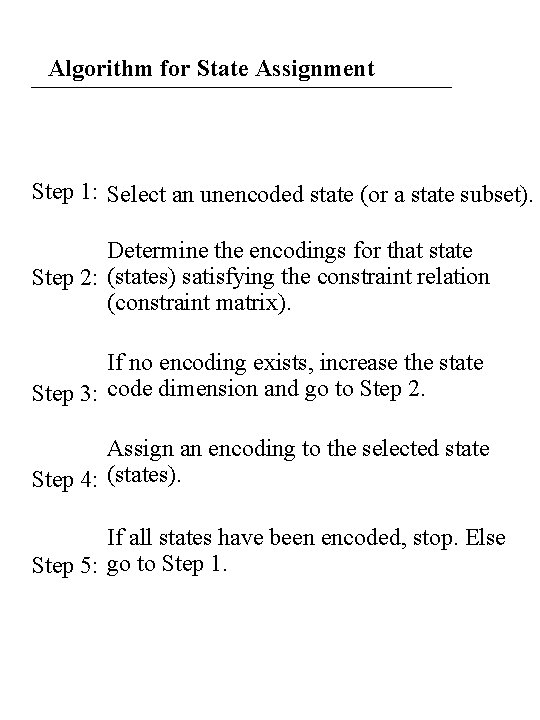

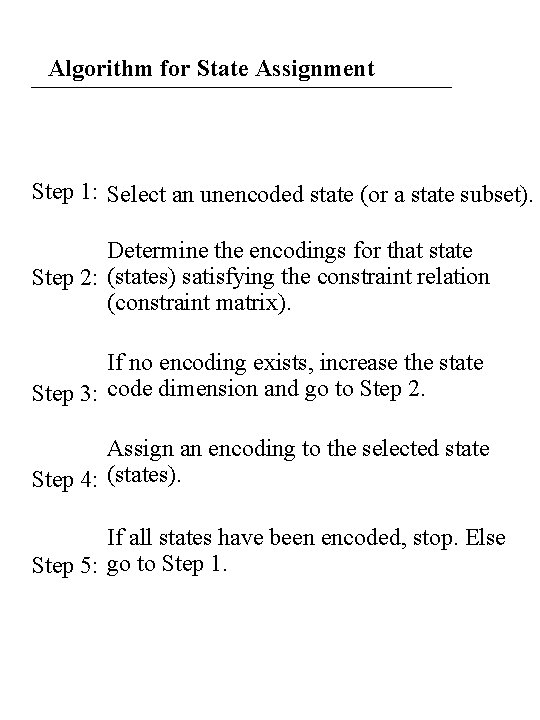

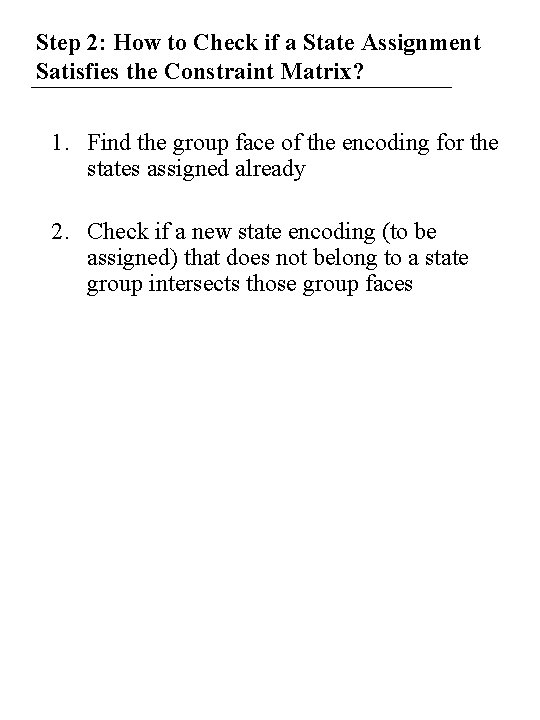

Algorithm for State Assignment

- Step 1: Select an unencoded state (or a state subset).
- Step 2: Determine the encodings for that state (states) satisfying the constraint relation (constraint matrix).
- Step 3: If no encoding exists, increase the state code dimension and go to Step 2.
- Step 4: Assign an encoding to the selected state (states).
- Step 5: If all states have been encoded, stop. Else go to Step 1.

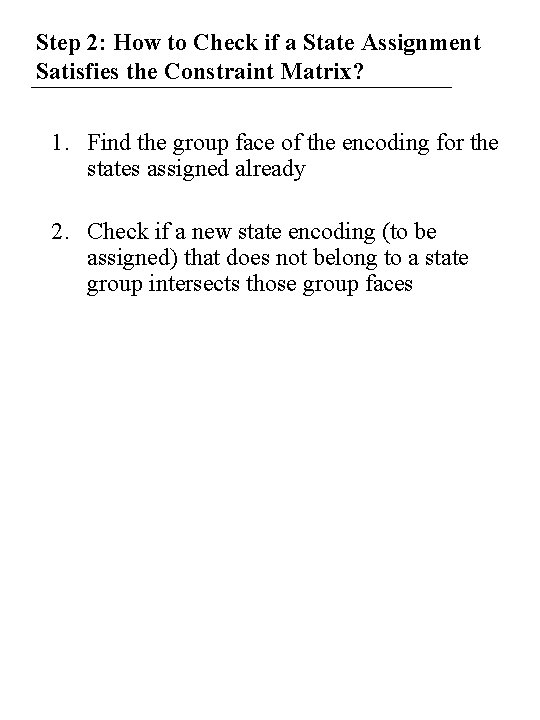

Step 2: How to Check if a State Assignment Satisfies the Constraint Matrix?

1. Find the group faces of the encodings of the states already assigned.
2. For the new state encoding (to be assigned), check whether it intersects the group face of any state group it does not belong to. If it does, the constraint is violated.

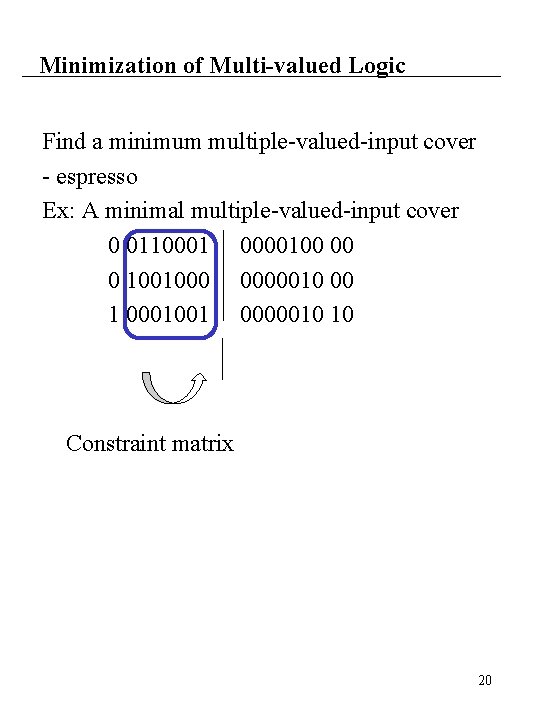

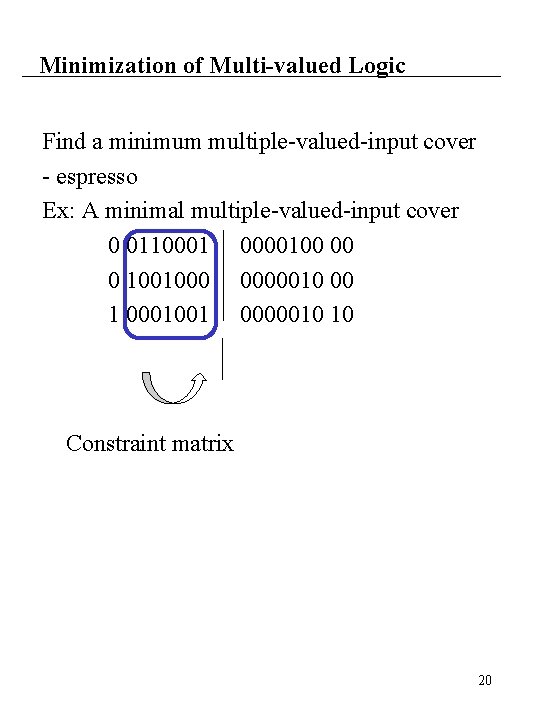

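Minimization of Multi-valued Logic

Find a minimum multiple-valued-input cover (espresso).

Ex: a minimal multiple-valued-input cover

| Input | Present states | Next state | Output |
|-------|----------------|------------|--------|
| 0 | 0110001 | 0000100 | 00 |
| 0 | 1001000 | 0000010 | 00 |
| 1 | 0001001 | 0000010 | 10 |

Constraint matrix: the present-state fields of the cover (rows 0110001, 1001000, 0001001).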

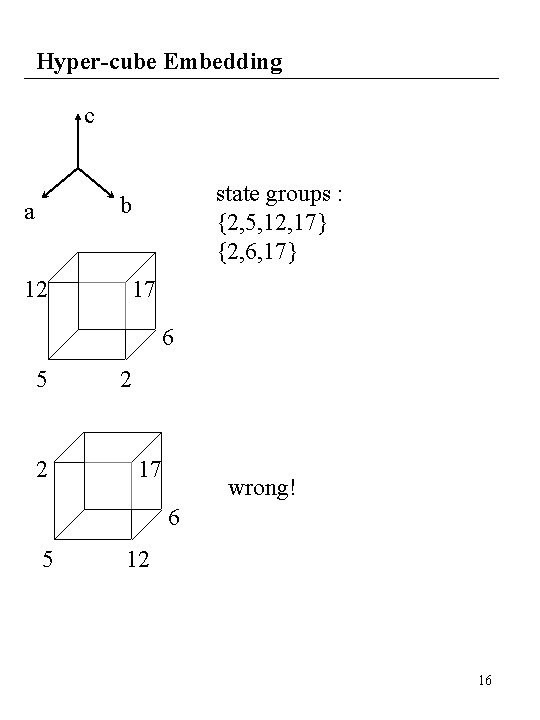

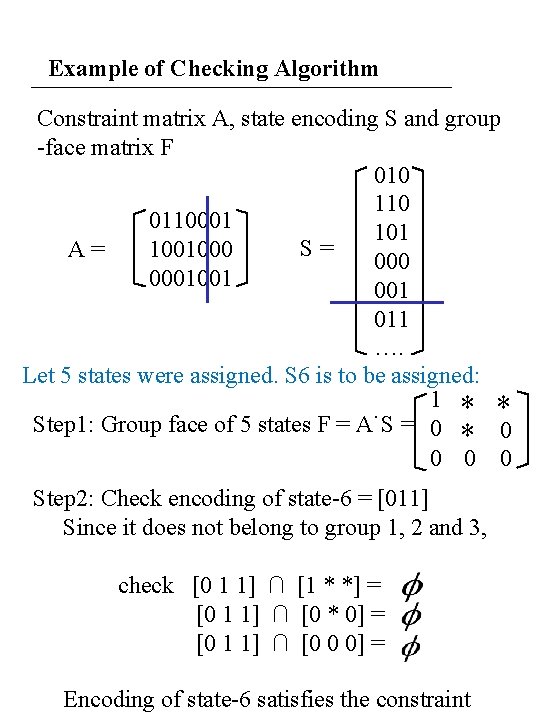

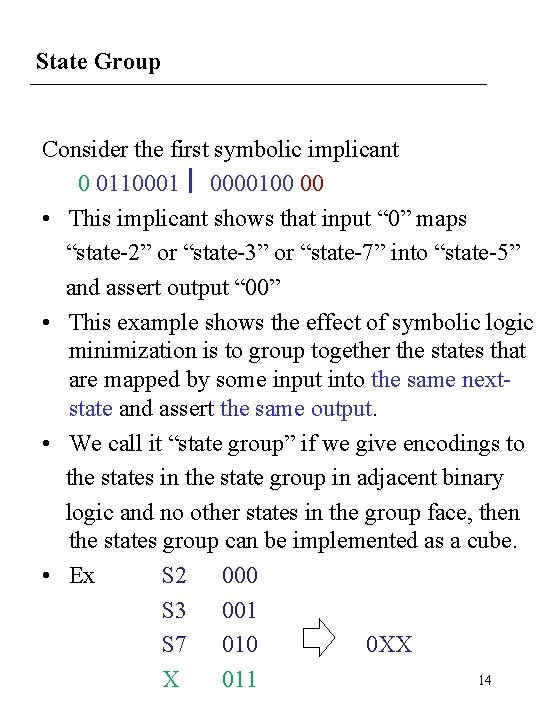

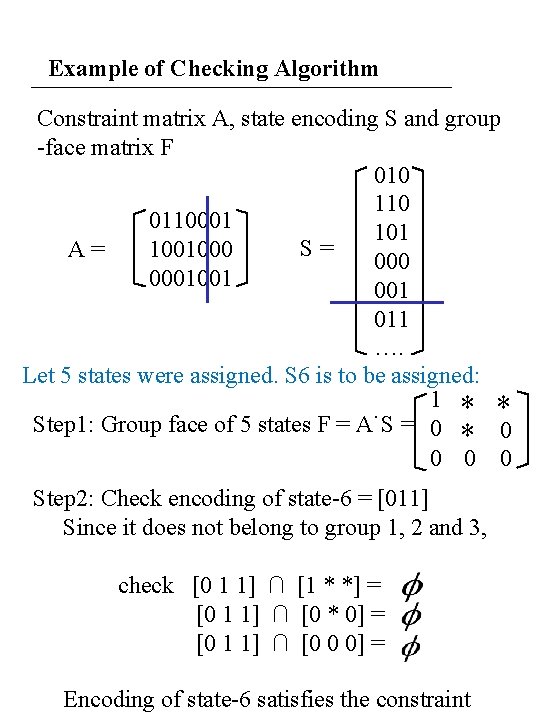

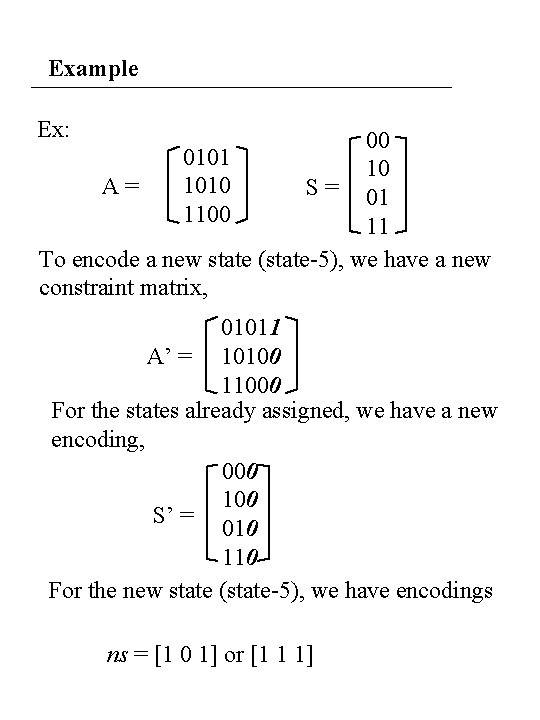

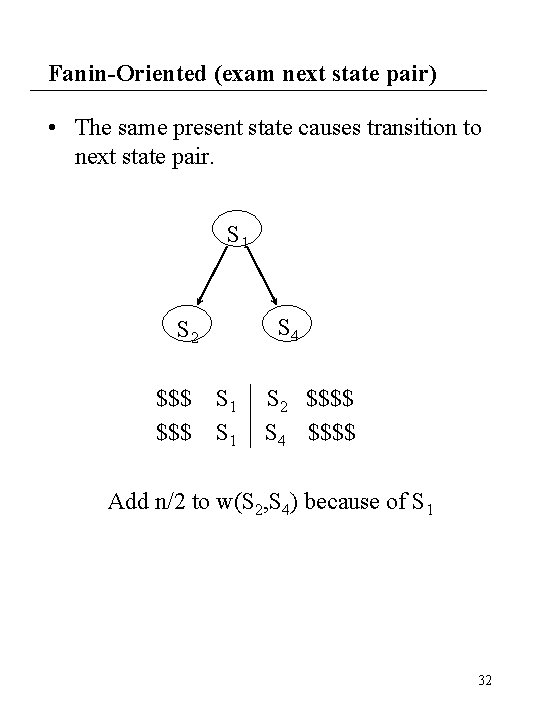

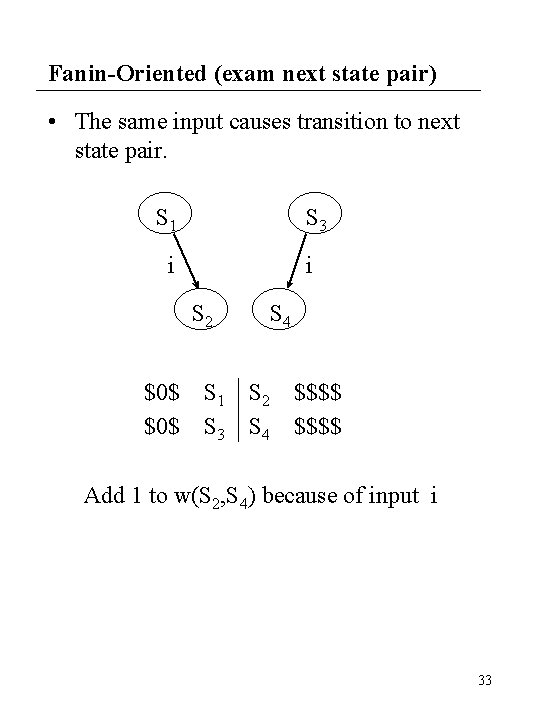

Example of Checking Algorithm

Constraint matrix A, state encoding S, and group-face matrix F:

- A = [0110001; 1001000; 0001001]
- S = [010; 110; 101; 011; ….]
- F = A · S = [1 * *; 0 * 0; 0 0 0]

Suppose 5 states have been assigned and S6 is to be assigned.

Step 1: Compute the group faces of the 5 assigned states, F = A · S.

Step 2: Check the encoding of state-6 = [011]. Since state-6 does not belong to group 1, 2, or 3, check:

- [0 1 1] ∩ [1 * *] = ∅
- [0 1 1] ∩ [0 * 0] = ∅
- [0 1 1] ∩ [0 0 0] = ∅

The encoding of state-6 satisfies the constraint.

![Other State Encoding](https://slidetodoc.com/presentation_image_h2/316165a5507e96395c86401e4cc248fe/image-22.jpg)

Other State Encoding

If the encoding of state-6 = [111], check:

- [1 1 1] ∩ [1 * *] = [1 1 1]
- [1 1 1] ∩ [0 * 0] = ∅
- [1 1 1] ∩ [0 0 0] = ∅

The first intersection is non-empty, so this encoding does not satisfy the constraint.
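The two checks for state-6 can be sketched in a few lines. The constraint matrix `A` and the group faces `F` are taken from the example; the function names and the 0-based state index are assumptions of this sketch. A candidate code is rejected when it lies on the face of a group the state does not belong to.

```python
from typing import List

A: List[str] = ["0110001", "1001000", "0001001"]  # one row per state group
F: List[str] = ["1**", "0*0", "000"]              # group faces of the assigned states


def in_face(code: str, face: str) -> bool:
    """True when `code` lies inside the cube `face` (non-empty intersection)."""
    return all(f == "*" or f == c for c, f in zip(code, face))


def satisfies(code: str, state_index: int, a: List[str], faces: List[str]) -> bool:
    """Check a candidate code for the state in column `state_index` (0-based)."""
    for row, face in zip(a, faces):
        is_member = row[state_index] == "1"
        if not is_member and in_face(code, face):
            return False  # the code intrudes on another group's face
    return True


STATE_6 = 5  # state-6 is the sixth column of A
print(satisfies("011", STATE_6, A, F))  # True
print(satisfies("111", STATE_6, A, F))  # False
```

The sketch only tests face intrusion; it does not check that the candidate code is still unused, which a full assignment procedure would also need to do.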

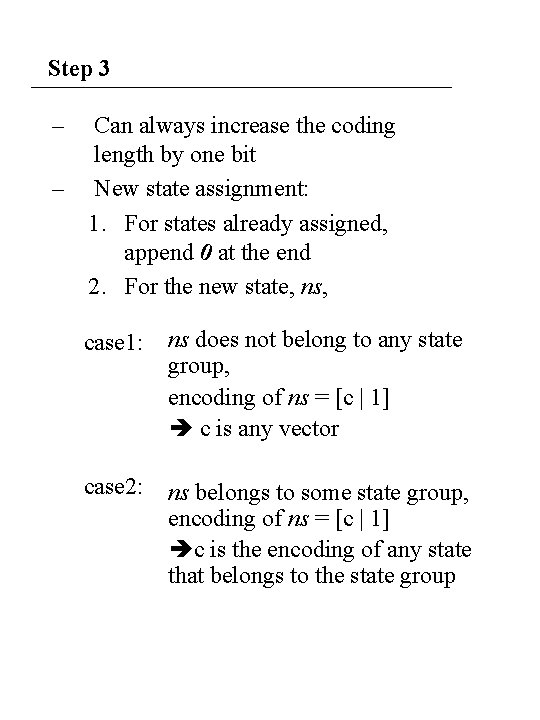

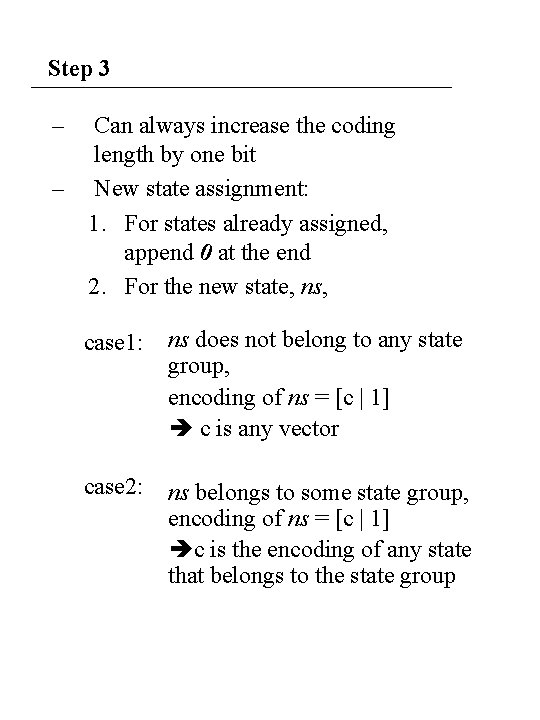

Step 3

- The coding length can always be increased by one bit.
- New state assignment:
  1. For states already assigned, append 0 at the end.
  2. For the new state, ns:
     - case 1: ns does not belong to any state group. Encoding of ns = [c | 1], where c is any vector.
     - case 2: ns belongs to some state group. Encoding of ns = [c | 1], where c is the encoding of any state that belongs to the state group.

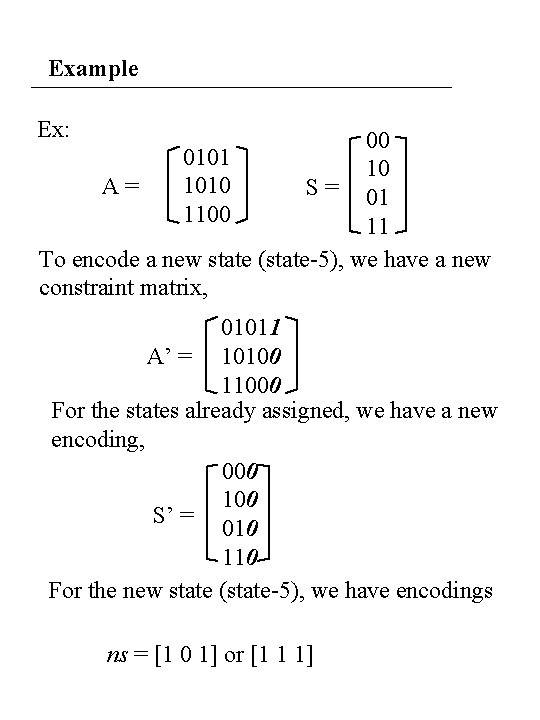

Example

Ex: A = [0101; 1010; 1100], S = [00; 10; 01; 11]

To encode a new state (state-5), we have a new constraint matrix A' = [01011; 10100; 11000].

For the states already assigned, we have a new encoding S' = [000; 100; 010; 110].

For the new state (state-5), which shares the first state group with state-2 (10) and state-4 (11), we have encodings ns = [1 0 1] or [1 1 1].
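The one-bit extension can be written out as a small sketch that reproduces this example. The name `extend_encoding` is hypothetical, and pooling the members of every group that contains the new state is an assumption of this sketch (the slides only say "any state that belongs to the state group").

```python
from itertools import product
from typing import List, Tuple

A_NEW: List[str] = ["01011", "10100", "11000"]  # constraint matrix including state-5
S: List[str] = ["00", "10", "01", "11"]         # codes of states 1..4


def extend_encoding(a_new: List[str], s: List[str]) -> Tuple[List[str], List[str]]:
    """Add one code bit; return (extended old codes, candidate codes for the new state)."""
    new_col = len(s)      # 0-based column of the new state in a_new
    width = len(s[0])

    # Rule 1: states already assigned get a 0 appended.
    s_ext = [code + "0" for code in s]

    # Already-encoded states that share a state group with the new state.
    members = {
        j
        for row in a_new
        if row[new_col] == "1"
        for j in range(len(s))
        if row[j] == "1"
    }

    if members:
        # Case 2: c is the code of any state in the same group.
        prefixes = sorted({s[j] for j in members})
    else:
        # Case 1: c is any vector of the old width.
        prefixes = ["".join(bits) for bits in product("01", repeat=width)]

    return s_ext, [c + "1" for c in prefixes]


s_ext, candidates = extend_encoding(A_NEW, S)
print(s_ext)       # ['000', '100', '010', '110']
print(candidates)  # ['101', '111']
```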

Hyper-cube Embedding

- Advantages:
  - uses a two-level logic minimizer to identify good state groups
  - almost all of the advantage of one-hot encoding, but with fewer state bits

Adjacency Based State Assignment

Adjacency-Based State Assignment

- Mustang: a weight assignment technique based on loosely maximizing common cube factors

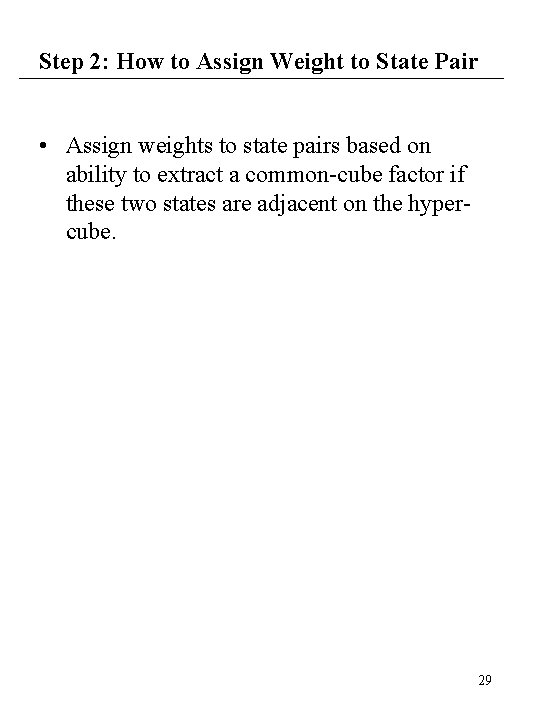

Adjacency-Based State Assignment

Basic algorithm:

1. Assign a weight w(s, t) to each pair of states.
   - The weight reflects the desire to place the states adjacent on the hypercube.
2. Define a cost function for the assignment of codes to the states.
   - Penalize weights by the distance between the state codes, e.g. w(s, t) * distance(enc(s), enc(t)).
3. Find an assignment of codes that minimizes this cost function summed over all pairs of states.
   - heuristic to find an initial solution
   - pair-wise interchange (simulated annealing) to improve the solution
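A minimal sketch of the cost function in step 2, assuming that "distance" means Hamming distance between codes (the natural distance on a hypercube, though the slides do not name it). The weights and codes below are made-up illustration values, not data from the slides.

```python
from typing import Dict, Tuple


def hamming(a: str, b: str) -> int:
    """Number of bit positions in which two equal-length codes differ."""
    return sum(x != y for x, y in zip(a, b))


def cost(weights: Dict[Tuple[str, str], float], enc: Dict[str, str]) -> float:
    """Sum of w(s, t) * distance(enc(s), enc(t)) over all weighted pairs."""
    return sum(w * hamming(enc[s], enc[t]) for (s, t), w in weights.items())


# Hypothetical weights and a hypothetical 2-bit assignment.
weights = {("S1", "S2"): 3, ("S1", "S3"): 1, ("S2", "S3"): 2}
enc = {"S1": "00", "S2": "01", "S3": "11"}
print(cost(weights, enc))  # 3*1 + 1*2 + 2*1 = 7
```

Heavily weighted pairs placed on adjacent codes contribute only their weight once, so a lower total means the assignment follows the weights more closely.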

Step 2: How to Assign Weight to State Pair

- Assign weights to state pairs based on the ability to extract a common-cube factor if the two states are adjacent on the hypercube.

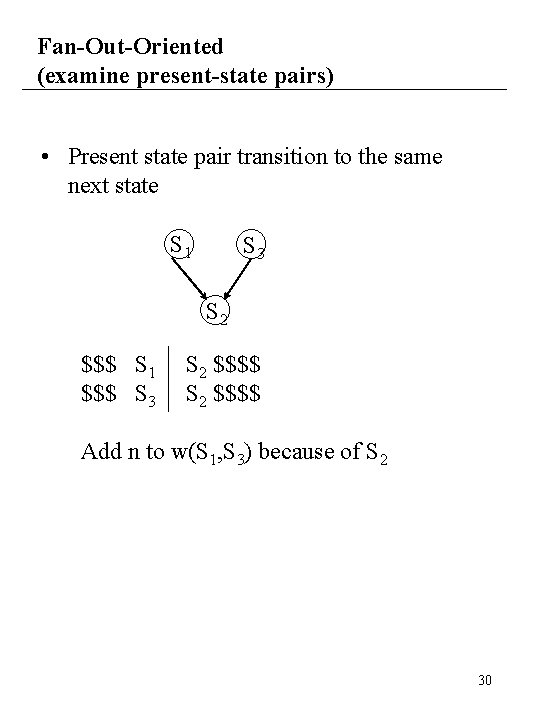

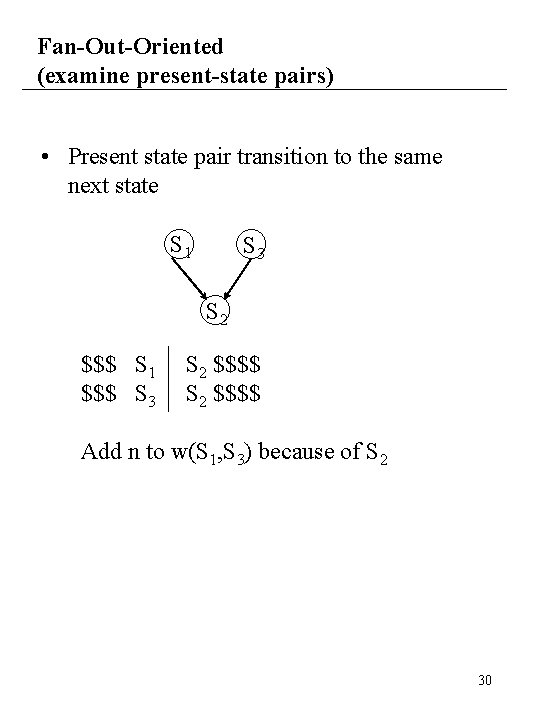

Fan-Out-Oriented (examine present-state pairs)

- The present-state pair transitions to the same next state: if S1 and S3 both transition to S2, add n to w(S1, S3) because of S2.

[Figure: S1 and S3 each with an edge into S2, alongside the two corresponding state-table rows.]

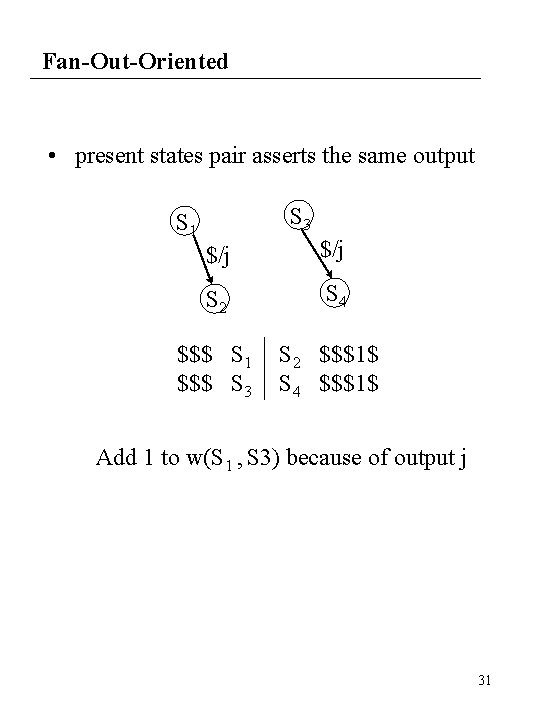

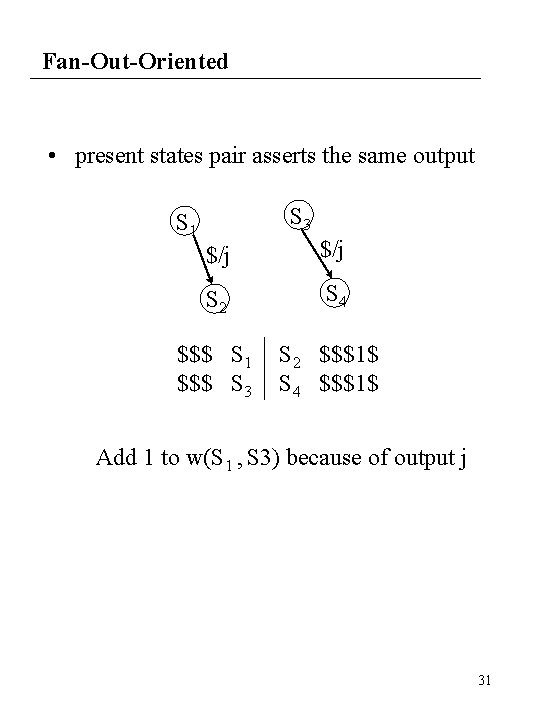

Fan-Out-Oriented

- The present-state pair asserts the same output: if S1 and S3 both assert output j, add 1 to w(S1, S3) because of output j.

[Figure: transitions S1 to S2 and S3 to S4, both asserting output j, alongside the two corresponding state-table rows.]

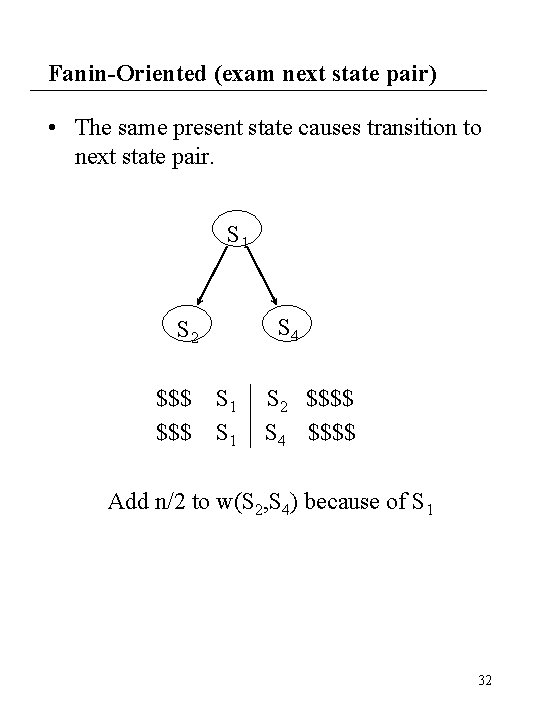

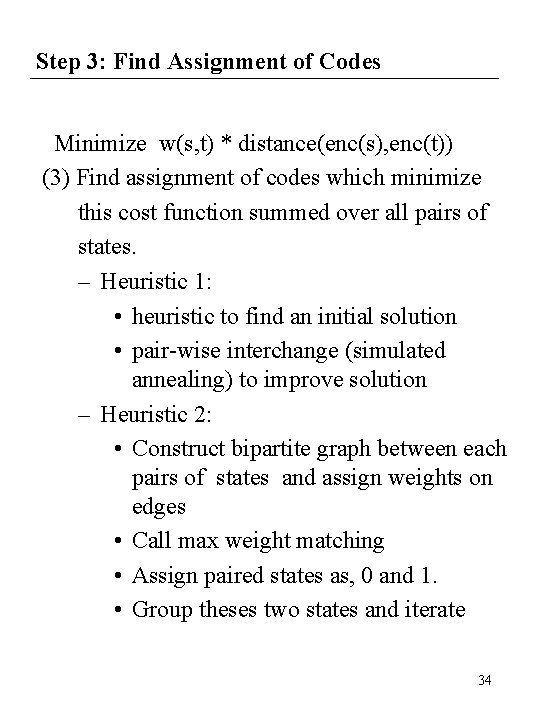

Fanin-Oriented (examine next-state pairs)

- The same present state causes transitions to the next-state pair: if S1 transitions to both S2 and S4, add n/2 to w(S2, S4) because of S1.

[Figure: S1 with edges to S2 and S4, alongside the two corresponding state-table rows.]

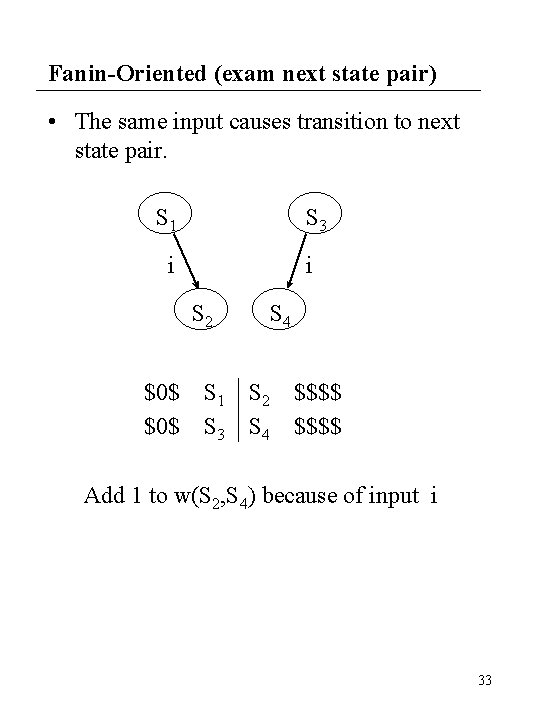

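Fanin-Oriented (examine next-state pairs)

- The same input causes transitions to the next-state pair: if input i takes S1 to S2 and S3 to S4, add 1 to w(S2, S4) because of input i.

[Figure: transitions S1 to S2 and S3 to S4, both under input i, alongside the two corresponding state-table rows.]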
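The four weighting rules can be sketched as follows. This is a loose reading of the slides rather than Mustang's actual implementation: `n` is passed in as a parameter because the slides do not define it, each shared next state, output bit, present state, or input value is counted once per pair, and the transition rows are hypothetical.

```python
from collections import defaultdict
from itertools import combinations
from typing import Dict, List, Tuple

# Hypothetical rows: (input, present state, next state, output bits).
Row = Tuple[str, str, str, str]


def fanout_weights(rows: List[Row], n: float) -> Dict[Tuple[str, str], float]:
    """Weights for present-state pairs (shared next states, shared asserted outputs)."""
    nexts, outs = defaultdict(set), defaultdict(set)
    for _inp, ps, ns, out in rows:
        nexts[ps].add(ns)
        outs[ps].update(j for j, bit in enumerate(out) if bit == "1")
    w: Dict[Tuple[str, str], float] = {}
    for s, t in combinations(sorted(nexts), 2):
        # n per common next state, 1 per output bit asserted by both states
        w[(s, t)] = n * len(nexts[s] & nexts[t]) + len(outs[s] & outs[t])
    return w


def fanin_weights(rows: List[Row], n: float) -> Dict[Tuple[str, str], float]:
    """Weights for next-state pairs (shared present states, shared inputs)."""
    preds, ins = defaultdict(set), defaultdict(set)
    for inp, ps, ns, _out in rows:
        preds[ns].add(ps)
        ins[ns].add(inp)
    w: Dict[Tuple[str, str], float] = {}
    for s, t in combinations(sorted(preds), 2):
        # n/2 per common present state, 1 per input value reaching both states
        w[(s, t)] = (n / 2) * len(preds[s] & preds[t]) + len(ins[s] & ins[t])
    return w


rows = [
    ("0", "S1", "S2", "0"),
    ("1", "S1", "S4", "0"),
    ("0", "S3", "S2", "1"),
    ("1", "S3", "S4", "1"),
]
print(fanout_weights(rows, n=2))  # S1 and S3 share next states S2 and S4
print(fanin_weights(rows, n=2))   # S2 and S4 share present states S1 and S3
```

The two dictionaries could be summed per pair or used separately, depending on whether the fan-out or fan-in view is preferred; the slides do not say which.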

Step 3: Find Assignment of Codes

Minimize w(s, t) * distance(enc(s), enc(t)): find an assignment of codes that minimizes this cost function summed over all pairs of states.

- Heuristic 1:
  - use a heuristic to find an initial solution
  - use pair-wise interchange (simulated annealing) to improve the solution
- Heuristic 2:
  - Construct a bipartite graph between each pair of states and assign weights to the edges.
  - Run maximum-weight matching.
  - Assign 0 and 1 to the two states of each matched pair.
  - Group these two states and iterate.
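The pair-wise interchange of Heuristic 1 can be sketched as a greedy loop. This is a simplification: it accepts only improving swaps, whereas simulated annealing would also accept some worsening ones, and it only permutes codes already in use. Hamming distance, the function names, and the example weights are assumptions of the sketch.

```python
from itertools import combinations
from typing import Dict, Tuple

Weights = Dict[Tuple[str, str], float]


def hamming(a: str, b: str) -> int:
    return sum(x != y for x, y in zip(a, b))


def cost(weights: Weights, enc: Dict[str, str]) -> float:
    return sum(w * hamming(enc[s], enc[t]) for (s, t), w in weights.items())


def improve(weights: Weights, enc: Dict[str, str]) -> Tuple[Dict[str, str], float]:
    """Swap the codes of two states whenever the swap lowers the total cost."""
    enc = dict(enc)  # work on a copy
    best = cost(weights, enc)
    improved = True
    while improved:
        improved = False
        for s, t in combinations(sorted(enc), 2):
            enc[s], enc[t] = enc[t], enc[s]      # try the interchange
            trial = cost(weights, enc)
            if trial < best:
                best, improved = trial, True     # keep it
            else:
                enc[s], enc[t] = enc[t], enc[s]  # undo it
    return enc, best


# Hypothetical weights and initial assignment.
weights = {("A", "B"): 5, ("A", "C"): 1, ("B", "C"): 1, ("C", "D"): 4}
start = {"A": "00", "B": "11", "C": "01", "D": "10"}
final, final_cost = improve(weights, start)
print(cost(weights, start), final_cost, final)
```

Because every accepted swap strictly lowers the cost, the loop terminates, but it can stop in a local minimum; that is the limitation the annealing variant addresses.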

Which Method Is Better?

- FSMs with no useful two-level face constraints => adjacency embedding
- FSMs with many two-level face constraints => face embedding