Syntax Analysis: Introduction to Parsers, Context-Free Grammars, Push-Down Automata


- Slides: 110

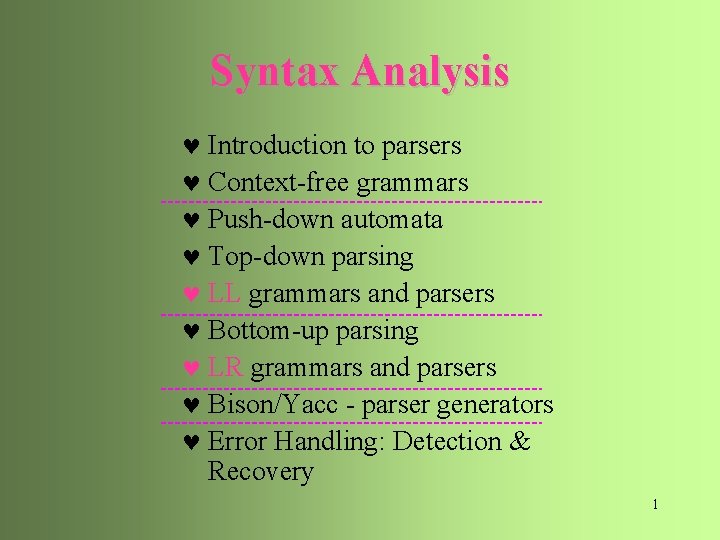

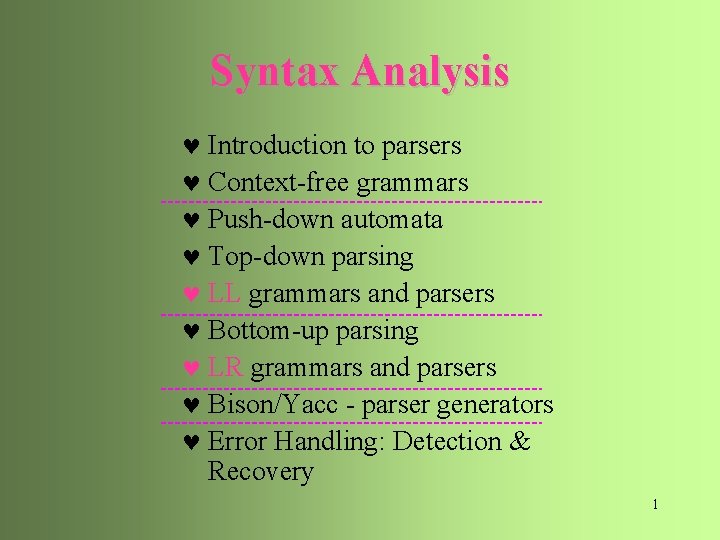

Syntax Analysis

- Introduction to parsers
- Context-free grammars
- Push-down automata
- Top-down parsing
- LL grammars and parsers
- Bottom-up parsing
- LR grammars and parsers
- Bison/Yacc: parser generators
- Error handling: detection and recovery

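Introduction to parsers (diagram): source code → Lexical Analyzer → token → Parser (driven by a CFG) → syntax tree → Semantic Analyzer. The parser asks the lexical analyzer for the next token, and the phases share the Symbol Table.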

Context-Free Grammar

- CFG and terminology
- Rewrite vs. reduce
- Derivation
- Language and CFL
- Equivalence and CNF
- Parsing vs. derivation
- Leftmost/rightmost derivation and parse tree
- Ambiguity and resolution
- Expressive power

Derivation is the reverse of parsing. If we know how sentences are derived, we may find a parsing method by working in the reverse direction.

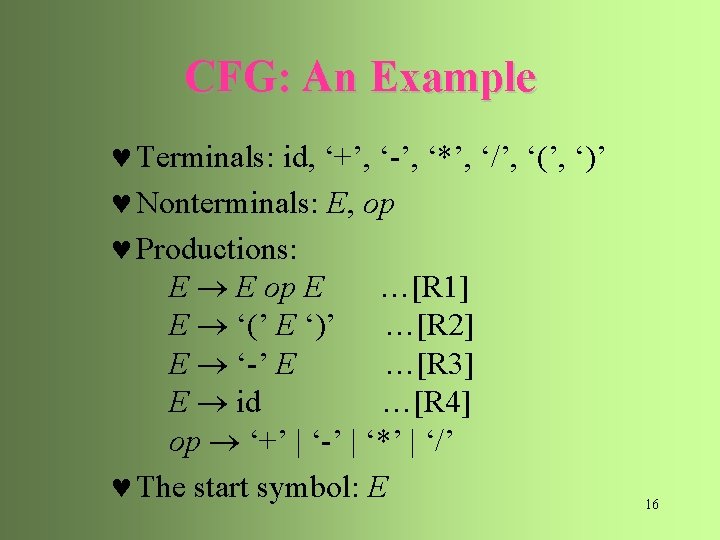

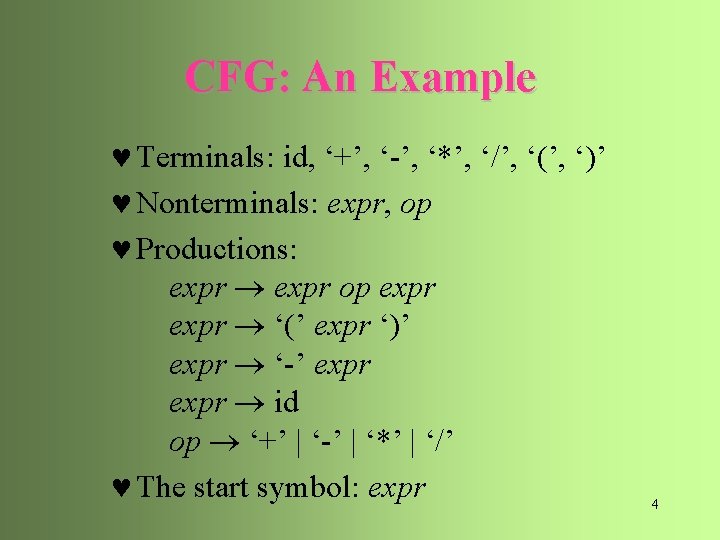

CFG: An Example

- Terminals: id, '+', '-', '*', '/', '(', ')'
- Nonterminals: expr, op
- Productions:
  - expr → expr op expr
  - expr → '(' expr ')'
  - expr → '-' expr
  - expr → id
  - op → '+' | '-' | '*' | '/'
- The start symbol: expr

![Notational Conventions in CFG a b c 0 9 id symbols in Notational Conventions in CFG • a, b, c, … [+-0 -9], id: symbols in](https://slidetodoc.com/presentation_image/13e68b1758d58bb45a134b8ebdf49f65/image-5.jpg)

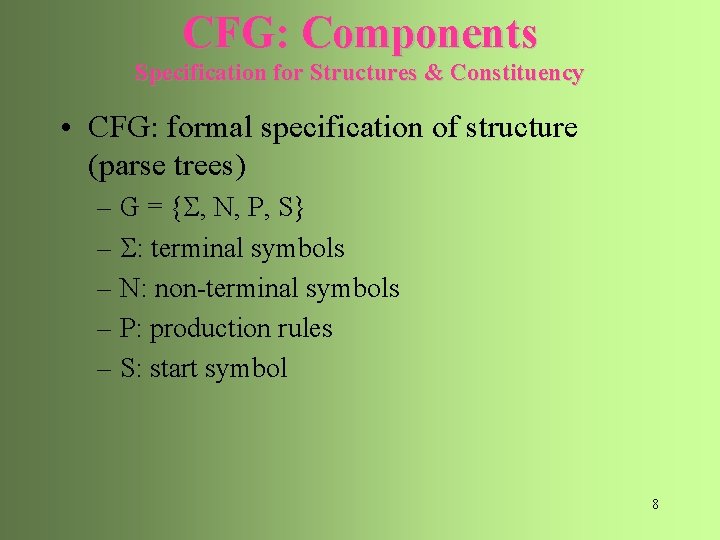

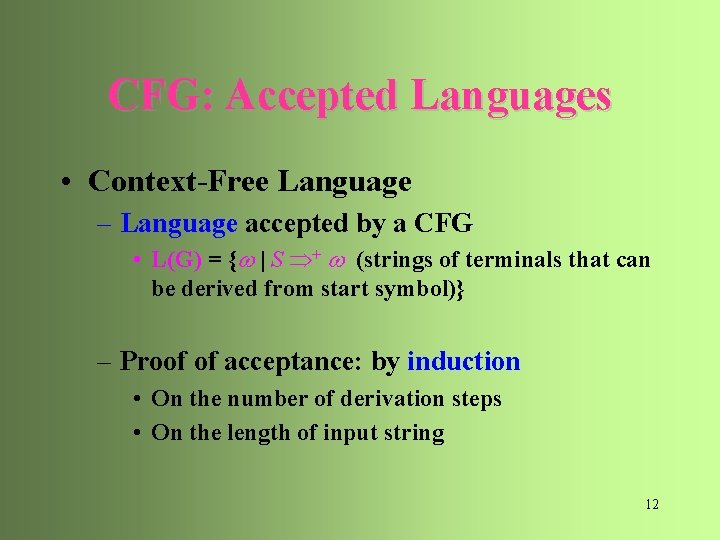

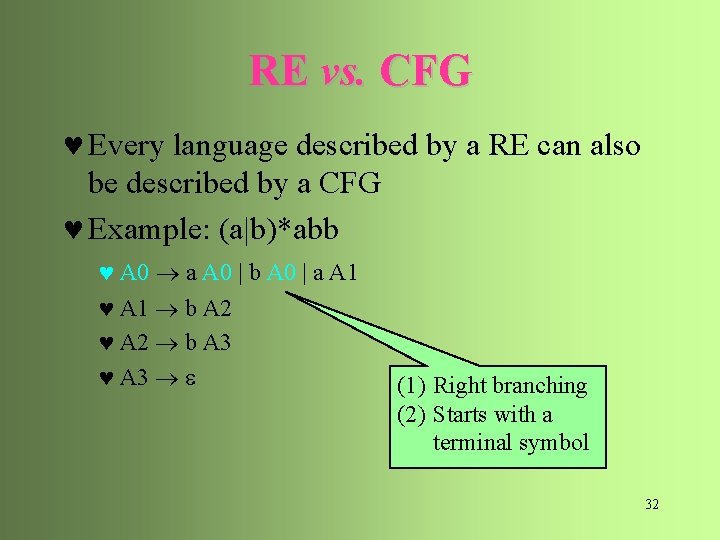

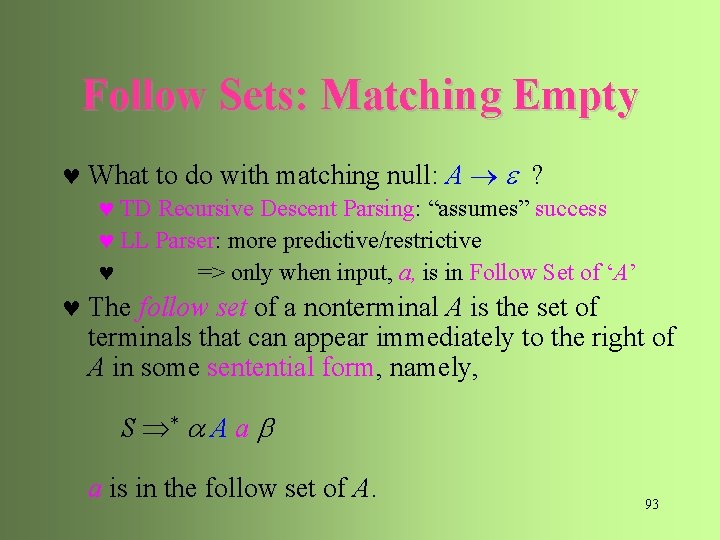

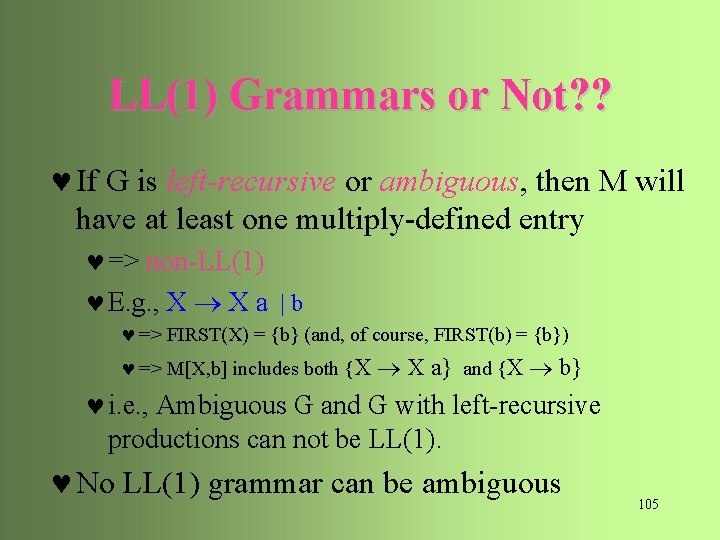

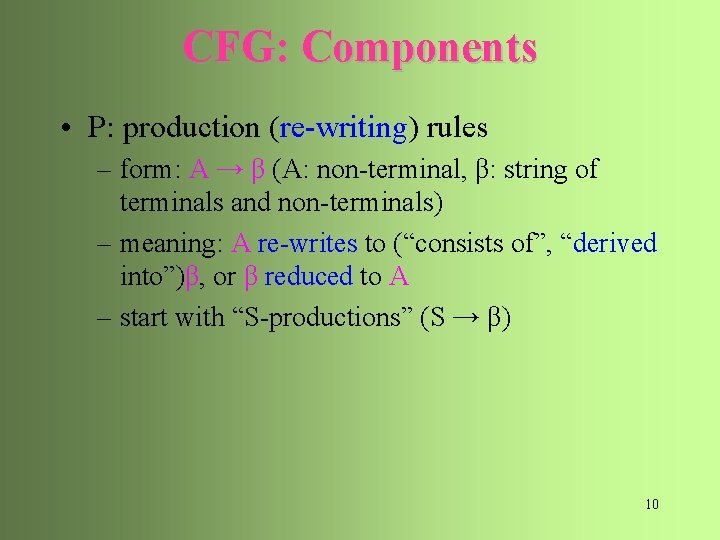

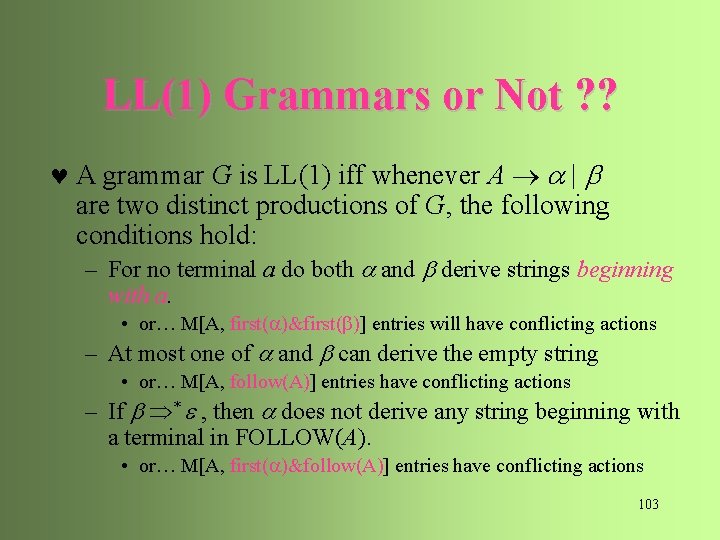

Notational Conventions in CFG

- a, b, c, …, [+-0-9], id: symbols in Σ (terminals)
- A, B, C, …, S, expr, stmt: symbols in N (nonterminals)
- U, V, W, …, X, Y, Z: grammar symbols in (Σ ∪ N)
- α, β, γ, … denote strings in (Σ ∪ N)*
- u, v, w, … denote strings in Σ*
- A → α | β is an abbreviation of A → α and A → β
- Alternatives: α, β, … at the RHS

Context-Free Grammars

- A set of terminals: basic symbols from which sentences are formed
- A set of nonterminals: syntactic variables denoting sets of strings
- A set of productions: rules specifying how the terminals and nonterminals can be combined to form sentences
- The start symbol: a distinguished nonterminal denoting the language

CFG: Components (Specification for Structures & Constituency)

- CFG: formal specification of structure (parse trees)
  - G = {Σ, N, P, S}
  - Σ: terminal symbols
  - N: non-terminal symbols
  - P: production rules
  - S: start symbol

CFG: Components

- Σ: terminal symbols
  - the input symbols of the language
    - programming languages: tokens (reserved words, variables, operators, …)
    - natural languages: words or parts of speech
  - pre-terminal: parts of speech (when words are regarded as terminals)
- N: non-terminal symbols
  - groups of terminals and/or other non-terminals
- S: start symbol, the largest constituent of a parse tree

CFG: Components

- P: production (re-writing) rules
  - form: A → β (A: non-terminal, β: string of terminals and non-terminals)
  - meaning: A re-writes to ("consists of", "derives") β, or β is reduced to A
  - start with the "S-productions" (S → β)

Derivations

- A derivation step is an application of a production as a rewriting rule: E ⇒ -E
- A sequence of derivation steps E ⇒ -E ⇒ -(E) ⇒ -(id) is called a derivation of "-(id)" from E
- The symbol ⇒* denotes "derives in zero or more steps"; the symbol ⇒+ denotes "derives in one or more steps"

CFG: Accepted Languages

- Context-free language: the language accepted by a CFG
  - L(G) = { w | S ⇒+ w }, the strings of terminals w that can be derived from the start symbol
- Proof of acceptance: by induction
  - on the number of derivation steps
  - on the length of the input string

Context-Free Languages

- A context-free language L(G) is the language defined by a context-free grammar G
- A string of terminals w is in L(G) if and only if S ⇒+ w; w is called a sentence of G
- If S ⇒* α, where α may contain nonterminals, then we call α a sentential form of G, e.g., E ⇒ -E ⇒ -(E) ⇒ -(id)
- G1 is equivalent to G2 if L(G1) = L(G2)

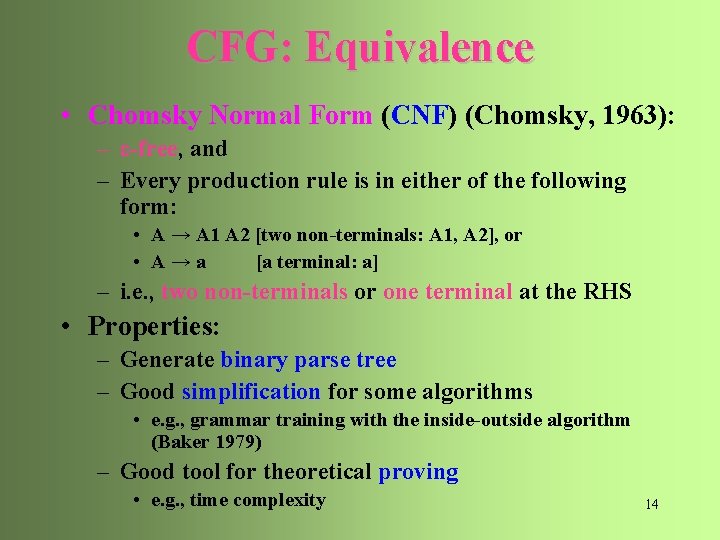

CFG: Equivalence

- Chomsky Normal Form (CNF) (Chomsky, 1963):
  - ε-free, and
  - every production rule has one of the following forms:
    - A → A1 A2 [two non-terminals: A1, A2], or
    - A → a [a terminal: a]
  - i.e., two non-terminals or one terminal at the RHS
- Properties:
  - Generates binary parse trees
  - A good simplification for some algorithms
    - e.g., grammar training with the inside-outside algorithm (Baker 1979)
  - A good tool for theoretical proofs
    - e.g., time complexity

CFG: Equivalence

- Every CFG can be converted into a weakly equivalent CNF grammar
  - equivalence: L(G1) = L(G2)
    - strong equivalence: the grammars assign the same phrase structure to each sentence (except for renaming of non-terminals)
    - weak equivalence: the grammars generate the same language but need not assign the same phrase structure to each sentence
  - e.g., A → B C D is weakly equivalent to {A → B X, X → C D}

CFG: An Example

- Terminals: id, '+', '-', '*', '/', '(', ')'
- Nonterminals: E, op
- Productions:
  - E → E op E … [R1]
  - E → '(' E ')' … [R2]
  - E → '-' E … [R3]
  - E → id … [R4]
  - op → '+' | '-' | '*' | '/'
- The start symbol: E

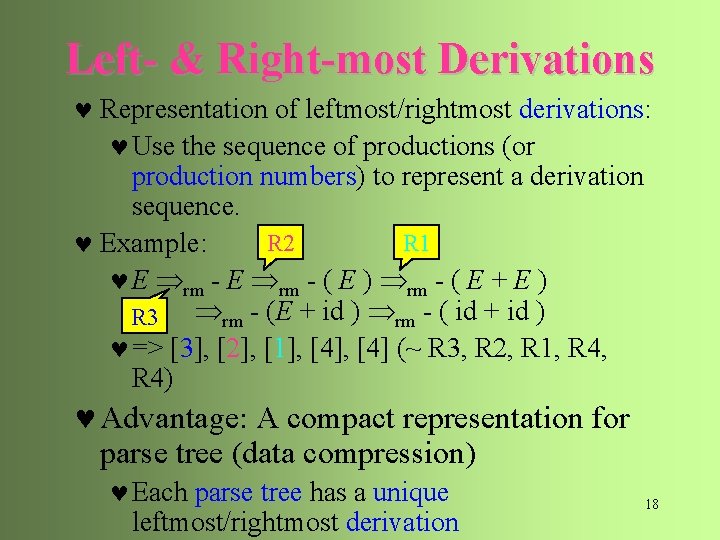

Left- & Right-most Derivations

- Each derivation step needs to choose
  - a nonterminal to rewrite
  - an alternative to apply
- A leftmost derivation always chooses the leftmost nonterminal to rewrite:
  E ⇒lm -E ⇒lm -(E) ⇒lm -(E + E) ⇒lm -(id + E) ⇒lm -(id + id)
- A rightmost (canonical) derivation always chooses the rightmost nonterminal to rewrite:
  E ⇒rm -E ⇒rm -(E) ⇒rm -(E + E) ⇒rm -(E + id) ⇒rm -(id + id)

Left- & Right-most Derivations

- Representation of leftmost/rightmost derivations: use the sequence of productions (or production numbers) to represent a derivation sequence
- Example: E ⇒rm -E [R3] ⇒rm -(E) [R2] ⇒rm -(E + E) [R1] ⇒rm -(E + id) [R4] ⇒rm -(id + id) [R4]
  - => [3], [2], [1], [4], [4] (~ R3, R2, R1, R4, R4), reading E → E + E as R1 with op → '+' already applied
- Advantage: a compact representation of the parse tree (data compression)
- Each parse tree has a unique leftmost derivation and a unique rightmost derivation
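The production-number representation can be replayed mechanically. The following is a minimal sketch, assuming the simplified rule R1: E → E + E (the `op` nonterminal is folded away); the names `RULES` and `replay` are illustrative and not part of the slides.

```python
# Productions numbered as in the example; R1 is simplified to E -> E + E (assumption).
RULES = {
    1: ("E", ["E", "+", "E"]),
    2: ("E", ["(", "E", ")"]),
    3: ("E", ["-", "E"]),
    4: ("E", ["id"]),
}
NONTERMINALS = {"E"}


def replay(start, rule_numbers, leftmost=True):
    """Return the sentential forms obtained by applying the numbered rules in order."""
    form = [start]
    forms = [form]
    for number in rule_numbers:
        lhs, rhs = RULES[number]
        positions = [i for i, sym in enumerate(form) if sym in NONTERMINALS]
        if not positions:
            raise ValueError("no nonterminal left to rewrite")
        # Leftmost derivations rewrite the first nonterminal, rightmost the last.
        i = positions[0] if leftmost else positions[-1]
        if form[i] != lhs:
            raise ValueError(f"rule {number} does not apply to {form[i]!r}")
        form = form[:i] + rhs + form[i + 1:]
        forms.append(form)
    return forms


rightmost = replay("E", [3, 2, 1, 4, 4], leftmost=False)
leftmost = replay("E", [3, 2, 1, 4, 4], leftmost=True)
for form in rightmost:
    print(" ".join(form))
```

Here the same number sequence happens to serve for both directions; the two derivations differ only in which `E` is rewritten by the first R4, and both end in the same sentence.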

Parse Trees

- A parse tree is a graphical representation of a derivation that filters out the order in which nonterminals are chosen for rewriting
- Figure: parse tree of the noun phrase "girl in the park", with nodes NP, NP, PP, NP

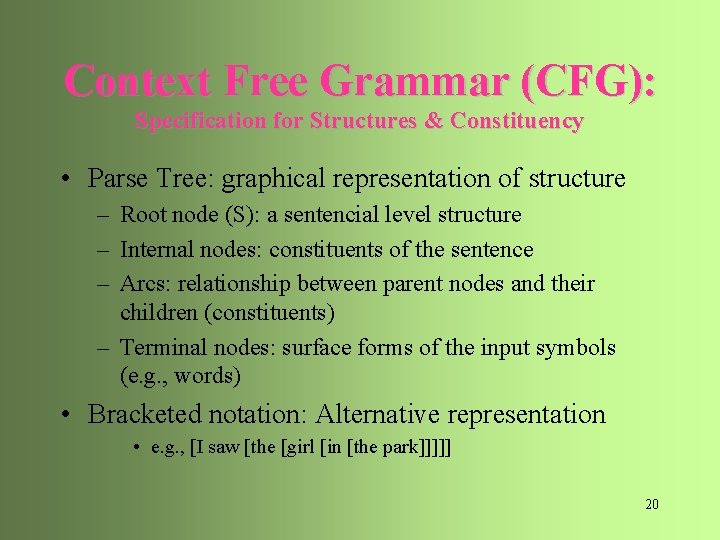

Context Free Grammar (CFG): Specification for Structures & Constituency

- Parse tree: graphical representation of structure
  - Root node (S): a sentence-level structure
  - Internal nodes: constituents of the sentence
  - Arcs: relationships between parent nodes and their children (constituents)
  - Terminal nodes: surface forms of the input symbols (e.g., words)
- Bracketed notation: an alternative representation
  - e.g., [I saw [the [girl [in [the park]]]]]

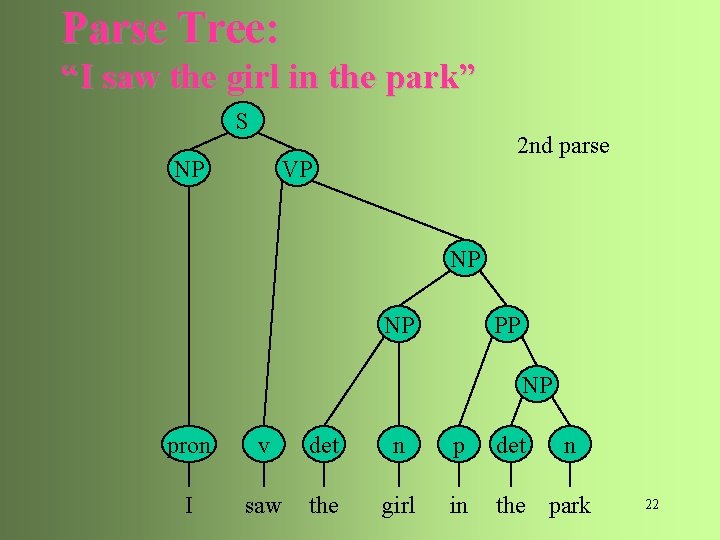

Parse Tree: "I saw the girl in the park" (1st parse). Figure: a tree with nodes S, NP, VP, NP, PP, NP over the tagged words I/pron saw/v the/det girl/n in/p the/det park/n.

Parse Tree: "I saw the girl in the park" (2nd parse). Figure: a tree with nodes S, NP, VP, NP, NP, PP, NP over the tagged words I/pron saw/v the/det girl/n in/p the/det park/n.

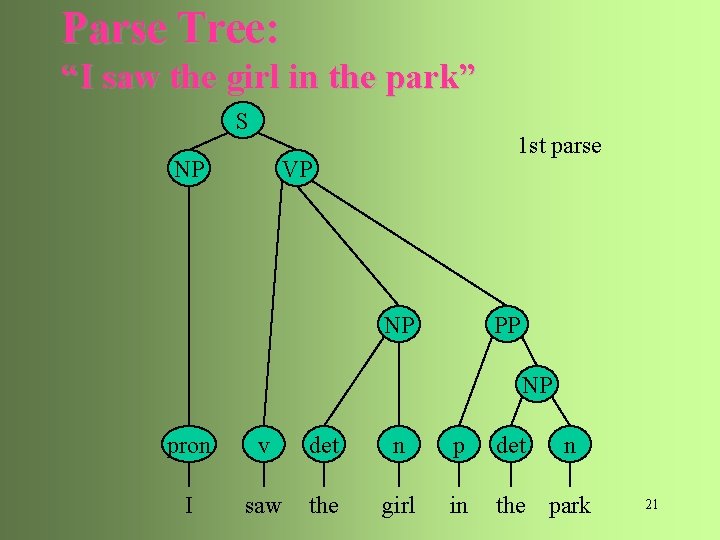

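LM & RM: An Example

- Leftmost: E ⇒lm -E ⇒lm -(E) ⇒lm -(E + E) ⇒lm -(id + E) ⇒lm -(id + id)
- Rightmost: E ⇒rm -E ⇒rm -(E) ⇒rm -(E + E) ⇒rm -(E + id) ⇒rm -(id + id)
- Figure: the single parse tree shared by both derivations, in bracketed notation [E - [E ( [E [E id] + [E id]] )]]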

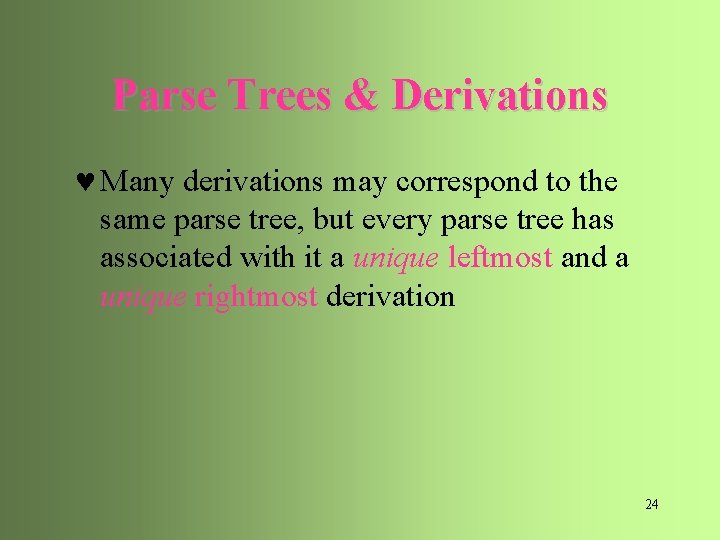

Parse Trees & Derivations

- Many derivations may correspond to the same parse tree, but every parse tree has associated with it a unique leftmost derivation and a unique rightmost derivation

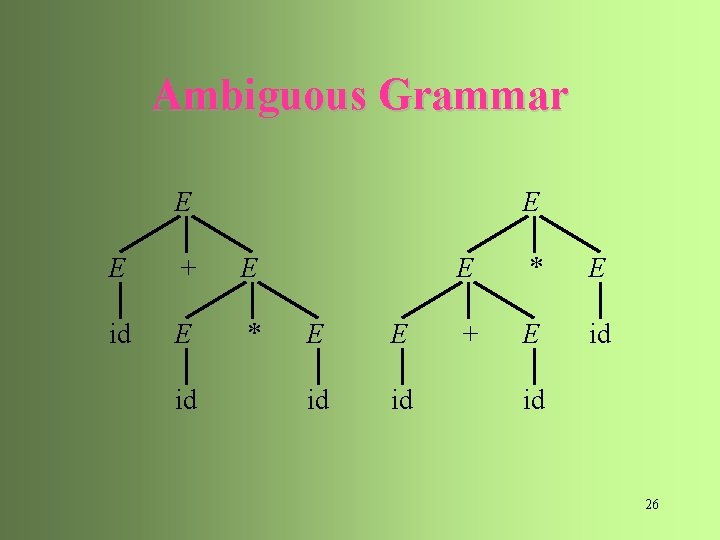

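Ambiguous Grammar

- A grammar is ambiguous if it produces more than one parse tree for some sentence
- Equivalently, some sentence has more than one leftmost (or more than one rightmost) derivation
- Two leftmost derivations of id + id * id:
  - E ⇒ E + E ⇒ id + E ⇒ id + E * E ⇒ id + id * E ⇒ id + id * id
  - E ⇒ E * E ⇒ E + E * E ⇒ id + E * E ⇒ id + id * E ⇒ id + id * id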

Ambiguous Grammar (figure): the two parse trees for id + id * id, in bracketed notation:

- [E [E id] + [E [E id] * [E id]]]
- [E [E [E id] + [E id]] * [E id]]
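One way to see the ambiguity concretely is to count parse trees. The sketch below assumes the reduced grammar E → E + E | E * E | id (parentheses and unary minus are left out), and `count_parse_trees` is an illustrative name rather than anything defined in the slides.

```python
from functools import lru_cache


def count_parse_trees(tokens):
    """Count the parse trees of `tokens` under E -> E + E | E * E | id."""
    tokens = tuple(tokens)

    @lru_cache(maxsize=None)
    def count(i, j):
        # Number of ways E can derive tokens[i:j].
        if j - i == 1:
            return 1 if tokens[i] == "id" else 0
        total = 0
        # Try every operator position k as the root of the subtree.
        for k in range(i + 1, j - 1):
            if tokens[k] in ("+", "*"):
                total += count(i, k) * count(k + 1, j)
        return total

    return count(0, len(tokens)) if tokens else 0


print(count_parse_trees("id + id * id".split()))
```

A count above 1 for any sentence is enough to show that the grammar is ambiguous; a count of 1 for one sentence proves nothing about the grammar as a whole.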

Resolving Ambiguity

- Use disambiguating rules to throw away undesirable parse trees
- Rewrite grammars by incorporating the disambiguating rules, yielding unambiguous grammars

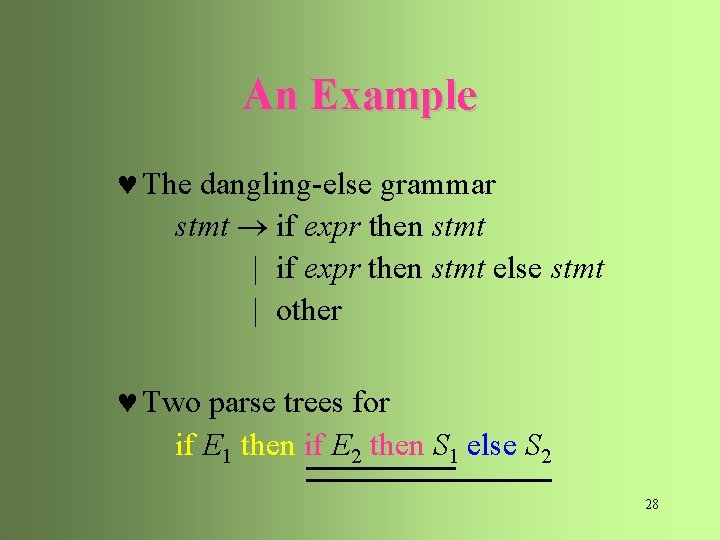

An Example

- The dangling-else grammar:
  - stmt → if expr then stmt | if expr then stmt else stmt | other
- Two parse trees for: if E1 then if E2 then S1 else S2

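An Example (figure): the two parse trees for if E1 then if E2 then S1 else S2. In the preferred parse the else is attached to the closest then, i.e., if E1 then (if E2 then S1 else S2); the other parse attaches it to the outer if, i.e., if E1 then (if E2 then S1) else S2.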

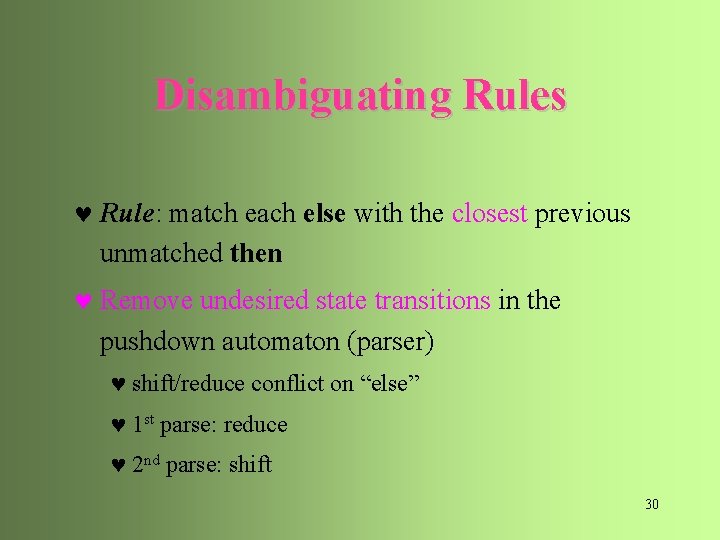

Disambiguating Rules

- Rule: match each else with the closest previous unmatched then
- Remove undesired state transitions in the pushdown automaton (parser)
  - shift/reduce conflict on "else"
  - 1st parse: reduce
  - 2nd parse: shift

Grammar Rewriting

- stmt → m_stmt | unm_stmt
- m_stmt → if expr then m_stmt else m_stmt | other
  - m_stmt contains only paired then-else, so it cannot have an unmatched then
- unm_stmt → if expr then stmt | if expr then m_stmt else unm_stmt
  - the statement between then and else must be an m_stmt, because we want this then-else pair matched

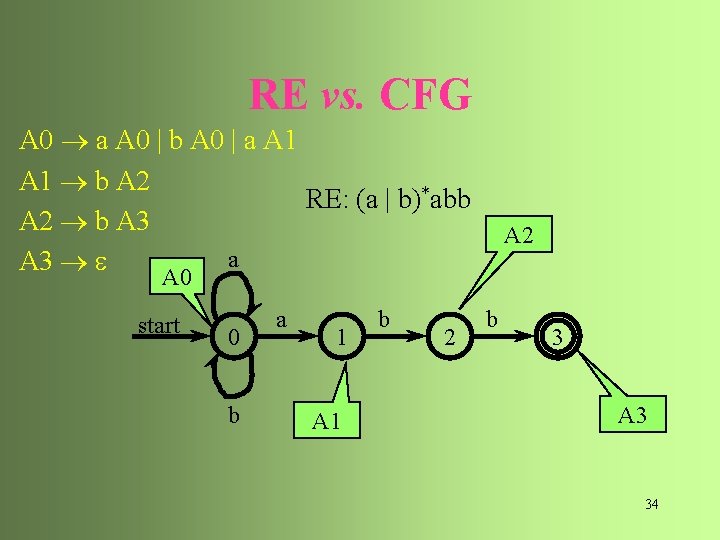

RE vs. CFG

- Every language described by an RE can also be described by a CFG
- Example: (a|b)*abb
  - A0 → a A0 | b A0 | a A1
  - A1 → b A2
  - A2 → b A3
  - A3 → ε
- Properties of this grammar: (1) right branching, (2) each non-empty right-hand side starts with a terminal symbol

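RE vs. CFG (figure): the right-branching derivation tree that the regular grammar for (a|b)*abb produces, A0 repeatedly expanding to a or b followed by A0, then a A1, b A2, b A3. Regular grammar: right branching; each right-hand side starts with a terminal symbol.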

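RE vs. CFG

- RE: (a | b)*abb
- Grammar: A0 → a A0 | b A0 | a A1, A1 → b A2, A2 → b A3, A3 → ε
- Figure: the NFA for the RE, with states 0 to 3 labelled A0 to A3: start → 0; state 0 loops on a and b; 0 → 1 on a; 1 → 2 on b; 2 → 3 on b; state 3 is final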

RE vs. CFG: a DFA for (a | b)*abb

- A0 → b A0 | a A1
- A1 → a A1 | b A2
- A2 → a A1 | b A3
- A3 → a A1 | b A0 | ε
- Figure: the DFA with states 0 to 3 labelled A0 to A3; start → 0; state 3 is final

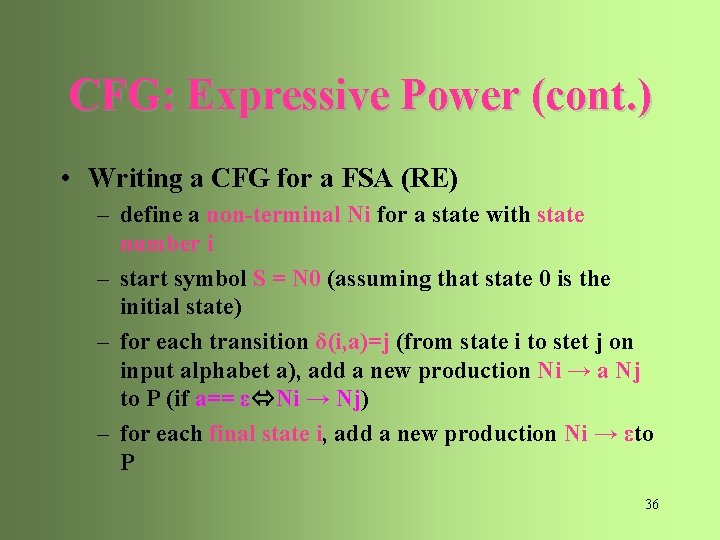

CFG: Expressive Power (cont.)

- Writing a CFG for an FSA (RE)
  - define a non-terminal Ni for the state with state number i
  - start symbol S = N0 (assuming that state 0 is the initial state)
  - for each transition δ(i, a) = j (from state i to state j on input symbol a), add a new production Ni → a Nj to P (if a == ε, add Ni → Nj)
  - for each final state i, add a new production Ni → ε to P
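The construction above can be sketched in a few lines. This is an illustration only: the transition table encodes the DFA for (a|b)*abb used earlier in the slides, ε-transitions are assumed absent, and `dfa_to_grammar` and `generates` are hypothetical helper names.

```python
def dfa_to_grammar(transitions, final_states):
    """Build productions Ni -> a Nj per transition and Ni -> epsilon per final state."""
    grammar = {}
    for (state, symbol), target in transitions.items():
        grammar.setdefault(f"N{state}", []).append((symbol, f"N{target}"))
    for state in final_states:
        grammar.setdefault(f"N{state}", []).append(())  # () stands for epsilon
    return grammar


def generates(grammar, start, text):
    """Check whether the right-linear grammar derives `text` (no unit productions)."""
    current = {start}
    for ch in text:
        current = {
            rhs[1]
            for nt in current
            for rhs in grammar.get(nt, [])
            if rhs and rhs[0] == ch
        }
    return any(() in grammar.get(nt, []) for nt in current)


# DFA for (a|b)*abb: state 0 is initial, state 3 is final.
DFA = {
    (0, "a"): 1, (0, "b"): 0,
    (1, "a"): 1, (1, "b"): 2,
    (2, "a"): 1, (2, "b"): 3,
    (3, "a"): 1, (3, "b"): 0,
}
GRAMMAR = dfa_to_grammar(DFA, final_states=[3])
for nonterminal, alternatives in GRAMMAR.items():
    print(nonterminal, "->", alternatives)
```

Each derivation step of the resulting grammar mirrors one DFA transition, which is why the grammar is right branching.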

CFG: Expressive Power

- CFG vs. regular expression (RE)
  - Every RE can be recognized by an FSA
  - Every FSA can be represented by a CFG with production rules of the form A → a B | ε (known as a "regular grammar")
- Therefore, L(RE) ⊂ L(CFG)

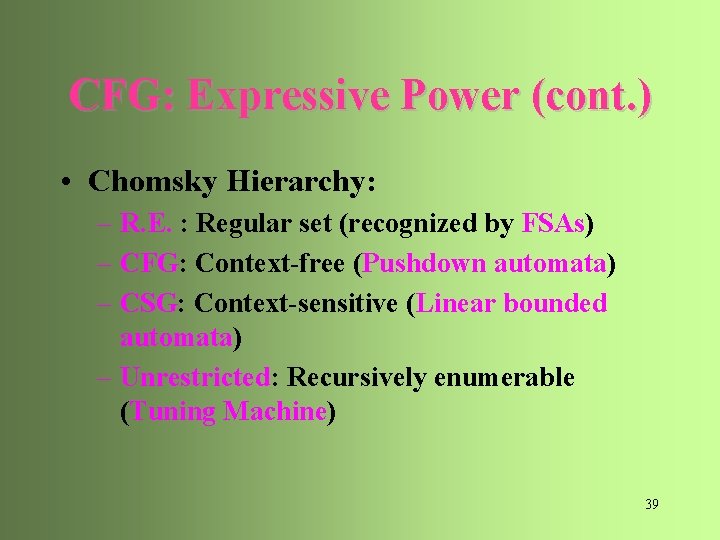

CFG: Expressive Power (cont.)

- Chomsky hierarchy:
  - RE: regular sets (recognized by FSAs)
  - CFG: context-free (pushdown automata)
  - CSG: context-sensitive (linear bounded automata)
  - Unrestricted: recursively enumerable (Turing machines)

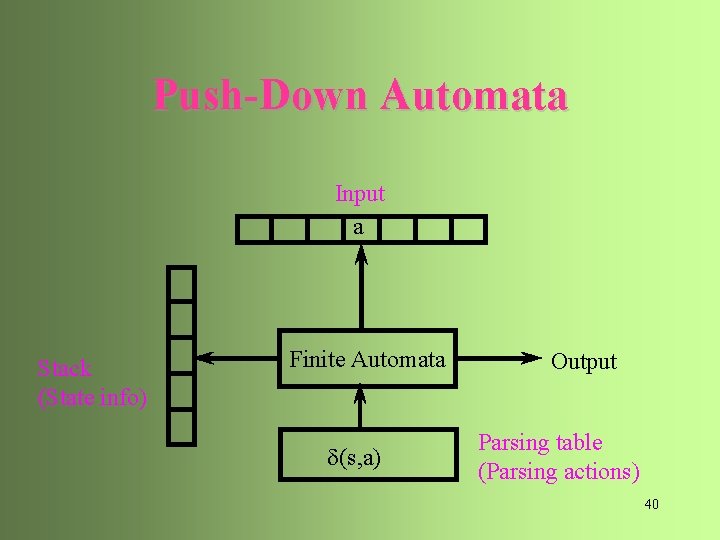

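Push-Down Automata (diagram): a finite automaton with transition function δ(s, a) reads the input symbol a, keeps state information on a stack, consults the parsing table for parsing actions, and produces the output.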

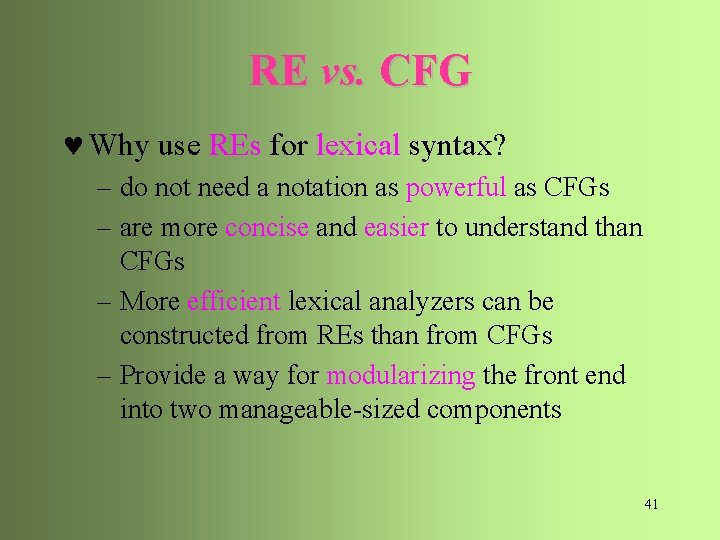

RE vs. CFG

- Why use REs for lexical syntax?
  - Lexical rules do not need a notation as powerful as CFGs
  - REs are more concise and easier to understand than CFGs
  - More efficient lexical analyzers can be constructed from REs than from CFGs
  - REs provide a way of modularizing the front end into two manageable-sized components

CFG vs. Finite-State Machine

- Inappropriateness of FSAs
  - Constituents: only terminals
  - Recursion: do not allow A ⇒ … B … ⇒ … A …
- RTN (Recursive Transition Network)
  - an FSA augmented with recursion
  - arc: terminal or non-terminal
  - if an arc is a non-terminal: call the sub-transition network and return upon traversal

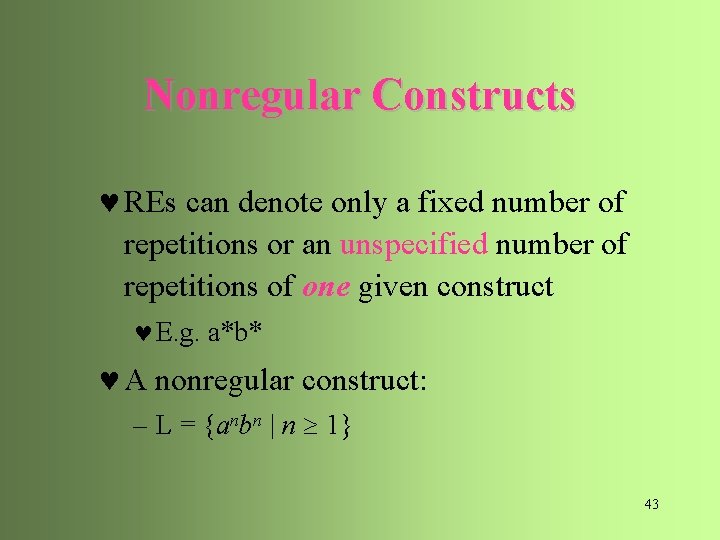

Nonregular Constructs

- REs can denote only a fixed number of repetitions or an unspecified number of repetitions of one given construct
  - e.g., a*b*
- A nonregular construct:
  - L = {a^n b^n | n ≥ 1}

Non-Context-Free Constructs

- CFGs can denote only a fixed number of repetitions or an unspecified number of repetitions of one or two (paired) given constructs
  - e.g., a^n b^n
- Some non-context-free constructs:
  - L1 = {wcw | w is in (a | b)*}
    - declaration/use of identifiers
  - L2 = {a^n b^m c^n d^m | n ≥ 1 and m ≥ 1}
    - number of formal arguments vs. number of actual arguments
  - L3 = {a^n b^n c^n | n ≥ 0}
    - e.g., b: backspace, c: underscore

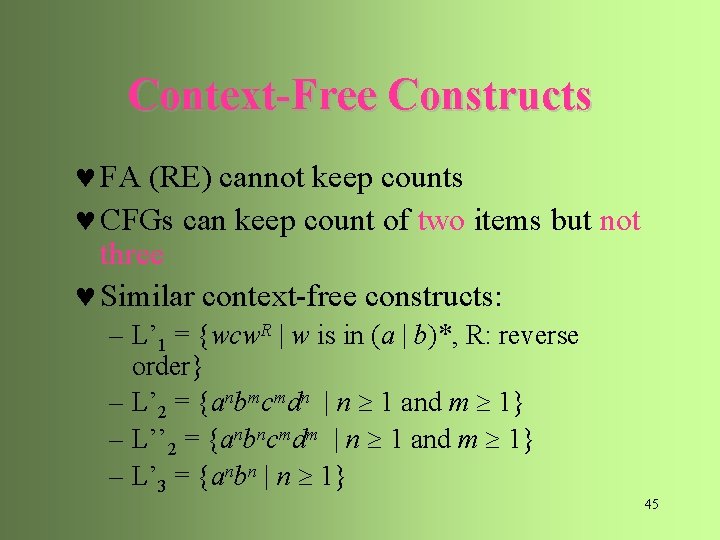

Context-Free Constructs

- FAs (REs) cannot keep counts
- CFGs can keep count of two items but not three
- Similar constructs that are context-free:
  - L'1 = {w c w^R | w is in (a | b)*, R: reverse order}
  - L'2 = {a^n b^m c^m d^n | n ≥ 1 and m ≥ 1}
  - L''2 = {a^n b^n c^m d^m | n ≥ 1 and m ≥ 1}
  - L'3 = {a^n b^n | n ≥ 1}

CFG Parsers

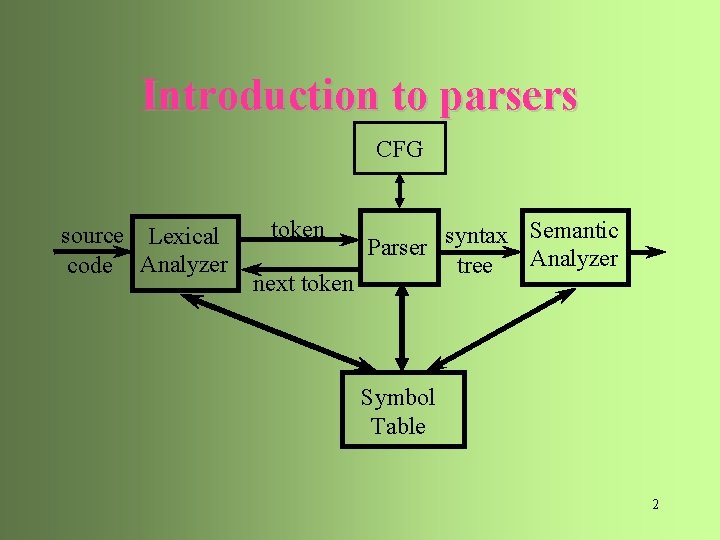

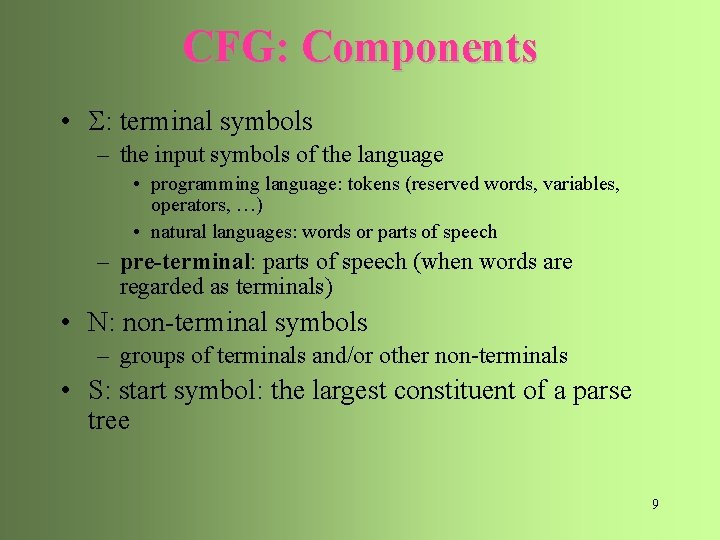

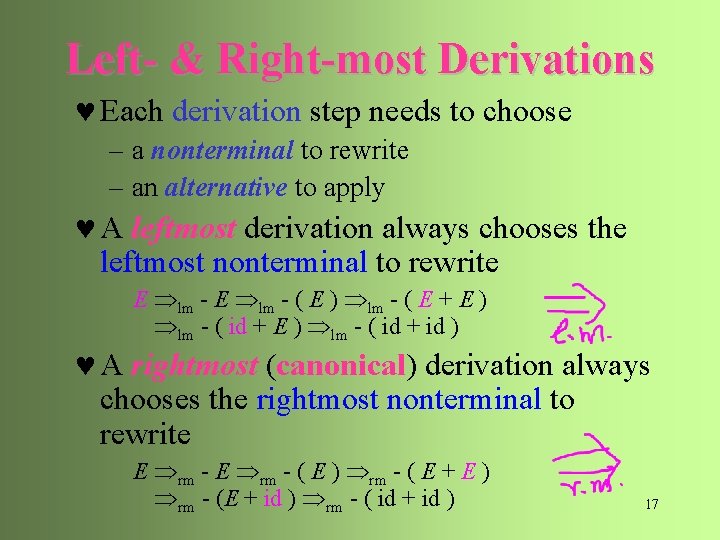

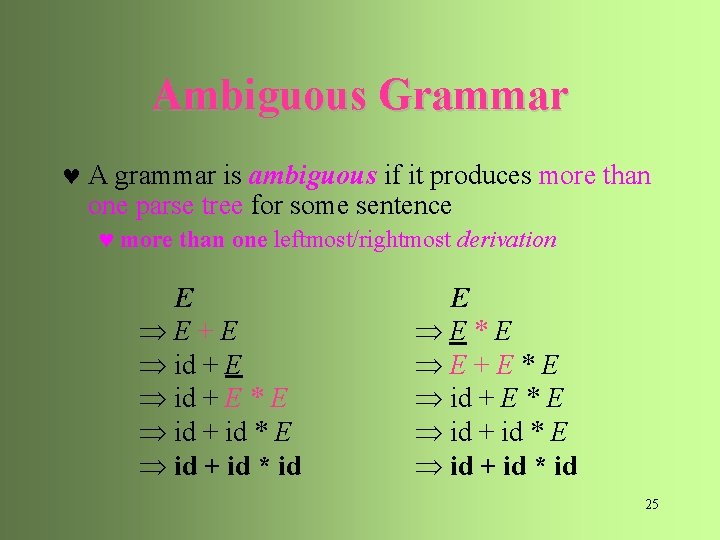

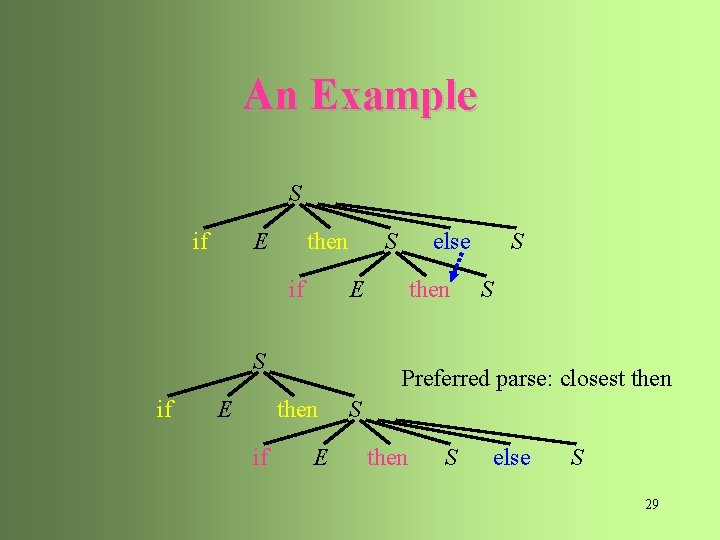

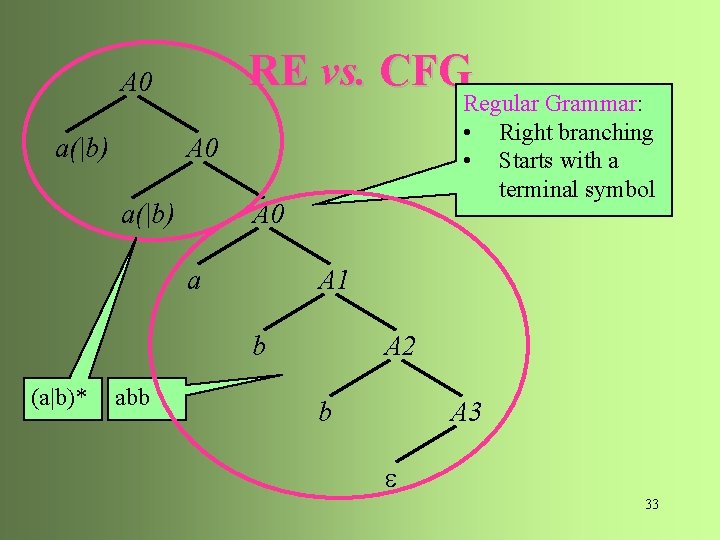

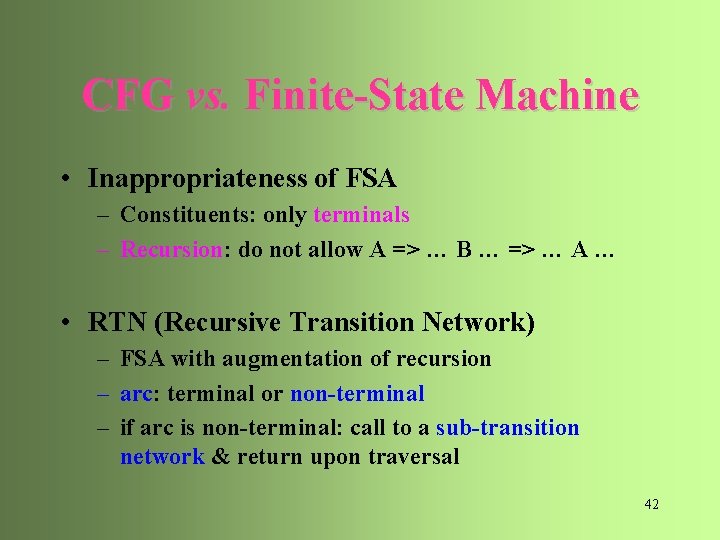

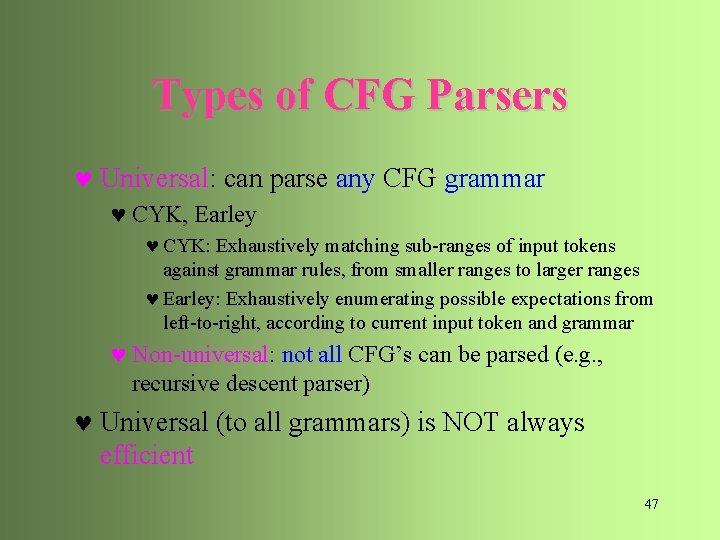

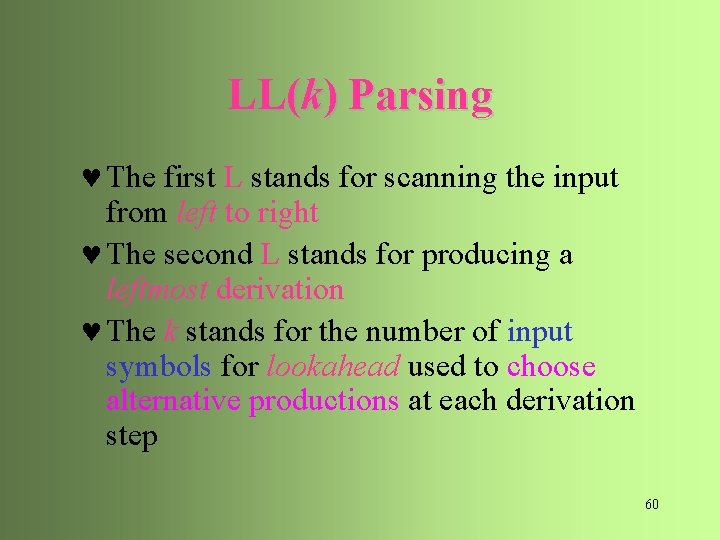

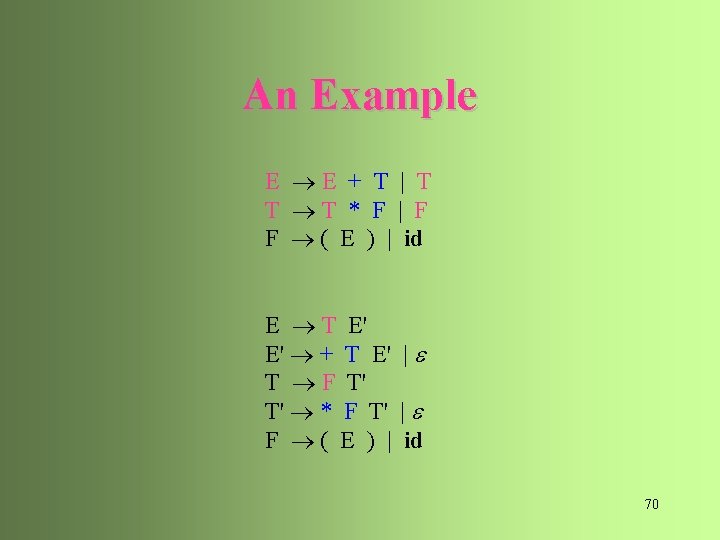

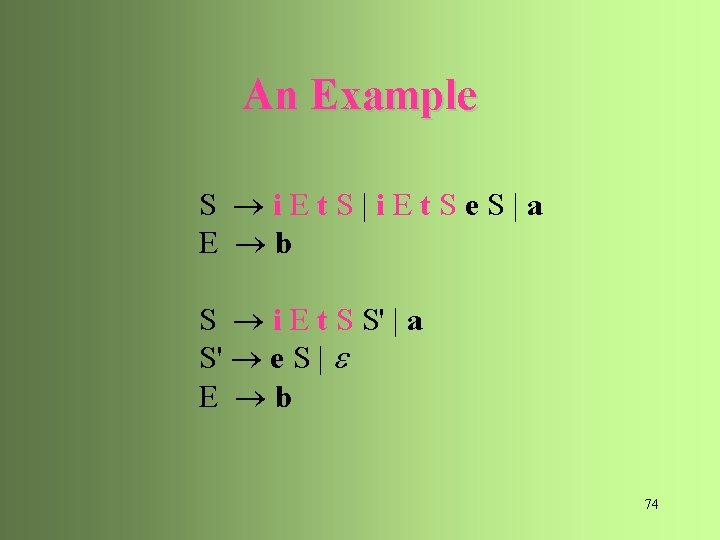

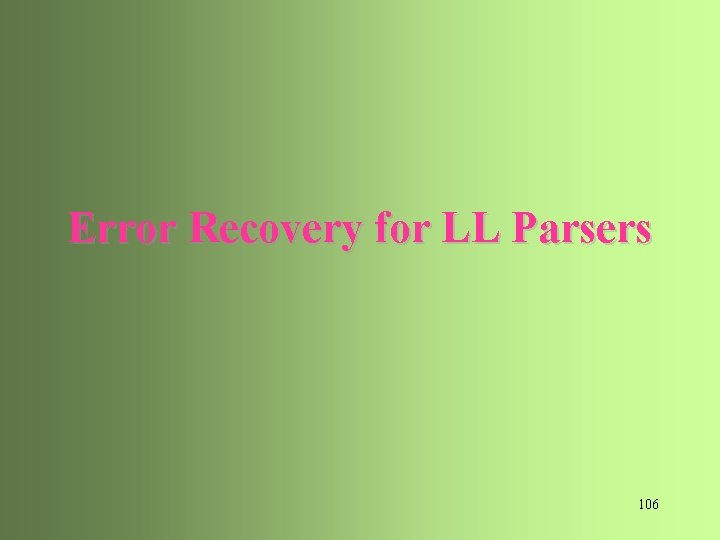

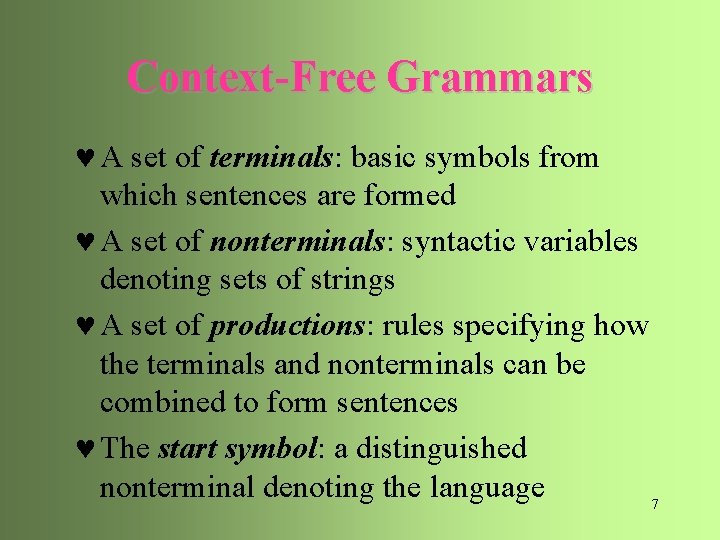

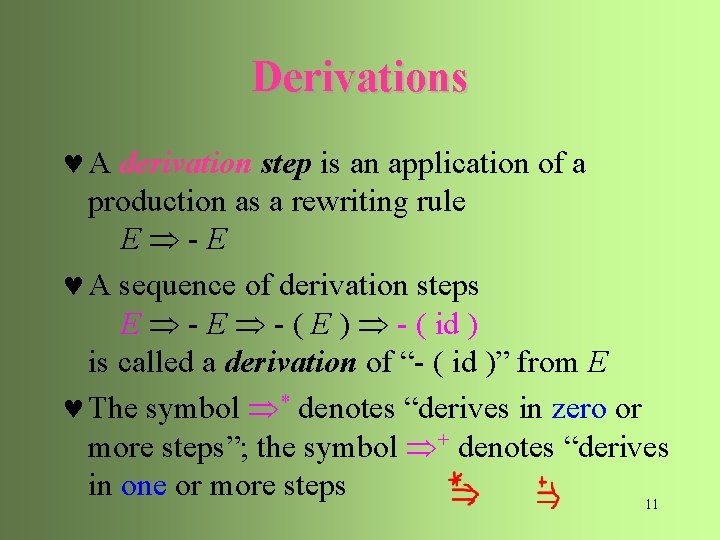

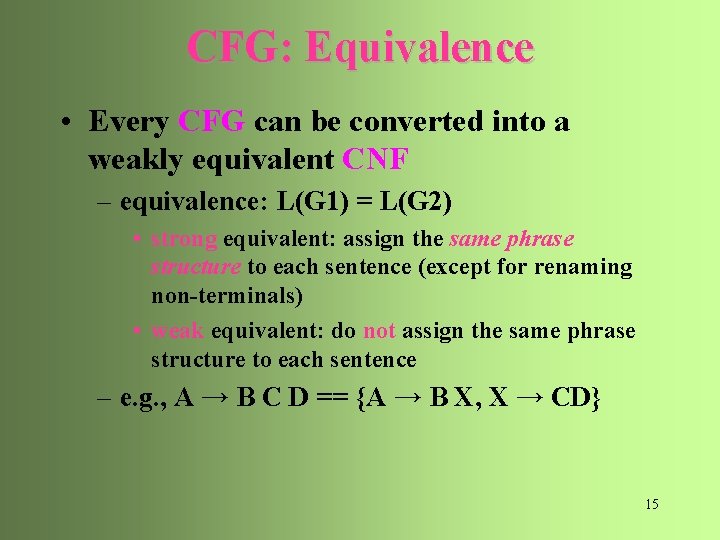

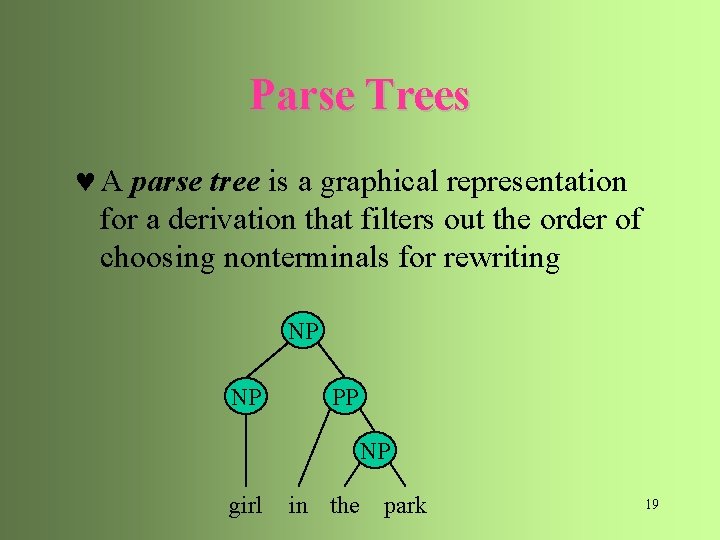

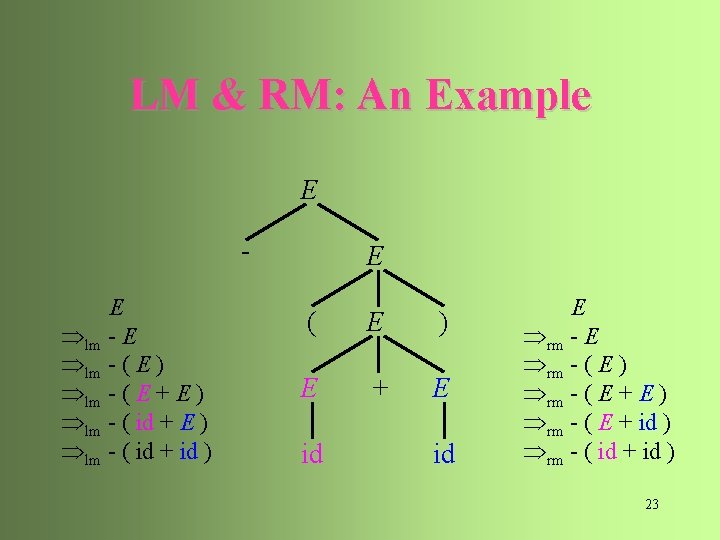

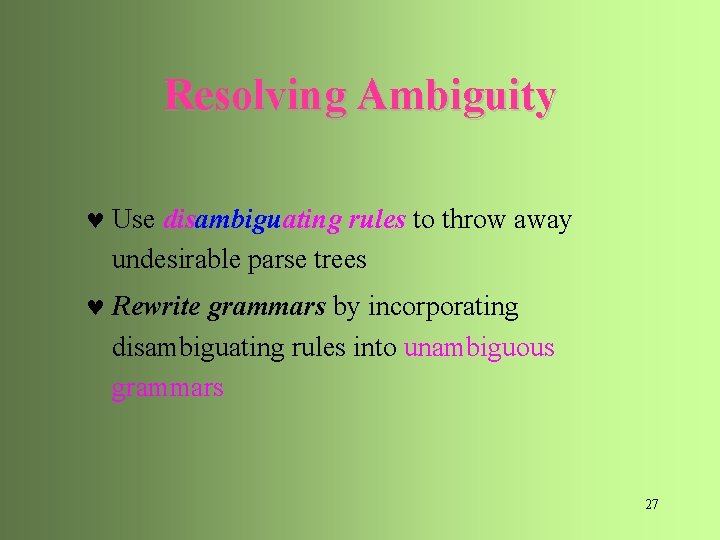

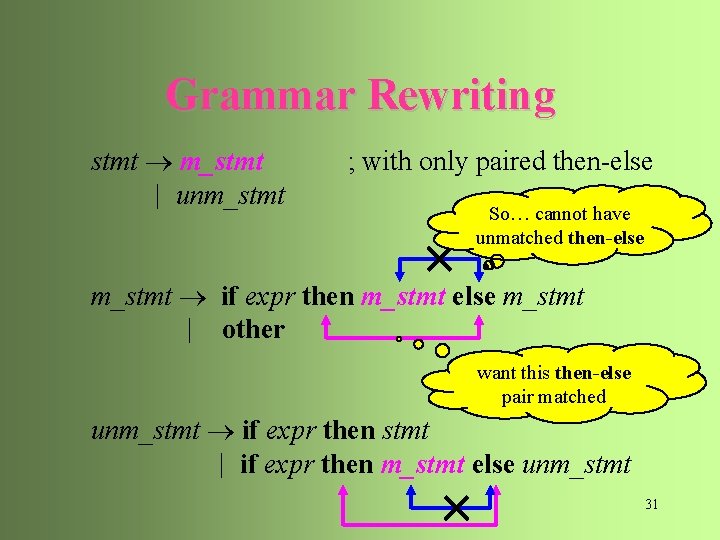

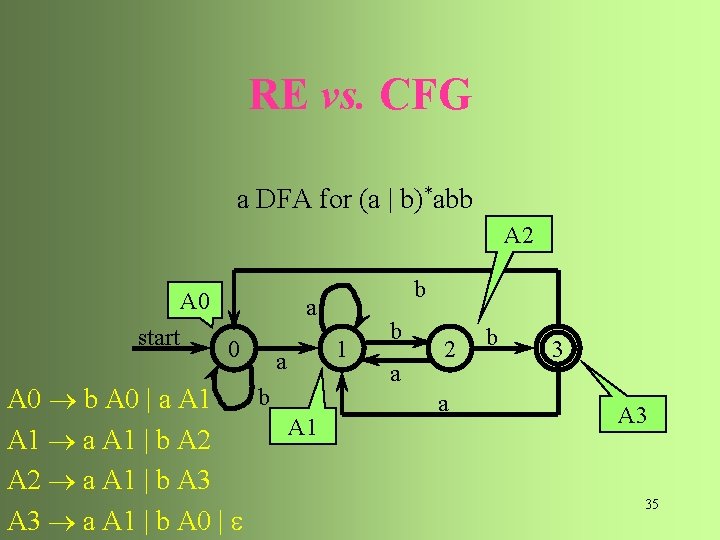

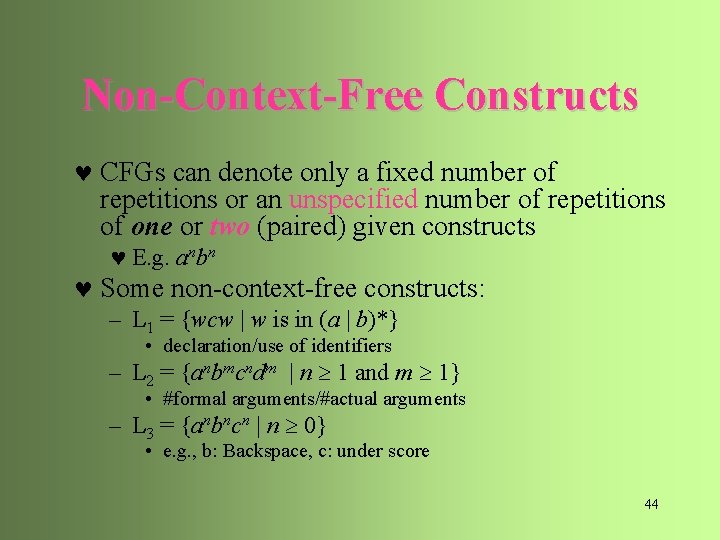

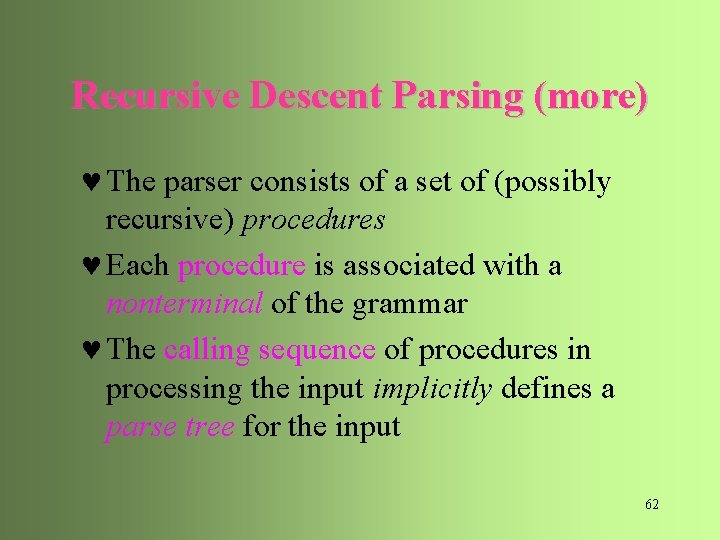

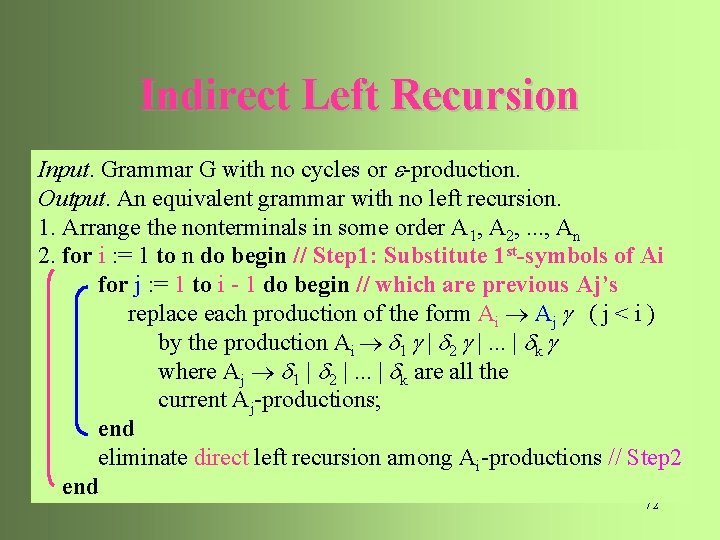

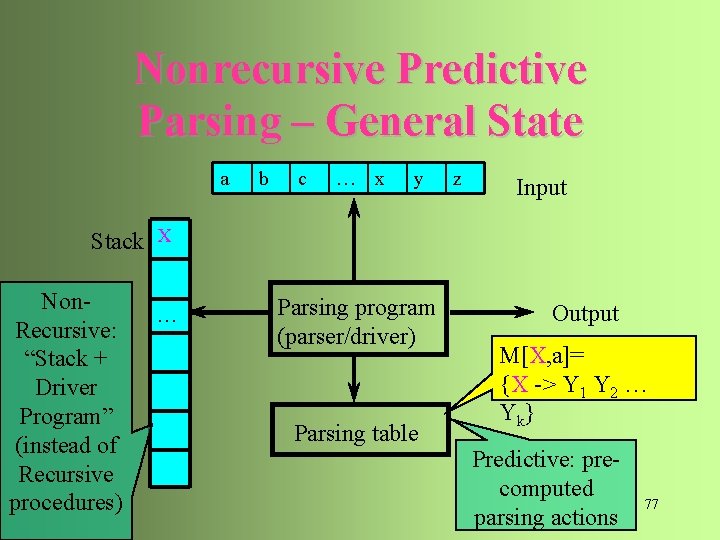

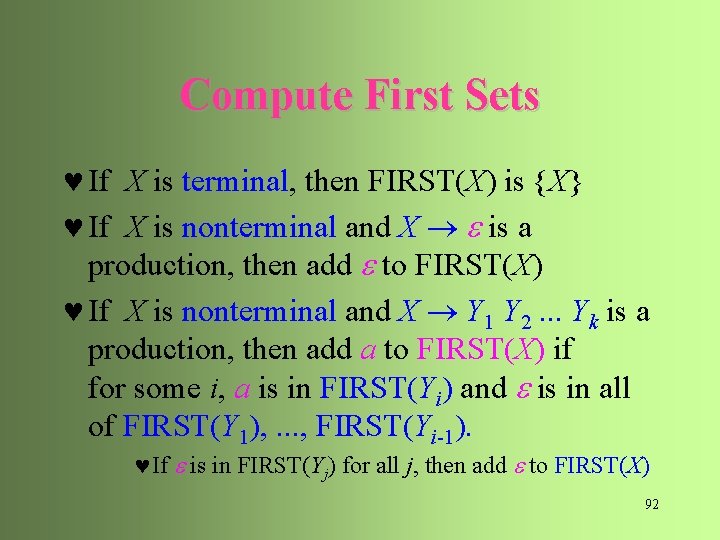

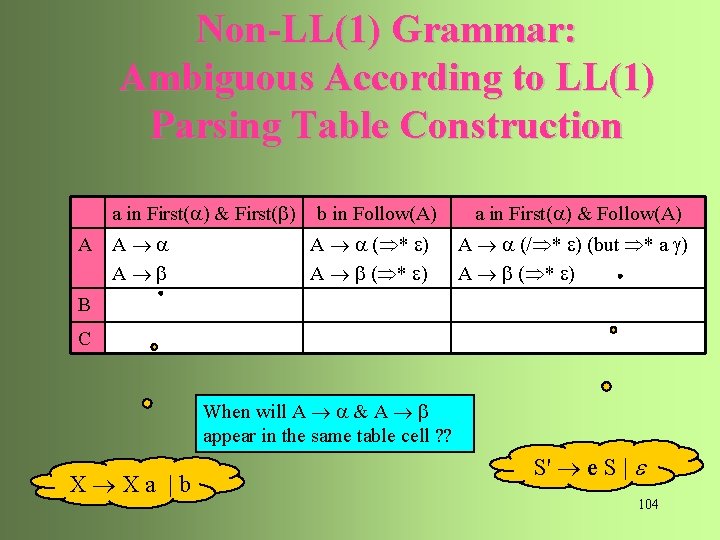

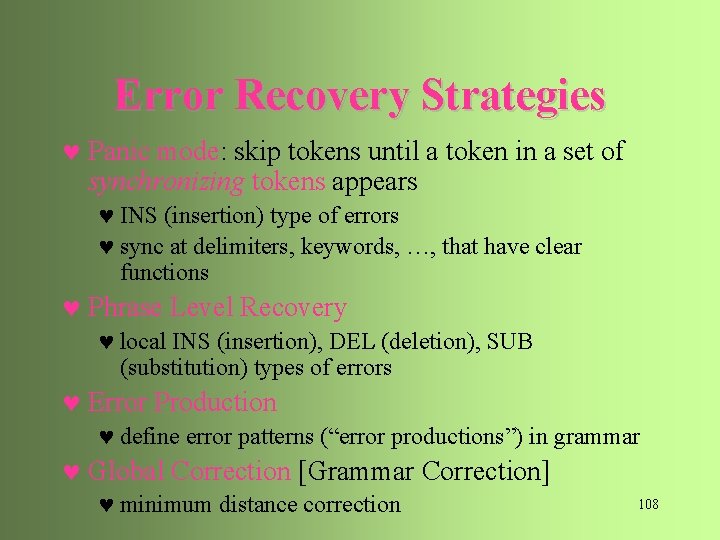

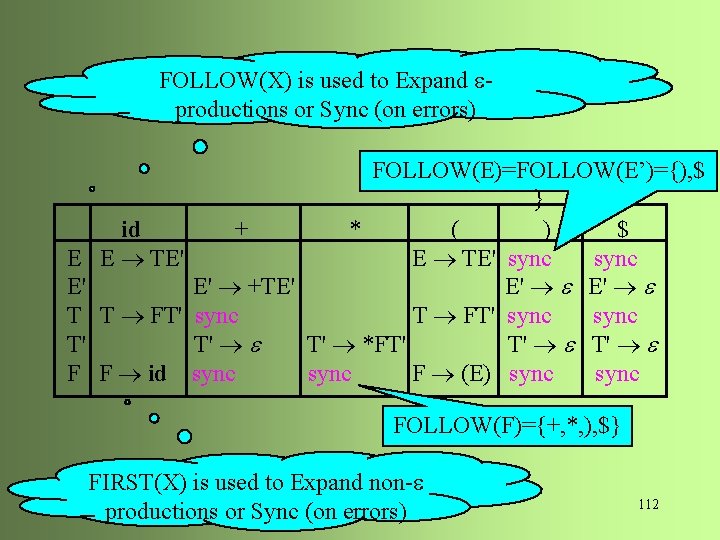

Types of CFG Parsers

- Universal: can parse any CFG
  - CYK, Earley
  - CYK: exhaustively matches sub-ranges of input tokens against grammar rules, from smaller ranges to larger ranges
  - Earley: exhaustively enumerates possible expectations from left to right, according to the current input token and the grammar
- Non-universal: not all CFGs can be parsed (e.g., by a recursive descent parser)
- A universal parser (one that handles all grammars) is NOT always efficient
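To make the "smaller ranges to larger ranges" description of CYK concrete, here is a minimal recognizer sketch. It assumes the grammar is already in Chomsky Normal Form; the sample grammar for a^n b^n (n ≥ 1) and the names `cyk`, `UNARY`, and `BINARY` are assumptions made for this illustration.

```python
def cyk(tokens, unary, binary, start):
    """CYK recognizer for a CNF grammar.

    unary:  list of (A, terminal) for productions A -> terminal
    binary: list of (A, B, C) for productions A -> B C
    """
    n = len(tokens)
    if n == 0:
        return False
    # table[i][length] = set of nonterminals deriving tokens[i:i + length]
    table = [[set() for _ in range(n + 1)] for _ in range(n)]
    for i, tok in enumerate(tokens):
        table[i][1] = {a for a, t in unary if t == tok}
    for length in range(2, n + 1):              # smaller ranges first
        for i in range(n - length + 1):
            for split in range(1, length):      # every way to cut the range in two
                left = table[i][split]
                right = table[i + split][length - split]
                for a, b, c in binary:
                    if b in left and c in right:
                        table[i][length].add(a)
    return start in table[0][n]


# CNF grammar for a^n b^n: S -> A B | A X, X -> S B, A -> a, B -> b
UNARY = [("A", "a"), ("B", "b")]
BINARY = [("S", "A", "B"), ("S", "A", "X"), ("X", "S", "B")]

print(cyk(list("aabb"), UNARY, BINARY, "S"))
```

The three nested loops over range length, start position, and split point give the cubic running time in the input length that makes universal parsing slower than the deterministic methods discussed next.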

![Types of CFG Parsers Practical Parsers what is a good parser Types of CFG Parsers © Practical Parsers: [“what is a good parser? ”] ©](https://slidetodoc.com/presentation_image/13e68b1758d58bb45a134b8ebdf49f65/image-46.jpg)

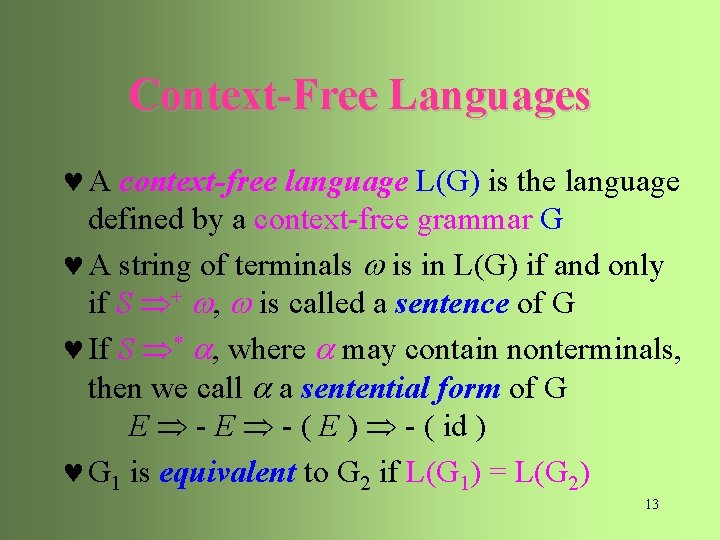

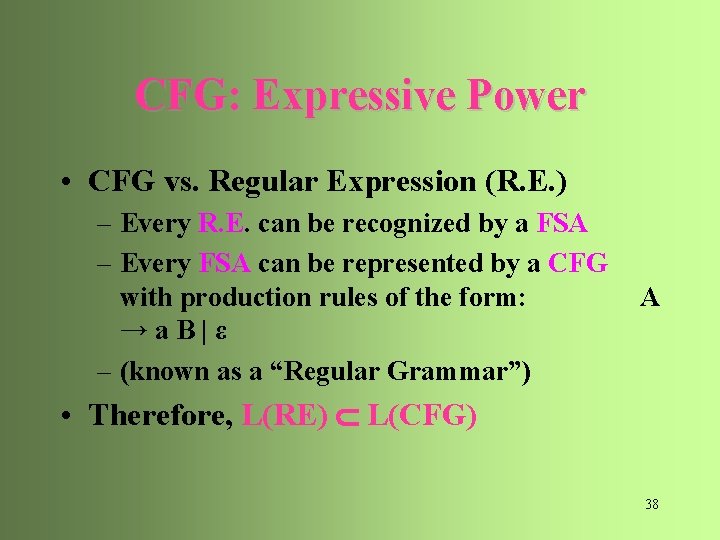

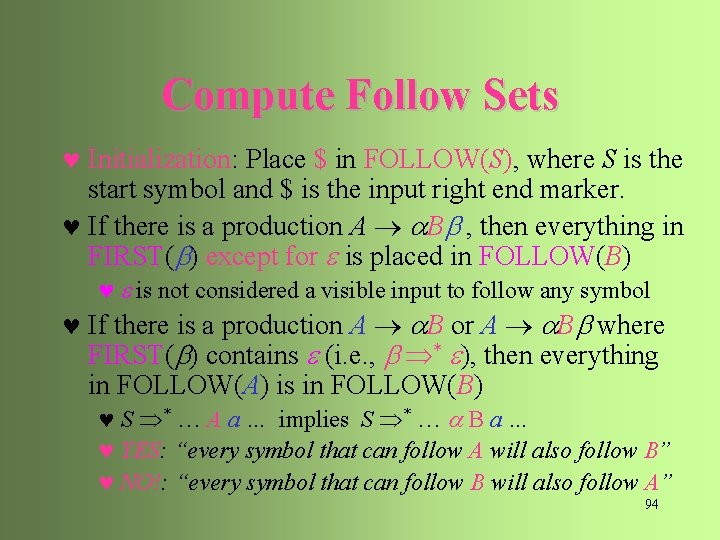

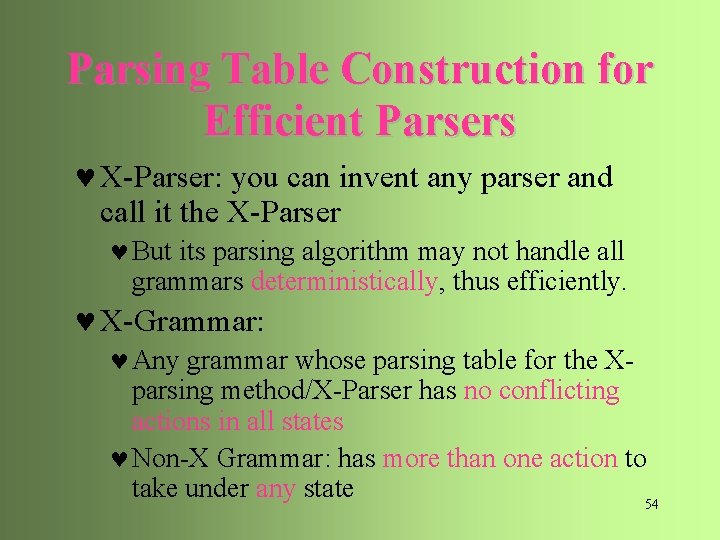

Types of CFG Parsers

- Practical parsers ("what is a good parser?")
  - Simple: simple program structure
    - Left-to-right (or right-to-left) scan
      - middle-out or island-driven parsing is often not preferred
    - Top-down or bottom-up matching
  - Efficient: efficient for both good and bad inputs
    - Parse normal syntax quickly
    - Detect errors immediately on the next token
  - Deterministic:
    - No alternative choices during parsing, given the next token
    - Small lookahead buffer (which also contributes to efficiency)

Types of CFG Parsers

- Top-down: matching from the start symbol down to the terminal tokens
- Bottom-up: matching input tokens with reducible rules, from the terminals up to the start symbol

Efficient CFG Parsers

- Top-down: LL parsers
  - Matching from the start symbol down to the terminal tokens, left to right, according to a leftmost derivation sequence
- Bottom-up: LR parsers
  - Matching input tokens with reducible rules, left to right, from the terminals up to the start symbol, in the reverse order of a rightmost derivation sequence

Efficient CFG Parsers

- Efficient and deterministic parsing is only possible for some subclasses of grammars, with special parsing algorithms
- Top-down: parsing LL grammars with LL parsers
- Bottom-up: parsing LR grammars with LR parsers
  - LR grammars are a larger class of grammars than LL grammars

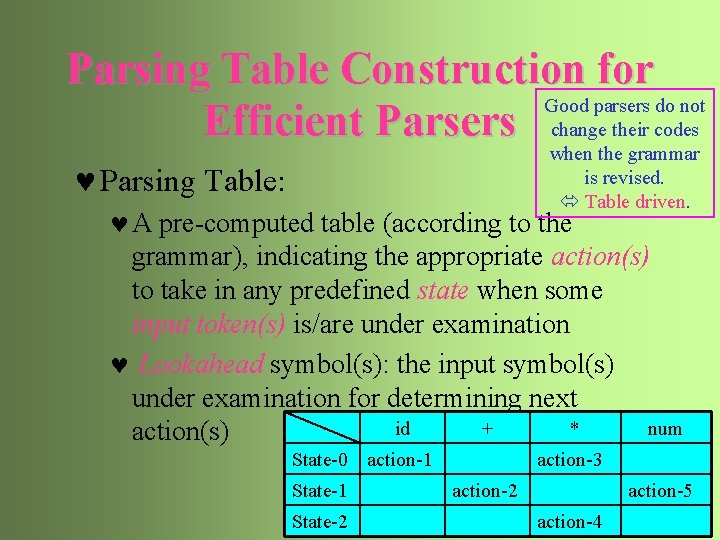

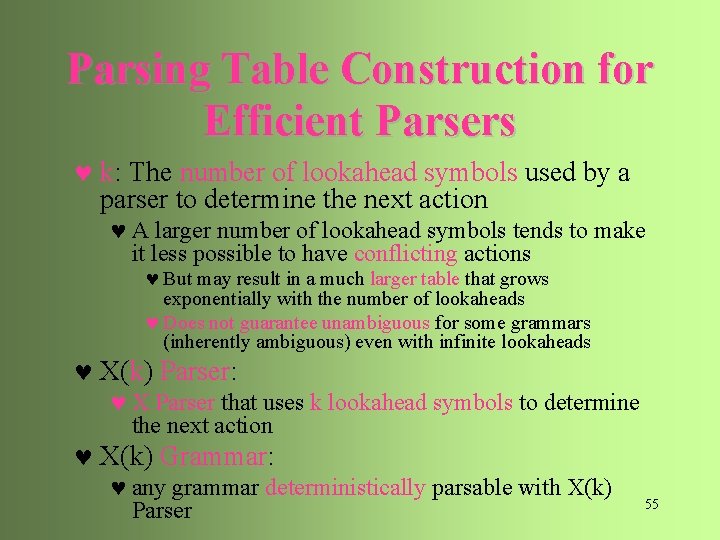

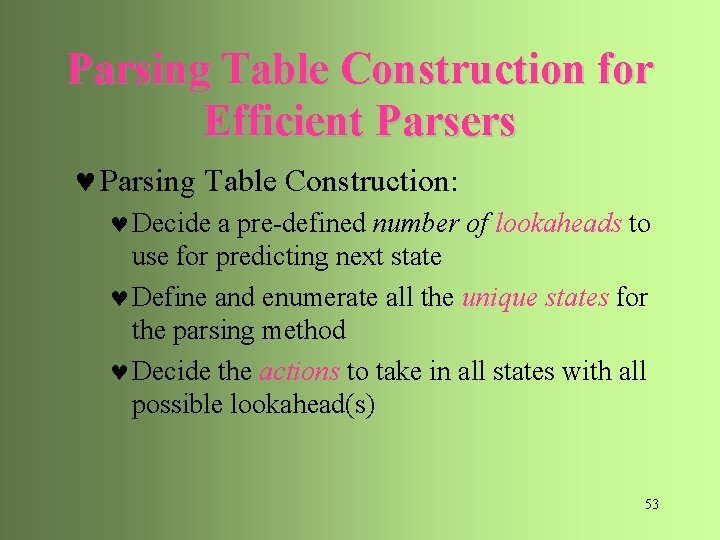

Parsing Table Construction for Efficient Parsers

Good parsers do not change their code when the grammar is revised: they are table driven.

- Parsing table: a pre-computed table (according to the grammar), indicating the appropriate action(s) to take in any predefined state when some input token(s) is/are under examination
- Lookahead symbol(s): the input symbol(s) under examination for determining the next action(s)
- Figure: a sample parsing table with one column per lookahead symbol (id, +, *, num), one row per state, and actions as entries

Parsing Table Construction for Efficient Parsers

- Parsing table construction:
  - Decide on a pre-defined number of lookaheads to use for predicting the next state
  - Define and enumerate all the unique states for the parsing method
  - Decide the actions to take in all states with all possible lookahead(s)

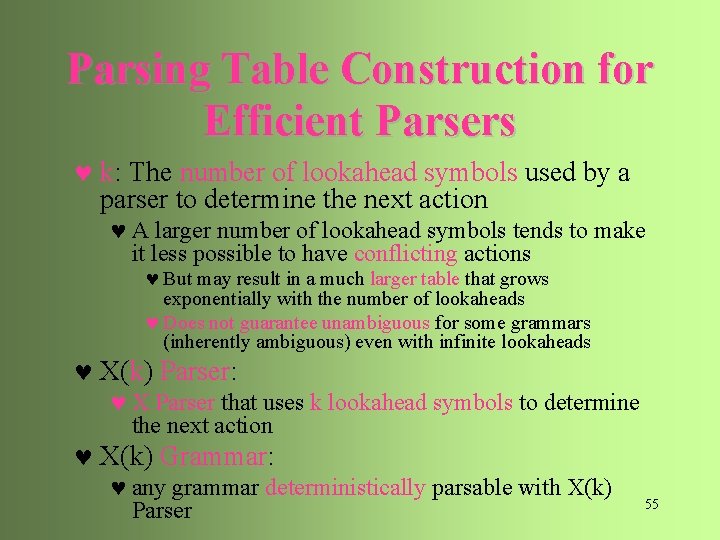

Parsing Table Construction for Efficient Parsers

- X-Parser: you can invent any parser and call it the X-Parser
  - But its parsing algorithm may not handle all grammars deterministically, and thus efficiently
- X-Grammar: any grammar whose parsing table for the X-parsing method (X-Parser) has no conflicting actions in any state
- Non-X Grammar: a grammar whose table has more than one action to take in some state

Parsing Table Construction for Efficient Parsers

- k: the number of lookahead symbols used by a parser to determine the next action
  - A larger number of lookahead symbols tends to make conflicting actions less likely
  - But it may result in a much larger table, which grows exponentially with the number of lookaheads
  - It does not guarantee conflict-free parsing for some grammars (e.g., ambiguous ones), even with unbounded lookahead
- X(k) Parser: an X Parser that uses k lookahead symbols to determine the next action
- X(k) Grammar: any grammar deterministically parsable with an X(k) Parser

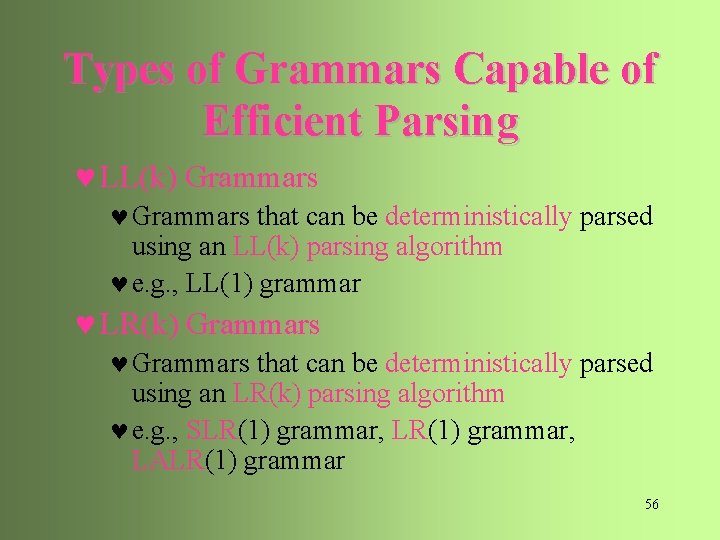

Types of Grammars Capable of Efficient Parsing

- LL(k) grammars
  - Grammars that can be deterministically parsed using an LL(k) parsing algorithm
  - e.g., LL(1) grammars
- LR(k) grammars
  - Grammars that can be deterministically parsed using an LR(k) parsing algorithm
  - e.g., SLR(1) grammars, LALR(1) grammars

Top-Down CFG Parsers: Recursive Descent Parser vs. Non-Recursive LL(1) Parser

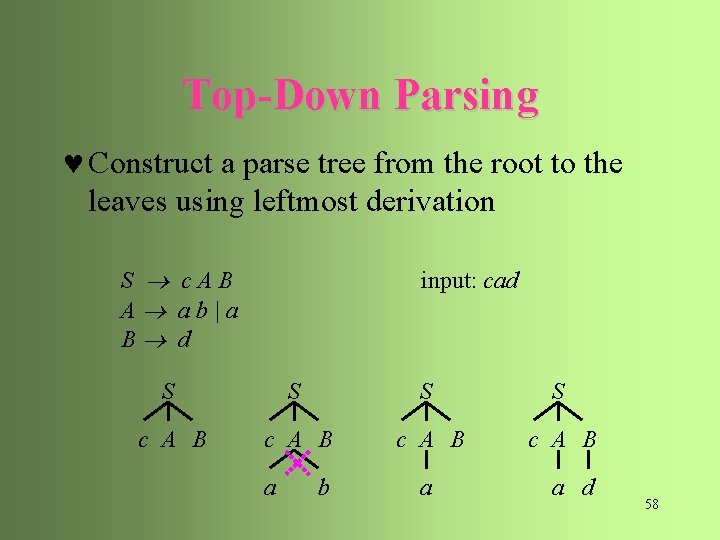

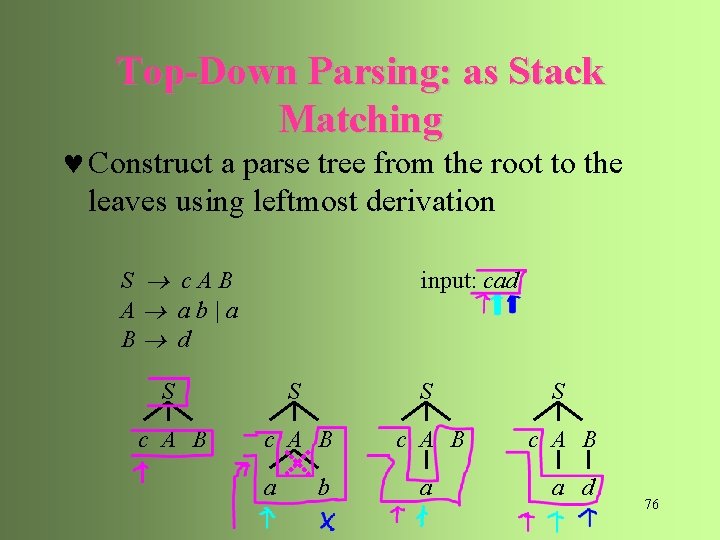

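Top-Down Parsing

- Construct a parse tree from the root to the leaves using a leftmost derivation
- Grammar: S → c A B, A → a b | a, B → d
- Input: cad
- Figure: parse trees rooted at S with children c, A, B, showing the alternatives A → a b and A → a, and B → d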

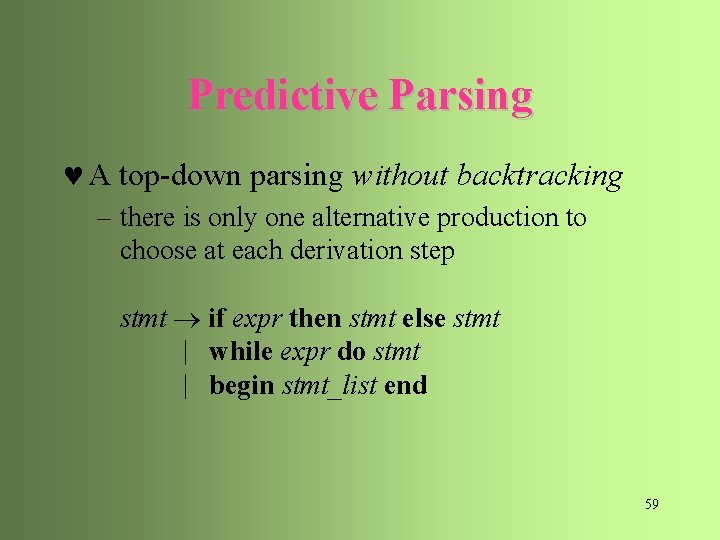

Predictive Parsing

- Top-down parsing without backtracking
  - there is only one alternative production to choose at each derivation step
- Example: stmt → if expr then stmt else stmt | while expr do stmt | begin stmt_list end

LL(k) Parsing

- The first L stands for scanning the input from left to right
- The second L stands for producing a leftmost derivation
- The k stands for the number of input symbols of lookahead used to choose among alternative productions at each derivation step

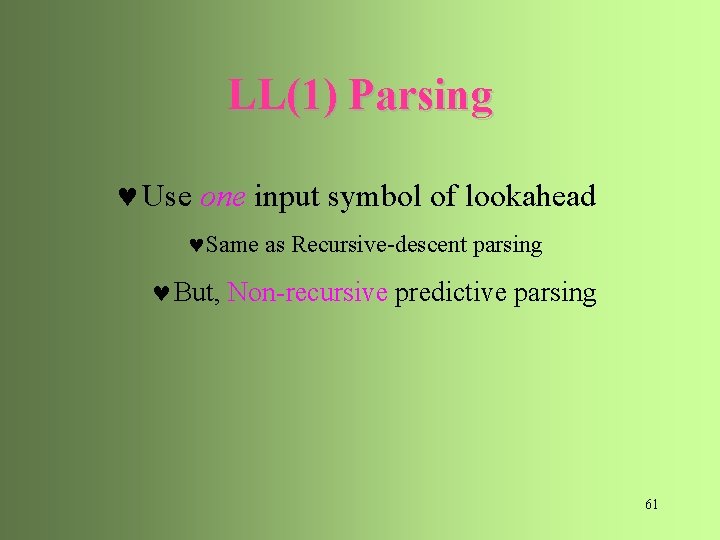

LL(1) Parsing

- Uses one input symbol of lookahead
- Makes the same predictions as (predictive) recursive-descent parsing
- But is implemented as non-recursive predictive parsing

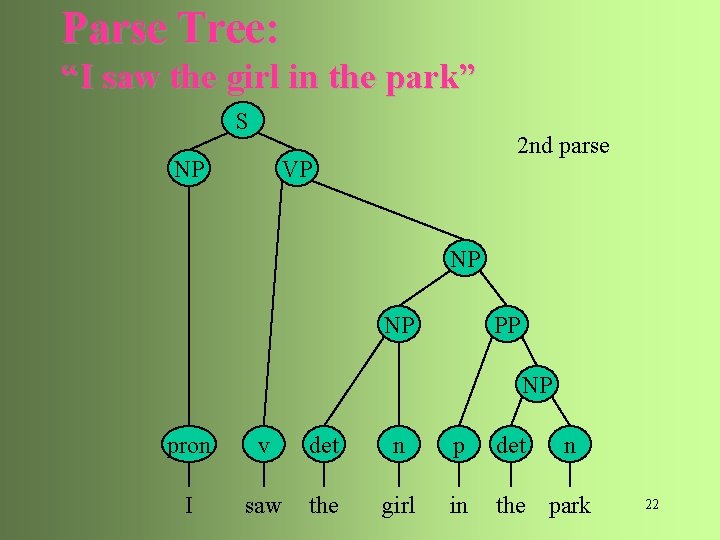

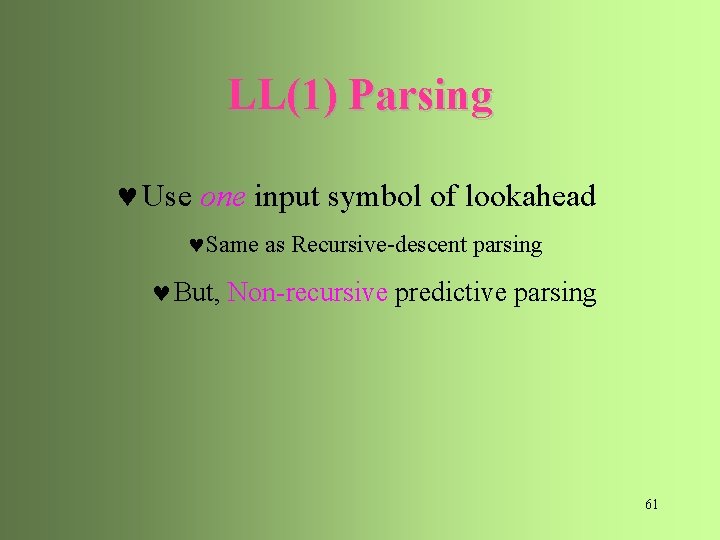

Recursive Descent Parsing (more)

- The parser consists of a set of (possibly recursive) procedures
- Each procedure is associated with a nonterminal of the grammar
- The calling sequence of procedures in processing the input implicitly defines a parse tree for the input

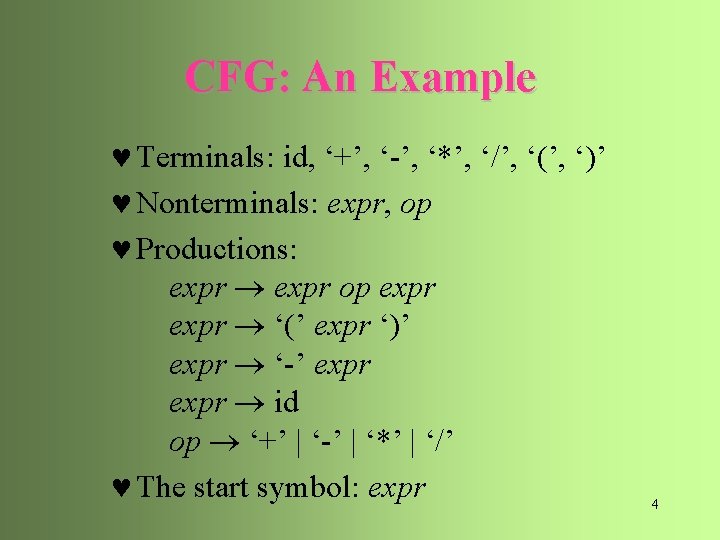

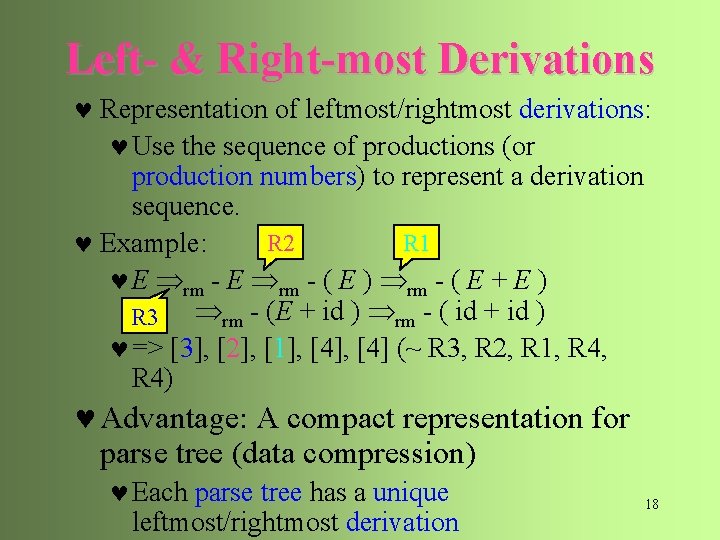

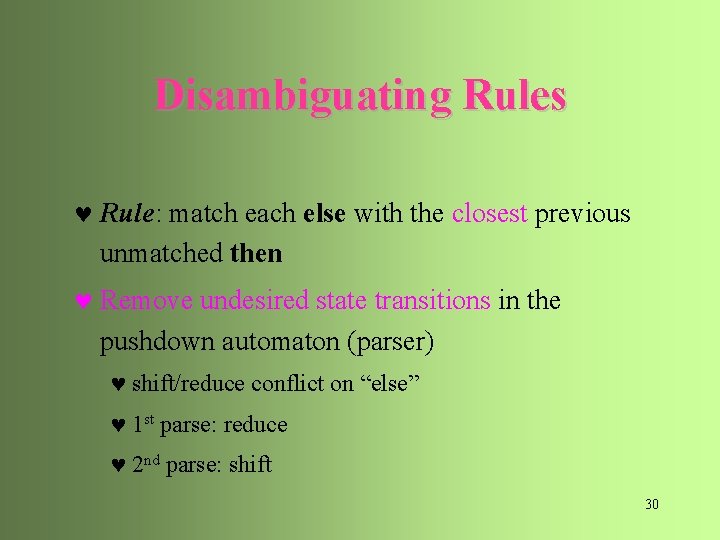

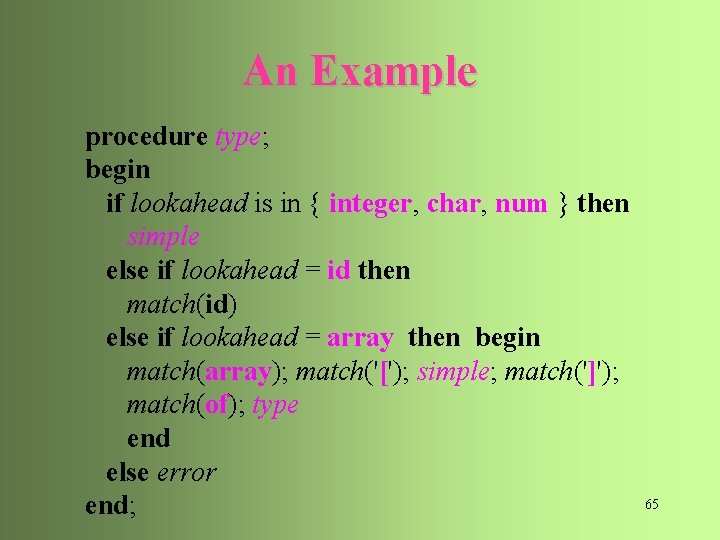

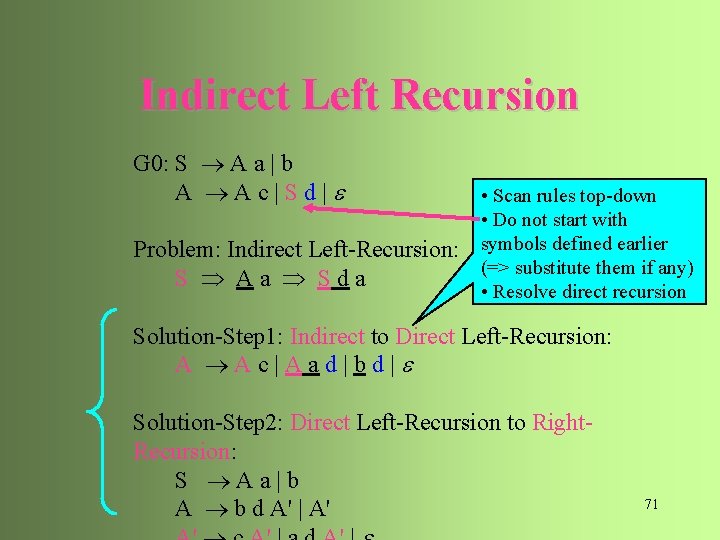

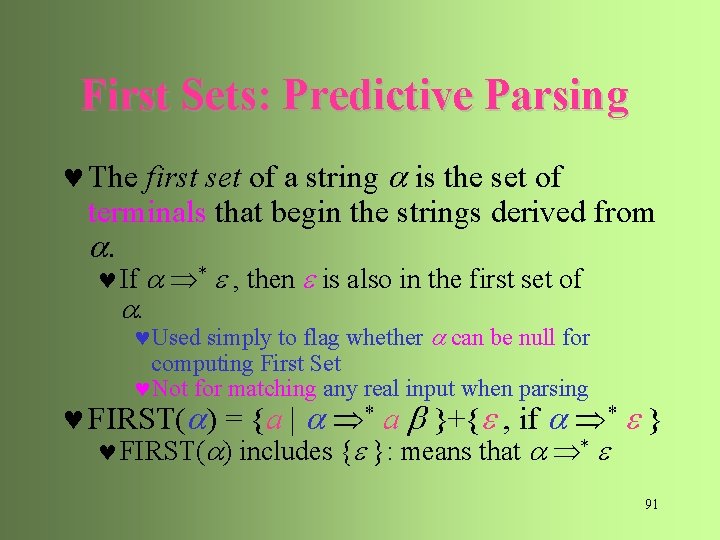

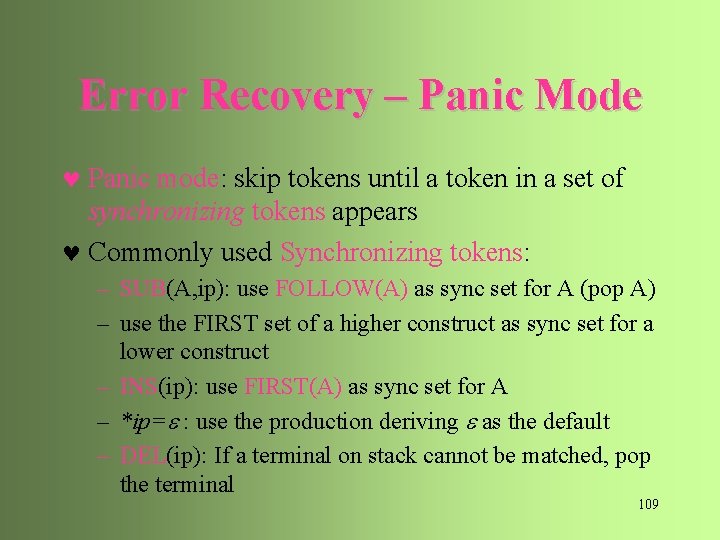

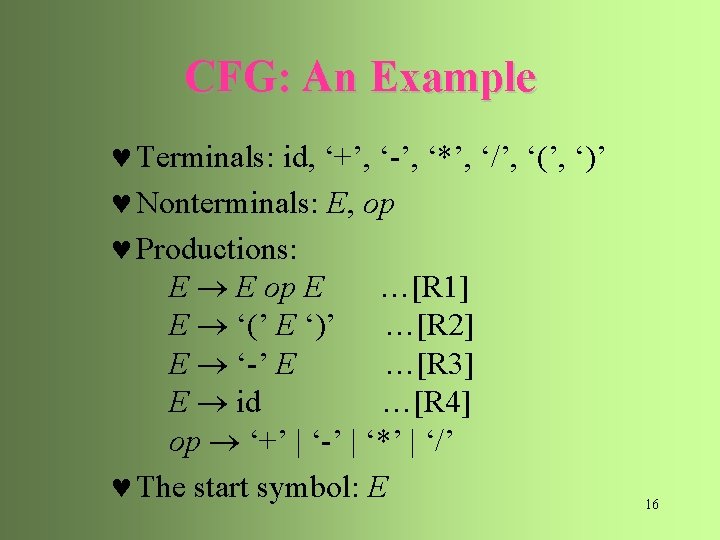

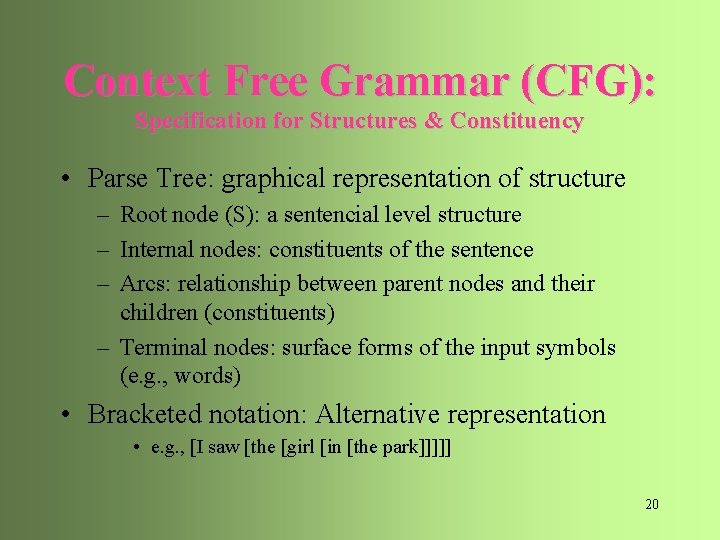

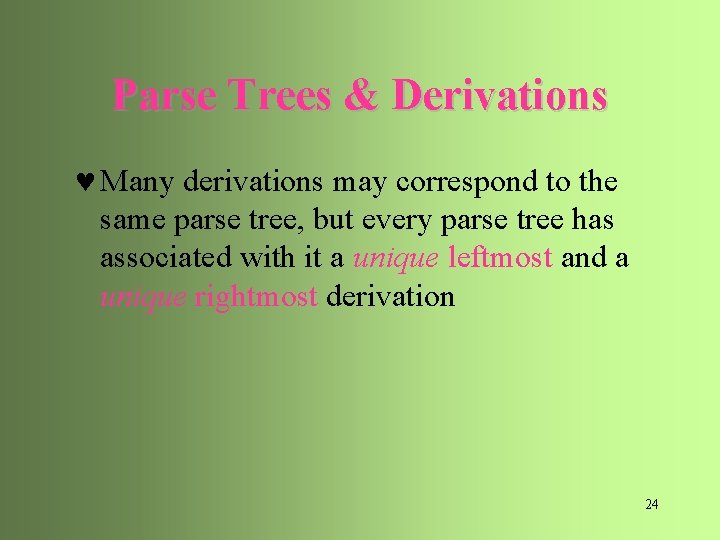

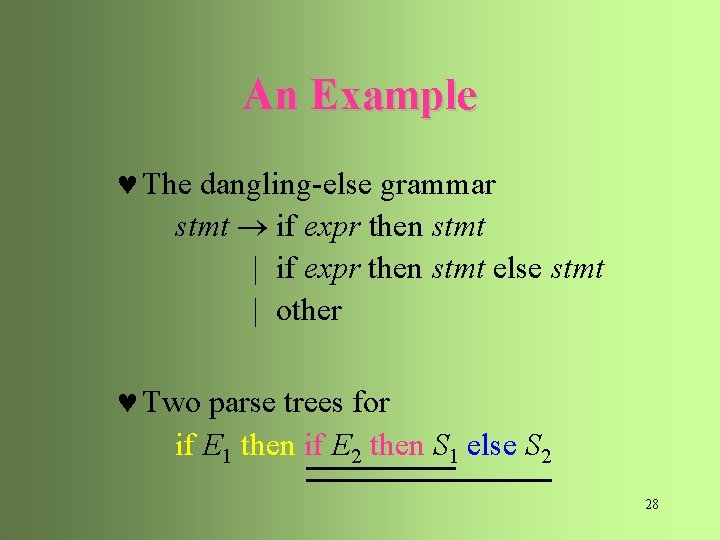

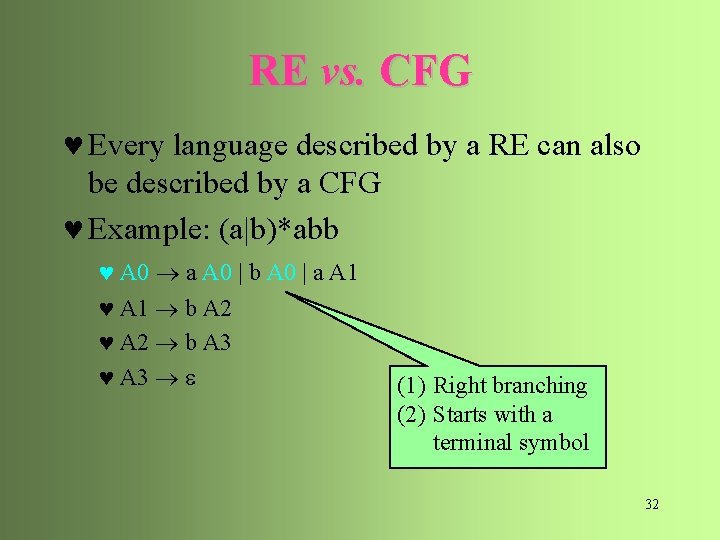

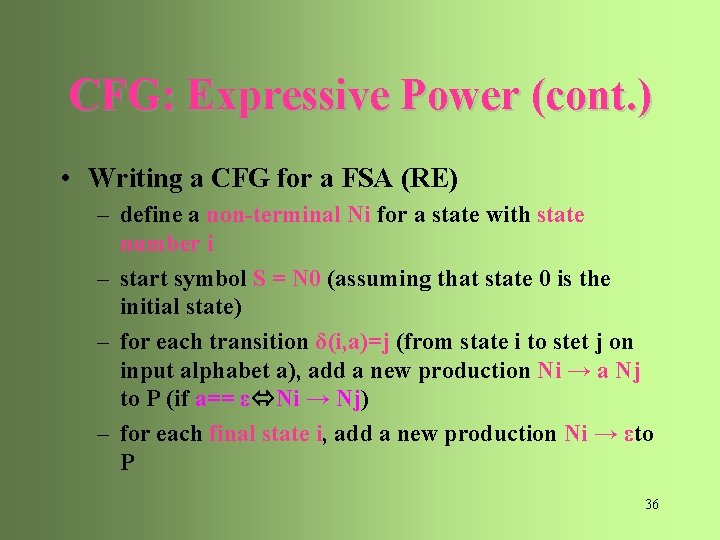

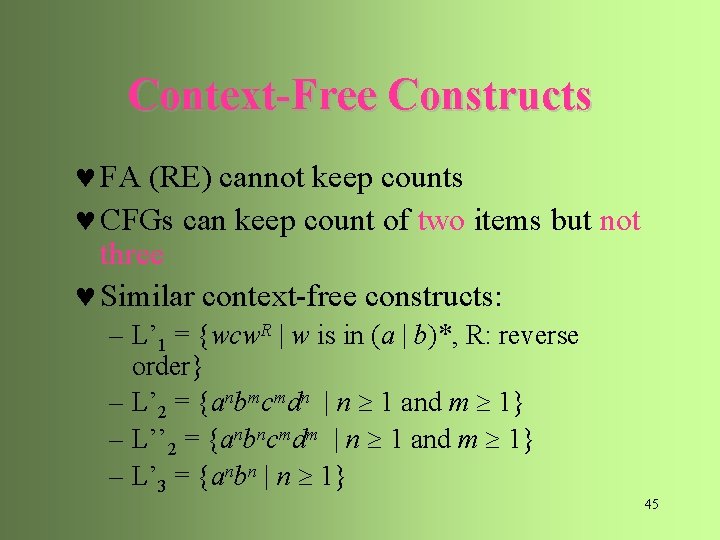

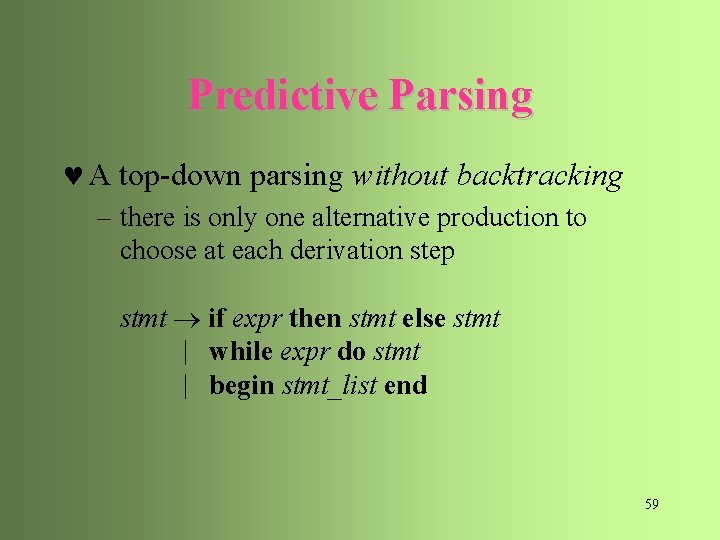

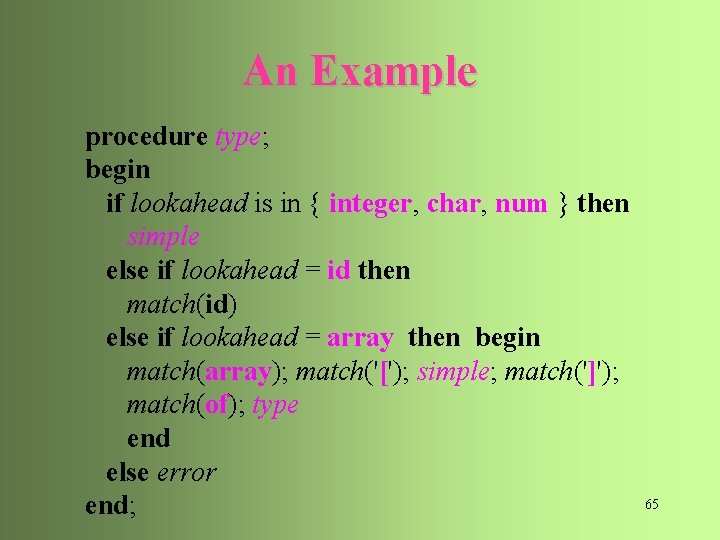

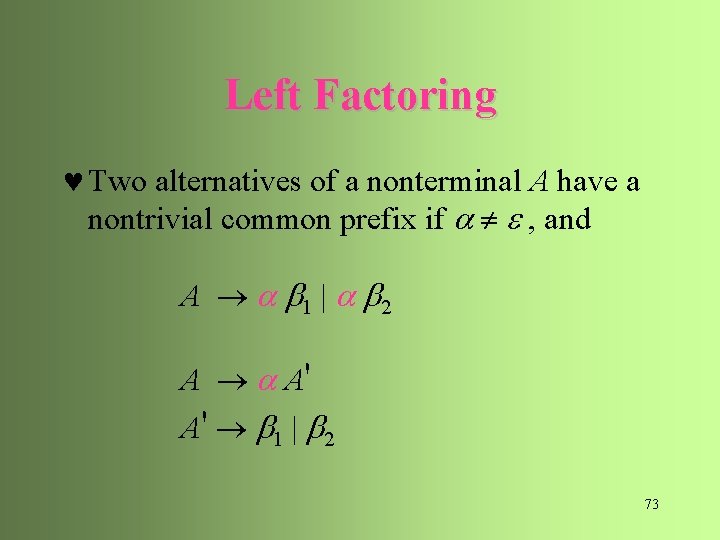

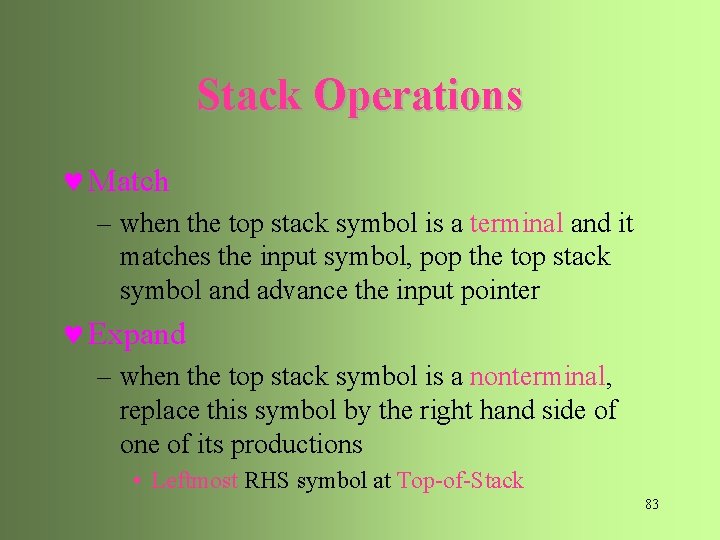

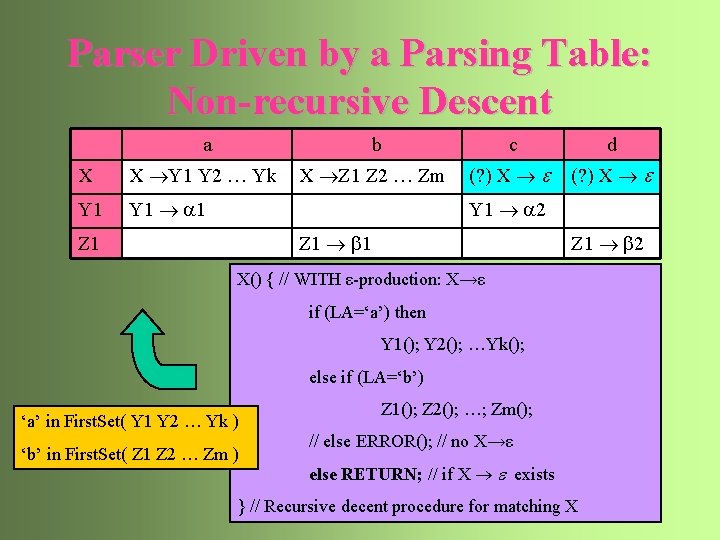

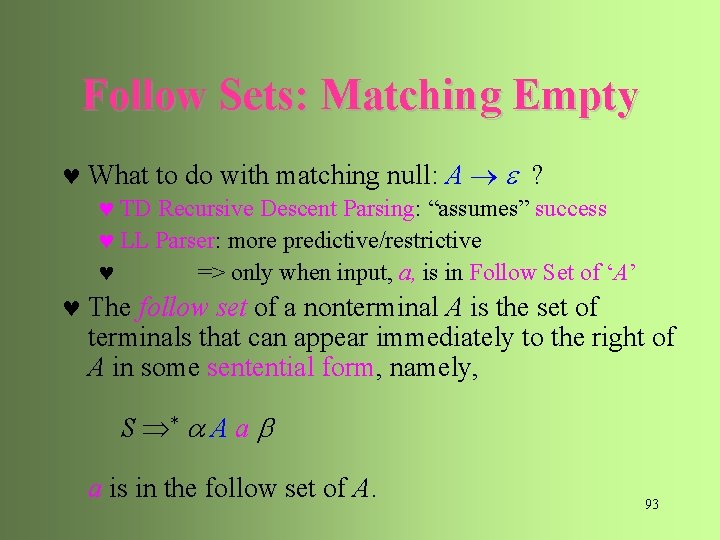

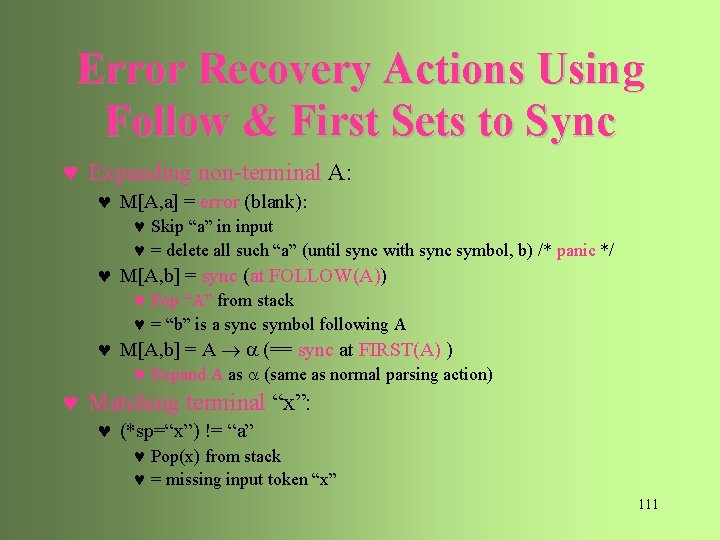

![An Example type simple id array simple of type simple An Example type simple | id | array [ simple ] of type simple](https://slidetodoc.com/presentation_image/13e68b1758d58bb45a134b8ebdf49f65/image-61.jpg)

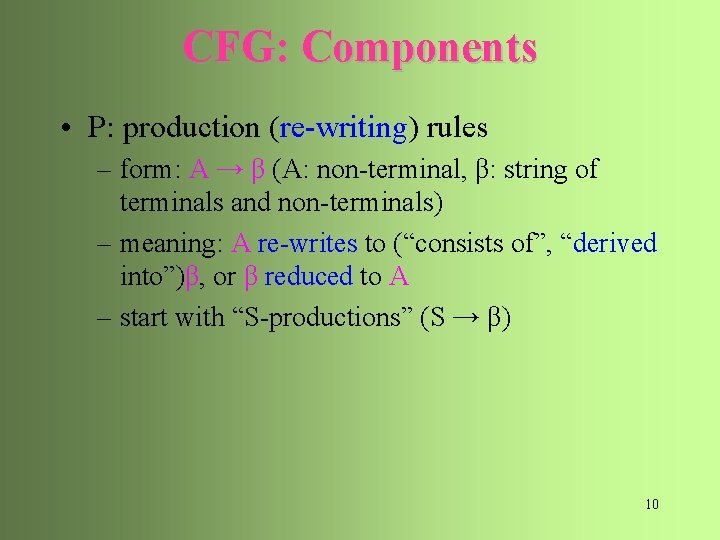

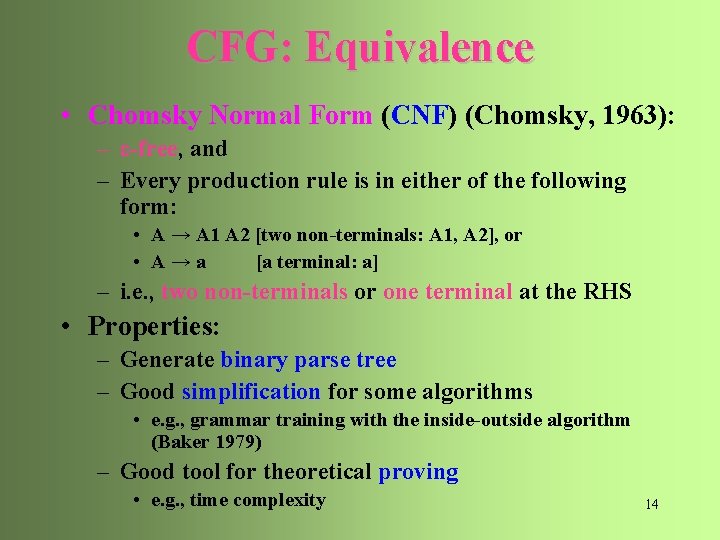

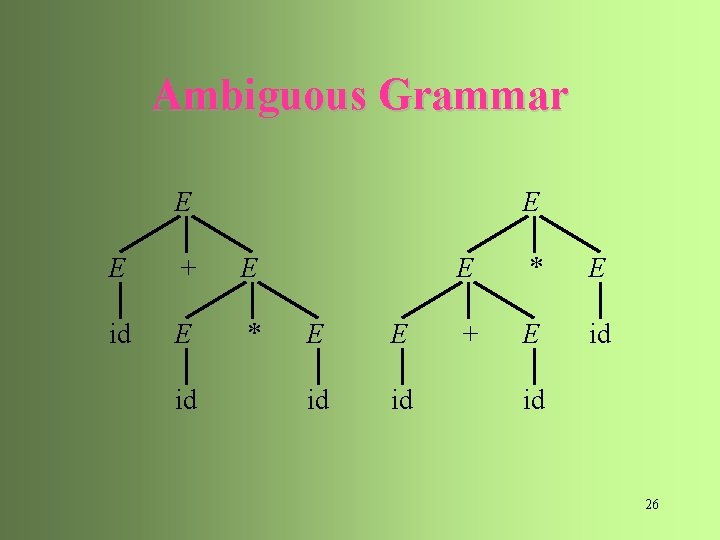

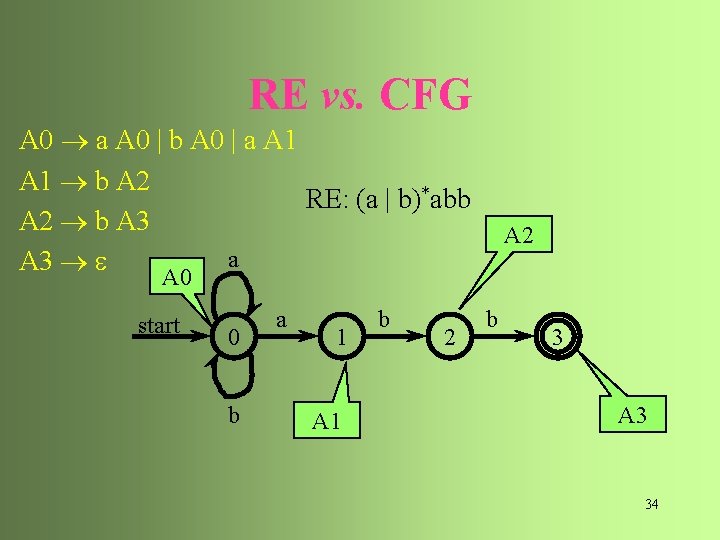

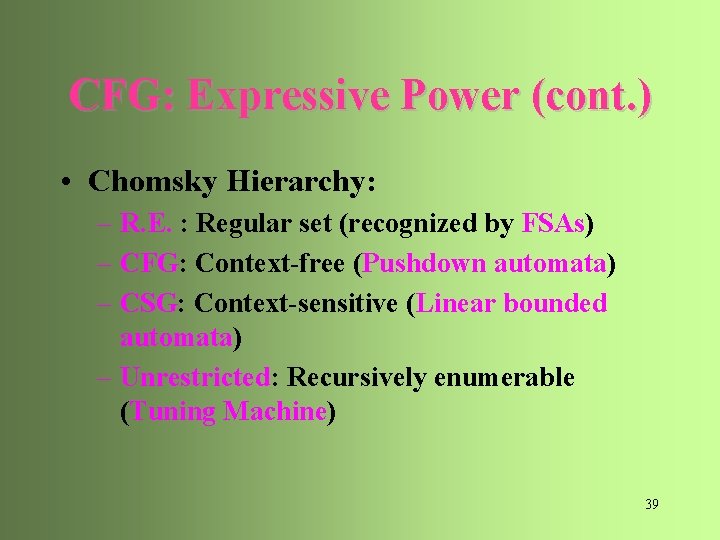

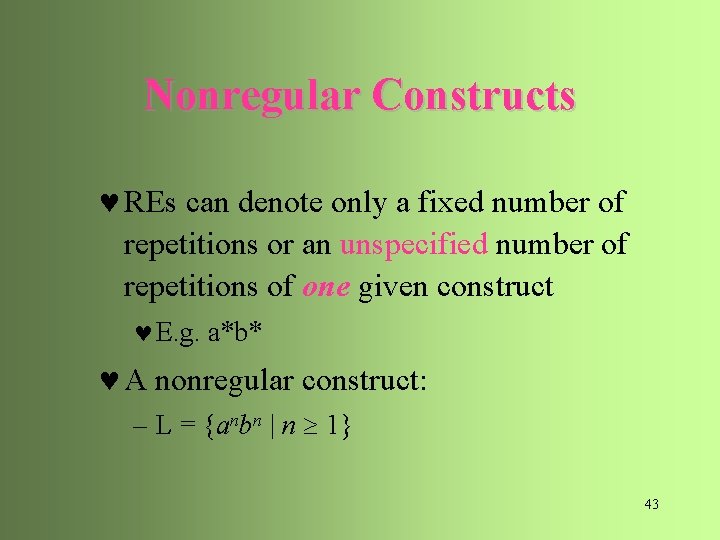

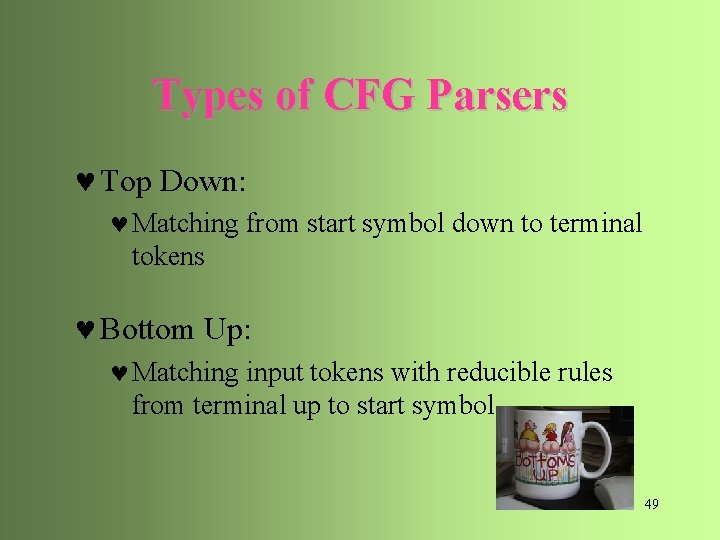

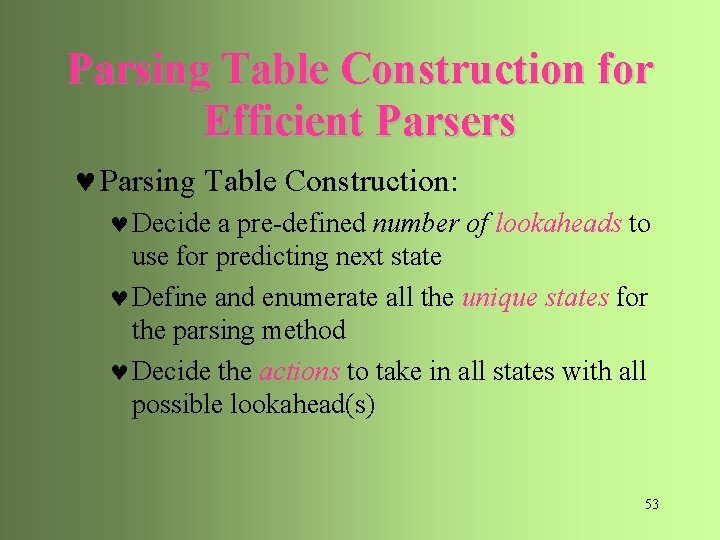

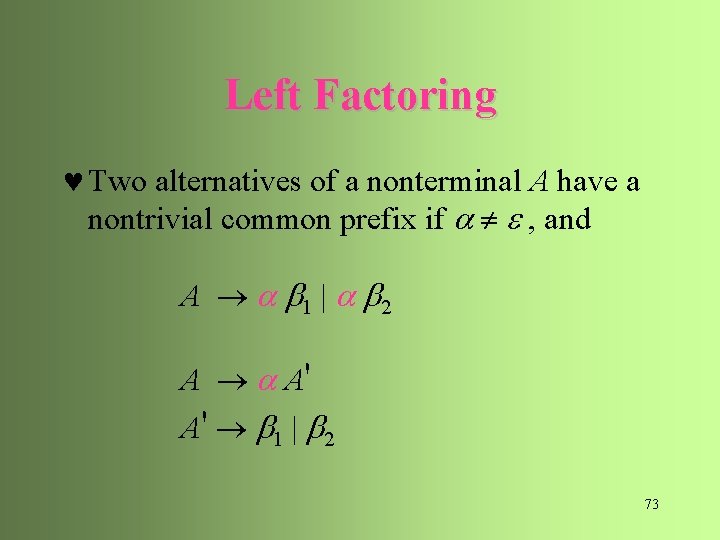

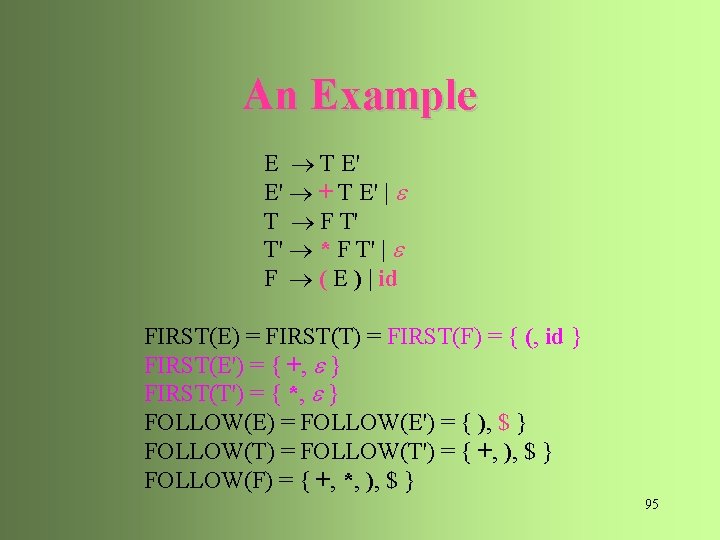

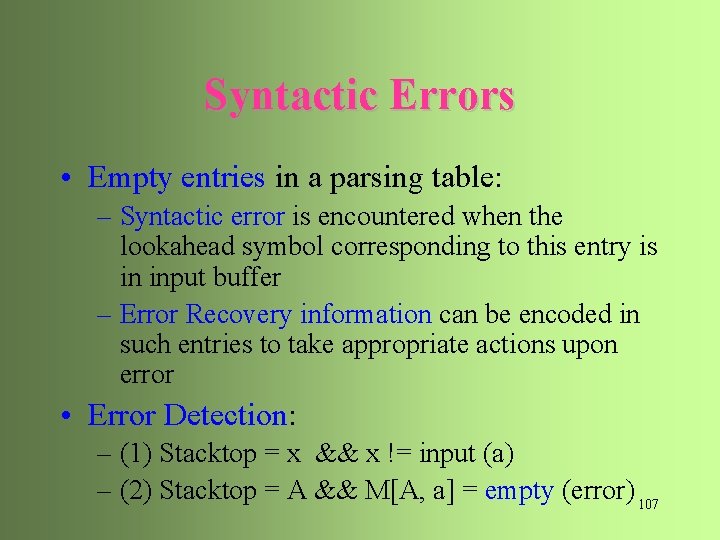

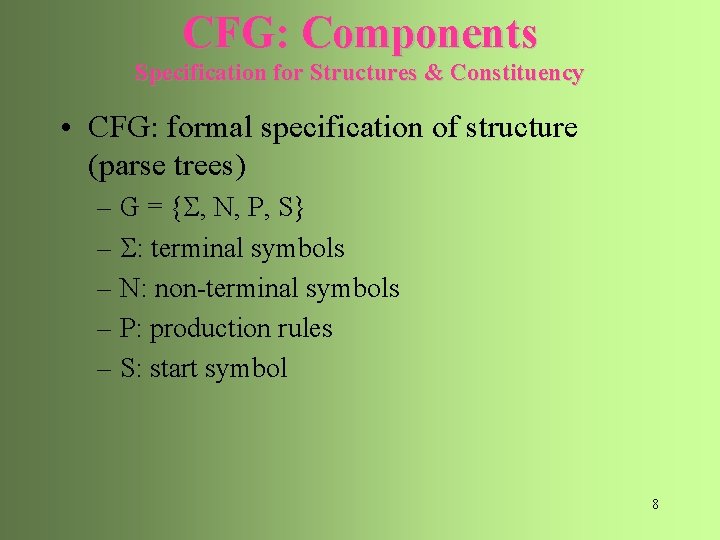

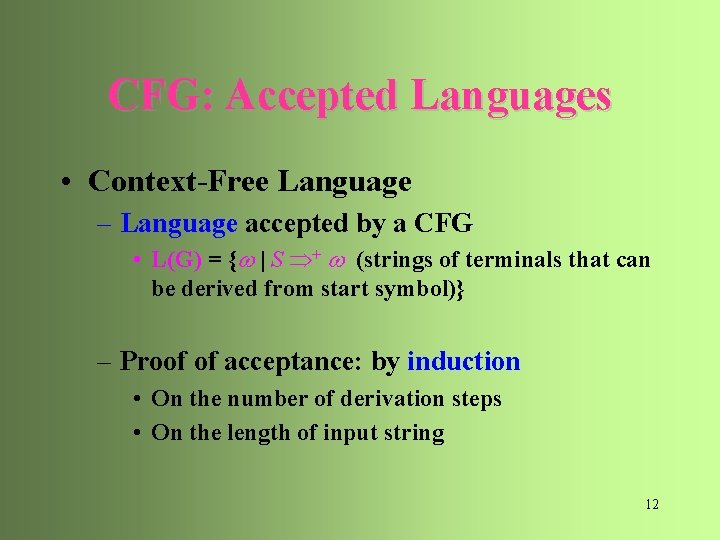

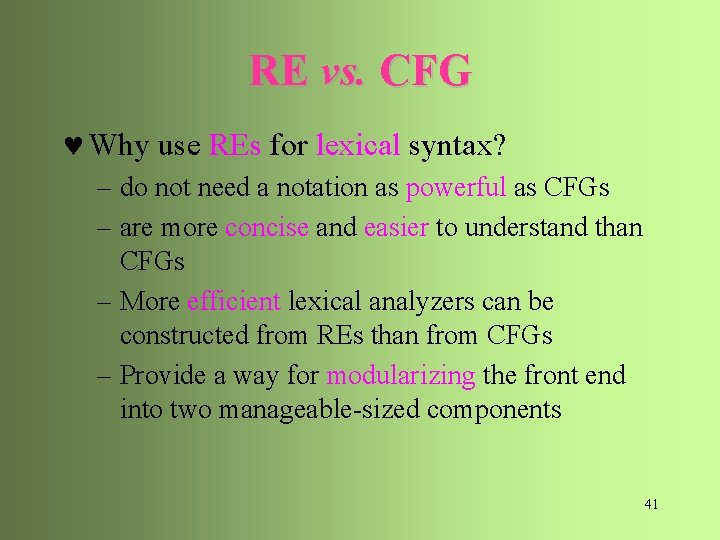

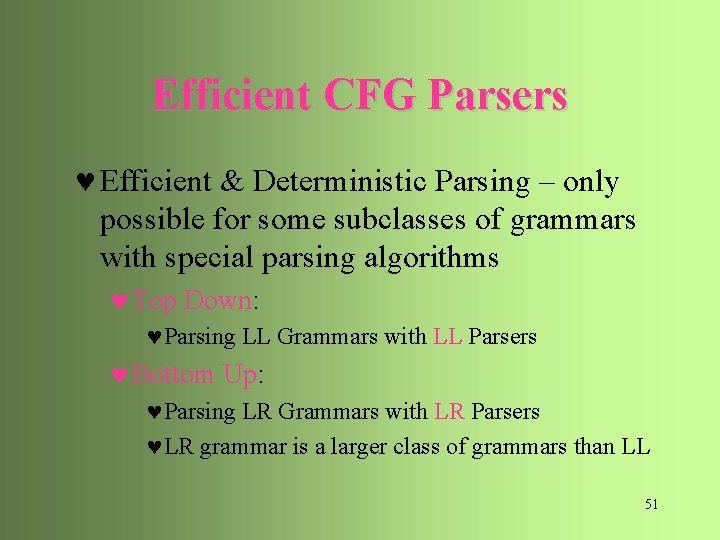

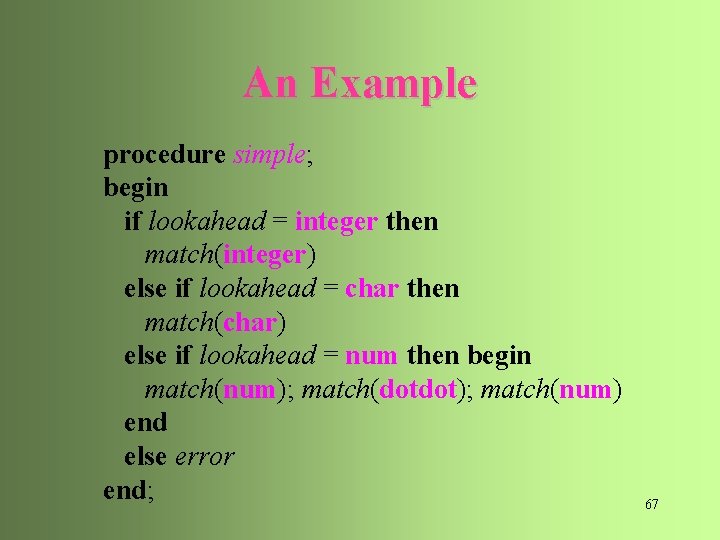

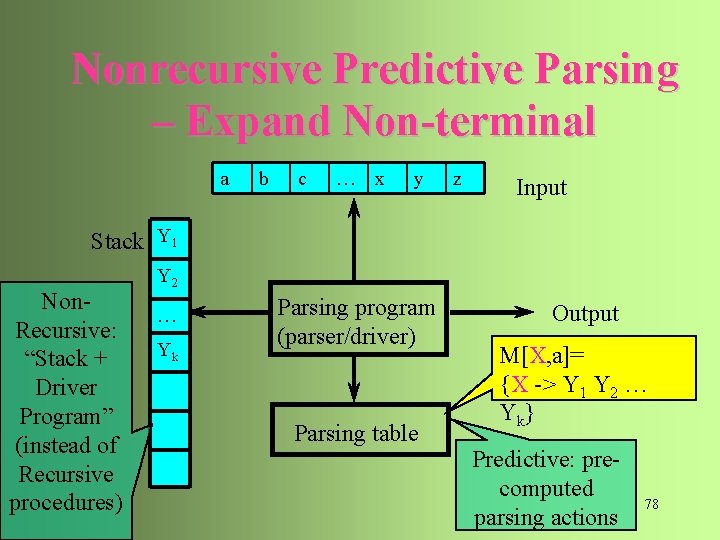

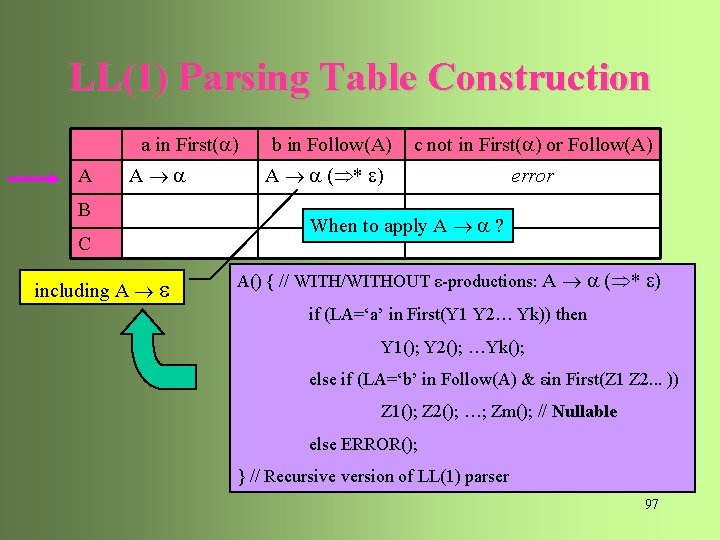

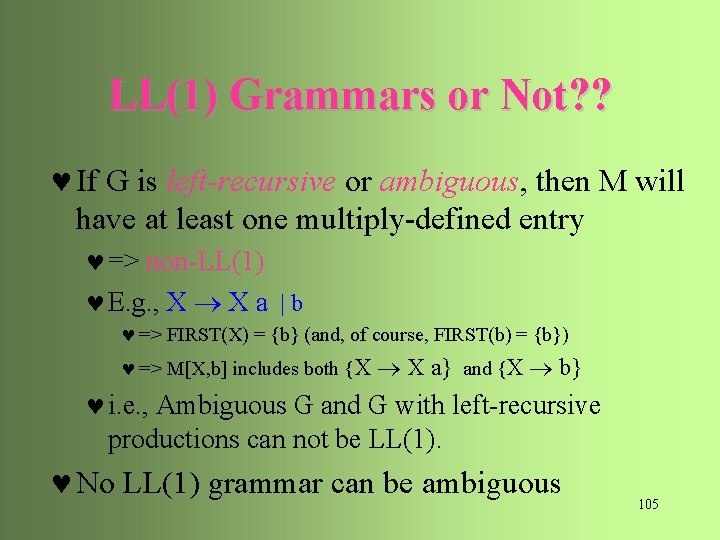

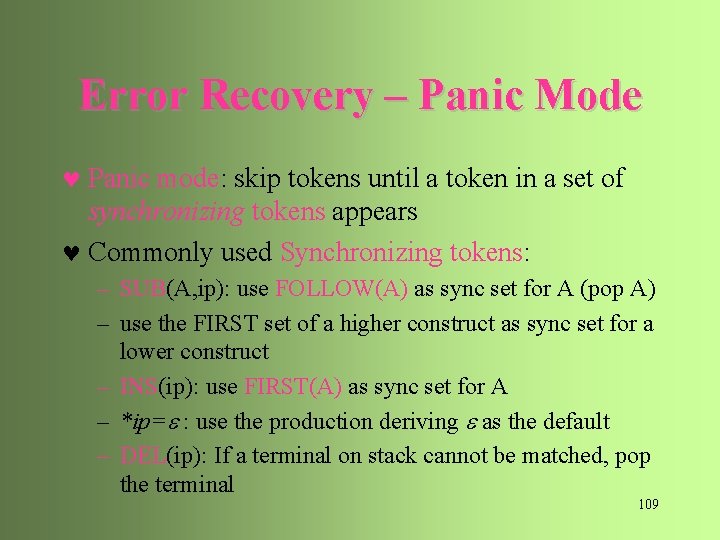

An Example

- type → simple | id | array [ simple ] of type
- simple → integer | char | num dotdot num

![An Example array num dotdot num of integer type array simple An Example array [ num dotdot num ] of integer type array [ simple](https://slidetodoc.com/presentation_image/13e68b1758d58bb45a134b8ebdf49f65/image-62.jpg)

An Example

- Input: array [ num dotdot num ] of integer
- Figure: the parse tree, in bracketed notation [type array '[' [simple num dotdot num] ']' of [type [simple integer]]]

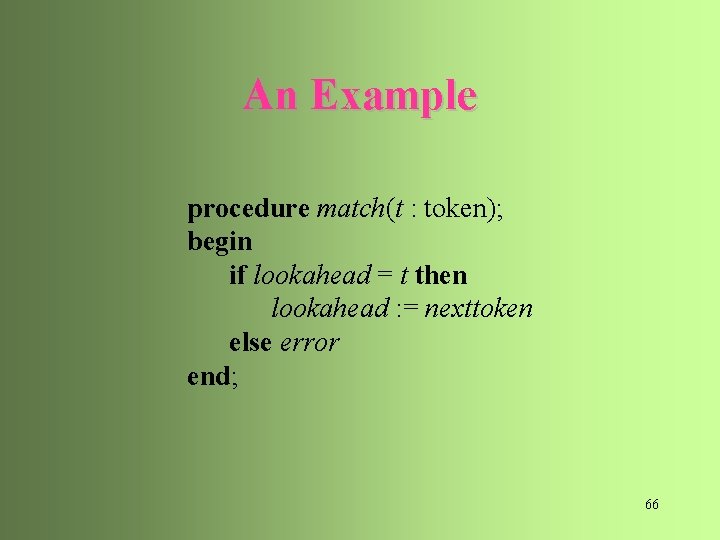

An Example: recursive-descent procedures for the grammar above

    procedure type;
    begin
        if lookahead is in { integer, char, num } then
            simple
        else if lookahead = id then
            match(id)
        else if lookahead = array then begin
            match(array); match('['); simple; match(']'); match(of); type
        end
        else error
    end;

    procedure match(t : token);
    begin
        if lookahead = t then
            lookahead := nexttoken
        else error
    end;

    procedure simple;
    begin
        if lookahead = integer then
            match(integer)
        else if lookahead = char then
            match(char)
        else if lookahead = num then begin
            match(num); match(dotdot); match(num)
        end
        else error
    end;
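The three procedures translate almost line for line into a small runnable parser. The following Python sketch assumes the input is already a list of token names; the class `TypeParser`, the exception `ParseError`, and the end marker `$` are additions for this illustration and do not appear in the slides.

```python
class ParseError(Exception):
    """Raised when the lookahead does not fit any alternative."""


class TypeParser:
    def __init__(self, tokens):
        self.tokens = list(tokens) + ["$"]  # "$" marks the end of input
        self.pos = 0

    @property
    def lookahead(self):
        return self.tokens[self.pos]

    def match(self, expected):
        if self.lookahead != expected:
            raise ParseError(f"expected {expected!r}, found {self.lookahead!r}")
        self.pos += 1

    def type_(self):
        # type -> simple | id | array [ simple ] of type
        if self.lookahead in ("integer", "char", "num"):
            self.simple()
        elif self.lookahead == "id":
            self.match("id")
        elif self.lookahead == "array":
            self.match("array")
            self.match("[")
            self.simple()
            self.match("]")
            self.match("of")
            self.type_()
        else:
            raise ParseError(f"unexpected token {self.lookahead!r} in type")

    def simple(self):
        # simple -> integer | char | num dotdot num
        if self.lookahead in ("integer", "char"):
            self.match(self.lookahead)
        elif self.lookahead == "num":
            self.match("num")
            self.match("dotdot")
            self.match("num")
        else:
            raise ParseError(f"unexpected token {self.lookahead!r} in simple")


def parse_type(tokens):
    """Return True if `tokens` is a complete `type`; raise ParseError otherwise."""
    parser = TypeParser(tokens)
    parser.type_()
    parser.match("$")
    return True


print(parse_type("array [ num dotdot num ] of integer".split()))
```

Each method inspects only the current lookahead before choosing an alternative, which is what makes this recursive descent parser predictive.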

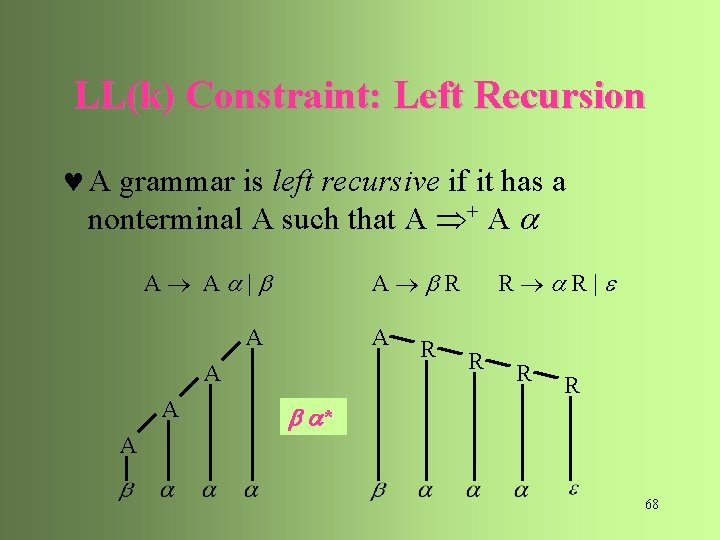

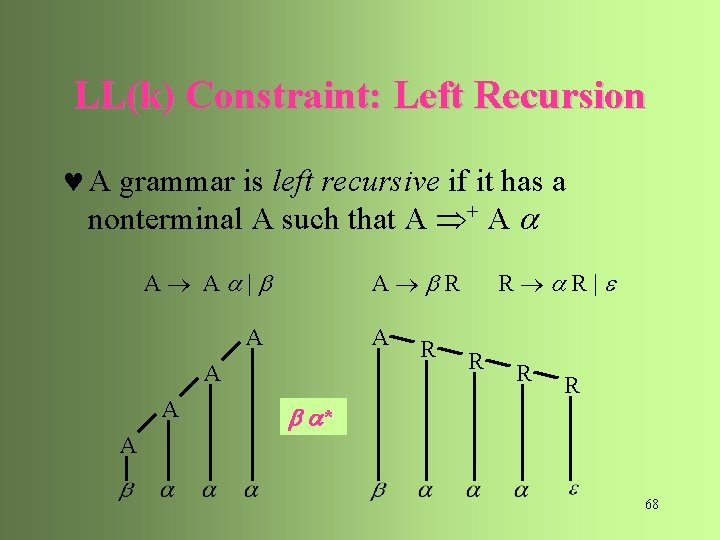

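LL(k) Constraint: Left Recursion

- A grammar is left recursive if it has a nonterminal A such that A ⇒+ A α
- The left-recursive pair A → A α | β can be replaced by the right-recursive A → β R, R → α R | ε
- Both forms generate β α*: A ⇒* β α … α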

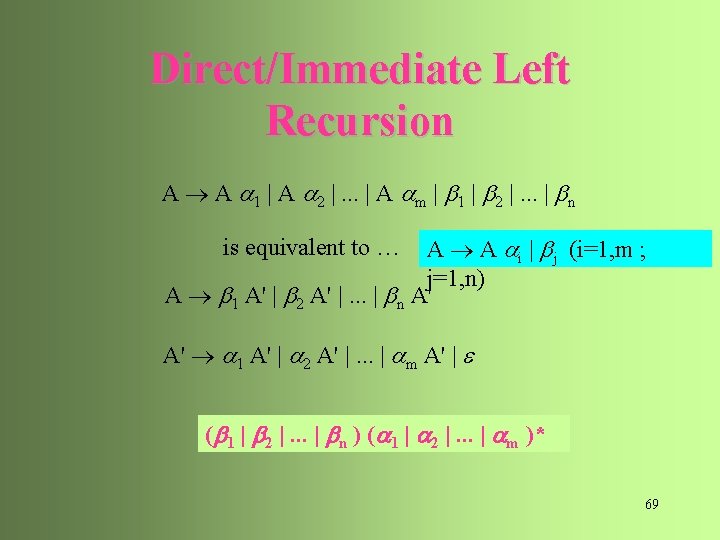

Direct/Immediate Left Recursion

- A → A α1 | A α2 | ... | A αm | β1 | β2 | ... | βn, i.e., A → A αi | βj (i = 1..m; j = 1..n)
- is equivalent to
  - A → β1 A' | β2 A' | ... | βn A'
  - A' → α1 A' | α2 A' | ... | αm A' | ε
- Both generate (β1 | β2 | ... | βn) (α1 | α2 | ... | αm)*

An Example

- Left-recursive grammar:
  - E → E + T | T
  - T → T * F | F
  - F → ( E ) | id
- After eliminating left recursion:
  - E → T E'
  - E' → + T E' | ε
  - T → F T'
  - T' → * F T' | ε
  - F → ( E ) | id

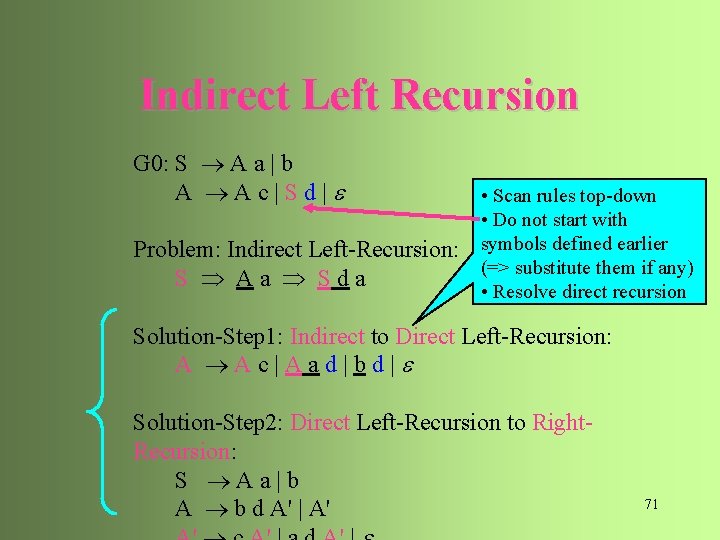

Indirect Left Recursion

- G0: S → A a | b, A → A c | S d | ε
- Problem: indirect left recursion, S ⇒ A a ⇒ S d a
- Approach:
  - Scan the rules top-down
  - Do not let a right-hand side start with a symbol defined earlier (substitute such symbols if any)
  - Resolve the resulting direct recursion
- Solution, step 1 (indirect to direct left recursion): A → A c | A a d | b d | ε
- Solution, step 2 (direct left recursion to right recursion):
  - S → A a | b
  - A → b d A' | A'
  - A' → c A' | a d A' | ε

Indirect Left Recursion

Input. Grammar G with no cycles or ε-productions.

Output. An equivalent grammar with no left recursion.

Method. Arrange the nonterminals in some order A1, A2, ..., An, then run:

    for i := 1 to n do begin
        // Step 1: substitute leading symbols of Ai that are previous Aj's
        for j := 1 to i - 1 do begin
            replace each production of the form Ai → Aj γ (j < i)
            by the productions Ai → δ1 γ | δ2 γ | ... | δk γ,
            where Aj → δ1 | δ2 | ... | δk are all the current Aj-productions
        end;
        // Step 2
        eliminate direct left recursion among the Ai-productions
    end
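The algorithm can be sketched as follows. Grammars are represented as dictionaries from a nonterminal to a list of right-hand sides (lists of symbols, with the empty list standing for ε); this representation and the name `eliminate_left_recursion` are assumptions of the sketch. The test grammar is G0 from the previous slide, which contains an ε-production but happens to work anyway.

```python
def eliminate_left_recursion(grammar, order):
    """Remove direct and indirect left recursion, visiting nonterminals in `order`."""
    g = {nt: [list(alt) for alt in alts] for nt, alts in grammar.items()}
    for i, ai in enumerate(order):
        # Step 1: replace Ai -> Aj gamma (j < i) by Ai -> delta gamma for each Aj -> delta.
        for aj in order[:i]:
            expanded = []
            for alt in g[ai]:
                if alt and alt[0] == aj:
                    expanded.extend(delta + alt[1:] for delta in g[aj])
                else:
                    expanded.append(alt)
            g[ai] = expanded
        # Step 2: eliminate direct left recursion among the Ai-productions.
        recursive = [alt[1:] for alt in g[ai] if alt and alt[0] == ai]
        if recursive:
            others = [alt for alt in g[ai] if not alt or alt[0] != ai]
            new_nt = ai + "'"
            g[ai] = [beta + [new_nt] for beta in others]
            g[new_nt] = [alpha + [new_nt] for alpha in recursive] + [[]]
    return g


G0 = {
    "S": [["A", "a"], ["b"]],
    "A": [["A", "c"], ["S", "d"], []],
}
RESULT = eliminate_left_recursion(G0, ["S", "A"])
for nonterminal, alternatives in RESULT.items():
    print(nonterminal, "->", " | ".join(" ".join(alt) or "ε" for alt in alternatives))
```

The result depends on the chosen ordering of nonterminals: a different order yields a different, but still equivalent, grammar.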

Left Factoring

- Two alternatives of a nonterminal A have a nontrivial common prefix if α ≠ ε and A → α β1 | α β2
- Left factoring rewrites them as
  - A → α A'
  - A' → β1 | β2

An Example

- Before left factoring:
  - S → i E t S | i E t S e S | a
  - E → b
- After left factoring:
  - S → i E t S S' | a
  - S' → e S | ε
  - E → b
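A single round of left factoring can be sketched like this. The sketch groups alternatives by their first symbol and factors out the longest common prefix of each group; the list-of-symbols representation and the names `common_prefix` and `left_factor_once` are illustrative assumptions, and repeated rounds may be needed for grammars with nested common prefixes.

```python
def common_prefix(sequences):
    """Longest common prefix of several symbol lists."""
    prefix = []
    for symbols in zip(*sequences):
        if all(s == symbols[0] for s in symbols):
            prefix.append(symbols[0])
        else:
            break
    return prefix


def left_factor_once(nt, alternatives):
    """Factor out the common prefix of alternatives that share a first symbol."""
    groups = {}
    for alt in alternatives:
        key = alt[0] if alt else None   # None collects the epsilon alternative
        groups.setdefault(key, []).append(alt)
    result = {nt: []}
    primes = 0
    for key, group in groups.items():
        if key is None or len(group) == 1:
            result[nt].extend(group)    # nothing to factor
            continue
        prefix = common_prefix(group)
        primes += 1
        new_nt = nt + "'" * primes
        result[nt].append(prefix + [new_nt])
        result[new_nt] = [alt[len(prefix):] for alt in group]
    return result


FACTORED = left_factor_once(
    "S",
    [["i", "E", "t", "S"], ["i", "E", "t", "S", "e", "S"], ["a"]],
)
print(FACTORED)
```

The new nonterminal defers the choice between the two alternatives until after the common prefix has been read, which is what a one-symbol lookahead needs.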

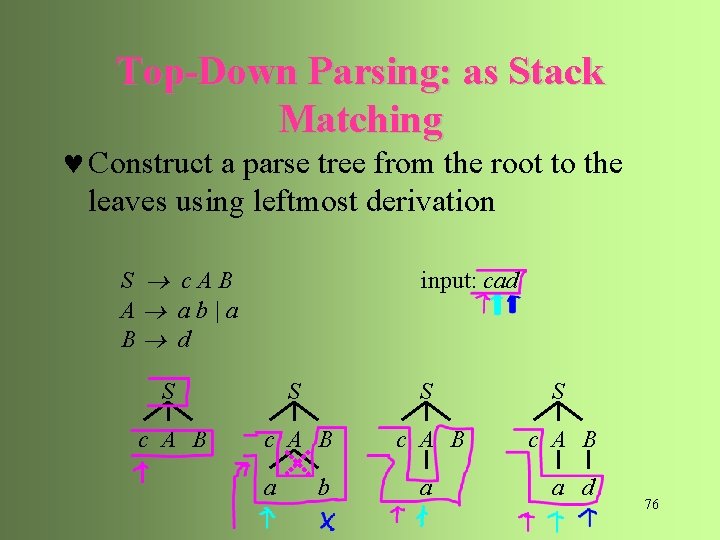

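Top-Down Parsing: as Stack Matching

- Construct a parse tree from the root to the leaves using a leftmost derivation
- Grammar: S → c A B, A → a b | a, B → d
- Input: cad
- Figure: parse trees rooted at S with children c, A, B, showing the alternatives A → a b and A → a, and B → d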

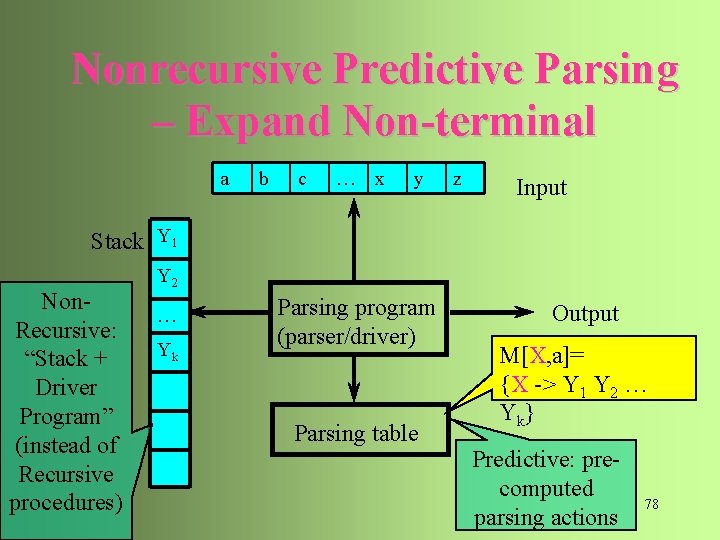

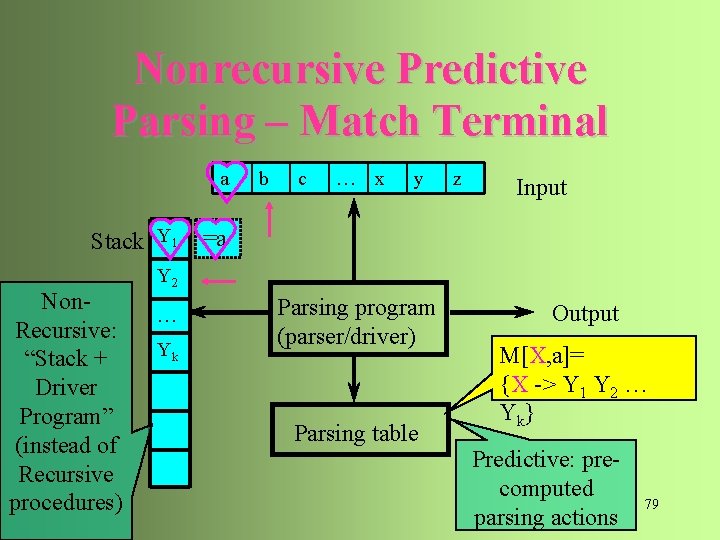

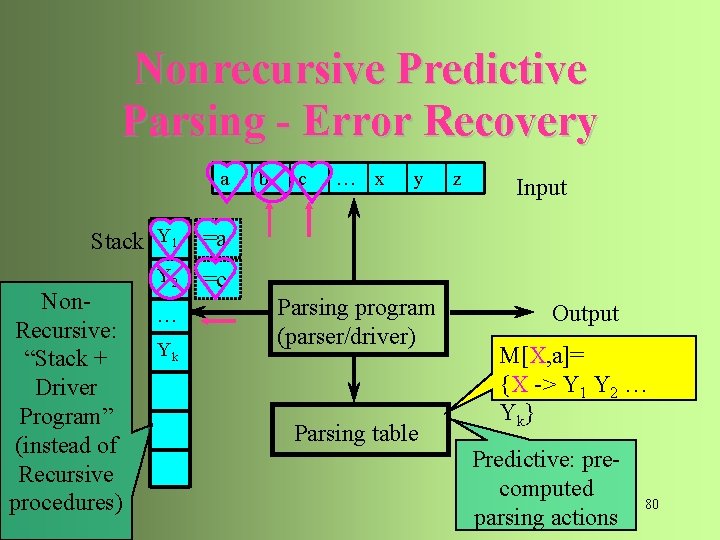

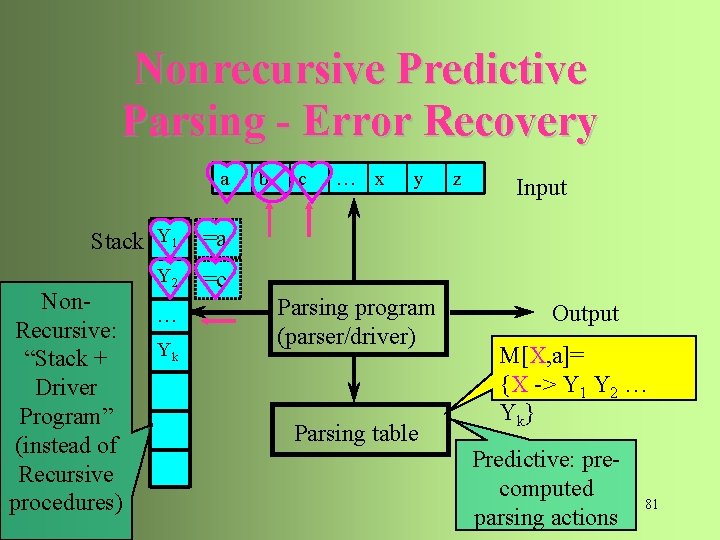

Nonrecursive Predictive Parsing: General State (figure)

- Input buffer: a b c … x y z
- Stack: X on top
- Parsing program (parser/driver), consulting the parsing table and producing the output
- Parsing table entry: M[X, a] = {X → Y1 Y2 … Yk}
- Nonrecursive: "stack + driver program" (instead of recursive procedures)
- Predictive: precomputed parsing actions

Nonrecursive Predictive Parsing: Expand Non-terminal (figure)

- Input buffer: a b c … x y z
- Stack: X has been replaced by Y1 Y2 … Yk, with Y1 on top
- Parsing table entry used: M[X, a] = {X → Y1 Y2 … Yk}
- Nonrecursive: "stack + driver program" (instead of recursive procedures)
- Predictive: precomputed parsing actions

Nonrecursive Predictive Parsing: Match Terminal (figure)

- Input buffer: a b c … x y z
- Stack: Y1 Y2 … Yk, where the top symbol Y1 = a matches the current input symbol a
- Parsing table entry used earlier: M[X, a] = {X → Y1 Y2 … Yk}
- Nonrecursive: "stack + driver program" (instead of recursive procedures)
- Predictive: precomputed parsing actions

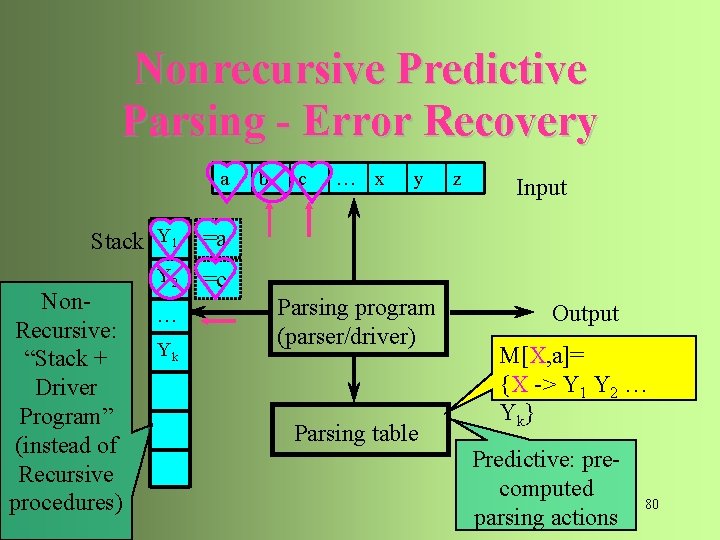

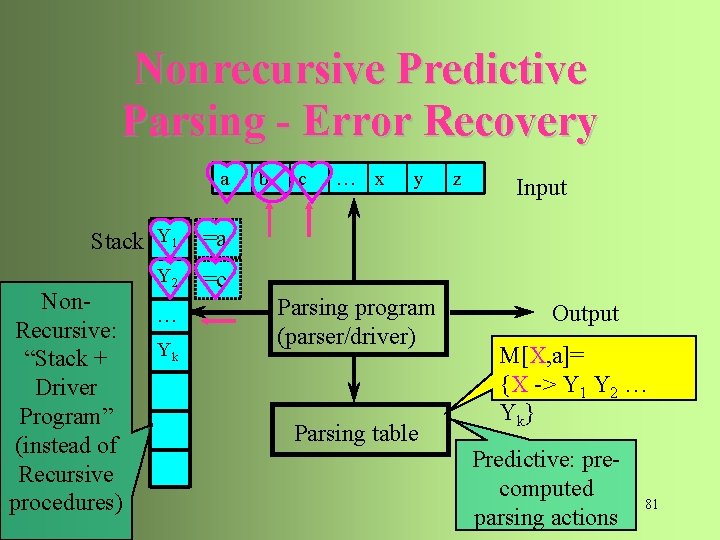

Nonrecursive Predictive Parsing: Error Recovery (figure)

- Input buffer: a b c … x y z
- Stack: Y1 = a, Y2 = c, …, Yk
- Parsing table entry used earlier: M[X, a] = {X → Y1 Y2 … Yk}
- Nonrecursive: "stack + driver program" (instead of recursive procedures)
- Predictive: precomputed parsing actions


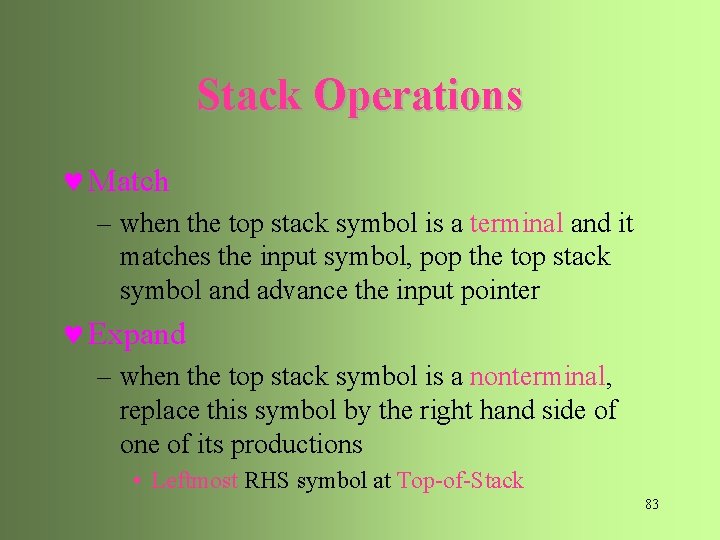

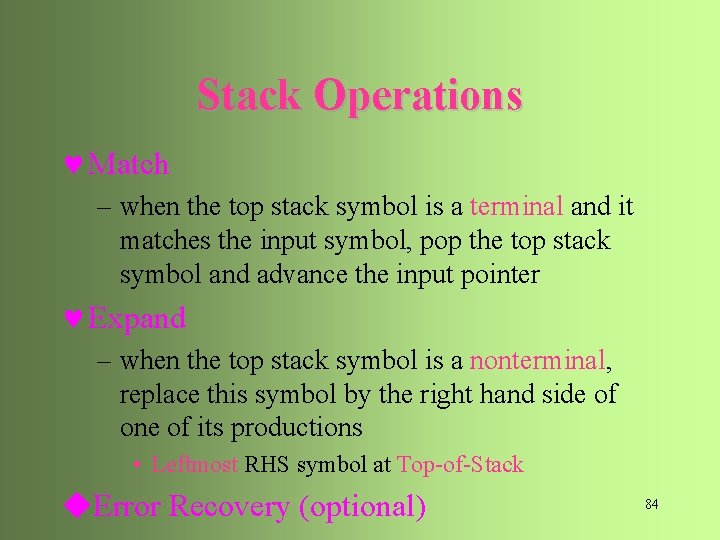

Stack Operations

- Match: when the top stack symbol is a terminal and it matches the input symbol, pop the top stack symbol and advance the input pointer
- Expand: when the top stack symbol is a nonterminal, replace this symbol by the right-hand side of one of its productions
  - the leftmost RHS symbol ends up at the top of the stack

Stack Operations (cont.)

- Match and Expand, as above
- Error recovery (optional)

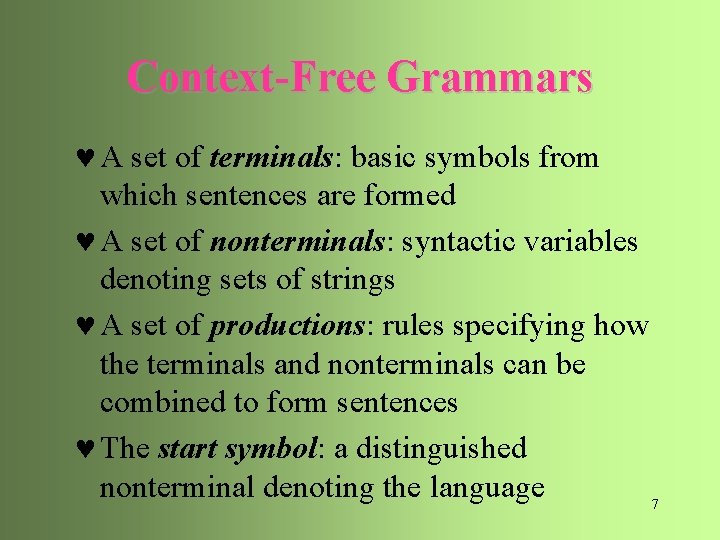

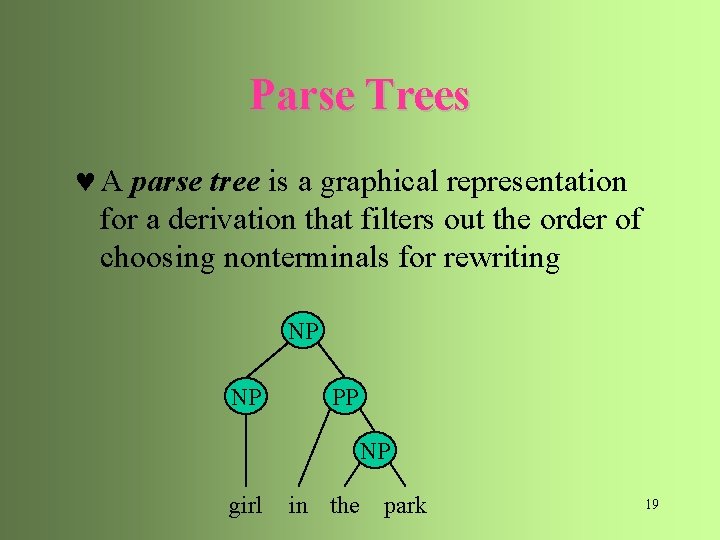

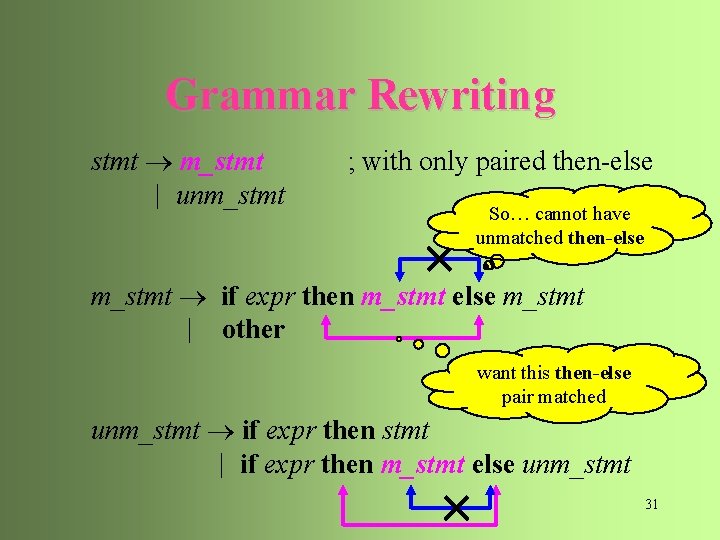

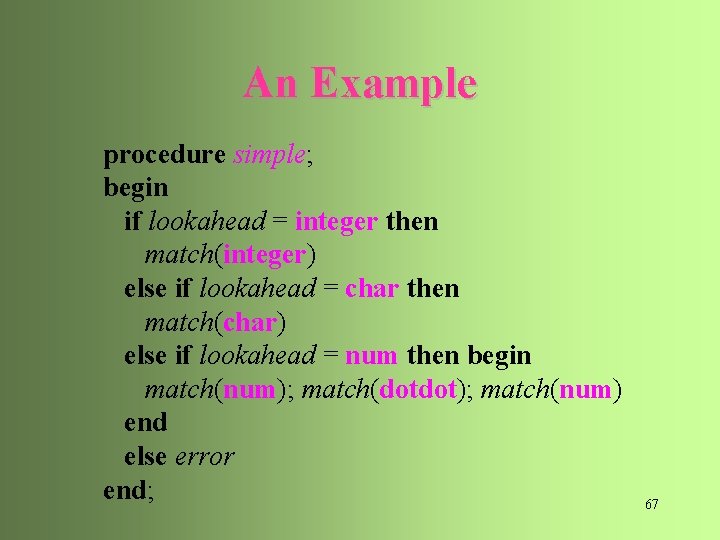

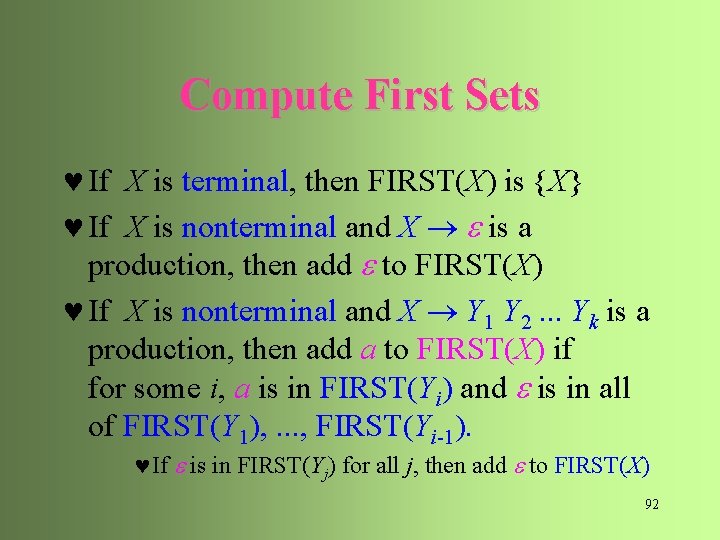

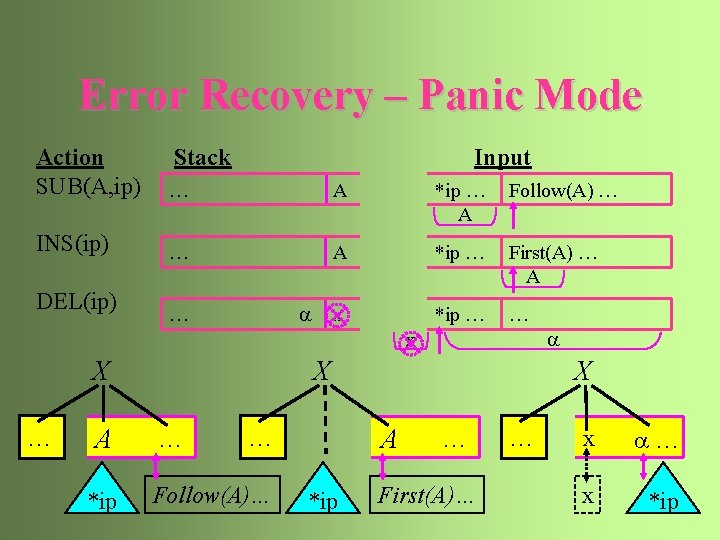

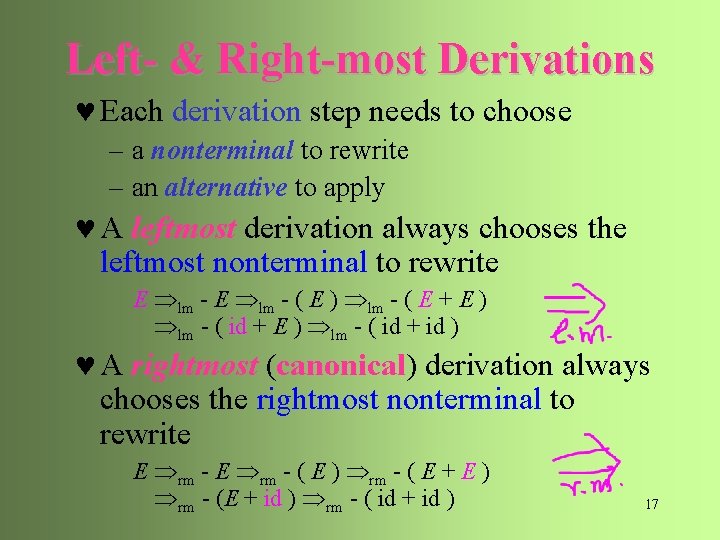

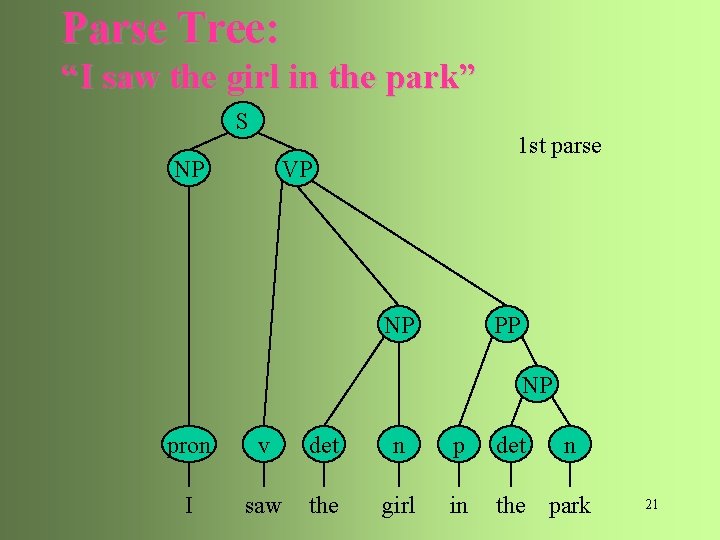

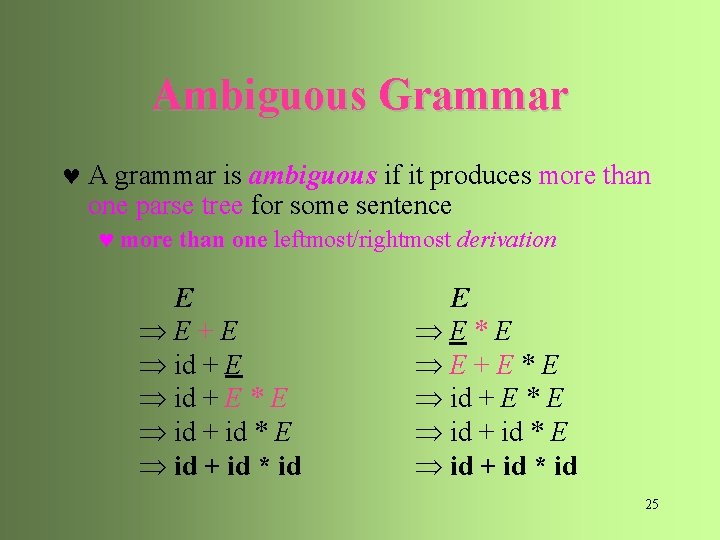

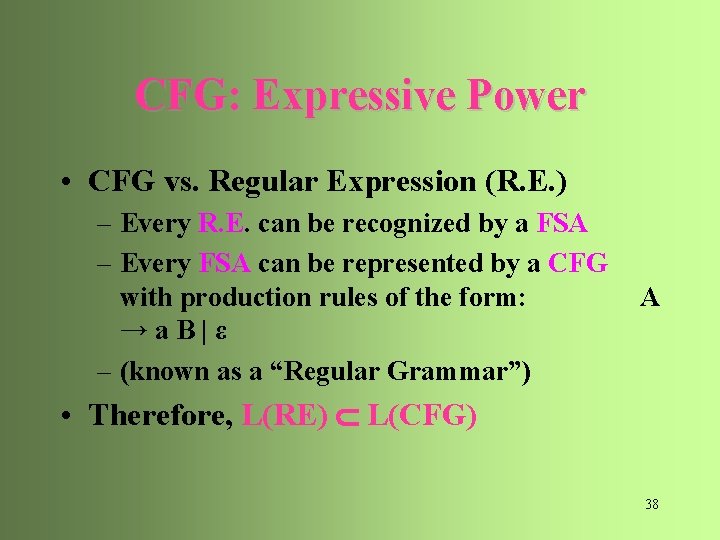

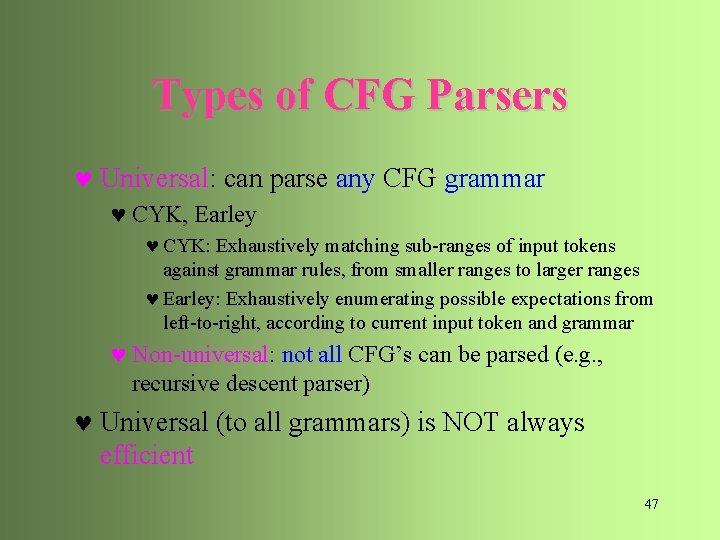

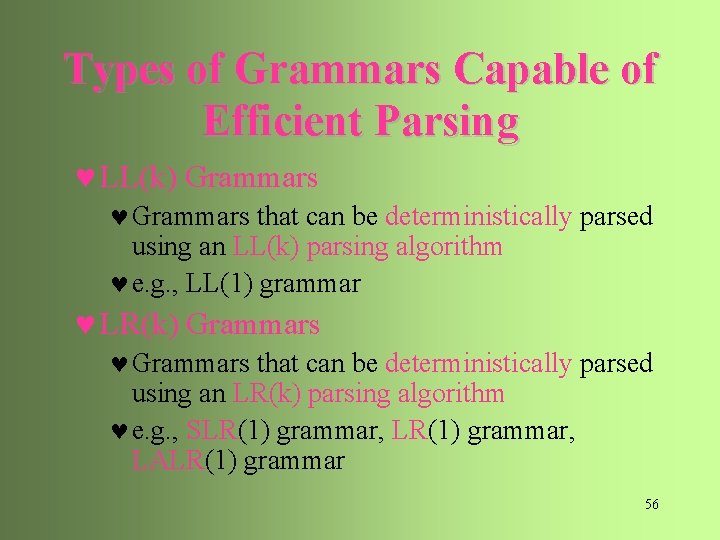

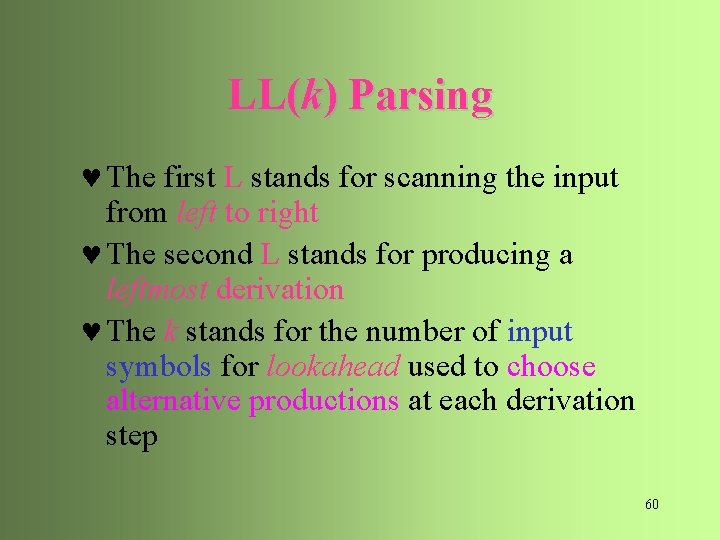

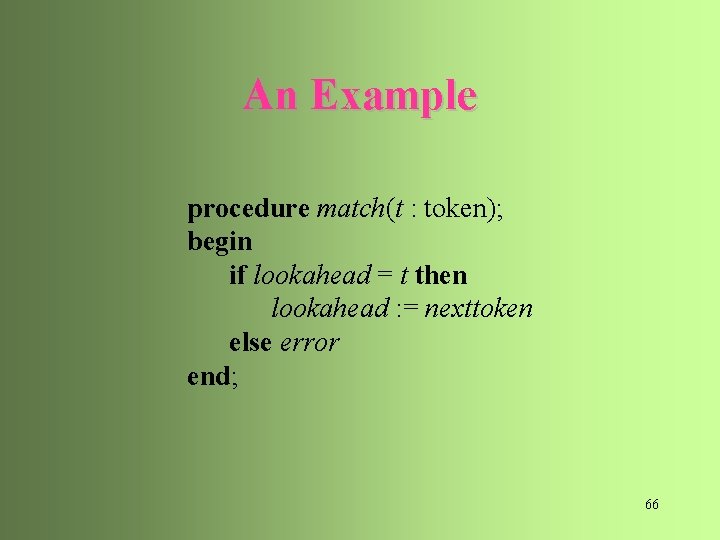

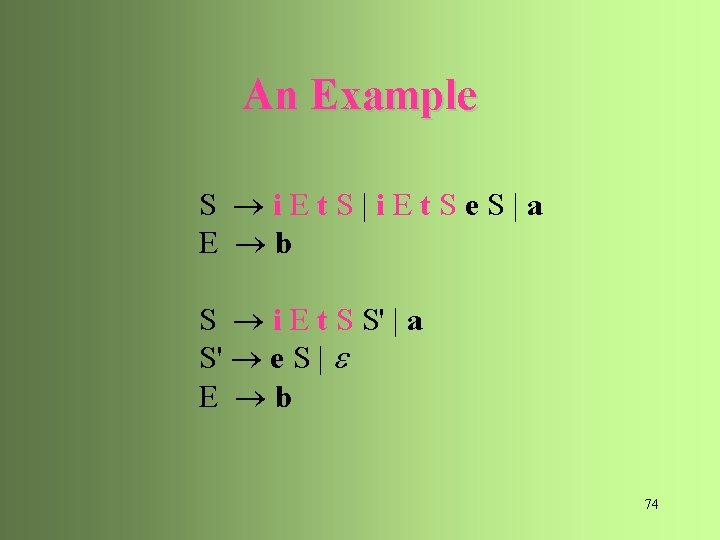

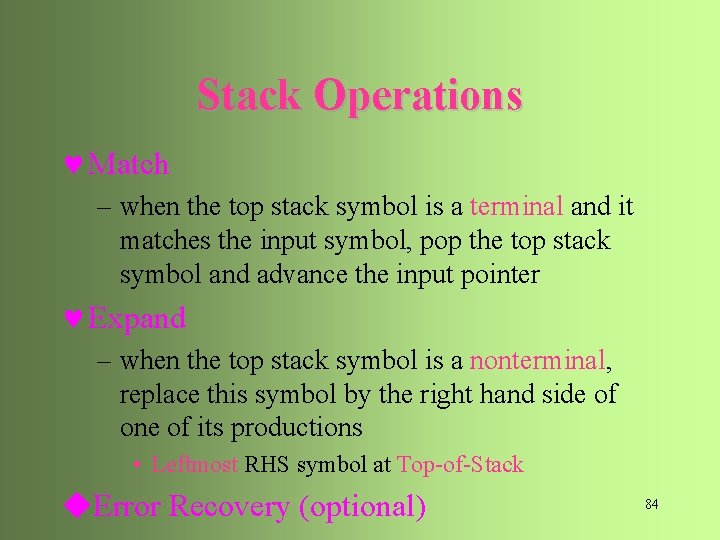

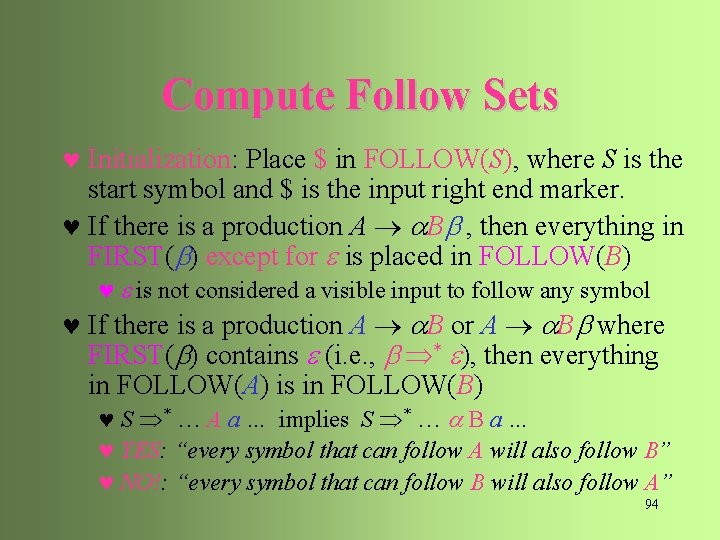

![An Example type simple id array simple of type simple An Example type simple | id | array [ simple ] of type simple](https://slidetodoc.com/presentation_image/13e68b1758d58bb45a134b8ebdf49f65/image-81.jpg)

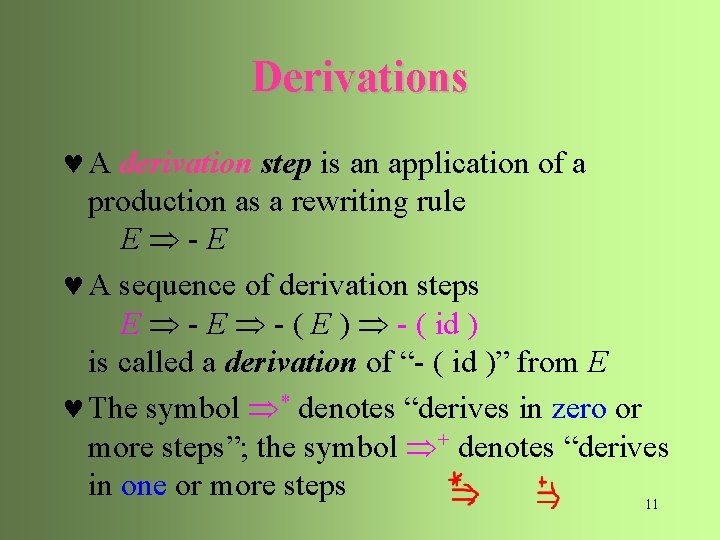

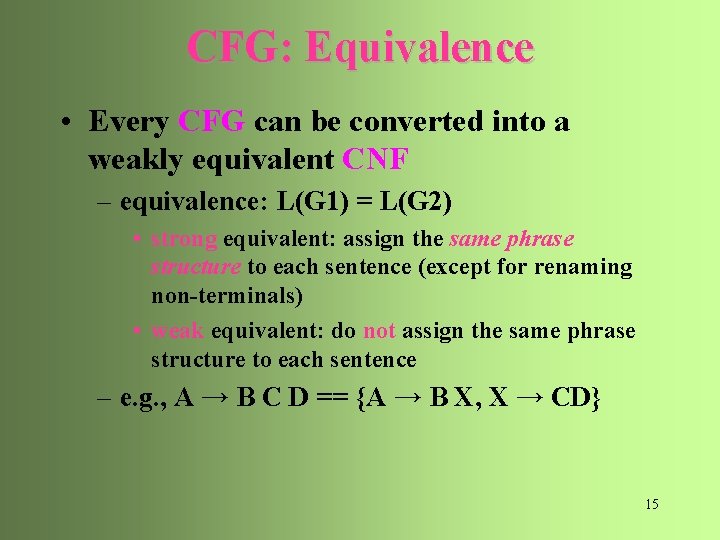

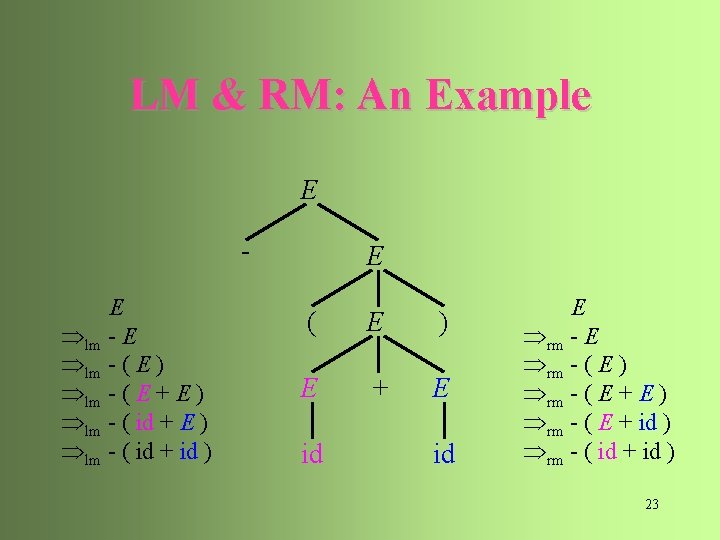

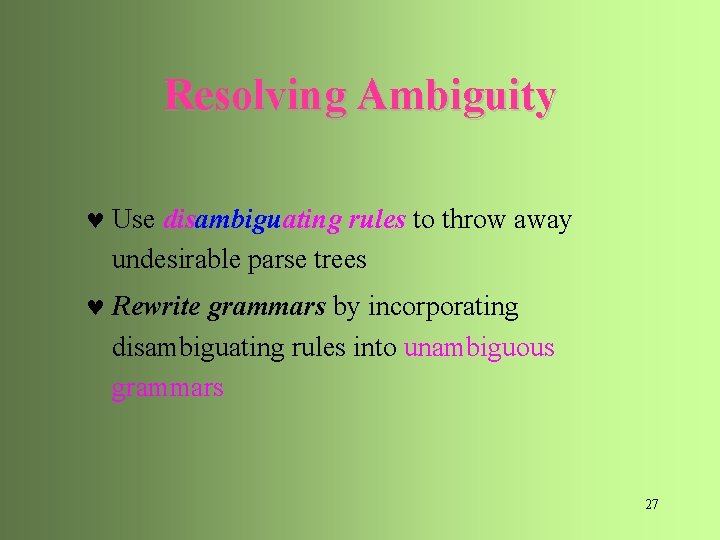

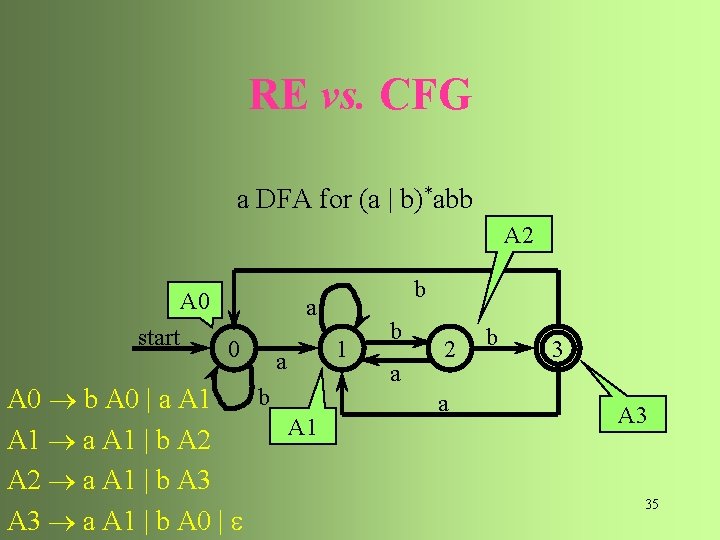

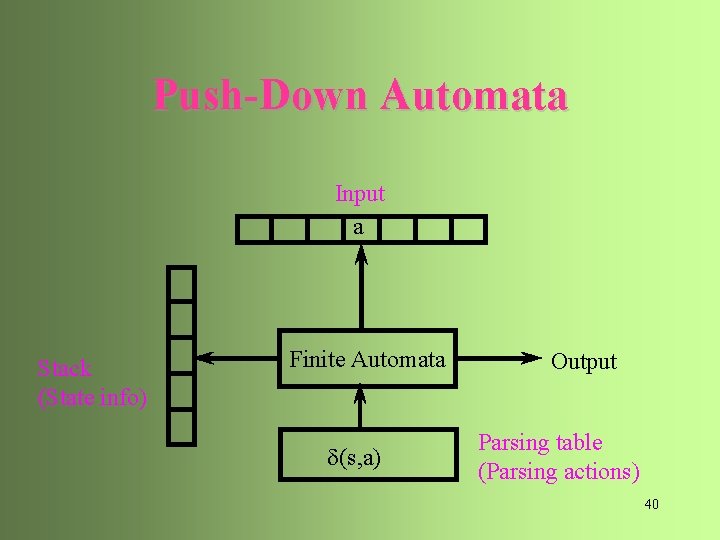

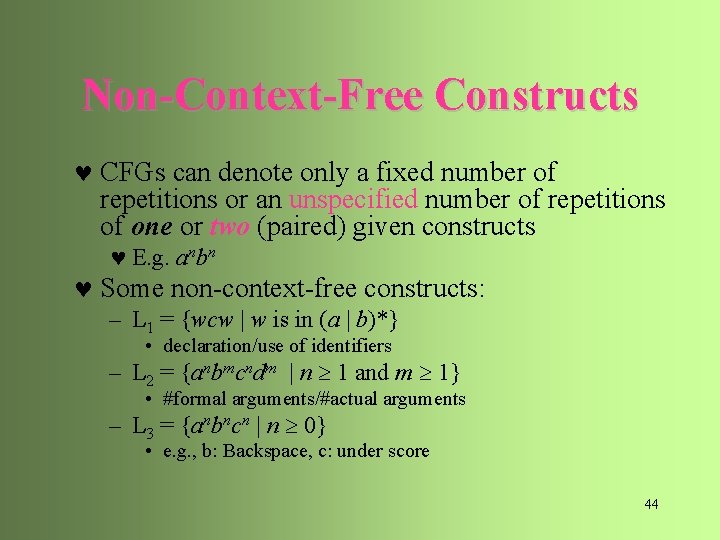

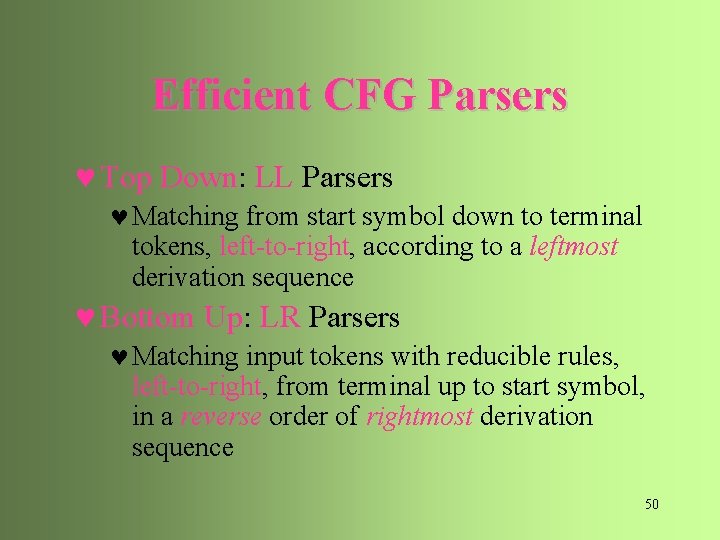

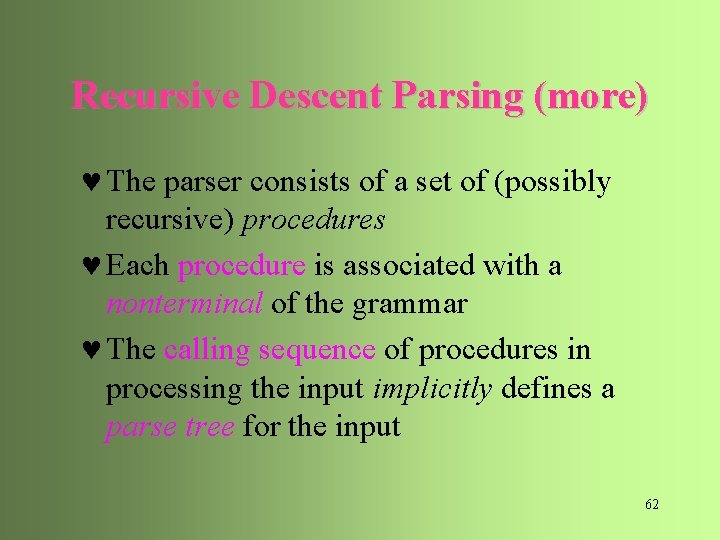

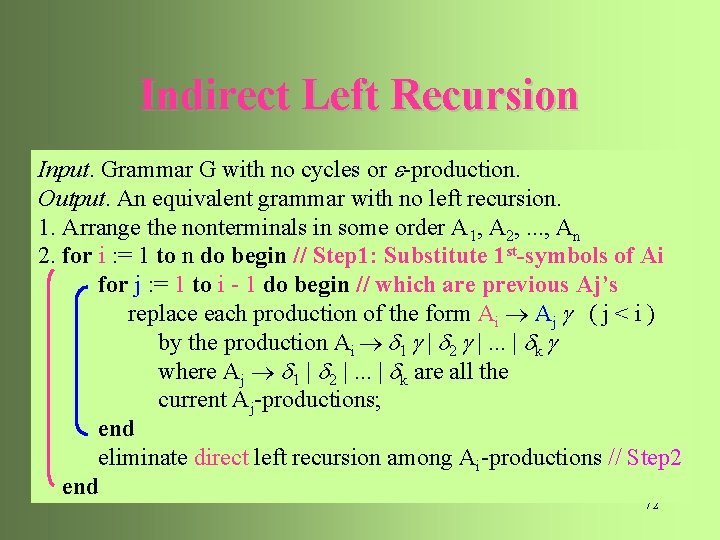

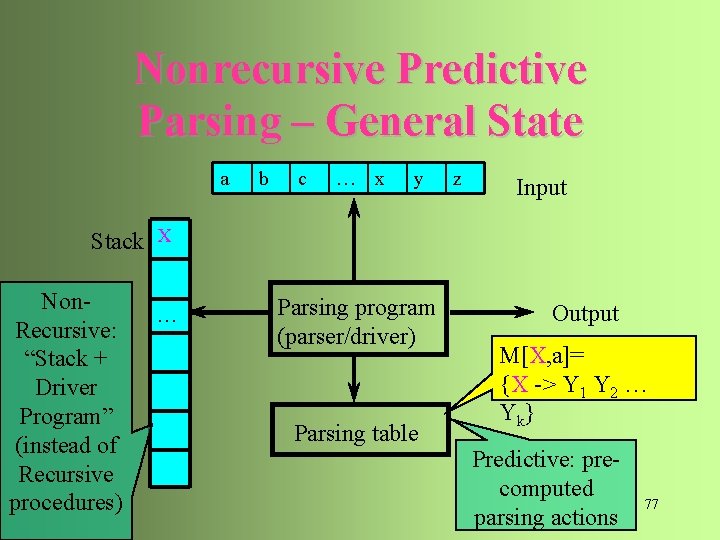

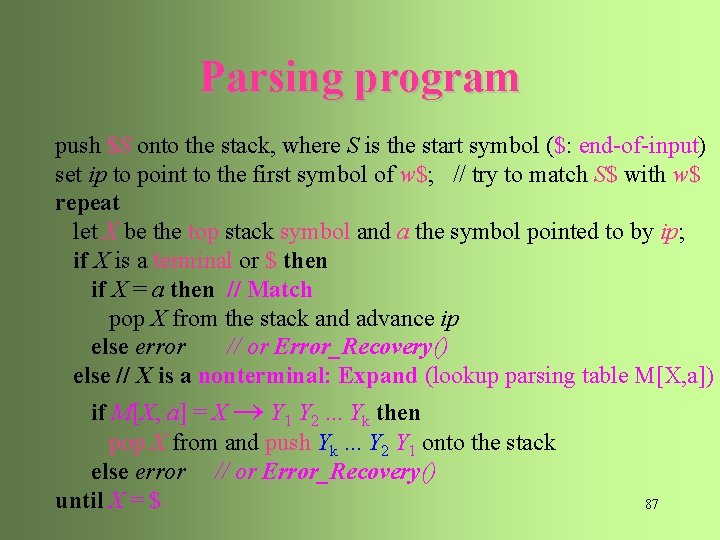

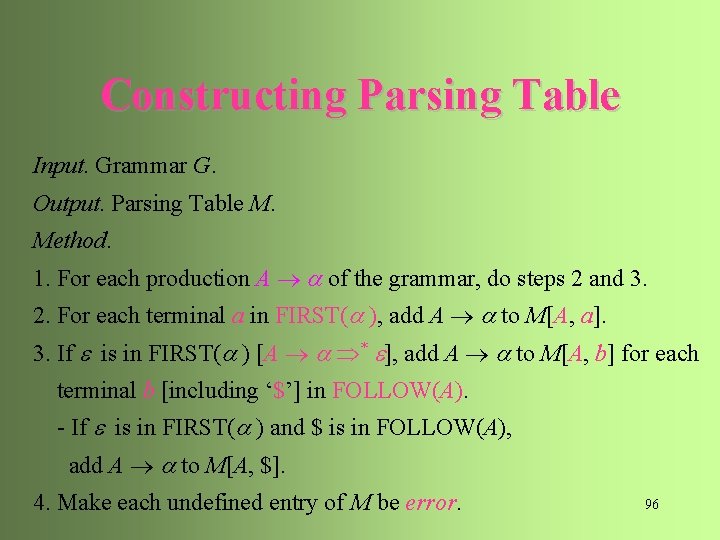

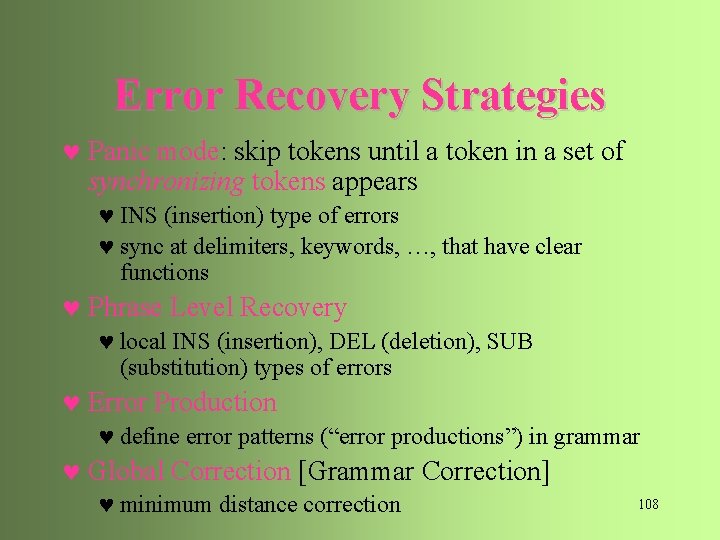

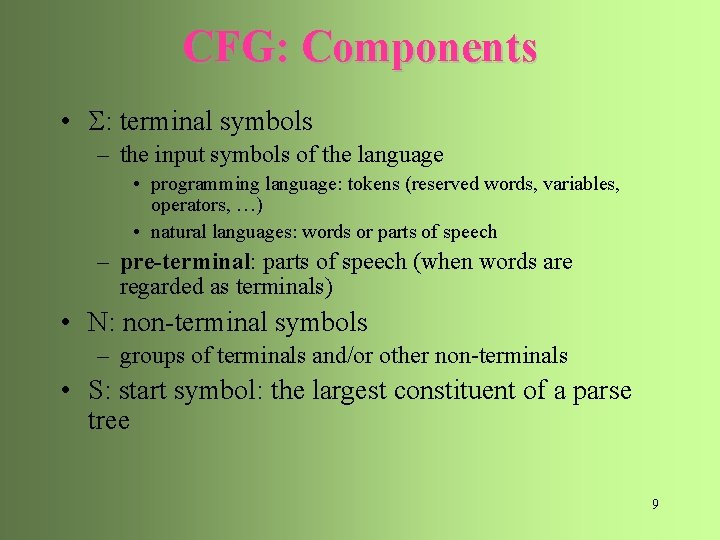

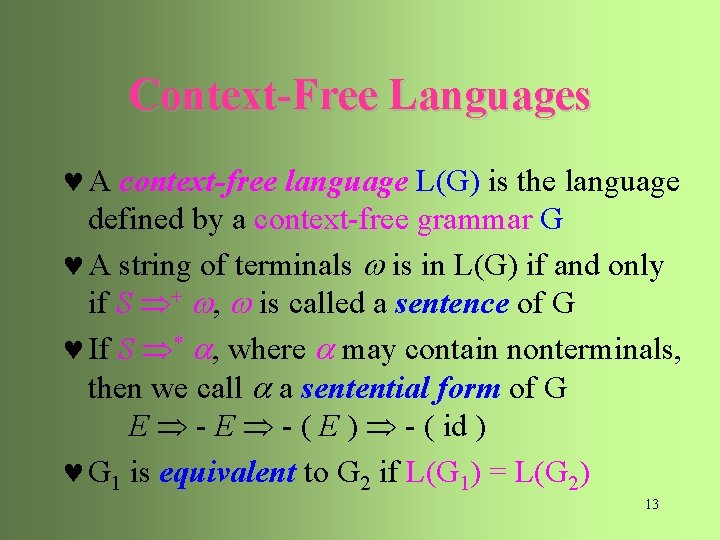

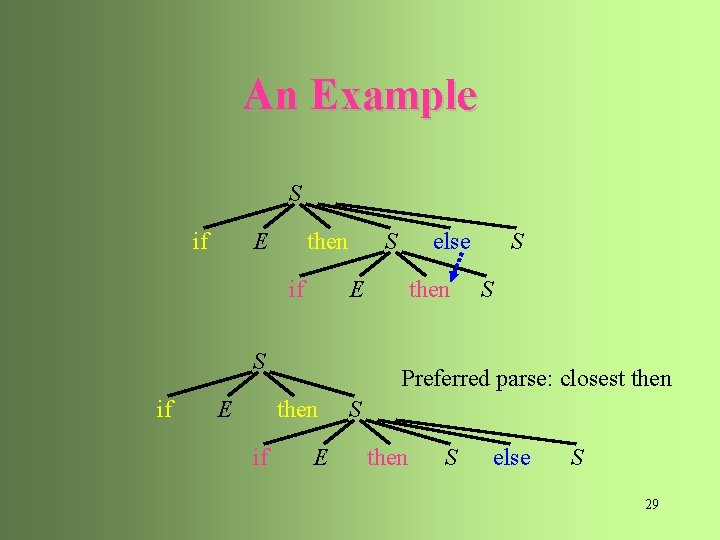

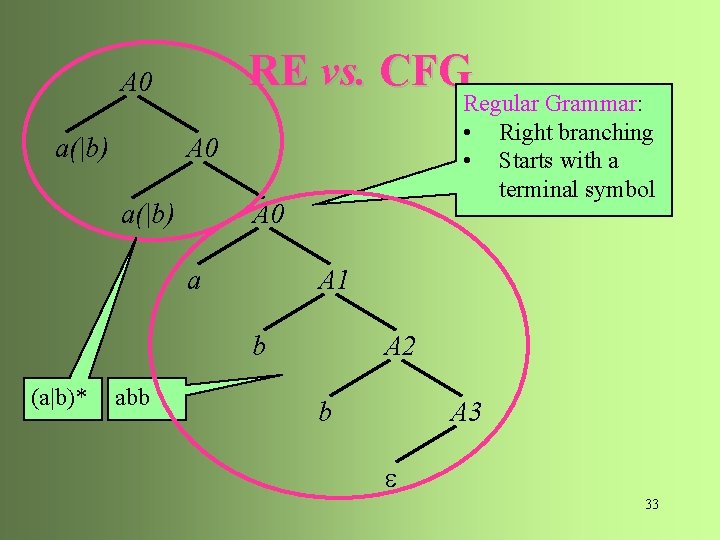

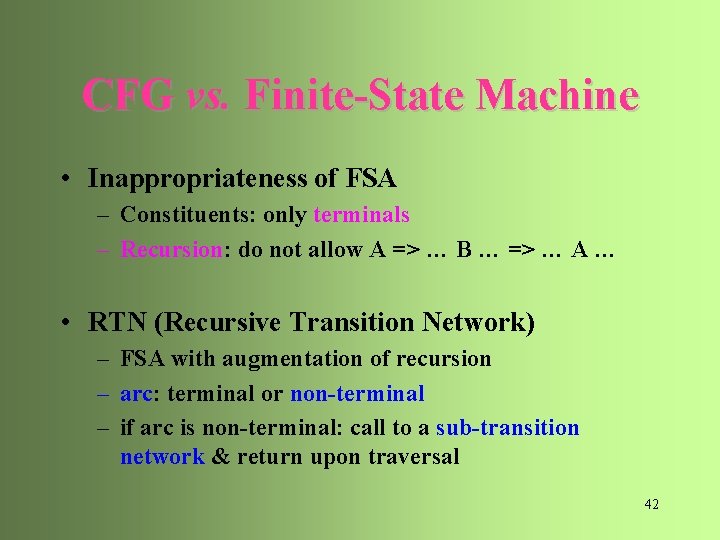

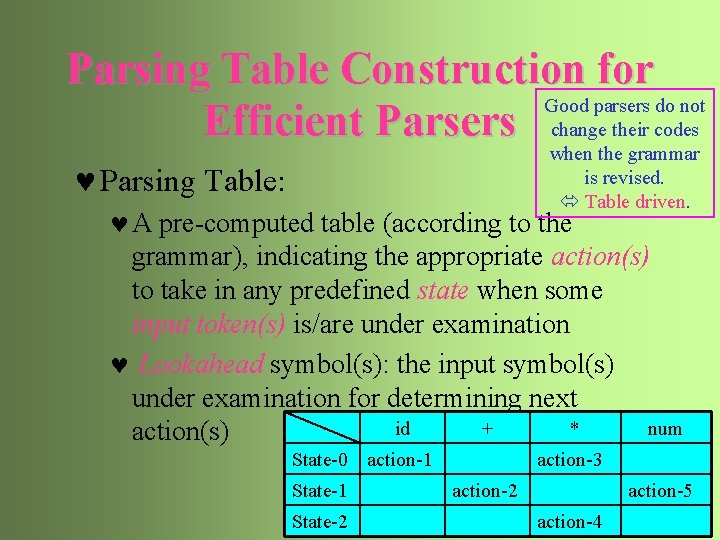

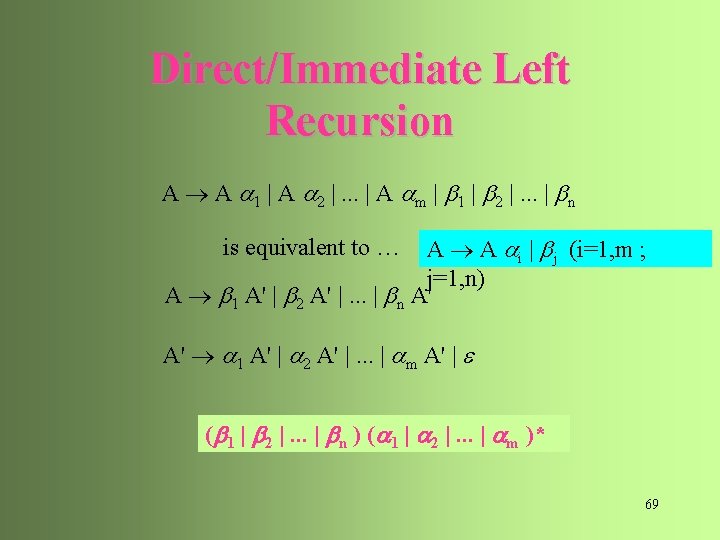

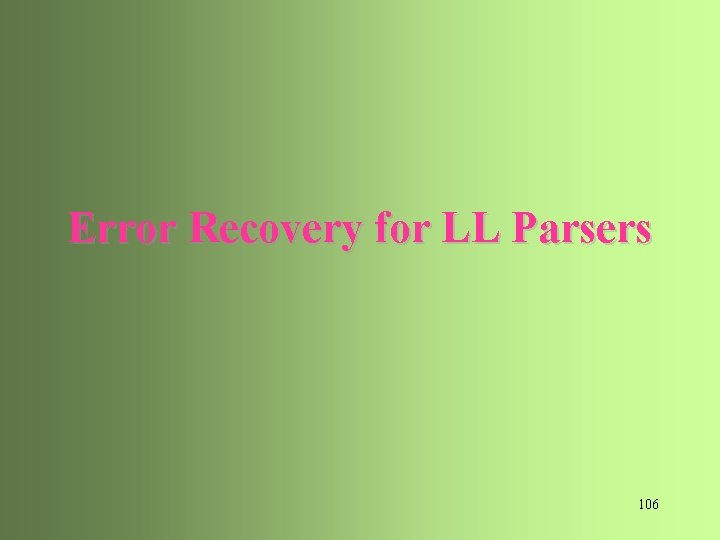


![An Example Action Stack Input E type array num dotdot num of An Example Action Stack Input E type array [ num dotdot num ] of](https://slidetodoc.com/presentation_image/13e68b1758d58bb45a134b8ebdf49f65/image-82.jpg)

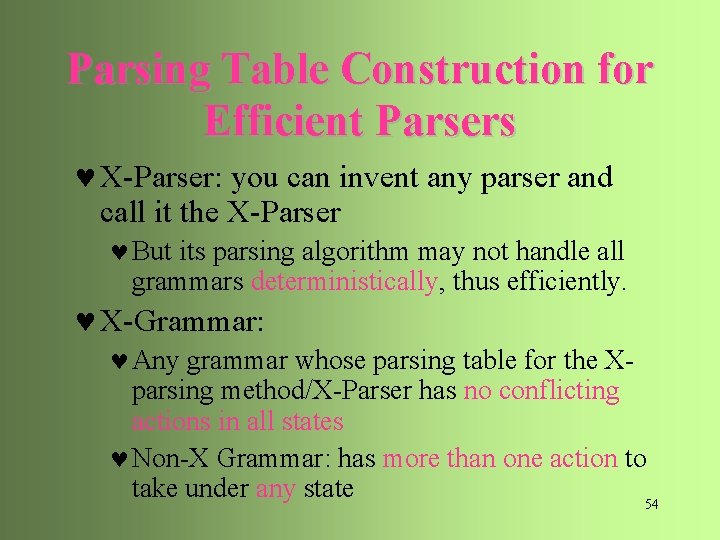

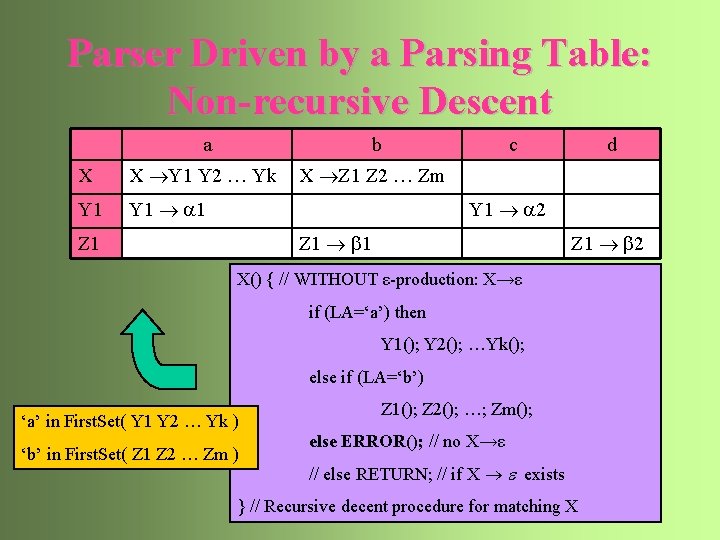

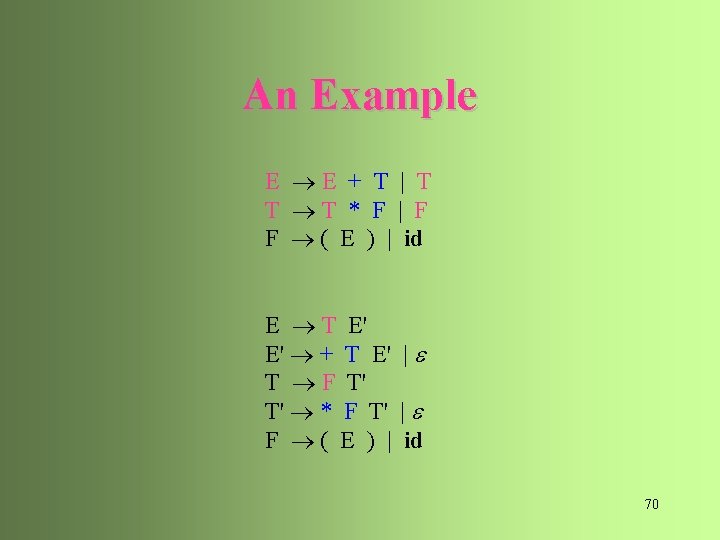

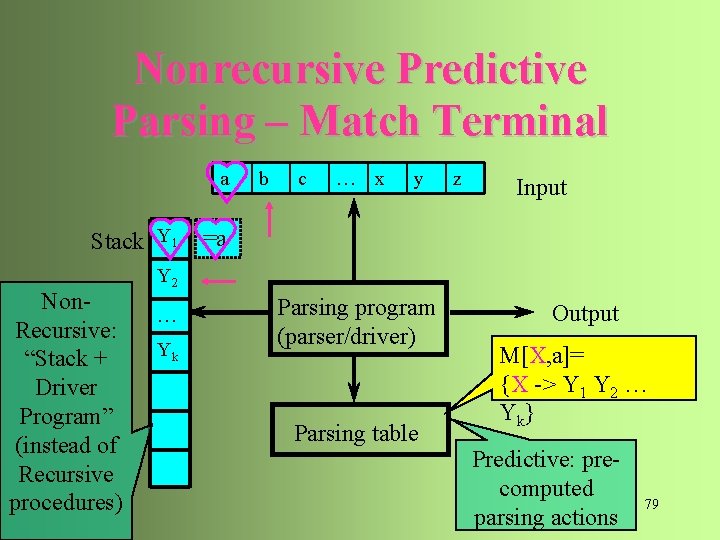

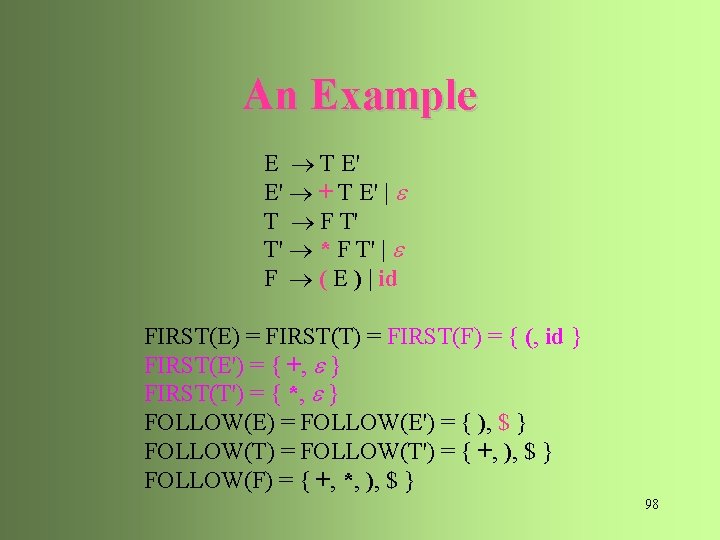

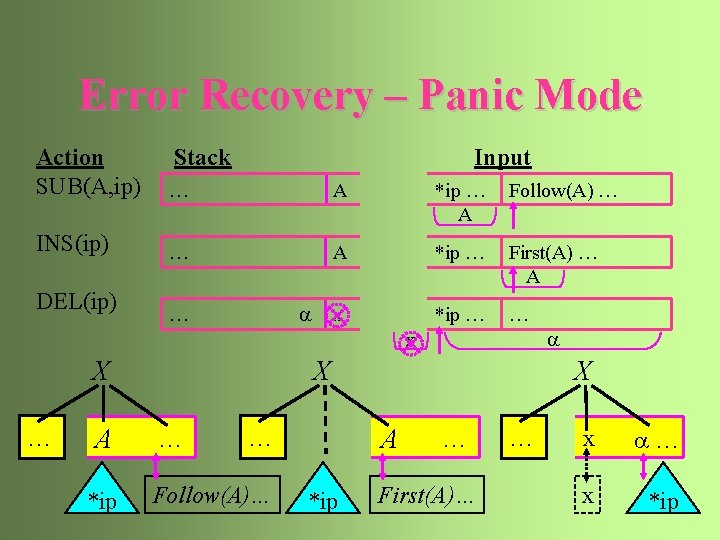

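An Example: parsing array [ num dotdot num ] of integer with the type grammar (E: expand, M: match; the top of the stack is at the right)

| Action | Stack | Input |
| --- | --- | --- |
| E | type | array [ num dotdot num ] of integer |
| M | type of ] simple [ array | array [ num dotdot num ] of integer |
| M | type of ] simple [ | [ num dotdot num ] of integer |
| E | type of ] simple | num dotdot num ] of integer |
| M | type of ] num dotdot num | num dotdot num ] of integer |
| M | type of ] num dotdot | dotdot num ] of integer |
| M | type of ] num | num ] of integer |
| M | type of ] | ] of integer |
| M | type of | of integer |
| E | type | integer |
| E | simple | integer |
| M | integer | integer |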

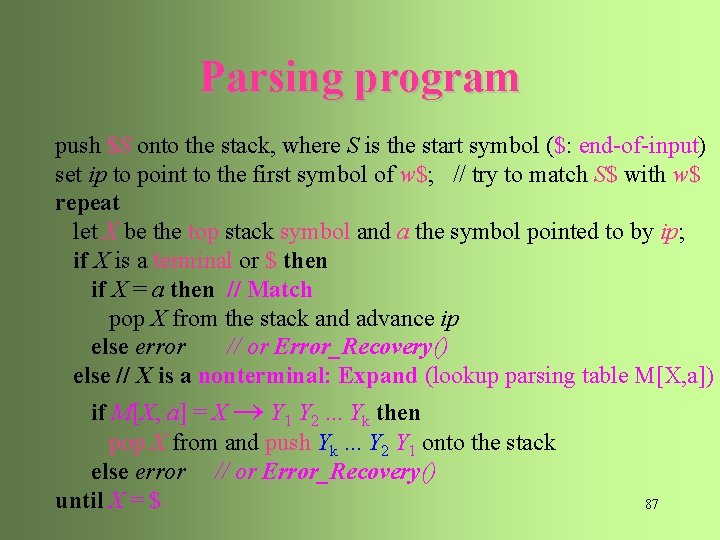

Parsing program

    push $S onto the stack, where S is the start symbol ($: end-of-input)
    set ip to point to the first symbol of w$;   // try to match S$ with w$
    repeat
        let X be the top stack symbol and a the symbol pointed to by ip;
        if X is a terminal or $ then
            if X = a then                        // Match
                pop X from the stack and advance ip
            else error                           // or Error_Recovery()
        else                                     // X is a nonterminal: Expand (look up M[X, a])
            if M[X, a] = X → Y1 Y2 ... Yk then
                pop X from the stack and push Yk ... Y2 Y1 onto the stack
            else error                           // or Error_Recovery()
    until X = $
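The driver loop above can be sketched in Python as follows. The table is hard-coded for the expression grammar E → T E', E' → + T E' | ε, T → F T', T' → * F T' | ε, F → ( E ) | id used in these slides; the names `TABLE` and `ll1_parse`, and the use of `SyntaxError` for the error case, are choices made for this illustration.

```python
NONTERMINALS = {"E", "E'", "T", "T'", "F"}

# M[X, a] -> right-hand side to push; an empty list stands for an epsilon production.
TABLE = {
    ("E", "id"): ["T", "E'"], ("E", "("): ["T", "E'"],
    ("E'", "+"): ["+", "T", "E'"], ("E'", ")"): [], ("E'", "$"): [],
    ("T", "id"): ["F", "T'"], ("T", "("): ["F", "T'"],
    ("T'", "+"): [], ("T'", "*"): ["*", "F", "T'"],
    ("T'", ")"): [], ("T'", "$"): [],
    ("F", "id"): ["id"], ("F", "("): ["(", "E", ")"],
}


def ll1_parse(tokens, start="E"):
    """Return the productions applied, in order, or raise SyntaxError."""
    stack = ["$", start]
    buffer = list(tokens) + ["$"]
    ip = 0
    output = []
    while True:
        top, a = stack[-1], buffer[ip]
        if top not in NONTERMINALS:          # terminal or "$": Match
            if top != a:
                raise SyntaxError(f"expected {top!r}, found {a!r}")
            if top == "$":
                return output                # stack and input are both exhausted
            stack.pop()
            ip += 1
        else:                                # nonterminal: Expand
            rhs = TABLE.get((top, a))
            if rhs is None:
                raise SyntaxError(f"no entry M[{top}, {a}]")
            stack.pop()
            stack.extend(reversed(rhs))      # leftmost RHS symbol ends up on top
            output.append((top, rhs))


for lhs, rhs in ll1_parse("id + id * id".split()):
    print(lhs, "->", " ".join(rhs) or "ε")
```

The grammar lives entirely in `TABLE`; the loop itself would not change for a different LL(1) grammar, which is the point of the table-driven design.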

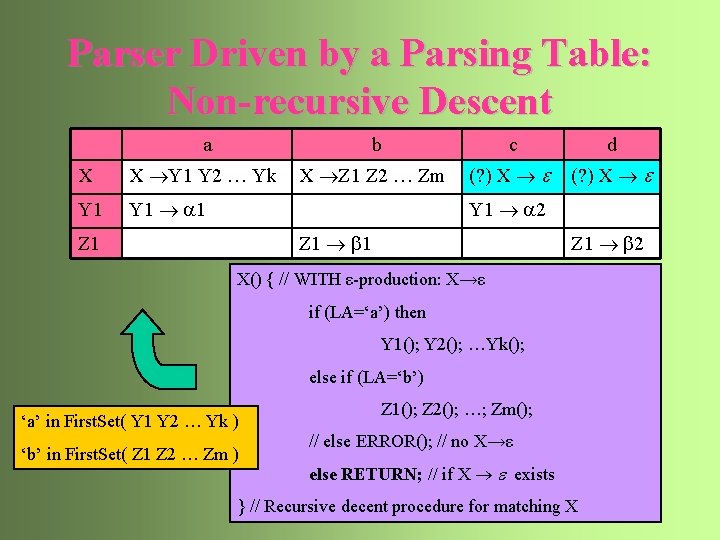

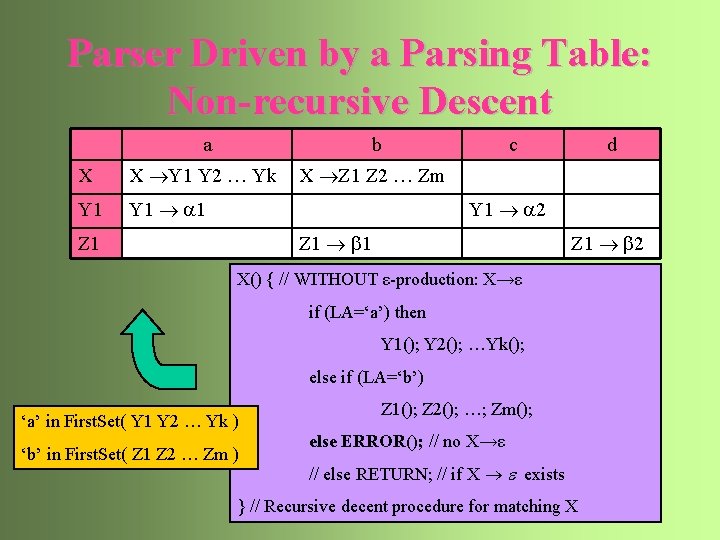

Parser Driven by a Parsing Table: Non-recursive Descent

Parsing table row for X (columns a, b, c, d): M[X, a] = X → Y1 Y2 … Yk and M[X, b] = X → Z1 Z2 … Zm, where 'a' is in FirstSet(Y1 Y2 … Yk) and 'b' is in FirstSet(Z1 Z2 … Zm). The slide's table also has rows for Y1 and Z1.

    X() { // WITHOUT ε-production: X → ε
        if (LA == 'a') { Y1(); Y2(); …; Yk(); }
        else if (LA == 'b') { Z1(); Z2(); …; Zm(); }
        else ERROR();       // no X → ε
        // else RETURN;     // if X → ε exists
    } // Recursive descent procedure for matching X

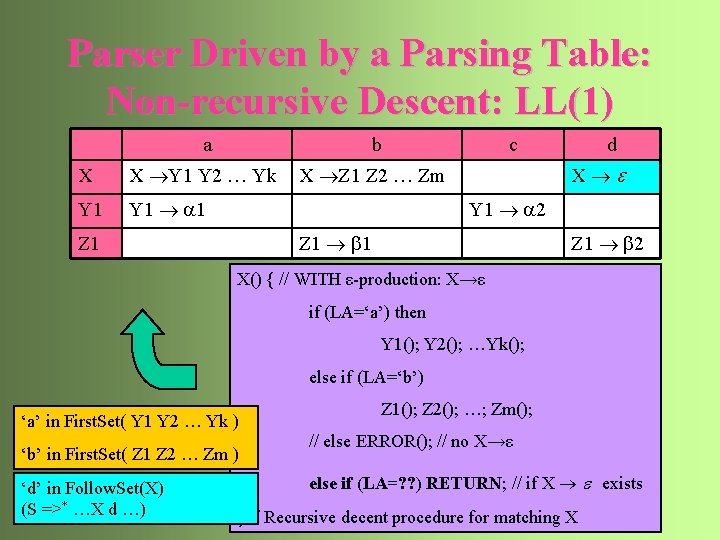

Parser Driven by a Parsing Table: Non-recursive Descent

Parsing table row for X (columns a, b, c, d): M[X, a] = X → Y1 Y2 … Yk, M[X, b] = X → Z1 Z2 … Zm, and X → ε in some other column (which one?), where 'a' is in FirstSet(Y1 Y2 … Yk) and 'b' is in FirstSet(Z1 Z2 … Zm).

    X() { // WITH ε-production: X → ε
        if (LA == 'a') { Y1(); Y2(); …; Yk(); }
        else if (LA == 'b') { Z1(); Z2(); …; Zm(); }
        // else ERROR();    // no X → ε
        else RETURN;        // if X → ε exists
    } // Recursive descent procedure for matching X

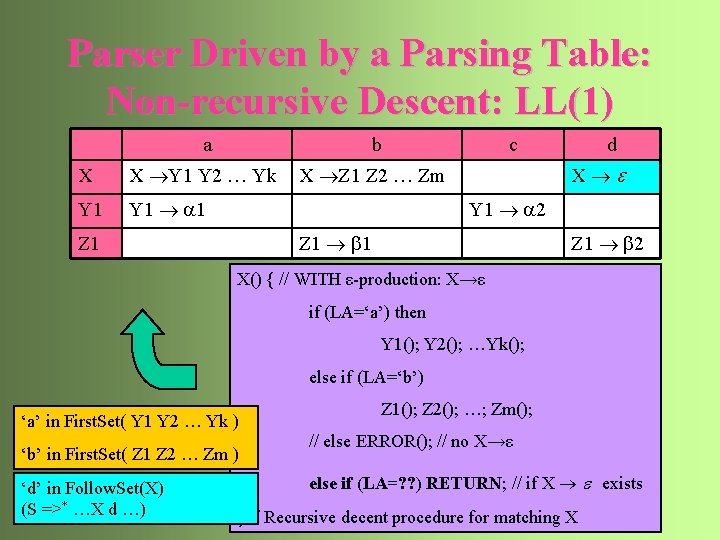

Parser Driven by a Parsing Table: Non-recursive Descent: LL(1)

Parsing table row for X (columns a, b, c, d): M[X, a] = X → Y1 Y2 … Yk, M[X, b] = X → Z1 Z2 … Zm, and M[X, d] = X → ε, where 'a' is in FirstSet(Y1 Y2 … Yk), 'b' is in FirstSet(Z1 Z2 … Zm), and 'd' is in FollowSet(X) (S ⇒* … X d …).

    X() { // WITH ε-production: X → ε
        if (LA == 'a') { Y1(); Y2(); …; Yk(); }
        else if (LA == 'b') { Z1(); Z2(); …; Zm(); }
        // else ERROR();          // no X → ε
        else if (LA == ??) RETURN; // if X → ε exists
    } // Recursive descent procedure for matching X

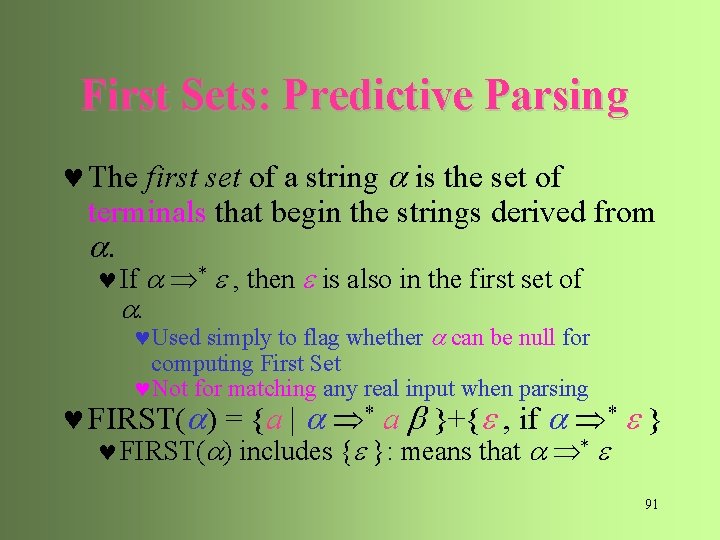

First Sets: Predictive Parsing

- The first set of a string α is the set of terminals that begin the strings derived from α
- If α ⇒* ε, then ε is also in the first set of α
  - ε is used simply to flag whether α can be null when computing first sets
  - it is not used for matching any real input when parsing
- FIRST(α) = {a | α ⇒* a β} ∪ {ε, if α ⇒* ε}
- FIRST(α) includes ε: this means that α ⇒* ε

Compute First Sets

- If X is a terminal, then FIRST(X) is {X}
- If X is a nonterminal and X → ε is a production, then add ε to FIRST(X)
- If X is a nonterminal and X → Y1 Y2 ... Yk is a production, then add a to FIRST(X) if, for some i, a is in FIRST(Yi) and ε is in all of FIRST(Y1), ..., FIRST(Yi-1)
  - If ε is in FIRST(Yj) for all j, then add ε to FIRST(X)
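These rules amount to a fixed-point computation: keep applying them until no FIRST set grows. A minimal sketch, assuming grammars are dictionaries from nonterminal to lists of right-hand sides (the empty list is an ε-production) and using the expression grammar from these slides; `EPS`, `first_of_string`, and `compute_first` are illustrative names.

```python
EPS = "ε"

GRAMMAR = {
    "E": [["T", "E'"]],
    "E'": [["+", "T", "E'"], []],
    "T": [["F", "T'"]],
    "T'": [["*", "F", "T'"], []],
    "F": [["(", "E", ")"], ["id"]],
}


def first_of_string(symbols, first):
    """FIRST of a symbol string, given the FIRST sets known so far."""
    result = set()
    for sym in symbols:
        sym_first = first[sym] if sym in first else {sym}  # terminal: FIRST(X) = {X}
        result |= sym_first - {EPS}
        if EPS not in sym_first:
            return result          # this symbol cannot vanish, so stop here
    result.add(EPS)                # every symbol can derive ε (or the string is empty)
    return result


def compute_first(grammar):
    first = {nt: set() for nt in grammar}
    changed = True
    while changed:                 # iterate until no set grows
        changed = False
        for nt, alternatives in grammar.items():
            for alt in alternatives:
                new = first_of_string(alt, first)
                if not new <= first[nt]:
                    first[nt] |= new
                    changed = True
    return first


FIRST = compute_first(GRAMMAR)
for nt, symbols in FIRST.items():
    print(f"FIRST({nt}) =", sorted(symbols))
```

Because the sets only ever grow and are bounded by the finite set of terminals plus ε, the loop is guaranteed to terminate.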

Follow Sets: Matching Empty

- What to do when matching null, A → ε?
  - Top-down recursive descent parsing: "assumes" success
  - LL parser: more predictive/restrictive, applies A → ε only when the input symbol a is in the follow set of A
- The follow set of a nonterminal A is the set of terminals that can appear immediately to the right of A in some sentential form; namely, S ⇒* α A a β implies that a is in the follow set of A

Compute Follow Sets

- Initialization: place $ in FOLLOW(S), where S is the start symbol and $ is the input right end marker
- If there is a production A → α B β, then everything in FIRST(β) except ε is placed in FOLLOW(B)
  - ε is not considered a visible input that can follow any symbol
- If there is a production A → α B, or A → α B β where FIRST(β) contains ε (i.e., β ⇒* ε), then everything in FOLLOW(A) is in FOLLOW(B)
  - S ⇒* … A a … implies S ⇒* … α B a …
  - YES: "every symbol that can follow A will also follow B"
  - NO: "every symbol that can follow B will also follow A"
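The FOLLOW rules can be applied in the same fixed-point style. To keep the sketch short, the FIRST sets of the expression grammar are taken as given (they are the ones listed in the example slide) rather than recomputed; the dictionary representation and the names `first_of_string` and `compute_follow` are assumptions of this illustration.

```python
EPS = "ε"

GRAMMAR = {
    "E": [["T", "E'"]],
    "E'": [["+", "T", "E'"], []],
    "T": [["F", "T'"]],
    "T'": [["*", "F", "T'"], []],
    "F": [["(", "E", ")"], ["id"]],
}

# FIRST sets of the nonterminals, copied from the example slide.
FIRST = {
    "E": {"(", "id"}, "T": {"(", "id"}, "F": {"(", "id"},
    "E'": {"+", EPS}, "T'": {"*", EPS},
}


def first_of_string(symbols, first):
    result = set()
    for sym in symbols:
        sym_first = first.get(sym, {sym})    # a terminal is its own FIRST set
        result |= sym_first - {EPS}
        if EPS not in sym_first:
            return result
    result.add(EPS)
    return result


def compute_follow(grammar, first, start):
    follow = {nt: set() for nt in grammar}
    follow[start].add("$")                   # initialization
    changed = True
    while changed:
        changed = False
        for lhs, alternatives in grammar.items():
            for alt in alternatives:
                for i, sym in enumerate(alt):
                    if sym not in grammar:   # only nonterminals have FOLLOW sets
                        continue
                    tail_first = first_of_string(alt[i + 1:], first)
                    new = tail_first - {EPS}
                    if EPS in tail_first:    # tail can vanish: FOLLOW(lhs) flows into FOLLOW(sym)
                        new |= follow[lhs]
                    if not new <= follow[sym]:
                        follow[sym] |= new
                        changed = True
    return follow


FOLLOW = compute_follow(GRAMMAR, FIRST, "E")
for nt, symbols in FOLLOW.items():
    print(f"FOLLOW({nt}) =", sorted(symbols))
```

Note the direction of the flow in the nullable-tail case: symbols move from FOLLOW(A) into FOLLOW(B), never the other way round.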

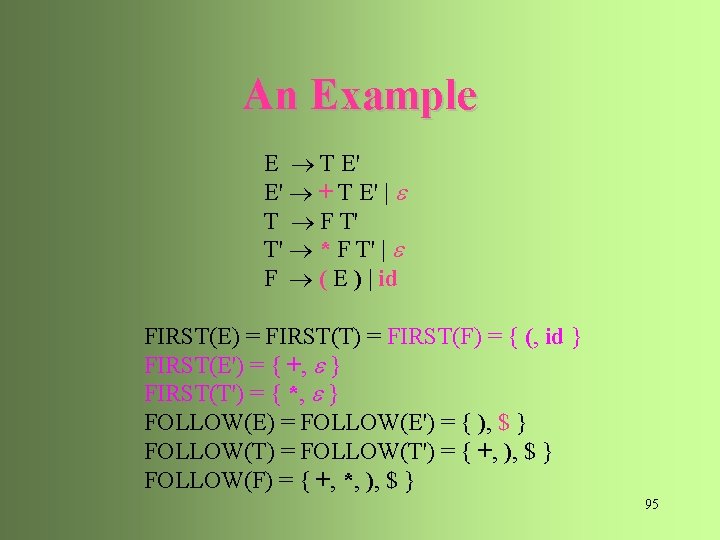

An Example

- Grammar:
  - E → T E'
  - E' → + T E' | ε
  - T → F T'
  - T' → * F T' | ε
  - F → ( E ) | id
- FIRST(E) = FIRST(T) = FIRST(F) = { (, id }
- FIRST(E') = { +, ε }
- FIRST(T') = { *, ε }
- FOLLOW(E) = FOLLOW(E') = { ), $ }
- FOLLOW(T) = FOLLOW(T') = { +, ), $ }
- FOLLOW(F) = { +, *, ), $ }

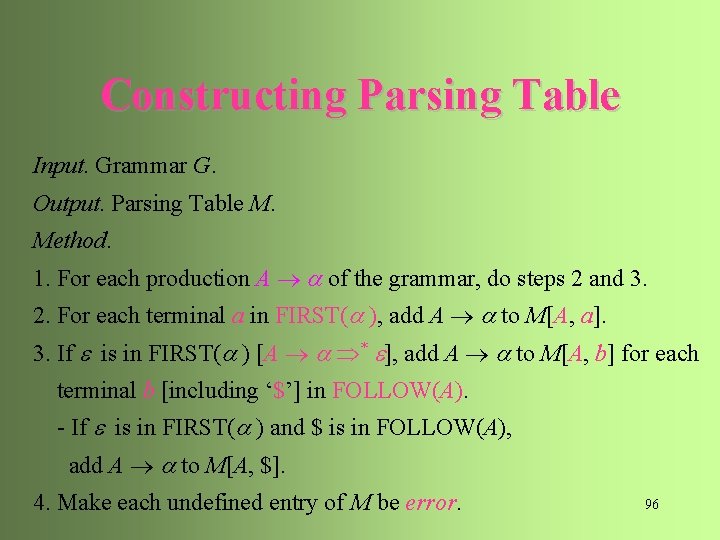

Constructing Parsing Table

Input. Grammar G. Output. Parsing table M. Method.

1. For each production A → α of the grammar, do steps 2 and 3.
2. For each terminal a in FIRST(α), add A → α to M[A, a].
3. If ε is in FIRST(α) [α ⇒* ε], add A → α to M[A, b] for each terminal b [including '$'] in FOLLOW(A).
   - If ε is in FIRST(α) and $ is in FOLLOW(A), add A → α to M[A, $].
4. Make each undefined entry of M be error.
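The method can be sketched in a few lines. This is an illustrative sketch under stated assumptions: the list-of-pairs grammar encoding and the name `build_table` are hypothetical, and the FIRST and FOLLOW sets are hard-coded to the values this deck gives for the expression grammar. Each cell holds a list of bodies so that multiply-defined entries would be visible; a missing key plays the role of an error entry.

```python
EPS = "ε"

# (head, body) pairs for the deck's expression grammar; () encodes ε.
PRODUCTIONS = [
    ("E",  ("T", "E'")),
    ("E'", ("+", "T", "E'")),
    ("E'", ()),
    ("T",  ("F", "T'")),
    ("T'", ("*", "F", "T'")),
    ("T'", ()),
    ("F",  ("(", "E", ")")),
    ("F",  ("id",)),
]

FIRST = {
    "E": {"(", "id"}, "T": {"(", "id"}, "F": {"(", "id"},
    "E'": {"+", EPS}, "T'": {"*", EPS},
}
FOLLOW = {
    "E": {")", "$"}, "E'": {")", "$"},
    "T": {"+", ")", "$"}, "T'": {"+", ")", "$"},
    "F": {"+", "*", ")", "$"},
}

def first_of_string(symbols):
    result = set()
    for sym in symbols:
        sym_first = FIRST.get(sym, {sym})   # FIRST of a terminal is itself
        result |= sym_first - {EPS}
        if EPS not in sym_first:
            return result
    result.add(EPS)
    return result

def build_table(productions):
    table = {}
    for head, body in productions:          # step 1
        body_first = first_of_string(body)
        lookaheads = body_first - {EPS}     # step 2
        if EPS in body_first:               # step 3 (FOLLOW already contains $)
            lookaheads |= FOLLOW[head]
        for terminal in lookaheads:
            table.setdefault((head, terminal), []).append(body)
    return table                            # step 4: absent keys mean "error"

TABLE = build_table(PRODUCTIONS)
```

For this grammar every filled cell ends up with exactly one production, which is what makes it LL(1).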

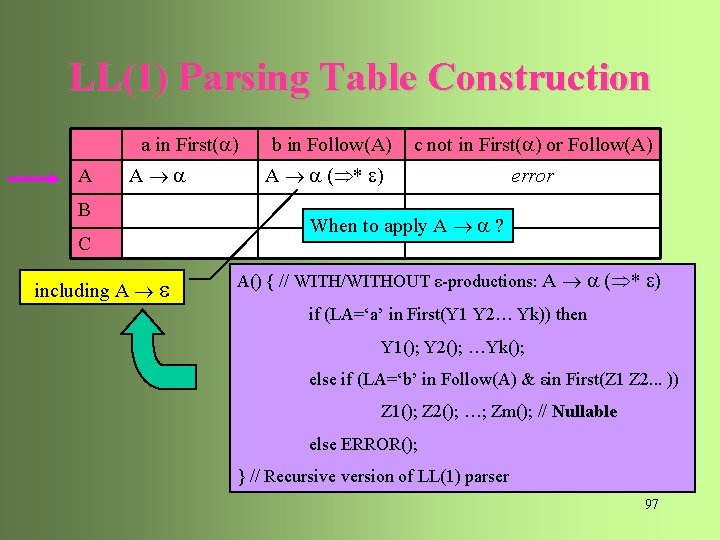

LL(1) Parsing Table Construction

Entries in row A of the table (rows A, B, C, …):

- a in First(α): M[A, a] = A → α
- b in Follow(A): M[A, b] = A → α when α ⇒* ε (including A → ε)
- c not in First(α) or Follow(A): M[A, c] = error

When to apply A → ε?

    A() {  // WITH/WITHOUT ε-productions: A → α (α ⇒* ε)
      if (LA='a' in First(Y1 Y2 … Yk)) then Y1(); Y2(); … Yk();
      else if (LA='b' in Follow(A) & ε in First(Z1 Z2 … Zm)) Z1(); Z2(); …; Zm();  // Nullable
      else ERROR();
    }  // Recursive version of LL(1) parser
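As a concrete instance of the recursive version, here is a small recognizer for the deck's expression grammar. It is a sketch, not the lecturer's code: the class name `Parser`, the method names, and the helper `accepts` are hypothetical, and the FIRST/FOLLOW tests are written out by hand from the sets listed in this deck.

```python
class Parser:
    """Recursive-descent LL(1) recognizer for E → T E', E' → + T E' | ε, ..."""

    def __init__(self, tokens):
        self.tokens = list(tokens) + ["$"]  # append the right end marker
        self.pos = 0

    @property
    def la(self):                           # current lookahead token
        return self.tokens[self.pos]

    def match(self, terminal):
        if self.la != terminal:
            raise SyntaxError(f"expected {terminal}, found {self.la}")
        self.pos += 1

    def E(self):                            # E → T E'
        self.T()
        self.E_prime()

    def E_prime(self):
        if self.la == "+":                  # '+' is in FIRST(+ T E')
            self.match("+")
            self.T()
            self.E_prime()
        elif self.la in (")", "$"):         # lookahead in FOLLOW(E'): E' → ε
            return
        else:
            raise SyntaxError(f"unexpected {self.la} in E'")

    def T(self):                            # T → F T'
        self.F()
        self.T_prime()

    def T_prime(self):
        if self.la == "*":                  # '*' is in FIRST(* F T')
            self.match("*")
            self.F()
            self.T_prime()
        elif self.la in ("+", ")", "$"):    # lookahead in FOLLOW(T'): T' → ε
            return
        else:
            raise SyntaxError(f"unexpected {self.la} in T'")

    def F(self):
        if self.la == "(":                  # F → ( E )
            self.match("(")
            self.E()
            self.match(")")
        elif self.la == "id":               # F → id
            self.match("id")
        else:
            raise SyntaxError(f"unexpected {self.la} in F")

def accepts(tokens):
    parser = Parser(tokens)
    try:
        parser.E()
        parser.match("$")
        return True
    except SyntaxError:
        return False
```

Usage: `accepts(["id", "+", "id", "*", "id"])` returns `True`, while `accepts(["id", "id"])` returns `False` because `id` is neither `*` nor in FOLLOW(T').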

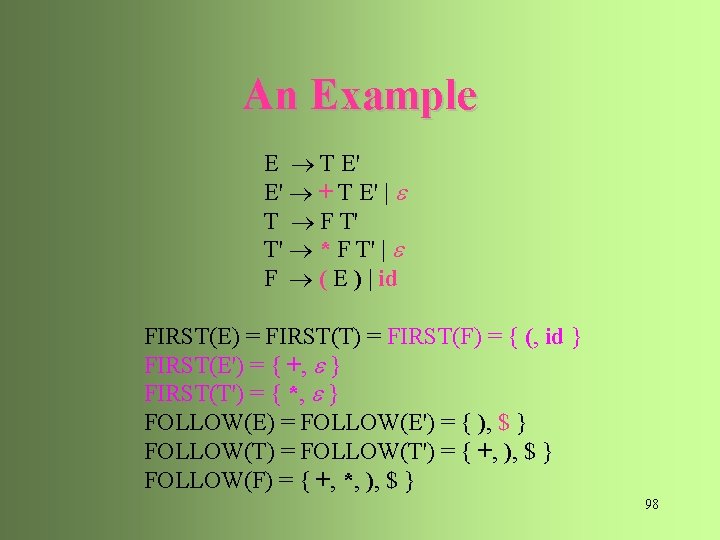

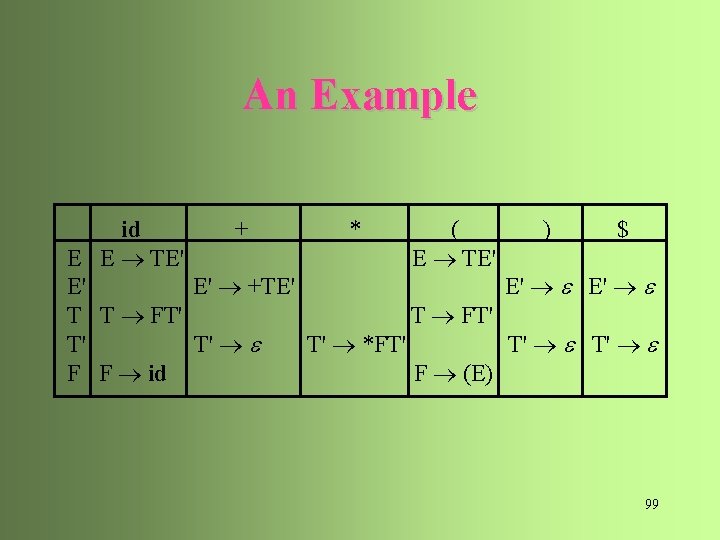

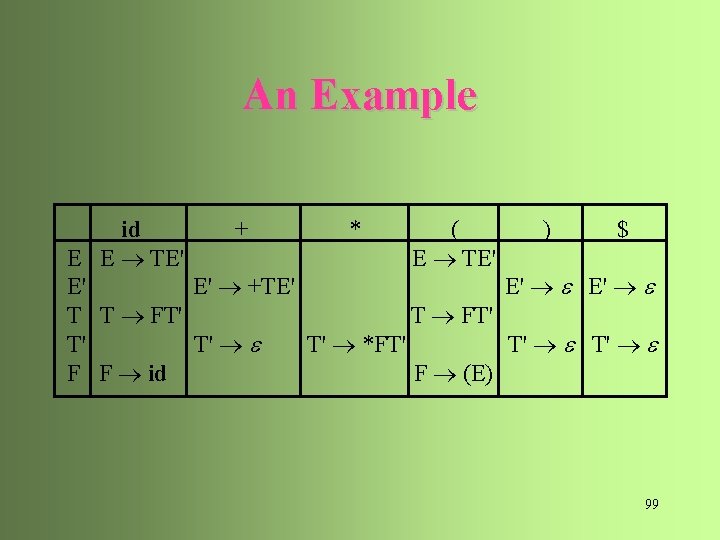


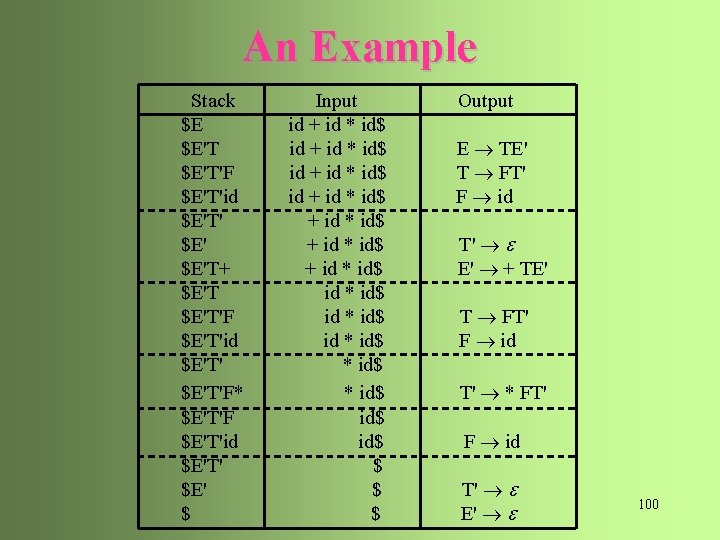

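An Example

| | id | + | * | ( | ) | $ |
|---|---|---|---|---|---|---|
| E | E → TE' | | | E → TE' | | |
| E' | | E' → +TE' | | | E' → ε | E' → ε |
| T | T → FT' | | | T → FT' | | |
| T' | | T' → ε | T' → *FT' | | T' → ε | T' → ε |
| F | F → id | | | F → (E) | | |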

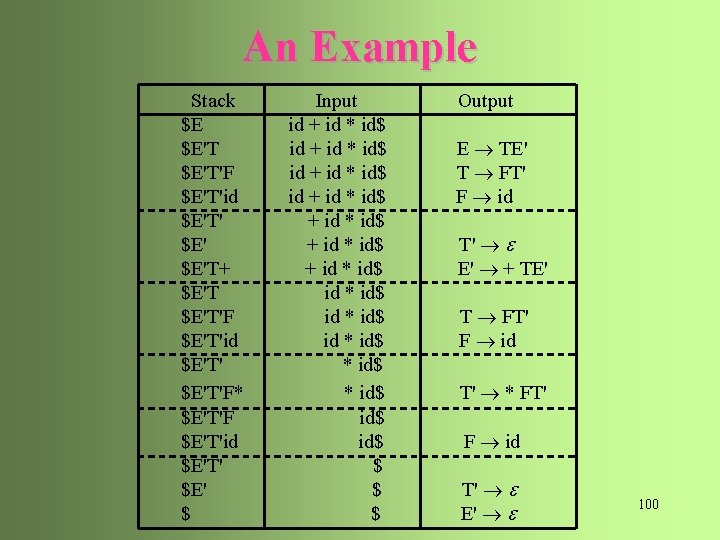

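An Example

Each output is the production applied to reach that row; where the slide skips an intermediate configuration, the row lists the productions in order.

| Stack | Input | Output |
|---|---|---|
| $E | id + id * id$ | |
| $E'T'F | id + id * id$ | E → TE', T → FT' |
| $E'T'id | id + id * id$ | F → id |
| $E'T' | + id * id$ | |
| $E'T+ | + id * id$ | T' → ε, E' → +TE' |
| $E'T'F | id * id$ | T → FT' |
| $E'T'id | id * id$ | F → id |
| $E'T'F* | * id$ | T' → *FT' |
| $E'T'F | id$ | |
| $E'T'id | id$ | F → id |
| $E'T' | $ | |
| $E' | $ | T' → ε |
| $ | $ | E' → ε |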
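The trace above can be reproduced with a small table-driven driver. This is a sketch under assumptions: the table is hard-coded from the deck's parsing table for the expression grammar, and the function name `parse` and the string format of the output are hypothetical choices for illustration.

```python
# Parsing table M for the deck's expression grammar; () encodes an ε body.
TABLE = {
    ("E", "id"): ("T", "E'"),      ("E", "("): ("T", "E'"),
    ("E'", "+"): ("+", "T", "E'"), ("E'", ")"): (), ("E'", "$"): (),
    ("T", "id"): ("F", "T'"),      ("T", "("): ("F", "T'"),
    ("T'", "+"): (), ("T'", "*"): ("*", "F", "T'"),
    ("T'", ")"): (), ("T'", "$"): (),
    ("F", "id"): ("id",),          ("F", "("): ("(", "E", ")"),
}
NONTERMINALS = {"E", "E'", "T", "T'", "F"}

def parse(tokens, start="E"):
    """Return the list of productions applied, in order (a leftmost derivation)."""
    tokens = list(tokens) + ["$"]
    stack = ["$", start]                    # top of stack is the end of the list
    pos = 0
    output = []
    while stack:
        top = stack.pop()
        lookahead = tokens[pos]
        if top in NONTERMINALS:
            body = TABLE.get((top, lookahead))
            if body is None:                # blank entry: syntax error
                raise SyntaxError(f"no entry M[{top}, {lookahead}]")
            output.append(f"{top} → {' '.join(body) or 'ε'}")
            stack.extend(reversed(body))    # push body, leftmost symbol on top
        elif top == lookahead:
            pos += 1                        # match a terminal (or the final $)
        else:
            raise SyntaxError(f"expected {top}, found {lookahead}")
    return output

productions = parse(["id", "+", "id", "*", "id"])
```

The body is pushed in reverse so that its leftmost symbol is expanded or matched first, which is what makes the output a leftmost derivation.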

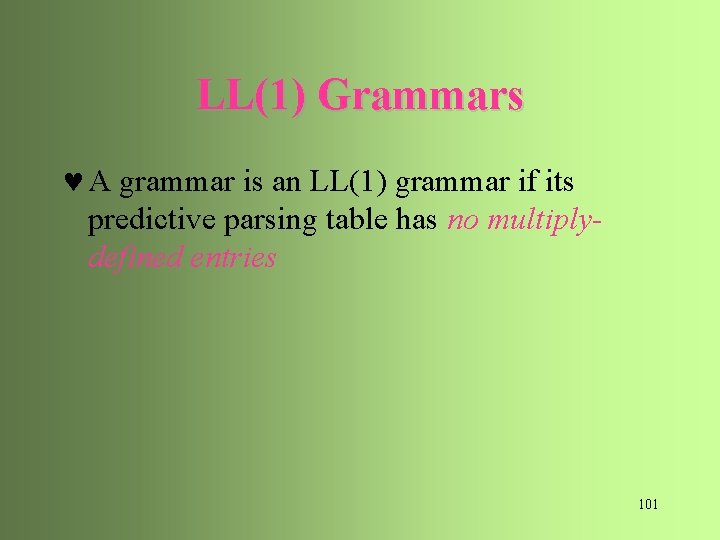

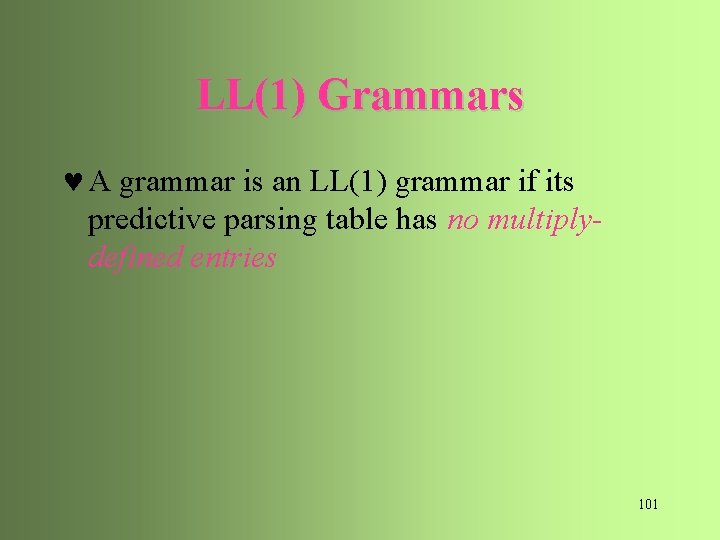

LL(1) Grammars

- A grammar is an LL(1) grammar if its predictive parsing table has no multiply-defined entries

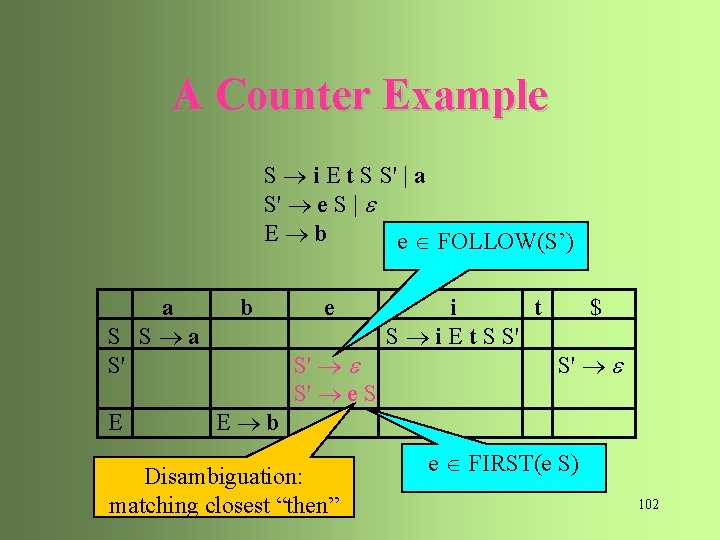

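A Counter Example

- S → i E t S S' | a
- S' → e S | ε
- E → b

| | a | b | e | i | t | $ |
|---|---|---|---|---|---|---|
| S | S → a | | | S → iEtSS' | | |
| S' | | | S' → ε, S' → eS | | | S' → ε |
| E | | E → b | | | | |

- M[S', e] contains S' → ε because e is in FOLLOW(S'), and S' → eS because e is in FIRST(eS)
- Disambiguation: matching closest "then" (choose S' → eS)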

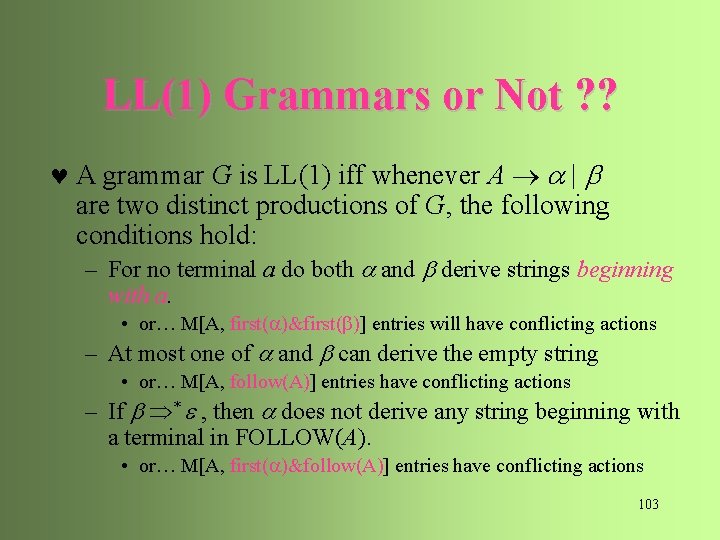

LL(1) Grammars or Not??

- A grammar G is LL(1) iff whenever A → α | β are two distinct productions of G, the following conditions hold:
  - For no terminal a do both α and β derive strings beginning with a.
    - or… M[A, first(α) & first(β)] entries will have conflicting actions
  - At most one of α and β can derive the empty string
    - or… M[A, follow(A)] entries have conflicting actions
  - If β ⇒* ε, then α does not derive any string beginning with a terminal in FOLLOW(A).
    - or… M[A, first(α) & follow(A)] entries have conflicting actions
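The three conditions can be checked pairwise once FIRST(α), FIRST(β), and FOLLOW(A) are known. The sketch below assumes those sets are supplied directly; the function name `ll1_violations` and the labels it returns are hypothetical. The sample inputs are the sets for S' → eS | ε, X → Xa | b, and E' → +TE' | ε from this deck, worked out by hand.

```python
EPS = "ε"

def ll1_violations(first_alpha, first_beta, follow_a):
    """Check the LL(1) conditions for one pair of alternatives A → α | β."""
    problems = []
    # Condition 1: α and β must not start with a common terminal.
    common = (first_alpha & first_beta) - {EPS}
    if common:
        problems.append(("FIRST/FIRST", common))
    # Condition 2: at most one of α, β may derive ε.
    if EPS in first_alpha and EPS in first_beta:
        problems.append(("both nullable", set(follow_a)))
    # Condition 3: if one side derives ε, the other must not start
    # with a terminal in FOLLOW(A).
    for nullable, other in ((first_beta, first_alpha), (first_alpha, first_beta)):
        if EPS in nullable:
            clash = (other - {EPS}) & follow_a
            if clash:
                problems.append(("FIRST/FOLLOW", clash))
    return problems

# S' → e S | ε with FOLLOW(S') = {e, $}: the dangling-else conflict.
dangling_else = ll1_violations({"e"}, {EPS}, {"e", "$"})
# X → X a | b with FIRST(X a) = FIRST(b) = {b}: left recursion.
left_recursive = ll1_violations({"b"}, {"b"}, {"a", "$"})
# E' → + T E' | ε with FOLLOW(E') = {), $}: no conflict.
expression = ll1_violations({"+"}, {EPS}, {")", "$"})
```

Each reported set names the lookahead terminals whose table cell would receive two productions.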

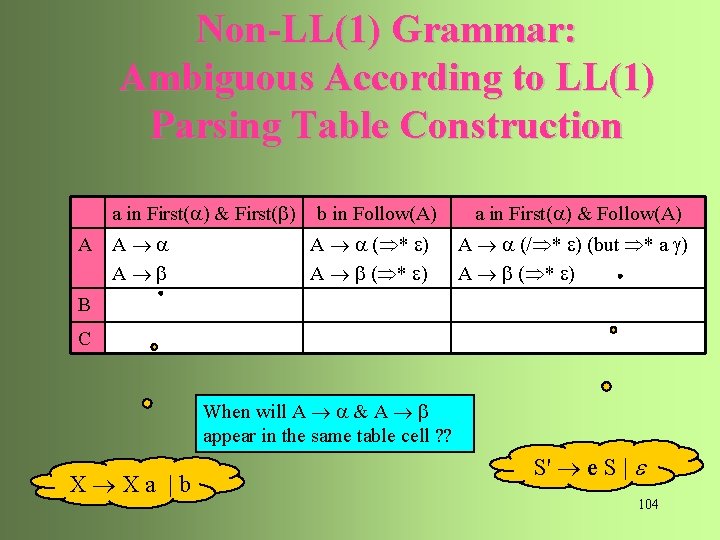

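Non-LL(1) Grammar: Ambiguous According to LL(1) Parsing Table Construction

When will A → α and A → β appear in the same table cell??

- a in First(α) & First(β): M[A, a] holds both A → α and A → β
- b in Follow(A): M[A, b] holds both A → α (α ⇒* ε) and A → β (β ⇒* ε)
- a in First(α) & Follow(A): M[A, a] holds both A → α (α does not derive ε, but α ⇒* aγ) and A → β (β ⇒* ε)

Examples: X → Xa | b, and S' → eS | ε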

LL(1) Grammars or Not??

- If G is left-recursive or ambiguous, then M will have at least one multiply-defined entry
  - ⇒ non-LL(1)
- E.g., X → X a | b
  - ⇒ FIRST(X) = {b} (and, of course, FIRST(b) = {b})
  - ⇒ M[X, b] includes both {X → X a} and {X → b}
- i.e., an ambiguous G and a G with left-recursive productions cannot be LL(1).
  - No LL(1) grammar can be ambiguous

Error Recovery for LL Parsers

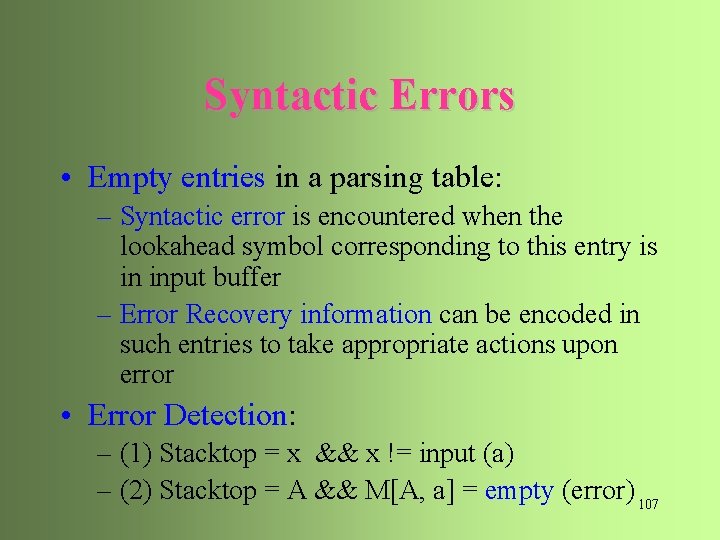

Syntactic Errors

- Empty entries in a parsing table:
  - A syntactic error is encountered when the lookahead symbol corresponding to this entry is in the input buffer
  - Error recovery information can be encoded in such entries to take appropriate actions upon error
- Error detection:
  - (1) Stack top = x (a terminal) && x != input (a)
  - (2) Stack top = A && M[A, a] = empty (error)

Error Recovery Strategies

- Panic mode: skip tokens until a token in a set of synchronizing tokens appears
  - INS (insertion) type of errors
  - sync at delimiters, keywords, …, that have clear functions
- Phrase-level recovery
  - local INS (insertion), DEL (deletion), SUB (substitution) types of errors
- Error productions
  - define error patterns ("error productions") in the grammar
- Global correction [grammar correction]
  - minimum distance correction

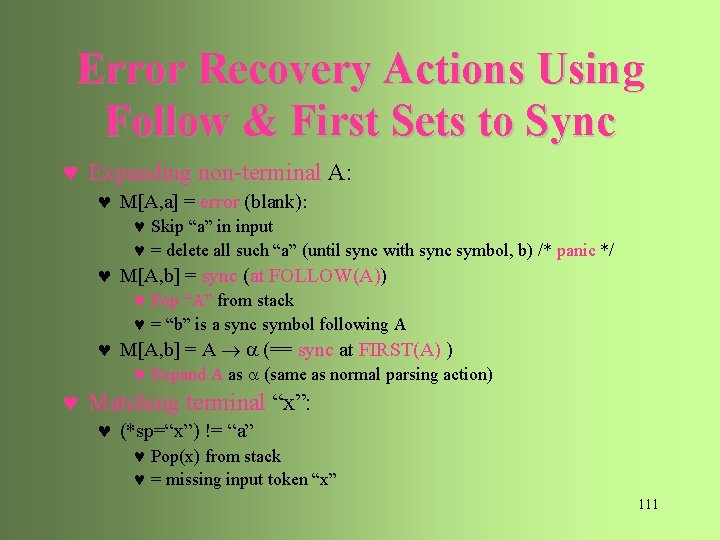

Error Recovery: Panic Mode

- Panic mode: skip tokens until a token in a set of synchronizing tokens appears
- Commonly used synchronizing tokens:
  - SUB(A, ip): use FOLLOW(A) as the sync set for A (pop A)
  - use the FIRST set of a higher construct as the sync set for a lower construct
  - INS(ip): use FIRST(A) as the sync set for A
  - *ip = ε: use the production deriving ε as the default
  - DEL(ip): if a terminal on the stack cannot be matched, pop the terminal

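Error Recovery: Panic Mode

| Action | Stack before | Input before | Stack after | Input after |
|---|---|---|---|---|
| SUB(A, ip) | … A | *ip … | … (A popped) | Follow(A) … (tokens before it skipped) |
| INS(ip) | … A | *ip … | … A | First(A) … (tokens before it skipped) |
| DEL(ip) | … x | *ip … | … (x popped) | *ip … |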

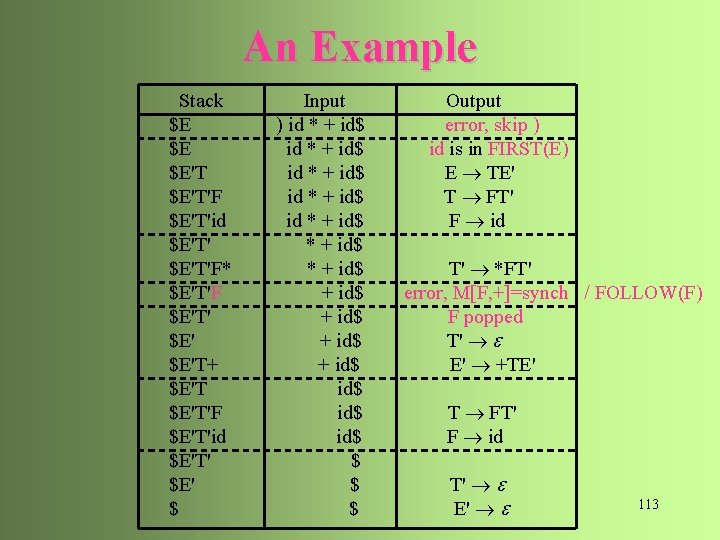

Error Recovery Actions: Using Follow & First Sets to Sync

- Expanding nonterminal A:
  - M[A, a] = error (blank):
    - Skip "a" in input
    - = delete all such "a" (until sync with a sync symbol, b) /* panic */
  - M[A, b] = sync (at FOLLOW(A)):
    - Pop "A" from stack
    - = "b" is a sync symbol following A
  - M[A, b] = A → α (== sync at FIRST(A)):
    - Expand A as α (same as normal parsing action)
- Matching terminal "x":
  - (*sp = "x") != "a":
    - Pop(x) from stack
    - = missing input token "x"

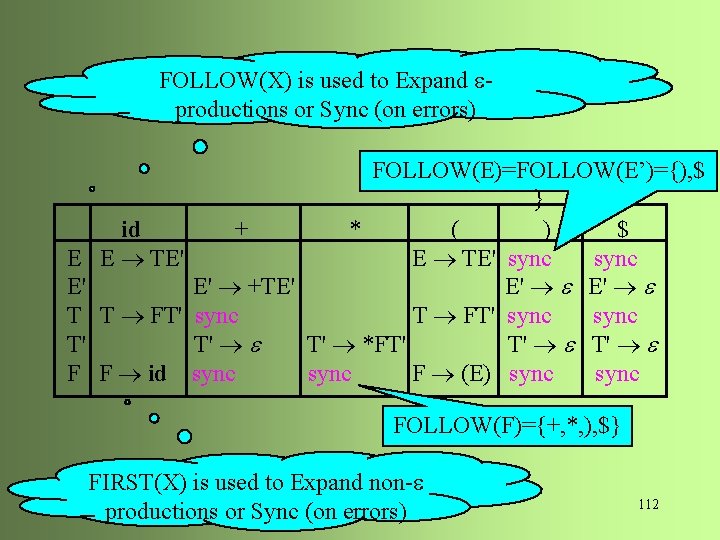

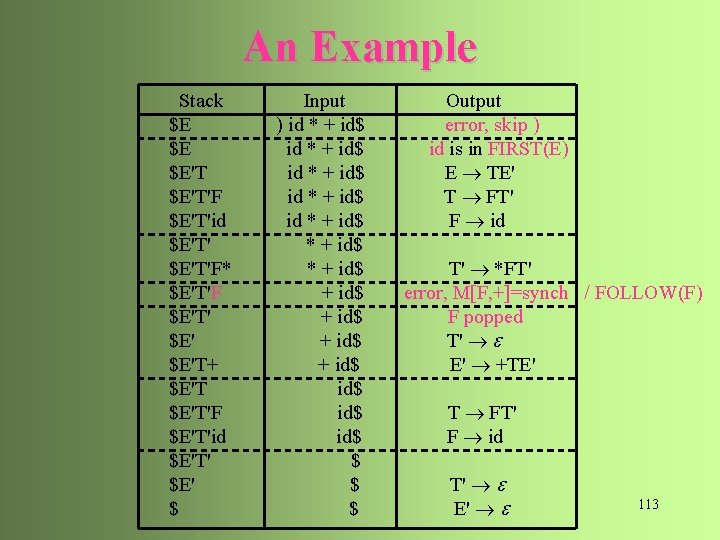

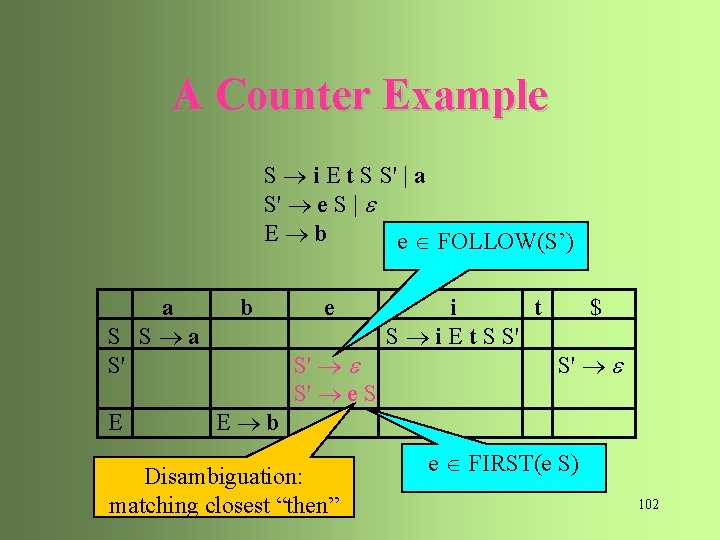

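An Example

| | id | + | * | ( | ) | $ |
|---|---|---|---|---|---|---|
| E | E → TE' | | | E → TE' | sync | sync |
| E' | | E' → +TE' | | | E' → ε | E' → ε |
| T | T → FT' | sync | | T → FT' | sync | sync |
| T' | | T' → ε | T' → *FT' | | T' → ε | T' → ε |
| F | F → id | sync | sync | F → (E) | sync | sync |

- FOLLOW(X) is used to expand ε-productions or to sync (on errors), e.g. FOLLOW(E) = FOLLOW(E') = { ), $ } and FOLLOW(F) = { +, *, ), $ }
- FIRST(X) is used to expand non-ε productions or to sync (on errors)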

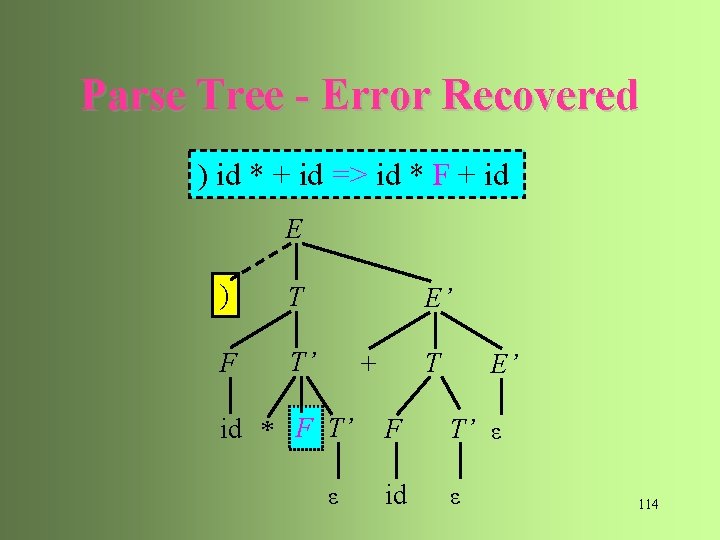

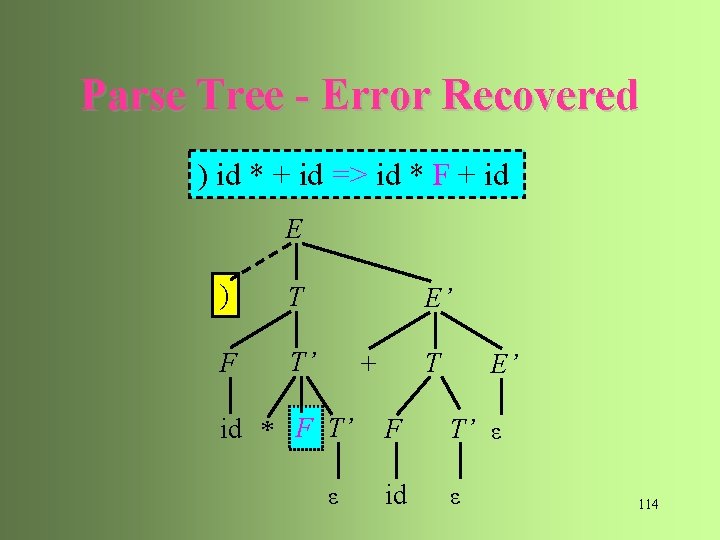

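An Example

Where the slide skips an intermediate configuration, the row lists the productions applied to reach it, in order.

| Stack | Input | Output |
|---|---|---|
| $E | ) id * + id$ | error, skip ) |
| $E | id * + id$ | id is in FIRST(E) |
| $E'T'F | id * + id$ | E → TE', T → FT' |
| $E'T'id | id * + id$ | F → id |
| $E'T'F* | * + id$ | T' → *FT' |
| $E'T'F | + id$ | error, M[F, +] = synch / FOLLOW(F) |
| $E'T' | + id$ | F popped |
| $E'T+ | + id$ | T' → ε, E' → +TE' |
| $E'T'F | id$ | T → FT' |
| $E'T'id | id$ | F → id |
| $E'T' | $ | |
| $E' | $ | T' → ε |
| $ | $ | E' → ε |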
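A sketch of the same recovery in code, using the table with sync entries from this deck. Several details are assumptions made for illustration: the function name `parse_with_recovery`, the log format, and in particular the rule that a sync entry is treated as "skip the input token" when the nonterminal is the only symbol above $ (this is what reproduces the first step of the trace, where `)` is skipped even though M[E, )] is sync).

```python
SYNC = "sync"

# Parsing table with sync entries; () encodes an ε body, absent keys are blank.
TABLE = {
    ("E", "id"): ("T", "E'"), ("E", "("): ("T", "E'"),
    ("E", ")"): SYNC, ("E", "$"): SYNC,
    ("E'", "+"): ("+", "T", "E'"), ("E'", ")"): (), ("E'", "$"): (),
    ("T", "id"): ("F", "T'"), ("T", "("): ("F", "T'"),
    ("T", "+"): SYNC, ("T", ")"): SYNC, ("T", "$"): SYNC,
    ("T'", "+"): (), ("T'", "*"): ("*", "F", "T'"),
    ("T'", ")"): (), ("T'", "$"): (),
    ("F", "id"): ("id",), ("F", "("): ("(", "E", ")"),
    ("F", "+"): SYNC, ("F", "*"): SYNC, ("F", ")"): SYNC, ("F", "$"): SYNC,
}
NONTERMINALS = {"E", "E'", "T", "T'", "F"}

def parse_with_recovery(tokens, start="E"):
    tokens = list(tokens) + ["$"]
    stack = ["$", start]                    # top of stack is the end of the list
    pos = 0
    log = []
    while stack:
        top, lookahead = stack[-1], tokens[pos]
        if top not in NONTERMINALS:
            stack.pop()
            if top == lookahead:
                pos += 1                    # normal match
            else:                           # DEL: terminal cannot be matched
                log.append(f"error: missing {top}, popped")
            continue
        entry = TABLE.get((top, lookahead))
        # Assumption: do not pop the last nonterminal above $ on sync.
        only_symbol = len(stack) == 2 and lookahead != "$"
        if entry is None or (entry == SYNC and only_symbol):
            # Blank entry: skip the token. In this table every nonterminal
            # has an entry under $, so $ itself is never skipped.
            log.append(f"error: skip {lookahead}")
            pos += 1
        elif entry == SYNC:                 # lookahead is in FOLLOW(top)
            log.append(f"error: M[{top}, {lookahead}] = sync, {top} popped")
            stack.pop()
        else:                               # normal expansion
            stack.pop()
            log.append(f"{top} → {' '.join(entry) or 'ε'}")
            stack.extend(reversed(entry))
    return log

log = parse_with_recovery([")", "id", "*", "+", "id"])
```

On the input `) id * + id` the log contains two error lines (the skipped `)` and the popped F), and parsing then continues to the end of the input.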

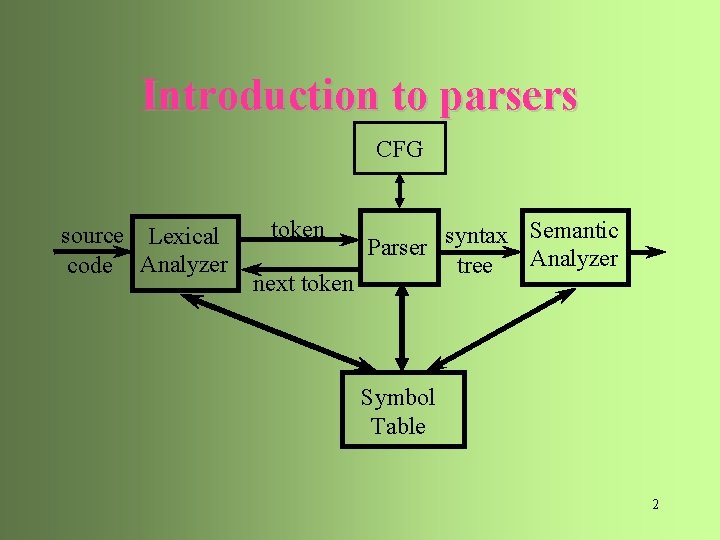

Parse Tree: Error Recovered

) id * + id ⇒ id * F + id (the leading ")" is skipped; F is popped without matching any input)

- E
  - T
    - F
      - id
    - T'
      - *
      - F
      - T'
        - ε
  - E'
    - +
    - T
      - F
        - id
      - T'
        - ε
    - E'
      - ε