Syllables and Concepts in Large Vocabulary Continuous Speech Recognition

Slides available at: www.cs.gonzaga.edu/depalma

The World’s Speakers

- The world’s 7 billion people speak about 7000 languages
- Many are on the verge of extinction
- Top ten (ranked by native speakers):
  - Mandarin (1.1 billion)
  - English (330 million)
  - Spanish (300 million)
  - Hindi/Urdu (250 million)
  - Portuguese (220 million)
  - Arabic (200 million)
  - Bengali (185 million)
  - Russian (160 million)
  - Persian (149 million)
  - Punjabi (130 million)

![Phonology: The sounds of a language](http://slidetodoc.com/presentation_image_h2/5b612b3ab80c99d3010e98476eea47c1/image-3.jpg)

Phonology: The sounds of a language

- The [t] sound in tunafish differs from the [t] sound in starfish. The first [t] is aspirated (the vocal cords briefly don’t vibrate, producing a sound like a puff of air). A [t] preceded by an [s] is unaspirated.
- This also happens with [k] and [g]: both are unaspirated, leading to the mishearing of the Jimi Hendrix song:
  - ‘Scuse me, while I kiss the sky
  - ‘Scuse me, while I kiss this guy

Morphology: How words are built from smaller meaning-bearing units

- guardo/guardando/guardato/guardavo
  - I look/looking/looked/used to look
  - Romance languages have a more complex verb structure than English
- uygarlastiramadiklarimizdanmissinizcasina
  - “behaving as if you are among those whom we could not civilize”
  - Composed of a stem and 8 morphemes
  - Turkish (an agglutinative language) has a more complex morphological structure than English

Syntax: How words are arranged

- I’m sorry Dave I’m afraid I can’t do that
- I’m I do sorry that afraid Dave I’m can’t
- Colorless green ideas sleep furiously

Semantics: What words and groups of words mean

- How do we distinguish the two uses of “by” in the following sentences?
  - How much Chinese silk was exported to Western Europe by the end of the 18th century? (temporal end point)
  - Our Mutual Friend was written by Dickens (agent)

Pragmatics: relationship of meaning to intentions

- Dave: “HAL, open the pod bay door.”
- HAL: “I’m sorry but I’m afraid that I can’t do that Dave.”
  - As opposed to: “No.” or “I won’t”
- Do you have the time?
  - This is not a yes/no question

Discourse: linguistic units larger than a word

- “How many states were in the United States that year?”
- Exactly what year is “that year” and how would we find out?

Much of language processing comes down to ambiguity resolution

- Kiss this guy/kiss the sky
- I made her duck
  - I cooked waterfowl for her
  - I cooked her waterfowl
  - I created the duck that she owns
  - I caused her to quickly lower her head
  - I waved my wand and turned her into a waterfowl

An Engineered Artifact

- Syllables
  - Principled word segmentation scheme
  - No claim about human syllabification
- Concepts
  - Words and phrases with similar meanings
  - No claim about cognition

Reducing the Search Space

ASR answers the question:

- What is the most likely sequence of words given an acoustic signal?
- Considers many candidate word sequences

To reduce the search space:

- Reduce the number of candidates
  - Using syllables in the language model
  - Using concepts in a concept component

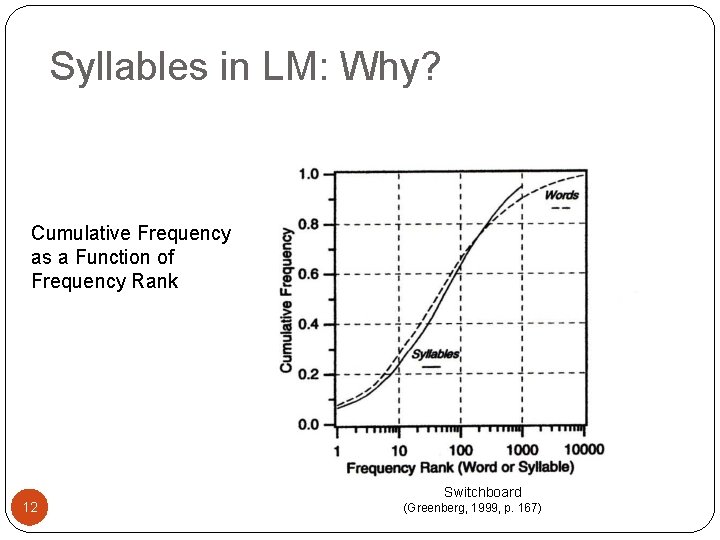

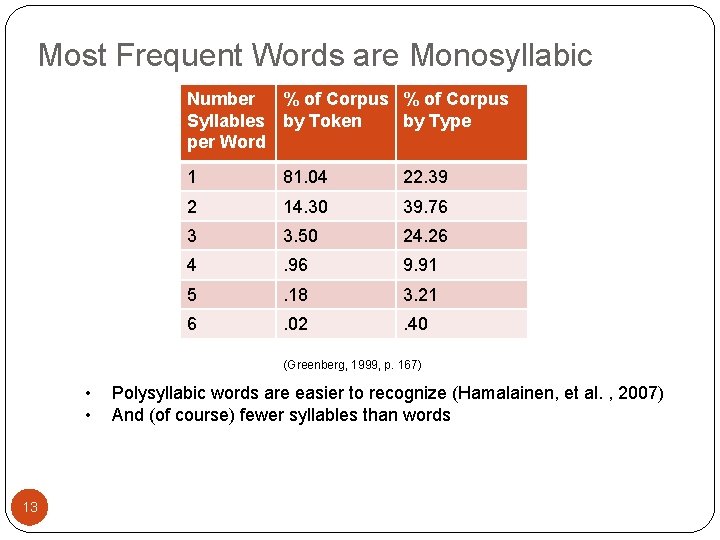

Syllables in LM: Why?

[Figure: Cumulative frequency as a function of frequency rank, Switchboard (Greenberg, 1999, p. 167)]

Most Frequent Words are Monosyllabic

| Number of syllables per word | % of corpus by token | % of corpus by type |
|---|---|---|
| 1 | 81.04 | 22.39 |
| 2 | 14.30 | 39.76 |
| 3 | 3.50 | 24.26 |
| 4 | .96 | 9.91 |
| 5 | .18 | 3.21 |
| 6 | .02 | .40 |

(Greenberg, 1999, p. 167)

- Polysyllabic words are easier to recognize (Hamalainen, et al., 2007)
- And (of course) there are fewer syllables than words

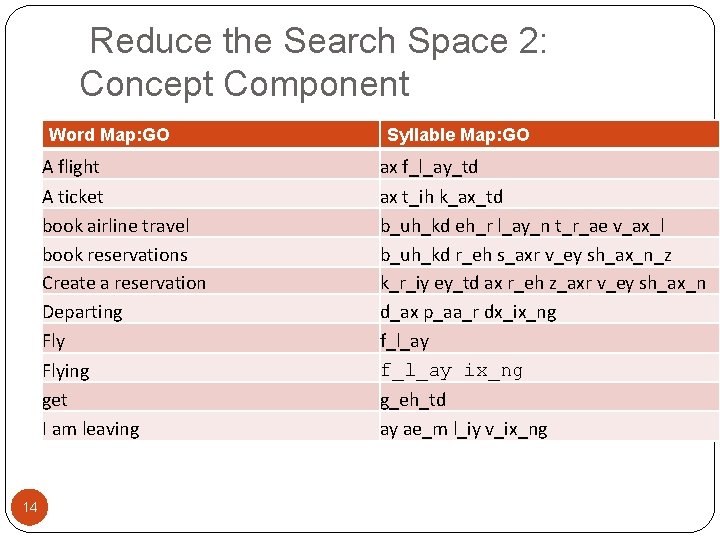

Reduce the Search Space 2: Concept Component

| Word map: GO | Syllable map: GO |
|---|---|
| A flight | ax f_l_ay_td |
| A ticket | ax t_ih k_ax_td |
| book airline travel | b_uh_kd eh_r l_ay_n t_r_ae v_ax_l |
| book reservations | b_uh_kd r_eh s_axr v_ey sh_ax_n_z |
| Create a reservation | k_r_iy ey_td ax r_eh z_axr v_ey sh_ax_n |
| Departing | d_ax p_aa_r dx_ix_ng |
| Flying | f_l_ay ix_ng |
| get | g_eh_td |
| I am leaving | ay ae_m l_iy v_ix_ng |

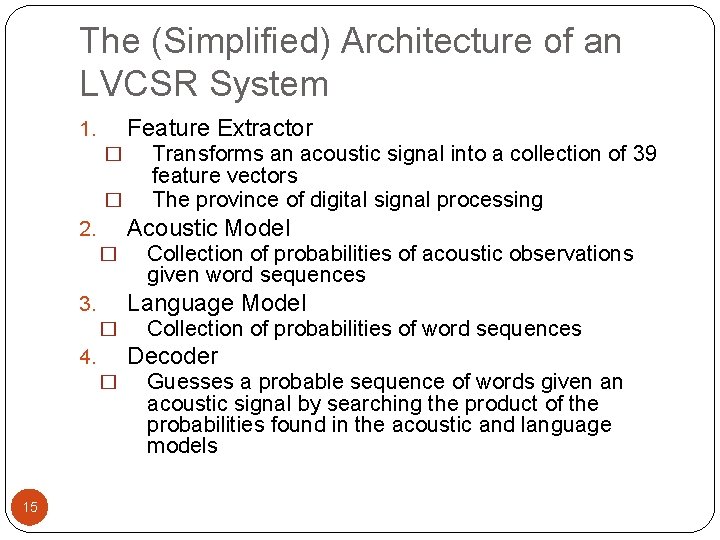

The (Simplified) Architecture of an LVCSR System

1. Feature Extractor
   - Transforms an acoustic signal into a sequence of feature vectors, each with 39 components
   - The province of digital signal processing
2. Acoustic Model
   - Collection of probabilities of acoustic observations given word sequences
3. Language Model
   - Collection of probabilities of word sequences
4. Decoder
   - Guesses a probable sequence of words given an acoustic signal by searching the product of the probabilities found in the acoustic and language models

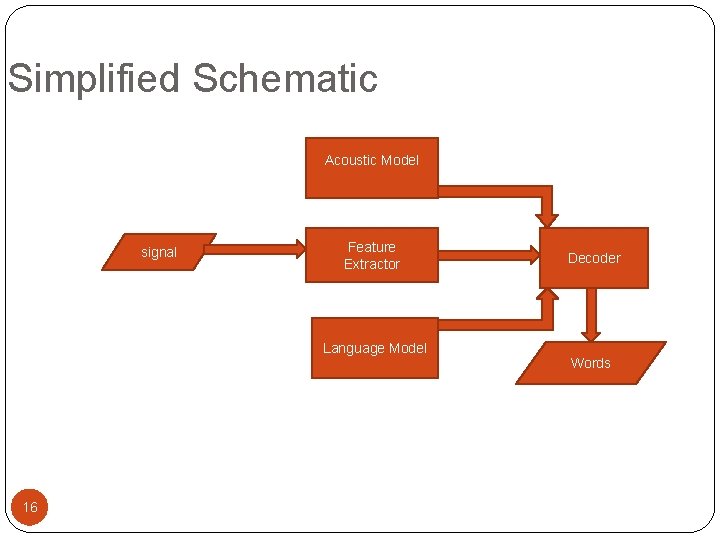

Simplified Schematic

[Figure: signal → Feature Extractor → Decoder → Words; the Acoustic Model and the Language Model both feed the Decoder]

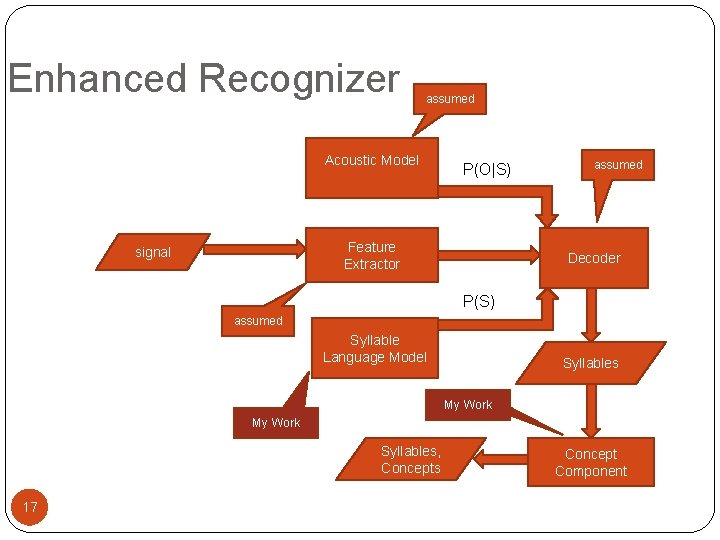

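Enhanced Recognizer

[Figure: signal → Feature Extractor → Decoder → Syllables → Concept Component → Syllables, Concepts; the Acoustic Model P(O|S) and the Syllable Language Model P(S) feed the Decoder. The Feature Extractor, Acoustic Model, and Decoder are labeled "assumed"; the Syllable Language Model and the Concept Component are labeled "My Work".]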

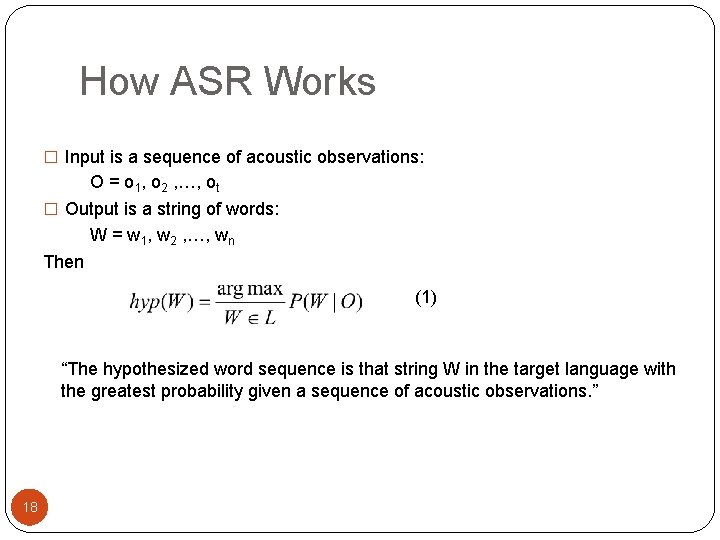

How ASR Works

- Input is a sequence of acoustic observations: $O = o_1, o_2, \ldots, o_t$
- Output is a string of words: $W = w_1, w_2, \ldots, w_n$

Then

$$\hat{W} = \operatorname*{argmax}_{W} P(W \mid O) \tag{1}$$

“The hypothesized word sequence is that string W in the target language with the greatest probability given a sequence of acoustic observations.”

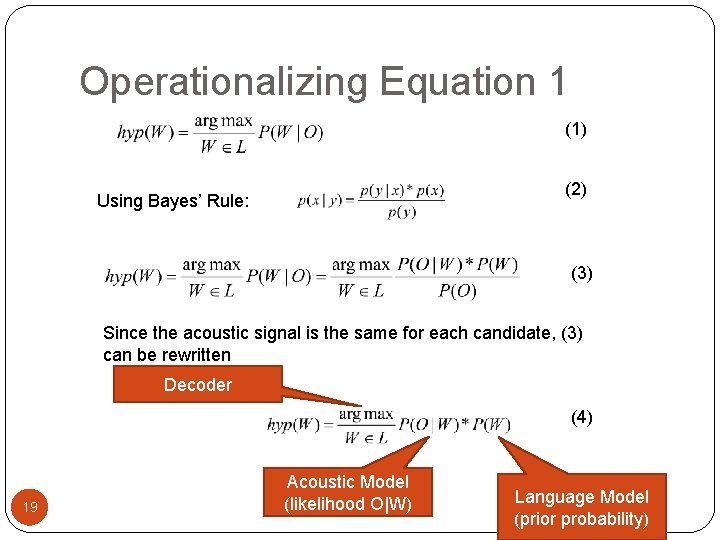

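Operationalizing Equation 1

$$\hat{W} = \operatorname*{argmax}_{W} P(W \mid O) \tag{1}$$

Using Bayes’ Rule:

$$P(W \mid O) = \frac{P(O \mid W)\,P(W)}{P(O)} \tag{2}$$

$$\hat{W} = \operatorname*{argmax}_{W} \frac{P(O \mid W)\,P(W)}{P(O)} \tag{3}$$

Since the acoustic signal is the same for each candidate, $P(O)$ is constant and (3) can be rewritten:

$$\hat{W} = \operatorname*{argmax}_{W} P(O \mid W)\,P(W) \tag{4}$$

In (4), the decoder performs the argmax, the acoustic model supplies the likelihood $P(O \mid W)$, and the language model supplies the prior probability $P(W)$.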
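The final form of the decoding rule, the argmax over W of P(O|W) · P(W), can be illustrated with a toy decoder. This is only a sketch: the two candidate strings reuse the Hendrix example, every probability below is invented for illustration, and a real decoder searches a very large space instead of enumerating candidates.

```python
import math

# Hypothetical scores for two candidate transcriptions of the same signal O.
# acoustic[W] stands in for the likelihood P(O|W); prior[W] stands in for P(W).
acoustic = {"kiss the sky": 0.020, "kiss this guy": 0.025}
prior = {"kiss the sky": 0.0010, "kiss this guy": 0.0002}


def decode(candidates):
    """Return the candidate W that maximizes log P(O|W) + log P(W)."""
    return max(candidates, key=lambda w: math.log(acoustic[w]) + math.log(prior[w]))


best = decode(list(acoustic))
print(best)
```

With these made-up numbers the acoustic model slightly prefers the misheard lyric, but the language model prior outweighs it. Working in log space avoids underflow when many small probabilities are multiplied.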

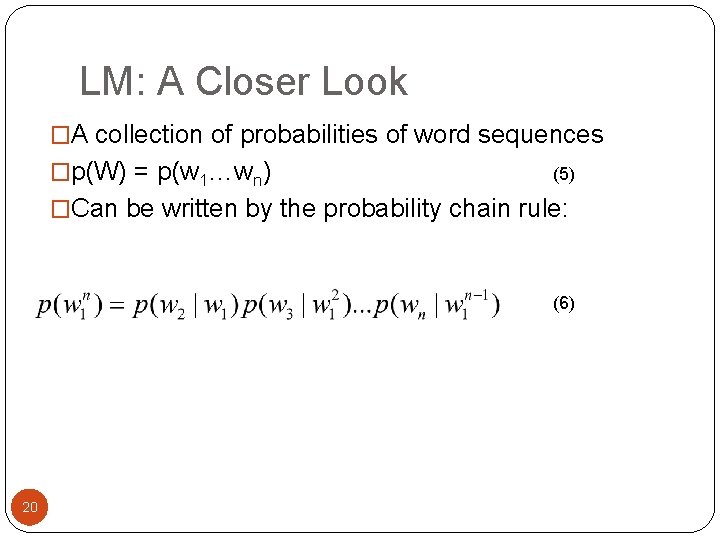

LM: A Closer Look

- A collection of probabilities of word sequences
- $p(W) = p(w_1 \ldots w_n)$ (5)
- Can be written by the probability chain rule:

$$p(w_1 \ldots w_n) = \prod_{k=1}^{n} p(w_k \mid w_1 \ldots w_{k-1}) \tag{6}$$

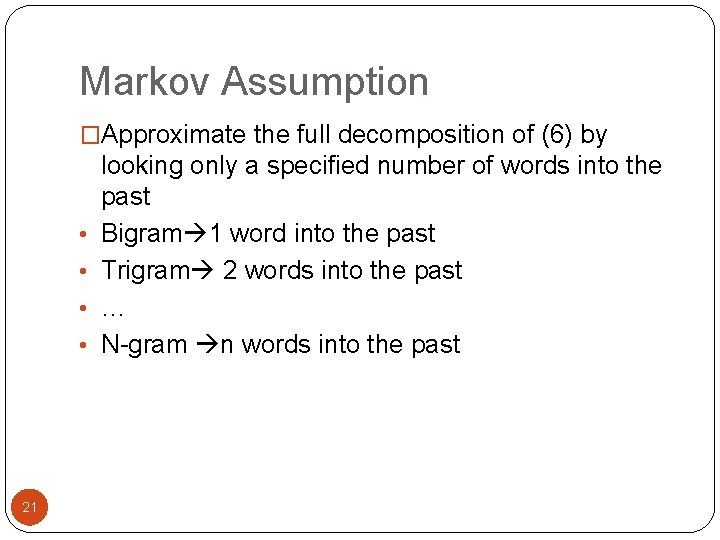

Markov Assumption

- Approximate the full decomposition of (6) by looking only a specified number of words into the past
  - Bigram: 1 word into the past
  - Trigram: 2 words into the past
  - …
  - N-gram: N-1 words into the past

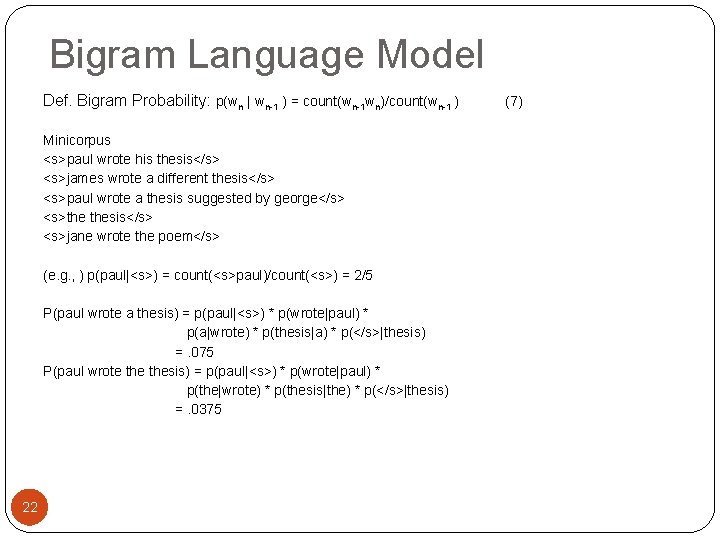

Bigram Language Model

Def. Bigram probability:

$$p(w_n \mid w_{n-1}) = \frac{\mathrm{count}(w_{n-1}\,w_n)}{\mathrm{count}(w_{n-1})} \tag{7}$$

Minicorpus:

- `<s>paul wrote his thesis</s>`
- `<s>james wrote a different thesis</s>`
- `<s>paul wrote a thesis suggested by george</s>`
- `<s>the thesis</s>`
- `<s>jane wrote the poem</s>`

E.g., `p(paul|<s>) = count(<s>paul)/count(<s>) = 2/5`

- `P(paul wrote a thesis) = p(paul|<s>) * p(wrote|paul) * p(a|wrote) * p(thesis|a) * p(</s>|thesis) = .075`
- `P(paul wrote the thesis) = p(paul|<s>) * p(wrote|paul) * p(the|wrote) * p(thesis|the) * p(</s>|thesis) = .0375`
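The two sentence probabilities can be checked with a few lines of Python. This is a minimal sketch of an unsmoothed maximum-likelihood bigram model over the minicorpus; the function names are illustrative, not taken from the original system.

```python
from collections import Counter

corpus = [
    "paul wrote his thesis",
    "james wrote a different thesis",
    "paul wrote a thesis suggested by george",
    "the thesis",
    "jane wrote the poem",
]

# Count unigrams and bigrams over sentences padded with <s> and </s>.
unigrams, bigrams = Counter(), Counter()
for sentence in corpus:
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    unigrams.update(tokens)
    bigrams.update(zip(tokens, tokens[1:]))


def bigram_prob(word, prev):
    """Maximum-likelihood estimate p(word | prev) = count(prev word) / count(prev)."""
    return bigrams[(prev, word)] / unigrams[prev]


def sentence_prob(sentence):
    """Product of bigram probabilities, including the <s> and </s> markers."""
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    prob = 1.0
    for prev, word in zip(tokens, tokens[1:]):
        prob *= bigram_prob(word, prev)
    return prob


p_a = sentence_prob("paul wrote a thesis")      # about .075
p_the = sentence_prob("paul wrote the thesis")  # about .0375
print(p_a, p_the)
```

Because nothing is smoothed, any sentence containing an unseen bigram gets probability zero, and a sentence containing an unseen word raises a division-by-zero error; a practical language model would add smoothing or backoff.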

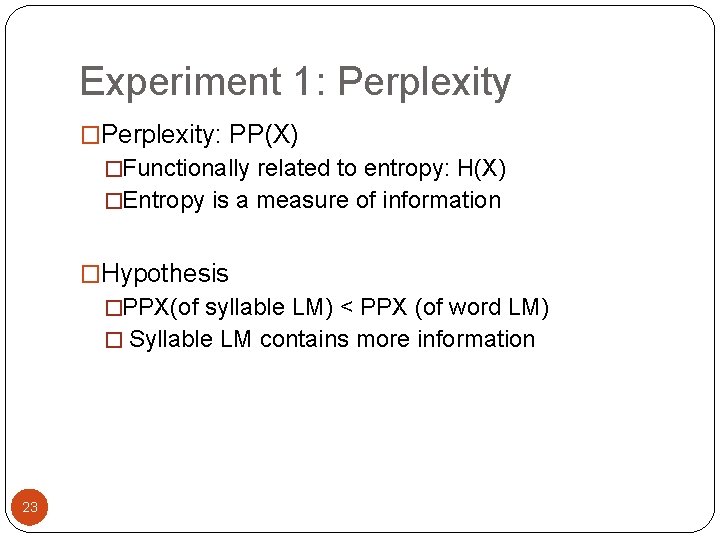

Experiment 1: Perplexity

- Perplexity: PP(X)
- Functionally related to entropy: H(X)
  - Entropy is a measure of information
- Hypothesis
  - PP(syllable LM) < PP(word LM)
  - Syllable LM contains more information

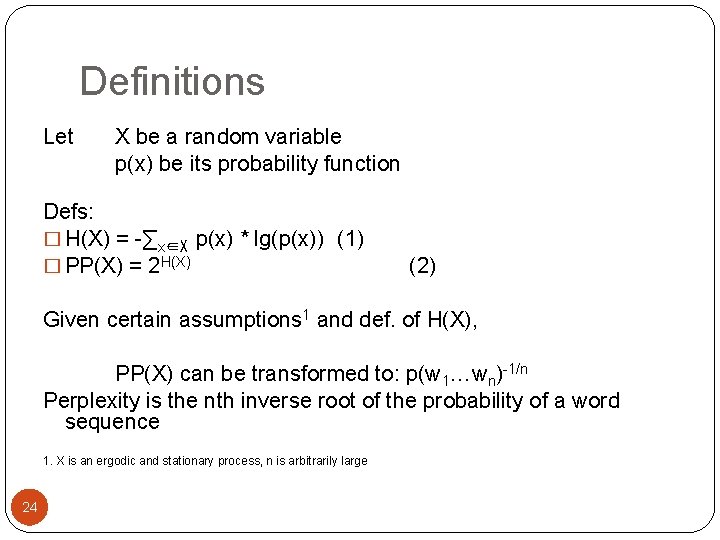

Definitions

Let $X$ be a random variable and $p(x)$ its probability function.

Defs:

$$H(X) = -\sum_{x \in X} p(x)\,\lg p(x) \tag{1}$$

$$PP(X) = 2^{H(X)} \tag{2}$$

Given certain assumptions¹ and the definition of $H(X)$, $PP(X)$ can be transformed to:

$$PP = p(w_1 \ldots w_n)^{-1/n}$$

Perplexity is the inverse nth root of the probability of a word sequence.

1. X is an ergodic and stationary process, n is arbitrarily large.
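The step from the two definitions to the inverse-root form can be sketched as follows. It assumes, as the footnote does, an ergodic and stationary process, so that the per-word entropy can be estimated from a single long sequence of length n:

$$H \approx -\frac{1}{n}\,\lg p(w_1 \ldots w_n)$$

$$PP = 2^{H} \approx 2^{-\frac{1}{n}\lg p(w_1 \ldots w_n)} = p(w_1 \ldots w_n)^{-1/n}$$

The first line is the standard per-word entropy estimate under those assumptions; the second simply substitutes it into the definition of perplexity.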

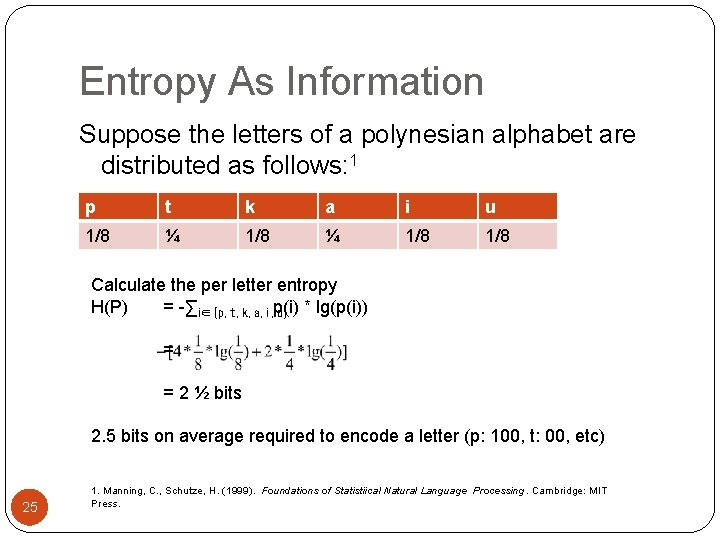

Entropy As Information

Suppose the letters of a Polynesian alphabet are distributed as follows:¹

| p | t | k | a | i | u |
|---|---|---|---|---|---|
| 1/8 | 1/4 | 1/8 | 1/4 | 1/8 | 1/8 |

Calculate the per-letter entropy:

$$H(P) = -\sum_{i \in \{p, t, k, a, i, u\}} p(i)\,\lg p(i) = -\left[4 \cdot \tfrac{1}{8}\lg\tfrac{1}{8} + 2 \cdot \tfrac{1}{4}\lg\tfrac{1}{4}\right] = 2\tfrac{1}{2} \text{ bits}$$

On average, 2.5 bits are required to encode a letter (p: 100, t: 00, etc.)

1. Manning, C., Schutze, H. (1999). Foundations of Statistical Natural Language Processing. Cambridge: MIT Press.
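The 2.5-bit figure can be reproduced directly. The sketch below assumes the letter distribution from the Manning and Schutze example (1/8 for p, k, i, u and 1/4 for t, a); the code words for k, a, i, and u are likewise assumptions, since the slide spells out only p and t.

```python
import math

# Assumed letter distribution (Manning & Schutze's Polynesian example).
letter_probs = {"p": 1 / 8, "t": 1 / 4, "k": 1 / 8, "a": 1 / 4, "i": 1 / 8, "u": 1 / 8}

# Assumed prefix code: frequent letters get 2 bits, rare letters get 3 bits.
code = {"p": "100", "t": "00", "k": "101", "a": "01", "i": "110", "u": "111"}


def entropy(probs):
    """H = -sum p * lg p, in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


h = entropy(letter_probs.values())
avg_code_length = sum(letter_probs[c] * len(code[c]) for c in letter_probs)
print(h, avg_code_length)  # 2.5 2.5
```

The average code length equals the entropy here because every probability is a power of two, so each letter can be given a code of exactly lg(1/p) bits.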

Reducing the Entropy

Suppose:

- This language consists entirely of CV syllables
- We know their distribution

We can compute the conditional entropy of syllables in the language:

- $H(V \mid C)$, where $V \in \{a, i, u\}$ and $C \in \{p, t, k\}$
- Entropy of a syllable, $H(C) + H(V \mid C)$: 2.44 bits
- Entropy for two letters, letter model: 5 bits

Conclusion: The syllable model contains more information than the letter model
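A sketch of the syllable-entropy calculation follows. The slide does not give the syllable distribution, so the joint table below is an assumption taken from the Manning and Schutze example that the preceding slide cites. Under that table, the 2.44-bit figure is the entropy of a whole syllable, H(C) + H(V|C), to be compared with 2 × 2.5 = 5 bits for two independently modeled letters.

```python
import math

# Assumed joint distribution P(C, V) over CV syllables (Manning & Schutze example).
joint = {
    ("p", "a"): 1 / 16, ("t", "a"): 3 / 8,  ("k", "a"): 1 / 16,
    ("p", "i"): 1 / 16, ("t", "i"): 3 / 16, ("k", "i"): 0.0,
    ("p", "u"): 0.0,    ("t", "u"): 3 / 16, ("k", "u"): 1 / 16,
}


def entropy(probs):
    """H = -sum p * lg p, in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Marginal distribution over consonants: P(C) = sum over V of P(C, V).
p_c = {}
for (consonant, _vowel), p in joint.items():
    p_c[consonant] = p_c.get(consonant, 0.0) + p

h_c = entropy(p_c.values())        # H(C)
h_syllable = entropy(joint.values())  # H(C, V)
h_v_given_c = h_syllable - h_c     # chain rule: H(V|C) = H(C, V) - H(C)

print(round(h_c, 3), round(h_v_given_c, 3), round(h_syllable, 2))
```

Knowing the consonant narrows the choice of vowel, which is why the syllable entropy falls well below the 5 bits that two independent letters would need.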

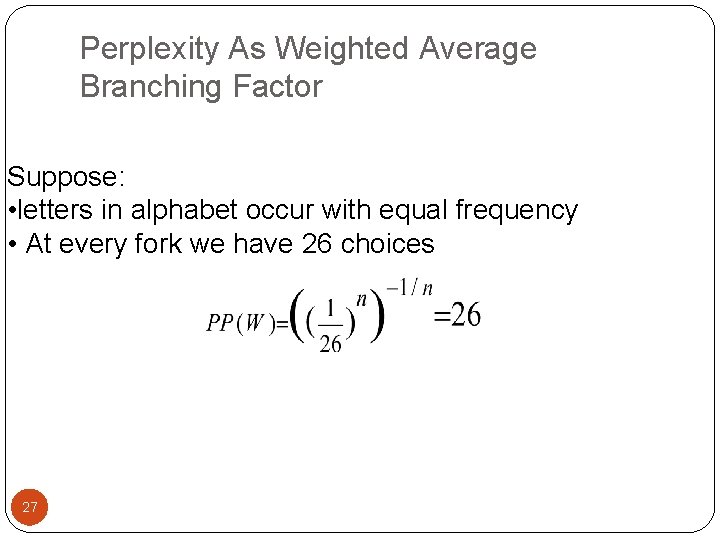

Perplexity As Weighted Average Branching Factor

Suppose:

- Letters in the alphabet occur with equal frequency
- At every fork we have 26 choices

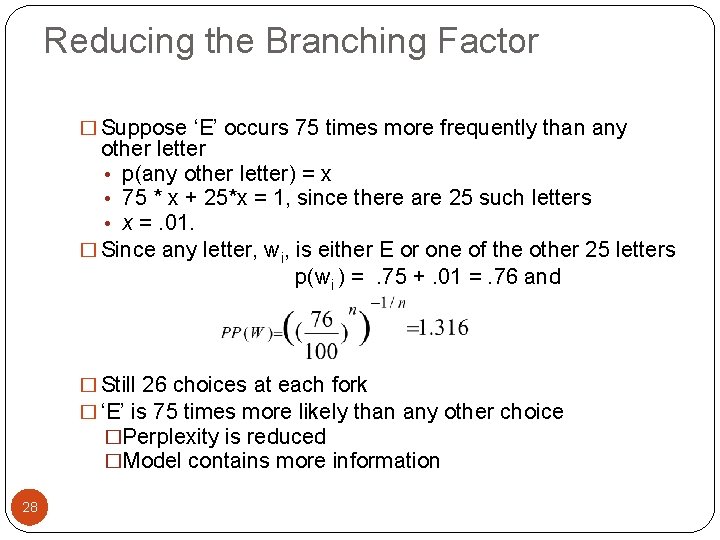

Reducing the Branching Factor

- Suppose ‘E’ occurs 75 times more frequently than any other letter
  - p(any other letter) = x
  - 75 * x + 25 * x = 1, since there are 25 such letters
  - x = .01
- Since any letter, w_i, is either E or one of the other 25 letters, p(w_i) = .75 + .01 = .76
- Still 26 choices at each fork
- ‘E’ is 75 times more likely than any other choice
- Perplexity is reduced
- Model contains more information
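The drop in branching factor can also be checked numerically. The sketch below applies the definition PP = 2^H to the full 26-letter distribution in each case; this is one way to quantify the reduction and is not necessarily the calculation used on the slide.

```python
import math


def perplexity(probs):
    """PP = 2 ** H, where H is the entropy of the distribution in bits."""
    h = -sum(p * math.log2(p) for p in probs if p > 0)
    return 2 ** h


uniform = [1 / 26] * 26        # every letter equally likely
skewed = [0.75] + [0.01] * 25  # 'E' at .75, each other letter at .01

pp_uniform = perplexity(uniform)
pp_skewed = perplexity(skewed)
print(pp_uniform, pp_skewed)
```

Under these assumptions the uniform alphabet has a perplexity of 26, while the skewed one comes out a little under 4: there are still 26 branches at every fork, but on a weighted average it behaves like a choice among about four.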

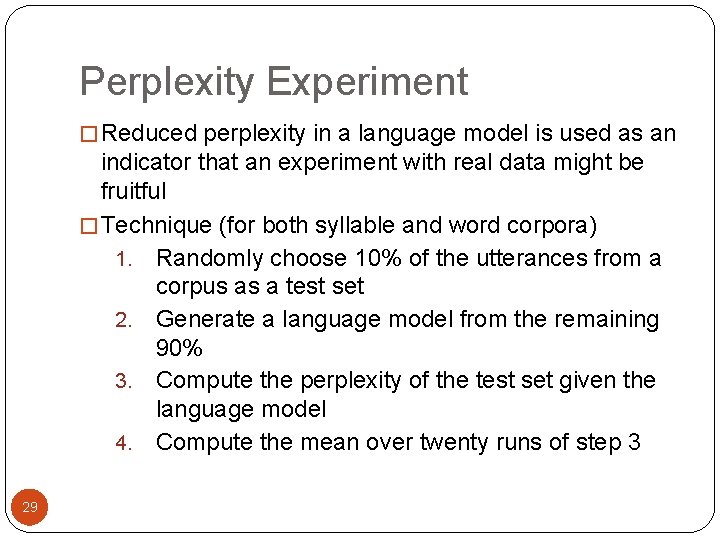

Perplexity Experiment

- Reduced perplexity in a language model is used as an indicator that an experiment with real data might be fruitful
- Technique (for both syllable and word corpora):
  1. Randomly choose 10% of the utterances from a corpus as a test set
  2. Generate a language model from the remaining 90%
  3. Compute the perplexity of the test set given the language model
  4. Compute the mean over twenty runs of steps 1–3
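The four steps can be sketched in Python as below. Several things here are assumptions made only for illustration: the model is a bigram model, add-one smoothing is used so that unseen bigrams do not yield zero probability (the slides do not say which smoothing was used), the vocabulary is taken from the whole corpus, and the toy utterances stand in for a real corpus.

```python
import math
import random
from collections import Counter


def train_bigram(utterances):
    """Count unigrams and bigrams over utterances padded with <s> and </s>."""
    unigrams, bigrams = Counter(), Counter()
    for utt in utterances:
        tokens = ["<s>"] + utt.split() + ["</s>"]
        unigrams.update(tokens)
        bigrams.update(zip(tokens, tokens[1:]))
    return unigrams, bigrams


def perplexity(test, unigrams, bigrams, vocab_size):
    """Perplexity p(w_1...w_n) ** (-1/n), with add-one smoothing (an assumption)."""
    log_prob, n = 0.0, 0
    for utt in test:
        tokens = ["<s>"] + utt.split() + ["</s>"]
        for prev, word in zip(tokens, tokens[1:]):
            p = (bigrams[(prev, word)] + 1) / (unigrams[prev] + vocab_size)
            log_prob += math.log2(p)
            n += 1
    return 2 ** (-log_prob / n)


def mean_perplexity(utterances, runs=20, test_fraction=0.1, seed=0):
    """Steps 1-4: random 10% hold-out, train on the rest, score, average over runs."""
    rng = random.Random(seed)
    vocab_size = len({w for u in utterances for w in u.split()} | {"<s>", "</s>"})
    results = []
    for _ in range(runs):
        shuffled = utterances[:]
        rng.shuffle(shuffled)
        cut = max(1, int(len(shuffled) * test_fraction))
        test, train = shuffled[:cut], shuffled[cut:]
        unigrams, bigrams = train_bigram(train)
        results.append(perplexity(test, unigrams, bigrams, vocab_size))
    return sum(results) / len(results)


# Toy corpus, invented for illustration only.
toy = [
    "i want a flight", "i need a ticket", "i want a ticket", "give me a flight",
    "i need a flight", "book a flight", "book a ticket", "i want to book a flight",
    "give me a ticket", "i need to book a ticket",
]
result = mean_perplexity(toy)
print(result)
```

Running the same function once on a word-segmented corpus and once on its syllabified counterpart gives the two numbers the hypothesis compares.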

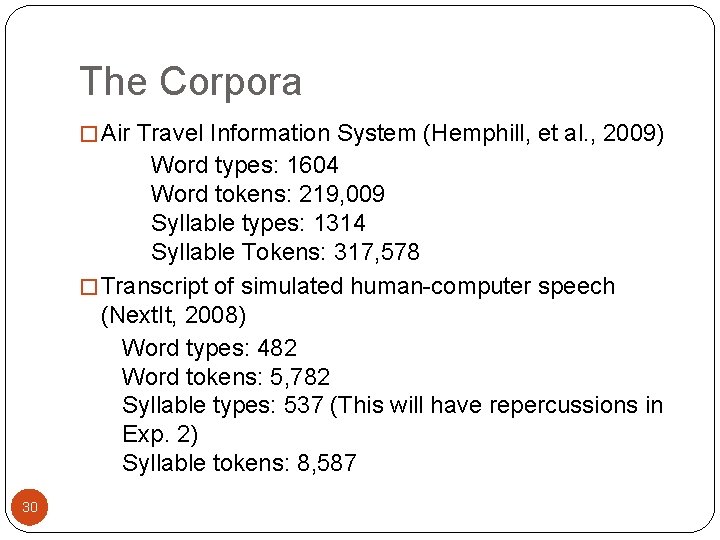

The Corpora

- Air Travel Information System (Hemphill, et al., 2009)
  - Word types: 1604
  - Word tokens: 219,009
  - Syllable types: 1314
  - Syllable tokens: 317,578
- Transcript of simulated human-computer speech (Next.It, 2008)
  - Word types: 482
  - Word tokens: 5,782
  - Syllable types: 537 (This will have repercussions in Exp. 2)
  - Syllable tokens: 8,587

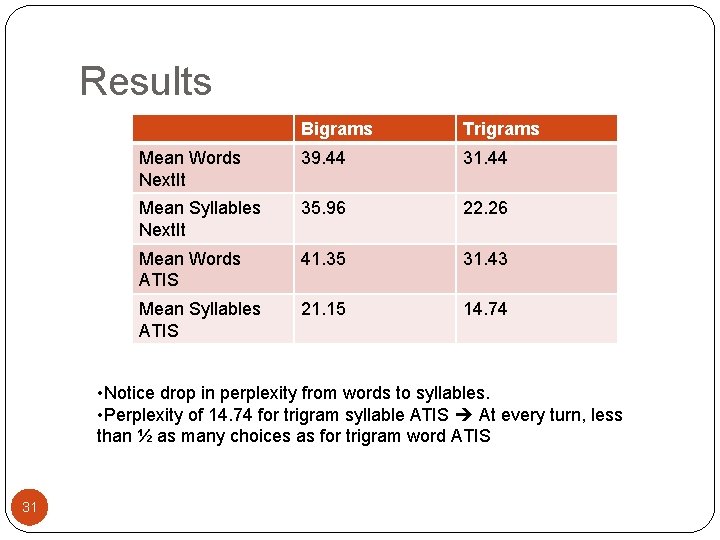

Results

| | Bigrams | Trigrams |
|---|---|---|
| Mean Words Next.It | 39.44 | 31.44 |
| Mean Syllables Next.It | 35.96 | 22.26 |
| Mean Words ATIS | 41.35 | 31.43 |
| Mean Syllables ATIS | 21.15 | 14.74 |

- Notice the drop in perplexity from words to syllables.
- Perplexity of 14.74 for trigram syllable ATIS: at every turn, less than ½ as many choices as for trigram word ATIS

Experiment 2: Syllables in the Language Model

- Hypothesis:
  - A syllable language model will perform better than a word-based language model
- By what measure?

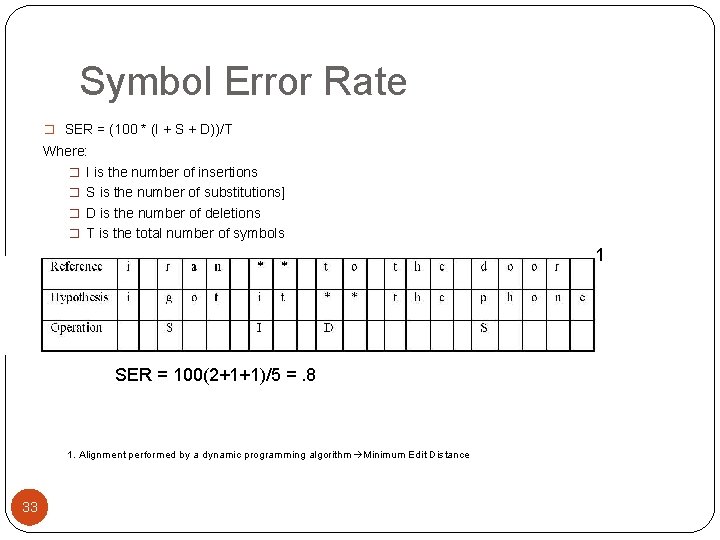

Symbol Error Rate

SER = (100 * (I + S + D)) / T

Where:

- I is the number of insertions
- S is the number of substitutions
- D is the number of deletions
- T is the total number of symbols

Example:¹ SER = 100 * (2 + 1 + 1) / 5 = 80

1. Alignment performed by a dynamic programming algorithm: Minimum Edit Distance
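A minimal sketch of the SER computation follows. It aligns a reference and a hypothesis with a standard minimum-edit-distance dynamic program and counts each error type. Two assumptions: T is taken to be the number of symbols in the reference, and the syllable strings in the example are invented for illustration.

```python
def edit_counts(ref, hyp):
    """Return (insertions, substitutions, deletions) for a minimum-cost alignment."""
    rows, cols = len(ref) + 1, len(hyp) + 1
    # dp[i][j] = (cost, ins, sub, dele) for aligning ref[:i] with hyp[:j]
    dp = [[None] * cols for _ in range(rows)]
    dp[0][0] = (0, 0, 0, 0)
    for i in range(1, rows):
        dp[i][0] = (i, 0, 0, i)  # delete every reference symbol
    for j in range(1, cols):
        dp[0][j] = (j, j, 0, 0)  # insert every hypothesis symbol
    for i in range(1, rows):
        for j in range(1, cols):
            cost, ins, sub, dele = dp[i - 1][j - 1]
            if ref[i - 1] == hyp[j - 1]:
                diagonal = (cost, ins, sub, dele)          # match, no error
            else:
                diagonal = (cost + 1, ins, sub + 1, dele)  # substitution
            cost, ins, sub, dele = dp[i - 1][j]
            deletion = (cost + 1, ins, sub, dele + 1)
            cost, ins, sub, dele = dp[i][j - 1]
            insertion = (cost + 1, ins + 1, sub, dele)
            dp[i][j] = min(diagonal, deletion, insertion, key=lambda t: t[0])
    _, ins, sub, dele = dp[-1][-1]
    return ins, sub, dele


def symbol_error_rate(ref, hyp):
    """SER = 100 * (I + S + D) / T, with T = number of reference symbols."""
    ins, sub, dele = edit_counts(ref, hyp)
    return 100 * (ins + sub + dele) / len(ref)


# Illustrative syllable strings: one substitution and one deletion.
ref = "ay w_aa_n_td ax t_ih k_ax_td".split()
hyp = "ay n_iy_dd ax k_ax_td".split()
ser = symbol_error_rate(ref, hyp)
print(edit_counts(ref, hyp), ser)  # (0, 1, 1) 40.0
```

When several alignments share the minimum cost, the split between insertions, substitutions, and deletions depends on tie-breaking, but the total and therefore the SER do not.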

Technique

- Phonetically transcribe corpus and reference files
- Syllabify corpus and reference files
- Build language models
- Run a recognizer on 18 short human-computer telephone monologues
- Compute mean, median, and standard deviation of SER for 1-gram, 2-gram, 3-gram, and 4-gram models over all monologues

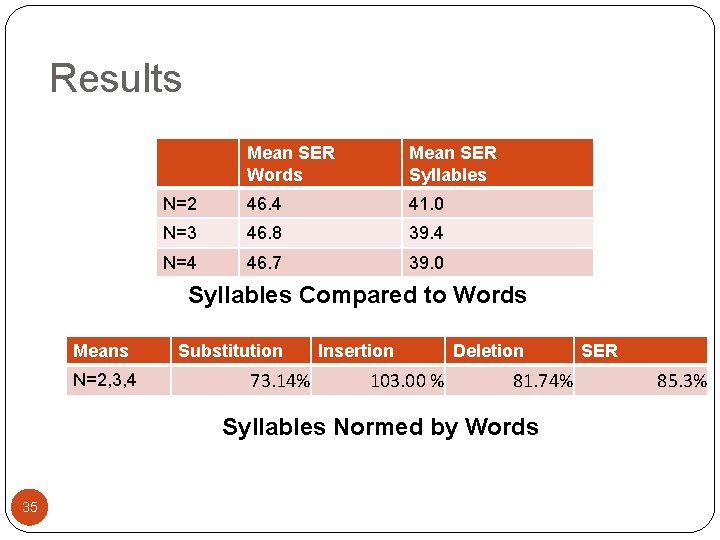

Results

| | Mean SER Words | Mean SER Syllables |
|---|---|---|
| N=2 | 46.4 | 41.0 |
| N=3 | 46.8 | 39.4 |
| N=4 | 46.7 | 39.0 |

Syllables compared to words (syllables normed by words), means over N=2, 3, 4:

| Substitution | Insertion | Deletion | SER |
|---|---|---|---|
| 73.14% | 103.00% | 81.74% | 85.3% |

Experiment 3: A Concept Component

- Hypothesis:
  - A recognizer equipped with a post-processor that transforms syllable output to syllable/concept output will perform better than one not equipped with such a processor

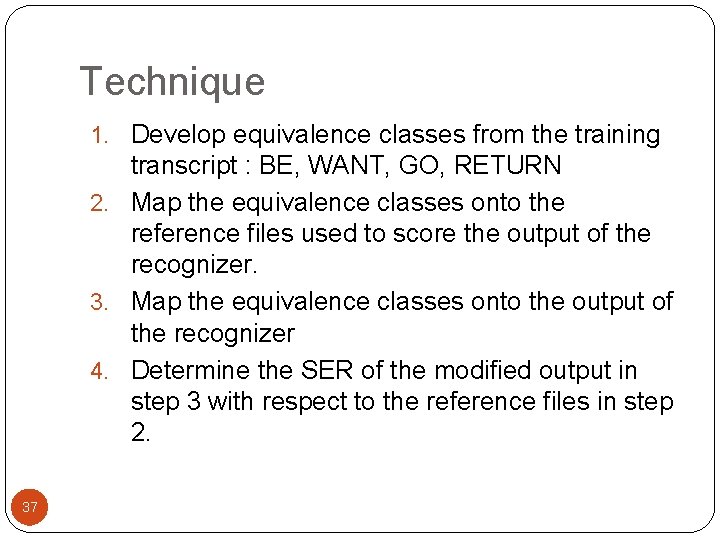

Technique

1. Develop equivalence classes from the training transcript: BE, WANT, GO, RETURN
2. Map the equivalence classes onto the reference files used to score the output of the recognizer
3. Map the equivalence classes onto the output of the recognizer
4. Determine the SER of the modified output in step 3 with respect to the reference files in step 2

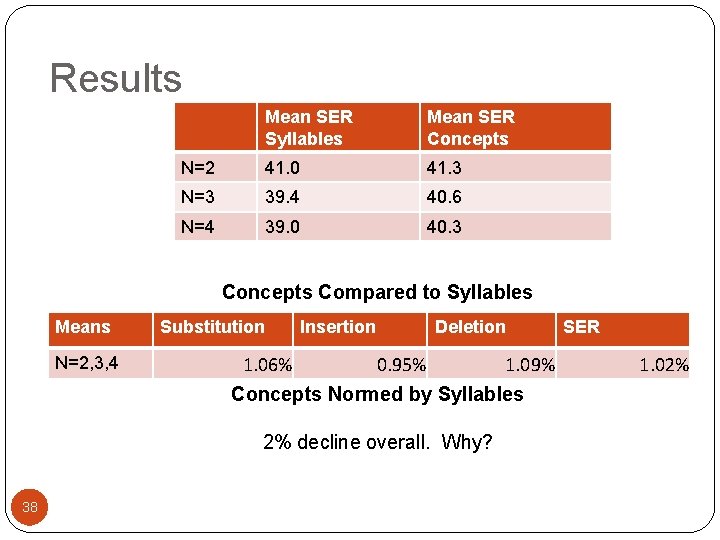

Results

| | Mean SER Syllables | Mean SER Concepts |
|---|---|---|
| N=2 | 41.0 | 41.3 |
| N=3 | 39.4 | 40.6 |
| N=4 | 39.0 | 40.3 |

Concepts compared to syllables (concepts normed by syllables), means over N=2, 3, 4:

| Substitution | Insertion | Deletion | SER |
|---|---|---|---|
| 106% | 95% | 109% | 102% |

2% decline overall. Why?

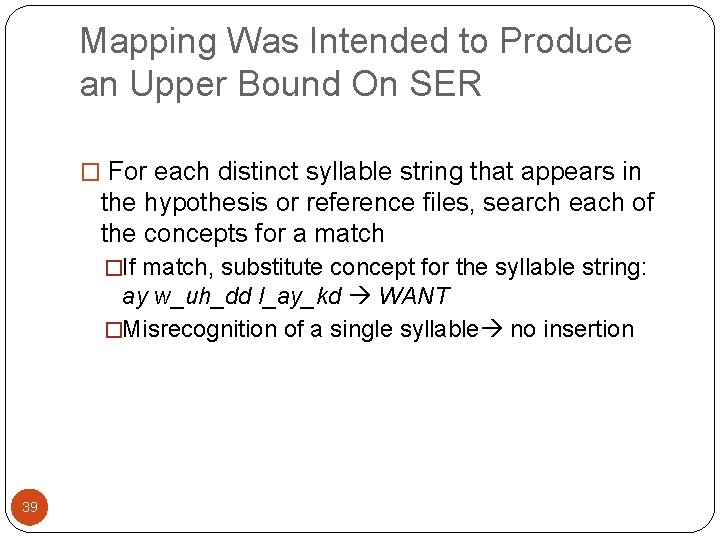

Mapping Was Intended to Produce an Upper Bound On SER

- For each distinct syllable string that appears in the hypothesis or reference files, search each of the concepts for a match
- If match, substitute concept for the syllable string: ay w_uh_dd l_ay_kd → WANT
- Misrecognition of a single syllable → no insertion
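The mapping step can be sketched as a search-and-substitute over syllable sequences. The concept map below uses a few syllable strings from the GO and WANT classes shown earlier; the longest-match-first policy and the trailing syllables in the example hypothesis are assumptions made for illustration, not a description of the original software.

```python
# Concept map: each concept lists syllable strings that express it.
CONCEPTS = {
    "WANT": ["ay w_uh_dd l_ay_kd"],
    "GO": ["ax f_l_ay_td", "ax t_ih k_ax_td", "g_eh_td"],
}


def map_concepts(syllables, concepts=CONCEPTS):
    """Replace each matching syllable string with its concept label.

    Longer patterns are tried first (an assumption) so that a short pattern
    cannot pre-empt a longer one that starts at the same position.
    """
    patterns = sorted(
        ((s.split(), name) for name, strings in concepts.items() for s in strings),
        key=lambda item: len(item[0]),
        reverse=True,
    )
    out, i = [], 0
    while i < len(syllables):
        for pattern, name in patterns:
            if syllables[i:i + len(pattern)] == pattern:
                out.append(name)
                i += len(pattern)
                break
        else:
            # No concept matched at this position: keep the syllable unchanged.
            out.append(syllables[i])
            i += 1
    return out


hyp = "ay w_uh_dd l_ay_kd ax t_ih k_ax_td t_uw s_iy ae dx_ax_l".split()
mapped = map_concepts(hyp)
print(mapped)  # ['WANT', 'GO', 't_uw', 's_iy', 'ae', 'dx_ax_l']
```

Because the match is exact, a single misrecognized syllable inside a concept string means the concept is never inserted, which is the behavior the last bullet describes.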

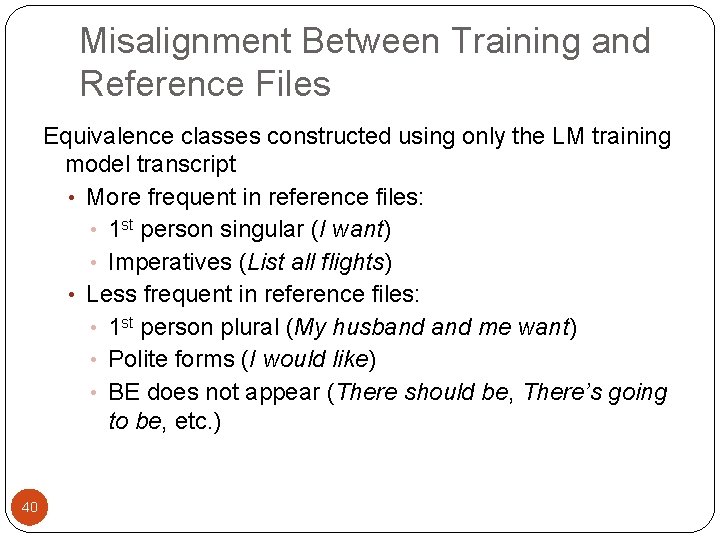

Misalignment Between Training and Reference Files

Equivalence classes constructed using only the LM training model transcript

- More frequent in reference files:
  - 1st person singular (I want)
  - Imperatives (List all flights)
- Less frequent in reference files:
  - 1st person plural (My husband and I want)
  - Polite forms (I would like)
  - BE does not appear (There should be, There’s going to be, etc.)

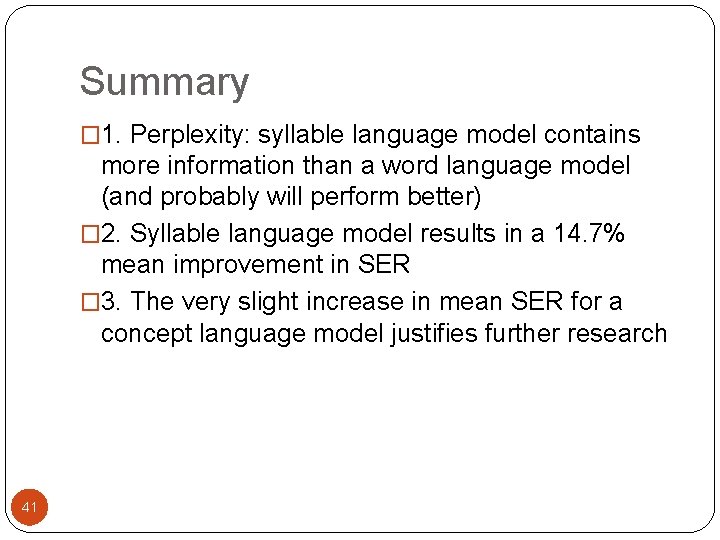

Summary

1. Perplexity: syllable language model contains more information than a word language model (and probably will perform better)
2. Syllable language model results in a 14.7% mean improvement in SER
3. The very slight increase in mean SER for a concept language model justifies further research

Further Research

1. Test the given system over a large production corpus
2. Develop a probabilistic concept language model
3. Develop necessary software to pass the output of the concept language model on to an expert system

The (Almost, Almost) Last Word

“But it must be recognized that the notion ‘probability of a sentence’ is an entirely useless one under any known interpretation of the term.”

Cited in Jurafsky and Martin (2009) from a 1969 essay on Quine.

The (Almost) Last Word

He just never thought to count.

The Last Word

Thanks to my generous committee:

- Bill Croft, Department of Linguistics
- George Luger, Department of Computer Science
- Caroline Smith, Department of Linguistics
- Chuck Wooters, U.S. Department of Defense

References

- Cover, T., Thomas, J. (1991). Elements of Information Theory. Hoboken, NJ: John Wiley & Sons.
- Greenberg, S. (1999). Speaking in shorthand – A syllable-centric perspective for understanding pronunciation variation. Speech Communication, 29, 159-176.
- Hemphill, C., Godfrey, J., Doddington, G. (2009). The ATIS Spoken Language Systems Pilot Corpus. Retrieved 6/17/09 from: http://www.ldc.upenn.edu/Catalog/readme_files/atis/sspcrd/corpus.html
- Hamalainen, A., Boves, L., de Veth, J., ten Bosch, L. (2007). On the utility of syllable-based acoustic models for pronunciation variation modeling. EURASIP Journal on Audio, Speech, and Music Processing, 46460, 1-11.
- Jurafsky, D., Martin, J. (2000). Speech and Language Processing: An Introduction to Natural Language Processing, Computational Linguistics, and Speech Recognition. Upper Saddle River, NJ: Prentice Hall.
- Jurafsky, D., Martin, J. (2009). Speech and Language Processing: An Introduction to Natural Language Processing, Computational Linguistics, and Speech Recognition. Upper Saddle River, NJ: Prentice Hall.
- Manning, C., Schutze, H. (1999). Foundations of Statistical Natural Language Processing. Cambridge: MIT Press.
- Next.It. (2008). Retrieved 4/5/08 from: http://www.nextit.com.
- NIST. (2007). Syllabification software. National Institute of Standards: NIST Spoken Language Technology Evaluation and Utility. Retrieved 11/30/07 from: http://www.nist.gov/speech/tools/.

Additional Slides

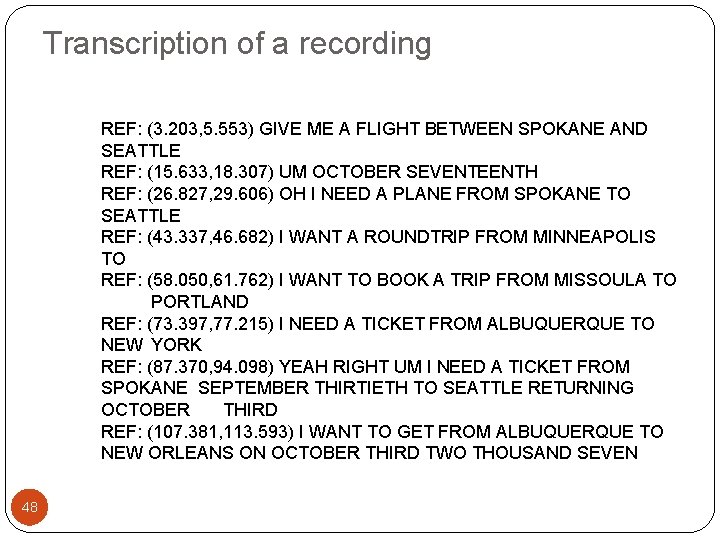

Transcription of a recording

- REF: (3.203, 5.553) GIVE ME A FLIGHT BETWEEN SPOKANE AND SEATTLE
- REF: (15.633, 18.307) UM OCTOBER SEVENTEENTH
- REF: (26.827, 29.606) OH I NEED A PLANE FROM SPOKANE TO SEATTLE
- REF: (43.337, 46.682) I WANT A ROUNDTRIP FROM MINNEAPOLIS TO
- REF: (58.050, 61.762) I WANT TO BOOK A TRIP FROM MISSOULA TO PORTLAND
- REF: (73.397, 77.215) I NEED A TICKET FROM ALBUQUERQUE TO NEW YORK
- REF: (87.370, 94.098) YEAH RIGHT UM I NEED A TICKET FROM SPOKANE SEPTEMBER THIRTIETH TO SEATTLE RETURNING OCTOBER THIRD
- REF: (107.381, 113.593) I WANT TO GET FROM ALBUQUERQUE TO NEW ORLEANS ON OCTOBER THIRD TWO THOUSAND SEVEN

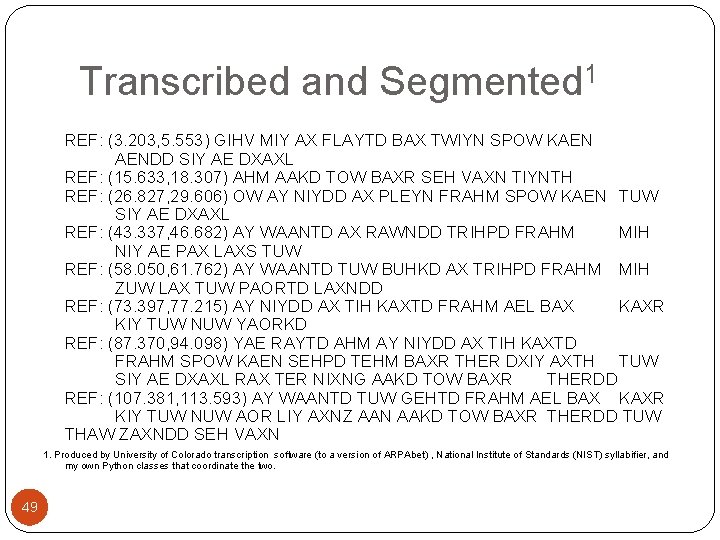

Transcribed and Segmented¹

- REF: (3.203, 5.553) GIHV MIY AX FLAYTD BAX TWIYN SPOW KAEN AENDD SIY AE DXAXL
- REF: (15.633, 18.307) AHM AAKD TOW BAXR SEH VAXN TIYNTH
- REF: (26.827, 29.606) OW AY NIYDD AX PLEYN FRAHM SPOW KAEN TUW SIY AE DXAXL
- REF: (43.337, 46.682) AY WAANTD AX RAWNDD TRIHPD FRAHM MIH NIY AE PAX LAXS TUW
- REF: (58.050, 61.762) AY WAANTD TUW BUHKD AX TRIHPD FRAHM MIH ZUW LAX TUW PAORTD LAXNDD
- REF: (73.397, 77.215) AY NIYDD AX TIH KAXTD FRAHM AEL BAX KAXR KIY TUW NUW YAORKD
- REF: (87.370, 94.098) YAE RAYTD AHM AY NIYDD AX TIH KAXTD FRAHM SPOW KAEN SEHPD TEHM BAXR THER DXIY AXTH TUW SIY AE DXAXL RAX TER NIXNG AAKD TOW BAXR THERDD
- REF: (107.381, 113.593) AY WAANTD TUW GEHTD FRAHM AEL BAX KAXR KIY TUW NUW AOR LIY AXNZ AAN AAKD TOW BAXR THERDD TUW THAW ZAXNDD SEH VAXN

1. Produced by University of Colorado transcription software (to a version of ARPAbet), the National Institute of Standards (NIST) syllabifier, and my own Python classes that coordinate the two.

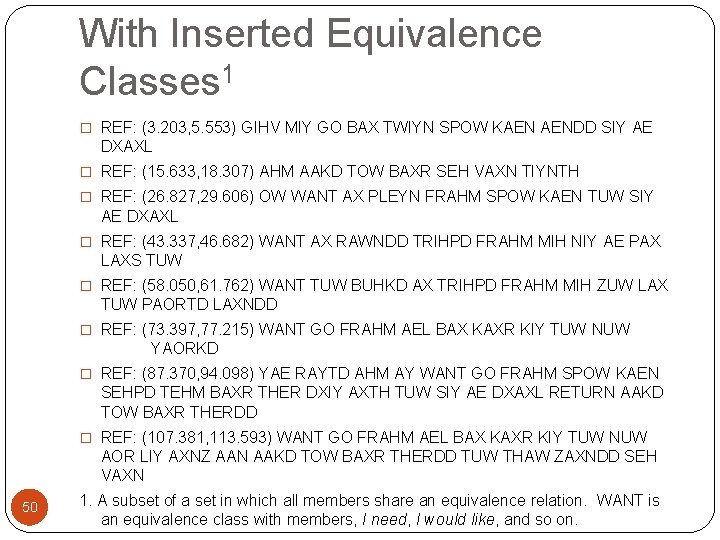

With Inserted Equivalence Classes¹

- REF: (3.203, 5.553) GIHV MIY GO BAX TWIYN SPOW KAEN AENDD SIY AE DXAXL
- REF: (15.633, 18.307) AHM AAKD TOW BAXR SEH VAXN TIYNTH
- REF: (26.827, 29.606) OW WANT AX PLEYN FRAHM SPOW KAEN TUW SIY AE DXAXL
- REF: (43.337, 46.682) WANT AX RAWNDD TRIHPD FRAHM MIH NIY AE PAX LAXS TUW
- REF: (58.050, 61.762) WANT TUW BUHKD AX TRIHPD FRAHM MIH ZUW LAX TUW PAORTD LAXNDD
- REF: (73.397, 77.215) WANT GO FRAHM AEL BAX KAXR KIY TUW NUW YAORKD
- REF: (87.370, 94.098) YAE RAYTD AHM AY WANT GO FRAHM SPOW KAEN SEHPD TEHM BAXR THER DXIY AXTH TUW SIY AE DXAXL RETURN AAKD TOW BAXR THERDD
- REF: (107.381, 113.593) WANT GO FRAHM AEL BAX KAXR KIY TUW NUW AOR LIY AXNZ AAN AAKD TOW BAXR THERDD TUW THAW ZAXNDD SEH VAXN

1. A subset of a set in which all members share an equivalence relation. WANT is an equivalence class with members I need, I would like, and so on.

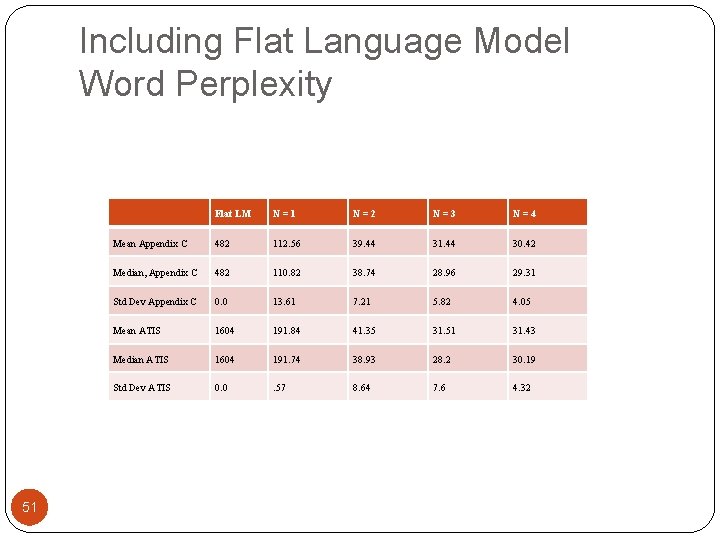

Including Flat Language Model: Word Perplexity

| | Flat LM | N=1 | N=2 | N=3 | N=4 |
|---|---|---|---|---|---|
| Mean, Appendix C | 482 | 112.56 | 39.44 | 31.44 | 30.42 |
| Median, Appendix C | 482 | 110.82 | 38.74 | 28.96 | 29.31 |
| Std Dev, Appendix C | 0.0 | 13.61 | 7.21 | 5.82 | 4.05 |
| Mean, ATIS | 1604 | 191.84 | 41.35 | 31.51 | 31.43 |
| Median, ATIS | 1604 | 191.74 | 38.93 | 28.2 | 30.19 |
| Std Dev, ATIS | 0.0 | .57 | 8.64 | 7.6 | 4.32 |

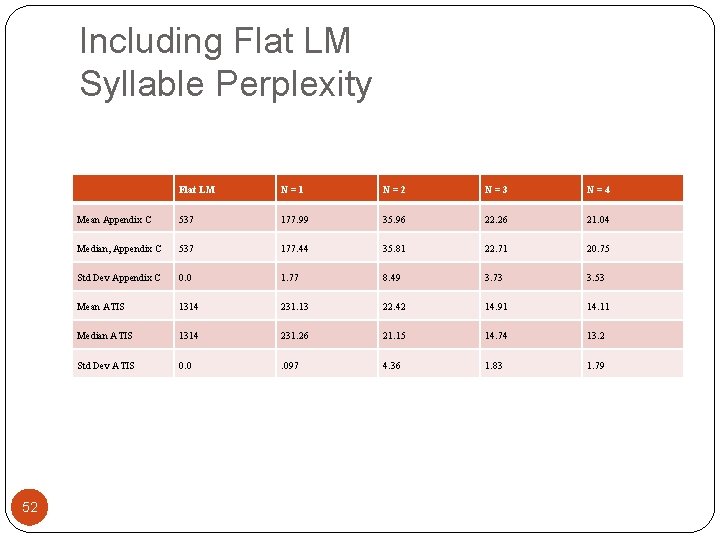

Including Flat LM: Syllable Perplexity

| | Flat LM | N=1 | N=2 | N=3 | N=4 |
|---|---|---|---|---|---|
| Mean, Appendix C | 537 | 177.99 | 35.96 | 22.26 | 21.04 |
| Median, Appendix C | 537 | 177.44 | 35.81 | 22.71 | 20.75 |
| Std Dev, Appendix C | 0.0 | 1.77 | 8.49 | 3.73 | 3.53 |
| Mean, ATIS | 1314 | 231.13 | 22.42 | 14.91 | 14.11 |
| Median, ATIS | 1314 | 231.26 | 21.15 | 14.74 | 13.2 |
| Std Dev, ATIS | 0.0 | .097 | 4.36 | 1.83 | 1.79 |

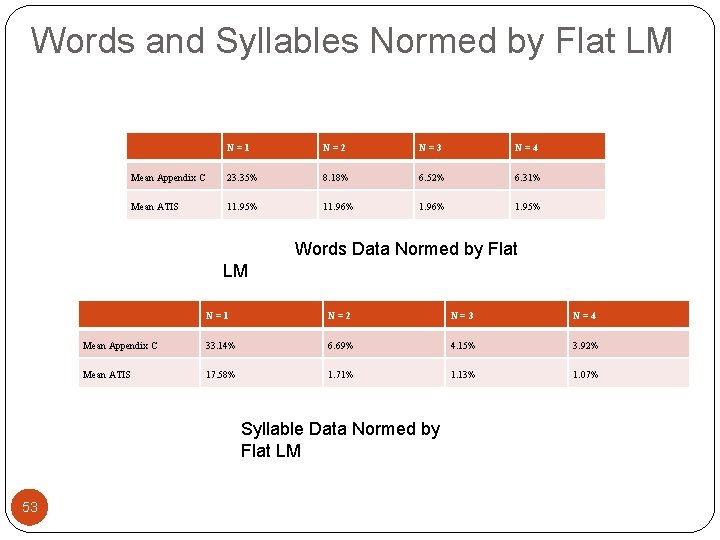

Words and Syllables Normed by Flat LM

Word data normed by flat LM:

| | N=1 | N=2 | N=3 | N=4 |
|---|---|---|---|---|
| Mean, Appendix C | 23.35% | 8.18% | 6.52% | 6.31% |
| Mean, ATIS | 11.95% | 11.96% | 1.95% | |

Syllable data normed by flat LM:

| | N=1 | N=2 | N=3 | N=4 |
|---|---|---|---|---|
| Mean, Appendix C | 33.14% | 6.69% | 4.15% | 3.92% |
| Mean, ATIS | 17.58% | 1.71% | 1.13% | 1.07% |
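The normed figures appear to be each mean perplexity divided by the flat-model perplexity, which equals the number of types. That reading is an assumption, but it reproduces the Appendix C word row from the word-perplexity table, as this short sketch shows.

```python
# Mean word perplexities for Appendix C (N=1..4) and the flat LM value,
# copied from the word-perplexity table.
flat_lm = 482
mean_word_perplexity = [112.56, 39.44, 31.44, 30.42]


def normed_by_flat(perplexities, flat):
    """Express each perplexity as a percentage of the flat-model perplexity."""
    return [round(100 * pp / flat, 2) for pp in perplexities]


normed = normed_by_flat(mean_word_perplexity, flat_lm)
print(normed)  # [23.35, 8.18, 6.52, 6.31]
```

Norming this way makes corpora with different vocabulary sizes comparable: it shows what fraction of the "no information" branching factor remains once an N-gram model is applied.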

Syllabifiers

- Syllabifier from National Institute of Standards and Technology (NIST, 2007)
- Based on Daniel Kahn’s 1976 dissertation from MIT (Kahn, 1976)
- Generative in nature and English-biased

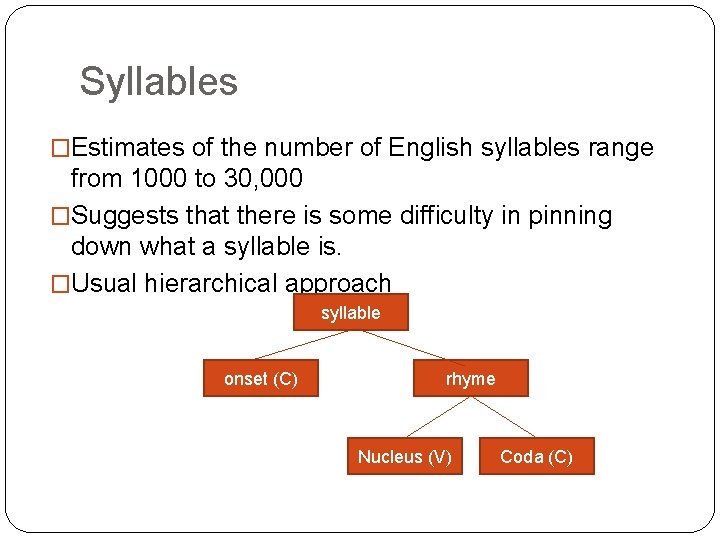

Syllables

- Estimates of the number of English syllables range from 1000 to 30,000
- Suggests that there is some difficulty in pinning down what a syllable is
- Usual hierarchical approach:
  - syllable
    - onset (C)
    - rhyme
      - nucleus (V)
      - coda (C)

Sonority

- Sonority rises to the nucleus and falls to the coda
- Speech sounds appear to form a sonority hierarchy (from highest to lowest): vowels, glides, liquids, nasals, obstruents
- Useful but not absolute: e.g., both depth and spit seem to violate the sonority hierarchy
