Survey of unconstrained optimization gradient-based algorithms

- Unconstrained minimization
- Steepest descent vs. conjugate gradients
- Newton and quasi-Newton methods
- Matlab fminunc

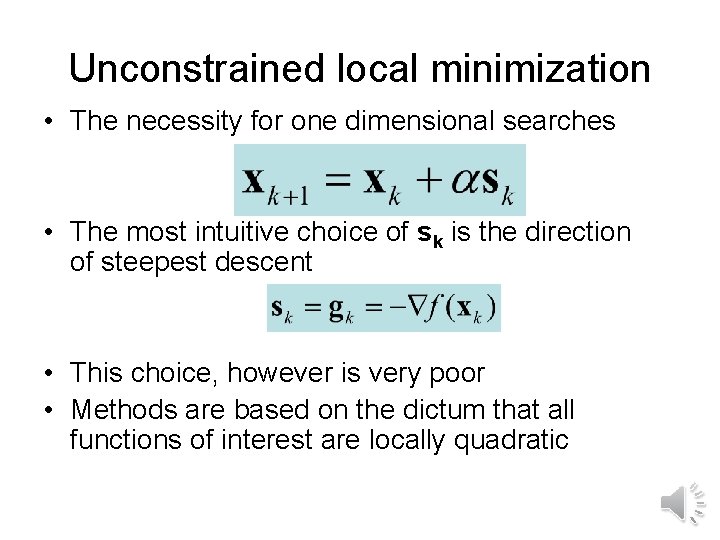

Unconstrained local minimization

- The necessity for one-dimensional searches
- The most intuitive choice of the search direction $s_k$ is the direction of steepest descent
- This choice, however, is very poor
- Methods are based on the dictum that all functions of interest are locally quadratic
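A minimal sketch of why steepest descent performs poorly, assuming a two-variable quadratic with condition number 50 (the test function, starting point, and tolerance are illustrative choices, not taken from the slides). Each iteration performs an exact one-dimensional search along the negative gradient.

```python
import math

def steepest_descent_quadratic(diag, x0, tol=1e-6, max_iter=10000):
    """Minimize f(x) = 0.5 * sum(d_i * x_i**2) by steepest descent.

    `diag` holds the diagonal of the (assumed diagonal) Hessian.
    Returns the final point and the number of iterations used.
    """
    x = list(x0)
    for k in range(max_iter):
        g = [d * xi for d, xi in zip(diag, x)]        # gradient of the quadratic
        gg = sum(gi * gi for gi in g)
        if math.sqrt(gg) < tol:
            return x, k
        # Exact one-dimensional search along s = -g.
        # For a quadratic the minimizing step is alpha = (g.g) / (g.A.g).
        alpha = gg / sum(d * gi * gi for d, gi in zip(diag, g))
        x = [xi - alpha * gi for xi, gi in zip(x, g)]
    return x, max_iter

# Ill-conditioned example: Hessian diag(1, 50), start at (50, 1).
x_min, iters = steepest_descent_quadratic([1.0, 50.0], [50.0, 1.0])
print("iterations:", iters, "final point:", x_min)
```

Even with a perfect line search, the iterates zigzag across the narrow valley, so a two-variable quadratic takes hundreds of iterations to reach this tolerance.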

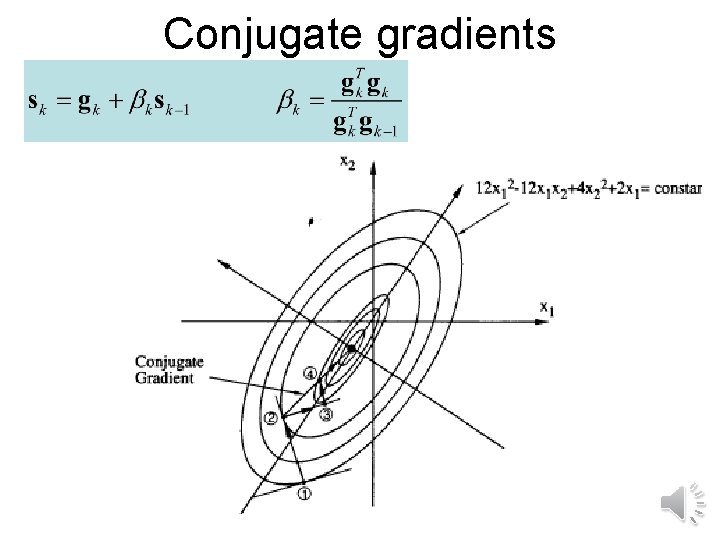

Conjugate gradients
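A minimal sketch of linear conjugate gradients, assuming a quadratic objective f(x) = 0.5 xᵀAx − bᵀx with symmetric positive definite A (the function name, test matrix, and starting point are illustrative assumptions). In exact arithmetic the method terminates in at most n steps for n variables.

```python
def conjugate_gradient(A, b, x0, tol=1e-10, max_iter=50):
    """Minimize f(x) = 0.5 x^T A x - b^T x for symmetric positive definite A."""
    dot = lambda u, v: sum(ui * vi for ui, vi in zip(u, v))
    matvec = lambda v: [dot(row, v) for row in A]

    x = list(x0)
    r = [bi - ai for bi, ai in zip(b, matvec(x))]   # residual = negative gradient
    s = list(r)                                     # first direction: steepest descent
    iters = 0
    while iters < max_iter and dot(r, r) ** 0.5 >= tol:
        As = matvec(s)
        alpha = dot(r, r) / dot(s, As)              # exact line search along s
        x = [xi + alpha * si for xi, si in zip(x, s)]
        r_new = [ri - alpha * asi for ri, asi in zip(r, As)]
        beta = dot(r_new, r_new) / dot(r, r)        # Fletcher-Reeves coefficient
        s = [rn + beta * si for rn, si in zip(r_new, s)]  # new A-conjugate direction
        r = r_new
        iters += 1
    return x, iters

# Ill-conditioned example: Hessian diag(1, 50), start at (50, 1).
x_cg, cg_iters = conjugate_gradient([[1.0, 0.0], [0.0, 50.0]], [0.0, 0.0], [50.0, 1.0])
print("iterations:", cg_iters, "final point:", x_cg)
```

The only change from steepest descent is the `beta` term, which mixes the previous direction into the new one so that successive searches do not undo each other.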

Newton and quasi-Newton methods

- Newton
- Quasi-Newton methods use successive evaluations of gradients to obtain an approximation to the Hessian or its inverse.
- Matlab’s fminunc uses a variant of Newton if a gradient routine is provided, otherwise BFGS quasi-Newton.
- The variant of Newton is called the trust-region approach and is based on using a quadratic approximation of the function inside a box.
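A minimal sketch of how a quasi-Newton method builds an inverse-Hessian approximation from gradient differences alone, using the standard BFGS update. It assumes a quadratic objective f(x) = 0.5 xᵀAx − bᵀx so that the line search can be done exactly. This is an illustration, not the implementation inside fminunc, and the function name and test data are assumptions.

```python
def bfgs_quadratic(A, b, x0, steps):
    """Run up to `steps` BFGS iterations on f(x) = 0.5 x^T A x - b^T x.

    Returns the final point and the approximation H to the inverse Hessian.
    """
    n = len(b)
    dot = lambda u, v: sum(ui * vi for ui, vi in zip(u, v))
    matvec = lambda M, v: [dot(row, v) for row in M]
    grad = lambda x: [axi - bi for axi, bi in zip(matvec(A, x), b)]

    x = list(x0)
    H = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]  # H0 = identity
    g = grad(x)
    for _ in range(steps):
        if dot(g, g) ** 0.5 < 1e-12:               # already at the minimum
            break
        p = [-v for v in matvec(H, g)]             # quasi-Newton search direction
        alpha = -dot(g, p) / dot(p, matvec(A, p))  # exact line search (quadratic only)
        s = [alpha * pi for pi in p]               # step taken
        x = [xi + si for xi, si in zip(x, s)]
        g_new = grad(x)
        y = [gn - go for gn, go in zip(g_new, g)]  # change in gradient
        rho = 1.0 / dot(y, s)
        Hy = matvec(H, y)
        c = rho * rho * dot(y, Hy) + rho
        # BFGS inverse update: H <- (I - rho s y^T) H (I - rho y s^T) + rho s s^T
        H = [[H[i][j] - rho * (s[i] * Hy[j] + Hy[i] * s[j]) + c * s[i] * s[j]
              for j in range(n)] for i in range(n)]
        g = g_new
    return x, H

A = [[4.0, 1.0], [1.0, 3.0]]
x_bfgs, H_bfgs = bfgs_quadratic(A, [1.0, 2.0], [2.0, 1.0], steps=2)
print("minimizer:", x_bfgs)
print("approximate inverse Hessian:", H_bfgs)
```

After two steps on this two-variable quadratic, `H_bfgs` agrees with the true inverse of `A`, although the Hessian itself was never evaluated. A Newton step on the same problem would reach the minimizer in a single iteration but needs the Hessian explicitly.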

Problems: Unconstrained algorithms

- Explain the differences and commonalities of steepest descent, conjugate gradients, Newton’s method, and quasi-Newton methods for unconstrained minimization. Solution on Notes page.
- Use fminunc to minimize the Rosenbrock Banana function and compare the trajectories of fminsearch and fminunc starting from (-1.2, 1), with and without the routine for calculating the gradient. Plot the three trajectories. Solution
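As a starting point for the second problem, the following Python sketch defines the Rosenbrock function with an analytic gradient and checks that gradient against central differences. It is not the Matlab solution referred to above. The function names and the step size `h` are assumptions chosen for illustration. When no gradient routine is supplied, an optimizer such as fminunc has to estimate the gradient numerically, which is what the finite-difference routine imitates.

```python
def rosenbrock(x, y):
    """Rosenbrock banana function; minimum value 0 at (1, 1)."""
    return 100.0 * (y - x * x) ** 2 + (1.0 - x) ** 2

def rosenbrock_grad(x, y):
    """Analytic gradient (df/dx, df/dy)."""
    gx = -400.0 * x * (y - x * x) - 2.0 * (1.0 - x)
    gy = 200.0 * (y - x * x)
    return gx, gy

def finite_difference_grad(f, x, y, h=1e-6):
    """Central-difference estimate of the gradient (no analytic routine needed)."""
    gx = (f(x + h, y) - f(x - h, y)) / (2.0 * h)
    gy = (f(x, y + h) - f(x, y - h)) / (2.0 * h)
    return gx, gy

x0, y0 = -1.2, 1.0                      # starting point from the problem statement
g_exact = rosenbrock_grad(x0, y0)
g_fd = finite_difference_grad(rosenbrock, x0, y0)
print("f(x0):", rosenbrock(x0, y0))
print("analytic gradient:", g_exact)
print("finite-difference gradient:", g_fd)
```

Agreement between the two gradients is a quick check that the analytic routine is correct before handing it to an optimizer.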

- Slides: 5