Support Vector Machines Lecturer Yishay Mansour Itay Kirshenbaum

Lecture Overview

In this lecture we present in detail one of the most theoretically well-motivated and practically most effective classification algorithms in modern machine learning: Support Vector Machines (SVMs).

Lecture Overview – Cont.
- We begin by building the intuition behind SVMs.
- We continue by defining the SVM as an optimization problem and discussing how to solve it efficiently.
- We conclude with an analysis of the error rate of SVMs using two techniques: Leave-One-Out and VC-dimension.

Introduction
- The Support Vector Machine is a supervised learning algorithm.
- It is used to learn a hyperplane that solves the binary classification problem.
- Binary classification is among the most extensively studied problems in machine learning.

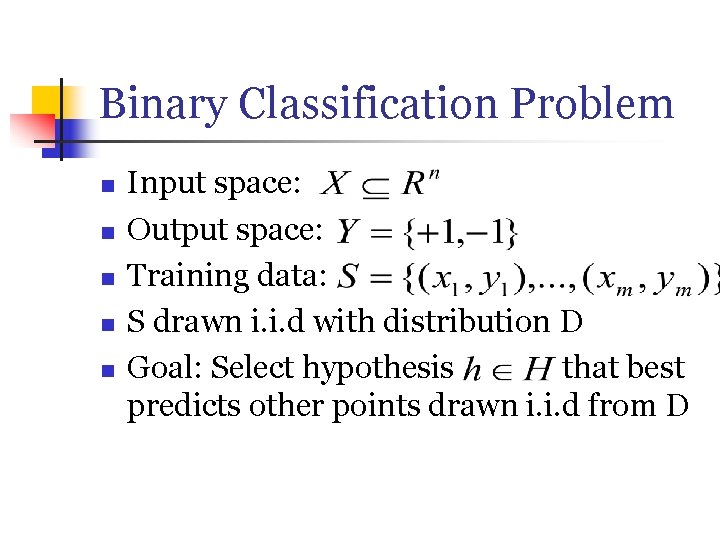

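Binary Classification Problem
- Input space: $X \subseteq \mathbb{R}^n$
- Output space: $Y = \{-1, +1\}$
- Training data: $S = \{(x_1, y_1), \ldots, (x_m, y_m)\}$, drawn i.i.d. from a distribution $D$
- Goal: select a hypothesis $h \in H$ that best predicts other points drawn i.i.d. from $D$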

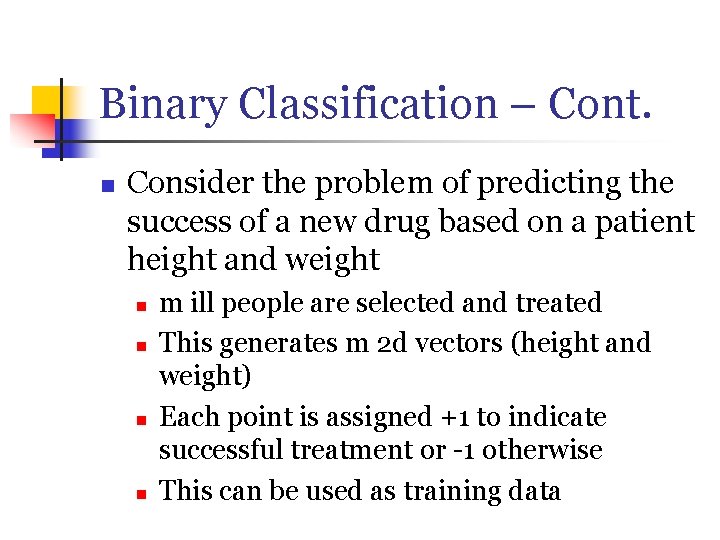

Binary Classification – Cont.
- Consider the problem of predicting the success of a new drug based on a patient’s height and weight:
  - $m$ ill people are selected and treated.
  - This generates $m$ two-dimensional vectors (height and weight).
  - Each point is assigned +1 to indicate successful treatment, or -1 otherwise.
- This can be used as training data.

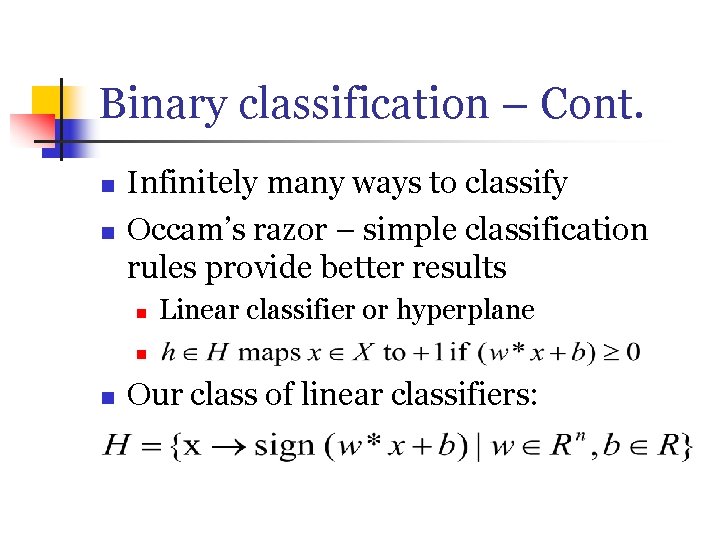

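Binary Classification – Cont.
- There are infinitely many ways to classify.
- Occam’s razor: simple classification rules provide better results.
- Linear classifier, or hyperplane: $h(x) = \mathrm{sign}(w \cdot x + b)$
- Our class of linear classifiers: $H = \{x \mapsto \mathrm{sign}(w \cdot x + b) : w \in \mathbb{R}^n, b \in \mathbb{R}\}$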

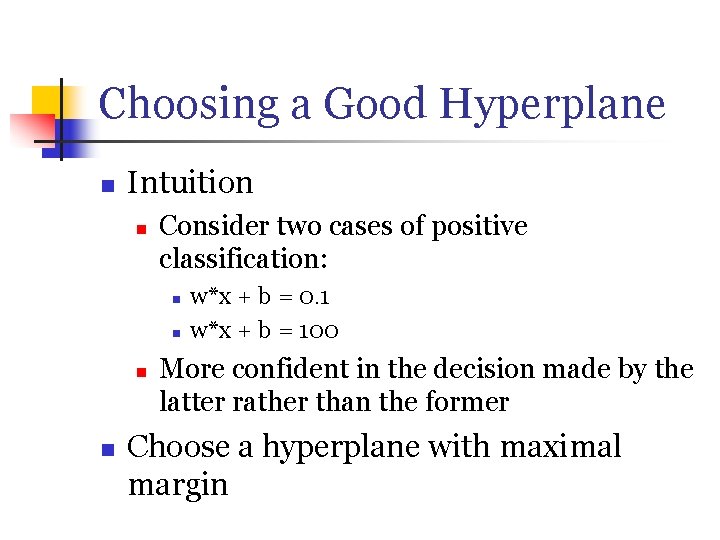

Choosing a Good Hyperplane
- Intuition: consider two cases of positive classification:
  - $w \cdot x + b = 0.1$
  - $w \cdot x + b = 100$
- We are more confident in the decision made by the latter than by the former.
- Choose a hyperplane with maximal margin.

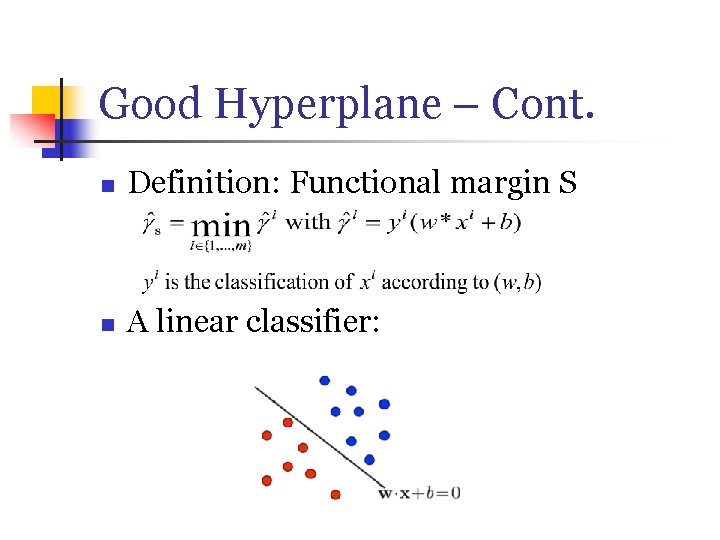

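Good Hyperplane – Cont.
- Definition (functional margin): for a linear classifier $(w, b)$, the functional margin of a sample $(x_i, y_i)$ is $\hat{\gamma}_i = y_i (w \cdot x_i + b)$.
- The functional margin with respect to the training set $S$ is $\hat{\gamma} = \min_{i = 1, \ldots, m} \hat{\gamma}_i$.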

Maximal Margin
- $(w, b)$ can be scaled to increase the functional margin:
  - $\mathrm{sign}(w \cdot x + b) = \mathrm{sign}(5w \cdot x + 5b)$ for all $x$.
  - The functional margin of $(5w, 5b)$ is 5 times greater than that of $(w, b)$.
- Cope by adding an additional constraint: $\|w\| = 1$.

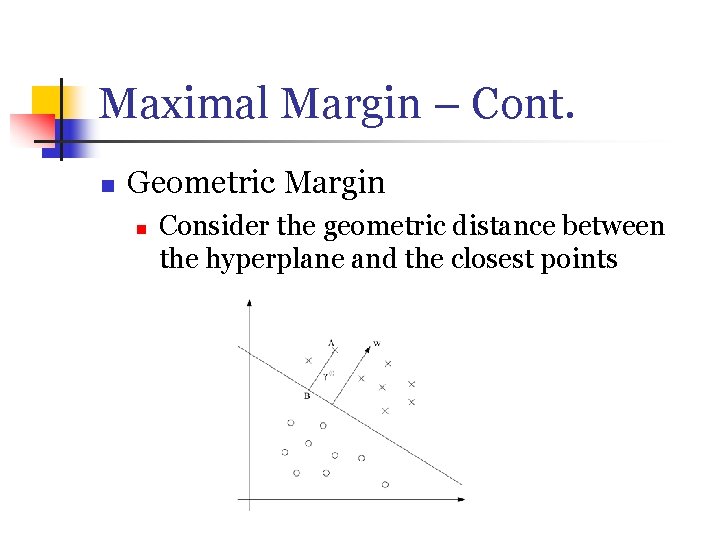

Maximal Margin – Cont.
- Geometric margin: consider the geometric distance between the hyperplane and the closest points.

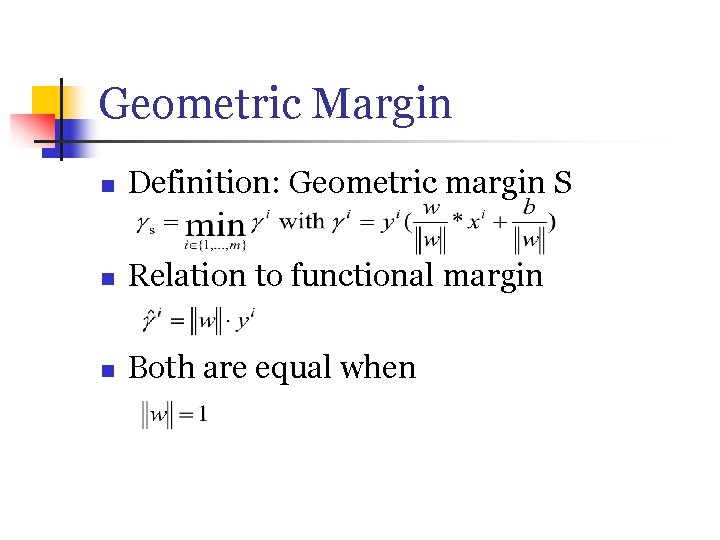

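Geometric Margin
- Definition (geometric margin): for a linear classifier $(w, b)$, the geometric margin of a sample $(x_i, y_i)$ is $\gamma_i = y_i \left( \frac{w}{\|w\|} \cdot x_i + \frac{b}{\|w\|} \right)$, and the geometric margin with respect to $S$ is $\gamma = \min_{i = 1, \ldots, m} \gamma_i$.
- Relation to the functional margin: $\gamma = \hat{\gamma} / \|w\|$, where $\hat{\gamma} = \min_i y_i (w \cdot x_i + b)$.
- Both are equal when $\|w\| = 1$.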
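
The two margin definitions and the effect of rescaling can be checked numerically. The sketch below is a minimal illustration in plain Python; the toy training set and the hyperplane `(w, b)` are hypothetical values chosen for the example, not data from the lecture.

```python
import math

def functional_margin(w, b, X, y):
    """Smallest value of y_i * (w . x_i + b) over the training set."""
    return min(yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
               for xi, yi in zip(X, y))

def geometric_margin(w, b, X, y):
    """Functional margin divided by the Euclidean norm of w."""
    norm = math.sqrt(sum(wj * wj for wj in w))
    return functional_margin(w, b, X, y) / norm

# Hypothetical toy training set: two positive and two negative points.
X = [(1.0, 1.0), (2.0, 3.0), (-1.0, -1.0), (-3.0, -2.0)]
y = [1, 1, -1, -1]
w, b = (1.0, 1.0), 0.0

# Scaling (w, b) by 5 multiplies the functional margin by 5 ...
w5, b5 = tuple(5 * wj for wj in w), 5 * b
fm, fm5 = functional_margin(w, b, X, y), functional_margin(w5, b5, X, y)

# ... but leaves the geometric margin unchanged.
gm, gm5 = geometric_margin(w, b, X, y), geometric_margin(w5, b5, X, y)
```

Here the functional margin grows from `fm` to `fm5` under scaling while `gm` and `gm5` agree, which is why the geometric margin is the quantity worth maximizing.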

The Algorithm
- We saw:
  - Two definitions of the margin.
  - The intuition behind seeking a margin-maximizing hyperplane.
- Goal: write an optimization program that finds such a hyperplane.
- We always look for $(w, b)$ maximizing the margin.

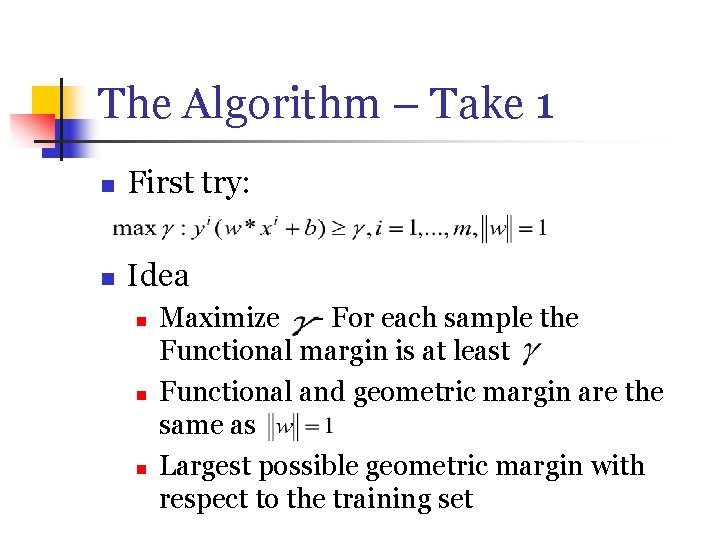

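The Algorithm – Take 1
- First try: $\max_{\gamma, w, b} \gamma$ subject to $y_i (w \cdot x_i + b) \ge \gamma$ for $i = 1, \ldots, m$, and $\|w\| = 1$.
- Idea:
  - Maximize $\gamma$.
  - For each sample, the functional margin is at least $\gamma$.
  - The functional and geometric margins are the same, as $\|w\| = 1$.
  - The result is the largest possible geometric margin with respect to the training set.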

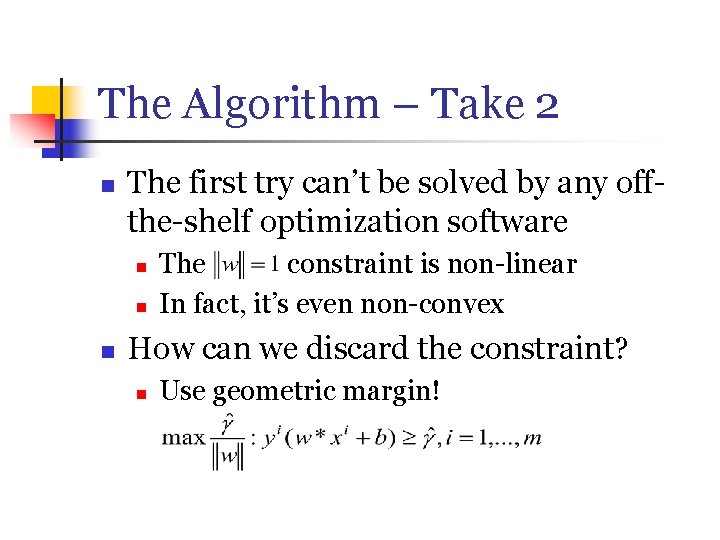

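The Algorithm – Take 2
- The first try can’t be solved by any off-the-shelf optimization software:
  - The constraint $\|w\| = 1$ is non-linear.
  - In fact, it’s even non-convex.
- How can we discard the constraint? Use the geometric margin: maximize $\hat{\gamma} / \|w\|$ subject to $y_i (w \cdot x_i + b) \ge \hat{\gamma}$ for $i = 1, \ldots, m$.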

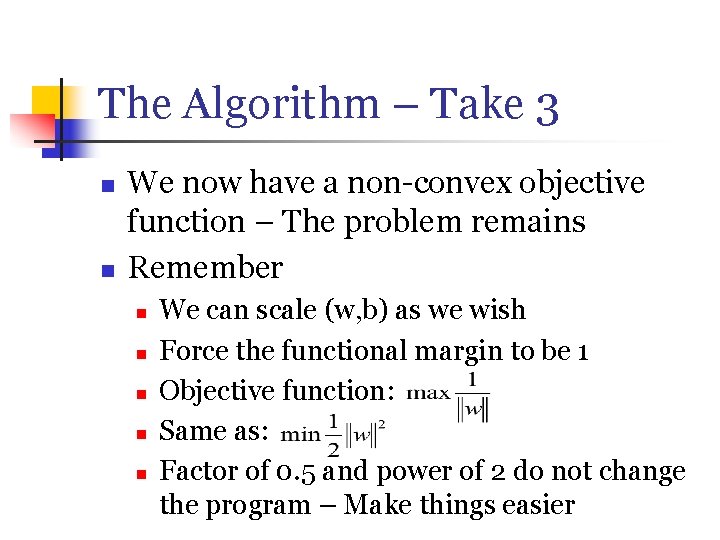

The Algorithm – Take 3
- We now have a non-convex objective function, so the problem remains.
- Remember:
  - We can scale $(w, b)$ as we wish.
  - Force the functional margin to be 1.
- Objective function: $\max_{w, b} \frac{1}{\|w\|}$
- Same as: $\min_{w, b} \frac{1}{2} \|w\|^2$
- The factor of 0.5 and the power of 2 do not change the solution of the program; they make things easier.
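
To see why forcing the functional margin to 1 turns the objective into $1 / \|w\|$, the following sketch rescales a separating hyperplane and compares the resulting geometric margin with $1 / \|w\|$. The function name `rescale_to_unit_functional_margin`, the toy data, and the starting hyperplane are assumptions made for illustration.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def rescale_to_unit_functional_margin(w, b, X, y):
    """Return (w, b) scaled so that min_i y_i (w . x_i + b) equals 1.

    Assumes the input (w, b) separates the data (positive functional margin).
    """
    margin = min(yi * (dot(w, xi) + b) for xi, yi in zip(X, y))
    if margin <= 0:
        raise ValueError("(w, b) does not separate the training set")
    return tuple(wj / margin for wj in w), b / margin

# Hypothetical toy training set and starting hyperplane.
X = [(1.0, 1.0), (2.0, 3.0), (-1.0, -1.0), (-3.0, -2.0)]
y = [1, 1, -1, -1]
w, b = rescale_to_unit_functional_margin((4.0, 4.0), 0.0, X, y)

# After rescaling, the geometric margin is exactly 1 / ||w||.
norm_w = math.sqrt(dot(w, w))
geometric = min(yi * (dot(w, xi) + b) for xi, yi in zip(X, y)) / norm_w
```

Rescaling does not move the hyperplane, so maximizing the geometric margin over rescaled classifiers amounts to minimizing $\|w\|$.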

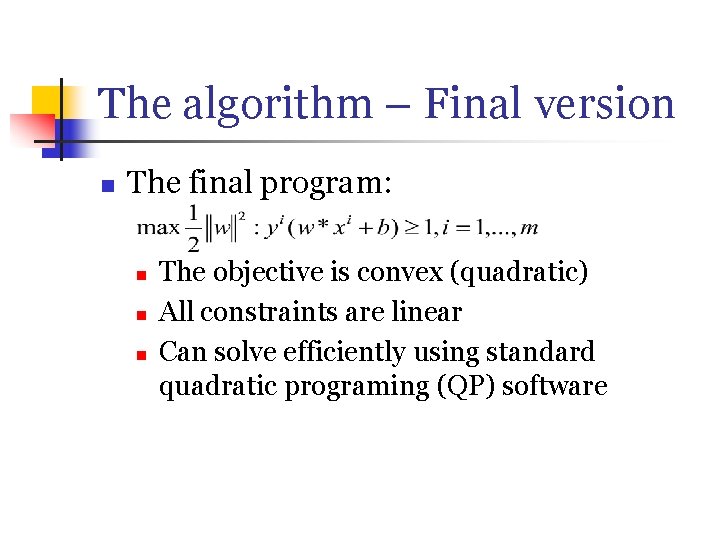

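The Algorithm – Final Version
- The final program: $\min_{w, b} \frac{1}{2} \|w\|^2$ subject to $y_i (w \cdot x_i + b) \ge 1$ for $i = 1, \ldots, m$.
  - The objective is convex (quadratic).
  - All constraints are linear.
  - It can be solved efficiently using standard quadratic programming (QP) software.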

Convex Optimization
- We want to solve the optimization problem more efficiently than with generic QP.
- Solution: use convex optimization techniques.

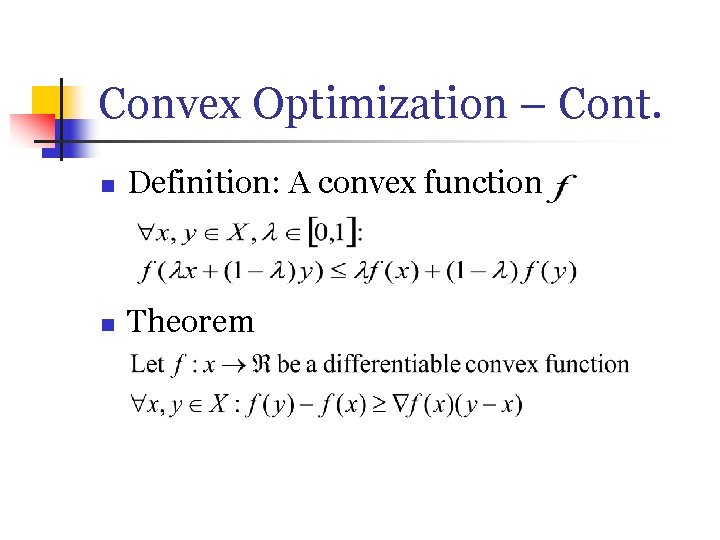

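Convex Optimization – Cont.
- Definition: a function $f$ is convex if for all $x, y$ and all $\lambda \in [0, 1]$, $f(\lambda x + (1 - \lambda) y) \le \lambda f(x) + (1 - \lambda) f(y)$.
- Theorem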

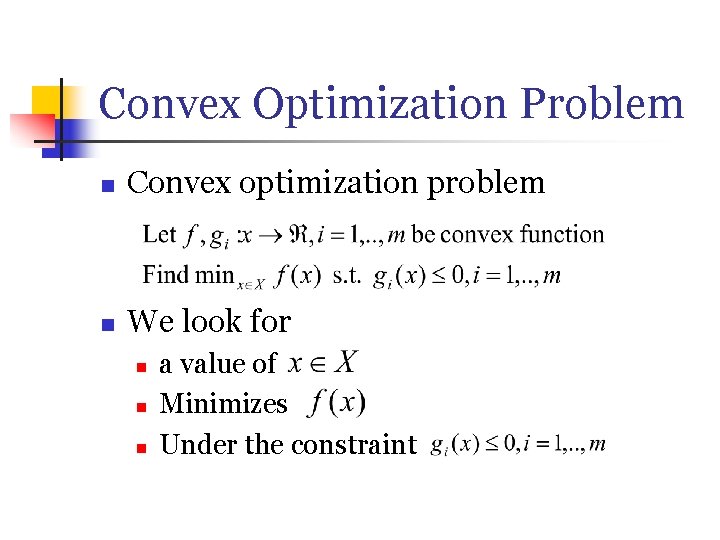

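Convex Optimization Problem
- Convex optimization problem: let $f$ and $g_i$, $i = 1, \ldots, m$, be convex functions.
- We look for a value of $x$ that:
  - Minimizes $f(x)$
  - Under the constraints $g_i(x) \le 0$, $i = 1, \ldots, m$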

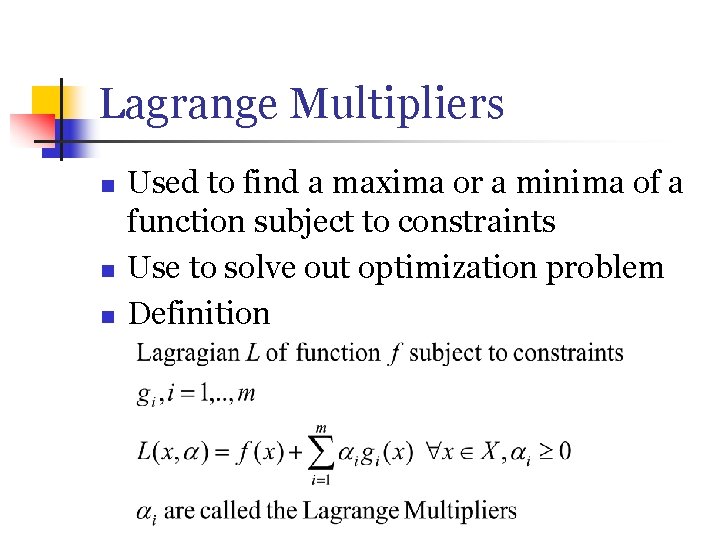

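Lagrange Multipliers
- Used to find a maximum or a minimum of a function subject to constraints.
- We use them to solve our optimization problem.
- Definition: for an objective $f$ and constraints $g_i(x) \le 0$, the Lagrangian is $L(x, \alpha) = f(x) + \sum_i \alpha_i g_i(x)$, with multipliers $\alpha_i \ge 0$.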

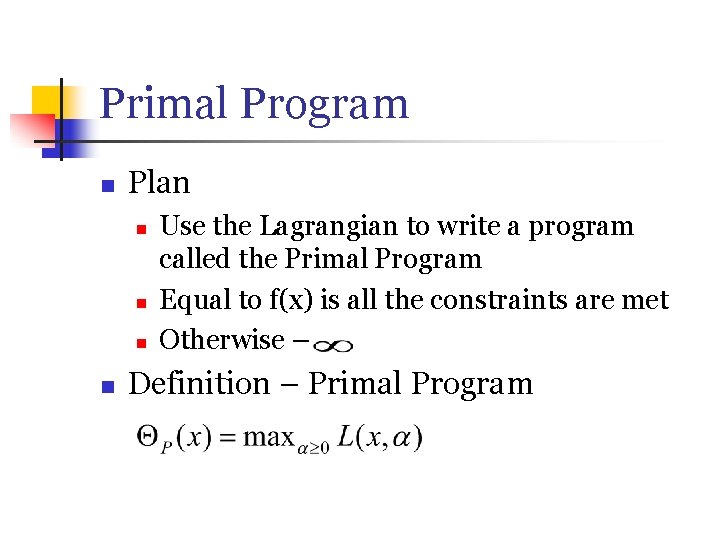

Primal Program
- Plan:
  - Use the Lagrangian $L(x, \alpha)$ to write a program called the primal program.
  - It is equal to $f(x)$ if all the constraints are met.
  - Otherwise it is $\infty$.
- Definition (primal program): $\theta_P(x) = \max_{\alpha \ge 0} L(x, \alpha)$

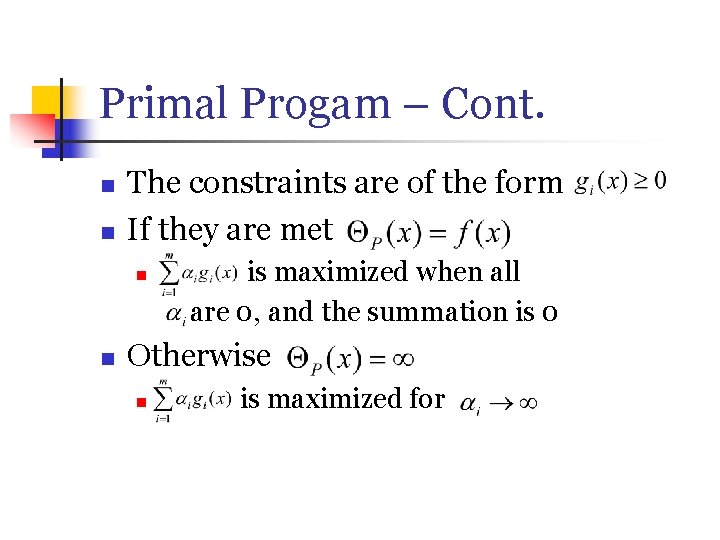

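Primal Program – Cont.
- The constraints are of the form $g_i(x) \le 0$.
- If they are met:
  - $L(x, \alpha) = f(x) + \sum_i \alpha_i g_i(x)$ is maximized over $\alpha \ge 0$ when all $\alpha_i$ are 0, and the summation is 0.
- Otherwise:
  - Some $g_i(x) > 0$, and $L(x, \alpha)$ is maximized for $\alpha_i \to \infty$.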

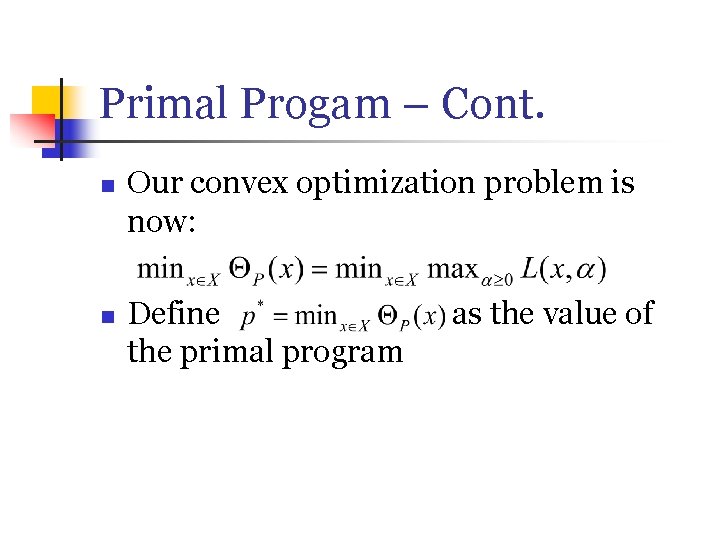

Primal Program – Cont.
- Our convex optimization problem is now: $\min_x \max_{\alpha \ge 0} L(x, \alpha)$
- Define $p^* = \min_x \max_{\alpha \ge 0} L(x, \alpha)$ as the value of the primal program.

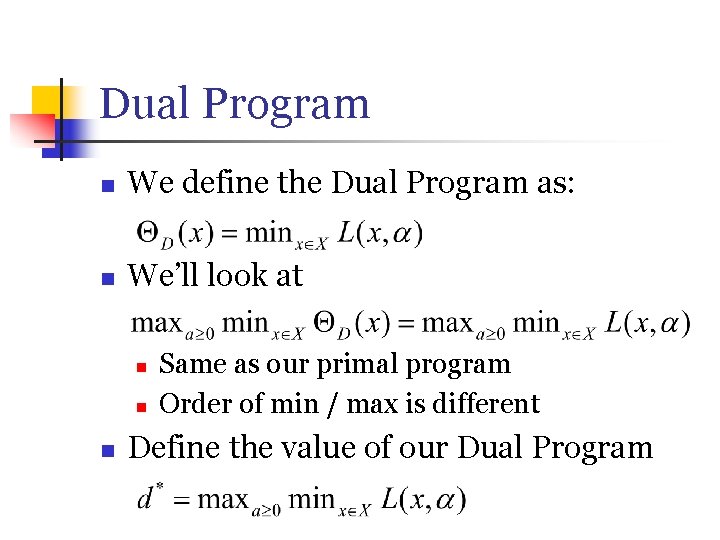

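Dual Program
- We define the dual program as: $\theta_D(\alpha) = \min_x L(x, \alpha)$
- We’ll look at $\max_{\alpha \ge 0} \theta_D(\alpha) = \max_{\alpha \ge 0} \min_x L(x, \alpha)$:
  - It is the same as our primal program.
  - Only the order of min / max is different.
- Define the value of our dual program: $d^* = \max_{\alpha \ge 0} \min_x L(x, \alpha)$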

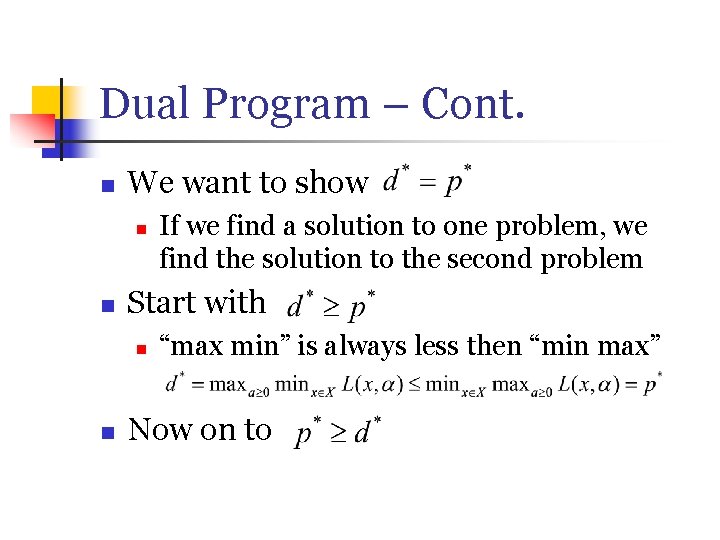

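Dual Program – Cont.
- We want to show $d^* = p^*$, where $d^*$ is the value of the dual program and $p^*$ is the value of the primal program:
  - If we find a solution to one problem, we find the solution to the second problem.
- Start with $d^* \le p^*$:
  - “max min” is never greater than “min max”.
- Now on to $p^* \le d^*$.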
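
The inequality “max min” $\le$ “min max” holds for any function of two arguments, because for every pair $(x, \alpha)$ we have $\min_{x'} L(x', \alpha) \le L(x, \alpha) \le \max_{\alpha'} L(x, \alpha')$. As a small sanity check, the sketch below evaluates both sides on a finite table; the table values are hypothetical and stand in for a Lagrangian evaluated on a grid.

```python
# Hypothetical table of values L[x][a]: rows are choices of x (the minimizer),
# columns are choices of alpha (the maximizer).
L = [
    [3, 1, 4],
    [2, 5, 0],
    [6, 2, 3],
]

rows = range(len(L))
cols = range(len(L[0]))

# "max min": for each column take the smallest entry, then the best column.
max_min = max(min(L[x][a] for x in rows) for a in cols)

# "min max": for each row take the largest entry, then the best row.
min_max = min(max(L[x][a] for a in cols) for x in rows)
```

On this table the two values differ, so the inequality can be strict in general; the remaining work in the lecture is to show that for our convex program the gap closes.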

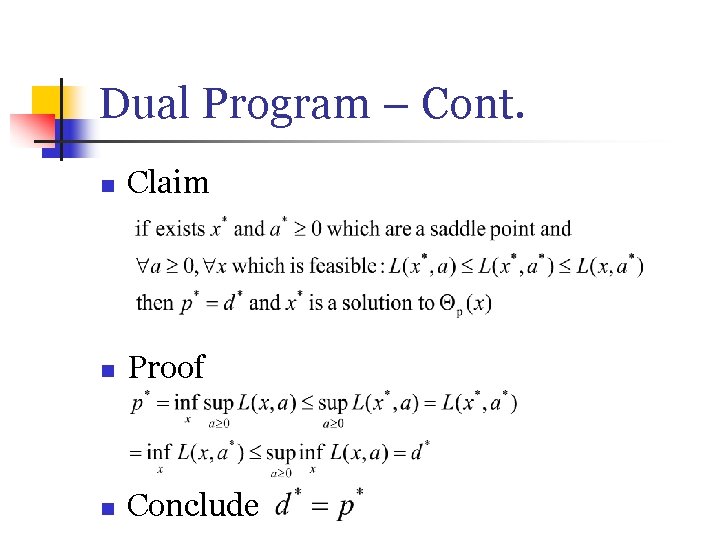

Dual Program – Cont.
- Claim
- Proof
- Conclude

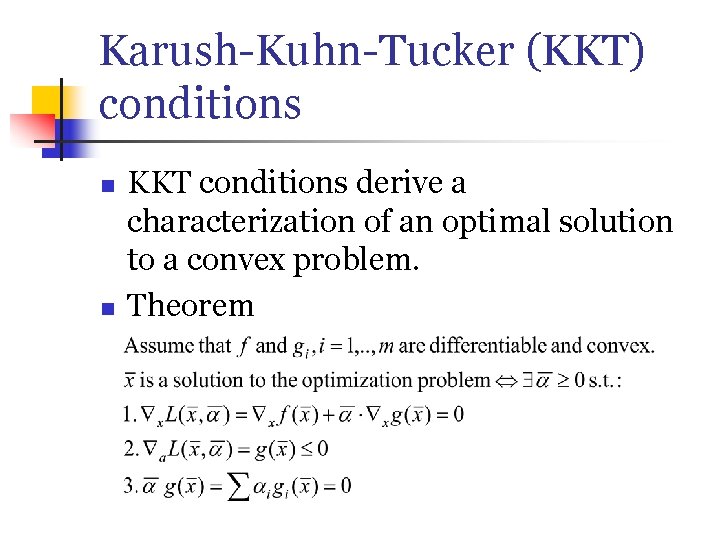

Karush-Kuhn-Tucker (KKT) Conditions
- The KKT conditions give a characterization of an optimal solution to a convex problem.
- Theorem

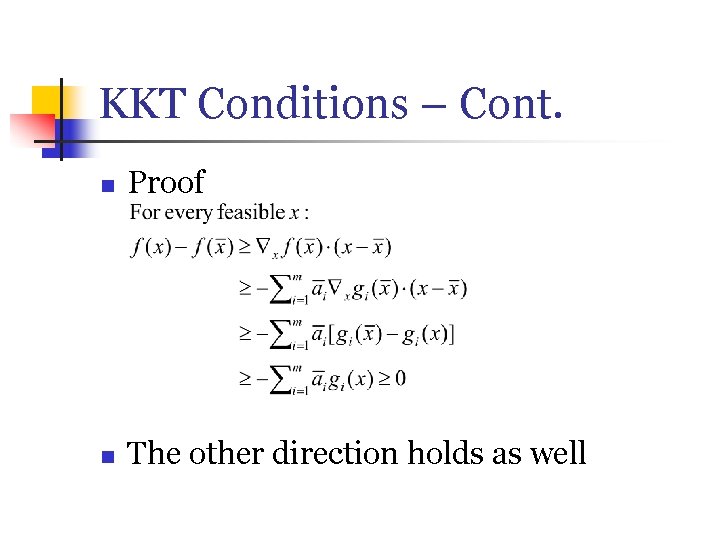

KKT Conditions – Cont.
- Proof
- The other direction holds as well.

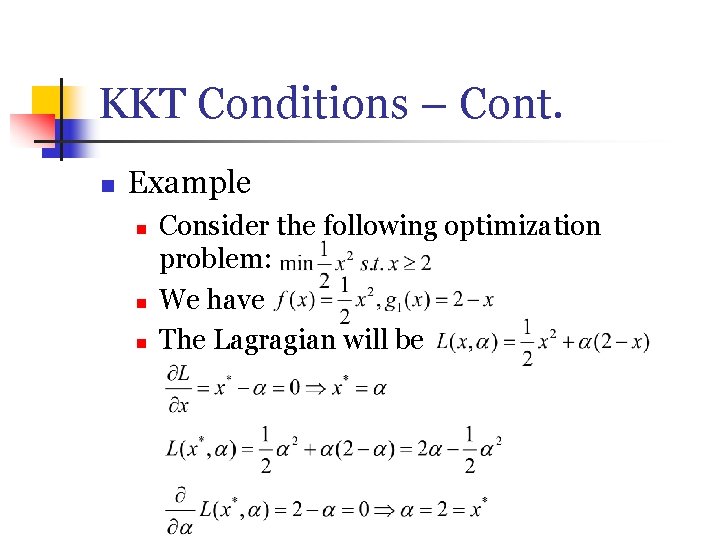

KKT Conditions – Cont.
- Example:
  - Consider the following optimization problem:
  - We have
  - The Lagrangian will be
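
As an illustration of the KKT conditions on a problem small enough to solve by hand, consider a hypothetical one-dimensional program (an assumption made for this sketch, not necessarily the example on the slide): minimize $f(x) = x^2$ subject to $g(x) = 1 - x \le 0$. Its Lagrangian is $L(x, \alpha) = x^2 + \alpha (1 - x)$. The code maximizes the dual over a grid of $\alpha$ values and checks stationarity, feasibility, and complementary slackness at the result.

```python
def f(x):
    return x * x          # convex objective

def g(x):
    return 1.0 - x        # constraint g(x) <= 0, i.e. x >= 1

def theta_dual(alpha):
    """min over x of L(x, alpha) = f(x) + alpha * g(x).

    Setting dL/dx = 2x - alpha = 0 gives the minimizer x = alpha / 2.
    """
    x = alpha / 2.0
    return f(x) + alpha * g(x)

# Maximize the dual over a grid of alpha >= 0 (step 0.01 on [0, 4]).
alphas = [k / 100.0 for k in range(401)]
alpha_star = max(alphas, key=theta_dual)
x_star = alpha_star / 2.0

p_star = f(x_star)               # primal value at the recovered x
d_star = theta_dual(alpha_star)  # dual value

stationarity = 2.0 * x_star - alpha_star   # dL/dx at (x*, alpha*)
slackness = alpha_star * g(x_star)         # alpha* g(x*)
```

Both `stationarity` and `slackness` vanish at the optimum and the primal and dual values coincide, which is the pattern the KKT theorem describes.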

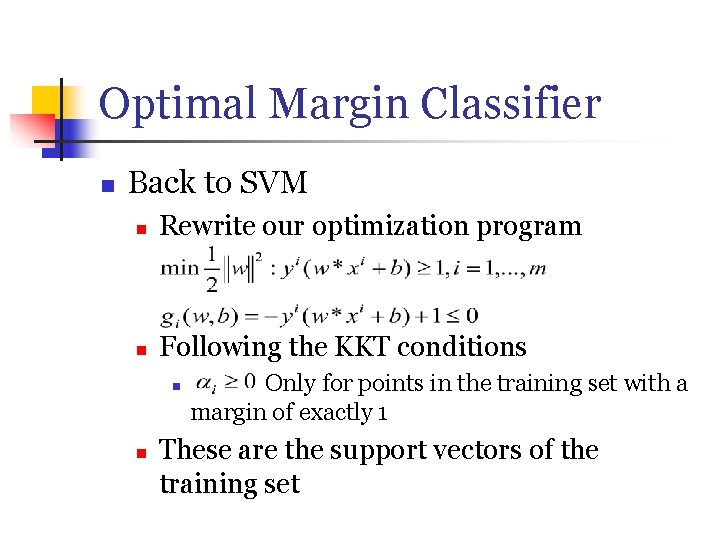

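Optimal Margin Classifier
- Back to SVM.
- Rewrite our optimization program: $\min_{w, b} \frac{1}{2} \|w\|^2$ subject to $g_i(w, b) = -y_i (w \cdot x_i + b) + 1 \le 0$ for $i = 1, \ldots, m$.
- Following the KKT conditions:
  - $\alpha_i > 0$ only for points in the training set with a margin of exactly 1.
  - These are the support vectors of the training set.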

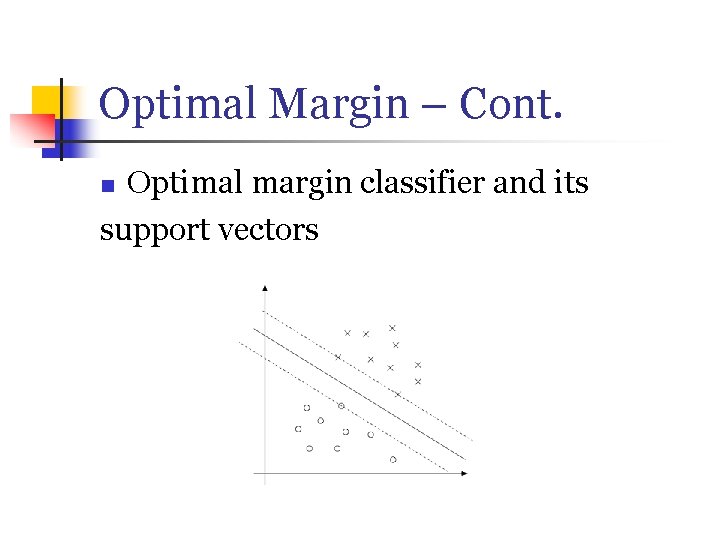

Optimal Margin – Cont.
- Optimal margin classifier and its support vectors

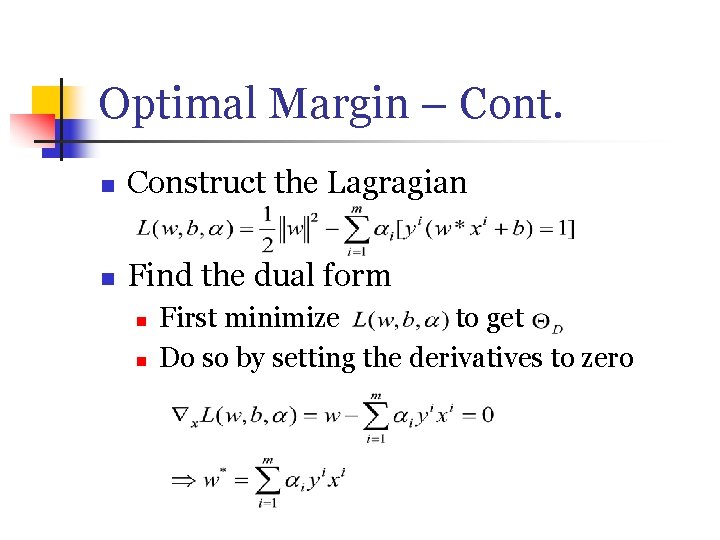

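Optimal Margin – Cont.
- Construct the Lagrangian: $L(w, b, \alpha) = \frac{1}{2} \|w\|^2 - \sum_{i=1}^m \alpha_i \left[ y_i (w \cdot x_i + b) - 1 \right]$
- Find the dual form:
  - First minimize $L(w, b, \alpha)$ over $w$ and $b$ to get $\theta_D(\alpha)$.
  - Do so by setting the derivatives to zero: $\nabla_w L = w - \sum_{i=1}^m \alpha_i y_i x_i = 0$, so $w^* = \sum_{i=1}^m \alpha_i y_i x_i$.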

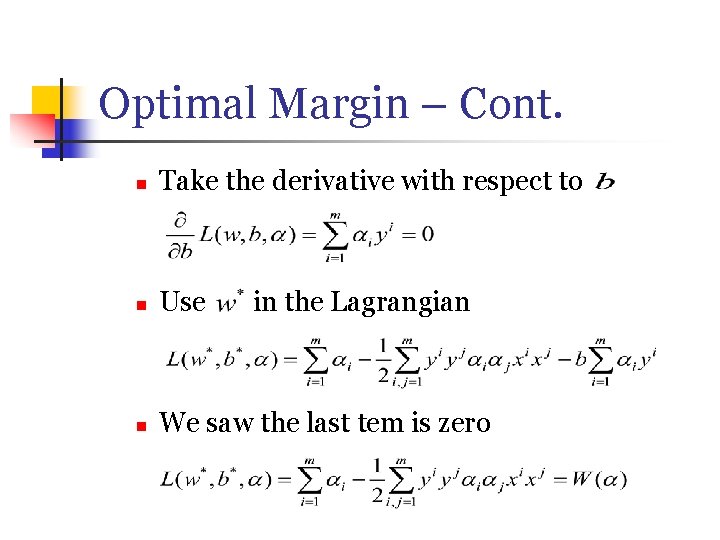

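Optimal Margin – Cont.
- Take the derivative with respect to $b$: $\frac{\partial L}{\partial b} = -\sum_{i=1}^m \alpha_i y_i = 0$
- Use $w^* = \sum_{i=1}^m \alpha_i y_i x_i$ in the Lagrangian: $L(w^*, b, \alpha) = \sum_{i=1}^m \alpha_i - \frac{1}{2} \sum_{i,j=1}^m y_i y_j \alpha_i \alpha_j (x_i \cdot x_j) - b \sum_{i=1}^m \alpha_i y_i$
- We saw the last term is zero.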

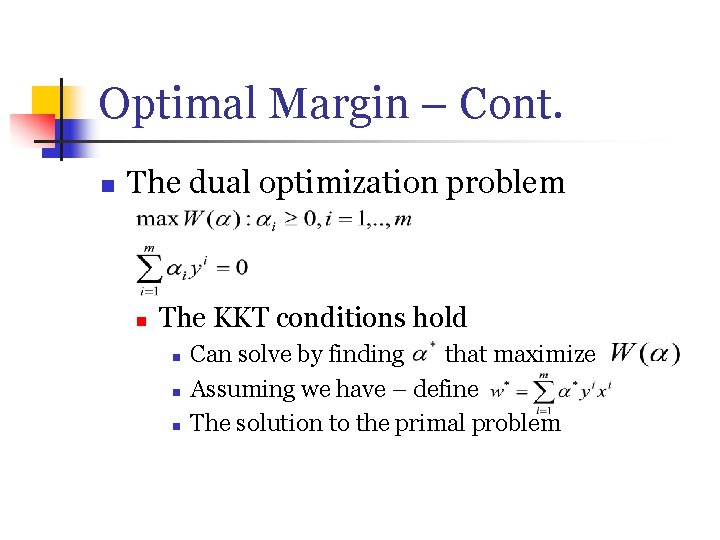

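Optimal Margin – Cont.
- The dual optimization problem: $\max_{\alpha} W(\alpha) = \sum_{i=1}^m \alpha_i - \frac{1}{2} \sum_{i,j=1}^m y_i y_j \alpha_i \alpha_j (x_i \cdot x_j)$ subject to $\alpha_i \ge 0$ for $i = 1, \ldots, m$, and $\sum_{i=1}^m \alpha_i y_i = 0$.
- The KKT conditions hold:
  - We can solve by finding $\alpha^*$ that maximizes $W(\alpha)$.
  - Assuming we have $\alpha^*$, define $w^* = \sum_{i=1}^m \alpha_i^* y_i x_i$.
  - This is the solution to the primal problem.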

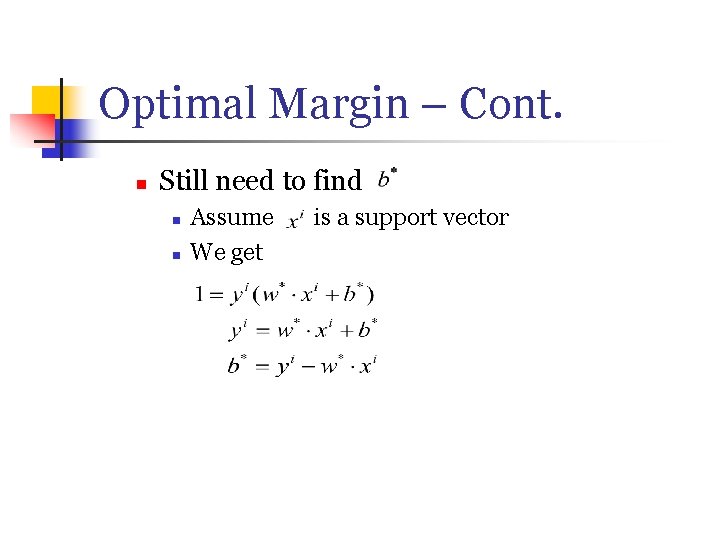

Optimal Margin – Cont.
- We still need to find $b^*$:
  - Assume $x_i$ is a support vector.
  - We get $y_i (w^* \cdot x_i + b^*) = 1$, so $b^* = y_i - w^* \cdot x_i$.
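
The whole pipeline (maximize the dual, recover $w^*$ from the multipliers, then read $b^*$ off a support vector) can be sketched in plain Python. The lecture does not specify a solver; the pairwise update used here is a simplified variant of sequential minimal optimization (SMO), swapped in because it needs no external library. The function names `solve_dual` and `recover_primal` and the toy data are hypothetical, and the sketch assumes linearly separable data.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def solve_dual(X, y, tol=1e-10, max_sweeps=1000):
    """Maximize the dual under alpha_i >= 0 and sum_i alpha_i y_i = 0.

    Each step changes one pair (alpha_i, alpha_j) so that the equality
    constraint keeps holding, and clips the result to stay non-negative.
    """
    m = len(X)
    K = [[dot(X[i], X[j]) for j in range(m)] for i in range(m)]
    alpha = [0.0] * m
    for _ in range(max_sweeps):
        largest_change = 0.0
        for i in range(m):
            for j in range(i + 1, m):
                eta = K[i][i] + K[j][j] - 2.0 * K[i][j]  # ||x_i - x_j||^2
                if eta <= 1e-12:
                    continue  # identical points: skip this pair
                # Errors computed without the bias; b cancels in E_i - E_j.
                E_i = sum(alpha[k] * y[k] * K[k][i] for k in range(m)) - y[i]
                E_j = sum(alpha[k] * y[k] * K[k][j] for k in range(m)) - y[j]
                new_j = alpha[j] + y[j] * (E_i - E_j) / eta
                if y[i] != y[j]:
                    new_j = max(new_j, 0.0, alpha[j] - alpha[i])
                else:
                    new_j = min(max(new_j, 0.0), alpha[i] + alpha[j])
                largest_change = max(largest_change, abs(new_j - alpha[j]))
                alpha[i] += y[i] * y[j] * (alpha[j] - new_j)
                alpha[j] = new_j
        if largest_change < tol:
            break
    return alpha

def recover_primal(alpha, X, y):
    """w* = sum_i alpha_i y_i x_i and b* = y_s - w* . x_s for a support vector."""
    dim = len(X[0])
    w = [sum(alpha[i] * y[i] * X[i][d] for i in range(len(X))) for d in range(dim)]
    s = max(range(len(X)), key=lambda i: alpha[i])  # a point with alpha_s > 0
    b = y[s] - dot(w, X[s])
    return w, b

# Hypothetical linearly separable toy set.
X = [(1.0, 1.0), (2.0, 3.0), (-1.0, -1.0), (-3.0, -2.0)]
y = [1, 1, -1, -1]
alpha = solve_dual(X, y)
w, b = recover_primal(alpha, X, y)
support = [i for i, a in enumerate(alpha) if a > 1e-8]
```

Only the two points closest to the separating hyperplane end up in `support`; every other multiplier stays at zero, as the KKT conditions predict.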

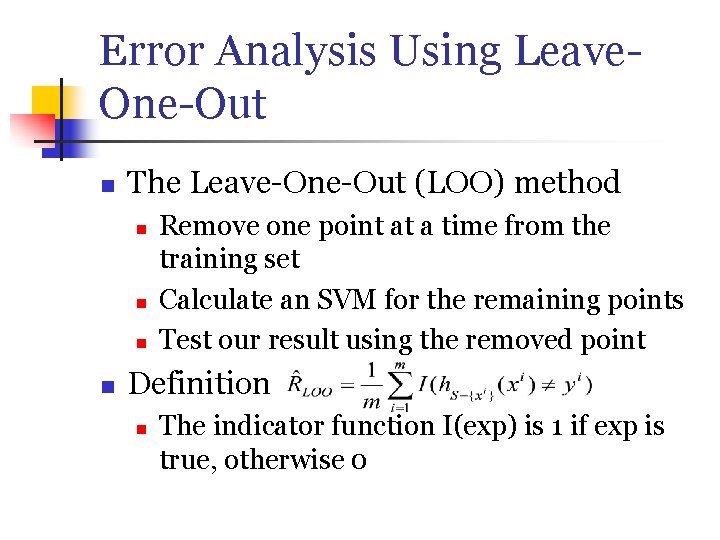

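Error Analysis Using Leave-One-Out
- The Leave-One-Out (LOO) method:
  - Remove one point at a time from the training set.
  - Calculate an SVM for the remaining points.
  - Test our result using the removed point.
- Definition: $\hat{R}_{LOO} = \frac{1}{m} \sum_{i=1}^m I\left( h_{S \setminus \{(x_i, y_i)\}}(x_i) \ne y_i \right)$, where $h_{S'}$ is the hypothesis learned from the set $S'$.
  - The indicator function $I(exp)$ is 1 if $exp$ is true, otherwise 0.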
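
The LOO procedure itself is independent of the learner, so it can be sketched as a function that takes any training routine. In the sketch below, `leave_one_out_error` follows the three steps above; `train_nearest_mean` is a stand-in linear learner used only to keep the example short (it is not an SVM), and the data, including one deliberately misplaced positive point, are hypothetical.

```python
def leave_one_out_error(train, X, y):
    """Fraction of points misclassified by the hypothesis trained without them.

    `train(X, y)` is any learner that returns a function h(x) -> label.
    """
    mistakes = 0
    for i in range(len(X)):
        X_rest = X[:i] + X[i + 1:]
        y_rest = y[:i] + y[i + 1:]
        h = train(X_rest, y_rest)
        mistakes += 1 if h(X[i]) != y[i] else 0   # indicator I(h(x_i) != y_i)
    return mistakes / len(X)

def train_nearest_mean(X, y):
    """Stand-in linear learner (NOT an SVM): classify by the closer class mean."""
    def mean(points):
        return [sum(c) / len(points) for c in zip(*points)]
    mu_pos = mean([x for x, label in zip(X, y) if label == 1])
    mu_neg = mean([x for x, label in zip(X, y) if label == -1])
    def h(x):
        d_pos = sum((a - b) ** 2 for a, b in zip(x, mu_pos))
        d_neg = sum((a - b) ** 2 for a, b in zip(x, mu_neg))
        return 1 if d_pos <= d_neg else -1
    return h

# Hypothetical data: the last positive point sits among the negatives.
X = [(1.0, 1.0), (2.0, 3.0), (3.0, 2.0), (-2.5, -2.5),
     (-1.0, -1.0), (-3.0, -2.0), (-2.0, -3.0)]
y = [1, 1, 1, 1, -1, -1, -1]
loo = leave_one_out_error(train_nearest_mean, X, y)
```

Passing an SVM training routine as `train` gives the estimate analyzed in the lecture; only the misplaced point is misclassified here when it is held out.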

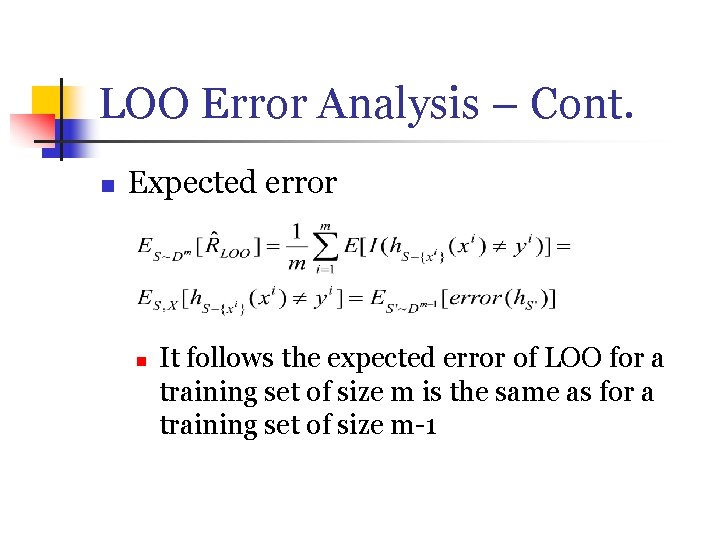

LOO Error Analysis – Cont.
- Expected error
- It follows that the expected LOO error for a training set of size $m$ is the same as the expected error of a hypothesis learned from a training set of size $m - 1$.

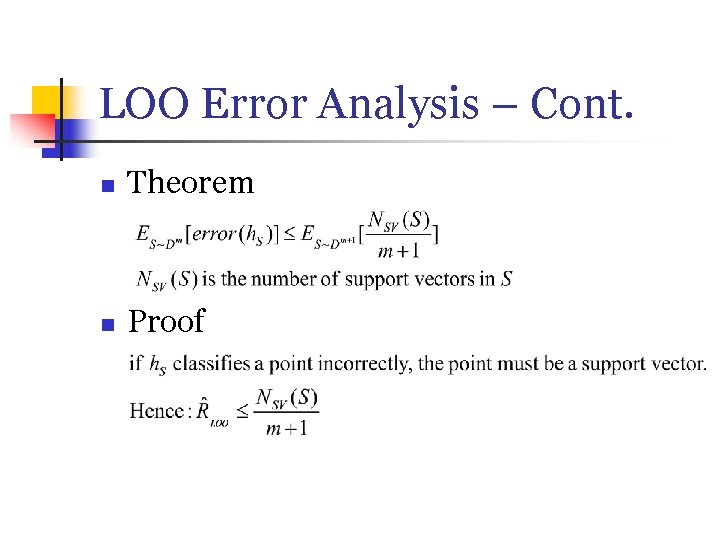

LOO Error Analysis – Cont.
- Theorem
- Proof

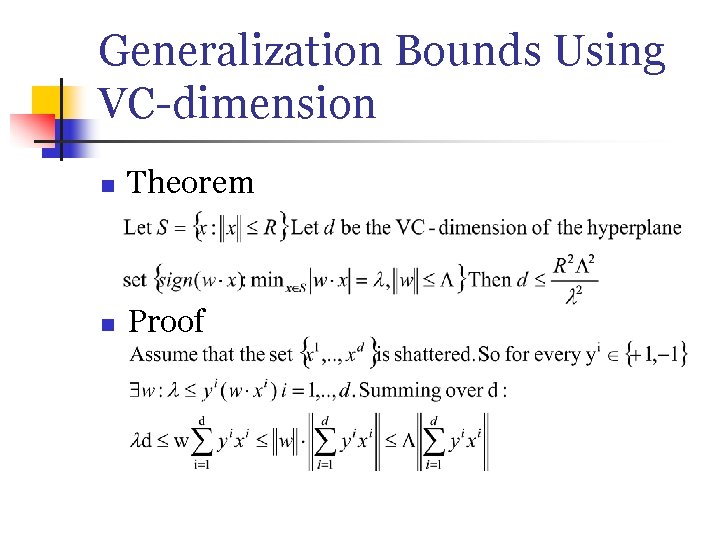

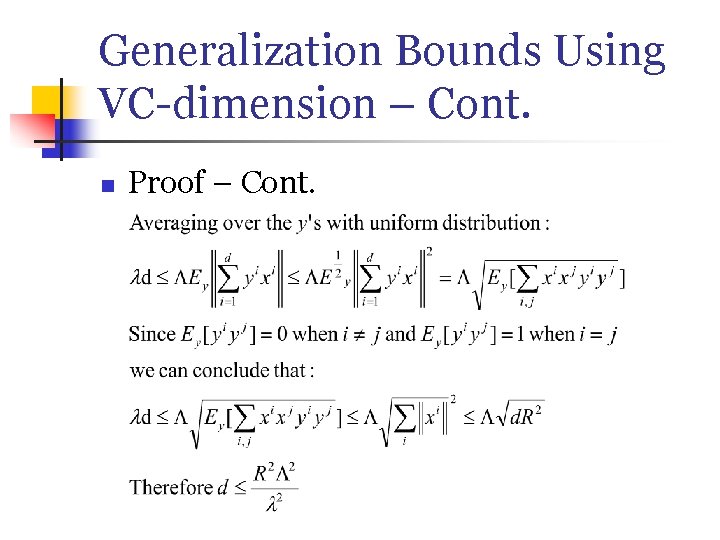

Generalization Bounds Using VC-dimension
- Theorem
- Proof

Generalization Bounds Using VC-dimension – Cont.
- Proof – Cont.
