Super Bowl Squares A Different Approach Ari J

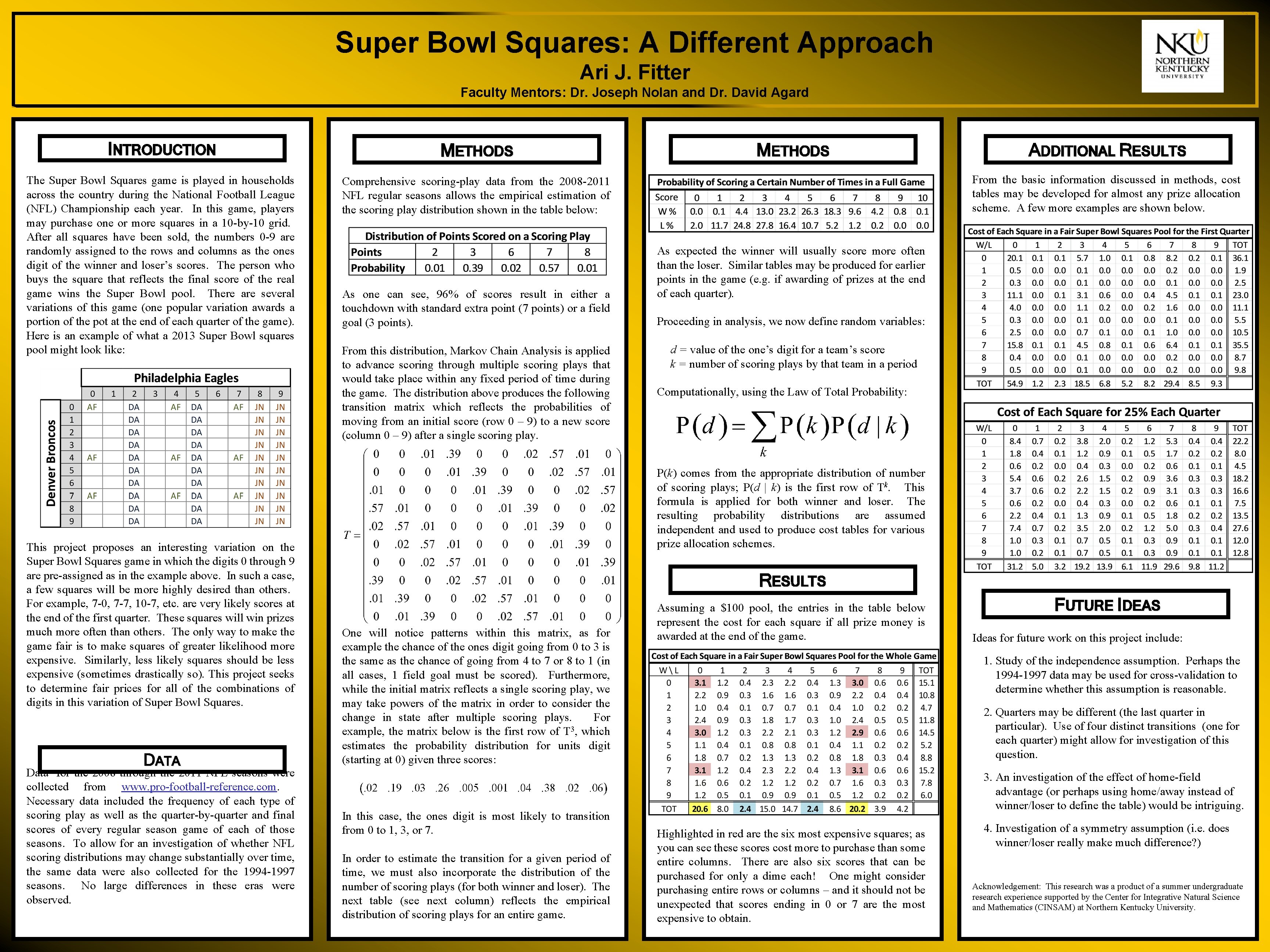

Super Bowl Squares: A Different Approach
Ari J. Fitter
Faculty Mentors: Dr. Joseph Nolan and Dr. David Agard

INTRODUCTION
The Super Bowl Squares game is played in households across the country each year during the National Football League (NFL) championship game. Players may purchase one or more squares in a 10-by-10 grid. After all squares have been sold, the digits 0-9 are randomly assigned to the rows and columns as the ones digits of the winner's and loser's scores. The person who holds the square matching the final score of the real game wins the Super Bowl pool. There are several variations of this game; one popular variation awards a portion of the pot at the end of each quarter. Here is an example of what a 2013 Super Bowl squares pool might look like:

This project proposes a variation on the Super Bowl Squares game in which the digits 0 through 9 are pre-assigned, as in the example above. In that case, some squares are far more desirable than others. For example, 7-0, 7-7, 10-7, etc. are very likely scores at the end of the first quarter, so these squares will win prizes much more often than others. The only way to make the game fair is to make squares of greater likelihood more expensive and, similarly, squares of lesser likelihood less expensive (sometimes drastically so). This project seeks to determine fair prices for all combinations of digits in this variation of Super Bowl Squares.

DATA
Data for the 2008 through 2011 NFL seasons were collected from www.pro-football-reference.com. Necessary data included the frequency of each type of scoring play as well as the quarter-by-quarter and final scores of every regular-season game in those seasons. Comprehensive scoring-play data from the 2008-2011 NFL regular seasons allow the empirical estimation of the scoring-play distribution shown in the table below:
To check whether NFL scoring distributions change substantially over time, the same data were also collected for the 1994-1997 seasons; no large differences between these eras were observed. As the table shows, 96% of scoring plays are either a touchdown with a standard extra point (7 points) or a field goal (3 points).

METHODS
From this distribution, Markov chain analysis is applied to advance the score through the multiple scoring plays that take place within any fixed period of the game. The distribution above produces the following transition matrix T, which gives the probabilities of moving from an initial ones digit (row 0-9) to a new ones digit (column 0-9) after a single scoring play.

Proceeding with the analysis, we define the random variables
d = the value of the ones digit of a team's score,
k = the number of scoring plays by that team in a period.
Computationally, using the Law of Total Probability,
$$P(d) = \sum_{k} P(k)\, P(d \mid k),$$
where P(k) comes from the appropriate distribution of the number of scoring plays and $P(d \mid k)$ is entry d of the first row of $T^k$. This formula is applied to both the winner and the loser. The resulting probability distributions are assumed to be independent and are used to produce cost tables for various prize-allocation schemes.

ADDITIONAL RESULTS
From the basic information discussed in Methods, cost tables may be developed for almost any prize-allocation scheme. A few more examples are shown below. As expected, the winner will usually score more often than the loser. Similar tables may be produced for earlier points in the game (e.g., if prizes are awarded at the end of each quarter).

RESULTS
One will notice patterns within this matrix: for example, the chance of the ones digit going from 0 to 3 is the same as the chance of going from 4 to 7 or from 8 to 1 (in all cases, exactly one field goal must be scored). Furthermore, while the matrix reflects a single scoring play, we may take powers of the matrix to describe the change in state after multiple scoring plays.
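The transition matrix and the Law of Total Probability step can be sketched in a few lines of Python. This is a minimal illustration, not the poster's code: the point values and probabilities in `PLAY_PROBS` and the scoring-play counts in `K_PROBS` are hypothetical placeholders (chosen only so that 7- and 3-point plays make up 96% of plays), because the empirical tables are not reproduced here. `transition_matrix` builds T, and `digit_distribution` weights the first row of each power $T^k$ by P(k).

```python
# Sketch of the ones-digit Markov chain for Super Bowl Squares.
# PLAY_PROBS and K_PROBS are HYPOTHETICAL illustrative values, not the
# empirical 2008-2011 estimates used in the poster.

PLAY_PROBS = {7: 0.50, 3: 0.46, 6: 0.02, 8: 0.01, 2: 0.01}        # points -> P(play)
K_PROBS = {0: 0.05, 1: 0.15, 2: 0.25, 3: 0.25, 4: 0.20, 5: 0.10}  # P(k scoring plays)


def transition_matrix(play_probs):
    """T[i][j] = P(ones digit moves from i to j after one scoring play)."""
    T = [[0.0] * 10 for _ in range(10)]
    for i in range(10):
        for points, p in play_probs.items():
            T[i][(i + points) % 10] += p
    return T


def mat_mult(A, B):
    """Multiply two 10x10 matrices stored as lists of lists."""
    return [[sum(A[i][m] * B[m][j] for m in range(10)) for j in range(10)]
            for i in range(10)]


def digit_distribution(play_probs, k_probs):
    """P(d) = sum_k P(k) * P(d | k), where P(d | k) is row 0 of T^k."""
    T = transition_matrix(play_probs)
    Tk = [[1.0 if i == j else 0.0 for j in range(10)] for i in range(10)]  # T^0
    dist = [0.0] * 10
    for k in range(max(k_probs) + 1):
        pk = k_probs.get(k, 0.0)
        for d in range(10):
            dist[d] += pk * Tk[0][d]   # every score starts at 0, so use row 0
        Tk = mat_mult(Tk, T)           # advance to T^(k+1)
    return dist


if __name__ == "__main__":
    T = transition_matrix(PLAY_PROBS)
    # One field goal moves 0->3, 4->7 and 8->1 with the same probability.
    print(T[0][3], T[4][7], T[8][1])
    for d, p in enumerate(digit_distribution(PLAY_PROBS, K_PROBS)):
        print(d, round(p, 4))
```

Building T from point values modulo 10 makes the diagonal pattern noted above automatic: every row is the previous row shifted by one column.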
For example, the row below is the first row of $T^3$, which estimates the probability distribution of the ones digit (starting at 0) after three scoring plays. In this case, the ones digit is most likely to move from 0 to 1, 3, or 7. To estimate the transition over a given period of time, we must also incorporate the distribution of the number of scoring plays (for both winner and loser). The next table reflects the empirical distribution of scoring plays for an entire game.

Assuming a $100 pool, the entries in the table below give the cost of each square if all prize money is awarded at the end of the game. Highlighted in red are the six most expensive squares; each of these costs more than some entire columns. There are also six scores that can be purchased for only a dime each! One might consider purchasing entire rows or columns, and it should come as no surprise that scores ending in 0 or 7 are the most expensive to obtain.

FUTURE IDEAS
Ideas for future work on this project include:
1. Study of the independence assumption. Perhaps the 1994-1997 data could be used for cross-validation to determine whether this assumption is reasonable.
2. Quarters may differ (the last quarter in particular). Using four distinct transition distributions (one for each quarter) might allow investigation of this question.
3. An investigation of the effect of home-field advantage (or perhaps using home/away instead of winner/loser to define the table) would be intriguing.
4. Investigation of a symmetry assumption (i.e., does winner/loser really make much difference?).

Acknowledgement: This research was a product of a summer undergraduate research experience supported by the Center for Integrative Natural Science and Mathematics (CINSAM) at Northern Kentucky University.
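Under the independence assumption, the fair price of a square is its expected payout: the prize times the product of the winner's and loser's digit probabilities, summed over every point at which a prize is paid. The sketch below assumes that form; the digit distributions are hypothetical placeholders, and `cost_table` is an illustrative name, not part of the original study.

```python
# Fair price of each square when prize money is split across one or more
# checkpoints (e.g., end of game only, or end of each quarter).
# The digit distributions below are HYPOTHETICAL placeholders.


def cost_table(schedule):
    """schedule: list of (prize, winner_dist, loser_dist) tuples.

    Assuming independence, P(square (w, l) wins a prize) is
    winner_dist[w] * loser_dist[l]; the fair price is the expected payout.
    Rows are the winner's ones digit, columns the loser's.
    """
    table = [[0.0] * 10 for _ in range(10)]
    for prize, winner_dist, loser_dist in schedule:
        for w in range(10):
            for l in range(10):
                table[w][l] += prize * winner_dist[w] * loser_dist[l]
    return table


if __name__ == "__main__":
    winner = [0.14, 0.08, 0.05, 0.09, 0.12, 0.06, 0.08, 0.20, 0.10, 0.08]  # hypothetical
    loser = [0.20, 0.07, 0.05, 0.12, 0.10, 0.04, 0.09, 0.18, 0.08, 0.07]   # hypothetical
    prices = cost_table([(100.0, winner, loser)])   # $100 pool, all paid at the final
    for w, row in enumerate(prices):
        print(w, " ".join(f"{p:5.2f}" for p in row))
    print("total:", round(sum(map(sum, prices)), 2))  # matches the $100 pool
```

A per-quarter scheme is just a longer schedule, e.g. four `(25.0, winner_q, loser_q)` entries. With uniform digit distributions every square costs exactly $1, which is why the traditional randomly assigned game needs no pricing at all.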

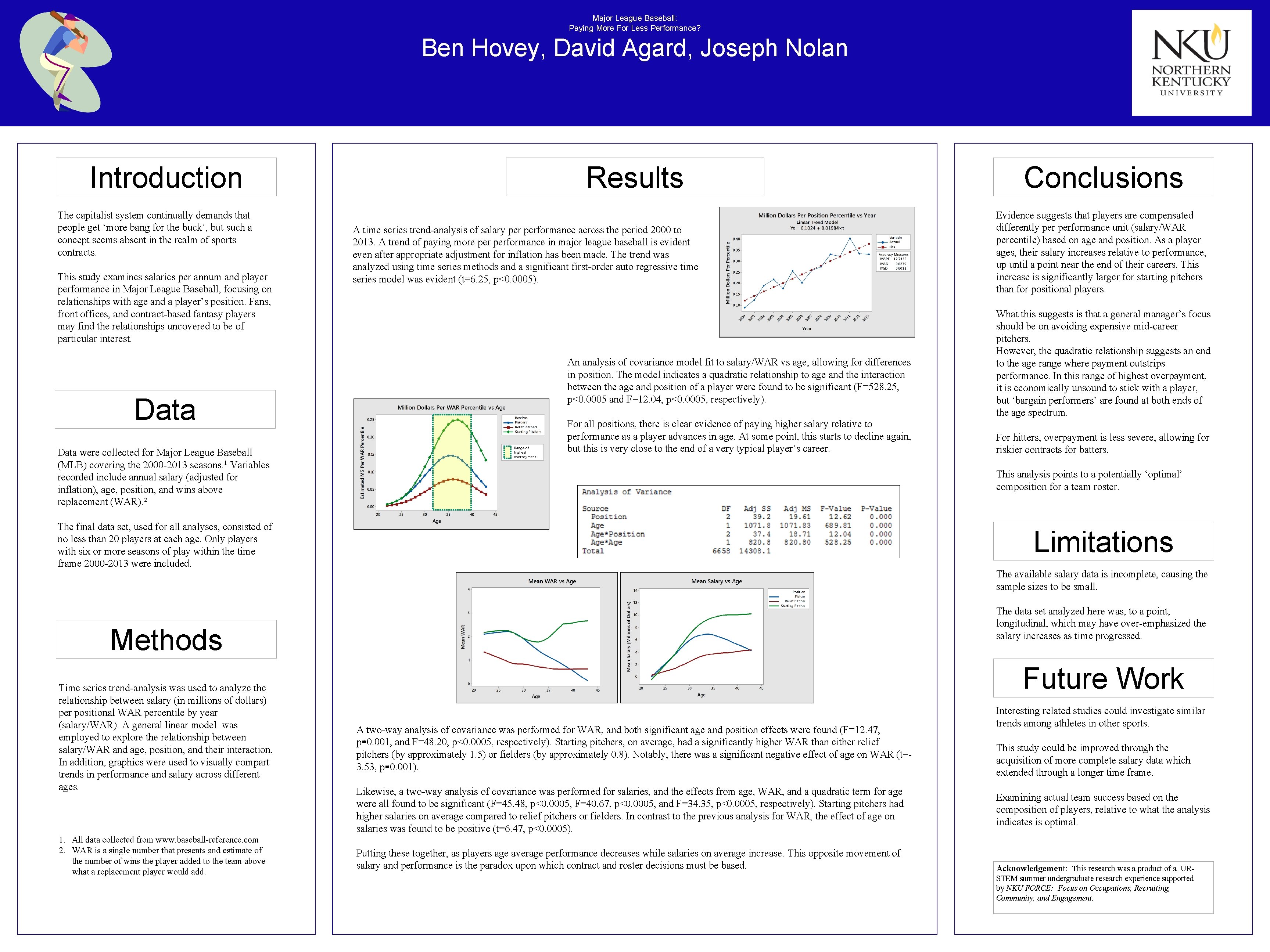

Major League Baseball: Paying More for Less Performance?
Ben Hovey, David Agard, Joseph Nolan

Introduction
The capitalist system continually demands that people get 'more bang for the buck', but that concept seems absent from the realm of sports contracts. This study examines annual salaries and player performance in Major League Baseball, focusing on their relationships with a player's age and position. Fans, front offices, and contract-based fantasy players may find the relationships uncovered here to be of particular interest.

Data were collected for Major League Baseball (MLB) covering the 2000-2013 seasons.¹ Variables recorded include annual salary (adjusted for inflation), age, position, and wins above replacement (WAR).²

Results
Time-series trend analysis of salary relative to performance across 2000-2013: a trend of paying more per unit of performance in Major League Baseball is evident even after appropriate adjustment for inflation. The trend was analyzed using time-series methods, and a significant first-order autoregressive model was found (t = 6.25, p < 0.0005).

Analysis of covariance model fit to salary/WAR versus age, allowing for differences by position: the model indicates a quadratic relationship with age, and both the quadratic age effect and the interaction between a player's age and position were significant (F = 528.25, p < 0.0005 and F = 12.04, p < 0.0005, respectively).

Range of highest overpayment
For all positions, there is clear evidence of paying higher salary relative to performance as a player advances in age. At some point this ratio starts to decline again, but only very close to the end of a typical player's career. This suggests that a general manager should focus on avoiding expensive mid-career pitchers. However, the quadratic relationship implies that the age range in which payment outstrips performance does come to an end.
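A first-order autoregressive model says each year's salary/WAR value depends linearly on the previous year's. The sketch below fits $y_t = c + \phi\, y_{t-1} + e_t$ by conditional least squares and reports the usual t statistic for $\phi$; it is one common estimator, not necessarily the one the authors' software used, and the yearly series in the usage block is hypothetical.

```python
import math


def fit_ar1(series):
    """Conditional least-squares fit of y_t = c + phi * y_(t-1) + e_t.

    Returns (c, phi, t_phi), where t_phi is the OLS t statistic for phi.
    """
    x, y = series[:-1], series[1:]          # lagged and current values
    n = len(y)
    if n < 3:
        raise ValueError("need at least 4 observations")
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    phi = sxy / sxx
    c = my - phi * mx
    sse = sum((yi - c - phi * xi) ** 2 for xi, yi in zip(x, y))
    se_phi = math.sqrt(sse / (n - 2) / sxx)
    t_phi = phi / se_phi if se_phi > 0 else math.copysign(math.inf, phi)
    return c, phi, t_phi


if __name__ == "__main__":
    # Hypothetical yearly salary/WAR values for 2000-2013 (NOT the poster's data).
    salary_per_war = [1.10, 1.18, 1.21, 1.30, 1.33, 1.41, 1.47, 1.50,
                      1.58, 1.61, 1.70, 1.72, 1.80, 1.86]
    c, phi, t = fit_ar1(salary_per_war)
    print(f"c = {c:.3f}, phi = {phi:.3f}, t = {t:.2f}")
```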
In this range of highest overpayment, it is economically unsound to stick with a player, but 'bargain performers' are found at both ends of the age spectrum. For hitters, overpayment is less severe, which allows riskier contracts for batters.

Limitations
The available salary data are incomplete, which keeps sample sizes small. The data set analyzed here was, to a point, longitudinal, which may have overemphasized salary increases as time progressed.

Methods
¹ All data were collected from www.baseball-reference.com.
² WAR is a single number that presents an estimate of the number of wins a player added to the team above what a replacement player would add.
The final data set, used for all analyses, consisted of no fewer than 20 players at each age. Only players with six or more seasons of play within 2000-2013 were included. Time-series trend analysis was used to analyze salary (in millions of dollars) per positional WAR percentile by year (salary/WAR). A general linear model was employed to explore the relationship between salary/WAR and age, position, and their interaction. In addition, graphics were used to visually compare trends in performance and salary across ages.

Conclusions
Evidence suggests that players are compensated differently per unit of performance (salary/WAR percentile) depending on age and position. As players age, their salary increases relative to performance, up until a point near the end of their careers. This increase is significantly larger for starting pitchers than for position players. This analysis points to a potentially 'optimal' composition for a team roster.

A two-way analysis of covariance was performed for WAR, and significant age and position effects were found (F = 12.47, p ≅ 0.001 and F = 48.20, p < 0.0005, respectively). Starting pitchers, on average, had a significantly higher WAR than either relief pitchers (by approximately 1.5) or fielders (by approximately 0.8). Notably, there was a significant negative effect of age on WAR (t = 3.53, p ≅ 0.001). Likewise, a two-way analysis of covariance was performed for salaries, and the effects of age, WAR, and a quadratic term for age were all significant (F = 45.48, p < 0.0005; F = 40.67, p < 0.0005; and F = 34.35, p < 0.0005, respectively). Starting pitchers had higher average salaries than relief pitchers or fielders. In contrast to the WAR analysis, the effect of age on salaries was positive (t = 6.47, p < 0.0005). Putting these together, as players age, average performance decreases while average salary increases. This opposite movement of salary and performance is the paradox on which contract and roster decisions must be based.

Future Work
Interesting related studies could investigate similar trends among athletes in other sports. This study could be improved by acquiring more complete salary data covering a longer time frame. Future work could also examine actual team success based on roster composition, relative to what this analysis indicates is optimal.

Acknowledgement: This research was a product of a URSTEM summer undergraduate research experience supported by NKU FORCE: Focus on Occupations, Recruiting, Community, and Engagement.
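The "range of highest overpayment" comes from a quadratic in age: if salary/WAR is $b_0 + b_1 u + b_2 u^2$ with $b_2 < 0$, it peaks where $u = -b_1/(2 b_2)$. The sketch below fits that quadratic for a single position group by ordinary least squares and returns the peak age. It deliberately omits the position main effect and age-by-position interaction in the poster's model, and the data in the test are synthetic.

```python
def fit_quadratic(ages, values):
    """Least-squares fit of value = b0 + b1*u + b2*u**2 with u = age - mean(age).

    Centering the ages keeps the normal equations well conditioned.
    Returns (b0, b1, b2, mean_age).
    """
    mean_age = sum(ages) / len(ages)
    rows = [[1.0, a - mean_age, (a - mean_age) ** 2] for a in ages]
    # Augmented normal equations [X'X | X'y]
    m = [[sum(r[i] * r[j] for r in rows) for j in range(3)]
         + [sum(r[i] * v for r, v in zip(rows, values))] for i in range(3)]
    # Gaussian elimination with partial pivoting
    for col in range(3):
        piv = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, 3):
            f = m[r][col] / m[col][col]
            for c in range(col, 4):
                m[r][c] -= f * m[col][c]
    # Back substitution
    b = [0.0, 0.0, 0.0]
    for i in (2, 1, 0):
        b[i] = (m[i][3] - sum(m[i][j] * b[j] for j in range(i + 1, 3))) / m[i][i]
    return b[0], b[1], b[2], mean_age


def peak_age(ages, values):
    """Age at which the fitted salary/WAR curve peaks (requires b2 < 0)."""
    _, b1, b2, mean_age = fit_quadratic(ages, values)
    if b2 >= 0:
        raise ValueError("fitted curve has no maximum")
    return mean_age - b1 / (2 * b2)
```

For example, `peak_age(ages, salary_per_war)` on one position group's averages gives the centre of its overpayment range; repeating it per position is a rough stand-in for the interaction term.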

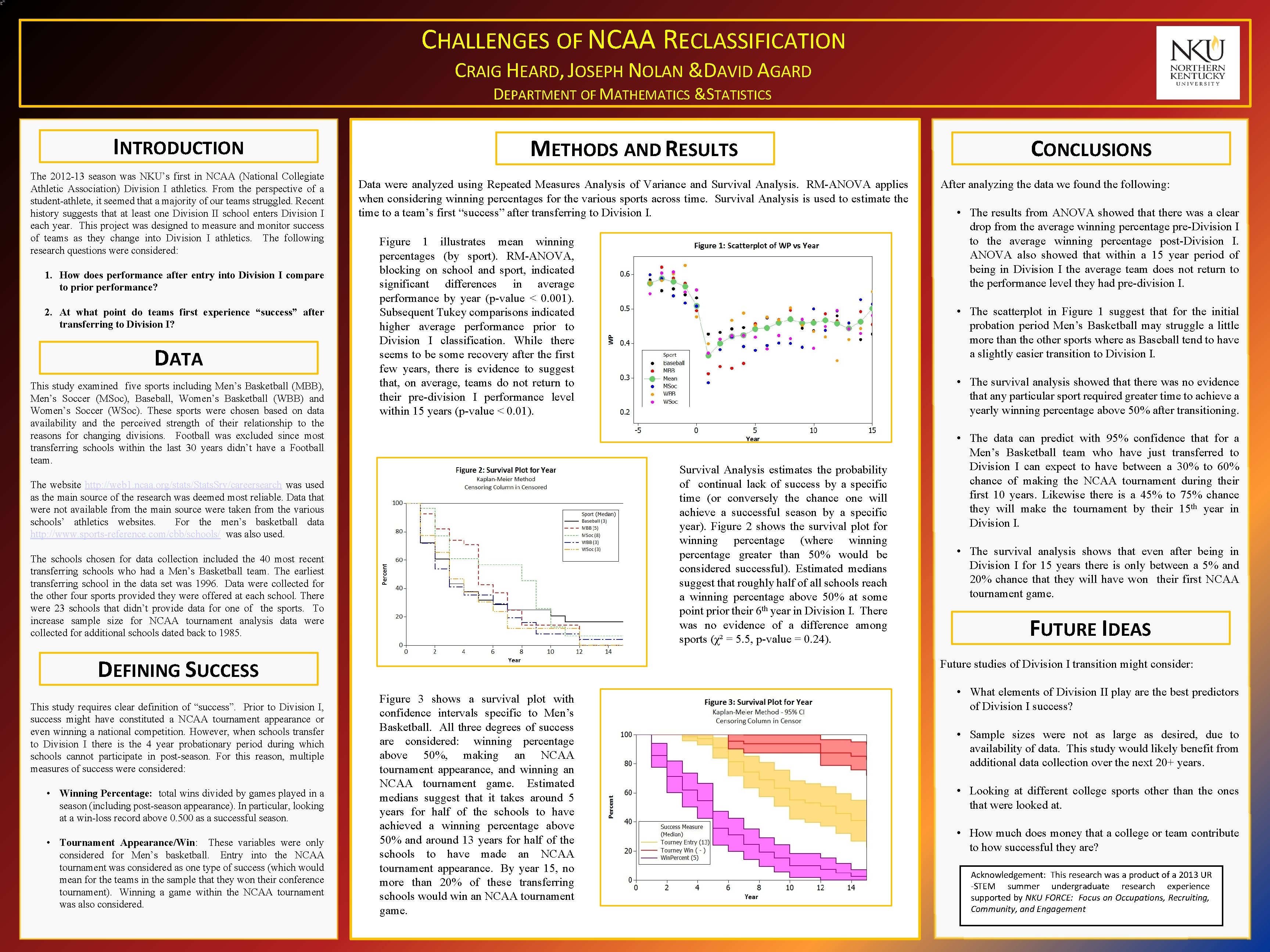

CHALLENGES OF NCAA RECLASSIFICATION
CRAIG HEARD, JOSEPH NOLAN & DAVID AGARD
DEPARTMENT OF MATHEMATICS & STATISTICS

INTRODUCTION
The 2012-13 season was NKU's first in NCAA (National Collegiate Athletic Association) Division I athletics. From the perspective of a student-athlete, it seemed that a majority of our teams struggled. Recent history suggests that at least one Division II school enters Division I each year. This project was designed to measure and monitor the success of teams as they move into Division I athletics. The following research questions were considered:
1. How does performance after entry into Division I compare to prior performance?
2. At what point do teams first experience "success" after transferring to Division I?

METHODS AND RESULTS
Data were analyzed using Repeated Measures Analysis of Variance (RM-ANOVA) and Survival Analysis. RM-ANOVA applies when considering winning percentages for the various sports across time. Survival Analysis is used to estimate the time to a team's first "success" after transferring to Division I.

Figure 1 illustrates mean winning percentages (by sport). RM-ANOVA, blocking on school and sport, indicated significant differences in average performance by year (p-value < 0.001). Subsequent Tukey comparisons indicated higher average performance before Division I classification. While there seems to be some recovery after the first few years, there is evidence that, on average, teams do not return to their pre-Division I performance level within 15 years (p-value < 0.01).

After analyzing the data, we found the following:
• The ANOVA results showed a clear drop from the average winning percentage before Division I to the average winning percentage after. ANOVA also showed that within 15 years of entering Division I, the average team does not return to its pre-Division I performance level.
• The scatterplot in Figure 1 suggests that during the initial probationary period Men's Basketball may struggle a little more than the other sports, whereas Baseball tends to have a slightly easier transition to Division I.
• The survival analysis showed no evidence that any particular sport required more time to achieve a yearly winning percentage above 50% after transitioning.

Survival Analysis estimates the probability of a continued lack of success through a specific time (or, conversely, the chance of achieving a successful season by a specific year). Figure 2 shows the survival plot for winning percentage (where a winning percentage greater than 50% is considered successful). Estimated medians suggest that roughly half of all schools reach a winning percentage above 50% at some point before their 6th year in Division I. There was no evidence of a difference among sports (χ² = 5.5, p-value = 0.24).

DATA
This study examined five sports: Men's Basketball (MBB), Men's Soccer (MSoc), Baseball, Women's Basketball (WBB), and Women's Soccer (WSoc). These sports were chosen based on data availability and the perceived strength of their relationship to the reasons for changing divisions. Football was excluded because most schools that transferred within the last 30 years did not have a football team. The website http://web1.ncaa.org/stats/StatsSrv/careersearch was used as the main source because it was deemed the most reliable. Data that were not available from the main source were taken from the schools' athletics websites. For the men's basketball data, http://www.sports-reference.com/cbb/schools/ was also used. The schools chosen for data collection were the 40 most recent transferring schools that had a Men's Basketball team; the earliest transfer in the data set took place in 1996. Data were collected for the other four sports provided they were offered at each school.
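A minimal Kaplan-Meier sketch of the survival analysis above, assuming each school is summarized by the Division I year of its first winning season (event = 1) or, if it has not had one yet, its last observed year (event = 0, censored). The school data in the test are hypothetical, and the comparison among sports (χ² = 5.5) would also need a log-rank test, which this sketch does not implement.

```python
def kaplan_meier(times, events):
    """Kaplan-Meier survival curve for time to first successful season.

    times:  Division I year of first success, or last year observed
    events: 1 if success was observed in that year, 0 if censored
    Returns a list of (year, S(year)) at each year with an observed success.
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    surv = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        tied = [e for tt, e in data[i:] if tt == t]   # all schools with this time
        successes = sum(tied)
        if successes:
            surv *= 1 - successes / at_risk
            curve.append((t, surv))
        at_risk -= len(tied)   # successes and censored schools leave the risk set
        i += len(tied)
    return curve


def median_time(curve):
    """First year at which the estimated survival drops to 0.5 or below."""
    for t, s in curve:
        if s <= 0.5:
            return t
    return None   # median not reached within the follow-up period
```

The estimated median from `median_time` corresponds to the "roughly half of all schools" statements on this poster.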
There were 23 schools that did not provide data for one of the sports. To increase the sample size for the NCAA tournament analysis, data were collected for additional schools dating back to 1985.

DEFINING SUCCESS
This study requires a clear definition of "success". Before Division I, success might have meant an NCAA tournament appearance or even winning a national competition. However, when schools transfer to Division I there is a 4-year probationary period during which they cannot participate in the postseason. For this reason, multiple measures of success were considered:
• Winning Percentage: total wins divided by games played in a season (including postseason games). In particular, a win-loss record above 0.500 is considered a successful season.
• Tournament Appearance/Win: These variables were considered only for Men's Basketball. Entry into the NCAA tournament was considered one type of success (for the teams in the sample, this means they won their conference tournament). Winning a game within the NCAA tournament was also considered.

• With 95% confidence, a Men's Basketball team that has just transferred to Division I has between a 30% and 60% chance of making the NCAA tournament during its first 10 years. Likewise, there is a 45% to 75% chance it will make the tournament by its 15th year in Division I.
• The survival analysis shows that even after 15 years in Division I, there is only a 5% to 20% chance that a team will have won its first NCAA tournament game.

CONCLUSIONS
Figure 3 shows a survival plot with confidence intervals specific to Men's Basketball. All three degrees of success are considered: a winning percentage above 50%, an NCAA tournament appearance, and an NCAA tournament game win. Estimated medians suggest that it takes around 5 years for half of the schools to achieve a winning percentage above 50% and around 13 years for half of the schools to make an NCAA tournament appearance. By year 15, no more than 20% of these transferring schools would have won an NCAA tournament game.

FUTURE IDEAS
Future studies of Division I transition might consider:
• What elements of Division II play are the best predictors of Division I success?
• Sample sizes were not as large as desired, due to limited data availability. This study would likely benefit from additional data collection over the next 20+ years.
• Examining college sports other than the ones studied here.
• How much does the money a college or team invests contribute to its success?

Acknowledgement: This research was a product of a 2013 UR-STEM summer undergraduate research experience supported by NKU FORCE: Focus on Occupations, Recruiting, Community, and Engagement.
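Before any survival estimate can be computed, each school's season-by-season record must be reduced to a (time, event) pair using the winning-percentage definition of success above. The sketch below assumes a season counts as successful only when its winning percentage is strictly above 0.500, and that schools without a success are censored at their last observed season. The records in the usage block are hypothetical.

```python
def winning_pct(wins, games):
    """Total wins divided by games played (postseason games included)."""
    return wins / games


def time_to_first_success(season_pcts, threshold=0.5):
    """Convert a school's Division I seasons into a (time, event) pair.

    season_pcts[0] is the first Division I season. A season is a success
    only if its winning percentage is strictly above the threshold.
    Schools with no success are censored at their last observed season.
    """
    for year, pct in enumerate(season_pcts, start=1):
        if pct > threshold:
            return year, 1
    return len(season_pcts), 0


if __name__ == "__main__":
    # Hypothetical season records: (wins, games) for each Division I season.
    school_a = [(9, 29), (12, 30), (16, 31), (18, 32)]
    school_b = [(10, 30), (14, 30), (15, 30)]
    for name, seasons in (("A", school_a), ("B", school_b)):
        pcts = [winning_pct(w, g) for w, g in seasons]
        print(name, time_to_first_success(pcts))   # A -> (3, 1); B -> (3, 0)
```

School B's 15-15 season sits exactly at 0.500, so under the "above 0.500" rule it stays censored; these pairs are the inputs a Kaplan-Meier estimator expects.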

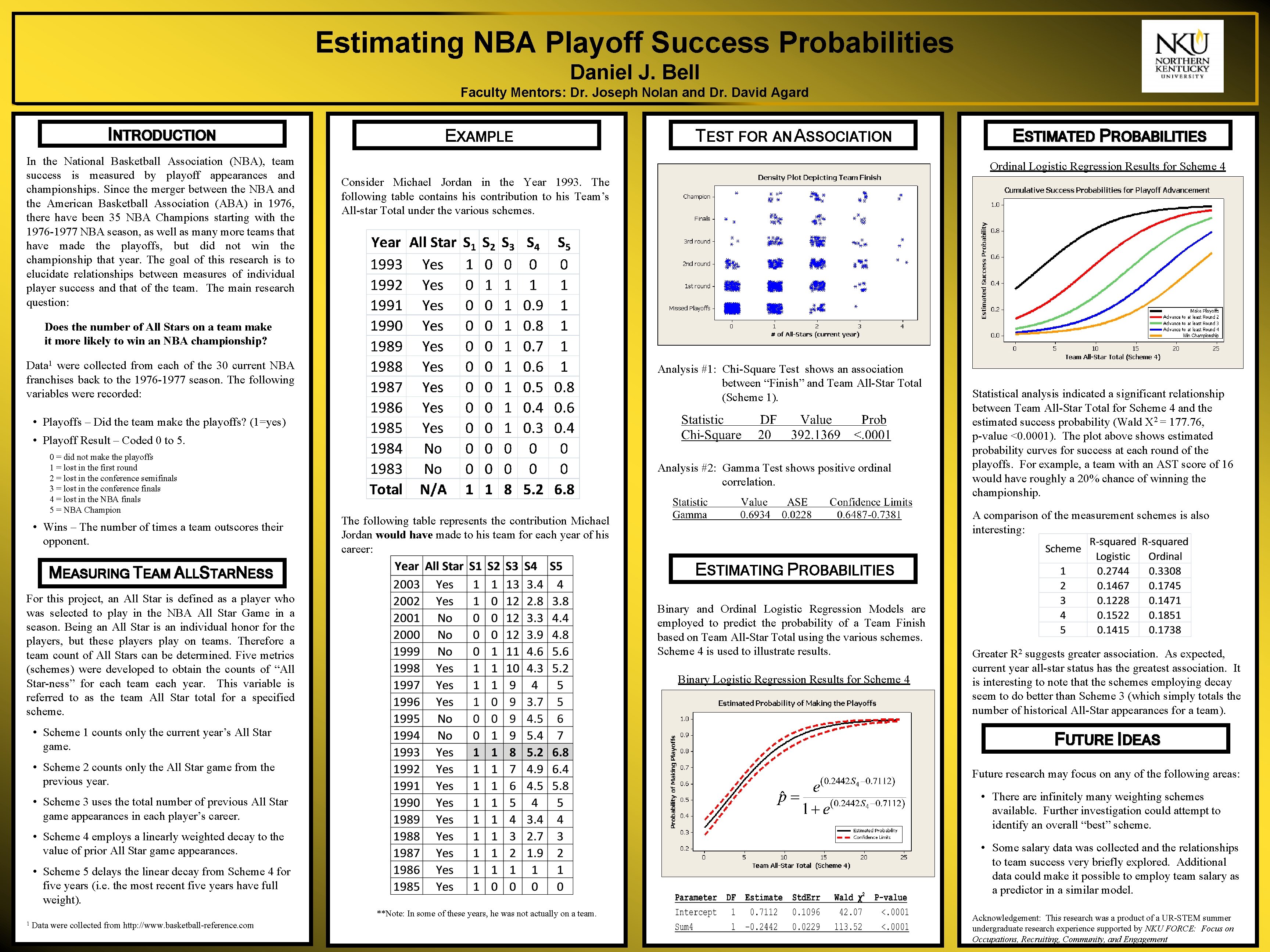

Estimating NBA Playoff Success Probabilities
Daniel J. Bell
Faculty Mentors: Dr. Joseph Nolan and Dr. David Agard

INTRODUCTION
In the National Basketball Association (NBA), team success is measured by playoff appearances and championships. Since the merger between the NBA and the American Basketball Association (ABA) in 1976, there have been 35 NBA champions, starting with the 1976-1977 NBA season, as well as many more teams that made the playoffs but did not win the championship that year. The goal of this research is to elucidate relationships between measures of individual player success and the success of the team. The main research question: Does the number of All-Stars on a team make it more likely to win an NBA championship?

DATA
Data¹ were collected for each of the 30 current NBA franchises back to the 1976-1977 season. The following variables were recorded:
• Playoffs: Did the team make the playoffs? (1 = yes)
• Playoff Result: coded 0 to 5 (0 = did not make the playoffs, 1 = lost in the first round, 2 = lost in the conference semifinals, 3 = lost in the conference finals, 4 = lost in the NBA Finals, 5 = NBA Champion)
• Wins: the number of games in which the team outscored its opponent.

EXAMPLE
Consider Michael Jordan in 1993. The following table contains his contribution to his team's All-Star Total under the various schemes.

TEST FOR AN ASSOCIATION
Analysis #1: A Chi-Square Test shows an association between "Finish" and Team All-Star Total (Scheme 1).
Analysis #2: A Gamma Test shows a positive ordinal correlation.

ESTIMATED PROBABILITIES
Ordinal Logistic Regression Results for Scheme 4: Statistical analysis indicated a significant relationship between the Team All-Star Total for Scheme 4 and the estimated success probability (Wald χ² = 177.76, p-value < 0.0001). The plot above shows estimated probability curves for success at each round of the playoffs. For example, a team with an All-Star Total (AST) of 16 would have roughly a 20% chance of winning the championship.
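The Gamma Test above measures ordinal association between two ranked variables, here Team All-Star Total and the 0-5 Playoff Result. As a minimal sketch (not the authors' software), Goodman-Kruskal gamma compares concordant and discordant pairs of team-seasons and ignores tied pairs; it does not compute the significance test reported on the poster, and the team data in the usage block are hypothetical.

```python
def goodman_kruskal_gamma(x, y):
    """Goodman-Kruskal gamma: (C - D) / (C + D) over all pairs of teams.

    Pairs tied on either variable are ignored, which suits ordinal codes
    such as the 0-5 Playoff Result, where ties are common.
    """
    concordant = discordant = 0
    n = len(x)
    for i in range(n):
        for j in range(i + 1, n):
            s = (x[i] - x[j]) * (y[i] - y[j])
            if s > 0:
                concordant += 1
            elif s < 0:
                discordant += 1
    if concordant + discordant == 0:
        raise ValueError("all pairs are tied")
    return (concordant - discordant) / (concordant + discordant)


if __name__ == "__main__":
    # Hypothetical team-seasons: Team All-Star Total and Playoff Result (0-5).
    all_star_total = [0, 1, 1, 2, 2, 3, 4, 5]
    playoff_result = [0, 0, 1, 1, 2, 3, 2, 5]
    print(round(goodman_kruskal_gamma(all_star_total, playoff_result), 3))
```

A positive value means teams with more All-Star credit tend to go further in the playoffs; gamma is +1 or -1 only when every untied pair agrees or disagrees.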
MEASURING TEAM ALL-STARNESS
For this project, an All-Star is defined as a player who was selected to play in the NBA All-Star Game in a given season. Being an All-Star is an individual honor, but these players play on teams, so a team count of All-Stars can be determined. Five metrics (schemes) were developed to obtain counts of "All-Star-ness" for each team each year; this variable is referred to as the Team All-Star Total for a specified scheme.
• Scheme 1 counts only the current year's All-Star Game.
• Scheme 2 counts only the All-Star Game from the previous year.
• Scheme 3 uses the total number of previous All-Star Game appearances in each player's career.
• Scheme 4 applies a linearly weighted decay to the value of prior All-Star Game appearances.
• Scheme 5 delays the linear decay from Scheme 4 for five years (i.e., the most recent five years have full weight).

The following table represents the contribution Michael Jordan would have made to his team for each year of his career.**
**Note: In some of these years, he was not actually on a team.

ESTIMATING PROBABILITIES
Binary and ordinal logistic regression models are employed to predict the probability of a team finish based on the Team All-Star Total under the various schemes. Scheme 4 is used to illustrate the results (Binary Logistic Regression Results for Scheme 4).

A comparison of the measurement schemes is also interesting. A greater R² suggests a greater association. As expected, current-year All-Star status has the greatest association. It is interesting to note that the schemes employing decay seem to do better than Scheme 3 (which simply totals the number of historical All-Star appearances for a team).

FUTURE IDEAS
Future research may focus on any of the following areas:
• There are infinitely many weighting schemes available. Further investigation could attempt to identify an overall "best" scheme.
• Some salary data were collected and the relationships to team success very briefly explored. Additional data could make it possible to employ team salary as a predictor in a similar model.

¹ Data were collected from http://www.basketball-reference.com
Acknowledgement: This research was a product of a UR-STEM summer undergraduate research experience supported by NKU FORCE: Focus on Occupations, Recruiting, Community, and Engagement.
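The five schemes can be written as a per-player contribution function that is then summed over a roster. This is a hypothetical sketch: the poster does not give the decay weights, so `DECAY_YEARS = 10` (weight falls by 0.1 per year) is an assumption, as is reading Scheme 3's "previous" appearances as those strictly before the current season.

```python
# Hypothetical implementation of the five "All-Star-ness" schemes.
# DECAY_YEARS (length of the linear decay) is an ASSUMED parameter; the
# poster does not state the weights used in Schemes 4 and 5.
DECAY_YEARS = 10
FULL_WEIGHT_YEARS = 5   # Scheme 5: the most recent five seasons keep full weight


def linear_weight(years_ago, decay_years=DECAY_YEARS):
    """Weight 1 for the current season, falling linearly to 0."""
    return max(0.0, 1.0 - years_ago / decay_years)


def contribution(all_star_years, season, scheme):
    """One player's contribution to his team's All-Star Total in `season`."""
    past = [y for y in all_star_years if y <= season]   # ignore future selections
    if scheme == 1:
        return float(season in past)
    if scheme == 2:
        return float(season - 1 in past)
    if scheme == 3:
        return float(sum(1 for y in past if y < season))  # ASSUMED: prior seasons only
    if scheme == 4:
        return sum(linear_weight(season - y) for y in past)
    if scheme == 5:
        # Shift the decay so the five most recent seasons all get weight 1.
        return sum(linear_weight(max(0, season - y - (FULL_WEIGHT_YEARS - 1)))
                   for y in past)
    raise ValueError("scheme must be 1-5")


def team_total(roster, season, scheme):
    """roster: list of each player's All-Star selection years."""
    return sum(contribution(years, season, scheme) for years in roster)
```

For a hypothetical player selected in 1985, 1986, and 1990, the 1990 contributions are 1, 0, 2, 2.1, and 2.9 under Schemes 1 through 5, which shows how the decay schemes sit between counting only the current year and counting the whole career.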

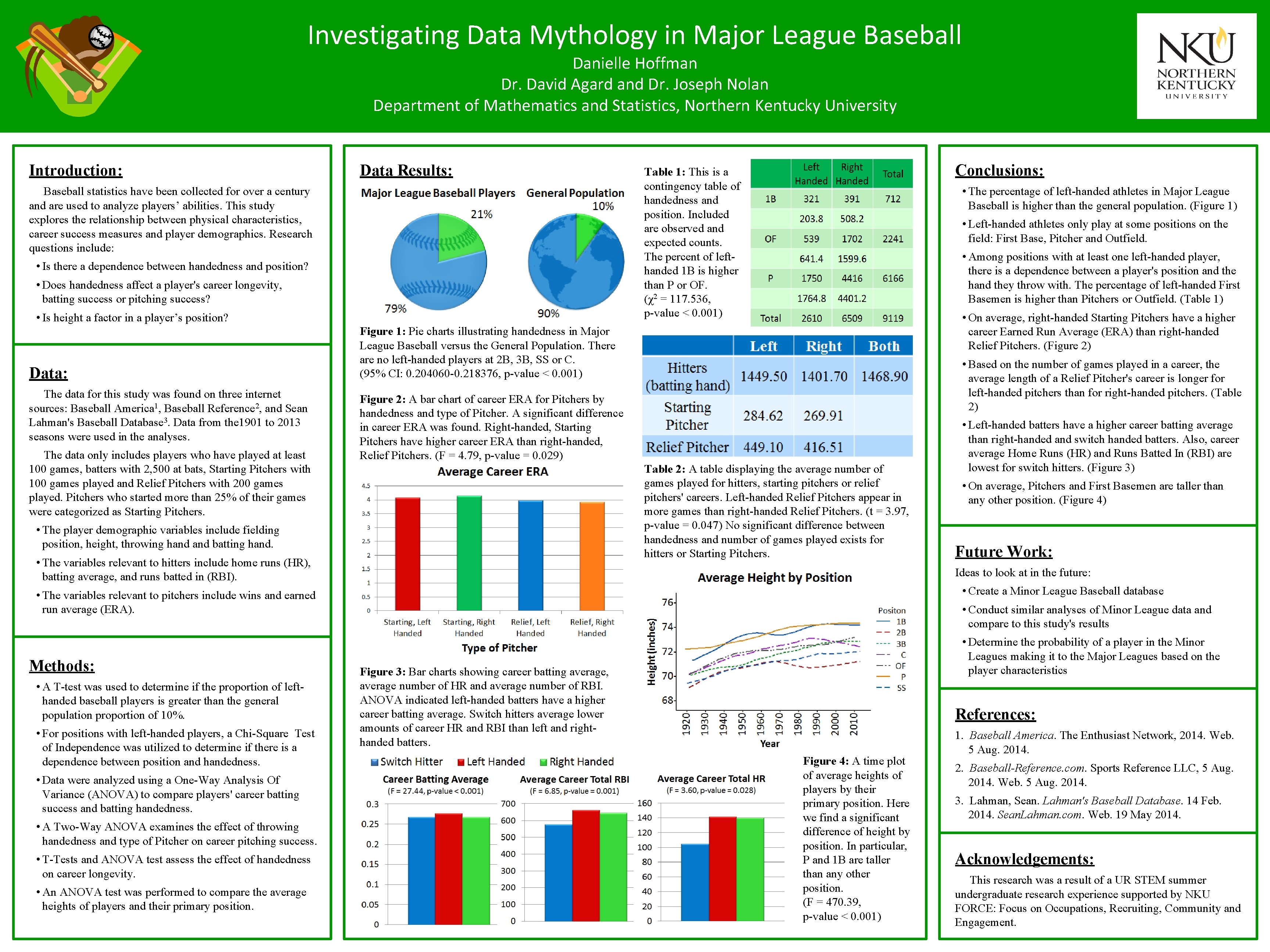

Investigating Data Mythology in Major League Baseball
Danielle Hoffman
Dr. David Agard and Dr. Joseph Nolan
Department of Mathematics and Statistics, Northern Kentucky University

Introduction:
Baseball statistics have been collected for over a century and are used to analyze players' abilities. This study explores the relationship between physical characteristics, career success measures, and player demographics. Research questions include:
• Is there a dependence between handedness and position?
• Does handedness affect a player's career longevity, batting success, or pitching success?
• Is height a factor in a player's position?

Data:
The data for this study were drawn from three internet sources: Baseball America¹, Baseball Reference², and Sean Lahman's Baseball Database³. Data from the 1901 to 2013 seasons were used in the analyses. The data include only players who played at least 100 games, batters with 2,500 at-bats, Starting Pitchers with 100 games played, and Relief Pitchers with 200 games played. Pitchers who started more than 25% of their games were categorized as Starting Pitchers.
• The player demographic variables include fielding position, height, throwing hand, and batting hand.
• The variables relevant to hitters include home runs (HR), batting average, and runs batted in (RBI).
• The variables relevant to pitchers include wins and earned run average (ERA).

Methods:
• A T-test was used to determine whether the proportion of left-handed baseball players is greater than the general-population proportion of 10%.
• For positions with left-handed players, a Chi-Square Test of Independence was used to determine whether there is a dependence between position and handedness.
• A One-Way Analysis of Variance (ANOVA) was used to compare players' career batting success by batting handedness.
• A Two-Way ANOVA examines the effect of throwing handedness and type of pitcher on career pitching success.
• T-tests and ANOVA assess the effect of handedness on career longevity.
• An ANOVA was performed to compare the average heights of players by primary position.

Results:
Figure 1: Pie charts illustrating handedness in Major League Baseball versus the general population. There are no left-handed players at 2B, 3B, SS, or C. (95% CI: 0.204060-0.218376, p-value < 0.001)
Table 1: A contingency table of handedness and position, including observed and expected counts. The percentage of left-handed 1B is higher than that of P or OF. (χ² = 117.536, p-value < 0.001)
Figure 2: A bar chart of career ERA for pitchers by handedness and type of pitcher. A significant difference in career ERA was found: right-handed Starting Pitchers have a higher career ERA than right-handed Relief Pitchers. (F = 4.79, p-value = 0.029)
Table 2: The average number of games played in the careers of hitters, Starting Pitchers, and Relief Pitchers. Left-handed Relief Pitchers appear in more games than right-handed Relief Pitchers. (t = 3.97, p-value = 0.047) No significant difference in games played by handedness exists for hitters or Starting Pitchers.
Figure 3: Bar charts showing career batting average, average number of HR, and average number of RBI. ANOVA indicated that left-handed batters have a higher career batting average. Switch hitters average fewer career HR and RBI than left- and right-handed batters.
Figure 4: A time plot of average heights of players by primary position. There is a significant difference in height by position; in particular, P and 1B are taller than any other position. (F = 470.39, p-value < 0.001)

Conclusions:
• The percentage of left-handed athletes in Major League Baseball is higher than in the general population. (Figure 1)
• Left-handed athletes play only some positions on the field: First Base, Pitcher, and Outfield.
• Among positions with at least one left-handed player, there is a dependence between a player's position and the hand he throws with. The percentage of left-handed First Basemen is higher than that of Pitchers or Outfielders. (Table 1)
• On average, right-handed Starting Pitchers have a higher career Earned Run Average (ERA) than right-handed Relief Pitchers. (Figure 2)
• Based on the number of games played in a career, the average Relief Pitcher career is longer for left-handed pitchers than for right-handed pitchers. (Table 2)
• Left-handed batters have a higher career batting average than right-handed and switch-hitting batters. Also, career average Home Runs (HR) and Runs Batted In (RBI) are lowest for switch hitters. (Figure 3)
• On average, Pitchers and First Basemen are taller than players at any other position. (Figure 4)

Future Work:
Ideas to look at in the future:
• Create a Minor League Baseball database
• Conduct similar analyses of Minor League data and compare to this study's results
• Determine the probability of a player in the Minor Leagues making it to the Major Leagues based on player characteristics

References:
1. Baseball America. The Enthusiast Network, 2014. Web. 5 Aug. 2014.
2. Baseball-Reference.com. Sports Reference LLC, 5 Aug. 2014. Web. 5 Aug. 2014.
3. Lahman, Sean. Lahman's Baseball Database. 14 Feb. 2014. SeanLahman.com. Web. 19 May 2014.

Acknowledgements: This research was a result of a UR STEM summer undergraduate research experience supported by NKU FORCE: Focus on Occupations, Recruiting, Community and Engagement.
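The Chi-Square Test of Independence behind Table 1 compares each observed count with the count expected if handedness and position were unrelated (row total × column total / grand total). A minimal sketch follows, assuming a 2×3 table (throwing hand by 1B, P, OF), which has 2 degrees of freedom; for that case only, the upper-tail p-value has the closed form $e^{-\chi^2/2}$. The counts in the usage block are hypothetical, not Table 1's values; for other table shapes, a library routine such as SciPy's `chi2_contingency` is the usual choice.

```python
import math


def chi_square_independence(table):
    """Pearson chi-square test of independence for an r x c table of counts.

    Returns (statistic, degrees_of_freedom, expected_counts).
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    expected = [[r * c / n for c in col_totals] for r in row_totals]
    stat = sum((obs - e) ** 2 / e
               for obs_row, exp_row in zip(table, expected)
               for obs, e in zip(obs_row, exp_row))
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df, expected


def p_value_df2(stat):
    """Upper-tail p-value; the closed form exp(-x/2) holds only for df = 2."""
    return math.exp(-stat / 2)


if __name__ == "__main__":
    # Hypothetical counts: rows = throwing hand (L, R); columns = 1B, P, OF.
    counts = [[120, 900, 300],
              [380, 2600, 1200]]
    stat, df, expected = chi_square_independence(counts)
    print(f"chi2 = {stat:.2f}, df = {df}")
    if df == 2:
        print(f"p = {p_value_df2(stat):.3g}")
```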

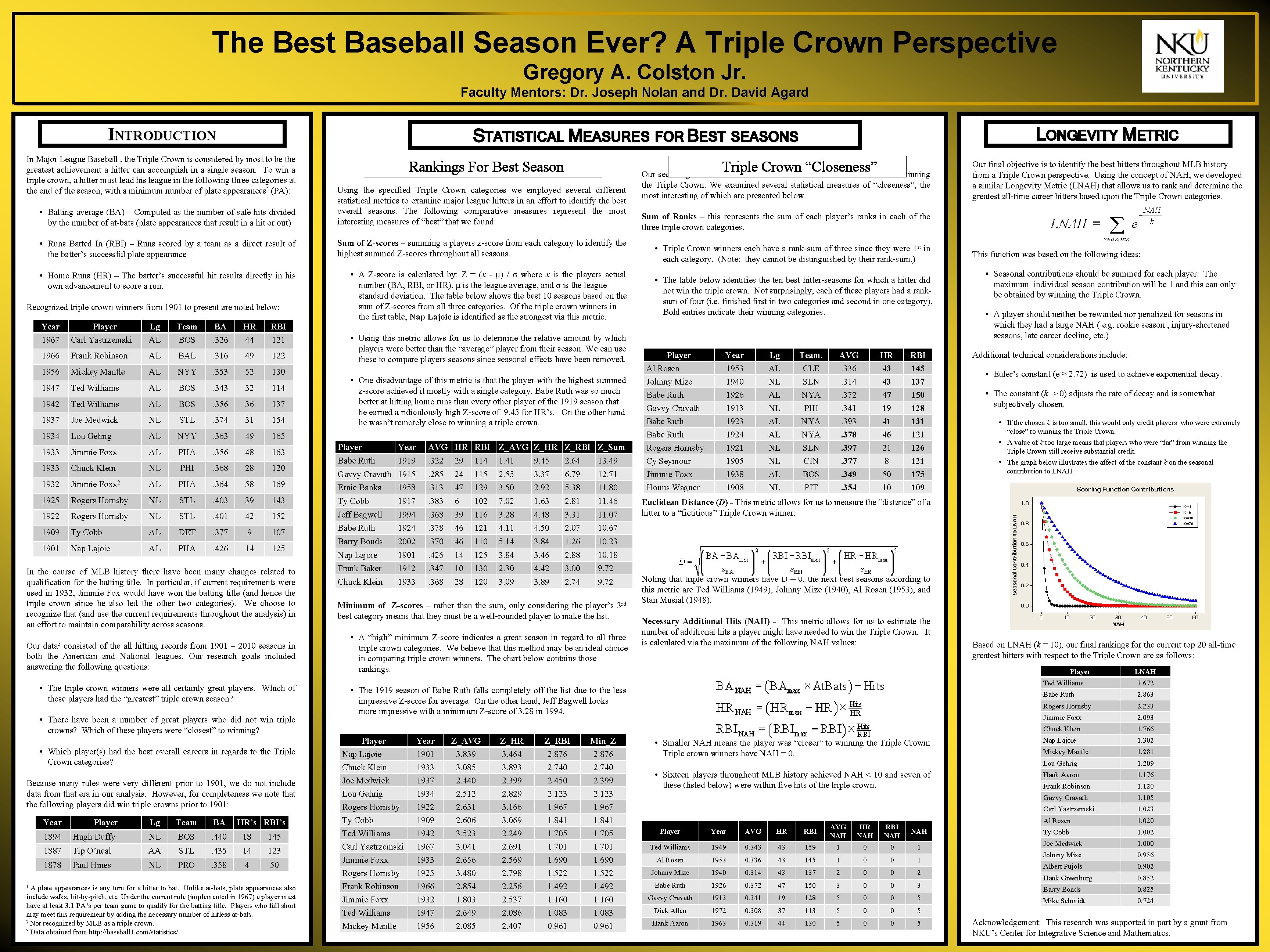

The Best Baseball Season Ever? A Triple Crown Perspective
Gregory A. Colston Jr.
Faculty Mentors: Dr. Joseph Nolan and Dr. David Agard

INTRODUCTION
In Major League Baseball, the Triple Crown is considered by most to be the greatest achievement a hitter can accomplish in a single season. To win a Triple Crown, a hitter must lead his league in the following three categories at the end of the season, with a minimum number of plate appearances¹ (PA):
• Batting average (BA): the number of safe hits divided by the number of at-bats (plate appearances that result in a hit or an out).
• Home Runs (HR): hits on which the batter's own advancement results directly in his scoring a run.
• Runs Batted In (RBI): runs scored by the team as a direct result of the batter's successful plate appearance.

Recognized Triple Crown winners from 1901 to the present are noted below:

| Year | Player | Lg | Team | BA | HR | RBI |
|---|---|---|---|---|---|---|
| 1967 | Carl Yastrzemski | AL | BOS | .326 | 44 | 121 |
| 1966 | Frank Robinson | AL | BAL | .316 | 49 | 122 |
| 1956 | Mickey Mantle | AL | NYY | .353 | 52 | 130 |
| 1947 | Ted Williams | AL | BOS | .343 | 32 | 114 |
| 1942 | Ted Williams | AL | BOS | .356 | 36 | 137 |
| 1937 | Joe Medwick | NL | STL | .374 | 31 | 154 |
| 1934 | Lou Gehrig | AL | NYY | .363 | 49 | 165 |
| 1933 | Jimmie Foxx | AL | PHA | .356 | 48 | 163 |
| 1933 | Chuck Klein | NL | PHI | .368 | 28 | 120 |
| 1932 | Jimmie Foxx² | AL | PHA | .364 | 58 | 169 |
| 1925 | Rogers Hornsby | NL | STL | .403 | 39 | 143 |
| 1922 | Rogers Hornsby | NL | STL | .401 | 42 | 152 |
| 1909 | Ty Cobb | AL | DET | .377 | 9 | 107 |
| 1901 | Nap Lajoie | AL | PHA | .426 | 14 | 125 |

Our research goals included answering the following questions:
• The Triple Crown winners were all certainly great players. Which of these players had the "greatest" Triple Crown season?
• There have been a number of great players who did not win Triple Crowns. Which of these players came "closest" to winning?
• Which player(s) had the best overall careers with regard to the Triple Crown categories?

Because many rules were very different before 1901, we do not include data from that era in our analysis. However, for completeness, we note that the following players did win Triple Crowns before 1901:

| Year | Player | Lg | Team | BA | HR | RBI |
|---|---|---|---|---|---|---|
| 1894 | Hugh Duffy | NL | BOS | .440 | 18 | 145 |
| 1887 | Tip O'Neill | AA | STL | .435 | 14 | 123 |
| 1878 | Paul Hines | NL | PRO | .358 | 4 | 50 |

¹ A plate appearance is any turn at bat for a hitter. Unlike at-bats, plate appearances also include walks, hit-by-pitches, etc. Under the current rule (implemented in 1967), a player must have at least 3.1 PA per team game to qualify for the batting title. Players who fall short may meet this requirement by adding the necessary number of hitless at-bats.
² Not recognized by MLB as a Triple Crown.

RANKINGS FOR BEST SEASON
Using the specified Triple Crown categories, we employed several different statistical metrics to examine major league hitters in an effort to identify the best overall seasons. The following comparative measures represent the most interesting measures of "best" that we found:
• Sum of Z-scores: summing a player's Z-score from each category identifies the highest summed Z-scores across all seasons. A Z-score is calculated as $Z = (x - \mu)/\sigma$, where x is the player's actual value (BA, RBI, or HR), $\mu$ is the league average, and $\sigma$ is the league standard deviation.

The table below shows the best 10 seasons based on the sum of Z-scores from all three categories.

| Player | Year | AVG | HR | RBI | Z_AVG | Z_HR | Z_RBI | Z_Sum |
|---|---|---|---|---|---|---|---|---|
| Babe Ruth | 1919 | .322 | 29 | 114 | 1.41 | 9.45 | 2.64 | 13.49 |
| Gavvy Cravath | 1915 | .285 | 24 | 115 | 2.55 | 3.37 | 6.79 | 12.71 |
| Ernie Banks | 1958 | .313 | 47 | 129 | 3.50 | 2.92 | 5.38 | 11.80 |
| Ty Cobb | 1917 | .383 | 6 | 102 | 7.02 | 1.63 | 2.81 | 11.46 |
| Jeff Bagwell | 1994 | .368 | 39 | 116 | 3.28 | 4.48 | 3.31 | 11.07 |
| Babe Ruth | 1924 | .378 | 46 | 121 | 4.11 | 4.50 | 2.07 | 10.67 |
| Barry Bonds | 2002 | .370 | 46 | 110 | 5.14 | 3.84 | 1.26 | 10.23 |
| Nap Lajoie | 1901 | .426 | 14 | 125 | 3.84 | 3.46 | 2.88 | 10.18 |
| Frank Baker | 1912 | .347 | 10 | 130 | 2.30 | 4.42 | 3.00 | 9.72 |
| Chuck Klein | 1933 | .368 | 28 | 120 | 3.09 | 3.89 | 2.74 | 9.72 |

Of the Triple Crown winners in the first table, Nap Lajoie is identified as the strongest by this metric.

TRIPLE CROWN "CLOSENESS"
Our second goal was to measure the "closeness" of a hitter's season to winning the Triple Crown.
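The difference between ranking by the sum and by the minimum of the three Z-scores is easy to see with three seasons whose Z-scores appear in this poster's tables. The sketch below uses those reported values; the `z_score` helper shows the formula but needs league means and standard deviations that are not reproduced here, so its test input is illustrative only.

```python
def z_score(x, league_mean, league_sd):
    """Z = (x - mu) / sigma for one Triple Crown category in one season."""
    return (x - league_mean) / league_sd


# (Z_AVG, Z_HR, Z_RBI) for three seasons, as reported in the poster's tables.
SEASONS = {
    "Babe Ruth 1919": (1.41, 9.45, 2.64),
    "Jeff Bagwell 1994": (3.28, 4.48, 3.31),
    "Nap Lajoie 1901": (3.84, 3.46, 2.88),
}

by_sum = sorted(SEASONS, key=lambda s: sum(SEASONS[s]), reverse=True)
by_min = sorted(SEASONS, key=lambda s: min(SEASONS[s]), reverse=True)

if __name__ == "__main__":
    print("sum of Z:", by_sum)   # Ruth 1919 first, driven by his HR Z-score
    print("min of Z:", by_min)   # Bagwell 1994 first; Ruth 1919 drops to last
```

The sum rewards one extreme category, while the minimum rewards balance across all three categories, which is the property a Triple Crown requires.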
³ Data obtained from http://baseball1.com/statistics/

• Minimum of Z-scores: rather than using the sum, considering only the player's third-best category means that a player must be well-rounded to make the list. A "high" minimum Z-score indicates a great season with regard to all three Triple Crown categories. We believe this method may be an ideal choice for comparing Triple Crown winners. The chart below contains those rankings.

| Player | Year | Z_AVG | Z_HR | Z_RBI | Min_Z |
|---|---|---|---|---|---|
| Nap Lajoie | 1901 | 3.839 | 3.464 | 2.876 | 2.876 |
| Chuck Klein | 1933 | 3.085 | 3.893 | 2.740 | 2.740 |
| Joe Medwick | 1937 | 2.440 | 2.399 | 2.450 | 2.399 |
| Lou Gehrig | 1934 | 2.512 | 2.829 | 2.123 | 2.123 |
| Rogers Hornsby | 1922 | 2.631 | 3.166 | 1.967 | 1.967 |
| Ty Cobb | 1909 | 2.606 | 3.069 | 1.841 | 1.841 |
| Ted Williams | 1942 | 3.523 | 2.249 | 1.705 | 1.705 |
| Carl Yastrzemski | 1967 | 3.041 | 2.691 | 1.701 | 1.701 |
| Jimmie Foxx | 1933 | 2.656 | 2.569 | 1.690 | 1.690 |
| Rogers Hornsby | 1925 | 3.480 | 2.798 | 1.522 | 1.522 |
| Frank Robinson | 1966 | 2.854 | 2.256 | 1.492 | 1.492 |
| Jimmie Foxx | 1932 | 1.803 | 2.537 | 1.160 | 1.160 |
| Ted Williams | 1947 | 2.649 | 2.086 | 1.083 | 1.083 |
| Mickey Mantle | 1956 | 2.085 | 2.407 | 0.961 | 0.961 |

The 1919 season of Babe Ruth falls completely off the list because of his less impressive Z-score for average. On the other hand, Jeff Bagwell looks more impressive, with a minimum Z-score of 3.28 in 1994.

• Triple Crown winners each have a rank-sum of three, since they were first in each category (so they cannot be distinguished by their rank-sum).
• The table below identifies the ten best hitter-seasons for which a hitter did not win the Triple Crown. Not surprisingly, each of these players had a rank-sum of four (i.e., finished first in two categories and second in one). Bold entries indicate their winning categories.

Player: Al Rosen, Johnny Mize, Babe Ruth, Gavvy Cravath, Babe Ruth, Rogers Hornsby, Cy Seymour, Jimmie Foxx, Honus Wagner
Year: 1953, 1940, 1926, 1913, 1924, 1921, 1905, 1938, 1908
Lg: AL, NL, AL, AL, NL, NL, AL, NL
Team: CLE, SLN, NYA, PHI, NYA, SLN, CIN, BOS, PIT
AVG: .336, .314, .372, .341, .393, .378, .397, .377, .349, .354
HR: 43, 43, 47, 19, 41, 46, 21, 8, 50, 10
RBI: 145, 137, 150, 128, 131, 126, 121, 175, 109

• Euclidean Distance (D): this metric measures the "distance" of a hitter from a "fictitious" Triple Crown winner. Since Triple Crown winners have D = 0, the next best seasons according to this metric are Ted Williams (1949), Johnny Mize (1940), Al Rosen (1953), and Stan Musial (1948).
• Necessary Additional Hits (NAH): this metric estimates the number of additional hits a player would have needed to win the Triple Crown. It is calculated as the maximum of the category-specific NAH values (AVG NAH, HR NAH, and RBI NAH).

LONGEVITY METRIC
Our final objective is to identify the best hitters throughout MLB history from a Triple Crown perspective. The longevity function was based on the following ideas:
• Seasonal contributions should be summed for each player. The maximum individual-season contribution is 1, and it can be obtained only by winning the Triple Crown.
• A player should be neither rewarded nor penalized for seasons in which he had a large NAH (e.g., a rookie season, injury-shortened seasons, late-career decline).
Additional technical considerations include:
• Euler's number (e ≈ 2.72) is used to achieve exponential decay.
• The constant k > 0 adjusts the rate of decay and is chosen somewhat subjectively.
• If the chosen k is too small, only players who were extremely "close" to winning the Triple Crown receive credit.
• If k is too large, players who were "far" from winning the Triple Crown still receive substantial credit.
The graph below illustrates the effect of the constant k on the seasonal contribution to LNAH. Based on LNAH (k = 10), our final rankings for the current top 20 all-time greatest hitters with respect to the Triple Crown are as follows:
Using the concept of NAH, we developed a similar Longevity Metric (LNAH) that allows us to rank and determine the greatest all-time career hitters based upon the Triple Crown categories. Sum of Ranks – this represents the sum of each player’s ranks in each of the three triple crown categories. • One disadvantage of this metric is that the player with the highest summed z-score achieved it mostly with a single category. Babe Ruth was so much better at hitting home runs than every other player of the 1919 season that he earned a ridiculously high Z-score of 9. 45 for HR’s. On the other hand he wasn’t remotely close to winning a triple crown. Year Our data 3 consisted of the all hitting records from 1901 – 2010 seasons in both the American and National leagues. Our research goals included answering the following questions: the Triple Crown. We examined several statistical measures of “closeness”, the most interesting of which are presented below. • Using this metric allows for us to determine the relative amount by which players were better than the “average” player from their season. We can use these to compare players seasons since seasonal effects have been removed. Player In the course of MLB history there have been many changes related to qualification for the batting title. In particular, if current requirements were used in 1932, Jimmie Fox would have won the batting title (and hence the triple crown since he also led the other two categories). We choose to recognize that (and use the current requirements throughout the analysis) in an effort to maintain comparability across seasons. Year LONGEVITY METRIC STATISTICAL MEASURES FOR BEST SEASONS • Smaller NAH means the player was “closer” to winning the Triple Crown; Triple crown winners have NAH = 0. • Sixteen players throughout MLB history achieved NAH < 10 and seven of these (listed below) were within five hits of the triple crown. Player Year AVG HR RBI AVG NAH HR NAH RBI NAH Ted Williams 1949 0. 
343 43 159 1 0 0 1 Al Rosen 1953 0. 336 43 145 1 0 0 1 Johnny Mize 1940 0. 314 43 137 2 0 0 2 Babe Ruth 1926 0. 372 47 150 3 0 0 3 Gavvy Cravath 1913 0. 341 19 128 5 0 0 5 Dick Allen 1972 0. 308 37 113 5 0 0 5 Hank Aaron 1963 0. 319 44 130 5 0 0 5 LNAH Ted Williams 3. 672 Babe Ruth 2. 863 Rogers Hornsby 2. 233 Jimmie Foxx 2. 093 Chuck Klein 1. 766 Nap Lajoie 1. 302 Mickey Mantle 1. 281 Lou Gehrig 1. 209 Hank Aaron 1. 176 Frank Robinson 1. 120 Gavvy Cravath 1. 105 Carl Yastrzemski 1. 023 Al Rosen 1. 020 Ty Cobb 1. 002 Joe Medwick 1. 000 Johnny Mize 0. 956 Albert Pujols 0. 902 Hank Greenburg 0. 852 Barry Bonds 0. 825 Mike Schmidt 0. 724 Acknowledgement: This research was supported in part by a grant from NKU’s Center for Integrative Science and Mathematics.
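The NAH and LNAH calculations can be sketched in Python as follows. The poster's exact per-category NAH formulas and its LNAH equation are not recoverable from this text, so the sketch rests on labeled assumptions. AVG NAH is taken to be the smallest number of outs turned into hits (at-bats unchanged) that lets the player match the leader's average. HR and RBI NAH are taken to be the leader's margin in that category, assuming one extra home run or RBI per extra hit. The overall NAH is the maximum of the three, as the text states. Each season's LNAH contribution is assumed to be exp(−NAH/k), a form consistent with the stated design: a maximum of 1 reached only when NAH = 0, and faster decay for smaller k. All function names are hypothetical.

```python
import math


def avg_nah(hits: int, at_bats: int, leader_hits: int, leader_at_bats: int) -> int:
    """Extra hits (at-bats unchanged) needed to reach the leader's average.

    Assumption: matching the leader counts as leading. Exact integer
    arithmetic avoids floating-point trouble with near-ties.
    """
    # Need (hits + x) / at_bats >= leader_hits / leader_at_bats.
    shortfall = leader_hits * at_bats - hits * leader_at_bats
    return max(0, -(-shortfall // leader_at_bats))  # ceiling division


def count_nah(value: int, leader_value: int) -> int:
    """Extra HR (or RBI) needed to tie the leader, assuming one per extra hit."""
    return max(0, leader_value - value)


def season_nah(avg_part: int, hr_part: int, rbi_part: int) -> int:
    """Overall NAH: the maximum of the per-category values."""
    return max(avg_part, hr_part, rbi_part)


def lnah(season_nahs: list[int], k: float = 10.0) -> float:
    """Hypothetical LNAH: sum of exp(-NAH / k) over a player's seasons."""
    return sum(math.exp(-n / k) for n in season_nahs)
```

Taking the maximum rather than the sum reflects that a single extra hit (for example, a home run) can raise average, home runs, and RBIs at once. Under the assumed form with k = 10, a season with NAH = 5 contributes e^(−0.5) ≈ 0.61, while a season with NAH = 30 contributes about 0.05. Seasons far from a Triple Crown therefore add little, in line with the design ideas above.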

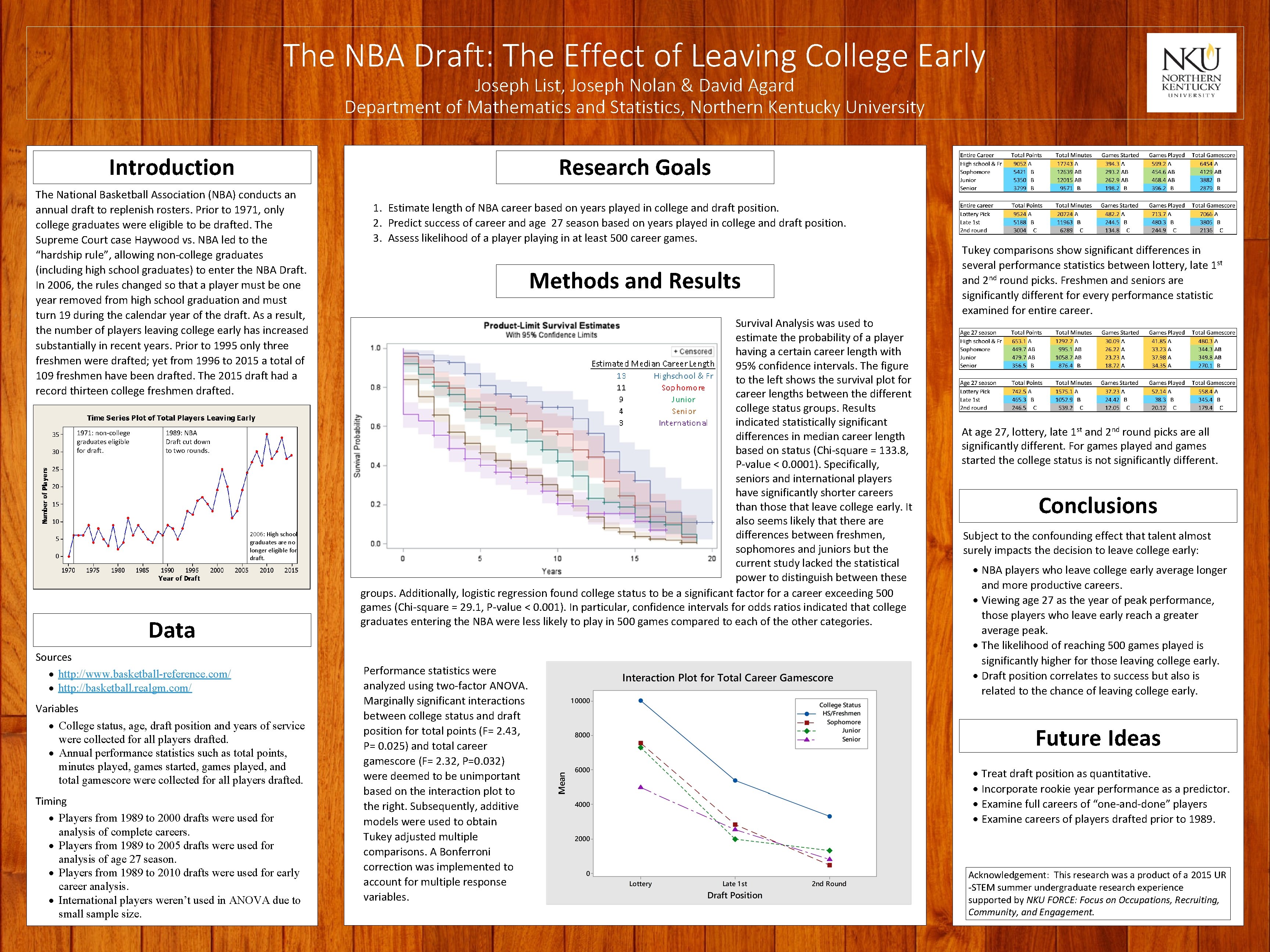

The NBA Draft: The Effect of Leaving College Early
Joseph List, Joseph Nolan & David Agard
Department of Mathematics and Statistics, Northern Kentucky University

Introduction
The National Basketball Association (NBA) conducts an annual draft to replenish rosters. Prior to 1971, only college graduates were eligible to be drafted. The Supreme Court case Haywood v. NBA led to the “hardship rule”, allowing non-college graduates (including high school graduates) to enter the NBA Draft. In 2006, the rules changed so that a player must be one year removed from high school graduation and must turn 19 during the calendar year of the draft. As a result, the number of players leaving college early has increased substantially in recent years. Prior to 1995 only three freshmen were drafted, yet from 1996 to 2015 a total of 109 freshmen were drafted. The 2015 draft had a record thirteen college freshmen drafted.
• 1971: Non-college graduates become eligible for the draft.
• 1989: NBA Draft cut down to two rounds.
• 2006: High school graduates are no longer eligible for the draft.

Research Goals
1. Estimate length of NBA career based on years played in college and draft position.
2. Predict success of career and of the age-27 season based on years played in college and draft position.
3. Assess the likelihood of a player playing in at least 500 career games.

Data Sources
http://www.basketball-reference.com/
http://basketball.realgm.com/

Variables
College status, age, draft position and years of service were collected for all players drafted. Annual performance statistics such as total points, minutes played, games started, games played, and total gamescore were also collected for all players drafted.

Timing
Players from the 1989–2000 drafts were used for analysis of complete careers. Players from the 1989–2005 drafts were used for analysis of the age-27 season. Players from the 1989–2010 drafts were used for early-career analysis. International players were not used in the ANOVA due to small sample size.
Methods and Results
Survival analysis was used to estimate the probability of a player having a certain career length, with 95% confidence intervals. The figure to the left shows the survival plot for career lengths across the different college-status groups. Results indicated statistically significant differences in career length based on college status (Chi-square = 133.8, P-value < 0.0001). Specifically, seniors and international players have significantly shorter careers than those who leave college early. It also seems likely that there are differences among freshmen, sophomores and juniors, but the current study lacked the statistical power to distinguish between these groups.
Additionally, logistic regression found college status to be a significant factor for a career exceeding 500 games (Chi-square = 29.1, P-value < 0.001). In particular, confidence intervals for odds ratios indicated that college graduates entering the NBA were less likely to play in 500 games than players in each of the other categories.
Performance statistics were analyzed using two-factor ANOVA. Marginally significant interactions between college status and draft position for total points (F = 2.43, P = 0.025) and total career gamescore (F = 2.32, P = 0.032) were deemed unimportant based on the interaction plot to the right. Subsequently, additive models were used to obtain Tukey-adjusted multiple comparisons. A Bonferroni correction was implemented to account for multiple response variables.
Tukey comparisons show significant differences in several performance statistics among lottery, late 1st-round and 2nd-round picks. Freshmen and seniors are significantly different for every performance statistic examined over the entire career. At age 27, lottery, late 1st-round and 2nd-round picks are all significantly different; for games played and games started, the college-status groups are not significantly different.
Conclusions
Subject to the confounding effect that talent almost surely affects the decision to leave college early:
• NBA players who leave college early average longer and more productive careers.
• Viewing age 27 as the year of peak performance, players who leave early reach a greater average peak.
• The likelihood of reaching 500 games played is significantly higher for those leaving college early.
• Draft position correlates with success but is also related to the chance of leaving college early.
Future Ideas
• Treat draft position as quantitative.
• Incorporate rookie-year performance as a predictor.
• Examine full careers of “one-and-done” players.
• Examine careers of players drafted prior to 1989.
Acknowledgement: This research was a product of a 2015 UR-STEM summer undergraduate research experience supported by NKU FORCE: Focus on Occupations, Recruiting, Community, and Engagement.

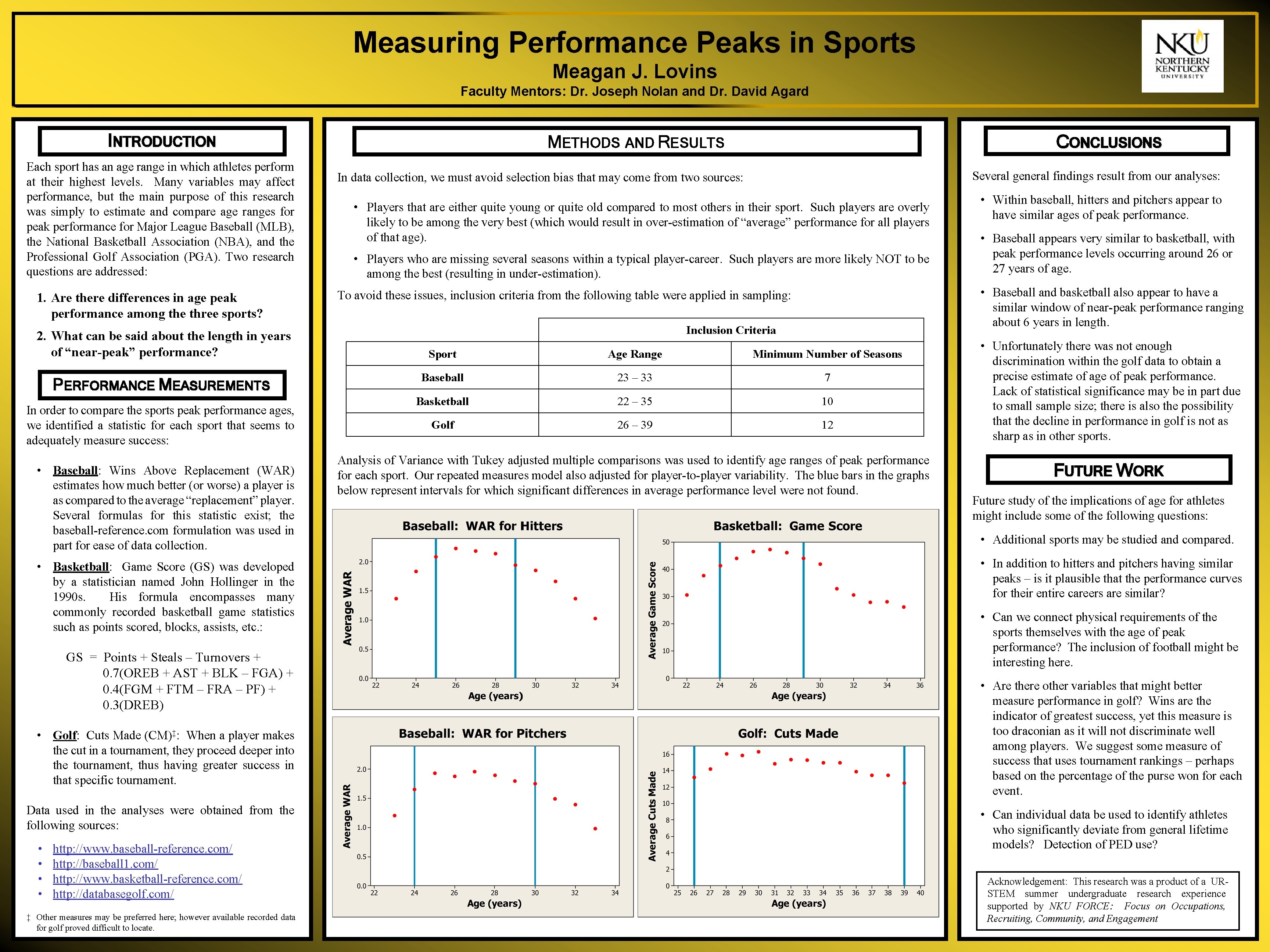

Measuring Performance Peaks in Sports
Meagan J. Lovins
Faculty Mentors: Dr. Joseph Nolan and Dr. David Agard

INTRODUCTION
Each sport has an age range in which athletes perform at their highest levels. Many variables may affect performance, but the main purpose of this research was simply to estimate and compare age ranges of peak performance for Major League Baseball (MLB), the National Basketball Association (NBA), and the Professional Golfers’ Association (PGA). Two research questions are addressed:
1. Are there differences in the age of peak performance among the three sports?
2. What can be said about the length, in years, of “near-peak” performance?

PERFORMANCE MEASUREMENTS
In order to compare the sports’ peak-performance ages, we identified a statistic for each sport that seems to adequately measure success:
• Baseball: Wins Above Replacement (WAR) estimates how much better (or worse) a player is than a “replacement-level” player. Several formulas for this statistic exist; the baseball-reference.com formulation was used, in part for ease of data collection.
• Basketball: Game Score (GS) was developed by statistician John Hollinger in the 1990s. His formula encompasses many commonly recorded basketball game statistics such as points scored, blocks, assists, etc.:
GS = Points + Steals – Turnovers + 0.7(OREB + AST + BLK – FGA) + 0.4(FGM + FTM – FTA – PF) + 0.3(DREB)
• Golf: Cuts Made (CM)‡: When a player makes the cut in a tournament, they proceed deeper into the tournament, thus having greater success in that specific tournament.

Data used in the analyses were obtained from the following sources:
• http://www.baseball-reference.com/
• http://baseball1.com/
• http://www.basketball-reference.com/
• http://databasegolf.com/
‡ Other measures may be preferred here; however, available recorded data for golf proved difficult to locate.
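The Game Score formula above maps directly onto a function. This Python sketch takes one player's box-score line as keyword arguments (the argument names are our own). It reads the free-throw term as free throws made minus free-throw attempts (FTA), as in Hollinger's formula. The example line is hypothetical.

```python
def game_score(*, pts, fgm, fga, ftm, fta, oreb, dreb, stl, ast, blk, pf, tov) -> float:
    """John Hollinger's Game Score for a single box-score line."""
    return (pts + stl - tov
            + 0.7 * (oreb + ast + blk - fga)   # offensive boards, assists, blocks vs. shots taken
            + 0.4 * (fgm + ftm - fta - pf)     # makes vs. missed free throws and fouls
            + 0.3 * dreb)                      # defensive rebounds


# Hypothetical line: 20 points on 8-of-15 shooting and 4-of-5 free throws.
example = game_score(pts=20, fgm=8, fga=15, ftm=4, fta=5, oreb=2, dreb=6,
                     stl=1, ast=5, blk=1, pf=3, tov=2)
print(round(example, 1))  # 17.5
```

Summing single-game values over a season gives a season total comparable to the “total gamescore” used in the NBA draft study.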
METHODS AND RESULTS
In data collection, we must avoid selection bias that may come from two sources:
• Players who are either quite young or quite old compared to most others in their sport. Such players are overly likely to be among the very best (which would result in over-estimation of “average” performance for all players of that age).
• Players who are missing several seasons within a typical player career. Such players are more likely NOT to be among the best (resulting in under-estimation).
To avoid these issues, inclusion criteria from the following table were applied in sampling:
Inclusion Criteria
Sport Age Range Minimum Number of Seasons
Baseball 23–33 7
Basketball 22–35 10
Golf 26–39 12
Analysis of Variance with Tukey-adjusted multiple comparisons was used to identify age ranges of peak performance for each sport. Our repeated-measures model also adjusted for player-to-player variability. The blue bars in the graphs below represent intervals for which significant differences in average performance level were not found.

CONCLUSIONS
Several general findings result from our analyses:
• Within baseball, hitters and pitchers appear to have similar ages of peak performance.
• Baseball appears very similar to basketball, with peak performance levels occurring around 26 or 27 years of age.
• Baseball and basketball also appear to have a similar window of near-peak performance, about 6 years in length.
• Unfortunately, there was not enough discrimination within the golf data to obtain a precise estimate of the age of peak performance. The lack of statistical significance may be partly due to small sample size; it is also possible that the decline in performance in golf is not as sharp as in other sports.

FUTURE WORK
Future study of the implications of age for athletes might include some of the following questions:
• Additional sports may be studied and compared.
• In addition to hitters and pitchers having similar peaks, is it plausible that the performance curves for their entire careers are similar?
• Can we connect the physical requirements of the sports themselves with the age of peak performance? The inclusion of football might be interesting here.
• Are there other variables that might better measure performance in golf? Wins are the indicator of greatest success, yet this measure is too draconian, as it will not discriminate well among players. We suggest some measure of success that uses tournament rankings, perhaps based on the percentage of the purse won for each event.
• Can individual data be used to identify athletes who significantly deviate from general lifetime models? Detection of PED use?
Acknowledgement: This research was a product of a UR-STEM summer undergraduate research experience supported by NKU FORCE: Focus on Occupations, Recruiting, Community, and Engagement.
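The inclusion criteria can be applied with a short filter before any modeling. This Python sketch assumes that a player qualifies when at least the minimum number of his seasons fall inside the sport's age range, and that only those in-range seasons are kept. The record layout and function names are hypothetical. The sketch then reports the raw mean performance at each age, a simple descriptive summary rather than the repeated-measures ANOVA with Tukey comparisons used in the study.

```python
from collections import defaultdict
from statistics import mean

# Age range and minimum number of seasons, from the inclusion-criteria table.
CRITERIA = {
    "Baseball": ((23, 33), 7),
    "Basketball": ((22, 35), 10),
    "Golf": ((26, 39), 12),
}


def apply_inclusion_criteria(sport, seasons):
    """Return {player: [(age, performance), ...]} for qualifying players.

    seasons: iterable of (player, age, performance) tuples (hypothetical layout).
    Only seasons inside the sport's age range are kept and counted.
    """
    (lo, hi), min_seasons = CRITERIA[sport]
    by_player = defaultdict(list)
    for player, age, perf in seasons:
        if lo <= age <= hi:
            by_player[player].append((age, perf))
    return {p: rows for p, rows in by_player.items() if len(rows) >= min_seasons}


def mean_by_age(kept):
    """Raw average performance at each age across the included players."""
    by_age = defaultdict(list)
    for rows in kept.values():
        for age, perf in rows:
            by_age[age].append(perf)
    return {age: mean(vals) for age, vals in sorted(by_age.items())}
```

Filtering on in-range seasons before counting keeps a player's unusually early or late seasons from counting toward the minimum, which addresses the first selection-bias concern. The minimum-season threshold addresses the second.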
