Summary: 3 Cs (Compulsory, Capacity, Conflict Misses), Reducing

- Slides: 18

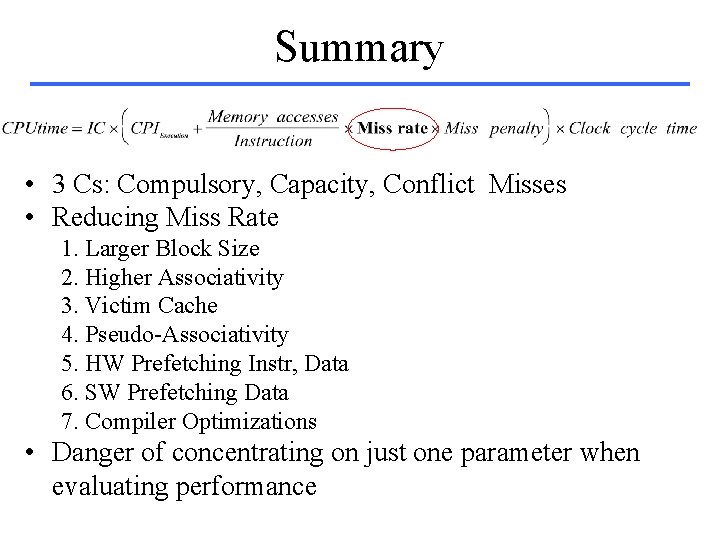

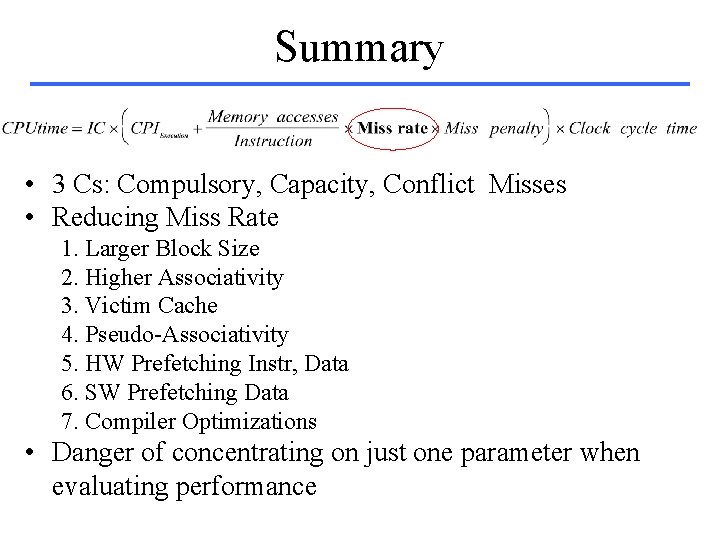

Summary
- 3 Cs: compulsory, capacity, and conflict misses
- Reducing miss rate:
  1. Larger block size
  2. Higher associativity
  3. Victim cache
  4. Pseudo-associativity
  5. HW prefetching of instructions and data
  6. SW prefetching of data
  7. Compiler optimizations
- Danger of concentrating on just one parameter when evaluating performance

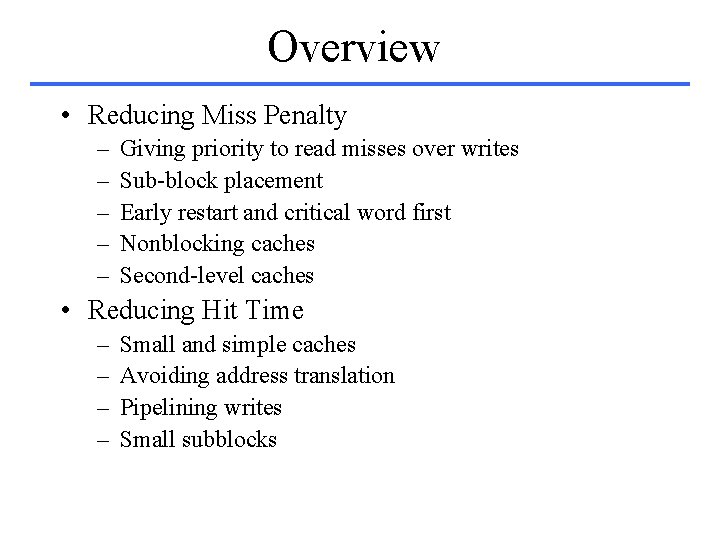

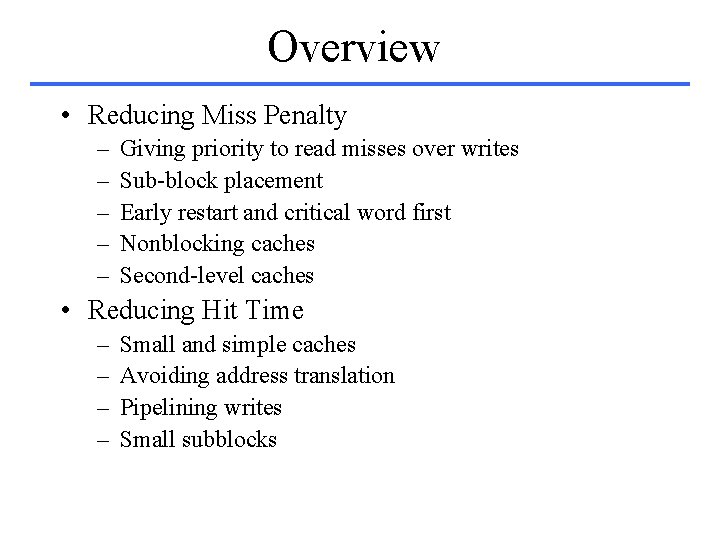

Overview
- Reducing miss penalty
  - Giving priority to read misses over writes
  - Sub-block placement
  - Early restart and critical word first
  - Nonblocking caches
  - Second-level caches
- Reducing hit time
  - Small and simple caches
  - Avoiding address translation
  - Pipelining writes
  - Small subblocks

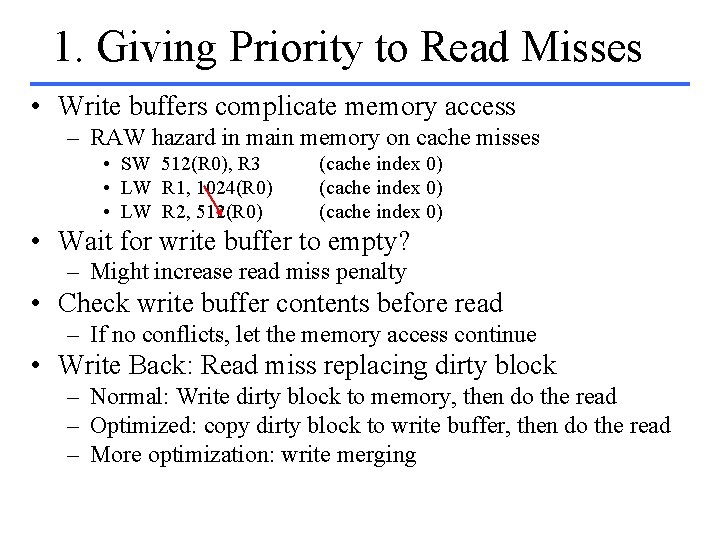

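1. Giving Priority to Read Misses
- Write buffers complicate memory access: a RAW hazard through main memory can occur on cache misses. In a direct-mapped, write-through cache where all three addresses map to cache index 0:
  - `SW 512(R0), R3` (M[512] ← R3; the store waits in the write buffer)
  - `LW R1, 1024(R0)` (misses and replaces the block holding address 512)
  - `LW R2, 512(R0)` (misses; if the buffered store has not yet reached memory, R2 receives the old, wrong value)
- Wait for the write buffer to empty?
  - Might increase the read miss penalty
- Check the write buffer contents before the read
  - If there are no conflicts, let the memory access continue
- Write back: a read miss replacing a dirty block
  - Normal: write the dirty block to memory, then do the read
  - Optimized: copy the dirty block to the write buffer, then do the read
  - More optimization: write merging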
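To make the check-before-read policy concrete, here is a minimal Python sketch of a write buffer between a write-through cache and memory, replaying the `SW`/`LW` sequence above. The `WriteBuffer` class, its methods, and the register and memory values are hypothetical; the model tracks values only (no timing) and, on an address match, forwards the newest buffered store rather than draining the buffer.

```python
from collections import deque


class WriteBuffer:
    """Hypothetical model of a write buffer in front of main memory."""

    def __init__(self, memory):
        self.memory = memory      # dict: address -> value (main memory)
        self.pending = deque()    # FIFO of (address, value) not yet in memory

    def write(self, addr, value):
        # The CPU does not wait for memory: the store is queued.
        self.pending.append((addr, value))

    def drain_one(self):
        # Memory retires the oldest buffered write.
        if self.pending:
            addr, value = self.pending.popleft()
            self.memory[addr] = value

    def read_miss(self, addr):
        # Check the buffer before reading memory; the newest match wins.
        for buf_addr, value in reversed(self.pending):
            if buf_addr == addr:
                return value
        # No conflict: the read proceeds without waiting for the buffer to empty.
        return self.memory.get(addr, 0)


memory = {512: 0, 1024: 7}   # hypothetical initial memory contents
wb = WriteBuffer(memory)
wb.write(512, 42)            # SW 512(R0), R3  (assume R3 = 42)
r1 = wb.read_miss(1024)      # LW R1, 1024(R0): no conflict, reads memory
r2 = wb.read_miss(512)       # LW R2, 512(R0): forwarded from the buffer
print(r1, r2, memory[512])   # prints: 7 42 0  (memory still holds the stale value)
```

Without the check, the second load would have read the stale 0 from memory.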

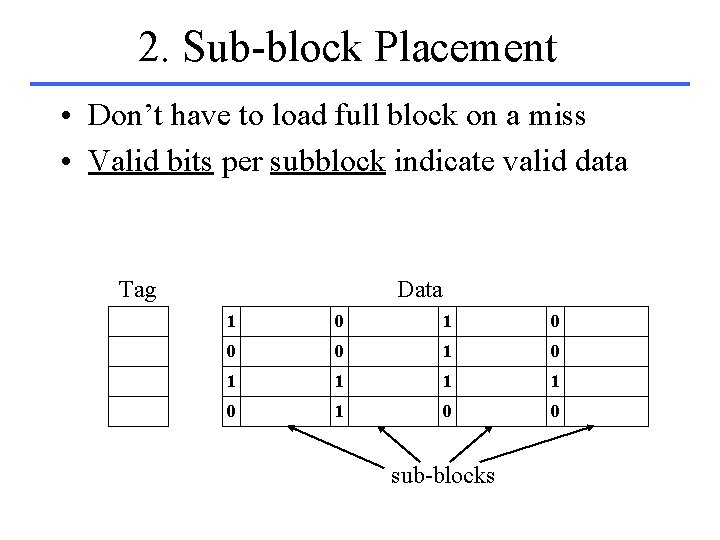

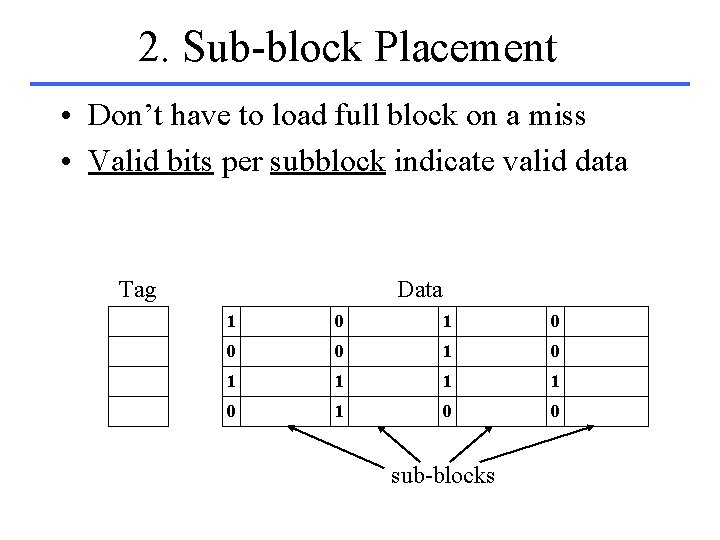

2. Sub-block Placement
- Don't have to load the full block on a miss
- Valid bits per sub-block indicate valid data

(Figure: each cache block has one tag and one valid bit per sub-block.)
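A minimal Python sketch of sub-block placement, assuming a direct-mapped cache with one tag per block and one valid bit per sub-block. The class name and sizes (4 blocks of 4 sub-blocks of 8 bytes) are hypothetical and chosen only for illustration.

```python
class SubBlockedCache:
    """Hypothetical direct-mapped cache: one tag per block, one valid bit per sub-block."""

    def __init__(self, num_blocks=4, subblocks_per_block=4, subblock_bytes=8):
        self.num_blocks = num_blocks
        self.subblocks = subblocks_per_block
        self.sub_bytes = subblock_bytes
        self.block_bytes = subblocks_per_block * subblock_bytes
        self.tags = [None] * num_blocks
        self.valid = [[False] * subblocks_per_block for _ in range(num_blocks)]

    def access(self, addr):
        """Return True on a hit; on a miss, load only the missing sub-block."""
        block_addr = addr // self.block_bytes
        index = block_addr % self.num_blocks
        tag = block_addr // self.num_blocks
        sub = (addr % self.block_bytes) // self.sub_bytes
        if self.tags[index] == tag and self.valid[index][sub]:
            return True
        if self.tags[index] != tag:
            # New tag: every sub-block of the old block becomes invalid.
            self.tags[index] = tag
            self.valid[index] = [False] * self.subblocks
        self.valid[index][sub] = True  # fetch just this sub-block
        return False


cache = SubBlockedCache()
results = [cache.access(a) for a in (0, 4, 8)]
print(results)         # [False, True, False]: miss, hit in same sub-block, miss in next
print(cache.valid[0])  # [True, True, False, False]
```

The trade-off: a miss transfers less data, but the tag is shared, so a tag change invalidates every sub-block of the block.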

3. Early Restart
- Don't wait for the full block to be loaded before restarting the CPU
  - Early restart: as soon as the requested word arrives, send it to the CPU and let the CPU continue execution
  - Critical word first: request the missed word first from memory and send it to the CPU as soon as it arrives; then fill in the rest of the words in the block
- Generally useful only with large blocks
- Extremely good spatial locality can reduce the benefit
  - Back-to-back reads of the two halves of a cache block do not save much, because the second read must still wait for its half to arrive (see the example in the book)
  - Need to schedule instructions!
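The stall for each policy can be estimated with a simple timing model. This Python sketch assumes (hypothetically) that the first word of a block arrives `latency` cycles after the miss and each later word `per_word` cycles after the previous one; `stall_cycles` and its parameters are illustrative names, not taken from the slides.

```python
def stall_cycles(word, words_per_block, latency, per_word, policy):
    """Cycles until the CPU can resume after a miss on `word` (0-based index
    in the block), under the assumed timing model described above."""
    if policy == "wait_full_block":
        # CPU restarts only after the last word of the block arrives.
        return latency + (words_per_block - 1) * per_word
    if policy == "early_restart":
        # Words arrive in order 0, 1, 2, ...; resume when the requested one lands.
        return latency + word * per_word
    if policy == "critical_word_first":
        # The requested word is fetched first.
        return latency
    raise ValueError(f"unknown policy: {policy}")


for policy in ("wait_full_block", "early_restart", "critical_word_first"):
    print(policy, stall_cycles(word=5, words_per_block=8, latency=10, per_word=2, policy=policy))
# wait_full_block 24, early_restart 20, critical_word_first 10
```

The gap between the policies grows with `words_per_block`, which is why these techniques pay off mainly with large blocks.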

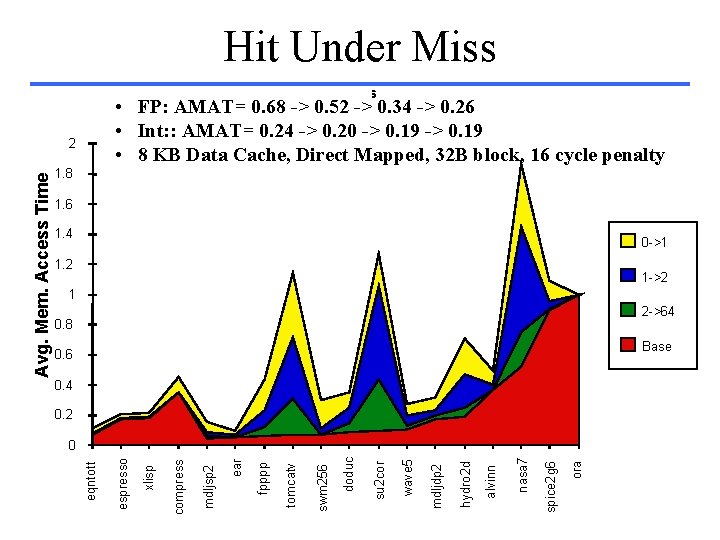

4. Nonblocking Caches
- Non-blocking caches continue to supply cache hits during a miss
  - Requires an out-of-order execution CPU
- "Hit under miss" reduces the effective miss penalty by doing useful work during a miss instead of ignoring CPU requests
- "Hit under multiple miss" may further lower the effective miss penalty by overlapping multiple misses
  - Significantly increases the complexity of the cache controller
  - Requires multiple memory banks (otherwise the overlapping misses cannot be serviced)
  - The Pentium Pro allows 4 outstanding memory misses
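Below is a toy Python sketch of hit under (multiple) miss. It assumes a table of outstanding misses with a fixed number of entries (the role commonly played by miss status holding registers, a term not used on the slide), a 16-cycle miss penalty, and merging of a second miss to a block already being fetched; the class and method names are hypothetical.

```python
class NonBlockingCache:
    """Hypothetical hit-under-miss model with up to `max_outstanding` misses in flight."""

    def __init__(self, max_outstanding=4, block_bytes=32):
        self.max_outstanding = max_outstanding
        self.block_bytes = block_bytes
        self.present = set()    # block numbers already in the cache
        self.outstanding = {}   # block number -> cycle its fill completes

    def preload(self, addr):
        self.present.add(addr // self.block_bytes)

    def tick(self, now):
        # Retire fills that have completed by cycle `now`.
        for block, done in list(self.outstanding.items()):
            if done <= now:
                self.present.add(block)
                del self.outstanding[block]

    def access(self, addr, now, miss_penalty=16):
        """Return 'hit', 'miss' (new fill started), 'merged' (block already
        being fetched) or 'stall' (no free entry: the CPU must wait)."""
        self.tick(now)
        block = addr // self.block_bytes
        if block in self.present:
            return "hit"        # served even while other misses are pending
        if block in self.outstanding:
            return "merged"
        if len(self.outstanding) >= self.max_outstanding:
            return "stall"
        self.outstanding[block] = now + miss_penalty
        return "miss"


cache = NonBlockingCache(max_outstanding=2)
cache.preload(0)
events = [cache.access(a, now=t) for t, a in enumerate([64, 0, 96, 72, 128])]
print(events)  # ['miss', 'hit', 'miss', 'merged', 'stall']
```

Setting `max_outstanding=1` approximates plain hit under miss; larger values correspond to hit under multiple misses, at the cost of more controller state.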

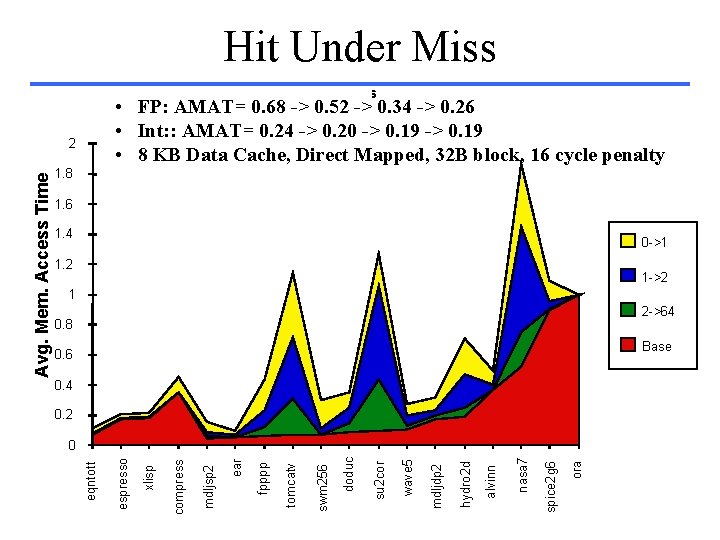

Hit Under Miss, Hit Under i Misses
- FP programs: AMAT = 0.68 → 0.52 → 0.34 → 0.26
- Integer programs: AMAT = 0.24 → 0.20 → 0.19
- 8 KB data cache, direct mapped, 32 B blocks, 16-cycle miss penalty

(Figure: average memory access time per benchmark (ora, spice2g6, nasa7, alvinn, hydro2d, mdljdp2, wave5, su2cor, doduc, swm256, tomcatv, fpppp, ear, mdljsp2, compress, xlisp, espresso, eqntott) for the series Base, 0→1, 1→2, and 2→64.)

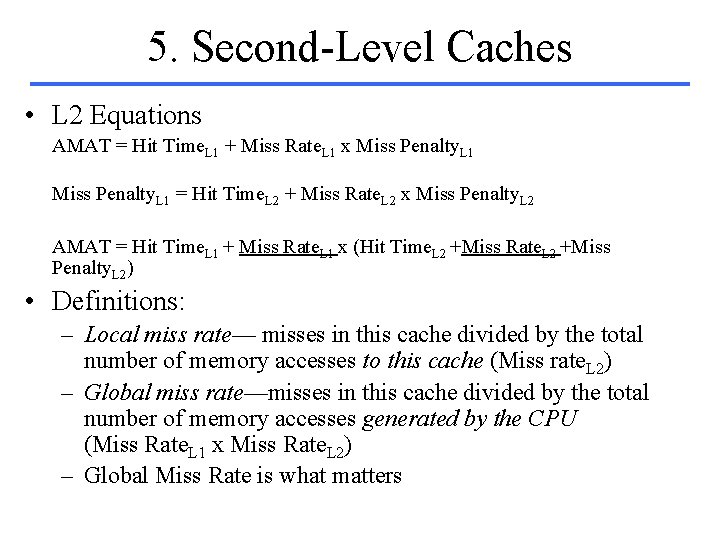

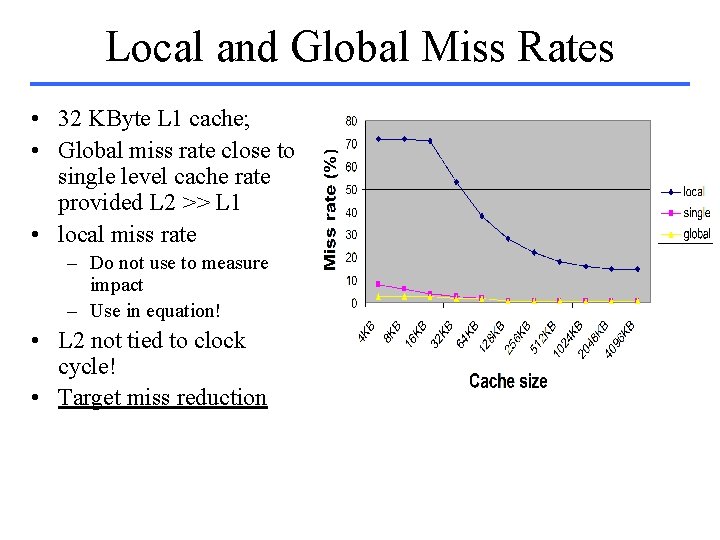

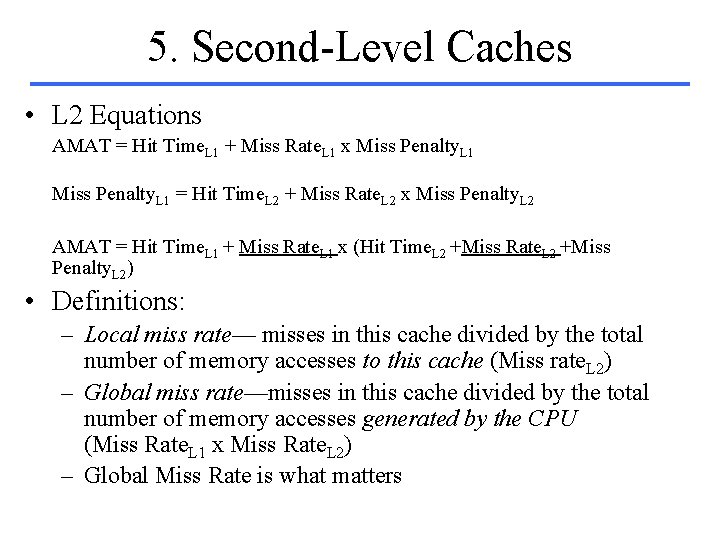

5. Second-Level Caches

L2 equations:

$$\text{AMAT} = \text{Hit Time}_{L1} + \text{Miss Rate}_{L1} \times \text{Miss Penalty}_{L1}$$

$$\text{Miss Penalty}_{L1} = \text{Hit Time}_{L2} + \text{Miss Rate}_{L2} \times \text{Miss Penalty}_{L2}$$

$$\text{AMAT} = \text{Hit Time}_{L1} + \text{Miss Rate}_{L1} \times \left(\text{Hit Time}_{L2} + \text{Miss Rate}_{L2} \times \text{Miss Penalty}_{L2}\right)$$

- Definitions:
  - Local miss rate: misses in this cache divided by the total number of memory accesses to this cache ($\text{Miss Rate}_{L2}$)
  - Global miss rate: misses in this cache divided by the total number of memory accesses generated by the CPU ($\text{Miss Rate}_{L1} \times \text{Miss Rate}_{L2}$ for the L2)
  - The global miss rate is what matters
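The L2 equations and the local/global distinction can be checked numerically. This Python sketch uses made-up parameters (1-cycle L1 hit, 4% L1 miss rate, 10-cycle L2 hit, 50% local L2 miss rate, 100-cycle L2 miss penalty); the function names are hypothetical.

```python
def amat_two_level(hit_l1, miss_rate_l1, hit_l2, local_miss_rate_l2, miss_penalty_l2):
    """AMAT = Hit_L1 + MissRate_L1 * (Hit_L2 + MissRate_L2 * MissPenalty_L2),
    where MissRate_L2 is the *local* L2 miss rate."""
    miss_penalty_l1 = hit_l2 + local_miss_rate_l2 * miss_penalty_l2
    return hit_l1 + miss_rate_l1 * miss_penalty_l1


def global_miss_rate_l2(miss_rate_l1, local_miss_rate_l2):
    """Fraction of all CPU accesses that miss in both L1 and L2."""
    return miss_rate_l1 * local_miss_rate_l2


# Hypothetical parameters, for illustration only.
amat = amat_two_level(hit_l1=1, miss_rate_l1=0.04, hit_l2=10,
                      local_miss_rate_l2=0.5, miss_penalty_l2=100)
glob = global_miss_rate_l2(0.04, 0.5)
print(f"AMAT = {amat:.2f} cycles")        # AMAT = 3.40 cycles
print(f"global L2 miss rate = {glob:.3f}")  # global L2 miss rate = 0.020
```

A 50% local L2 miss rate looks alarming, yet only 2% of CPU accesses reach memory: the local rate belongs in the equation, while the global rate measures the impact.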

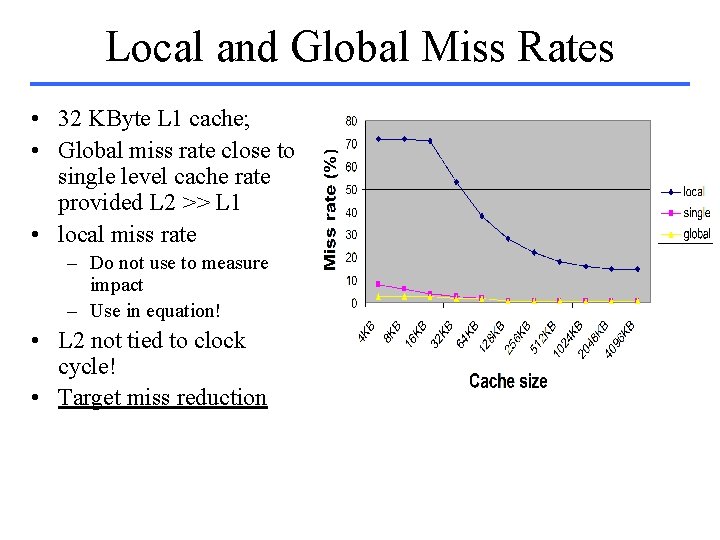

Local and Global Miss Rates
- 32 KB L1 cache
- The global miss rate is close to the single-level cache miss rate, provided L2 >> L1
- Local miss rate:
  - Do not use it to measure impact
  - Do use it in the AMAT equation!
- L2 is not tied to the CPU clock cycle!
  - Target miss reduction

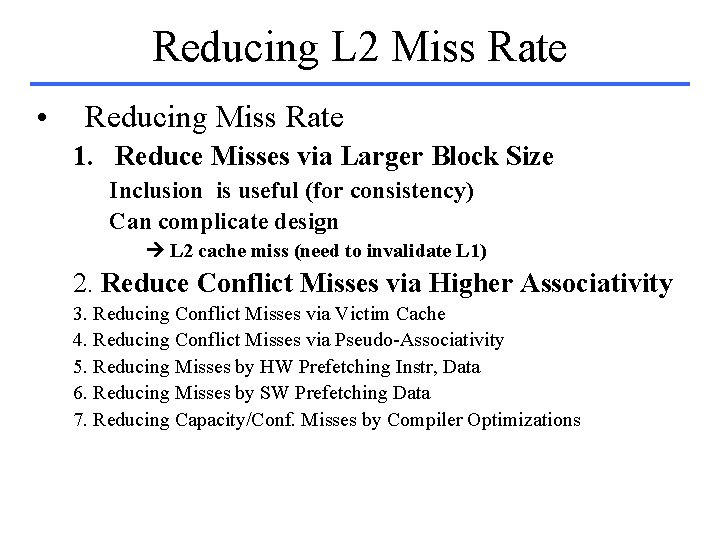

Reducing L2 Miss Rate
- Reducing miss rate:
  1. Reduce misses via larger block size
     - Inclusion (every L1 block is also present in L2) is useful for consistency
     - Inclusion can complicate the design: an L2 cache miss that replaces a block requires invalidating the corresponding L1 blocks
  2. Reduce conflict misses via higher associativity
  3. Reduce conflict misses via a victim cache
  4. Reduce conflict misses via pseudo-associativity
  5. Reduce misses by HW prefetching of instructions and data
  6. Reduce misses by SW prefetching of data
  7. Reduce capacity/conflict misses by compiler optimizations
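Inclusion and its back-invalidation cost can be illustrated with a small Python sketch. It assumes (for brevity) that both levels are fully associative with LRU replacement, and that one L2 block spans `ratio` L1 blocks; `InclusiveHierarchy` and its parameters are hypothetical. Because L1 hits never reach L2, L2's LRU order can evict a block that is still in use in L1, which then has to be invalidated.

```python
from collections import OrderedDict


class InclusiveHierarchy:
    """Hypothetical two-level hierarchy that enforces inclusion (L1 ⊆ L2)."""

    def __init__(self, l1_capacity, l2_capacity, l1_block_bytes=32, ratio=4):
        self.l1 = OrderedDict()   # L1 block number -> None, oldest first (LRU)
        self.l2 = OrderedDict()   # L2 block number -> None, oldest first (LRU)
        self.l1_capacity = l1_capacity
        self.l2_capacity = l2_capacity
        self.l1_block_bytes = l1_block_bytes
        self.ratio = ratio
        self.l2_block_bytes = l1_block_bytes * ratio
        self.back_invalidations = 0

    def _evict_from_l2(self):
        victim, _ = self.l2.popitem(last=False)   # least recently used L2 block
        first = victim * self.ratio
        # Inclusion: every L1 block inside the evicted L2 block must go too.
        for l1_block in range(first, first + self.ratio):
            if l1_block in self.l1:
                del self.l1[l1_block]
                self.back_invalidations += 1

    def access(self, addr):
        """Return 'L1 hit', 'L2 hit' or 'miss'."""
        b1 = addr // self.l1_block_bytes
        if b1 in self.l1:
            self.l1.move_to_end(b1)
            return "L1 hit"           # L2 does not see this access
        b2 = addr // self.l2_block_bytes
        if b2 in self.l2:
            self.l2.move_to_end(b2)
            result = "L2 hit"
        else:
            if len(self.l2) >= self.l2_capacity:
                self._evict_from_l2()
            self.l2[b2] = None
            result = "miss"
        if len(self.l1) >= self.l1_capacity:
            self.l1.popitem(last=False)
        self.l1[b1] = None
        return result


h = InclusiveHierarchy(l1_capacity=4, l2_capacity=2)
results = [h.access(a) for a in (0, 32, 128, 0, 256)]
print(results)               # ['miss', 'L2 hit', 'miss', 'L1 hit', 'miss']
print(h.back_invalidations)  # 2: blocks at 0 and 32 were removed from L1
```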

Miss Penalty Summary
- Five techniques:
  - Read priority over write on miss
  - Sub-block placement
  - Early restart and critical word first on miss
  - Non-blocking caches (hit under miss)
  - L2 cache
- Can be applied recursively to multilevel caches
  - Danger: the time to DRAM grows with multiple levels
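Applying the L2 equation recursively gives an AMAT for any number of levels. The Python sketch below assumes each level is described by a hit time and a local miss rate, and that a full miss pays every level's lookup serially before reaching DRAM; the function names and all numbers are hypothetical.

```python
def amat_multilevel(levels, memory_time):
    """levels: list of (hit_time, local_miss_rate) from L1 outward.
    AMAT_i = HitTime_i + MissRate_i * AMAT_(i+1); beyond the last level, DRAM."""
    if not levels:
        return memory_time
    hit_time, miss_rate = levels[0]
    return hit_time + miss_rate * amat_multilevel(levels[1:], memory_time)


def time_to_dram(levels, memory_time):
    """Latency of an access that misses in every level (serial lookups assumed)."""
    return sum(hit for hit, _ in levels) + memory_time


two = [(1, 0.04), (10, 0.5)]
three = two + [(30, 0.6)]   # add a hypothetical L3
for name, levels in (("2 levels", two), ("3 levels", three)):
    print(name, f"{amat_multilevel(levels, 100):.2f}", time_to_dram(levels, 100))
# 2 levels 3.40 111
# 3 levels 3.20 141
```

With these made-up numbers the extra level lowers the average, yet an access that misses everywhere now takes 141 cycles instead of 111.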

Reducing Hit Time
- Hit time affects the CPU clock rate
  - Even for machines that take multiple cycles to access the cache
- Techniques:
  - Small and simple caches
  - Avoiding address translation
  - Pipelining writes

1. Small and Simple Caches
- Small hardware is faster
- A small cache fits on the same chip as the processor
- The Alpha 21164 has an 8 KB instruction cache and an 8 KB data cache, plus a 96 KB second-level cache
  - Small data cache and fast clock rate
- Direct mapped, on chip
  - Overlap the tag check with data transmission
  - For L2, keep the tag check on chip and the data off chip: a fast tag check, with the large capacity provided by separate memory chips
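Why direct mapping allows the overlap: the index selects exactly one line, so its data can be forwarded before the tag comparison finishes and discarded on a mismatch. This Python sketch (hypothetical class, 8 KB cache with 32-byte blocks) only mimics that ordering, since Python runs the two steps sequentially; a set-associative cache would need the tag result to choose which way's data to send.

```python
class DirectMappedCache:
    """Hypothetical direct-mapped cache showing address split and speculative data read."""

    def __init__(self, size_bytes=8 * 1024, block_bytes=32):
        self.block_bytes = block_bytes
        self.num_lines = size_bytes // block_bytes
        self.tags = [None] * self.num_lines
        self.data = [None] * self.num_lines

    def split(self, addr):
        """Return (tag, index, block offset) for a byte address."""
        offset = addr % self.block_bytes
        index = (addr // self.block_bytes) % self.num_lines
        tag = addr // (self.block_bytes * self.num_lines)
        return tag, index, offset

    def read(self, addr):
        tag, index, _ = self.split(addr)
        speculative = self.data[index]    # data array read: only one candidate line
        hit = self.tags[index] == tag     # tag compare, conceptually in parallel
        # On a miss, the speculatively forwarded data is discarded.
        return (hit, speculative) if hit else (False, None)

    def fill(self, addr, block):
        tag, index, _ = self.split(addr)
        self.tags[index] = tag
        self.data[index] = block


c = DirectMappedCache()
c.fill(0x1234, "block A")
print(c.split(0x1234))             # (0, 145, 20)
print(c.read(0x1234))              # (True, 'block A')
print(c.read(0x1234 + 8 * 1024))   # (False, None): same index, different tag
```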

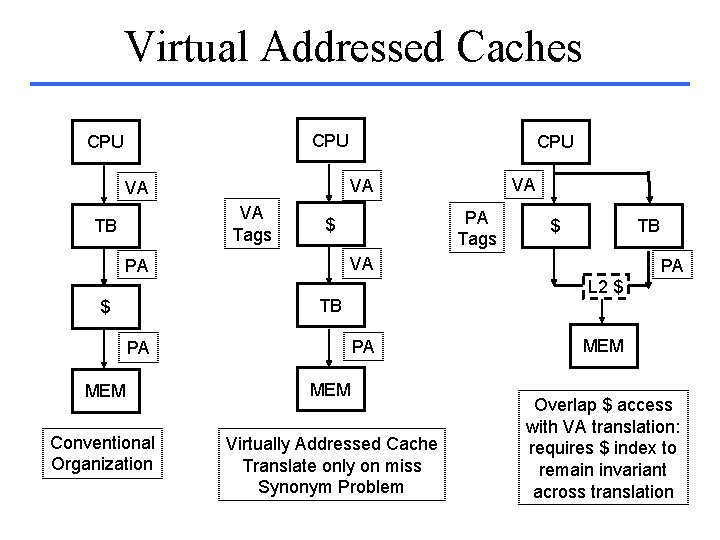

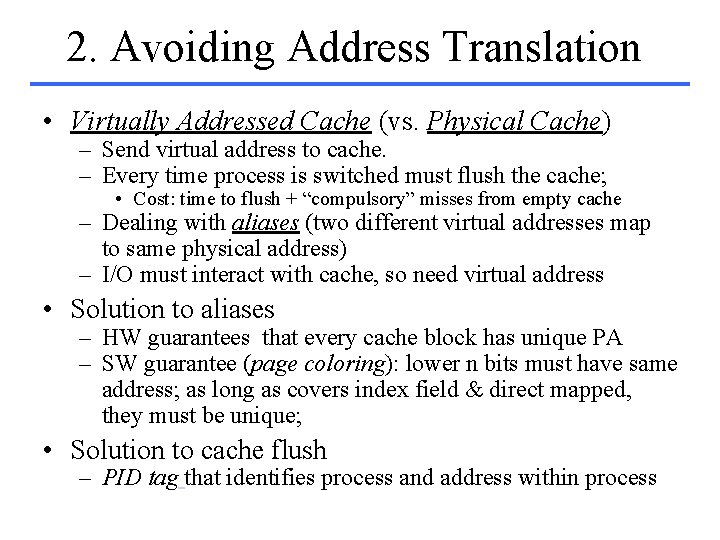

2. Avoiding Address Translation
- Virtually addressed cache (vs. physical cache)
  - Send the virtual address to the cache
  - Every time the process is switched, the cache must be flushed
    - Cost: time to flush + "compulsory" misses from the empty cache
  - Dealing with aliases (two different virtual addresses that map to the same physical address)
  - I/O must interact with the cache, so it needs the virtual address
- Solution to aliases
  - HW guarantees that every cache block has a unique PA
  - SW guarantee (page coloring): aliases must agree in their lower n address bits; as long as those bits cover the index field and the cache is direct mapped, aliases map to the same block, so each block is unique
- Solution to cache flush
  - PID tag that identifies the process, in addition to the address within the process
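A minimal Python sketch contrasting flush-on-switch with PID-tagged lines in a virtually addressed cache. It assumes a fully associative, dictionary-based cache keyed by virtual block number (plus PID when enabled); the class name, the 32-byte block size, and the addresses are hypothetical, and aliases are not modelled.

```python
class VirtuallyAddressedCache:
    """Hypothetical virtually addressed cache, optionally with PID tags."""

    def __init__(self, block_bytes=32, use_pid=True):
        self.block_bytes = block_bytes
        self.use_pid = use_pid
        self.lines = {}   # (pid or None, virtual block number) -> data
        self.flushes = 0

    def _key(self, pid, vaddr):
        return (pid if self.use_pid else None, vaddr // self.block_bytes)

    def context_switch(self):
        if not self.use_pid:
            # Without PIDs the same virtual address may name different data in
            # the next process, so the whole cache must be flushed.
            self.lines.clear()
            self.flushes += 1

    def lookup(self, pid, vaddr):
        return self.lines.get(self._key(pid, vaddr))

    def fill(self, pid, vaddr, data):
        self.lines[self._key(pid, vaddr)] = data


results = {}
for use_pid in (True, False):
    cache = VirtuallyAddressedCache(use_pid=use_pid)
    cache.fill(pid=1, vaddr=0x4000, data="P1 data")
    cache.context_switch()                         # switch to process 2
    cache.fill(pid=2, vaddr=0x4000, data="P2 data")
    cache.context_switch()                         # back to process 1
    results[use_pid] = (cache.lookup(pid=1, vaddr=0x4000), cache.flushes)
print(results)  # {True: ('P1 data', 0), False: (None, 2)}
```

With PIDs, process 1's line survives both switches; without them, each switch flushes and process 1 restarts with "compulsory" misses.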

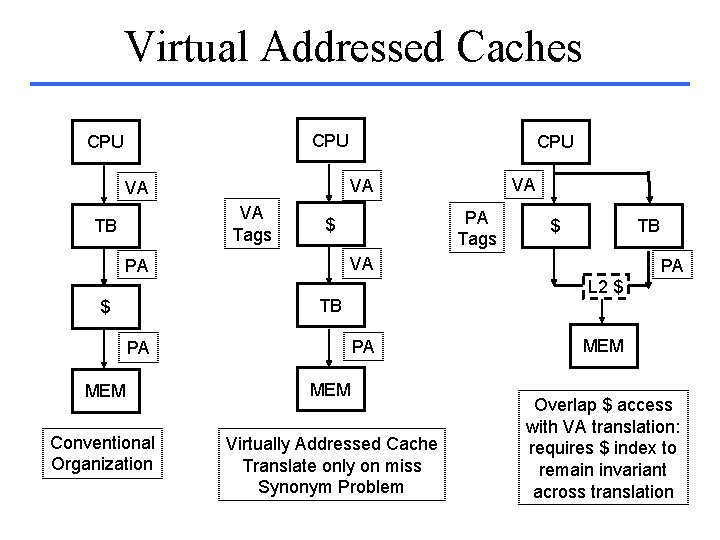

(Figure: Virtual Addressed Caches. Three organizations: Conventional Organization (CPU → TB → physically tagged cache → MEM); Virtually Addressed Cache (VA tags, translate only on miss, synonym problem); and overlapping cache access with VA translation, backed by a physically tagged L2 cache, which requires the cache index to remain invariant across translation.)

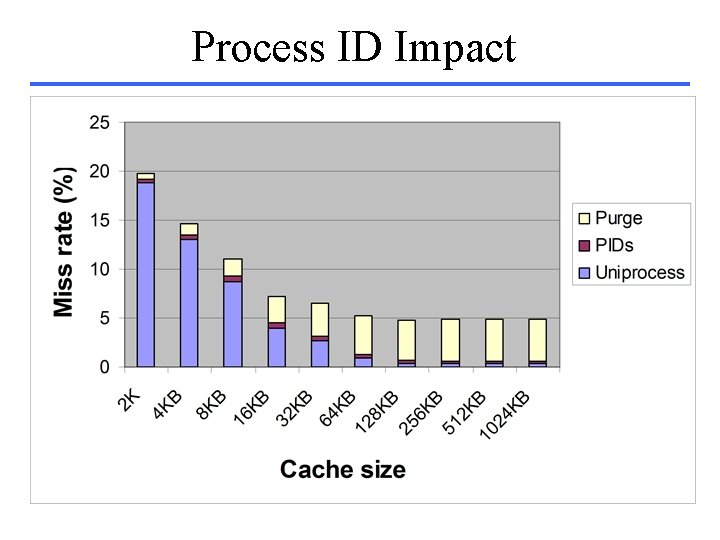

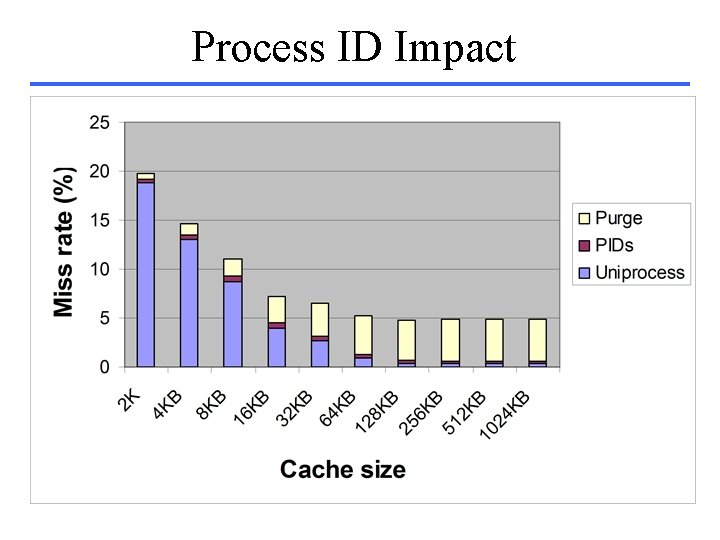

Process ID Impact

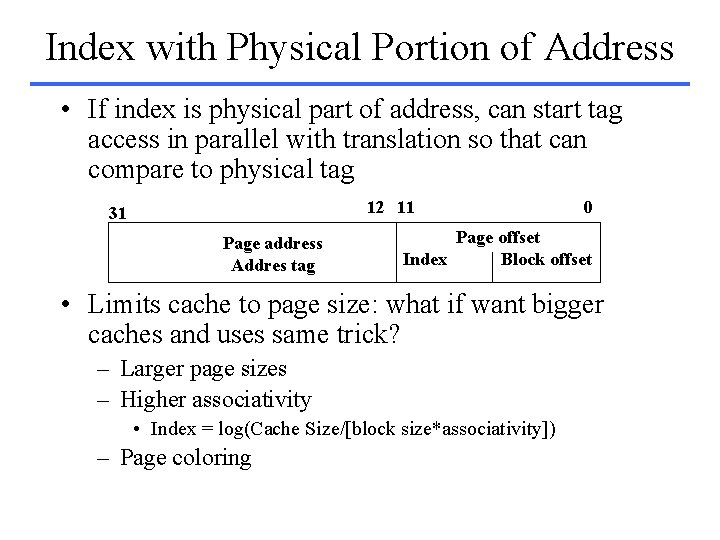

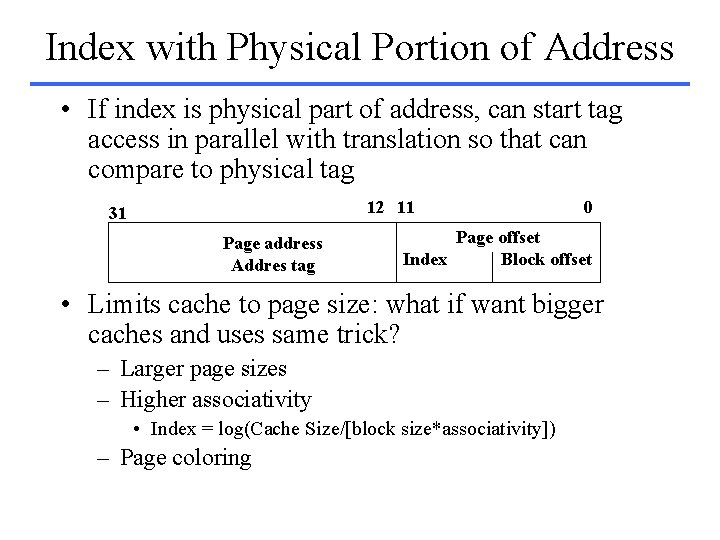

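Index with Physical Portion of Address
- If the index comes from the physical part of the address (the page offset, which translation does not change), the tag access can start in parallel with translation, and the stored tag is then compared with the physical tag

(Figure: bits 31–12 are the page address and bits 11–0 the page offset; the cache divides the address into address tag, index, and block offset, with the index and block offset inside the page offset.)

- This limits a direct-mapped cache to the page size: what if we want bigger caches and still use the same trick?
  - Larger page sizes
  - Higher associativity, since $\text{Index bits} = \log_2\left(\dfrac{\text{Cache Size}}{\text{Block Size} \times \text{Associativity}}\right)$
  - Page coloring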
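The constraint can be checked with the index formula: indexing overlaps translation only if the index bits plus the block-offset bits fit inside the page offset. This Python sketch assumes all sizes are powers of two; the function names and example configurations are hypothetical.

```python
def log2_exact(n):
    """Exact log2 for powers of two (cache parameters are assumed to be powers of two)."""
    if n <= 0 or n & (n - 1):
        raise ValueError(f"{n} is not a power of two")
    return n.bit_length() - 1


def index_bits(cache_bytes, block_bytes, associativity):
    """Index bits = log2(Cache Size / (Block Size * Associativity))."""
    return log2_exact(cache_bytes // (block_bytes * associativity))


def index_within_page_offset(cache_bytes, block_bytes, associativity, page_bytes):
    """True if index + block-offset bits lie inside the page offset, so the cache
    can be indexed in parallel with address translation."""
    used = index_bits(cache_bytes, block_bytes, associativity) + log2_exact(block_bytes)
    return used <= log2_exact(page_bytes)


KB = 1024
print(index_within_page_offset(8 * KB, 32, 1, 4 * KB))  # False: 8 + 5 bits > 12
print(index_within_page_offset(8 * KB, 32, 2, 4 * KB))  # True: higher associativity, 7 + 5 = 12
print(index_within_page_offset(8 * KB, 32, 1, 8 * KB))  # True: larger pages, 13 <= 13
```

Both escape routes on the slide show up here: doubling associativity removes one index bit, and doubling the page size adds one page-offset bit.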

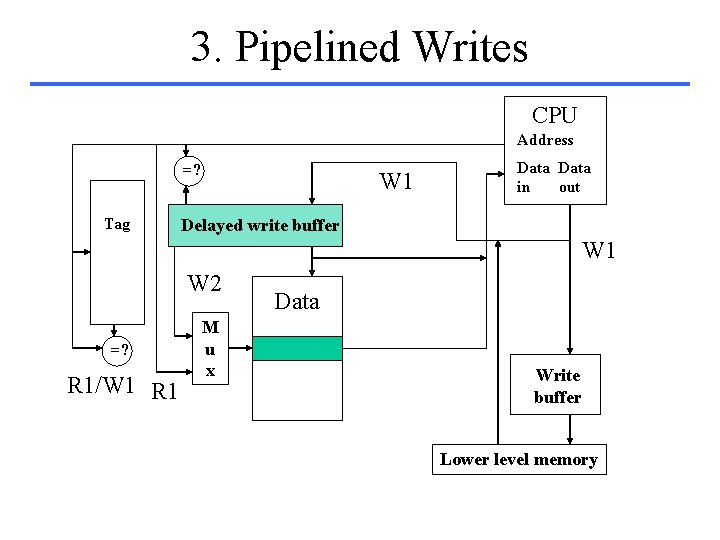

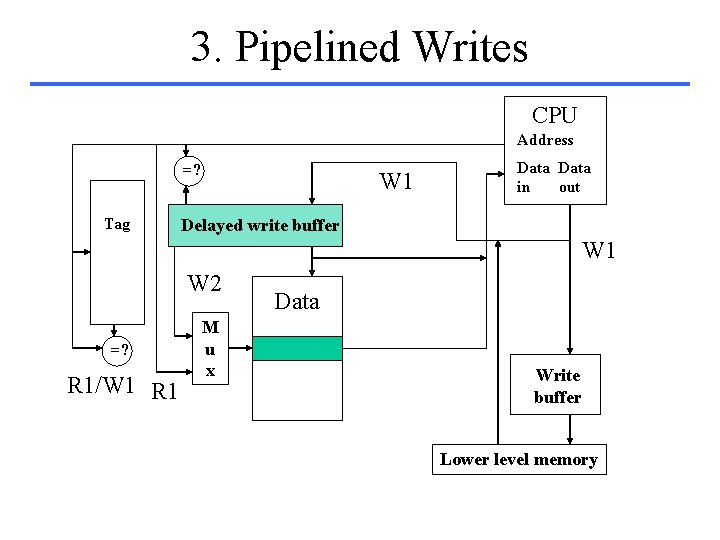

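3. Pipelined Writes
- Pipeline the tag check of a write with the data-array write of the previous write hit

(Figure: the CPU address is checked (=?) against the tag array; write data (W1, W2) waits in a delayed write buffer until the next write; reads (R1) are also compared (=?) against the delayed write buffer, and a mux selects the data returned to the CPU; a write buffer sits between the cache and lower-level memory.)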
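A minimal Python sketch of pipelined writes with a one-entry delayed write buffer. It assumes every write hits (write misses and the path to lower-level memory are not modelled) and represents the tag array as a set of word addresses; the class and method names are hypothetical. Each write does only its tag check, while the previous write's data goes into the data array; reads compare against the delayed buffer so they never see stale data.

```python
class PipelinedWriteCache:
    """Hypothetical cache with pipelined writes and a delayed write buffer."""

    def __init__(self):
        self.tags = set()    # word addresses present (stands in for the tag array)
        self.data = {}       # data array: word address -> value
        self.delayed = None  # (addr, value) of the last write hit, not yet in the data array

    def fill(self, addr, value):
        self.tags.add(addr)
        self.data[addr] = value

    def write(self, addr, value):
        if addr not in self.tags:
            raise KeyError(f"write miss at {addr}: not modelled in this sketch")
        if self.delayed is not None:
            # The previous write's data enters the data array during this tag check.
            prev_addr, prev_value = self.delayed
            self.data[prev_addr] = prev_value
        self.delayed = (addr, value)

    def read(self, addr):
        # Address compare against the delayed write buffer; the mux picks the newer data.
        if self.delayed is not None and self.delayed[0] == addr:
            return self.delayed[1]
        return self.data.get(addr)


c = PipelinedWriteCache()
c.fill(0, 1)
c.fill(4, 2)
c.write(0, 10)       # tag check only; 10 waits in the delayed write buffer
print(c.read(0))     # 10, forwarded from the delayed write buffer
c.write(4, 20)       # writes 10 into the data array while checking the tag of 4
print(c.data[0], c.data[4], c.read(4))  # 10 2 20
```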