Sublinear Algorithms via Precision Sampling Alexandr Andoni Microsoft

Sublinear Algorithms via Precision Sampling
Alexandr Andoni (Microsoft Research)
Joint work with: Robert Krauthgamer (Weizmann Inst.), Krzysztof Onak (CMU)

Goal
Compute the number of Dacians in the empire
Estimate $S = a_1 + a_2 + \dots + a_n$, where $a_i \in [0,1]$, sublinearly…

Sampling
Send accountants to a subset $J$ of provinces, $|J| = m$
Estimator: $\tilde S = \sum_{j \in J} a_j \cdot n/|J|$
Chebyshev bound: with 90% success probability, $0.5\,S - O(n/m) < \tilde S < 2S + O(n/m)$
For constant additive error, need $m \sim n$
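A minimal sketch of the uniform-sampling estimator above, assuming the subset $J$ is drawn uniformly without replacement. The function name `sample_sum_estimate` and the example inputs are illustrative, not from the talk.

```python
import random

def sample_sum_estimate(a, m, rng):
    """Estimate sum(a) from m uniformly sampled entries, rescaled by n/m."""
    n = len(a)
    J = rng.sample(range(n), m)            # the provinces that get an accountant
    return sum(a[j] for j in J) * n / m    # S~ = sum_{j in J} a_j * n/|J|

rng = random.Random(0)
flat = [0.5] * 100                          # every sample looks the same: exact for any m
spiky = [1.0] * 5 + [0.0] * 9995            # five heavy provinces among n = 10000
flat_estimate = sample_sum_estimate(flat, 10, rng)
spiky_estimate = sample_sum_estimate(spiky, 100, rng)
```

On the spiky input a small sample usually misses all five heavy entries, which is the additive $O(n/m)$ error in the Chebyshev bound: the estimate can only move in steps of $n/m$.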

Precision Sampling Framework
Send accountants to each province, but require only approximate counts
Estimate $\tilde a_i$, up to some pre-selected precision $u_i$: $|a_i - \tilde a_i| < u_i$
Challenge: achieve a good trade-off between
  quality of approximation to $S$
  total cost of estimating each $\tilde a_i$ to precision $u_i$

Formalization
Sum Estimator:
  1. fix precisions $u_i$
  3. given $\tilde a_1, \tilde a_2, \dots, \tilde a_n$, output $\tilde S$ s.t. $|\sum a_i - \tilde S| < 1$
Adversary:
  1. fix $a_1, a_2, \dots, a_n$
  2. fix $\tilde a_1, \tilde a_2, \dots, \tilde a_n$ s.t. $|a_i - \tilde a_i| < u_i$
What is cost?
  Here, average cost = $\frac{1}{n} \sum 1/u_i$
  To achieve precision $u_i$, use $1/u_i$ “resources”: e.g., if $a_i$ is itself a sum $a_i = \sum_j a_{ij}$ computed by subsampling, then one needs $\Theta(1/u_i)$ samples
For example, can choose all $u_i = 1/n$
  Average cost ≈ $n$
  This is best possible if the estimator is $\tilde S = \sum \tilde a_i$
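The cost measure can be made concrete with a few lines of Python. This is only an illustration of the formula $\frac{1}{n}\sum 1/u_i$; the helper name `average_cost` and the value $n = 1000$ are assumptions chosen for the example.

```python
def average_cost(precisions):
    """Average cost (1/n) * sum_i 1/u_i for a list of precisions u_i in (0, 1]."""
    n = len(precisions)
    return sum(1.0 / u for u in precisions) / n

n = 1000
uniform_cost = average_cost([1.0 / n] * n)   # all u_i = 1/n, so the average cost is about n
```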

Precision Sampling Lemma
Goal: estimate $\sum a_i$ from $\{\tilde a_i\}$ satisfying $|a_i - \tilde a_i| < u_i$.
Precision Sampling Lemma: can get, with 90% success:
  $O(1)$ additive error and 1.5 multiplicative error: $S - O(1) < \tilde S < 1.5\,S + O(1)$, with average cost equal to $O(\log n)$
  $\varepsilon$ additive error and $1+\varepsilon$ multiplicative error: $S - \varepsilon < \tilde S < (1+\varepsilon) S + \varepsilon$, with average cost equal to $O(\varepsilon^{-3} \log n)$
Example: distinguish $\sum a_i = 5$ vs $\sum a_i = 0$
Consider two extreme cases:
  if five $a_i = 1$: sample all, but need only crude approx ($u_i = 1/10$)
  if all $a_i = 5/n$: only few with good approx $u_i = 1/n$, and the rest with $u_i = 1$
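A rough cost accounting for the two extreme cases, under the cost model of $1/u_i$ resources per coordinate. The symbol $k$, the number of coordinates measured at precision $1/n$, is a hypothetical parameter standing in for "only few".

```latex
\begin{align*}
\text{five } a_i = 1,\ u_i = \tfrac{1}{10} \text{ for all } i:
  &\quad \frac{1}{n}\sum_{i=1}^{n} \frac{1}{u_i} = \frac{1}{n}\cdot 10\,n = 10, \\
\text{all } a_i = \tfrac{5}{n},\ k \text{ coordinates with } u_i = \tfrac{1}{n},\ \text{the rest with } u_i = 1:
  &\quad \frac{1}{n}\bigl(k \cdot n + (n-k)\cdot 1\bigr) = k + 1 - \frac{k}{n}.
\end{align*}
```

In both cases the average cost is $O(1)$ when $k = O(1)$, compared with an average cost of about $n$ when every coordinate is measured at precision $1/n$.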

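Precision Sampling Algorithm
Precision Sampling Lemma: can get, with 90% success:
  $O(1)$ additive error and 1.5 multiplicative error: $S - O(1) < \tilde S < 1.5\,S + O(1)$, with average cost equal to $O(\log n)$
  $\varepsilon$ additive error and $1+\varepsilon$ multiplicative error: $S - \varepsilon < \tilde S < (1+\varepsilon) S + \varepsilon$, with average cost equal to $O(\varepsilon^{-3} \log n)$
Algorithm:
  Choose each $u_i \in [0,1]$ i.i.d. (for $1+\varepsilon$: concrete distribution = minimum of $O(\varepsilon^{-3})$ uniform random variables)
  Estimator: $\tilde S$ = count the number of $i$'s s.t. $\tilde a_i / u_i > 6$ (modulo a normalization constant) (for $1+\varepsilon$: a function of $[\tilde a_i / u_i - 4/\varepsilon]_+$ and the $u_i$'s)
Proof of correctness:
  we use only $\tilde a_i$ which are $(1+\varepsilon)$-approximation to $a_i$
  $E[\tilde S] \approx \sum \Pr[a_i / u_i > 6] = \sum a_i / 6$
  $E[1/u] = O(\log n)$ w.h.p.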
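The constant-factor version of the estimator can be simulated in a few lines. This is a sketch under stated assumptions, not the authors' implementation: the approximations $\tilde a_i$ are generated by a random perturbation within $u_i$ rather than by an adversary, the normalization constant is taken to be the threshold 6 (so that the expectation matches $\sum a_i$), and the function names are illustrative. A single run is very noisy, so the helper averages many runs only to check the expectation claim $E[\tilde S] \approx \sum a_i$.

```python
import random

def precision_sampling_estimate(a, rng, threshold=6.0):
    """One run: threshold * #{i : a~_i / u_i > threshold}, with u_i uniform on (0, 1]."""
    count = 0
    for a_i in a:
        u_i = 1.0 - rng.random()                 # uniform on (0, 1]; avoids division by zero
        a_tilde = a_i + rng.uniform(-u_i, u_i)   # assumed noise model: some value within u_i of a_i
        if a_tilde / u_i > threshold:
            count += 1
    return threshold * count                     # Pr[a_i/u_i > 6] = a_i/6, so rescale by 6

def mean_estimate(a, trials, seed=0):
    """Average of independent runs, used only to inspect the expectation."""
    rng = random.Random(seed)
    return sum(precision_sampling_estimate(a, rng) for _ in range(trials)) / trials

n = 200
spread_out = [5.0 / n] * n                        # the "all a_i = 5/n" case, sum = 5
average_over_runs = mean_estimate(spread_out, trials=2000)
```

When all $a_i = 0$, every ratio $\tilde a_i / u_i$ stays within $[-1, 1]$ and the count is always zero, which is how the estimator separates $\sum a_i = 0$ from $\sum a_i = 5$.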

Why?
Save time:
  Problem: computing edit distance between two strings
  New algorithm that obtains a $(\log n)^{1/\varepsilon}$ approximation in $n^{1+O(\varepsilon)}$ time, via an efficient property-testing algorithm that uses Precision Sampling
  More details: see the talk by Robi on Friday!
Save space:
  Problem: compute norms/frequency moments in streams
  Gives a simple and unified approach to compute all $\ell_p$, $F_k$ moments, and other goodies
  More details: now

Streaming frequencies
Setup: $1+\varepsilon$ estimate of frequencies in small space

  Ethnicity     Frequency
  Dacians       358
  Galois        12
  Barbarians    2988

Let $x_i$ = frequency of ethnicity $i$
$k$-th moment: $\sum x_i^k$
  $k \in [0,2]$: space $O(1/\varepsilon^2)$ [AMS’96, I’00, GC07, Li08, NW10, KNPW11]
  $k > 2$: space $O(n^{1-2/k})$ [AMS’96, SS’02, BYJKS’02, CKS’03, IW’05, BGKS’06, BO10]
Sometimes frequencies $x_i$ are negative: e.g., if measuring traffic difference (delay, etc.)
We want linear “dim reduction” $L: \mathbb{R}^n \to \mathbb{R}^m$, $m \ll n$
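For reference, the quantity being approximated can be computed exactly when the whole frequency vector fits in memory. The sketch below uses the three frequencies from the slide; the function name `frequency_moment` is illustrative, and taking absolute values (so negative frequencies are handled) and counting nonzero entries for $k = 0$ are conventions assumed here.

```python
def frequency_moment(freqs, k):
    """Exact F_k = sum_i |x_i|^k; for k = 0, the number of nonzero frequencies."""
    if k == 0:
        return sum(1 for x in freqs if x != 0)
    return sum(abs(x) ** k for x in freqs)

freqs = {"Dacians": 358, "Galois": 12, "Barbarians": 2988}
f1 = frequency_moment(freqs.values(), 1)   # total count
f2 = frequency_moment(freqs.values(), 2)   # second moment
```

A streaming algorithm has to approximate the same number without storing the vector, which is what the space bounds above refer to.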

Norm Estimation via Precision Sampling
Idea: use PSL to compute the sum $\|x\|_k^k = \sum |x_i|^k$
General approach:
  1. Pick $u_i$'s according to PSL and let $y_i = x_i / u_i^{1/k}$
  2. Compute all $y_i^k$ up to additive approximation $O(1)$
     Can be done by computing the heavy hitters of the vector $y$
  3. Use PSL to compute the sum $\|x\|_k^k = \sum |x_i|^k$
Space bound is controlled by the norm $\|y\|_2$
  Since heavy hitters under $\ell_2$ is the best we can do
  Note that $\|y\|_2 \le \|x\|_2 \cdot E[1/u_i]$
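The reason an additive $O(1)$ approximation to $y_i^k$ is the right target can be spelled out in one line. Writing $a_i = |x_i|^k$ for the summands (a naming assumption made here to match the Precision Sampling Lemma), the rescaled coordinate satisfies $|y_i|^k = a_i / u_i$, and the precision requirement on $\tilde a_i$ is the same as a unit additive error on $|y_i|^k$:

```latex
\begin{align*}
|y_i|^k &= \frac{|x_i|^k}{u_i} = \frac{a_i}{u_i}, \\
|a_i - \tilde a_i| < u_i
  &\iff \left| \frac{a_i}{u_i} - \frac{\tilde a_i}{u_i} \right| < 1 .
\end{align*}
```

So estimating each $|y_i|^k$ up to a constant additive error yields values $\tilde a_i = u_i \cdot \widetilde{|y_i|^k}$ of the precision the lemma asks for, up to the constant hidden in $O(1)$.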

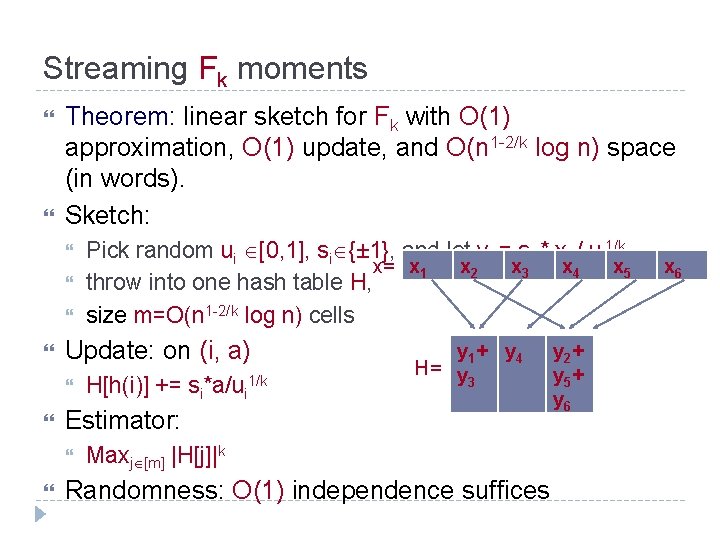

Streaming $F_k$ moments
Theorem: linear sketch for $F_k$ with $O(1)$ approximation, $O(1)$ update, and $O(n^{1-2/k} \log n)$ space (in words).
Sketch:
  Pick random $u_i \in [0,1]$, $s_i \in \{\pm 1\}$, and let $y_i = s_i \cdot x_i / u_i^{1/k}$
  Throw into one hash table $H$, size $m = O(n^{1-2/k} \log n)$ cells
Update: on $(i, a)$: $H[h(i)] \mathrel{+}= s_i \cdot a / u_i^{1/k}$
Estimator: $\max_{j \in [m]} |H[j]|^k$
Randomness: $O(1)$ independence suffices
[Figure: the vector $x = (x_1, \dots, x_6)$ hashed into the table $H$, whose cells hold $y_1 + y_4$, $y_3$, and $y_2 + y_5 + y_6$]
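The update and estimator rules above can be prototyped as follows. This is a toy sketch under loud assumptions: the class name `FkSketch` is hypothetical, the per-coordinate randomness $(h(i), s_i, u_i)$ is stored in a dictionary for readability (which defeats the space bound; the theorem says $O(1)$-wise independent hash functions suffice), and no normalization constant is applied to the estimator.

```python
import random

class FkSketch:
    """Toy linear sketch: H[h(i)] += s_i * a / u_i**(1/k); estimate = max_j |H[j]|**k."""

    def __init__(self, k, m, seed=0):
        self.k = k
        self.H = [0.0] * m                 # the single hash table with m cells
        self._rng = random.Random(seed)
        self._params = {}                  # i -> (h(i), s_i, u_i**(1/k)); illustration only

    def _coordinate(self, i):
        if i not in self._params:
            cell = self._rng.randrange(len(self.H))
            sign = self._rng.choice((-1.0, 1.0))
            u = 1.0 - self._rng.random()   # uniform on (0, 1]
            self._params[i] = (cell, sign, u ** (1.0 / self.k))
        return self._params[i]

    def update(self, i, a):
        """Process stream item (i, a): coordinate x_i changes by a (a may be negative)."""
        cell, sign, scale = self._coordinate(i)
        self.H[cell] += sign * a / scale

    def estimate(self):
        """Unnormalized estimator max_j |H[j]|^k."""
        return max(abs(v) for v in self.H) ** self.k

sketch = FkSketch(k=3, m=16, seed=1)
sketch.update(7, 3.0)                      # x_7 = 3, so F_3 = 27
single_item_estimate = sketch.estimate()   # equals 27 / u_7, hence at least 27
```

Because the table is a linear function of $x$, a later update `(7, -3.0)` cancels the first one exactly, which is what allows negative frequencies and differences of streams.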

More Streaming Algorithms
Other streaming algorithms:
  Algorithm for all $k$-moments, including $k \le 2$
    For $k > 2$, improves existing space bounds [AMS96, IW05, BGKS06, BO10]
    For $k \le 2$, worse space bounds [AMS96, I00, GC07, Li08, NW10, KNPW11]
  Improved algorithm for mixed norms ($\ell_p$ of $\ell_k$) [CM05, GBD08, JW09]
    space bounded by (Rademacher) $p$-type constant
  Algorithm for $\ell_p$-sampling problem [MW’10]
    This work extended to give tight bounds by [JST’11]
Connections:
  Inspired by the streaming algorithm of [IW05], but simpler
  Turns out to be a distant relative of Priority Sampling [DLT’07]

Finale
Other applications for the Precision Sampling framework?
Better algorithms for precision sampling?
  Best bound for average cost (for $1+\varepsilon$ approximation):
    Upper bound: $O(1/\varepsilon^3 \cdot \log n)$ (tight for our algorithm)
    Lower bound: $\Omega(1/\varepsilon^2 \cdot \log n)$
  Bounds for other cost models?
    E.g., for 1/square root of precision, the bound is $O(1/\varepsilon^{3/2})$
Other forms of “access” to the $a_i$'s?
