Subgroup Discovery: Finding Local Patterns in Data

Exploratory Data Analysis
§ Scan the data without much prior focus
§ Find unusual parts of the data
§ Analyse attribute dependencies
  § interpret this as a ‘rule’: if X=x and Y=y then Z is unusual
§ Complex data: nominal, numeric, many attributes

Exploratory Data Analysis
§ Classification: model the dependency of the target on the remaining attributes
  § problem: sometimes the classifier is a black box, or uses only some of the available dependencies
  § for example: in decision trees, some attributes may not appear because of overshadowing
§ Exploratory Data Analysis: understanding the effects of all attributes on the target

Interactions between Attributes
§ Single-attribute effects are not enough
§ The XOR problem is an extreme example: 2 attributes with no info gain form a good subgroup
§ Apart from A=a, B=b, C=c, …
  § consider also A=a ∧ B=b, A=a ∧ C=c, …, B=b ∧ C=c, …, A=a ∧ B=b ∧ C=c, …
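The XOR case can be checked with a few lines of code. The following is a minimal sketch on hypothetical toy data (the attributes `A` and `B`, the 100-row dataset and the helper names `entropy` and `info_gain` are assumptions for illustration, not part of the slides). Each single-attribute condition has zero information gain, while a conjunction of two conditions has clearly positive gain.

```python
from math import log2

def entropy(labels):
    """Shannon entropy (in bits) of a list of boolean labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    p = sum(labels) / n
    return -sum(q * log2(q) for q in (p, 1 - p) if q > 0)

def info_gain(rows, target, condition):
    """Information gain of splitting the rows into subgroup and complement."""
    inside = [t for r, t in zip(rows, target) if condition(r)]
    outside = [t for r, t in zip(rows, target) if not condition(r)]
    n = len(rows)
    remainder = (len(inside) / n) * entropy(inside) + (len(outside) / n) * entropy(outside)
    return entropy(target) - remainder

# Hypothetical toy data: the target is A XOR B, all four combinations equally frequent.
rows = [{"A": a, "B": b} for a in (0, 1) for b in (0, 1)] * 25
target = [bool(r["A"] ^ r["B"]) for r in rows]

gain_a = info_gain(rows, target, lambda r: r["A"] == 1)                   # single attribute
gain_b = info_gain(rows, target, lambda r: r["B"] == 1)                   # single attribute
gain_ab = info_gain(rows, target, lambda r: r["A"] == 1 and r["B"] == 0)  # conjunction
```

A search that only looks at single conditions would discard both `A` and `B` here, which is why the conjunctions have to be considered as well.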

Subgroup Discovery Task
“Find all subgroups within the inductive constraints that show a significant deviation in the distribution of the target attribute”
§ Inductive constraints:
  § Minimum coverage
  § (Maximum coverage)
  § Minimum quality (Information gain, χ², WRAcc)
  § Maximum complexity
  § …

Subgroup Discovery: the Binary Target Case

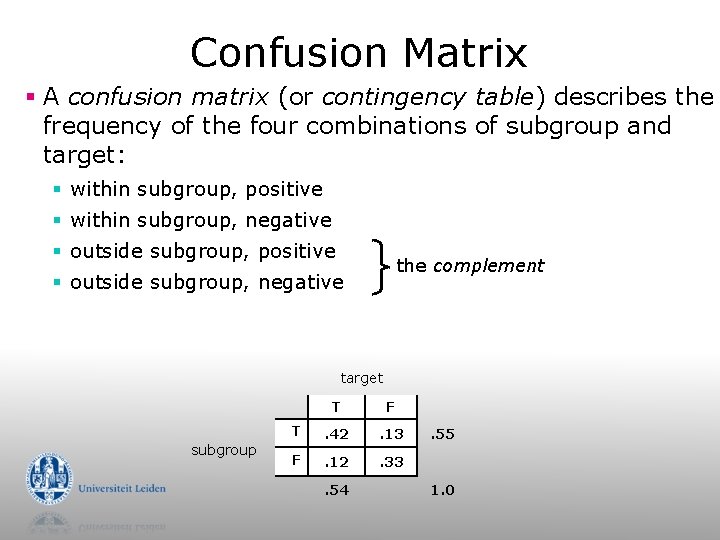

Confusion Matrix
§ A confusion matrix (or contingency table) describes the frequency of the four combinations of subgroup and target:
  § within subgroup, positive
  § within subgroup, negative
  § outside subgroup (the complement), positive
  § outside subgroup (the complement), negative

| subgroup \ target | T | F | total |
|---|---|---|---|
| T | .42 | .13 | .55 |
| F | .12 | .33 | |
| total | .54 | | 1.0 |

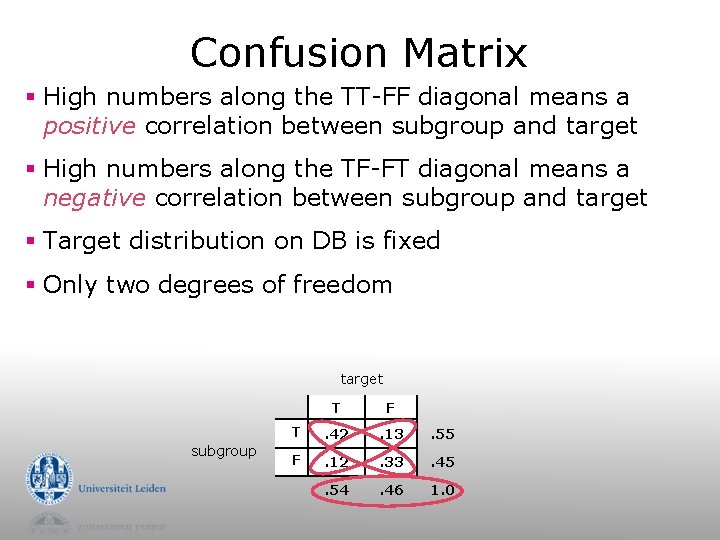

Confusion Matrix
§ High numbers along the TT-FF diagonal mean a positive correlation between subgroup and target
§ High numbers along the TF-FT diagonal mean a negative correlation between subgroup and target
§ Target distribution on DB is fixed
§ Only two degrees of freedom

| subgroup \ target | T | F | total |
|---|---|---|---|
| T | .42 | .13 | .55 |
| F | .12 | .33 | .45 |
| total | .54 | .46 | 1.0 |
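The remark about two degrees of freedom can be made concrete: once the target share p(T) is fixed by the database, choosing p(S) and p(ST) determines all four cells. A small sketch, in which the function name `confusion_from` and the dictionary layout are illustrative assumptions:

```python
def confusion_from(p_s, p_st, p_t):
    """Fill the 2x2 table of relative frequencies from p(S), p(ST) and the fixed p(T)."""
    return {
        ("S", "T"): p_st,                         # within subgroup, positive
        ("S", "notT"): p_s - p_st,                # within subgroup, negative
        ("notS", "T"): p_t - p_st,                # outside subgroup, positive
        ("notS", "notT"): 1 - p_s - p_t + p_st,   # outside subgroup, negative
    }

# Values from the example table: p(S) = .55, p(ST) = .42, p(T) = .54
table = confusion_from(p_s=0.55, p_st=0.42, p_t=0.54)
```

The four cells come out as .42, .13, .12 and .33 (up to floating-point rounding), matching the table.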

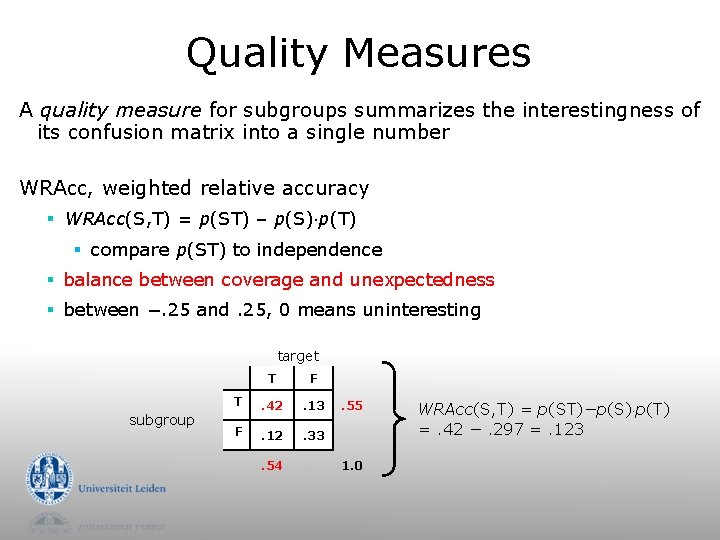

Quality Measures
A quality measure summarizes the interestingness of a subgroup's confusion matrix in a single number.

WRAcc, weighted relative accuracy
§ WRAcc(S, T) = p(ST) − p(S)p(T)
§ compares p(ST) to independence
§ balance between coverage and unexpectedness
§ between −.25 and .25; 0 means uninteresting

| subgroup \ target | T | F | total |
|---|---|---|---|
| T | .42 | .13 | .55 |
| F | .12 | .33 | |
| total | .54 | | 1.0 |

WRAcc(S, T) = p(ST) − p(S)p(T) = .42 − .297 = .123
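The WRAcc arithmetic is easy to reproduce. A minimal sketch (the function name `wracc` and its argument order are assumptions for illustration):

```python
def wracc(p_st, p_s, p_t):
    """Weighted relative accuracy: observed p(ST) minus its value under independence."""
    return p_st - p_s * p_t

quality = wracc(p_st=0.42, p_s=0.55, p_t=0.54)   # .42 - .55 * .54 = .42 - .297

# The extremes are reached when subgroup and target each cover half of the data.
best_case = wracc(p_st=0.5, p_s=0.5, p_t=0.5)    # subgroup equals the target
worst_case = wracc(p_st=0.0, p_s=0.5, p_t=0.5)   # subgroup equals the complement of the target
```

The two extreme cases illustrate why the measure lies between −.25 and .25.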

Quality Measures
§ WRAcc: Weighted Relative Accuracy
§ Information gain
§ χ²
§ Correlation Coefficient
§ Laplace
§ Jaccard
§ Specificity
§ …
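Several of these measures can be computed from the same three probabilities. The sketch below uses common textbook definitions, which may differ in detail from what a particular tool implements. The function name `measures` and the database size `n = 1000` are assumptions, since the slides only give relative frequencies.

```python
from math import sqrt

def measures(p_st, p_s, p_t, n):
    """A few quality measures derived from the 2x2 table of relative frequencies."""
    a = p_st                    # within subgroup, positive
    b = p_s - p_st              # within subgroup, negative
    c = p_t - p_st              # outside subgroup, positive
    d = 1 - p_s - p_t + p_st    # outside subgroup, negative
    phi = (a * d - b * c) / sqrt(p_s * (1 - p_s) * p_t * (1 - p_t))
    return {
        "correlation": phi,                 # phi coefficient of the 2x2 table
        "chi2": n * phi ** 2,               # chi-squared statistic, grows with n
        "jaccard": a / (p_s + p_t - p_st),  # overlap of subgroup and target
        "specificity": d / (b + d),         # share of negatives left outside the subgroup
    }

# Example table: p(ST) = .42, p(S) = .55, p(T) = .54; n is hypothetical.
m = measures(p_st=0.42, p_s=0.55, p_t=0.54, n=1000)
```

Note that the numerator `a * d - b * c` equals the WRAcc value, so the correlation coefficient is WRAcc rescaled by the spread of subgroup and target.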

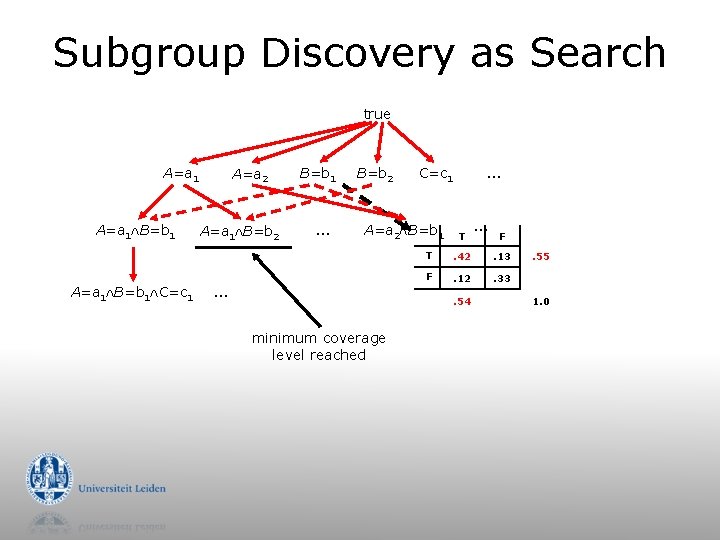

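Subgroup Discovery as Search
Figure: search lattice rooted at ‘true’ (the entire database). Each level adds one condition (A=a1, A=a2, B=b1, B=b2, C=c1, …, then conjunctions of these), every node has its own confusion matrix, and a branch ends where the minimum coverage level is reached.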
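The search can be sketched as a level-wise enumeration of conjunctions that stops refining a description once its coverage drops below the minimum. This is an illustrative sketch only, not the algorithm of any particular tool: the function names, the parameters `min_coverage`, `max_depth` and `top_k`, and the toy data are all assumptions.

```python
def evaluate(rows, target, description):
    """Return (WRAcc, coverage) of a conjunction of (attribute, value) conditions."""
    n = len(rows)
    covered = [all(r[a] == v for a, v in description) for r in rows]
    p_s = sum(covered) / n
    p_t = sum(target) / n
    p_st = sum(c and t for c, t in zip(covered, target)) / n
    return p_st - p_s * p_t, p_s

def discover(rows, target, min_coverage=0.1, max_depth=2, top_k=5):
    conditions = sorted({(a, v) for r in rows for a, v in r.items()})
    results = []
    frontier = [()]   # the empty description 'true' covers the entire database
    for _ in range(max_depth):
        next_frontier = []
        for desc in frontier:
            used = {a for a, _ in desc}
            for cond in conditions:
                # one condition per attribute; fixed order avoids duplicate conjunctions
                if cond[0] in used or (desc and cond <= desc[-1]):
                    continue
                candidate = desc + (cond,)
                quality, coverage = evaluate(rows, target, candidate)
                if coverage < min_coverage:
                    continue   # coverage only shrinks under refinement, so stop here
                results.append((quality, candidate))
                next_frontier.append(candidate)
        frontier = next_frontier
    results.sort(key=lambda item: item[0], reverse=True)
    return results[:top_k]

# Hypothetical toy data: the target is concentrated in A=a1 and B=b1.
rows = ([{"A": "a1", "B": "b1"}] * 40 + [{"A": "a1", "B": "b2"}] * 10
        + [{"A": "a2", "B": "b1"}] * 10 + [{"A": "a2", "B": "b2"}] * 40)
target = [True] * 40 + [False] * 60
best_quality, best_description = discover(rows, target)[0]
```

On this toy data the conjunction of both conditions ranks above either single condition, because it keeps all positives while dropping negatives.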

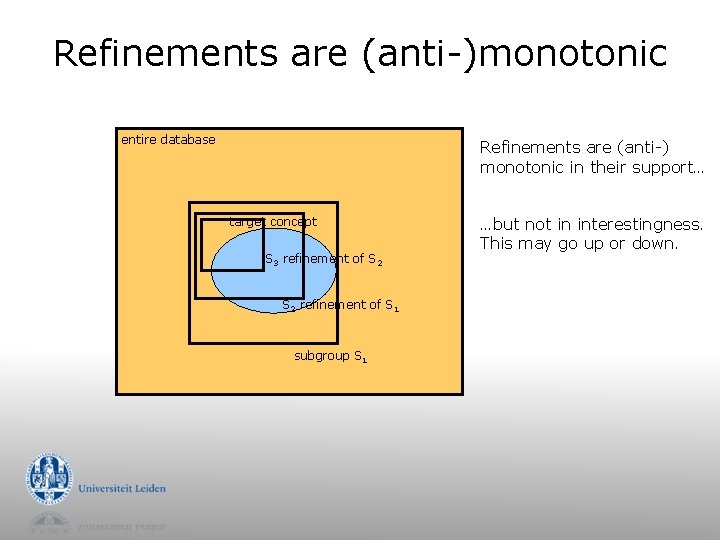

Refinements are (anti-)monotonic
Refinements are (anti-)monotonic in their support, but not in interestingness: quality may go up or down.
Figure: nested regions inside the entire database, showing the target concept, subgroup S1, S2 (a refinement of S1) and S3 (a refinement of S2).

Subgroup Discovery and ROC space

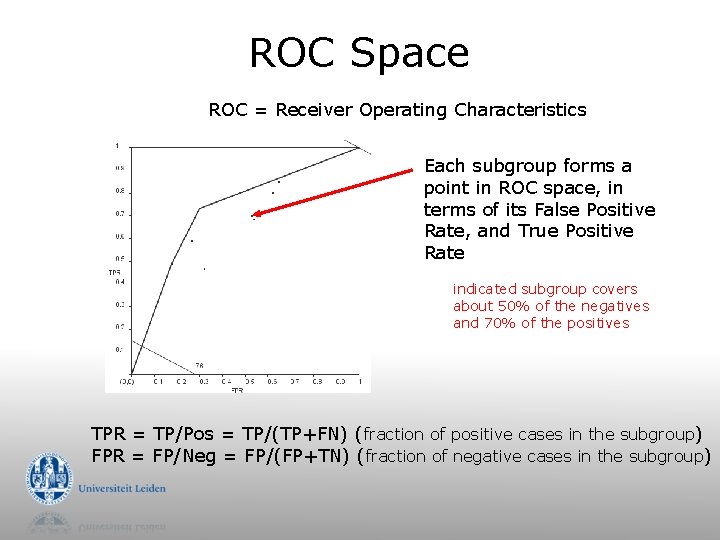

ROC Space
ROC = Receiver Operating Characteristics
Each subgroup forms a point in ROC space, in terms of its False Positive Rate (FPR) and True Positive Rate (TPR).
§ TPR = TP/Pos = TP/(TP+FN) (fraction of all positive cases that fall in the subgroup)
§ FPR = FP/Neg = FP/(FP+TN) (fraction of all negative cases that fall in the subgroup)
Figure: the indicated subgroup covers about 50% of the negatives and 70% of the positives.
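As a worked example, the ROC coordinates of the subgroup from the confusion matrix slides (TP = .42, FP = .13, FN = .12, TN = .33) can be computed directly. The function name `roc_point` is an assumption for illustration.

```python
def roc_point(tp, fp, fn, tn):
    """Map a confusion matrix (counts or relative frequencies) to (FPR, TPR)."""
    tpr = tp / (tp + fn)   # share of the positives covered by the subgroup
    fpr = fp / (fp + tn)   # share of the negatives covered by the subgroup
    return fpr, tpr

fpr, tpr = roc_point(tp=0.42, fp=0.13, fn=0.12, tn=0.33)
```

This subgroup covers roughly 78% of the positives and 28% of the negatives, so it lies well above the diagonal.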

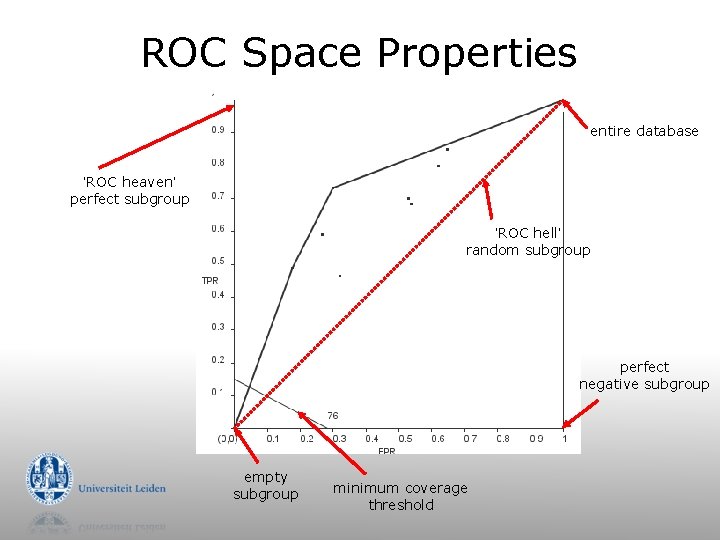

ROC Space Properties
Figure labels: entire database, ‘ROC heaven’, perfect subgroup, ‘ROC hell’, random subgroup, perfect negative subgroup, empty subgroup, minimum coverage threshold

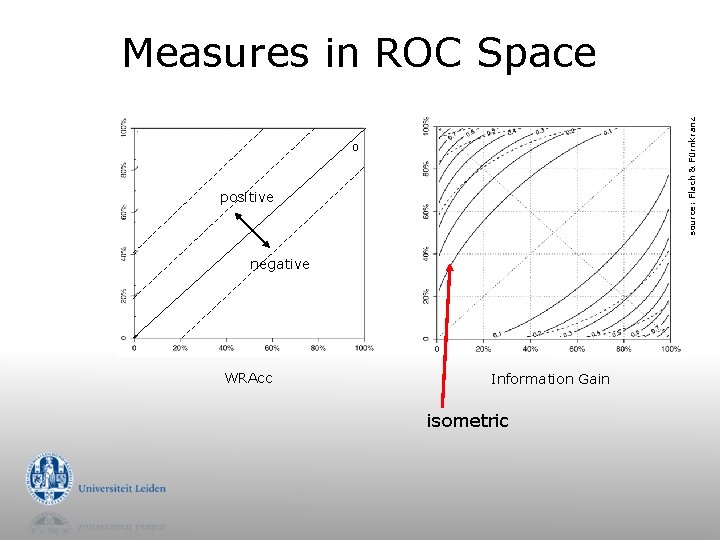

Measures in ROC Space
Figure: isometrics of WRAcc and Information Gain in ROC space, with positive, zero and negative regions (source: Flach & Fürnkranz)

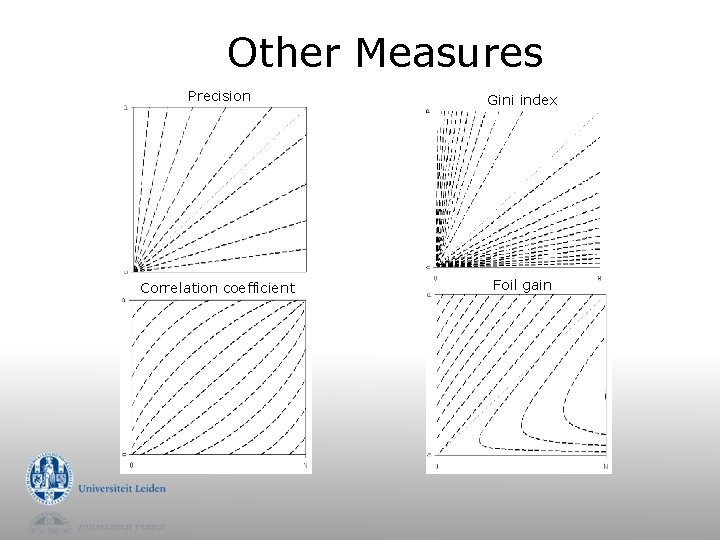

Other Measures
Figure panels: Precision, Gini index, Correlation coefficient, Foil gain

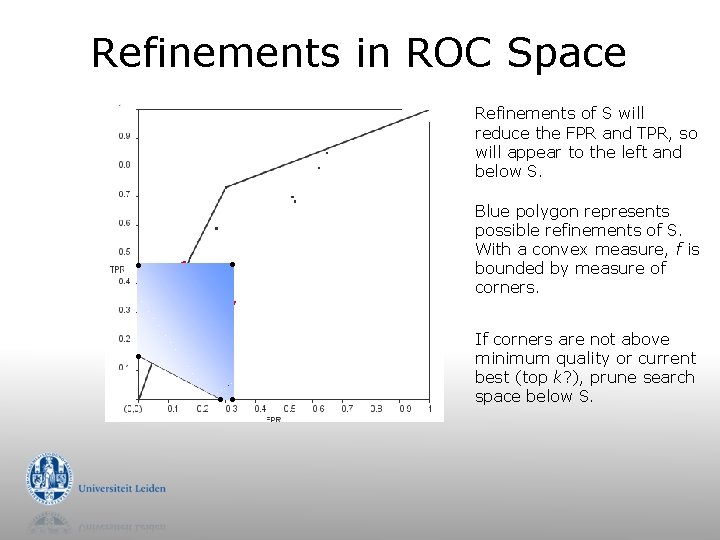

Refinements in ROC Space
§ Refinements of S can only lower the FPR and TPR, so they appear to the left of and below S.
§ The blue polygon represents the possible refinements of S.
§ With a convex measure f, the quality of any refinement is bounded by the value of f at the corners of the polygon.
§ If the corners are not above the minimum quality or the current best (top k?), prune the search space below S.
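For WRAcc this pruning rule is simple to write down, because WRAcc is linear in ROC space: WRAcc = p(T)(1 − p(T))(TPR − FPR). The sketch below assumes the region of possible refinements is the full rectangle between the origin and S, ignoring the minimum coverage line that may cut off part of it. The function names are illustrative assumptions.

```python
def wracc_roc(fpr, tpr, p_t):
    """WRAcc expressed in ROC coordinates."""
    return p_t * (1 - p_t) * (tpr - fpr)

def optimistic_wracc(fpr, tpr, p_t):
    """Upper bound on the WRAcc of any refinement: the best corner of the rectangle."""
    corners = [(0.0, 0.0), (0.0, tpr), (fpr, 0.0), (fpr, tpr)]
    return max(wracc_roc(f, t, p_t) for f, t in corners)

def can_prune(fpr, tpr, p_t, threshold):
    """True if no refinement of S can reach the threshold."""
    return optimistic_wracc(fpr, tpr, p_t) < threshold

# Subgroup from the confusion matrix example: TPR = .42/.54, FPR = .13/.46, p(T) = .54
fpr, tpr, p_t = 0.13 / 0.46, 0.42 / 0.54, 0.54
current = wracc_roc(fpr, tpr, p_t)
bound = optimistic_wracc(fpr, tpr, p_t)
```

The bound is attained at the corner that keeps all covered positives and drops all covered negatives. If the current best result already exceeds it, the branch below S can be skipped.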

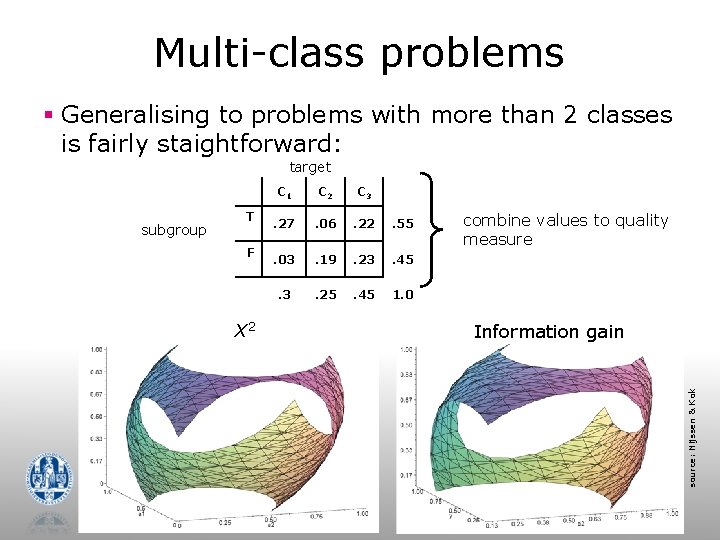

Multi-class problems
§ Generalising to problems with more than 2 classes is fairly straightforward:

| subgroup \ target | C1 | C2 | C3 | total |
|---|---|---|---|---|
| T | .27 | .06 | .22 | .55 |
| F | .03 | .19 | .23 | .45 |
| total | .3 | .25 | .45 | 1.0 |

§ Combine the values into a quality measure, e.g. information gain
source: Nijssen & Kok
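As an illustration of combining the cell values into one number, the sketch below computes information gain for the 2×3 table on this slide. The function names and the list-of-rows layout are assumptions; the frequencies are taken from the table.

```python
from math import log2

def entropy(dist):
    """Entropy (in bits) of a distribution given as non-negative weights."""
    total = sum(dist)
    return -sum((p / total) * log2(p / total) for p in dist if p > 0)

def information_gain(table):
    """table: one row for the subgroup, one for its complement; columns are target classes."""
    class_totals = [sum(column) for column in zip(*table)]
    grand_total = sum(class_totals)
    remainder = sum((sum(row) / grand_total) * entropy(row) for row in table)
    return entropy(class_totals) - remainder

table = [[0.27, 0.06, 0.22],   # within subgroup
         [0.03, 0.19, 0.23]]   # outside subgroup
gain = information_gain(table)
```

The same function works for any number of classes, including the binary case.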

Subgroup Discovery for Numeric Targets (regression)

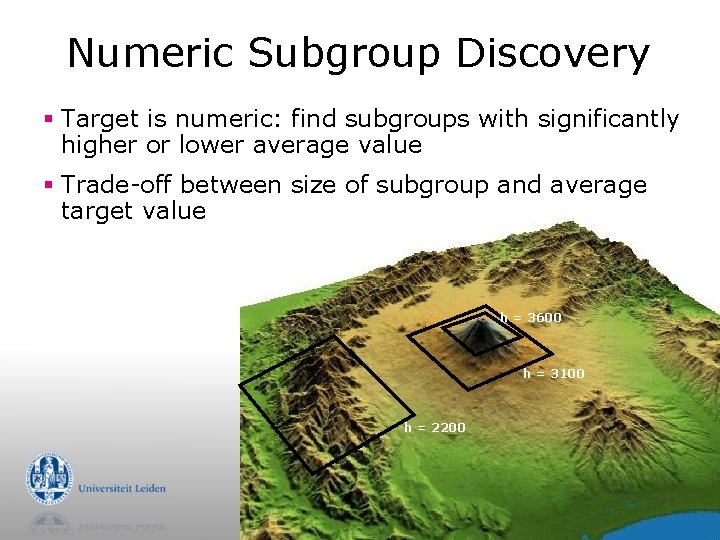

Numeric Subgroup Discovery
§ Target is numeric: find subgroups with significantly higher or lower average value
§ Trade-off between size of subgroup and average target value
Figure labels: h = 3600, h = 3100, h = 2200

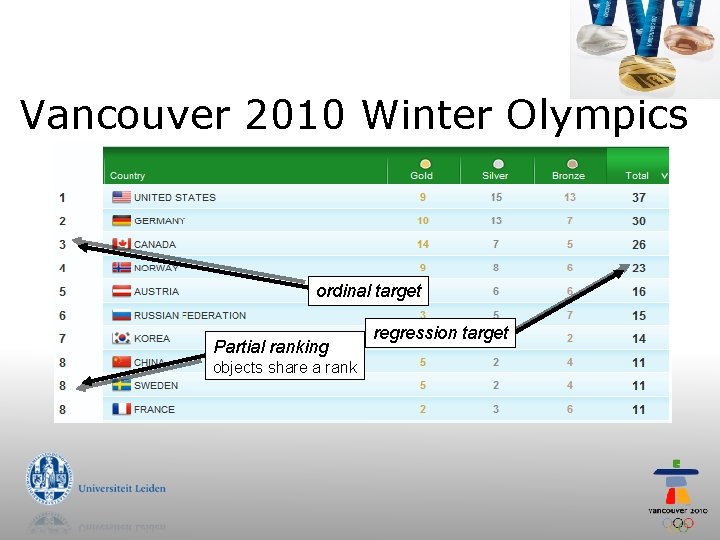

Vancouver 2010 Winter Olympics
Figure labels: ordinal target; partial ranking (objects share a rank); regression target

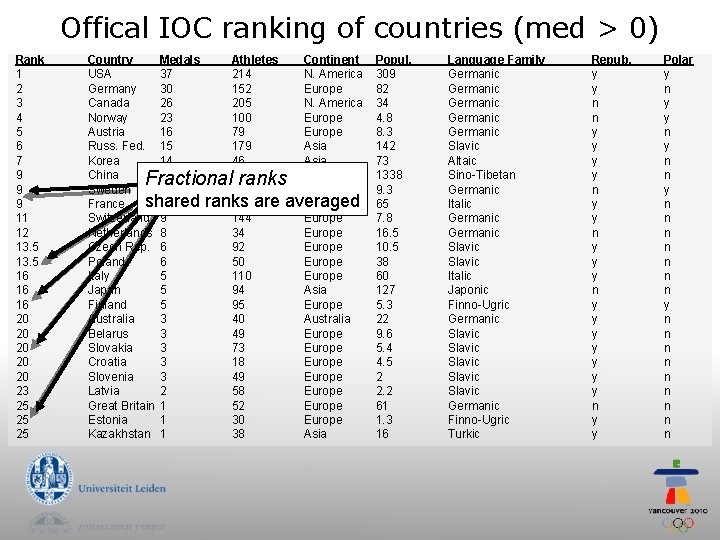

Official IOC ranking of countries (med > 0)

| Rank | Country | Medals | Athletes | Popul. (millions) |
|---|---|---|---|---|
| 1 | USA | 37 | 214 | 309 |
| 2 | Germany | 30 | 152 | 82 |
| 3 | Canada | 26 | 205 | 34 |
| 4 | Norway | 23 | 100 | 4.8 |
| 5 | Austria | 16 | 79 | 8.3 |
| 6 | Russ. Fed. | 15 | 179 | 142 |
| 7 | Korea | 14 | 46 | 73 |
| 9 | China | 11 | 90 | 1338 |
| 9 | Sweden | 11 | 107 | 9.3 |
| 9 | France | 11 | 107 | 65 |
| 11 | Switzerland | 9 | 144 | 7.8 |
| 12 | Netherlands | 8 | 34 | 16.5 |
| 13.5 | Czech Rep. | 6 | 92 | 10.5 |
| 13.5 | Poland | 6 | 50 | 38 |
| 16 | Italy | 5 | 110 | 60 |
| 16 | Japan | 5 | 94 | 127 |
| 16 | Finland | 5 | 95 | 5.3 |
| 20 | Australia | 3 | 40 | 22 |
| 20 | Belarus | 3 | 49 | 9.6 |
| 20 | Slovakia | 3 | 73 | 5.4 |
| 20 | Croatia | 3 | 18 | 4.5 |
| 20 | Slovenia | 3 | 49 | 2 |
| 23 | Latvia | 2 | 58 | 2.2 |
| 25 | Great Britain | 1 | 52 | 61 |
| 25 | Estonia | 1 | 30 | 1.3 |
| 25 | Kazakhstan | 1 | 38 | 16 |

Fractional ranks: shared ranks are averaged.
Further attributes per country: Continent (N. America, Europe, Asia, Australia), Language Family (Germanic, Slavic, Altaic, Sino-Tibetan, Italic, Japonic, Finno-Ugric, Turkic), Repub. (y/n), Polar (y/n)
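The fractional ranks follow mechanically from the medal counts: countries with the same number of medals share the average of the positions they occupy. A minimal sketch (the function name `fractional_ranks` is an assumption; the medal counts are those listed on the slide):

```python
def fractional_ranks(scores):
    """Rank scores in descending order; tied scores share the average of their positions."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    ranks = [0.0] * len(scores)
    pos = 0
    while pos < len(order):
        end = pos
        # extend the block while the next score ties with the current one
        while end + 1 < len(order) and scores[order[end + 1]] == scores[order[pos]]:
            end += 1
        shared = ((pos + 1) + (end + 1)) / 2   # mean of the 1-based positions in the block
        for k in range(pos, end + 1):
            ranks[order[k]] = shared
        pos = end + 1
    return ranks

medals = [37, 30, 26, 23, 16, 15, 14, 11, 11, 11, 9, 8, 6, 6,
          5, 5, 5, 3, 3, 3, 3, 3, 2, 1, 1, 1]
ranks = fractional_ranks(medals)
```

For example, the three countries with 11 medals occupy positions 8 to 10 and therefore all receive rank 9.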

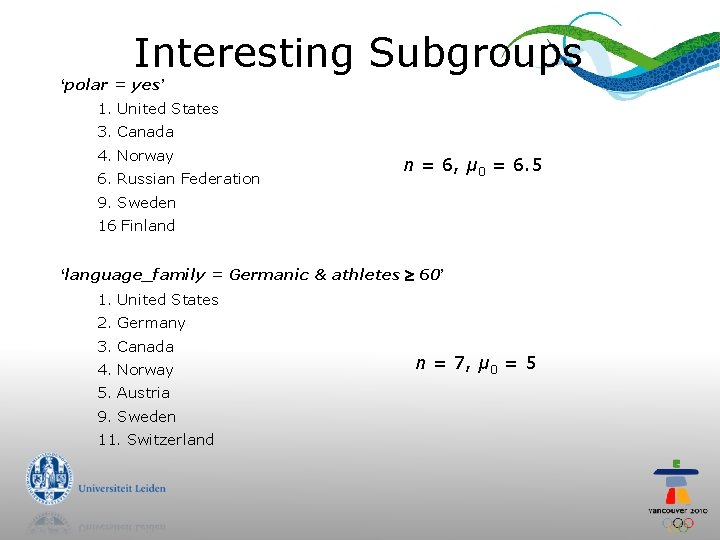

Interesting Subgroups
§ ‘polar = yes’ (n = 6, μ₀ = 6.5): 1. United States, 3. Canada, 4. Norway, 6. Russian Federation, 9. Sweden, 16. Finland
§ ‘language_family = Germanic & athletes ≥ 60’ (n = 7, μ₀ = 5): 1. United States, 2. Germany, 3. Canada, 4. Norway, 5. Austria, 9. Sweden, 11. Switzerland
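The size and mean rank reported for the two subgroups can be verified from the listed members. A short sketch, where the dictionary names and the helper `summarise` are assumptions for illustration:

```python
def summarise(subgroup):
    """Return (size, mean rank) for a mapping of country -> rank."""
    ranks = list(subgroup.values())
    return len(ranks), sum(ranks) / len(ranks)

polar_yes = {"United States": 1, "Canada": 3, "Norway": 4,
             "Russian Federation": 6, "Sweden": 9, "Finland": 16}
germanic_large_team = {"United States": 1, "Germany": 2, "Canada": 3, "Norway": 4,
                       "Austria": 5, "Sweden": 9, "Switzerland": 11}

n_polar, mean_polar = summarise(polar_yes)
n_germanic, mean_germanic = summarise(germanic_large_team)
```

Both means are far below 13.5, the mean rank over all 26 countries, which is what makes these subgroups stand out.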

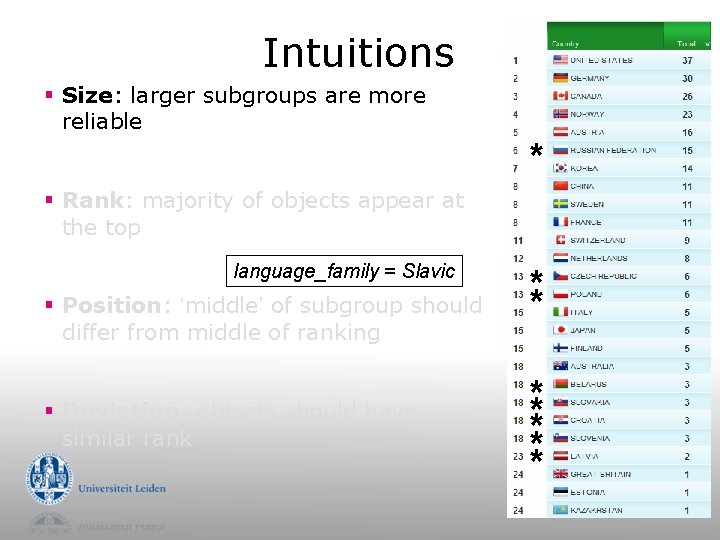

Intuitions
§ Size: larger subgroups are more reliable
§ Rank: majority of objects appear at the top
§ Position: ‘middle’ of subgroup should differ from middle of ranking
§ Deviation: objects should have similar rank
Example subgroup marked in the ranking: language_family = Slavic

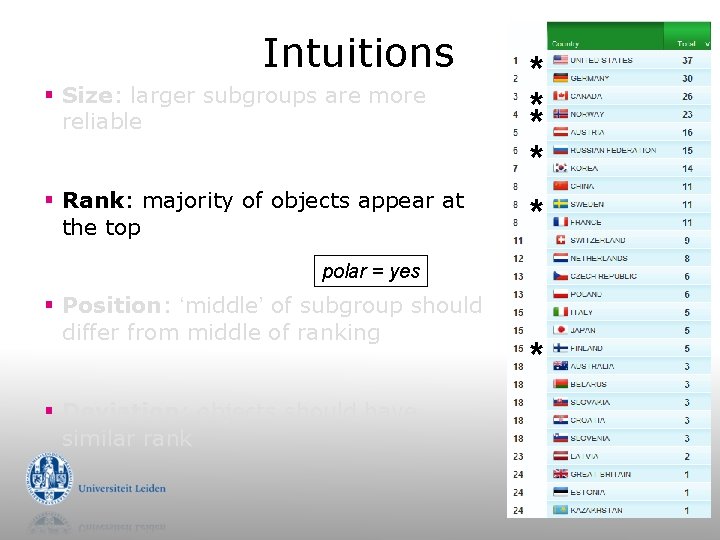

Intuitions, example subgroup marked in the ranking: polar = yes

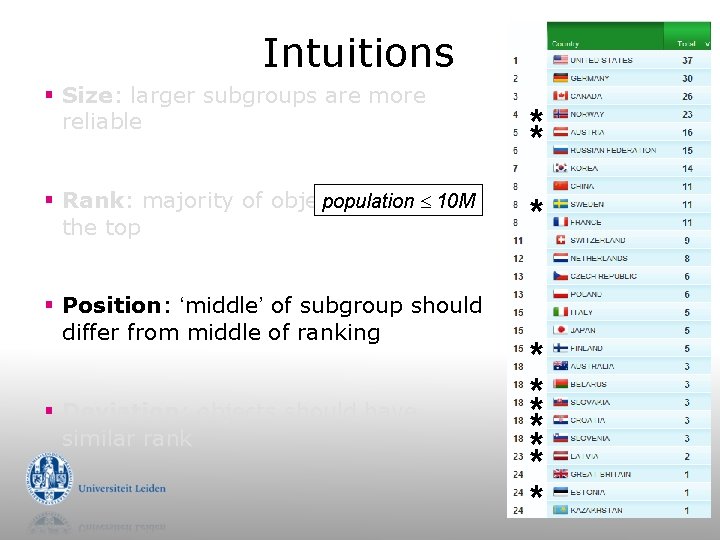

Intuitions, example subgroup marked in the ranking: population ≤ 10M

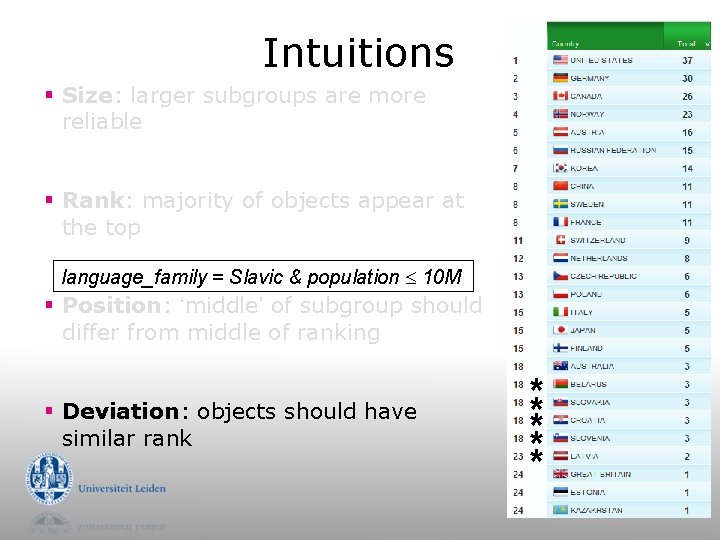

Intuitions, example subgroup marked in the ranking: language_family = Slavic & population ≤ 10M

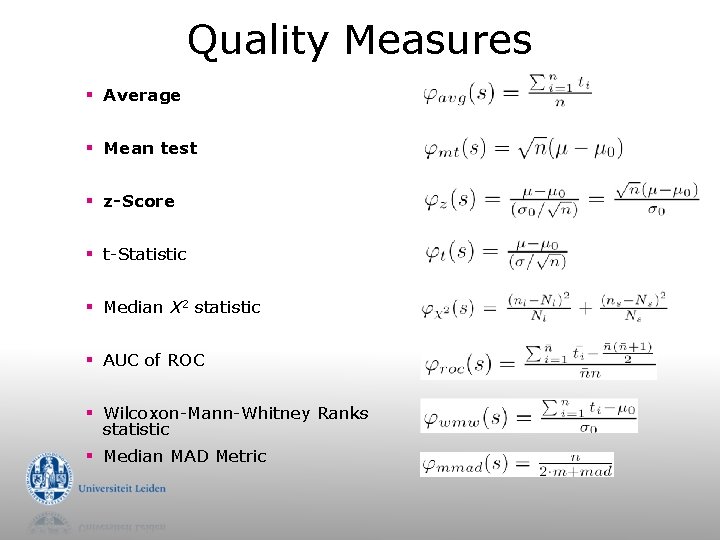

Quality Measures
§ Average
§ Mean test
§ z-Score
§ t-Statistic
§ Median χ² statistic
§ AUC of ROC
§ Wilcoxon-Mann-Whitney Ranks statistic
§ Median MAD Metric
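The first few measures show how subgroup size and average target value are traded off. The sketch below uses one common formulation: mean test = √n (μ − μ₀) and z-score = √n (μ − μ₀) / σ₀, with μ₀ and σ₀ taken over the whole database. A given tool may define them slightly differently. The function name `numeric_qualities` is an assumption, and the data are the fractional ranks of the 26 countries in the Olympics example.

```python
from math import sqrt
from statistics import mean, pstdev

def numeric_qualities(subgroup, population):
    """Average, mean test and z-score of a subgroup's target values."""
    n = len(subgroup)
    mu = mean(subgroup)            # subgroup average
    mu0 = mean(population)         # average over the entire database
    sigma0 = pstdev(population)    # standard deviation over the entire database
    return {
        "average": mu,
        "mean_test": sqrt(n) * (mu - mu0),
        "z_score": sqrt(n) * (mu - mu0) / sigma0,
    }

all_ranks = [1, 2, 3, 4, 5, 6, 7, 9, 9, 9, 11, 12, 13.5, 13.5,
             16, 16, 16, 20, 20, 20, 20, 20, 23, 25, 25, 25]
polar_yes = [1, 3, 4, 6, 9, 16]   # ranks of the 'polar = yes' subgroup
q = numeric_qualities(polar_yes, all_ranks)
```

Because the target is a rank, strongly negative values indicate a subgroup concentrated near the top of the ranking. The factor √n rewards larger subgroups with the same average.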

Cortana: the open source Subgroup Discovery tool

Cortana Features
§ Generic Subgroup Discovery algorithm
  § quality measure
  § search strategy
  § inductive constraints
§ Flat file, .txt, .arff
§ Support for complex targets
§ >41 quality measures
§ ROC plots
§ Statistical validation

Target Concepts
§ ‘Classical’ Subgroup Discovery
  § nominal targets (classification)
  § numeric targets (regression)
§ Exceptional Model Mining (an extension of SD)
  § multiple targets
  § regression, correlation
  § multi-label classification

Mixed Data
§ Data types
  § binary
  § nominal
  § numeric
§ Numeric data is treated dynamically (no discretisation as preprocessing)
  § all: consider all available thresholds
  § bins: discretise the current candidate subgroup
  § best: find best threshold, and search from there
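The three ways of handling a numeric attribute can be sketched as different ways of choosing thresholds for a condition such as `value <= t`, evaluated on the rows of the current candidate subgroup. This is an illustrative reading of the three options, not Cortana's implementation. The function names, the equal-frequency binning and the toy data are assumptions.

```python
def candidate_thresholds(values, strategy="all", n_bins=4):
    """Thresholds to try for one numeric attribute within the current subgroup."""
    if strategy == "all":
        return sorted(set(values))           # every distinct value is a threshold
    if strategy == "bins":
        ordered = sorted(values)             # equal-frequency cut points
        cuts = {ordered[(len(ordered) * k) // n_bins] for k in range(1, n_bins)}
        return sorted(cuts)
    raise ValueError("unknown strategy: " + strategy)

def wracc(covered, target):
    n = len(target)
    p_s = sum(covered) / n
    p_t = sum(target) / n
    p_st = sum(c and t for c, t in zip(covered, target)) / n
    return p_st - p_s * p_t

def best_threshold(values, target, quality=wracc):
    """'best': keep only the single threshold with the highest quality."""
    return max(sorted(set(values)),
               key=lambda t: quality([v <= t for v in values], target))

# Hypothetical toy data: the three smallest values are the positives.
values = [1, 2, 3, 4, 5, 6, 7, 8]
target = [True, True, True, False, False, False, False, False]
all_cuts = candidate_thresholds(values, "all")
bin_cuts = candidate_thresholds(values, "bins", n_bins=4)
best = best_threshold(values, target)
```

The options trade search effort against precision: "all" is exhaustive, "bins" limits the number of candidates per refinement, and "best" continues the search from a single threshold.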

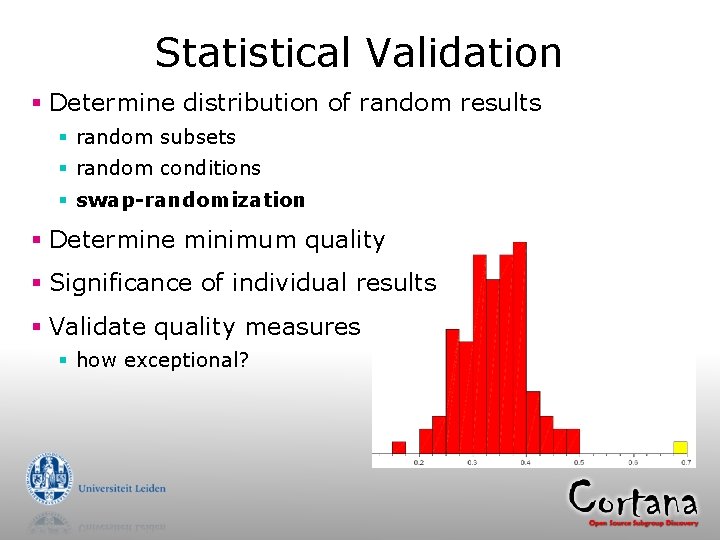

Statistical Validation
§ Determine distribution of random results
  § random subsets
  § random conditions
  § swap-randomization
§ Determine minimum quality
§ Significance of individual results
§ Validate quality measures
  § how exceptional?
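One way to read the "random subsets" option is as follows: draw many random subsets of a given size, record their quality, and use a high quantile of that distribution as the minimum quality a real subgroup has to exceed. The sketch below follows that reading with WRAcc. The function names, the 95% quantile, the number of trials and the toy target are assumptions.

```python
import random

def wracc(covered, target):
    n = len(target)
    p_s = sum(covered) / n
    p_t = sum(target) / n
    p_st = sum(c and t for c, t in zip(covered, target)) / n
    return p_st - p_s * p_t

def random_subset_threshold(target, coverage, trials=1000, quantile=0.95, seed=0):
    """Quality level that only a small share of random subsets of this size reach."""
    rng = random.Random(seed)          # fixed seed for reproducibility
    n = len(target)
    size = round(coverage * n)
    qualities = []
    for _ in range(trials):
        chosen = set(rng.sample(range(n), size))
        covered = [i in chosen for i in range(n)]
        qualities.append(wracc(covered, target))
    qualities.sort()
    return qualities[min(int(quantile * trials), trials - 1)]

# Hypothetical target: 40 positives among 100 rows.
target = [True] * 40 + [False] * 60
threshold = random_subset_threshold(target, coverage=0.4)
perfect = wracc(target, target)        # subgroup that coincides with the target
```

A subgroup found by the search would be reported as significant only if its quality lies above `threshold`. The perfect subgroup clears it by a wide margin.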

Open Source
§ You can
  § Use Cortana binary: datamining.liacs.nl/cortana.html
  § Use and modify Cortana sources (Java)
