Student Process Data in Educational Assessment

Wade Buckland, Research Scientist, HumRRO
Cheryl Alcaya, Test Development Supervisor, Minnesota DOE
Jennifer Dugan, Director of Statewide Testing, Minnesota DOE
Monica Gribben, Senior Staff Research Scientist, HumRRO

Introduction to Process Data
June 28, 2017
Presented at: CCSSO, Austin, TX
Presenter: Wade Buckland

paper assessment
digital assessment
– scoring/reporting speed
– adaptive capability
– timing
– universal design
– innovative items

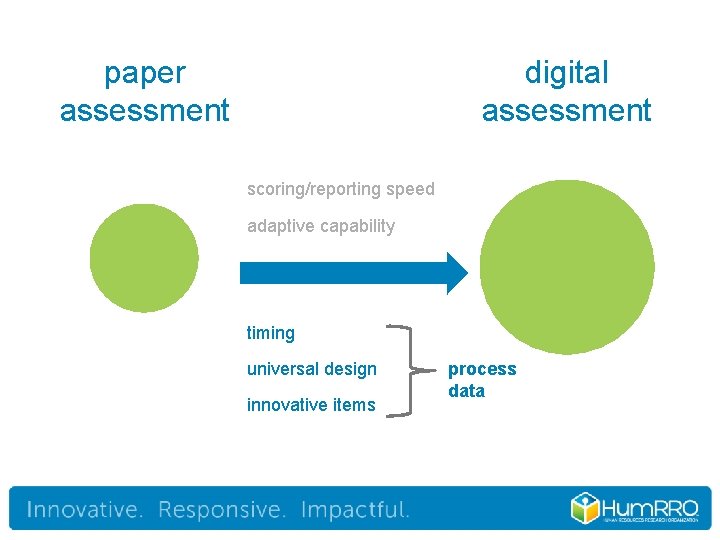

paper assessment
digital assessment
– scoring/reporting speed
– adaptive capability
– timing
– universal design
– innovative items
– process data

process data
user interactions/actions that can be observed/recorded
– clickstreams (next, back, etc.)
– keyboard/touchscreen presses
– screen coordinates (mouse click)
– time stamping

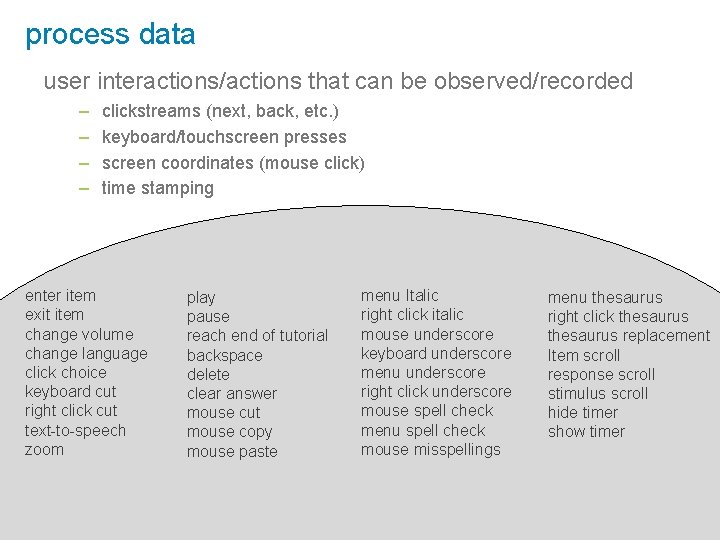

process data
recorded events include: enter item, exit item, change volume, change language, click choice, keyboard cut, right click cut, text-to-speech, zoom, play, pause, reach end of tutorial, backspace, delete, clear answer, mouse cut, mouse copy, mouse paste, menu italic, right click italic, mouse underscore, keyboard underscore, menu underscore, right click underscore, mouse spell check, menu spell check, mouse misspellings, menu thesaurus, right click thesaurus, replacement, item scroll, response scroll, stimulus scroll, hide timer, show timer

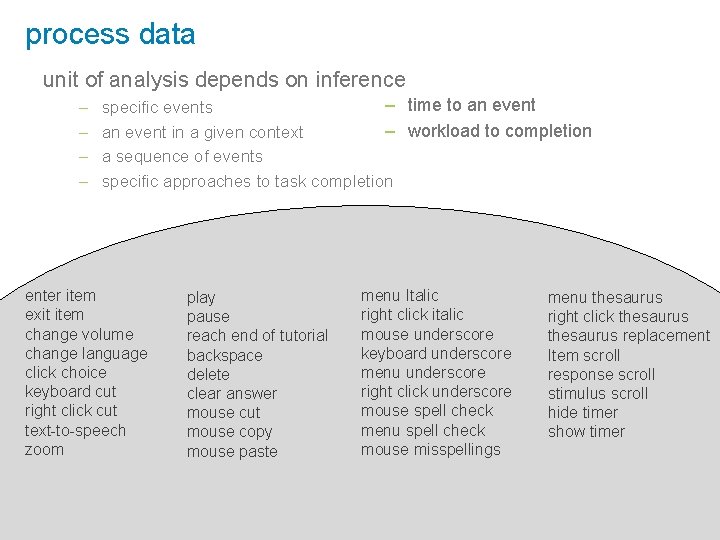

process data
unit of analysis depends on inference
– time to an event
– specific events
– workload to completion
– an event in a given context
– a sequence of events
– specific approaches to task completion
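A minimal sketch of how two of these units of analysis, time to an event and a sequence of events, might be derived from one student's event log. The `Event` record, its field names, and the sample log are illustrative assumptions, not a format described in the presentation; the log is assumed to be sorted by time.

```python
from collections import Counter
from typing import NamedTuple


class Event(NamedTuple):
    # Hypothetical record layout, assumed for illustration only.
    student: str
    item: str
    action: str
    t: float  # seconds since the start of the session


# Made-up log for one student on one item, already in time order.
log = [
    Event("s1", "item1", "enter item", 0.0),
    Event("s1", "item1", "text-to-speech", 4.0),
    Event("s1", "item1", "click choice", 12.5),
    Event("s1", "item1", "clear answer", 15.0),
    Event("s1", "item1", "click choice", 21.0),
    Event("s1", "item1", "exit item", 23.0),
]


def time_to_event(events, action):
    """Seconds from 'enter item' to the first occurrence of `action`, or None."""
    start = next((e.t for e in events if e.action == "enter item"), None)
    hit = next((e.t for e in events if e.action == action), None)
    if start is None or hit is None:
        return None
    return hit - start


def action_bigrams(events):
    """Count adjacent action pairs, one simple view of event sequences."""
    actions = [e.action for e in events]
    return Counter(zip(actions, actions[1:]))


first_choice_latency = time_to_event(log, "click choice")
bigrams = action_bigrams(log)
```

Which unit is appropriate depends on the inference being made: a latency supports claims about speed, while a pair such as ("clear answer", "click choice") says something about answer changing.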

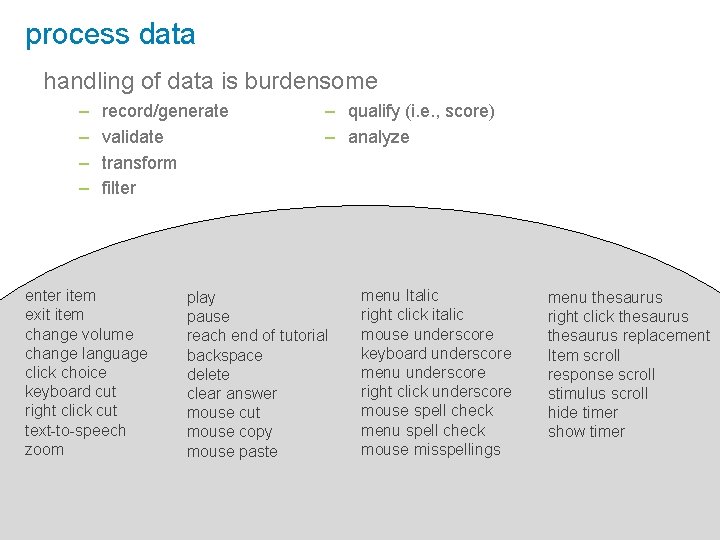

process data
handling of data is burdensome
– record/generate
– validate
– transform
– filter
– qualify (i.e., score)
– analyze
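The validate, transform, and filter steps listed above can be sketched roughly as follows. The dictionary record layout, the `KNOWN_ACTIONS` set, and the function names are assumptions made for illustration; an operational pipeline would depend on what the assessment platform actually logs.

```python
# Hypothetical subset of action names the pipeline accepts (assumed).
KNOWN_ACTIONS = {"enter item", "exit item", "click choice", "clear answer", "zoom"}


def validate(raw):
    """Keep records with a known action and a non-negative numeric timestamp."""
    ok = []
    for r in raw:
        t = r.get("t")
        if r.get("action") in KNOWN_ACTIONS and isinstance(t, (int, float)) and t >= 0:
            ok.append(r)
    return ok


def transform(records):
    """Sort by time and add 'dt', the seconds elapsed since the previous record."""
    ordered = sorted(records, key=lambda r: r["t"])
    out = []
    prev = None
    for r in ordered:
        out.append({**r, "dt": 0.0 if prev is None else r["t"] - prev})
        prev = r["t"]
    return out


def keep_actions(records, wanted):
    """Filter down to the actions relevant to the question being asked."""
    return [r for r in records if r["action"] in wanted]


# Made-up raw log: out of order, with one unknown action and one bad timestamp.
raw = [
    {"action": "click choice", "t": 9.0},
    {"action": "enter item", "t": 0.0},
    {"action": "zoom", "t": 3.5},
    {"action": "???", "t": 4.0},
    {"action": "exit item", "t": -1},
    {"action": "exit item", "t": 12.0},
]

clean = keep_actions(
    transform(validate(raw)),
    {"enter item", "click choice", "exit item"},
)
```

Note that `dt` is computed before filtering, so it measures the gap to the previous recorded event of any kind, not to the previous kept event; whether that is the right choice depends on the analysis.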

process data & timing
● Estimate engagement, motivation, fluency, reading speed, possibility of cheating
● Inform item development, test assembly, and calibration sample
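As one hedged illustration of a timing measure, the sketch below totals time on each item from enter/exit events and flags items answered faster than a cutoff. The tuple layout, the function names, and the 5-second threshold are assumptions chosen for the example, not values from the presentation; a short time alone does not establish low engagement.

```python
def time_on_item(events):
    """Total seconds per item, summing each 'enter item' to 'exit item' visit."""
    totals = {}
    entered = {}
    for item, action, t in events:
        if action == "enter item":
            entered[item] = t
        elif action == "exit item" and item in entered:
            totals[item] = totals.get(item, 0.0) + (t - entered.pop(item))
    return totals


def flag_rapid(totals, threshold_s=5.0):
    """Items whose total time falls below an illustrative threshold (assumed)."""
    return sorted(item for item, secs in totals.items() if secs < threshold_s)


# Made-up events as (item, action, seconds); item1 is visited twice.
events = [
    ("item1", "enter item", 0.0),
    ("item1", "exit item", 42.0),
    ("item2", "enter item", 42.0),
    ("item2", "exit item", 44.5),
    ("item1", "enter item", 44.5),
    ("item1", "exit item", 50.0),
]

totals = time_on_item(events)
rapid = flag_rapid(totals)
```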

process data & universal design / usability
● test prototypes of interface or modifications over time
● supplement usability tests/cognitive labs with quantitative data (e.g., time on task)
● examine universal design tools and accommodation usage of special populations

process data & universal design / usability
Microsoft Research Lumiere Project, 1993-1995


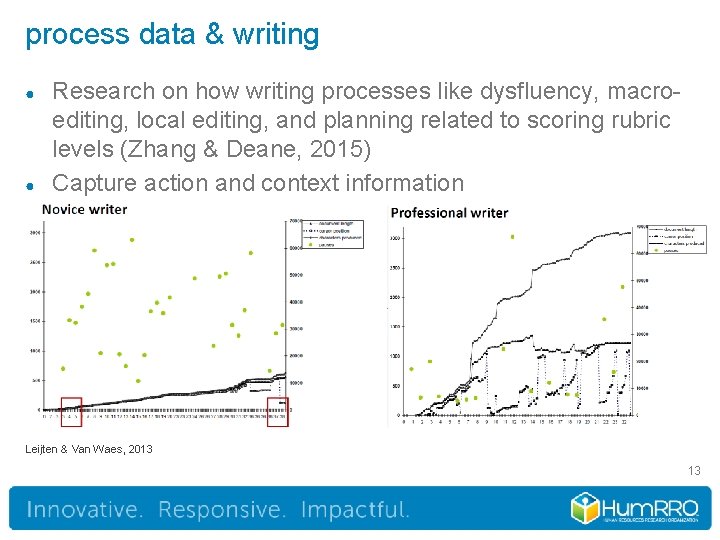

process data & writing
● Research on how writing processes like dysfluency, macroediting, local editing, and planning relate to scoring rubric levels (Zhang & Deane, 2015)
● Capture action and context information (Leijten & Van Waes, 2013)

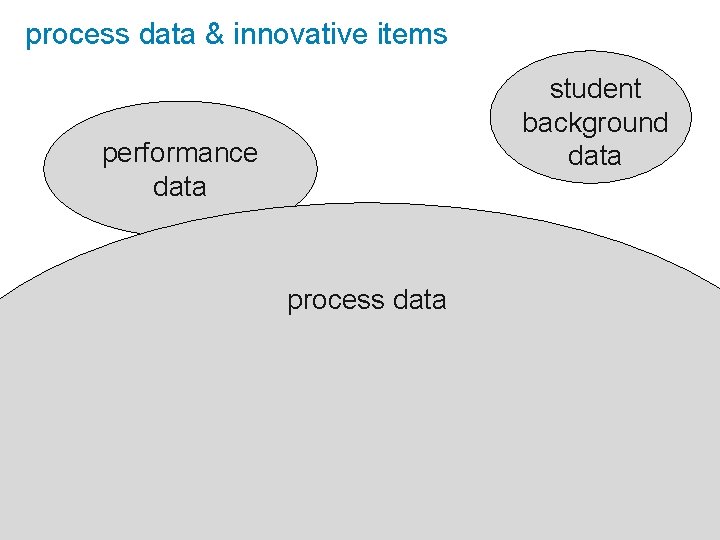

process data & innovative items
student background data
performance data
process data

New Directions for Minnesota Science Assessments
Cheryl Alcaya | Test Development Supervisor
June 28, 2017

Today…
Current assessments:
• Assessments in grades 5, 8 and high school.
• Based on academic standards adopted in 2009.
• Summative, end-of-year.
• Comprehensive (assesses grade bands for 3-5 and 6-8); high school assessment measures life science standards.
• Online, 50% TEI including simulations, 50% selected response.
1/26/2022 | Leading for educational excellence and equity, every day for every one. | education.state.mn.us

Today…
How long have we been thinking and talking about an integrated assessment system?
• It feels like forever!
Why aren’t we there yet?
• Policy issues
• Technical quality expectations
• Local control
• Etc.

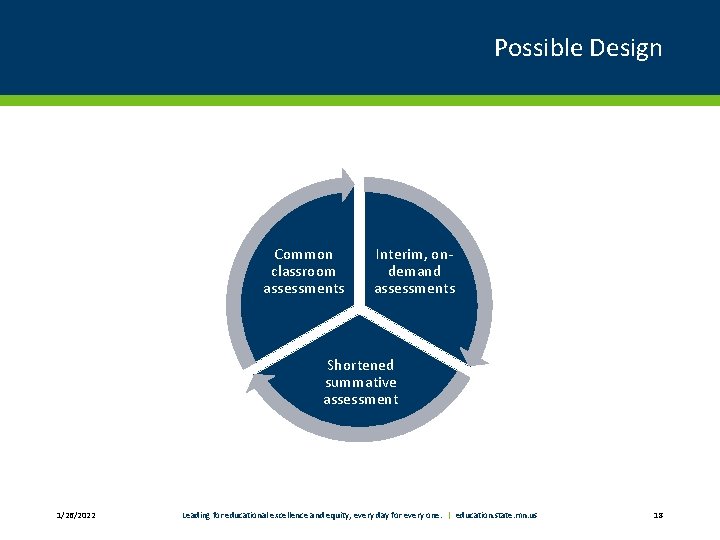

Possible Design
Common classroom assessments
Interim, on-demand assessments
Shortened summative assessment

Tomorrow…
• Minnesota’s academic standards for Science will be revised in 2018-2019.
• Next Generation Science Standards? NGSS-like standards?

Accountability Assessment in a Multi-Dimensional World
• Can rich simulations and click-stream technology help us measure multidimensional learning (and still pass peer review)?
• Highlight Science and Engineering Practices—conduct experiments without lab equipment.
• Highlight inquiry—provide data on students’ inferencing skills.
• Explore scientific phenomena otherwise inaccessible to students.

Pilot test of rich simulation tool
• School year 2017-2018.
• Instruments are primarily teaching and learning tools.
• Teachers can administer the “tests” on-demand—at the conclusion of a related unit of instruction.
• Teachers have access to student profiles, with a goal to allow for more targeted intervention and enrichment.


The Policy Perspective of Process Data
Jennifer Dugan | Director, Statewide Testing

Policy Considerations
• Perception of collecting too much data?
• Subjects and grade levels?
• All?
• Only those best suited?
• Impact to program
• Educator buy-in
• Fiscal?
• Timing is everything

Can Process Data Lead to a Deeper Understanding of Student Performance?
Presenter: Monica Gribben, HumRRO
June 28, 2017
Headquarters: 66 Canal Center Plaza, Suite 700, Alexandria, VA 22314-1578 | Phone: 703.549.3611 | www.humrro.org

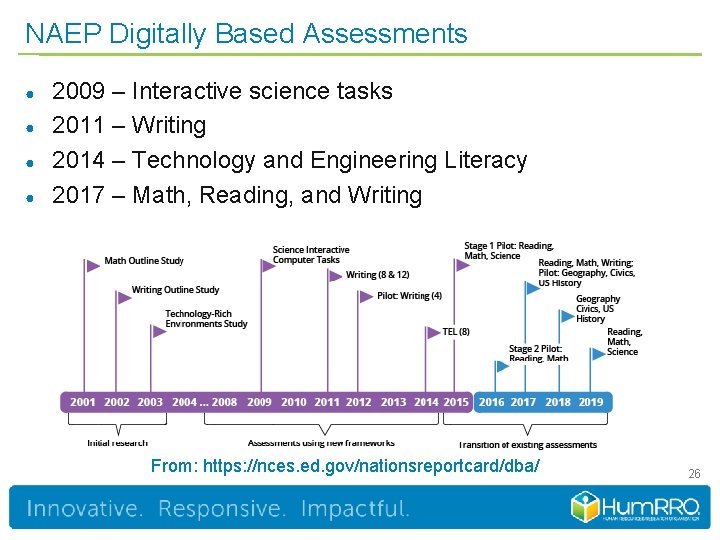

NAEP Digitally Based Assessments
● 2009 – Interactive science tasks
● 2011 – Writing
● 2014 – Technology and Engineering Literacy
● 2017 – Math, Reading, and Writing
From: https://nces.ed.gov/nationsreportcard/dba/

Overview
● Why did we explore the use of process data for NAEP?
● What did we do?
● How can you use process data?
● What do you need to know to collect and use process data?

Why is the NAEP program interested in process data?
● Leverage the use of technology
● Inform item development
● Improve the student experience
● Improve understanding of student performance

Methodology
● Literature Review
– Student assessment
– Education software
– Gaming and simulation
– Response time
– Eye tracking
– Data logging
● Interviews
● Expert Review

Findings
● Using process data in large-scale assessments
– PISA exploratory research and secondary reporting
– Educational software, gaming, and research
● Using process data to inform NAEP item development and assessment design
– Student interactions versus expectations
– Accommodations use versus expectations
– Universal design features and tutorial or system design
● Using process data to inform scoring and reporting
– Secondary reports

Findings (continued)
● Using task or process models
– Answer specific research-based questions
– Education software/games - specific environments and focused questions
● Differences by NAEP subject
– Obvious elements (calculator, equation editor, passage navigation)
● Differences by NAEP item type
– No patterns

Recommendations for Using Process Data
● Collect and store all process data elements
● Use cognitive expectations to develop models
● Identify meaningful sets of data elements
● Look for patterns
● Provide evidence of validity
● Understand use of accommodations better
● Develop task models
● Improve the usability of the assessment platform

Advancing the use of process data
● Recognize that using process data requires resources
● Develop a common language
● Share resources

Questions

- Slides: 34