Stereo Vision CS 485/685 Computer Vision Prof. Bebis

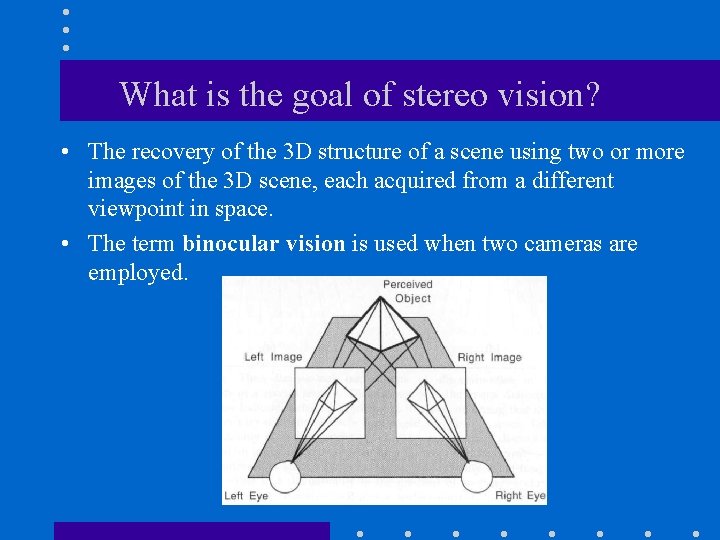

What is the goal of stereo vision? • The recovery of the 3D structure of a scene using two or more images of the scene, each acquired from a different viewpoint in space. • The term binocular vision is used when two cameras are employed.

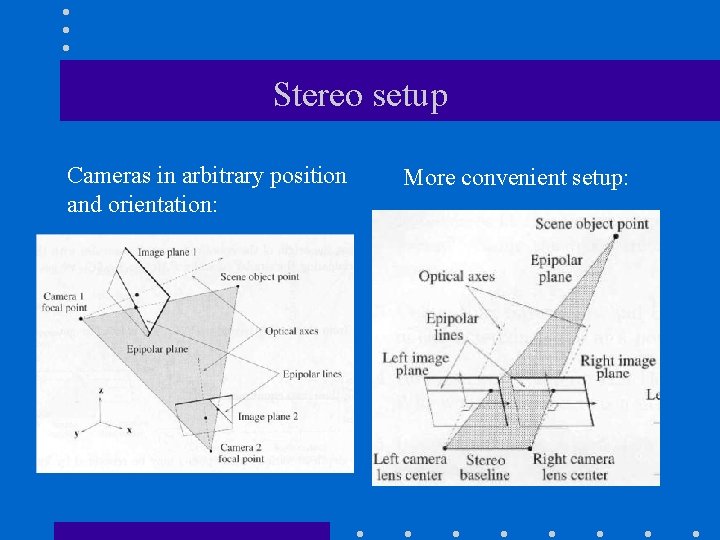

Stereo setup Cameras in arbitrary position and orientation: More convenient setup:

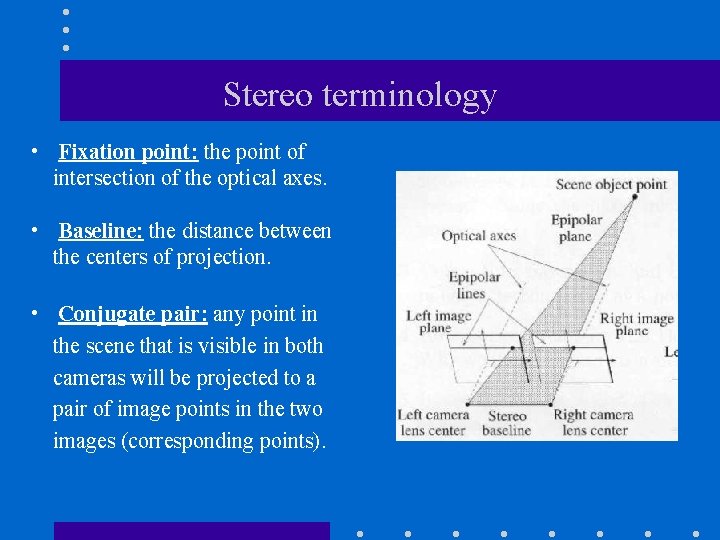

Stereo terminology • Fixation point: the point of intersection of the optical axes. • Baseline: the distance between the centers of projection. • Conjugate pair: any point in the scene that is visible in both cameras will be projected to a pair of image points in the two images (corresponding points).

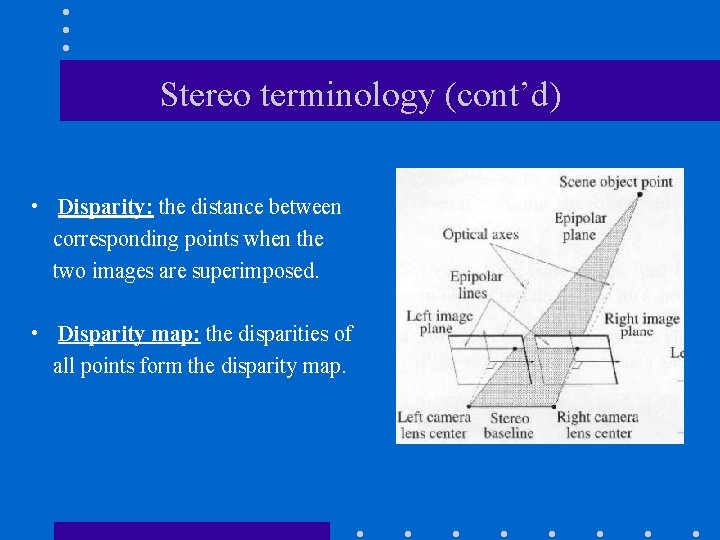

Stereo terminology (cont’d) • Disparity: the distance between corresponding points when the two images are superimposed. • Disparity map: the disparities of all points form the disparity map.

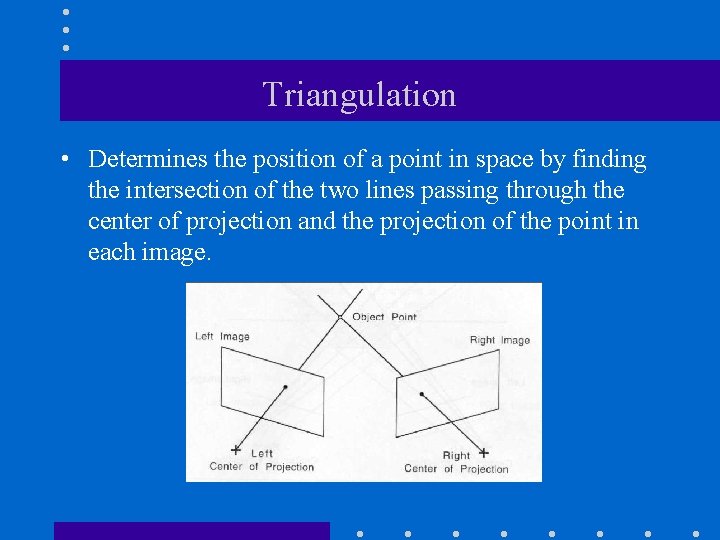

Triangulation • Determines the position of a point in space by finding the intersection of the two lines passing through the center of projection and the projection of the point in each image.

The two problems of stereo (1) The correspondence problem. (2) The reconstruction problem.

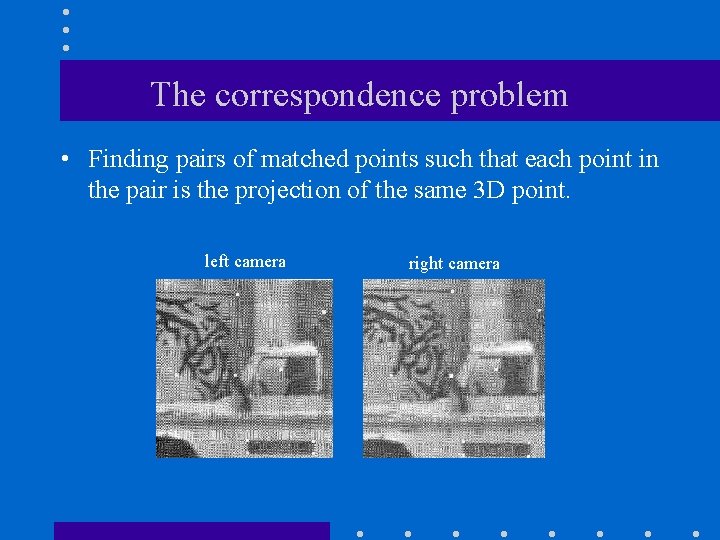

The correspondence problem • Finding pairs of matched points such that each point in the pair is the projection of the same 3D point. (Figure labels: left camera, right camera.)

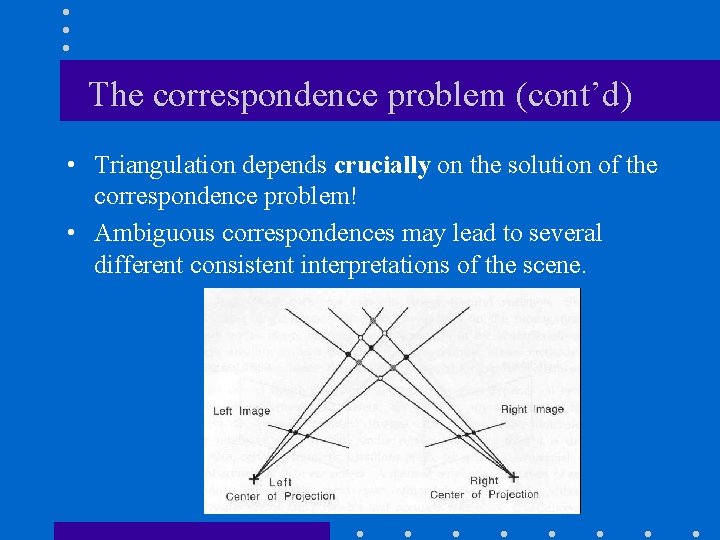

The correspondence problem (cont’d) • Triangulation depends crucially on the solution of the correspondence problem! • Ambiguous correspondences may lead to several different consistent interpretations of the scene.

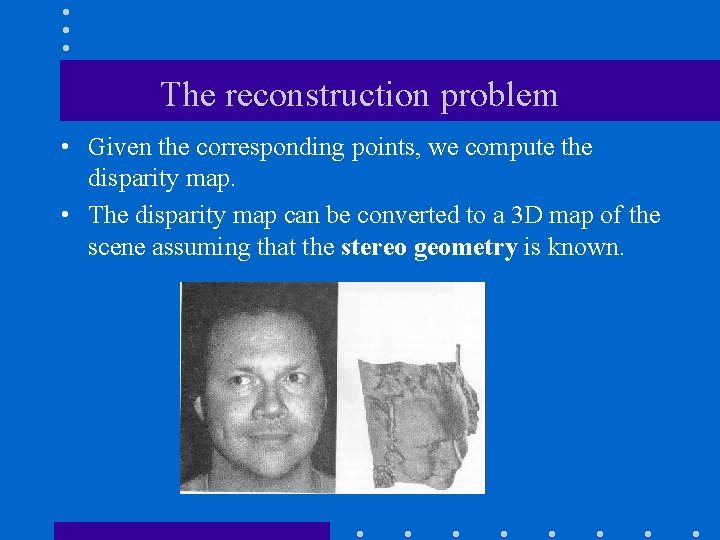

The reconstruction problem • Given the corresponding points, we compute the disparity map. • The disparity map can be converted to a 3D map of the scene, assuming that the stereo geometry is known.

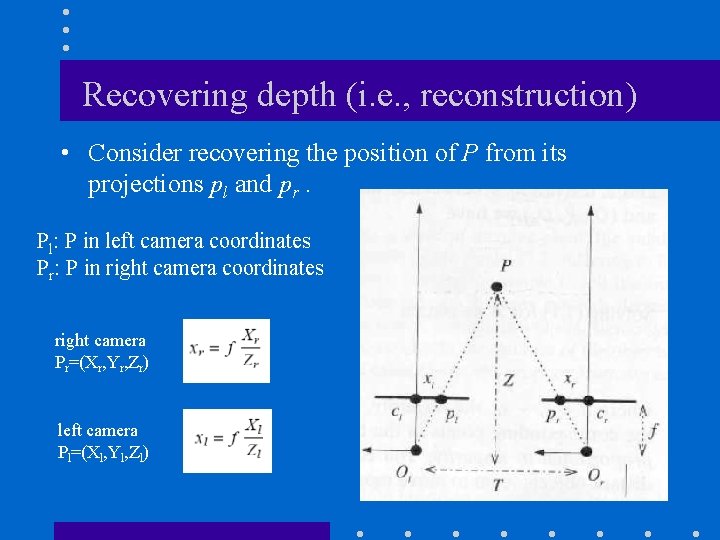

Recovering depth (i.e., reconstruction) • Consider recovering the position of P from its projections $p_l$ and $p_r$. • $P_l = (X_l, Y_l, Z_l)$: P in left camera coordinates. • $P_r = (X_r, Y_r, Z_r)$: P in right camera coordinates.

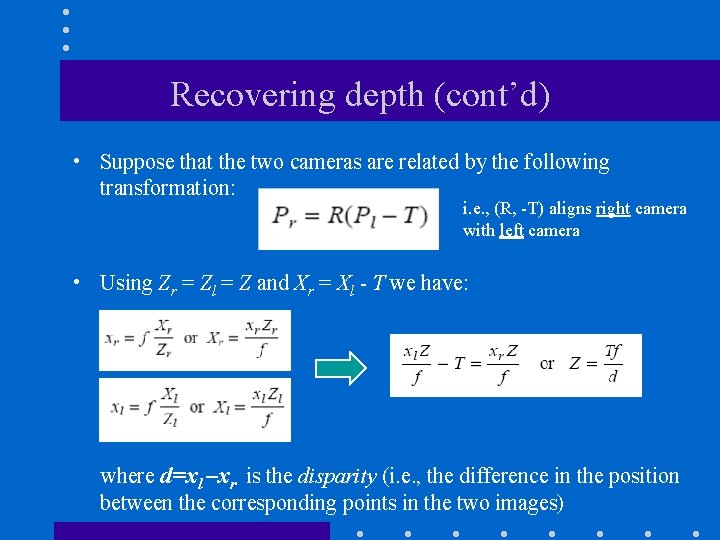

Recovering depth (cont’d) • Suppose that the two cameras are related by the following transformation: $P_r = R(P_l - T)$, i.e., (R, -T) aligns the right camera with the left camera. • Using $Z_r = Z_l = Z$ and $X_r = X_l - T$, together with the projections $x_l = f X_l / Z$ and $x_r = f X_r / Z$, we have: $Z = fT/d$, where $d = x_l - x_r$ is the disparity (i.e., the difference in position between the corresponding points in the two images).
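The depth relation above, $Z = fT/d$, can be sketched in a few lines. This is a minimal illustration that assumes parallel cameras, a focal length and image coordinates in the same units (e.g., pixels), and a baseline in scene units; the function name and the choice to return infinity for non-positive disparities are assumptions made for this example.

```python
import numpy as np

def depth_from_disparity(x_left, x_right, focal_length, baseline):
    """Depth Z = f * T / d for parallel cameras, with d = x_left - x_right."""
    d = np.asarray(x_left, dtype=float) - np.asarray(x_right, dtype=float)
    with np.errstate(divide="ignore", invalid="ignore"):
        z = focal_length * baseline / d
    # A non-positive disparity has no finite depth in this setup.
    return np.where(d > 0, z, np.inf)

# A point seen at x = 12 (left) and x = 2 (right), f = 500, T = 0.1
z = depth_from_disparity(12.0, 2.0, focal_length=500.0, baseline=0.1)
```

Because depth is inversely proportional to disparity, a fixed matching error of one pixel causes a larger depth error for distant points than for near ones.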

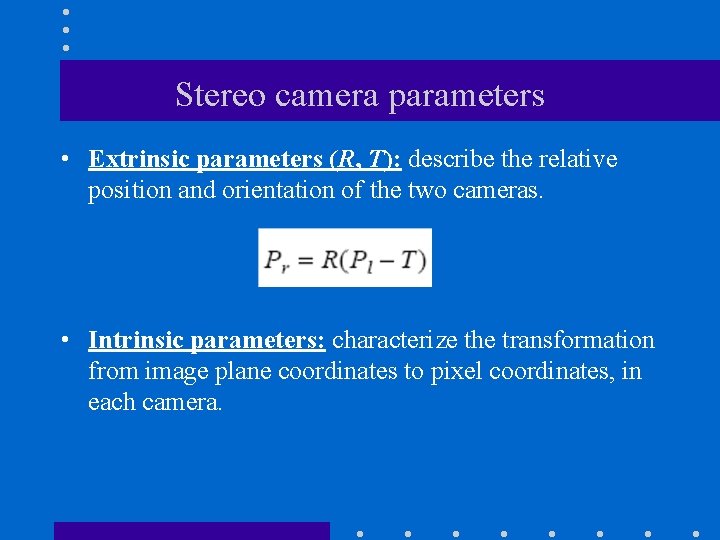

Stereo camera parameters • Extrinsic parameters (R, T): describe the relative position and orientation of the two cameras. • Intrinsic parameters: characterize the transformation from image plane coordinates to pixel coordinates, in each camera.

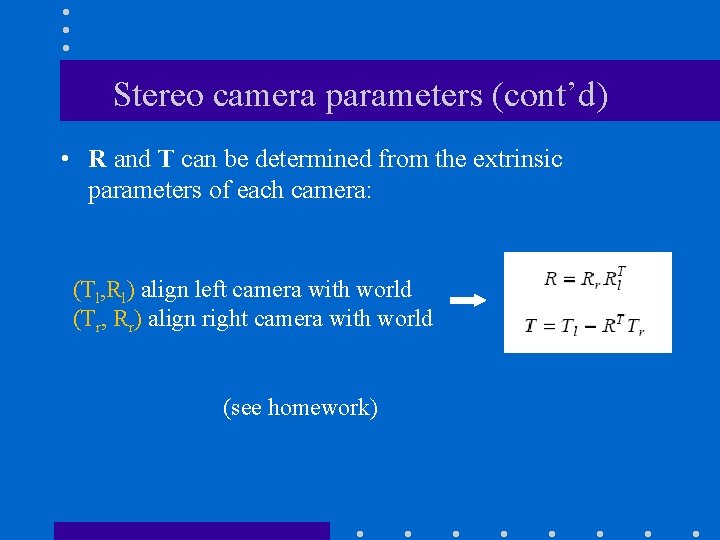

Stereo camera parameters (cont’d) • R and T can be determined from the extrinsic parameters of each camera: (Tl, Rl) align left camera with world (Tr, Rr) align right camera with world (see homework)

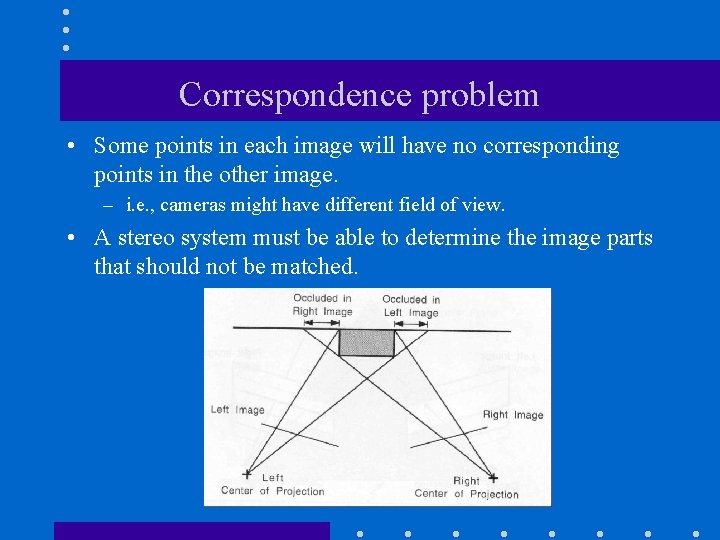

Correspondence problem • Some points in each image will have no corresponding points in the other image. – i.e., the cameras might have different fields of view. • A stereo system must be able to determine the image parts that should not be matched.

Correspondence problem (cont’d) • Two main approaches: – Intensity-based: attempt to establish a correspondence by matching image intensities. – Feature-based: attempt to establish a correspondence by matching a sparse set of image features.

Intensity-based Methods • Match image sub-windows between the two images (e.g., using correlation). (Figure labels: left, right.)

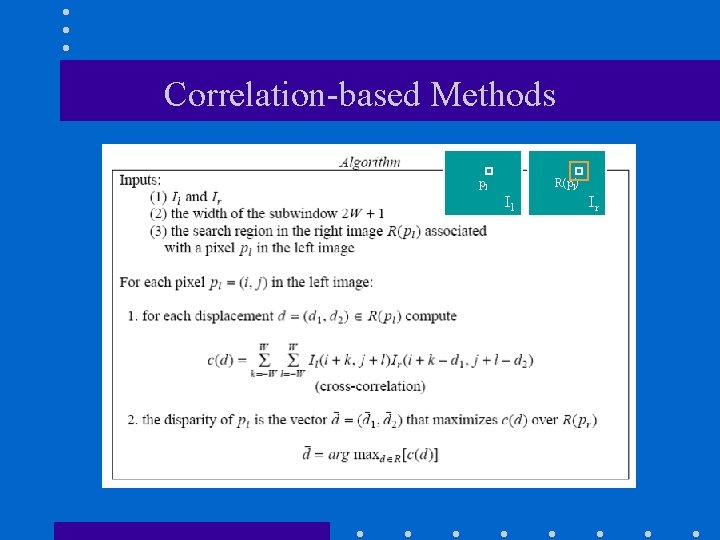

Correlation-based Methods pl R(pl) Il Ir

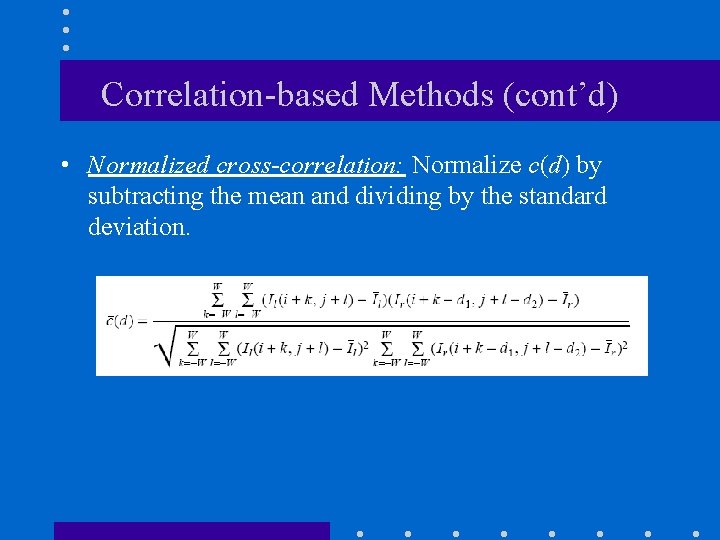

Correlation-based Methods (cont’d) • Normalized cross-correlation: Normalize c(d) by subtracting the mean and dividing by the standard deviation.
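A minimal sketch of window matching with normalized cross-correlation follows. It assumes grayscale images stored as 2D NumPy arrays and, for brevity, a search region restricted to the same row of the right image (the general region R(pl) is two-dimensional); the function names and the `max_disp` parameter are illustrative, not taken from the slides.

```python
import numpy as np

def ncc(a, b, eps=1e-12):
    """Normalized cross-correlation of two equally sized windows, in [-1, 1]."""
    a = a.astype(float) - a.mean()
    b = b.astype(float) - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    # Flat (textureless) windows have zero variance; score them as 0.
    return float((a * b).sum() / denom) if denom > eps else 0.0

def match_along_row(left, right, row, col, half_win, max_disp):
    """Find the disparity d in [0, max_disp] whose right-image window,
    centered at (row, col - d), best matches the left window at (row, col)."""
    w = half_win
    ref = left[row - w: row + w + 1, col - w: col + w + 1]
    best_d, best_score = 0, -np.inf
    for d in range(max_disp + 1):
        c = col - d
        if c - w < 0:          # window would leave the image
            break
        cand = right[row - w: row + w + 1, c - w: c + w + 1]
        score = ncc(ref, cand)
        if score > best_score:
            best_d, best_score = d, score
    return best_d, best_score
```

Subtracting the window mean and dividing by the standard deviation makes the score insensitive to gain and offset changes in brightness between the two cameras.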

Correlation-based Methods (cont’d) • The success of correlation-based methods depends on whether the image window in one image exhibits a distinctive structure that occurs infrequently in the search region of the other image. (Figure labels: left, right.)

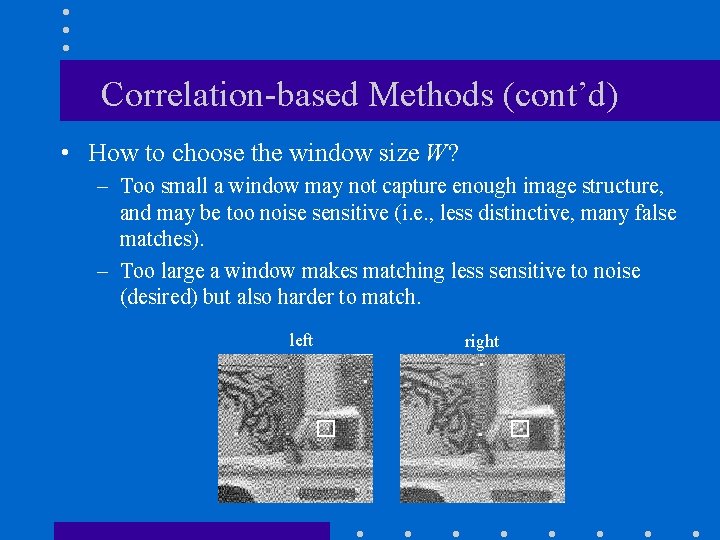

Correlation-based Methods (cont’d) • How to choose the window size W? – Too small a window may not capture enough image structure, and may be too noise sensitive (i.e., less distinctive, many false matches). – Too large a window makes matching less sensitive to noise (desired) but also harder to match. (Figure labels: left, right.)

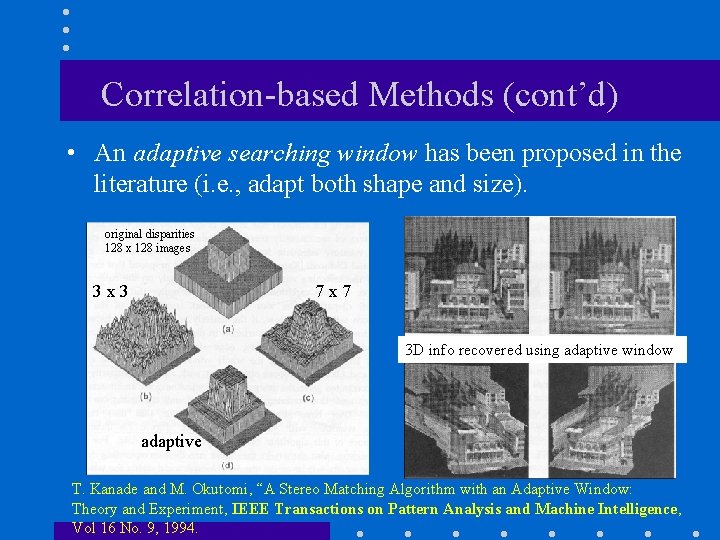

Correlation-based Methods (cont’d) • An adaptive search window has been proposed in the literature (i.e., adapt both shape and size). (Figure panels: original; disparities for 128 x 128 images using 3 x 3, 7 x 7, and adaptive windows; 3D info recovered using the adaptive window.) T. Kanade and M. Okutomi, “A Stereo Matching Algorithm with an Adaptive Window: Theory and Experiment”, IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 16, No. 9, 1994.

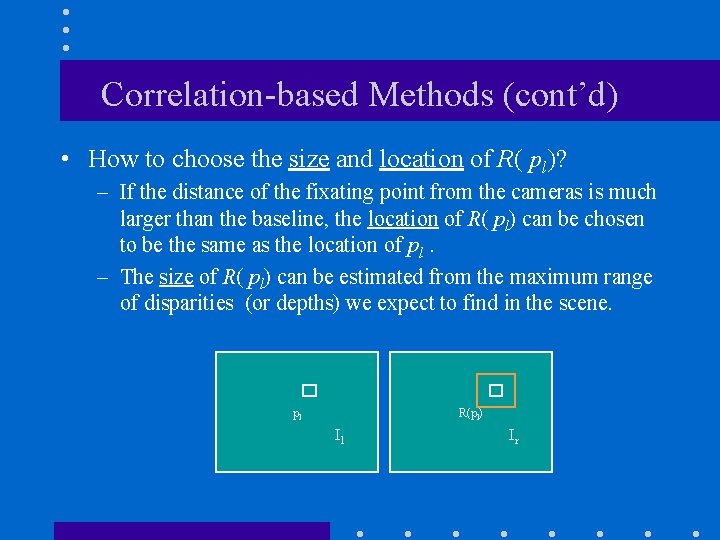

Correlation-based Methods (cont’d) • How to choose the size and location of R(pl)? – If the distance of the fixation point from the cameras is much larger than the baseline, the location of R(pl) can be chosen to be the same as the location of pl. – The size of R(pl) can be estimated from the maximum range of disparities (or depths) we expect to find in the scene. (Figure labels: pl, R(pl), Il, Ir.)

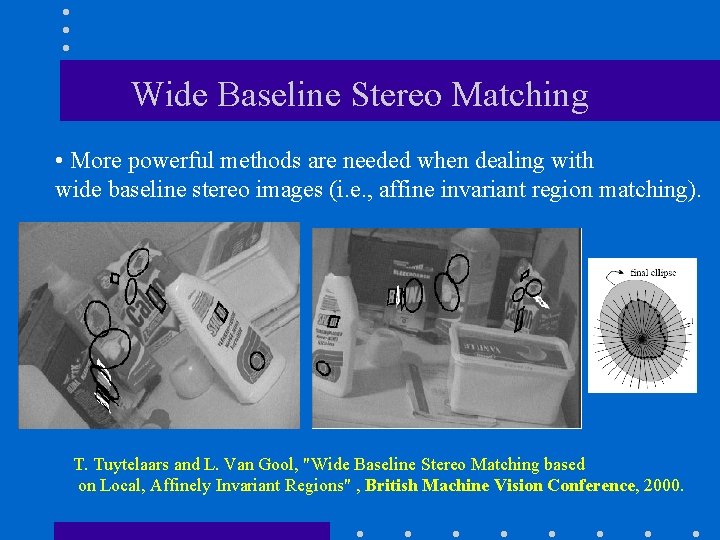

Wide Baseline Stereo Matching • More powerful methods are needed when dealing with wide baseline stereo images (i.e., affine invariant region matching). T. Tuytelaars and L. Van Gool, "Wide Baseline Stereo Matching based on Local, Affinely Invariant Regions", British Machine Vision Conference, 2000.

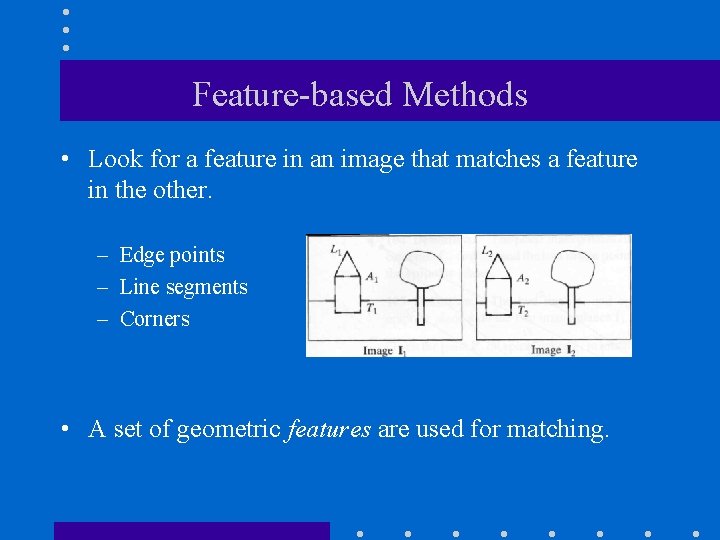

Feature-based Methods • Look for a feature in one image that matches a feature in the other. – Edge points – Line segments – Corners • A set of geometric features is used for matching.

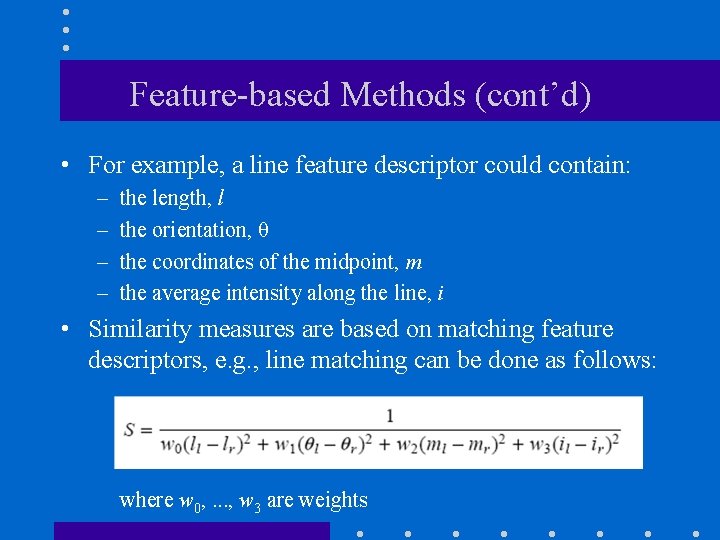

Feature-based Methods (cont’d) • For example, a line feature descriptor could contain: – the length, l – the orientation, θ – the coordinates of the midpoint, m – the average intensity along the line, i • Similarity measures are based on matching feature descriptors, e.g., line matching can be done as follows: $S = \frac{1}{w_0 (l_l - l_r)^2 + w_1 (\theta_l - \theta_r)^2 + w_2 \|m_l - m_r\|^2 + w_3 (i_l - i_r)^2}$, where $w_0, \ldots, w_3$ are weights.
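As an illustration of descriptor-based matching, the sketch below scores two line features with an inverse weighted sum of squared descriptor differences, using weights $w_0, \ldots, w_3$ as in the slide. The exact functional form, the `LineFeature` container, and the small `eps` that avoids division by zero for identical descriptors are assumptions made for this example.

```python
import numpy as np
from dataclasses import dataclass

@dataclass
class LineFeature:
    length: float        # l
    orientation: float   # theta, in radians
    midpoint: tuple      # m = (x, y)
    intensity: float     # i, average intensity along the line

def line_similarity(a, b, weights=(1.0, 1.0, 1.0, 1.0), eps=1e-9):
    """Higher score means more similar descriptors (assumed form)."""
    w0, w1, w2, w3 = weights
    dm = np.subtract(a.midpoint, b.midpoint)
    cost = (w0 * (a.length - b.length) ** 2
            + w1 * (a.orientation - b.orientation) ** 2
            + w2 * float(dm @ dm)
            + w3 * (a.intensity - b.intensity) ** 2)
    return 1.0 / (cost + eps)

left_line = LineFeature(40.0, 0.30, (100.0, 50.0), 120.0)
good = LineFeature(41.0, 0.31, (96.0, 50.0), 118.0)
bad = LineFeature(15.0, 1.20, (30.0, 90.0), 60.0)
scores = [line_similarity(left_line, c) for c in (good, bad)]
```

In practice the weights would be tuned so that differences in pixels, radians, and intensity levels contribute on comparable scales.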

Intensity-based vs feature-based approaches • Intensity-based methods – Provide a dense disparity map. – Need textured images to work well. – Sensitive to illumination changes. • Feature-based methods: – Faster than correlation-based methods. – Provide sparse disparity maps. – Relatively insensitive to illumination changes.

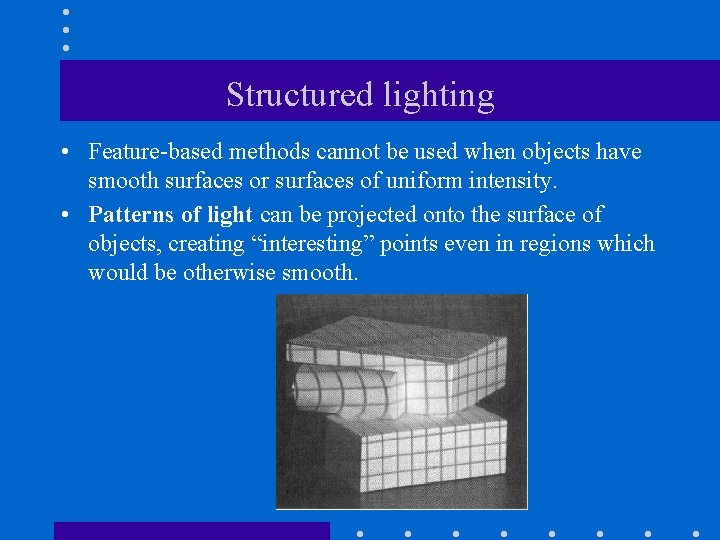

Structured lighting • Feature-based methods cannot be used when objects have smooth surfaces or surfaces of uniform intensity. • Patterns of light can be projected onto the surface of objects, creating “interesting” points even in regions which would be otherwise smooth.

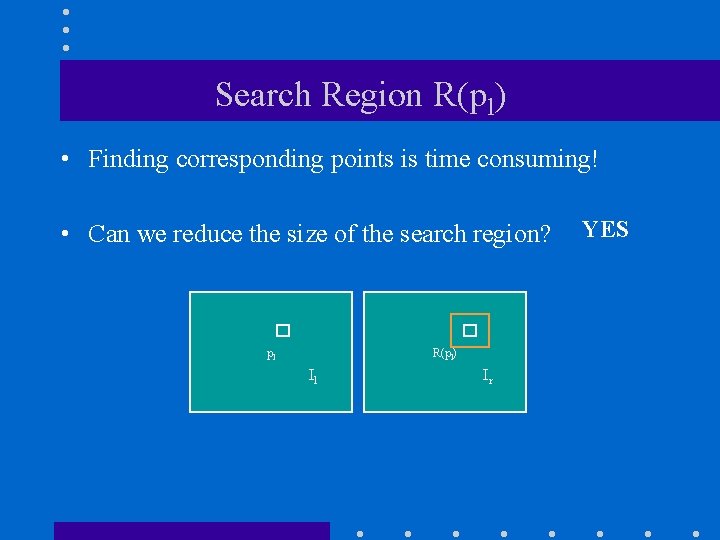

Search Region R(pl) • Finding corresponding points is time consuming! • Can we reduce the size of the search region? YES (Figure labels: pl, R(pl), Il, Ir.)

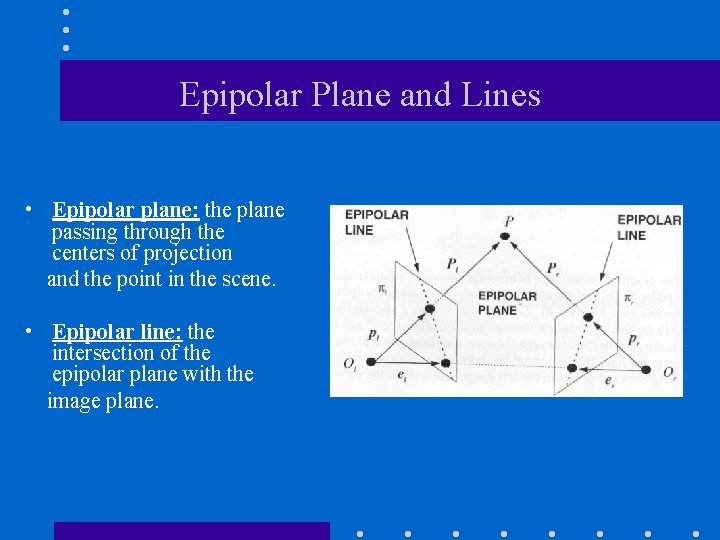

Epipolar Plane and Lines • Epipolar plane: the plane passing through the centers of projection and the point in the scene. • Epipolar line: the intersection of the epipolar plane with the image plane.

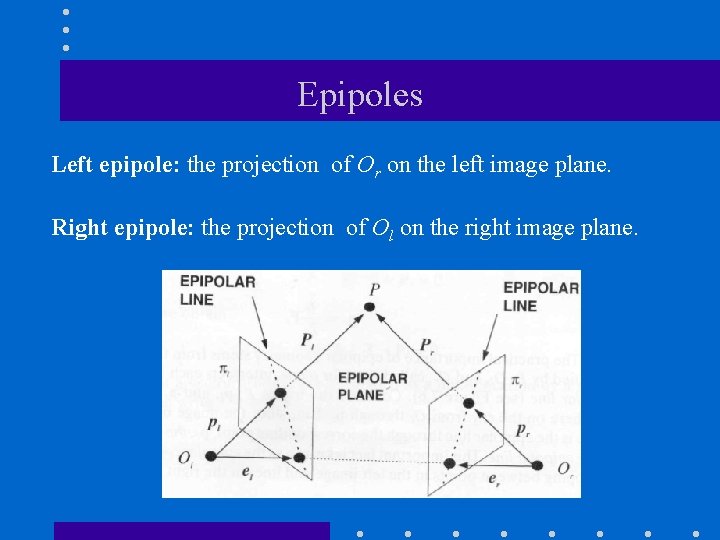

Epipoles Left epipole: the projection of Or on the left image plane. Right epipole: the projection of Ol on the right image plane.

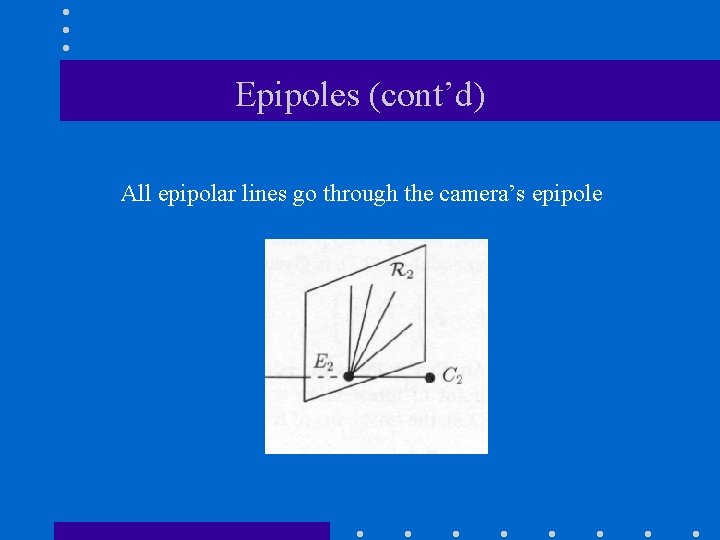

Epipoles (cont’d) All epipolar lines go through the camera’s epipole

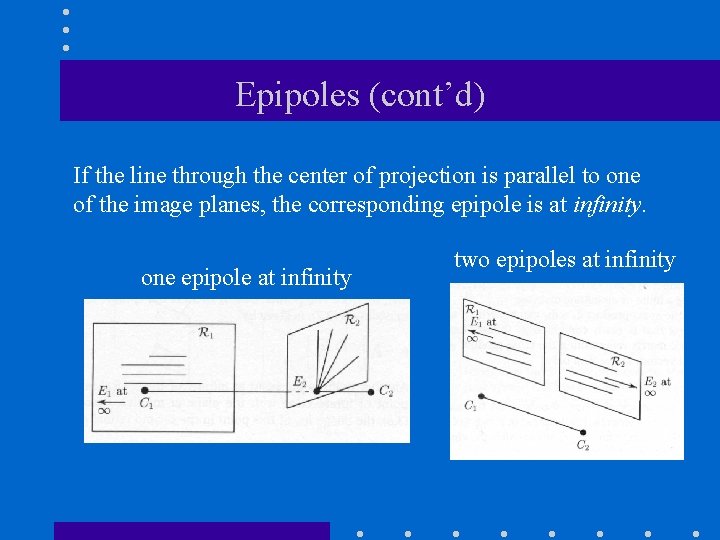

Epipoles (cont’d) • If the line through the centers of projection (the baseline) is parallel to one of the image planes, the corresponding epipole is at infinity. (Figure panels: one epipole at infinity; two epipoles at infinity.)

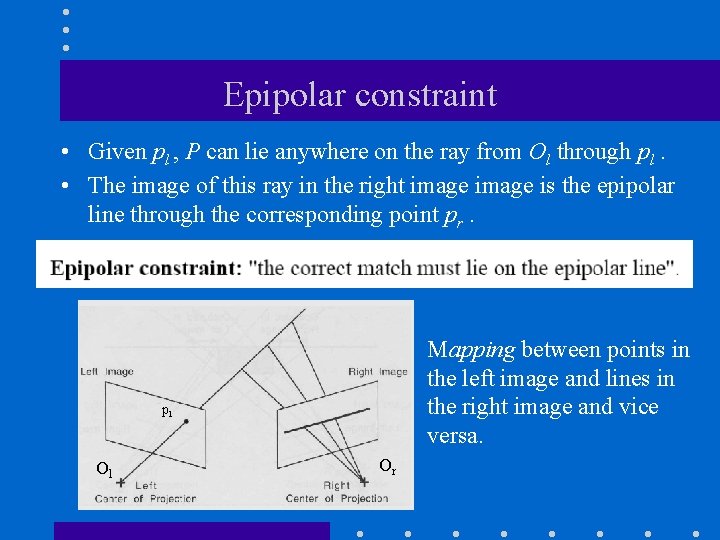

Epipolar constraint • Given pl, P can lie anywhere on the ray from Ol through pl. • The image of this ray in the right image is the epipolar line through the corresponding point pr. • This gives a mapping between points in the left image and lines in the right image, and vice versa. (Figure labels: pl, Ol, Or.)

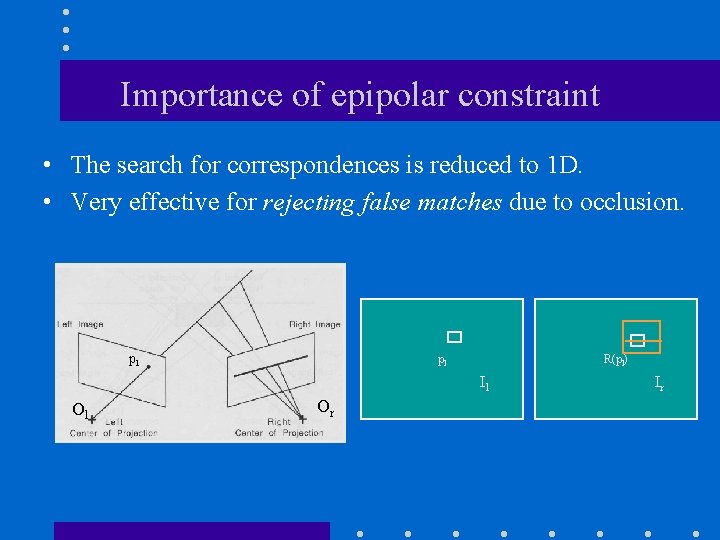

Importance of epipolar constraint • The search for correspondences is reduced to 1D. • Very effective for rejecting false matches due to occlusion. (Figure labels: pl, R(pl), Il, Ir, Ol, Or.)

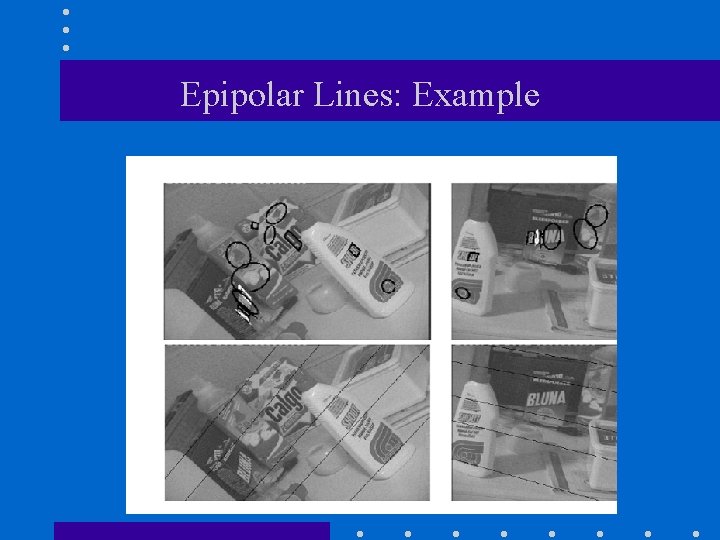

Epipolar Lines: Example

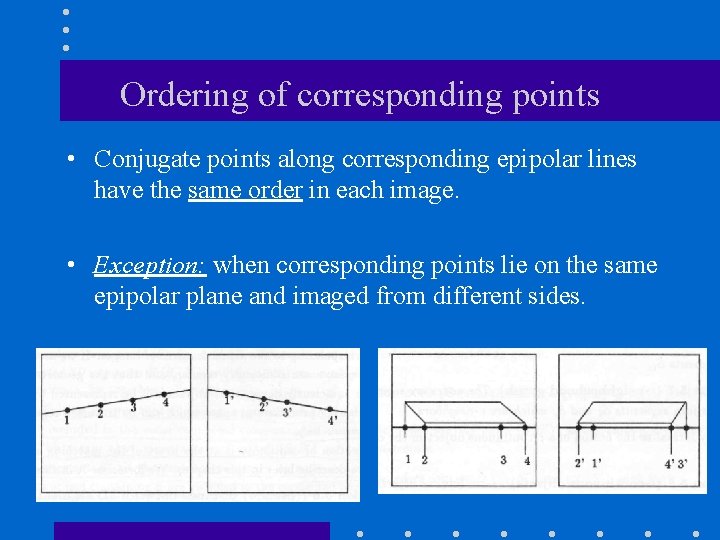

Ordering of corresponding points • Conjugate points along corresponding epipolar lines have the same order in each image. • Exception: when corresponding points lie on the same epipolar plane and are imaged from different sides.

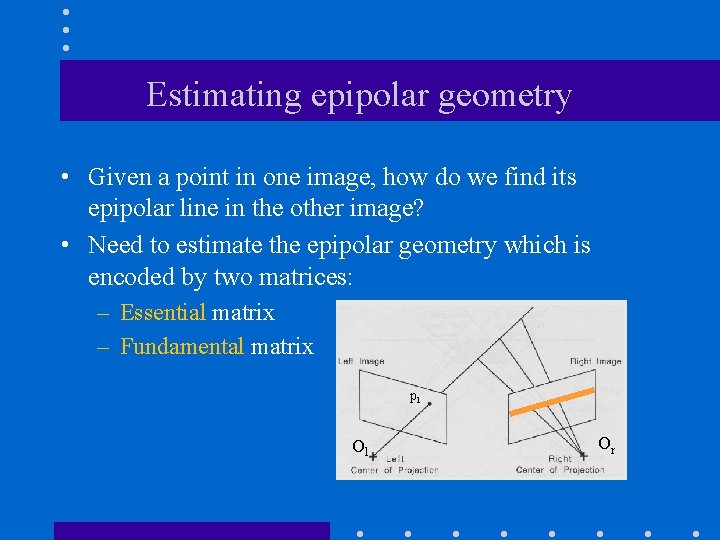

Estimating epipolar geometry • Given a point in one image, how do we find its epipolar line in the other image? • We need to estimate the epipolar geometry, which is encoded by two matrices: – Essential matrix – Fundamental matrix (Figure labels: pl, Ol, Or.)

Quick Review • Cross product • Homogeneous representation of lines

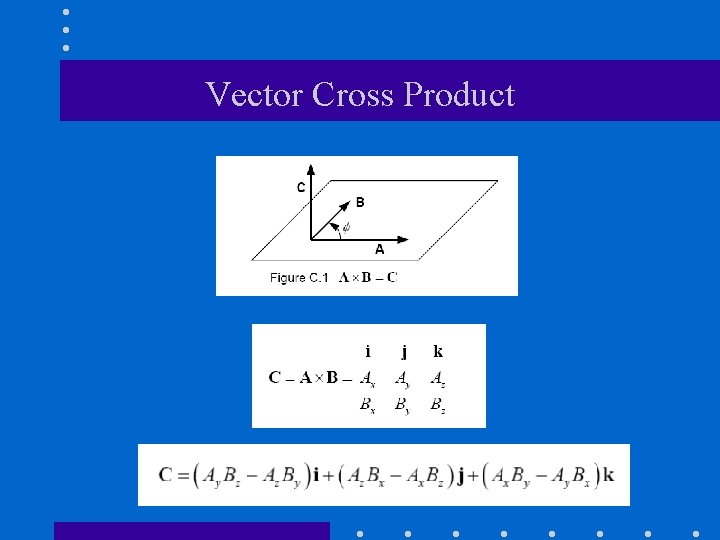

Vector Cross Product

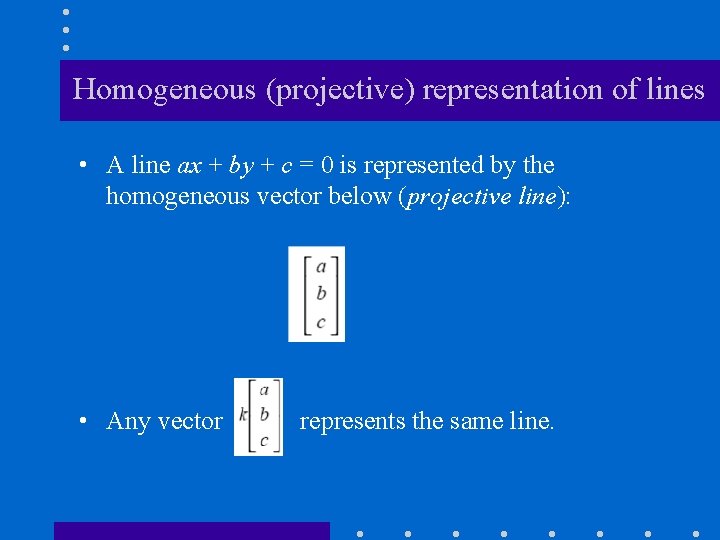

Homogeneous (projective) representation of lines • A line ax + by + c = 0 is represented by the homogeneous vector $l = (a, b, c)^T$ (projective line). • Any vector $k\,(a, b, c)^T$ with $k \neq 0$ represents the same line.

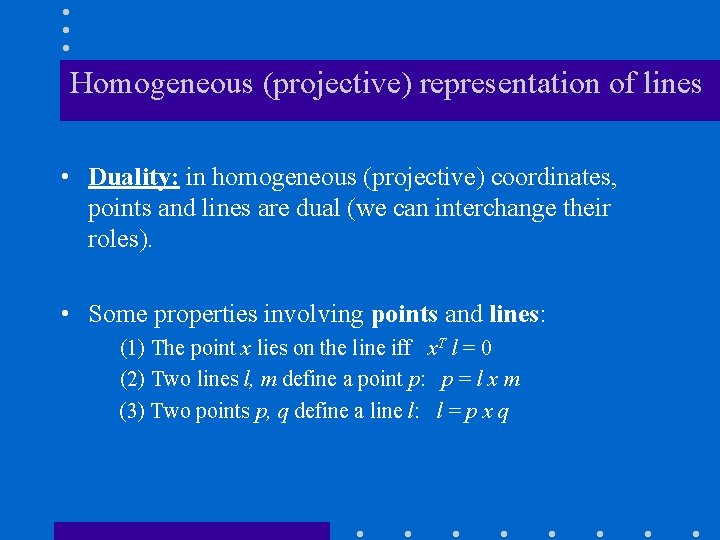

Homogeneous (projective) representation of lines • Duality: in homogeneous (projective) coordinates, points and lines are dual (we can interchange their roles). • Some properties involving points and lines: (1) The point x lies on the line l iff $x^T l = 0$ (2) Two lines l, m define a point p: $p = l \times m$ (3) Two points p, q define a line l: $l = p \times q$

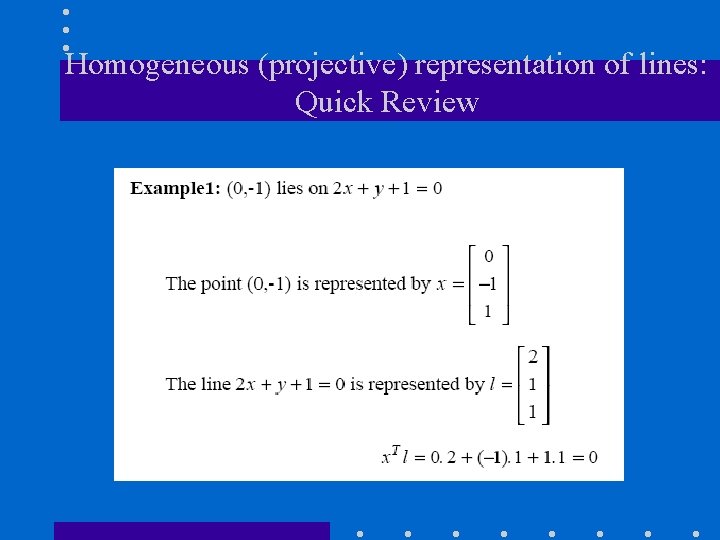

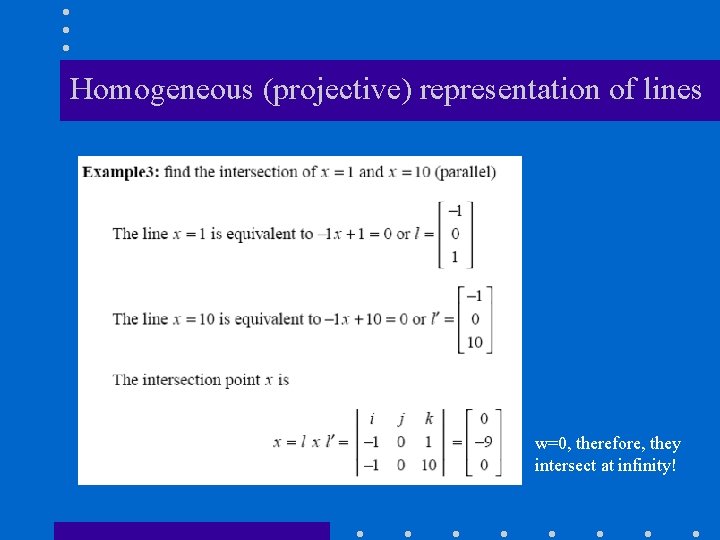

Homogeneous (projective) representation of lines: Quick Review

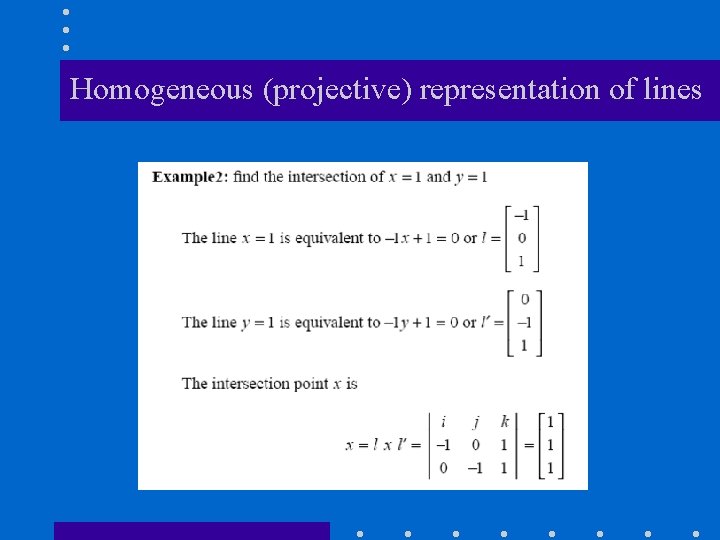

Homogeneous (projective) representation of lines

Homogeneous (projective) representation of lines w=0, therefore, they intersect at infinity!
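The point and line properties from the review slides can be checked numerically. This is a small sketch using NumPy's cross product; the helper names are illustrative. The last example intersects two parallel lines and obtains a point with w = 0, i.e., a point at infinity.

```python
import numpy as np

def line_through(p, q):
    """Homogeneous line through two homogeneous points: l = p x q."""
    return np.cross(p, q)

def intersection(l, m):
    """Homogeneous intersection point of two lines: p = l x m."""
    return np.cross(l, m)

def lies_on(x, l, tol=1e-9):
    """A point x lies on a line l iff x^T l = 0."""
    return abs(np.dot(x, l)) < tol

# Line through (0, 0) and (1, 1): expect -x + y = 0
diag = line_through(np.array([0.0, 0.0, 1.0]), np.array([1.0, 1.0, 1.0]))

# Intersection of y = x with the line x = 2, written as (1, 0, -2)
p = intersection(diag, np.array([1.0, 0.0, -2.0]))
p_cartesian = p[:2] / p[2]

# Parallel lines x = 0 and x = 1 meet at a point with w = 0
p_inf = intersection(np.array([1.0, 0.0, 0.0]), np.array([1.0, 0.0, -1.0]))
```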

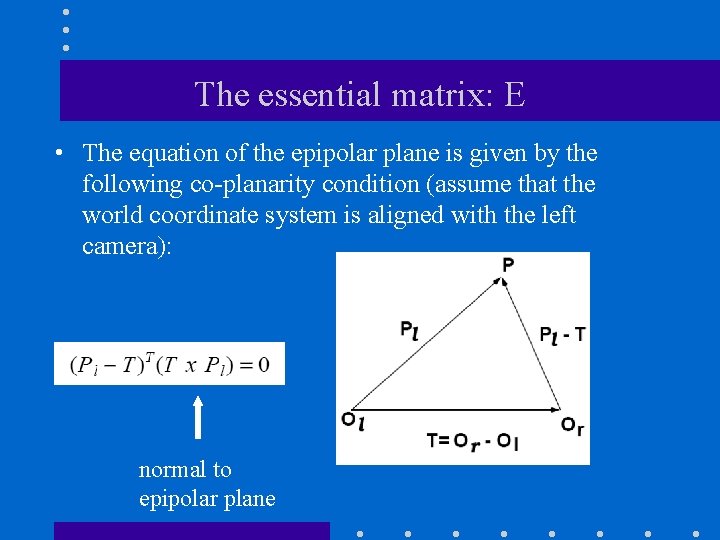

The essential matrix: E • The equation of the epipolar plane is given by the following co-planarity condition (assume that the world coordinate system is aligned with the left camera): $(P_l - T)^T (T \times P_l) = 0$, where $T \times P_l$ is normal to the epipolar plane.

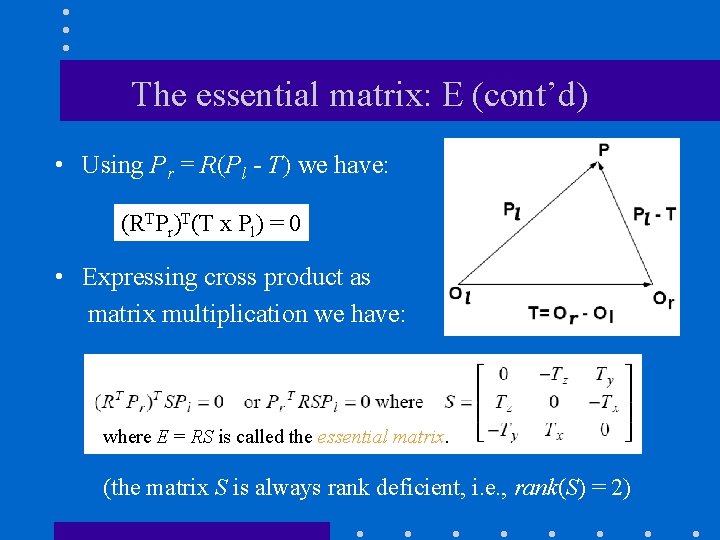

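The essential matrix: E (cont’d) • Using $P_r = R(P_l - T)$ we have: $(R^T P_r)^T (T \times P_l) = 0$ • Expressing the cross product as a matrix multiplication, $T \times P_l = S P_l$ with $S = \begin{bmatrix} 0 & -T_z & T_y \\ T_z & 0 & -T_x \\ -T_y & T_x & 0 \end{bmatrix}$, we have: $P_r^T R S P_l = 0$, i.e., $P_r^T E P_l = 0$, where $E = RS$ is called the essential matrix (the matrix S is always rank deficient, i.e., rank(S) = 2).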

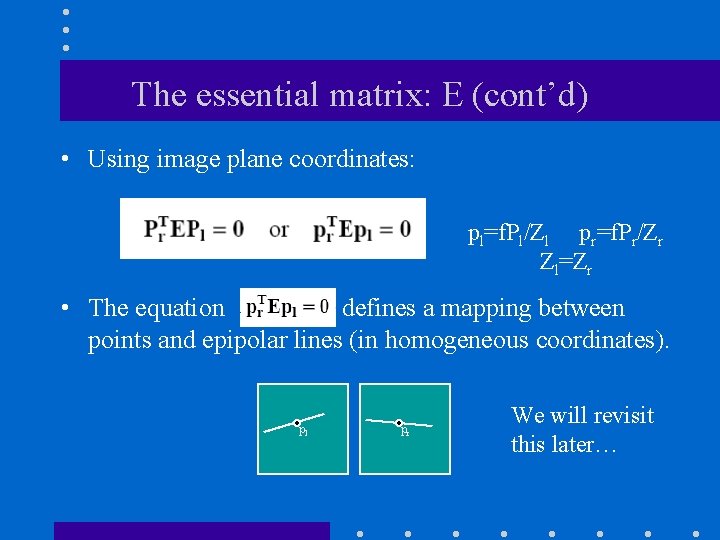

The essential matrix: E (cont’d) • Using image plane coordinates $p_l = f P_l / Z_l$ and $p_r = f P_r / Z_r$ (i.e., dividing $P_r^T E P_l = 0$ by $Z_l Z_r$): $p_r^T E p_l = 0$ • The equation defines a mapping between points and epipolar lines (in homogeneous coordinates). We will revisit this later… (Figure labels: pl, pr.)

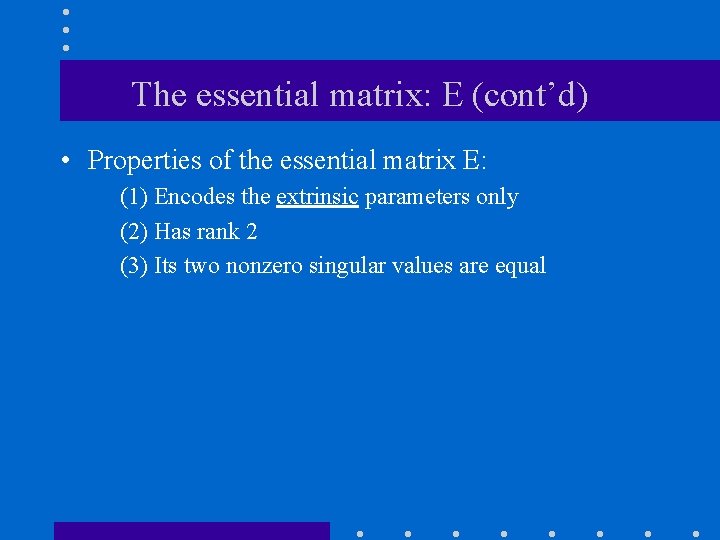

The essential matrix: E (cont’d) • Properties of the essential matrix E: (1) Encodes the extrinsic parameters only (2) Has rank 2 (3) Its two nonzero singular values are equal
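The construction $E = RS$ and the listed properties can be verified on a synthetic example. The sketch below assumes the convention $P_r = R(P_l - T)$ used in these slides; the rotation helper and the numeric values are made up for illustration.

```python
import numpy as np

def skew(t):
    """Matrix S such that S @ v equals np.cross(t, v)."""
    tx, ty, tz = t
    return np.array([[0.0, -tz, ty],
                     [tz, 0.0, -tx],
                     [-ty, tx, 0.0]])

def essential_matrix(R, T):
    """E = R S for cameras related by P_r = R (P_l - T)."""
    return R @ skew(T)

def rot_y(angle):
    """Rotation about the y axis (illustrative helper)."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])

R, T = rot_y(0.1), np.array([0.5, 0.1, 0.0])
E = essential_matrix(R, T)

P_l = np.array([0.4, -0.3, 6.0])   # scene point in the left camera frame
P_r = R @ (P_l - T)                # same point in the right camera frame
residual = P_r @ E @ P_l           # co-planarity condition, close to 0

# Rank 2 with two equal nonzero singular values (both equal to ||T||)
singular_values = np.linalg.svd(E, compute_uv=False)
```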

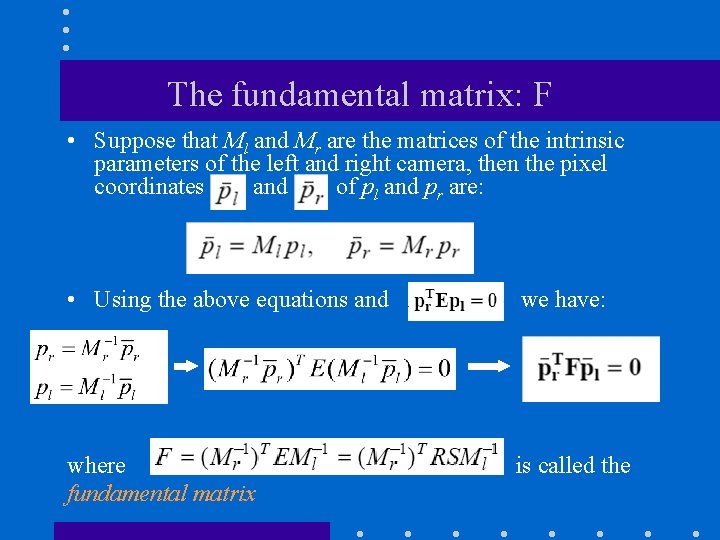

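The fundamental matrix: F • Suppose that $M_l$ and $M_r$ are the matrices of the intrinsic parameters of the left and right camera; then the pixel coordinates $\bar{p}_l$ and $\bar{p}_r$ of $p_l$ and $p_r$ are: $\bar{p}_l = M_l p_l$, $\bar{p}_r = M_r p_r$. • Using the above equations and $p_r^T E p_l = 0$ we have: $\bar{p}_r^T F \bar{p}_l = 0$, where $F = M_r^{-T} E M_l^{-1}$ is called the fundamental matrix.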

The fundamental matrix: F (cont’d) • Properties of the fundamental matrix: (1) Encodes both the extrinsic and intrinsic parameters (2) Has rank 2
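A short sketch of how the fundamental matrix follows from the essential matrix and the intrinsics. It assumes that pixel coordinates are obtained as M p with p a homogeneous image-plane point, so that $F = M_r^{-T} E M_l^{-1}$; the intrinsic values, the variable names `u_l`, `u_r`, and the helper names are illustrative.

```python
import numpy as np

def skew(t):
    """Matrix S such that S @ v equals np.cross(t, v)."""
    tx, ty, tz = t
    return np.array([[0.0, -tz, ty], [tz, 0.0, -tx], [-ty, tx, 0.0]])

def fundamental_from_essential(E, M_l, M_r):
    """F = M_r^{-T} E M_l^{-1}, so that u_r^T F u_l = 0 in pixel coordinates."""
    return np.linalg.inv(M_r).T @ E @ np.linalg.inv(M_l)

# Illustrative geometry: P_r = R (P_l - T)
c, s = np.cos(0.1), np.sin(0.1)
R = np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])
T = np.array([0.5, 0.1, 0.0])
E = R @ skew(T)

# Illustrative intrinsics (focal length in pixels, principal point)
M_l = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
M_r = np.array([[700.0, 0.0, 300.0], [0.0, 700.0, 250.0], [0.0, 0.0, 1.0]])
F = fundamental_from_essential(E, M_l, M_r)

P_l = np.array([0.4, -0.3, 6.0])
P_r = R @ (P_l - T)
u_l = M_l @ (P_l / P_l[2])   # pixel coordinates in the left image
u_r = M_r @ (P_r / P_r[2])   # pixel coordinates in the right image
residual = u_r @ F @ u_l     # close to 0 for a true correspondence
```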

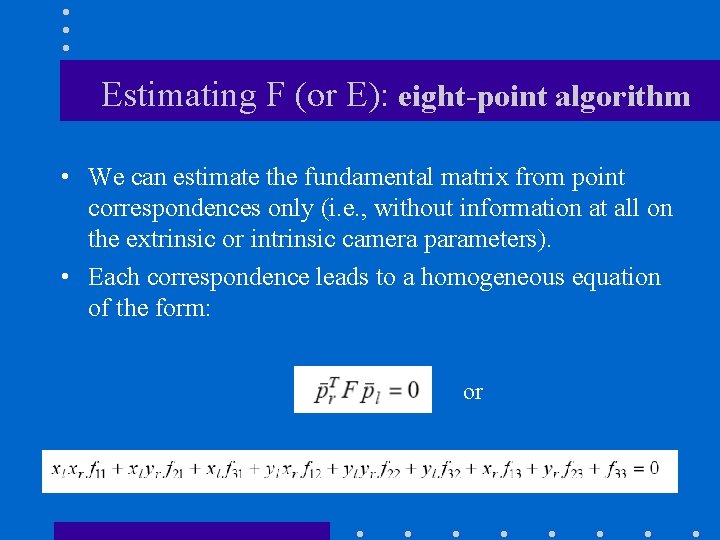

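Estimating F (or E): eight-point algorithm • We can estimate the fundamental matrix from point correspondences only (i.e., without any information on the extrinsic or intrinsic camera parameters). • Each correspondence, with pixel coordinates $\bar{p}_l = (x_l, y_l, 1)^T$ and $\bar{p}_r = (x_r, y_r, 1)^T$, leads to a homogeneous equation of the form: $\bar{p}_r^T F \bar{p}_l = 0$, or $x_r x_l f_{11} + x_r y_l f_{12} + x_r f_{13} + y_r x_l f_{21} + y_r y_l f_{22} + y_r f_{23} + x_l f_{31} + y_l f_{32} + f_{33} = 0$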

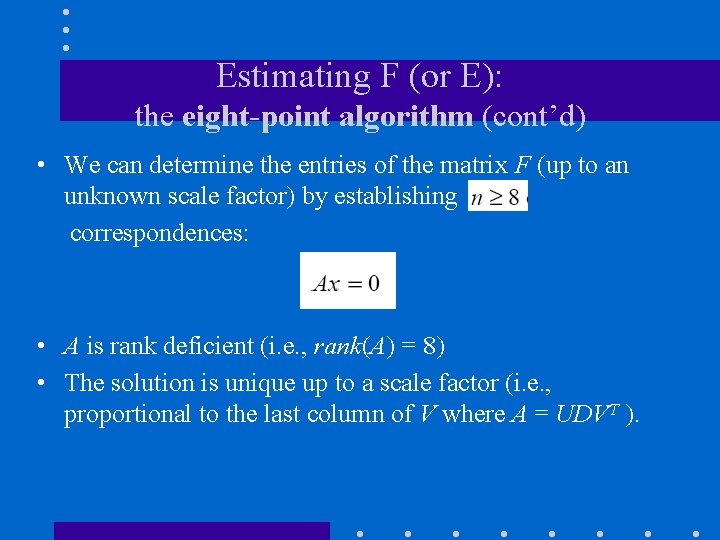

Estimating F (or E): the eight-point algorithm (cont’d) • We can determine the entries of the matrix F (up to an unknown scale factor) by establishing $n \geq 8$ correspondences: $A f = 0$, where each row of A comes from one correspondence and f holds the nine entries of F. • A is rank deficient (i.e., rank(A) = 8) • The solution is unique up to a scale factor (i.e., proportional to the last column of V, where $A = U D V^T$).

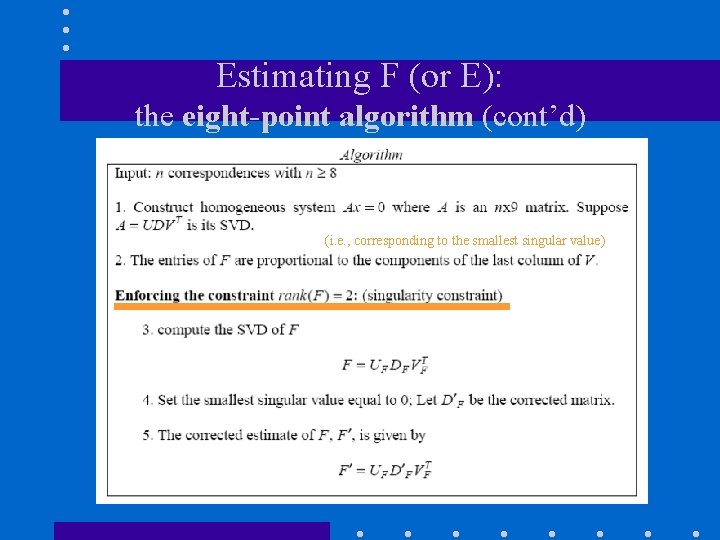

Estimating F (or E): the eight-point algorithm (cont’d) (i.e., corresponding to the smallest singular value)

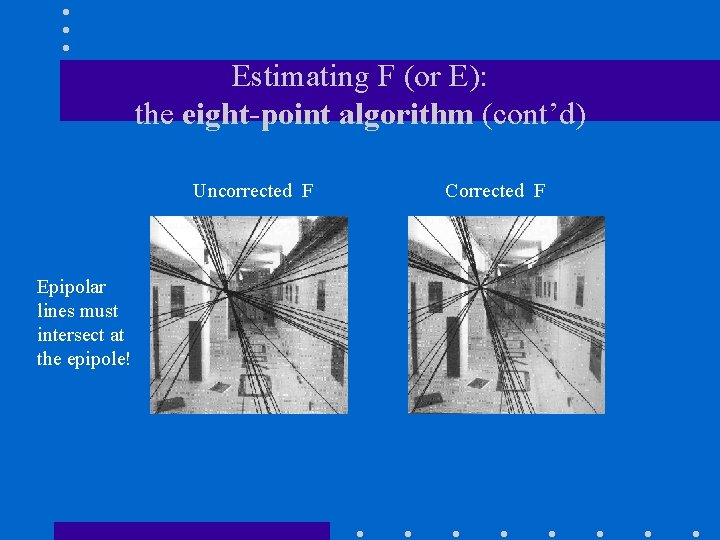

Estimating F (or E): the eight-point algorithm (cont’d) • Epipolar lines must intersect at the epipole! (Figure panels: uncorrected F; corrected F.)

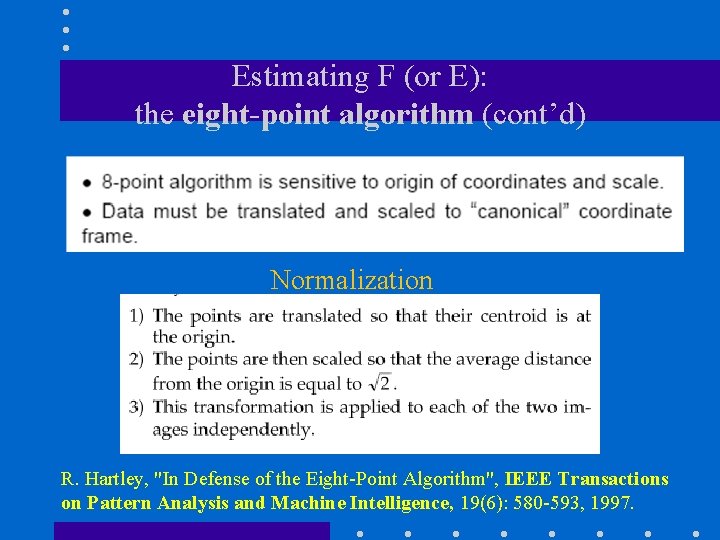

Estimating F (or E): the eight-point algorithm (cont’d) • Normalization: R. Hartley, "In Defense of the Eight-Point Algorithm", IEEE Transactions on Pattern Analysis and Machine Intelligence, 19(6): 580-593, 1997.
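The steps of the eight-point algorithm (build A, take the singular vector of the smallest singular value, enforce rank 2, and normalize the input points) can be sketched as follows. This is a minimal noise-free illustration, not a robust implementation; it assumes the convention $\bar{p}_r^T F \bar{p}_l = 0$, and the normalization (zero centroid, mean distance $\sqrt{2}$) follows the common reading of Hartley's paper.

```python
import numpy as np

def _normalize(pts):
    """Translate to zero centroid and scale to mean distance sqrt(2).
    Returns homogeneous normalized points and the 3x3 transform."""
    centroid = pts.mean(axis=0)
    scale = np.sqrt(2) / np.linalg.norm(pts - centroid, axis=1).mean()
    T = np.array([[scale, 0.0, -scale * centroid[0]],
                  [0.0, scale, -scale * centroid[1]],
                  [0.0, 0.0, 1.0]])
    homog = np.column_stack([pts, np.ones(len(pts))])
    return homog @ T.T, T

def eight_point(pts_l, pts_r):
    """Estimate F (with p_r^T F p_l = 0) from N >= 8 pixel
    correspondences given as N x 2 arrays."""
    pl, Tl = _normalize(np.asarray(pts_l, dtype=float))
    pr, Tr = _normalize(np.asarray(pts_r, dtype=float))
    # Row n holds the coefficients of (f11, f12, ..., f33).
    A = np.einsum('ni,nj->nij', pr, pl).reshape(len(pl), 9)
    # Solution: right singular vector of the smallest singular value.
    _, _, Vt = np.linalg.svd(A)
    F = Vt[-1].reshape(3, 3)
    # Enforce rank 2 so that all epipolar lines meet at the epipole.
    U, D, Vt = np.linalg.svd(F)
    D[2] = 0.0
    F = U @ np.diag(D) @ Vt
    # Undo the normalization and fix the arbitrary scale.
    F = Tr.T @ F @ Tl
    return F / np.linalg.norm(F)
```

With noisy correspondences the unnormalized version tends to be badly conditioned, because pixel coordinates in the hundreds are mixed with the constant 1 in each row of A.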

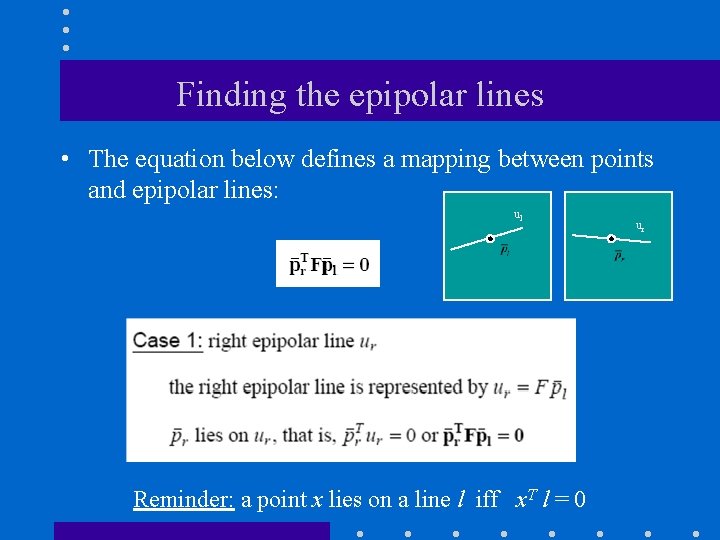

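Finding the epipolar lines • The equation $\bar{p}_r^T F \bar{p}_l = 0$ (with $\bar{p}_l$, $\bar{p}_r$ the pixel coordinates of a conjugate pair) defines a mapping between points and epipolar lines: $u_r = F \bar{p}_l$ is the epipolar line in the right image and $u_l = F^T \bar{p}_r$ is the epipolar line in the left image. • Reminder: a point x lies on a line l iff $x^T l = 0$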

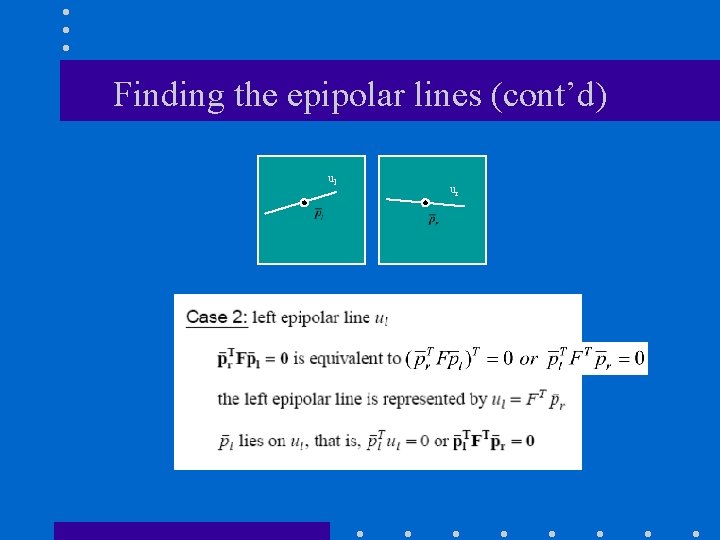

Finding the epipolar lines (cont’d) ul ur
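A small sketch of the point-to-line mapping, assuming the convention $\bar{p}_r^T F \bar{p}_l = 0$, so that a left-image point maps to the right-image line $F \bar{p}_l$. The example F corresponds to a purely horizontal baseline with identity intrinsics (an assumption chosen so the result is easy to check by hand): the epipolar line of (x, y) is the row y' = y.

```python
import numpy as np

def epipolar_line_in_right(F, p_l):
    """Line (a, b, c), meaning a*x + b*y + c = 0, in the right image
    for the left-image point p_l = (x, y)."""
    return F @ np.array([p_l[0], p_l[1], 1.0])

def epipolar_line_in_left(F, p_r):
    """Line in the left image for the right-image point p_r = (x, y)."""
    return F.T @ np.array([p_r[0], p_r[1], 1.0])

def distance_to_line(line, p):
    """Perpendicular distance of the point p = (x, y) from a line."""
    a, b, c = line
    return abs(a * p[0] + b * p[1] + c) / np.hypot(a, b)

# F for cameras displaced along x only (up to scale)
F_rectified = np.array([[0.0, 0.0, 0.0],
                        [0.0, 0.0, -1.0],
                        [0.0, 1.0, 0.0]])
line = epipolar_line_in_right(F_rectified, (10.0, 7.0))   # -y + 7 = 0
```

The distance of a candidate match from its epipolar line is a common test for rejecting false correspondences.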

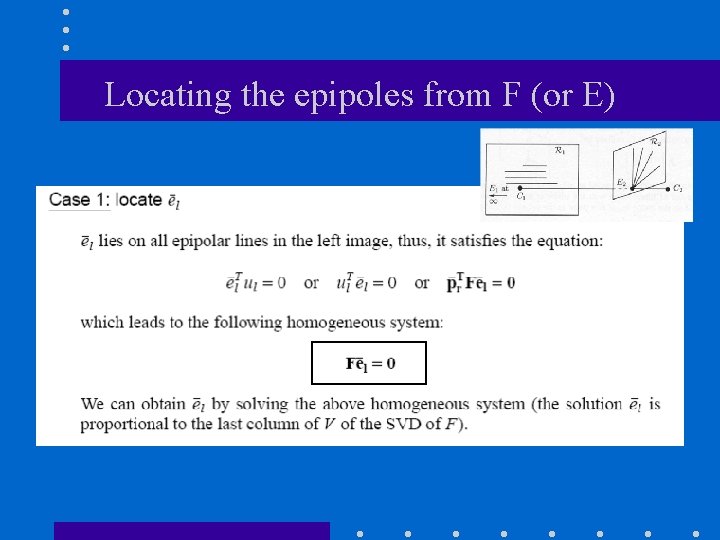

Locating the epipoles from F (or E)

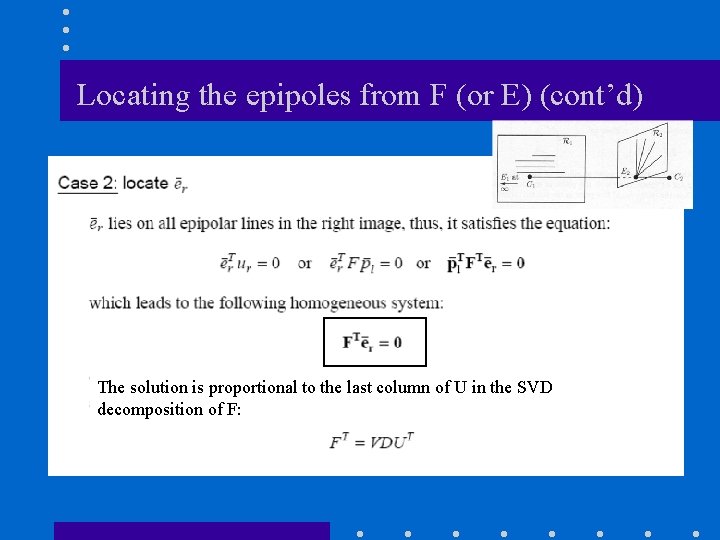

Locating the epipoles from F (or E) (cont’d) • The solution is proportional to the last column of U in the SVD of F:
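The epipoles can be read off the SVD, as sketched below. The sketch assumes the convention $\bar{p}_r^T F \bar{p}_l = 0$, under which the left epipole spans the null space of F (last column of V) and the right epipole spans the null space of $F^T$ (last column of U). The check uses an essential matrix built from a known geometry with $P_r = R(P_l - T)$, for which the epipoles are proportional to T and RT.

```python
import numpy as np

def epipoles(F):
    """Homogeneous epipoles (e_l, e_r) with F e_l = 0 and F^T e_r = 0.
    They are left unnormalized because an epipole may lie at infinity."""
    U, _, Vt = np.linalg.svd(F)
    return Vt[-1], U[:, -1]

def skew(t):
    """Matrix S such that S @ v equals np.cross(t, v)."""
    tx, ty, tz = t
    return np.array([[0.0, -tz, ty], [tz, 0.0, -tx], [-ty, tx, 0.0]])

# Illustrative geometry; identity intrinsics, so F equals E here.
c, s = np.cos(0.2), np.sin(0.2)
R = np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])
T = np.array([0.5, 0.1, 0.2])
E = R @ skew(T)
e_l, e_r = epipoles(E)
```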

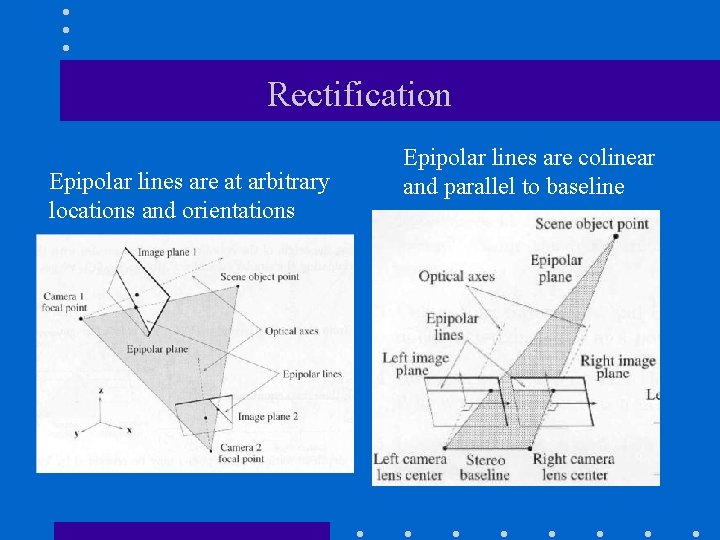

Rectification • Before: epipolar lines are at arbitrary locations and orientations. • After: epipolar lines are collinear and parallel to the baseline.

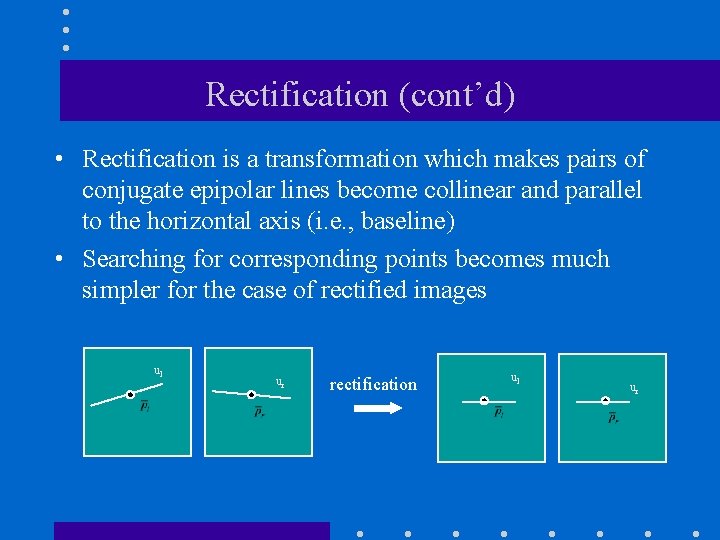

Rectification (cont’d) • Rectification is a transformation which makes pairs of conjugate epipolar lines become collinear and parallel to the horizontal axis (i.e., baseline). • Searching for corresponding points becomes much simpler for the case of rectified images. (Figure labels: ul, ur before and after rectification.)

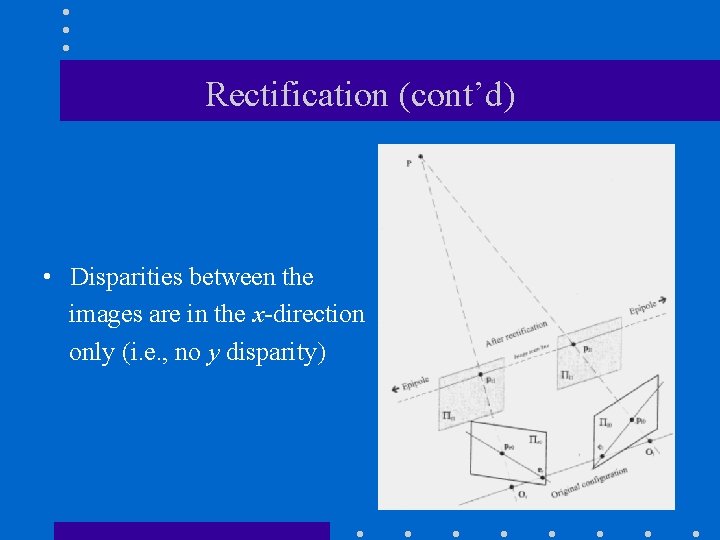

Rectification (cont’d) • Disparities between the images are in the x-direction only (i.e., no y disparity).

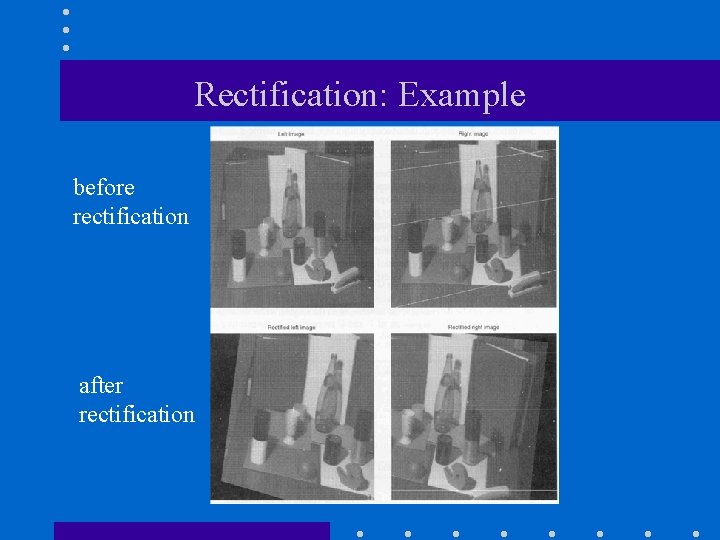

Rectification: Example before rectification after rectification

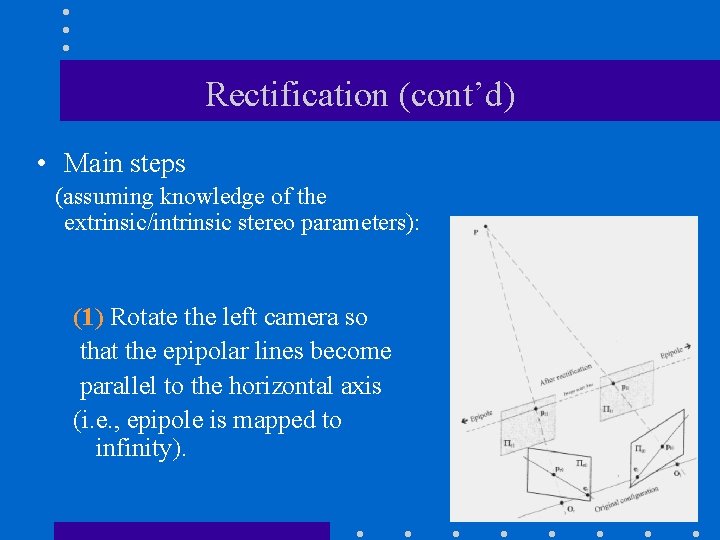

Rectification (cont’d) • Main steps (assuming knowledge of the extrinsic/intrinsic stereo parameters): (1) Rotate the left camera so that the epipolar lines become parallel to the horizontal axis (i.e., the epipole is mapped to infinity).

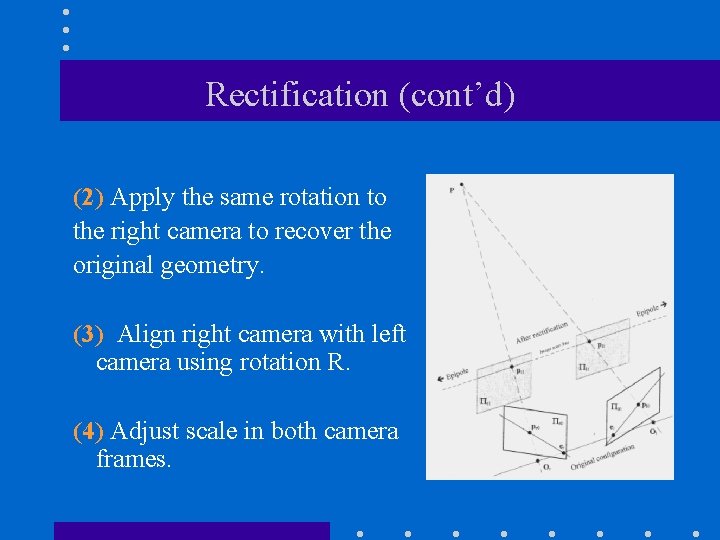

Rectification (cont’d) (2) Apply the same rotation to the right camera to recover the original geometry. (3) Align right camera with left camera using rotation R. (4) Adjust scale in both camera frames.

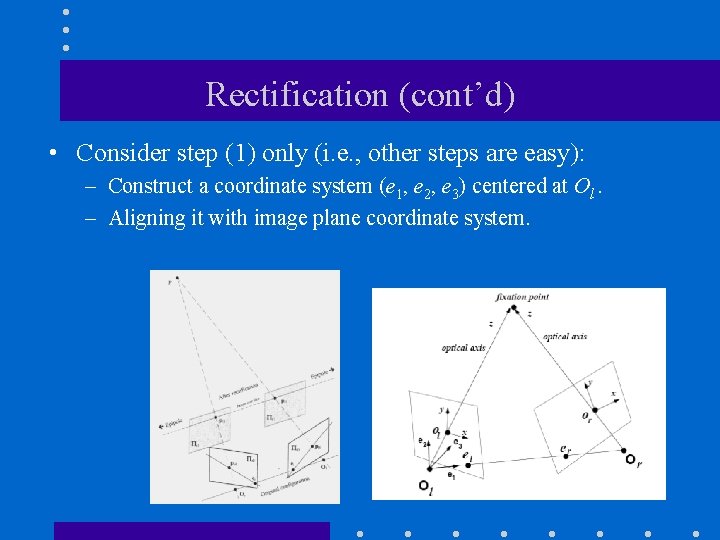

Rectification (cont’d) • Consider step (1) only (i.e., the other steps are easy): – Construct a coordinate system $(e_1, e_2, e_3)$ centered at $O_l$. – Align it with the image plane coordinate system.

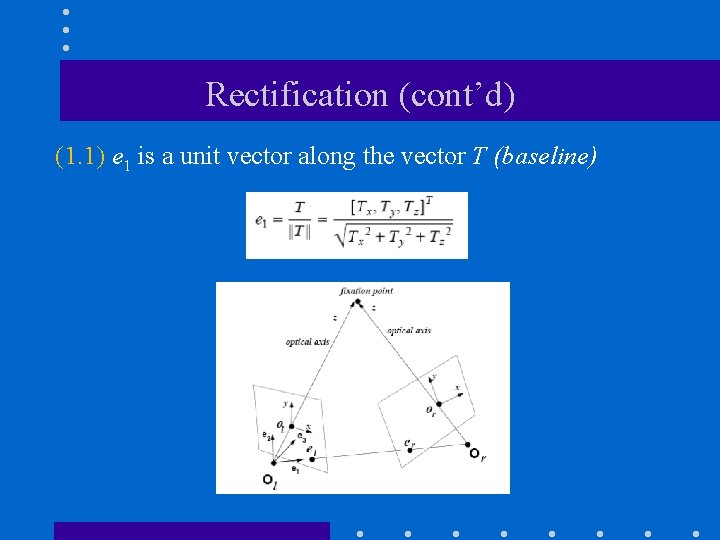

Rectification (cont’d) (1.1) $e_1$ is a unit vector along the vector T (baseline): $e_1 = T / \|T\|$

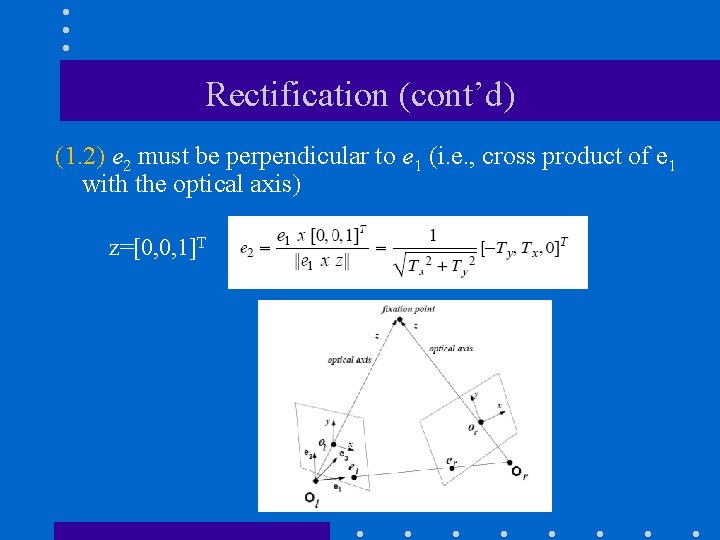

Rectification (cont’d) (1.2) $e_2$ must be perpendicular to $e_1$ (i.e., cross product of $e_1$ with the optical axis $z = [0, 0, 1]^T$): $e_2 = \frac{1}{\sqrt{T_x^2 + T_y^2}} [-T_y, T_x, 0]^T$

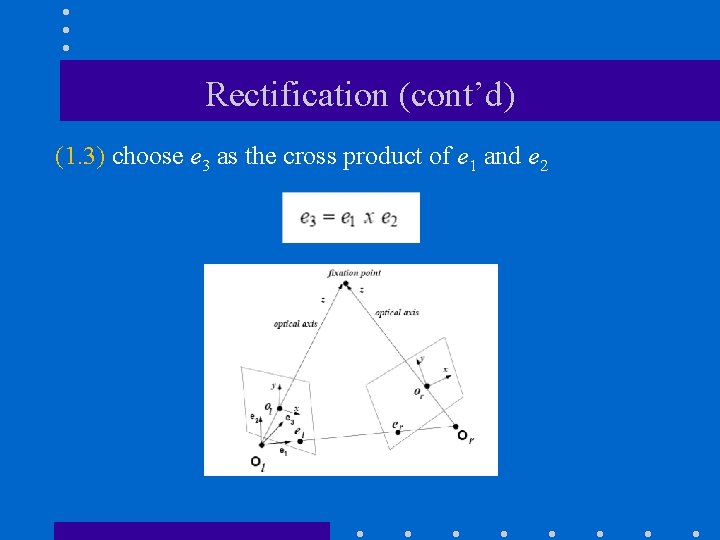

Rectification (cont’d) (1.3) choose $e_3$ as the cross product of $e_1$ and $e_2$: $e_3 = e_1 \times e_2$

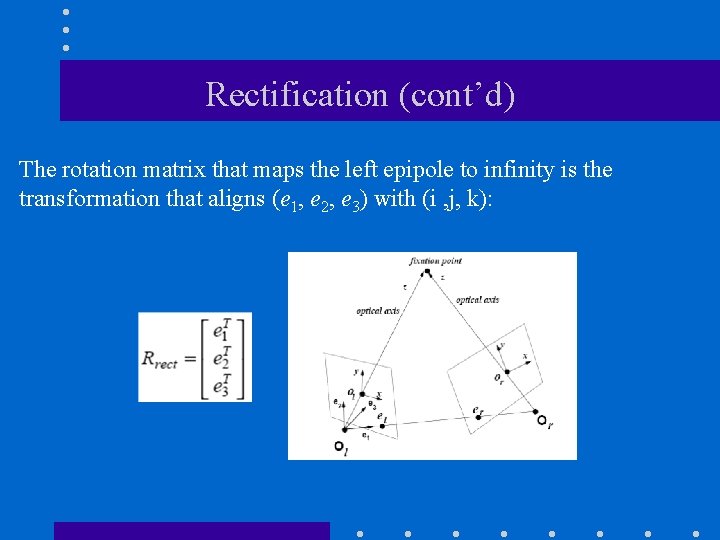

Rectification (cont’d) • The rotation matrix that maps the left epipole to infinity is the transformation that aligns $(e_1, e_2, e_3)$ with (i, j, k): $R_{rect} = \begin{bmatrix} e_1^T \\ e_2^T \\ e_3^T \end{bmatrix}$
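Steps (1.1) to (1.3) can be sketched as a function that builds the rectifying rotation from the baseline vector T. The sketch assumes T is expressed in the left camera frame and is not parallel to the optical axis (otherwise $e_2$ is undefined); the sign of $e_2$ is chosen as z x T, a common choice that keeps $e_3$ pointing roughly along the original optical axis.

```python
import numpy as np

def rectifying_rotation(T):
    """Rotation whose rows are e1, e2, e3; it maps the baseline
    direction to the x axis, i.e., the left epipole to infinity."""
    T = np.asarray(T, dtype=float)
    e1 = T / np.linalg.norm(T)                       # along the baseline
    e2 = np.array([-T[1], T[0], 0.0]) / np.hypot(T[0], T[1])  # z x T, unit
    e3 = np.cross(e1, e2)                            # completes the frame
    return np.vstack([e1, e2, e3])

T = np.array([1.0, 0.2, 0.1])
R_rect = rectifying_rotation(T)
epipole_after = R_rect @ (T / np.linalg.norm(T))     # expect (1, 0, 0)
```

After the rotation the epipole has a zero third coordinate, so it lies at infinity along the x axis and the epipolar lines become horizontal.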

The reconstruction problem (1) Both intrinsic and extrinsic parameters are known: we can solve the reconstruction problem unambiguously by triangulation. (2) Only the intrinsic parameters are known: we can solve the reconstruction problem only up to an unknown scaling factor. (3) Neither the extrinsic nor the intrinsic parameters are available: we can solve the reconstruction problem only up to an unknown, global projective transformation.

(1) Reconstruction by triangulation • Assumptions and problem statement (1) Both the extrinsic and intrinsic camera parameters are known. (2) Compute the location of the 3D points from their projections pl and pr.

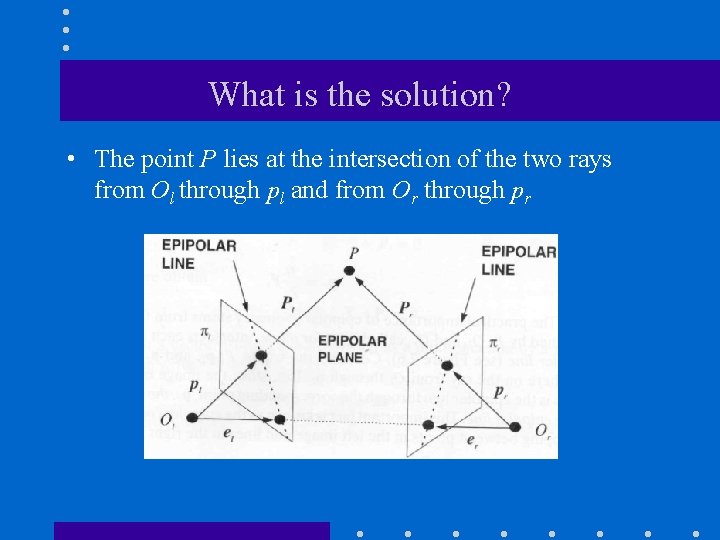

What is the solution? • The point P lies at the intersection of the two rays from Ol through pl and from Or through pr

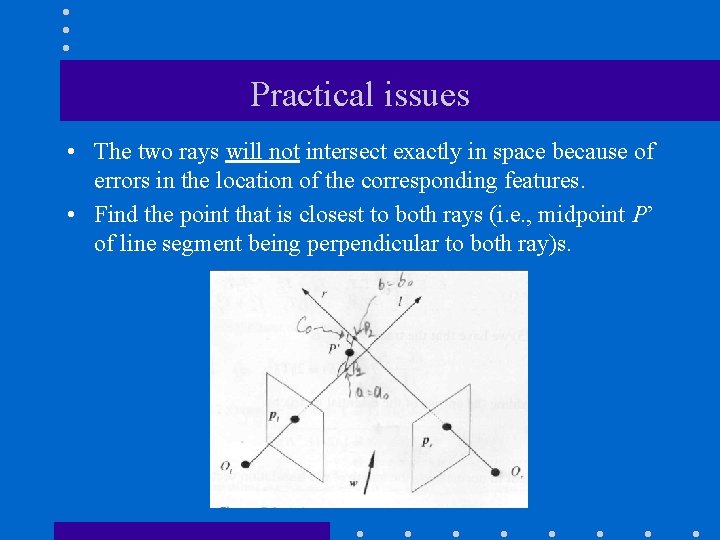

Practical issues • The two rays will not intersect exactly in space because of errors in the location of the corresponding features. • Find the point that is closest to both rays (i.e., the midpoint P’ of the line segment perpendicular to both rays).

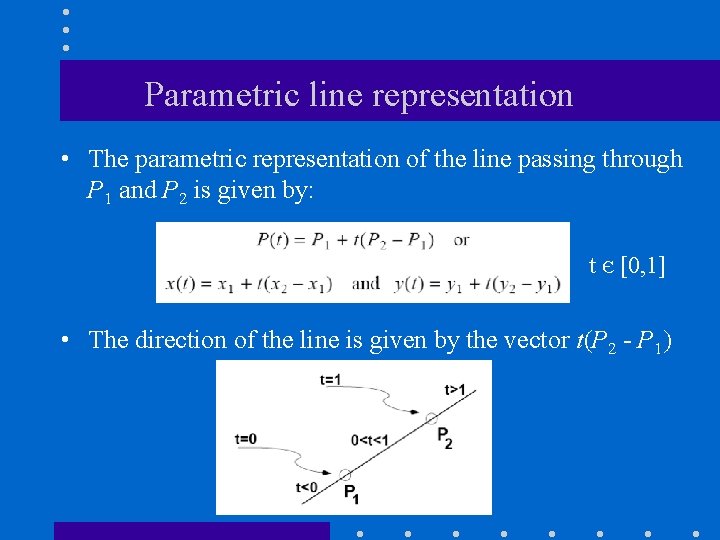

Parametric line representation • The parametric representation of the line passing through $P_1$ and $P_2$ is given by: $P(t) = P_1 + t(P_2 - P_1)$, where $t \in [0, 1]$ traces the segment from $P_1$ to $P_2$. • The direction of the line is given by the vector $P_2 - P_1$.

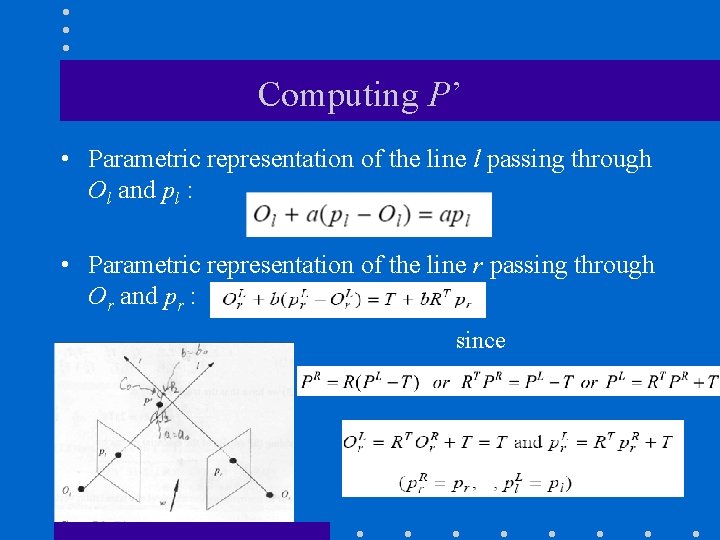

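Computing P’ • Parametric representation of the line l passing through $O_l$ and $p_l$: $a\,p_l$ • Parametric representation of the line r passing through $O_r$ and $p_r$ (in left camera coordinates): $T + b R^T p_r$, since $P_r = R(P_l - T)$ implies $P_l = R^T P_r + T$.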

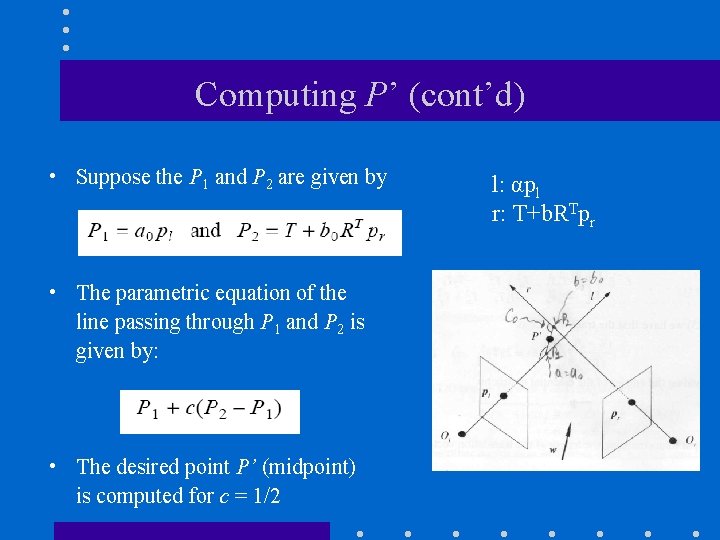

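Computing P’ (cont’d) • Suppose that $P_1$ and $P_2$ are given by $P_1 = a_0 p_l$ (a point on l: $a\,p_l$) and $P_2 = T + b_0 R^T p_r$ (a point on r: $T + b R^T p_r$). • The parametric equation of the line passing through $P_1$ and $P_2$ is given by: $P_1 + c(P_2 - P_1)$ • The desired point P’ (midpoint) is computed for c = 1/2.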

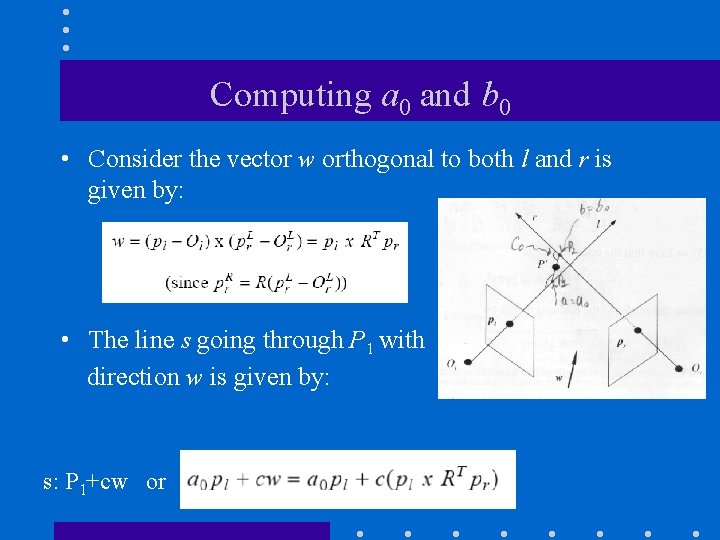

Computing $a_0$ and $b_0$ • The vector w orthogonal to both l and r is given by: $w = p_l \times R^T p_r$ • The line s going through $P_1$ with direction w is given by: s: $P_1 + c\,w$, or $a_0 p_l + c\,(p_l \times R^T p_r)$

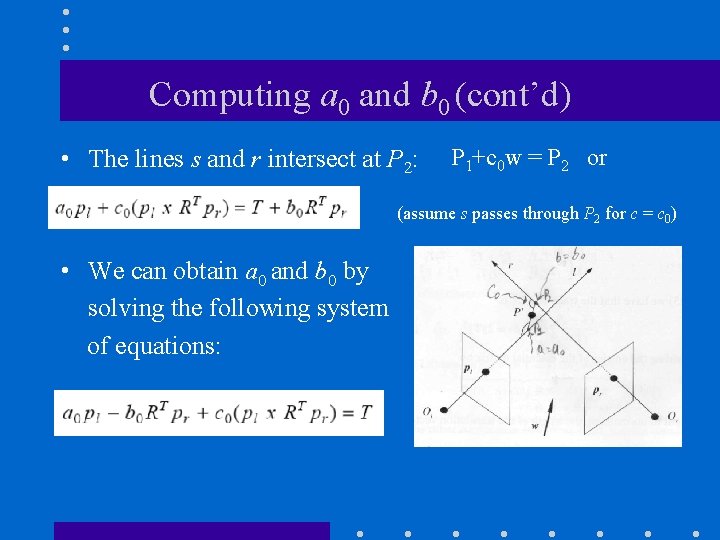

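Computing $a_0$ and $b_0$ (cont’d) • The lines s and r intersect at $P_2$ (assume s passes through $P_2$ for $c = c_0$): $P_1 + c_0 w = P_2$, or $a_0 p_l + c_0 (p_l \times R^T p_r) = T + b_0 R^T p_r$ • We can obtain $a_0$ and $b_0$ by solving the following system of equations: $a\,p_l - b\,R^T p_r + c\,(p_l \times R^T p_r) = T$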
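The midpoint construction reduces to one 3 x 3 linear system in (a, b, c). The sketch below assumes the convention $P_r = R(P_l - T)$, rays given as homogeneous image-plane points $p_l$ and $p_r$ in their own camera frames, and a result expressed in the left camera frame; the function name and the test values are illustrative.

```python
import numpy as np

def triangulate_midpoint(p_l, p_r, R, T):
    """Midpoint of the shortest segment between the ray a * p_l and
    the ray T + b * R^T p_r (both in left camera coordinates)."""
    p_l = np.asarray(p_l, dtype=float)
    d_r = R.T @ np.asarray(p_r, dtype=float)   # right ray direction
    w = np.cross(p_l, d_r)                     # orthogonal to both rays
    # Solve a * p_l - b * d_r + c * w = T for (a0, b0, c0).
    A = np.column_stack([p_l, -d_r, w])
    a0, b0, _ = np.linalg.solve(A, np.asarray(T, dtype=float))
    P1 = a0 * p_l                              # closest point on ray l
    P2 = T + b0 * d_r                          # closest point on ray r
    return 0.5 * (P1 + P2)

# Noise-free check: the rays intersect, so the midpoint is the point itself.
c, s = np.cos(0.1), np.sin(0.1)
R = np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])
T = np.array([0.5, 0.0, 0.0])
P_l = np.array([0.3, -0.2, 5.0])
P_r = R @ (P_l - T)
P_est = triangulate_midpoint(P_l / P_l[2], P_r / P_r[2], R, T)
```

The system becomes singular when the two rays are parallel, since w is then the zero vector; a practical implementation would check for that case.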

(2) Reconstruction up to a scale factor • Only the intrinsic camera parameters are known. • We cannot recover the true scale of the viewed scene since we do not know the baseline T (recall that $Z = fT/d$). • Reconstruction is unique only up to an unknown scaling factor. – This factor can be determined if we know the distance between two points in the scene.

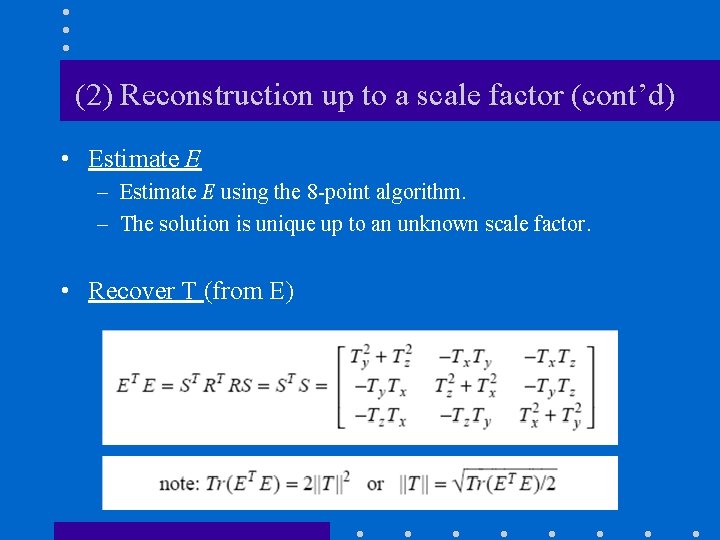

(2) Reconstruction up to a scale factor (cont’d) • Estimate E – Estimate E using the 8-point algorithm. – The solution is unique up to an unknown scale factor. • Recover T (from E)

(2) Reconstruction up to a scale factor (cont’d) – To simplify the recovery of T, consider • Recover R (from E) – It can be shown that where

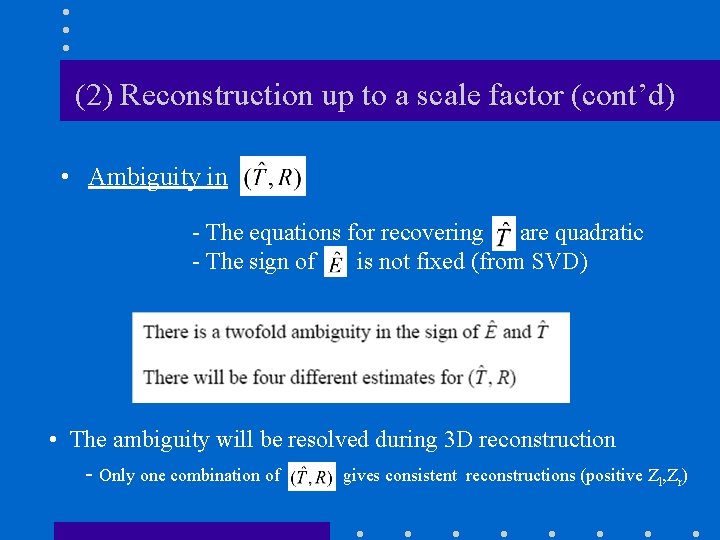

(2) Reconstruction up to a scale factor (cont’d) • Ambiguity in (R, T) – The equations for recovering T are quadratic – The sign of E is not fixed (from SVD) • The ambiguity will be resolved during 3D reconstruction – Only one combination of (R, T) gives consistent reconstructions (positive $Z_l$, $Z_r$)

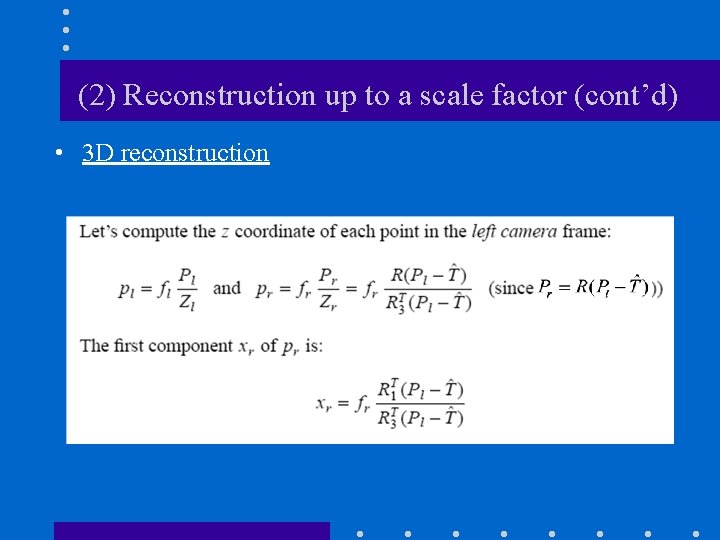

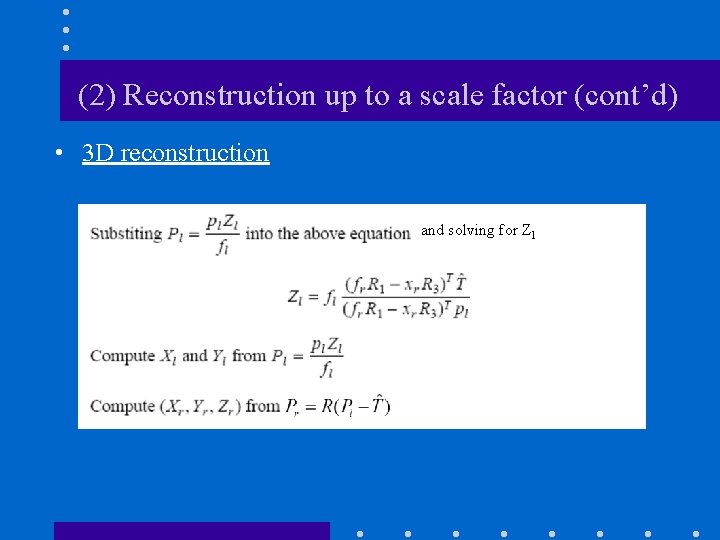

(2) Reconstruction up to a scale factor (cont’d) • 3D reconstruction

(2) Reconstruction up to a scale factor (cont’d) • 3D reconstruction and solving for Zl

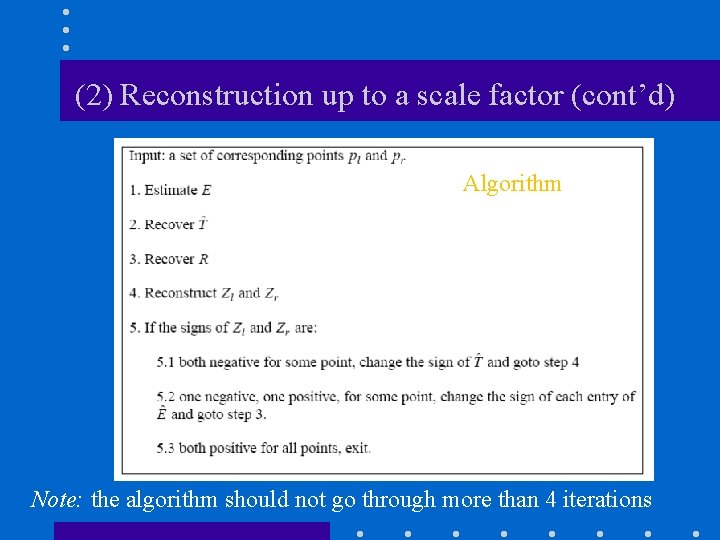

(2) Reconstruction up to a scale factor (cont’d) Algorithm Note: the algorithm should not go through more than 4 iterations

(3) Reconstruction up to a projective transformation • More complicated - see book chapter
