Stealing Hyperparameters in Machine Learning Binghui Wang and Neil Zhenqiang Gong ECE Department, Iowa State University

Machine Learning as a Service (MLaaS) • Emerging technology that helps users with limited computing power or limited ML expertise learn ML models • MLaaS platforms

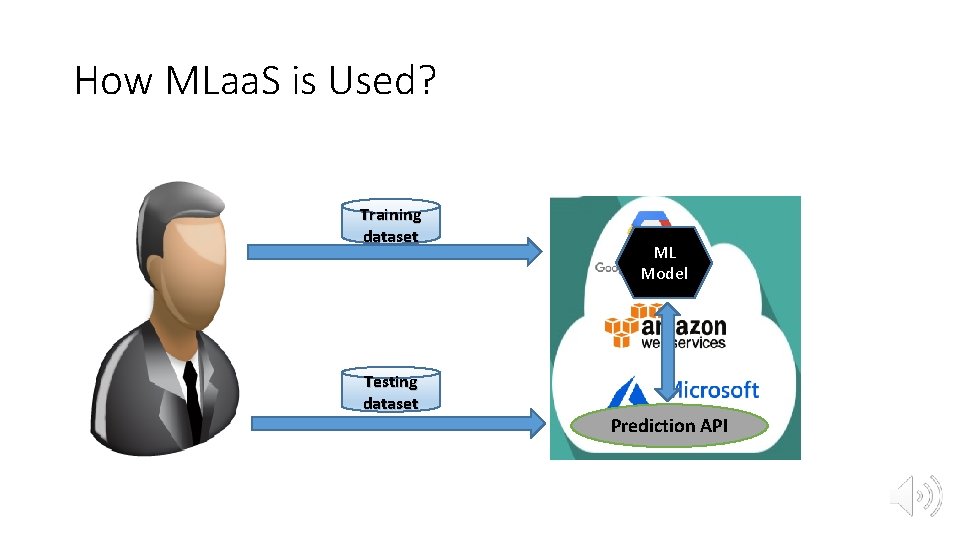

How Is MLaaS Used? • Training dataset • Testing dataset • ML model • Prediction API

Privacy: A Big Challenge for MLaaS • Model Inversion Attack (Fredrikson et al., CCS'15) • Membership Inference Attack (Shokri et al., IEEE S&P'17) • Model Extraction Attack (Tramèr et al., USENIX Security'16)

Our Work: A New Privacy Attack • Hyperparameter Stealing Attack • Hyperparameters are confidential on certain MLaaS platforms • The attack steals the hyperparameter values used by MLaaS • Application Scenario • A user can be an attacker • The attacker can learn an ML model via MLaaS at much lower computational (or economic) cost without sacrificing testing performance

Outline • Machine Learning Background • Hyperparameter Stealing Attack • Evaluation • Defense • Conclusion

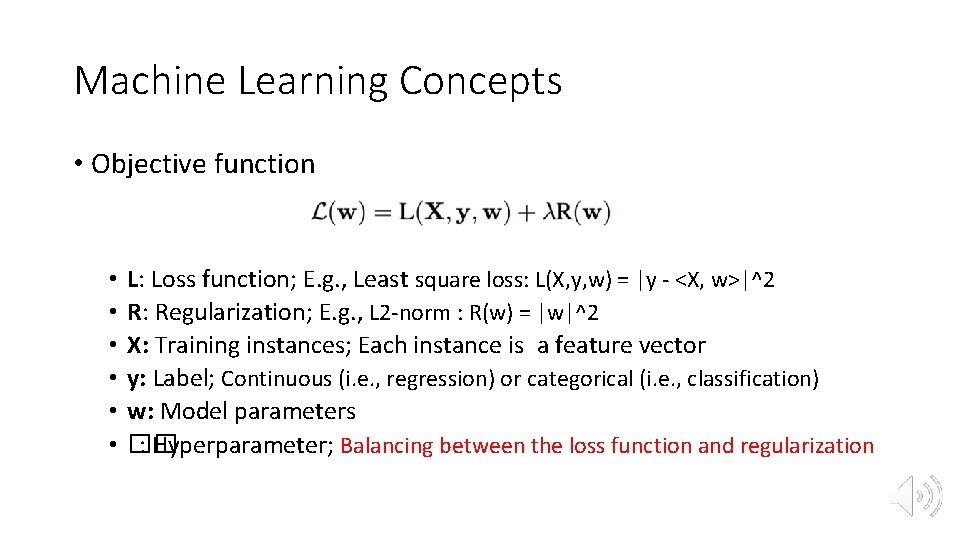

Machine Learning Concepts • Objective function: L(X, y, w) + λ R(w) • L: Loss function; e.g., least square loss: L(X, y, w) = ||y - <X, w>||^2 • R: Regularization; e.g., L2-norm: R(w) = ||w||^2 • X: Training instances; each instance is a feature vector • y: Label; continuous (i.e., regression) or categorical (i.e., classification) • w: Model parameters • λ: Hyperparameter; balances the loss function against the regularization

Machine Learning Tasks • Hyperparameter learning • Cross-validation • Time-consuming • Model parameter learning • Minimizing the objective function of an ML algorithm with a specified hyperparameter • Different ML algorithms use different loss functions and regularizations

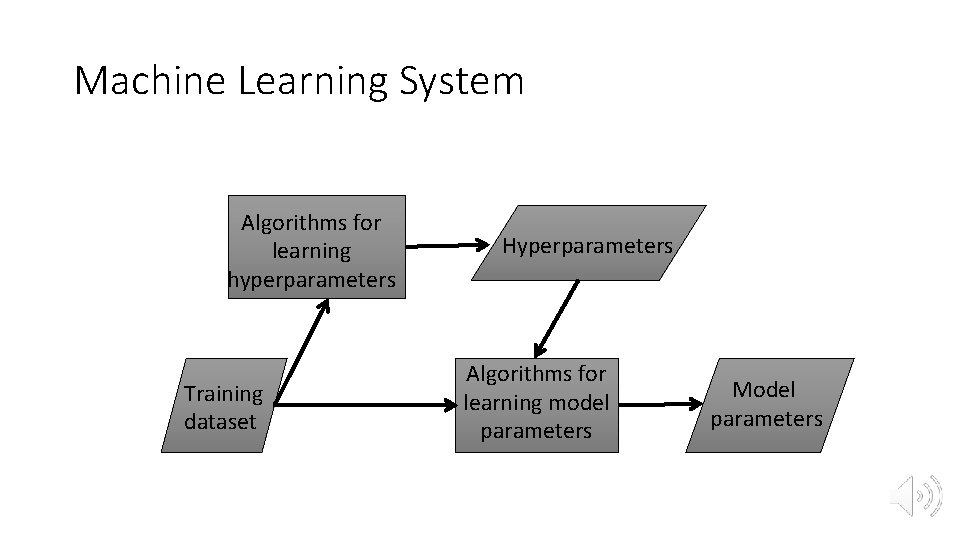

Machine Learning System • Training dataset → Algorithms for learning hyperparameters → Hyperparameters • Training dataset + Hyperparameters → Algorithms for learning model parameters → Model parameters

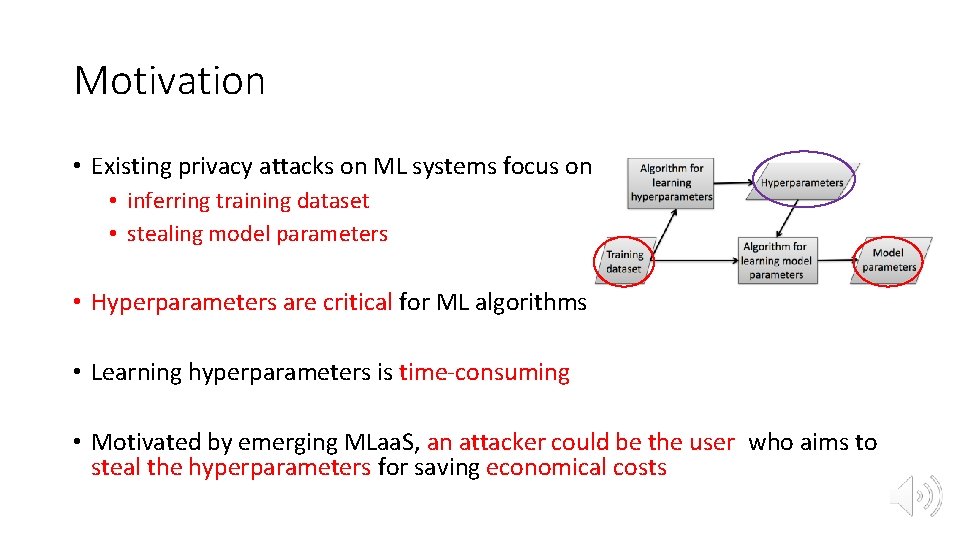

Motivation • Existing privacy attacks on ML systems focus on • inferring the training dataset • stealing model parameters • Hyperparameters are critical for ML algorithms • Learning hyperparameters is time-consuming • With the emergence of MLaaS, the attacker could be a user who steals the hyperparameters to save on economic costs

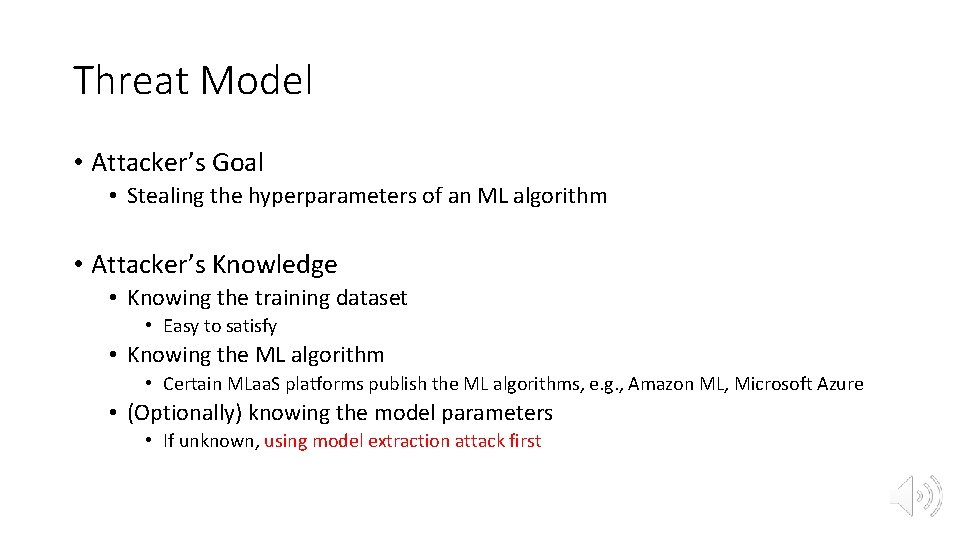

Threat Model • Attacker's Goal • Stealing the hyperparameters of an ML algorithm • Attacker's Knowledge • Knows the training dataset • Easy to satisfy • Knows the ML algorithm • Certain MLaaS platforms publish their ML algorithms, e.g., Amazon ML, Microsoft Azure • (Optionally) knows the model parameters • If they are unknown, the attacker first runs a model extraction attack

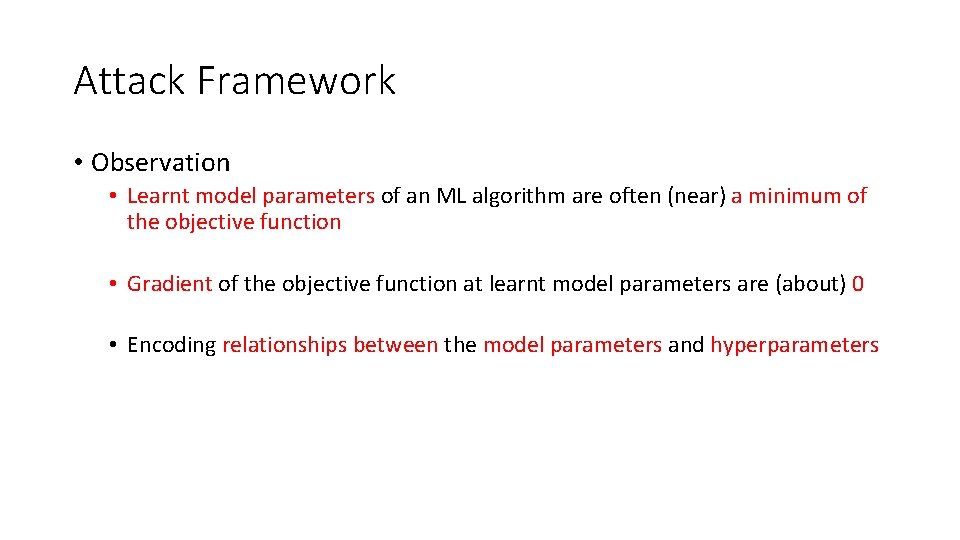

Attack Framework • Observation • The learnt model parameters of an ML algorithm are often at (or near) a minimum of the objective function • The gradient of the objective function at the learnt model parameters is (approximately) 0 • This condition encodes a relationship between the model parameters and the hyperparameters

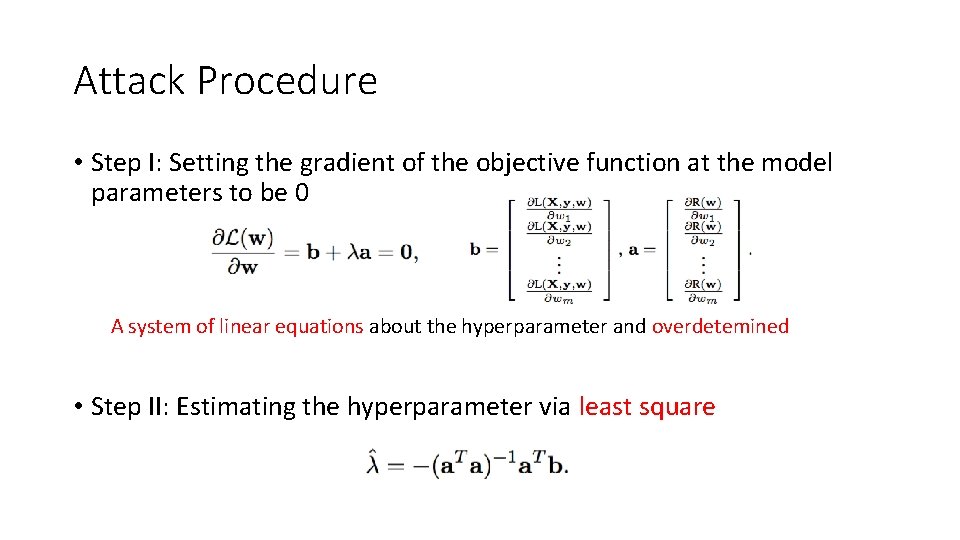

Attack Procedure • Step I: Set the gradient of the objective function at the model parameters to 0 • This yields an overdetermined system of linear equations in the hyperparameter • Step II: Estimate the hyperparameter via least squares
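
The two steps can be written out for an objective of the form L(X, y, w) + λ R(w). This is a sketch: the symbols a and b are introduced here for illustration and denote the gradients of the regularization and of the loss, both evaluated at the learnt w. With d model parameters there are d equations in the single unknown λ, which is why the system is overdetermined.

```latex
\begin{align}
\frac{\partial}{\partial w}\bigl[L(X, y, w) + \lambda R(w)\bigr]
  &= b + \lambda a = 0,
  \qquad b = \frac{\partial L(X, y, w)}{\partial w},
  \quad  a = \frac{\partial R(w)}{\partial w} \\
\hat{\lambda}
  &= \arg\min_{\lambda} \lVert b + \lambda a \rVert_2^2
   = -\,(a^{\top} a)^{-1} a^{\top} b
\end{align}
```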

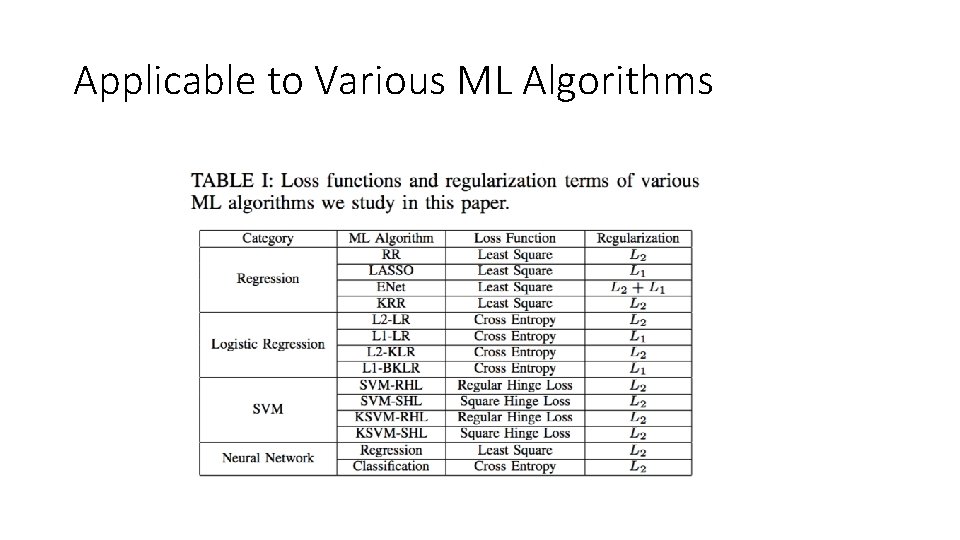

Applicable to Various ML Algorithms

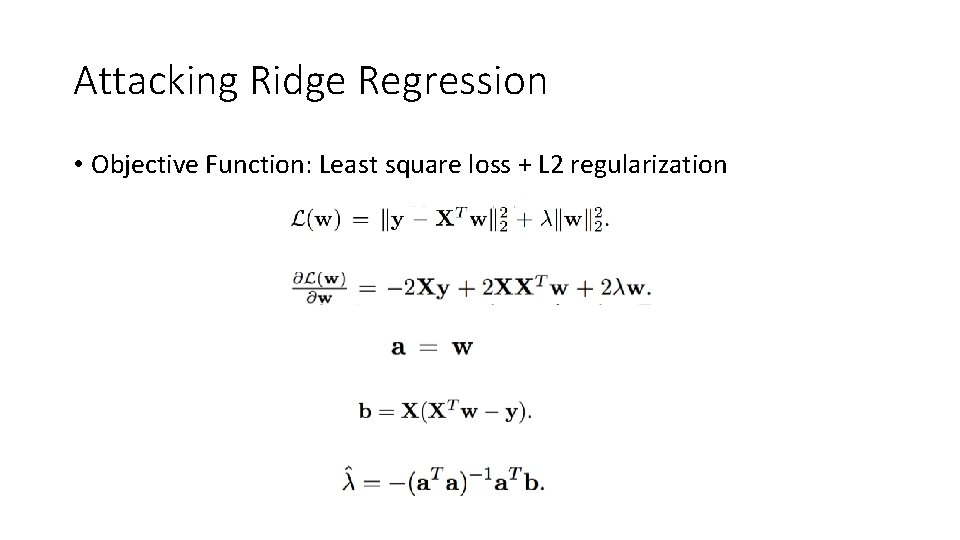

Attacking Ridge Regression • Objective Function: Least square loss + L2 regularization
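
As a minimal sketch of the attack on ridge regression, the snippet below trains a model with a known hyperparameter and then recovers it from the zero-gradient condition. It assumes the objective ||y - X w||^2 + λ ||w||^2 with one training instance per row of X. The function names, the synthetic data, and the value 3.0 are illustrative choices, not taken from the slides.

```python
import numpy as np

def train_ridge(X, y, lam):
    """Closed-form minimizer of ||y - X w||^2 + lam * ||w||^2."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ y)

def steal_ridge_lambda(X, y, w):
    """Least-squares estimate of lam from the condition b + lam * a = 0."""
    b = -2.0 * X.T @ (y - X @ w)  # gradient of the least square loss at w
    a = 2.0 * w                   # gradient of the L2 regularization at w
    return float(-(a @ b) / (a @ a))

# Synthetic regression data (illustrative only).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
w_true = np.array([1.5, -2.0, 0.7, 0.3, -1.1])
y = X @ w_true + 0.1 * rng.normal(size=200)

true_lam = 3.0                    # the "confidential" hyperparameter
w = train_ridge(X, y, true_lam)   # what the attacker observes
est_lam = steal_ridge_lambda(X, y, w)
print(f"true lambda = {true_lam}, estimated lambda = {est_lam:.6f}")
```

Because ridge regression has a closed-form solution, the learnt w sits at the exact minimum and the estimate matches the true value up to floating-point error.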

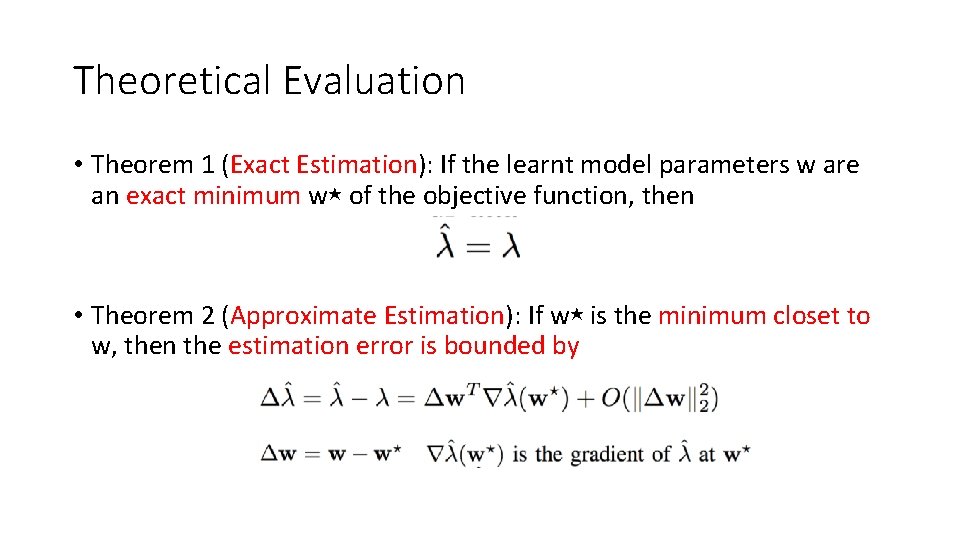

Theoretical Evaluation • Theorem 1 (Exact Estimation): If the learnt model parameters w are an exact minimum w⋆ of the objective function, then the attack recovers the hyperparameter exactly • Theorem 2 (Approximate Estimation): If w⋆ is the minimum closest to w, then the estimation error is bounded

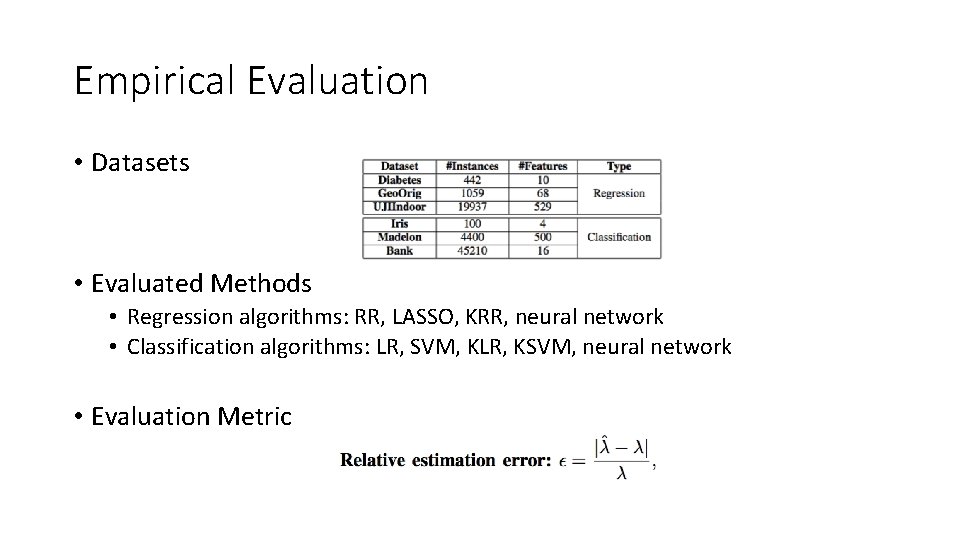

Empirical Evaluation • Datasets • Evaluated Methods • Regression algorithms: RR, LASSO, KRR, neural network • Classification algorithms: LR, SVM, KLR, KSVM, neural network • Evaluation Metric

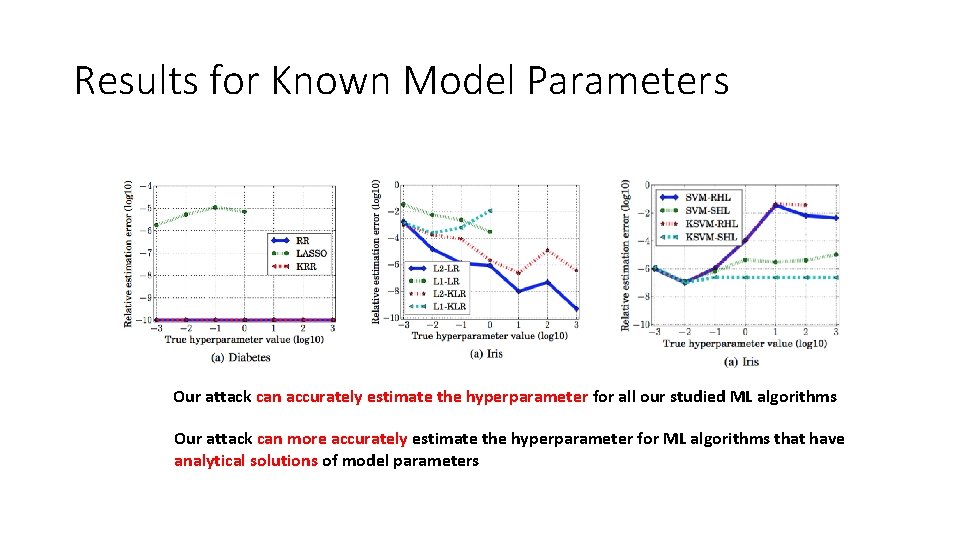

Results for Known Model Parameters • Our attack accurately estimates the hyperparameter for all the ML algorithms we studied • The estimates are more accurate for ML algorithms whose model parameters have analytical solutions

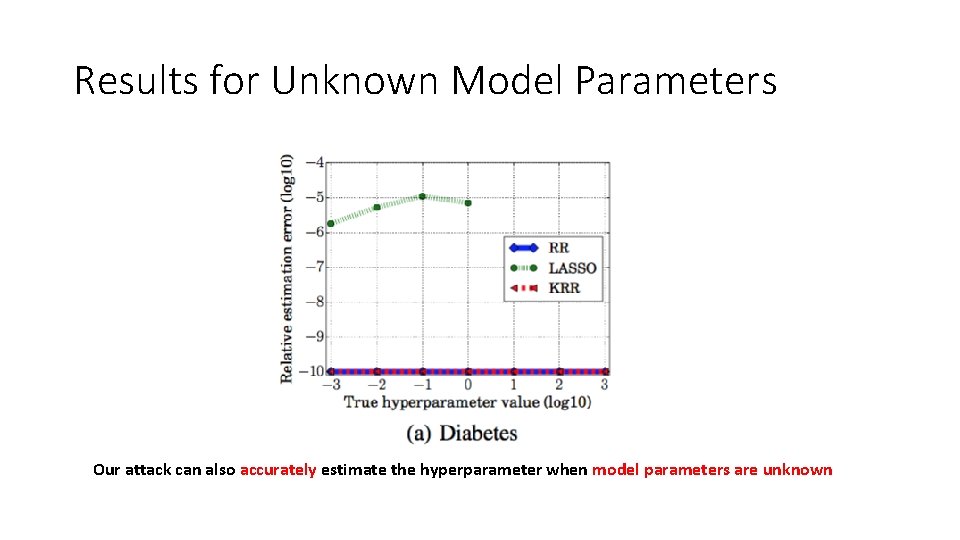

Results for Unknown Model Parameters • Our attack also accurately estimates the hyperparameter when the model parameters are unknown

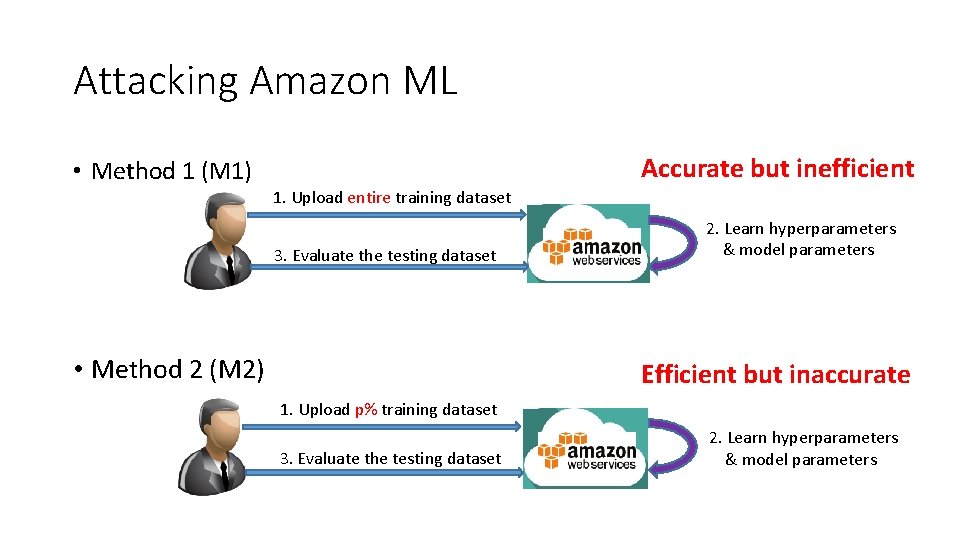

Attacking Amazon ML • Method 1 (M1): Accurate but inefficient 1. Upload the entire training dataset 2. Learn hyperparameters & model parameters 3. Evaluate on the testing dataset • Method 2 (M2): Efficient but inaccurate 1. Upload p% of the training dataset 2. Learn hyperparameters & model parameters 3. Evaluate on the testing dataset

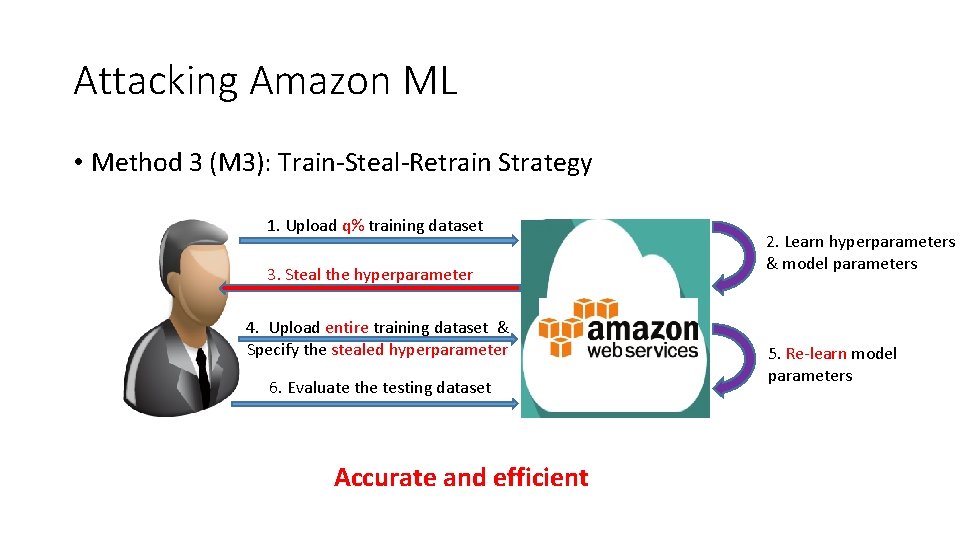

Attacking Amazon ML • Method 3 (M3): Train-Steal-Retrain Strategy, accurate and efficient 1. Upload q% of the training dataset 2. Learn hyperparameters & model parameters 3. Steal the hyperparameter 4. Upload the entire training dataset & specify the stolen hyperparameter 5. Re-learn model parameters 6. Evaluate on the testing dataset
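
The Train-Steal-Retrain steps can be sketched locally with ridge regression. Everything here is an assumption made for illustration: `mlaas_train` is a hypothetical stand-in for the service (it is not an Amazon ML API), the hold-out grid search merely imitates an expensive hyperparameter search, and the data and the 10% sample rate are arbitrary.

```python
import numpy as np

GRID = (0.01, 0.1, 1.0, 10.0, 100.0)  # candidate hyperparameters (illustrative)

def fit_ridge(X, y, lam):
    """Closed-form minimizer of ||y - X w||^2 + lam * ||w||^2."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ y)

def mlaas_train(X, y, lam=None):
    """Hypothetical stand-in for an MLaaS training call.

    If lam is None, the 'service' picks it by a hold-out grid search
    (the costly step) and returns only the model parameters.
    """
    if lam is None:
        half = len(y) // 2
        def val_mse(candidate):
            w = fit_ridge(X[:half], y[:half], candidate)
            return np.mean((X[half:] @ w - y[half:]) ** 2)
        lam = min(GRID, key=val_mse)
    return fit_ridge(X, y, lam)

def steal_lambda(X, y, w):
    """Least-squares estimate of lam from the zero-gradient condition."""
    b = -2.0 * X.T @ (y - X @ w)
    a = 2.0 * w
    return float(-(a @ b) / (a @ a))

# Synthetic data: first half for training, second half for testing.
rng = np.random.default_rng(0)
X = rng.normal(size=(2000, 10))
y = X @ rng.normal(size=10) + rng.normal(size=2000)
X_train, y_train, X_test, y_test = X[:1000], y[:1000], X[1000:], y[1000:]

# Steps 1-2: upload a q fraction of the training data; the service learns both.
q = 0.10
idx = rng.choice(len(y_train), size=int(q * len(y_train)), replace=False)
w_small = mlaas_train(X_train[idx], y_train[idx])

# Step 3: steal the hyperparameter from the returned model parameters.
lam_hat = steal_lambda(X_train[idx], y_train[idx], w_small)

# Steps 4-5: upload everything and specify the stolen value, skipping the search.
w_full = mlaas_train(X_train, y_train, lam=lam_hat)

# Step 6: evaluate on the testing dataset.
test_mse = np.mean((X_test @ w_full - y_test) ** 2)
print(f"stolen lambda = {lam_hat:.4f}, test MSE = {test_mse:.4f}")
```

The sketch passes the stolen value back unchanged; how well a hyperparameter learnt on a sample transfers to the full dataset is what the evaluation on these slides measures.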

Attacking Amazon ML • One half of the Bank dataset for training and one half for testing • M2 and M3 sample 15% and 3% of the training dataset, respectively • Training cost • M1: $1.02 vs M3: $0.16 • M2: $0.15 vs M3: $0.16 • Relative error • M3 over M1: 0.92% • M2 over M1: 5.1%

Rounding As a Defense • Round the learnt model parameters before sharing them with users • For instance, rounding a parameter 0.8765 • With one decimal: 0.9 • With two decimals: 0.88 • Rounding was also used as a defense in other work • Fredrikson et al., CCS'15 • Tramèr et al., USENIX Security'16
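
To see how rounding interacts with the attack, the sketch below rounds ridge regression parameters to a few decimals and re-runs the hyperparameter estimate on the rounded values. The synthetic data, the function names, and the relative-error measure |estimate - true| / true are assumptions chosen for illustration; the numbers it prints are not the results reported on these slides.

```python
import numpy as np

def train_ridge(X, y, lam):
    """Closed-form minimizer of ||y - X w||^2 + lam * ||w||^2."""
    d = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(d), X.T @ y)

def steal_ridge_lambda(X, y, w):
    """Least-squares estimate of lam from the zero-gradient condition."""
    b = -2.0 * X.T @ (y - X @ w)
    a = 2.0 * w
    return float(-(a @ b) / (a @ a))

def relative_error(estimate, truth):
    return abs(estimate - truth) / truth

# Synthetic regression data (illustrative only).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
w_true = np.array([1.5, -2.0, 0.7, 0.3, -1.1])
y = X @ w_true + 0.1 * rng.normal(size=200)

true_lam = 3.0
w = train_ridge(X, y, true_lam)

# Attack on the unrounded parameters, as a baseline.
exact_err = relative_error(steal_ridge_lambda(X, y, w), true_lam)
print(f"no rounding: relative error = {exact_err:.2e}")

# Attack on parameters rounded by the defender before release.
rounded_errs = {}
for decimals in (1, 2, 3):
    w_rounded = np.round(w, decimals)
    est = steal_ridge_lambda(X, y, w_rounded)
    rounded_errs[decimals] = relative_error(est, true_lam)
    print(f"{decimals} decimal(s): relative error = {rounded_errs[decimals]:.2e}")
```

Rounding moves w away from the exact minimum, so the gradient is no longer zero at the released parameters and the estimate picks up error; comparing the printed values shows how much for each rounding level on this toy problem.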

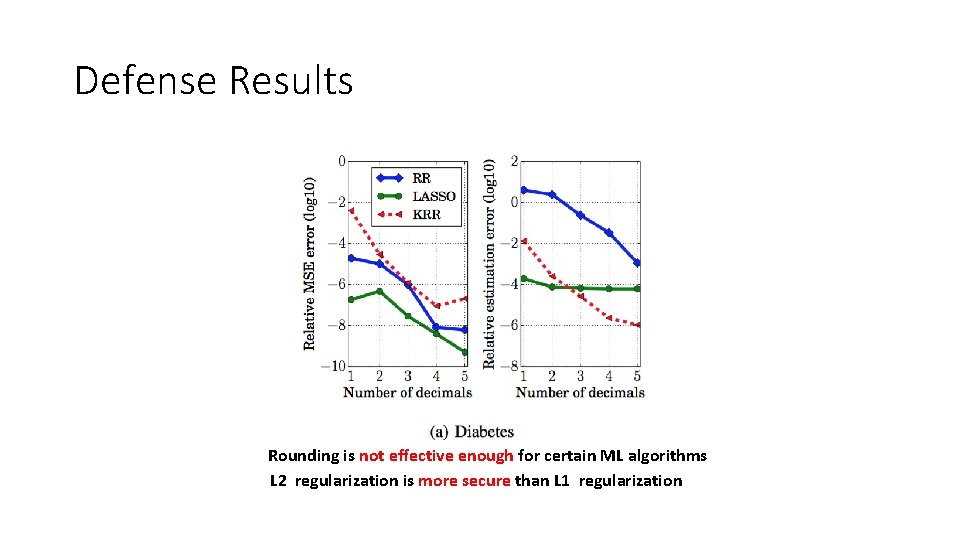

Defense Results • Rounding is not effective enough for certain ML algorithms • L2 regularization is more secure than L1 regularization

Conclusion • ML algorithms are vulnerable to hyperparameter stealing attacks • Our attack can help users save economic costs on MLaaS platforms without sacrificing testing performance • New defenses are needed against hyperparameter stealing attacks