Statistics in R ANOVA BY KELSEY HUNTZBERRY, MPH

One-Way ANOVA Overview

ANOVA Overview • ANOVA stands for Analysis of Variance https://365datascience.com/variance-standard-deviation-coefficient-variation/

When Do You Use One-Way ANOVA? • Compare the means between levels of one independent variable • One independent categorical variable with 2+ levels • Checks whether at least two levels’ means are significantly different from one another • A post-hoc test (i.e. another test run after the ANOVA) can tell you which groups were different and the magnitude of the difference

Groups vs. Levels • Example: Self-reported stress levels in different income groups • Dependent variable: Stress levels • Independent variable or group: Income • Levels within independent variable where means are compared: High, Medium, Low

One-Way ANOVA Example Scenarios • Often used to analyze simple experiments • A group of psychiatric patients are trying three different therapies: counseling, medication and biofeedback. You want to see if one therapy is better than the others. • A manufacturer has two different processes to make light bulbs. They want to know if one process is better than the other. • Students from different colleges take the same exam. You want to see if one college outperforms the others. Examples from: https://www.statisticshowto.datasciencecentral.com/probability-and-statistics/hypothesis-testing/anova/

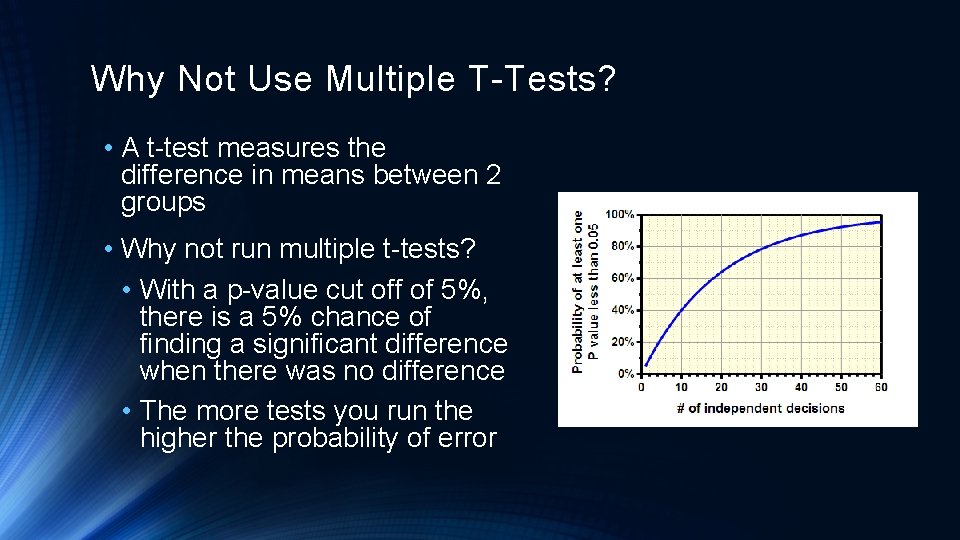

Why Not Use Multiple T-Tests? • A t-test measures the difference in means between 2 groups • Why not run multiple t-tests? • With a p-value cut off of 5%, each test has a 5% chance of finding a significant difference when there is no real difference (a false positive) • The more tests you run, the higher the probability that at least one of them is a false positive
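A rough sketch of how quickly that error rate grows. It assumes the tests are independent and each uses a 5% cut off; pairwise t-tests on the same data are not fully independent, so treat the numbers as approximate. The function name familywise.error is made up for this illustration.

```r
# Probability of at least one false positive across k independent tests,
# each run at significance level alpha.
familywise.error <- function(k, alpha = 0.05) {
  1 - (1 - alpha)^k
}

# Number of pairwise t-tests needed to compare every pair of 3 to 6 levels
n.levels <- 3:6
n.pairs  <- choose(n.levels, 2)

data.frame(levels           = n.levels,
           pairwise.tests   = n.pairs,
           familywise.error = round(familywise.error(n.pairs), 3))
```

With only 3 levels (3 pairwise tests) the chance of at least one false positive is already about 14%, which is why a single ANOVA is run first.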

One-Way ANOVA: Learn By Example

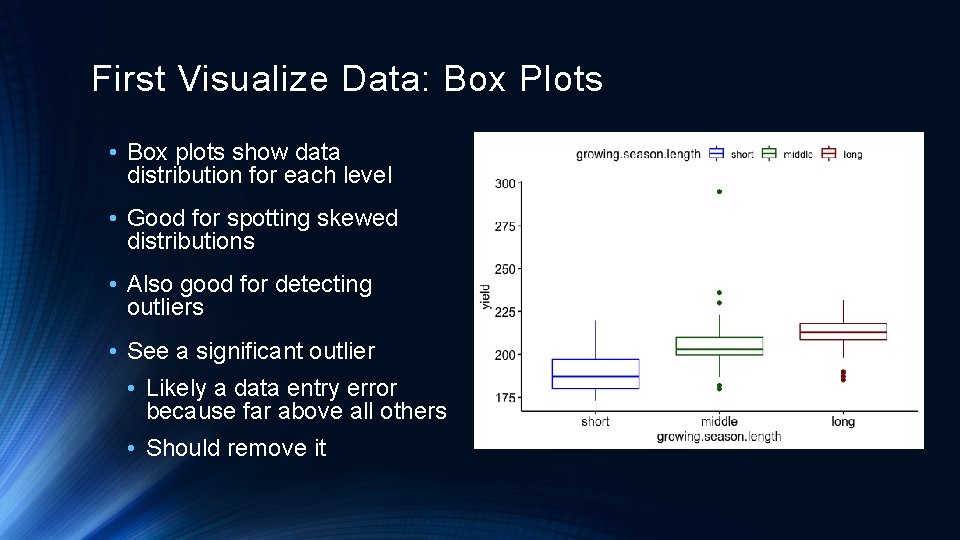

First Visualize Data: Box Plots • Box plots show data distribution for each level • Good for spotting skewed distributions • Also good for detecting outliers • This plot shows one extreme outlier • Likely a data entry error because it is far above all other values • Should be removed
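A minimal sketch of this step on simulated data. The slides' yield.df is not available here, so the level names (short, medium, long), the group means, and the planted value of 1500 are all assumptions for illustration.

```r
set.seed(1)

# Hypothetical stand-in for the slides' data: 3 levels, 40 points each
yield.df <- data.frame(
  growing.season.length = factor(rep(c("short", "medium", "long"), each = 40),
                                 levels = c("short", "medium", "long")),
  yield = c(rnorm(40, mean = 150, sd = 10),
            rnorm(40, mean = 165, sd = 10),
            rnorm(40, mean = 180, sd = 10))
)

# Plant one implausible value to mimic a data entry error
yield.df$yield[5] <- 1500

# One box per level; boxplot() also returns the points it drew as outliers
bp <- boxplot(yield ~ growing.season.length, data = yield.df,
              xlab = "Growing season length", ylab = "Yield")
bp$out

# Remove the planted error (only do this once you are confident
# the value is not a real measurement)
yield.clean <- yield.df[yield.df$yield < 1000, ]
nrow(yield.clean)
```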

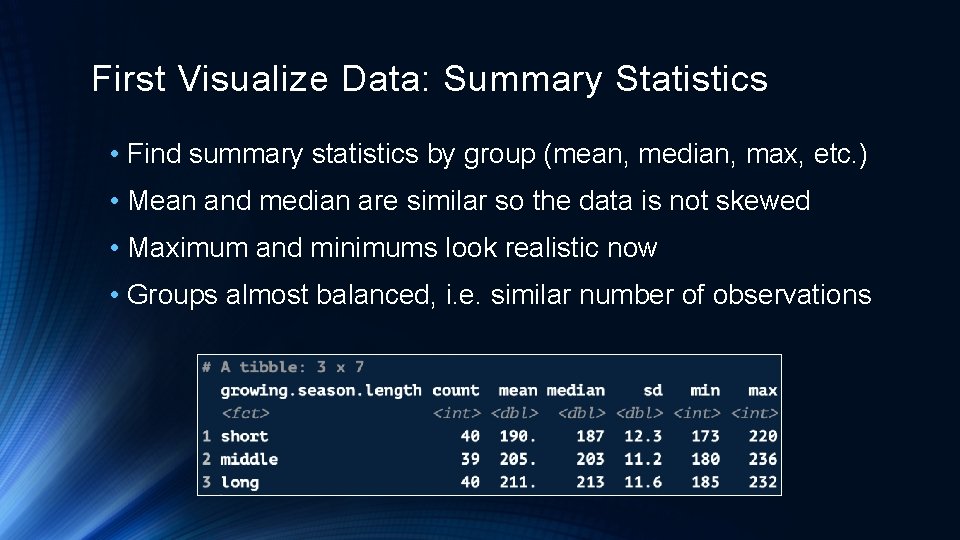

First Visualize Data: Summary Statistics • Find summary statistics by group (mean, median, max, etc.) • Mean and median are similar, so the data is not strongly skewed • Maximums and minimums look realistic now that the outlier is removed • Groups almost balanced, i.e. similar number of observations
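One way to get these statistics with base R, sketched on simulated data. The level names, group sizes (40, 40, 39) and group means below are assumptions, chosen only so the total matches the 119 data points mentioned later.

```r
set.seed(42)

# Hypothetical stand-in for yield.df: almost balanced groups, 119 rows
group.sizes <- c(short = 40, medium = 40, long = 39)
yield.df <- data.frame(
  growing.season.length = factor(rep(names(group.sizes), times = group.sizes),
                                 levels = names(group.sizes)),
  yield = rnorm(sum(group.sizes),
                mean = rep(c(150, 165, 180), times = group.sizes), sd = 10)
)

# Split yield by level and compute the same statistics for each group
stats.by.group <- t(sapply(
  split(yield.df$yield, yield.df$growing.season.length),
  function(x) c(n = length(x), mean = mean(x), median = median(x),
                min = min(x), max = max(x))
))
round(stats.by.group, 1)
```

Each row is one level. Compare the mean and median columns to judge skew, and the n column to judge balance.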

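ANOVA Null and Alternative Hypotheses • Null hypothesis: the means of all levels are equal • Alternative hypothesis: the means of at least two levels differ from one another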

Running a One-Way ANOVA in R

Running a One-Way ANOVA in R • yield.aov <- aov(yield ~ growing.season.length, data = yield.df) • Dependent variable (yield): What we are trying to explain • Independent variable (growing.season.length): Testing its relationship to yield • data = yield.df: Data frame with data • summary(yield.aov): Print results
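The call above can be tried end to end on simulated data. This is a sketch, not the slides' data set: the level names, group sizes and group means are assumptions for illustration.

```r
set.seed(42)

# Hypothetical stand-in for yield.df (119 rows, 3 levels)
group.sizes <- c(short = 40, medium = 40, long = 39)
yield.df <- data.frame(
  growing.season.length = factor(rep(names(group.sizes), times = group.sizes),
                                 levels = names(group.sizes)),
  yield = rnorm(sum(group.sizes),
                mean = rep(c(150, 165, 180), times = group.sizes), sd = 10)
)

# Fit the one-way ANOVA: dependent variable ~ independent variable
yield.aov <- aov(yield ~ growing.season.length, data = yield.df)

# Print the ANOVA table (Df, Sum Sq, Mean Sq, F value, Pr(>F))
summary(yield.aov)
```

The independent variable should be a factor. If it is stored as a number, aov() treats it as a continuous predictor rather than as group levels.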

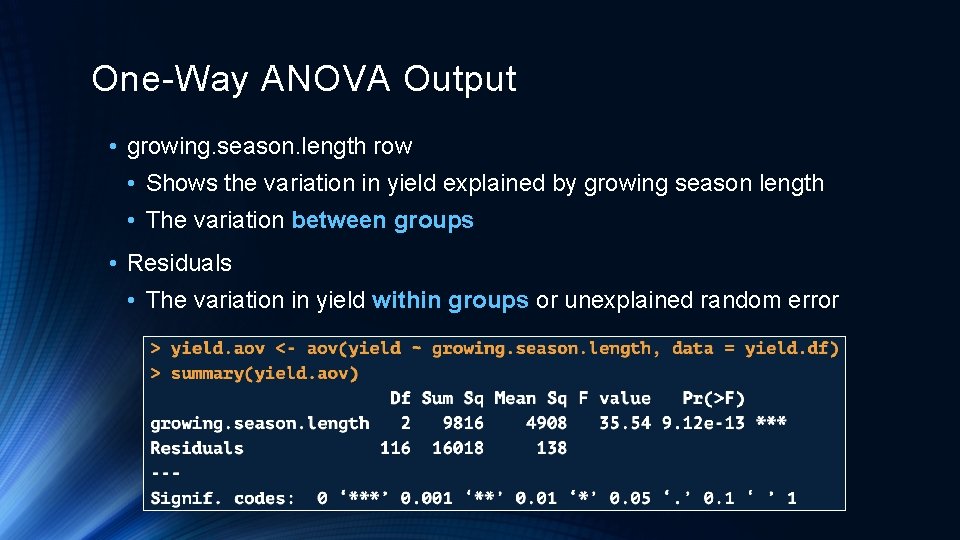

One-Way ANOVA Output • growing.season.length row • Shows the variation in yield explained by growing season length • The variation between groups • Residuals • The variation in yield within groups or unexplained random error

Degrees of Freedom • Df for growing.season.length = (number of group levels) – 1 • Df for Residuals = (number of total data points) – (number of group levels) • 3 levels in growing.season.length and 119 total data points, so Df = 3 – 1 = 2 and 119 – 3 = 116

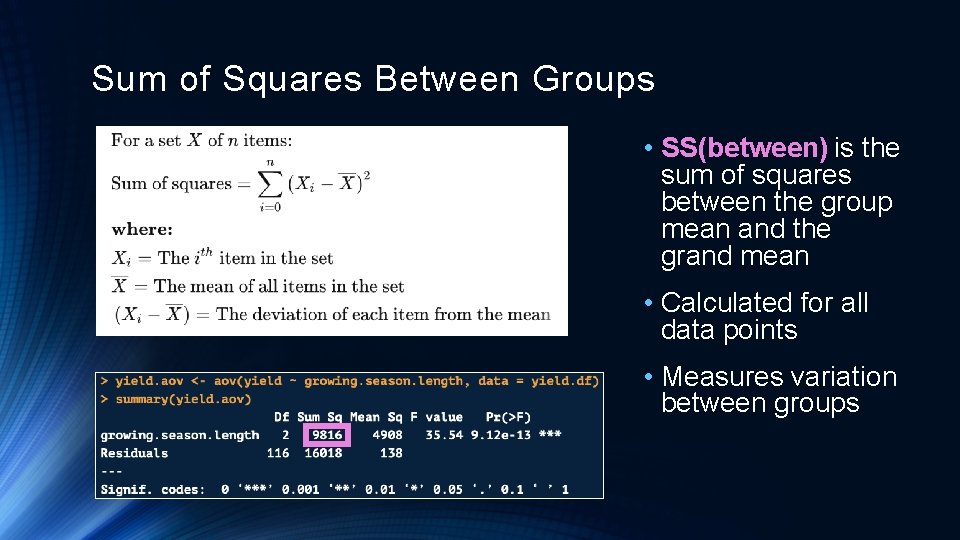

Sum of Squares Between Groups • SS(between) is the sum of squared differences between each point's group mean and the grand mean • Calculated for all data points • Measures variation between groups

Total Sum of Squares • SS(total) is the sum of squared differences between each point and the mean of the entire data set (the grand mean) • Not pictured in output • Measures the total variability in the data set

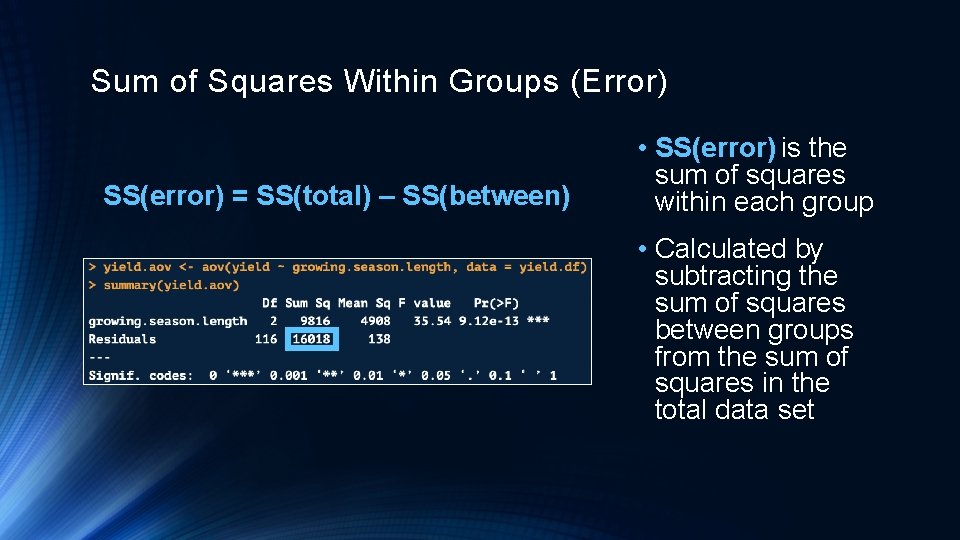

Sum of Squares Within Groups (Error) SS(error) = SS(total) – SS(between) • SS(error) is the sum of squared differences between each point and its own group mean • Can be calculated by subtracting the sum of squares between groups from the total sum of squares
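The three sums of squares can be computed by hand and checked against the aov() table. A sketch on simulated data; the data frame below is a hypothetical stand-in for the slides' yield.df.

```r
set.seed(42)

# Hypothetical stand-in for yield.df (119 rows, 3 levels)
group.sizes <- c(short = 40, medium = 40, long = 39)
yield.df <- data.frame(
  growing.season.length = factor(rep(names(group.sizes), times = group.sizes),
                                 levels = names(group.sizes)),
  yield = rnorm(sum(group.sizes),
                mean = rep(c(150, 165, 180), times = group.sizes), sd = 10)
)

grand.mean  <- mean(yield.df$yield)
# ave() returns, for every row, the mean of that row's group
group.means <- ave(yield.df$yield, yield.df$growing.season.length)

ss.total   <- sum((yield.df$yield - grand.mean)^2)   # point vs. grand mean
ss.between <- sum((group.means - grand.mean)^2)      # group mean vs. grand mean
ss.error   <- sum((yield.df$yield - group.means)^2)  # point vs. own group mean

c(total = ss.total, between = ss.between, error = ss.error)

# SS(error) = SS(total) - SS(between)
all.equal(ss.error, ss.total - ss.between)

# The "Sum Sq" column of the ANOVA table holds SS(between) and SS(error)
anova.table <- summary(aov(yield ~ growing.season.length, data = yield.df))[[1]]
all.equal(anova.table[["Sum Sq"]], c(ss.between, ss.error))
```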

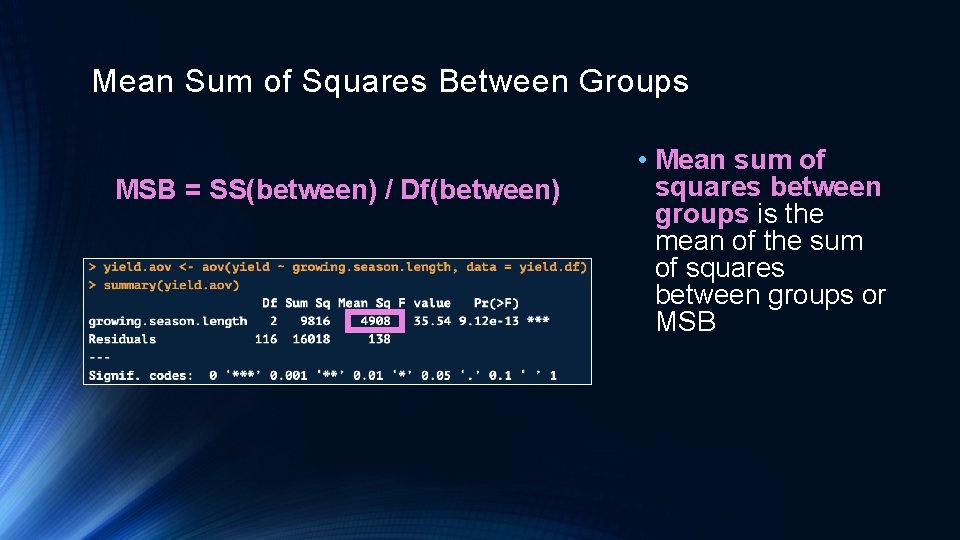

Mean Sum of Squares Between Groups MSB = SS(between) / Df(between) • The mean sum of squares between groups (MSB) is the sum of squares between groups divided by its degrees of freedom

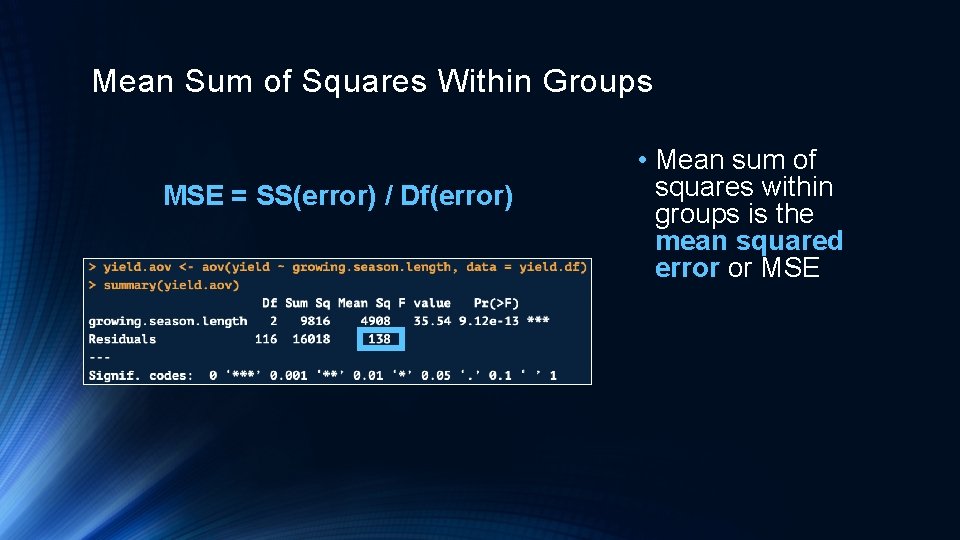

Mean Sum of Squares Within Groups MSE = SS(error) / Df(error) • The mean sum of squares within groups, also called the mean squared error (MSE), is the sum of squares within groups divided by its degrees of freedom

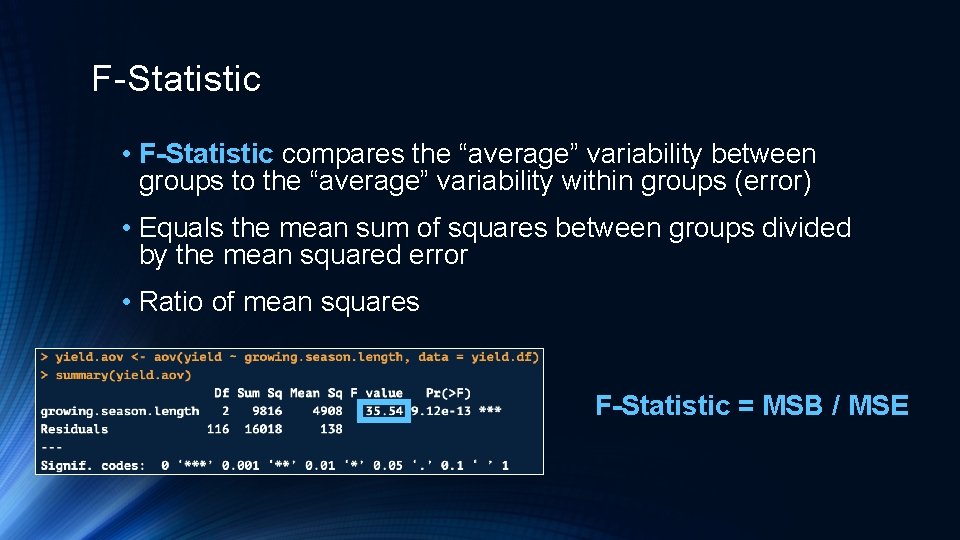

F-Statistic • F-Statistic compares the “average” variability between groups to the “average” variability within groups (error) • Equals the mean sum of squares between groups divided by the mean squared error • Ratio of mean squares F-Statistic = MSB / MSE
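The mean squares and the F-statistic follow directly from the sums of squares and degrees of freedom. A sketch on simulated data (a hypothetical stand-in for yield.df), checked against the aov() table:

```r
set.seed(42)

# Hypothetical stand-in for yield.df (119 rows, 3 levels)
group.sizes <- c(short = 40, medium = 40, long = 39)
yield.df <- data.frame(
  growing.season.length = factor(rep(names(group.sizes), times = group.sizes),
                                 levels = names(group.sizes)),
  yield = rnorm(sum(group.sizes),
                mean = rep(c(150, 165, 180), times = group.sizes), sd = 10)
)

y <- yield.df$yield
g <- yield.df$growing.season.length
group.means <- ave(y, g)                       # each row's group mean

ss.between <- sum((group.means - mean(y))^2)
ss.error   <- sum((y - group.means)^2)

df.between <- nlevels(g) - 1                   # 3 - 1 = 2
df.error   <- length(y) - nlevels(g)           # 119 - 3 = 116

msb    <- ss.between / df.between              # mean square between groups
mse    <- ss.error / df.error                  # mean squared error
f.stat <- msb / mse                            # ratio of mean squares

# p-value: upper tail of the F distribution with (df.between, df.error) df
p.value <- pf(f.stat, df.between, df.error, lower.tail = FALSE)

c(MSB = msb, MSE = mse, F = f.stat, p = p.value)

# Compare with the table R prints
anova.table <- summary(aov(yield ~ growing.season.length, data = yield.df))[[1]]
all.equal(anova.table[["F value"]][1], f.stat)
```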

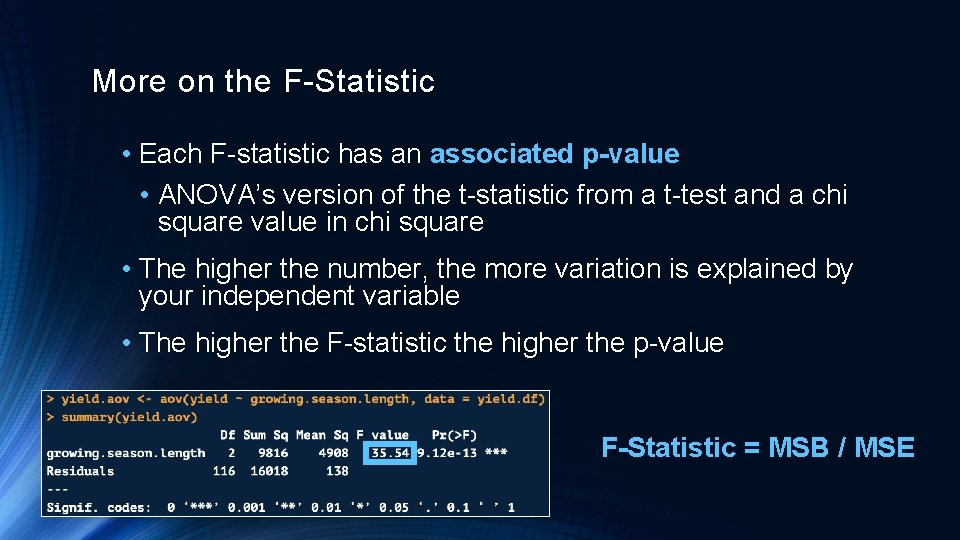

More on the F-Statistic • Each F-statistic has an associated p-value • ANOVA’s version of the t-statistic from a t-test or the chi-square statistic from a chi-square test • The higher the number, the more variation is explained by your independent variable relative to the unexplained variation • The higher the F-statistic, the lower the p-value F-Statistic = MSB / MSE
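For fixed degrees of freedom, the p-value falls as the F-statistic rises. A quick illustration using the degrees of freedom from the example (2 and 116); the F values themselves are arbitrary picks for the demonstration.

```r
# Arbitrary F values, from small to large
f.values <- c(0.5, 1, 2, 3, 5, 10)

# Upper-tail probability of the F distribution with 2 and 116 df
p.values <- pf(f.values, df1 = 2, df2 = 116, lower.tail = FALSE)

data.frame(f.statistic = f.values, p.value = signif(p.values, 3))
```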

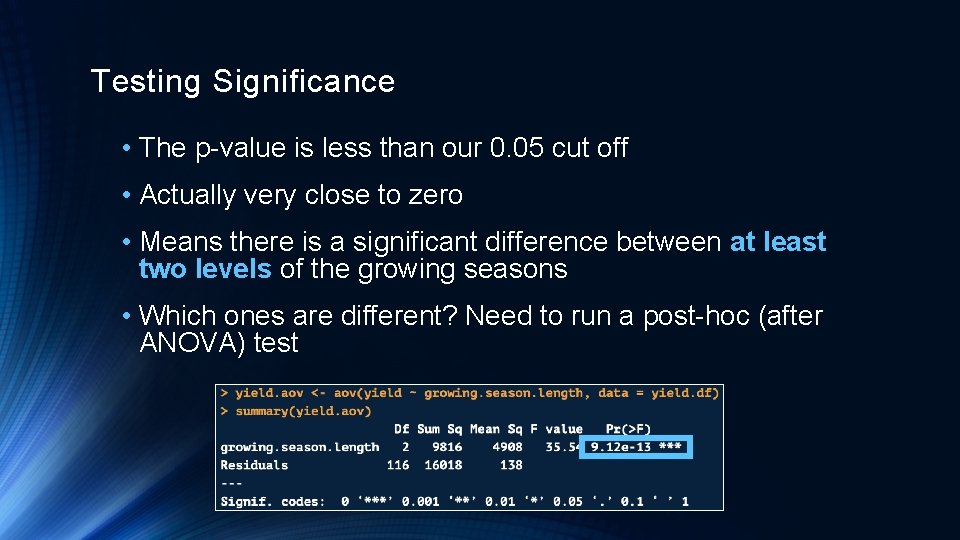

Testing Significance • The p-value is less than our 0.05 cut off • Actually very close to zero • Means there is a significant difference in mean yield between at least two levels of growing season length • Which ones are different? Need to run a post-hoc (after ANOVA) test

Tukey’s Honest Significant Difference (HSD) • The Tukey’s HSD test uses the results from an ANOVA to do pairwise comparisons of the means for each level of your independent variable • Tells you if the difference between level 1 and level 2 is significant, then level 2 and level 3, etc. • Run only after you run an ANOVA and its p-value is significant

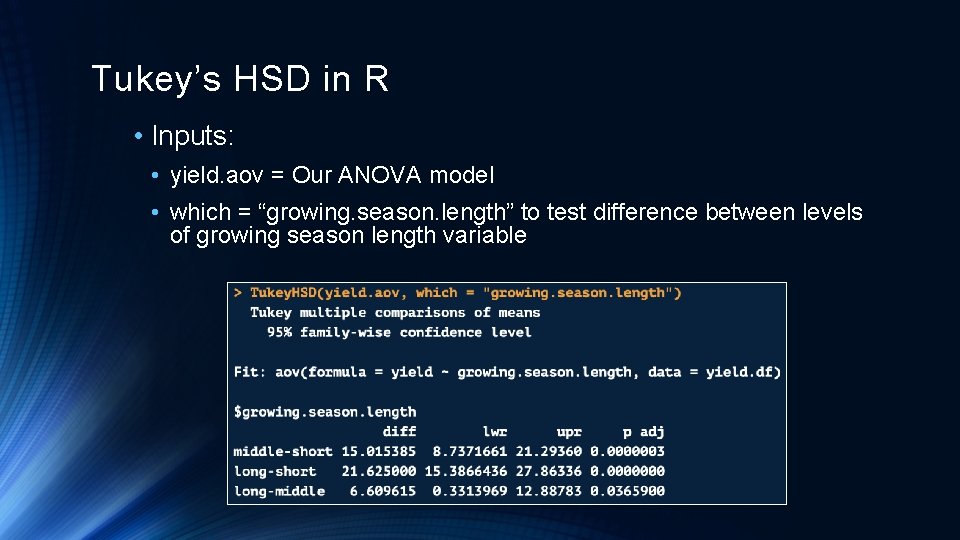

Tukey’s HSD in R • Inputs: • yield.aov = Our ANOVA model • which = "growing.season.length" to test difference between levels of growing season length variable

Tukey’s HSD in R • Outputs: • diff = Mean difference between the two groups • lwr, upr = Lower and upper bounds of the 95% confidence interval for the difference • p adj = P-value of the difference in means, adjusted for multiple comparisons • All growing season length means are significantly different from one another
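A sketch of the full call using base R's TukeyHSD(), run on simulated data. The data frame is a hypothetical stand-in for yield.df, so the numbers will not match the slides' output.

```r
set.seed(42)

# Hypothetical stand-in for yield.df (119 rows, 3 levels)
group.sizes <- c(short = 40, medium = 40, long = 39)
yield.df <- data.frame(
  growing.season.length = factor(rep(names(group.sizes), times = group.sizes),
                                 levels = names(group.sizes)),
  yield = rnorm(sum(group.sizes),
                mean = rep(c(150, 165, 180), times = group.sizes), sd = 10)
)

yield.aov <- aov(yield ~ growing.season.length, data = yield.df)

# Pairwise comparisons of every pair of levels, 95% family-wise confidence
yield.tukey <- TukeyHSD(yield.aov, which = "growing.season.length",
                        conf.level = 0.95)
yield.tukey

# The result is a list with one matrix per factor; columns are
# diff, lwr, upr and p adj, with one row per pair of levels
tukey.table <- yield.tukey[["growing.season.length"]]
tukey.table[, "p adj"] < 0.05
```

A pair is significantly different when its p adj is below the cut off, which is equivalent to its lwr to upr interval not containing zero.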

Checking ANOVA Assumptions

ANOVA Assumptions • Observations are independent of one another • Would know based on domain knowledge • Residuals for each factor level are normally distributed • Tested with Q-Q plot and Shapiro-Wilk test • Homogeneity of variances (equal variances between groups) • Tested with Levene’s Test • May want to remove significant outliers

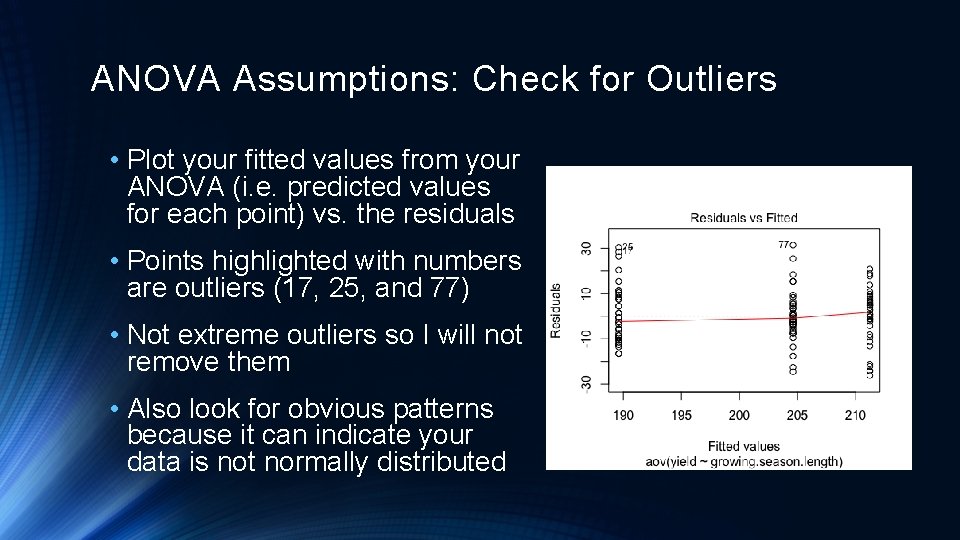

ANOVA Assumptions: Check for Outliers • Plot your fitted values from your ANOVA (i.e. predicted values for each point) vs. the residuals • Points labeled with numbers (17, 25, and 77) are potential outliers • Not extreme outliers so I will not remove them • Also look for obvious patterns, because they can indicate that an assumption (such as equal variances) is violated

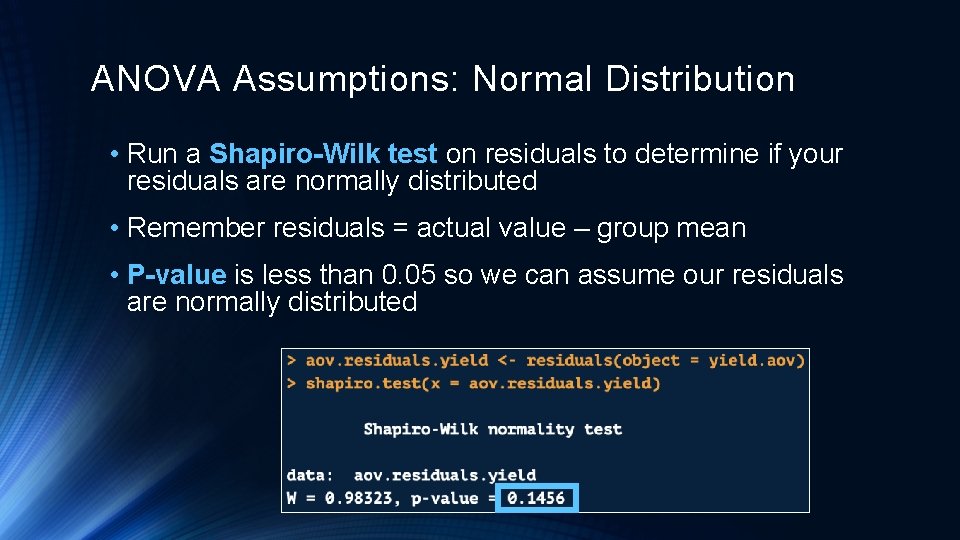

ANOVA Assumptions: Normal Distribution • Run a Shapiro-Wilk test on residuals to determine if your residuals are normally distributed • Remember residuals = actual value – group mean • The null hypothesis of the test is that the residuals are normally distributed • P-value is greater than 0.05, so we do not reject that hypothesis and can treat our residuals as normally distributed

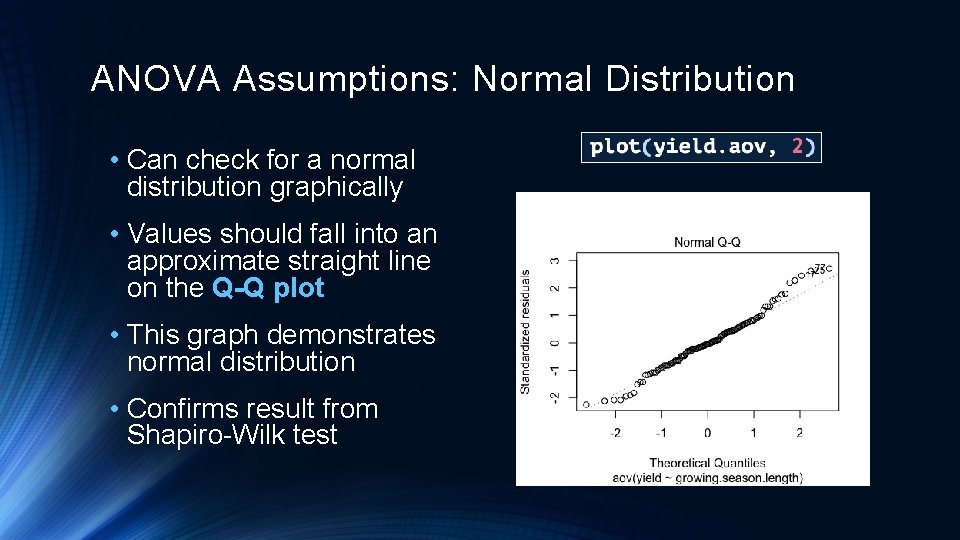

ANOVA Assumptions: Normal Distribution • Can check for a normal distribution graphically • Values should fall into an approximate straight line on the Q-Q plot • This graph is consistent with a normal distribution • Agrees with the result from the Shapiro-Wilk test
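Both normality checks use base R functions. A sketch on simulated data (a hypothetical stand-in for yield.df); the exact plotting calls used in the slides are not shown in the text, so qqnorm() and qqline() are an assumption here.

```r
set.seed(42)

# Hypothetical stand-in for yield.df (119 rows, 3 levels)
group.sizes <- c(short = 40, medium = 40, long = 39)
yield.df <- data.frame(
  growing.season.length = factor(rep(names(group.sizes), times = group.sizes),
                                 levels = names(group.sizes)),
  yield = rnorm(sum(group.sizes),
                mean = rep(c(150, 165, 180), times = group.sizes), sd = 10)
)

yield.aov <- aov(yield ~ growing.season.length, data = yield.df)

# Residuals = actual value - group mean
yield.res <- residuals(yield.aov)
manual.res <- yield.df$yield -
  ave(yield.df$yield, yield.df$growing.season.length)
all.equal(unname(yield.res), manual.res)

# Shapiro-Wilk test: the null hypothesis is that the residuals are normal,
# so a p-value above 0.05 means normality is not rejected
yield.shapiro <- shapiro.test(yield.res)
yield.shapiro

# Q-Q plot: points close to the reference line suggest normal residuals
qqnorm(yield.res)
qqline(yield.res)
```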

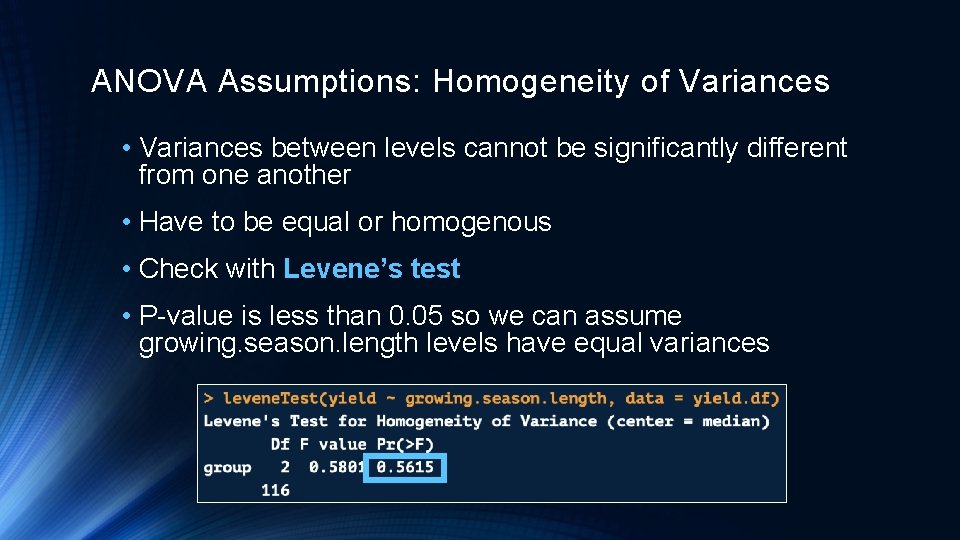

ANOVA Assumptions: Homogeneity of Variances • Variances between levels cannot be significantly different from one another • Have to be equal or homogeneous • Check with Levene’s test • The null hypothesis of the test is that the variances are equal • P-value is greater than 0.05, so we do not reject that hypothesis and can treat growing.season.length levels as having equal variances
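Levene's test is commonly run with leveneTest() from the car package. To stay within base R, the sketch below computes the median-centered version (often called the Brown-Forsythe variant) by hand: it is a one-way ANOVA on each point's absolute deviation from its group median. The data frame is a hypothetical stand-in for yield.df, and the column name abs.dev is made up for this illustration.

```r
set.seed(42)

# Hypothetical stand-in for yield.df (119 rows, 3 levels)
group.sizes <- c(short = 40, medium = 40, long = 39)
yield.df <- data.frame(
  growing.season.length = factor(rep(names(group.sizes), times = group.sizes),
                                 levels = names(group.sizes)),
  yield = rnorm(sum(group.sizes),
                mean = rep(c(150, 165, 180), times = group.sizes), sd = 10)
)

# Absolute deviation of each point from its own group median
group.medians <- ave(yield.df$yield, yield.df$growing.season.length,
                     FUN = median)
yield.df$abs.dev <- abs(yield.df$yield - group.medians)

# One-way ANOVA on the deviations: a small p-value would mean the spread
# differs between levels, i.e. the equal-variance assumption is in doubt
levene.table <- summary(aov(abs.dev ~ growing.season.length,
                            data = yield.df))[[1]]
levene.table

levene.p <- levene.table[["Pr(>F)"]][1]
levene.p
```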

Two-Way ANOVA Overview

Two-Way ANOVA • With a one-way ANOVA we measured the relationship between one independent variable with 2+ levels and a dependent variable • Our example: Relationship between growing season length and corn yield • A Two-Way ANOVA performs this same task except with 2 independent variables with 2+ levels each

Two-Way ANOVA Example • Will walk through an example from this link: http://www.sthda.com/english/wiki/two-way-anova-test-in-r using a built-in R data set • The built-in R data set ToothGrowth records the effect of Vitamin C on tooth growth in guinea pigs • Independent Variable 1: Dose of Vitamin C: 0.5, 1.0, 2.0 mg/day • Independent Variable 2: Form of Vitamin C, either ascorbic acid (coded VC in the data set) or orange juice (OJ) • Dependent Variable: Length of odontoblasts (cells responsible for tooth growth)

Two-Way ANOVA Walkthrough in R
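A minimal sketch of the walkthrough using the built-in ToothGrowth data set (columns len, supp and dose). The object names tooth.df, additive.aov and interaction.aov are made up here, and the steps follow the usual pattern for a two-way ANOVA rather than reproducing the slides' exact code.

```r
# Copy the built-in data set and treat the three doses as group levels
tooth.df <- ToothGrowth
tooth.df$dose <- factor(tooth.df$dose)

# Observations per combination of supplement form and dose
cell.counts <- table(tooth.df$supp, tooth.df$dose)
cell.counts

# Additive model: main effects of supplement form and dose
additive.aov <- aov(len ~ supp + dose, data = tooth.df)
summary(additive.aov)

# Model with interaction: supp * dose expands to supp + dose + supp:dose,
# testing whether the effect of dose depends on the supplement form
interaction.aov <- aov(len ~ supp * dose, data = tooth.df)
interaction.table <- summary(interaction.aov)[[1]]
interaction.table
```

As in the one-way case, each row of the table has its own F-statistic and p-value. The supp:dose row tests the interaction.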

Useful Links for Learning ANOVA • http://www.sthda.com/english/wiki/two-way-anova-test-in-r • https://www.analyticsvidhya.com/blog/2018/01/anova-analysis-of-variance/ • https://www.statisticshowto.datasciencecentral.com/probability-and-statistics/hypothesis-testing/anova/ • https://www.guru99.com/r-anova-tutorial.html • https://newonlinecourses.science.psu.edu/stat414/node/218/

Questions?
