Statistics & Data Analysis

Regression and Correlation

Course Number: B01.1305, Course Section: 31, Meeting Time: Wednesday 6-8:50 pm

Professor S. D. Balkin -- March 5, 2003

Class Outline

- Review of last class
- Analyzing bivariate data
  - Correlation Analysis
  - Regression Analysis
- Discuss Midterm Exam

Review of Last Class

- Research Hypothesis, or Alternative Hypothesis, is what the researcher is trying to prove
  - Denoted: Ha
- Null Hypothesis is the denial of the research hypothesis. It is what the researcher is trying to disprove
  - Denoted: H0
- Basic Logic
  1. Assume that H0 is true
  2. Calculate the value of the test statistic
  3. If this value is highly unlikely, reject H0 and support Ha; otherwise, fail to reject H0 due to lack of evidence
  4. Calculate the p-value to determine the significance of the result
- We can use the sampling distribution to determine what values of the test statistic are sufficiently unlikely given the null hypothesis

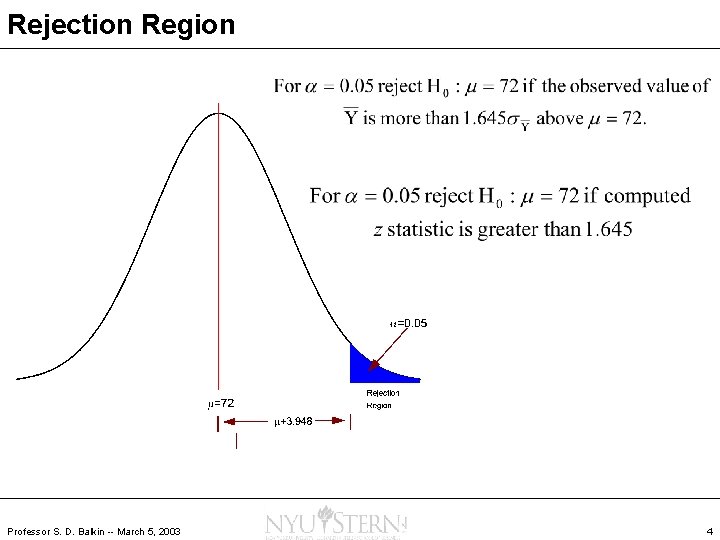

Rejection Region

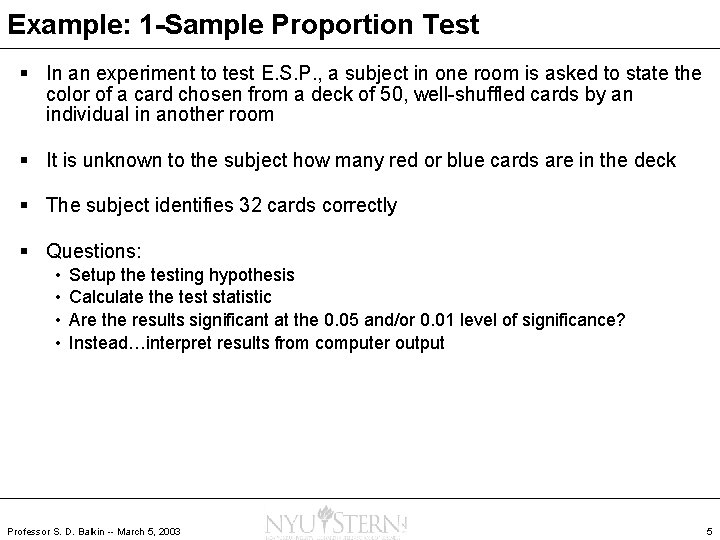

Example: 1-Sample Proportion Test

- In an experiment to test E.S.P., a subject in one room is asked to state the color of a card chosen from a deck of 50 well-shuffled cards by an individual in another room
- It is unknown to the subject how many red or blue cards are in the deck
- The subject identifies 32 cards correctly
- Questions:
  - Set up the testing hypothesis
  - Calculate the test statistic
  - Are the results significant at the 0.05 and/or 0.01 level of significance?
- Instead, interpret results from computer output

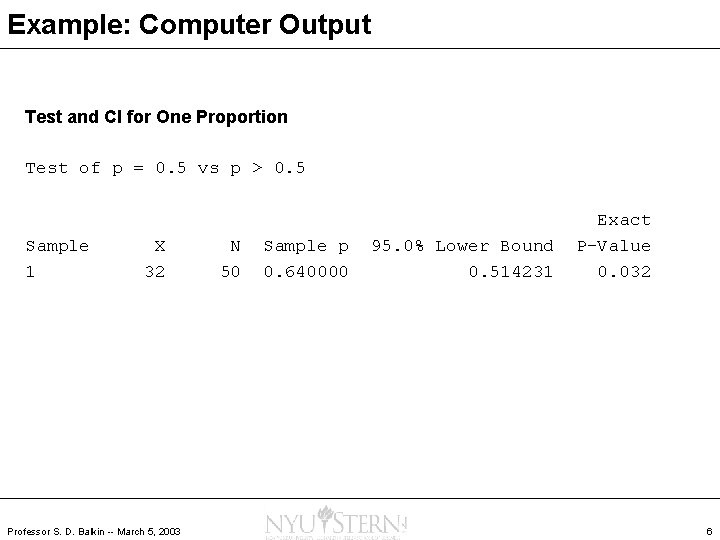

Example: Computer Output

Test and CI for One Proportion

Test of p = 0.5 vs p > 0.5

| Sample | X | N | Sample p | 95.0% Lower Bound | Exact P-Value |
|---|---|---|---|---|---|
| 1 | 32 | 50 | 0.640000 | 0.514231 | 0.032 |
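
The exact p-value in this output can be checked by summing binomial probabilities directly. The sketch below assumes the null hypothesis is pure guessing (p = 0.5) and a one-sided alternative (p > 0.5), as in the output; the helper name `binomial_upper_tail` is illustrative and not part of any statistics package.

```python
from math import comb

n = 50      # cards in the deck (from the slide)
x = 32      # cards identified correctly (from the slide)
p0 = 0.5    # null-hypothesis proportion: the subject is only guessing

def binomial_upper_tail(x, n, p):
    """Return P(X >= x) for X ~ Binomial(n, p)."""
    return sum(comb(n, k) * p**k * (1 - p)**(n - k) for k in range(x, n + 1))

sample_p = x / n
p_value = binomial_upper_tail(x, n, p0)

print(f"sample p = {sample_p:.2f}")
print(f"exact one-sided p-value = {p_value:.3f}")
print("significant at 0.05:", p_value < 0.05)
print("significant at 0.01:", p_value < 0.01)
```

Under these assumptions the result is significant at the 0.05 level but not at the 0.01 level.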

Linear Regression and Correlation Methods

Chapter 11

Chapter Goals

- Introduction to Bivariate Data Analysis
  - Introduction to Simple Linear Regression Analysis
  - Introduction to Linear Correlation Analysis
- Interpret scatterplots
- Understand linear models and parameter estimation
- Identify high-leverage observations
- Understand the use of transformations
- Perform hypothesis tests and confidence intervals for linear model and correlation coefficient

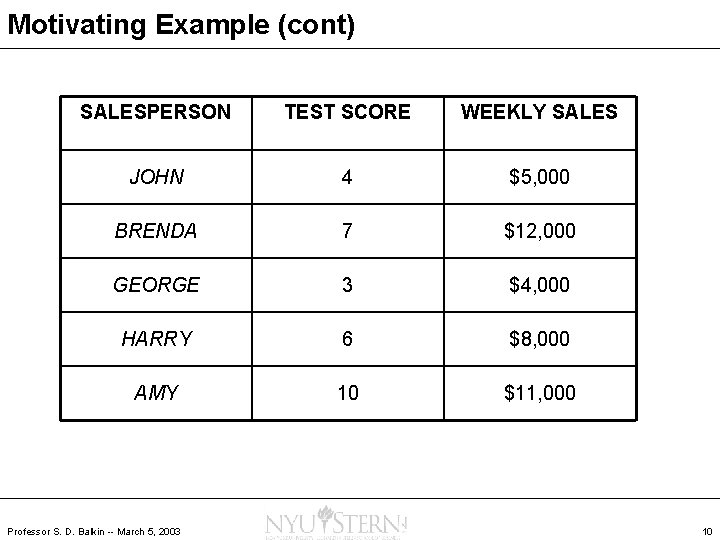

Motivating Example

- Before a pharmaceutical sales rep can speak about a product to physicians, he must pass a written exam
- An HR rep designed such a test in the hope of hiring the best possible reps to promote a drug in a high-potential area
- In order to check the validity of the test as a predictor of weekly sales, he chose 5 experienced sales reps and piloted the test with each one
- The test scores and weekly sales are given in the following table:

Motivating Example (cont.)

| Salesperson | Test Score | Weekly Sales |
|---|---|---|
| John | 4 | $5,000 |
| Brenda | 7 | $12,000 |
| George | 3 | $4,000 |
| Harry | 6 | $8,000 |
| Amy | 10 | $11,000 |

Introduction to Bivariate Data

- Up until now, we’ve focused on univariate data
- Analyzing how two (or more) variables “relate” is very important to managers
  - Prediction equations
  - Estimate uncertainty around a prediction
  - Identify unusual points
  - Describe relationship between variables
- Visualization
  - Scatterplot

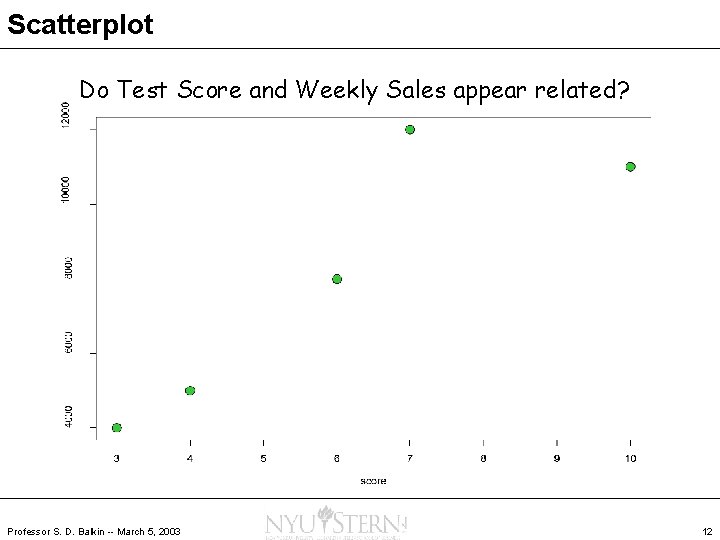

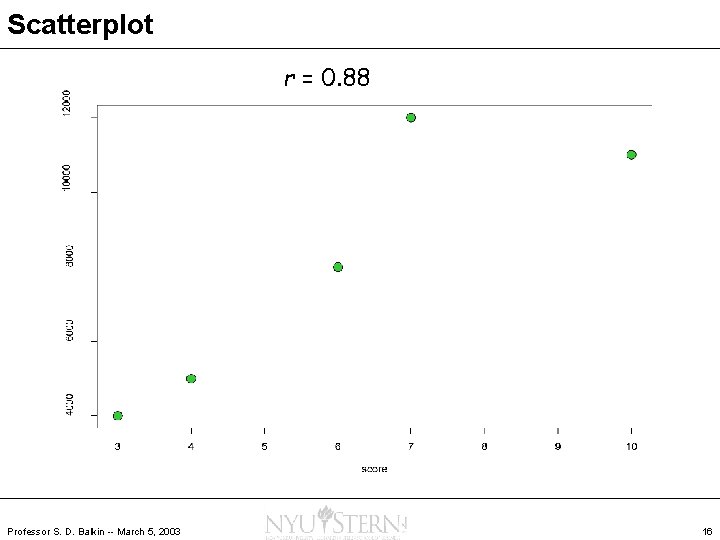

Scatterplot

Do Test Score and Weekly Sales appear related?

Correlation

Boomers' Little Secret Still Smokes Up the Closet (July 14, 2002)

…Parental cigarette smoking, past or current, appeared to have a stronger correlation to children's drug use than parental marijuana smoking, Dr. Kandel said. The researchers concluded that parents influence their children not according to a simple dichotomy -- by smoking or not smoking -- but by a range of attitudes and behaviors, perhaps including their style of discipline and level of parental involvement. Their own drug use was just one component among many…

A Bit of a Hedge to Balance the Market Seesaw (July 7, 2002)

…Some so-called market-neutral funds have had as many years of negative returns as positive ones. And some have a high correlation with the market's returns…

Correlation Analysis

- Statistical techniques used to measure the strength of the relationship between two variables
- Correlation Coefficient: describes the strength of the linear relationship between two variables
  - Denoted r
  - r assumes a value between -1 and +1
  - r = -1 or r = +1 indicates a perfect correlation
  - r = 0 indicates no linear relationship between the two variables
  - Direction of the relationship is given by the coefficient’s sign
  - Strength of relationship does not depend on the direction
  - r measures LINEAR relationship ONLY

Against All Odds: Correlation
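
As an illustration, the correlation coefficient for the motivating example can be computed directly from the test scores and weekly sales in the table. This is a minimal sketch using only the standard library; the function name `pearson_r` is illustrative.

```python
from math import sqrt

# Test scores and weekly sales from the motivating example table
score = [4, 7, 3, 6, 10]
sales = [5000, 12000, 4000, 8000, 11000]

def pearson_r(x, y):
    """Pearson correlation: Sxy / sqrt(Sxx * Syy)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    return sxy / sqrt(sxx * syy)

r = pearson_r(score, sales)
print(f"r = {r:.4f}")
```

The sign of r gives the direction of the relationship; negating every sales value would flip the sign but leave the magnitude unchanged.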

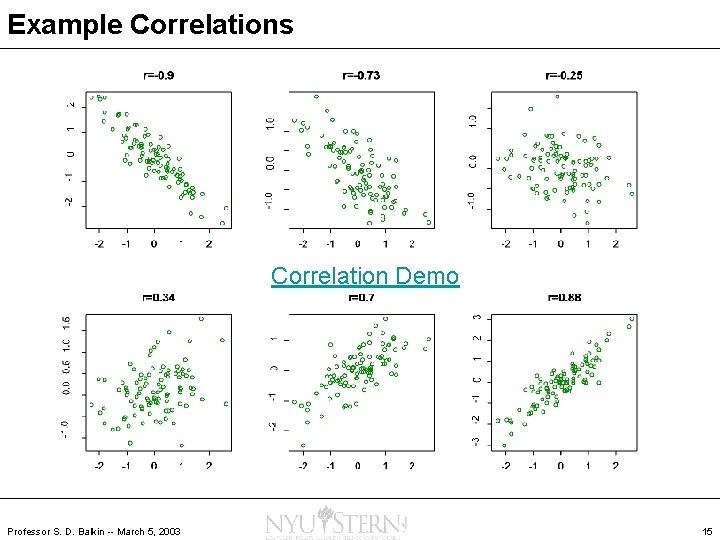

Example Correlations

Correlation Demo

Scatterplot

r = 0.88

Does Correlation Imply Causation?

Correlation and Causation

- Must be very careful in interpreting correlation coefficients
- Just because two variables are highly correlated does not mean that one causes the other
  - Ice cream sales and the number of shark attacks on swimmers are correlated
  - The miracle of the "Swallows" of Capistrano takes place each year at the Mission San Juan Capistrano on March 19th and is accompanied by a large number of human births around the same time
  - The number of cavities in elementary school children and vocabulary size have a strong positive correlation
- To establish causation, a designed experiment must be run

CORRELATION DOES NOT IMPLY CAUSATION

Against All Odds: Causation

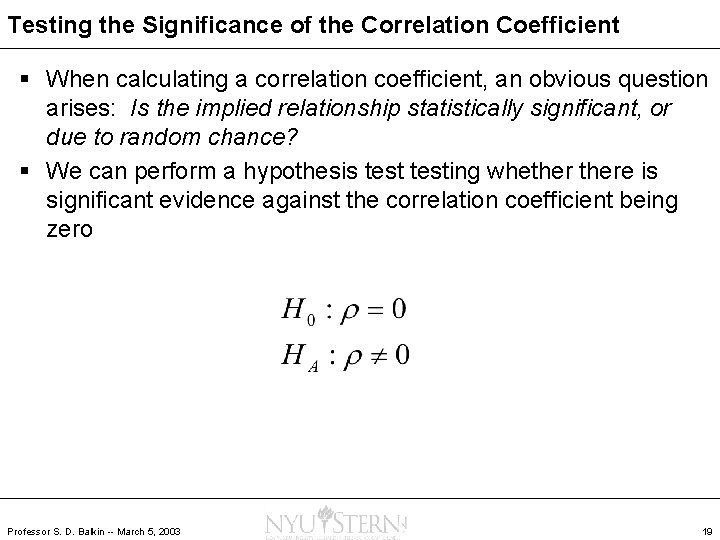

Testing the Significance of the Correlation Coefficient

- When calculating a correlation coefficient, an obvious question arises: Is the implied relationship statistically significant, or due to random chance?
- We can perform a hypothesis test of whether there is significant evidence against the correlation coefficient being zero

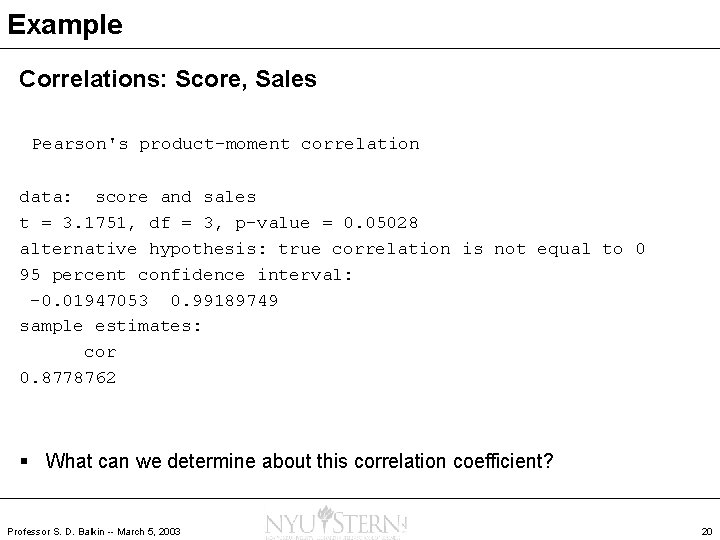

Example

Correlations: Score, Sales

    Pearson's product-moment correlation

    data:  score and sales
    t = 3.1751, df = 3, p-value = 0.05028
    alternative hypothesis: true correlation is not equal to 0
    95 percent confidence interval:
     -0.01947053  0.99189749
    sample estimates:
          cor
    0.8778762

- What can we determine about this correlation coefficient?
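
The t statistic and p-value in this output can be reproduced by hand. The sketch below uses the standard test statistic t = r·sqrt(n − 2) / sqrt(1 − r²) with n − 2 degrees of freedom. To stay within the standard library it uses a closed-form expression for the Student's t distribution function that holds only for 3 degrees of freedom; that shortcut is an assumption of this example and does not generalize to other sample sizes.

```python
from math import sqrt, atan, pi

r = 0.8778762   # sample correlation reported in the output
n = 5           # number of sales reps

df = n - 2
t = r * sqrt(df) / sqrt(1 - r**2)

def t_cdf_df3(t):
    """CDF of Student's t with exactly 3 degrees of freedom (closed form)."""
    u = t / sqrt(3)
    return 0.5 + (u / (1 + u * u) + atan(u)) / pi

# Two-sided p-value for H0: true correlation equals 0
p_value = 2 * (1 - t_cdf_df3(abs(t)))

print(f"t = {t:.4f}, df = {df}, p-value = {p_value:.5f}")
```

The p-value falls just above 0.05, so with only five observations the evidence against a zero correlation is borderline at that level, even though r itself is large.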

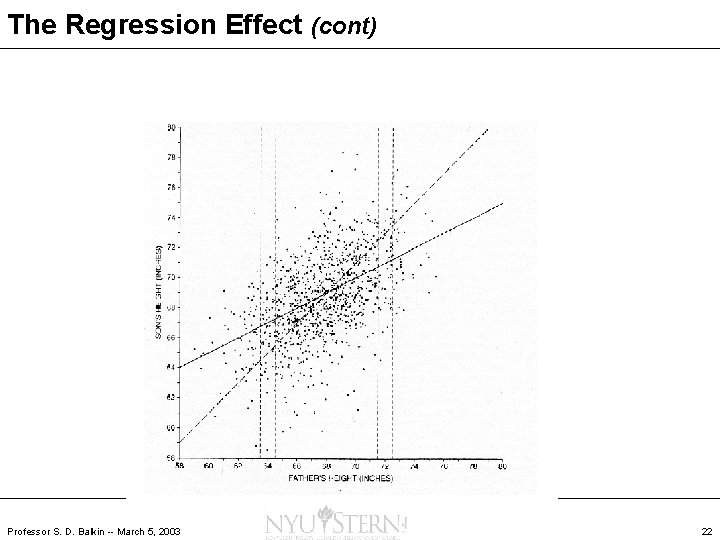

The Regression Effect

Sir Francis Galton (1822-1911) studied a data set of 1,078 heights of fathers and sons. His data set is on the next page. Two lines are superimposed: The dashed line is a 45-degree line, shifted up by one inch since on the average the sons were one inch taller than the fathers. The solid line is one that better fits the data set. (It’s the least squares line, to be described soon.)

Galton observed that tall fathers tend to have tall sons but the sons are not, on average, as tall as the fathers. Also, short fathers have short sons who, however, are not as short on average as their fathers. Galton called this effect “regression to the mean”. In other words, the son’s height tends to be closer to the overall mean height than the father’s height was.

Nowadays, the term “regression” is used more generally in statistics to refer to the process of fitting a line to data.

The Regression Effect (cont.)

Regression Analysis

- Simple Regression Analysis is predicting one variable from another
  - Past data on relevant variables are used to create and evaluate a prediction equation
- Variable being predicted is called the dependent variable
- Variable used to make the prediction is called the independent variable

Introduction to Regression

- Predicting future values of a variable is a crucial management activity
  - Future cash flows
  - Needs for raw materials in a supply chain
  - Future personnel or real estate needs
- Explaining past variation is also an important activity
  - Explain past variation in demand for services
  - Impact of an advertising campaign or promotion

Against All Odds: Describing Relationships

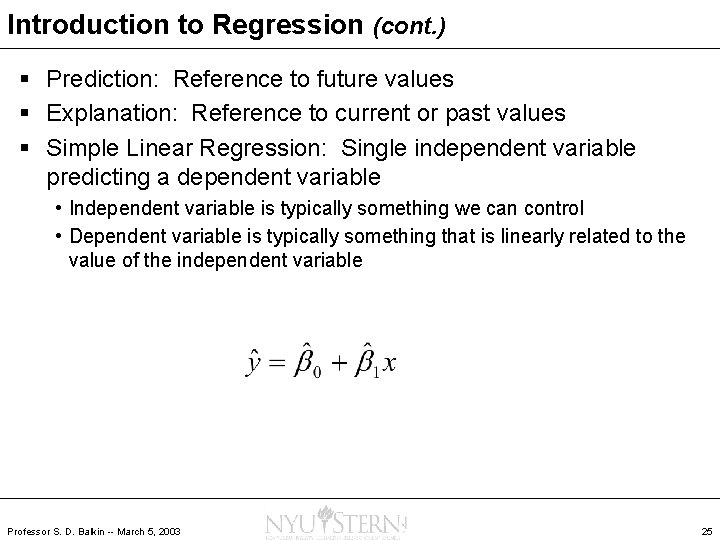

Introduction to Regression (cont.)

- Prediction: Reference to future values
- Explanation: Reference to current or past values
- Simple Linear Regression: Single independent variable predicting a dependent variable
  - Independent variable is typically something we can control
  - Dependent variable is typically something that is linearly related to the value of the independent variable

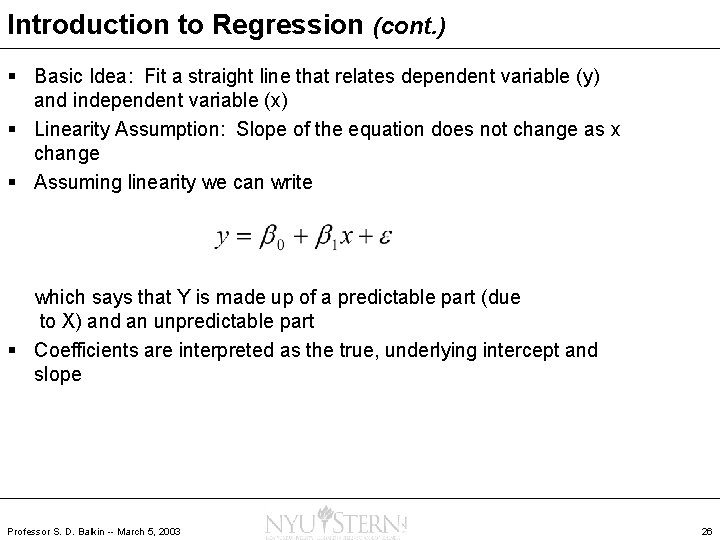

Introduction to Regression (cont.)

- Basic Idea: Fit a straight line that relates the dependent variable (y) and the independent variable (x)
- Linearity Assumption: Slope of the equation does not change as x changes
- Assuming linearity, we can write a model which says that Y is made up of a predictable part (due to X) and an unpredictable part
- Coefficients are interpreted as the true, underlying intercept and slope
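
The equation on this slide is not present in the extracted text. Assuming the standard simple linear regression model, which matches the description of a predictable part due to X plus an unpredictable part, it would read as follows, where the symbols β0 (true intercept), β1 (true slope), and ε (random error) are the conventional notation rather than something confirmed by the slide:

$$
Y = \underbrace{\beta_0 + \beta_1 X}_{\text{predictable part}} + \underbrace{\varepsilon}_{\text{unpredictable part}}
$$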

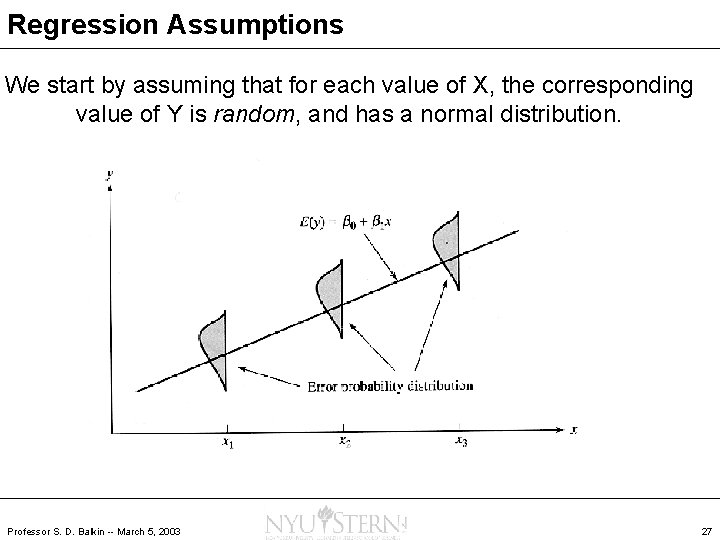

Regression Assumptions

We start by assuming that for each value of X, the corresponding value of Y is random, and has a normal distribution.

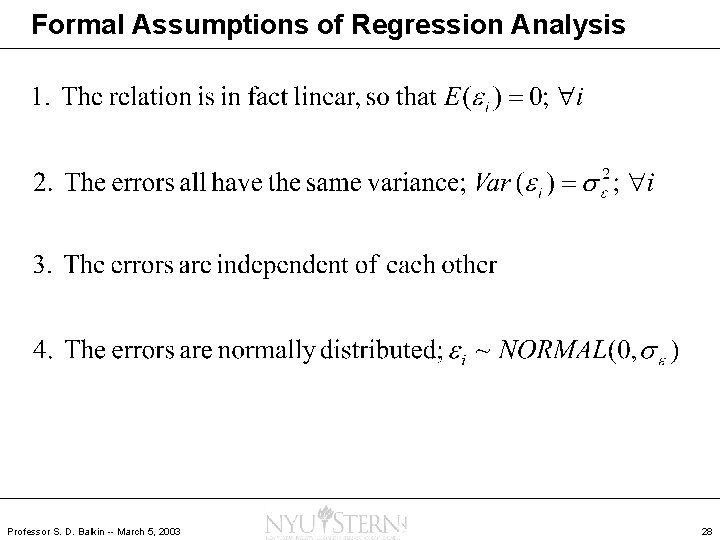

Formal Assumptions of Regression Analysis

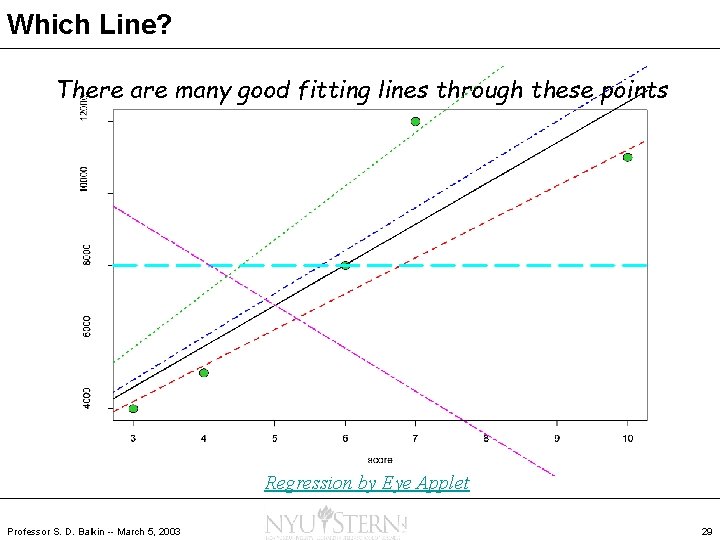

Which Line?

There are many good-fitting lines through these points

Regression by Eye Applet

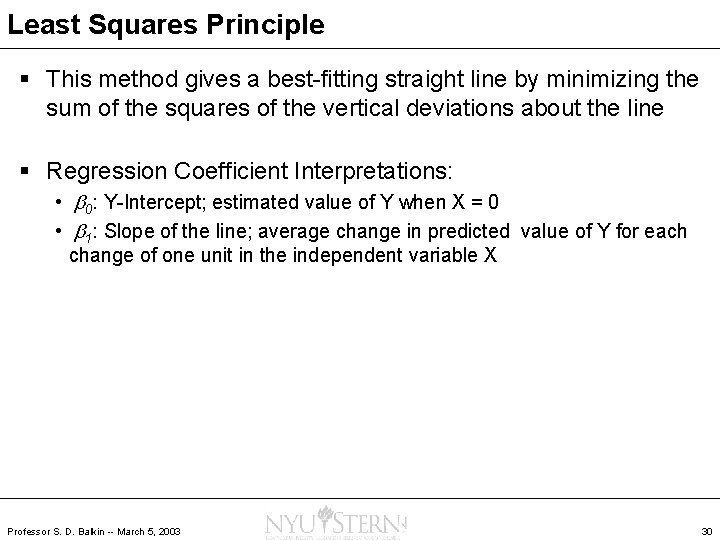

Least Squares Principle

- This method gives a best-fitting straight line by minimizing the sum of the squares of the vertical deviations about the line
- Regression Coefficient Interpretations:
  - b0: Y-Intercept; estimated value of Y when X = 0
  - b1: Slope of the line; average change in predicted value of Y for each change of one unit in the independent variable X
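
To make the principle concrete, the sketch below fits the least squares line to the motivating example data using the usual closed-form estimates b1 = Sxy / Sxx and b0 = ȳ − b1·x̄, then checks that nudging the slope away from the fitted value increases the sum of squared vertical deviations. The function names are illustrative.

```python
# Test scores (x) and weekly sales (y) from the motivating example table
score = [4, 7, 3, 6, 10]
sales = [5000, 12000, 4000, 8000, 11000]

def least_squares(x, y):
    """Return (b0, b1) minimizing the sum of squared vertical deviations."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    b1 = sxy / sxx
    b0 = mean_y - b1 * mean_x
    return b0, b1

def sum_squared_errors(x, y, b0, b1):
    """Sum of squared vertical deviations of the points from the line."""
    return sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))

b0, b1 = least_squares(score, sales)
print(f"predicted weekly sales = {b0:.1f} + {b1:.1f} * test score")

# Any other line through the data has a larger sum of squared errors
best = sum_squared_errors(score, sales, b0, b1)
other = sum_squared_errors(score, sales, b0, b1 + 50)
print(f"SSE at least squares line: {best:.0f}; with slope + 50: {other:.0f}")
```

In words, each additional point on the test is associated with roughly $1,133 more in predicted weekly sales for this sample.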

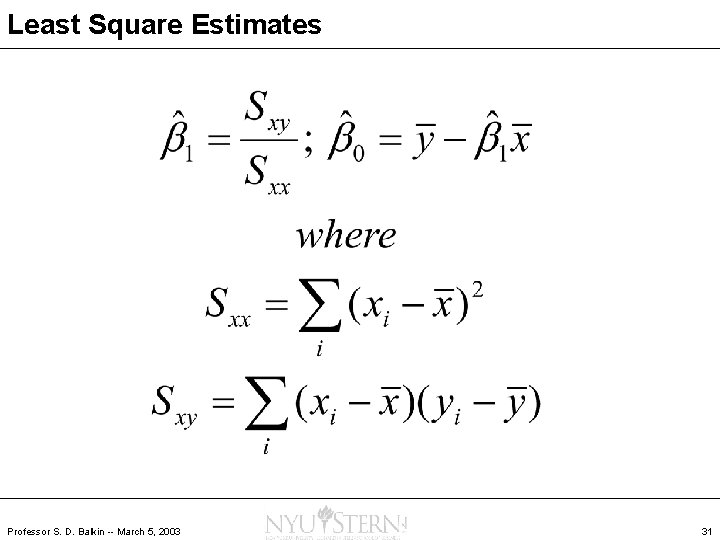

Least Squares Estimates

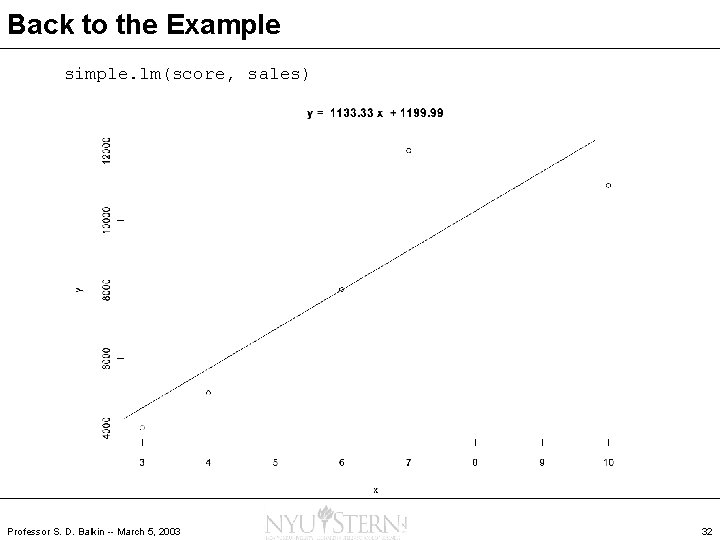

Back to the Example

simple.lm(score, sales)

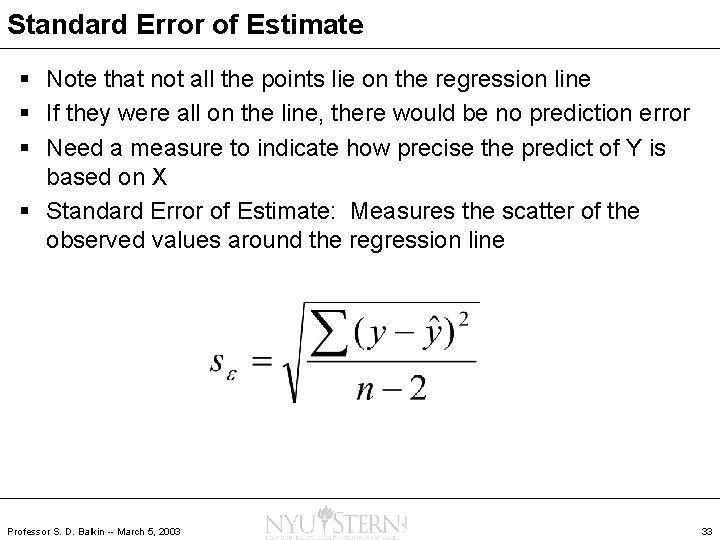

Standard Error of Estimate

- Note that not all the points lie on the regression line
- If they were all on the line, there would be no prediction error
- Need a measure to indicate how precise the prediction of Y is based on X
- Standard Error of Estimate: Measures the scatter of the observed values around the regression line
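
A minimal sketch of this calculation for the motivating example, assuming the usual definition s = sqrt(SSE / (n − 2)), where SSE is the sum of squared residuals and n − 2 reflects the two estimated coefficients:

```python
from math import sqrt

# Test scores (x) and weekly sales (y) from the motivating example table
score = [4, 7, 3, 6, 10]
sales = [5000, 12000, 4000, 8000, 11000]

n = len(score)
mean_x = sum(score) / n
mean_y = sum(sales) / n
sxx = sum((x - mean_x) ** 2 for x in score)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(score, sales))

# Least squares slope and intercept
b1 = sxy / sxx
b0 = mean_y - b1 * mean_x

# Residuals: observed value minus value predicted by the line
residuals = [y - (b0 + b1 * x) for x, y in zip(score, sales)]
sse = sum(e ** 2 for e in residuals)

# Standard error of estimate (residual standard error)
s = sqrt(sse / (n - 2))
print(f"standard error of estimate = {s:.0f} on {n - 2} degrees of freedom")
```

The value is in the units of the dependent variable, so here it describes typical scatter in dollars of weekly sales around the fitted line.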

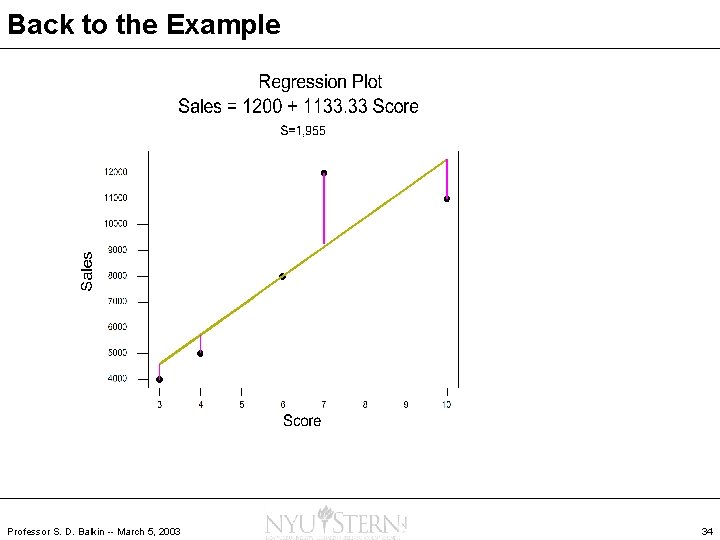

Back to the Example

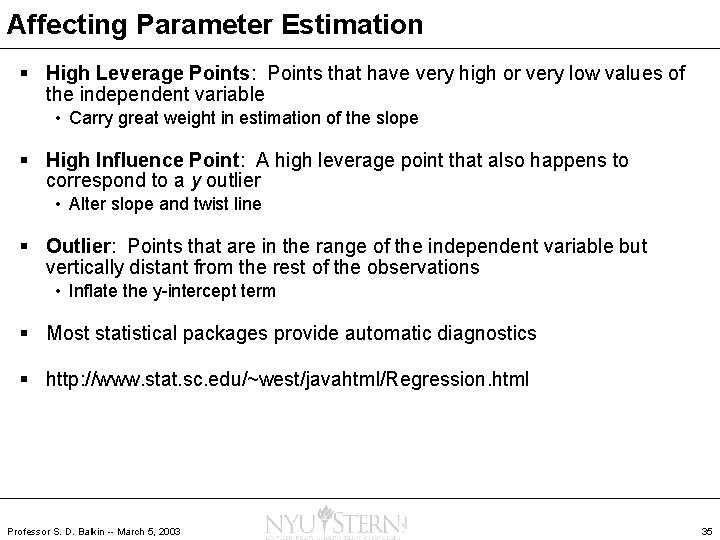

Affecting Parameter Estimation

- High Leverage Points: Points that have very high or very low values of the independent variable
  - Carry great weight in estimation of the slope
- High Influence Point: A high leverage point that also happens to correspond to a y outlier
  - Alter slope and twist line
- Outlier: Points that are in the range of the independent variable but vertically distant from the rest of the observations
  - Inflate the y-intercept term
- Most statistical packages provide automatic diagnostics
- http://www.stat.sc.edu/~west/javahtml/Regression.html
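
One common way to quantify leverage in simple regression is h_i = 1/n + (x_i − x̄)² / Sxx, which grows as an observation's x value moves away from the mean. The sketch below applies that formula to the motivating example; the formula is the standard one for a single predictor, and its use here is an illustration rather than the diagnostic shown on the slide.

```python
# Salesperson names and test scores from the motivating example table
names = ["John", "Brenda", "George", "Harry", "Amy"]
score = [4, 7, 3, 6, 10]

n = len(score)
mean_x = sum(score) / n
sxx = sum((x - mean_x) ** 2 for x in score)

# Leverage of each observation: depends only on the x values
leverage = {name: 1 / n + (x - mean_x) ** 2 / sxx
            for name, x in zip(names, score)}

for name, h in sorted(leverage.items(), key=lambda item: -item[1]):
    print(f"{name:7s} h = {h:.3f}")

highest = max(leverage, key=leverage.get)
print("highest leverage:", highest)
```

The leverages always sum to the number of estimated coefficients (two here), so a value far above the average of 2/n marks a point with unusual pull on the slope.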

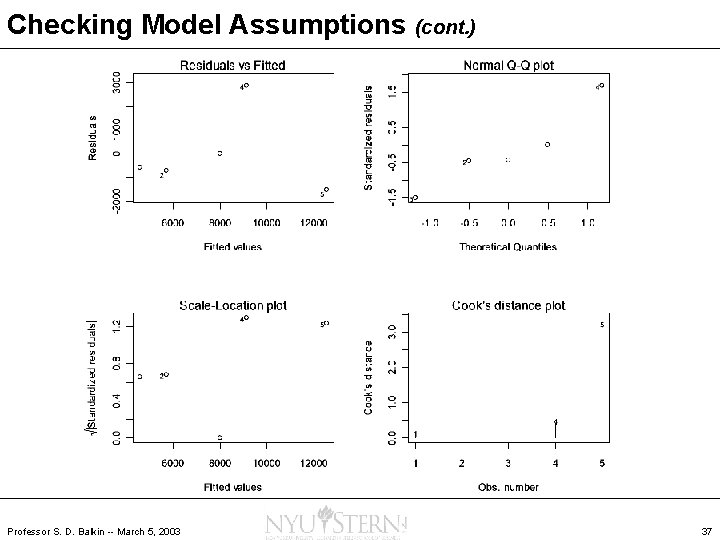

Checking Model Assumptions (cont.)

- Residuals vs. fitted: Look for trend in spread around y = 0
- Normal plot: Check if residuals are normally distributed
- Scale-Location: Tallest points are the largest residuals
- Cook’s Distance: Identifies influential points
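
As a rough numerical companion to these plots, the sketch below computes the residuals and Cook's distance for the motivating example. It assumes the standard formula D_i = e_i² · h_i / (p · s² · (1 − h_i)²) with p = 2 estimated coefficients; variable names are illustrative.

```python
# Data from the motivating example table
names = ["John", "Brenda", "George", "Harry", "Amy"]
score = [4, 7, 3, 6, 10]
sales = [5000, 12000, 4000, 8000, 11000]

n = len(score)
p = 2  # estimated coefficients: intercept and slope
mean_x = sum(score) / n
mean_y = sum(sales) / n
sxx = sum((x - mean_x) ** 2 for x in score)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(score, sales))
b1 = sxy / sxx
b0 = mean_y - b1 * mean_x

residuals = [y - (b0 + b1 * x) for x, y in zip(score, sales)]
s2 = sum(e ** 2 for e in residuals) / (n - p)      # residual variance
leverage = [1 / n + (x - mean_x) ** 2 / sxx for x in score]

# Cook's distance combines residual size with leverage
cooks = {name: e ** 2 * h / (p * s2 * (1 - h) ** 2)
         for name, e, h in zip(names, residuals, leverage)}

for name, e in zip(names, residuals):
    print(f"{name:7s} residual = {e:9.1f}  Cook's D = {cooks[name]:.3f}")

most_influential = max(cooks, key=cooks.get)
print("largest Cook's distance:", most_influential)
```

Note that the largest residual and the largest Cook's distance belong to different salespeople here: influence depends on leverage as well as on how far a point sits from the line.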

Checking Model Assumptions (cont.)

Inferences for Estimates

- The slope, intercept, and residual standard deviation in a simple regression model are estimates based on limited data
- Just like all other statistical quantities, they are affected by random error
- We can apply ideas of hypothesis tests and confidence intervals to the regression estimates

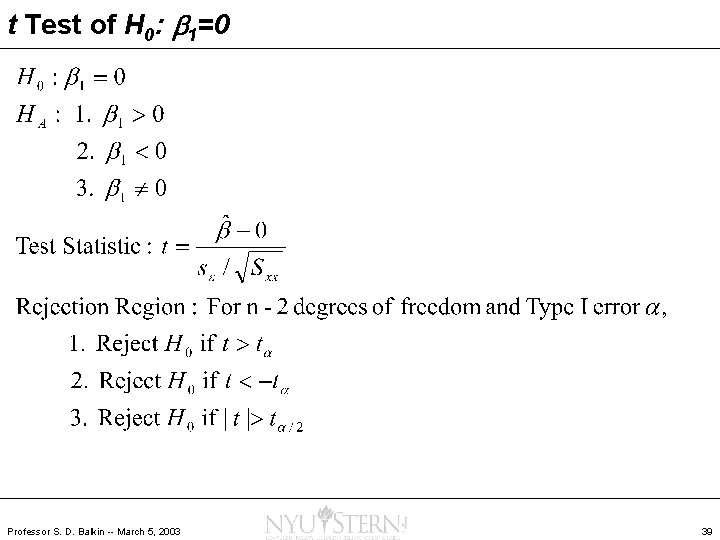

t Test of H0: b1 = 0

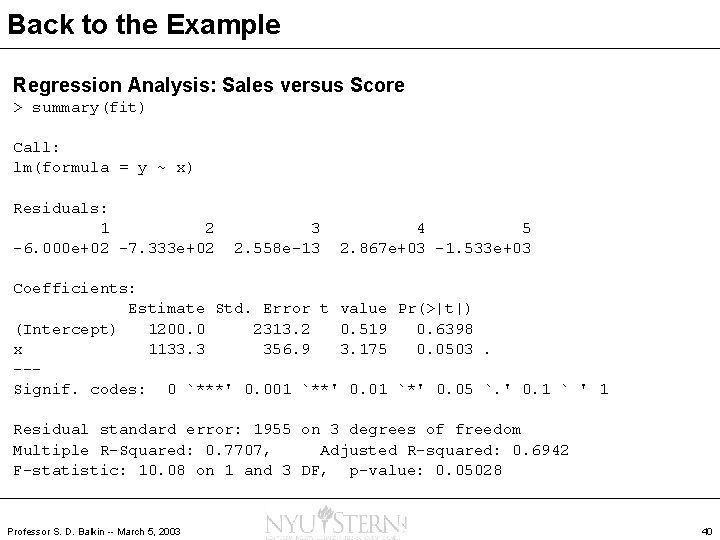

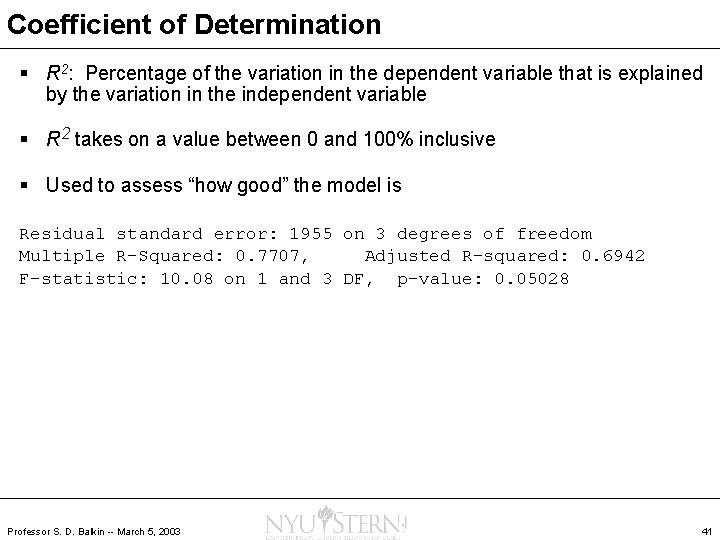

Back to the Example

Regression Analysis: Sales versus Score

    > summary(fit)

    Call:
    lm(formula = y ~ x)

    Residuals:
             1          2          3          4          5
    -6.000e+02 -7.333e+02  2.558e-13  2.867e+03 -1.533e+03

    Coefficients:
                Estimate Std. Error t value Pr(>|t|)
    (Intercept)   1200.0     2313.2   0.519   0.6398
    x             1133.3      356.9   3.175   0.0503 .
    ---
    Signif. codes:  0 `***' 0.001 `**' 0.01 `*' 0.05 `.' 0.1 ` ' 1

    Residual standard error: 1955 on 3 degrees of freedom
    Multiple R-Squared: 0.7707,     Adjusted R-squared: 0.6942
    F-statistic: 10.08 on 1 and 3 DF,  p-value: 0.05028
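
The standard errors and t values in the coefficient table can be reproduced from the data. This sketch assumes the usual simple regression formulas SE(b1) = s / sqrt(Sxx) and SE(b0) = s · sqrt(1/n + x̄² / Sxx), with each t value equal to the estimate divided by its standard error.

```python
from math import sqrt

# Test scores (x) and weekly sales (y) from the motivating example table
score = [4, 7, 3, 6, 10]
sales = [5000, 12000, 4000, 8000, 11000]

n = len(score)
mean_x = sum(score) / n
mean_y = sum(sales) / n
sxx = sum((x - mean_x) ** 2 for x in score)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(score, sales))

b1 = sxy / sxx
b0 = mean_y - b1 * mean_x

# Residual standard error on n - 2 degrees of freedom
sse = sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(score, sales))
s = sqrt(sse / (n - 2))

# Standard errors of the estimates
se_b1 = s / sqrt(sxx)
se_b0 = s * sqrt(1 / n + mean_x ** 2 / sxx)

# t statistics for H0: coefficient equals 0
t_b0 = b0 / se_b0
t_b1 = b1 / se_b1

print(f"(Intercept) {b0:8.1f} {se_b0:8.1f} {t_b0:6.3f}")
print(f"x           {b1:8.1f} {se_b1:8.1f} {t_b1:6.3f}")
```

The slope's t value is the same as the t statistic from the correlation test, which is why the two p-values agree.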

Coefficient of Determination

- R²: Percentage of the variation in the dependent variable that is explained by the variation in the independent variable
- R² takes on a value between 0 and 100% inclusive
- Used to assess “how good” the model is

    Residual standard error: 1955 on 3 degrees of freedom
    Multiple R-Squared: 0.7707,     Adjusted R-squared: 0.6942
    F-statistic: 10.08 on 1 and 3 DF,  p-value: 0.05028
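
These summary figures follow from splitting the total variation in sales into an explained and an unexplained part. The sketch below assumes the standard definitions R² = 1 − SSE/SST, adjusted R² = 1 − (1 − R²)(n − 1)/(n − 2) for one predictor, and F = (SSR/1) / (SSE/(n − 2)).

```python
# Test scores (x) and weekly sales (y) from the motivating example table
score = [4, 7, 3, 6, 10]
sales = [5000, 12000, 4000, 8000, 11000]

n = len(score)
mean_x = sum(score) / n
mean_y = sum(sales) / n
sxx = sum((x - mean_x) ** 2 for x in score)
sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(score, sales))
b1 = sxy / sxx
b0 = mean_y - b1 * mean_x

sst = sum((y - mean_y) ** 2 for y in sales)                         # total variation
sse = sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(score, sales))   # unexplained
ssr = sst - sse                                                     # explained

r_squared = 1 - sse / sst
adj_r_squared = 1 - (1 - r_squared) * (n - 1) / (n - 2)
f_statistic = (ssr / 1) / (sse / (n - 2))

print(f"R-squared = {r_squared:.4f}")
print(f"Adjusted R-squared = {adj_r_squared:.4f}")
print(f"F-statistic = {f_statistic:.2f} on 1 and {n - 2} DF")
```

With a single predictor, R² is the square of the correlation coefficient and F is the square of the slope's t value.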

Example

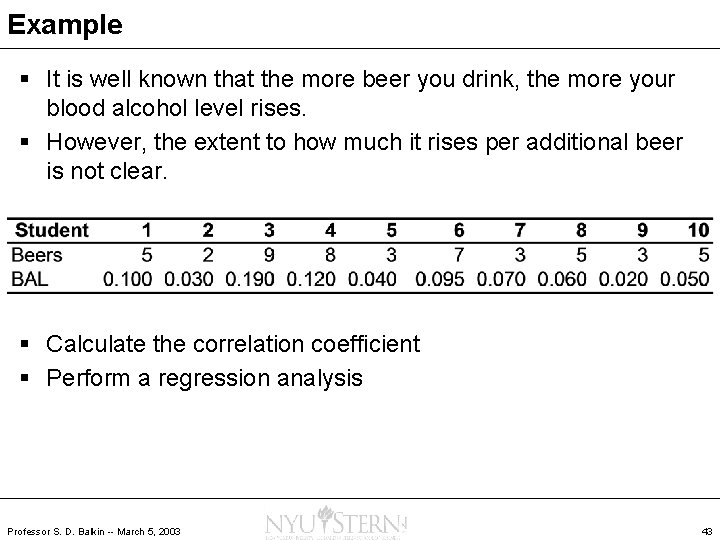

Example

- It is well known that the more beer you drink, the more your blood alcohol level rises
- However, how much it rises per additional beer is not clear
- Calculate the correlation coefficient
- Perform a regression analysis

Homework

- Hildebrand/Ott
  - 11.10, 11.11, 11.12, 11.26, 11.44, 11.45, 11.46, 11.47
  - Read Chapter 12
- Verzani
