Statistics (cont.) Psych 231: Research Methods in Psychology


Announcements

- Journal Summary 2 assignment
  - Due in class this week (Wednesday, Nov. 13th)
- Group projects
  - Plan to have your analyses done before Thanksgiving break. GAs will be available during lab times to help.
  - Poster sessions are the last lab sections of the semester (last week of classes), so start thinking about your posters. I will lecture about poster presentations on Monday (a week from today).

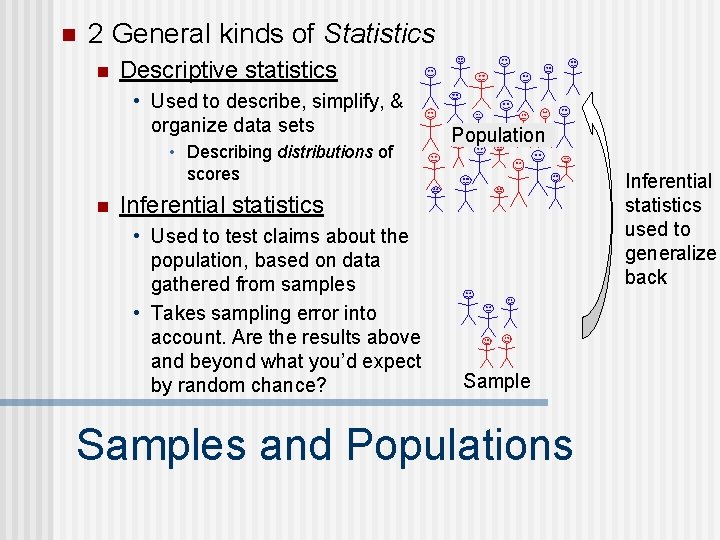

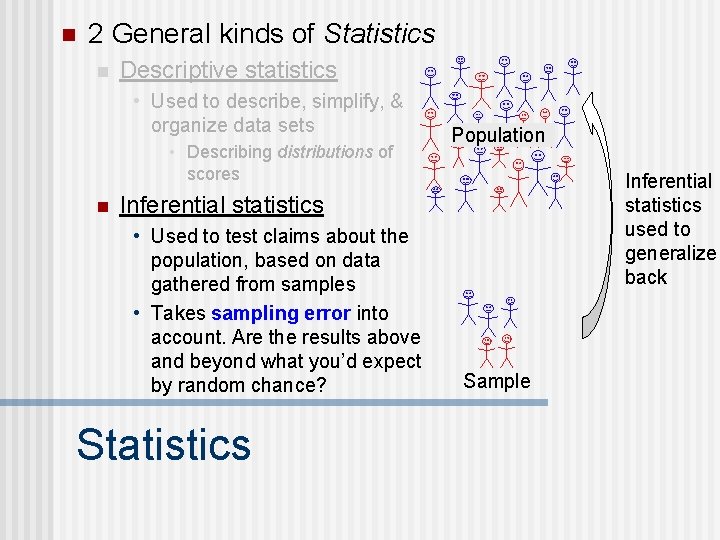

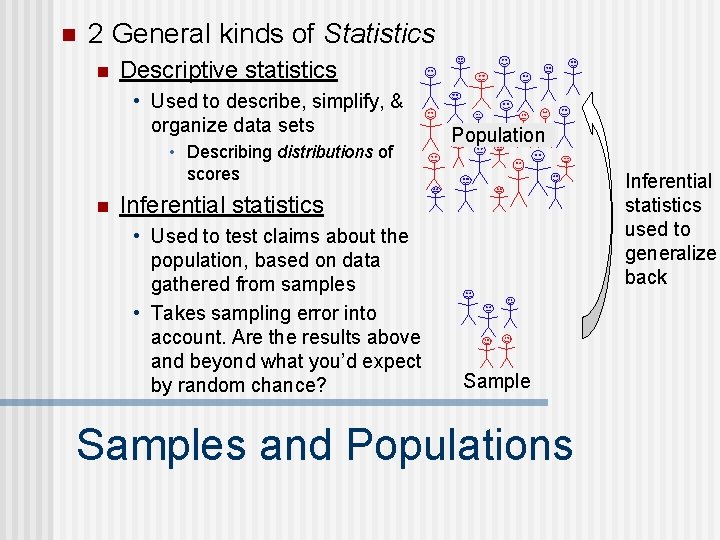

Samples and Populations

- 2 general kinds of statistics
  - Descriptive statistics
    - Used to describe, simplify, and organize data sets
    - Describing distributions of scores
  - Inferential statistics
    - Used to test claims about the population, based on data gathered from samples
    - Takes sampling error into account: are the results above and beyond what you'd expect by random chance?
- Diagram: Population and Sample; inferential statistics are used to generalize back from the sample to the population

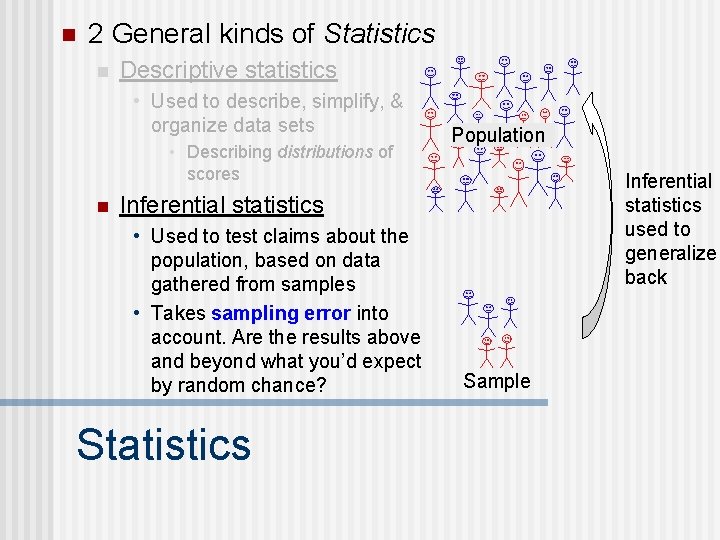


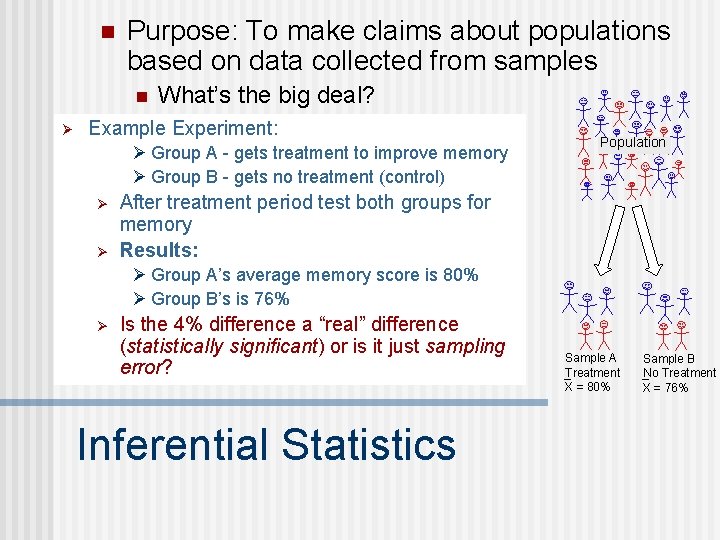

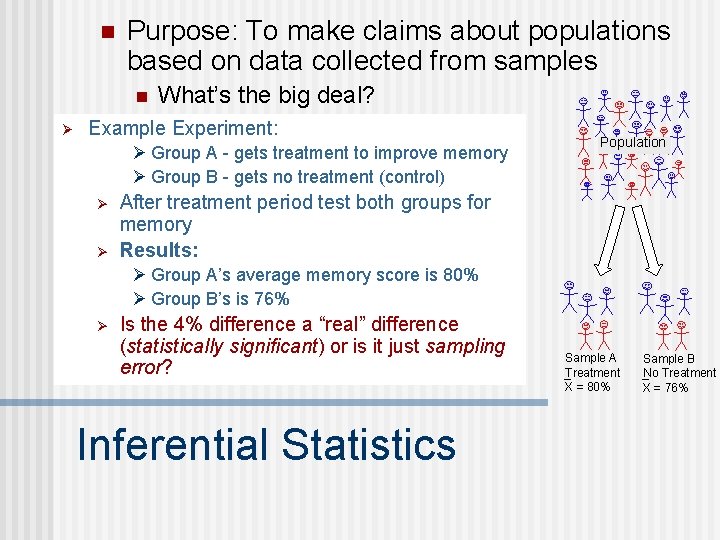

Inferential Statistics

- Purpose: to make claims about populations based on data collected from samples
- What's the big deal?
- Example experiment:
  - Group A gets a treatment to improve memory
  - Group B gets no treatment (control)
  - After the treatment period, test both groups for memory
- Results:
  - Group A's average memory score is 80%
  - Group B's is 76%
- Is the 4% difference a "real" difference (statistically significant), or is it just sampling error?
- Diagram: Population → Sample A (treatment, X̄ = 80%) and Sample B (no treatment, X̄ = 76%)
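To get a feel for the question on this slide, here is a minimal simulation sketch. The numbers are assumptions for illustration, not values from the lecture: memory scores are taken to be normally distributed with a mean of 78 and a standard deviation of 10, with 25 participants per group. Both groups are drawn from the same population, so any difference between their means is pure sampling error.

```python
import random
import statistics


def simulate_null_differences(n_per_group=25, reps=2000, mu=78.0, sigma=10.0, seed=231):
    """Differences between two sample means when there is NO treatment effect."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    diffs = []
    for _ in range(reps):
        # Both samples come from the same (hypothetical) population.
        group_a = [rng.gauss(mu, sigma) for _ in range(n_per_group)]
        group_b = [rng.gauss(mu, sigma) for _ in range(n_per_group)]
        diffs.append(statistics.mean(group_a) - statistics.mean(group_b))
    return diffs


diffs = simulate_null_differences()
# How often does chance alone produce a gap of 4 points or more (either direction)?
share_big = sum(abs(d) >= 4.0 for d in diffs) / len(diffs)
print(f"Share of chance-only differences of 4+ points: {share_big:.3f}")
```

Under these assumed settings, a 4-point gap shows up by chance alone in a noticeable share of simulated experiments, which is why the raw difference cannot be taken at face value.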

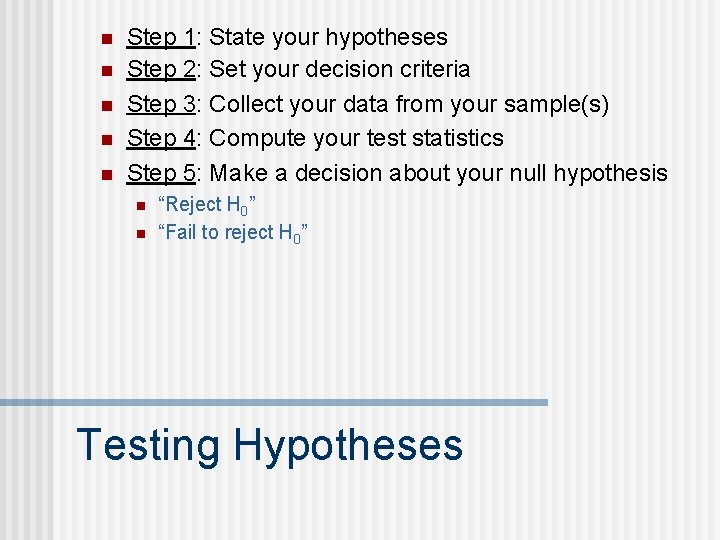

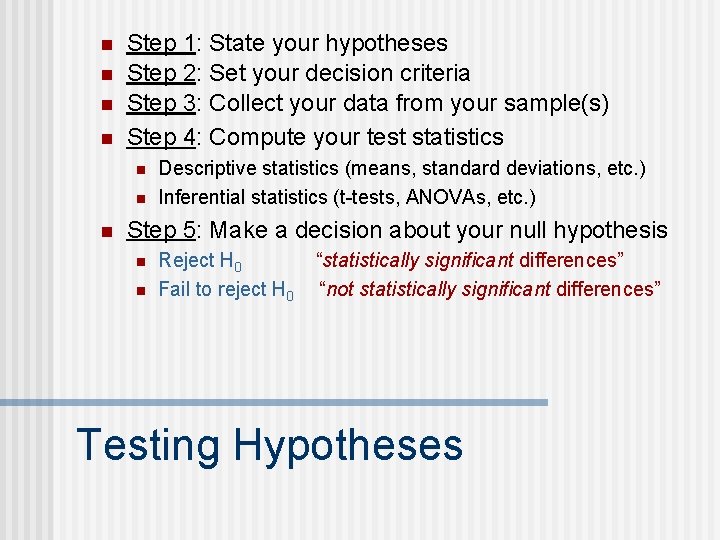

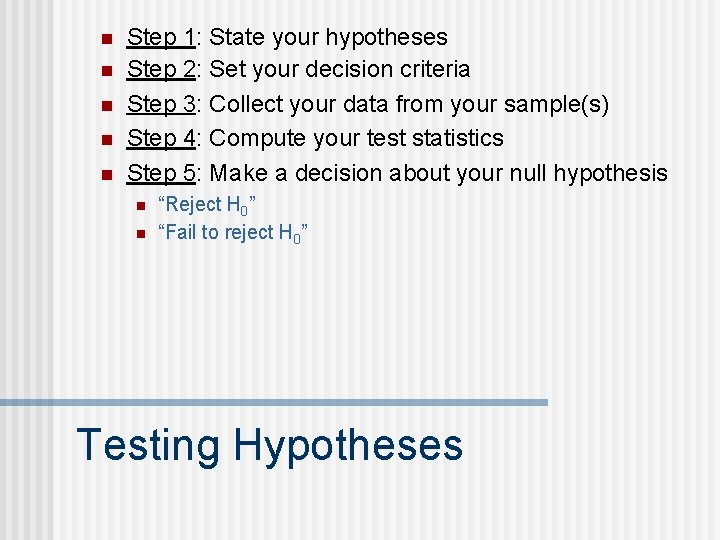

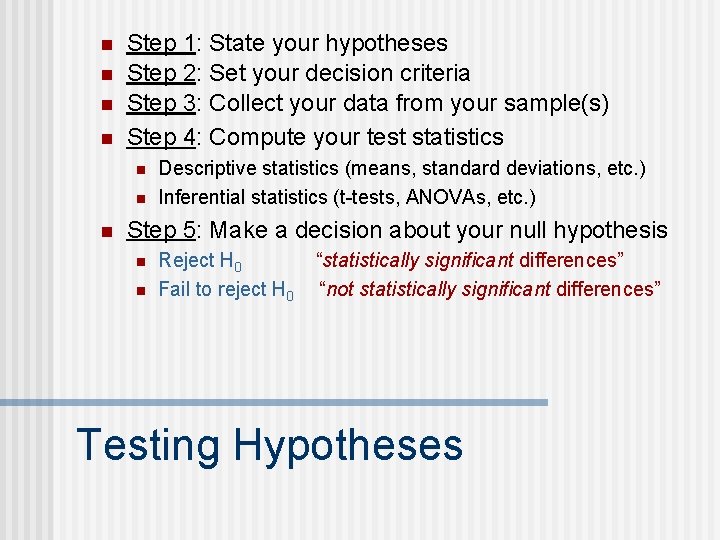

Testing Hypotheses

- Step 1: State your hypotheses
- Step 2: Set your decision criteria
- Step 3: Collect your data from your sample(s)
- Step 4: Compute your test statistics
- Step 5: Make a decision about your null hypothesis
  - "Reject H0"
  - "Fail to reject H0"

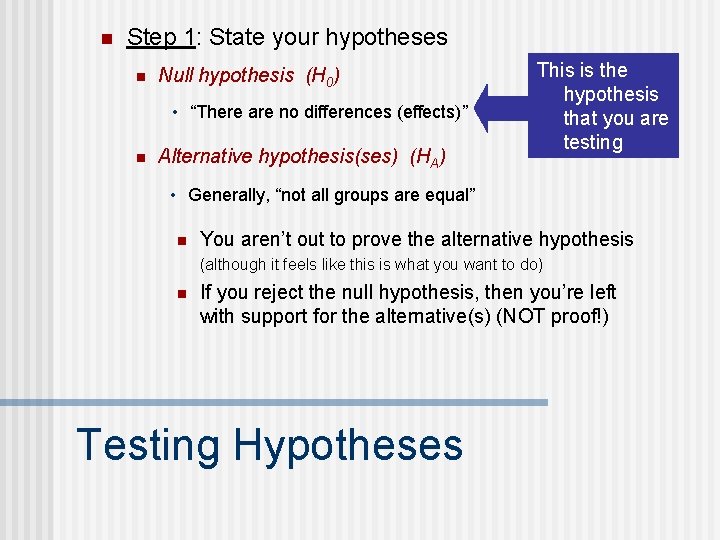

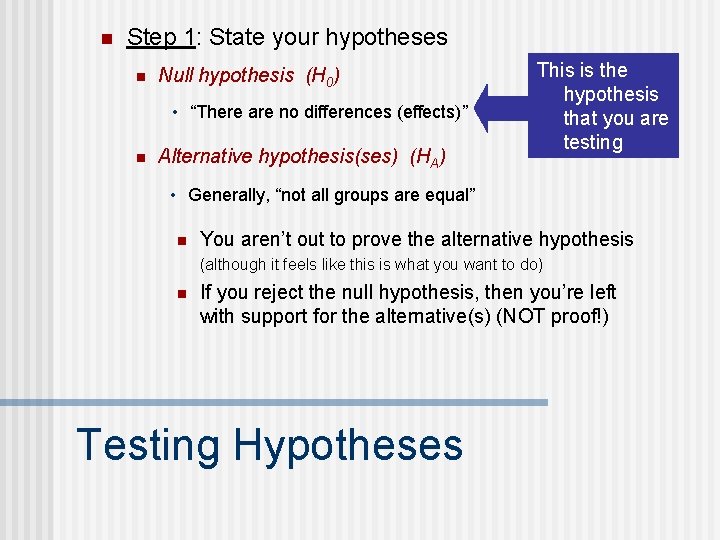

Testing Hypotheses

- Step 1: State your hypotheses
  - Null hypothesis (H0): this is the hypothesis that you are testing
    - "There are no differences (effects)"
  - Alternative hypothesis(es) (HA)
    - Generally, "not all groups are equal"
- You aren't out to prove the alternative hypothesis (although it feels like this is what you want to do)
- If you reject the null hypothesis, then you're left with support for the alternative(s) (NOT proof!)

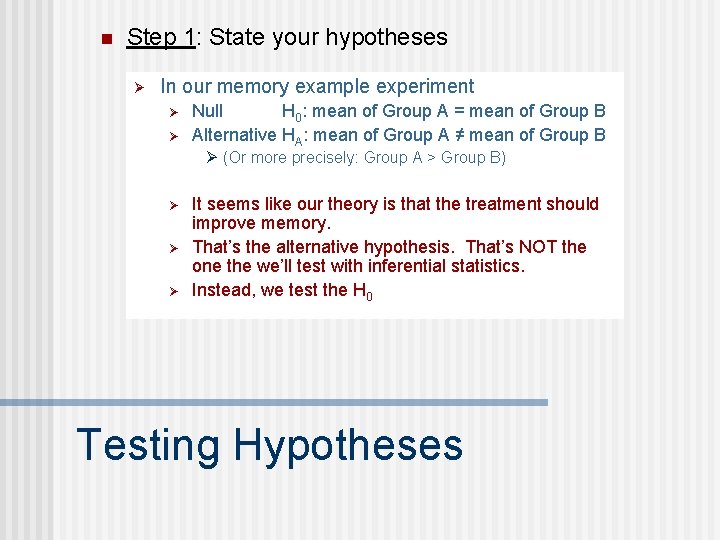

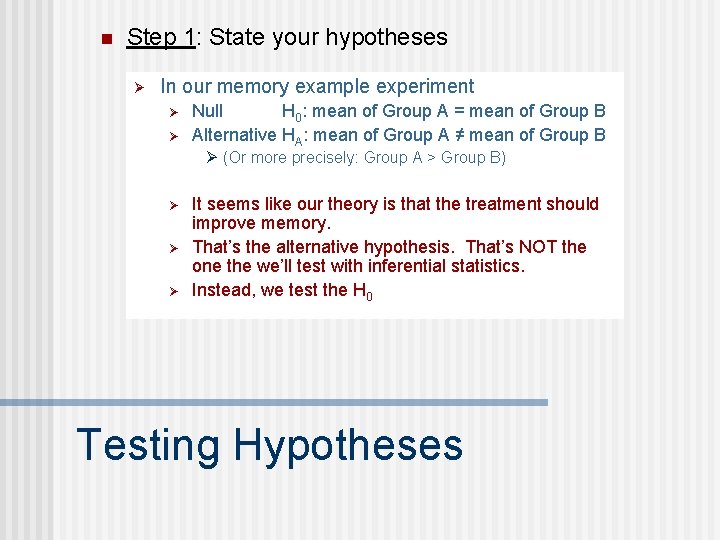

Testing Hypotheses

- Step 1: State your hypotheses
  - In our memory example experiment:
    - Null, H0: mean of Group A = mean of Group B
    - Alternative, HA: mean of Group A ≠ mean of Group B (or more precisely: Group A > Group B)
  - It seems like our theory is that the treatment should improve memory. That's the alternative hypothesis.
  - That's NOT the one that we'll test with inferential statistics. Instead, we test the H0.

Testing Hypotheses

- Step 1: State your hypotheses
- Step 2: Set your decision criteria
  - Your alpha level will be your guide for when to:
    - "reject the null hypothesis"
    - "fail to reject the null hypothesis"
  - Either decision could be the correct conclusion or the incorrect conclusion
    - Two different ways to go wrong:
    - Type I error: saying that there is a difference when there really isn't one (the probability of making this error is the "alpha level")
    - Type II error: saying that there is not a difference when there really is one

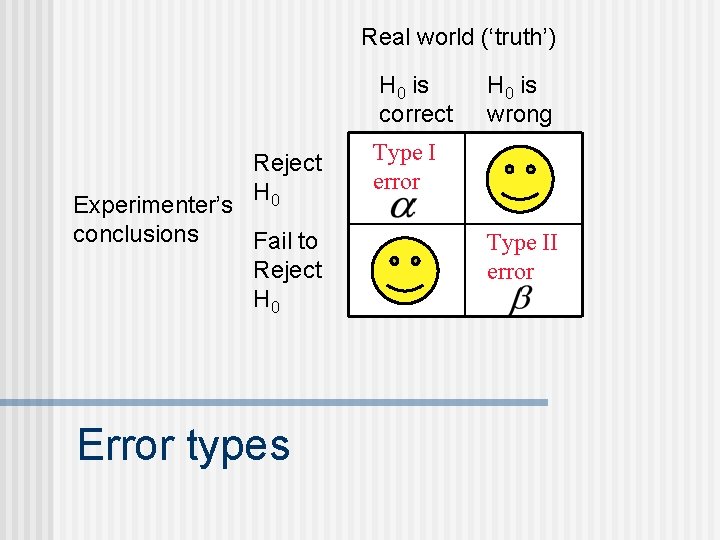

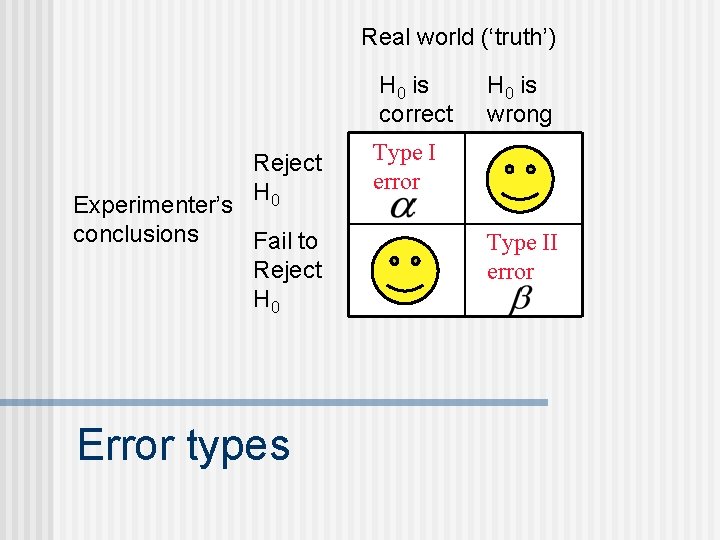

Error types

| Experimenter's conclusions | Real world ("truth"): H0 is correct | Real world ("truth"): H0 is wrong |
|---|---|---|
| Reject H0 | Type I error | Correct conclusion |
| Fail to reject H0 | Correct conclusion | Type II error |

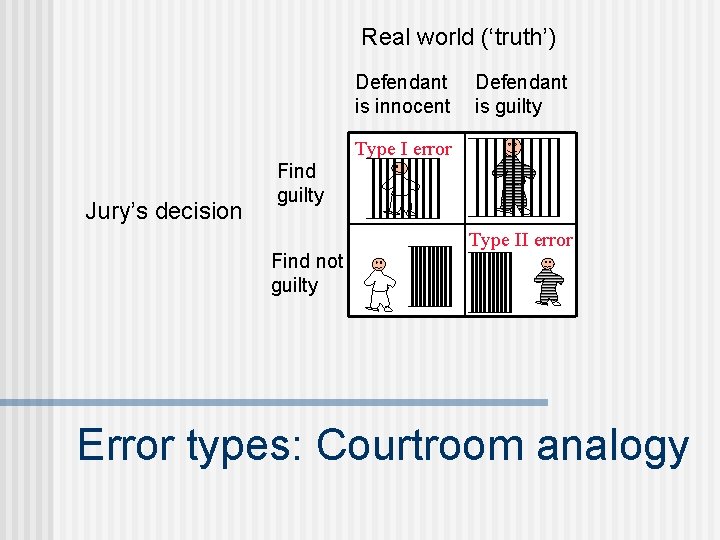

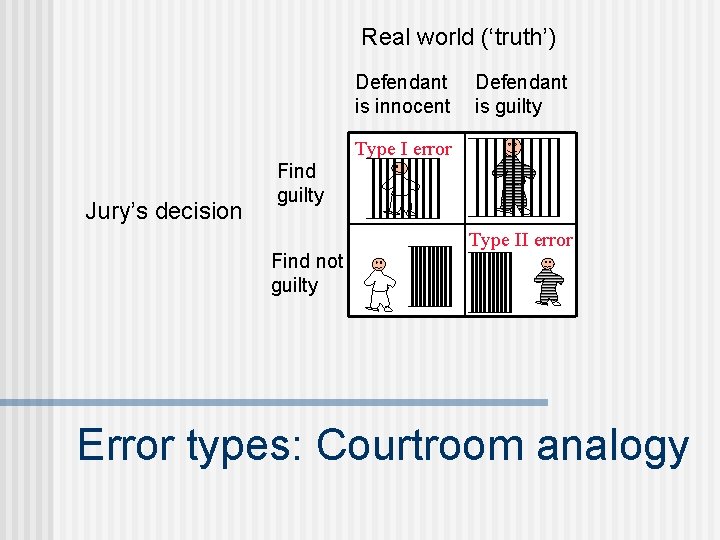

Error types: Courtroom analogy

| Jury's decision | Real world ("truth"): defendant is innocent | Real world ("truth"): defendant is guilty |
|---|---|---|
| Find guilty | Type I error | Correct conclusion |
| Find not guilty | Correct conclusion | Type II error |

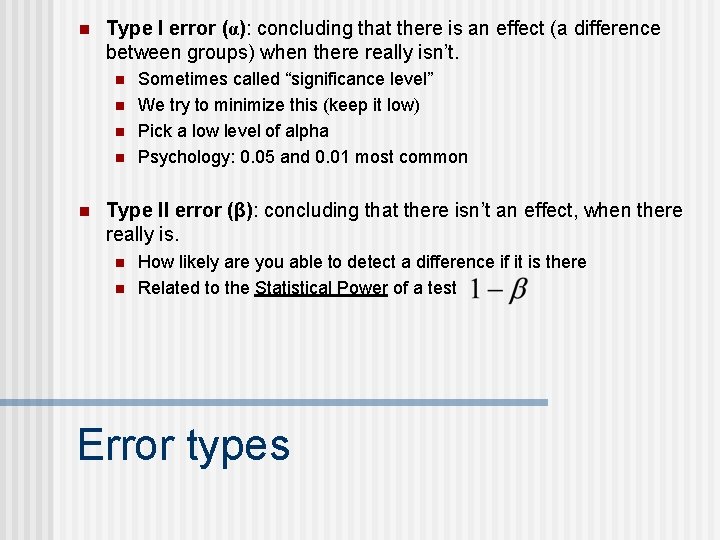

Error types

- Type I error (α): concluding that there is an effect (a difference between groups) when there really isn't
  - α, the probability of this error, is sometimes called the "significance level"
  - We try to minimize this (keep it low)
  - Pick a low level of alpha
  - Psychology: 0.05 and 0.01 are most common
- Type II error (β): concluding that there isn't an effect when there really is
  - How likely are you to be able to detect a difference if it is there?
  - Related to the statistical power of a test (power = 1 - β)
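The two error rates can be estimated by simulation. The sketch below is an illustration under stated assumptions, not the course's procedure: it uses a simple z-test with a known population standard deviation (10), 25 participants per group, a control mean of 76, and a true treatment effect of either 0 or 4 points. All of these values are hypothetical.

```python
import random
from statistics import NormalDist, mean

ALPHA = 0.05
SIGMA = 10.0      # assumed known population SD (hypothetical)
N_PER_GROUP = 25  # hypothetical group size
CRITICAL_Z = NormalDist().inv_cdf(1 - ALPHA / 2)  # two-tailed cutoff, about 1.96


def rejection_rate(true_difference, reps=4000, seed=231):
    """Share of simulated experiments in which H0 is rejected."""
    rng = random.Random(seed)
    standard_error = SIGMA * (2 / N_PER_GROUP) ** 0.5
    rejections = 0
    for _ in range(reps):
        group_a = [rng.gauss(76 + true_difference, SIGMA) for _ in range(N_PER_GROUP)]
        group_b = [rng.gauss(76, SIGMA) for _ in range(N_PER_GROUP)]
        z = (mean(group_a) - mean(group_b)) / standard_error
        if abs(z) > CRITICAL_Z:
            rejections += 1
    return rejections / reps


# H0 is true: every rejection is a Type I error.
type_1_rate = rejection_rate(true_difference=0.0)
# H0 is false: every failure to reject is a Type II error.
type_2_rate = 1 - rejection_rate(true_difference=4.0)
print(f"Estimated Type I rate:  {type_1_rate:.3f}")
print(f"Estimated Type II rate: {type_2_rate:.3f}")
```

The Type I rate should land close to the chosen alpha, while the Type II rate depends on the effect size, the sample size, and the variability.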

Testing Hypotheses

- Step 1: State your hypotheses
- Step 2: Set your decision criteria
- Step 3: Collect your data from your sample(s)
- Step 4: Compute your test statistics
  - Descriptive statistics (means, standard deviations, etc.)
  - Inferential statistics (t-tests, ANOVAs, etc.)
- Step 5: Make a decision about your null hypothesis
  - Reject H0: "statistically significant differences"
  - Fail to reject H0: "not statistically significant differences"

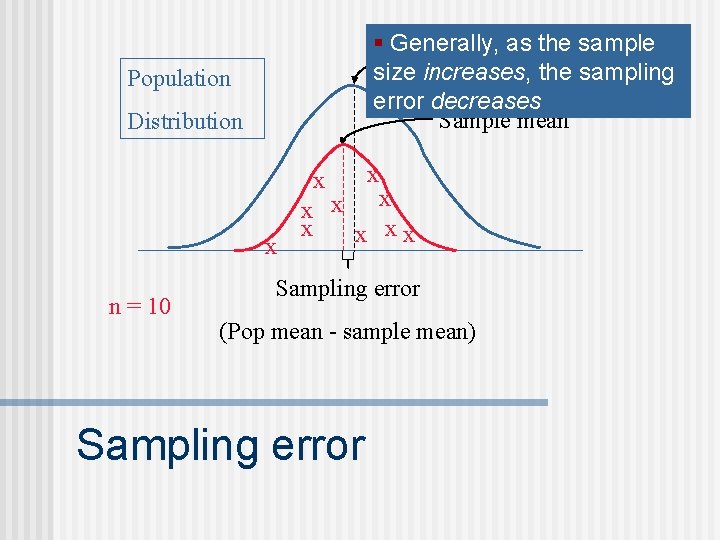

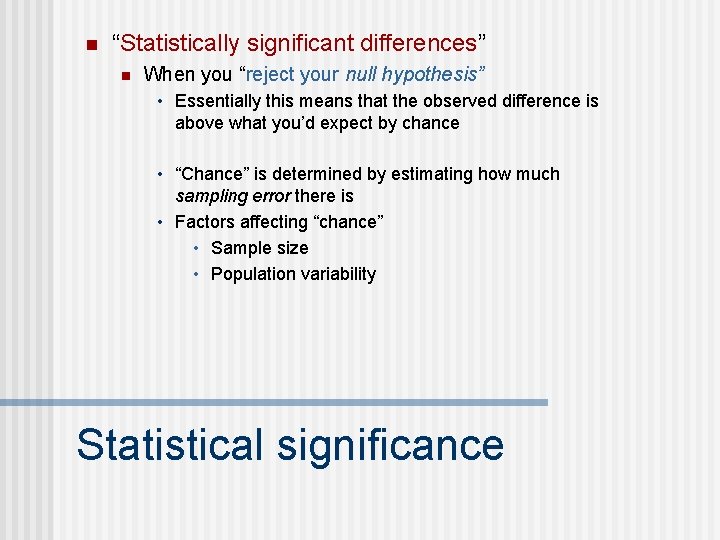

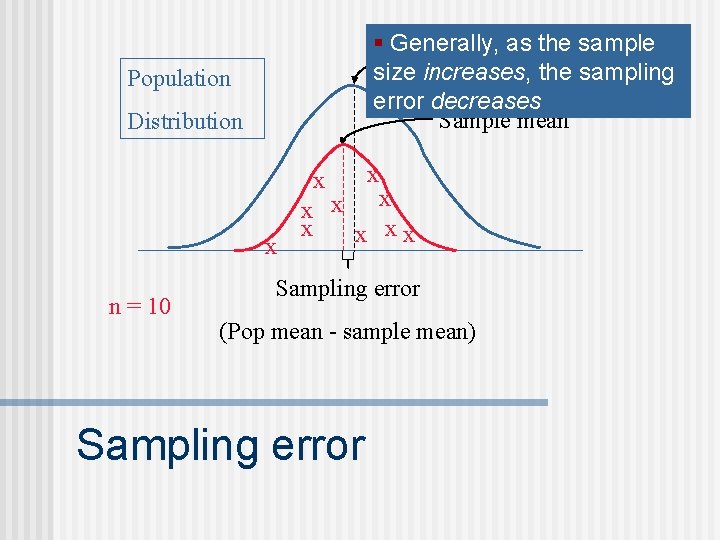

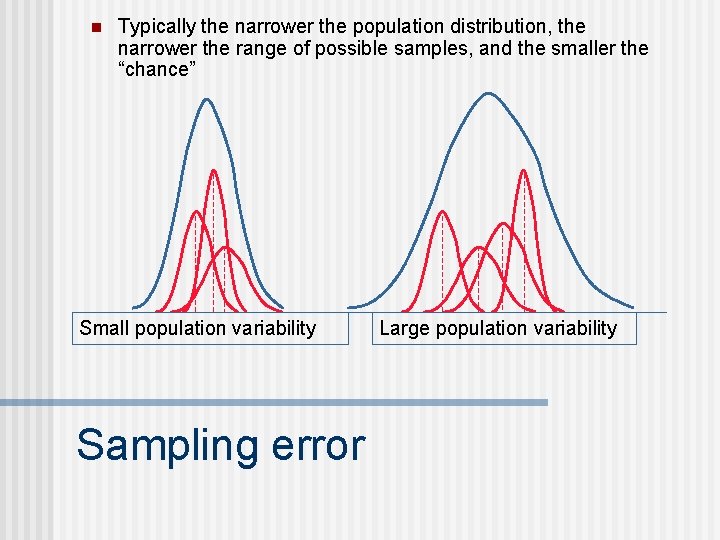

Statistical significance

- "Statistically significant differences"
  - When you "reject your null hypothesis"
    - Essentially this means that the observed difference is above what you'd expect by chance
    - "Chance" is determined by estimating how much sampling error there is
    - Factors affecting "chance":
      - Sample size
      - Population variability

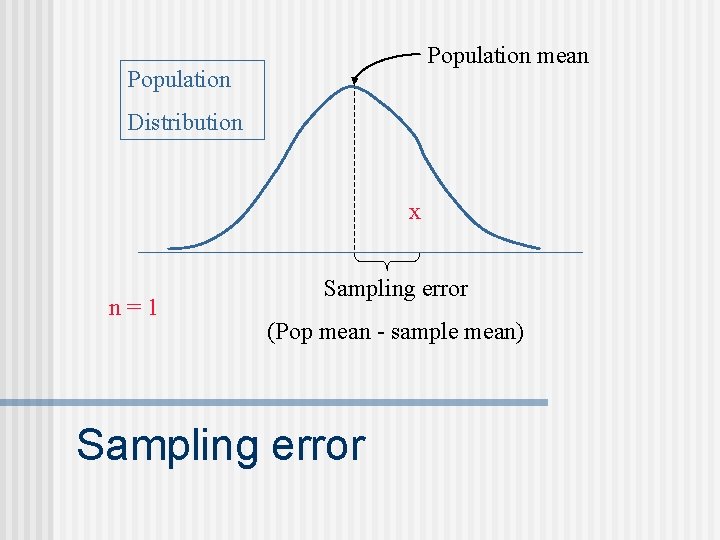

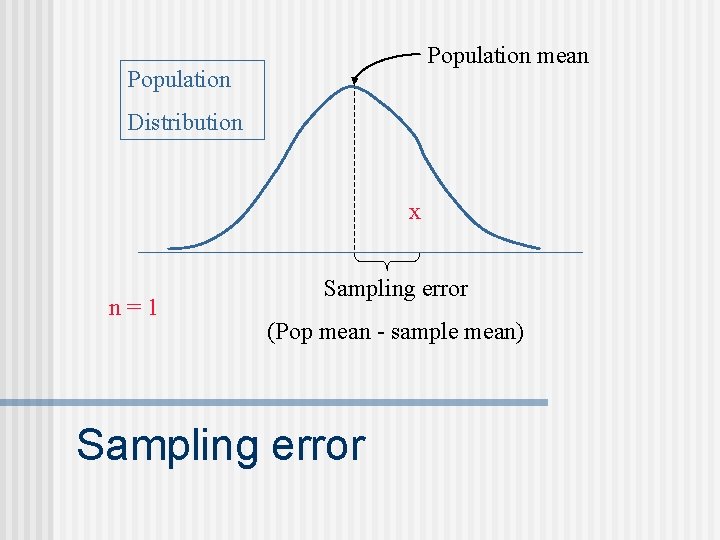

Sampling error

- Diagram: population distribution with the population mean marked and a sample of n = 1 (a single score, x); sampling error = population mean - sample mean

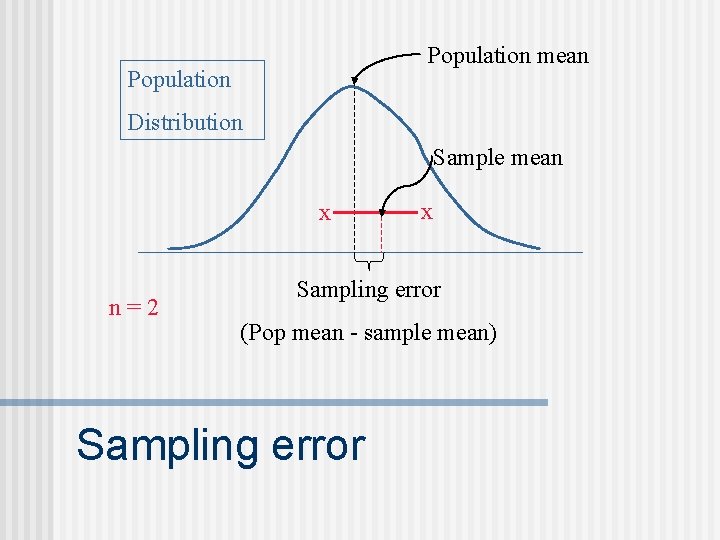

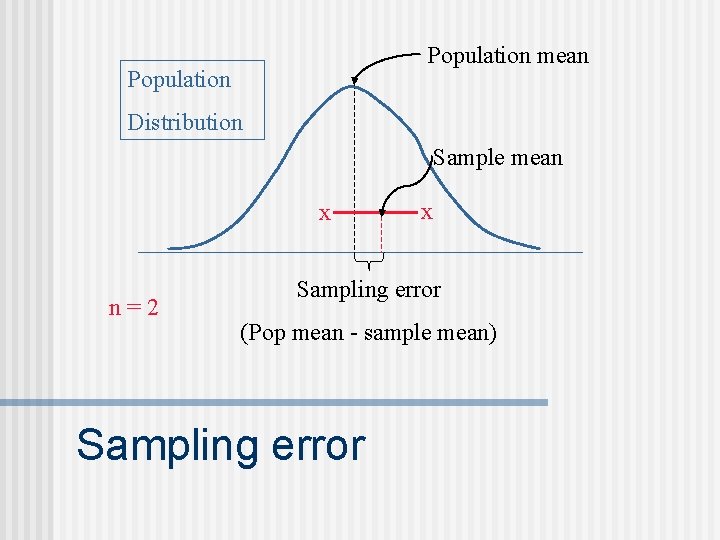

Sampling error

- Diagram: population distribution with the population mean, a sample of n = 2 scores (x), and the sample mean; sampling error = population mean - sample mean

Sampling error

- Generally, as the sample size increases, the sampling error decreases
- Diagram: population distribution with the population mean, a sample of n = 10 scores (x), and the sample mean; sampling error = population mean - sample mean

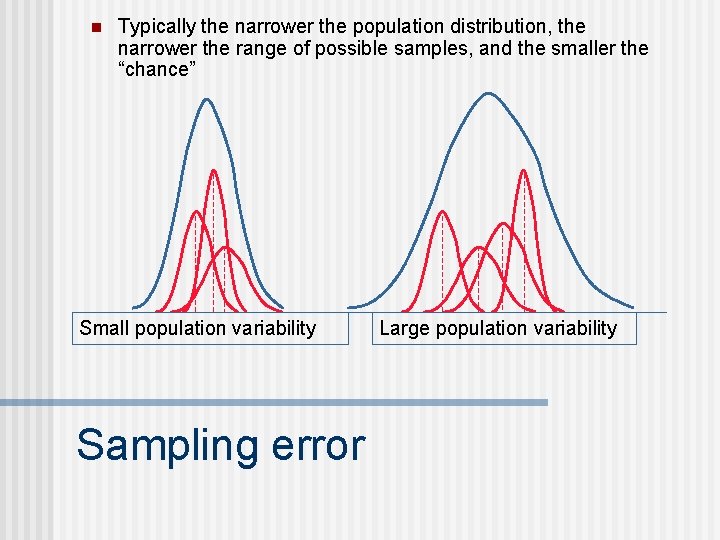

Sampling error

- Typically, the narrower the population distribution, the narrower the range of possible samples, and the smaller the "chance"
- Diagram: small population variability vs. large population variability
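Both factors, sample size and population variability, can be checked with a short simulation. This is only a sketch: the normal population, its mean of 50, and the standard deviations used below are assumptions chosen for illustration.

```python
import random
from statistics import mean


def average_sampling_error(n, sigma, mu=50.0, reps=5000, seed=231):
    """Average absolute gap between the population mean and a sample mean."""
    rng = random.Random(seed)
    gaps = []
    for _ in range(reps):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        gaps.append(abs(mu - mean(sample)))
    return mean(gaps)


# Factor 1: sample size (population SD held at 10).
errors_by_n = {n: average_sampling_error(n, sigma=10.0) for n in (1, 2, 10)}
# Factor 2: population variability (sample size held at 10).
errors_by_sigma = {sigma: average_sampling_error(10, sigma) for sigma in (5.0, 20.0)}

for n, err in errors_by_n.items():
    print(f"n = {n:>2}: average sampling error = {err:.2f}")
for sigma, err in errors_by_sigma.items():
    print(f"SD = {sigma:>4}: average sampling error = {err:.2f}")
```

The average sampling error should shrink as n grows and grow as the population becomes more variable.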

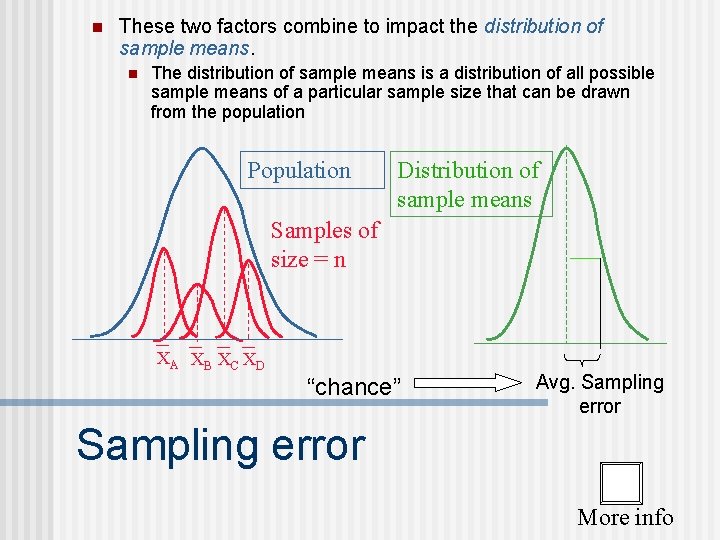

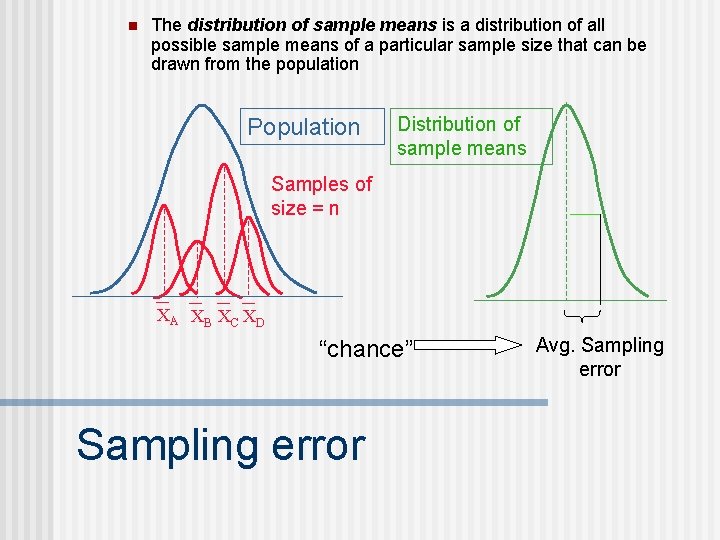

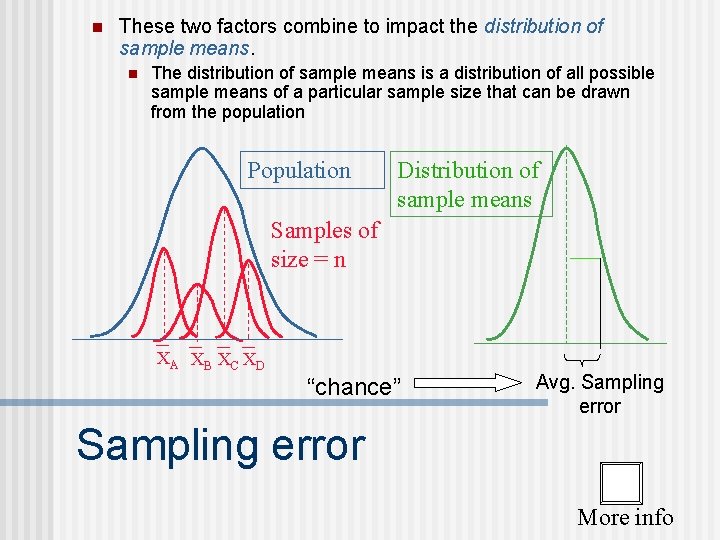

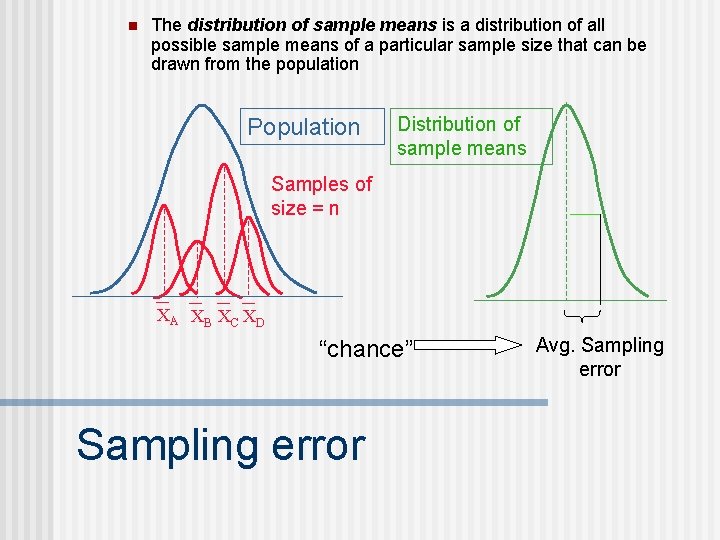

Sampling error

- These two factors combine to impact the distribution of sample means.
- The distribution of sample means is a distribution of all possible sample means of a particular sample size that can be drawn from the population
- Diagram: Population → samples of size n (X̄A, X̄B, X̄C, X̄D) → distribution of sample means; its spread reflects the average sampling error ("chance")
- More info: see the distribution of sample means review slides at the end

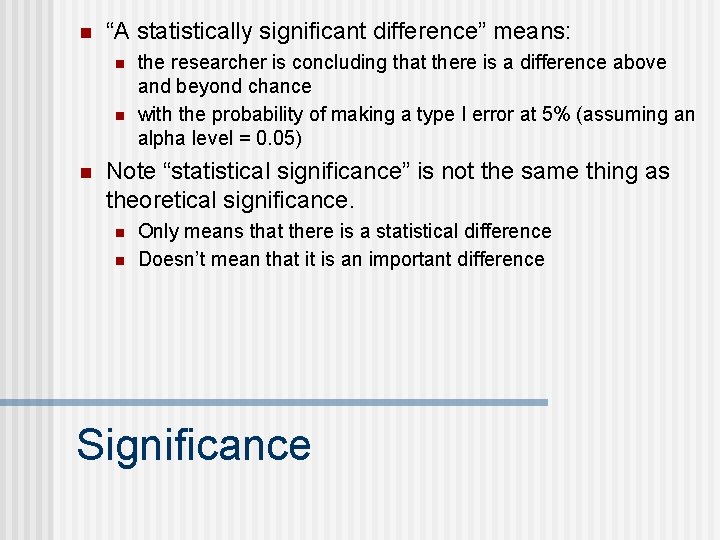

Significance

- "A statistically significant difference" means:
  - the researcher is concluding that there is a difference above and beyond chance
  - with the probability of making a Type I error at 5% (assuming an alpha level = 0.05)
- Note: "statistical significance" is not the same thing as theoretical significance
  - It only means that there is a statistical difference
  - It doesn't mean that it is an important difference

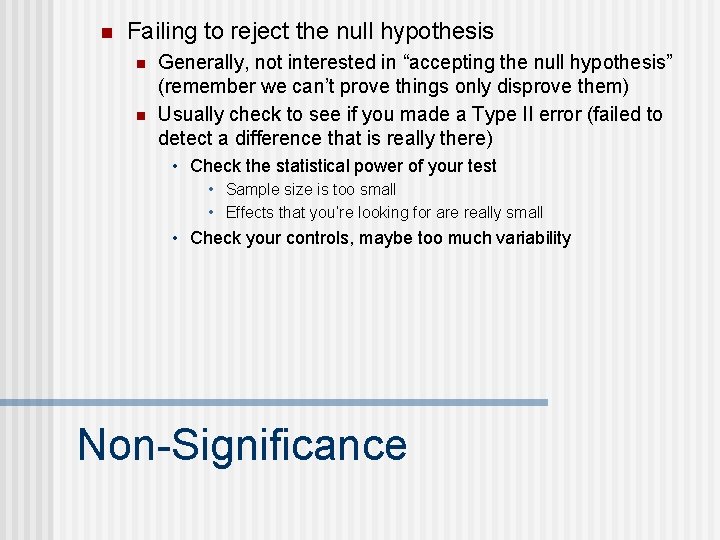

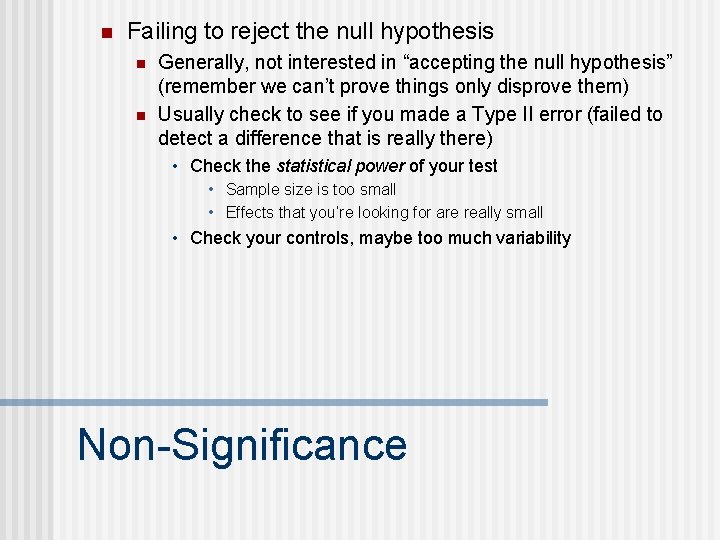

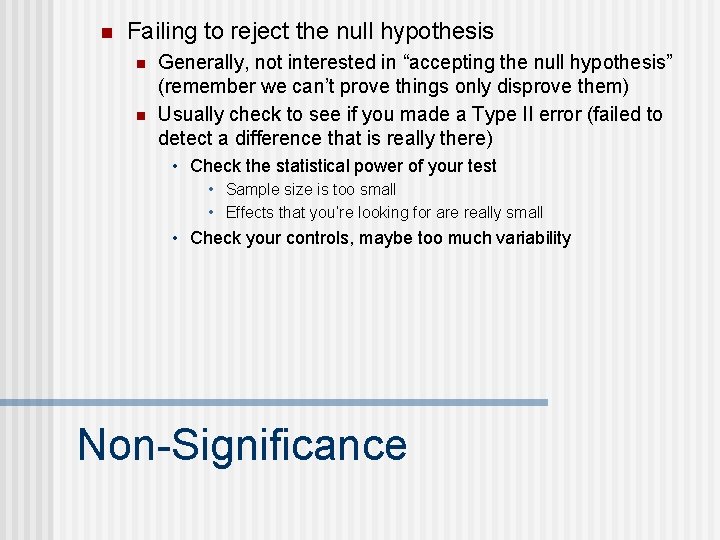

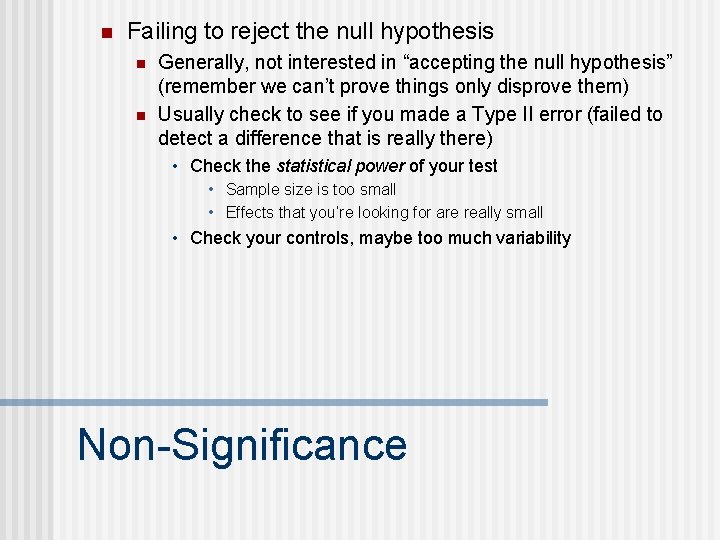

Non-Significance

- Failing to reject the null hypothesis
  - Generally, we're not interested in "accepting the null hypothesis" (remember, we can't prove things, only disprove them)
  - Usually check to see if you made a Type II error (failed to detect a difference that is really there)
    - Check the statistical power of your test
      - Sample size is too small
      - Effects that you're looking for are really small
    - Check your controls; maybe there is too much variability

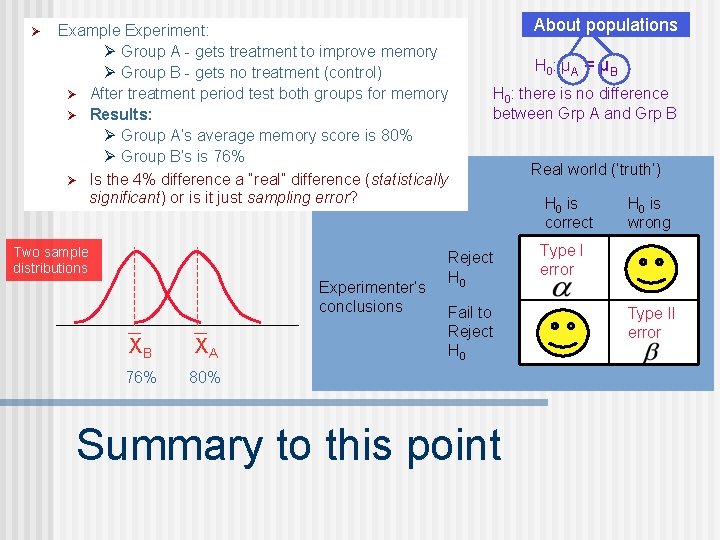

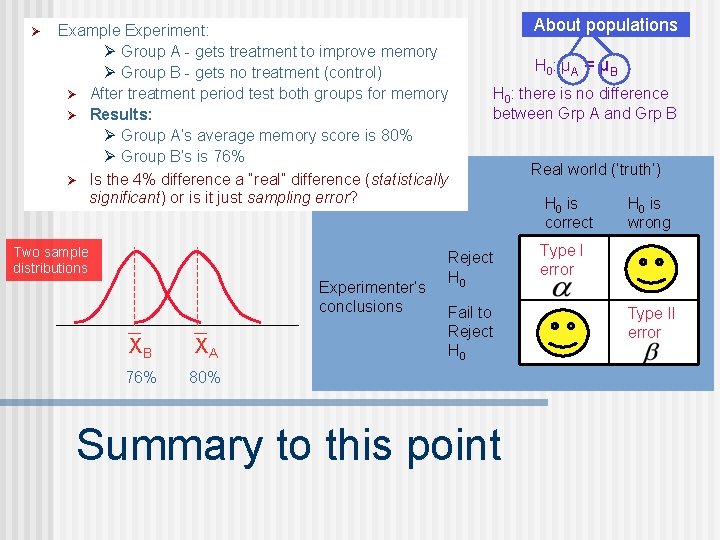

Summary to this point

- Example experiment:
  - Group A gets a treatment to improve memory
  - Group B gets no treatment (control)
  - After the treatment period, test both groups for memory
- Results:
  - Group A's average memory score is 80%
  - Group B's is 76%
- Is the 4% difference a "real" difference (statistically significant), or is it just sampling error?
- Diagram: two sample distributions, X̄B = 76% and X̄A = 80%
- About populations: H0: μA = μB (there is no difference between Grp A and Grp B)

| Experimenter's conclusions | Real world ("truth"): H0 is correct | Real world ("truth"): H0 is wrong |
|---|---|---|
| Reject H0 | Type I error | Correct conclusion |
| Fail to reject H0 | Correct conclusion | Type II error |

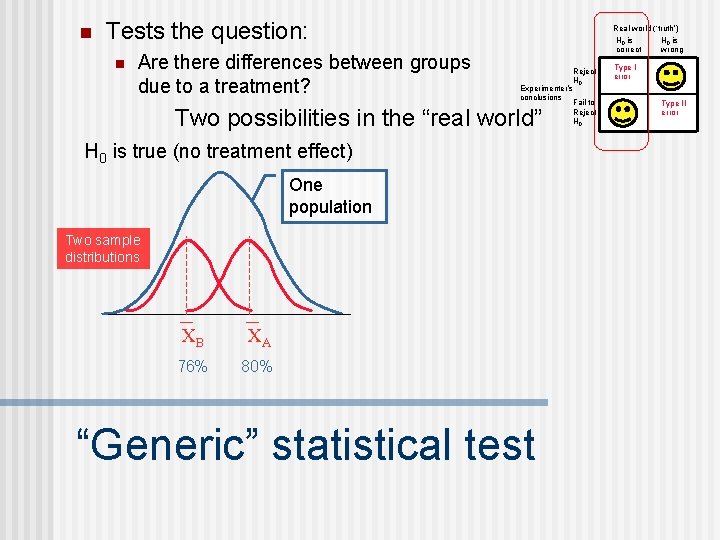

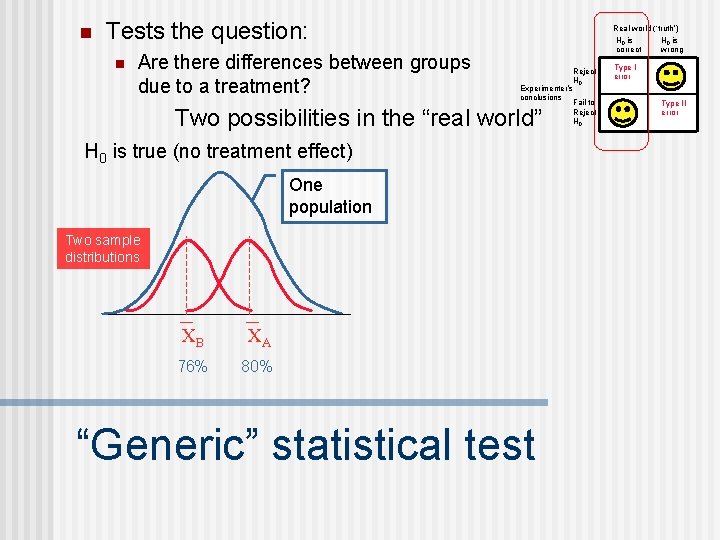

"Generic" statistical test

- Tests the question: are there differences between groups due to a treatment?
- Two possibilities in the "real world":
  - H0 is true (no treatment effect): one population, two sample distributions (X̄B = 76%, X̄A = 80%)

| Experimenter's conclusions | Real world ("truth"): H0 is correct | Real world ("truth"): H0 is wrong |
|---|---|---|
| Reject H0 | Type I error | Correct conclusion |
| Fail to reject H0 | Correct conclusion | Type II error |

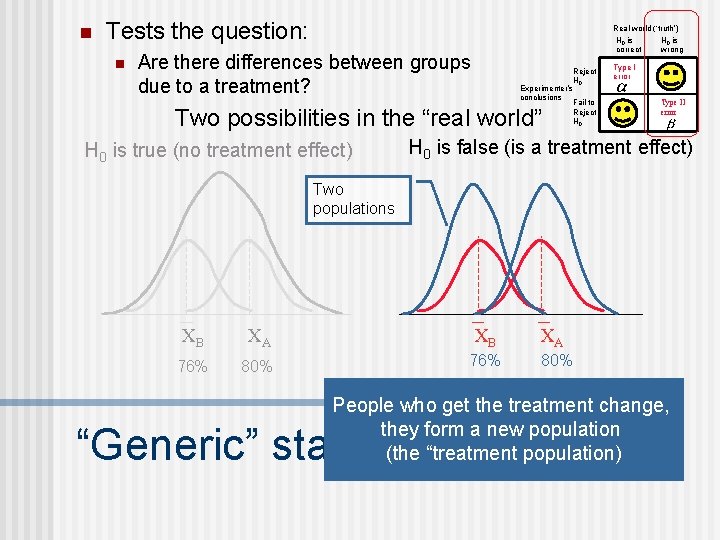

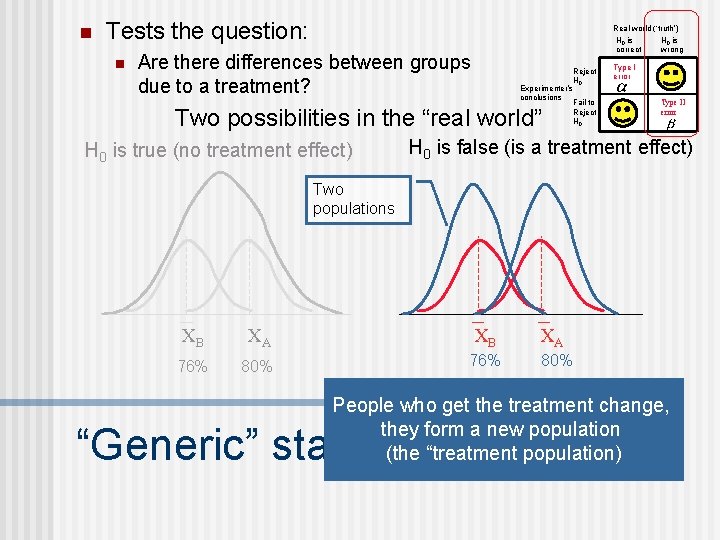

"Generic" statistical test

- Tests the question: are there differences between groups due to a treatment?
- Two possibilities in the "real world":
  - H0 is true (no treatment effect)
  - H0 is false (there is a treatment effect): two populations (X̄B = 76%, X̄A = 80%)
    - People who get the treatment change; they form a new population (the "treatment population")

| Experimenter's conclusions | Real world ("truth"): H0 is correct | Real world ("truth"): H0 is wrong |
|---|---|---|
| Reject H0 | Type I error | Correct conclusion |
| Fail to reject H0 | Correct conclusion | Type II error |

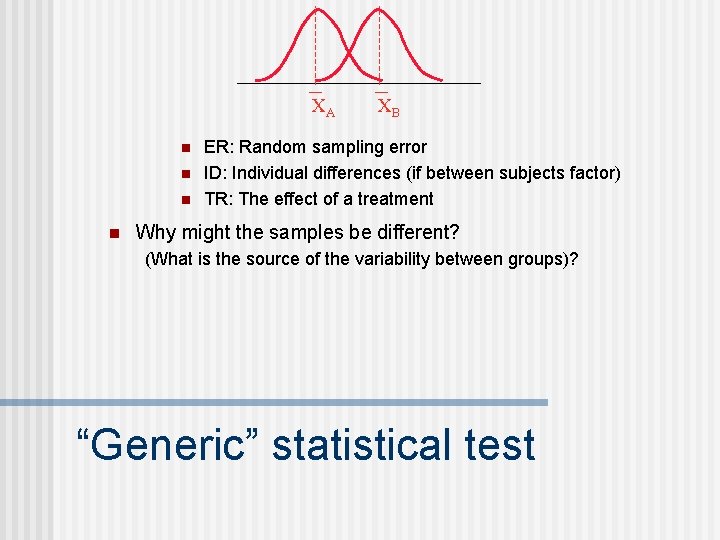

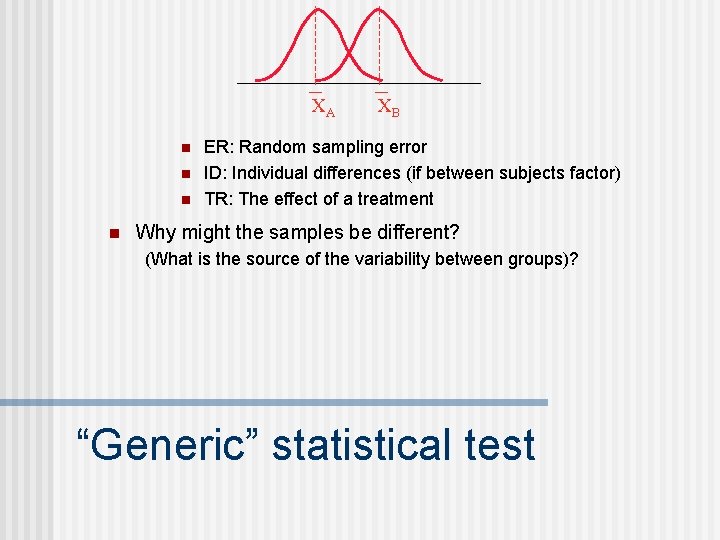

"Generic" statistical test

- Why might the samples (X̄A and X̄B) be different? (What is the source of the variability between groups?)
  - ER: Random sampling error
  - ID: Individual differences (if between-subjects factor)
  - TR: The effect of a treatment

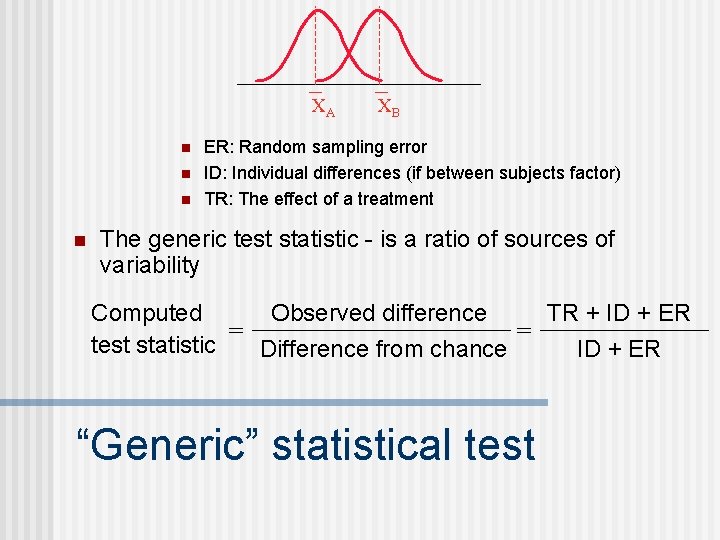

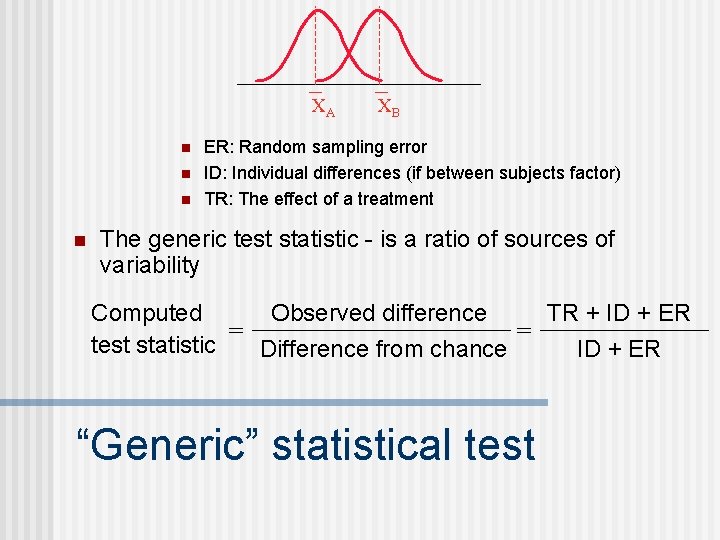

"Generic" statistical test

- Sources of the difference between X̄A and X̄B:
  - ER: Random sampling error
  - ID: Individual differences (if between-subjects factor)
  - TR: The effect of a treatment
- The generic test statistic is a ratio of sources of variability:

$$\text{Computed test statistic} = \frac{\text{Observed difference}}{\text{Difference from chance}} = \frac{TR + ID + ER}{ID + ER}$$

Sampling error

- The distribution of sample means is a distribution of all possible sample means of a particular sample size that can be drawn from the population
- Diagram: Population → samples of size n (X̄A, X̄B, X̄C, X̄D) → distribution of sample means; its spread reflects the average sampling error ("chance")

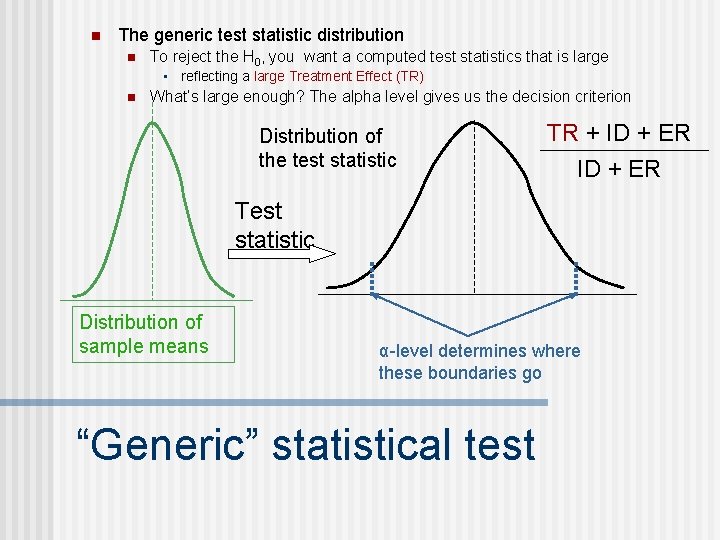

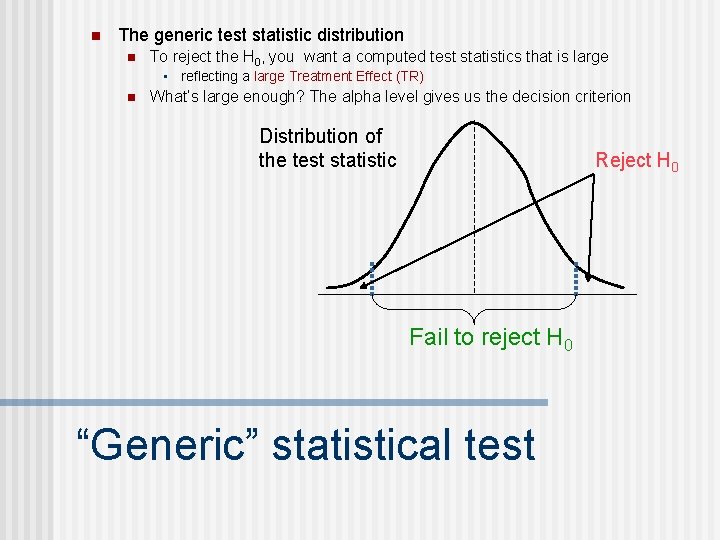

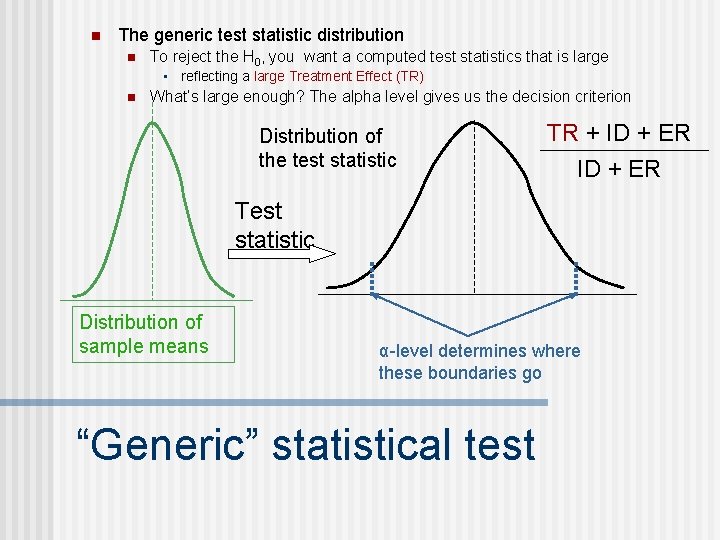

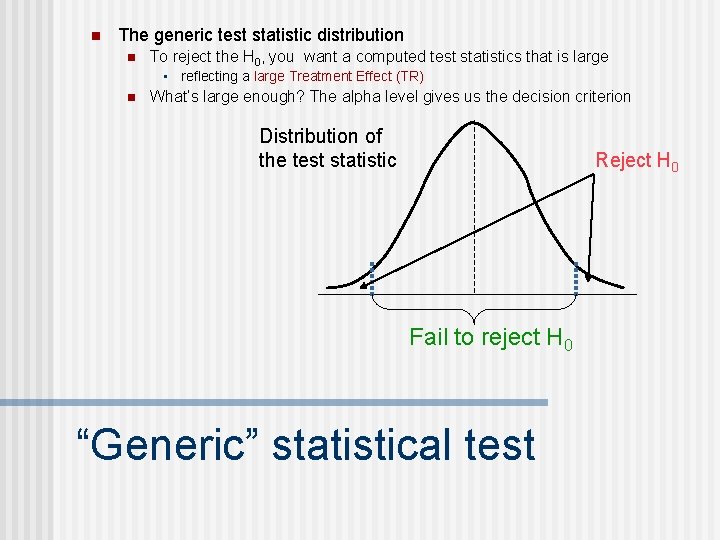

"Generic" statistical test

- The generic test statistic distribution
  - To reject the H0, you want a computed test statistic that is large
    - reflecting a large treatment effect (TR)
  - What's large enough? The alpha level gives us the decision criterion
- Diagram: the distribution of sample means is converted into the distribution of the test statistic; the α-level determines where the rejection boundaries go

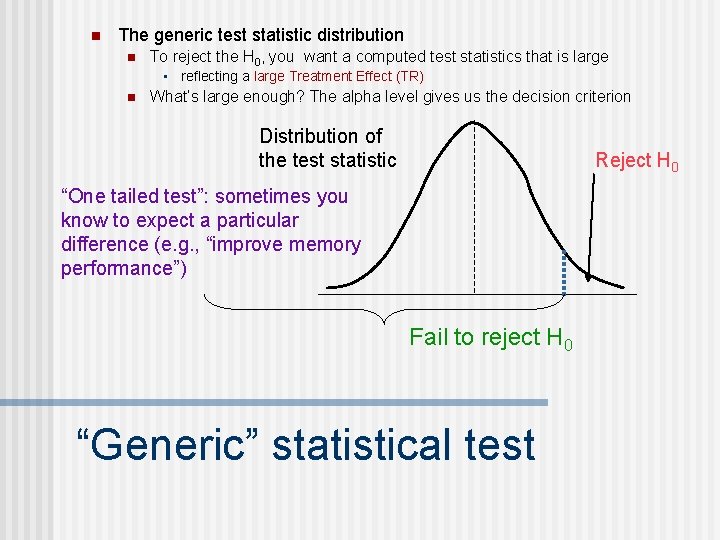


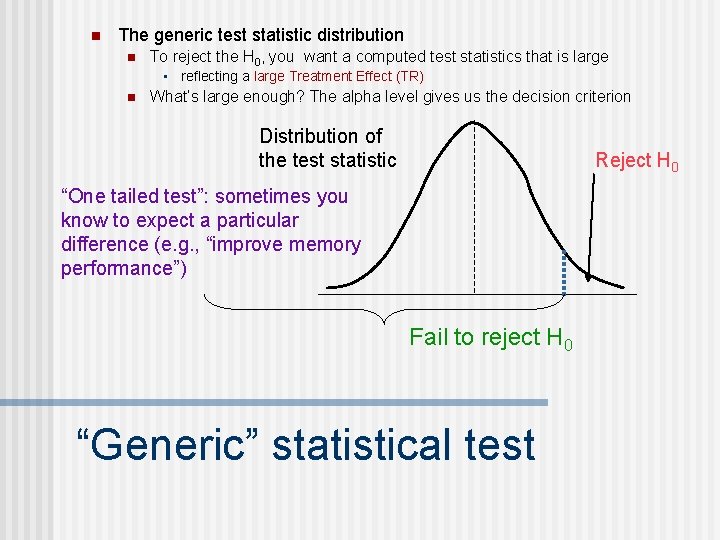

"Generic" statistical test

- The generic test statistic distribution
  - To reject the H0, you want a computed test statistic that is large
    - reflecting a large treatment effect (TR)
  - What's large enough? The alpha level gives us the decision criterion
- Diagram: distribution of the test statistic, divided into "Reject H0" and "Fail to reject H0" regions
- "One-tailed test": sometimes you know to expect a particular difference (e.g., "improve memory performance")
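How alpha sets the boundaries can be sketched numerically. The example below assumes, purely for illustration, that the test statistic follows a standard normal distribution; real t- and F-tests use their own distributions and degrees of freedom. The function name `decide` is hypothetical.

```python
from statistics import NormalDist

ALPHA = 0.05
standard_normal = NormalDist()  # assumed shape of the test-statistic distribution

# Two-tailed: alpha is split between both tails.
two_tailed_cutoff = standard_normal.inv_cdf(1 - ALPHA / 2)
# One-tailed: all of alpha sits in the tail where the effect is expected.
one_tailed_cutoff = standard_normal.inv_cdf(1 - ALPHA)


def decide(test_statistic, one_tailed=False):
    """Compare a computed test statistic with the alpha-based boundary."""
    if one_tailed:
        reject = test_statistic > one_tailed_cutoff
    else:
        reject = abs(test_statistic) > two_tailed_cutoff
    return "Reject H0" if reject else "Fail to reject H0"


print(f"Two-tailed cutoff: {two_tailed_cutoff:.3f}")
print(f"One-tailed cutoff: {one_tailed_cutoff:.3f}")
print(decide(1.80))
print(decide(1.80, one_tailed=True))
```

The same computed statistic can fall on different sides of the boundary depending on whether a directional (one-tailed) prediction was made in advance.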

"Generic" statistical test

- Things that affect the computed test statistic
  - Size of the treatment effect
    - The bigger the effect, the bigger the computed test statistic
  - Difference expected by chance (sampling error)
    - Sample size
    - Variability in the population


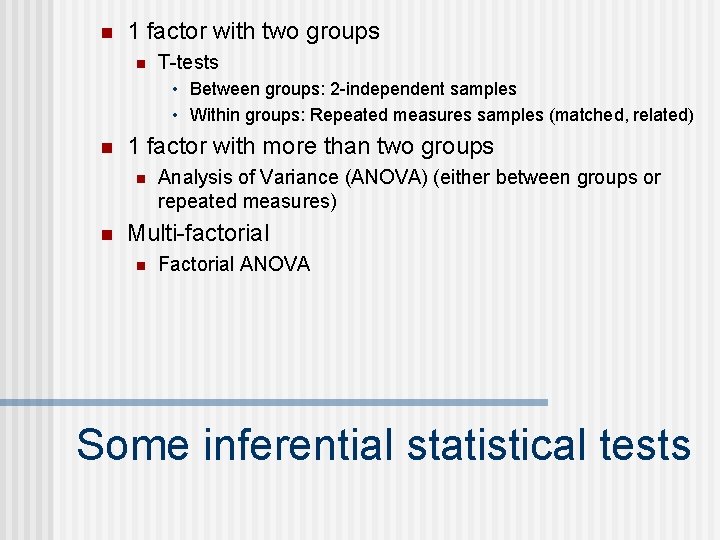

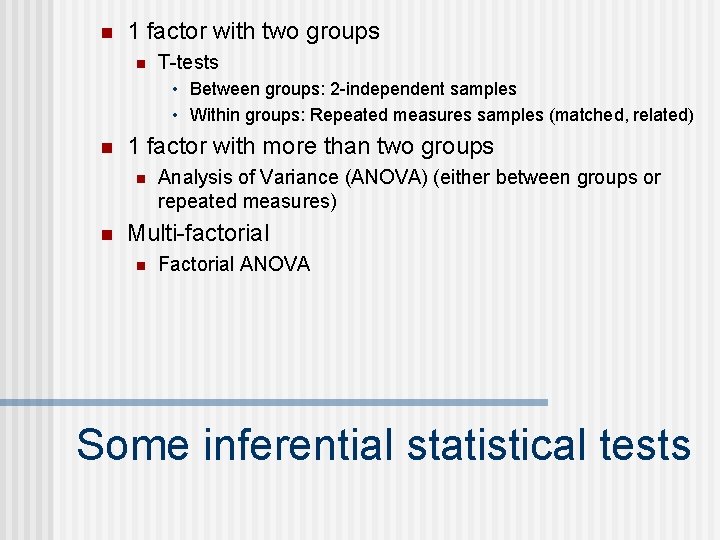

Some inferential statistical tests

- 1 factor with two groups
  - t-tests
    - Between groups: 2 independent samples
    - Within groups: repeated measures samples (matched, related)
- 1 factor with more than two groups
  - Analysis of Variance (ANOVA) (either between groups or repeated measures)
- Multi-factorial
  - Factorial ANOVA

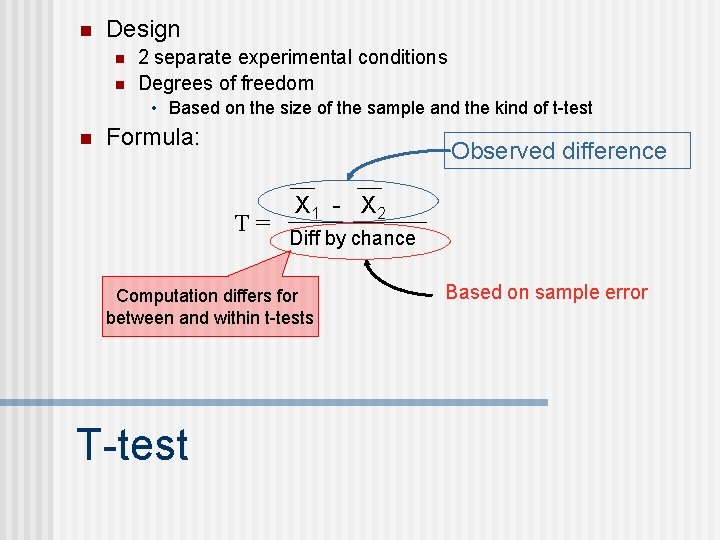

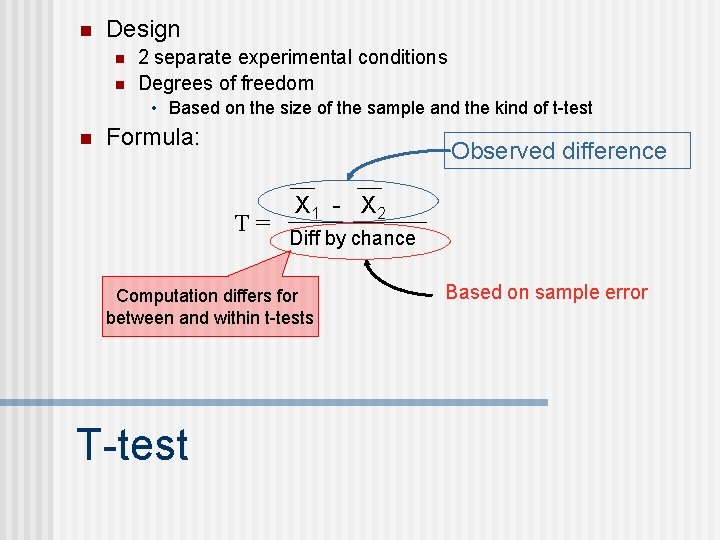

T-test

- Design
  - 2 separate experimental conditions
  - Degrees of freedom
    - Based on the size of the sample and the kind of t-test
- Formula:

$$t = \frac{\text{Observed difference}}{\text{Difference by chance}} = \frac{\bar{X}_1 - \bar{X}_2}{\text{Diff by chance}}$$

- The denominator ("diff by chance") is based on sampling error
- Computation differs for between and within t-tests
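As a worked illustration of the ratio above, here is a sketch of the between-groups (independent samples) case using the standard pooled-variance formula. The scores are made up so that the group means match the 80% and 76% of the memory example; they are not data from the lecture, and the helper name `independent_t` is hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def independent_t(sample_1, sample_2):
    """Pooled-variance t statistic and degrees of freedom for two independent samples."""
    n1, n2 = len(sample_1), len(sample_2)
    df = n1 + n2 - 2
    # "Difference by chance": standard error built from the pooled sample variance.
    pooled_variance = ((n1 - 1) * variance(sample_1) + (n2 - 1) * variance(sample_2)) / df
    standard_error = sqrt(pooled_variance * (1 / n1 + 1 / n2))
    # "Observed difference" divided by "difference by chance".
    t = (mean(sample_1) - mean(sample_2)) / standard_error
    return t, df


# Hypothetical memory scores (percent correct).
group_a = [82, 79, 85, 77, 80, 83, 78, 76]  # treatment, mean = 80
group_b = [75, 78, 74, 79, 73, 77, 76, 76]  # control, mean = 76
t, df = independent_t(group_a, group_b)
print(f"t({df}) = {t:.2f}")
```

The standard library has no t distribution, so this sketch stops at the statistic and its degrees of freedom; the p-value would come from a t table or a statistics package. A repeated measures t-test would instead work on the per-person difference scores.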

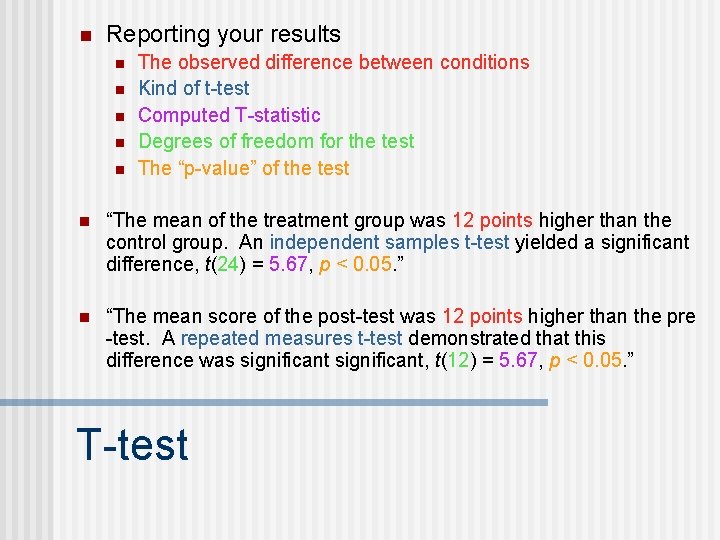

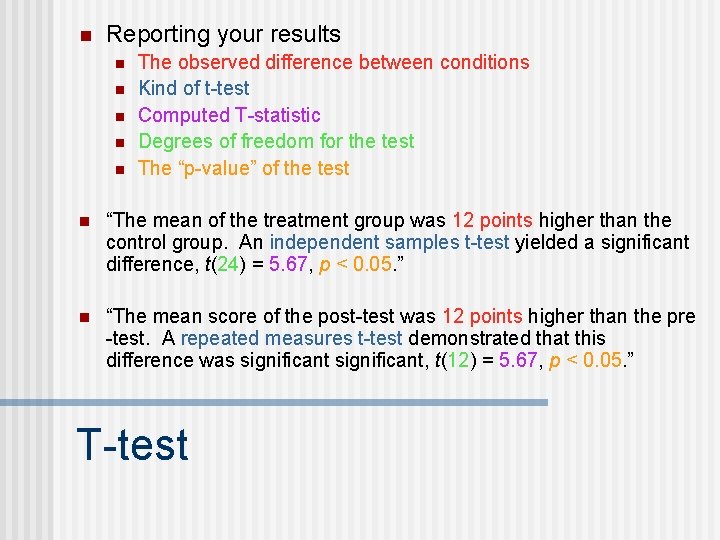

T-test

- Reporting your results
  - The observed difference between conditions
  - Kind of t-test
  - Computed t-statistic
  - Degrees of freedom for the test
  - The "p-value" of the test
- "The mean of the treatment group was 12 points higher than the control group. An independent samples t-test yielded a significant difference, t(24) = 5.67, p < 0.05."
- "The mean score of the post-test was 12 points higher than the pre-test. A repeated measures t-test demonstrated that this difference was significant, t(12) = 5.67, p < 0.05."

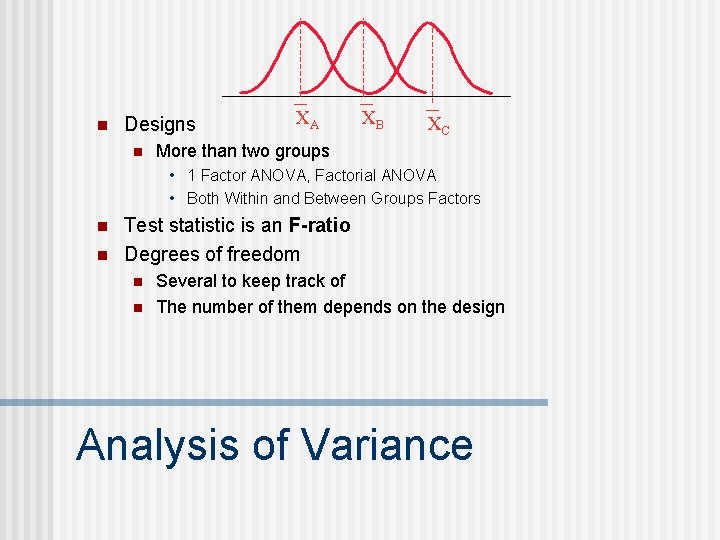

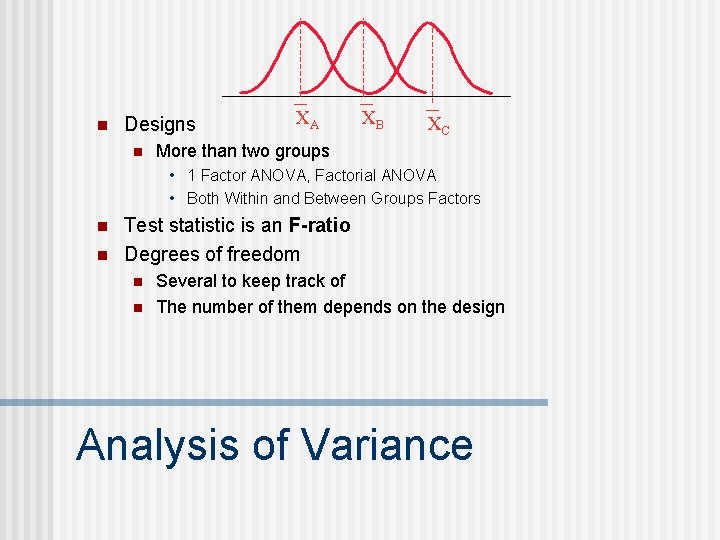

Analysis of Variance

- Designs
  - More than two groups (X̄A, X̄B, X̄C)
    - 1 factor ANOVA, factorial ANOVA
    - Both within- and between-groups factors
- Test statistic is an F-ratio
- Degrees of freedom
  - Several to keep track of
  - The number of them depends on the design

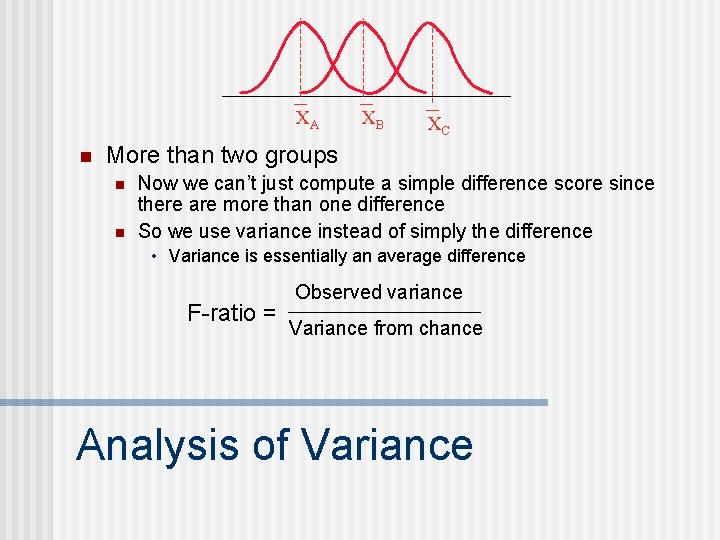

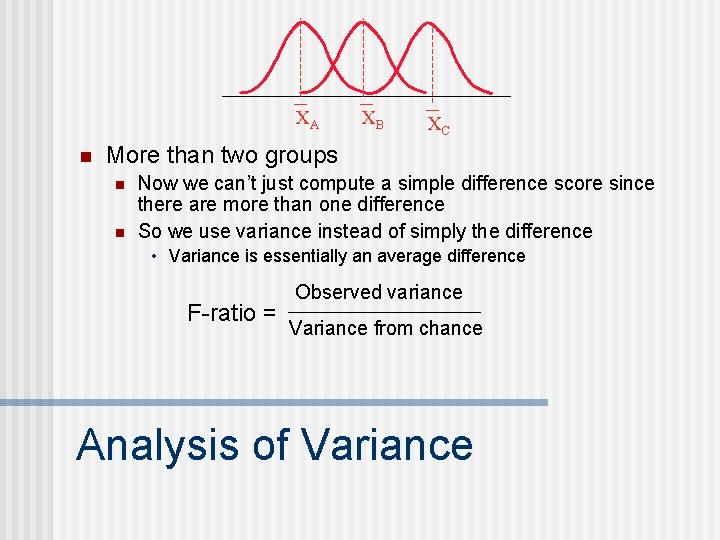

Analysis of Variance

- More than two groups (X̄A, X̄B, X̄C)
  - Now we can't just compute a simple difference score, since there is more than one difference
  - So we use variance instead of simply the difference
    - Variance is essentially an average (squared) difference

$$F\text{-ratio} = \frac{\text{Observed variance}}{\text{Variance from chance}}$$
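The F-ratio can be computed by hand for a small between-groups design. The sketch below uses the usual one-way ANOVA decomposition (between-groups mean square over within-groups mean square); the three groups of scores are hypothetical, and the helper name `one_way_f` is an assumption.

```python
from statistics import mean


def one_way_f(groups):
    """F-ratio and degrees of freedom for a 1-factor between-groups ANOVA."""
    all_scores = [score for group in groups for score in group]
    grand_mean = mean(all_scores)
    # Observed variance: how far the group means sit from the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variance from chance: how far scores sit from their own group mean.
    ss_within = sum((score - mean(g)) ** 2 for g in groups for score in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_ratio = (ss_between / df_between) / (ss_within / df_within)
    return f_ratio, df_between, df_within


# Hypothetical scores for Groups A, B, and C.
groups = [[10, 12, 14], [24, 25, 26], [26, 27, 28]]
f_ratio, df_between, df_within = one_way_f(groups)
print(f"F({df_between}, {df_within}) = {f_ratio:.2f}")
```

Note the two degrees-of-freedom values: one for the numerator (number of groups minus 1) and one for the denominator (number of scores minus number of groups).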

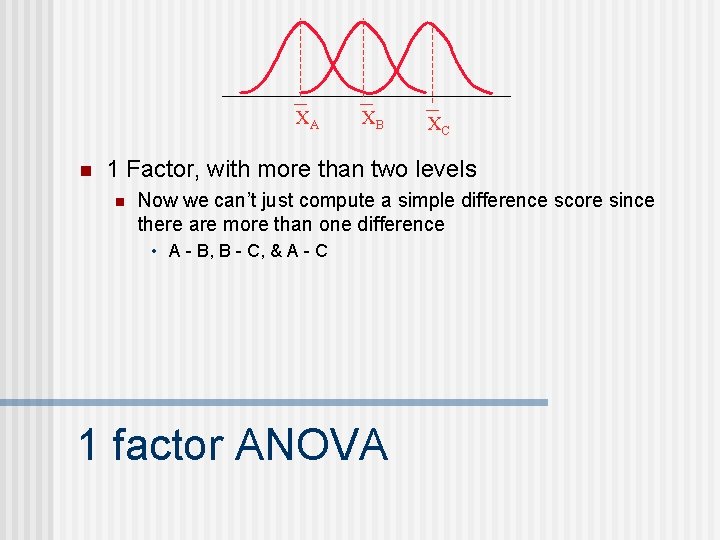

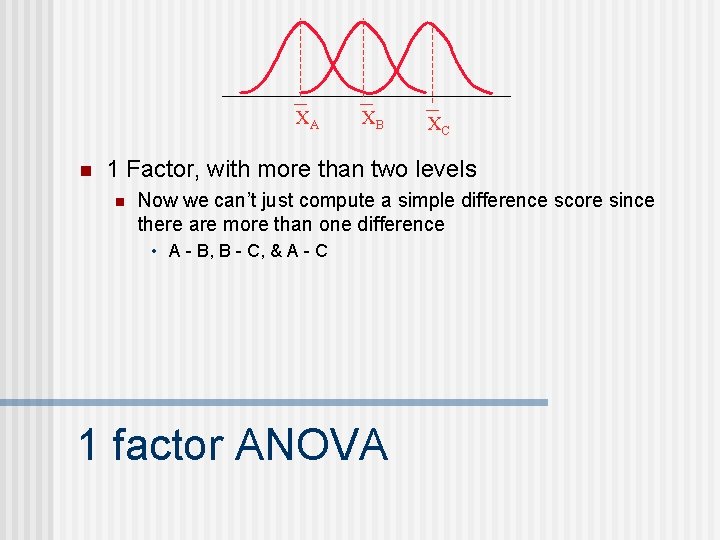

1 factor ANOVA

- 1 factor, with more than two levels (X̄A, X̄B, X̄C)
  - Now we can't just compute a simple difference score, since there is more than one difference
    - A - B, B - C, and A - C

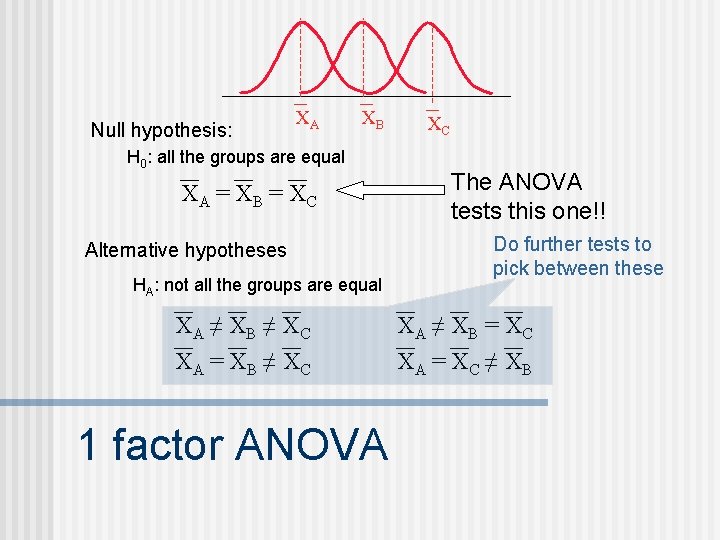

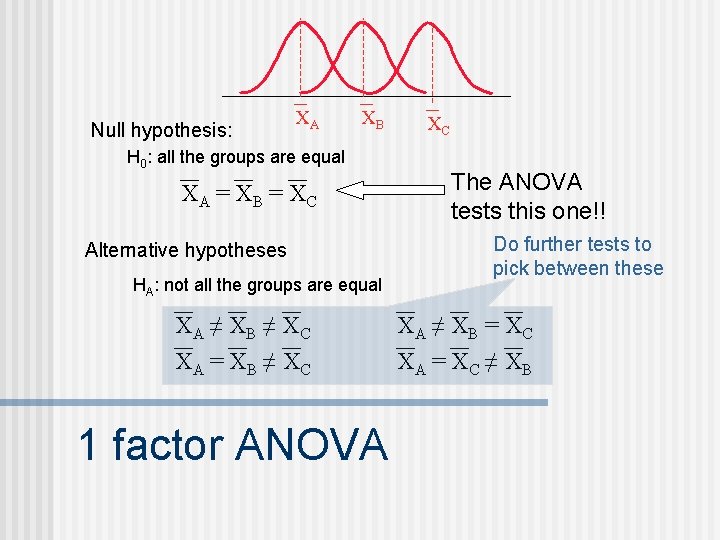

1 factor ANOVA

- Null hypothesis, H0: all the groups are equal (the ANOVA tests this one!)
  - X̄A = X̄B = X̄C
- Alternative hypotheses, HA: not all the groups are equal (do further tests to pick between these)
  - X̄A ≠ X̄B ≠ X̄C
  - X̄A = X̄B ≠ X̄C
  - X̄A ≠ X̄B = X̄C
  - X̄A = X̄C ≠ X̄B

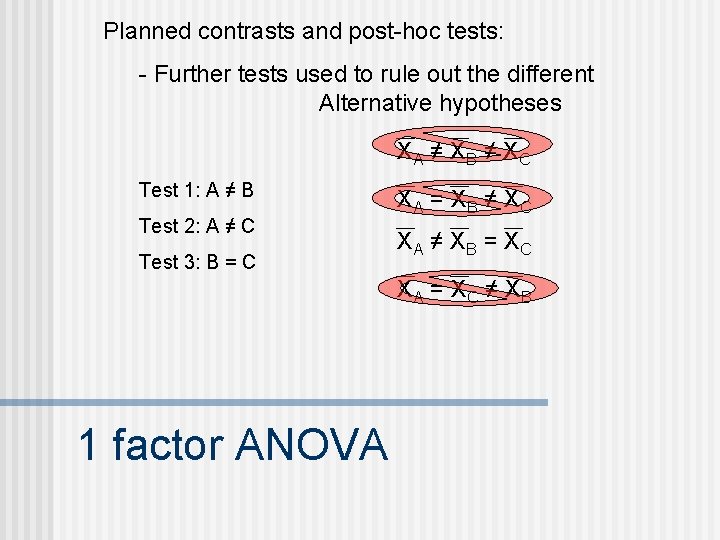

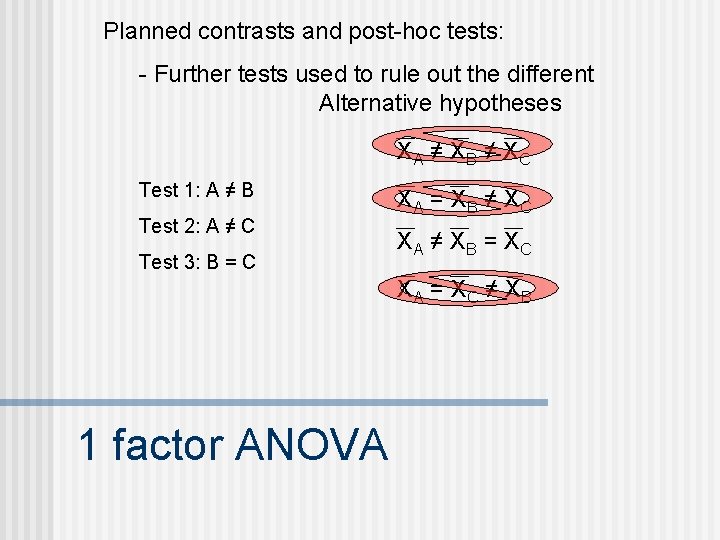

1 factor ANOVA

- Planned contrasts and post-hoc tests: further tests used to rule out the different alternative hypotheses
  - X̄A ≠ X̄B ≠ X̄C
  - X̄A = X̄B ≠ X̄C
  - X̄A ≠ X̄B = X̄C
  - X̄A = X̄C ≠ X̄B
- Example pattern of results:
  - Test 1: A ≠ B
  - Test 2: A ≠ C
  - Test 3: B = C
  - Together these point to X̄A ≠ X̄B = X̄C

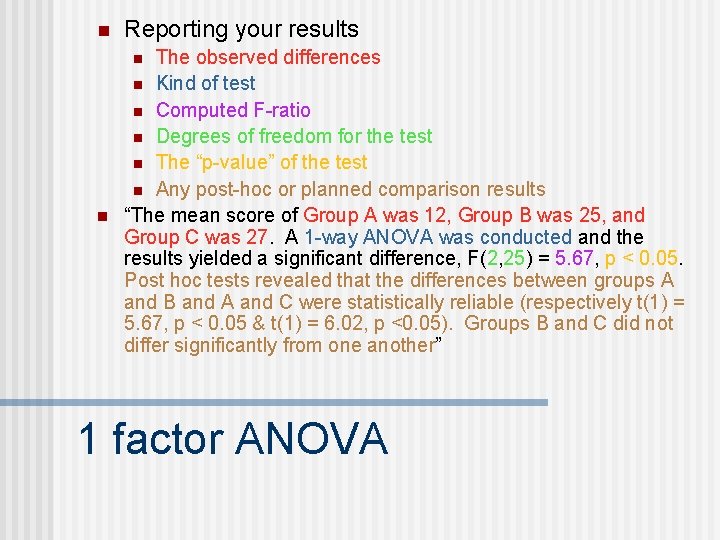

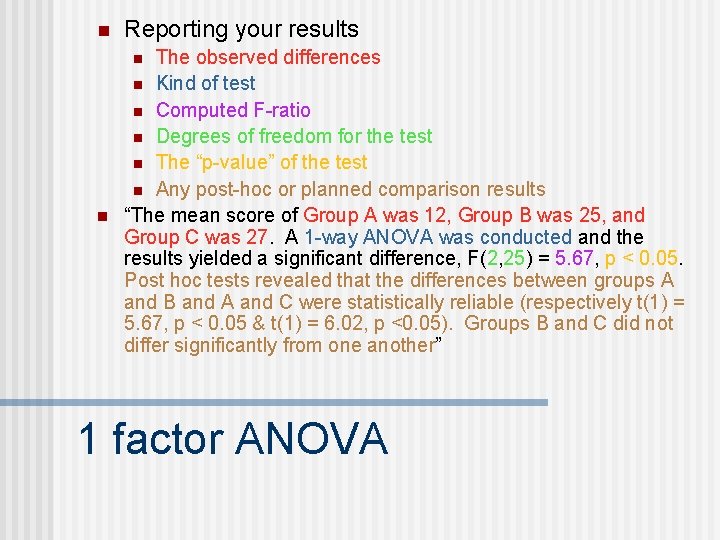

1 factor ANOVA

- Reporting your results
  - The observed differences
  - Kind of test
  - Computed F-ratio
  - Degrees of freedom for the test
  - The "p-value" of the test
  - Any post-hoc or planned comparison results
- "The mean score of Group A was 12, Group B was 25, and Group C was 27. A 1-way ANOVA was conducted and the results yielded a significant difference, F(2, 25) = 5.67, p < 0.05. Post hoc tests revealed that the differences between groups A and B and A and C were statistically reliable (respectively t(1) = 5.67, p < 0.05 & t(1) = 6.02, p < 0.05). Groups B and C did not differ significantly from one another."

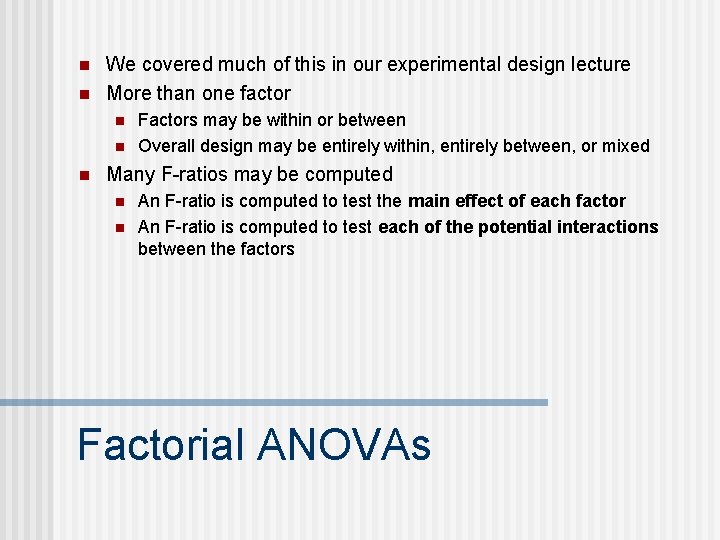

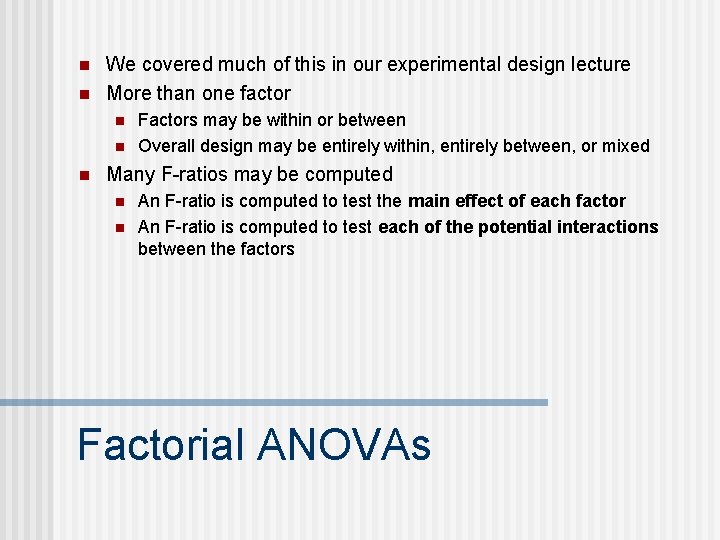

Factorial ANOVAs

- We covered much of this in our experimental design lecture
- More than one factor
  - Factors may be within or between
  - Overall design may be entirely within, entirely between, or mixed
- Many F-ratios may be computed
  - An F-ratio is computed to test the main effect of each factor
  - An F-ratio is computed to test each of the potential interactions between the factors

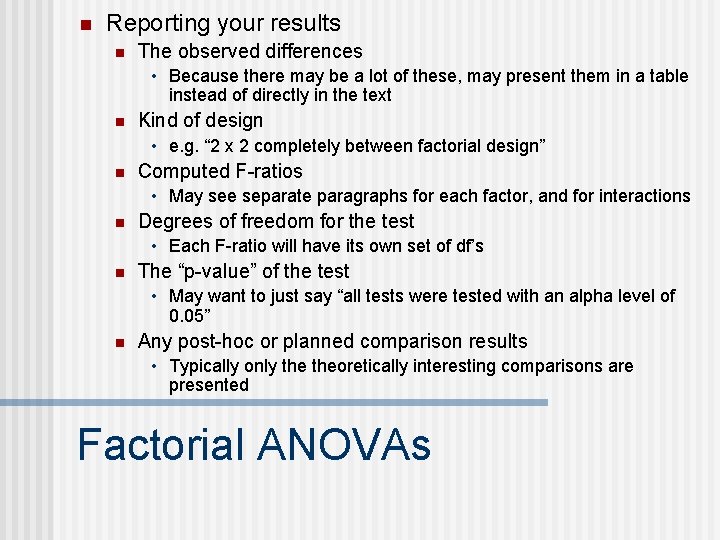

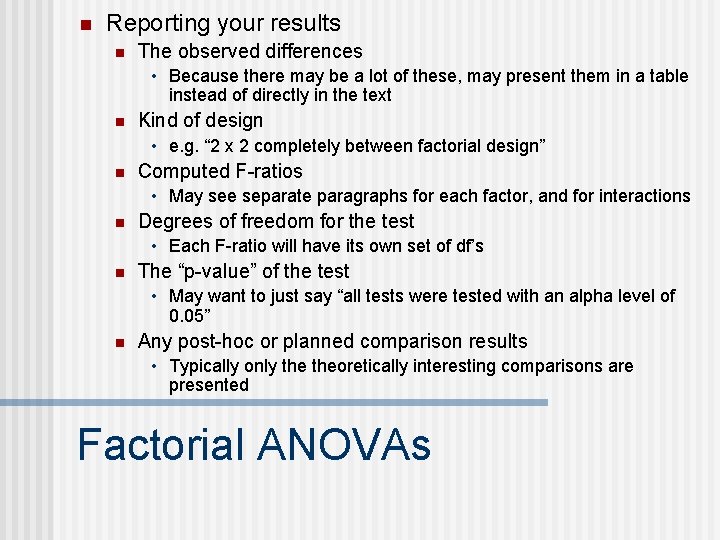

Factorial ANOVAs

- Reporting your results
  - The observed differences
    - Because there may be a lot of these, you may present them in a table instead of directly in the text
  - Kind of design
    - e.g., "2 x 2 completely between factorial design"
  - Computed F-ratios
    - You may see separate paragraphs for each factor, and for interactions
  - Degrees of freedom for the test
    - Each F-ratio will have its own set of df's
  - The "p-value" of the test
    - You may want to just say "all tests were tested with an alpha level of 0.05"
  - Any post-hoc or planned comparison results
    - Typically only theoretically interesting comparisons are presented

Distribution of sample means

- The following slides are available for a little more concrete review of the distribution of sample means discussion.

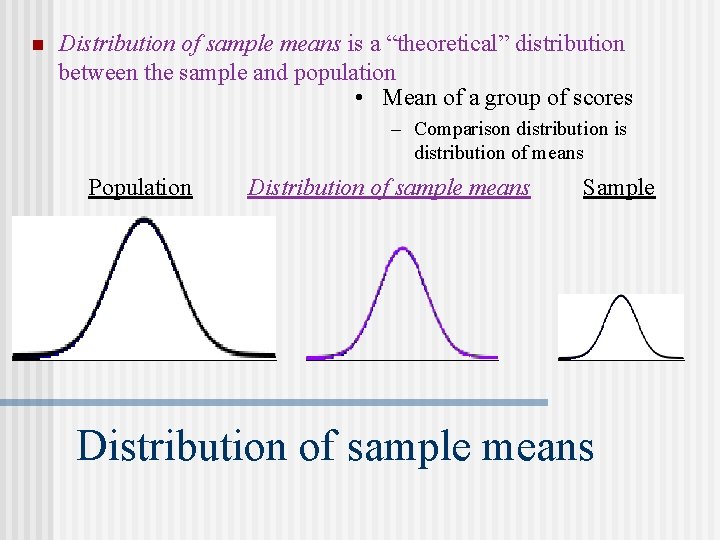

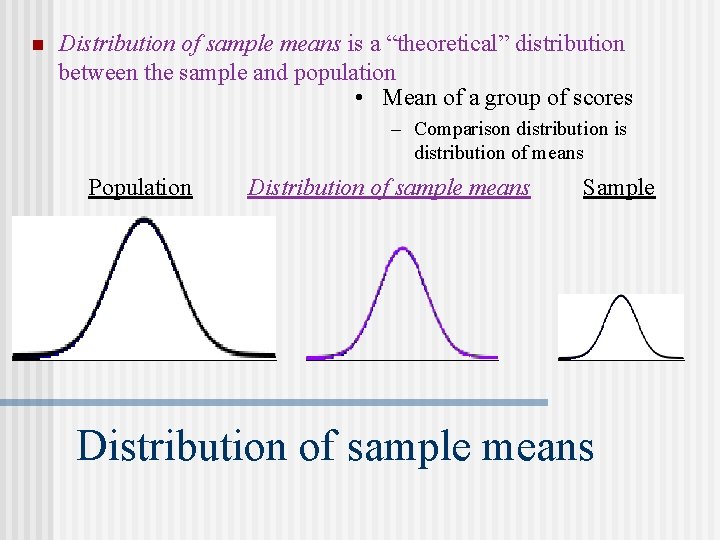

Distribution of sample means

- The distribution of sample means is a "theoretical" distribution between the sample and the population
  - Mean of a group of scores
    - The comparison distribution is the distribution of means
- Diagram: Population → Distribution of sample means → Sample

Distribution of sample means

- A simple case
  - Population: 2, 4, 6, 8
    - All possible samples of size n = 2
  - Assumption: sampling with replacement

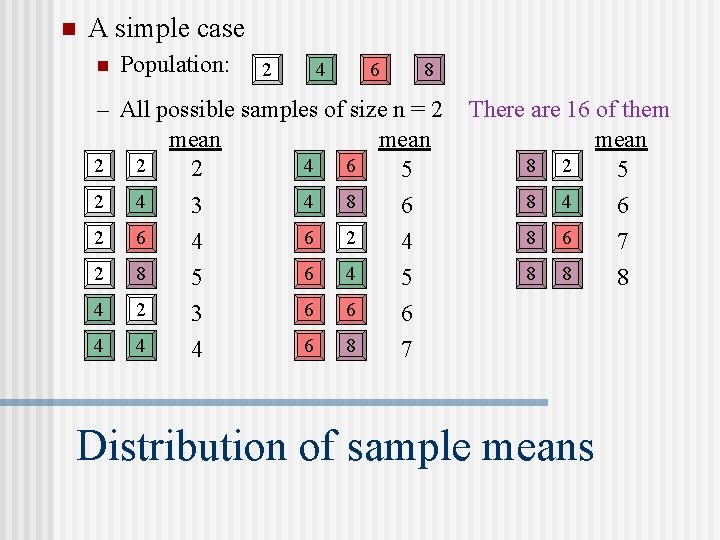

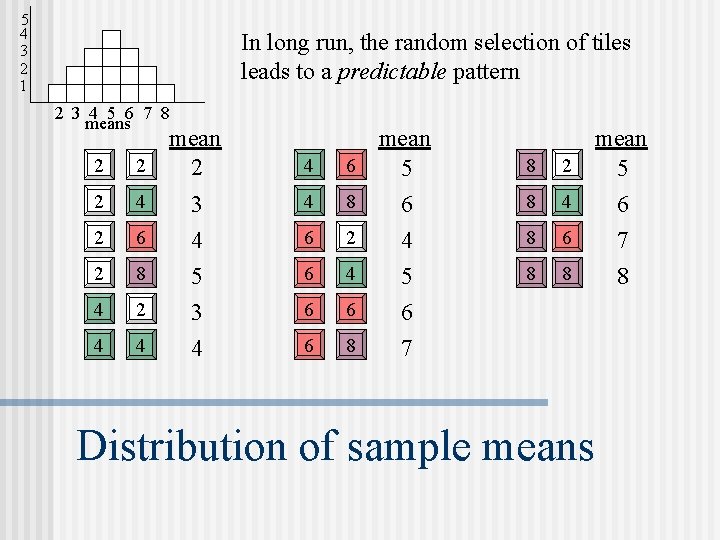

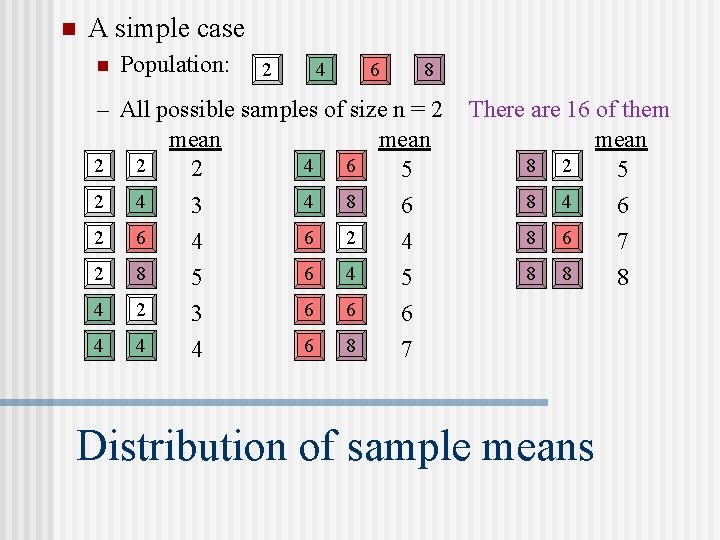

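Distribution of sample means

- A simple case
  - Population: 2, 4, 6, 8
    - All possible samples of size n = 2 (there are 16 of them)

Mean of each sample (row = first score, column = second score):

| First score | Second: 2 | Second: 4 | Second: 6 | Second: 8 |
|---|---|---|---|---|
| 2 | 2 | 3 | 4 | 5 |
| 4 | 3 | 4 | 5 | 6 |
| 6 | 4 | 5 | 6 | 7 |
| 8 | 5 | 6 | 7 | 8 |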

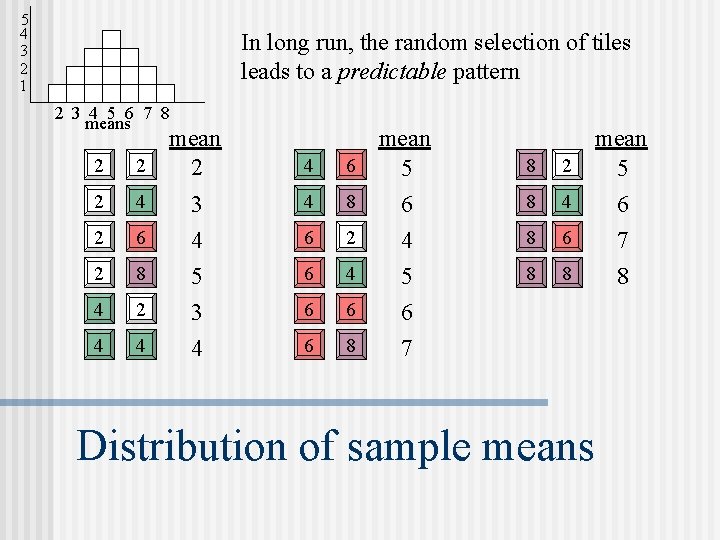

Distribution of sample means

- In the long run, the random selection of tiles leads to a predictable pattern
- Histogram of the 16 sample means (x-axis: means from 2 to 8; y-axis: frequency):

| Sample mean | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|
| Frequency | 1 | 2 | 3 | 4 | 3 | 2 | 1 |
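The simple case can be reproduced by enumerating every sample directly. This is a small sketch using only the population given on the slide (2, 4, 6, 8), samples of size 2, and sampling with replacement; the variable names are illustrative.

```python
from collections import Counter
from itertools import product

population = [2, 4, 6, 8]

# Every ordered sample of size 2, drawn with replacement.
samples = list(product(population, repeat=2))
sample_means = [(first + second) / 2 for first, second in samples]

# Tally how often each sample mean occurs and draw a text histogram.
frequency = Counter(sample_means)
for value in sorted(frequency):
    print(f"{value:>3.0f} {'x' * frequency[value]}")

mean_of_means = sum(sample_means) / len(sample_means)
print(f"{len(samples)} samples, mean of the sample means = {mean_of_means}")
```

The tally peaks at the population mean and falls off symmetrically on either side, which is the predictable pattern the slide refers to.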

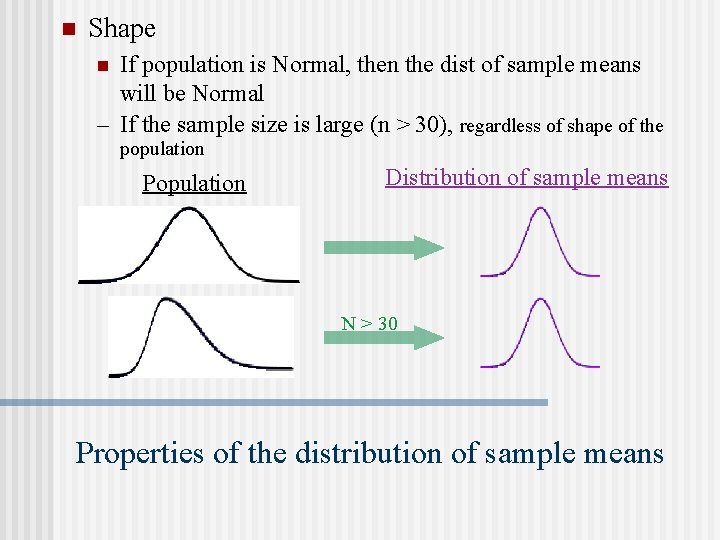

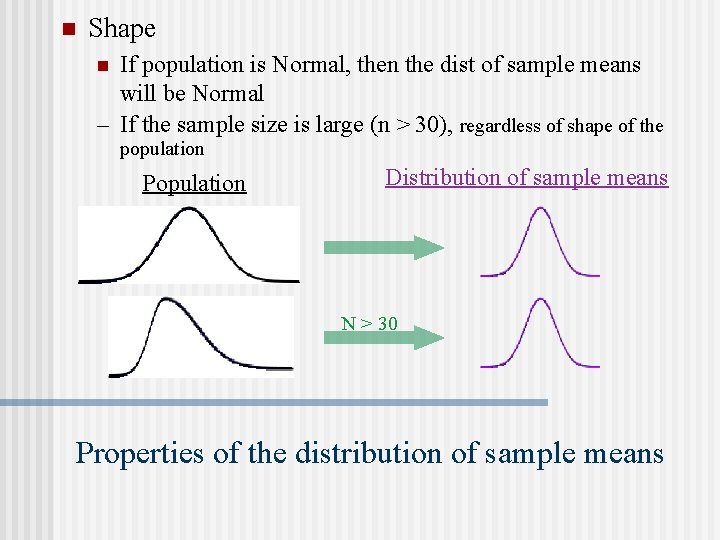

Properties of the distribution of sample means

- Shape
  - If the population is Normal, then the distribution of sample means will be Normal
  - If the sample size is large (n > 30), the distribution of sample means will be approximately Normal regardless of the shape of the population
- Diagram: Population → Distribution of sample means (n > 30)

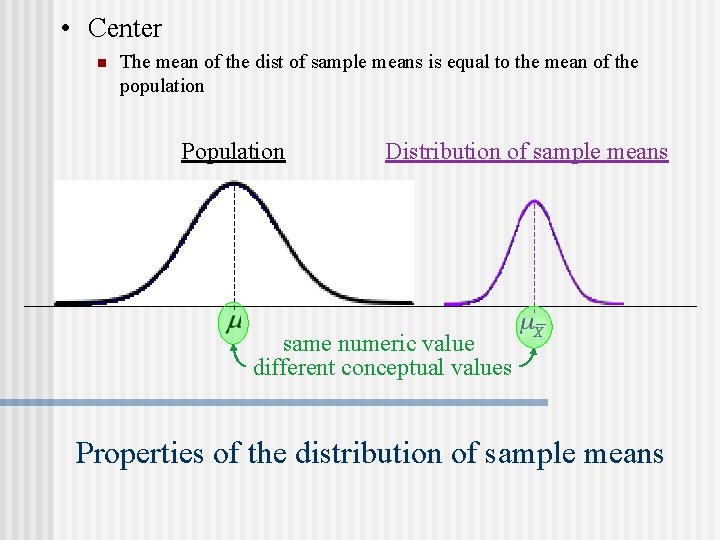

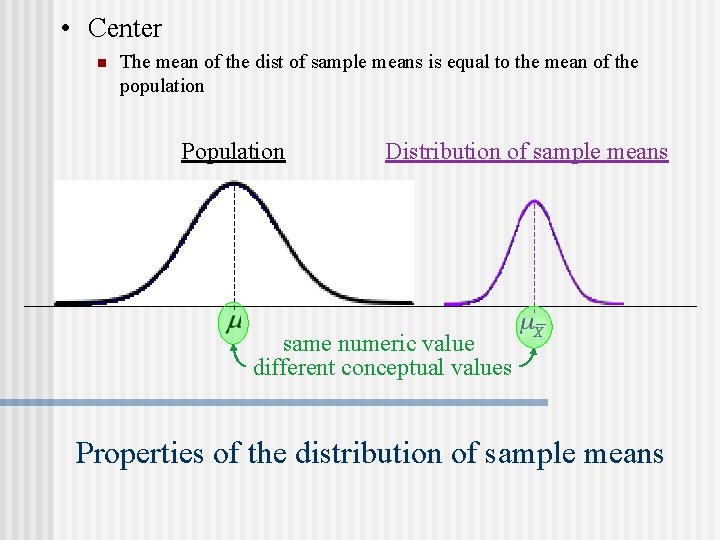

Properties of the distribution of sample means

- Center
  - The mean of the distribution of sample means is equal to the mean of the population
  - Same numeric value, different conceptual values
- Diagram: Population → Distribution of sample means

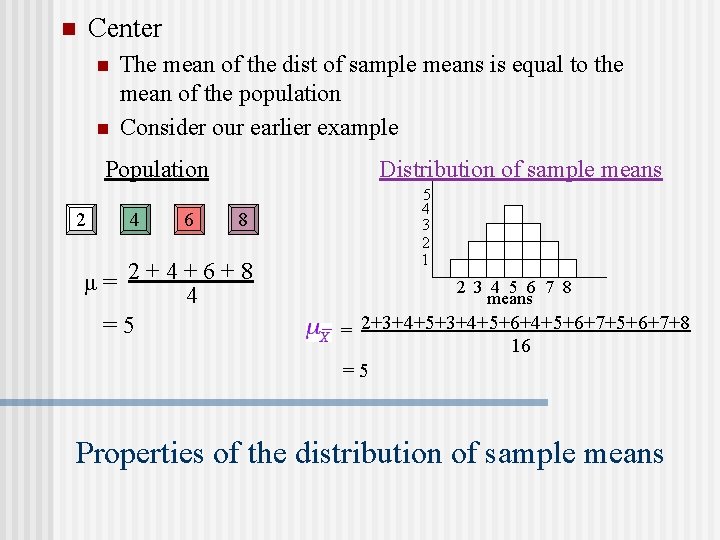

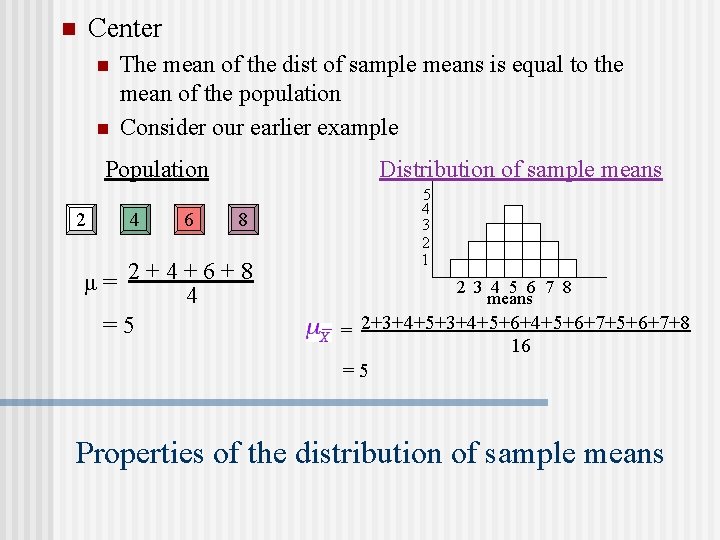

Properties of the distribution of sample means

- Center
  - The mean of the distribution of sample means is equal to the mean of the population
  - Consider our earlier example
    - Population: 2, 4, 6, 8

$$\mu = \frac{2+4+6+8}{4} = 5$$

    - Distribution of sample means (all 16 sample means):

$$\text{mean of the sample means} = \frac{2+3+4+5+3+4+5+6+4+5+6+7+5+6+7+8}{16} = \frac{80}{16} = 5$$

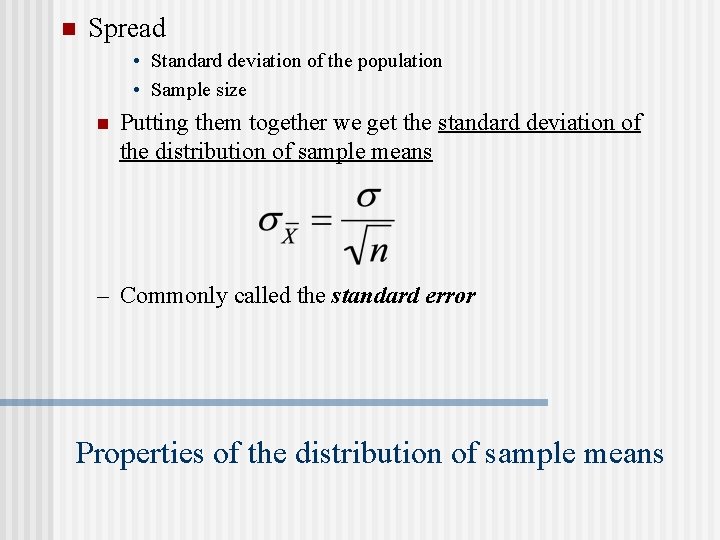

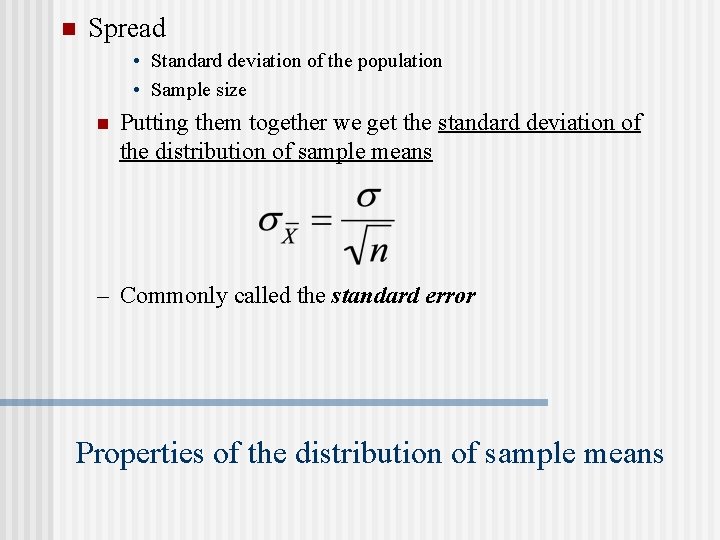

Properties of the distribution of sample means

- Spread depends on:
  - Standard deviation of the population
  - Sample size
- Putting them together, we get the standard deviation of the distribution of sample means
  - Commonly called the standard error

Standard error

- The standard error is the average amount that you'd expect a sample (of size n) to deviate from the population mean
- In other words, it is an estimate of the error that you'd expect by chance (or by sampling)
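For the small population used earlier (2, 4, 6, 8), the standard error can be checked two ways. The sketch below uses the standard formula, population standard deviation divided by the square root of n, and compares it with the standard deviation of all 16 enumerated sample means. The variable names are illustrative.

```python
from itertools import product
from math import sqrt
from statistics import pstdev

population = [2, 4, 6, 8]
n = 2  # sample size

# Route 1: the formula, sigma / sqrt(n).
sigma = pstdev(population)  # population standard deviation
standard_error_formula = sigma / sqrt(n)

# Route 2: the standard deviation of every possible sample mean
# (sampling with replacement, as in the simple case).
sample_means = [sum(sample) / n for sample in product(population, repeat=n)]
standard_error_enumerated = pstdev(sample_means)

print(f"sigma / sqrt(n)       = {standard_error_formula:.4f}")
print(f"SD of 16 sample means = {standard_error_enumerated:.4f}")
```

Both routes give the same value, which is why the formula can stand in for the full enumeration when the population is too large to list.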

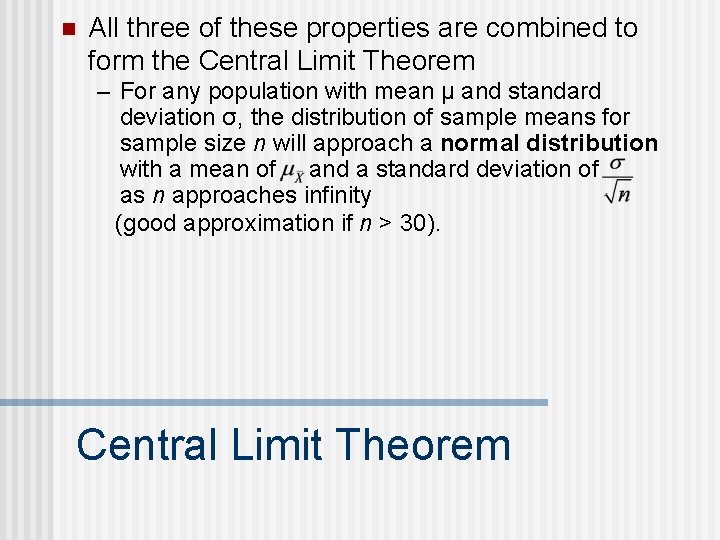

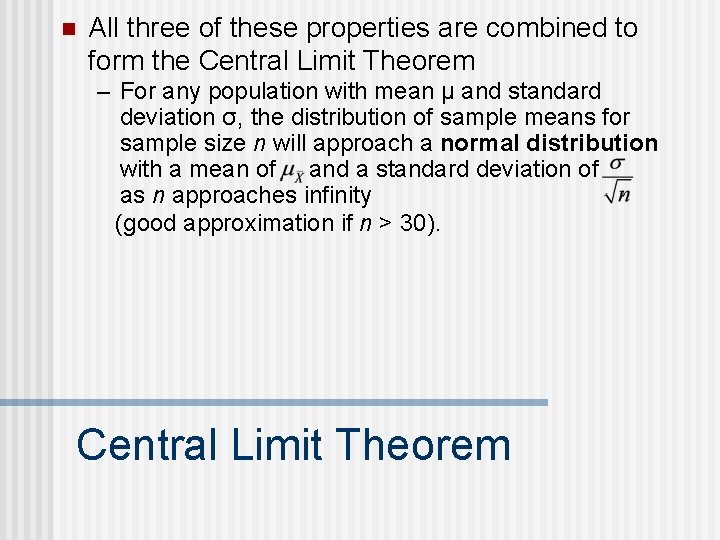

Central Limit Theorem

- All three of these properties are combined to form the Central Limit Theorem
  - For any population with mean μ and standard deviation σ, the distribution of sample means for sample size n will approach a normal distribution with a mean of μ and a standard deviation of σ/√n as n approaches infinity (a good approximation if n > 30)
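A quick simulation can show the theorem at work for a population that is clearly not normal. As an assumption for illustration, the sketch draws from an exponential population with mean 1 and standard deviation 1, using samples of size 30; none of these choices come from the lecture.

```python
import random
from math import sqrt
from statistics import mean, pstdev

POPULATION_MEAN = 1.0  # mean of the assumed exponential population
POPULATION_SD = 1.0    # its standard deviation


def simulated_sample_means(n, reps=5000, seed=231):
    """Means of many samples of size n from a skewed (exponential) population."""
    rng = random.Random(seed)
    return [mean([rng.expovariate(1.0) for _ in range(n)]) for _ in range(reps)]


n = 30
means_30 = simulated_sample_means(n)
center = mean(means_30)    # should be close to the population mean
spread = pstdev(means_30)  # should be close to sigma / sqrt(n)

print(f"Mean of sample means: {center:.3f} (population mean = {POPULATION_MEAN})")
print(f"SD of sample means:   {spread:.3f} (sigma / sqrt(n) = {POPULATION_SD / sqrt(n):.3f})")
```

Plotting `means_30` as a histogram would show a roughly bell-shaped curve even though the underlying population is strongly skewed.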

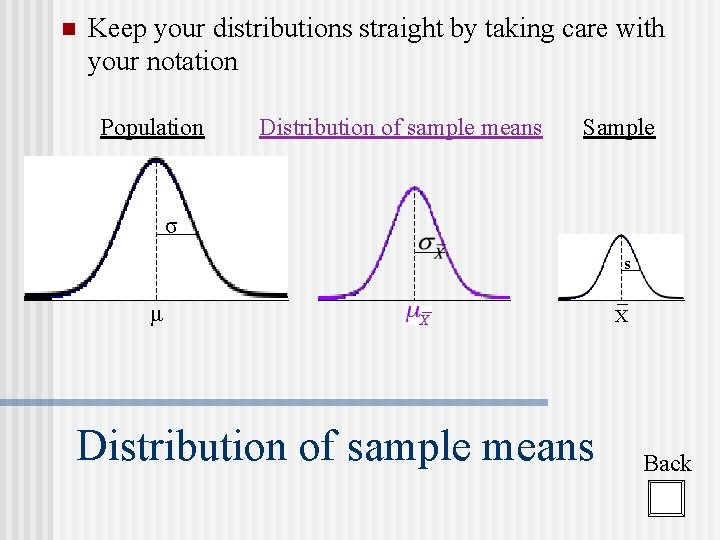

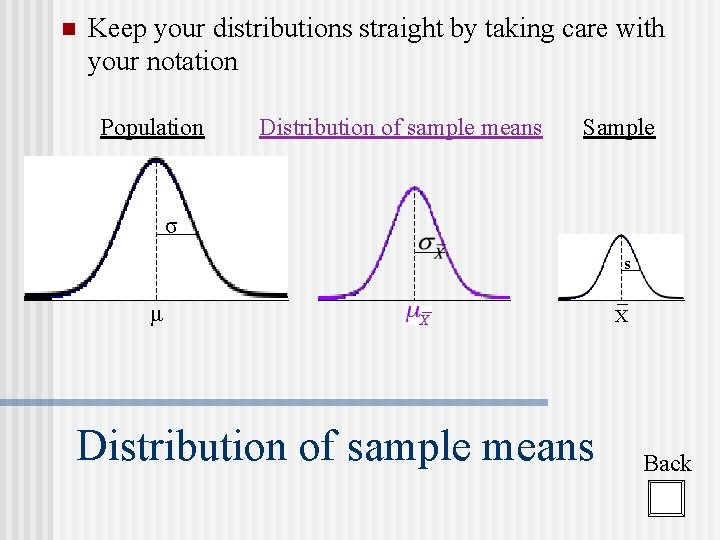

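Distribution of sample means

- Keep your distributions straight by taking care with your notation
  - Population: μ (mean), σ (standard deviation)
  - Sample: X̄ (mean), s (standard deviation)
  - Distribution of sample means: its own mean and standard deviation (the standard error)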