STATISTICS 200 Lecture 6 Thursday September 8 2016

Textbook: Sections 3.3 through 3.5
Objectives (for two quantitative variables and their relationship):
• Define and interpret residual
• Define and interpret correlation coefficient
• Interpret the square of the correlation coefficient (r-squared)
• Recognize various pitfalls in using regression
  – Extrapolation is dangerous.
  – Outliers can have a huge effect.
  – Interpreting a linear relationship as causation is dangerous.

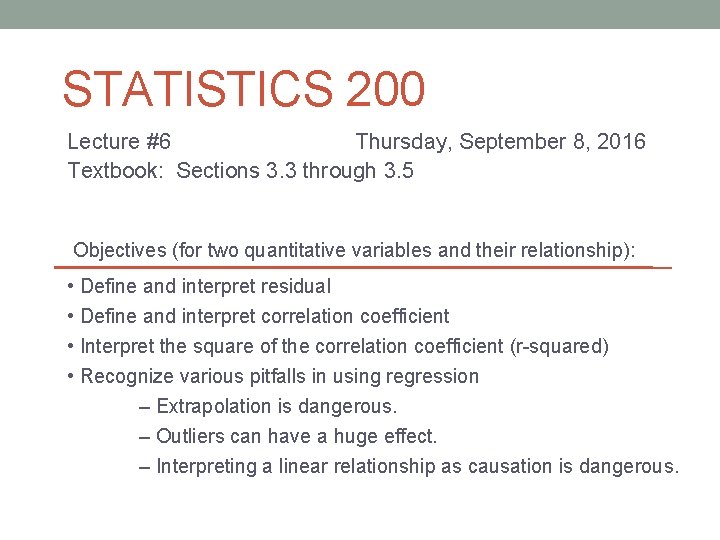

For which fitted line plot(s) does the y-intercept have a logical interpretation?
a. line plot 1
b. line plot 2
c. line plot 3
d. line plots 1 & 3
e. line plots 1, 2, & 3

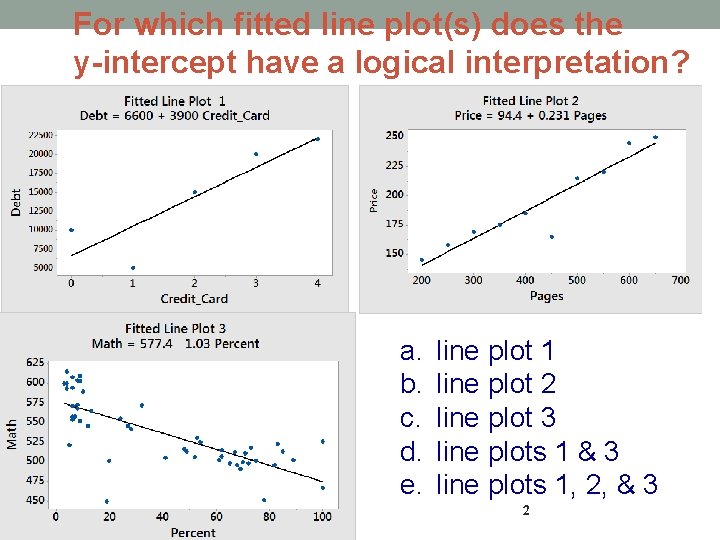

Residual: Deviation of a Point from the Regression Line
Residual = observed y − predicted y

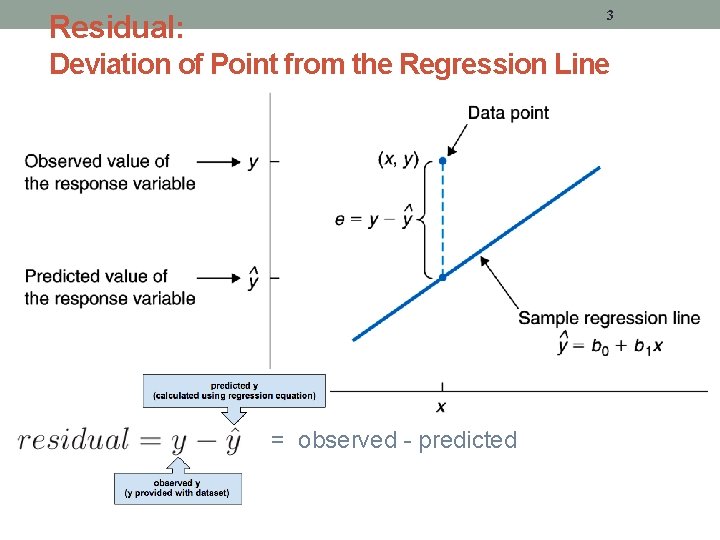

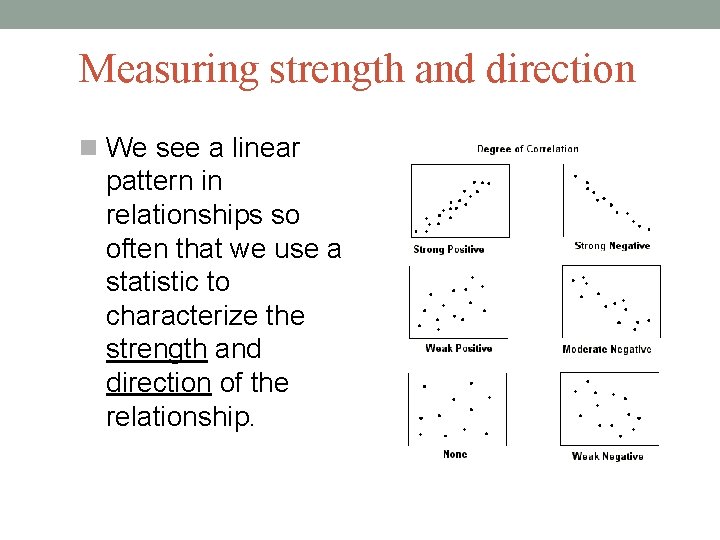

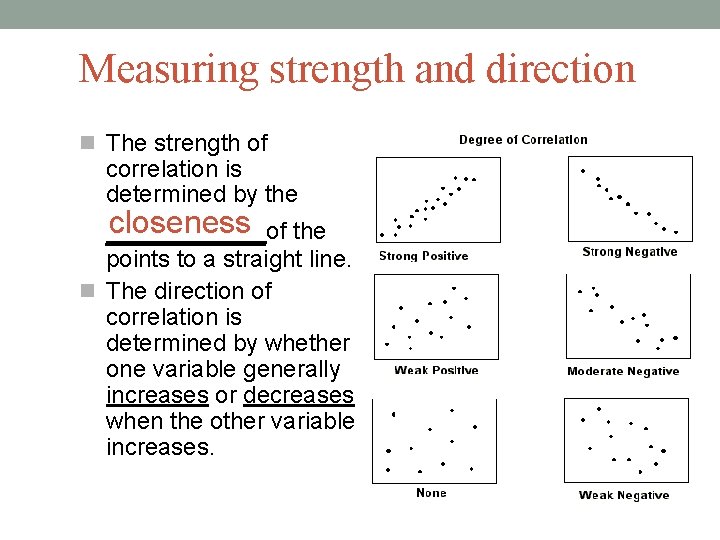

Measuring strength and direction
We see a linear pattern in relationships so often that we use a statistic to characterize the strength and direction of the relationship.

Measuring strength and direction
The strength of correlation is determined by how closely the points fall to a straight line. The direction of correlation is determined by whether one variable generally increases or decreases when the other variable increases.

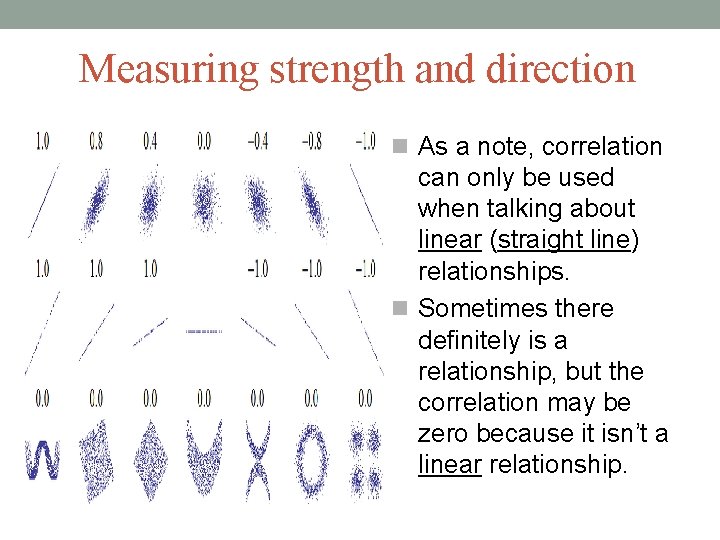

Measuring strength and direction
Note that correlation describes only linear (straight-line) relationships. There can clearly be a relationship between two variables while the correlation is zero, because that relationship is not linear.

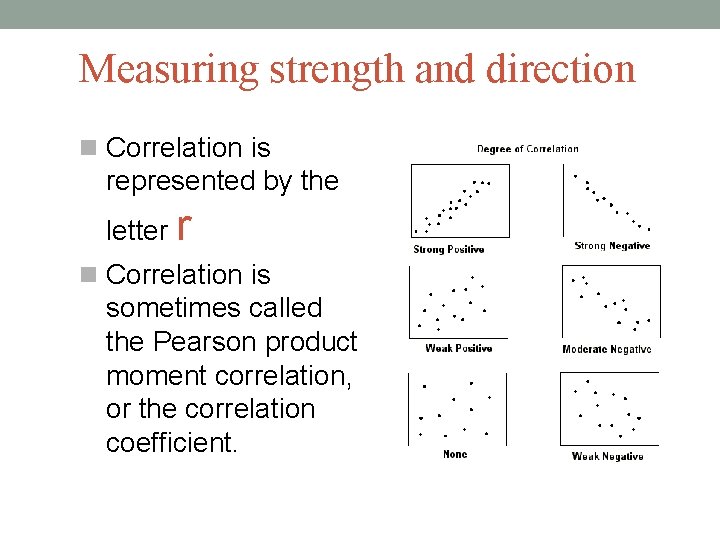

Measuring strength and direction
Correlation is represented by the letter r. It is sometimes called the Pearson product-moment correlation, or simply the correlation coefficient.

Measuring strength and direction
For correlation, it doesn’t matter which variable you treat as the response and which you treat as the explanatory variable: r is the same either way. For the regression equation, it DOES matter which variable you treat as the response and which you treat as the explanatory variable.

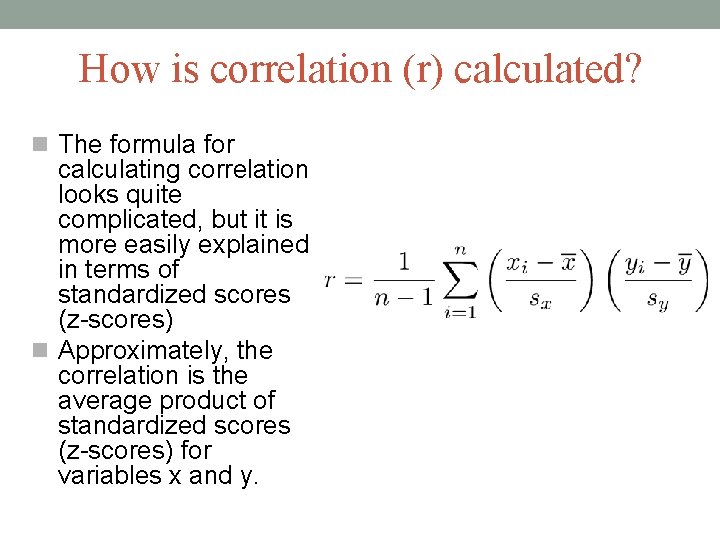

How is correlation (r) calculated?
The formula for calculating correlation looks quite complicated, but it is more easily explained in terms of standardized scores (z-scores). The correlation is approximately the average product of the z-scores for x and y: multiply each observation's z-score for x by its z-score for y, add up these products, and divide by n − 1 (rather than n).

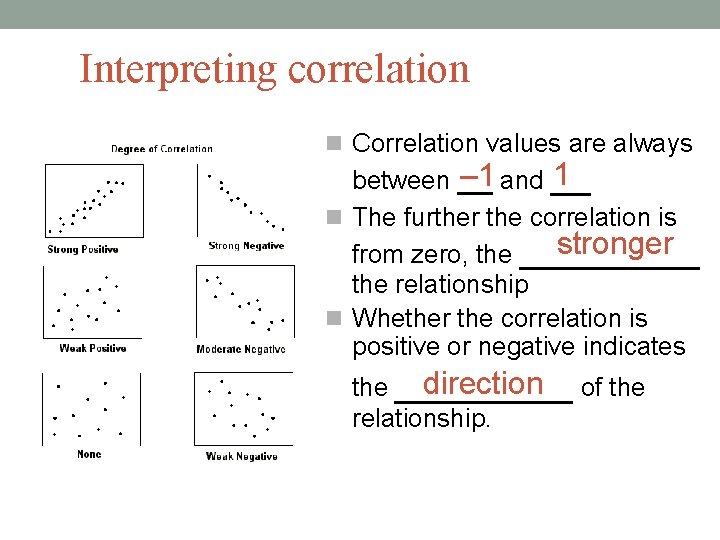

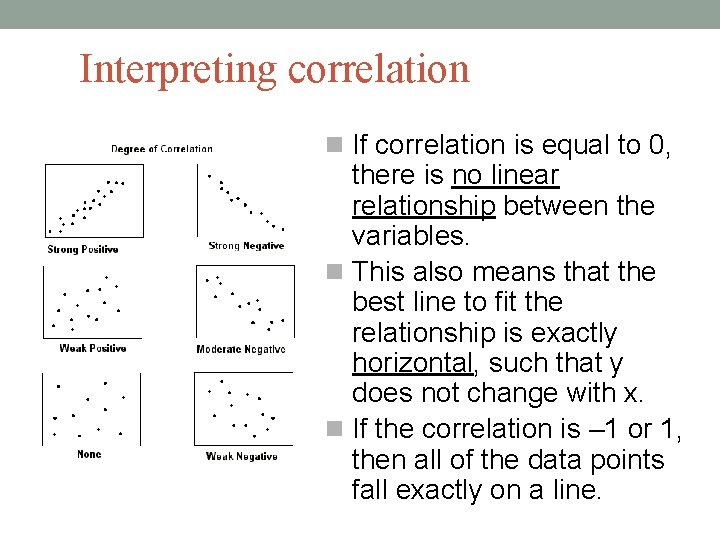

Interpreting correlation
• Correlation values are always between −1 and 1.
• The further the correlation is from zero, the stronger the relationship.
• Whether the correlation is positive or negative indicates the direction of the relationship.

Interpreting correlation
If the correlation is equal to 0, there is no linear relationship between the variables. This also means that the best-fitting line is exactly horizontal (slope 0), so predicted y does not change with x. If the correlation is −1 or 1, then all of the data points fall exactly on a straight line (with negative or positive slope, respectively).

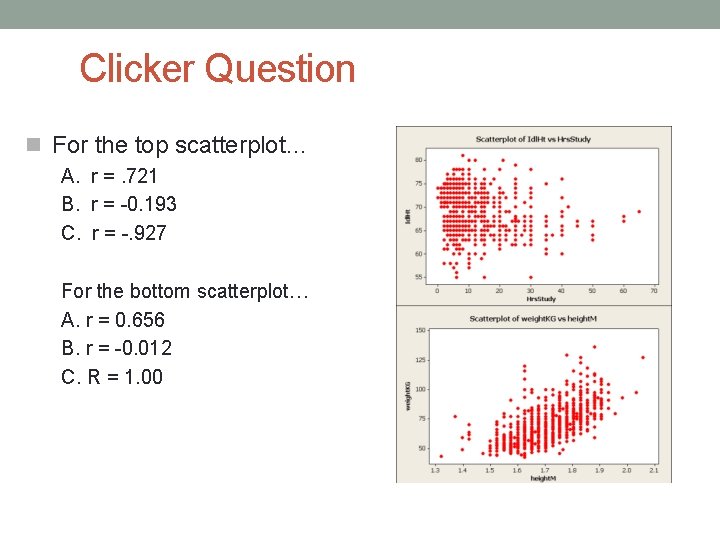

Clicker Question
For the top scatterplot:
A. r = 0.721
B. r = −0.193
C. r = −0.927
For the bottom scatterplot:
A. r = 0.656
B. r = −0.012
C. r = 1.00

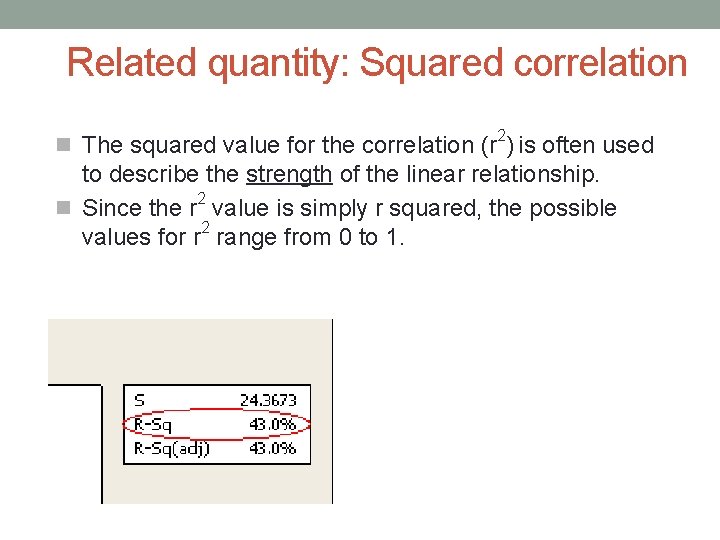

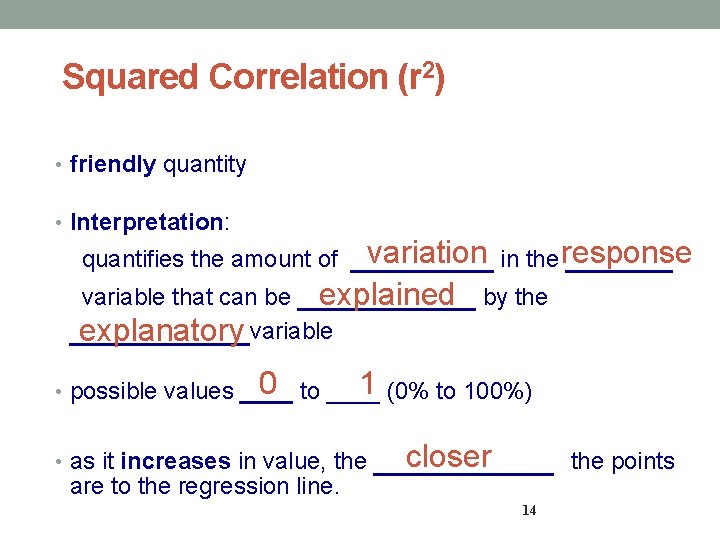

Related quantity: Squared correlation (r²)
The squared value of the correlation (r²) is often used to describe the strength of the linear relationship. Since r² is simply r squared, and r lies between −1 and 1, the possible values for r² range from 0 to 1.

Squared Correlation (r²)
• Friendly quantity
• Interpretation: quantifies the amount of variation in the response variable that can be explained by the explanatory variable
• Possible values: 0 to 1 (0% to 100%)
• As it increases in value, the closer the points are to the regression line

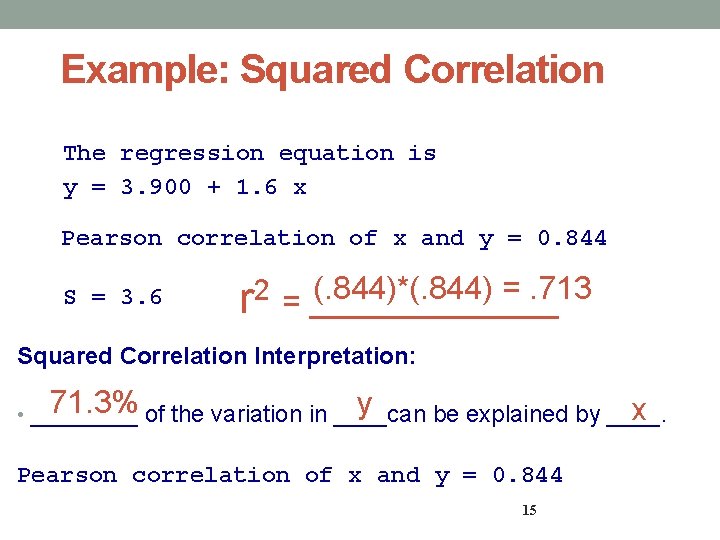

Example: Squared Correlation
The regression equation is y = 3.900 + 1.6 x
Pearson correlation of x and y = 0.844
S = 3.6
r² = (0.844)(0.844) ≈ 0.712
Squared correlation interpretation: about 71% of the variation in y can be explained by x.

Issues with regression
Several problems can arise when you are analyzing the relationship between two quantitative variables:
• Extrapolation
• Influential outliers
• Curvilinear data
• Combining groups inappropriately

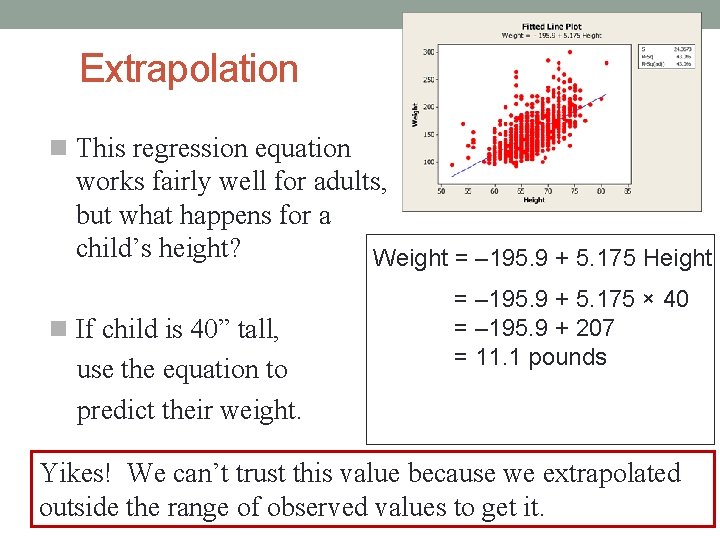

Extrapolation
Extrapolation is using the regression equation to predict values outside the range of the observed data. For example, let’s look at height and weight data.

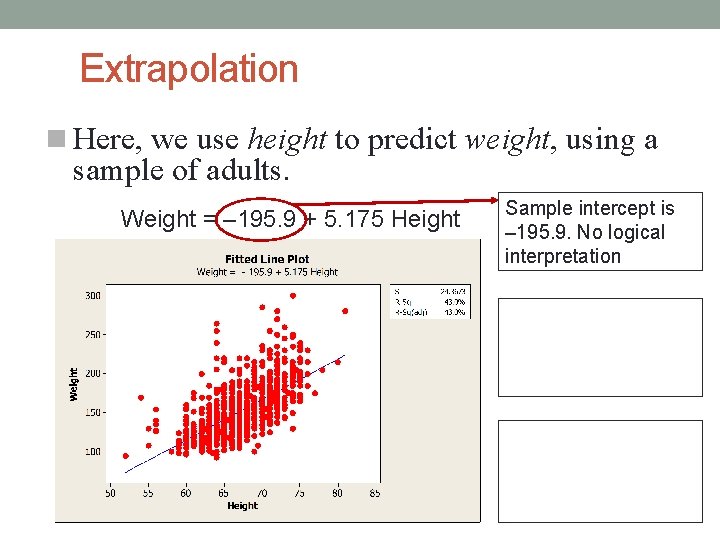

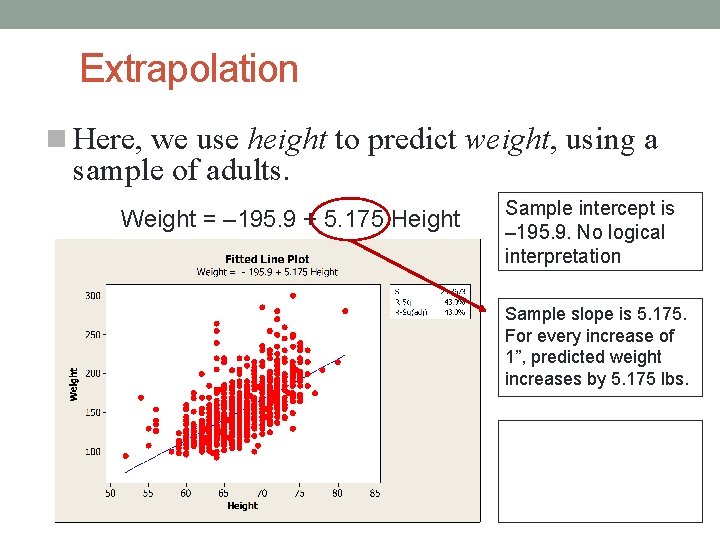

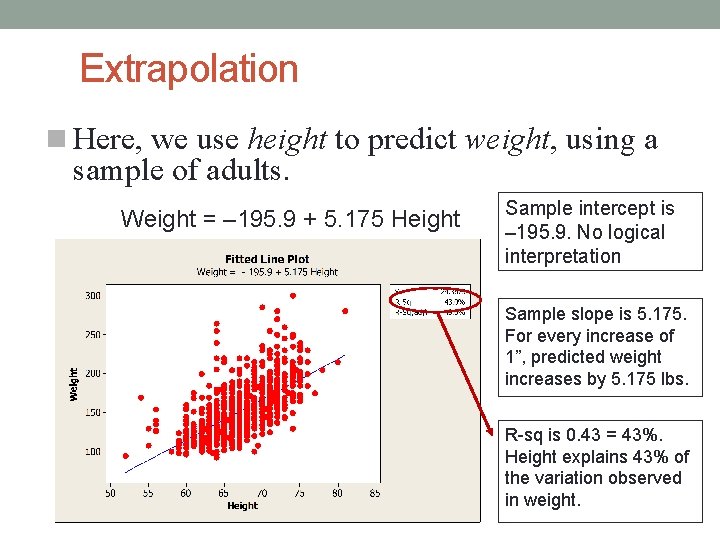



Extrapolation
Here, we use height to predict weight, using a sample of adults.
Weight = −195.9 + 5.175 Height
• Sample intercept is −195.9. No logical interpretation (a height of 0 inches is far outside the observed data).
• Sample slope is 5.175. For every increase of 1” in height, predicted weight increases by 5.175 lbs.
• R-sq is 0.43 = 43%. Height explains 43% of the variation observed in weight.

Extrapolation
This regression equation works fairly well for adults, but what happens for a child’s height?
Weight = −195.9 + 5.175 Height
If a child is 40” tall, use the equation to predict their weight:
Weight = −195.9 + 5.175 × 40 = −195.9 + 207 = 11.1 pounds
Yikes! We can’t trust this value because we extrapolated outside the range of observed heights to get it.
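A prediction routine can flag extrapolation automatically. In this sketch the intercept and slope come from the slide, but the observed height range (`OBSERVED_MIN_HEIGHT`, `OBSERVED_MAX_HEIGHT`) is a hypothetical placeholder, since the lecture does not give the sample's minimum and maximum heights.

```python
# Flag predictions that fall outside the observed range of the explanatory variable.
INTERCEPT = -195.9   # from the slide: Weight = -195.9 + 5.175 Height
SLOPE = 5.175
OBSERVED_MIN_HEIGHT = 60  # hypothetical: smallest height in the adult sample (inches)
OBSERVED_MAX_HEIGHT = 78  # hypothetical: largest height in the adult sample (inches)

def predict_weight(height):
    """Return (predicted weight in lbs, True if the height is outside the observed range)."""
    weight = INTERCEPT + SLOPE * height
    extrapolated = not (OBSERVED_MIN_HEIGHT <= height <= OBSERVED_MAX_HEIGHT)
    return weight, extrapolated

for h in (70, 40):
    w, flag = predict_weight(h)
    note = "EXTRAPOLATION: do not trust" if flag else "within observed range"
    print(f"height {h} in -> predicted weight {w:.1f} lbs ({note})")
```

The equation happily returns 11.1 lbs for a 40” child; only the range check reveals that the number should not be trusted.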

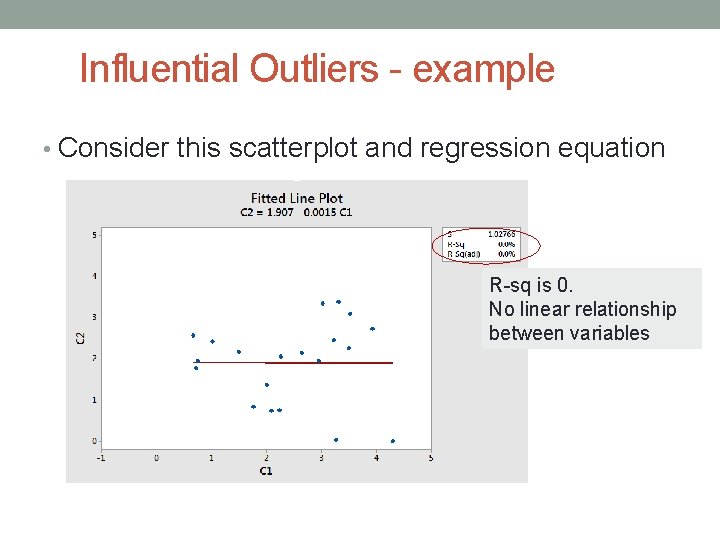

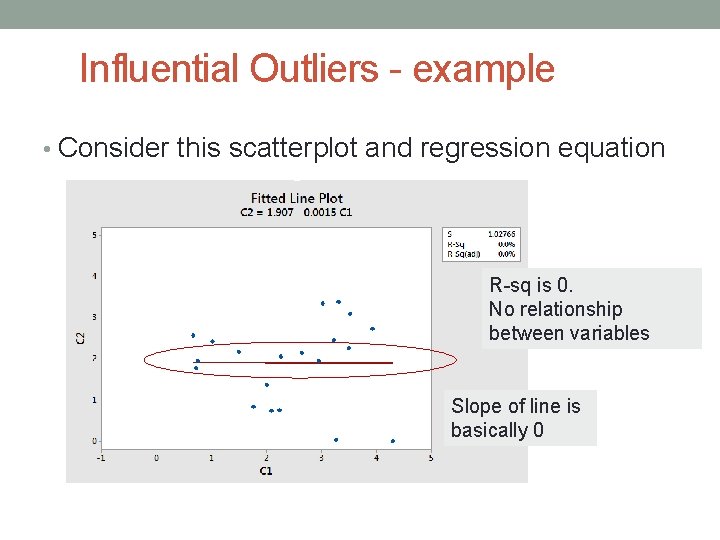


Influential Outliers - example
• Consider this scatterplot and regression equation.
• R-sq is 0. No linear relationship between the variables.
• The slope of the line is basically 0.

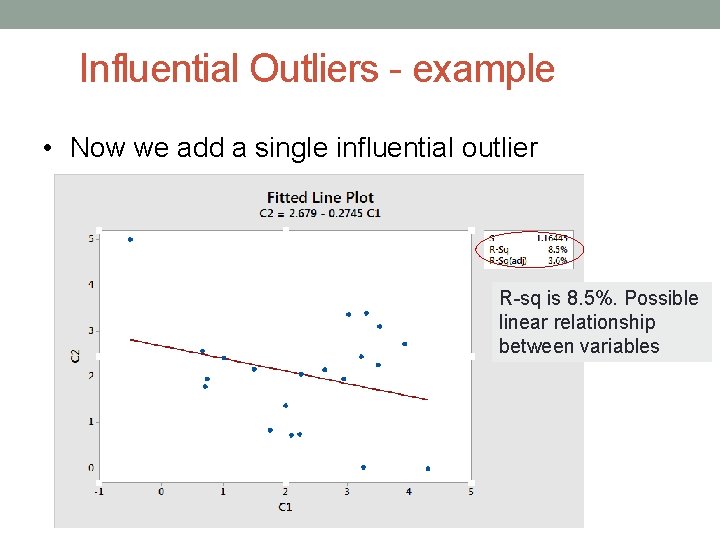

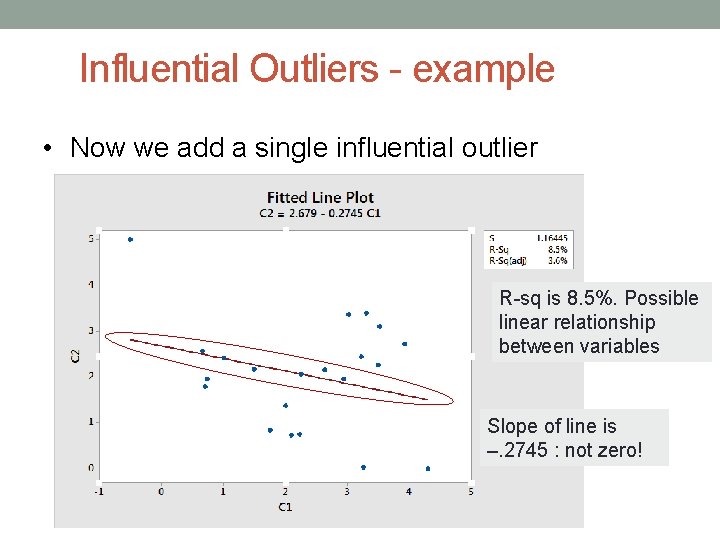


Influential Outliers - example
• Now we add a single influential outlier.
• R-sq is 8.5%. Possible linear relationship between the variables.
• The slope of the line is −0.2745: not zero!

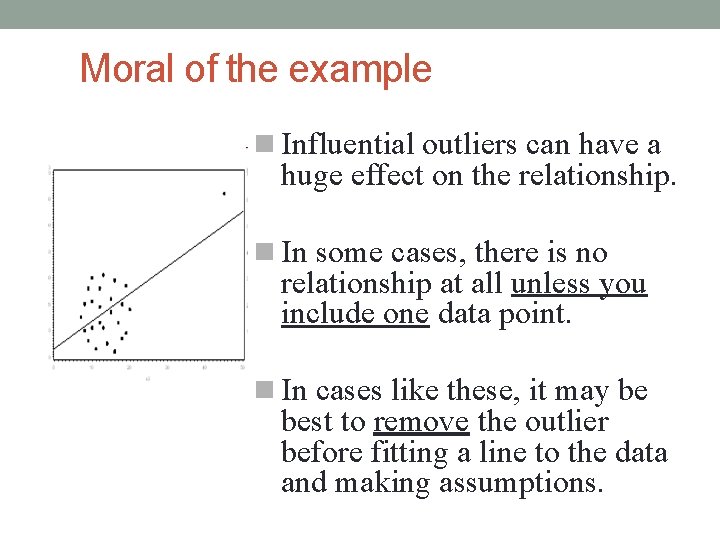

Moral of the example
Influential outliers can have a huge effect on the fitted relationship. In some cases, a relationship appears only because of a single data point. In cases like these, it may be best to remove the outlier before fitting a line to the data and drawing conclusions.

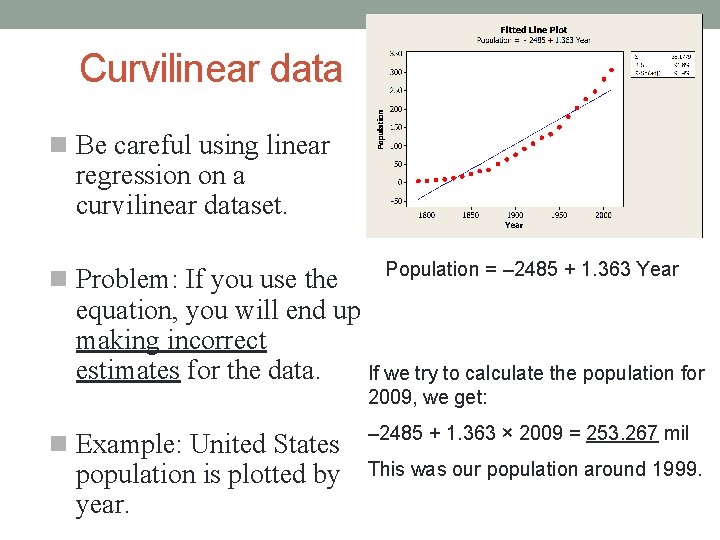

Curvilinear data
Be careful using linear regression on a curvilinear data set.
Example: United States population is plotted by year.
Problem: If you use the equation Population = −2485 + 1.363 Year (population in millions), you will end up making incorrect estimates. If we try to calculate the population for 2009, we get:
−2485 + 1.363 × 2009 = 253.267 million
This was roughly our population in the early 1990s, well below the actual 2009 population.

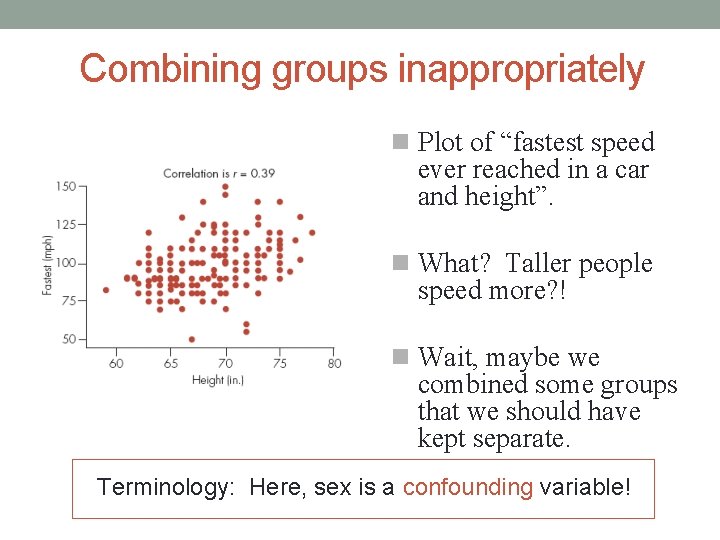

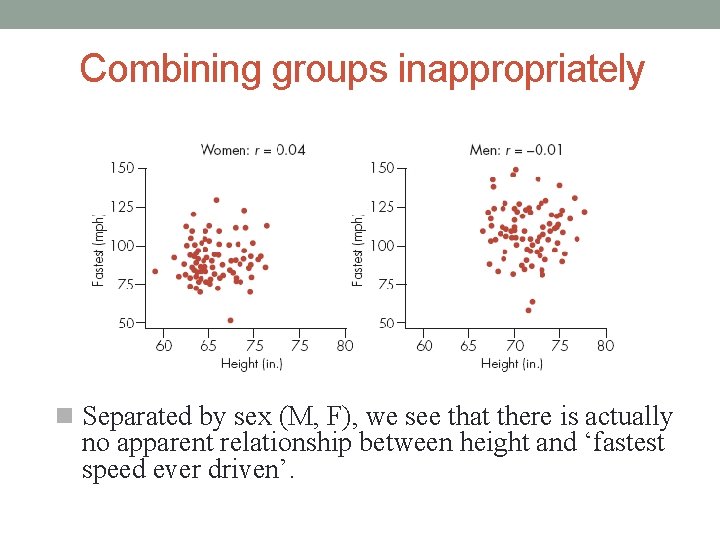

Combining groups inappropriately
Plot of “fastest speed ever reached in a car” versus height.
What? Taller people speed more?!
Wait, maybe we combined some groups that we should have kept separate.
Terminology: Here, sex is a confounding variable!

Combining groups inappropriately
Separated by sex (M, F), we see that there is actually no apparent relationship between height and “fastest speed ever driven.”

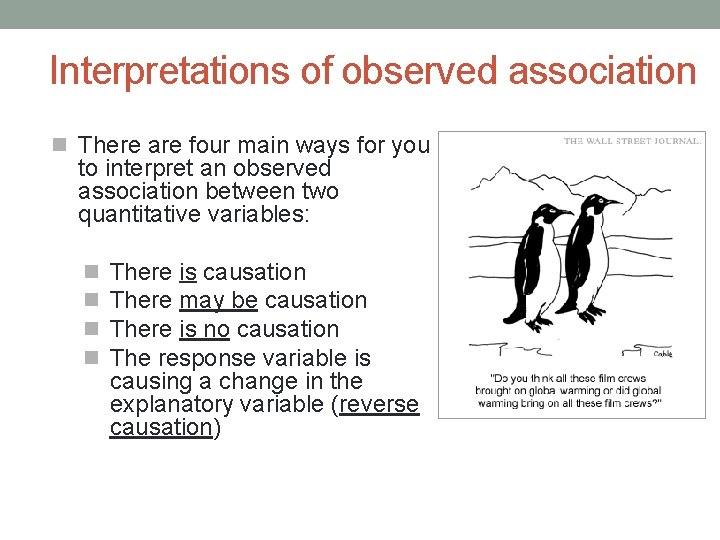

Interpretations of observed association
There are four main ways for you to interpret an observed association between two quantitative variables:
• There is causation.
• There may be causation.
• There is no causation.
• The response variable is causing a change in the explanatory variable (reverse causation).

Important note
A strong correlation does not necessarily mean there is a causal relationship between two variables. Most correlations come from observational studies, and we can’t claim causation from observational studies! All a strong correlation tells us is that there is an association between the variables.

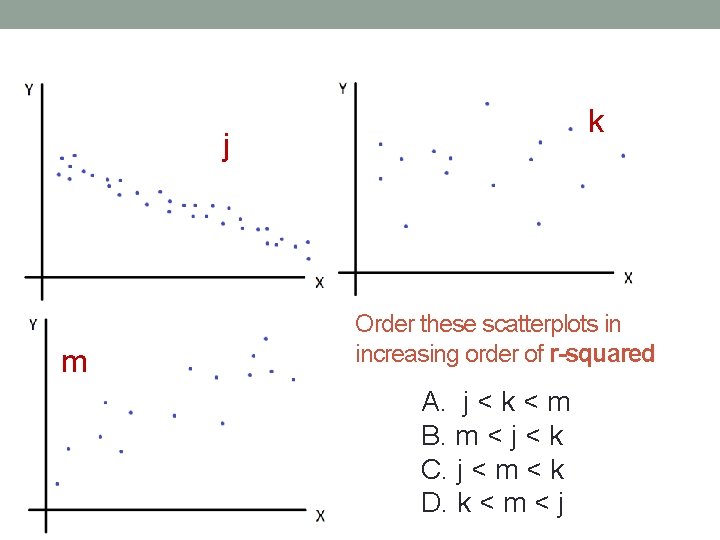

Order scatterplots j, k, and m in increasing order of r-squared:
A. j < k < m
B. m < j < k
C. j < m < k
D. k < m < j

Review: If you understood today’s lecture, you should be able to solve exercises 3.27, 3.29, 3.33, 3.37, 3.39, 3.41, 3.45, 3.63, 3.75, and 3.77.
Recall objectives (for two quantitative variables):
• Define and interpret residual
• Define and interpret correlation coefficient
• Interpret the square of the correlation coefficient (r-squared)
• Recognize various pitfalls in using regression
  – Extrapolation is dangerous.
  – Outliers can have a huge effect.
  – Interpreting a linear relationship as causation is dangerous.
