Statistical XFER: Hybrid Statistical Rule-based Machine Translation
Alon Lavie
Language Technologies Institute, Carnegie Mellon University
Joint work with: Jaime Carbonell, Lori Levin, Bob Frederking, Erik Peterson, Christian Monson, Vamshi Ambati, Greg Hanneman, Kathrin Probst, Ariadna Font-Llitjos, Alison Alvarez, Roberto Aranovich
Aug 29, 2007 Statistical XFER MT

Outline
• Background and Rationale
• Stat-XFER Framework Overview
• Elicitation
• Learning Transfer Rules
• Automatic Rule Refinement
• Example Prototypes
• Major Research Challenges

Progression of MT
• Started with rule-based systems
  – Very large expert human effort to construct language-specific resources (grammars, lexicons)
  – High-quality MT extremely expensive, so available for only a handful of language pairs
• Along came EBMT and then Statistical MT…
  – Replaced human effort with extremely large volumes of parallel text data
  – Less expensive, but still feasible for only a small number of language pairs
  – We “traded” human labor for data
• Where does this take us in 5-10 years?
  – Large parallel corpora for maybe 25-50 language pairs
• What about all the other languages?
• Is all this data (with very shallow representation of language structure) really necessary?
• Can we build MT approaches that learn deeper levels of language structure and how they map from one language to another?

Rule-based vs. Statistical MT
• Traditional Rule-based MT:
  – Expressive and linguistically rich formalisms capable of describing complex mappings between the two languages
  – Accurate, “clean” resources
  – Everything constructed manually by experts
  – Main challenge: obtaining broad coverage
• Phrase-based Statistical MT:
  – Learn word and phrase correspondences automatically from large volumes of parallel data
  – Search-based “decoding” framework:
    • Models propose many alternative translations
    • Effective search algorithms find the “best” translation
  – Main challenge: obtaining high translation accuracy

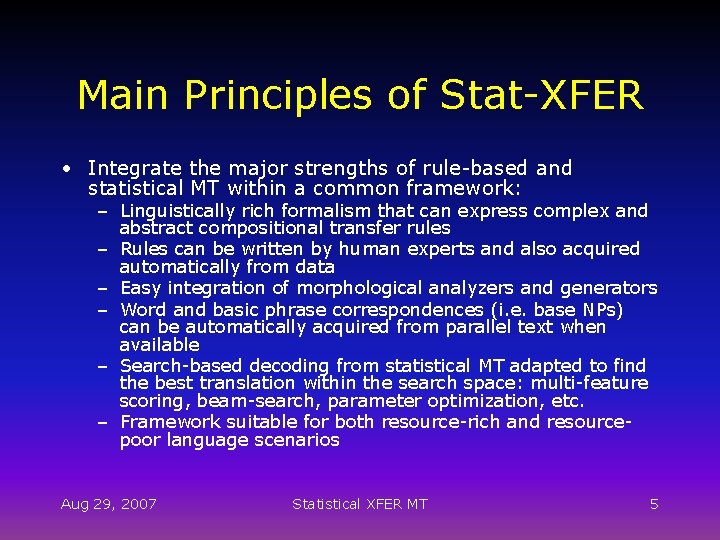

Main Principles of Stat-XFER
• Integrate the major strengths of rule-based and statistical MT within a common framework:
  – Linguistically rich formalism that can express complex and abstract compositional transfer rules
  – Rules can be written by human experts and also acquired automatically from data
  – Easy integration of morphological analyzers and generators
  – Word and basic phrase correspondences (e.g., base NPs) can be acquired automatically from parallel text when available
  – Search-based decoding from statistical MT, adapted to find the best translation within the search space: multi-feature scoring, beam search, parameter optimization, etc.
  – Framework suitable for both resource-rich and resource-poor language scenarios

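Stat-XFER MT Approach
[Figure: the MT pyramid. Analysis climbs from the Source (e.g., Quechua) through Syntactic Parsing and Semantic Analysis toward an Interlingua; generation descends through Sentence Planning and Text Generation to the Target (e.g., English). Statistical-XFER works at the level of Transfer Rules, between the two sides; Direct approaches (SMT, EBMT) map source to target at the base of the pyramid.]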

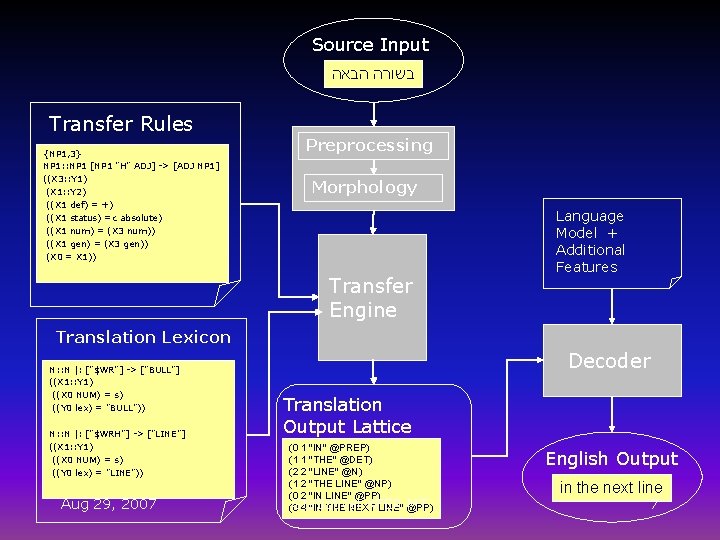

Stat-XFER system architecture: Source Input → Preprocessing → Morphology → Transfer Engine (using Transfer Rules and a Translation Lexicon) → Translation Output Lattice → Decoder (Language Model + Additional Features) → English Output

Source Input: בשורה הבאה

Transfer Rules:
{NP1,3}
NP1::NP1 [NP1 "H" ADJ] -> [ADJ NP1]
((X3::Y1)
(X1::Y2)
((X1 def) = +)
((X1 status) =c absolute)
((X1 num) = (X3 num))
((X1 gen) = (X3 gen))
(X0 = X1))

Translation Lexicon:
N::N |: ["$WR"] -> ["BULL"]
((X1::Y1)
((X0 NUM) = s)
((Y0 lex) = "BULL"))

N::N |: ["$WRH"] -> ["LINE"]
((X1::Y1)
((X0 NUM) = s)
((Y0 lex) = "LINE"))

Translation Output Lattice:
(0 1 "IN" @PREP)
(1 1 "THE" @DET)
(2 2 "LINE" @N)
(1 2 "THE LINE" @NP)
(0 2 "IN LINE" @PP)
(0 4 "IN THE NEXT LINE" @PP)

English Output: in the next line

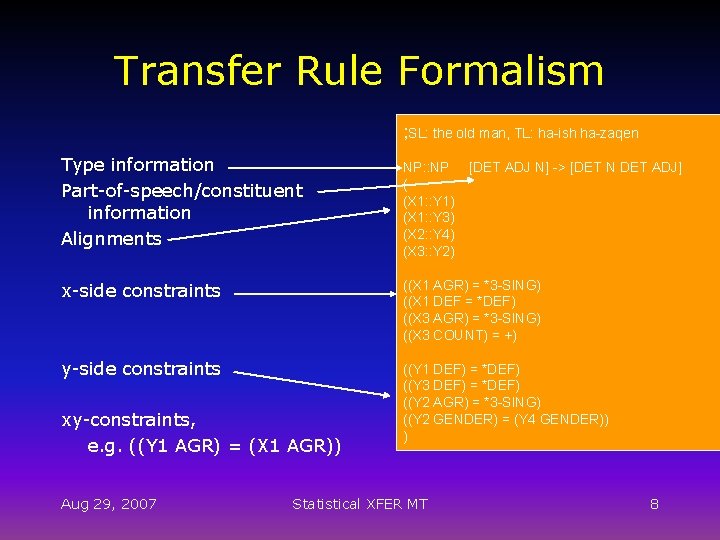

Transfer Rule Formalism
; SL: the old man, TL: ha-ish ha-zaqen
NP::NP [DET ADJ N] -> [DET N DET ADJ]
(
(X1::Y1)
(X1::Y3)
(X2::Y4)
(X3::Y2)
((X1 AGR) = *3-SING)
((X1 DEF) = *DEF)
((X3 AGR) = *3-SING)
((X3 COUNT) = +)
((Y1 DEF) = *DEF)
((Y3 DEF) = *DEF)
((Y2 AGR) = *3-SING)
((Y2 GENDER) = (Y4 GENDER))
)
Parts of the rule:
• Type information: NP::NP
• Part-of-speech/constituent information: [DET ADJ N] -> [DET N DET ADJ]
• Alignments: (X1::Y1) through (X3::Y2)
• x-side constraints: the equations over X constituents
• y-side constraints: the equations over Y constituents
• xy-constraints (not used in this rule), e.g. ((Y1 AGR) = (X1 AGR))

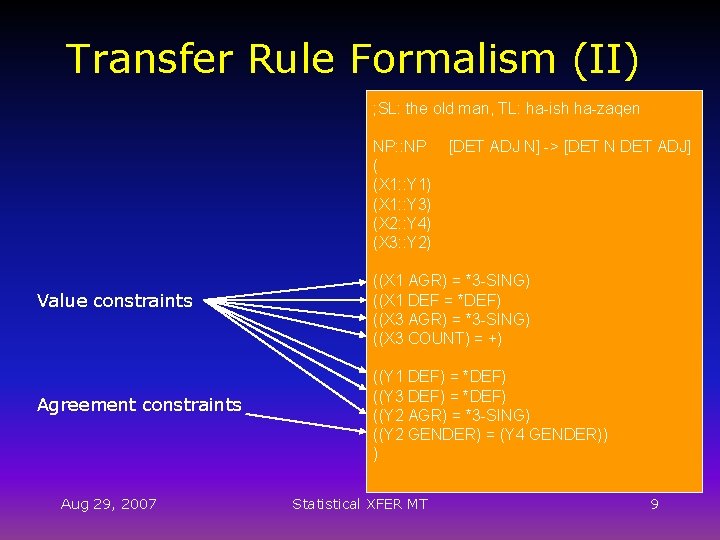

Transfer Rule Formalism (II)
; SL: the old man, TL: ha-ish ha-zaqen
NP::NP [DET ADJ N] -> [DET N DET ADJ]
(
(X1::Y1)
(X1::Y3)
(X2::Y4)
(X3::Y2)
; value constraints
((X1 AGR) = *3-SING)
((X1 DEF) = *DEF)
((X3 AGR) = *3-SING)
((X3 COUNT) = +)
((Y1 DEF) = *DEF)
((Y3 DEF) = *DEF)
((Y2 AGR) = *3-SING)
; agreement constraints
((Y2 GENDER) = (Y4 GENDER))
)
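To make the distinction between value and agreement constraints concrete, here is a minimal Python sketch, not the XFER engine's actual unification machinery. It assumes feature structures are flat dicts keyed by constituent name (X1, Y2 and so on), represents the slide's star-prefixed values such as *3-SING as plain strings, checks only a subset of the rule's constraints, and uses hypothetical feature values for "the old man" / "ha-ish ha-zaqen".

```python
# Constraints as (kind, (node, feature), right-hand side); a subset of the rule above.
CONSTRAINTS = [
    ("value", ("X1", "AGR"), "3-SING"),             # value constraint
    ("value", ("X3", "COUNT"), "+"),                # value constraint
    ("agree", ("Y2", "GENDER"), ("Y4", "GENDER")),  # agreement constraint
]


def satisfied(constraint, fs):
    """Check one constraint against feature structures keyed by node name."""
    kind, (node, feat), rhs = constraint
    left = fs.get(node, {}).get(feat)
    if kind == "value":
        return left == rhs                  # feature must hold this atomic value
    other_node, other_feat = rhs
    # agreement: both features must be present and equal (simplified)
    return left is not None and left == fs.get(other_node, {}).get(other_feat)


def rule_applies(constraints, fs):
    """A rule applies only if every one of its constraints is satisfied."""
    return all(satisfied(c, fs) for c in constraints)


# Hypothetical feature values for "the old man" -> "ha-ish ha-zaqen"
fs = {
    "X1": {"AGR": "3-SING"},
    "X3": {"AGR": "3-SING", "COUNT": "+"},
    "Y2": {"GENDER": "masc"},   # ish
    "Y4": {"GENDER": "masc"},   # zaqen
}
print(rule_applies(CONSTRAINTS, fs))
fs["Y4"]["GENDER"] = "fem"      # break noun-adjective gender agreement
print(rule_applies(CONSTRAINTS, fs))
```

A real unification engine would also let an agreement constraint succeed when one side is still unset, by copying the value across; this sketch treats a missing value as a failure for simplicity.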

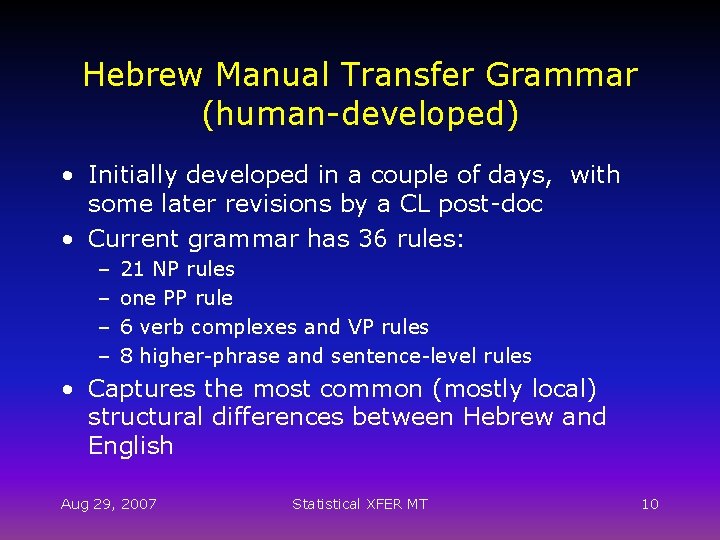

Hebrew Manual Transfer Grammar (human-developed)
• Initially developed in a couple of days, with some later revisions by a CL post-doc
• Current grammar has 36 rules:
  – 21 NP rules
  – one PP rule
  – 6 verb complexes and VP rules
  – 8 higher-phrase and sentence-level rules
• Captures the most common (mostly local) structural differences between Hebrew and English

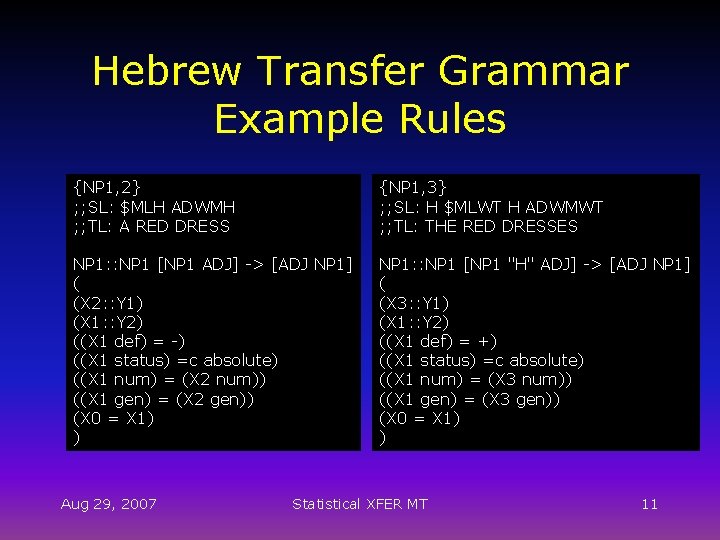

Hebrew Transfer Grammar Example Rules
{NP1,2}
;;SL: $MLH ADWMH
;;TL: A RED DRESS
NP1::NP1 [NP1 ADJ] -> [ADJ NP1]
(
(X2::Y1)
(X1::Y2)
((X1 def) = -)
((X1 status) =c absolute)
((X1 num) = (X2 num))
((X1 gen) = (X2 gen))
(X0 = X1)
)

{NP1,3}
;;SL: H $MLWT H ADWMWT
;;TL: THE RED DRESSES
NP1::NP1 [NP1 "H" ADJ] -> [ADJ NP1]
(
(X3::Y1)
(X1::Y2)
((X1 def) = +)
((X1 status) =c absolute)
((X1 num) = (X3 num))
((X1 gen) = (X3 gen))
(X0 = X1)
)
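The following Python sketch walks through the second rule on flat feature dictionaries. It is an illustration, not the engine's implementation: the function name and feature values are hypothetical, and it assumes the usual LFG-style reading in which `=` unifies (assigning a value if none is present) while `=c` only checks a value that is already there. The "THE" in the TL example is presumably contributed by a higher-level NP rule, so this rule alone yields only the reordered ADJ NP1 pair.

```python
def apply_np1_h_adj(x1, h, x3):
    """Sketch of NP1::NP1 [NP1 "H" ADJ] -> [ADJ NP1] over flat feature dicts.

    Returns (target_words, x0_features), or None if a constraint fails.
    """
    if h != "H":                          # the source side requires the literal "H"
        return None
    x1 = dict(x1)                         # copy so the caller's dict is not mutated
    # ((X1 def) = +): "=" unifies, so it assigns "+" if def is unset
    # and fails if def already holds another value
    if x1.setdefault("def", "+") != "+":
        return None
    # ((X1 status) =c absolute): "=c" only checks; it never assigns a value
    if x1.get("status") != "absolute":
        return None
    # ((X1 num) = (X3 num)) and ((X1 gen) = (X3 gen)): agreement by unification
    for feat in ("num", "gen"):
        a, b = x1.get(feat), x3.get(feat)
        if a is not None and b is not None and a != b:
            return None
        if a is None and b is not None:
            x1[feat] = b                  # unification fills in the missing value
    target = (x3["lex"], x1["lex"])       # (X3::Y1) (X1::Y2): ADJ, then NP1
    return target, x1                     # (X0 = X1): X1's features pass up to X0


# Hypothetical feature values for H $MLWT H ADWMWT ("the red dresses")
dresses = {"lex": "DRESSES", "status": "absolute", "num": "pl", "gen": "f"}
red = {"lex": "RED", "num": "pl", "gen": "f"}
print(apply_np1_h_adj(dresses, "H", red))
```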

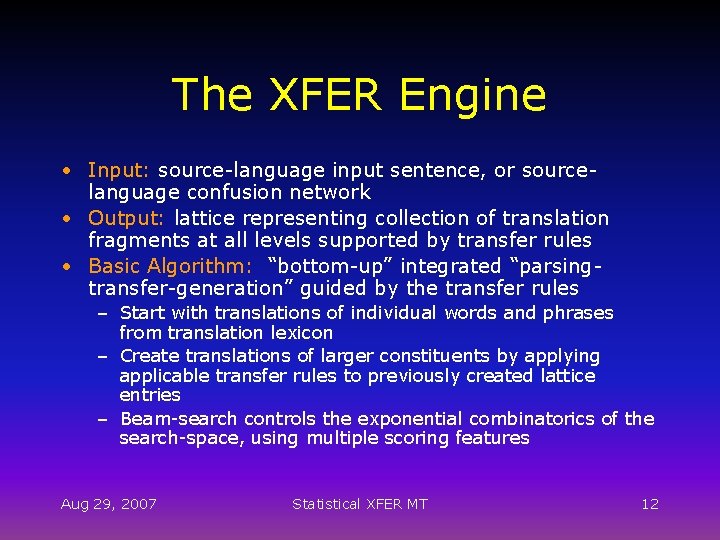

The XFER Engine
• Input: source-language input sentence, or source-language confusion network
• Output: lattice representing a collection of translation fragments at all levels supported by the transfer rules
• Basic algorithm: “bottom-up” integrated “parsing-transfer-generation” guided by the transfer rules
  – Start with translations of individual words and phrases from the translation lexicon
  – Create translations of larger constituents by applying transfer rules to previously created lattice entries
  – Beam search controls the exponential combinatorics of the search space, using multiple scoring features
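The bottom-up loop described above resembles CKY chart parsing with a per-span beam. The Python sketch below is a toy stand-in under stated assumptions: binary rules only, no unification constraints, scores simply added in the log domain, and a hypothetical two-word lexicon built from the $MLH ADWMH ("A RED DRESS") example. Every span of the chart is kept in the result, mirroring the point that the output is a lattice of fragments at all levels rather than a single tree.

```python
from collections import defaultdict
from itertools import product

# Hypothetical toy lexicon: source word -> [(category, target words, log score)]
LEXICON = {
    "$MLH": [("N", ("DRESS",), -1.0)],
    "ADWMH": [("ADJ", ("RED",), -0.5)],
}
# Hypothetical binary rules: (lhs, source-side categories,
# target order as indices into the source children, log score)
RULES = [("NP", ("N", "ADJ"), (1, 0), -0.2)]   # [N ADJ] -> [ADJ N]


def transfer(words, beam=5):
    """Bottom-up parse-transfer-generate; returns span -> [(cat, target, score)]."""
    n = len(words)
    chart = defaultdict(list)
    for i, w in enumerate(words):                 # 1. word-level translations
        chart[(i, i + 1)] = list(LEXICON.get(w, []))
    for length in range(2, n + 1):                # 2. larger constituents, bottom-up
        for i in range(n - length + 1):
            j = i + length
            candidates = []
            for mid in range(i + 1, j):
                for left, right in product(chart[(i, mid)], chart[(mid, j)]):
                    for lhs, rhs, order, rule_score in RULES:
                        if (left[0], right[0]) != rhs:
                            continue
                        children = (left[1], right[1])
                        target = tuple(tok for k in order for tok in children[k])
                        candidates.append((lhs, target, left[2] + right[2] + rule_score))
            # 3. beam: keep only the best-scoring entries for this span
            candidates.sort(key=lambda entry: entry[2], reverse=True)
            chart[(i, j)] = candidates[:beam]
    return dict(chart)


lattice = transfer(["$MLH", "ADWMH"])
for span, entries in sorted(lattice.items()):
    print(span, entries)
```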

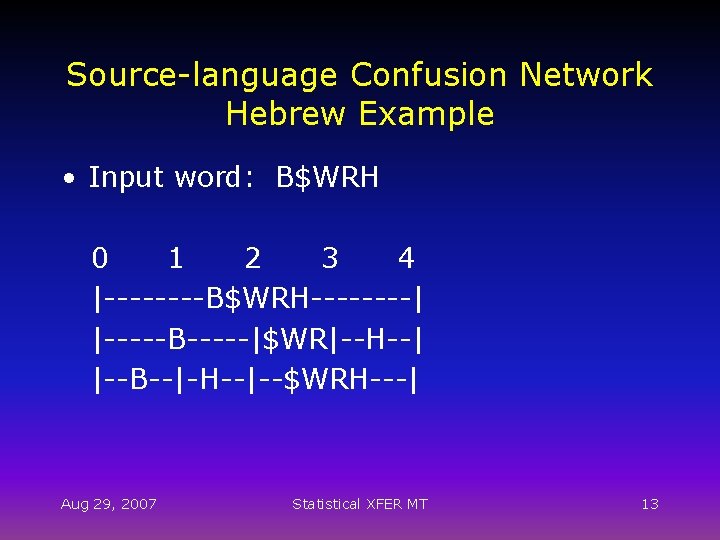

Source-language Confusion Network: Hebrew Example
• Input word: B$WRH, represented as alternative segmentations over positions 0-4:
  – B$WRH (0-4)
  – B (0-2), $WR (2-3), H (3-4)
  – B (0-1), H (1-2), $WRH (2-4)
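Reading the bar widths in the diagram as arc spans (an interpretation, not something the slide states numerically), the network can be stored as a list of arcs and its paths enumerated with a short depth-first search in Python. Because the second and third segmentations meet at node 2 under this reading, the search also returns mixed paths such as B + $WRH alongside the three rows drawn on the slide.

```python
from collections import defaultdict

# (start, end, token) arcs for the input word B$WRH. Node positions are an
# interpretation of the slide's diagram, not output from the XFER system.
ARCS = [
    (0, 4, "B$WRH"),
    (0, 2, "B"), (2, 3, "$WR"), (3, 4, "H"),
    (0, 1, "B"), (1, 2, "H"), (2, 4, "$WRH"),
]


def paths(arcs, start, end):
    """Return every token sequence leading from node `start` to node `end`."""
    outgoing = defaultdict(list)
    for s, e, tok in arcs:
        outgoing[s].append((e, tok))
    found = []

    def walk(node, tokens):
        if node == end:
            found.append(tokens)
            return
        for nxt, tok in outgoing[node]:
            walk(nxt, tokens + [tok])

    walk(start, [])
    return found


for p in paths(ARCS, 0, 4):
    print(" + ".join(p))
```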

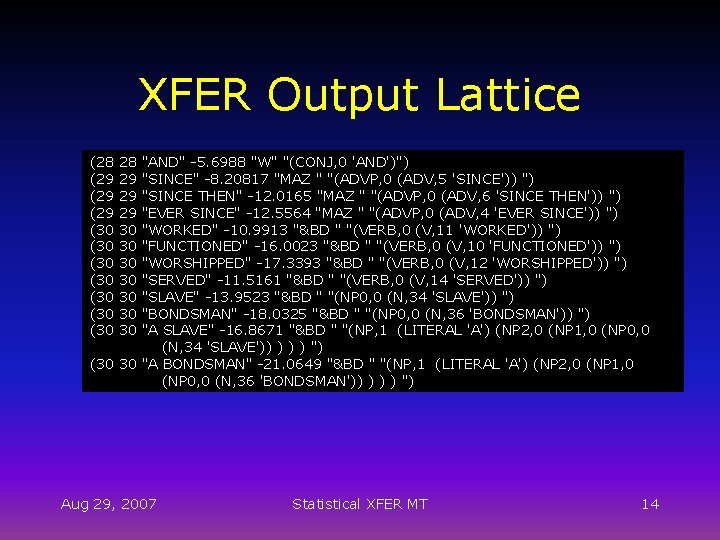

XFER Output Lattice
(28 28 "AND" -5.6988 "W" "(CONJ,0 'AND')")
(29 29 "SINCE" -8.20817 "MAZ" "(ADVP,0 (ADV,5 'SINCE'))")
(29 29 "SINCE THEN" -12.0165 "MAZ" "(ADVP,0 (ADV,6 'SINCE THEN'))")
(29 29 "EVER SINCE" -12.5564 "MAZ" "(ADVP,0 (ADV,4 'EVER SINCE'))")
(30 30 "WORKED" -10.9913 "&BD" "(VERB,0 (V,11 'WORKED'))")
(30 30 "FUNCTIONED" -16.0023 "&BD" "(VERB,0 (V,10 'FUNCTIONED'))")
(30 30 "WORSHIPPED" -17.3393 "&BD" "(VERB,0 (V,12 'WORSHIPPED'))")
(30 30 "SERVED" -11.5161 "&BD" "(VERB,0 (V,14 'SERVED'))")
(30 30 "SLAVE" -13.9523 "&BD" "(NP0,0 (N,34 'SLAVE'))")
(30 30 "BONDSMAN" -18.0325 "&BD" "(NP0,0 (N,36 'BONDSMAN'))")
(30 30 "A SLAVE" -16.8671 "&BD" "(NP,1 (LITERAL 'A') (NP2,0 (NP1,0 (NP0,0 (N,34 'SLAVE')))))")
(30 30 "A BONDSMAN" -21.0649 "&BD" "(NP,1 (LITERAL 'A') (NP2,0 (NP1,0 (NP0,0 (N,36 'BONDSMAN')))))")
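Each lattice entry appears to carry a start and end position, the target string, a log-domain score (higher is better), the source word and the derivation tree. Under that reading of the fields, which is an assumption, a Python sketch can parse entries with a regular expression and keep the k best-scoring translations per span, for instance to inspect which alternatives compete for the same source word. The function name `best_per_span` is hypothetical; the entries are a subset of the lattice above.

```python
import re
from collections import defaultdict

# (start end "target" score "source" "tree") -- field meanings are assumed
ENTRY = re.compile(r'\((\d+) (\d+) "([^"]*)" (-?\d+(?:\.\d+)?) "([^"]*)" "(.*)"\)')

LATTICE = """\
(28 28 "AND" -5.6988 "W" "(CONJ,0 'AND')")
(29 29 "SINCE" -8.20817 "MAZ" "(ADVP,0 (ADV,5 'SINCE'))")
(29 29 "EVER SINCE" -12.5564 "MAZ" "(ADVP,0 (ADV,4 'EVER SINCE'))")
(30 30 "WORKED" -10.9913 "&BD" "(VERB,0 (V,11 'WORKED'))")
(30 30 "SERVED" -11.5161 "&BD" "(VERB,0 (V,14 'SERVED'))")
(30 30 "SLAVE" -13.9523 "&BD" "(NP0,0 (N,34 'SLAVE'))")
"""


def best_per_span(text, k=2):
    """Group lattice entries by (start, end) and keep the k highest scores."""
    by_span = defaultdict(list)
    for line in text.splitlines():
        m = ENTRY.fullmatch(line.strip())
        if not m:
            continue                      # skip lines that are not lattice entries
        start, end, tgt, score, src, tree = m.groups()
        by_span[(int(start), int(end))].append((float(score), tgt, src, tree))
    # tuples sort by score first; reverse=True puts the best (least negative) first
    return {span: sorted(arcs, reverse=True)[:k] for span, arcs in by_span.items()}


for span, entries in sorted(best_per_span(LATTICE).items()):
    print(span, [tgt for _, tgt, _, _ in entries])
```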

The Lattice Decoder
• Simple stack decoder, similar in principle to simple statistical MT decoders
• Searches for the best-scoring path of non-overlapping lattice arcs
• No reordering during decoding
• Scoring based on a log-linear combination of scoring components, with weights trained using MERT
• Scoring components:
  – Statistical language model
  – Fragmentation: how many arcs are needed to cover the entire translation?
  – Length penalty
  – Rule scores
  – Lexical probabilities
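Because the decoder does no reordering, its search can be sketched as a monotone dynamic program over source positions. This Python sketch is a simplified stand-in for the stack decoder, not a description of it: it omits the language model (which would require carrying target-word history in the search state), folds rule and lexical scores into a single per-arc score, models fragmentation as a fixed penalty per arc, and uses made-up weights in place of MERT-trained ones. `Arc`, `WEIGHTS` and `decode` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Arc:
    start: int      # first source position covered
    end: int        # one past the last source position covered
    words: tuple    # target words produced by the arc
    score: float    # combined transfer score of the arc (log domain)


# Hypothetical weights; the real system trains its weights with MERT.
WEIGHTS = {"xfer": 1.0, "arc": -2.0, "length": 0.1}


def arc_score(arc):
    """Log-linear combination of this arc's feature values."""
    return (WEIGHTS["xfer"] * arc.score
            + WEIGHTS["arc"] * 1             # fragmentation: every arc costs a penalty
            + WEIGHTS["length"] * len(arc.words))


def decode(arcs, n):
    """Best monotone sequence of non-overlapping arcs covering [0, n)."""
    best = {0: (0.0, [])}                  # position -> (score, arcs so far)
    for pos in range(n):                   # arcs only move forward, so best[pos] is final here
        if pos not in best:
            continue
        total, path = best[pos]
        for arc in arcs:
            if arc.start == pos:
                cand = total + arc_score(arc)
                if arc.end not in best or cand > best[arc.end][0]:
                    best[arc.end] = (cand, path + [arc])
    return best.get(n)                     # None if no full cover exists


arcs = [
    Arc(0, 1, ("THE",), -2.0),
    Arc(1, 3, ("RED", "DRESS"), -3.0),
    Arc(0, 3, ("THE", "RED", "DRESS"), -4.0),
]
score, path = decode(arcs, 3)
print(round(score, 3), [" ".join(a.words) for a in path])
```

With these weights the single three-word arc wins over the two-arc path, since the per-arc penalty makes the more fragmented cover more expensive.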

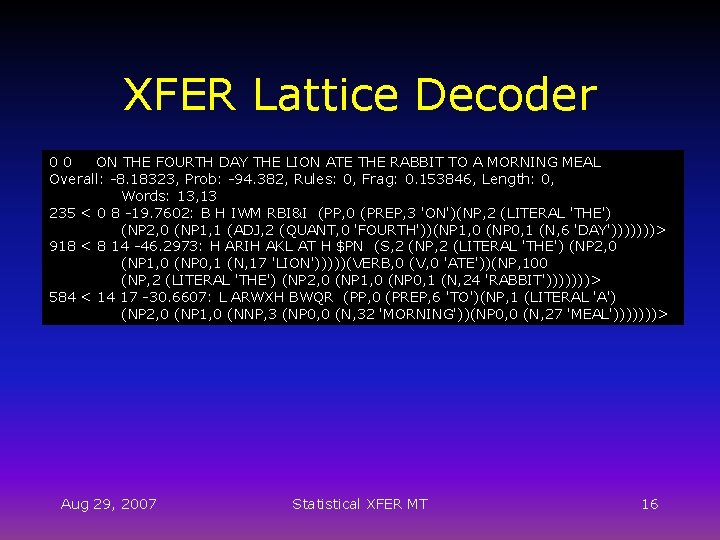

XFER Lattice Decoder
00 ON THE FOURTH DAY THE LION ATE THE RABBIT TO A MORNING MEAL
Overall: -8.18323, Prob: -94.382, Rules: 0, Frag: 0.153846, Length: 0, Words: 13,13
235 < 0 8 -19.7602: B H IWM RBI&I (PP,0 (PREP,3 'ON')(NP,2 (LITERAL 'THE') (NP2,0 (NP1,1 (ADJ,2 (QUANT,0 'FOURTH'))(NP1,0 (NP0,1 (N,6 'DAY')))))))>
918 < 8 14 -46.2973: H ARIH AKL AT H $PN (S,2 (NP,2 (LITERAL 'THE') (NP2,0 (NP1,0 (NP0,1 (N,17 'LION')))))(VERB,0 (V,0 'ATE'))(NP,100 (NP,2 (LITERAL 'THE') (NP2,0 (NP1,0 (NP0,1 (N,24 'RABBIT')))))))>
584 < 14 17 -30.6607: L ARWXH BWQR (PP,0 (PREP,6 'TO')(NP,1 (LITERAL 'A') (NP2,0 (NP1,0 (NNP,3 (NP0,0 (N,32 'MORNING'))(NP0,0 (N,27 'MEAL')))))))>
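The slide does not define how the Frag value is computed. One formula that reproduces the figure above, offered only as a hypothesis, counts the joins between the three arcs of the best path relative to its 13 target words:

$$\mathrm{Frag} = \frac{\text{arcs} - 1}{\text{words}} = \frac{3 - 1}{13} \approx 0.153846$$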

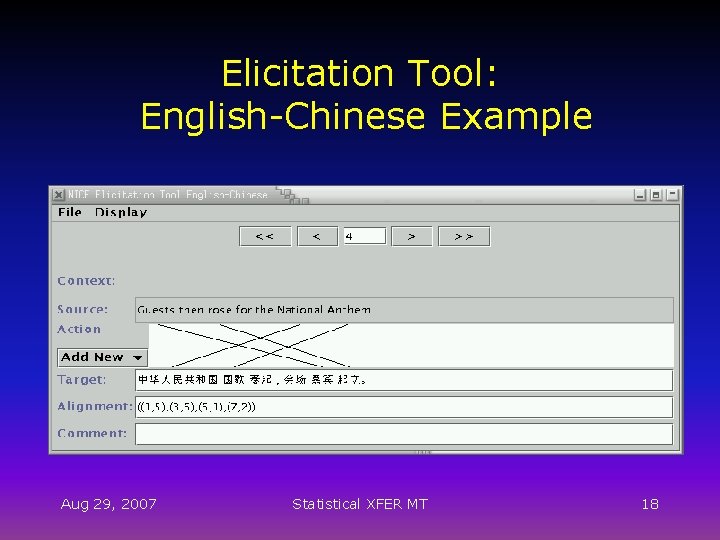

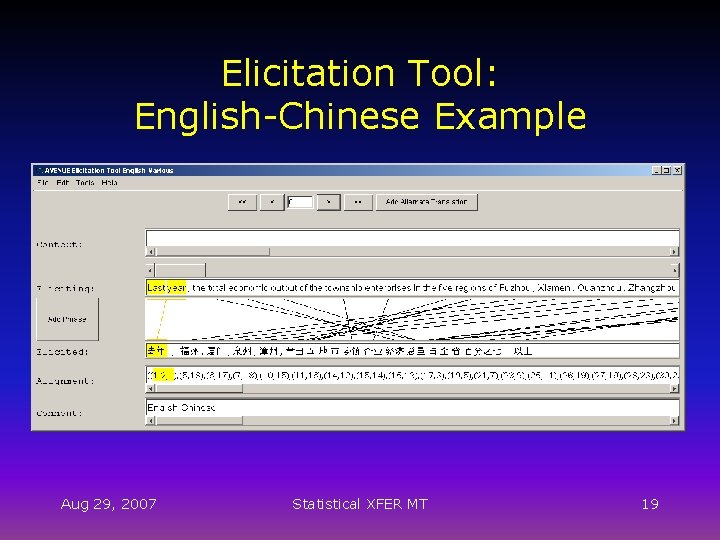

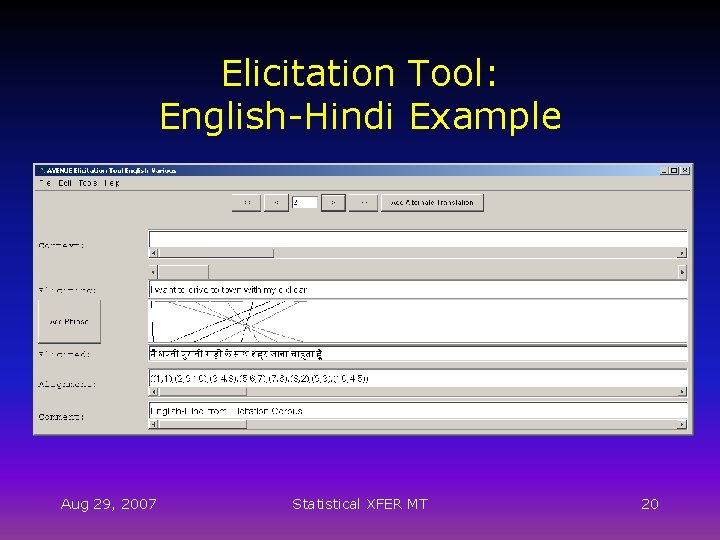

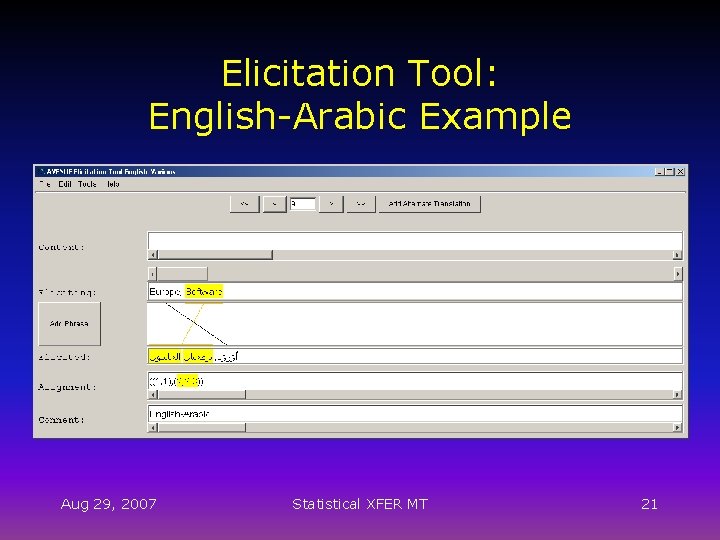

Data Elicitation for Languages with Limited Resources
• Rationale:
  – Large volumes of parallel text are not available, so create a small, maximally diverse parallel corpus that directly supports the learning task
  – Bilingual native informant(s) can translate and align a small, pre-designed elicitation corpus, using an elicitation tool
  – Elicitation corpus designed to be typologically and structurally comprehensive and compositional
  – Transfer-rule engine and new learning approach support acquisition of generalized transfer rules from the data

Elicitation Tool: English-Chinese Example Aug 29, 2007 Statistical XFER MT 18

Elicitation Tool: English-Chinese Example Aug 29, 2007 Statistical XFER MT 19

Elicitation Tool: English-Hindi Example Aug 29, 2007 Statistical XFER MT 20

Elicitation Tool: English-Arabic Example Aug 29, 2007 Statistical XFER MT 21

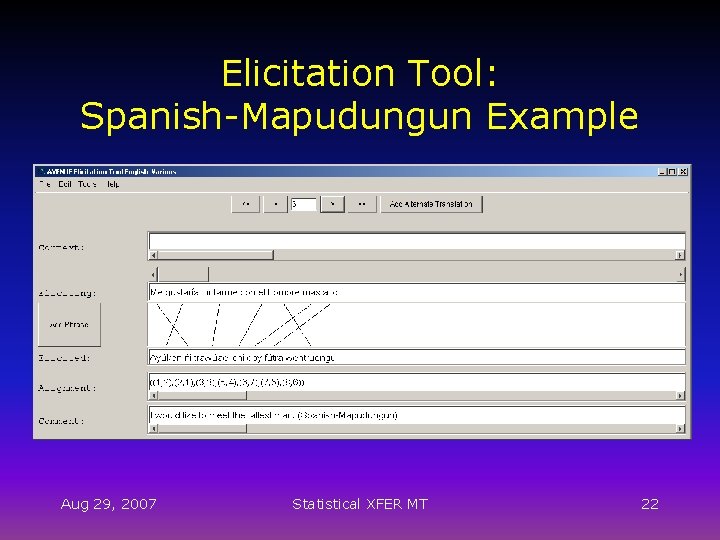

Elicitation Tool: Spanish-Mapudungun Example Aug 29, 2007 Statistical XFER MT 22

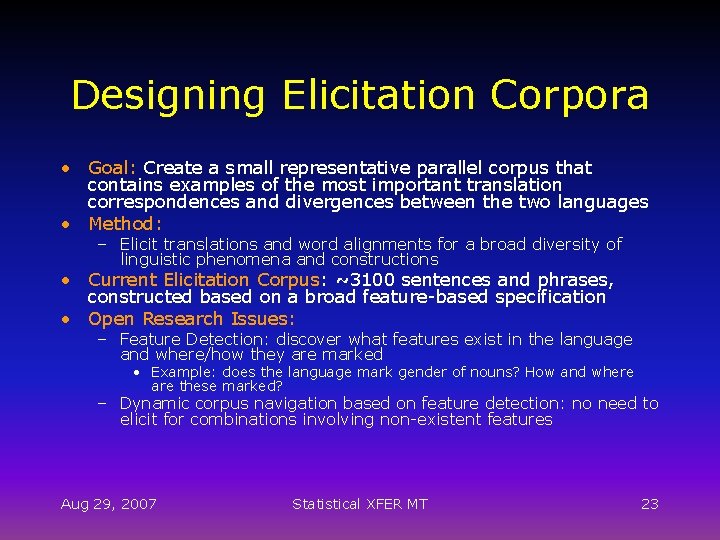

Designing Elicitation Corpora
• Goal: create a small, representative parallel corpus that contains examples of the most important translation correspondences and divergences between the two languages
• Method:
  – Elicit translations and word alignments for a broad diversity of linguistic phenomena and constructions
• Current elicitation corpus: ~3100 sentences and phrases, constructed based on a broad feature-based specification
• Open research issues:
  – Feature detection: discover what features exist in the language and where/how they are marked
    • Example: does the language mark the gender of nouns? How and where is it marked?
  – Dynamic corpus navigation based on feature detection: no need to elicit combinations involving non-existent features

Rule Learning - Overview
• Goal: acquire syntactic transfer rules
• Use available knowledge from the source side (grammatical structure)
• Three steps:
  1. Flat Seed Generation: first guesses at transfer rules; flat syntactic structure
  2. Compositionality Learning: use previously learned rules to learn hierarchical structure
  3. Constraint Learning: refine rules by learning appropriate feature constraints

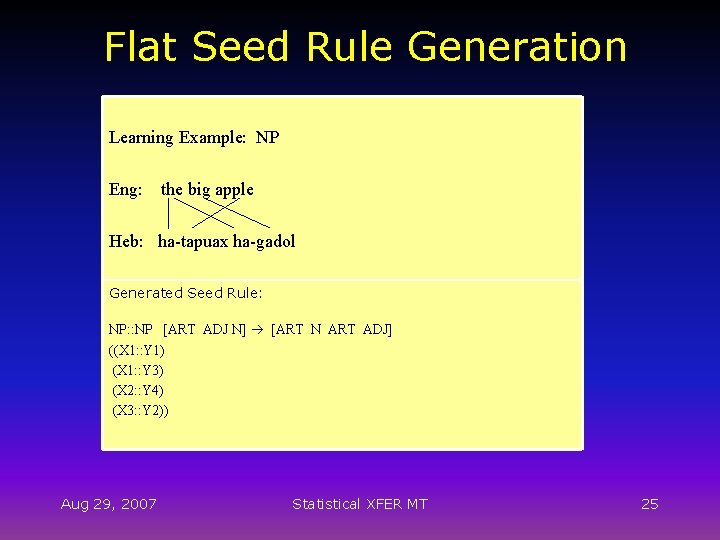

Flat Seed Rule Generation
Learning Example: NP
Eng: the big apple
Heb: ha-tapuax ha-gadol
Generated Seed Rule:
NP::NP [ART ADJ N] -> [ART N ART ADJ]
((X1::Y1) (X1::Y3) (X2::Y4) (X3::Y2))
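Flat seed generation amounts to reading both POS sequences and the word alignment off a single example. A minimal Python sketch, assuming the POS tags for both sides and the 1-based word alignment are already available (the function name `seed_rule` is hypothetical):

```python
def seed_rule(label, src_tags, tgt_tags, alignment):
    """Build a flat seed rule: constituent labels on both sides plus word alignments.

    alignment holds 1-based (source_index, target_index) pairs.
    """
    aligns = " ".join(f"(X{x}::Y{y})" for x, y in sorted(alignment))
    return (f"{label}::{label} [{' '.join(src_tags)}] -> [{' '.join(tgt_tags)}]\n"
            f"({aligns})")


rule = seed_rule(
    "NP",
    ["ART", "ADJ", "N"],                  # the big apple
    ["ART", "N", "ART", "ADJ"],           # ha-tapuax ha-gadol
    [(1, 1), (1, 3), (2, 4), (3, 2)],     # the->ha (twice), big->gadol, apple->tapuax
)
print(rule)
```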

Compositionality Learning
Initial Flat Rules:
S::S [ART ADJ N V ART N] -> [ART N ART ADJ V P ART N]
((X1::Y1) (X1::Y3) (X2::Y4) (X3::Y2) (X4::Y5) (X5::Y7) (X6::Y8))
NP::NP [ART ADJ N] -> [ART N ART ADJ]
((X1::Y1) (X1::Y3) (X2::Y4) (X3::Y2))
NP::NP [ART N] -> [ART N]
((X1::Y1) (X2::Y2))
Generated Compositional Rule:
S::S [NP V NP] -> [NP V P NP]
((X1::Y1) (X2::Y2) (X3::Y4))
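The compositionality step can be sketched as follows: scan the flat source side, replace any stretch that a previously learned NP rule covers (provided its aligned target words form that rule's target side) with a single NP, then re-derive constituent-level alignments. The greedy longest-match strategy and the function name `compose` are assumptions of this Python sketch, not a description of the actual learner; run on the slide's data, it reproduces the generated S::S rule.

```python
def compose(src, tgt, align, np_rules):
    """Collapse source stretches covered by learned NP rules into single NP nodes.

    src, tgt: POS tags; align: 1-based (source, target) word alignments;
    np_rules: (source_tags, target_tags) pairs of already-learned NP::NP rules.
    Returns the new source labels, target labels and constituent alignments.
    """
    rules = sorted(np_rules, key=lambda r: -len(r[0]))   # prefer longer matches
    src_spans, tgt_np = [], {}        # tgt_np: start of a collapsed target span -> its end
    i = 0
    while i < len(src):
        for s_tags, t_tags in rules:
            j = i + len(s_tags)
            ys = sorted(y for x, y in align if i < x <= j)
            if src[i:j] == s_tags and ys and tgt[ys[0] - 1:ys[-1]] == t_tags:
                src_spans.append(("NP", i + 1, j))
                tgt_np[ys[0]] = ys[-1]
                i = j
                break
        else:                          # no NP rule matched: keep the word's own tag
            src_spans.append((src[i], i + 1, i + 1))
            i += 1
    tgt_spans, y = [], 1               # rebuild the target side
    while y <= len(tgt):
        end = tgt_np.get(y, y)
        tgt_spans.append(("NP" if y in tgt_np else tgt[y - 1], y, end))
        y = end + 1
    new_align = sorted({               # link constituents joined by any word alignment
        (a, b)
        for a, (_, s1, s2) in enumerate(src_spans, 1)
        for b, (_, t1, t2) in enumerate(tgt_spans, 1)
        if any(s1 <= x <= s2 and t1 <= t <= t2 for x, t in align)
    })
    return [s[0] for s in src_spans], [t[0] for t in tgt_spans], new_align


new_src, new_tgt, new_align = compose(
    ["ART", "ADJ", "N", "V", "ART", "N"],
    ["ART", "N", "ART", "ADJ", "V", "P", "ART", "N"],
    [(1, 1), (1, 3), (2, 4), (3, 2), (4, 5), (5, 7), (6, 8)],
    [(["ART", "ADJ", "N"], ["ART", "N", "ART", "ADJ"]), (["ART", "N"], ["ART", "N"])],
)
print(new_src, new_tgt, new_align)
```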

Constraint Learning
Input: Rules and their Example Sets
S::S [NP V NP] -> [NP V P NP]
((X1::Y1) (X2::Y2) (X3::Y4))
{ex1, ex12, ex17, ex26}
NP::NP [ART ADJ N] -> [ART N ART ADJ]
((X1::Y1) (X1::Y3) (X2::Y4) (X3::Y2))
{ex2, ex3, ex13}
NP::NP [ART N] -> [ART N]
((X1::Y1) (X2::Y2))
{ex4, ex5, ex6, ex8, ex10, ex11}
Output: Rules with Feature Constraints:
S::S [NP V NP] -> [NP V P NP]
((X1::Y1) (X2::Y2) (X3::Y4)
(X1 NUM = X2 NUM)
(Y1 NUM = Y2 NUM)
(X1 NUM = Y1 NUM))
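A very simple stand-in for constraint learning keeps every feature equation that holds across all examples of a rule. This Python sketch groups features whose values coincide in every example and emits one equation per extra member of each group, so its output spans the same equivalence classes as the slide's constraints without necessarily listing the same pairs. The feature values for ex1, ex12, ex17 and ex26 are invented for illustration, and this is not the published learning algorithm.

```python
from collections import defaultdict


def agreement_constraints(examples, features):
    """Equations (f = g) that hold in every example of a rule.

    Features whose values coincide in all examples form one class; each class
    yields a chain of equations from its first member to every other member.
    """
    classes = defaultdict(list)
    for f in features:
        classes[tuple(ex[f] for ex in examples)].append(f)
    equations = []
    for members in classes.values():
        head = members[0]
        equations += [f"({head} = {other})" for other in members[1:]]
    return equations


# Invented NUM values for the S::S rule's examples ex1, ex12, ex17, ex26
EXAMPLES = [
    {"X1 NUM": "sg", "X2 NUM": "sg", "X3 NUM": "pl", "Y1 NUM": "sg", "Y2 NUM": "sg"},
    {"X1 NUM": "pl", "X2 NUM": "pl", "X3 NUM": "sg", "Y1 NUM": "pl", "Y2 NUM": "pl"},
    {"X1 NUM": "sg", "X2 NUM": "sg", "X3 NUM": "sg", "Y1 NUM": "sg", "Y2 NUM": "sg"},
    {"X1 NUM": "pl", "X2 NUM": "pl", "X3 NUM": "pl", "Y1 NUM": "pl", "Y2 NUM": "pl"},
]
FEATURES = ["X1 NUM", "X2 NUM", "X3 NUM", "Y1 NUM", "Y2 NUM"]
print(agreement_constraints(EXAMPLES, FEATURES))
```

Under these invented values the result (X1 = X2, X1 = Y1, X1 = Y2) implies the same classes as the slide's three constraints, while X3 NUM varies independently and yields no equation.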

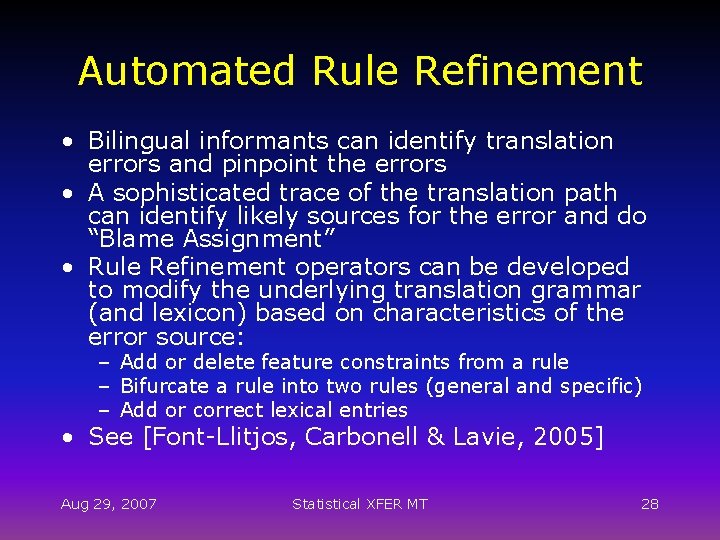

Automated Rule Refinement
• Bilingual informants can identify translation errors and pinpoint them
• A sophisticated trace of the translation path can identify likely sources of the error and perform “blame assignment”
• Rule refinement operators can be developed to modify the underlying translation grammar (and lexicon) based on characteristics of the error source:
  – Add or delete feature constraints from a rule
  – Bifurcate a rule into two rules (general and specific)
  – Add or correct lexical entries
• See [Font-Llitjos, Carbonell & Lavie, 2005]
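The three operator types can be pictured as pure functions over an immutable rule record. In this Python sketch the `Rule` type, the operator names, and the reading of bifurcation (keep the general rule, add a copy carrying the extra constraint) are assumptions for illustration; see the cited paper for the actual operators.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Rule:
    name: str
    body: str                  # e.g. "NP1::NP1 [NP1 ADJ] -> [ADJ NP1]"
    constraints: tuple = ()    # constraint equations, kept as strings here


def add_constraint(rule, eq):
    """Return a copy of `rule` with one more constraint equation."""
    return replace(rule, constraints=rule.constraints + (eq,))


def delete_constraint(rule, eq):
    """Return a copy of `rule` without the given constraint equation."""
    return replace(rule, constraints=tuple(c for c in rule.constraints if c != eq))


def bifurcate(rule, eq):
    """Split into the unchanged general rule and a specific copy that adds `eq`."""
    specific = replace(add_constraint(rule, eq), name=rule.name + "-specific")
    return rule, specific


np1 = Rule("NP1", "NP1::NP1 [NP1 ADJ] -> [ADJ NP1]", ("((X1 num) = (X2 num))",))
general, specific = bifurcate(np1, "((X1 gen) = (X2 gen))")
print(general.constraints)
print(specific.name, specific.constraints)
```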

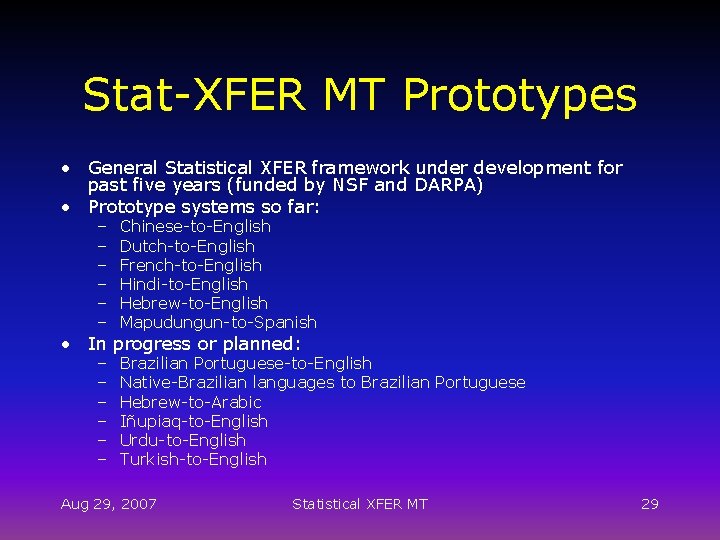

Stat-XFER MT Prototypes
• General Statistical XFER framework under development for the past five years (funded by NSF and DARPA)
• Prototype systems so far:
  – Chinese-to-English
  – Dutch-to-English
  – French-to-English
  – Hindi-to-English
  – Hebrew-to-English
  – Mapudungun-to-Spanish
• In progress or planned:
  – Brazilian Portuguese-to-English
  – Native-Brazilian languages to Brazilian Portuguese
  – Hebrew-to-Arabic
  – Iñupiaq-to-English
  – Urdu-to-English
  – Turkish-to-English

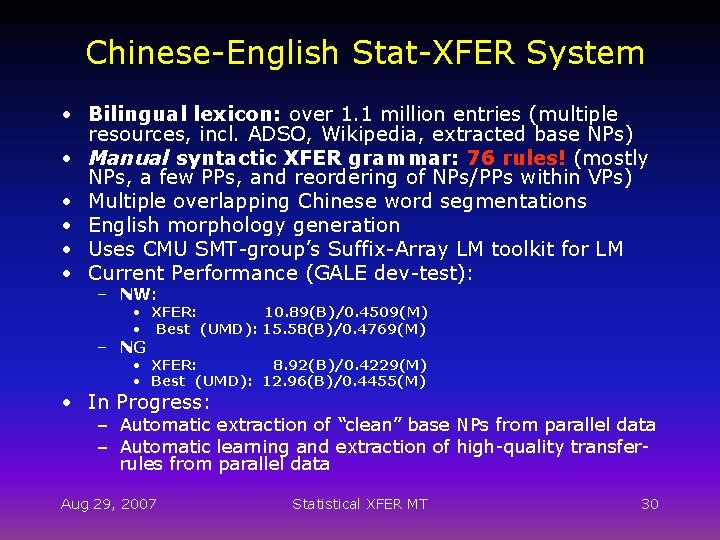

Chinese-English Stat-XFER System
• Bilingual lexicon: over 1.1 million entries (multiple resources, incl. ADSO, Wikipedia, extracted base NPs)
• Manual syntactic XFER grammar: 76 rules! (mostly NPs, a few PPs, and reordering of NPs/PPs within VPs)
• Multiple overlapping Chinese word segmentations
• English morphology generation
• Uses the CMU SMT group's Suffix-Array LM toolkit for the LM
• Current performance (GALE dev-test):
  – NW (newswire):
    • XFER: 10.89 (B) / 0.4509 (M)
    • Best (UMD): 15.58 (B) / 0.4769 (M)
  – NG (newsgroups):
    • XFER: 8.92 (B) / 0.4229 (M)
    • Best (UMD): 12.96 (B) / 0.4455 (M)
• In progress:
  – Automatic extraction of “clean” base NPs from parallel data
  – Automatic learning and extraction of high-quality transfer rules from parallel data

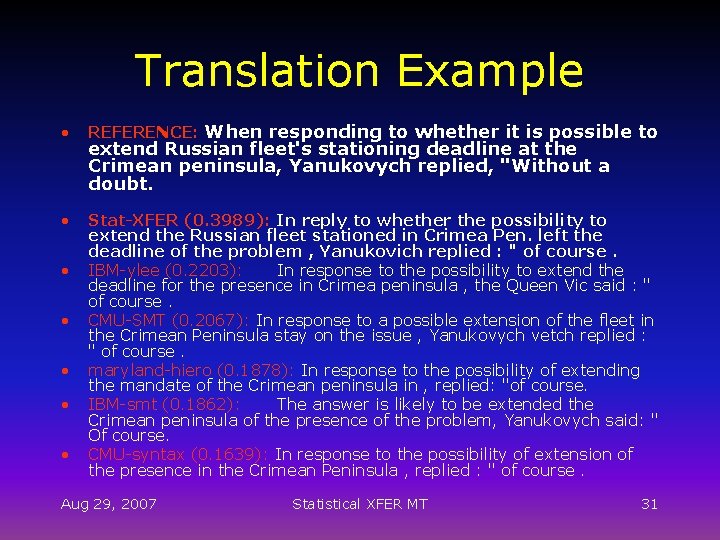

Translation Example
• REFERENCE: When responding to whether it is possible to extend Russian fleet's stationing deadline at the Crimean peninsula, Yanukovych replied, "Without a doubt.
• Stat-XFER (0.3989): In reply to whether the possibility to extend the Russian fleet stationed in Crimea Pen. left the deadline of the problem , Yanukovich replied : " of course.
• IBM-ylee (0.2203): In response to the possibility to extend the deadline for the presence in Crimea peninsula , the Queen Vic said : " of course.
• CMU-SMT (0.2067): In response to a possible extension of the fleet in the Crimean Peninsula stay on the issue , Yanukovych vetch replied : " of course.
• maryland-hiero (0.1878): In response to the possibility of extending the mandate of the Crimean peninsula in , replied: "of course.
• IBM-smt (0.1862): The answer is likely to be extended the Crimean peninsula of the presence of the problem, Yanukovych said: " Of course.
• CMU-syntax (0.1639): In response to the possibility of extension of the presence in the Crimean Peninsula , replied : " of course.

Major Research Directions
• Automatic transfer rule learning:
  – From a manually word-aligned elicitation corpus
  – From large volumes of automatically word-aligned “wild” parallel data
  – In the absence of morphology or POS-annotated lexica
  – Compositionality and generalization
  – Distinguishing “good” rules from “bad” rules
  – Effective models for rule scoring for:
    • Decoding: using scores at runtime
    • Pruning the large collections of learned rules
  – Learning unification constraints

Major Research Directions
• Extraction of base-NP translations from parallel data:
  – Base NPs are extremely important “building blocks” for transfer-based MT systems
    • Frequent, often align 1-to-1, improve coverage
    • Correctly identifying them greatly helps automatic word alignment of parallel sentences
  – Parsers (or NP-chunkers) available for both languages: extract base NPs independently on both sides and find their correspondences
  – Parsers (or NP-chunkers) available for only one language (e.g., English): extract base NPs on one side, and find reliable correspondences for them using word alignment, frequency distributions, and other features
• Promising preliminary results
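For the case where only one side has a chunker, a common way to find a base NP's counterpart through a word alignment is the consistency check used in phrase-based SMT phrase extraction: project the NP's words through the alignment and reject the span if any target word inside it is aligned to a source word outside the NP. The Python sketch below applies that check; it is a swapped-in standard technique rather than the method behind the preliminary results, and the Hebrew sentence and its alignment are hypothetical.

```python
def project_span(src_span, alignment):
    """Project an inclusive, 0-based source span through a word alignment.

    Returns the inclusive target span, or None if no target word is aligned
    or the projection is inconsistent (a target word inside the span is
    aligned to a source word outside the NP).
    """
    s, e = src_span
    linked = [t for i, t in alignment if s <= i <= e]
    if not linked:
        return None
    lo, hi = min(linked), max(linked)
    for i, t in alignment:
        if lo <= t <= hi and not s <= i <= e:
            return None
    return lo, hi


# English: the(0) big(1) apple(2) fell(3)
# Hebrew (hypothetical): ha(0) tapuax(1) ha(2) gadol(3) nafal(4)
ALIGN = [(0, 0), (0, 2), (1, 3), (2, 1), (3, 4)]
print(project_span((0, 2), ALIGN))   # base NP "the big apple"
print(project_span((2, 3), ALIGN))   # "apple fell" is not a consistent unit
```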

Major Research Directions
• Algorithms for XFER and decoding:
  – Integration and optimization of multiple features into the search-based XFER parser
  – Complexity and efficiency improvements (e.g., “cube pruning”)
  – Non-monotonicity issues (LM scores, unification constraints) and their consequences for search

Major Research Directions
• Discriminative language modeling for MT:
  – Current standard statistical LMs provide only weak discrimination between good and bad translation hypotheses
  – New idea: use “occurrence-based” statistics:
    • Extract instances of lexical, syntactic and semantic features from each translation hypothesis
    • Determine whether these instances have been “seen before” (at least once) in a large monolingual corpus
  – The conjecture: more grammatical MT hypotheses are likely to contain higher proportions of feature instances that have been seen in a corpus of grammatical sentences
  – Goals:
    • Find the set of features that provides the best discrimination between good and bad translations
    • Learn how to combine these into an LM-like function for scoring alternative MT hypotheses
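A minimal version of the occurrence-based idea, using only lexical features: collect the word n-grams of a monolingual corpus, then score a hypothesis by the fraction of its n-grams that were seen at least once. Restricting features to word n-grams and using a bare proportion (rather than a learned combination of lexical, syntactic and semantic features) are simplifying assumptions of this Python sketch, and the two-sentence corpus is a toy.

```python
def ngrams(tokens, n):
    """All contiguous n-grams of a token list, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def build_seen(corpus, max_n=3):
    """Set of every 1..max_n-gram occurring at least once in the corpus."""
    seen = set()
    for sentence in corpus:
        tokens = sentence.split()
        for n in range(1, max_n + 1):
            seen.update(ngrams(tokens, n))
    return seen


def seen_proportion(hypothesis, seen, n=3):
    """Fraction of the hypothesis's n-grams that occur in `seen`."""
    grams = ngrams(hypothesis.split(), n)
    if not grams:
        return 0.0
    return sum(g in seen for g in grams) / len(grams)


seen = build_seen(["the lion ate the rabbit", "the rabbit ate a morning meal"])
for hyp in ["the lion ate the rabbit", "lion the ate rabbit the"]:
    print(f"{seen_proportion(hyp, seen):.2f}  {hyp}")
```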

Major Research Directions
• Building elicitation corpora:
  – Feature detection
  – Corpus navigation
• Automatic rule refinement
• Translation for highly polysynthetic languages such as Mapudungun and Iñupiaq

Questions?
