Statistical NLP Lecture 12 Probabilistic Context Free Grammars

Motivation

- N-gram models and HMM tagging only allowed us to process sentences linearly.
- However, even simple sentences require a nonlinear model that reflects the hierarchical structure of sentences rather than the linear order of words.
- Probabilistic Context Free Grammars (PCFGs) are the simplest and most natural probabilistic model for tree structures, and the algorithms for them are closely related to those for HMMs.
- Note, however, that there are other ways of building probabilistic models of syntactic structure (see Chapter 12).

Formal Definition of PCFGs

A PCFG consists of:

- A set of terminals, $\{w^k\}$, $k = 1, \ldots, V$
- A set of nonterminals, $\{N^i\}$, $i = 1, \ldots, n$
- A designated start symbol $N^1$
- A set of rules, $\{N^i \to \zeta^j\}$, where $\zeta^j$ is a sequence of terminals and nonterminals
- A corresponding set of probabilities on rules such that $\forall i \;\; \sum_j P(N^i \to \zeta^j) = 1$

The probability of a sentence $w_{1m}$ (according to grammar $G$) is given by

$$P(w_{1m}) = \sum_t P(w_{1m}, t) = \sum_{\{t \,:\, \mathrm{yield}(t) = w_{1m}\}} P(t)$$

where $t$ is a parse tree of the sentence.
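
The definition above can be made concrete with a small sketch. The toy grammar below is an assumption chosen for illustration (it does not come from the slides), and the helper names `lhs_totals` and `is_proper` are hypothetical. The code stores each rule with its probability and checks the constraint that the rule probabilities of every nonterminal sum to 1.

```python
from collections import defaultdict

# Toy grammar, assumed for illustration only (it does not come from the slides).
# Keys are (left-hand side, right-hand side tuple); values are rule probabilities.
RULES = {
    ("S", ("NP", "VP")): 1.0,
    ("PP", ("P", "NP")): 1.0,
    ("VP", ("V", "NP")): 0.7,
    ("VP", ("VP", "PP")): 0.3,
    ("P", ("with",)): 1.0,
    ("V", ("saw",)): 1.0,
    ("NP", ("NP", "PP")): 0.4,
    ("NP", ("astronomers",)): 0.1,
    ("NP", ("ears",)): 0.18,
    ("NP", ("saw",)): 0.04,
    ("NP", ("stars",)): 0.18,
    ("NP", ("telescopes",)): 0.1,
}


def lhs_totals(rules):
    """Sum the rule probabilities for each left-hand-side nonterminal."""
    totals = defaultdict(float)
    for (lhs, _rhs), prob in rules.items():
        totals[lhs] += prob
    return dict(totals)


def is_proper(rules, tol=1e-9):
    """True if, for every nonterminal N^i, sum_j P(N^i -> zeta^j) = 1."""
    return all(abs(total - 1.0) <= tol for total in lhs_totals(rules).values())
```

Calling `is_proper(RULES)` on this grammar succeeds because, for example, the six `NP` rules sum to 1. Changing any single probability without rebalancing the others makes the check fail.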

Assumptions of the Model

- Place Invariance: The probability of a subtree does not depend on where in the string the words it dominates are.
- Context Free: The probability of a subtree does not depend on words not dominated by the subtree.
- Ancestor Free: The probability of a subtree does not depend on nodes in the derivation outside the subtree.
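
Taken together, these assumptions let the probability of a parse tree factor into a product of rule probabilities. As a sketch, consider a hypothetical two-word tree $t$ (not from the slides) in which $N^1$ rewrites as $N^2 N^3$, and these in turn rewrite as the words $w_1$ and $w_2$. The subscripts on nonterminals mark the span of words each node dominates.

```latex
\begin{align*}
P(t) &= P(N^1_{12} \to N^2_{11} N^3_{22},\; N^2_{11} \to w_1,\; N^3_{22} \to w_2) \\
     &= P(N^1_{12} \to N^2_{11} N^3_{22}) \, P(N^2_{11} \to w_1) \, P(N^3_{22} \to w_2)
        && \text{(context free, ancestor free)} \\
     &= P(N^1 \to N^2 N^3) \, P(N^2 \to w_1) \, P(N^3 \to w_2)
        && \text{(place invariance)}
\end{align*}
```

The second line drops the conditioning on material outside each subtree, and the third line drops the position indices, leaving only the rule probabilities of the grammar.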

Some Features of PCFGs

- A PCFG gives some idea of the plausibility of different parses. However, the probabilities are based on structural factors and not lexical ones.
- PCFGs are good for grammar induction.
- PCFGs are robust.
- PCFGs give a probabilistic language model for English.
- In principle, the predictive power of a PCFG tends to be greater than that of an HMM, though in practice it is worse.
- PCFGs are not good models on their own, but they can be combined with a trigram model.
- PCFGs have certain biases which may not be appropriate.

Questions for PCFGs

Just as for HMMs, there are three basic questions we wish to answer:

- What is the probability of a sentence $w_{1m}$ according to a grammar $G$: $P(w_{1m} \mid G)$?
- What is the most likely parse for a sentence: $\arg\max_t P(t \mid w_{1m}, G)$?
- How can we choose rule probabilities for the grammar $G$ that maximize the probability of a sentence: $\arg\max_G P(w_{1m} \mid G)$?

Restriction

- In this lecture, we only consider the case of Chomsky Normal Form grammars, which only have unary and binary rules of the form:
  - $N^i \to N^j N^k$
  - $N^i \to w^j$
- The parameters of a PCFG in Chomsky Normal Form are:
  - $P(N^j \to N^r N^s \mid G)$, an $n^3$ matrix of parameters
  - $P(N^j \to w^k \mid G)$, $nV$ parameters

  (where $n$ is the number of nonterminals and $V$ is the number of terminals)
- For each nonterminal $N^j$: $\sum_{r,s} P(N^j \to N^r N^s) + \sum_k P(N^j \to w^k) = 1$
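
To make the parameter count concrete, here is a small worked instance, assuming a hypothetical grammar with $n = 5$ nonterminals and $V = 6$ terminals (numbers chosen for illustration, not taken from the lecture):

```latex
\begin{align*}
\text{binary-rule parameters:} \quad & n^3 = 5^3 = 125 \\
\text{lexical-rule parameters:} \quad & nV = 5 \cdot 6 = 30 \\
\text{total:} \quad & n^3 + nV = 155 \\
\text{free parameters after the $n$ sum-to-one constraints:} \quad & 155 - 5 = 150
\end{align*}
```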

From HMMs to Probabilistic Regular Grammars (PRG)

- A PRG has start state $N^1$ and rules of the form:
  - $N^i \to w^j N^k$
  - $N^i \to w^j$
- This is similar to what we had for an HMM, except that in an HMM we have $\forall n \;\; \sum_{w_{1n}} P(w_{1n}) = 1$, whereas in a PCFG we have $\sum_{w \in L} P(w) = 1$, where $L$ is the language generated by the grammar.
- PRGs are related to HMMs in that a PRG is an HMM to which we add a start state and a finish (or sink) state.

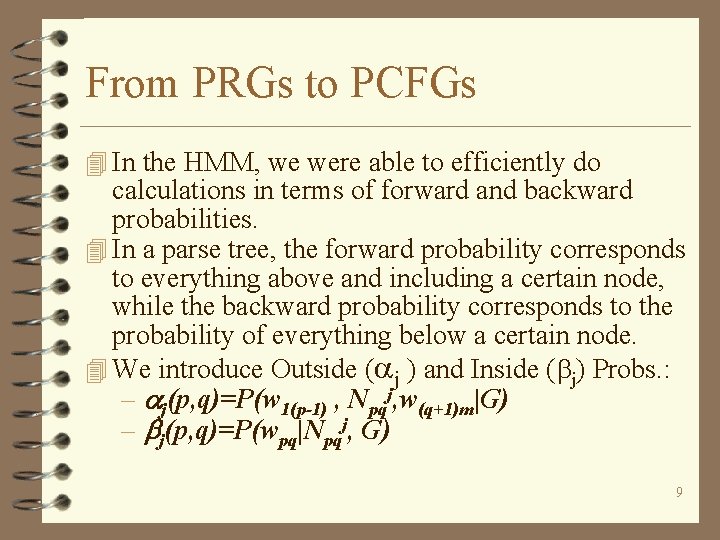

From PRGs to PCFGs

- In the HMM, we were able to do calculations efficiently in terms of forward and backward probabilities.
- In a parse tree, the forward probability corresponds to everything above and including a certain node, while the backward probability corresponds to the probability of everything below a certain node.
- We introduce outside ($\alpha_j$) and inside ($\beta_j$) probabilities:
  - $\alpha_j(p, q) = P(w_{1(p-1)}, N^j_{pq}, w_{(q+1)m} \mid G)$
  - $\beta_j(p, q) = P(w_{pq} \mid N^j_{pq}, G)$

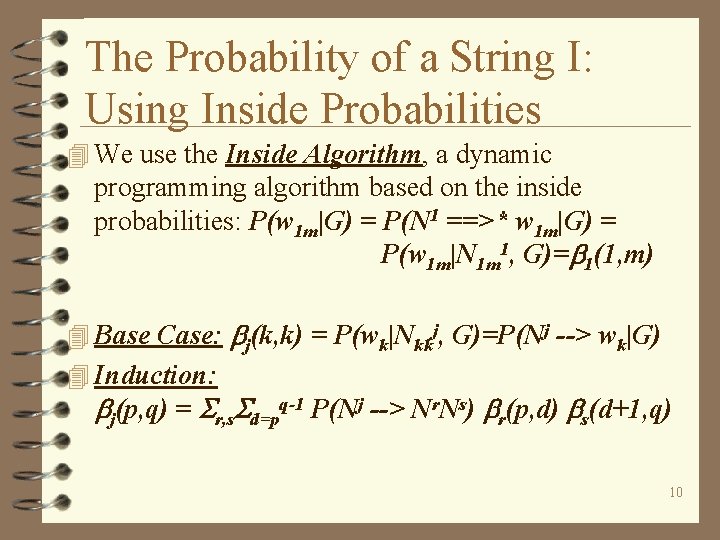

The Probability of a String I: Using Inside Probabilities

- We use the Inside Algorithm, a dynamic programming algorithm based on the inside probabilities: $P(w_{1m} \mid G) = P(N^1 \Rightarrow^* w_{1m} \mid G) = P(w_{1m} \mid N^1_{1m}, G) = \beta_1(1, m)$
- Base Case: $\beta_j(k, k) = P(w_k \mid N^j_{kk}, G) = P(N^j \to w_k \mid G)$
- Induction: $\beta_j(p, q) = \sum_{r,s} \sum_{d=p}^{q-1} P(N^j \to N^r N^s)\, \beta_r(p, d)\, \beta_s(d+1, q)$

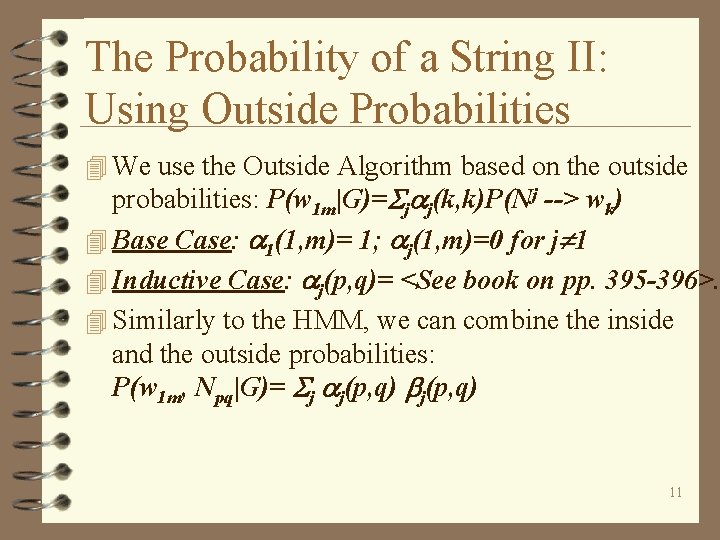
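
The base case and induction of the Inside Algorithm can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference implementation: the toy Chomsky Normal Form grammar and the names `BINARY`, `LEXICAL`, `inside`, and `sentence_prob` are hypothetical and do not come from the slides. Word positions are 1-indexed to match the notation used for the inside probabilities.

```python
from collections import defaultdict

# Toy CNF grammar, assumed for illustration (not from the slides).
BINARY = {  # (N^j, N^r, N^s) -> P(N^j -> N^r N^s)
    ("S", "NP", "VP"): 1.0,
    ("PP", "P", "NP"): 1.0,
    ("VP", "V", "NP"): 0.7,
    ("VP", "VP", "PP"): 0.3,
    ("NP", "NP", "PP"): 0.4,
}
LEXICAL = {  # (N^j, w) -> P(N^j -> w)
    ("P", "with"): 1.0,
    ("V", "saw"): 1.0,
    ("NP", "astronomers"): 0.1,
    ("NP", "ears"): 0.18,
    ("NP", "saw"): 0.04,
    ("NP", "stars"): 0.18,
    ("NP", "telescopes"): 0.1,
}


def inside(words, binary, lexical):
    """Return beta with beta[(j, p, q)] = P(w_p..w_q | N^j spans p..q)."""
    m = len(words)
    beta = defaultdict(float)
    # Base case: beta_j(k, k) = P(N^j -> w_k).
    for k, word in enumerate(words, start=1):
        for (j, w), prob in lexical.items():
            if w == word:
                beta[(j, k, k)] += prob
    # Induction: build longer spans from shorter ones.
    for length in range(2, m + 1):
        for p in range(1, m - length + 2):
            q = p + length - 1
            for (j, r, s), prob in binary.items():
                for d in range(p, q):  # split point, p <= d <= q - 1
                    beta[(j, p, q)] += prob * beta[(r, p, d)] * beta[(s, d + 1, q)]
    return beta


def sentence_prob(words, binary, lexical, start="S"):
    """P(w_1m | G) = beta_start(1, m)."""
    return inside(words, binary, lexical)[(start, 1, len(words))]
```

For the sentence "astronomers saw stars with ears", this toy grammar licenses two parses (the prepositional phrase attaches either to the noun phrase or to the verb phrase), and `sentence_prob` returns the sum of their probabilities. The three nested loops over span, rule, and split point are where the cubic dependence on sentence length comes from.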

The Probability of a String II: Using Outside Probabilities

- We use the Outside Algorithm, based on the outside probabilities: $P(w_{1m} \mid G) = \sum_j \alpha_j(k, k)\, P(N^j \to w_k)$, for any $k$ with $1 \le k \le m$
- Base Case: $\alpha_1(1, m) = 1$; $\alpha_j(1, m) = 0$ for $j \ne 1$
- Inductive Case: $\alpha_j(p, q)$ = (see the book, pp. 395-396)
- Similarly to the HMM, we can combine the inside and the outside probabilities: $P(w_{1m}, N_{pq} \mid G) = \sum_j \alpha_j(p, q)\, \beta_j(p, q)$

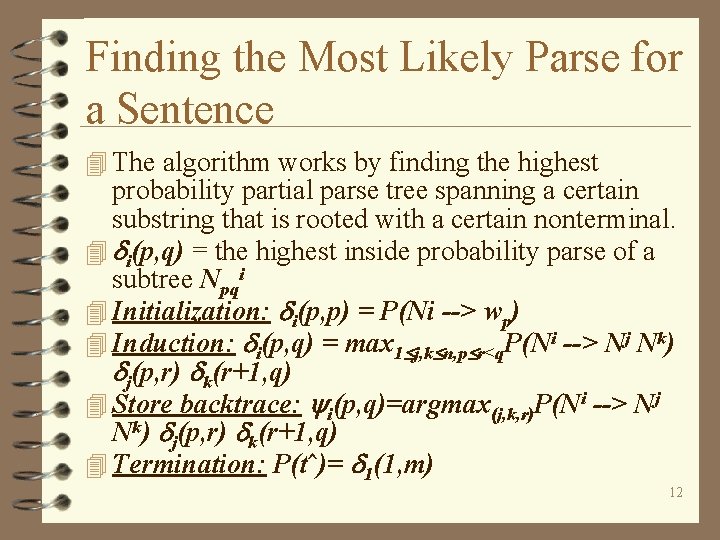
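
The slides defer the inductive case to the book, so the sketch below should be read as one common way to organize the computation rather than as the lecture's own formulation. It works top-down: each parent span passes its outside probability to its left and right children, weighted by the rule probability and the sibling's inside probability. The toy grammar and all function names are assumptions for illustration.

```python
from collections import defaultdict

# Toy CNF grammar, assumed for illustration (not from the slides).
BINARY = {("S", "NP", "VP"): 1.0, ("PP", "P", "NP"): 1.0, ("VP", "V", "NP"): 0.7,
          ("VP", "VP", "PP"): 0.3, ("NP", "NP", "PP"): 0.4}
LEXICAL = {("P", "with"): 1.0, ("V", "saw"): 1.0, ("NP", "astronomers"): 0.1,
           ("NP", "ears"): 0.18, ("NP", "saw"): 0.04, ("NP", "stars"): 0.18,
           ("NP", "telescopes"): 0.1}


def inside(words):
    """beta[(j, p, q)] = P(w_p..w_q | N^j spans p..q), positions 1-indexed."""
    m, beta = len(words), defaultdict(float)
    for k, word in enumerate(words, start=1):
        for (j, w), prob in LEXICAL.items():
            if w == word:
                beta[(j, k, k)] += prob
    for length in range(2, m + 1):
        for p in range(1, m - length + 2):
            q = p + length - 1
            for (j, r, s), prob in BINARY.items():
                for d in range(p, q):
                    beta[(j, p, q)] += prob * beta[(r, p, d)] * beta[(s, d + 1, q)]
    return beta


def outside(words, beta, start="S"):
    """alpha[(j, p, q)] = P(w_1..w_{p-1}, N^j spans p..q, w_{q+1}..w_m)."""
    m, alpha = len(words), defaultdict(float)
    alpha[(start, 1, m)] = 1.0  # base case; every other alpha_j(1, m) stays 0
    # Go from the widest spans down, so a parent is complete before it is used.
    for length in range(m, 1, -1):
        for p in range(1, m - length + 2):
            q = p + length - 1
            for (f, left, right), prob in BINARY.items():
                parent = alpha[(f, p, q)]
                if parent == 0.0:
                    continue
                for d in range(p, q):
                    # Left child spans p..d; the sibling contributes its inside prob.
                    alpha[(left, p, d)] += parent * prob * beta[(right, d + 1, q)]
                    # Right child spans d+1..q, symmetrically.
                    alpha[(right, d + 1, q)] += parent * prob * beta[(left, p, d)]
    return alpha


def prob_via_outside(words, alpha, k):
    """P(w_1m | G) = sum_j alpha_j(k, k) * P(N^j -> w_k), for any position k."""
    return sum(alpha[(j, k, k)] * prob
               for (j, w), prob in LEXICAL.items() if w == words[k - 1])
```

A useful sanity check is that `prob_via_outside` gives the same value for every position `k`, and that this value equals the inside probability of the start symbol over the whole sentence.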

Finding the Most Likely Parse for a Sentence

- The algorithm works by finding the highest probability partial parse tree spanning a certain substring that is rooted with a certain nonterminal.
- $\delta_i(p, q)$ = the highest inside probability parse of a subtree $N^i_{pq}$
- Initialization: $\delta_i(p, p) = P(N^i \to w_p)$
- Induction: $\delta_i(p, q) = \max_{1 \le j, k \le n,\; p \le r < q} P(N^i \to N^j N^k)\, \delta_j(p, r)\, \delta_k(r+1, q)$
- Store backtrace: $\psi_i(p, q) = \arg\max_{(j, k, r)} P(N^i \to N^j N^k)\, \delta_j(p, r)\, \delta_k(r+1, q)$
- Termination: $P(\hat{t}) = \delta_1(1, m)$
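
The initialization, induction, backtrace, and termination steps above can be sketched as follows. This is an illustrative sketch under assumptions: the toy grammar and the function name `viterbi_parse` are hypothetical, and trees are returned as nested tuples purely for convenience.

```python
# Toy CNF grammar, assumed for illustration (not from the slides).
BINARY = {("S", "NP", "VP"): 1.0, ("PP", "P", "NP"): 1.0, ("VP", "V", "NP"): 0.7,
          ("VP", "VP", "PP"): 0.3, ("NP", "NP", "PP"): 0.4}
LEXICAL = {("P", "with"): 1.0, ("V", "saw"): 1.0, ("NP", "astronomers"): 0.1,
           ("NP", "ears"): 0.18, ("NP", "saw"): 0.04, ("NP", "stars"): 0.18,
           ("NP", "telescopes"): 0.1}


def viterbi_parse(words, start="S"):
    """Return (probability, tree) of the most likely parse, or (0.0, None)."""
    m = len(words)
    delta = {}  # delta[(i, p, q)]: best probability of a subtree N^i over p..q
    psi = {}    # psi[(i, p, q)]: backpointer (j, k, r) that achieves delta
    # Initialization: delta_i(p, p) = P(N^i -> w_p).
    for p, word in enumerate(words, start=1):
        for (i, w), prob in LEXICAL.items():
            if w == word:
                delta[(i, p, p)] = prob
    # Induction: maximize over rules N^i -> N^j N^k and split points r.
    for length in range(2, m + 1):
        for p in range(1, m - length + 2):
            q = p + length - 1
            for (i, j, k), prob in BINARY.items():
                for r in range(p, q):
                    left = delta.get((j, p, r), 0.0)
                    right = delta.get((k, r + 1, q), 0.0)
                    score = prob * left * right
                    if score > delta.get((i, p, q), 0.0):
                        delta[(i, p, q)] = score
                        psi[(i, p, q)] = (j, k, r)

    def build(i, p, q):
        """Follow backpointers to rebuild the best subtree as nested tuples."""
        if p == q:
            return (i, words[p - 1])
        j, k, r = psi[(i, p, q)]
        return (i, build(j, p, r), build(k, r + 1, q))

    # Termination: the best parse probability is delta_start(1, m).
    best = delta.get((start, 1, m), 0.0)
    return (best, build(start, 1, m)) if best > 0.0 else (0.0, None)
```

The only change from the inside computation is that the sum over rules and split points becomes a maximum, with the winning choice recorded so the tree can be read back out. On "astronomers saw stars with ears", this toy grammar prefers attaching the prepositional phrase to the noun phrase.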

Training a PCFG

- Restrictions: We assume that the set of rules is given in advance, and we try to find the optimal probabilities to assign to the different grammar rules.
- As for HMMs, we use an EM training algorithm, called the Inside-Outside Algorithm, which allows us to train the parameters of a PCFG on unannotated sentences of the language.
- Basic Assumption: a good grammar is one that makes the sentences in the training corpus likely to occur, so we seek the grammar that maximizes the likelihood of the training data.
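
The slides do not spell out the update step. As a sketch, the standard re-estimation for a single training sentence $w_{1m}$ and a grammar in Chomsky Normal Form is shown below, with $\alpha$ the outside and $\beta$ the inside probabilities computed under the current parameters. Each new rule probability is the expected number of times the rule is used divided by the expected number of times its left-hand side is used. The common factor $1 / P(w_{1m} \mid G)$ cancels between numerator and denominator, and $[\cdot]$ is 1 when the condition holds and 0 otherwise.

```latex
\begin{align*}
\hat{P}(N^j \to N^r N^s) &=
  \frac{\sum_{p=1}^{m-1} \sum_{q=p+1}^{m} \sum_{d=p}^{q-1}
        \alpha_j(p,q)\, P(N^j \to N^r N^s)\, \beta_r(p,d)\, \beta_s(d+1,q)}
       {\sum_{p=1}^{m} \sum_{q=p}^{m} \alpha_j(p,q)\, \beta_j(p,q)} \\[1ex]
\hat{P}(N^j \to w^k) &=
  \frac{\sum_{h=1}^{m} \alpha_j(h,h)\, \beta_j(h,h)\, [w_h = w^k]}
       {\sum_{p=1}^{m} \sum_{q=p}^{m} \alpha_j(p,q)\, \beta_j(p,q)}
\end{align*}
```

With several training sentences, the numerators and denominators are each summed over the sentences (after dividing by that sentence's probability) before taking the ratio.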

Problems with the Inside-Outside Algorithm

- Extremely slow: for each sentence, each iteration of training is $O(m^3 n^3)$, where $m$ is the sentence length and $n$ is the number of nonterminals.
- Local maxima are much more of a problem than in HMMs.
- Satisfactory learning requires many more nonterminals than are theoretically needed to describe the language.
- There is no guarantee that the learned nonterminals will be linguistically motivated.
