Statistical Natural Language Processing and Applications POSTECH AIGS

- Slides: 40

Statistical Natural Language Processing and Applications POSTECH AIGS Gary Geunbae Lee

Textbooks you can refer to

- Jacob Eisenstein. Natural Language Processing (2018, draft).
- Jurafsky, D. and J. H. Martin. Speech and Language Processing. Prentice-Hall, 2009. 2nd edition (3rd edition, 2019 draft: http://web.stanford.edu/~jurafsky/slp3/).
- Yoav Goldberg. A Primer on Neural Network Models for Natural Language Processing (pdf).
- Manning, C. D. and Schütze, H. Foundations of Statistical Natural Language Processing. The MIT Press, 1999. ISBN 0-262-13360-1.

Goals of HLT

Computers would be a lot more useful if they could handle our email, do our library research, talk to us … But they are fazed by natural human language. How can we give computers the ability to handle human language? (Or help them learn it as kids do?)

A few applications of HLT

- Spelling correction, grammar checking … (language learning and evaluation, e.g. TOEFL essay scoring)
- Better search engines
- Information extraction, gisting
- Psychotherapy; Harlequin romances; etc.
- New interfaces:
  - Speech recognition (and text-to-speech)
  - Dialogue systems (USS Enterprise onboard computer)
  - Machine translation; speech translation (the Tower of Babel??)
- Trans-lingual summarization, detection, extraction …

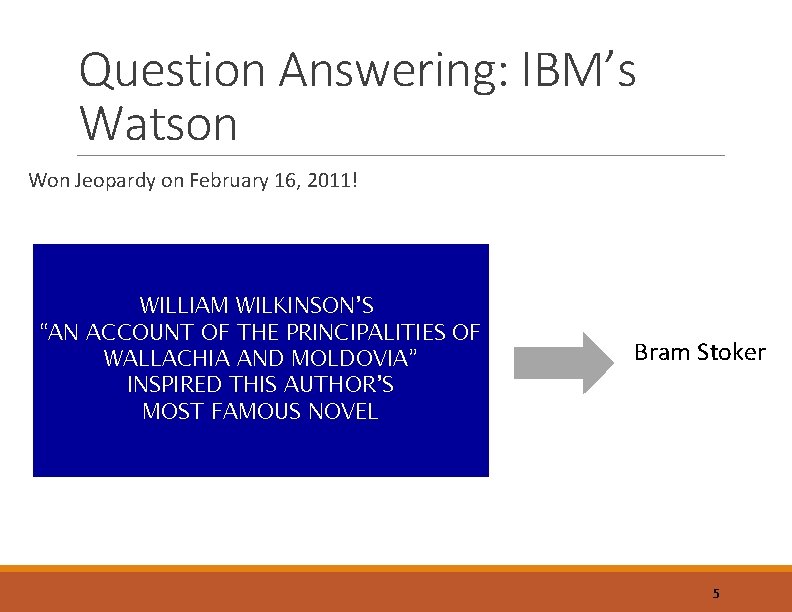

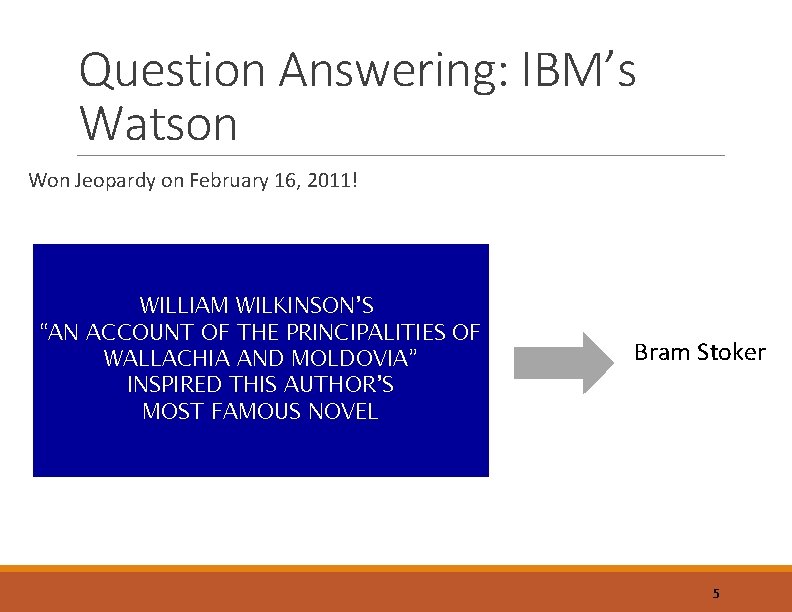

Question Answering: IBM’s Watson

Won Jeopardy on February 16, 2011!

- Clue: WILLIAM WILKINSON’S “AN ACCOUNT OF THE PRINCIPALITIES OF WALLACHIA AND MOLDOVIA” INSPIRED THIS AUTHOR’S MOST FAMOUS NOVEL
- Answer: Bram Stoker

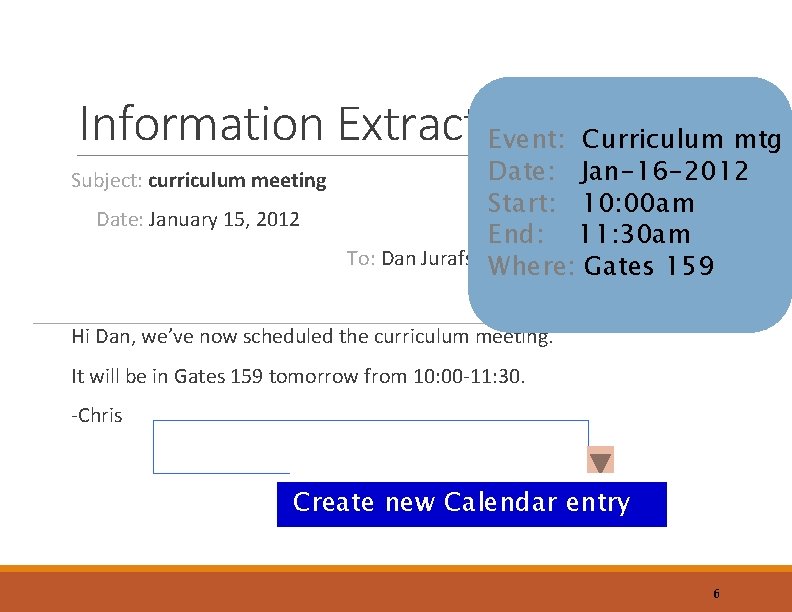

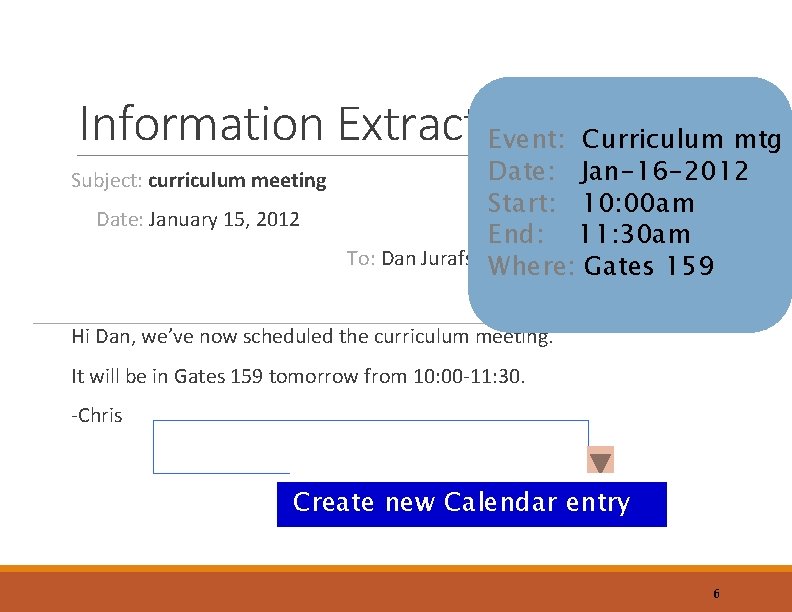

Information Extraction

Email:

- Subject: curriculum meeting
- Date: January 15, 2012
- To: Dan Jurafsky
- Body: Hi Dan, we’ve now scheduled the curriculum meeting. It will be in Gates 159 tomorrow from 10:00-11:30. -Chris

Extracted fields (Create new Calendar entry):

- Event: Curriculum mtg
- Date: Jan-16-2012
- Start: 10:00am
- End: 11:30am
- Where: Gates 159

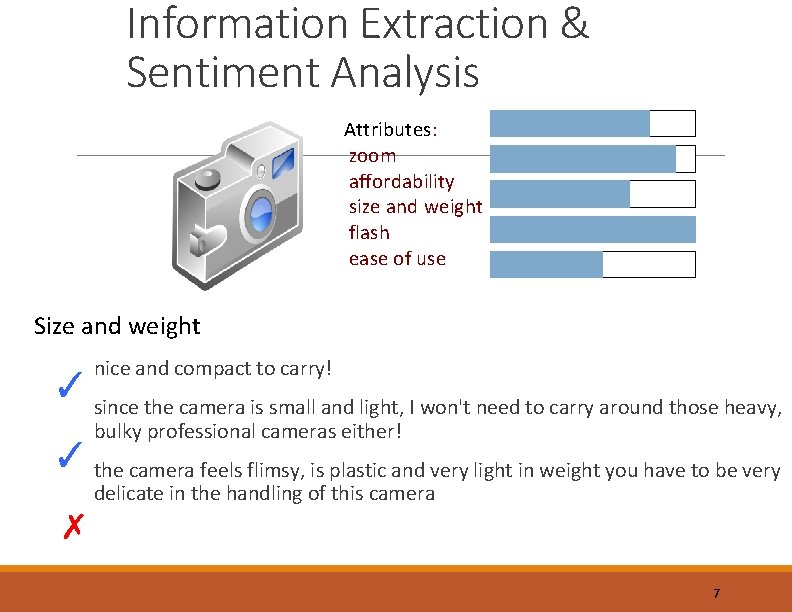

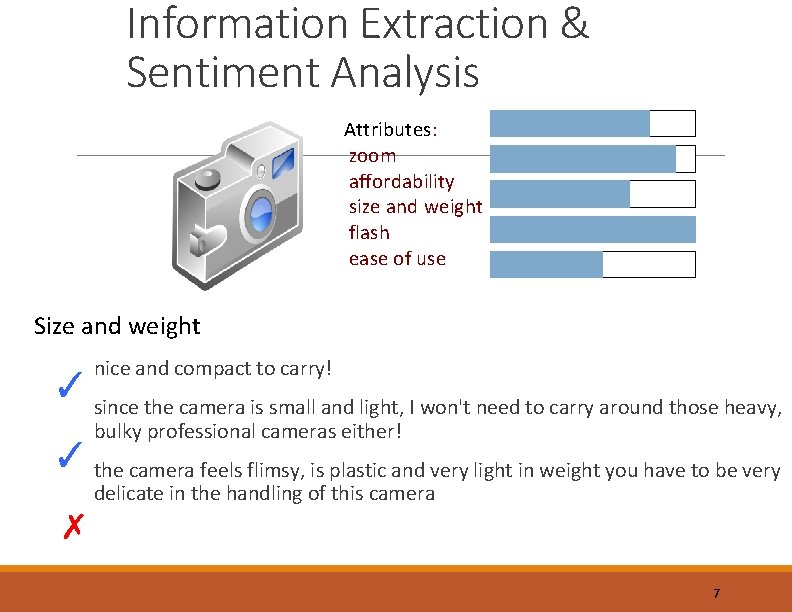

Information Extraction & Sentiment Analysis

Attributes: zoom, affordability, size and weight, flash, ease of use

Size and weight:

- ✓ nice and compact to carry!
- ✓ since the camera is small and light, I won't need to carry around those heavy, bulky professional cameras either!
- ✗ the camera feels flimsy, is plastic and very light in weight you have to be very delicate in the handling of this camera

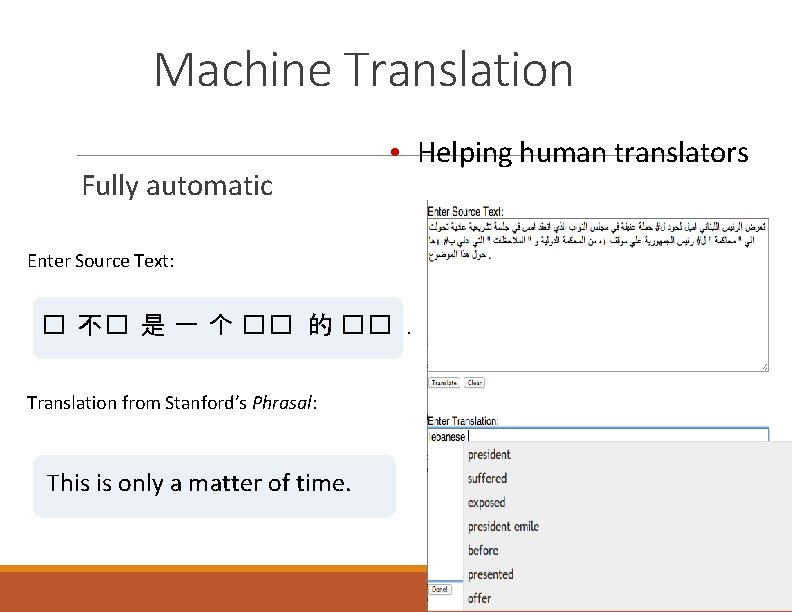

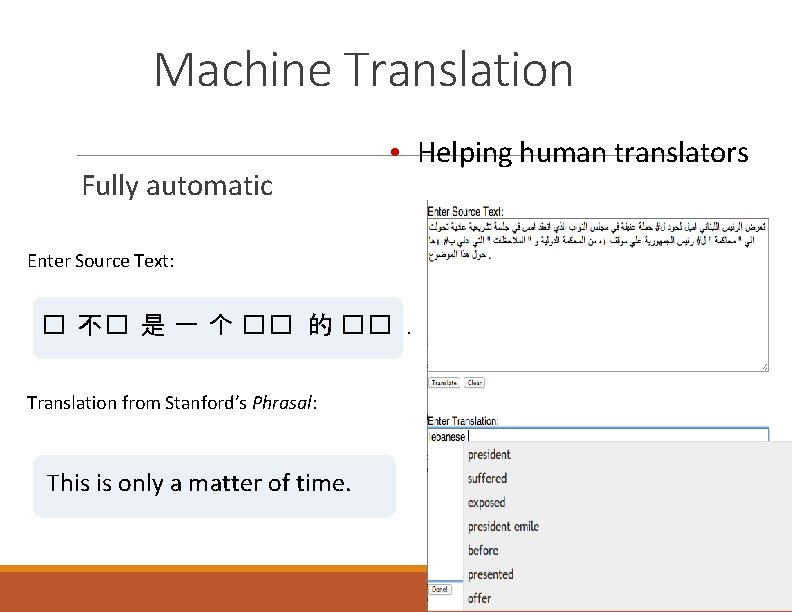

Machine Translation

- Fully automatic
- Helping human translators

Enter Source Text: 这 不过 是 一 个 时间 的 问题 .

Translation from Stanford’s Phrasal: This is only a matter of time.

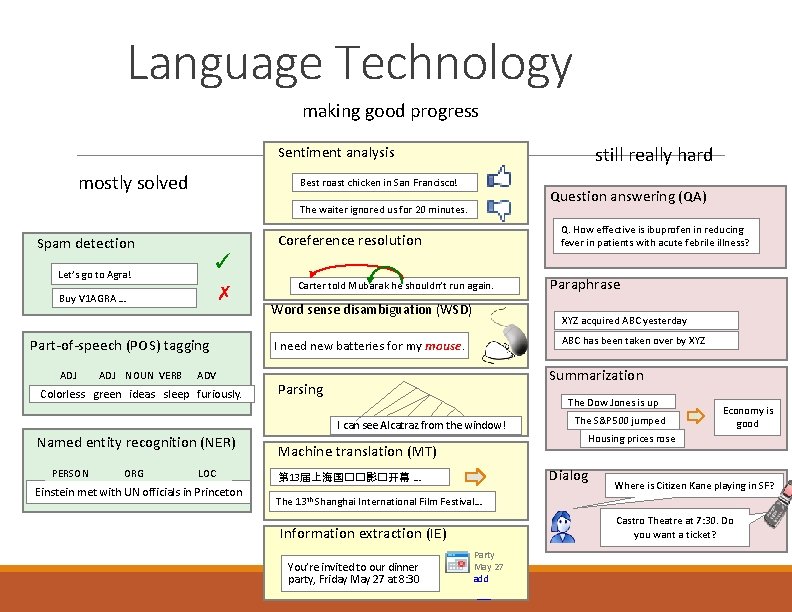

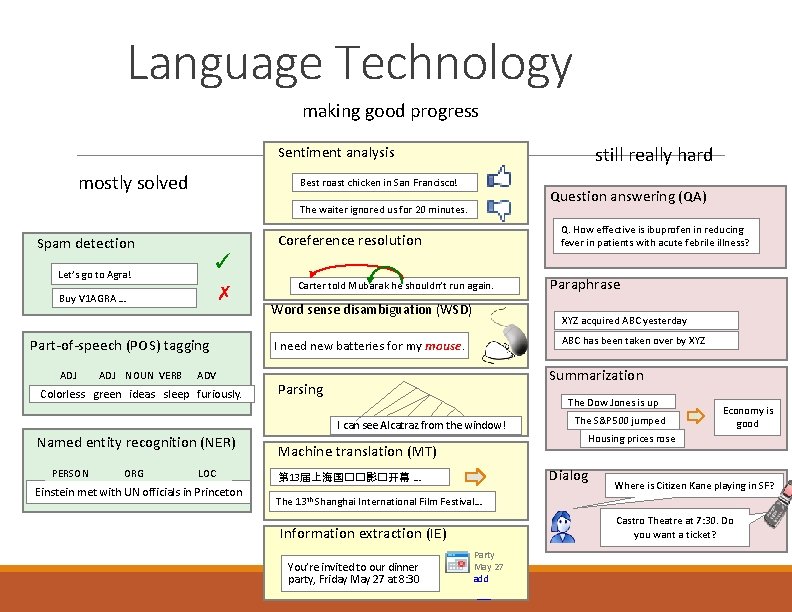

Language Technology

Mostly solved:

- Spam detection: “Let’s go to Agra!” ✓ / “Buy V1AGRA …” ✗
- Part-of-speech (POS) tagging: Colorless/ADJ green/ADJ ideas/NOUN sleep/VERB furiously/ADV.
- Named entity recognition (NER): Einstein (PERSON) met with UN (ORG) officials in Princeton (LOC)

Making good progress:

- Sentiment analysis: “Best roast chicken in San Francisco!” / “The waiter ignored us for 20 minutes.”
- Coreference resolution: Carter told Mubarak he shouldn’t run again.
- Word sense disambiguation (WSD): I need new batteries for my mouse.
- Parsing: I can see Alcatraz from the window!
- Machine translation (MT): 第13届上海国际电影节开幕 … → The 13th Shanghai International Film Festival …
- Information extraction (IE): “You’re invited to our dinner party, Friday May 27 at 8:30” → calendar entry: Party, May 27 (add)

Still really hard:

- Question answering (QA): Q. How effective is ibuprofen in reducing fever in patients with acute febrile illness?
- Paraphrase: “XYZ acquired ABC yesterday” / “ABC has been taken over by XYZ”
- Summarization: “The Dow Jones is up”, “The S&P 500 jumped”, “Housing prices rose” → “Economy is good”
- Dialog: “Where is Citizen Kane playing in SF?” / “Castro Theatre at 7:30. Do you want a ticket?”

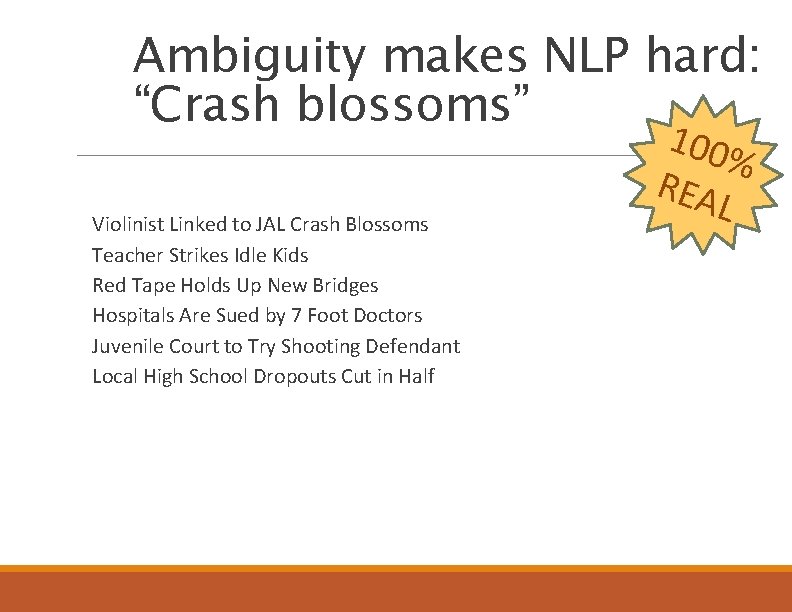

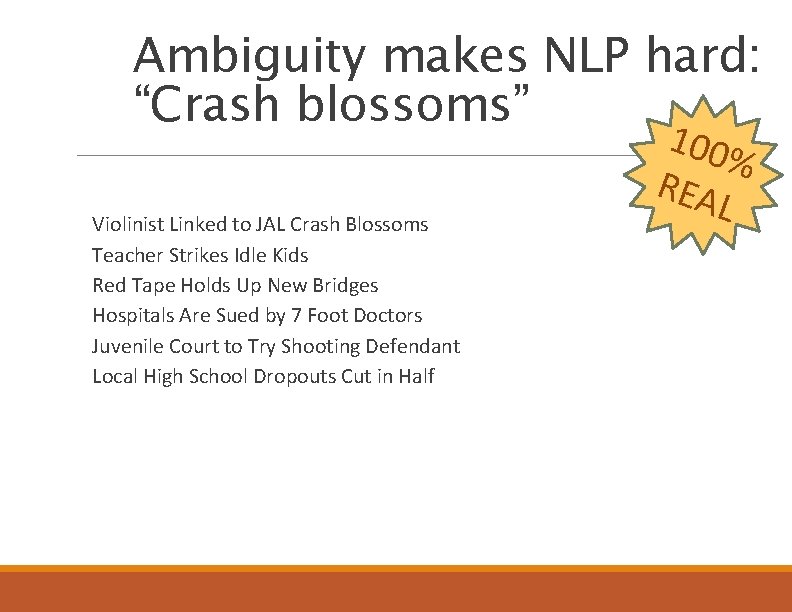

Ambiguity makes NLP hard: “Crash blossoms”

100% real headlines:

- Violinist Linked to JAL Crash Blossoms
- Teacher Strikes Idle Kids
- Red Tape Holds Up New Bridges
- Hospitals Are Sued by 7 Foot Doctors
- Juvenile Court to Try Shooting Defendant
- Local High School Dropouts Cut in Half

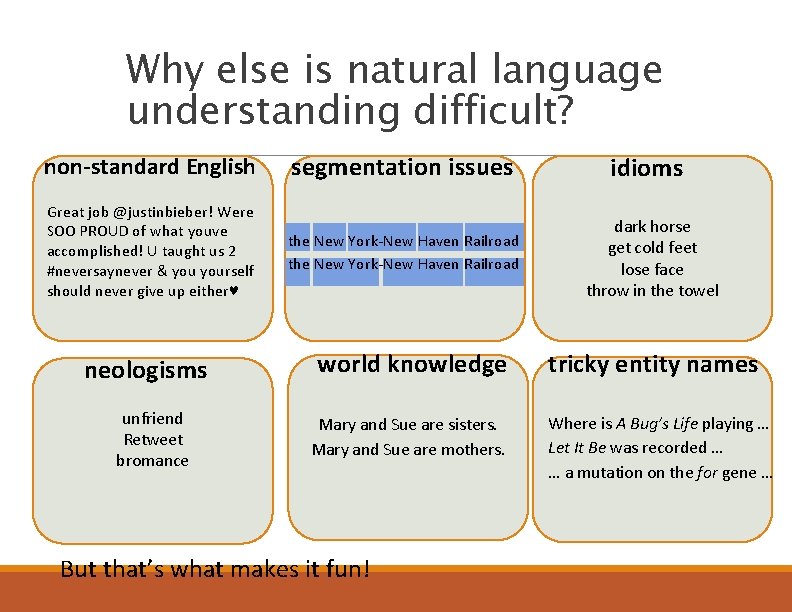

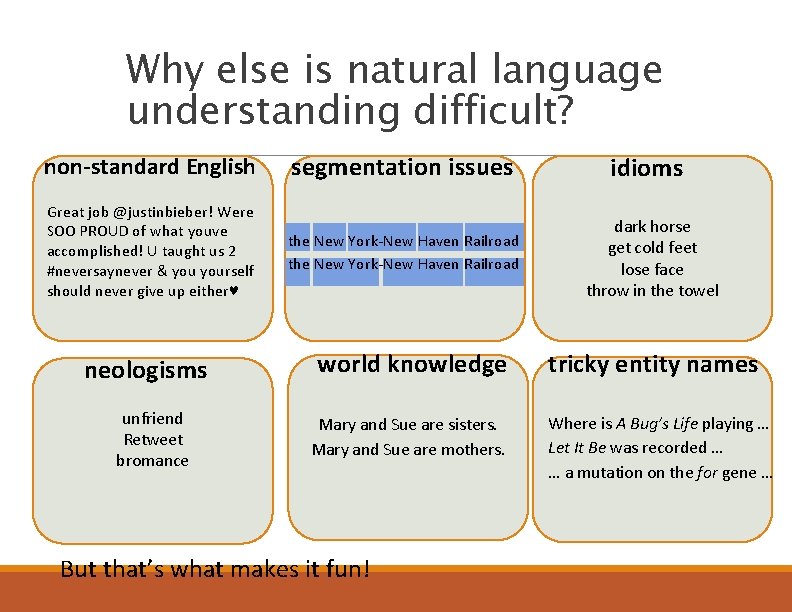

Why else is natural language understanding difficult?

- Non-standard English: Great job @justinbieber! Were SOO PROUD of what youve accomplished! U taught us 2 #neversaynever & yourself should never give up either♥
- Segmentation issues: the New York-New Haven Railroad
- Idioms: dark horse, get cold feet, lose face, throw in the towel
- Neologisms: unfriend, retweet, bromance
- World knowledge: Mary and Sue are sisters. Mary and Sue are mothers.
- Tricky entity names: Where is A Bug’s Life playing … / Let It Be was recorded … / … a mutation on the for gene …

But that’s what makes it fun!

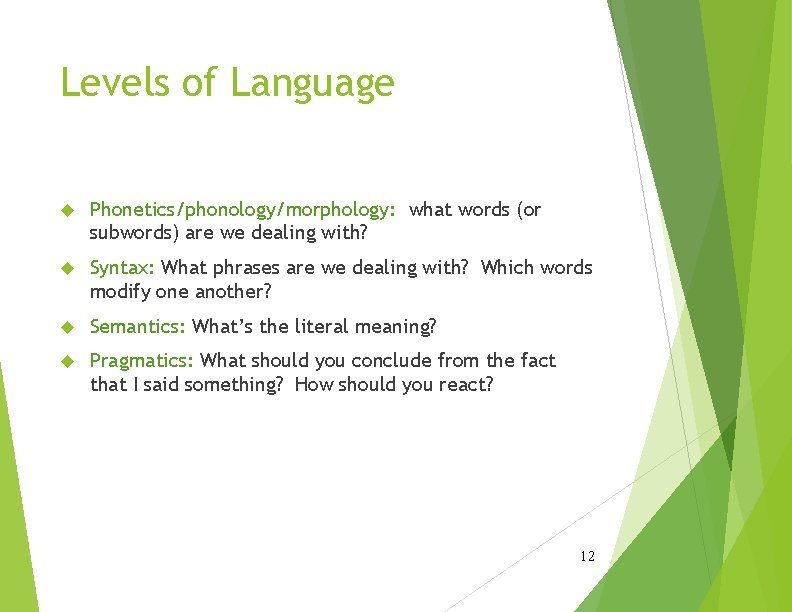

Levels of Language

- Phonetics/phonology/morphology: What words (or subwords) are we dealing with?
- Syntax: What phrases are we dealing with? Which words modify one another?
- Semantics: What’s the literal meaning?
- Pragmatics: What should you conclude from the fact that I said something? How should you react?

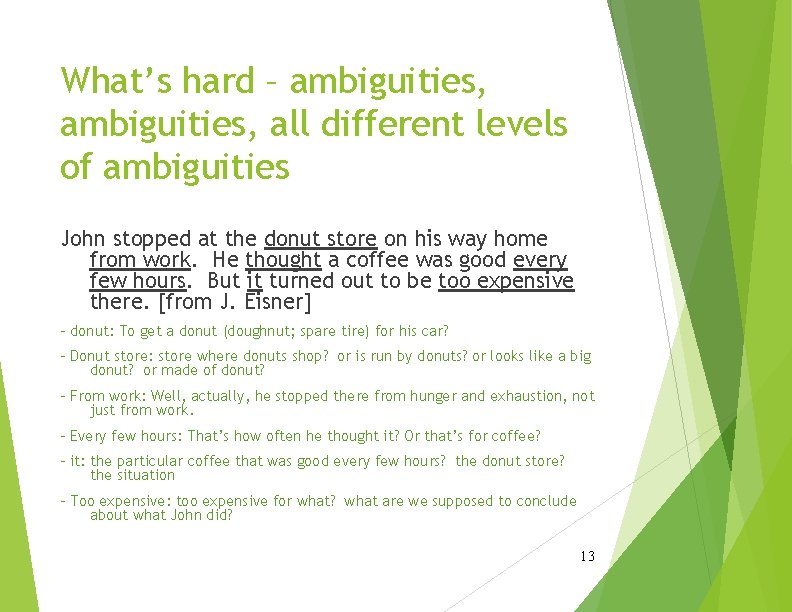

What’s hard: ambiguities, at all different levels

John stopped at the donut store on his way home from work. He thought a coffee was good every few hours. But it turned out to be too expensive there. [from J. Eisner]

- donut: To get a donut (doughnut; spare tire) for his car?
- donut store: a store where donuts shop? or that is run by donuts? or looks like a big donut? or is made of donut?
- from work: Well, actually, he stopped there from hunger and exhaustion, not just from work.
- every few hours: That’s how often he thought it? Or that’s for coffee?
- it: the particular coffee that was good every few hours? the donut store? the situation?
- too expensive: too expensive for what? What are we supposed to conclude about what John did?

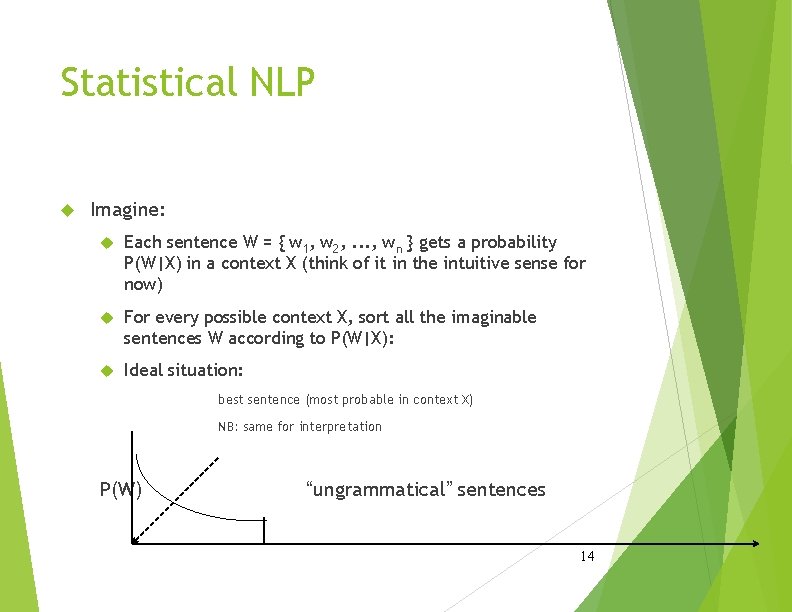

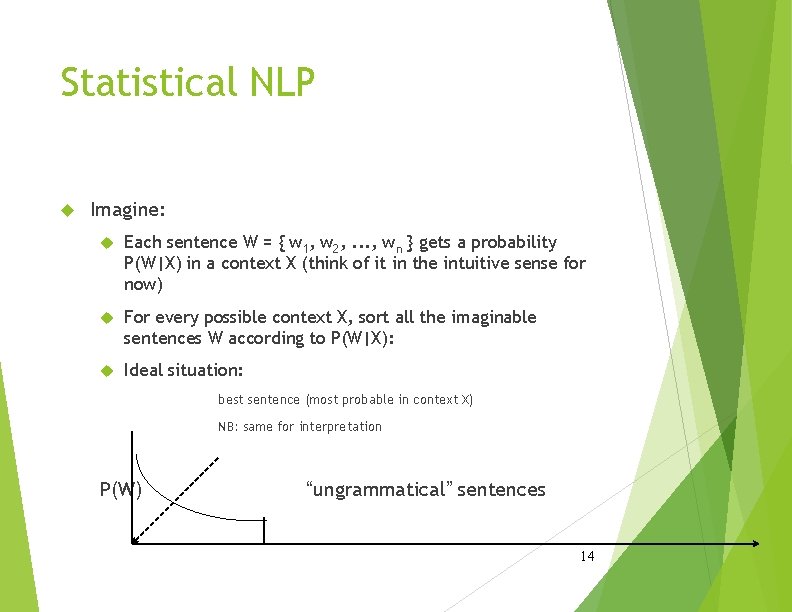

Statistical NLP

Imagine:

- Each sentence $W = \{w_1, w_2, \ldots, w_n\}$ gets a probability $P(W|X)$ in a context $X$ (think of it in the intuitive sense for now).
- For every possible context $X$, sort all the imaginable sentences $W$ according to $P(W|X)$.

Ideal situation (figure labels): $P(W)$; best sentence (most probable in context $X$); “ungrammatical” sentences.

NB: the same holds for interpretation.

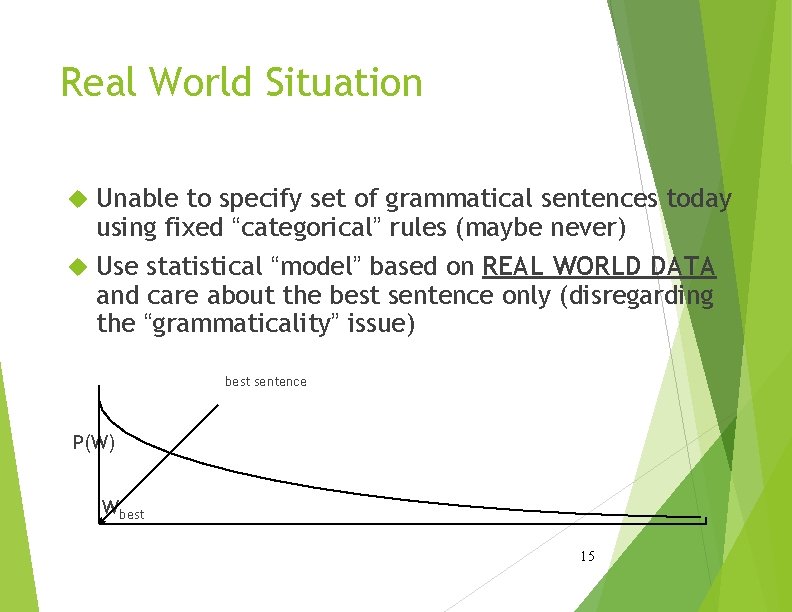

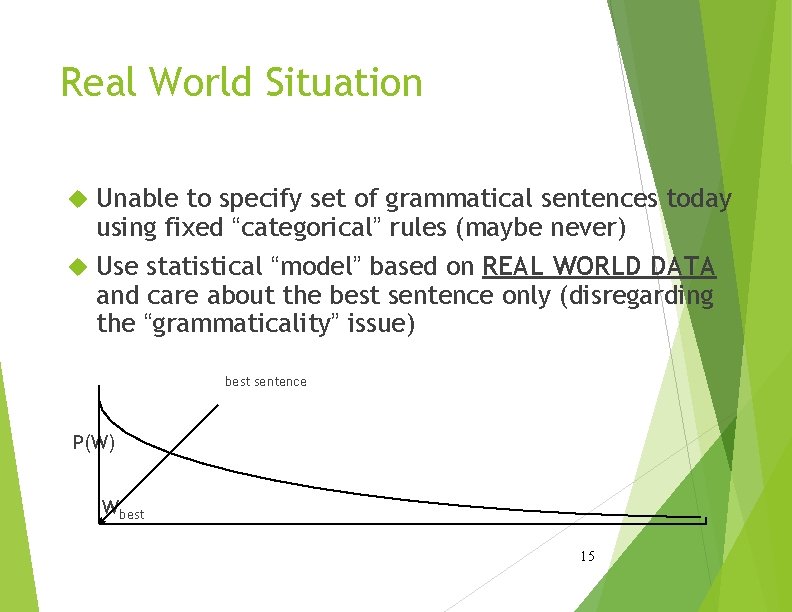

Real World Situation

- We are unable to specify the set of grammatical sentences today using fixed “categorical” rules (maybe never).
- Instead, use a statistical “model” based on REAL WORLD DATA and care about the best sentence only (disregarding the “grammaticality” issue).

Figure labels: $P(W)$; best sentence $W_{best}$.

Language Modeling (and the Noisy Channel)

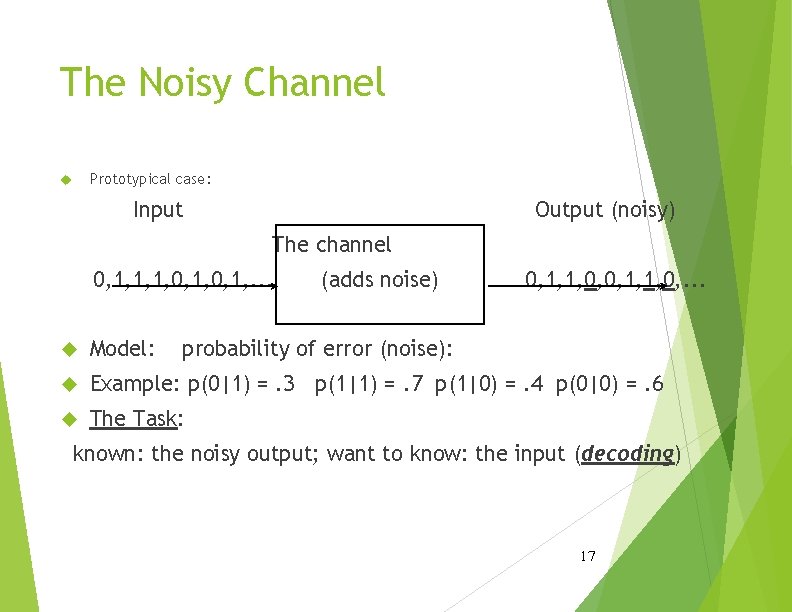

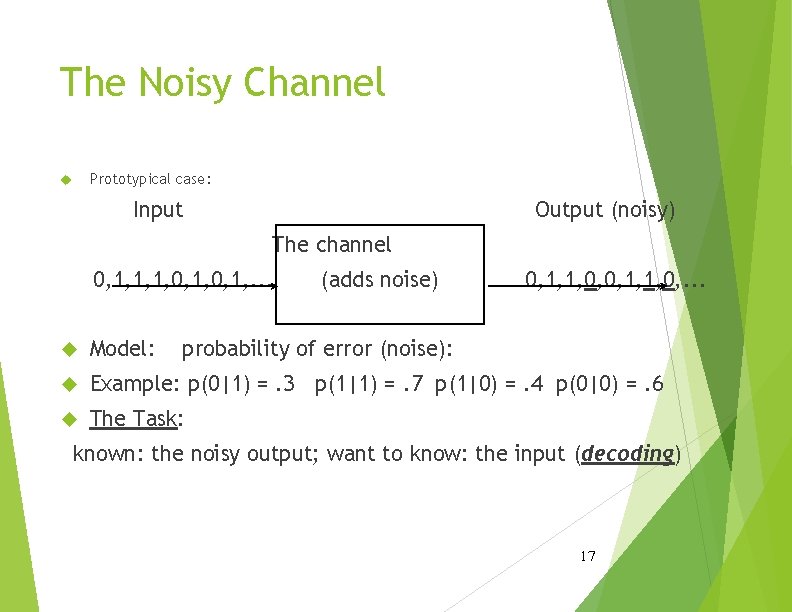

The Noisy Channel

Prototypical case: Input (0, 1, 1, 1, 0, 1, ...) → The channel (adds noise) → Output, noisy (0, 1, 1, 0, ...)

- Model: probability of error (noise). Example: p(0|1) = .3, p(1|1) = .7, p(1|0) = .4, p(0|0) = .6
- The task: known: the noisy output; want to know: the input (decoding).

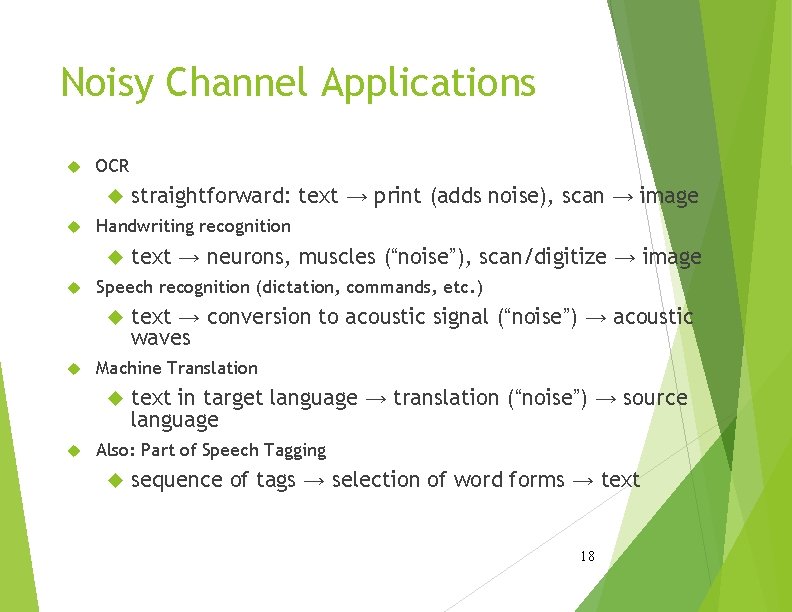

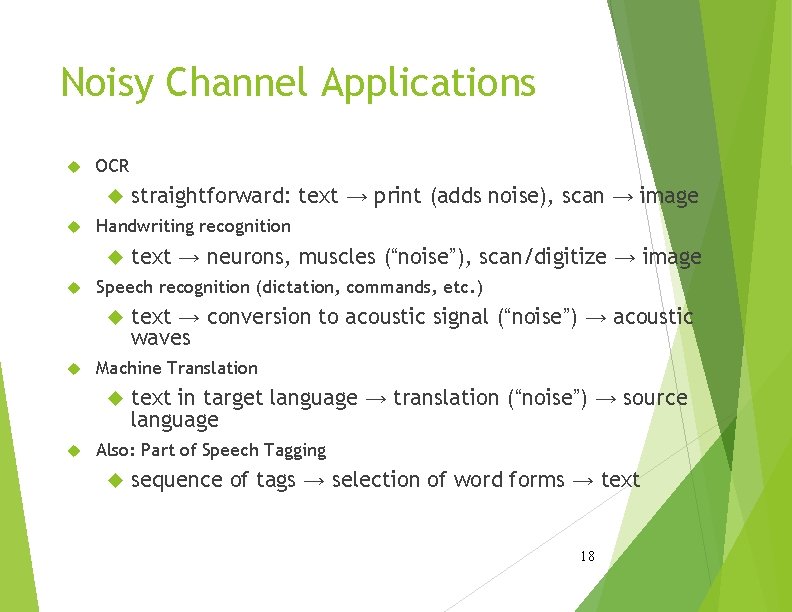

Noisy Channel Applications

- OCR: straightforward: text → print (adds noise), scan → image
- Handwriting recognition: text → neurons, muscles (“noise”), scan/digitize → image
- Speech recognition (dictation, commands, etc.): text → conversion to acoustic signal (“noise”) → acoustic waves
- Machine Translation: text in target language → translation (“noise”) → source language
- Also: Part of Speech Tagging: sequence of tags → selection of word forms → text

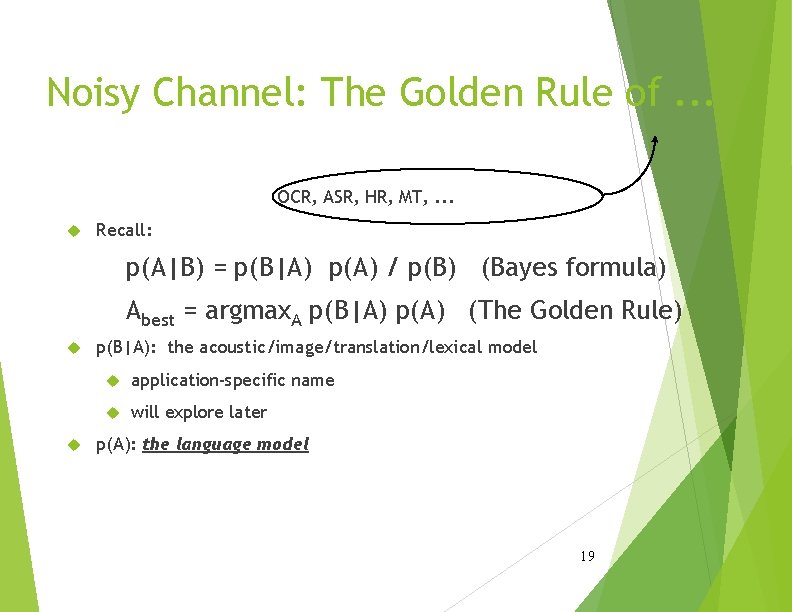

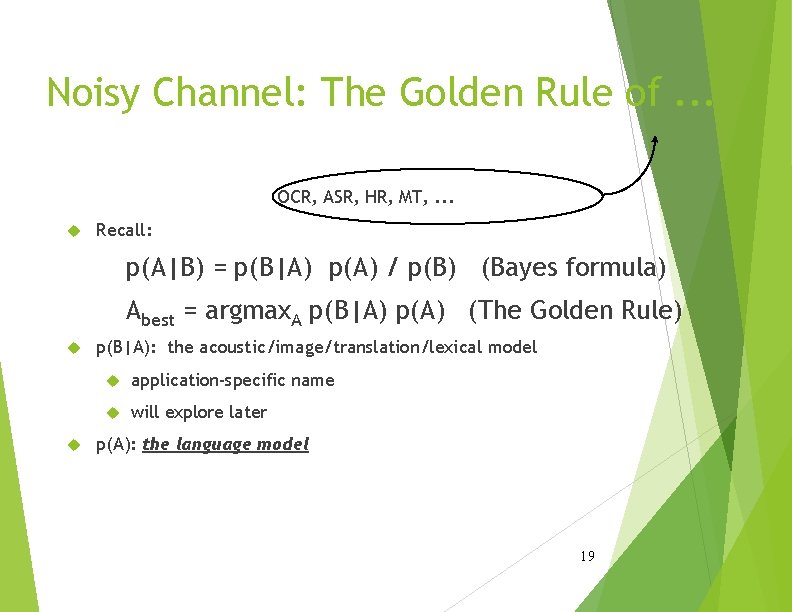

Noisy Channel: The Golden Rule of ... OCR, ASR, HR, MT, ...

Recall the Bayes formula:

$$p(A|B) = \frac{p(B|A)\,p(A)}{p(B)}$$

The Golden Rule:

$$A_{best} = \arg\max_A \; p(B|A)\,p(A)$$

- $p(B|A)$: the acoustic/image/translation/lexical model (application-specific name; will explore later)
- $p(A)$: the language model
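The Golden Rule can be tried out on the binary channel from the prototypical example. The following is a minimal sketch, not part of the original slides: it decodes each bit independently, and the source model `PRIOR` is a hypothetical stand-in for p(A), which the slides do not specify.

```python
# Channel model p(output | input), taken from the binary example:
# p(0|1) = .3, p(1|1) = .7, p(1|0) = .4, p(0|0) = .6
CHANNEL = {
    (0, 0): 0.6,  # keys are (output, input)
    (1, 0): 0.4,
    (0, 1): 0.3,
    (1, 1): 0.7,
}

# Hypothetical source model p(A); the slides give no prior over input bits.
PRIOR = {0: 0.8, 1: 0.2}


def decode_bit(observed, channel=CHANNEL, prior=PRIOR):
    """Return argmax_A p(B|A) * p(A) for a single observed bit B."""
    return max(prior, key=lambda a: channel[(observed, a)] * prior[a])


def decode(bits, channel=CHANNEL, prior=PRIOR):
    """Decode a sequence, assuming bits are independent (memoryless channel and source)."""
    return [decode_bit(b, channel, prior) for b in bits]


if __name__ == "__main__":
    print(decode([0, 1, 1, 0]))                              # skewed prior
    print(decode([0, 1, 1, 0], prior={0: 0.5, 1: 0.5}))      # uniform prior
```

With the skewed prior, an observed 1 is still decoded as 0 because .4 × .8 exceeds .7 × .2; with a uniform prior the channel model alone decides. This is the role the language model p(A) plays in the applications listed earlier.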

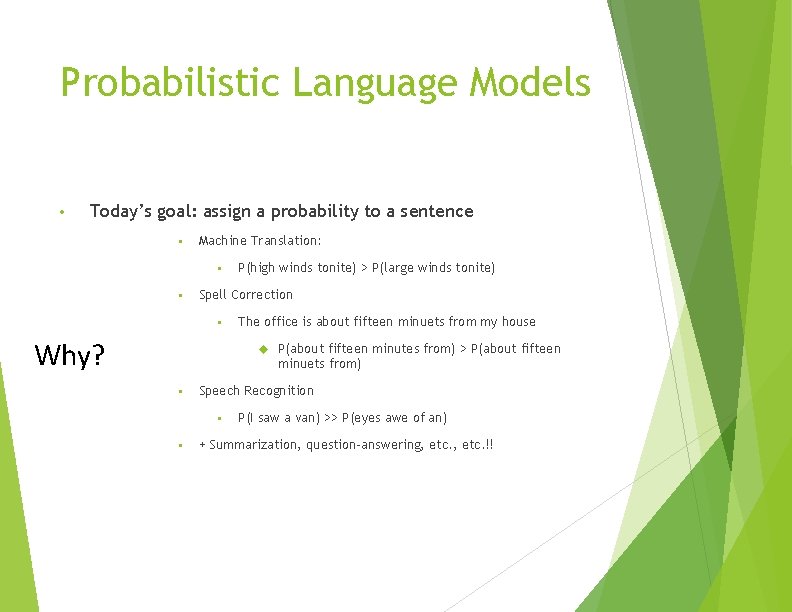

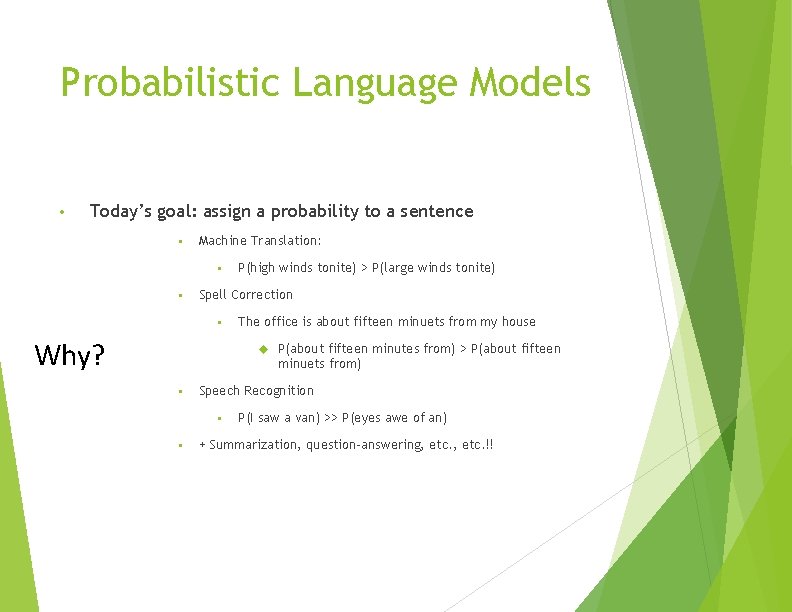

Probabilistic Language Models

Today’s goal: assign a probability to a sentence. Why?

- Machine Translation: P(high winds tonite) > P(large winds tonite)
- Spell Correction: “The office is about fifteen minuets from my house”; P(about fifteen minutes from) > P(about fifteen minuets from)
- Speech Recognition: P(I saw a van) >> P(eyes awe of an)
- Plus summarization, question-answering, etc.!!

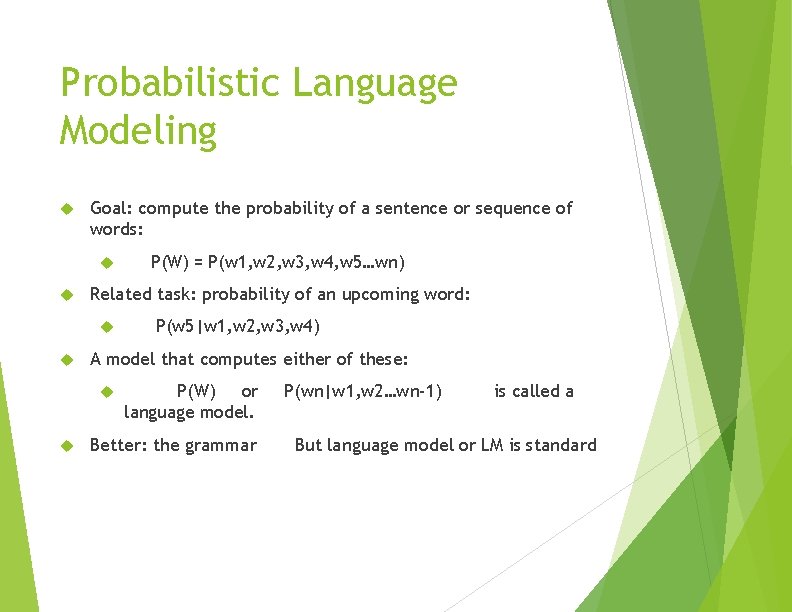

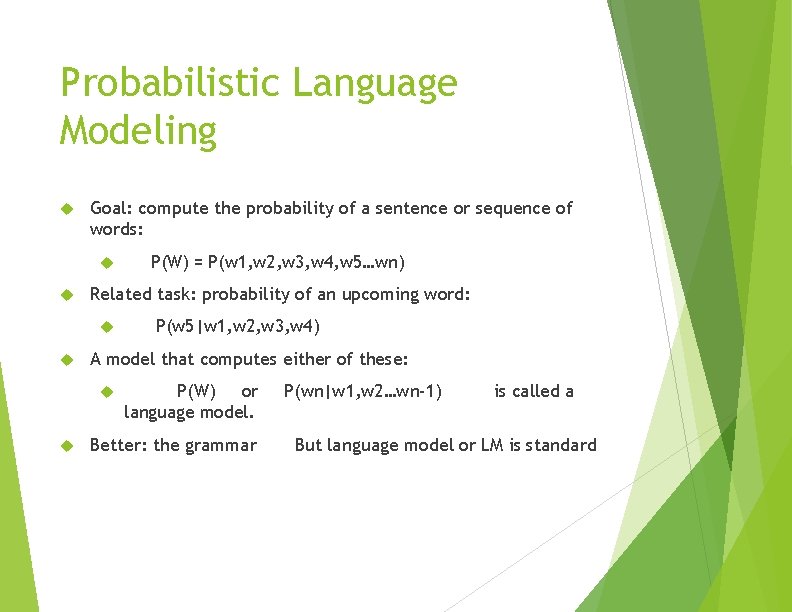

Probabilistic Language Modeling

- Goal: compute the probability of a sentence or sequence of words: $P(W) = P(w_1, w_2, w_3, w_4, w_5 \ldots w_n)$
- Related task: probability of an upcoming word: $P(w_5 \mid w_1, w_2, w_3, w_4)$
- A model that computes either of these, $P(W)$ or $P(w_n \mid w_1, w_2 \ldots w_{n-1})$, is called a language model.
- Better: the grammar. But language model or LM is standard.

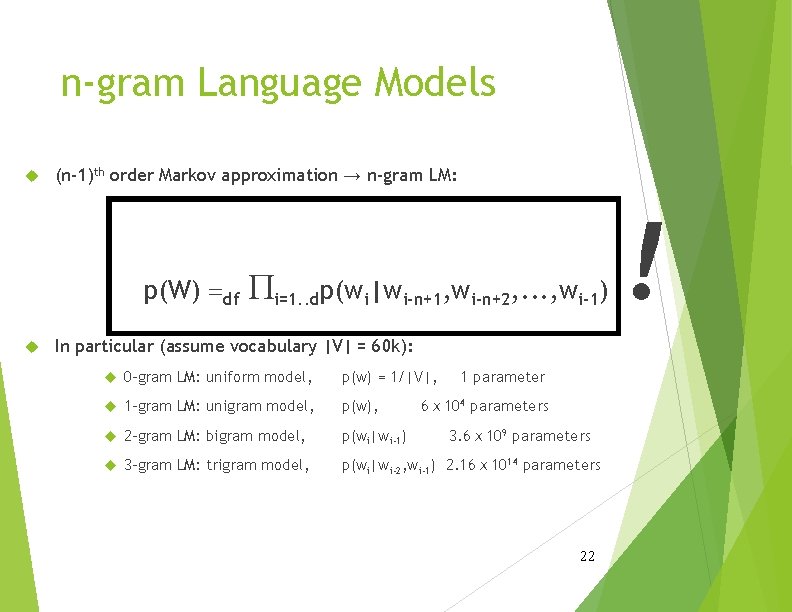

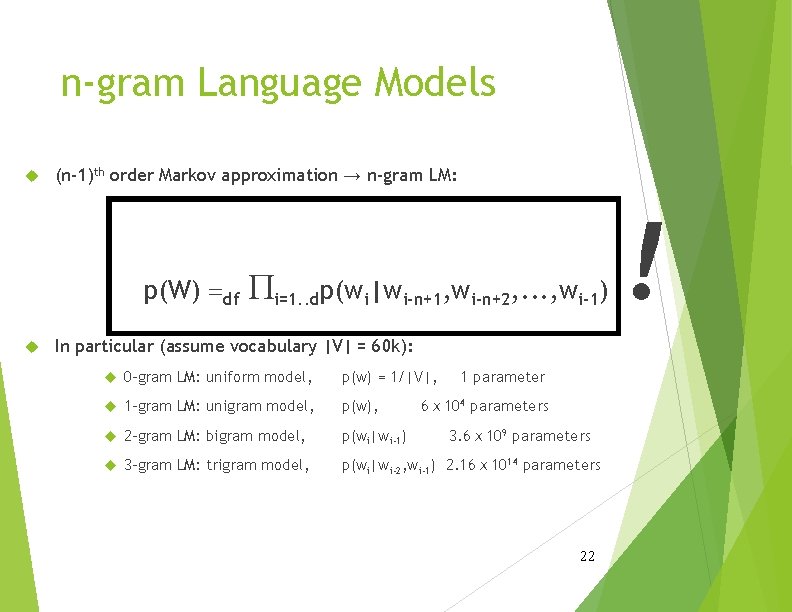

n-gram Language Models

$(n-1)$th order Markov approximation → n-gram LM:

$$p(W) \stackrel{df}{=} \prod_{i=1}^{d} p(w_i \mid w_{i-n+1}, w_{i-n+2}, \ldots, w_{i-1})$$

In particular (assume vocabulary $|V| = 60\text{k}$):

- 0-gram LM: uniform model, $p(w) = 1/|V|$, 1 parameter
- 1-gram LM: unigram model, $p(w)$, $6 \times 10^4$ parameters
- 2-gram LM: bigram model, $p(w_i \mid w_{i-1})$, $3.6 \times 10^9$ parameters
- 3-gram LM: trigram model, $p(w_i \mid w_{i-2}, w_{i-1})$, $2.16 \times 10^{14}$ parameters
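The parameter counts above are simply $|V|^n$, the number of entries in a full n-gram probability table. A small sketch that reproduces them (the function name is illustrative; it counts table entries, not free parameters after normalization):

```python
def ngram_parameter_count(vocab_size: int, n: int) -> int:
    """Number of entries in an n-gram table: |V|**n, or 1 for the uniform 0-gram model."""
    return 1 if n == 0 else vocab_size ** n


if __name__ == "__main__":
    V = 60_000  # vocabulary size assumed on the slide
    for n in range(4):
        print(f"{n}-gram LM: {ngram_parameter_count(V, n):,} parameters")
```

The jump from $3.6 \times 10^9$ to $2.16 \times 10^{14}$ entries is why trigram tables cannot be estimated densely from any realistic corpus.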

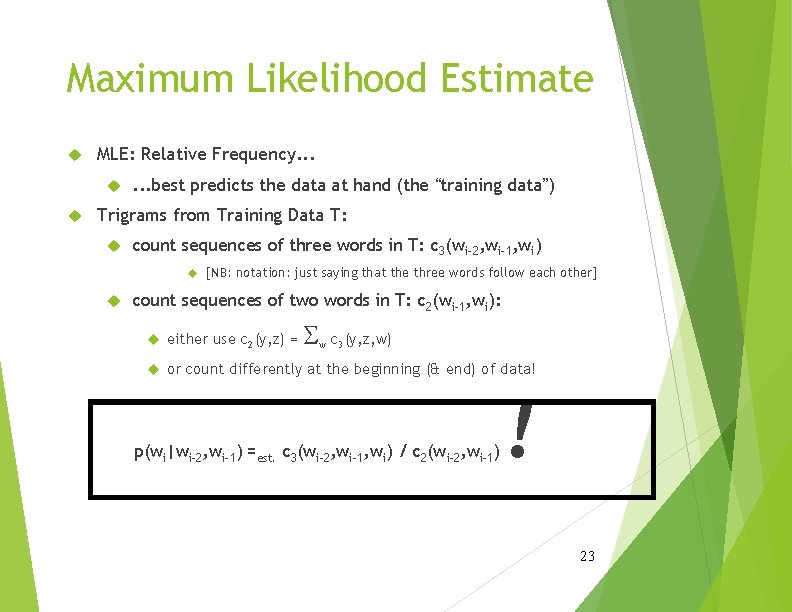

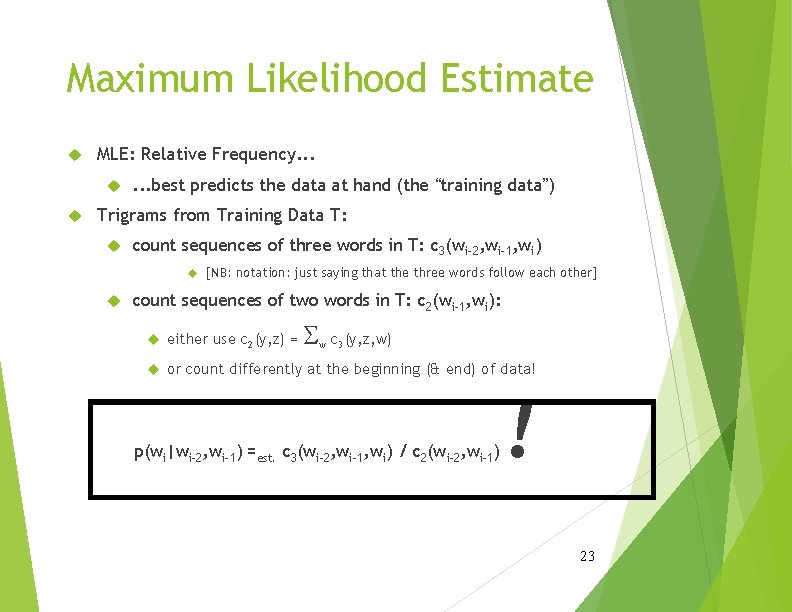

Maximum Likelihood Estimate

MLE: relative frequency; it best predicts the data at hand (the “training data”).

Trigrams from training data $T$:

- Count sequences of three words in $T$: $c_3(w_{i-2}, w_{i-1}, w_i)$ [NB: the notation just says that the three words follow each other].
- Count sequences of two words in $T$: $c_2(w_{i-1}, w_i)$: either use $c_2(y, z) = \sum_w c_3(y, z, w)$, or count differently at the beginning (and end) of the data!

$$p(w_i \mid w_{i-2}, w_{i-1}) \stackrel{est.}{=} \frac{c_3(w_{i-2}, w_{i-1}, w_i)}{c_2(w_{i-2}, w_{i-1})}$$
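The relative-frequency estimate can be sketched in a few lines. This is an illustrative implementation, not the lecture's own code: it derives $c_2$ from $c_3$ by summing over the last word, and it assumes the data is padded with two `<s>` tokens so the first word has a full trigram history.

```python
from collections import Counter


def train_trigram_mle(tokens):
    """Return a function p(w, y, z) = c3(y, z, w) / c2(y, z) estimated from `tokens`."""
    c3 = Counter(zip(tokens, tokens[1:], tokens[2:]))

    # c2(y, z) = sum over w of c3(y, z, w)
    c2 = Counter()
    for (y, z, _w), count in c3.items():
        c2[(y, z)] += count

    def prob(w, y, z):
        """p(w | y, z); 0.0 for an unseen history, since nothing is smoothed here."""
        if c2[(y, z)] == 0:
            return 0.0
        return c3[(y, z, w)] / c2[(y, z)]

    return prob


if __name__ == "__main__":
    # Padding with two <s> tokens is an assumption about how the data start is handled.
    tokens = "<s> <s> He can buy the can of soda .".split()
    p3 = train_trigram_mle(tokens)
    print(p3("He", "<s>", "<s>"), p3("buy", "He", "can"), p3("of", "He", "can"))
```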

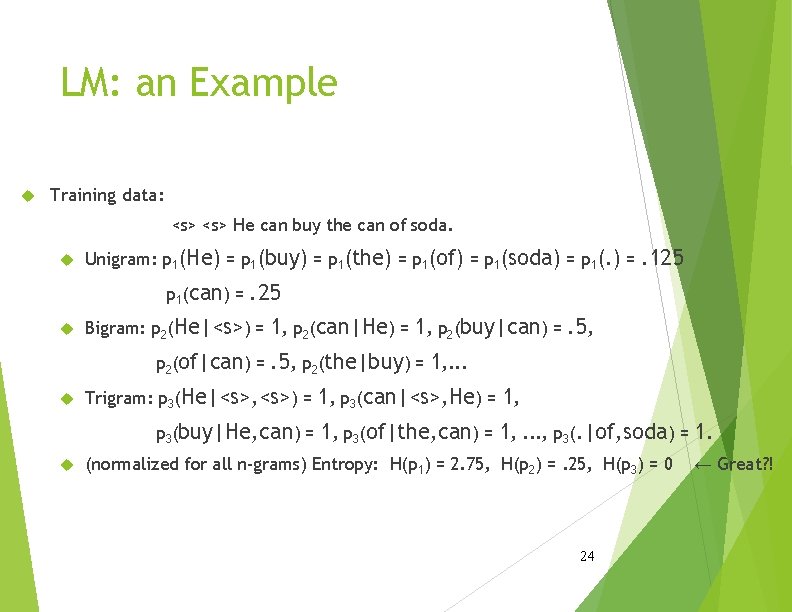

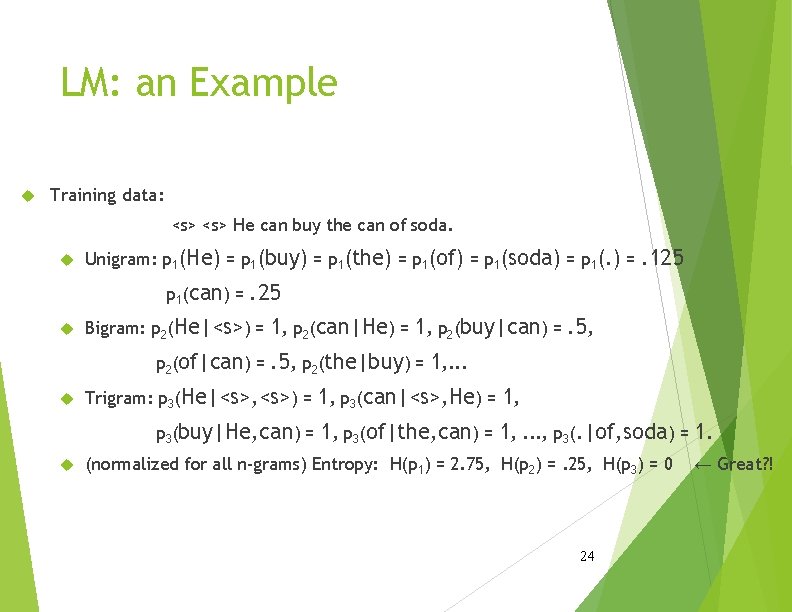

LM: an Example

Training data: <s> He can buy the can of soda.

- Unigram: p1(He) = p1(buy) = p1(the) = p1(of) = p1(soda) = p1(.) = .125, p1(can) = .25
- Bigram: p2(He|<s>) = 1, p2(can|He) = 1, p2(buy|can) = .5, p2(of|can) = .5, p2(the|buy) = 1, ...
- Trigram: p3(He|<s>, <s>) = 1, p3(can|<s>, He) = 1, p3(buy|He, can) = 1, p3(of|the, can) = 1, ..., p3(.|of, soda) = 1. (normalized for all n-grams)
- Entropy: H(p1) = 2.75, H(p2) = .25, H(p3) = 0 ← Great?!
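The unigram and bigram figures in this example can be checked directly. The sketch below assumes the sentence is tokenized into eight words plus the `<s>` marker, that `<s>` is excluded from the unigram counts, and that H(p2) is the conditional entropy of a word given its predecessor; these choices are inferred from the numbers, not stated on the slide.

```python
from collections import Counter
from math import log2

tokens = "<s> He can buy the can of soda .".split()
words = tokens[1:]  # the 8 real tokens; <s> only serves as history

# Unigram MLE
unigram_counts = Counter(words)
p1 = {w: c / len(words) for w, c in unigram_counts.items()}

# Bigram MLE: p2(w | h) = c(h, w) / c(h)
bigram_counts = Counter(zip(tokens, tokens[1:]))
history_counts = Counter(tokens[:-1])
p2 = {(h, w): c / history_counts[h] for (h, w), c in bigram_counts.items()}

# Entropy of the unigram distribution, in bits
H1 = -sum(p * log2(p) for p in p1.values())

# Conditional entropy H(w | h) = -sum_{h,w} p(h, w) * log2 p(w | h)
n_bigrams = sum(bigram_counts.values())
H2 = -sum((c / n_bigrams) * log2(p2[hw]) for hw, c in bigram_counts.items())

if __name__ == "__main__":
    print(p1["can"], p2[("can", "buy")], H1, H2)
```

Only the history "can" is followed by two different words, which is where the entire .25 bits of bigram entropy come from.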

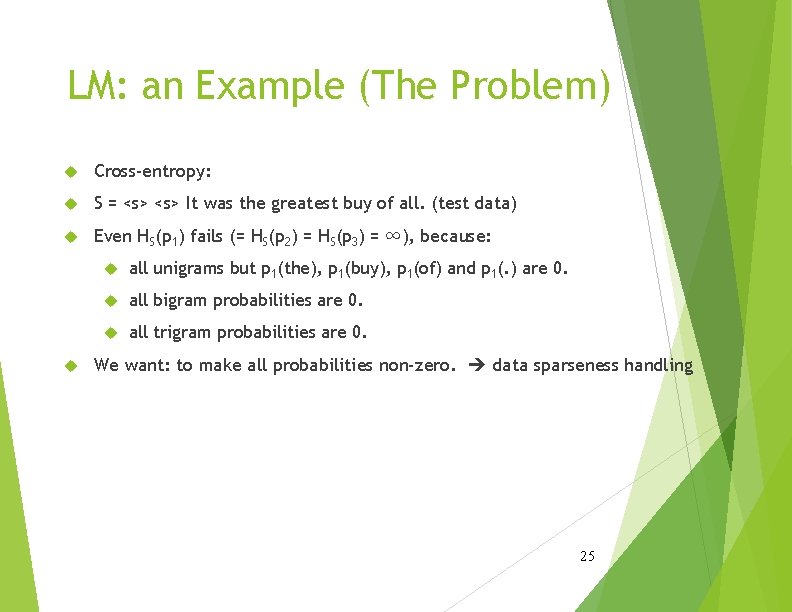

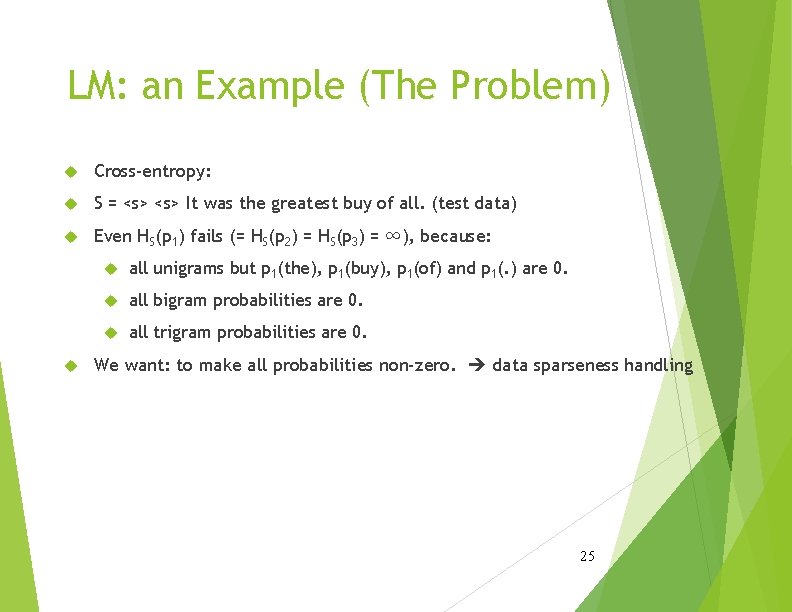

LM: an Example (The Problem)

Cross-entropy on test data S = <s> It was the greatest buy of all.

Even H_S(p1) fails (= H_S(p2) = H_S(p3) = ∞), because:

- all unigrams but p1(the), p1(buy), p1(of) and p1(.) are 0.
- all bigram probabilities are 0.
- all trigram probabilities are 0.

We want to make all probabilities non-zero: data sparseness handling.
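A short sketch of why the cross-entropy blows up, using the unsmoothed unigram model from the training sentence. The tokenization and the per-word normalization are assumptions made for illustration.

```python
from collections import Counter
from math import inf, log2

train = "He can buy the can of soda .".split()
test = "It was the greatest buy of all .".split()

counts = Counter(train)


def p1(w):
    """Unsmoothed unigram MLE from the training sentence."""
    return counts[w] / len(train)


def cross_entropy(test_words, prob):
    """Average negative log2 probability per word; infinite if any word has probability 0."""
    total = 0.0
    for w in test_words:
        p = prob(w)
        if p == 0.0:
            return inf
        total -= log2(p)
    return total / len(test_words)


if __name__ == "__main__":
    print([w for w in test if p1(w) > 0])  # the only test words seen in training
    print(cross_entropy(test, p1))
```

A single unseen word is enough to make the whole test set infinitely surprising, regardless of how well the model predicts everything else.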

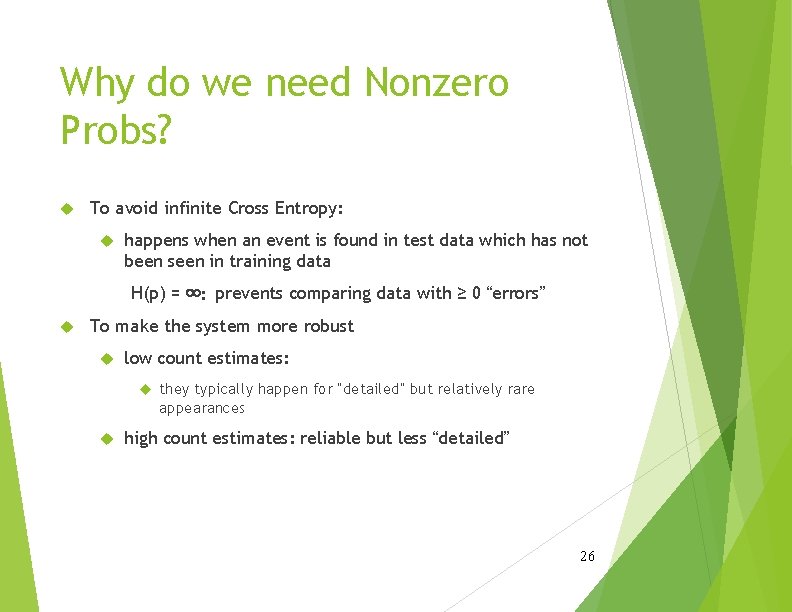

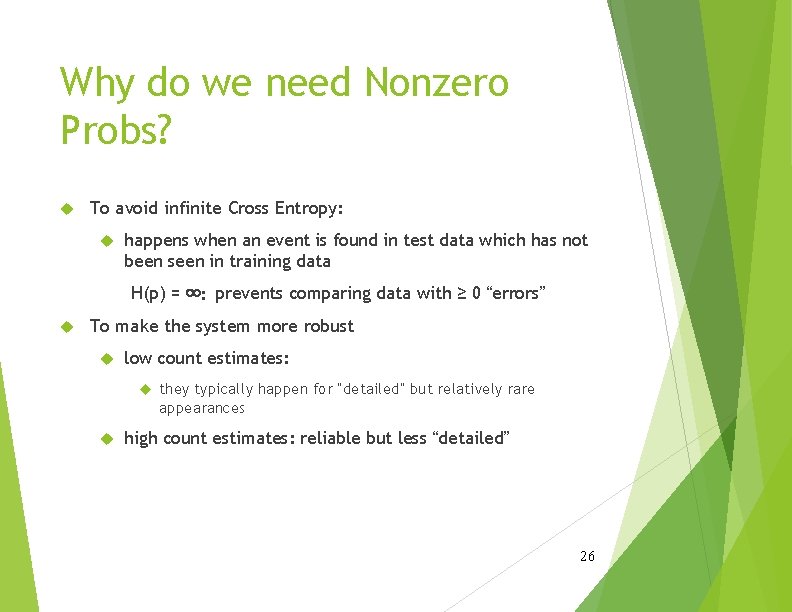

Why do we need Nonzero Probs?

- To avoid infinite cross entropy:
  - it happens when an event is found in the test data which has not been seen in the training data
  - H(p) = ∞ prevents comparing data with ≥ 0 “errors”
- To make the system more robust:
  - low count estimates: they typically happen for “detailed” but relatively rare appearances
  - high count estimates: reliable but less “detailed”

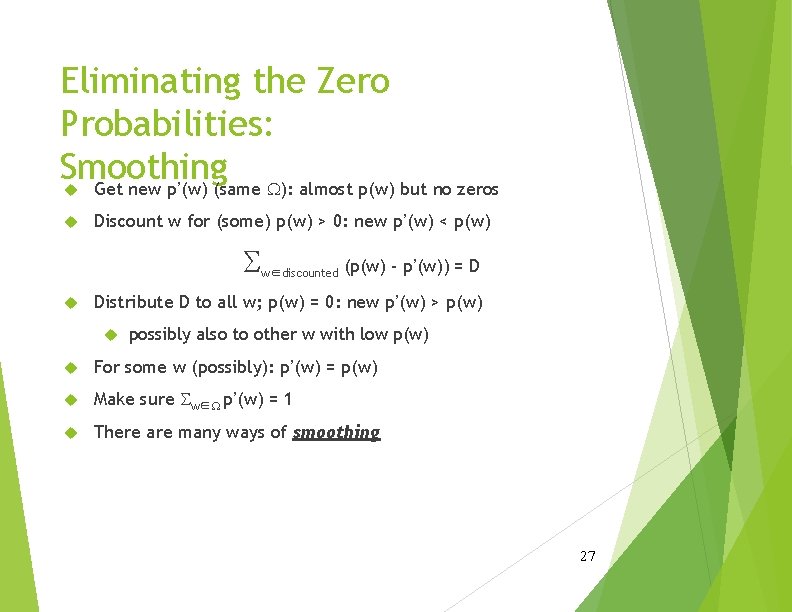

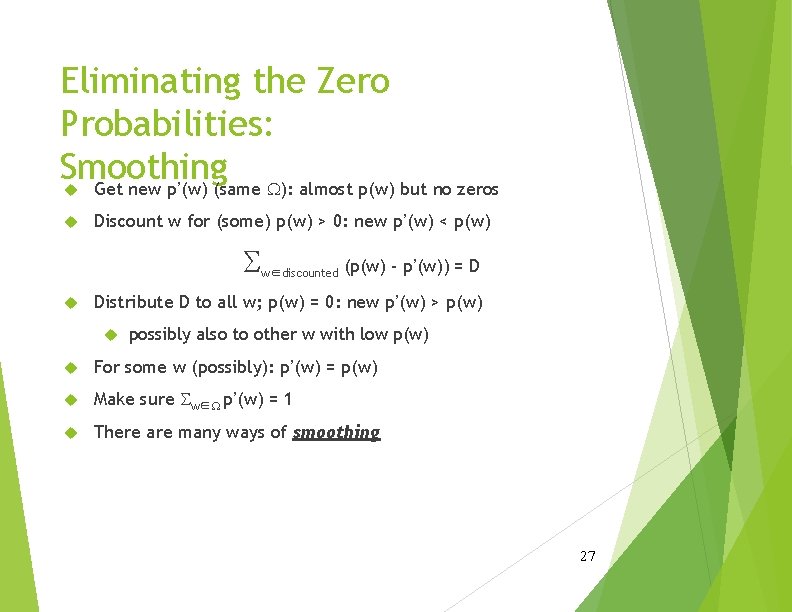

Eliminating the Zero Probabilities: Smoothing

- Get new $p'(w)$ (same event space $\Omega$): almost $p(w)$, but no zeros.
- Discount $w$ for (some) $p(w) > 0$: new $p'(w) < p(w)$, with $\sum_{w \in \text{discounted}} (p(w) - p'(w)) = D$.
- Distribute $D$ to all $w$ with $p(w) = 0$: new $p'(w) > p(w)$; possibly also to other $w$ with low $p(w)$.
- For some $w$ (possibly): $p'(w) = p(w)$.
- Make sure $\sum_{w \in \Omega} p'(w) = 1$.

There are many ways of smoothing.

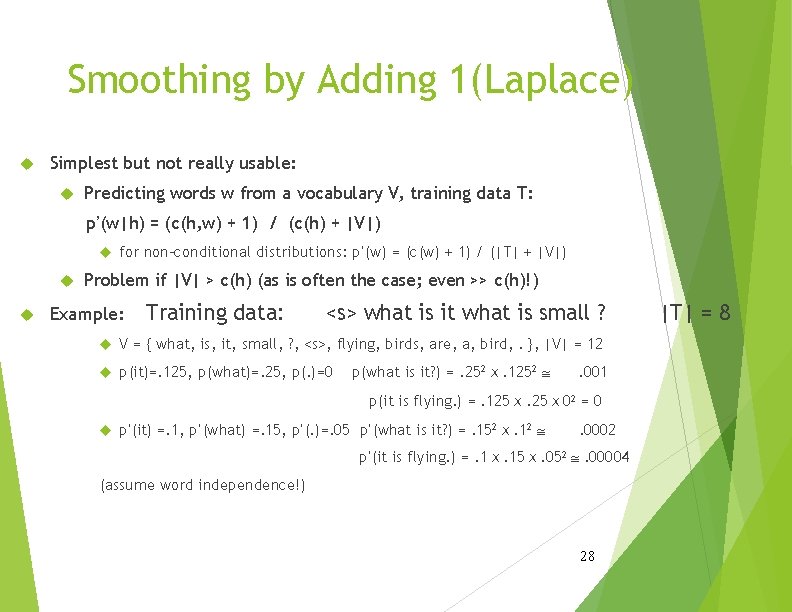

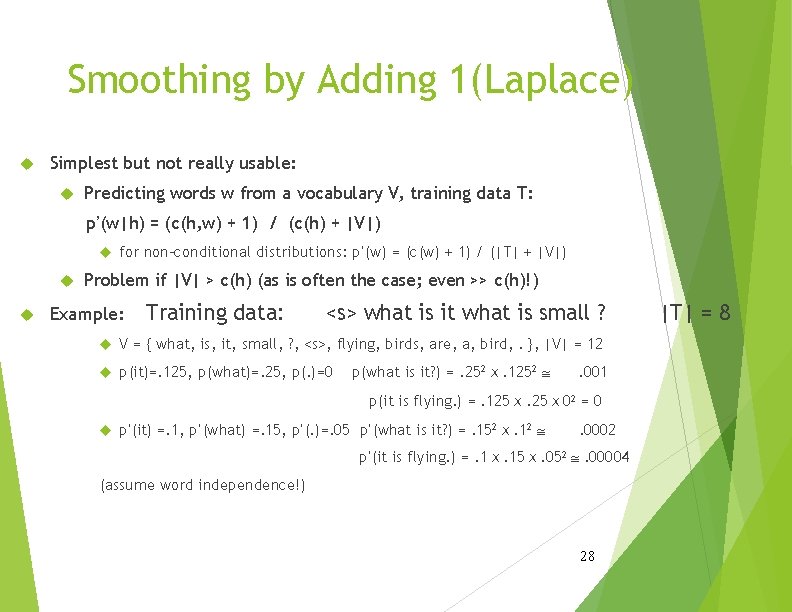

Smoothing by Adding 1 (Laplace)

Simplest, but not really usable:

- Predicting words w from a vocabulary V, training data T: p’(w|h) = (c(h, w) + 1) / (c(h) + |V|)
- For non-conditional distributions: p’(w) = (c(w) + 1) / (|T| + |V|)
- Problem if |V| > c(h) (as is often the case; even >> c(h)!)

Example (assume word independence!):

- Training data: <s> what is it what is small ? (|T| = 8)
- V = { what, is, it, small, ?, <s>, flying, birds, are, a, bird, . }, |V| = 12
- Unsmoothed: p(it) = .125, p(what) = .25, p(.) = 0; p(what is it?) = .25² × .125² ≈ .001; p(it is flying.) = .125 × .25 × 0² = 0
- Smoothed: p’(it) = .1, p’(what) = .15, p’(.) = .05; p’(what is it?) = .15² × .1² ≈ .0002; p’(it is flying.) = .1 × .15 × .05² ≈ .00004
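The add-one example can be reproduced with a few lines of Python. This sketch uses the non-conditional formula and the word-independence assumption from the slide; the helper names are illustrative.

```python
from collections import Counter
from math import prod

train = "<s> what is it what is small ?".split()  # |T| = 8
vocab = ["what", "is", "it", "small", "?", "<s>",
         "flying", "birds", "are", "a", "bird", "."]  # |V| = 12
counts = Counter(train)


def p_mle(w):
    """Unsmoothed relative frequency c(w) / |T|."""
    return counts[w] / len(train)


def p_add_one(w):
    """Laplace estimate (c(w) + 1) / (|T| + |V|)."""
    return (counts[w] + 1) / (len(train) + len(vocab))


def sentence_prob(words, p):
    """Product of word probabilities, i.e. assuming word independence."""
    return prod(p(w) for w in words)


if __name__ == "__main__":
    for sentence in ("what is it ?", "it is flying ."):
        words = sentence.split()
        print(sentence, sentence_prob(words, p_mle), sentence_prob(words, p_add_one))
```

Note how much mass moves: the seen sentence drops from about .001 to about .0002, because 12 of the 20 units in the denominator come from the vocabulary rather than from the data.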

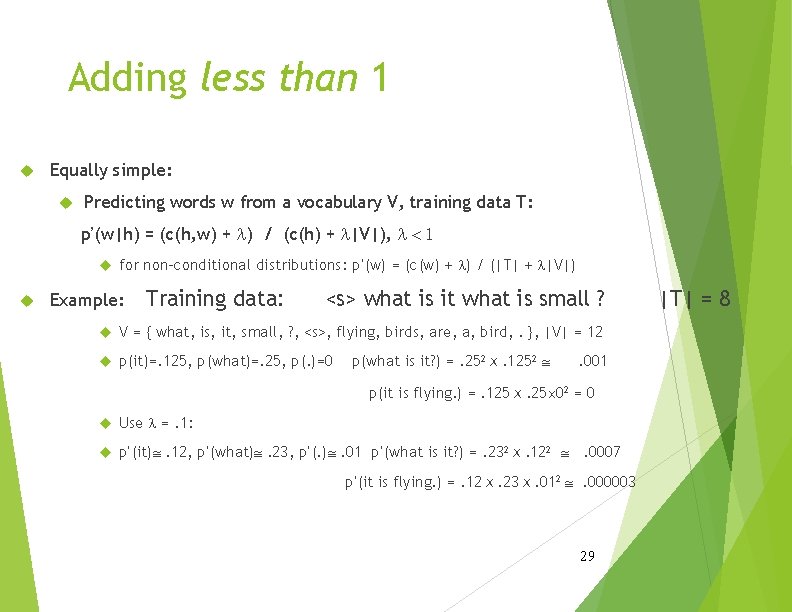

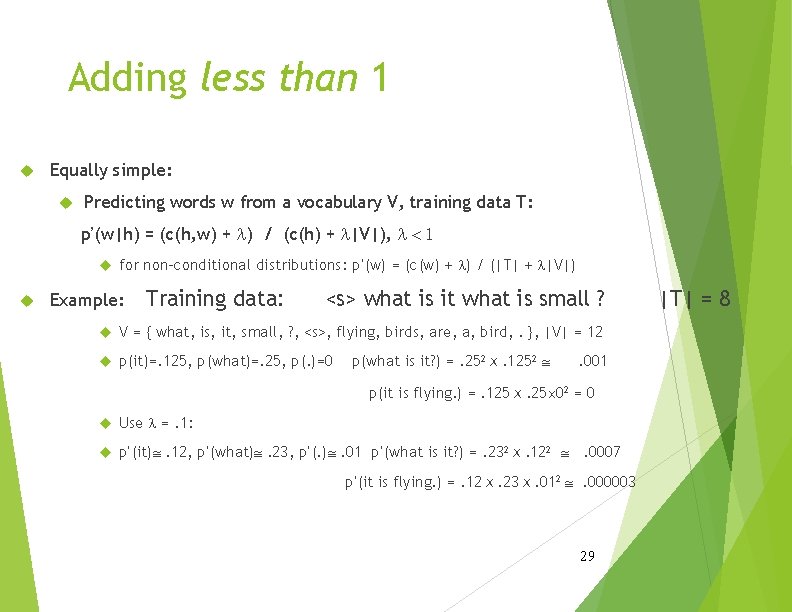

Adding less than 1

Equally simple:

- Predicting words w from a vocabulary V, training data T: p’(w|h) = (c(h, w) + λ) / (c(h) + λ|V|), λ < 1
- For non-conditional distributions: p’(w) = (c(w) + λ) / (|T| + λ|V|)

Example:

- Training data: <s> what is it what is small ? (|T| = 8)
- V = { what, is, it, small, ?, <s>, flying, birds, are, a, bird, . }, |V| = 12
- Unsmoothed: p(it) = .125, p(what) = .25, p(.) = 0; p(what is it?) = .25² × .125² ≈ .001; p(it is flying.) = .125 × .25 × 0² = 0
- Use λ = .1: p’(it) ≈ .12, p’(what) ≈ .23, p’(.) ≈ .01; p’(what is it?) = .23² × .12² ≈ .0007; p’(it is flying.) = .12 × .23 × .01² ≈ .000003
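Both formulas on this slide share one shape, so a single helper covers the conditional and the non-conditional case. The following is a sketch under that observation; the bigram usage at the end is an extra illustration that does not appear on the slide.

```python
from collections import Counter


def add_lambda(count, total, vocab_size, lam):
    """(count + lam) / (total + lam * |V|); lam = 1 recovers add-one smoothing."""
    return (count + lam) / (total + lam * vocab_size)


train = "<s> what is it what is small ?".split()  # |T| = 8
V = 12  # vocabulary size from the slide

unigrams = Counter(train)
bigrams = Counter(zip(train, train[1:]))
histories = Counter(train[:-1])


def p_unigram(w, lam=0.1):
    """Non-conditional estimate: count = c(w), total = |T|."""
    return add_lambda(unigrams[w], len(train), V, lam)


def p_bigram(w, h, lam=0.1):
    """Conditional estimate: count = c(h, w), total = c(h)."""
    return add_lambda(bigrams[(h, w)], histories[h], V, lam)


if __name__ == "__main__":
    print(round(p_unigram("it"), 2), round(p_unigram("what"), 2), round(p_unigram("."), 2))
    print(p_bigram("is", "what"), p_bigram("flying", "what"))
```

With λ = .1 the seen events keep most of their mass (p’(what) ≈ .23 versus .15 under add-one), while unseen events still receive a small non-zero probability.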

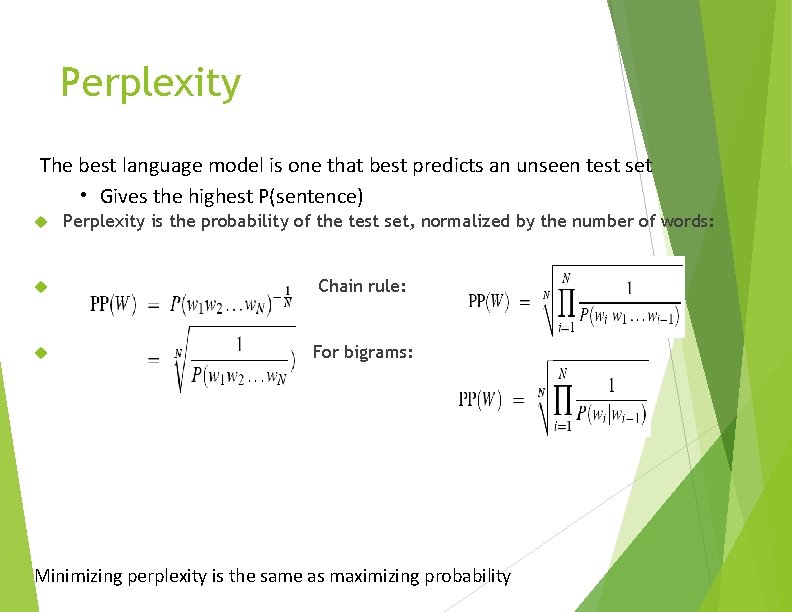

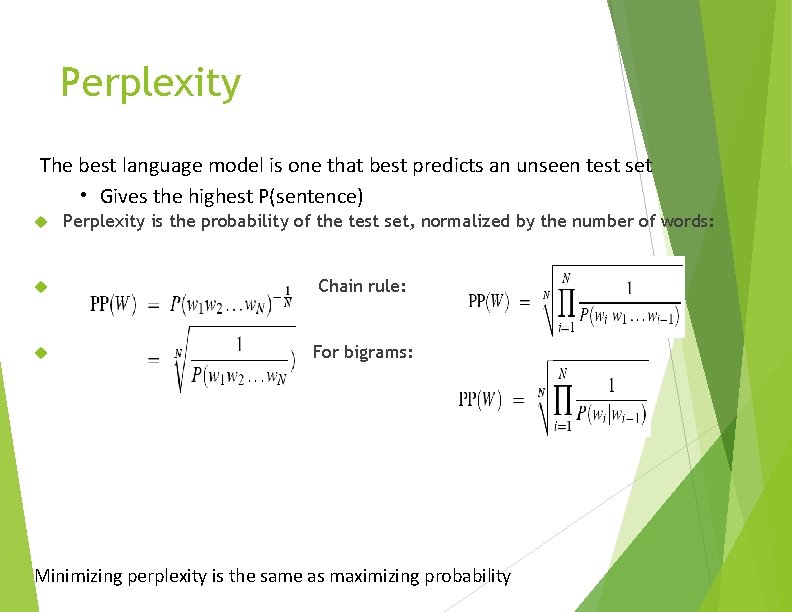

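Perplexity

The best language model is one that best predicts an unseen test set:

- Gives the highest P(sentence)

Perplexity is the inverse probability of the test set, normalized by the number of words:

$$PP(W) = P(w_1 w_2 \ldots w_N)^{-\frac{1}{N}} = \sqrt[N]{\frac{1}{P(w_1 w_2 \ldots w_N)}}$$

Chain rule:

$$PP(W) = \sqrt[N]{\prod_{i=1}^{N} \frac{1}{P(w_i \mid w_1 \ldots w_{i-1})}}$$

For bigrams:

$$PP(W) = \sqrt[N]{\prod_{i=1}^{N} \frac{1}{P(w_i \mid w_{i-1})}}$$

Minimizing perplexity is the same as maximizing probability.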
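Perplexity is usually computed in log space, since multiplying many small probabilities underflows quickly. A minimal sketch, taking as input the per-word probabilities that some language model assigned to a test set (the function name and the input convention are illustrative):

```python
from math import exp, inf, log


def perplexity(word_probs):
    """Inverse probability of the test set, normalized by the number of words.

    `word_probs` holds one model probability per test word, e.g. P(w_i | w_{i-1})
    for a bigram model. Returns infinity if any word was assigned probability 0.
    """
    if not word_probs:
        raise ValueError("perplexity of an empty test set is undefined")
    if any(p == 0.0 for p in word_probs):
        return inf
    avg_neg_log = -sum(log(p) for p in word_probs) / len(word_probs)
    return exp(avg_neg_log)


if __name__ == "__main__":
    print(perplexity([0.1] * 10))                   # uniform choice among 10 words
    print(perplexity([0.25, 0.25, 0.125, 0.125]))   # unsmoothed "what is it ?" probabilities
```

A model that always spreads its mass uniformly over ten options has perplexity 10, which is why perplexity is often read as an effective branching factor.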

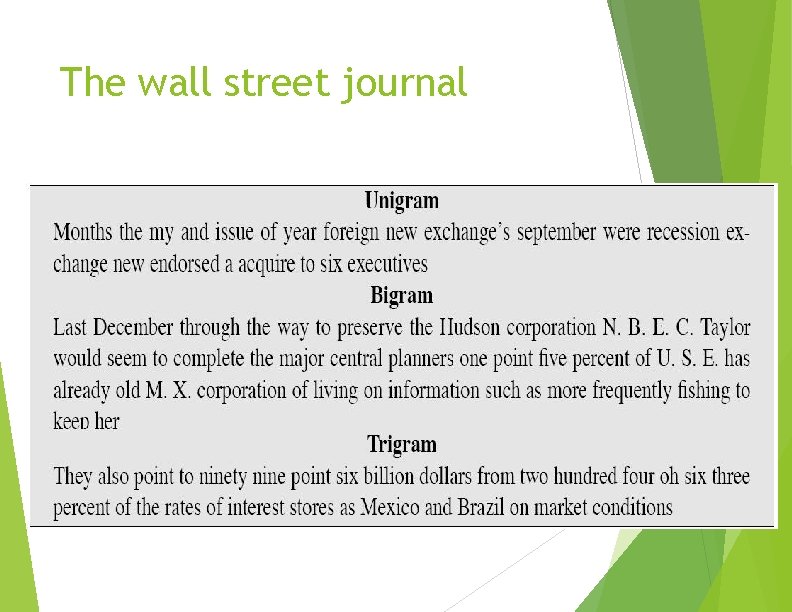

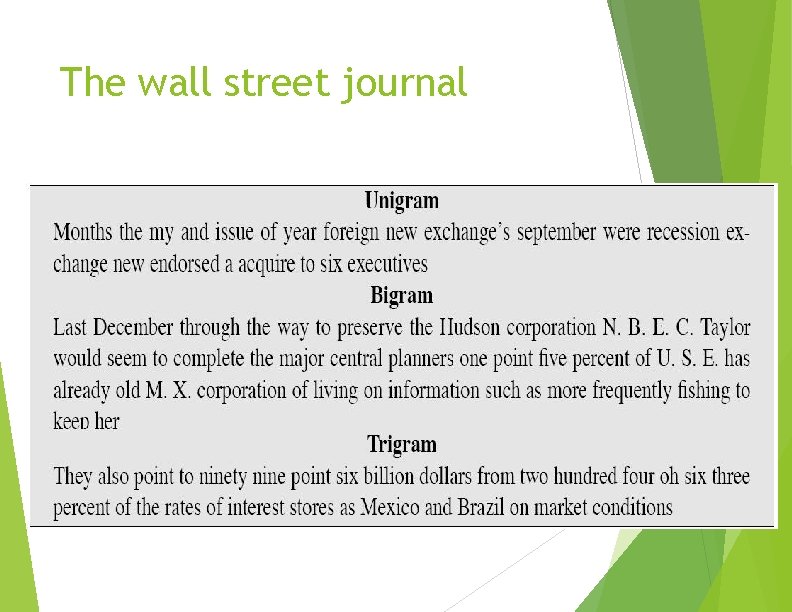

The wall street journal

Text Classification and Naïve Bayes The Task of Text Classification

Text Classification (Dan Jurafsky)

- Assigning subject categories, topics, or genres
- Spam detection
- Authorship identification
- Age/gender identification
- Language identification
- Sentiment analysis
- …

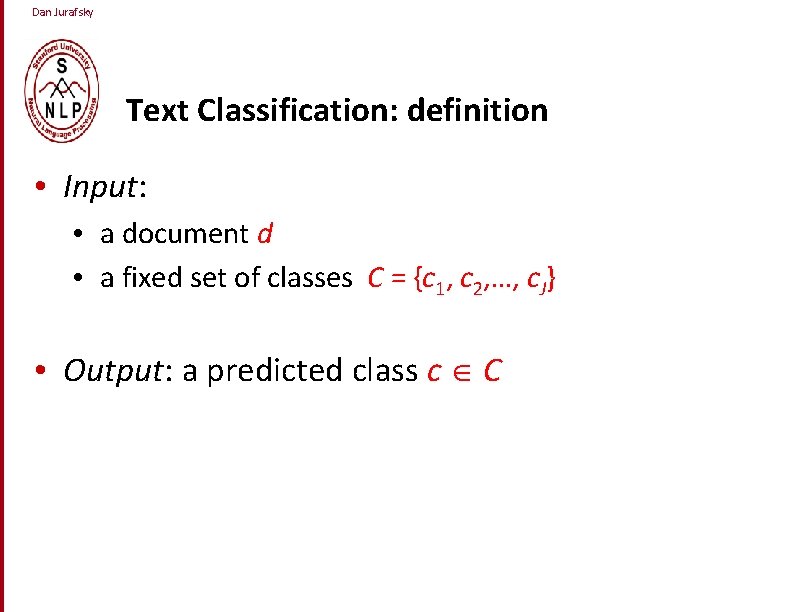

Text Classification: definition

- Input:
  - a document $d$
  - a fixed set of classes $C = \{c_1, c_2, \ldots, c_J\}$
- Output: a predicted class $c \in C$

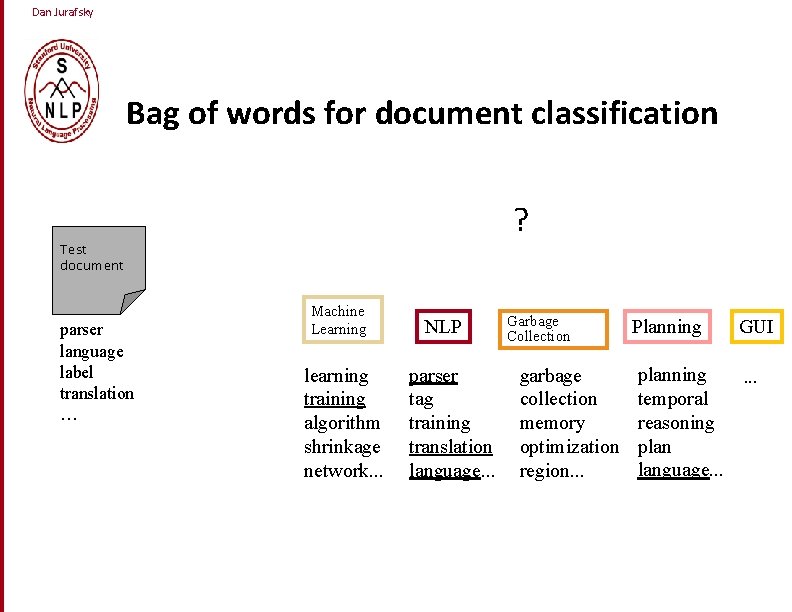

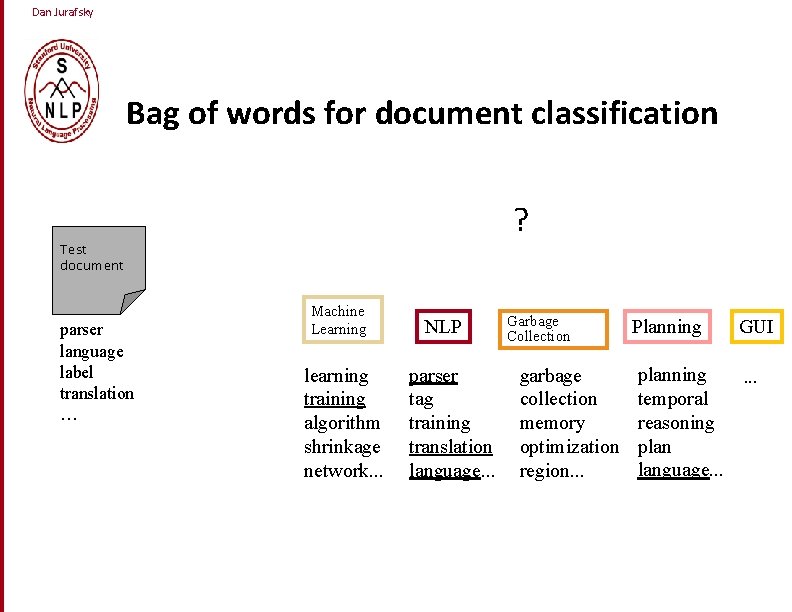

Bag of words for document classification

Test document (class = ?): parser, language, label, translation, …

Candidate classes and their typical words:

- Machine Learning: learning, training, algorithm, shrinkage, network, ...
- NLP: parser, tag, training, translation, language, ...
- Garbage Collection: garbage, collection, memory, optimization, region, ...
- Planning: planning, temporal, reasoning, plan, language, ...
- GUI: ...

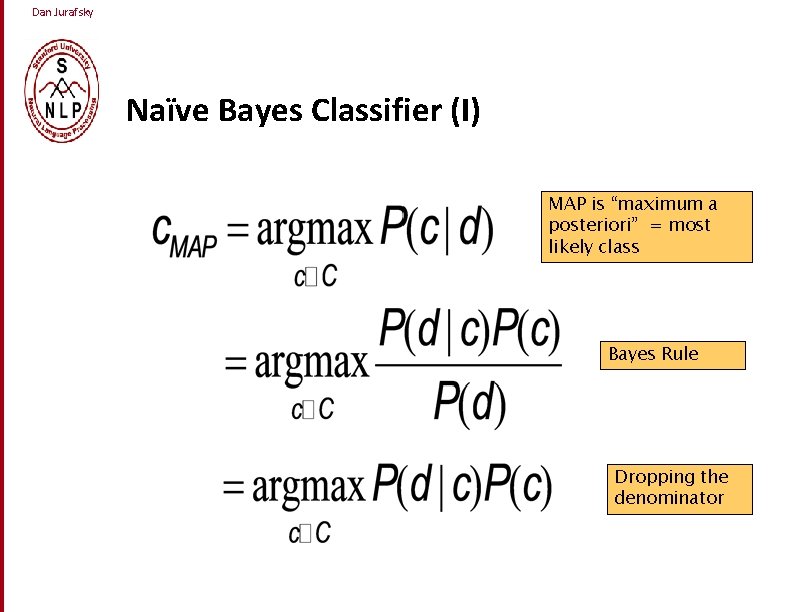

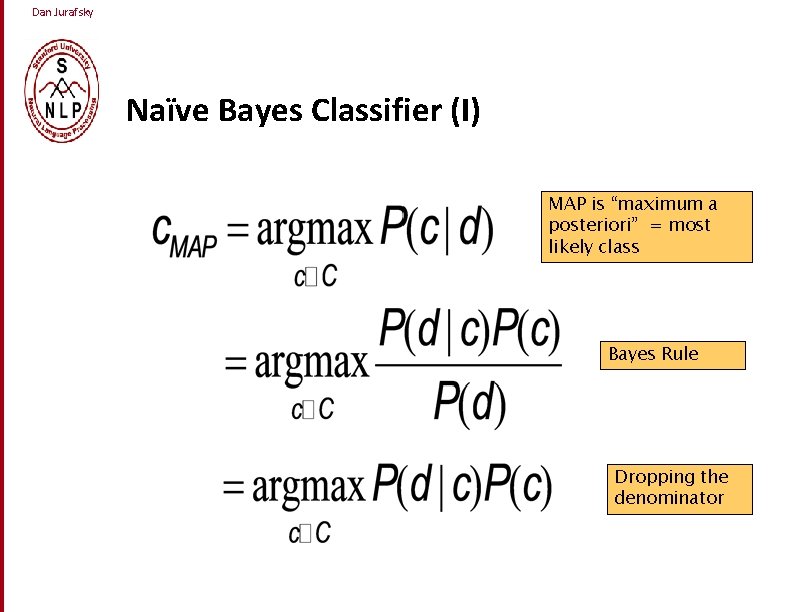

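Naïve Bayes Classifier (I)

MAP is “maximum a posteriori” = most likely class:

$$c_{MAP} = \arg\max_{c \in C} P(c \mid d)$$

Bayes Rule:

$$c_{MAP} = \arg\max_{c \in C} \frac{P(d \mid c)\,P(c)}{P(d)}$$

Dropping the denominator:

$$c_{MAP} = \arg\max_{c \in C} P(d \mid c)\,P(c)$$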

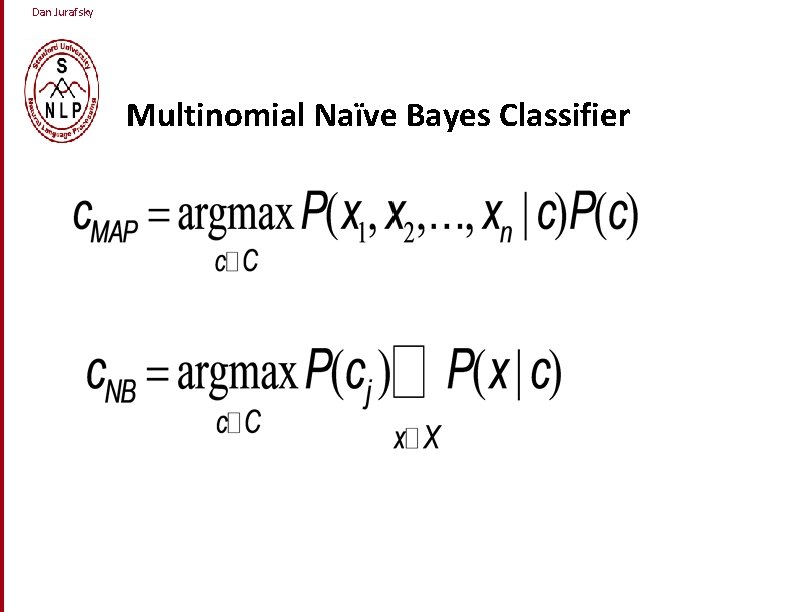

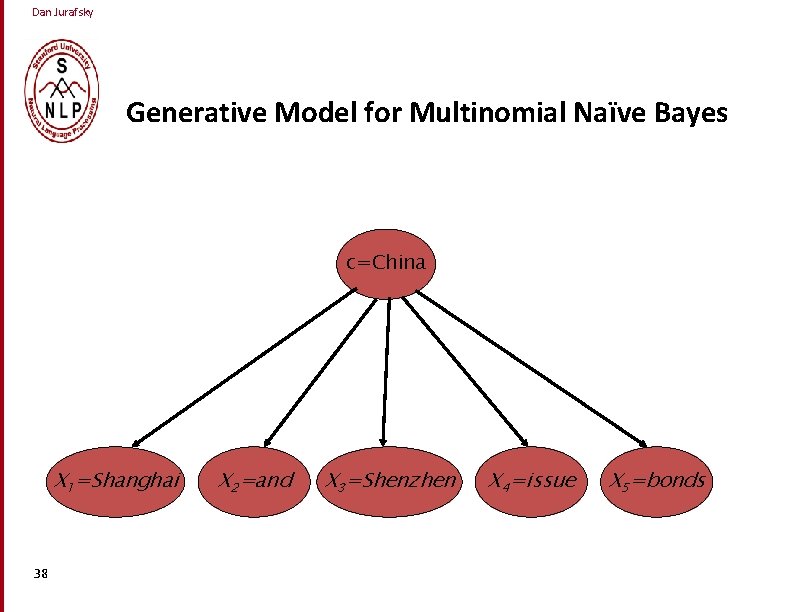

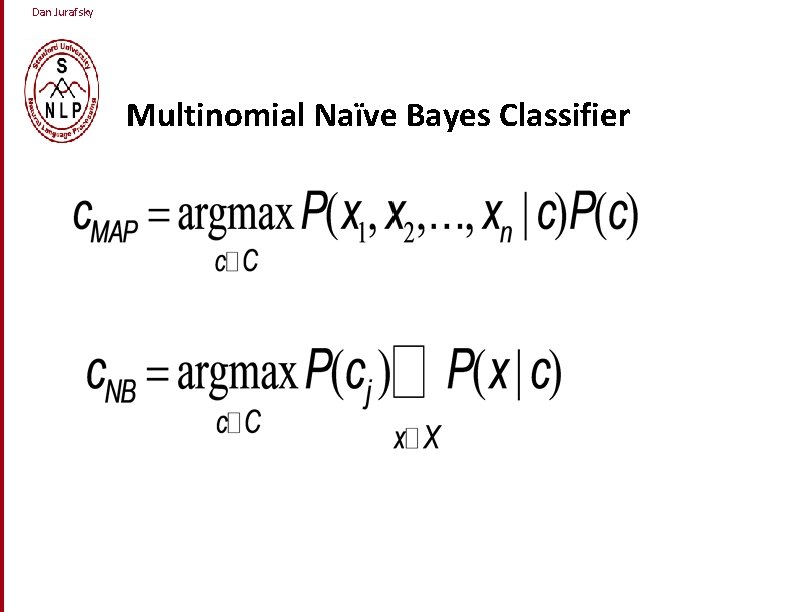

Multinomial Naïve Bayes Classifier

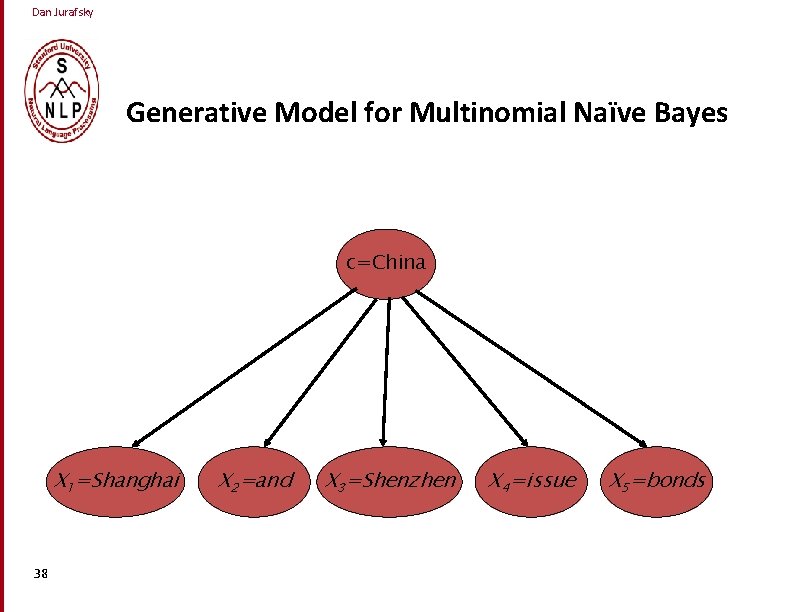

Generative Model for Multinomial Naïve Bayes

- Class: c = China
- Words: X1 = Shanghai, X2 = and, X3 = Shenzhen, X4 = issue, X5 = bonds
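A multinomial Naïve Bayes classifier picks the class that maximizes $P(c)\prod_i P(x_i \mid c)$, treating the words $x_i$ as independent given the class. The sketch below is an illustration rather than the lecture's own implementation: the three-document corpus is hypothetical (its words are borrowed from the bag-of-words slide), add-one smoothing of the word likelihoods is an assumption, and out-of-vocabulary test words are simply skipped.

```python
from collections import Counter, defaultdict
from math import log


def train_nb(docs):
    """docs: list of (words, label) pairs. Returns (log_prior, log_likelihood, vocab)."""
    class_counts = Counter(label for _, label in docs)
    word_counts = defaultdict(Counter)
    vocab = set()
    for words, label in docs:
        word_counts[label].update(words)
        vocab.update(words)

    log_prior = {c: log(k / len(docs)) for c, k in class_counts.items()}
    class_totals = {c: sum(word_counts[c].values()) for c in class_counts}

    def log_likelihood(w, c):
        # Add-one smoothing (an assumption here) keeps unseen (word, class) pairs non-zero.
        return log((word_counts[c][w] + 1) / (class_totals[c] + len(vocab)))

    return log_prior, log_likelihood, vocab


def classify(words, log_prior, log_likelihood, vocab):
    """Return argmax_c [log P(c) + sum_i log P(x_i | c)], ignoring out-of-vocabulary words."""
    scores = {
        c: lp + sum(log_likelihood(w, c) for w in words if w in vocab)
        for c, lp in log_prior.items()
    }
    return max(scores, key=scores.get)


# Hypothetical toy corpus; one "document" per class.
DOCS = [
    ("learning training algorithm network".split(), "Machine Learning"),
    ("parser tag training translation language".split(), "NLP"),
    ("garbage collection memory optimization".split(), "Garbage Collection"),
]

if __name__ == "__main__":
    model = train_nb(DOCS)
    print(classify("parser language label translation".split(), *model))
```

Working in log space turns the product into a sum and avoids underflow on long documents; the argmax is unchanged because the logarithm is monotonic.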
