Statistical Natural Language Processing Advanced AI Part II

- Slides: 18

Statistical Natural Language Processing. Advanced AI, Part II. Luc De Raedt, University of Freiburg, WS 2005/2006. Many slides taken from Helmut Schmid.

Topic

- Statistical Natural Language Processing
- Applies
  - Machine Learning / Statistics to
    - Learning: the ability to improve one’s behaviour at a specific task over time; involves the analysis of data (statistics)
  - Natural Language Processing
- Following parts of the book
  - Statistical NLP (Manning and Schuetze), MIT Press, 1999.

Rationalism versus Empiricism

- Rationalist
  - Noam Chomsky: innate language structures
  - AI: hand coding NLP
  - Dominant view 1960-1985
  - Cf. e.g. Steven Pinker’s The Language Instinct (popular science book)
- Empiricist
  - Ability to learn is innate
  - AI: language is learned from corpora
  - Dominant 1920-1960 and becoming increasingly important

Rationalism versus Empiricism

- Noam Chomsky:
  - "But it must be recognized that the notion of 'probability of a sentence' is an entirely useless one, under any known interpretation of this term."
- Fred Jelinek (IBM, 1988):
  - "Every time a linguist leaves the room the recognition rate goes up."
  - (Alternative: "Every time I fire a linguist the recognizer improves.")

This course

- Empiricist approach
  - Focus will be on probabilistic models for learning of natural language
- No time to treat natural language in depth!
  - (though this would be quite useful and interesting)
  - Deserves a full course by itself
    - Covered in more depth in Logic, Language and Learning (SS 05, prob. SS 06)

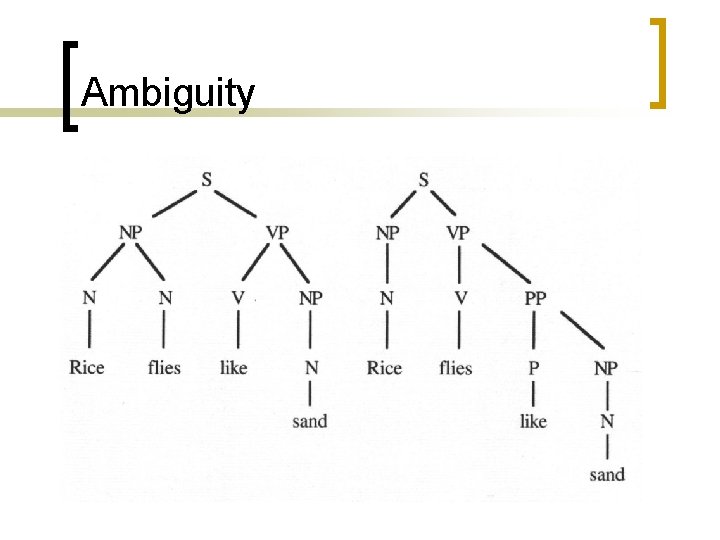

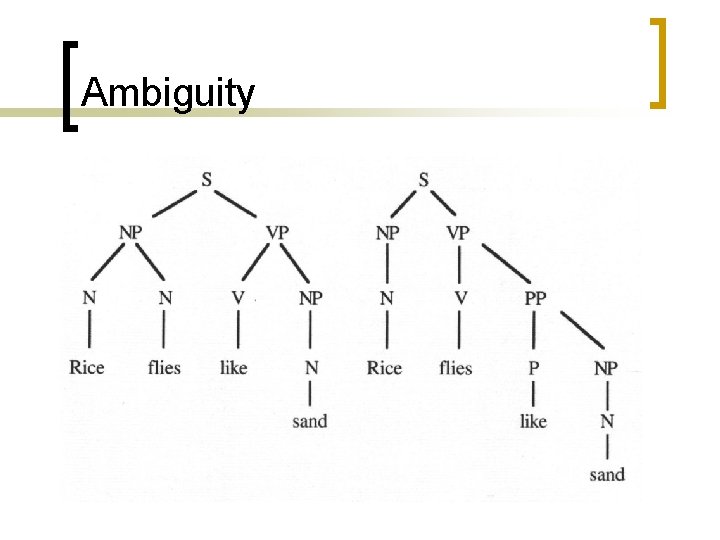

Ambiguity

NLP and Statistics

Statistical Disambiguation

- Define a probability model for the data
- Compute the probability of each alternative
- Choose the most likely alternative
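The three steps above can be sketched as follows. This is only an illustration: the two senses of "bank", the prior and likelihood tables, and the function names are all hypothetical, and the numbers are made up rather than estimated from a corpus.

```python
# Step 1: a (made-up) probability model for the senses of the word "bank".
# PRIOR[s] plays the role of P(sense); LIKELIHOOD[s][w] of P(context word | sense).
PRIOR = {"finance": 0.7, "river": 0.3}
LIKELIHOOD = {
    "finance": {"money": 0.30, "water": 0.01},
    "river": {"money": 0.02, "water": 0.25},
}


def posteriors(context_word):
    """Step 2: compute P(sense | context word) for every alternative (Bayes' rule)."""
    joint = {s: PRIOR[s] * LIKELIHOOD[s][context_word] for s in PRIOR}
    total = sum(joint.values())
    return {s: p / total for s, p in joint.items()}


def disambiguate(context_word):
    """Step 3: choose the most likely alternative."""
    post = posteriors(context_word)
    return max(post, key=post.get)


if __name__ == "__main__":
    for word in ("money", "water"):
        print(word, "->", disambiguate(word), posteriors(word))
```

With these toy numbers the context word "water" selects the river sense even though the financial sense has the higher prior.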

NLP and Statistics

- Statistical methods deal with uncertainty. They predict the future behaviour of a system based on the behaviour observed in the past.
- Statistical methods require training data.
- The data in statistical NLP are the corpora.

Corpora

- Corpus: text collection for linguistic purposes
- Tokens: How many words are contained in Tom Sawyer? 71,370
- Types: How many different words are contained in Tom Sawyer? 8,018
- Hapax legomena: words appearing only once
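A minimal sketch of how these three quantities can be counted. The tokenizer (lowercasing and a simple regular expression) is an assumption made for illustration; the exact figures for a real text such as Tom Sawyer depend on how tokenization is done.

```python
import re
from collections import Counter


def corpus_stats(text):
    """Count tokens, types and hapax legomena in a text."""
    # Assumed tokenization: lowercase runs of letters and apostrophes.
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    hapax = sorted(word for word, c in counts.items() if c == 1)
    return {"tokens": len(tokens), "types": len(counts), "hapax": hapax}


if __name__ == "__main__":
    print(corpus_stats("the cat sat on the mat and the dog sat too"))
```

On the toy sentence there are 11 tokens and 8 types, and the six words that occur exactly once are the hapax legomena.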

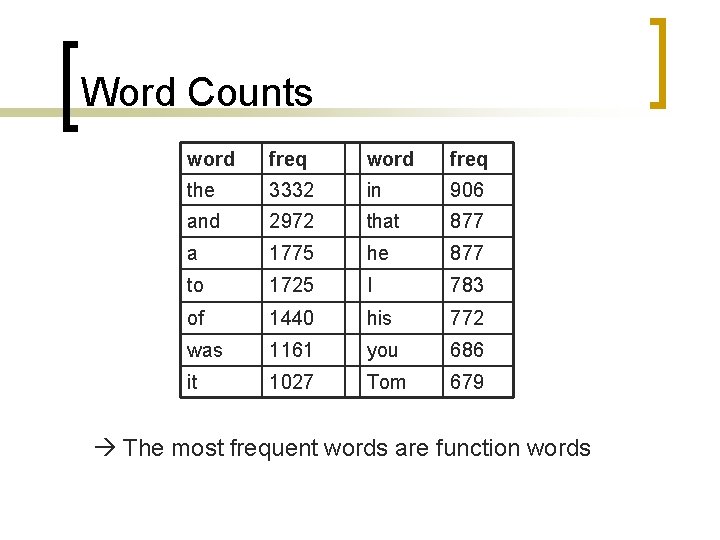

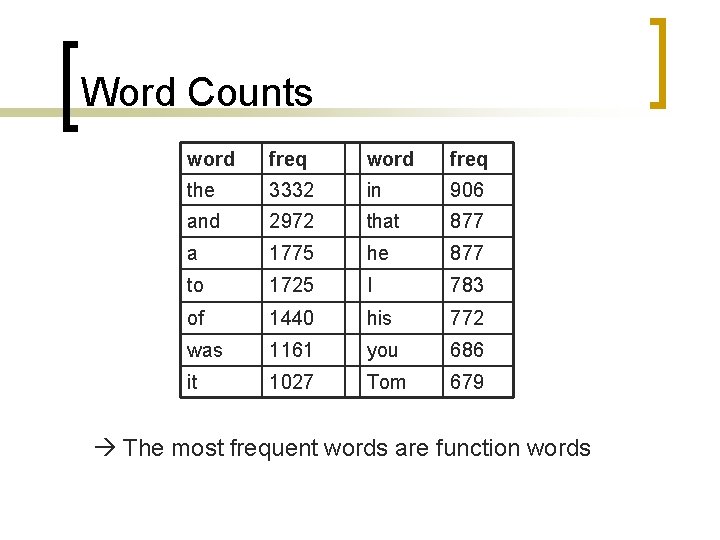

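Word Counts

| word | freq |
|------|------|
| the  | 3332 |
| and  | 2972 |
| a    | 1775 |
| to   | 1725 |
| of   | 1440 |
| was  | 1161 |
| it   | 1027 |
| in   | 906  |
| that | 877  |
| he   | 877  |
| I    | 783  |
| his  | 772  |
| you  | 686  |
| Tom  | 679  |

The most frequent words are function words.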

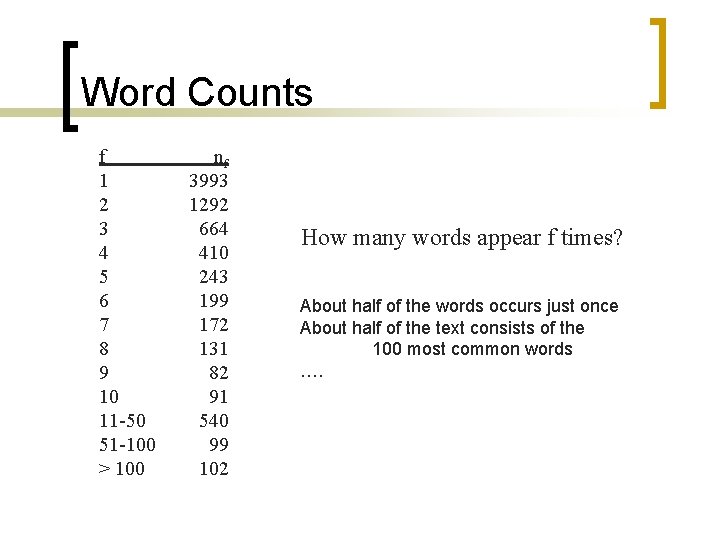

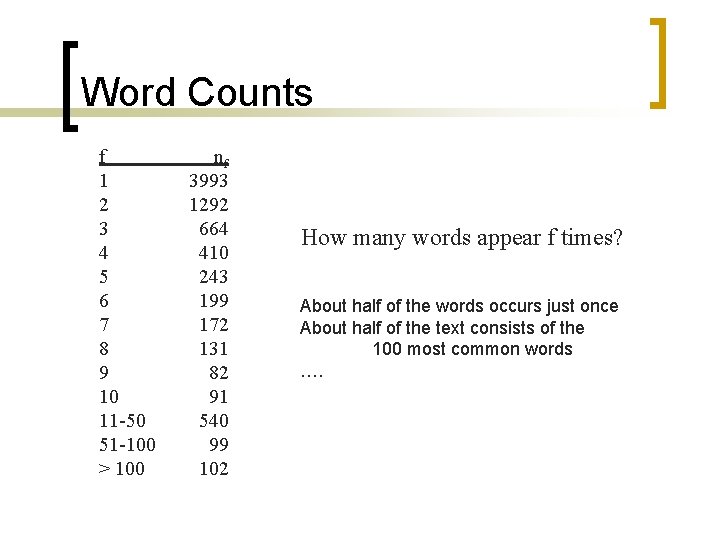

Word Counts

How many words appear f times?

| f      | n_f  |
|--------|------|
| 1      | 3993 |
| 2      | 1292 |
| 3      | 664  |
| 4      | 410  |
| 5      | 243  |
| 6      | 199  |
| 7      | 172  |
| 8      | 131  |
| 9      | 82   |
| 10     | 91   |
| 11-50  | 540  |
| 51-100 | 99   |
| > 100  | 102  |

- About half of the word types occur just once.
- About half of the text consists of the 100 most common words ….
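The "frequency of frequencies" on this slide can be computed with two nested counts. The sketch below also checks the slide's first claim against its own figures; the function name and the toy input are illustrative assumptions, while the `N_F` values are copied from the slide.

```python
from collections import Counter


def frequency_of_frequencies(tokens):
    """Map each frequency f to n_f, the number of word types that occur f times."""
    word_counts = Counter(tokens)
    return Counter(word_counts.values())


# Figures copied from the slide (Tom Sawyer); keys are the slide's frequency bins.
N_F = {
    "1": 3993, "2": 1292, "3": 664, "4": 410, "5": 243, "6": 199, "7": 172,
    "8": 131, "9": 82, "10": 91, "11-50": 540, "51-100": 99, ">100": 102,
}

total_types = sum(N_F.values())       # number of distinct words
hapax_share = N_F["1"] / total_types  # share of types that occur exactly once

if __name__ == "__main__":
    print(frequency_of_frequencies("a b a c a b d".split()))
    print(total_types, round(hapax_share, 3))
```

The bins sum to 8,018, the number of types reported for Tom Sawyer, and the words occurring once make up just under half of them.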

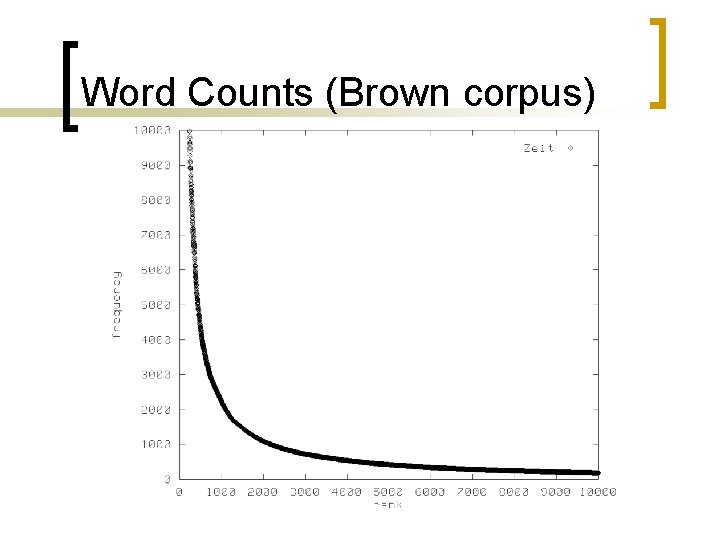

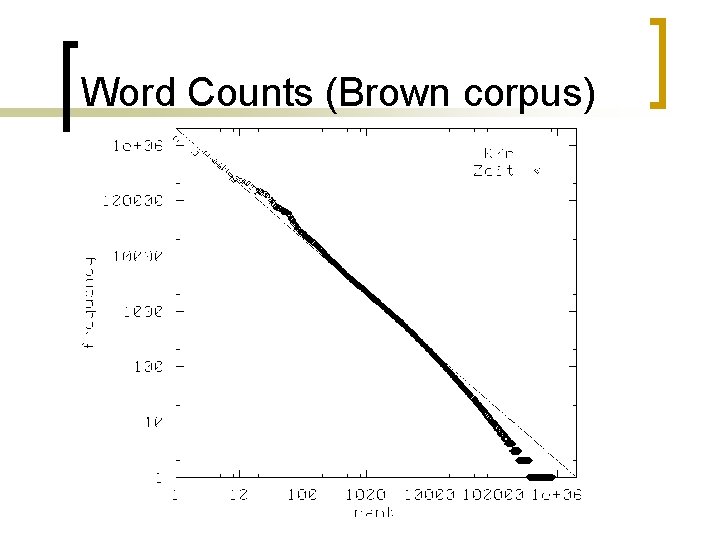

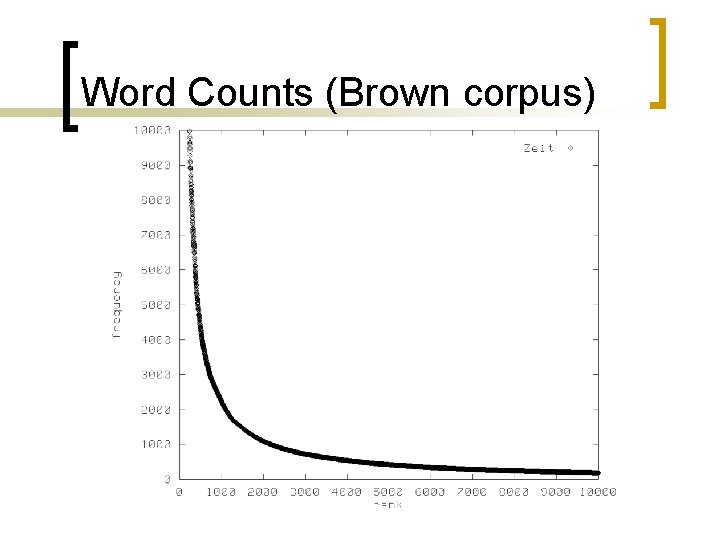

Word Counts (Brown corpus)

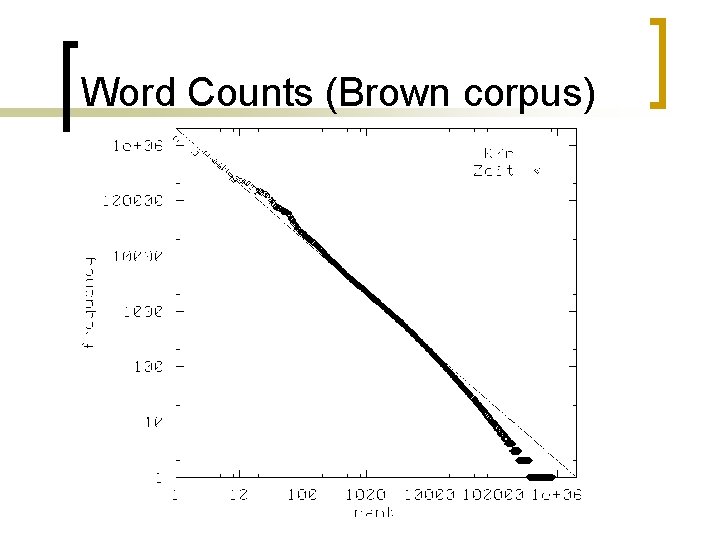

Word Counts (Brown corpus)

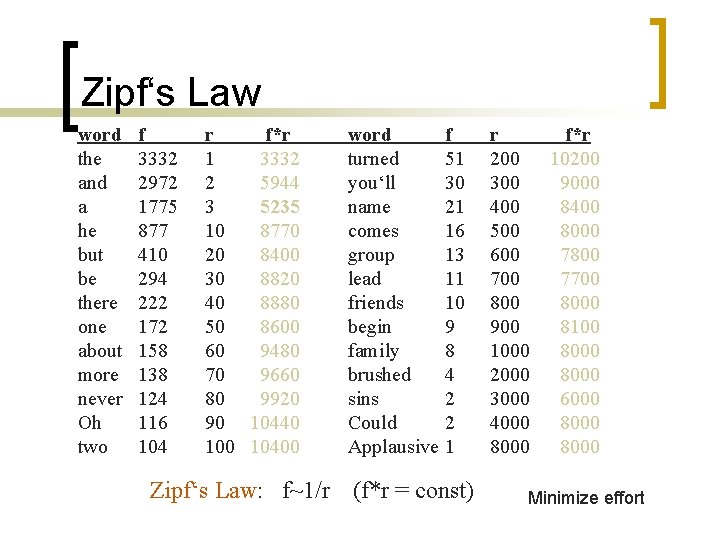

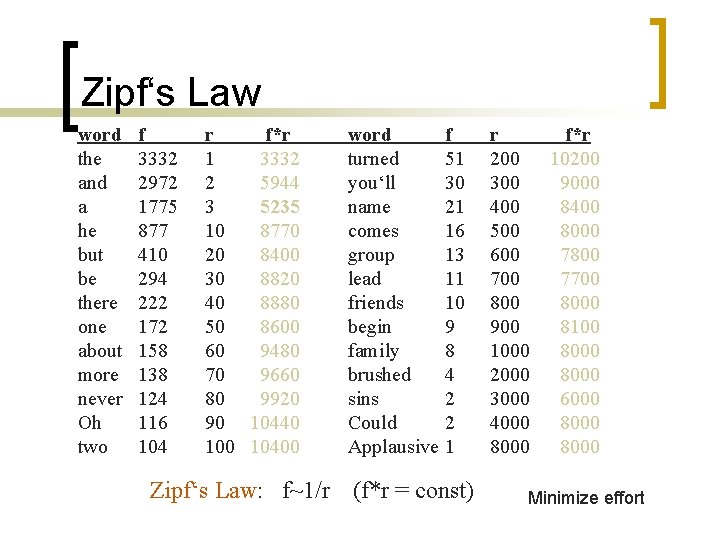

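Zipf's Law

| word       | f    | r    | f*r   |
|------------|------|------|-------|
| the        | 3332 | 1    | 3332  |
| and        | 2972 | 2    | 5944  |
| a          | 1775 | 3    | 5235  |
| he         | 877  | 10   | 8770  |
| but        | 410  | 20   | 8400  |
| be         | 294  | 30   | 8820  |
| there      | 222  | 40   | 8880  |
| one        | 172  | 50   | 8600  |
| about      | 158  | 60   | 9480  |
| more       | 138  | 70   | 9660  |
| never      | 124  | 80   | 9920  |
| Oh         | 116  | 90   | 10440 |
| two        | 104  | 100  | 10400 |
| turned     | 51   | 200  | 10200 |
| you'll     | 30   | 300  | 9000  |
| name       | 21   | 400  | 8400  |
| comes      | 16   | 500  | 8000  |
| group      | 13   | 600  | 7800  |
| lead       | 11   | 700  | 7700  |
| friends    | 10   | 800  | 8000  |
| begin      | 9    | 900  | 8100  |
| family     | 8    | 1000 | 8000  |
| brushed    | 4    | 2000 | 8000  |
| sins       | 2    | 3000 | 6000  |
| Could      | 2    | 4000 | 8000  |
| Applausive | 1    | 8000 | 8000  |

Here f is the frequency of a word and r its rank in the frequency-sorted word list.

Zipf's Law: f ~ 1/r (f*r = const)

Minimize effort (Zipf's principle of least effort)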
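A short sketch of how such a rank-frequency table can be produced from a token list. The function name and the tie-breaking rule (alphabetical order among equally frequent words) are assumptions; the four sample rows are taken from the slide.

```python
from collections import Counter


def rank_frequency(tokens):
    """Return (rank, word, frequency, frequency * rank) rows, most frequent first."""
    counts = Counter(tokens)
    # Assumed tie-breaking: equally frequent words are ordered alphabetically.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(r, word, f, f * r) for r, (word, f) in enumerate(ranked, start=1)]


# A few (word, f, r) rows copied from the slide.
SLIDE_ROWS = [("the", 3332, 1), ("he", 877, 10), ("turned", 51, 200), ("family", 8, 1000)]
slide_products = [f * r for _, f, r in SLIDE_ROWS]

if __name__ == "__main__":
    for row in rank_frequency("a a a b b c".split()):
        print(row)
    print(slide_products)
```

If Zipf's law held exactly, the last column would be constant; on the slide's data it stays within roughly the same order of magnitude across ranks 10 to 8000, with the largest deviations at the very top ranks.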

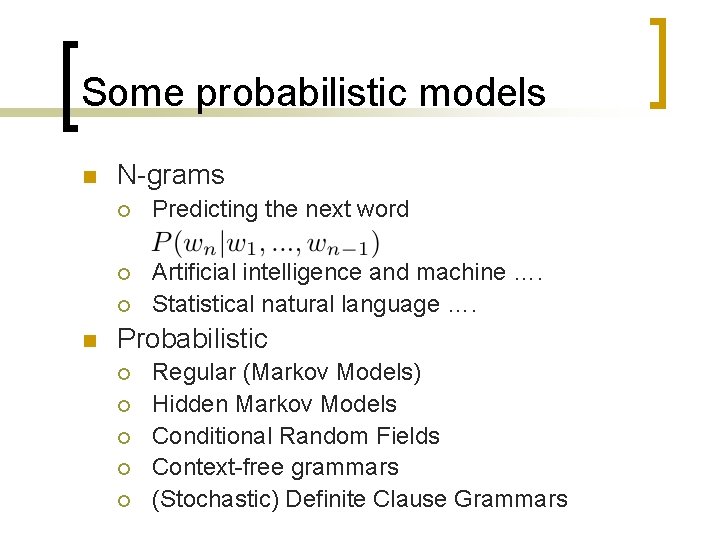

Some probabilistic models

- N-grams
  - Predicting the next word
  - Artificial intelligence and machine ….
  - Statistical natural language ….
- Probabilistic
  - Regular (Markov Models)
  - Hidden Markov Models
  - Conditional Random Fields
  - Context-free grammars
  - (Stochastic) Definite Clause Grammars
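Next-word prediction with an N-gram model can be illustrated with the simplest case, a bigram model estimated by relative frequency. This is a sketch under stated assumptions: the toy corpus and the function names are hypothetical, and no smoothing is applied, so unseen contexts yield no prediction.

```python
from collections import Counter, defaultdict


def train_bigrams(tokens):
    """Count, for every word, which words follow it and how often."""
    following = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        following[prev][nxt] += 1
    return following


def next_word_probs(following, prev):
    """Relative-frequency estimate of P(next word | previous word)."""
    counts = following.get(prev, Counter())
    total = sum(counts.values())
    return {word: c / total for word, c in counts.items()} if total else {}


def predict_next(following, prev):
    """Most probable next word, or None if the context was never seen."""
    probs = next_word_probs(following, prev)
    return max(probs, key=probs.get) if probs else None


if __name__ == "__main__":
    corpus = ("statistical natural language processing applies machine "
              "learning to natural language processing").split()
    model = train_bigrams(corpus)
    print(predict_next(model, "language"))
    print(next_word_probs(model, "natural"))
```

A trigram or higher-order model follows the same pattern, with the context key extended from one previous word to a tuple of previous words.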

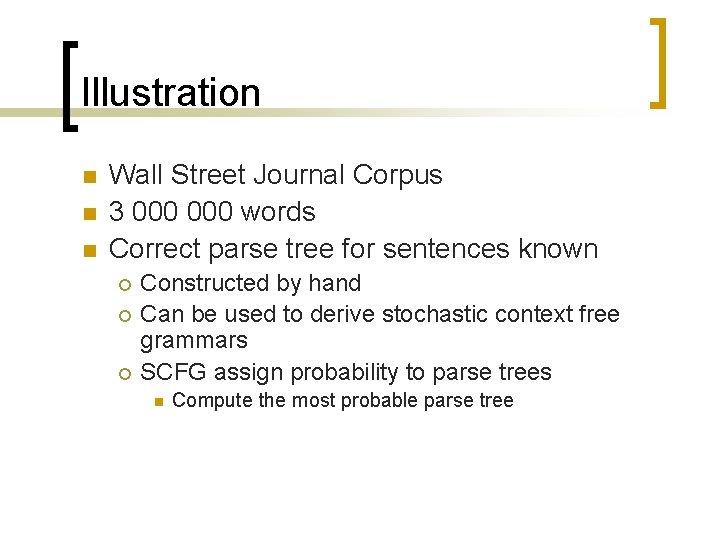

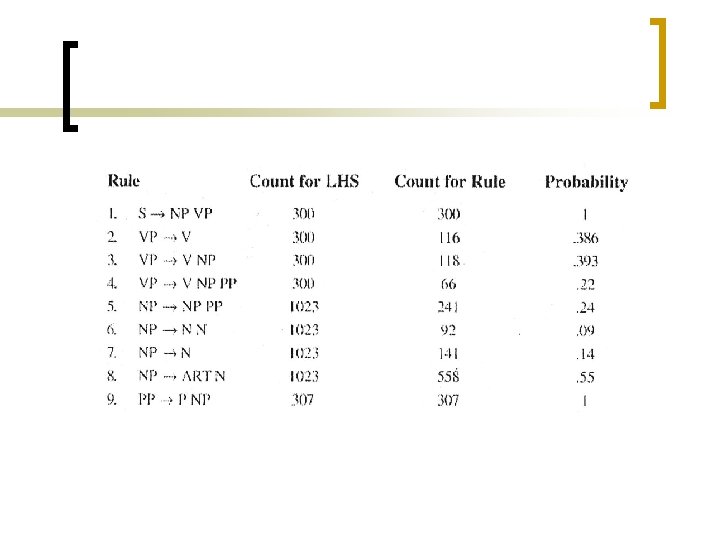

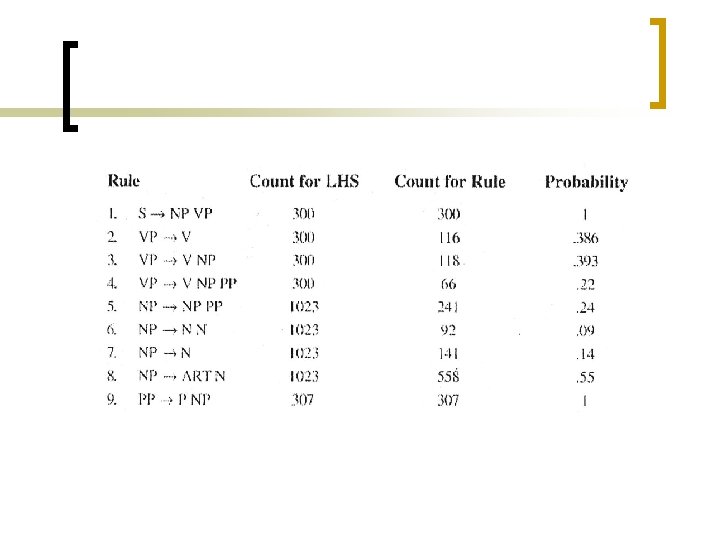

Illustration

- Wall Street Journal Corpus
  - 3 000 words
  - Correct parse tree for sentences known
    - Constructed by hand
    - Can be used to derive stochastic context free grammars
    - SCFG assign probability to parse trees
- Compute the most probable parse tree
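How an SCFG assigns a probability to a parse tree can be sketched as the product of the probabilities of all rules used in the tree. The grammar, its probabilities and the example sentence below are hypothetical and not derived from the Wall Street Journal corpus. The sketch only compares two given candidate trees; it does not implement a parser that searches over all trees.

```python
# Hypothetical toy SCFG: (left-hand side, right-hand side) -> rule probability.
# Probabilities of rules sharing a left-hand side sum to 1.
RULES = {
    ("S", ("NP", "VP")): 1.0,
    ("VP", ("V", "NP")): 0.6,
    ("VP", ("VP", "PP")): 0.4,
    ("NP", ("NP", "PP")): 0.2,
    ("NP", ("she",)): 0.3,
    ("NP", ("stars",)): 0.3,
    ("NP", ("telescopes",)): 0.2,
    ("PP", ("P", "NP")): 1.0,
    ("V", ("saw",)): 1.0,
    ("P", ("with",)): 1.0,
}


def tree_probability(tree):
    """Probability of a tree = product of the probabilities of its rules."""
    label, *children = tree
    if len(children) == 1 and isinstance(children[0], str):
        return RULES[(label, (children[0],))]  # lexical rule, e.g. NP -> she
    rhs = tuple(child[0] for child in children)
    prob = RULES[(label, rhs)]
    for child in children:
        prob *= tree_probability(child)
    return prob


# Two parses of "she saw stars with telescopes".
PP = ("PP", ("P", "with"), ("NP", "telescopes"))
VP_ATTACH = ("S", ("NP", "she"), ("VP", ("VP", ("V", "saw"), ("NP", "stars")), PP))
NP_ATTACH = ("S", ("NP", "she"), ("VP", ("V", "saw"), ("NP", ("NP", "stars"), PP)))

best_parse = max([VP_ATTACH, NP_ATTACH], key=tree_probability)

if __name__ == "__main__":
    print(tree_probability(VP_ATTACH), tree_probability(NP_ATTACH))
```

Under this toy grammar the reading in which the prepositional phrase attaches to the verb phrase is twice as probable as the one in which it attaches to the noun phrase, so it would be chosen as the most probable parse.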

Conclusions

- Overview of some probabilistic and machine learning methods for NLP
- Also very relevant to bioinformatics!
  - Analogy between parsing
    - A sentence
    - A biological string (DNA, protein, mRNA, …)