STATISTICAL METHODS FOR COMPARABILITY ASSESSMENT IN DRUG DEVELOPMENT

Yuanyuan Duan, Mark D Johnson, Yanbing Zheng, Lanju Zhang
Non-Clinical Statistics, AbbVie Inc.
May 2016

Disclosure
The presentation was sponsored by AbbVie. AbbVie contributed to the design, research, and interpretation of data, and to writing, reviewing, and approving the presentation. All authors are employees of AbbVie, Inc.
Process Comparison | May 2016 | MBSW Meeting

Agenda
• Introduction
• Current Regulatory Perspective
• Comparability Assessment
• Real-World Examples
  1. Univariate: Scale Down Model
  2. Multivariate: Dissolution Profile Comparison
  3. Linear Model: HDX MS
  4. Nonlinear Model: Parallelism testing of logistic model

Introduction
"Change is the law of life." (John F. Kennedy)

Introduction
Change occurs throughout the lifecycle of drug research, development, and manufacturing.

Introduction: Examples
• Analytical method
  o Method change
  o Critical reagent change
  o Critical method parameter change
• Manufacturing process
  o Scale up and scale down
  o Critical parameter change, e.g., temperature, pH, pressure
  o Process component change, e.g., cell line, equipment, buffer
• Transfer
  o Method transfer to another lab
  o Process transfer to another site

Current Regulatory Perspective
Regulations and Guidance for Manufacturing Changes
• FDCA Section 506A and FDAMA Section 116
• 21 CFR 601.12: Changes to an approved biologics application
• ICH Q5E: Comparability of biotechnological/biological products subject to changes in their manufacturing process
• ICH Q8: Pharmaceutical development
• ICH Q9: Quality risk management
• ICH Q10: Pharmaceutical quality system

Comparability Assessment: Purpose
• Demonstrate the comparability and quality consistency of pre- and post-change products.
• Demonstrate that the change does not have an adverse effect on the quality, safety, and efficacy of the drug product.
Different from biosimilarity/bioequivalence
• Comparability demonstrates the consistency of the same product before and after a change to its manufacturing process.
• Biosimilarity/bioequivalence demonstrates the consistency between a biosimilar/bioequivalent drug product and its reference originator drug product.

Comparability Assessment: Equivalence Test
For evaluating process or method changes, the equivalence approach is recommended.
The hypotheses for this method are:
  H0: μ1 - μ2 ≥ θ or μ1 - μ2 ≤ -θ (θ: equivalence margin)
  H1: not H0, i.e., -θ < μ1 - μ2 < θ
Recommended approaches for this analysis are:
• Confidence interval (CI) approach
• Two one-sided tests (TOST)
The challenge with this approach is identifying θ accurately.
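The TOST and confidence interval approaches named on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function name `tost_welch`, the Welch (unequal variance) form of the t statistic, and the sample values are all assumptions introduced here.

```python
import numpy as np
from scipy import stats


def tost_welch(x1, x2, theta, alpha=0.05):
    """TOST for H1: -theta < mu1 - mu2 < theta, using Welch's t (unequal variances)."""
    x1 = np.asarray(x1, dtype=float)
    x2 = np.asarray(x2, dtype=float)
    v1 = x1.var(ddof=1) / x1.size  # squared standard error of each mean
    v2 = x2.var(ddof=1) / x2.size
    diff = x1.mean() - x2.mean()
    se = np.sqrt(v1 + v2)
    # Welch-Satterthwaite approximate degrees of freedom
    df = (v1 + v2) ** 2 / (v1 ** 2 / (x1.size - 1) + v2 ** 2 / (x2.size - 1))
    p_lower = stats.t.sf((diff + theta) / se, df)   # tests H0: diff <= -theta
    p_upper = stats.t.cdf((diff - theta) / se, df)  # tests H0: diff >= +theta
    p_value = max(p_lower, p_upper)
    half_width = stats.t.ppf(1 - alpha, df) * se    # gives the (1 - 2*alpha) CI
    return {
        "diff": diff,
        "ci": (diff - half_width, diff + half_width),
        "p_value": p_value,
        "equivalent": bool(p_value < alpha),
    }


# Hypothetical measurements from two processes (not from the presentation).
process_a = [10.1, 9.8, 10.3, 10.0, 9.9]
process_b = [10.2, 10.0, 9.7, 10.1, 10.4]
wide = tost_welch(process_a, process_b, theta=1.0)
narrow = tost_welch(process_a, process_b, theta=0.05)
print(wide["equivalent"], narrow["equivalent"])
```

Rejecting both one-sided nulls at level alpha is the same decision as checking that the (1 - 2*alpha) confidence interval lies inside (-θ, θ), which is why the sketch returns both the p-value and the interval.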

Comparability Assessment: Equivalence Margin
The equivalence margin may be established using either of two approaches:
• Fixed margin:
  o Defined from expert knowledge of the process or method being evaluated.
  o Different margins are required for different assays (e.g., 1% might be suitable for HPLC; 2.5% might be suitable for a bioassay).
  o The challenge is to identify a specific number for the equivalence margin.
• Non-fixed margin:
  o A function of assay variability, e.g., θ = cσ.
  o A consistent rule across many assay methods.
  o Based on the statistical power to conclude equivalence between the methods/processes from a limited number of observations.

Real-World Examples
1. Univariate: Scale Down Model
2. Multivariate: Dissolution Profile Comparison
3. Linear Model: HDX MS
4. Nonlinear Model: Parallelism testing of logistic model

Background: Scale Down Model
• Process A: 3 L scale-down cell culture process
• Process B: 3000 L manufacturing process
• The scale-down model mimics the manufacturing process from thaw to harvest, following the same split ratios.
• Performance of the 3 L scale-down model is compared to 3000 L manufacturing-scale performance.
• Purpose: show comparability, indicating that the scale-down model is sufficiently predictive of the 3000 L production-scale process performance and acceptable for use in supporting upstream process characterization studies.

Scale Down Model Work Flow
• Define the approach used to qualify the model.
• Identify representative scale-down (i.e., 3 L) and manufacturing-scale (i.e., 3000 L) data sets.
• Determine performance and operating parameters for assessment and provide a rationale for selection.
• Determine critical quality attributes (CQA) for assessment and provide a rationale for selection.
• Perform qualitative and statistical analyses.

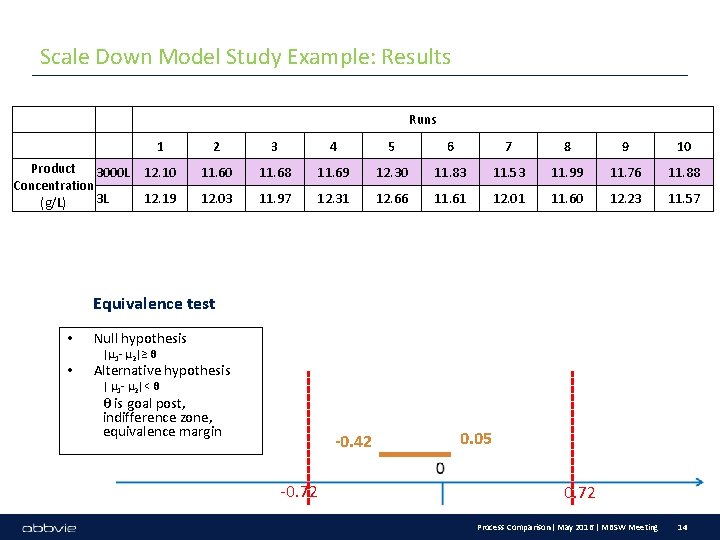

Scale Down Model Study Example: Results
Product concentration (g/L), 3000 L and 3 L processes, runs 1 to 10:
12.10 11.68 11.69 12.30 11.83 11.53 11.99 11.76 11.88 12.19 12.03 11.97 12.31 12.66 11.61 12.01 11.60 12.23 11.57
Equivalence test
• Null hypothesis: |μ1 - μ2| ≥ θ
• Alternative hypothesis: |μ1 - μ2| < θ
• θ is the goalpost (indifference zone, equivalence margin)
Equivalence plot: interval (-0.42, 0.05) shown against goalposts of ±0.72.

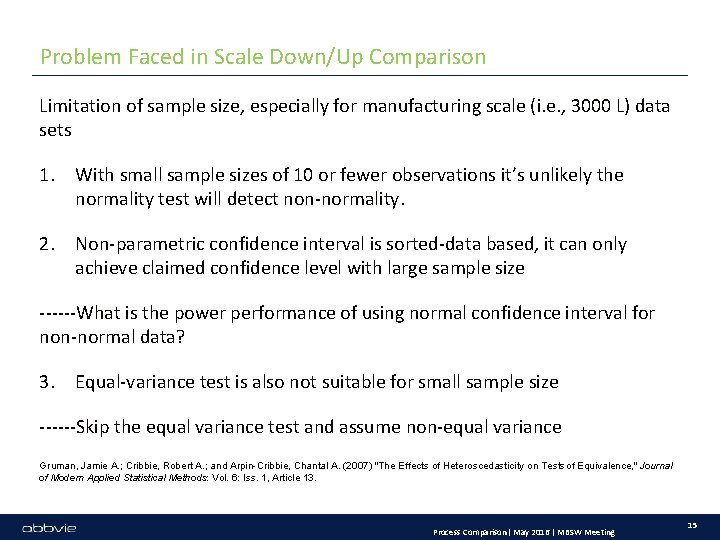

Problems Faced in Scale Down/Up Comparison
Sample size is limited, especially for manufacturing-scale (i.e., 3000 L) data sets.
1. With small samples of 10 or fewer observations, a normality test is unlikely to detect non-normality.
2. A non-parametric confidence interval is based on the sorted data and achieves its claimed confidence level only with a large sample size. Open question: what is the power of the normal-theory confidence interval for non-normal data?
3. The equal-variance test is also unsuitable for small sample sizes. Approach: skip the equal-variance test and assume unequal variances.
Gruman, Jamie A.; Cribbie, Robert A.; and Arpin-Cribbie, Chantal A. (2007). "The Effects of Heteroscedasticity on Tests of Equivalence," Journal of Modern Applied Statistical Methods: Vol. 6, Iss. 1, Article 13.

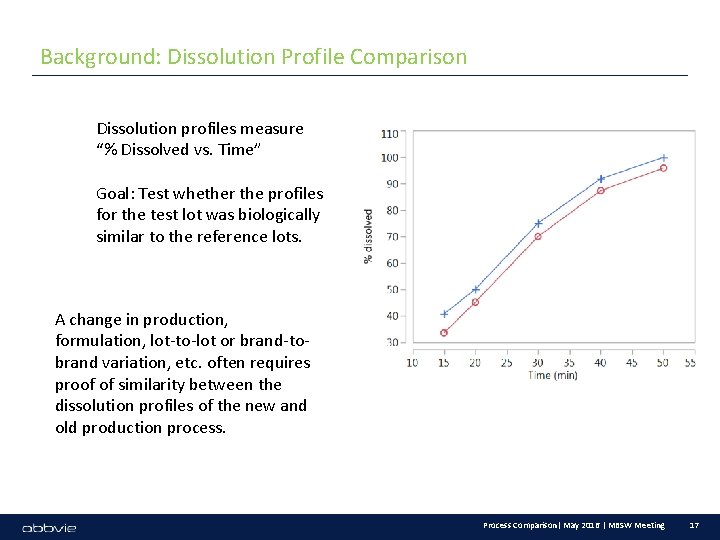

Background: Dissolution Profile Comparison
• Dissolution profiles measure “% Dissolved vs. Time.”
• Goal: test whether the profile of the test lot is biologically similar to those of the reference lots.
• A change in production or formulation, or lot-to-lot or brand-to-brand variation, often requires proof of similarity between the dissolution profiles of the new and old production processes.

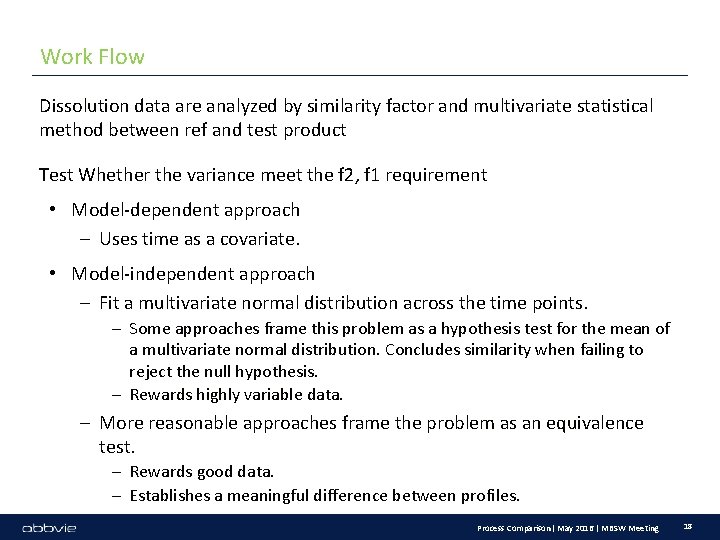

Work Flow
Dissolution data for the reference and test products are analyzed with similarity factors and multivariate statistical methods.
Test whether the variance meets the f2 and f1 requirements.
• Model-dependent approach
  – Uses time as a covariate.
• Model-independent approach
  – Fits a multivariate normal distribution across the time points.
  – Some approaches frame the problem as a hypothesis test for the mean of a multivariate normal distribution and conclude similarity when failing to reject the null hypothesis. This rewards highly variable data.
  – More reasonable approaches frame the problem as an equivalence test. This rewards good data and establishes a meaningful difference between profiles.
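The f1 (difference) and f2 (similarity) factors mentioned in the work flow are simple functions of the mean profiles. The sketch below uses the standard regulatory definitions; the function name and the profile values are hypothetical and do not come from the presentation.

```python
import numpy as np


def similarity_factors(ref_mean, test_mean):
    """Return (f1, f2) for mean % dissolved at matching time points."""
    r = np.asarray(ref_mean, dtype=float)
    t = np.asarray(test_mean, dtype=float)
    f1 = 100.0 * np.abs(r - t).sum() / r.sum()         # difference factor
    msd = np.mean((r - t) ** 2)                        # mean squared difference
    f2 = 50.0 * np.log10(100.0 / np.sqrt(1.0 + msd))   # similarity factor
    return float(f1), float(f2)


# Hypothetical mean profiles (% dissolved), not from the presentation.
reference = [30.0, 55.0, 75.0, 90.0]
test = [40.0, 65.0, 85.0, 100.0]
f1, f2 = similarity_factors(reference, test)
print(f"f1 = {f1:.1f}, f2 = {f2:.1f}")
```

A uniform 10-point gap between the profiles gives f2 just under 50, which is why f2 ≥ 50 is commonly read as "average difference of no more than about 10%". Neither factor uses the tablet-to-tablet variability, which is the motivation for the equivalence-test framing above.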

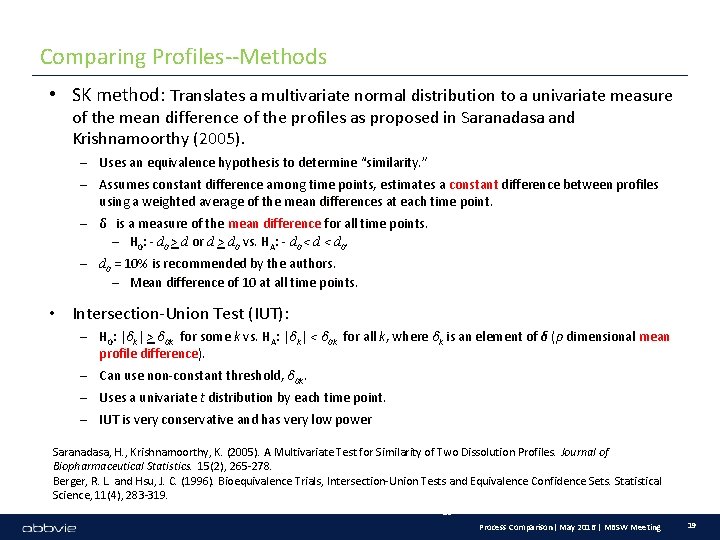

Comparing Profiles: Methods
• SK method: translates a multivariate normal distribution into a univariate measure of the mean difference of the profiles, as proposed in Saranadasa and Krishnamoorthy (2005).
  – Uses an equivalence hypothesis to determine “similarity.”
  – Assumes a constant difference among time points and estimates it as a weighted average of the mean differences at each time point.
  – δ is a measure of the mean difference for all time points.
  – H0: δ ≤ -δ0 or δ ≥ δ0 vs. HA: -δ0 < δ < δ0.
  – δ0 = 10% is recommended by the authors, i.e., a mean difference of 10 at all time points.
• Intersection-Union Test (IUT):
  – H0: |δk| ≥ δ0k for some k vs. HA: |δk| < δ0k for all k, where δk is an element of δ (the p-dimensional mean profile difference).
  – Can use a non-constant threshold, δ0k.
  – Uses a univariate t distribution at each time point.
  – The IUT is very conservative and has very low power.
Saranadasa, H., Krishnamoorthy, K. (2005). A Multivariate Test for Similarity of Two Dissolution Profiles. Journal of Biopharmaceutical Statistics, 15(2), 265-278.
Berger, R. L. and Hsu, J. C. (1996). Bioequivalence Trials, Intersection-Union Tests and Equivalence Confidence Sets. Statistical Science, 11(4), 283-319.
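The intersection-union test can be sketched as one two-sided equivalence test per time point, with similarity declared only if every time point rejects. This is an illustrative sketch under stated assumptions: the function name, the Welch form of the per-time-point t test, and the deterministic tablet data are all hypothetical.

```python
import numpy as np
from scipy import stats


def iut_similarity(ref, test, delta0, alpha=0.05):
    """Per-time-point Welch TOST; similarity requires rejection at every time point.

    ref, test: arrays of shape (n_tablets, n_timepoints), % dissolved.
    delta0: scalar margin, or one margin per time point.
    """
    ref = np.asarray(ref, dtype=float)
    test = np.asarray(test, dtype=float)
    v_ref = ref.var(axis=0, ddof=1) / ref.shape[0]
    v_test = test.var(axis=0, ddof=1) / test.shape[0]
    diff = test.mean(axis=0) - ref.mean(axis=0)
    se = np.sqrt(v_ref + v_test)
    df = (v_ref + v_test) ** 2 / (
        v_ref ** 2 / (ref.shape[0] - 1) + v_test ** 2 / (test.shape[0] - 1)
    )
    p_lower = stats.t.sf((diff + delta0) / se, df)   # H0: diff_k <= -delta0_k
    p_upper = stats.t.cdf((diff - delta0) / se, df)  # H0: diff_k >= +delta0_k
    p_k = np.maximum(p_lower, p_upper)
    return p_k, bool(np.all(p_k < alpha))


# Hypothetical, deterministic data: 12 tablets, 4 time points.
means = np.array([35.0, 60.0, 80.0, 92.0])
tablet_effect = np.linspace(-3.0, 3.0, 12)[:, None]
ref = means + tablet_effect
close = means + 2.0 + tablet_effect
far = means + np.array([2.0, 2.0, 9.5, 2.0]) + tablet_effect

p_close, similar_close = iut_similarity(ref, close, delta0=10.0)
p_far, similar_far = iut_similarity(ref, far, delta0=10.0)
print(similar_close, similar_far)
```

No multiplicity adjustment is applied because every time point must reject at level alpha. That "all must pass" rule is also what makes the test conservative: a single time point near its margin is enough to block a similarity claim.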

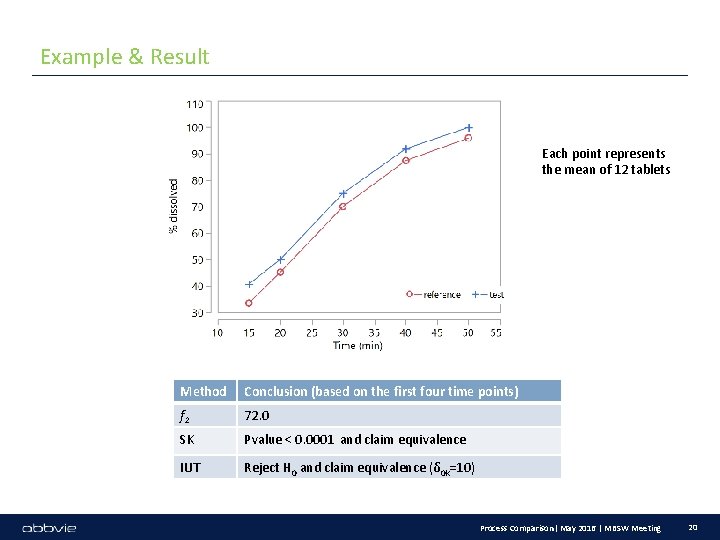

Example & Result
Each point represents the mean of 12 tablets.

| Method | Conclusion (based on the first four time points) |
|---|---|
| f2 | 72.0 |
| SK | p-value < 0.0001; claim equivalence |
| IUT | Reject H0 and claim equivalence (δ0k = 10) |

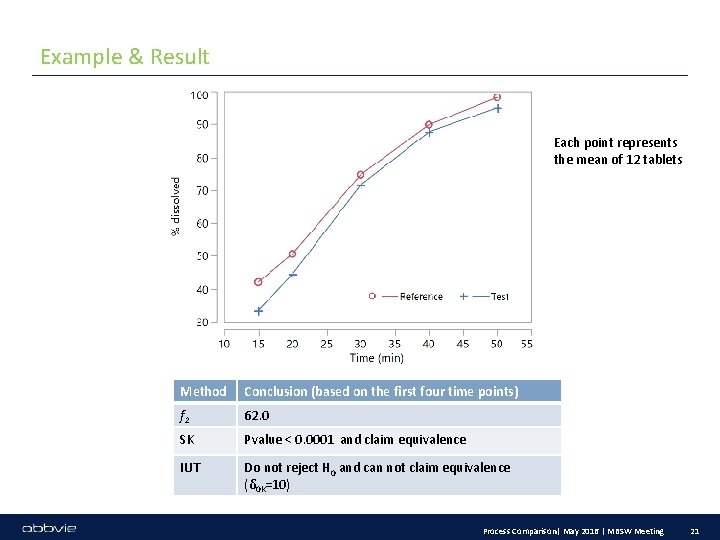

Example & Result
Each point represents the mean of 12 tablets.

| Method | Conclusion (based on the first four time points) |
|---|---|
| f2 | 62.0 |
| SK | p-value < 0.0001; claim equivalence |
| IUT | Do not reject H0; cannot claim equivalence (δ0k = 10) |

Background: HDX MS
• The function, efficacy, and safety of protein biopharmaceuticals are tied to their three-dimensional structure.
• Production of protein drugs is sensitive to the choice of cells, expression systems, and/or growth conditions (in vivo chemical modifications).
• In addition, post-translational modifications (PTM) may occur through changes in purification strategies as well as filling, vialing, and storage steps (in vitro chemical modifications).
• NMR and X-ray methods are either too complex or time consuming, or not applicable, for routine biopharmaceutical analysis.
• Hydrogen/deuterium exchange (HDX) mass spectrometry (MS) has become a key technique for understanding protein structure and dynamics.
Houde, Damian, Steven A. Berkowitz, and John R. Engen. "The utility of hydrogen/deuterium exchange mass spectrometry in biopharmaceutical comparability studies." Journal of Pharmaceutical Sciences 100.6 (2011): 2071-2086.

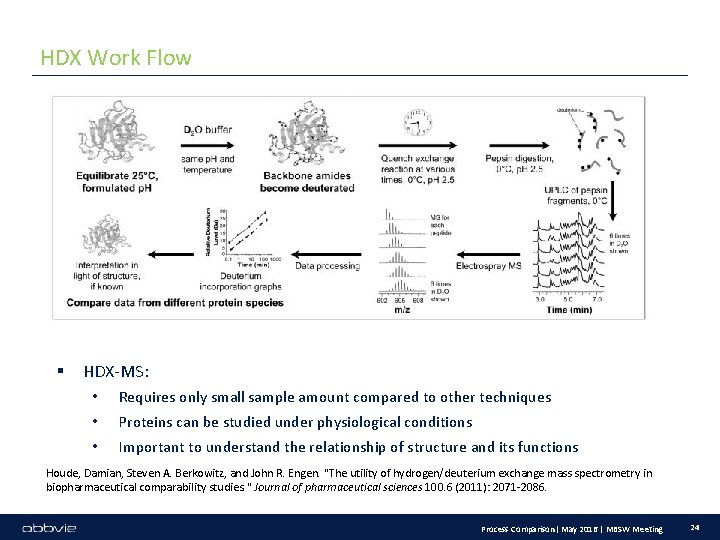

HDX Work Flow
HDX-MS:
• Requires only a small sample amount compared to other techniques
• Proteins can be studied under physiological conditions
• Important for understanding the relationship between structure and function
Source: Houde, Berkowitz, and Engen (2011).

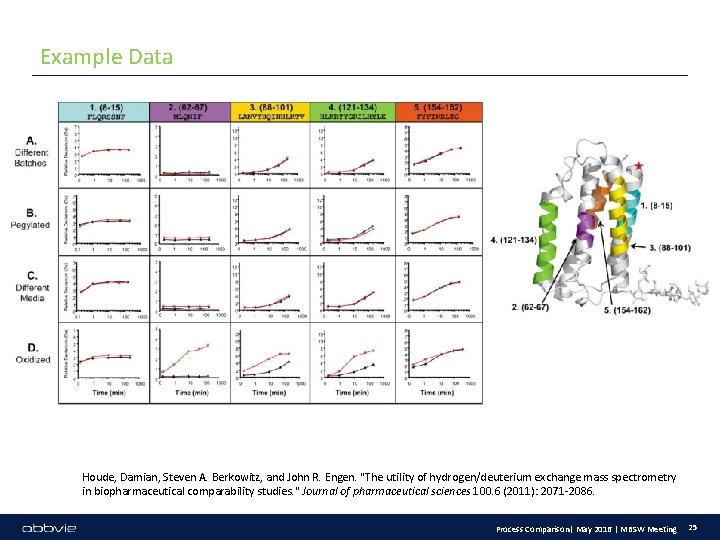

Example Data
Source: Houde, Berkowitz, and Engen (2011).

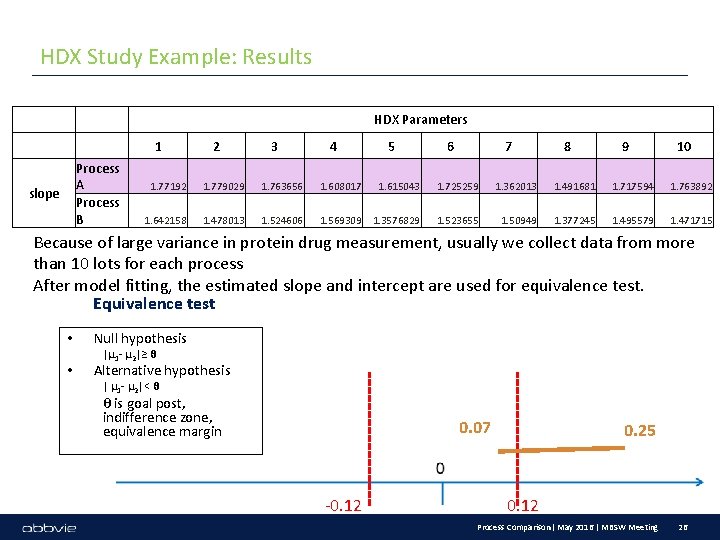

HDX Study Example: Results
HDX parameter: slope

| # | Process A | Process B |
|---|---|---|
| 1 | 1.77192 | 1.642158 |
| 2 | 1.779029 | 1.478013 |
| 3 | 1.763656 | 1.524606 |
| 4 | 1.608017 | 1.569309 |
| 5 | 1.615043 | 1.3576829 |
| 6 | 1.725259 | 1.523655 |
| 7 | 1.362013 | 1.50949 |
| 8 | 1.491681 | 1.377245 |
| 9 | 1.717594 | 1.495579 |
| 10 | 1.763892 | 1.471715 |

• Because of the large variance in protein drug measurements, data are usually collected from more than 10 lots for each process.
• After model fitting, the estimated slope and intercept are used for the equivalence test.
Equivalence test
• Null hypothesis: |μ1 - μ2| ≥ θ
• Alternative hypothesis: |μ1 - μ2| < θ
• θ is the goalpost (indifference zone, equivalence margin)
Equivalence plot: interval (0.07, 0.25) shown against goalposts of ±0.12.
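The confidence interval approach can be applied directly to the slope values on this slide. The sketch below is illustrative only. It assumes the first ten slopes belong to Process A and the last ten to Process B, that the goalpost is ±0.12 (read from the plot annotations), and that a 90% Welch interval is appropriate; the slide does not state which interval it used.

```python
import numpy as np
from scipy import stats

# Slopes transcribed from the slide; the A/B split is an assumption.
slope_a = np.array([1.77192, 1.779029, 1.763656, 1.608017, 1.615043,
                    1.725259, 1.362013, 1.491681, 1.717594, 1.763892])
slope_b = np.array([1.642158, 1.478013, 1.524606, 1.569309, 1.3576829,
                    1.523655, 1.50949, 1.377245, 1.495579, 1.471715])
theta = 0.12  # goalpost taken from the plot annotations (assumption)


def welch_ci(x1, x2, level=0.90):
    """Difference in means with a two-sided Welch confidence interval."""
    v1 = x1.var(ddof=1) / x1.size
    v2 = x2.var(ddof=1) / x2.size
    diff = x1.mean() - x2.mean()
    df = (v1 + v2) ** 2 / (v1 ** 2 / (x1.size - 1) + v2 ** 2 / (x2.size - 1))
    half_width = stats.t.ppf(0.5 + level / 2.0, df) * np.sqrt(v1 + v2)
    return diff, diff - half_width, diff + half_width


diff, lower, upper = welch_ci(slope_a, slope_b)
equivalent = bool(-theta < lower and upper < theta)
print(f"diff = {diff:.3f}, 90% CI = ({lower:.3f}, {upper:.3f}), equivalent = {equivalent}")
```

Under these assumptions the interval lands close to the values 0.07 and 0.25 recovered from the slide's plot, and its upper end exceeds the 0.12 goalpost, so the sketch does not conclude equivalence for the slope.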

Problems Faced in Linear Example
• One protein has multiple peptides. To prove comparability, all peptides must be shown to be comparable, so multiplicity needs to be considered.
• ANCOVA can also be used for process comparison (as in ICH Q1E), with lot treated as nested in process. The choice of p-value cut-off needs further discussion.
• Only the slope and intercept are compared, so the linear model assumption needs to be checked.
• If there is only one curve in each group, a parallelism test can also be used.
• Open question: how to incorporate the variability associated with slope and intercept estimation?

Background: Parallelism Testing
• Parallelism between sets of dose-response data is a prerequisite for determining relative biological activity or binding capacity.
• For example, relative potency assay methods for CMC quality control of new biological entities require statistical evaluation to demonstrate similarity between the reference standard and the sample.
• F-statistics have been applied to demonstrate curve similarity.
• Because of how the F-statistic is calculated, very precise assays generate false-negative results.
• The revised USP Chapters 〈1032〉 and 〈1034〉 suggest testing parallelism using an equivalence method.

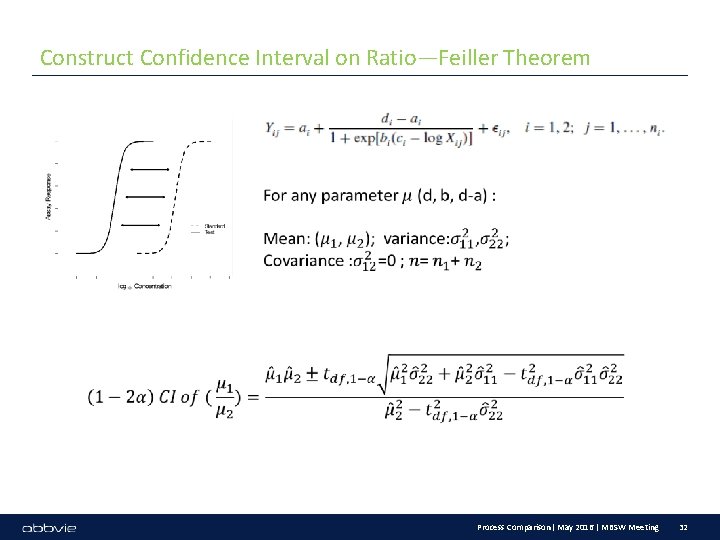

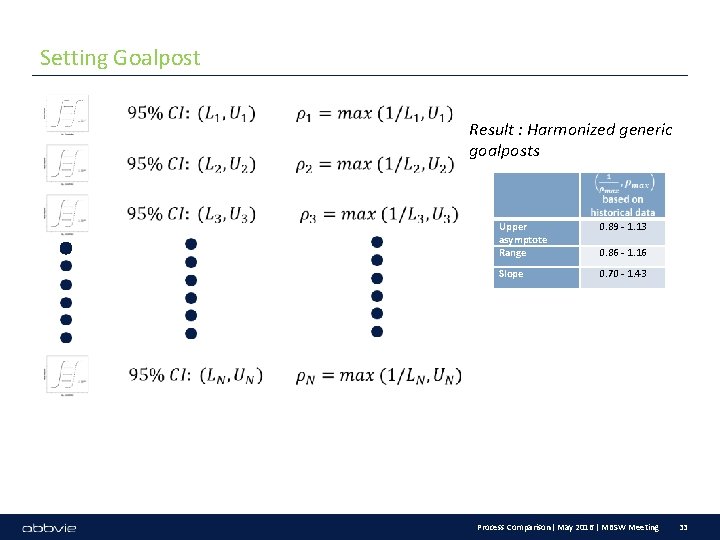

Parallelism Test Work Flow
• Measure of similarity: ratio (sample vs. reference) of upper asymptote, slope, and range (lower - upper asymptote)
• Construct confidence intervals on the ratio using Fieller's method
• Compute extreme values
• Set goalposts based on a tolerance interval of historical data

Why Ratio? To avoid differences in scale

Construct Confidence Interval on Ratio: Fieller's Theorem
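The slide's formula did not survive extraction. As a hedged reminder, the standard form of Fieller's result is given below. The symbols are introduced here for illustration and are not taken from the slide: $a$ and $b$ are the estimates of the numerator and denominator parameters, $v_{aa}$ and $v_{bb}$ their variances, $v_{ab}$ their covariance, and $t$ the critical value for the chosen confidence level.

```latex
% Fieller confidence set for the ratio \rho = \mu_a / \mu_b:
% all \rho for which a - \rho b is not significantly different from zero.
\left\{ \rho : (a - \rho b)^2 \le t^2 \left( v_{aa} - 2\rho\, v_{ab} + \rho^2 v_{bb} \right) \right\}

% Equivalently, when b^2 > t^2 v_{bb}, the interval between the two roots of
\left( b^2 - t^2 v_{bb} \right) \rho^2
  - 2 \left( ab - t^2 v_{ab} \right) \rho
  + \left( a^2 - t^2 v_{aa} \right) = 0 .
```

The condition $b^2 > t^2 v_{bb}$ says the denominator is significantly different from zero; without it the confidence set is not a bounded interval.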

Setting Goalpost
Result: harmonized generic goalposts
Upper asymptote Range 0.89 - 1.13 Slope 0.70 - 1.43 0.86 - 1.16

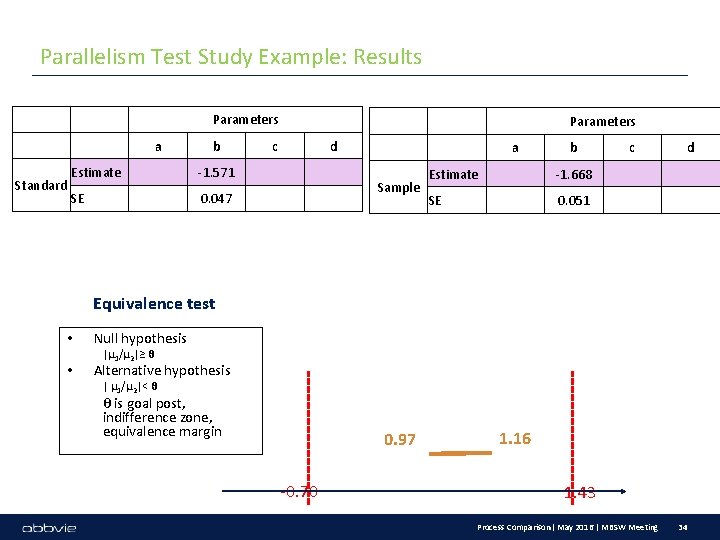

Parallelism Test Study Example: Results

| Curve | Parameter | Estimate | SE |
|---|---|---|---|
| Standard | b | -1.571 | 0.047 |
| Sample | b | -1.668 | 0.051 |

Parameters a, c, and d are listed for both curves without values.
Equivalence test
• Null hypothesis: μ1/μ2 ≤ θL or μ1/μ2 ≥ θU
• Alternative hypothesis: θL < μ1/μ2 < θU
• θL and θU are the goalposts (indifference zone, equivalence margin)
Equivalence plot: interval (0.97, 1.16) shown against goalposts of 0.70 and 1.43.
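A Fieller interval for the slope ratio can be sketched from the two estimates and standard errors on this slide. This is an illustration under assumptions that the slide does not confirm: the two fits are treated as independent (zero covariance), a normal quantile is used because the degrees of freedom are not given, and the level is set to 90%. The function name `fieller_ci` is hypothetical.

```python
import math
from statistics import NormalDist


def fieller_ci(a, se_a, b, se_b, level=0.90, cov=0.0):
    """Fieller interval for the ratio a/b; returns (lower, upper)."""
    q2 = NormalDist().inv_cdf(0.5 + level / 2.0) ** 2
    # Quadratic in rho obtained from (a - rho*b)^2 <= q^2 * Var(a - rho*b)
    qa = b * b - q2 * se_b ** 2
    qb = -2.0 * (a * b - q2 * cov)
    qc = a * a - q2 * se_a ** 2
    if qa <= 0.0:
        raise ValueError("denominator is not clearly non-zero; interval is unbounded")
    root = math.sqrt(qb * qb - 4.0 * qa * qc)
    return (-qb - root) / (2.0 * qa), (-qb + root) / (2.0 * qa)


# Slope estimates and standard errors from the slide (sample over standard).
ratio = -1.668 / -1.571
lower, upper = fieller_ci(-1.668, 0.051, -1.571, 0.047)
inside = 0.70 < lower and upper < 1.43  # slope goalposts from the slide
print(f"ratio = {ratio:.3f}, CI = ({lower:.3f}, {upper:.3f}), inside goalposts = {inside}")
```

With these settings the interval is somewhat narrower than the one recovered from the slide's plot, which suggests the presentation used a higher confidence level or a t quantile. Either way the interval sits inside the slope goalposts.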

Summary
• Reviewed the current regulatory perspective
• Reviewed the equivalence test
• Real-world examples showed how the equivalence test is used with univariate, multivariate, linear, and nonlinear models.

Thank you for your attention!

Backup Slides: Definition of power
• The power of an equivalence test is the probability of correctly concluding that the means are equivalent when they are in fact equivalent.
• When the truth is equivalence, power is the proportion of tests with p-value < 0.05 (reject the null hypothesis and conclude equivalence).
• Simulate from different distributions with different means and the same variance (normal, uniform, lognormal, exponential): Normal(mean, sigma); Uniform(mean - sigma, mean + sigma); Lognormal(log(mean), sigma); Exponential(1/mean).
• Simulate 10,000 tests of x1 and x2 with true mean difference = delta and goalpost = 3*sd(x1). For simplicity, equal variance is assumed.
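The simulation described on this slide can be sketched as follows. This is a minimal reconstruction under assumptions, not the authors' code: the function name `tost_power`, the pooled-variance t test (matching the stated equal-variance simplification), the seed, and the normal parameters (mean 10, sigma 1) are all choices made here. Other distributions can be tried by passing a different `draw` function.

```python
import numpy as np
from scipy import stats


def tost_power(draw, n, delta, n_sim=10_000, alpha=0.05, seed=0):
    """Estimate TOST power by simulation with goalpost = 3 * sd(x1).

    draw(rng, size) must return an array of the given shape.
    """
    rng = np.random.default_rng(seed)
    x1 = draw(rng, (n_sim, n))
    x2 = draw(rng, (n_sim, n)) + delta             # shift x2 by the true difference
    theta = 3.0 * x1.std(axis=1, ddof=1)           # data-driven goalpost, per test
    diff = x1.mean(axis=1) - x2.mean(axis=1)
    pooled_var = (x1.var(axis=1, ddof=1) + x2.var(axis=1, ddof=1)) / 2.0
    se = np.sqrt(pooled_var * 2.0 / n)
    df = 2 * n - 2
    p_lower = stats.t.sf((diff + theta) / se, df)
    p_upper = stats.t.cdf((diff - theta) / se, df)
    p_value = np.maximum(p_lower, p_upper)
    return float(np.mean(p_value < alpha))         # share of tests concluding equivalence


def normal(rng, size):
    return rng.normal(10.0, 1.0, size)


power_n4 = tost_power(normal, n=4, delta=0.0)
power_n10 = tost_power(normal, n=10, delta=0.0)
print(f"normal, delta=0: N=4 -> {power_n4:.2f}, N=10 -> {power_n10:.2f}")
```

Because the details of the original simulation are not fully specified, the estimates from this sketch should not be expected to match the presentation's figures exactly. The qualitative pattern, with power rising sharply between N = 4 and N = 10, is the point of the exercise.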

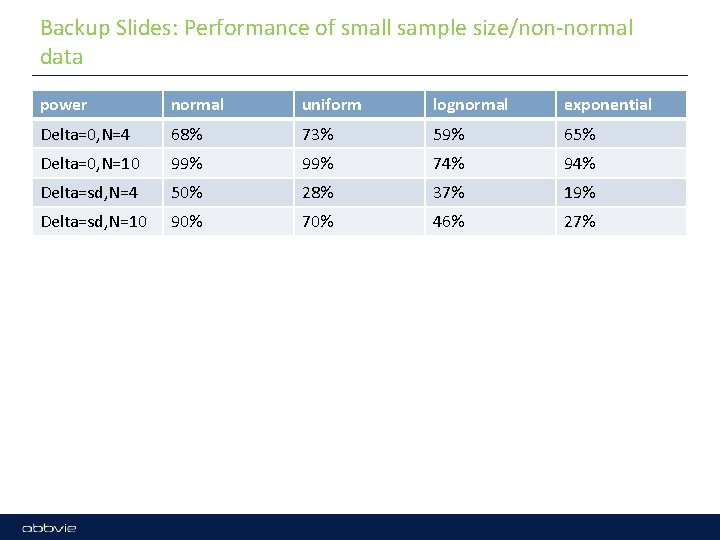

Backup Slides: Performance of small sample size/non-normal data
Power by distribution (values in slide order: normal, uniform, lognormal, exponential):
• Delta = 0, N = 4: 68%, 73%, 59%, 65%
• Delta = 0, N = 10: 99%, 74%, 94%
• Delta = sd, N = 4: 50%, 28%, 37%, 19%
• Delta = sd, N = 10: 90%, 70%, 46%, 27%
