
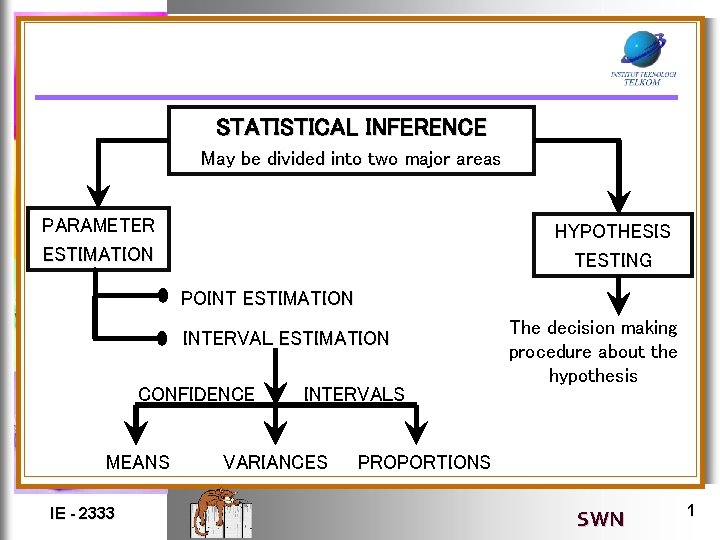

STATISTICAL INFERENCE may be divided into two major areas:
1. PARAMETER ESTIMATION, comprising POINT ESTIMATION and INTERVAL ESTIMATION (CONFIDENCE INTERVALS for MEANS, VARIANCES, and PROPORTIONS).
2. HYPOTHESIS TESTING, the decision-making procedure about the hypothesis.

IE - 2333 SWN 1

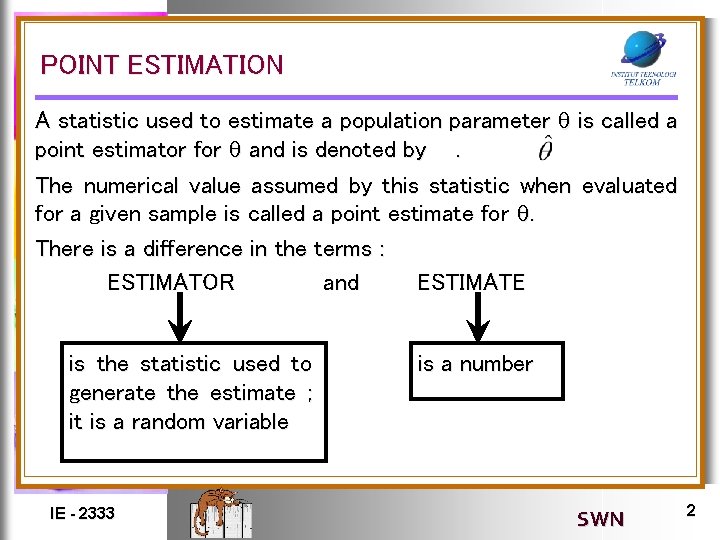

POINT ESTIMATION
A statistic used to estimate a population parameter $\theta$ is called a point estimator for $\theta$ and is denoted by $\hat{\Theta}$. The numerical value $\hat{\theta}$ assumed by this statistic when evaluated for a given sample is called a point estimate for $\theta$. There is a difference between the terms ESTIMATOR and ESTIMATE: $\hat{\Theta}$ is the statistic used to generate the estimate; it is a random variable. $\hat{\theta}$ is a number.

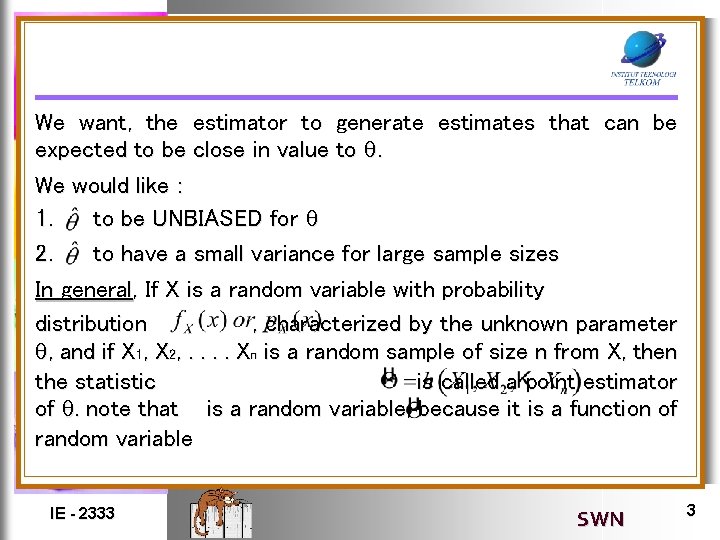

We want the estimator $\hat{\Theta}$ to generate estimates that can be expected to be close in value to $\theta$. We would like:
1. $\hat{\Theta}$ to be UNBIASED for $\theta$;
2. $\hat{\Theta}$ to have a small variance for large sample sizes.

In general, if X is a random variable with probability distribution $f(x)$, characterized by the unknown parameter $\theta$, and if $X_1, X_2, \ldots, X_n$ is a random sample of size n from X, then the statistic $\hat{\Theta} = h(X_1, X_2, \ldots, X_n)$ is called a point estimator of $\theta$. Note that $\hat{\Theta}$ is a random variable, because it is a function of random variables.
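A minimal Python sketch of the distinction between estimator and estimate, assuming a normal population with hypothetical values $\mu = 10$, $\sigma = 2$ and samples of size n = 25. The function `h` (here the sample mean) plays the role of the estimator $\hat{\Theta} = h(X_1, \ldots, X_n)$; each call on a fresh sample returns a different number, i.e. an estimate $\hat{\theta}$.

```python
import random
import statistics

MU, SIGMA, N = 10.0, 2.0, 25  # hypothetical population parameters and sample size


def h(sample):
    """Point estimator of the mean: a function of the sample X_1, ..., X_n."""
    return statistics.fmean(sample)


def draw_sample(n, mu, sigma, rng):
    """Draw a random sample of size n from an (assumed) normal population."""
    return [rng.gauss(mu, sigma) for _ in range(n)]


rng = random.Random(2333)  # fixed seed so the run is reproducible

# Five independent samples give five different estimates: the estimator
# h(X_1, ..., X_n) is a random variable, while each estimate is just a number.
estimates = [h(draw_sample(N, MU, SIGMA, rng)) for _ in range(5)]
for k, est in enumerate(estimates, start=1):
    print(f"sample {k}: estimate of mu = {est:.3f}")
```

Rerunning with a different seed changes every estimate, which is the sense in which $\hat{\Theta}$ is a random variable.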

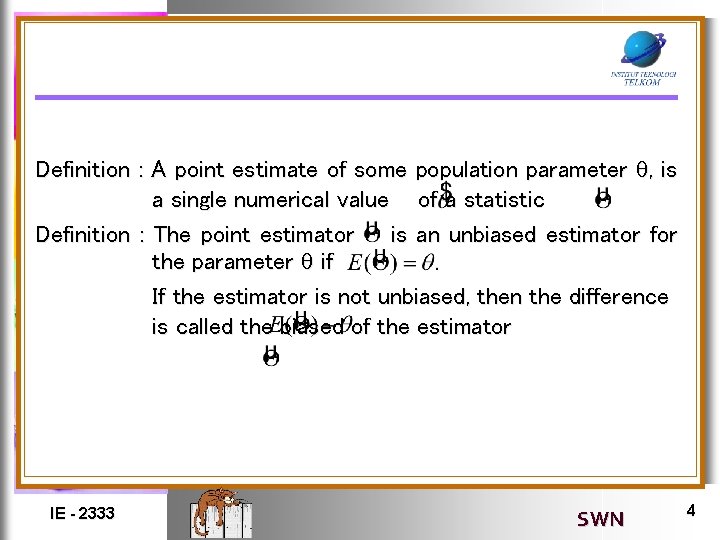

Definition: A point estimate of some population parameter $\theta$ is a single numerical value $\hat{\theta}$ of a statistic $\hat{\Theta}$.

Definition: The point estimator $\hat{\Theta}$ is an unbiased estimator for the parameter $\theta$ if

$$E(\hat{\Theta}) = \theta$$

If the estimator is not unbiased, then the difference $E(\hat{\Theta}) - \theta$ is called the bias of the estimator $\hat{\Theta}$.
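To make unbiasedness concrete, the sketch below approximates the bias $E(\hat{\Theta}) - \theta$ by simulation for two estimators of the variance $\sigma^2$: the sample variance with divisor n - 1 (`statistics.variance`) and the version with divisor n (`statistics.pvariance`). The normal population, $\sigma = 2$, n = 5, and the number of repetitions are hypothetical choices. In this setting the divisor-n estimator should show a bias close to $-\sigma^2/n = -0.8$, and the divisor n - 1 estimator a bias close to 0.

```python
import random
import statistics

MU, SIGMA, N, REPS = 0.0, 2.0, 5, 10_000  # hypothetical settings
sigma2 = SIGMA ** 2  # true parameter theta = sigma^2 = 4

rng = random.Random(1)
biased, unbiased = [], []
for _ in range(REPS):
    xs = [rng.gauss(MU, SIGMA) for _ in range(N)]
    biased.append(statistics.pvariance(xs))   # divisor n
    unbiased.append(statistics.variance(xs))  # divisor n - 1 (sample variance S^2)

# Monte Carlo approximations of the bias E(Theta_hat) - theta
bias_n = statistics.fmean(biased) - sigma2
bias_n_minus_1 = statistics.fmean(unbiased) - sigma2
print(f"bias with divisor n    : {bias_n:+.3f} (theory {-sigma2 / N:+.3f})")
print(f"bias with divisor n - 1: {bias_n_minus_1:+.3f} (theory +0.000)")
```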

VARIANCE AND MEAN SQUARE ERROR OF A POINT ESTIMATOR
A logical principle of estimation, when selecting among several estimators, is to choose the estimator that has minimum variance.

Definition: If we consider all unbiased estimators of $\theta$, the one with the smallest variance is called the minimum variance unbiased estimator (MVUE). Sometimes the MVUE is called the UMVUE, where the first U represents "uniformly", meaning "for all $\theta$".
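The following simulation illustrates the minimum-variance principle with two estimators of the mean $\mu$ of a normal population ($\mu = 5$, $\sigma = 1$, n = 11, all hypothetical): the sample mean and the sample median. Both are unbiased here because the normal distribution is symmetric, so the principle says to prefer the one with the smaller variance. This only compares two candidates; it does not by itself establish which estimator is the MVUE (for a normal population the sample mean is in fact the MVUE of $\mu$).

```python
import random
import statistics

MU, SIGMA, N, REPS = 5.0, 1.0, 11, 10_000  # hypothetical normal population

rng = random.Random(7)
means, medians = [], []
for _ in range(REPS):
    xs = [rng.gauss(MU, SIGMA) for _ in range(N)]
    means.append(statistics.fmean(xs))
    medians.append(statistics.median(xs))

# Both estimators are centred on mu for a symmetric population ...
print(f"average of sample means  : {statistics.fmean(means):.3f}")
print(f"average of sample medians: {statistics.fmean(medians):.3f}")

# ... so we prefer the one with the smaller variance.
var_mean = statistics.pvariance(means)
var_median = statistics.pvariance(medians)
print(f"Var(mean)   ~ {var_mean:.4f} (theory sigma^2/n = {SIGMA ** 2 / N:.4f})")
print(f"Var(median) ~ {var_median:.4f}")
```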

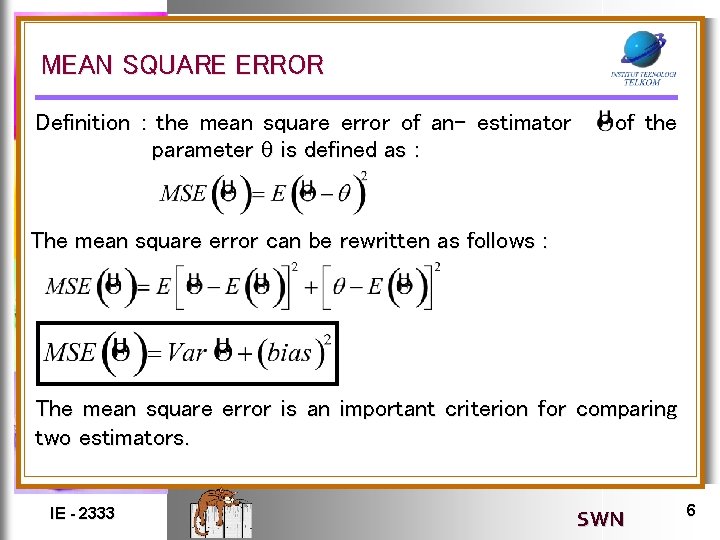

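MEAN SQUARE ERROR
Definition: The mean square error of an estimator $\hat{\Theta}$ of the parameter $\theta$ is defined as:

$$MSE(\hat{\Theta}) = E(\hat{\Theta} - \theta)^2$$

The mean square error can be rewritten as follows:

$$MSE(\hat{\Theta}) = E[\hat{\Theta} - E(\hat{\Theta})]^2 + [\theta - E(\hat{\Theta})]^2 = V(\hat{\Theta}) + (\text{bias})^2$$

The mean square error is an important criterion for comparing two estimators.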
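As a numerical check of the decomposition $MSE(\hat{\Theta}) = V(\hat{\Theta}) + (\text{bias})^2$, the sketch below simulates the divisor-n estimator of $\sigma^2$ (a biased estimator) from a normal population with hypothetical $\sigma = 2$ and n = 5. When the variance, bias, and MSE are all computed from the same simulated values, the identity holds up to floating-point rounding. The theoretical MSE for this case, $(2n-1)\sigma^4/n^2 = 5.76$, is printed for comparison.

```python
import random
import statistics

SIGMA, N, REPS = 2.0, 5, 10_000  # hypothetical settings
theta = SIGMA ** 2  # parameter being estimated: the population variance

rng = random.Random(3)
# Simulated values of the biased (divisor n) estimator of sigma^2
estimates = [
    statistics.pvariance([rng.gauss(0.0, SIGMA) for _ in range(N)])
    for _ in range(REPS)
]

mse = statistics.fmean([(e - theta) ** 2 for e in estimates])  # E(Theta_hat - theta)^2
variance = statistics.pvariance(estimates)                    # V(Theta_hat)
bias = statistics.fmean(estimates) - theta                    # E(Theta_hat) - theta

print(f"MSE        = {mse:.4f}")
print(f"V + bias^2 = {variance + bias ** 2:.4f}")
print(f"theory     = {(2 * N - 1) * SIGMA ** 4 / N ** 2:.4f}")
```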

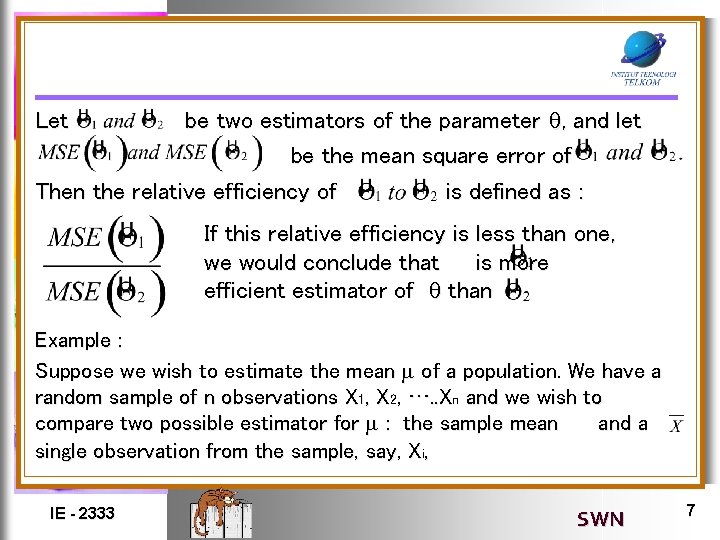

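Let $\hat{\Theta}_1$ and $\hat{\Theta}_2$ be two estimators of the parameter $\theta$, and let $MSE(\hat{\Theta}_1)$ and $MSE(\hat{\Theta}_2)$ be the mean square errors of $\hat{\Theta}_1$ and $\hat{\Theta}_2$. Then the relative efficiency of $\hat{\Theta}_2$ to $\hat{\Theta}_1$ is defined as:

$$\frac{MSE(\hat{\Theta}_1)}{MSE(\hat{\Theta}_2)}$$

If this relative efficiency is less than one, we would conclude that $\hat{\Theta}_1$ is a more efficient estimator of $\theta$ than $\hat{\Theta}_2$.

Example: Suppose we wish to estimate the mean $\mu$ of a population. We have a random sample of n observations $X_1, X_2, \ldots, X_n$, and we wish to compare two possible estimators for $\mu$: the sample mean $\bar{X}$ and a single observation from the sample, say, $X_i$.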
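A small pair of helpers for the definitions above, written as a sketch: `empirical_mse` approximates $MSE(\hat{\Theta})$ by averaging squared errors over estimates whose true parameter is known, and `relative_efficiency` returns $MSE(\hat{\Theta}_1)/MSE(\hat{\Theta}_2)$. Both function names are hypothetical, and the two lists of estimates are made-up numbers chosen only to show the calculation.

```python
import statistics
from typing import Sequence


def empirical_mse(estimates: Sequence[float], theta: float) -> float:
    """Average squared distance of the estimates from the true parameter theta."""
    return statistics.fmean([(e - theta) ** 2 for e in estimates])


def relative_efficiency(mse_1: float, mse_2: float) -> float:
    """MSE(Theta_1) / MSE(Theta_2); a value below 1 favours Theta_1."""
    return mse_1 / mse_2


# Hypothetical estimates of theta = 2 produced by two competing estimators
theta = 2.0
est_1 = [1.9, 2.1, 2.0, 1.8, 2.2]
est_2 = [1.0, 3.0, 2.5, 1.5, 2.0]

mse_1 = empirical_mse(est_1, theta)
mse_2 = empirical_mse(est_2, theta)
print(f"MSE_1 = {mse_1:.4f}, MSE_2 = {mse_2:.4f}, "
      f"relative efficiency = {relative_efficiency(mse_1, mse_2):.4f}")
```

Here $MSE(\hat{\Theta}_1) \approx 0.02$ and $MSE(\hat{\Theta}_2) = 0.5$, so the relative efficiency is about 0.04 < 1 and $\hat{\Theta}_1$ would be judged the more efficient estimator.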

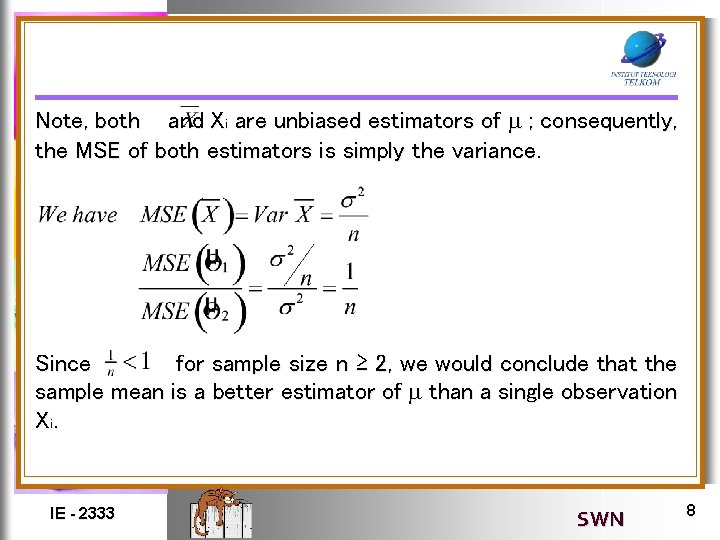

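Note that both $\bar{X}$ and $X_i$ are unbiased estimators of $\mu$; consequently, the MSE of both estimators is simply the variance. For the sample mean, $MSE(\bar{X}) = V(\bar{X}) = \sigma^2/n$, where $\sigma^2$ is the population variance, and $MSE(X_i) = V(X_i) = \sigma^2$. The relative efficiency of $X_i$ to $\bar{X}$ is therefore

$$\frac{MSE(\bar{X})}{MSE(X_i)} = \frac{\sigma^2/n}{\sigma^2} = \frac{1}{n}$$

Since $1/n < 1$ for sample size $n \ge 2$, we would conclude that the sample mean is a better estimator of $\mu$ than a single observation $X_i$.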
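A Monte Carlo check of this example, assuming a normal population with hypothetical $\mu = 50$, $\sigma = 4$ and sample size n = 10; the single observation is taken to be $X_1$. The estimated relative efficiency should come out close to $1/n = 0.1$.

```python
import random
import statistics

MU, SIGMA, N, REPS = 50.0, 4.0, 10, 20_000  # hypothetical normal population


def empirical_mse(estimates, theta):
    """Average squared error of the estimates around the true value theta."""
    return statistics.fmean([(e - theta) ** 2 for e in estimates])


rng = random.Random(11)
xbar_estimates, single_estimates = [], []
for _ in range(REPS):
    xs = [rng.gauss(MU, SIGMA) for _ in range(N)]
    xbar_estimates.append(statistics.fmean(xs))  # sample mean X-bar
    single_estimates.append(xs[0])               # single observation X_i (here i = 1)

mse_xbar = empirical_mse(xbar_estimates, MU)
mse_single = empirical_mse(single_estimates, MU)
rel_eff = mse_xbar / mse_single
print(f"MSE(X-bar) ~ {mse_xbar:.3f} (theory {SIGMA ** 2 / N:.3f})")
print(f"MSE(X_i)   ~ {mse_single:.3f} (theory {SIGMA ** 2:.3f})")
print(f"relative efficiency ~ {rel_eff:.3f} (theory 1/n = {1 / N:.3f})")
```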

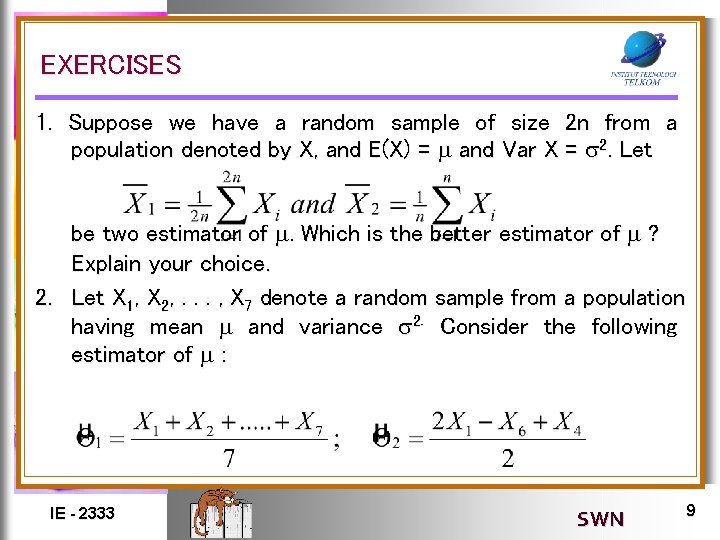

EXERCISES
1. Suppose we have a random sample of size 2n from a population denoted by X, with $E(X) = \mu$ and $V(X) = \sigma^2$. Let $\hat{\Theta}_1$ and $\hat{\Theta}_2$ be two estimators of $\mu$. Which is the better estimator of $\mu$? Explain your choice.
2. Let $X_1, X_2, \ldots, X_7$ denote a random sample from a population having mean $\mu$ and variance $\sigma^2$. Consider the following estimators of $\mu$:

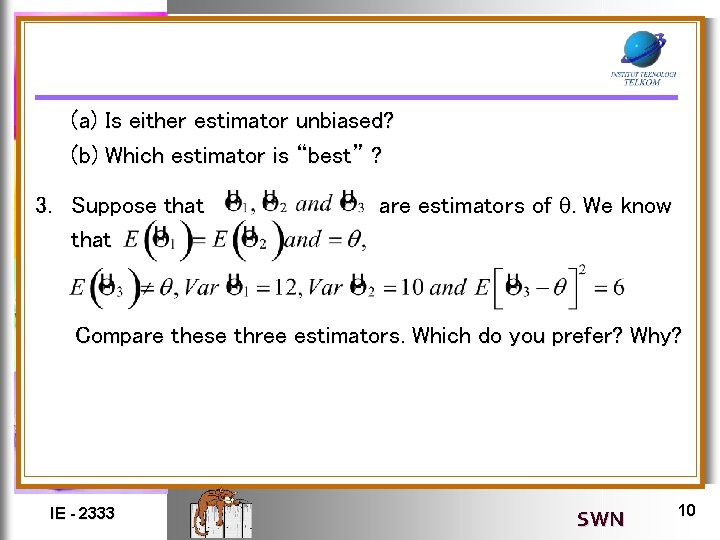

(a) Is either estimator unbiased?
(b) Which estimator is "best"?
3. Suppose that $\hat{\Theta}_1$, $\hat{\Theta}_2$, and $\hat{\Theta}_3$ are estimators of $\theta$. We know:
Compare these three estimators. Which do you prefer? Why?

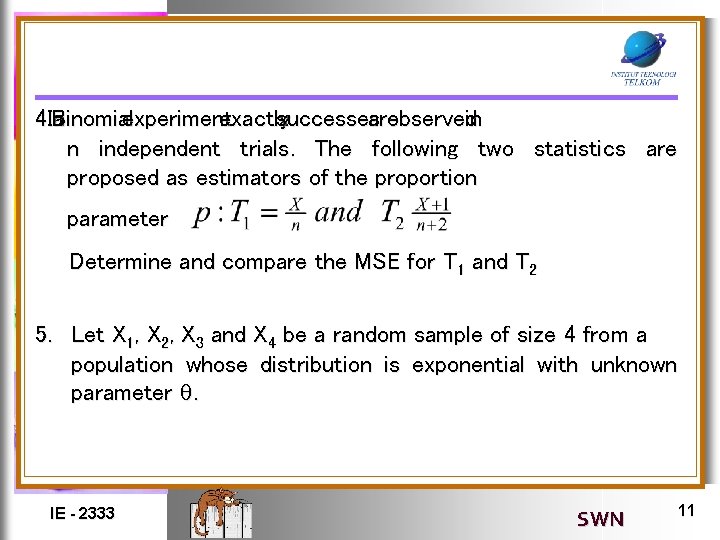

4. In a binomial experiment, exactly x successes are observed in n independent trials. The following two statistics are proposed as estimators of the proportion parameter p: Determine and compare the MSE for $T_1$ and $T_2$.
5. Let $X_1$, $X_2$, $X_3$, and $X_4$ be a random sample of size 4 from a population whose distribution is exponential with unknown parameter $\theta$.

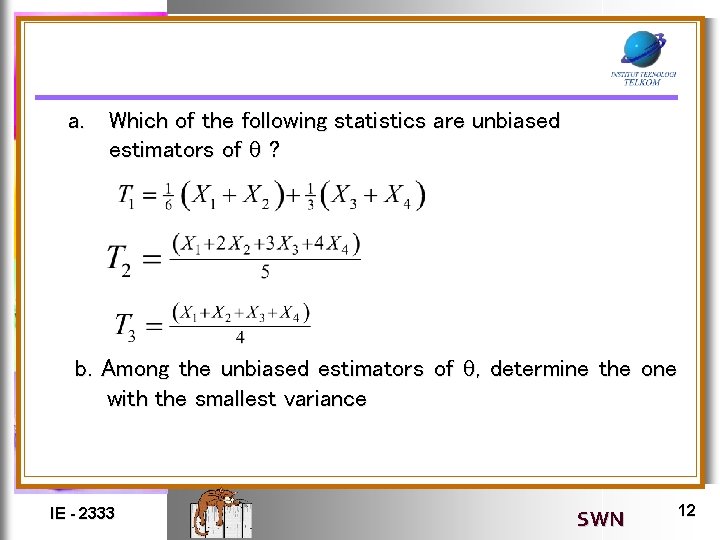

a. Which of the following statistics are unbiased estimators of $\theta$?
b. Among the unbiased estimators of $\theta$, determine the one with the smallest variance.
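For exercise 4, the MSE of any statistic of the form $T = g(X)$, with $X \sim \text{Binomial}(n, p)$, can be computed exactly by summing over the n + 1 possible values of X: $MSE(T) = \sum_{x=0}^{n} (g(x) - p)^2 \binom{n}{x} p^x (1-p)^{n-x}$. The sketch below does this with a hypothetical helper `binomial_mse`. Since the exercise's $T_1$ and $T_2$ are not reproduced here, it is demonstrated on the sample proportion X/n (unbiased, so its MSE is $p(1-p)/n$) with hypothetical n = 10 and p = 0.3. Substituting the proposed statistics for `g` and scanning p over (0, 1) would show where each one has the smaller MSE.

```python
from math import comb


def binomial_mse(g, n, p):
    """Exact MSE of the estimator T = g(X) of p, where X ~ Binomial(n, p).

    MSE(T) = sum over x = 0..n of (g(x) - p)^2 * P(X = x).
    """
    return sum(
        (g(x) - p) ** 2 * comb(n, x) * p ** x * (1 - p) ** (n - x)
        for x in range(n + 1)
    )


n, p = 10, 0.3  # hypothetical number of trials and success probability
mse_hat = binomial_mse(lambda x: x / n, n, p)  # the sample proportion X/n
print(f"exact MSE of X/n = {mse_hat:.6f}, p(1 - p)/n = {p * (1 - p) / n:.6f}")
```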
