Static Function Prefetching for Efficient Code Management on Scratchpad Memory

- Slides: 27

Static Function Prefetching for Efficient Code Management on Scratchpad Memory
Youngbin Kim, Kyoungwoo Lee (Yonsei University, Korea); Aviral Shrivastava (Arizona State University, USA)
Dependable Computing Lab., Dept. of Computer Science, Yonsei University
ICCD ’19

Summary of the Talk
- In SPM-based code management, prefetching has not been studied extensively
  - Incorrect prefetches are hard to recover from
- We propose a technique that inserts prefetch instructions at compile time, without any run-time data structure
- It reduces CPU idle time by 58.5% and execution time by 14.7% on average by letting DMA transfers and CPU execution proceed in parallel

http://dclab.yonsei.ac.kr

Outline
- Introduction
- Our Approach
  - Finding Load Locations
  - Inserting Prefetch Instructions
- Experimental Results
- Conclusion

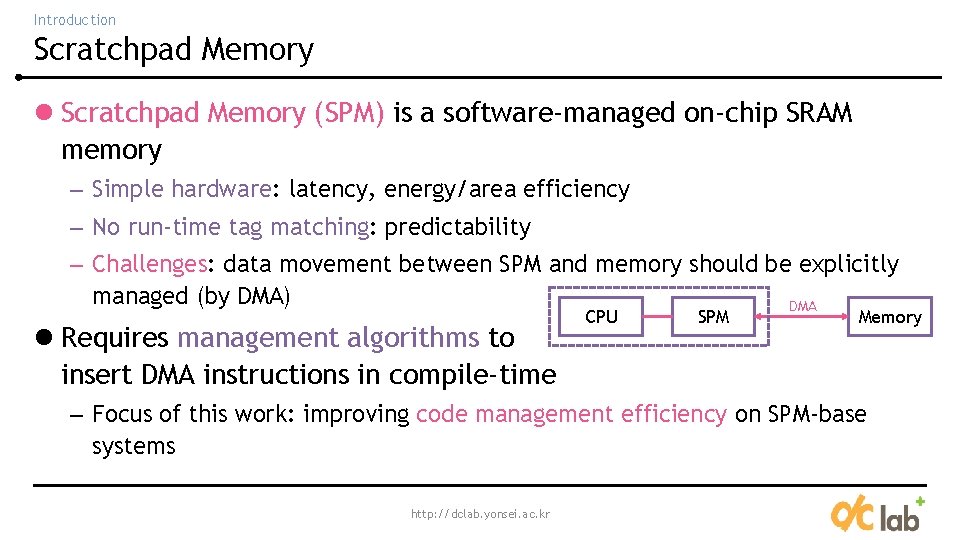

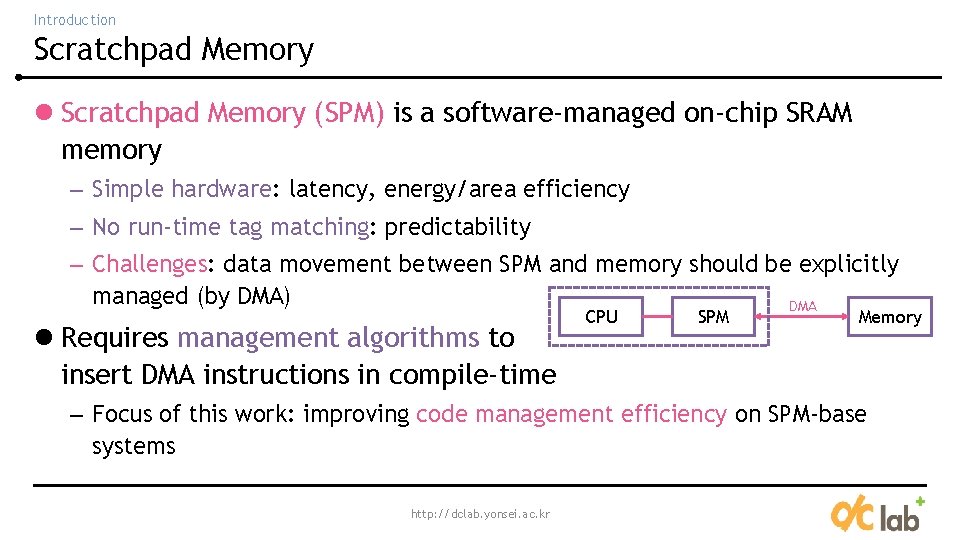

Introduction: Scratchpad Memory
- Scratchpad memory (SPM) is a software-managed on-chip SRAM
  - Simple hardware: low latency, energy and area efficiency
  - No run-time tag matching: predictability
  - Challenge: data movement between the SPM and main memory must be managed explicitly (by DMA)
- Requires management algorithms that insert DMA instructions at compile time
  - Focus of this work: improving code management efficiency on SPM-based systems

[Figure: CPU, SPM, and main memory, with a DMA engine moving data between memory and the SPM]

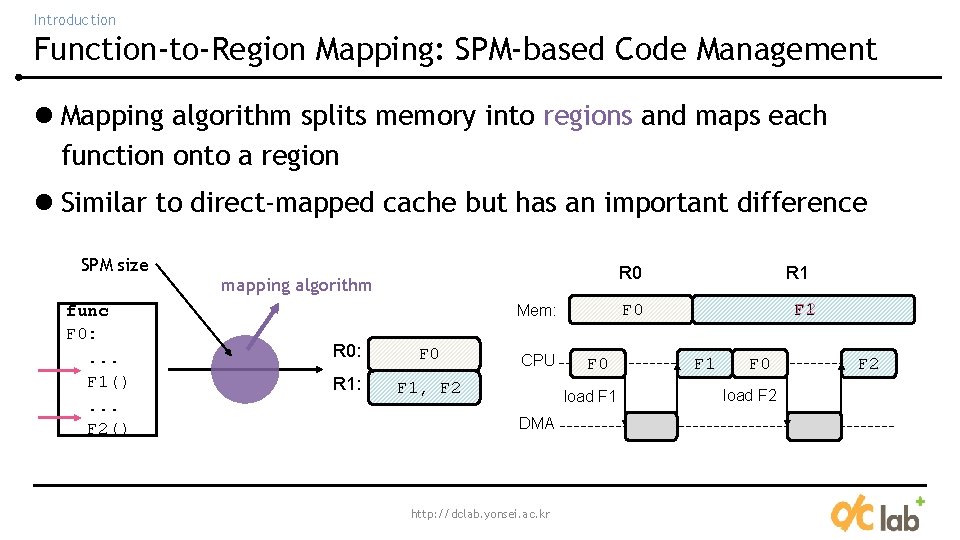

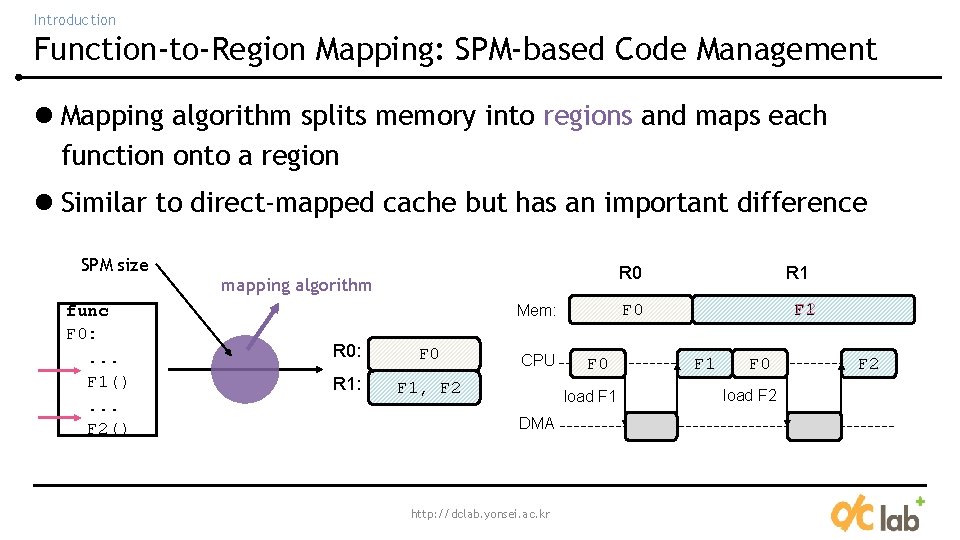

Introduction: Function-to-Region Mapping for SPM-based Code Management
- A mapping algorithm splits the SPM into regions and maps each function onto one region
- Similar to a direct-mapped cache, but with one important difference

[Figure: func F0 calls F1() and F2(); the mapping algorithm places F0 in region R0 and F1, F2 in region R1, and the DMA loads F1 and then F2 into R1 as they are called]
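To make the cache analogy concrete, here is a minimal Python sketch (not the authors' implementation) that tracks, for a straight-line sequence of calls, which function currently occupies each region and reports which calls need a load. The mapping mirrors the slide's example (F0 in R0; F1 and F2 sharing R1); the helper name `loads_needed` is hypothetical, and the model assumes returns never evict the caller.

```python
from typing import Dict, List, Optional


def loads_needed(mapping: Dict[str, str], calls: List[str],
                 resident: Optional[Dict[str, str]] = None) -> List[str]:
    """Return the callees that need a DMA load, in call order (hypothetical helper).

    `mapping` assigns each function to one SPM region. Functions that share a
    region evict each other, like conflicting lines in a direct-mapped cache.
    """
    state: Dict[str, str] = dict(resident or {})  # region -> function it currently holds
    loads: List[str] = []
    for callee in calls:
        region = mapping[callee]
        if state.get(region) != callee:
            loads.append(callee)    # callee is not resident: load it before the call
            state[region] = callee  # the load overwrites whatever was in the region
    return loads


# Slide example: F0 stays in R0 and calls F1 and then F2, which share R1.
mapping = {"F0": "R0", "F1": "R1", "F2": "R1"}
print(loads_needed(mapping, ["F1", "F2"], {"R0": "F0"}))        # ['F1', 'F2']
print(loads_needed(mapping, ["F1", "F2", "F1"], {"R0": "F0"}))  # ['F1', 'F2', 'F1']
```

The second call sequence shows the direct-mapped behavior: calling F1 again after F2 requires a fresh load because both share R1.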

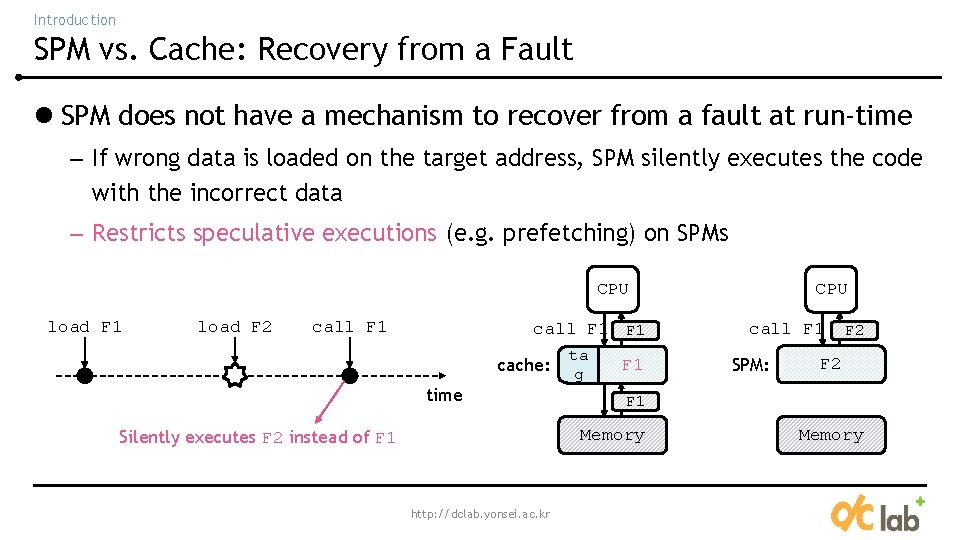

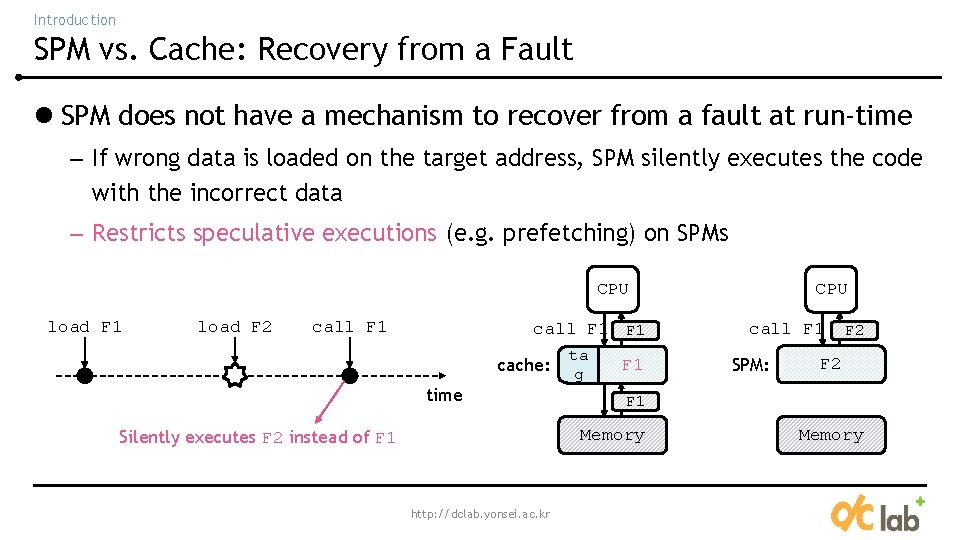

Introduction: SPM vs. Cache, Recovery from a Fault
- The SPM has no mechanism to recover from a fault at run time
  - If the wrong code is loaded at the target address, the SPM silently executes it
  - This restricts speculative execution (e.g., prefetching) on SPMs

[Figure: the CPU loads F1, then F2 into the same location, then calls F1; a cache detects the mismatch through its tag, while the SPM silently executes F2 instead of F1]
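The difference can be illustrated with a toy Python model, assuming one cache line or one SPM region shared by F1 and F2. Both classes and their methods are hypothetical: the cache stores a tag and notices that F2 has replaced F1, whereas the SPM region has no tag and hands back whatever code was loaded last.

```python
from typing import Dict, Optional


class TaggedCache:
    """Hypothetical direct-mapped cache: the stored tag lets hardware detect a miss."""

    def __init__(self) -> None:
        self.lines: Dict[str, str] = {}  # line -> tag (name of the function held)

    def fill(self, line: str, func: str) -> None:
        self.lines[line] = func

    def fetch(self, line: str, func: str) -> str:
        if self.lines.get(line) != func:  # tag mismatch: miss, refetch from memory
            self.fill(line, func)
        return self.lines[line]           # always the requested code


class Scratchpad:
    """Hypothetical SPM: regions hold raw code and nothing checks what is resident."""

    def __init__(self) -> None:
        self.regions: Dict[str, Optional[str]] = {}

    def dma_load(self, region: str, func: str) -> None:
        self.regions[region] = func

    def fetch(self, region: str, expected: str) -> Optional[str]:
        # `expected` is what the caller wants, but there is no tag to compare it with.
        return self.regions.get(region)


# Slide scenario: load F1, then F2 into the same place, then call F1.
cache, spm = TaggedCache(), Scratchpad()
for func in ("F1", "F2"):
    cache.fill("R1", func)
    spm.dma_load("R1", func)
print(cache.fetch("R1", "F1"))  # F1: the tag check forces a refetch
print(spm.fetch("R1", "F1"))    # F2: executed silently instead of F1
```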

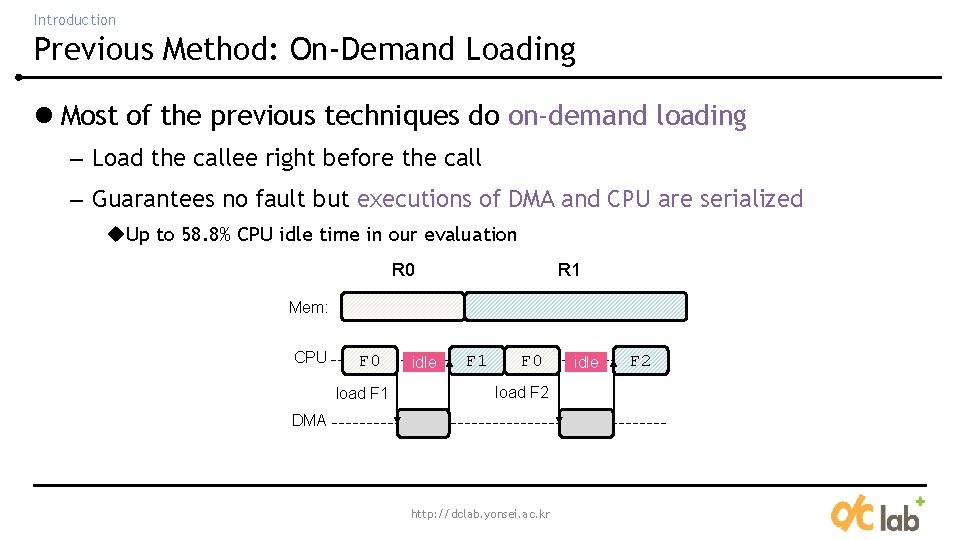

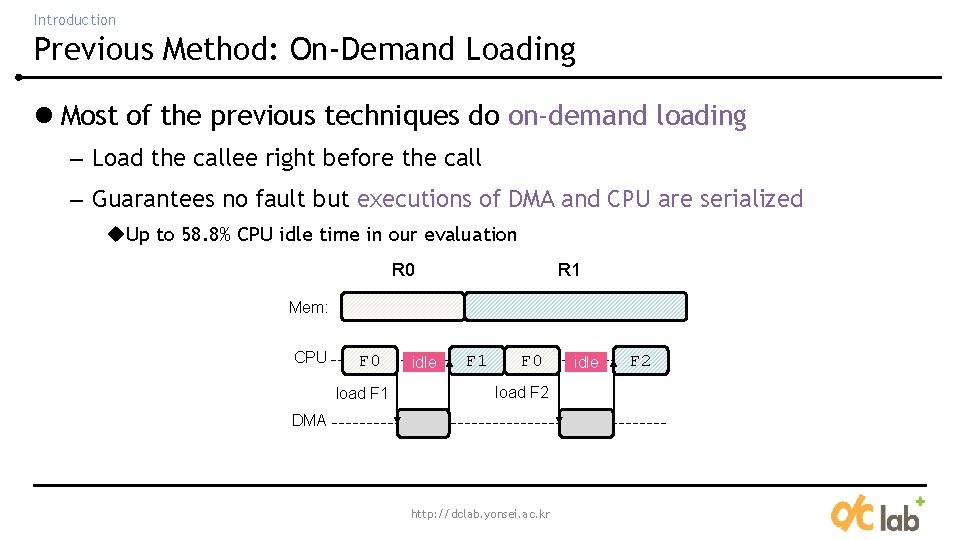

Introduction: Previous Method, On-Demand Loading
- Most previous techniques use on-demand loading
  - Load the callee right before the call
  - Guarantees no fault, but DMA and CPU execution are serialized
    - Up to 58.8% CPU idle time in our evaluation

[Figure: timeline in which the CPU runs F0, sits idle while the DMA loads F1, runs F1 and F0, then sits idle again while the DMA loads F2]
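The cost of serialization is simple arithmetic: with on-demand loading, every transfer adds directly to CPU idle time. The Python sketch below assumes a hypothetical trace in which each call is preceded by a DMA load of known length; the cycle counts are made up for illustration and do not come from the evaluation.

```python
from typing import List, Tuple


def on_demand_cost(segments: List[Tuple[int, int]]) -> Tuple[int, int]:
    """Return (total cycles, CPU idle cycles) under on-demand loading.

    Each segment is (dma_cycles, cpu_cycles): the load of a callee issued right
    before the call, followed by the CPU work that runs until the next load.
    The CPU waits for every load, so the two never overlap.
    """
    total = idle = 0
    for dma_cycles, cpu_cycles in segments:
        idle += dma_cycles                # the CPU is stalled for the whole transfer
        total += dma_cycles + cpu_cycles  # serialized: times simply add up
    return total, idle


# Hypothetical trace: load F1 (300 cycles), run 500 cycles, load F2 (300), run 400.
total, idle = on_demand_cost([(300, 500), (300, 400)])
print(total, idle, f"{idle / total:.1%}")  # 1500 600 40.0%
```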

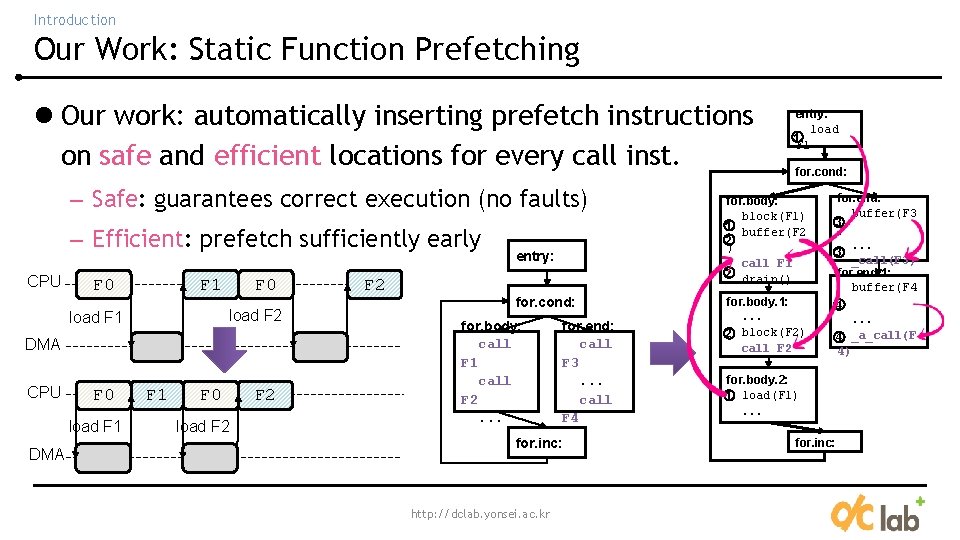

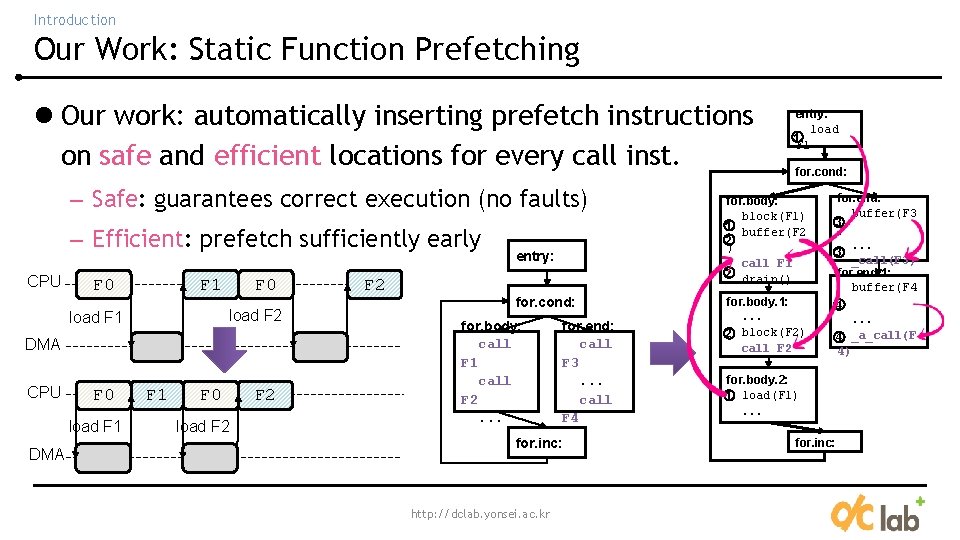

Introduction: Our Work, Static Function Prefetching
- Our work: automatically inserting prefetch instructions at safe and efficient locations for every call instruction
  - Safe: guarantees correct execution (no faults)
  - Efficient: prefetches sufficiently early

[Figure: execution timelines with on-demand loading vs. prefetching, where the loads of F1 and F2 overlap with CPU execution of F0; and a loop CFG (entry, for.cond, for.body, for.end, for.inc) calling F1 to F4, before and after inserting load(), block(), buffer(), and drain() calls]
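The effect of issuing loads earlier can be sketched with a small event-driven Python model. It assumes a single DMA engine that serves requests in issue order, a non-blocking load(f) and a blocking block(f) as named on the slides, and hypothetical cycle counts in which F1 and F2 share a region, so F2's load cannot start until F1 has returned.

```python
from typing import Dict, List, Tuple


def simulate(program: List[tuple]) -> Tuple[int, int]:
    """Return (total cycles, CPU idle cycles) for a list of instructions.

    ("cpu", n) runs n cycles of CPU work; ("load", f, n) issues an n-cycle DMA
    transfer of f without stalling; ("block", f) stalls until f's transfer ends.
    A single DMA engine serves transfers in issue order.
    """
    now = idle = dma_free = 0
    done: Dict[str, int] = {}  # function -> cycle at which its transfer completes
    for instr in program:
        kind = instr[0]
        if kind == "cpu":
            now += instr[1]
        elif kind == "load":
            _, func, cycles = instr
            start = max(now, dma_free)  # wait for any earlier transfer to finish
            dma_free = start + cycles
            done[func] = dma_free
        elif kind == "block":
            finish = done[instr[1]]
            if finish > now:            # the CPU stalls: count it as idle time
                idle += finish - now
                now = finish
        else:
            raise ValueError(f"unknown instruction: {instr!r}")
    return now, idle


# Hypothetical costs: F0 does 200 cycles before calling F1 (300 cycles) and 200 more
# before calling F2 (200 cycles); F1 and F2 share a region; each load takes 300 cycles.
on_demand = [("cpu", 200), ("load", "F1", 300), ("block", "F1"), ("cpu", 300),
             ("cpu", 200), ("load", "F2", 300), ("block", "F2"), ("cpu", 200)]
prefetch = [("load", "F1", 300), ("cpu", 200), ("block", "F1"), ("cpu", 300),
            ("load", "F2", 300), ("cpu", 200), ("block", "F2"), ("cpu", 200)]
print(simulate(on_demand))  # (1500, 600)
print(simulate(prefetch))   # (1100, 200)
```

With these made-up numbers, moving each load ahead of independent CPU work cuts idle time from 600 to 200 cycles; the remaining stalls come from transfers longer than the work available to hide them.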

Introduction: Summary of the Contributions
- Reduces CPU idle time by 58.5% on average, and execution time by 14.7% on average for benchmarks with more than 10% CPU idle time, without changing the mapping algorithm
- Relies only on static analysis: no profiling or run-time data structure
- Orthogonal to mapping algorithms and other optimization methods

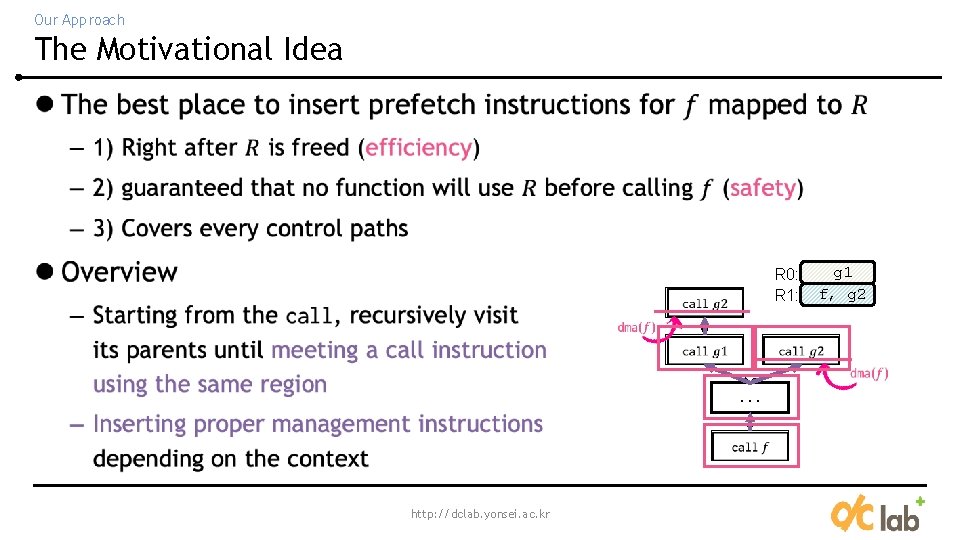

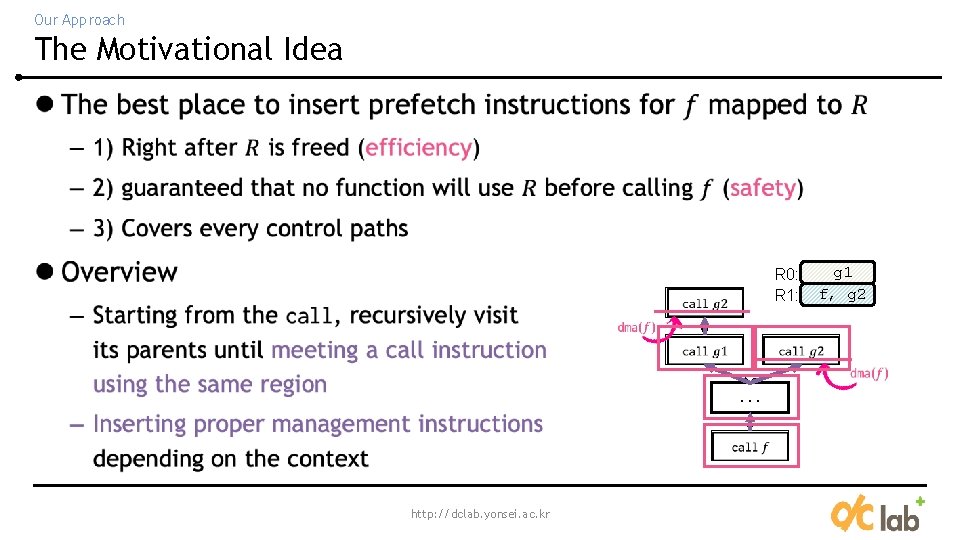

Our Approach: The Motivational Idea

[Figure: example mapping in which g1 is placed in region R0 while f and g2 share region R1]

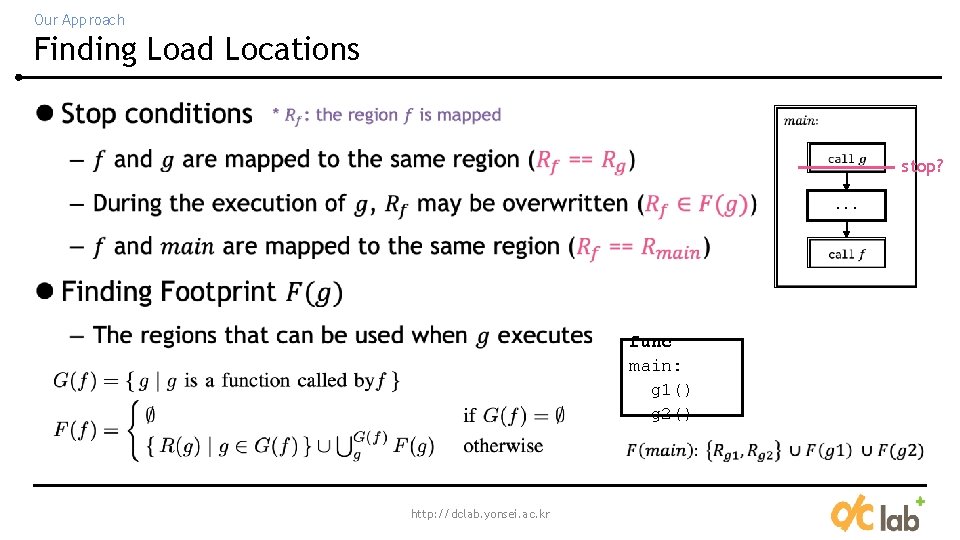

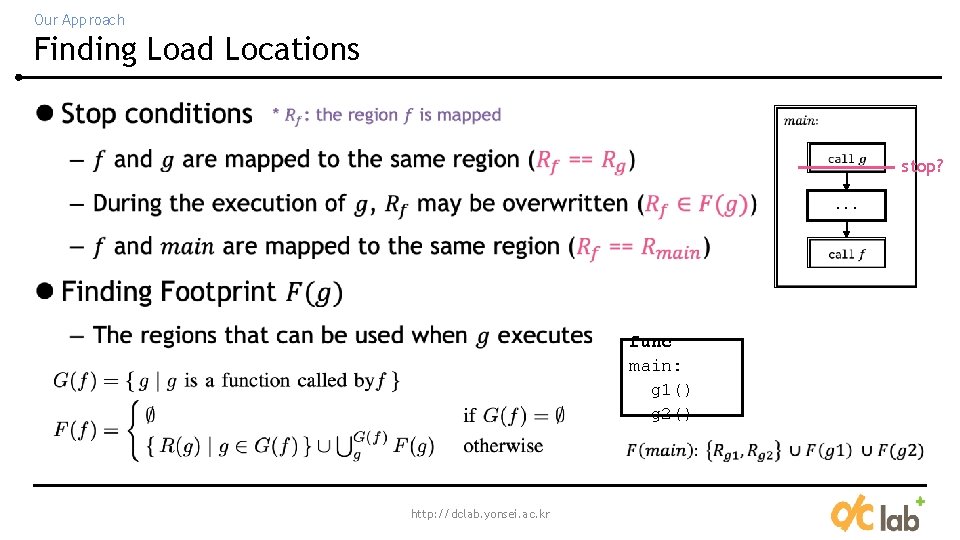

Our Approach: Finding Load Locations

[Figure: func main calls g1() and g2(); the search for a load location asks where it should stop]

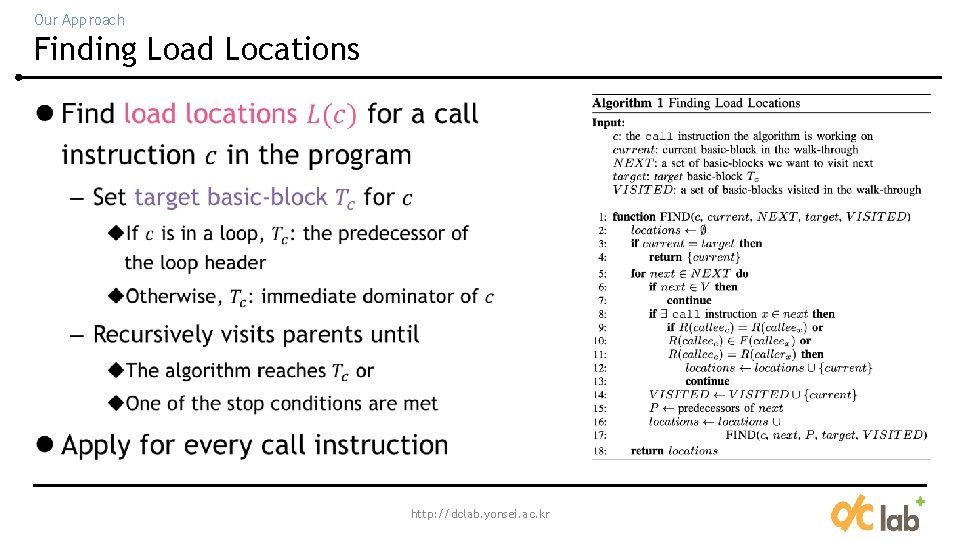

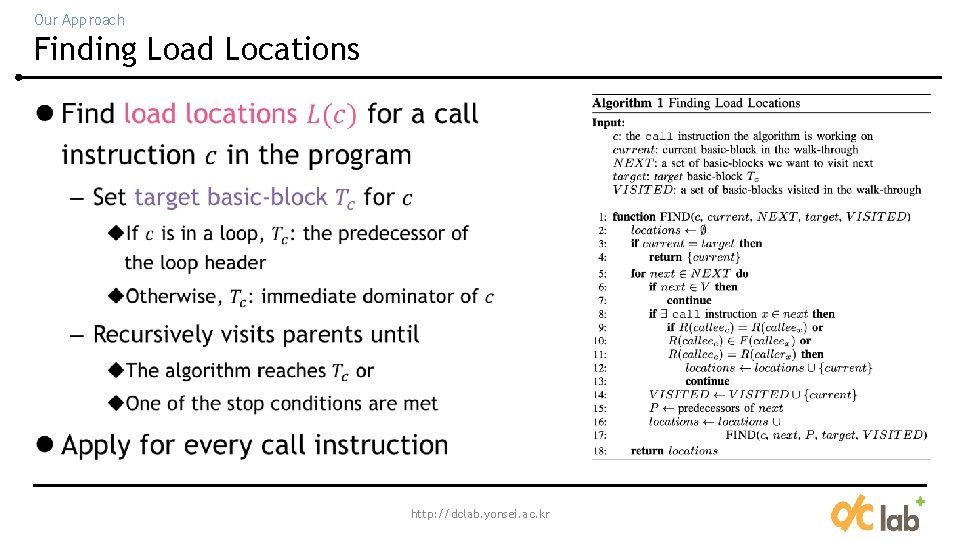

Our Approach: Finding Load Locations
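The details of the search are not recoverable from this transcript. As a hedged illustration only, the sketch below shows one way a compiler could find a safe, early load location in straight-line code: walk backward from a call to f and stop just after the nearest call that may overwrite f's region, directly or through its callees. The helper names, the flat list representation, and the intra-procedural scope are assumptions; the authors' analysis works on the CFG and may differ.

```python
from typing import Dict, List, Set


def may_load(func: str, callees: Dict[str, List[str]]) -> Set[str]:
    """Functions that may be loaded into the SPM while `func` runs (itself plus transitive callees)."""
    seen: Set[str] = set()
    stack = [func]
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(callees.get(current, []))
    return seen


def load_location(body: List[str], call_idx: int, mapping: Dict[str, str],
                  callees: Dict[str, List[str]]) -> int:
    """Earliest index in `body` at which load(target) can go for the call at `call_idx`.

    Hypothetical helper: entries of `body` that are not keys of `mapping` are plain
    computation. The search walks backward and stops just after the nearest call
    that may overwrite the target's region; with no such call it returns 0 (entry).
    """
    target = body[call_idx]
    region = mapping[target]
    for i in range(call_idx - 1, -1, -1):
        instr = body[i]
        if instr not in mapping:
            continue  # not a call, cannot evict anything
        if any(h != target and mapping[h] == region for h in may_load(instr, callees)):
            return i + 1  # load right after the conflicting call returns
    return 0


# Hypothetical mapping: g1 in R0, f and g2 share R1, h in R2 and h calls g2.
mapping = {"g1": "R0", "f": "R1", "g2": "R1", "h": "R2"}
callees = {"h": ["g2"]}
print(load_location(["g1", "work", "g2", "work", "g1", "f"], 5, mapping, callees))  # 3
print(load_location(["h", "g1", "f"], 2, mapping, callees))  # 1: h may evict f via g2
```

Placing the load anywhere later than the returned index is also safe; the earliest safe point simply gives the transfer the most CPU work to overlap with.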

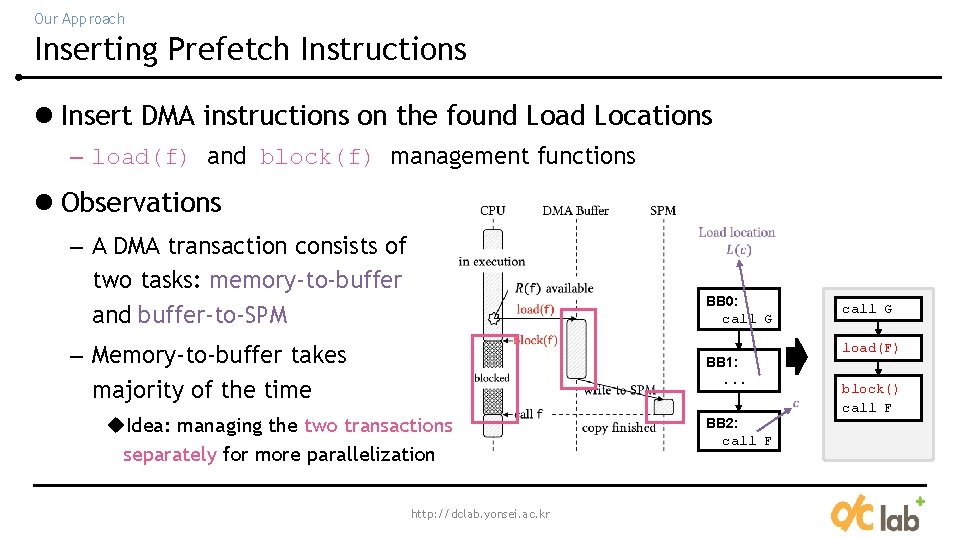

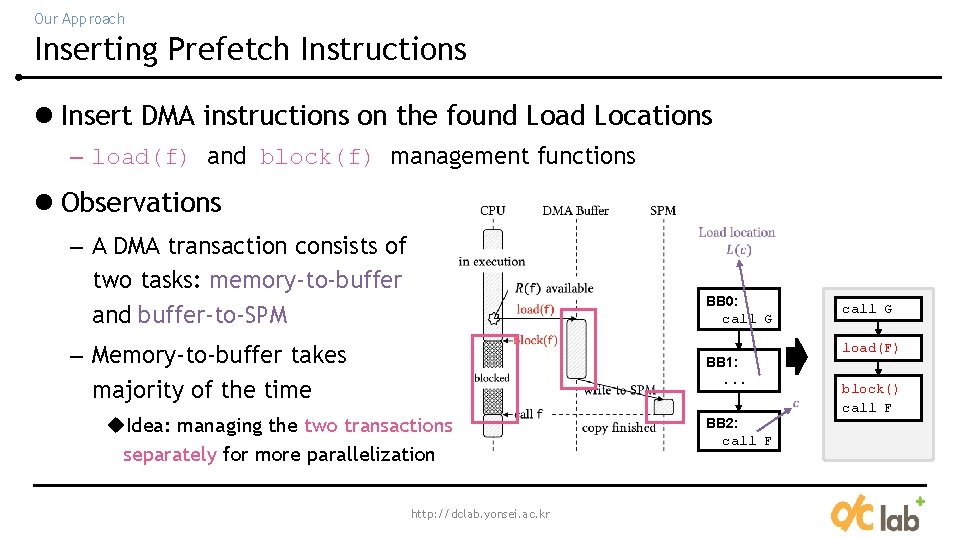

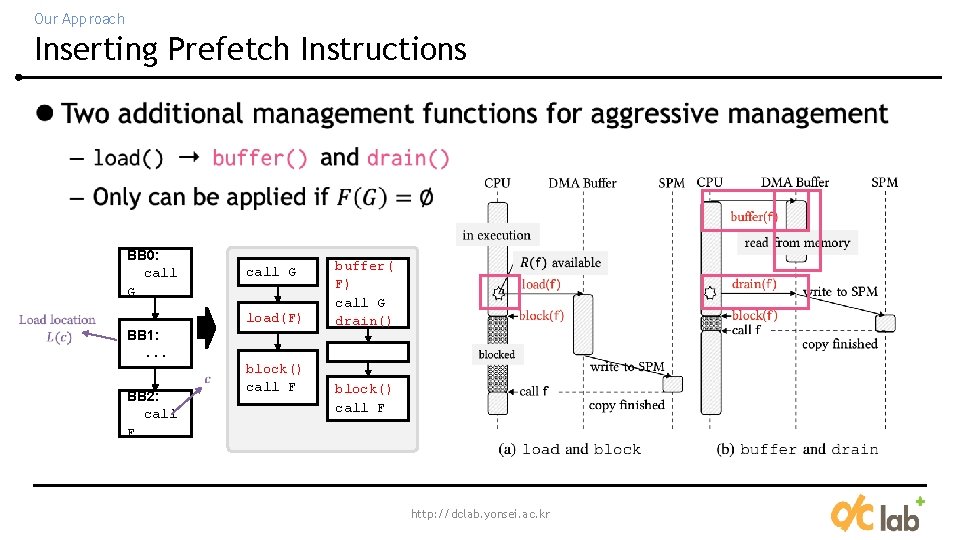

Our Approach: Inserting Prefetch Instructions
- Insert DMA instructions at the load locations found
  - Management functions load(f) and block(f)
- Observations
  - A DMA transaction consists of two tasks: memory-to-buffer and buffer-to-SPM
  - Memory-to-buffer takes the majority of the time
    - Idea: manage the two tasks separately to enable more parallelism

[Figure: CFG with BB0 (call G), BB1 (...), and BB2 (call F); load(F) is inserted after call G and block() before call F]
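The benefit of splitting a transfer can be estimated with a little arithmetic. The Python sketch assumes that buffer(F) only fills the DMA buffer from memory and leaves the SPM untouched, that drain() copies the buffer into F's region without stalling the CPU, and that block() waits for that copy; it also assumes G is already resident, so G does not need the single DMA buffer itself. The helper names and all cycle counts are hypothetical.

```python
def idle_load_block(mem_to_buf: int, buf_to_spm: int, work: int) -> int:
    """CPU idle cycles when load(F) can only be issued after G returns (load/block pattern).

    The whole transfer can only overlap with the `work` cycles between G and the call to F.
    """
    return max(0, mem_to_buf + buf_to_spm - work)


def idle_buffer_drain(mem_to_buf: int, buf_to_spm: int, g_cycles: int, work: int) -> int:
    """CPU idle cycles when buffer(F) is issued before G and drain() after it.

    Time 0 is the call to G. Filling the DMA buffer does not touch the SPM, so it
    overlaps with G; only the buffer-to-SPM copy has to wait until G has returned.
    """
    buffer_ready = mem_to_buf                        # buffer(F) issued just before call G
    drain_done = max(g_cycles, buffer_ready) + buf_to_spm
    cpu_reaches_block = g_cycles + work              # G runs, then `work`, then block()
    return max(0, drain_done - cpu_reaches_block)


# Hypothetical costs: memory-to-buffer dominates (250 of 300 cycles), G runs 400 cycles,
# and only 100 cycles of work separate G's return from the call to F.
print(idle_load_block(250, 50, 100))         # 200
print(idle_buffer_drain(250, 50, 400, 100))  # 0
```

Because the long memory-to-buffer phase hides behind G, only the short buffer-to-SPM copy remains on the path between G's return and the call to F.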

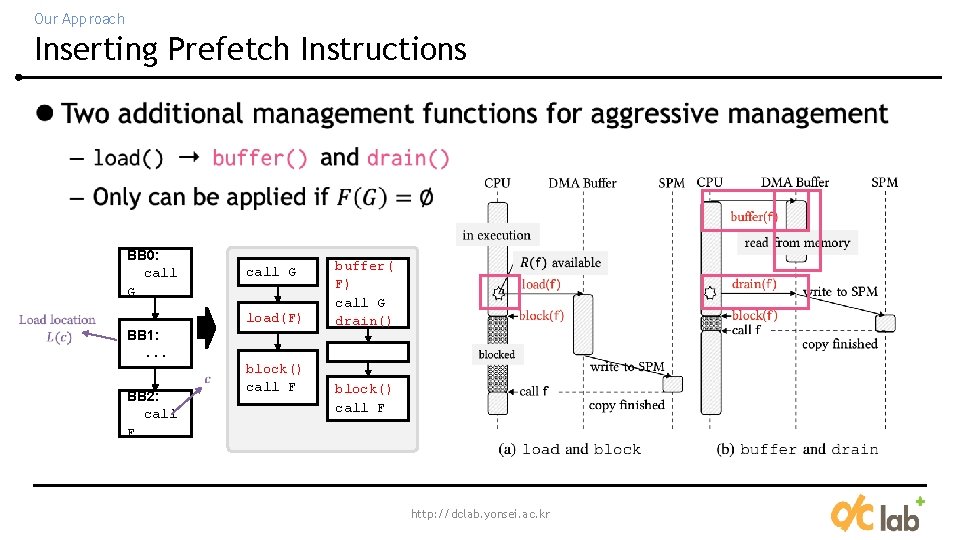

Our Approach: Inserting Prefetch Instructions

[Figure: the same CFG (BB0: call G; BB1: ...; BB2: call F) with load(F) after call G and block() before call F, compared with buffer(F) before call G, drain() after it, and block() before call F]

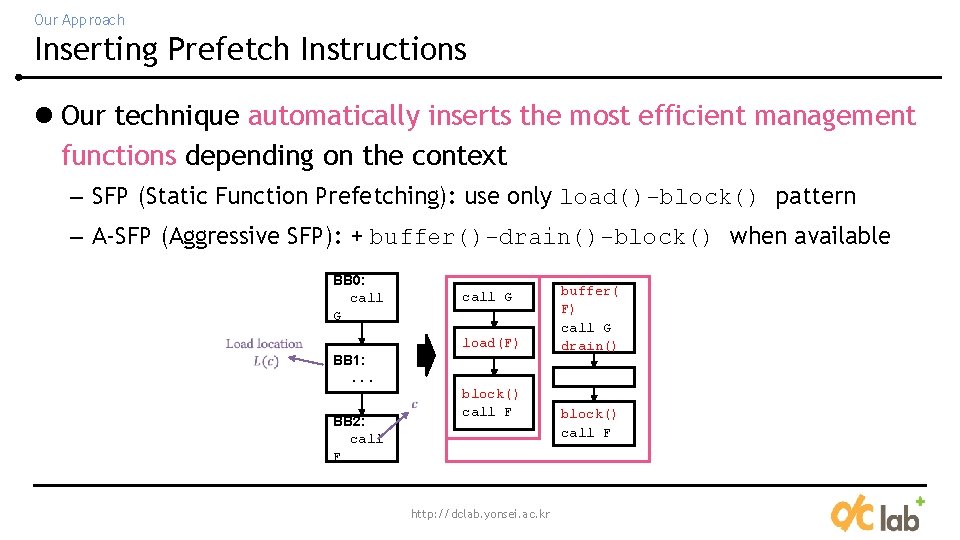

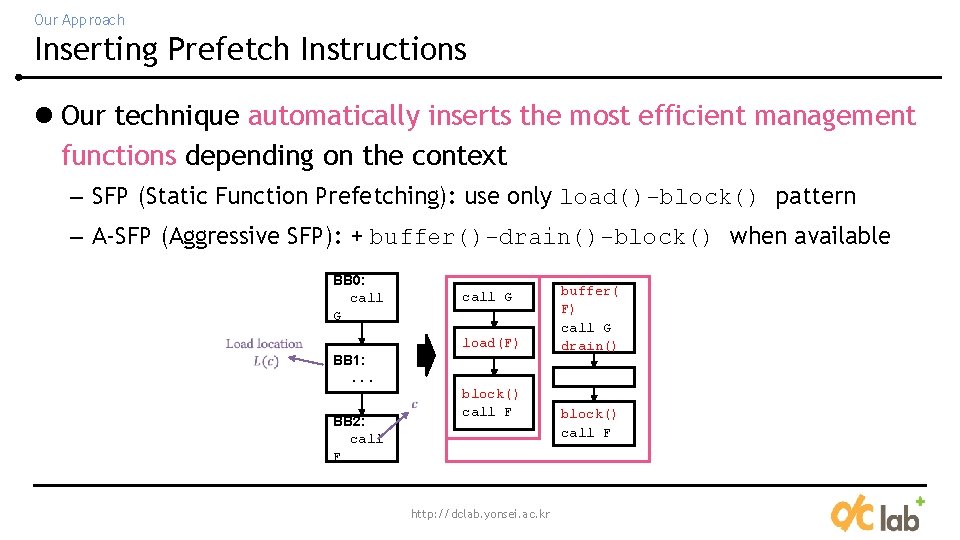

Our Approach: Inserting Prefetch Instructions
- Our technique automatically inserts the most efficient management functions for each context
  - SFP (Static Function Prefetching): uses only the load()-block() pattern
  - A-SFP (Aggressive SFP): additionally uses the buffer()-drain()-block() pattern when available

[Figure: SFP inserts load(F) after call G and block() before call F; A-SFP inserts buffer(F) before call G, drain() after it, and block() before call F]
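The choice between the two patterns can be sketched as a rewrite of a straight-line list of instructions. In this hypothetical Python helper, `load_idx` is the earliest safe point for writing F into its region (for example, just after the last conflicting call), and `buffer_idx`, if given, is an earlier point at which filling the DMA buffer is assumed to be safe; how the authors compute these points is not shown here. The textual names load, block, buffer, and drain follow the slides.

```python
from typing import List, Optional


def insert_management(body: List[str], call_idx: int, load_idx: int,
                      buffer_idx: Optional[int] = None) -> List[str]:
    """Return `body` with management calls inserted for the call at `call_idx`.

    SFP: load(f) at `load_idx` and block(f) right before the call.
    A-SFP: if an earlier `buffer_idx` is available, buffer(f) there,
    drain(f) at `load_idx`, and block(f) right before the call.
    Assumes load_idx <= call_idx.
    """
    f = body[call_idx]
    aggressive = buffer_idx is not None and buffer_idx < load_idx
    out: List[str] = []
    for i, instr in enumerate(body):
        if aggressive and i == buffer_idx:
            out.append(f"buffer({f})")  # memory -> DMA buffer, SPM untouched
        if i == load_idx:
            out.append(f"drain({f})" if aggressive else f"load({f})")
        if i == call_idx:
            out.append(f"block({f})")   # wait until f is in its region
        out.append(instr)
    return out


body = ["G", "work", "F"]  # hypothetical: G conflicts with F's region
print(insert_management(body, 2, load_idx=1))
# ['G', 'load(F)', 'work', 'block(F)', 'F']
print(insert_management(body, 2, load_idx=1, buffer_idx=0))
# ['buffer(F)', 'G', 'drain(F)', 'work', 'block(F)', 'F']
```

When no earlier buffer point exists, the helper falls back to the plain SFP pattern, and with `load_idx == call_idx` it degenerates to on-demand loading.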

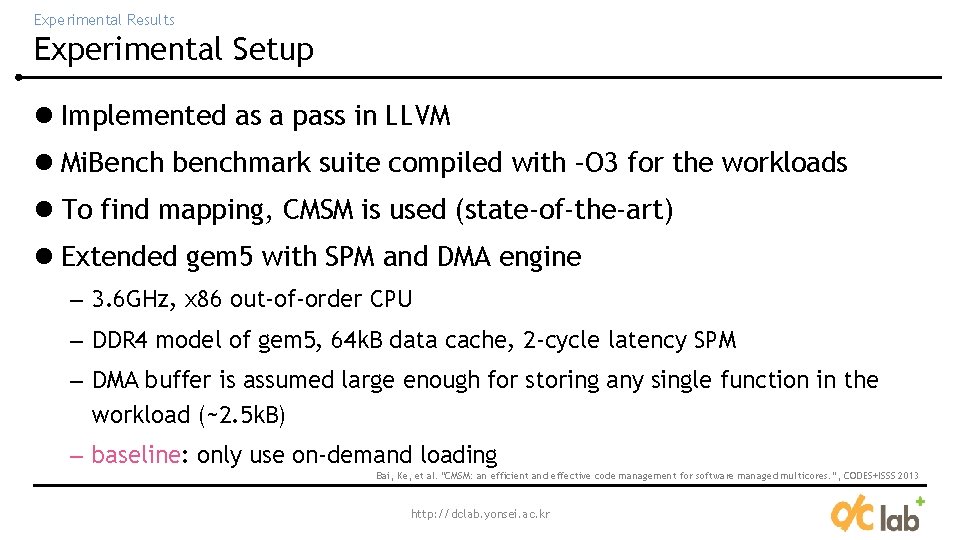

Experimental Results: Experimental Setup
- Implemented as a pass in LLVM
- MiBench benchmark suite, compiled with -O3, as the workloads
- CMSM (state of the art) is used to find the mapping
- gem5 extended with an SPM and a DMA engine
  - 3.6 GHz x86 out-of-order CPU
  - gem5 DDR4 memory model, 64 kB data cache, SPM with 2-cycle latency
  - The DMA buffer is assumed to be large enough to store any single function in the workload (~2.5 kB)
  - Baseline: on-demand loading only

Bai, Ke, et al. "CMSM: an efficient and effective code management for software managed multicores." CODES+ISSS 2013.

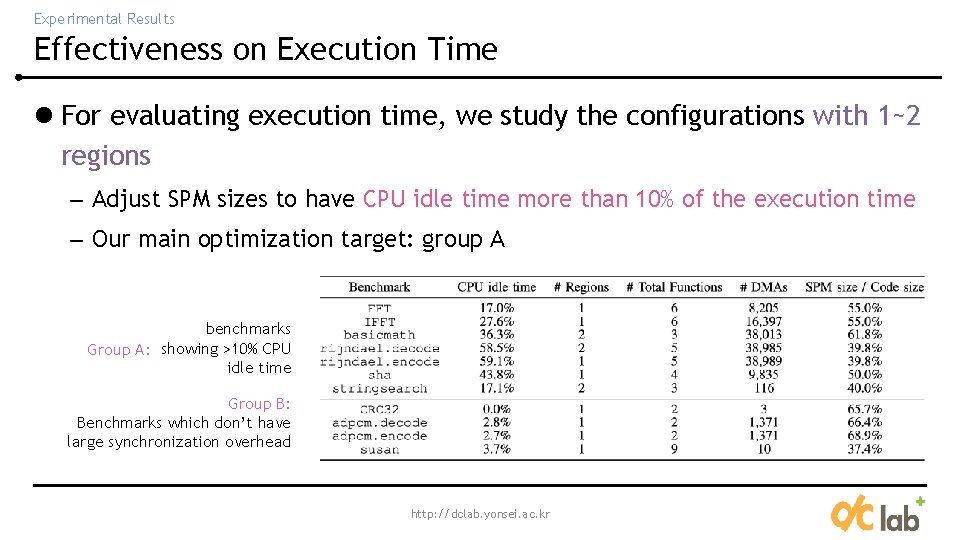

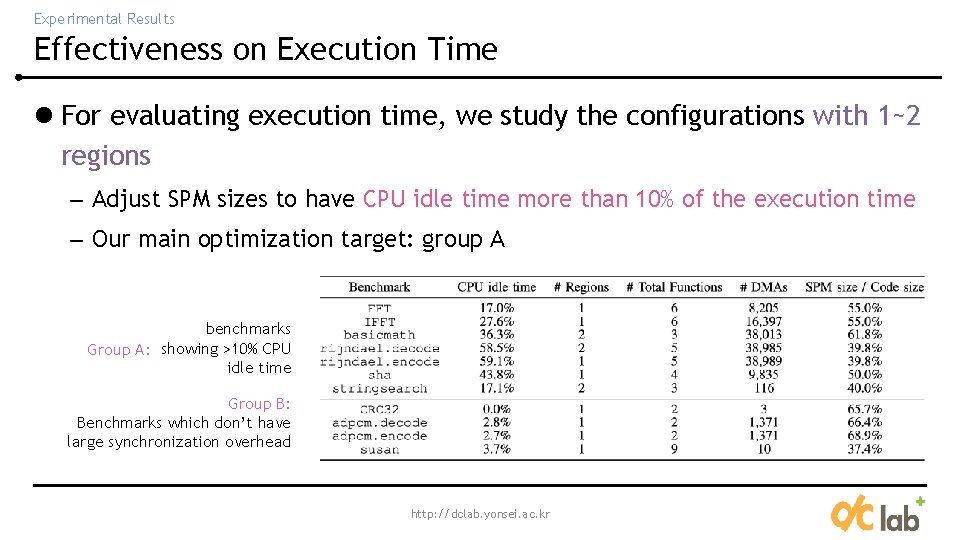

Experimental Results: Effectiveness on Execution Time
- To evaluate execution time, we study configurations with one or two regions
  - SPM sizes are adjusted so that CPU idle time exceeds 10% of execution time
  - Our main optimization target: group A benchmarks
- Group A: benchmarks showing more than 10% CPU idle time
- Group B: benchmarks without large synchronization overhead

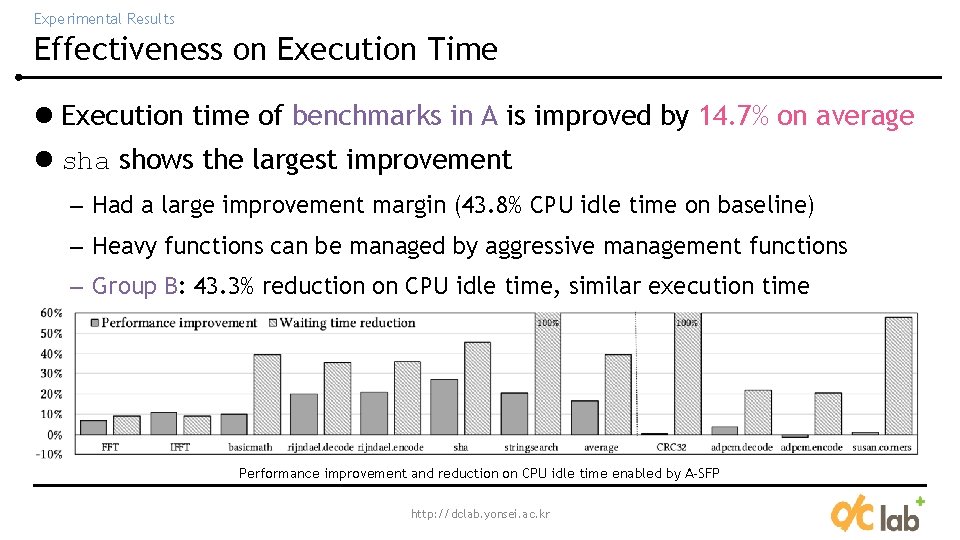

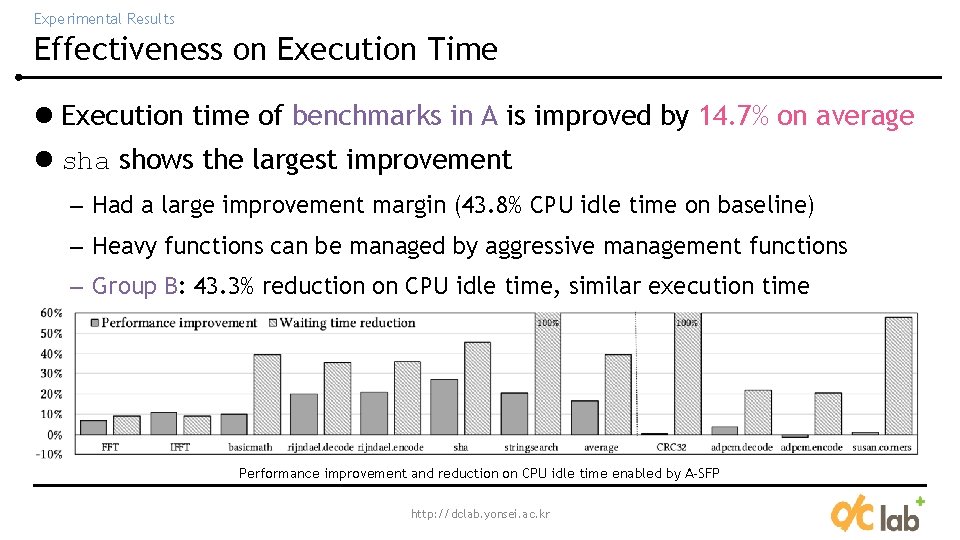

Experimental Results: Effectiveness on Execution Time
- Execution time of group A benchmarks improves by 14.7% on average
- sha shows the largest improvement
  - It had a large margin for improvement (43.8% CPU idle time on the baseline)
  - Its heavy functions can be handled by the aggressive management functions
- Group B: 43.3% reduction in CPU idle time, with similar execution time

[Figure: performance improvement and reduction in CPU idle time enabled by A-SFP]

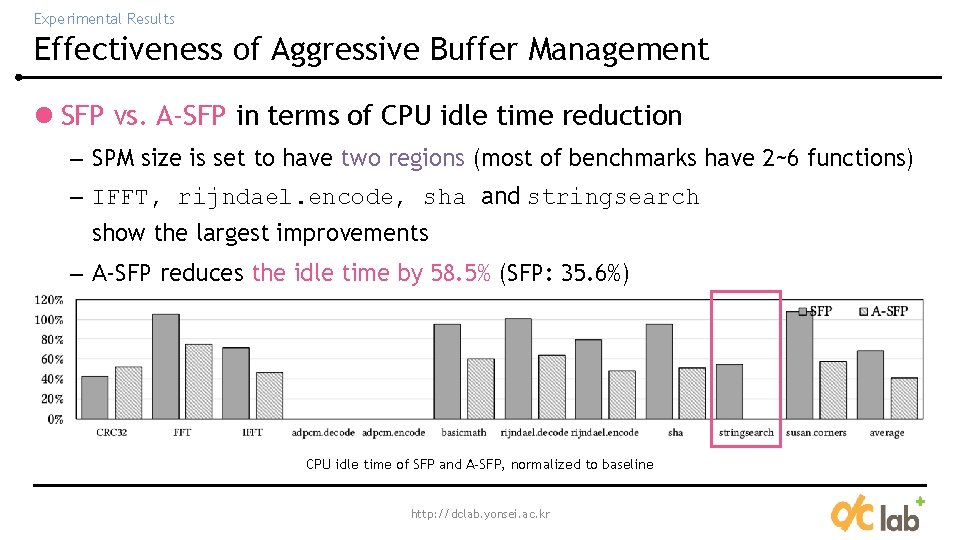

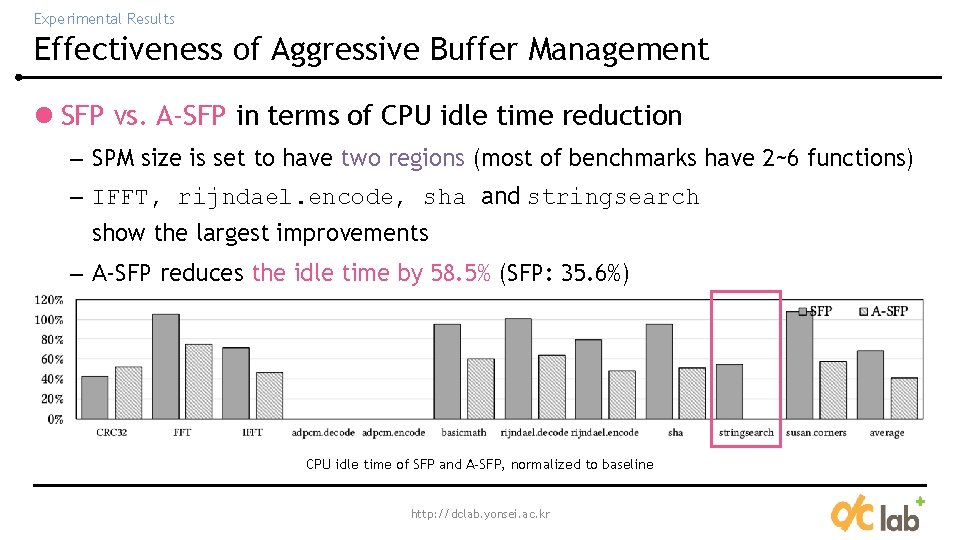

Experimental Results: Effectiveness of Aggressive Buffer Management
- SFP vs. A-SFP in terms of CPU idle time reduction
  - SPM size is set to hold two regions (most benchmarks have 2 to 6 functions)
  - IFFT, rijndael.encode, sha, and stringsearch show the largest improvements
  - A-SFP reduces idle time by 58.5% (SFP: 35.6%)

[Figure: CPU idle time of SFP and A-SFP, normalized to the baseline]

Conclusion
- In SPM-based code management, compile-time code prefetching has not been studied extensively
  - Faults are hard to recover from, so existing techniques rely on on-demand loading
- We propose an algorithm (A-SFP) that finds safe and efficient prefetch locations for all function calls in a program
- A-SFP reduces CPU idle time by 58.5% on average
  - This yields a 14.7% execution time improvement without changing the mapping algorithm

THANK YOU!