
Stanford University, SLAC, NIIT, the Digital Divide & Bandwidth Challenge
Prepared by Les Cottrell, SLAC, for NIIT, February 22, 2006

Stanford University • Location

Some facts
• Founded in 1885 (opened 1891) by Governor Leland Stanford & his wife Jane
  – In memory of their son, Leland Stanford Jr.
  – Apocryphal story of the foundation
• Movies invented at Stanford
• 1600 freshman entrants/year (12% acceptance), 7:1 student:faculty ratio, students from 53 countries
• 169K living Stanford alumni

Some alumni
• Sports: Tiger Woods, John McEnroe
• Sally Ride, astronaut
• Vint Cerf, “father of the Internet”
• Industry:
  – Hewlett & Packard, Steve Ballmer (CEO, Microsoft), Scott McNealy (Sun) …
• Former national leaders: Ehud Barak (Israel), Alejandro Toledo (Peru)
• US politics: Condoleezza Rice, George Shultz, President Hoover

Some Startups
• Founded Silicon Valley (turned orchards into companies):
  – Started by providing land and encouragement (investment) for companies founded by Stanford alumni, such as HP & Varian
  – More recently: Sun (Stanford University Network), Cisco, Yahoo, Google

Excellence
• 17 Nobel prizewinners
• Stanford Hospital
• Stanford Linear Accelerator Center (SLAC), my home:
  – National Lab operated by Stanford University, funded by the US Department of Energy
  – Roughly 1400 staff; with contractors & outside users => 3000, ~2000 on site at any given time
  – Fundamental research in:
    • Experimental particle physics
    • Theoretical physics
    • Accelerator research
    • Astrophysics
    • Synchrotron light research
  – Has faculty to pursue the above research and award degrees; 3 Nobel prizewinners

Work with NIIT
• Co-supervision of students, building research capacity, publishing etc., for example:
  – Quantify the Digital Divide:
    • Develop a measurement infrastructure to provide information on the extent of the Digital Divide:
      – Within Pakistan, and between Pakistan & other regions
    • Improve understanding, provide planning information, set expectations, identify needs
    • Provide and deploy tools in Pakistan
• MAGGIE-NS collaboration projects:
  – TULIP: Faran
  – Network Weather Forecasting: Fawad, Fareena
  – Anomaly: Fawad, Adnan, Muhammad Ali
    • Detection, diagnosis and alerting
  – PingER Management: Waqar
  – MTBF/MTTR of networks: not assigned
  – Federating Network Monitoring Infrastructures: Asma, Abdullah
    • Smokeping, PingER, AMP, MonALISA, OWAMP …
  – Digital Divide: Aziz, Akbar, Rabail
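One of the listed projects is MTBF/MTTR of networks. The sketch below is not the project's method. It only shows the standard steady-state relation, availability = MTBF / (MTBF + MTTR), estimated from observed up and down durations. The function names and the sample durations are hypothetical.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: the long-run fraction of time a link is up.

    Treats MTBF as the mean up-time between failures (a common simplification)
    and MTTR as the mean time to restore service.
    """
    if mtbf_hours <= 0 or mttr_hours < 0:
        raise ValueError("MTBF must be positive and MTTR non-negative")
    return mtbf_hours / (mtbf_hours + mttr_hours)


def mtbf_mttr(up_hours: list[float], down_hours: list[float]) -> tuple[float, float]:
    """Estimate (MTBF, MTTR) in hours from observed up and down durations."""
    if not up_hours or not down_hours:
        raise ValueError("need at least one up and one down interval")
    return sum(up_hours) / len(up_hours), sum(down_hours) / len(down_hours)


# Hypothetical observations for one link: three failures and their repair times.
ups = [700.0, 650.0, 800.0]   # hours of service before each failure
downs = [3.0, 12.0, 6.0]      # hours to restore service after each failure
mtbf, mttr = mtbf_mttr(ups, downs)
print(f"MTBF={mtbf:.1f} h, MTTR={mttr:.1f} h, availability={availability(mtbf, mttr):.4f}")
```

A single multi-day outage, such as the 12-day fiber cut reported later for Pakistan, raises MTTR far more than a month of short glitches does. This is why MTTR is tracked separately from MTBF.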

Quantifying the Digital Divide: A scientific overview of the connectivity of South Asian and African Countries
Les Cottrell, SLAC; Aziz Rehmatullah, NIIT; Jerrod Williams, SLAC; Arshad Ali, NIIT
Presented at the CHEP06 Meeting, Mumbai, India, February 2006
www.slac.stanford.edu/grp/scs/net/talk05/icfa-chep06.ppt

Introduction
• The PingER project was started in 1995 to measure network performance for the US, European and Japanese HEP community
• Extended this century to measure the Digital Divide for the Academic & Research community
• Last year added monitoring sites in S. Africa, Pakistan & India
• Will report on network performance to these regions from the US and Europe: trends, comparisons
• Plus early results within and between these regions

Why does it matter?
• Scientists cannot collaborate as equal partners unless they have connectivity to share data, results, ideas etc.
• Distance education needs good communication for access to libraries, journals, educational materials, video, and other teachers and researchers.

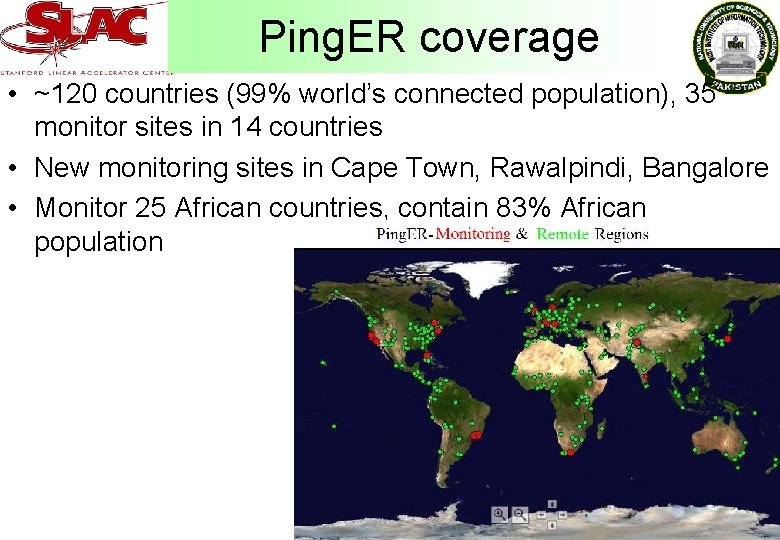

PingER coverage
• ~120 countries (99% of the world’s connected population), 35 monitoring sites in 14 countries
• New monitoring sites in Cape Town, Rawalpindi, Bangalore
• Monitor 25 African countries, containing 83% of the African population

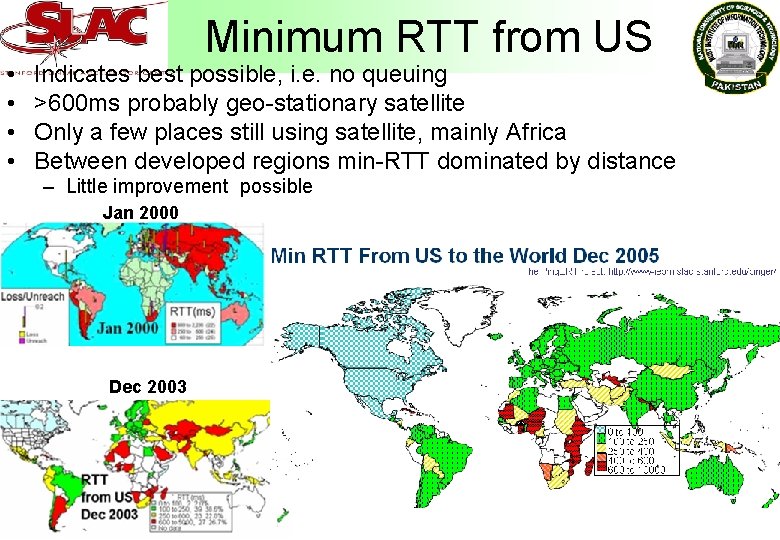

Minimum RTT from US
• Indicates best possible, i.e. no queuing
• >600 ms probably geostationary satellite
• Only a few places still using satellite, mainly Africa
• Between developed regions min-RTT is dominated by distance
  – Little improvement possible
(Maps: Jan 2000 and Dec 2003)
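The >600 ms rule of thumb follows from geometry. This is a minimal sketch that assumes a single geostationary hop at 35,786 km altitude and straight-line propagation at the speed of light. A ping's request and its reply each go up to the satellite and back down, so the signal covers at least four times the altitude. That alone costs about 477 ms, before adding terrestrial legs, slant range or processing. The 600 ms threshold comes from the slide. The host names are hypothetical.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # in vacuum
GEO_ALTITUDE_KM = 35_786            # geostationary orbit above the equator


def geo_min_rtt_ms() -> float:
    """Propagation floor for a ping over one geostationary satellite hop.

    Request and reply each travel ground -> satellite -> ground, so the path
    is at least 4 x the orbital altitude. Real paths are longer (ground
    stations are not directly below the satellite, plus terrestrial legs
    and processing), so observed RTTs are higher.
    """
    return 4 * GEO_ALTITUDE_KM / SPEED_OF_LIGHT_KM_S * 1000


def probably_geo_satellite(min_rtt_ms: float, threshold_ms: float = 600.0) -> bool:
    """Heuristic from the slide: a min-RTT above ~600 ms suggests a GEO link."""
    return min_rtt_ms > threshold_ms


print(f"GEO propagation floor: {geo_min_rtt_ms():.0f} ms")
for host, rtt in {"host-a": 180.0, "host-b": 640.0}.items():  # hypothetical hosts
    print(host, probably_geo_satellite(rtt))
```

The function uses min-RTT rather than average RTT because queuing can push a terrestrial path's average above 600 ms. The minimum cannot fall below the satellite propagation floor.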

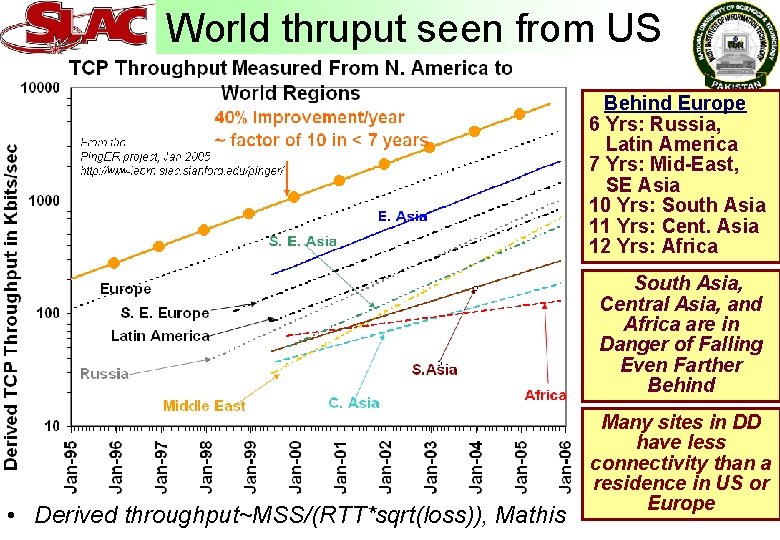

World thruput seen from US
• Behind Europe:
  – 6 Yrs: Russia, Latin America
  – 7 Yrs: Mid-East, SE Asia
  – 10 Yrs: South Asia
  – 11 Yrs: Cent. Asia
  – 12 Yrs: Africa
• South Asia, Central Asia, and Africa are in danger of falling even farther behind
• Derived throughput ~ MSS/(RTT*sqrt(loss)) (Mathis)
• Many sites in the Digital Divide have less connectivity than a residence in the US or Europe
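The derived-throughput formula on the slide converts ping-style measurements (RTT and loss) into an estimate of achievable TCP throughput. This is a minimal sketch of that approximation. The constant `c` is an added parameter: `c = 1.0` reproduces the slide's form, and Mathis et al. derive about sqrt(3/2) for periodic loss. The path values in the example are hypothetical.

```python
import math


def mathis_throughput_bps(mss_bytes: float, rtt_s: float, loss: float, c: float = 1.0) -> float:
    """Derived TCP throughput ~ C * MSS / (RTT * sqrt(loss)), in bits per second.

    c = 1.0 reproduces the approximation on the slide. `loss` is a fraction
    (0.01 means 1%). The formula diverges at zero loss, so that case is
    rejected here rather than returning infinity.
    """
    if mss_bytes <= 0 or rtt_s <= 0 or loss <= 0:
        raise ValueError("MSS, RTT and loss must all be positive")
    return c * mss_bytes * 8 / (rtt_s * math.sqrt(loss))


# Hypothetical path: 1460-byte MSS, 200 ms RTT, 1% loss.
bps = mathis_throughput_bps(1460, 0.200, 0.01)
print(f"{bps / 1e3:.0f} kbit/s")
```

With these numbers the estimate is about 584 kbit/s. Because throughput scales as 1/RTT and 1/sqrt(loss), distant and lossy sites are penalised twice, which helps explain why regions far from the US also rank low on this metric.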

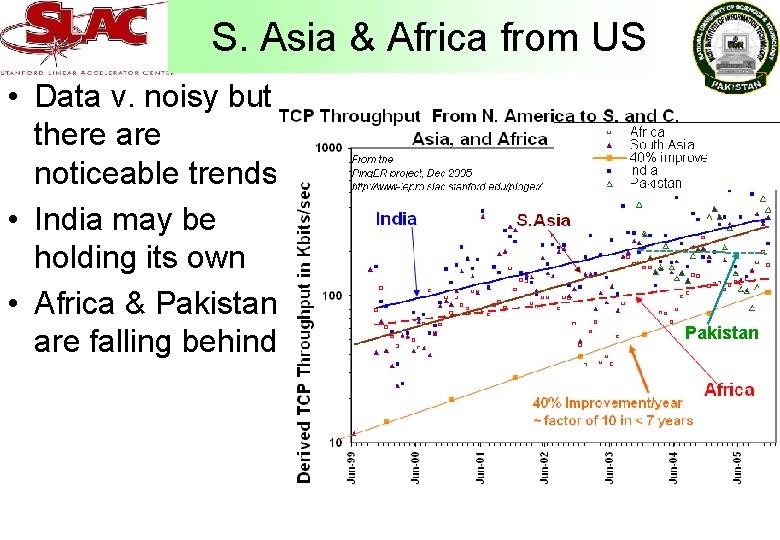

S. Asia & Africa from US
• Data very noisy, but there are noticeable trends
• India may be holding its own
• Africa & Pakistan are falling behind
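Noisy measurements can still show clear trends because the fit is done in log space. This is a minimal sketch, not PingER's actual procedure, of two steps. The first fits ln(throughput) = a + b·year by least squares, which gives an annual growth factor of exp(b). The second asks how many years ago a reference region's trend (e.g. Europe) had the value a region has today, which is one way to express "N years behind". The data and function names are hypothetical.

```python
import math


def fit_exponential_trend(years: list[float], values: list[float]) -> tuple[float, float]:
    """Least-squares fit of ln(value) = a + b * year; returns (a, b).

    exp(b) is the fitted annual growth factor. Fitting in log space lets a
    straight line summarise noisy, exponentially growing measurements.
    """
    n = len(years)
    if n < 2 or len(values) != n:
        raise ValueError("need at least two (year, value) pairs")
    logs = [math.log(v) for v in values]
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    b = sxy / sxx
    return mean_y - b * mean_x, b


def years_behind(region_value: float, ref_a: float, ref_b: float, year: float) -> float:
    """Years between `year` and when the reference trend equalled region_value."""
    year_reference_had_it = (math.log(region_value) - ref_a) / ref_b
    return year - year_reference_had_it


# Hypothetical reference data doubling every year (kbit/s), and a region
# that measures 50 kbit/s in 2005.
ref_years = [2000, 2001, 2002, 2003, 2004, 2005]
ref_vals = [100 * 2 ** (y - 2000) for y in ref_years]
a, b = fit_exponential_trend(ref_years, ref_vals)
print(f"annual growth factor {math.exp(b):.2f}, region is "
      f"{years_behind(50, a, b, 2005):.1f} years behind")
```

If the region grows more slowly than the reference, the "years behind" figure increases over time. This is the sense in which a region is "falling behind".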

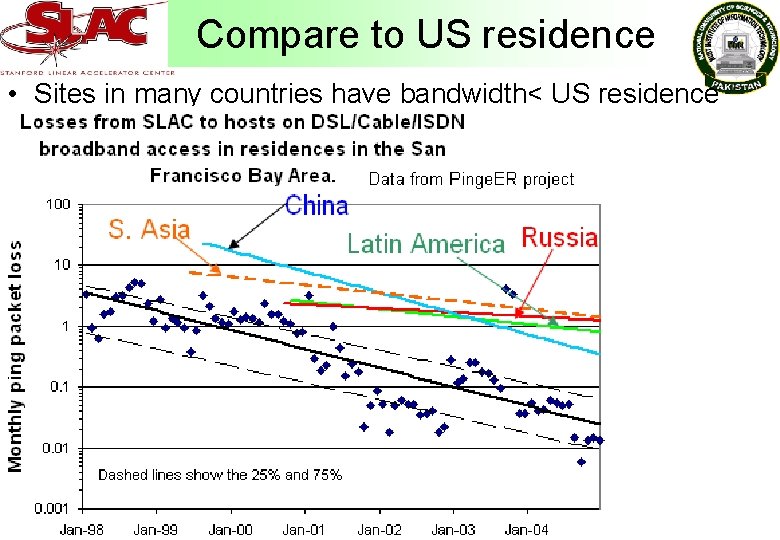

Compare to US residence
• Sites in many countries have less bandwidth than a US residence

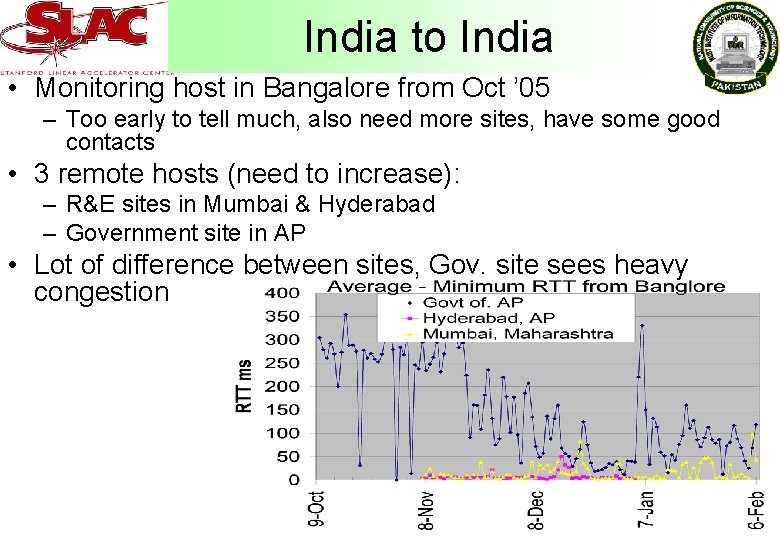

India to India
• Monitoring host in Bangalore from Oct ’05
  – Too early to tell much; also need more sites, have some good contacts
• 3 remote hosts (need to increase):
  – R&E sites in Mumbai & Hyderabad
  – Government site in AP
• Large differences between sites; the government site sees heavy congestion

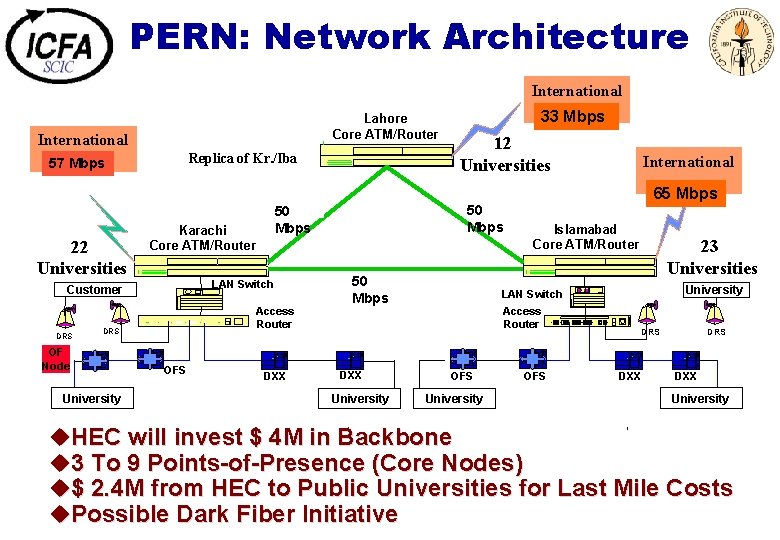

PERN: Network Architecture
(Diagram: core ATM/router nodes in Lahore, Karachi and Islamabad, each with international links, connecting universities through access routers and LAN switches over 2 x 2 Mbps and 50 Mbps links)
• HEC will invest $4M in the backbone
• 3 to 9 Points-of-Presence (core nodes)
• $2.4M from HEC to public universities for last-mile costs
• Possible dark fiber initiative

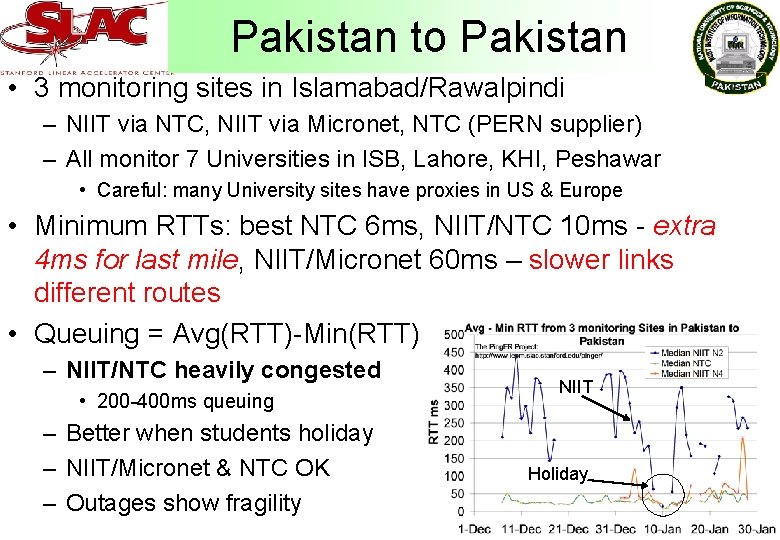

Pakistan to Pakistan
• 3 monitoring sites in Islamabad/Rawalpindi
  – NIIT via NTC, NIIT via Micronet, NTC (PERN supplier)
  – All monitor 7 universities in Islamabad, Lahore, Karachi, Peshawar
• Careful: many university sites have proxies in the US & Europe
• Minimum RTTs: best NTC 6 ms; NIIT/NTC 10 ms (extra 4 ms for the last mile); NIIT/Micronet 60 ms (slower links, different routes)
• Queuing = Avg(RTT) - Min(RTT)
  – NIIT/NTC heavily congested
    • 200-400 ms queuing
    • Better when students are on holiday
  – NIIT/Micronet & NTC OK
• Outages show fragility
(Figure annotation: NIIT holiday)
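The slide defines queuing as Avg(RTT) - Min(RTT). This is a minimal sketch of that calculation for one host's ping samples. It assumes lost pings are recorded as `None`. The function name and sample values are hypothetical, and PingER's own processing may differ.

```python
from statistics import mean
from typing import Optional


def rtt_summary(samples_ms: list[Optional[float]]) -> Optional[tuple[float, float, float]]:
    """Return (min, avg, queuing) in ms for one host's ping RTT samples.

    Lost pings (None) are skipped. Min-RTT approximates the uncongested path
    (propagation plus serialization); Avg - Min estimates queuing delay.
    """
    rtts = [s for s in samples_ms if s is not None]
    if not rtts:
        return None  # host unreachable for the whole period
    lo = min(rtts)
    avg = mean(rtts)
    return lo, avg, avg - lo


# Hypothetical samples from a congested last mile: a 10 ms floor with large spikes.
print(rtt_summary([10.2, 240.0, None, 410.5, 10.0]))
```

Subtracting the minimum removes the fixed distance component. This is why a 10 ms path with 200-400 ms of queuing can be identified as congested instead of simply far away.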

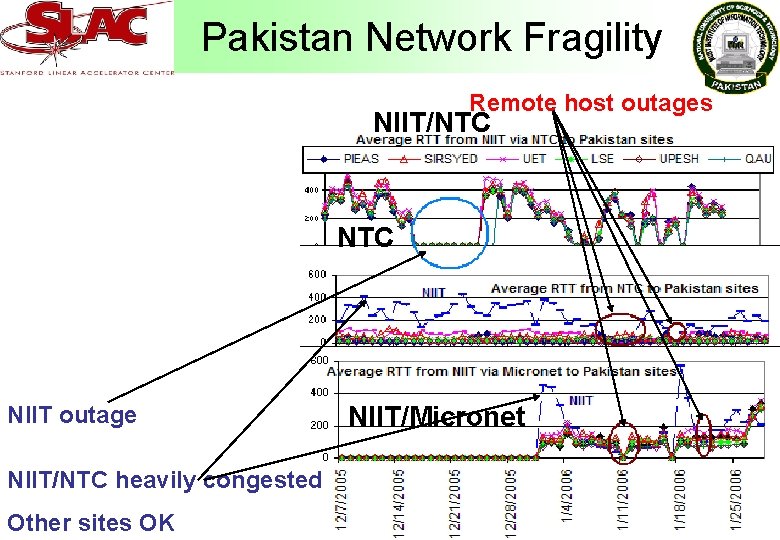

Pakistan Network Fragility
(Figure: NIIT/NTC and NIIT/Micronet series; annotations: remote host outages, NIIT outage, NIIT/NTC heavily congested, other sites OK)

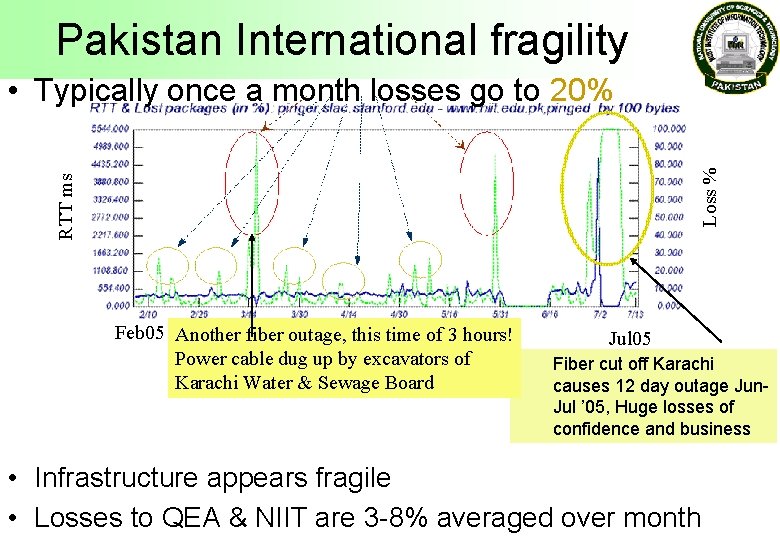

Pakistan International Fragility
(Figure: RTT in ms and loss in %)
• Typically once a month losses go to 20%
• Feb ’05: another fiber outage, this time of 3 hours! Power cable dug up by excavators of the Karachi Water & Sewerage Board
• Jun-Jul ’05: fiber cut off Karachi causes a 12-day outage; huge losses of confidence and business
• Infrastructure appears fragile
• Losses to QEA & NIIT are 3-8% averaged over a month
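Monthly loss averages and outage spans like the ones above can be derived from a daily loss series. This is a minimal sketch under stated assumptions. It uses one loss percentage per day and treats a day at or above a threshold (here 100%) as part of an outage. The function names, threshold and data are hypothetical. Daily granularity cannot show a 3-hour cut, so finer samples would be needed for that.

```python
from collections import defaultdict
from datetime import date


def monthly_mean_loss(daily_loss: dict[date, float]) -> dict[tuple[int, int], float]:
    """Average the daily packet-loss percentages within each (year, month)."""
    buckets = defaultdict(list)
    for day, loss_pct in daily_loss.items():
        buckets[(day.year, day.month)].append(loss_pct)
    return {ym: sum(v) / len(v) for ym, v in sorted(buckets.items())}


def outage_runs(daily_loss: dict[date, float], threshold_pct: float = 100.0) -> list[tuple[date, date]]:
    """Group consecutive days with loss >= threshold into (first_day, last_day) runs."""
    runs = []
    start = prev = None
    for day in sorted(daily_loss):
        if daily_loss[day] >= threshold_pct:
            if start is not None and (day - prev).days == 1:
                prev = day                      # extend the current run
            else:
                if start is not None:
                    runs.append((start, prev))  # close a run broken by a gap
                start = prev = day              # open a new run
        elif start is not None:
            runs.append((start, prev))          # close the run at the first good day
            start = prev = None
    if start is not None:
        runs.append((start, prev))
    return runs


# Hypothetical daily loss percentages for one remote host.
loss = {date(2005, 3, 7): 100.0, date(2005, 3, 8): 100.0,
        date(2005, 3, 9): 5.0, date(2005, 4, 1): 100.0}
print(monthly_mean_loss(loss))
print(outage_runs(loss))
```

A long outage dominates the monthly mean. For this reason the sketch reports outage spans separately, so that a bad month caused by one cut can be distinguished from chronic moderate loss.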

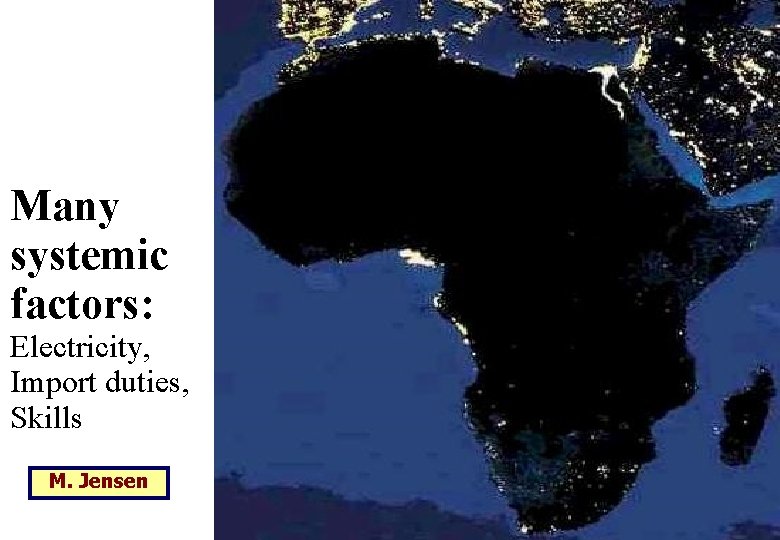

Many systemic factors: electricity, import duties, skills (M. Jensen)

Average cost $11/kbps/month
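Per-kbps pricing is easier to interpret when it is converted into the cost of a concrete circuit. This is a minimal sketch that assumes a flat $11/kbps/month, the average quoted on the slide. Real tariffs vary by country and by volume. The function names are hypothetical.

```python
def monthly_cost_usd(capacity_kbps: float, usd_per_kbps_month: float = 11.0) -> float:
    """Monthly cost of capacity at a flat per-kbps price."""
    return capacity_kbps * usd_per_kbps_month


def capacity_for_budget_kbps(budget_usd_month: float, usd_per_kbps_month: float = 11.0) -> float:
    """Capacity (kbps) that a monthly budget buys at a flat per-kbps price."""
    return budget_usd_month / usd_per_kbps_month


# An E1 circuit is 2.048 Mbit/s = 2048 kbit/s.
print(monthly_cost_usd(2048))
print(capacity_for_budget_kbps(11_000))
```

At this rate a single E1 costs about $22,500 per month. A $11,000 monthly budget buys only 1 Mbit/s.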

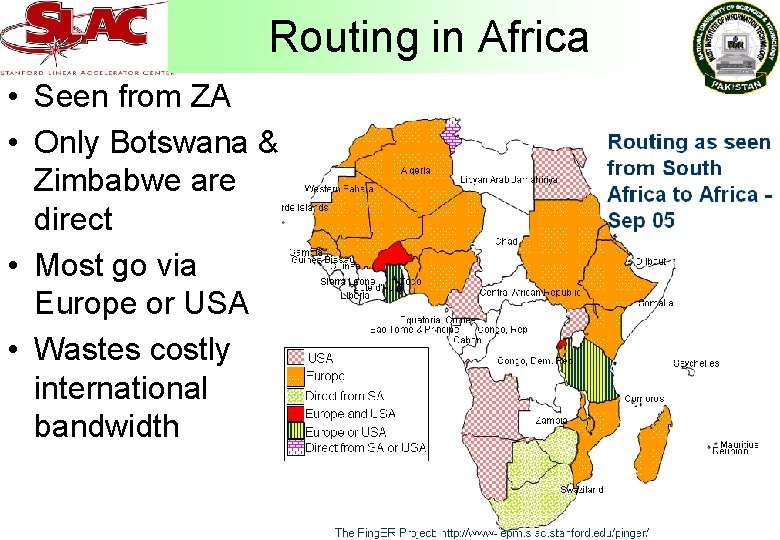

Routing in Africa
• Seen from ZA
• Only Botswana & Zimbabwe are direct
• Most go via Europe or USA
• Wastes costly international bandwidth
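Detecting intra-African paths that detour through Europe or the USA requires the country of each hop along the path. This is a minimal sketch that assumes the hops have already been geolocated to ISO country codes, for example from traceroute output. That geolocation step is not shown and is an assumption. The function name, the mapping and the path are hypothetical.

```python
def off_continent_transit(hop_countries: list[str], continent_of: dict[str, str]) -> list[str]:
    """List countries outside the endpoints' continent that a path passes through.

    Returns [] for inter-continental paths or for paths that stay local.
    Hops whose country is unknown (not in continent_of) are ignored.
    """
    src, dst = hop_countries[0], hop_countries[-1]
    home = continent_of[src]
    if continent_of[dst] != home:
        return []
    outside = [c for c in hop_countries[1:-1] if c in continent_of and continent_of[c] != home]
    return list(dict.fromkeys(outside))  # de-duplicate while keeping path order


# Hypothetical country-to-continent mapping and geolocated paths from ZA.
continent_of = {"ZA": "Africa", "BW": "Africa", "KE": "Africa",
                "GB": "Europe", "US": "North America"}
print(off_continent_transit(["ZA", "ZA", "GB", "GB", "KE"], continent_of))
print(off_continent_transit(["ZA", "BW"], continent_of))
```

Each flagged path uses international capacity twice, once out of the continent and once back in. That is the waste the slide points to.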

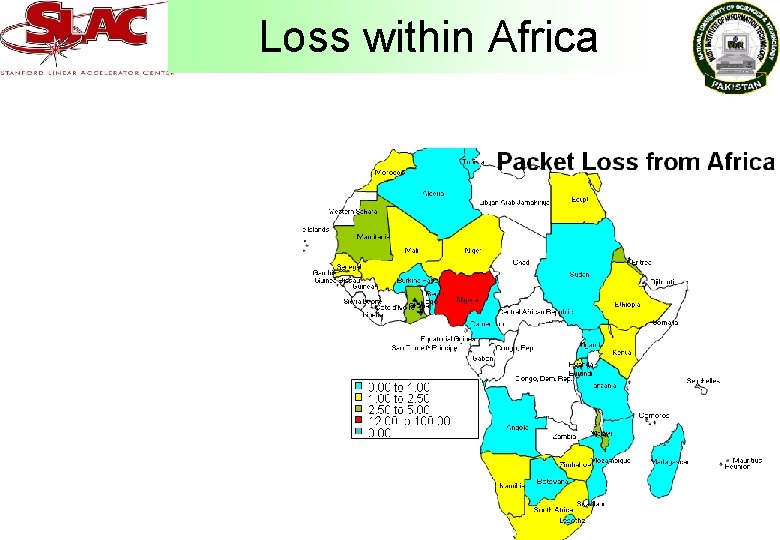

Loss within Africa

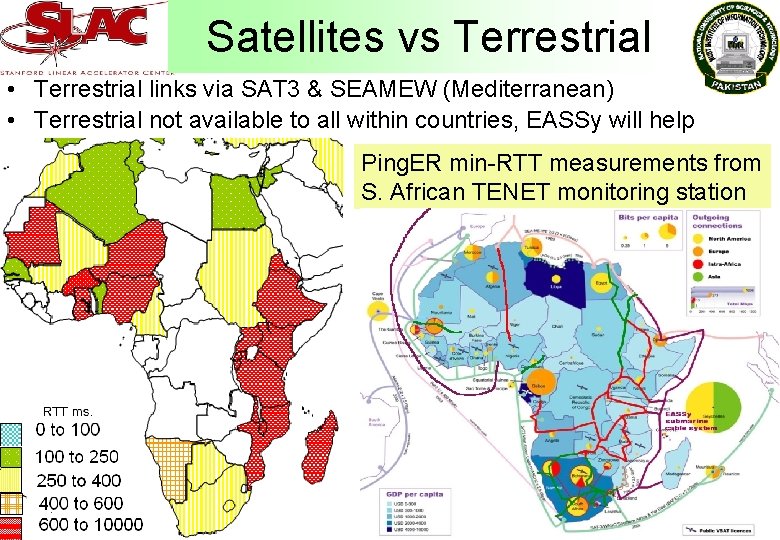

Satellites vs Terrestrial
• Terrestrial links via SAT-3 & SEA-ME-WE (Mediterranean)
• Terrestrial not available to all within countries; EASSy will help
(PingER min-RTT measurements from the S. African TENET monitoring station)

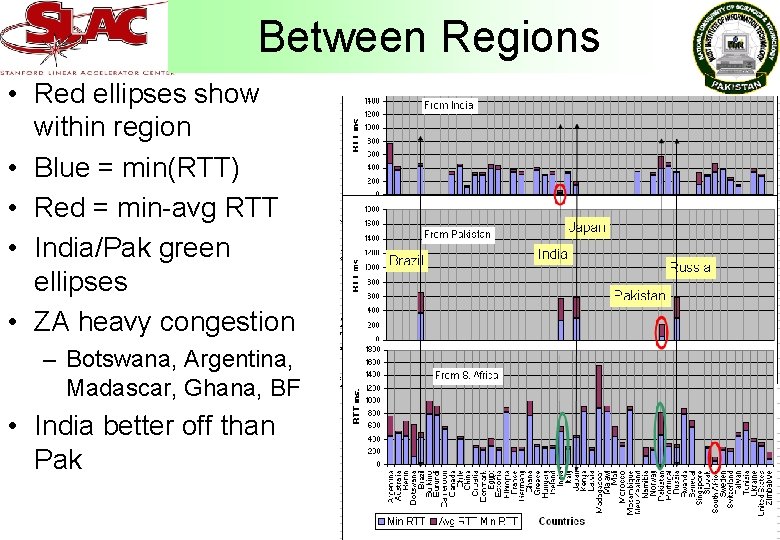

Between Regions
• Red ellipses show within region
• Blue = min(RTT)
• Red = min-avg RTT
• India/Pak green ellipses
• ZA heavy congestion
  – Botswana, Argentina, Madagascar, Ghana, BF
• India better off than Pak

Overall
• Sorted by median throughput
• Within-region performance better (blue ellipses)
• Europe, N. America, E. Asia, Russia generally good
• M. East, Oceania, S.E. Asia, L. America acceptable
• Africa, C. Asia, S. Asia poor
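Sorting regions by median throughput, rather than by mean, keeps a few exceptionally well-connected or badly-connected hosts from distorting a region's rank. This is a minimal sketch with hypothetical per-host throughputs in kbit/s. The function name is also hypothetical.

```python
from statistics import median


def rank_regions(throughput_by_region: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Sort regions by the median of their hosts' throughputs, best first."""
    return sorted(
        ((region, median(values)) for region, values in throughput_by_region.items()),
        key=lambda item: item[1],
        reverse=True,
    )


# Hypothetical per-host throughputs (kbit/s); one outlier host in region "A".
print(rank_regions({"A": [1, 100, 2], "B": [5, 6, 7]}))
```

In this example region "A" has the higher mean because of its single 100 kbit/s host, but it ranks below "B" on the median.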

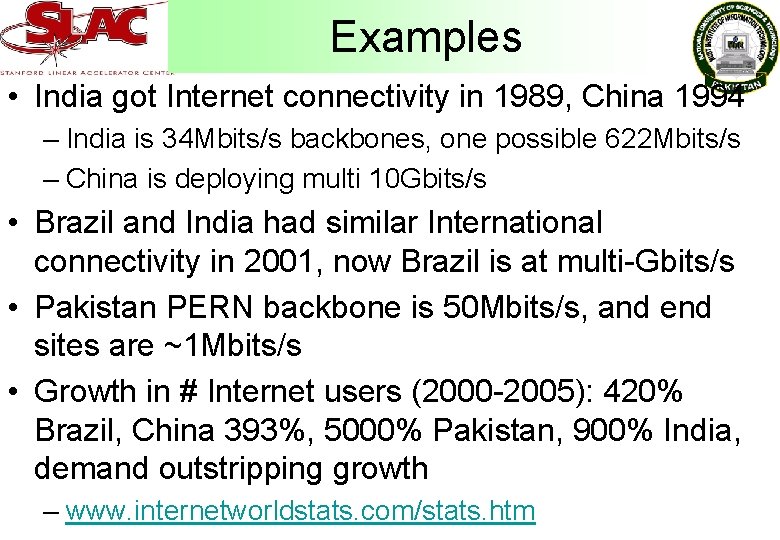

Examples
• India got Internet connectivity in 1989, China in 1994
  – India has 34 Mbits/s backbones, one possibly at 622 Mbits/s
  – China is deploying multiple 10 Gbits/s backbones
• Brazil and India had similar international connectivity in 2001; now Brazil is at multi-Gbits/s
• Pakistan's PERN backbone is 50 Mbits/s, and end sites are ~1 Mbits/s
• Growth in # Internet users (2000-2005): Brazil 420%, China 393%, Pakistan 5000%, India 900%; demand is outstripping growth
  – www.internetworldstats.com/stats.htm
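Total growth percentages over several years are easier to compare when converted into an overall multiplier and a compound yearly rate. This is a minimal sketch that assumes "X% growth" means an increase of X% over the 2000 figure and that the 2000-2005 period spans 5 years. Both are assumptions about how the cited statistics are defined.

```python
def growth_factor(percent_growth: float) -> float:
    """Turn 'X% growth' (increase over the start value) into a multiplier."""
    return 1 + percent_growth / 100


def compound_annual_rate(percent_growth: float, years: float) -> float:
    """Average yearly growth rate that compounds to the given total growth."""
    return growth_factor(percent_growth) ** (1 / years) - 1


for country, pct in {"Brazil": 420, "China": 393, "Pakistan": 5000, "India": 900}.items():
    print(f"{country}: x{growth_factor(pct):.1f} overall, "
          f"~{compound_annual_rate(pct, 5) * 100:.0f}%/year")
```

Under these assumptions Pakistan's 5000% means about 51 times as many users, or roughly 120% per year compounded. A 50 Mbits/s backbone would have to grow at a similar rate to keep per-user capacity constant.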

Conclusions
• S. Asia and Africa are ~10 years behind and falling further behind, creating a Digital Divide within a Digital Divide
• India appears better off than Africa or Pakistan
• Last-mile problems, and network fragility
• Decreasing use of satellites, but still needed for many remote countries in Africa and C. Asia
  – EASSy project will bring fibre to E. Africa
• Growth in # users 2000-2005: 400% Africa, 5000% Pakistan; networks not keeping up
• Need more sites in developing regions and a longer period of measurements

More information
• Thanks to: Harvey Newman & ICFA for encouragement & support; Anil Srivastava (World Bank) & N. Subramanian (Bangalore) for India; NIIT, NTC and PERN for Pakistan monitoring sites; FNAL for PingER management support; Duncan Martin & TENET (ZA).
• Future: work with VSNL & ERNET for India, Julio Ibarra & Eriko Porto for L. America, NIIT & NTC for Pakistan
• Also see:
  – ICFA/SCIC Monitoring report: www.slac.stanford.edu/xorg/icfa-net-paper-jan06/
  – Paper on Africa & S. Asia: www.slac.stanford.edu/grp/scs/net/papers/chep06/paper-final.pdf
  – PingER project: www-iepm.slac.stanford.edu/pinger/

SC|05 Bandwidth Challenge
ESCC Meeting, 9th February ’06
Yee-Ting Li, Stanford Linear Accelerator Center

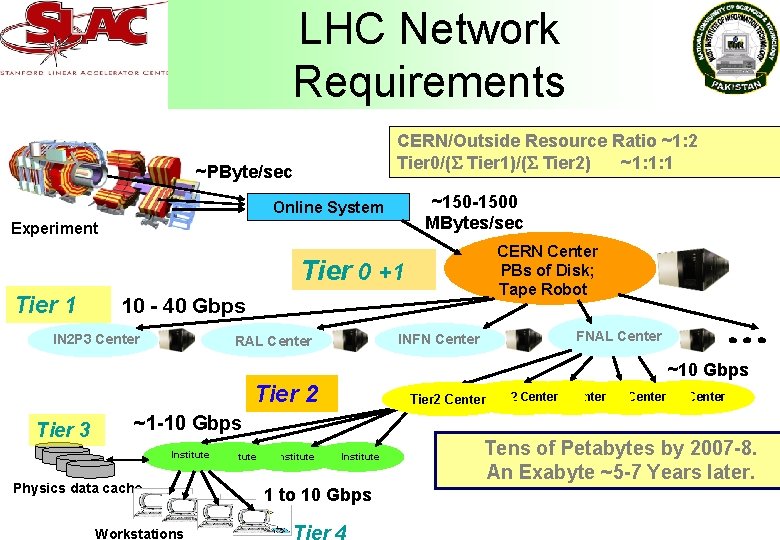

LHC Network Requirements
(Diagram of the LHC tiered model: Experiment → Online System at ~PByte/sec; Online System → CERN Tier 0+1 Center (PBs of disk, tape robot) at ~150-1500 MBytes/sec; Tier 0+1 → Tier 1 centers (IN2P3, INFN, RAL, FNAL) at 10-40 Gbps; Tier 1 → Tier 2 centers at ~10 Gbps; Tier 2 → Tier 3 institutes (physics data cache) at ~1-10 Gbps; institutes → workstations at 1 to 10 Gbps)
• CERN/Outside Resource Ratio ~1:2
• Tier 0/(Σ Tier 1)/(Σ Tier 2) ~1:1:1
• Tens of Petabytes by 2007-8; an Exabyte ~5-7 years later

Overview
• Bandwidth Challenge
  – ‘The Bandwidth Challenge highlights the best and brightest in new techniques for creating and utilizing vast rivers of data that can be carried across advanced networks.’
  – Transfer as much data as possible using real applications over a 2 hour window
• We did…
  – Distributed TeraByte Particle Physics Data Sample Analysis
  – ‘Demonstrated high speed transfers of particle physics data between host labs and collaborating institutes in the USA and worldwide. Using state of the art WAN infrastructure and Grid Web Services based on the LHC Tiered Architecture, they showed realtime particle event analysis requiring transfers of Terabyte-scale datasets.’

Overview
• In detail, during the bandwidth challenge (2 hours):
  – 131 Gbps measured by the SCInet BWC team on 17 of our waves (15 minute average)
  – 95.37 TB of data transferred
    • (3.8 DVDs per second)
  – 90-150 Gbps (peak 150.7 Gbps)
• On the day of the challenge
  – Transferred ~475 TB ‘practising’ (waves were shared, still tuning applications and hardware)
  – Peak one-way USN utilisation observed on a single link was 9.1 Gbps (Caltech) and 8.4 Gbps (SLAC)
• Also wrote to StorCloud
  – SLAC: wrote 3.2 TB in 1649 files during BWC
  – Caltech: 6 GB/sec with 20 nodes
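As a quick consistency check, the sketch below assumes decimal units (1 TB = 10^12 bytes) and that the 95.37 TB was spread over the full 2-hour window. The average rate comes out at roughly 106 Gbit/s, which lies inside the 90-150 Gbps range reported for the challenge. The function name is hypothetical.

```python
def average_gbps(terabytes: float, seconds: float) -> float:
    """Average rate in Gbit/s for a volume moved over a window (1 TB = 10**12 bytes)."""
    return terabytes * 1e12 * 8 / seconds / 1e9


print(f"{average_gbps(95.37, 2 * 3600):.1f} Gbit/s average")
```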

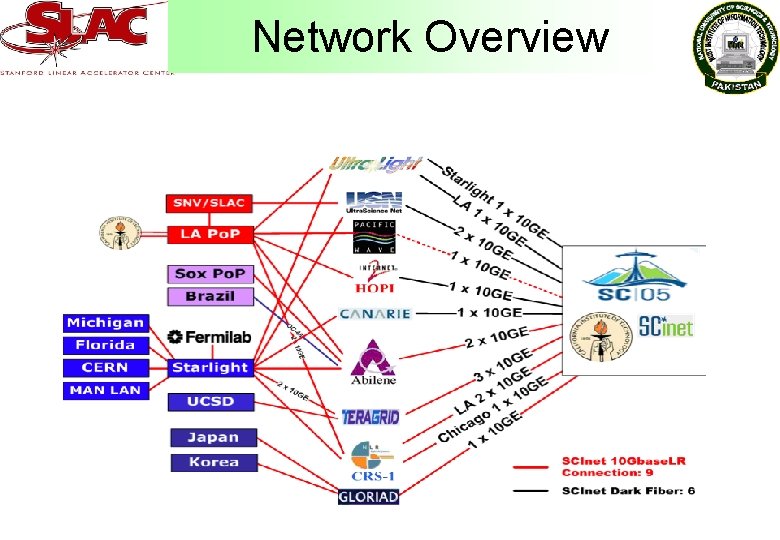

Networking Overview
• We had 22 10 Gbits/s waves to the Caltech and SLAC/FNAL booths. Of these:
  – 15 waves to the Caltech booth (from Florida (1), Korea/GLORIAD (1), Brazil (1 * 2.5 Gbits/s), Caltech (2), LA (2), UCSD, CERN (2), U Michigan (3), FNAL (2))
  – 7 x 10 Gbits/s waves to the SLAC/FNAL booth (2 from SLAC, 1 from the UK, and 4 from FNAL)
• The waves were provided by Abilene, CANARIE, Cisco (5), ESnet (3), GLORIAD (1), HOPI (1), Michigan Light Rail (MiLR), National Lambda Rail (NLR), TeraGrid (3) and UltraScience Net (4).

Network Overview

Hardware (SLAC only)
• At SLAC:
  – 14 x 1.8 GHz Sun V20z (Dual Opteron)
  – 2 x Sun 3500 disk trays (2 TB of storage)
  – 12 x Chelsio T110 10 Gb NICs (LR)
  – 2 x Neterion/S2io Xframe I (SR)
  – Dedicated Cisco 6509 with 4 x 4 x 10 Gb blades
• At SC|05:
  – 14 x 2.6 GHz Sun V20z (Dual Opteron)
  – 10 QLogic HBAs for StorCloud access
  – 50 TB storage at SC|05 provided by 3PAR (shared with Caltech)
  – 12 x Neterion/S2io Xframe I NICs (SR)
  – 2 x Chelsio T110 NICs (LR)
  – Shared Cisco 6509 with 6 x 4 x 10 Gb blades

Hardware at SC|05

Software
• BBCP ‘Babar File Copy’
  – Uses ‘ssh’ for authentication
  – Multiple stream capable
  – Features ‘rate synchronisation’ to reduce byte retransmissions
  – Sustained over 9 Gbps on a single session
• XrootD
  – Library for transparent file access (standard Unix file functions)
  – Designed primarily for LAN access (transaction-based protocol)
  – Managed over 35 Gbit/sec (in two directions) on 2 x 10 Gbps waves
  – Transferred 18 TBytes in 257,913 files
• dCache
  – 20 Gbps production and test cluster traffic
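Sustaining close to 10 Gbps over a wide-area path needs a very large amount of data in flight. This is one reason multi-stream tools such as BBCP help. The sketch below illustrates the general bandwidth-delay-product arithmetic and does not describe BBCP's internals. It assumes a hypothetical 70 ms round-trip time and an even split of the window across parallel TCP streams.

```python
def bdp_bytes(rate_bps: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to fill the path."""
    return rate_bps * rtt_s / 8


def per_stream_window_bytes(rate_bps: float, rtt_s: float, streams: int) -> float:
    """TCP window each of `streams` parallel streams needs to share the BDP evenly."""
    if streams < 1:
        raise ValueError("need at least one stream")
    return bdp_bytes(rate_bps, rtt_s) / streams


# Hypothetical 10 Gbit/s path with a 70 ms round-trip time.
rate, rtt = 10e9, 0.070
print(f"BDP: {bdp_bytes(rate, rtt) / 1e6:.1f} MB")
for n in (1, 4, 8):
    print(f"{n} stream(s): {per_stream_window_bytes(rate, rtt, n) / 1e6:.1f} MB each")
```

A single stream would need an 87.5 MB window on such a path. Splitting the load across streams reduces each stream's window and limits how much throughput a single loss event costs, at the price of more connections to manage.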

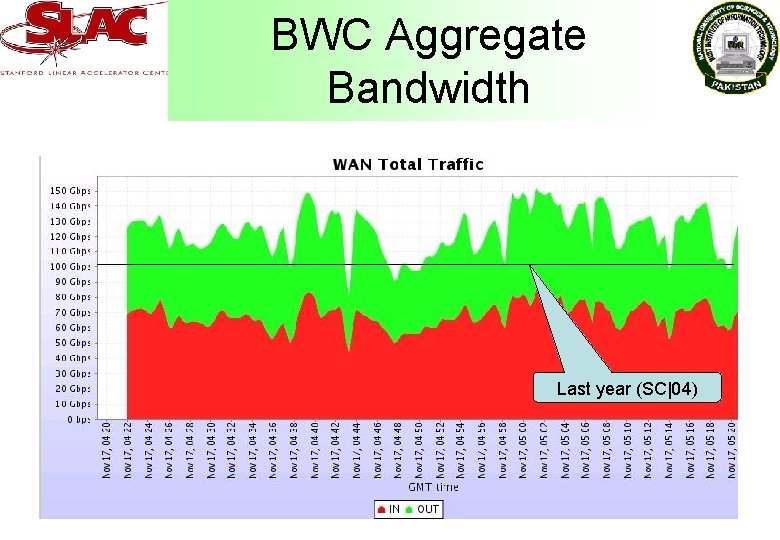

BWC Aggregate Bandwidth Last year (SC|04)

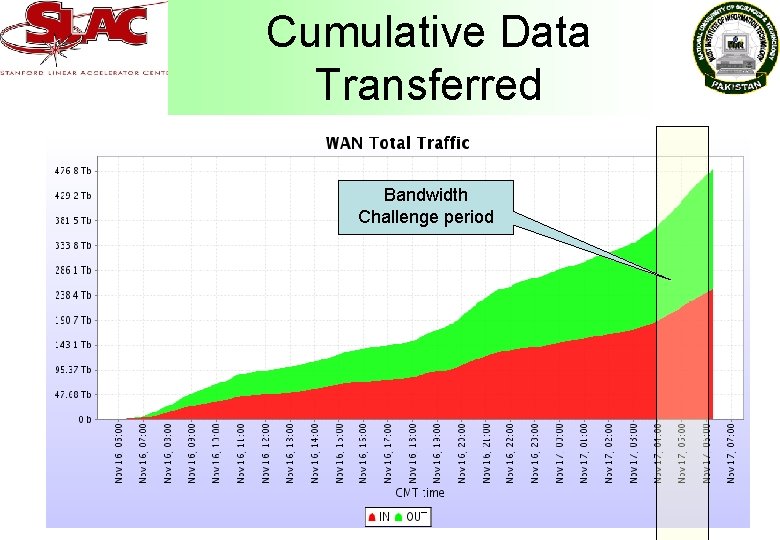

Cumulative Data Transferred Bandwidth Challenge period

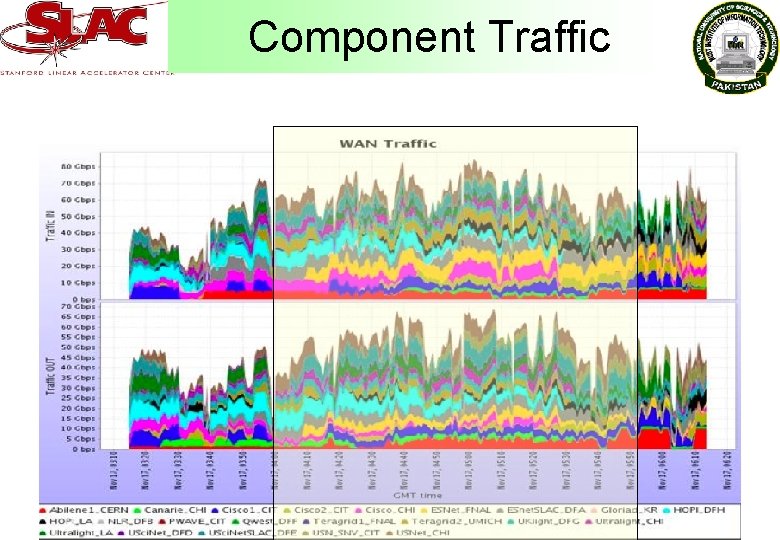

Component Traffic

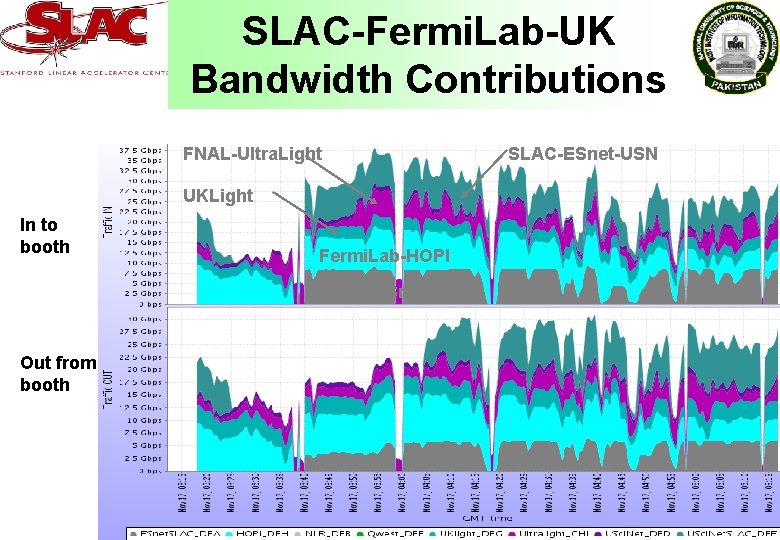

SLAC-FermiLab-UK Bandwidth Contributions
(Figure series: FNAL-UltraLight, UKLight, FermiLab-HOPI, SLAC-ESnet, SLAC-ESnet-USN; traffic in to and out from the booth)

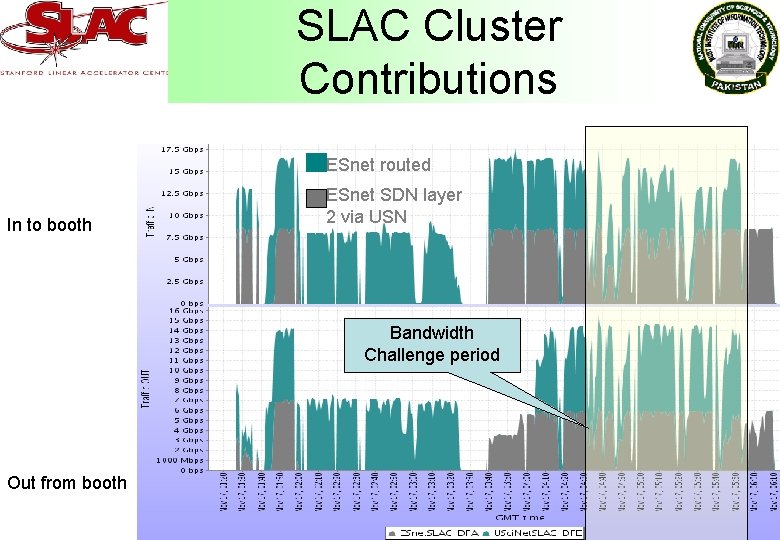

SLAC Cluster Contributions
(Figure series: ESnet routed; ESnet SDN layer 2 via USN; traffic in to and out from the booth, Bandwidth Challenge period marked)

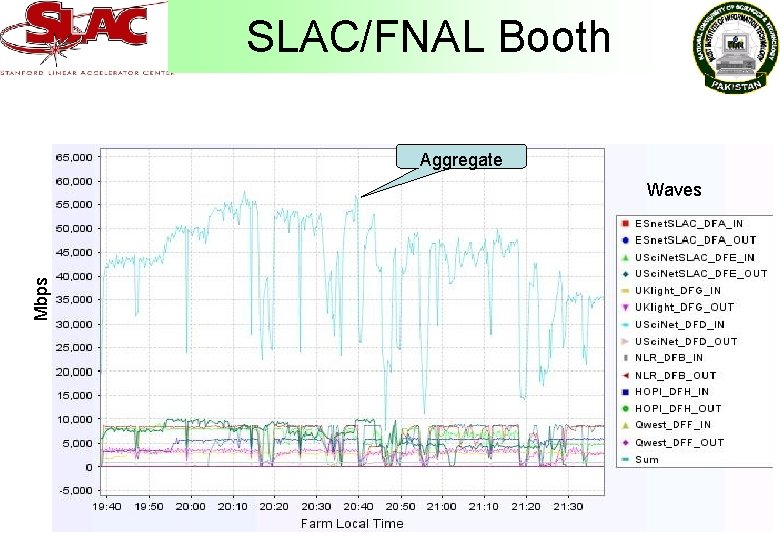

SLAC/FNAL Booth Aggregate
(Figure: Mbps, by wave)

Problems…
• Managerial/PR
  – Initial request for loan hardware took place 6 months in advance!
  – Lots and lots of paperwork to keep account of all loan equipment
• Logistical
  – Set up and tore down a pseudo-production network and servers in the space of a week!
  – Testing could not begin until waves were alight
    • Most waves lit the day before the challenge!
  – Shipping so much hardware is not cheap!
  – Setting up monitoring

Problems…
• Tried to configure hardware and software prior to the show
• Hardware
  – NICs
    • We had 3 bad Chelsios (bad memory)
    • Xframe IIs did not work in UKLight’s Boston machines
  – Hard disks
    • 3 dead 10K RPM disks (had to ship in spares)
  – 1 x 4-port 10 Gb blade DOA
  – MTU mismatch between domains
  – Router blade died during stress testing the day before the BWC!
  – Cables!
• Software
  – Used golden disks for duplication (still takes 30 minutes per disk to replicate!)
  – Linux kernels:
    • Initially used 2.6.14, found severe performance problems compared to 2.6.12
  – (New) router firmware caused crashes under heavy load
    • Unfortunately, only discovered just before the BWC
    • Had to manually restart the affected ports during the BWC

Problems
• Most transfers were from memory to memory (Ramdisk etc.)
  – Local caching of (small) files in memory
  – Reading and writing to disk will be the next bottleneck to overcome

Conclusion
• Previewed the IT challenges of the next generation of data-intensive science applications (high energy physics, astronomy etc.)
  – Petabyte-scale datasets
  – Tens of national and transoceanic links at 10 Gbps (and up)
  – 100+ Gbps aggregate data transport sustained for hours; we reached a Petabyte/day transport rate for real physics data
• Learned to gauge the difficulty of the global networks and transport systems required for the LHC mission
  – Set up, shook down and successfully ran the systems in < 1 week
  – Understood and optimized the configurations of various components (network interfaces, routers/switches, OS, TCP kernels, applications) for high performance over the wide area network
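The link between "100+ Gbps sustained" and "a Petabyte/day" is simple unit arithmetic. This is a minimal sketch that assumes decimal units (1 PB = 10^15 bytes). The function names are hypothetical.

```python
SECONDS_PER_DAY = 86_400


def petabytes_per_day(gbps: float) -> float:
    """Volume moved in one day at a sustained rate (1 PB = 10**15 bytes)."""
    return gbps * 1e9 / 8 * SECONDS_PER_DAY / 1e15


def gbps_for_petabytes_per_day(pb_per_day: float) -> float:
    """Sustained rate needed to move pb_per_day petabytes in one day."""
    return pb_per_day * 1e15 * 8 / SECONDS_PER_DAY / 1e9


print(f"{petabytes_per_day(100):.2f} PB/day at 100 Gbps")
print(f"{gbps_for_petabytes_per_day(1):.1f} Gbps for 1 PB/day")
```

A sustained 100 Gbps moves about 1.08 PB per day. Conversely, a Petabyte/day requires roughly 92.6 Gbps around the clock.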

Conclusion
• Products of the exercise:
  – An optimized Linux kernel (2.6.12 + NFSv4 + FAST and other TCP stacks) for data transport, after 7 full kernel-build cycles in 4 days
  – A newly optimized application-level copy program, bbcp, that matches the performance of iperf under some conditions
  – Extensions of Xrootd, an optimized low-latency file access application for clusters, across the wide area
  – Understanding of the limits of 10 Gbps-capable systems under stress
  – How to effectively utilize 10 GE and 1 GE connected systems to drive 10 gigabit wavelengths in both directions
  – Use of production and test clusters at FNAL reaching more than 20 Gbps of network throughput
• Significant efforts remain from the perspective of high-energy physics
  – Management, integration and optimization of network resources
  – End-to-end capabilities able to utilize these network resources, including applications and IO devices (disk and storage systems)

Press and PR
• 11/8/05 - Brit Boffins aim to Beat LAN speed record, from vnunet.com
• SC|05 Bandwidth Challenge, SLAC Interaction Point
• Top Researchers, Projects in High Performance Computing Honored at SC/05... Business Wire (press release) - San Francisco, CA, USA
• 11/18/05 - Official Winner Announcement
• 11/18/05 - SC|05 Bandwidth Challenge Slide Presentation
• 11/23/05 - Bandwidth Challenge Results, from Slashdot
• 12/6/05 - Caltech press release
• 12/6/05 - Neterion Enables High Energy Physics Team to Beat World Record Speed at SC05 Conference, CCNMatthews News Distribution Experts
• High energy physics team captures network prize at SC|05, from SLAC
• High energy physics team captures network prize at SC|05, EurekAlert!
• 12/7/05 - High Energy Physics Team Smashes Network Record, from Science Grid This Week
• Congratulations to our Research Partners for a New Bandwidth Record at SuperComputing 2005, from Neterion
