Standards for Machine Learning, Inferencing and Vision Acceleration

- Slides: 27

Standards for Machine Learning, Inferencing and Vision Acceleration
Neil Trevett | Khronos President
NVIDIA | VP Developer Ecosystems

This work is licensed under a Creative Commons Attribution 4.0 International License. © The Khronos® Group Inc. 2018

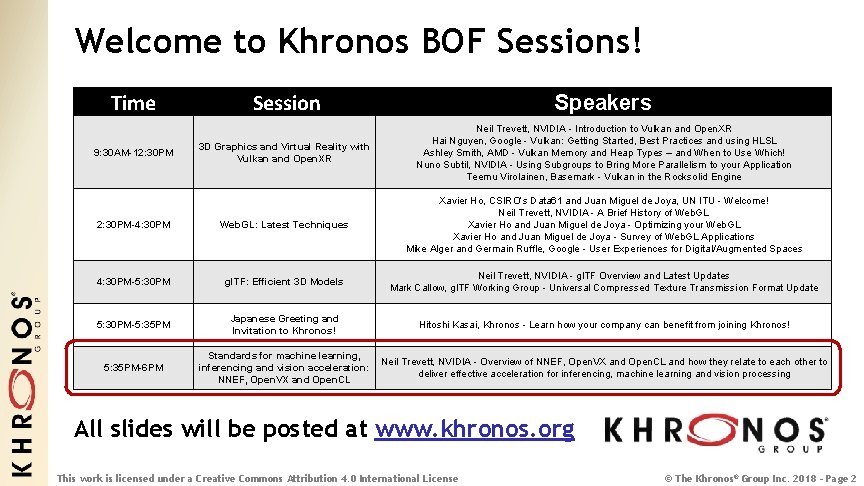

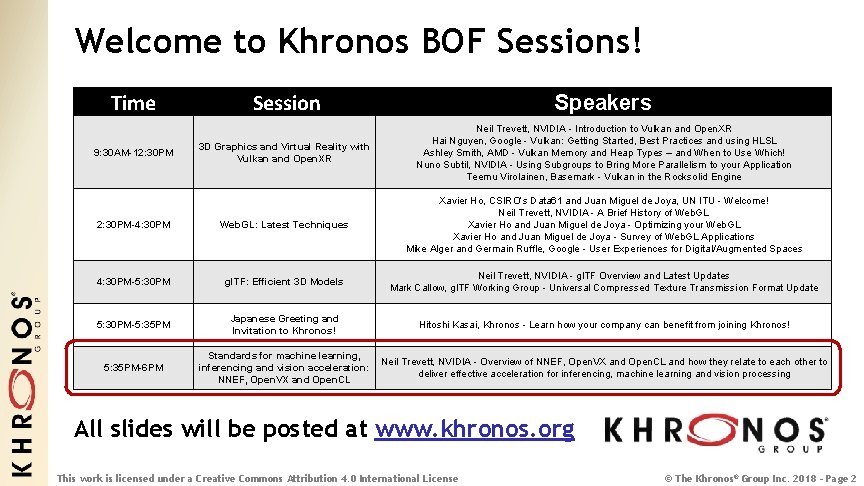

Welcome to Khronos BOF Sessions!

- 9:30 AM-12:30 PM: 3D Graphics and Virtual Reality with Vulkan and OpenXR
  - Neil Trevett, NVIDIA - Introduction to Vulkan and OpenXR
  - Hai Nguyen, Google - Vulkan: Getting Started, Best Practices and using HLSL
  - Ashley Smith, AMD - Vulkan Memory and Heap Types – and When to Use Which!
  - Nuno Subtil, NVIDIA - Using Subgroups to Bring More Parallelism to your Application
  - Teemu Virolainen, Basemark - Vulkan in the Rocksolid Engine
- 2:30 PM-4:30 PM: WebGL: Latest Techniques
  - Xavier Ho, CSIRO’s Data61 and Juan Miguel de Joya, UN ITU - Welcome!
  - Neil Trevett, NVIDIA - A Brief History of WebGL
  - Xavier Ho and Juan Miguel de Joya - Optimizing your WebGL
  - Xavier Ho and Juan Miguel de Joya - Survey of WebGL Applications
  - Mike Alger and Germain Ruffle, Google - User Experiences for Digital/Augmented Spaces
- 4:30 PM-5:30 PM: glTF: Efficient 3D Models
  - Neil Trevett, NVIDIA - glTF Overview and Latest Updates
  - Mark Callow, glTF Working Group - Universal Compressed Texture Transmission Format Update
- 5:30 PM-5:35 PM: Japanese Greeting and Invitation to Khronos!
  - Hitoshi Kasai, Khronos - Learn how your company can benefit from joining Khronos!
- 5:35 PM-6:00 PM: Standards for machine learning, inferencing and vision acceleration: NNEF, OpenVX and OpenCL
  - Neil Trevett, NVIDIA - Overview of NNEF, OpenVX and OpenCL and how they relate to each other to deliver effective acceleration for inferencing, machine learning and vision processing

All slides will be posted at www.khronos.org.

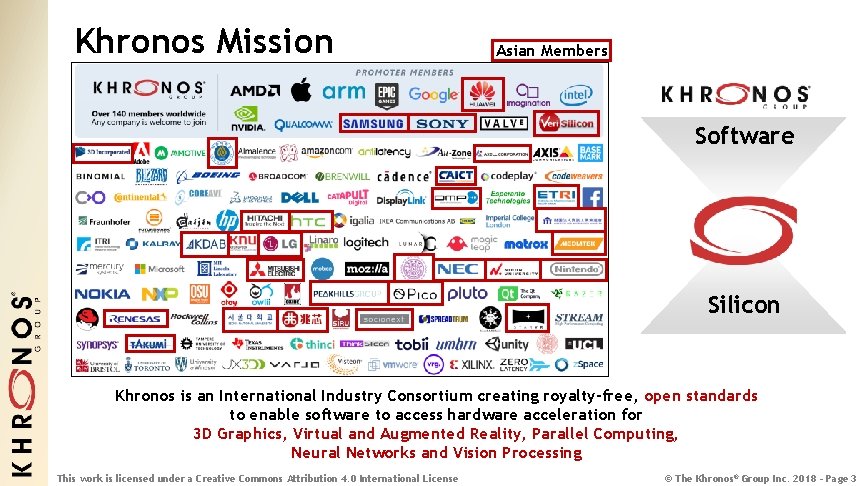

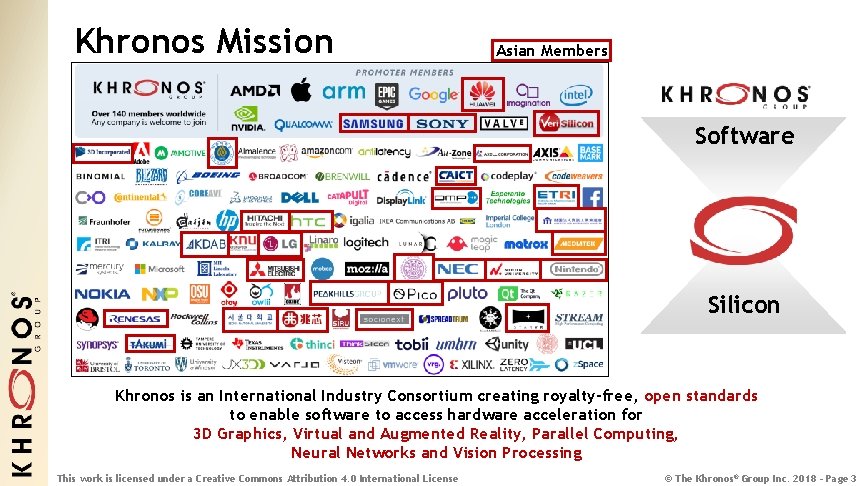

Khronos Mission

Khronos is an international industry consortium creating royalty-free, open standards that enable software to access hardware acceleration for 3D graphics, virtual and augmented reality, parallel computing, neural networks and vision processing.

Slide graphic: Asian member companies, grouped into Software and Silicon members.

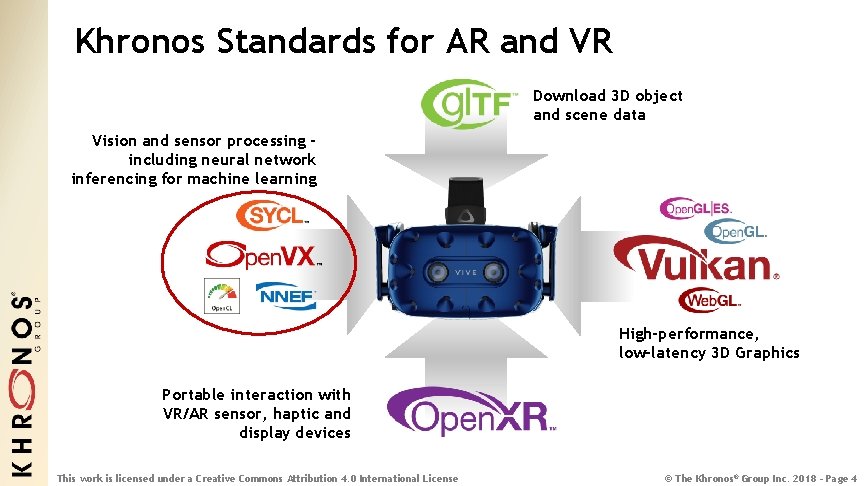

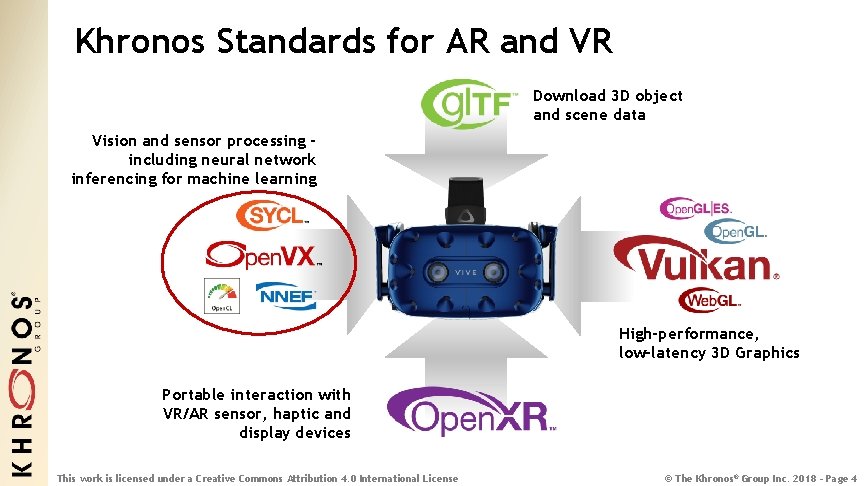

Khronos Standards for AR and VR
- Download 3D object and scene data
- Vision and sensor processing, including neural network inferencing for machine learning
- High-performance, low-latency 3D graphics
- Portable interaction with VR/AR sensor, haptic and display devices

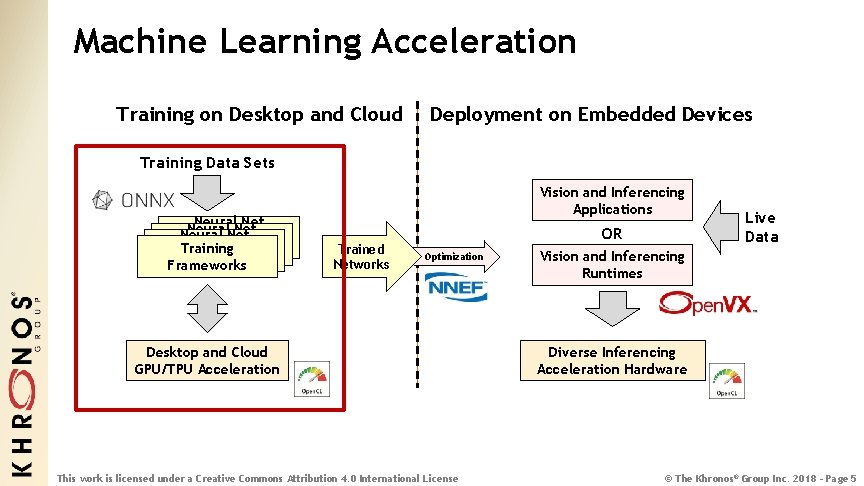

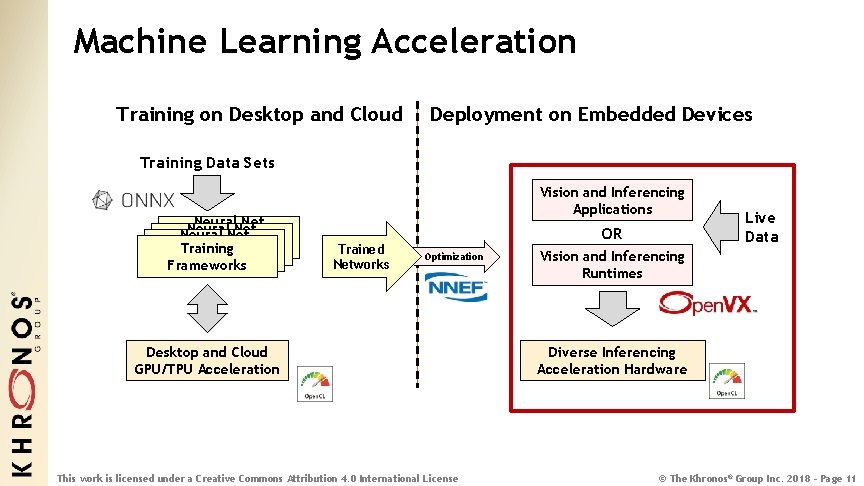

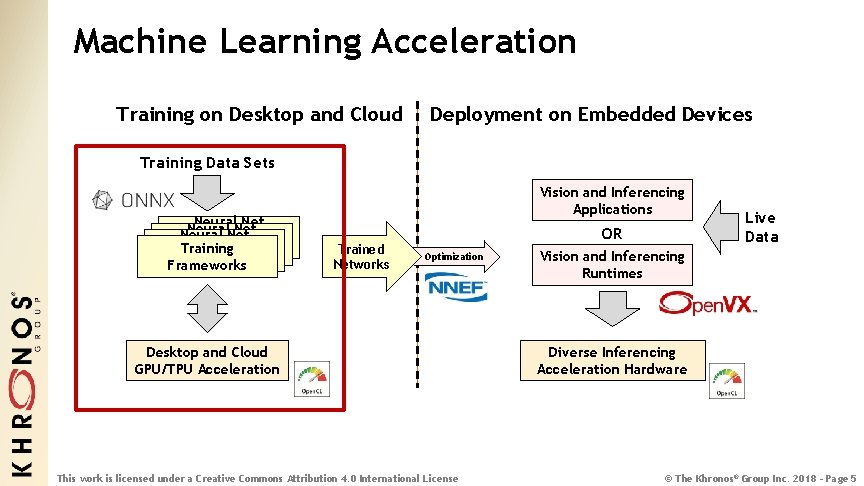

Machine Learning Acceleration
- Training on desktop and cloud: training data sets feed neural net training frameworks, accelerated by desktop and cloud GPU/TPU hardware, to produce trained networks.
- Deployment on embedded devices: trained networks, either directly or after optimization, are loaded into vision and inferencing runtimes that process live data for vision and inferencing applications on diverse inferencing acceleration hardware.

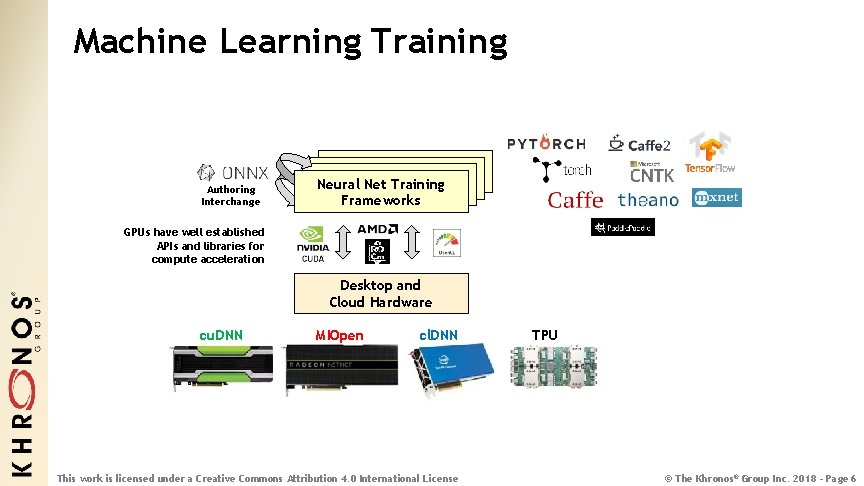

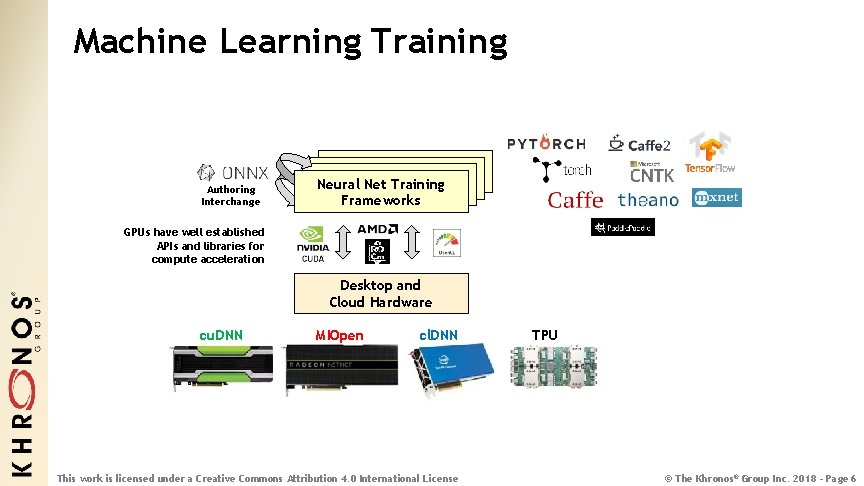

Machine Learning Training
- Neural net training frameworks handle network authoring and interchange, running on desktop and cloud hardware (GPUs and TPUs).
- GPUs have well-established APIs and libraries for compute acceleration, e.g. cuDNN, MIOpen and clDNN.

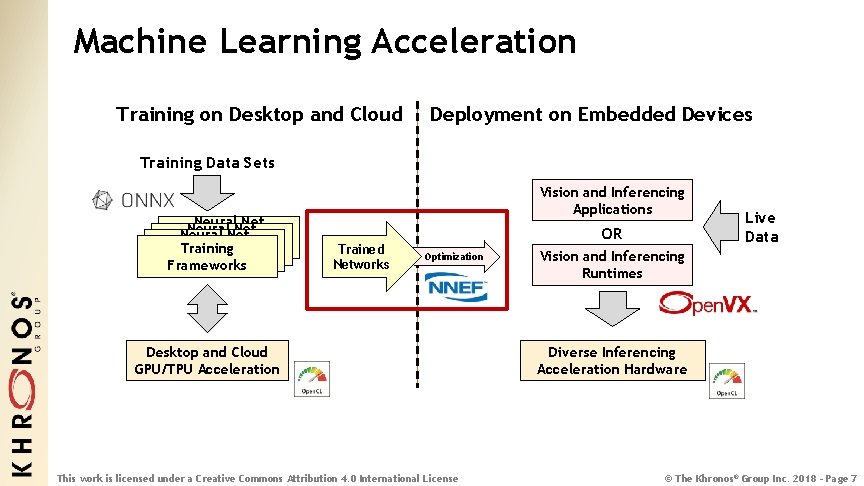

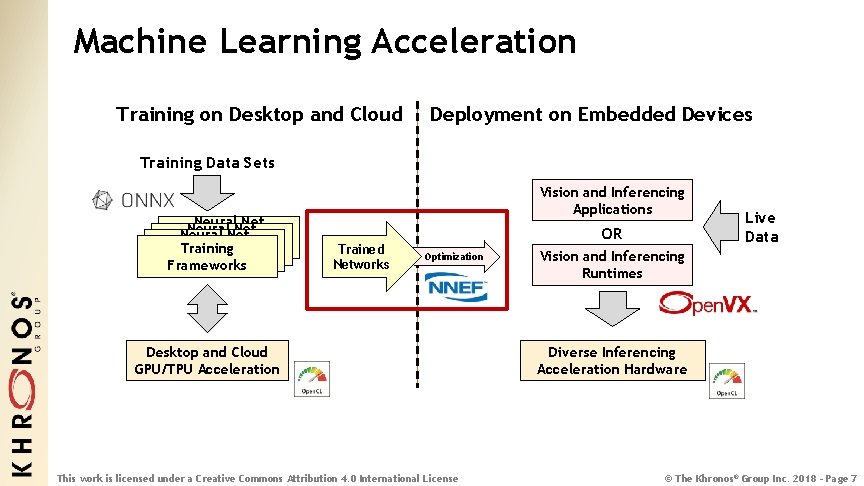

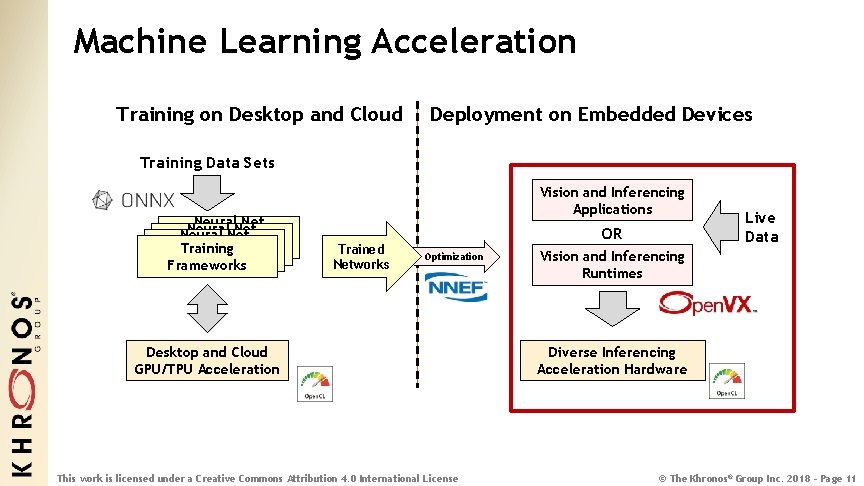

Machine Learning Acceleration Training on Desktop and Cloud Deployment on Embedded Devices Training Data Sets Neural. Net Neural Net Training Frameworks Vision and Inferencing Applications Trained Networks OR Optimization Desktop and Cloud GPU/TPU Acceleration This work is licensed under a Creative Commons Attribution 4. 0 International License Live Data Vision and Inferencing Runtimes Diverse Inferencing Acceleration Hardware © The Khronos® Group Inc. 2018 - Page 7

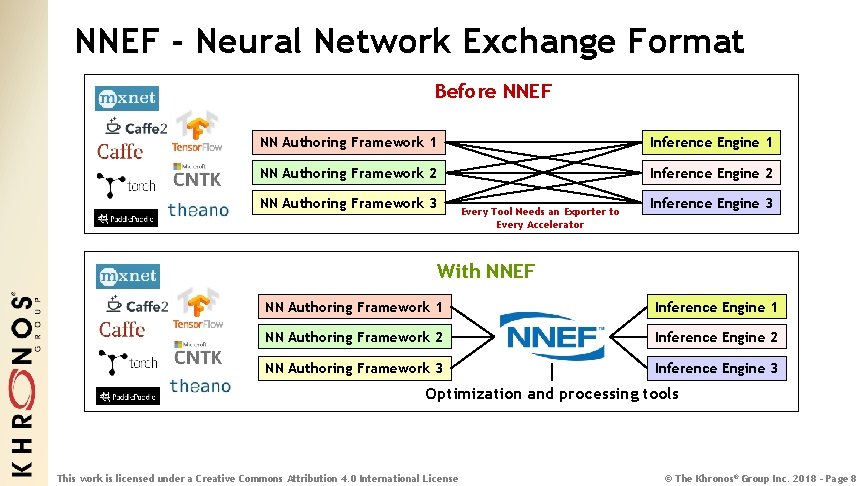

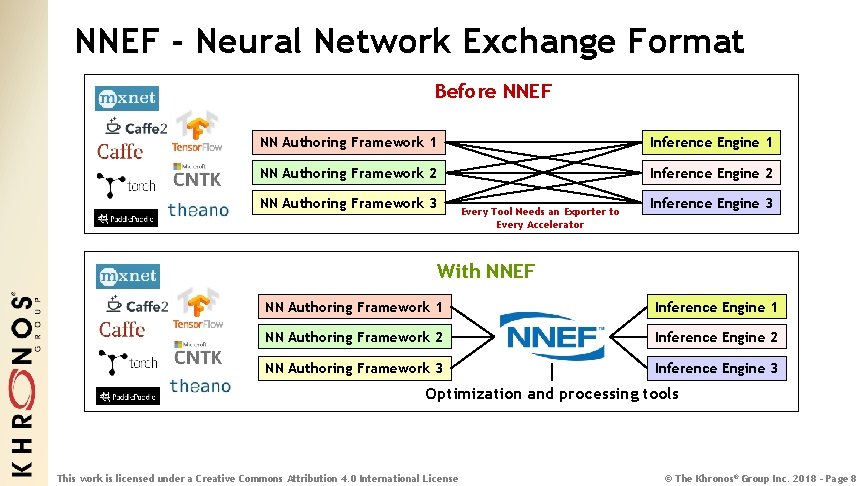

NNEF - Neural Network Exchange Format
- Before NNEF: every tool needs an exporter to every accelerator. NN Authoring Frameworks 1, 2 and 3 each need a separate path to Inference Engines 1, 2 and 3.
- With NNEF: each authoring framework exports to NNEF and each inference engine imports NNEF, and optimization and processing tools can operate on the common NNEF representation.
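
The argument on this slide is combinatorial. A minimal Python sketch, assuming one dedicated exporter per (framework, engine) pair without an exchange format, versus one exporter per framework plus one importer per engine with it; the function names are illustrative only:

```python
def exporters_without_exchange_format(frameworks: int, engines: int) -> int:
    """Point-to-point: each framework needs its own exporter for each engine."""
    return frameworks * engines


def converters_with_exchange_format(frameworks: int, engines: int) -> int:
    """Hub-and-spoke: one exporter per framework plus one importer per engine."""
    return frameworks + engines


if __name__ == "__main__":
    for n, m in [(3, 3), (10, 20)]:
        print(
            f"{n} frameworks x {m} engines: "
            f"{exporters_without_exchange_format(n, m)} point-to-point exporters, "
            f"{converters_with_exchange_format(n, m)} converters with a shared format"
        )
```

For the 3 x 3 case drawn on the slide this gives 9 exporters versus 6 converters; the gap widens quickly, e.g. 200 versus 30 for 10 frameworks and 20 engines.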

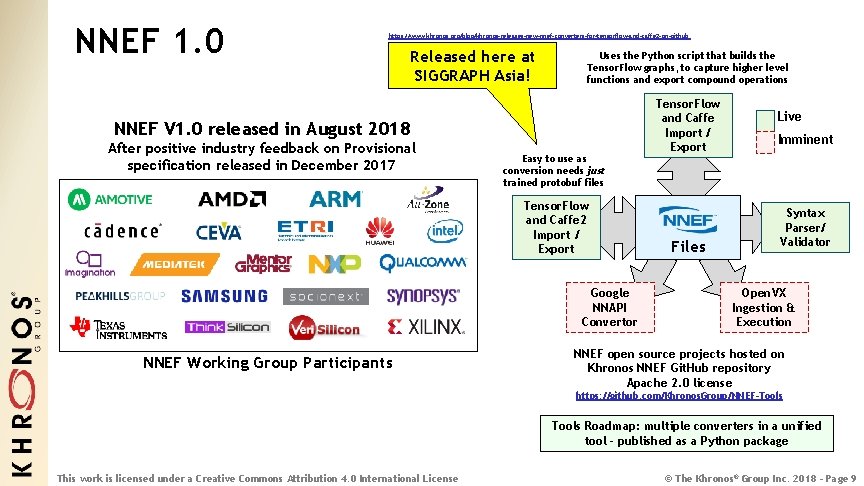

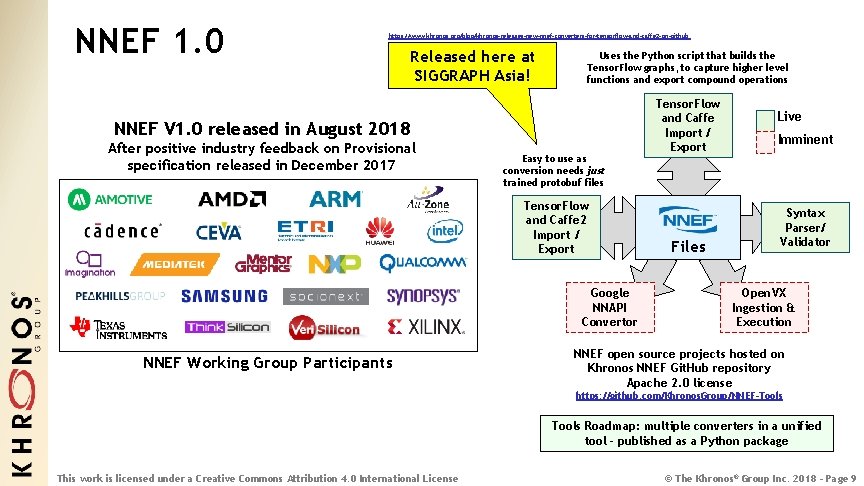

NNEF 1.0
- Provisional specification released in December 2017; NNEF V1.0 released in August 2018 after positive industry feedback
- NNEF open source projects are hosted on the Khronos NNEF GitHub repository under the Apache 2.0 license: https://github.com/KhronosGroup/NNEF-Tools
- TensorFlow and Caffe2 import/export, released here at SIGGRAPH Asia! https://www.khronos.org/blog/khronos-releases-new-nnef-converters-for-tensorflow-and-caffe2-on-github
  - Uses the Python script that builds the TensorFlow graphs, to capture higher-level functions and export compound operations
  - Easy to use, as conversion needs just the trained protobuf files
- Other projects (live or imminent): TensorFlow and Caffe import/export files, syntax parser/validator, OpenVX ingestion and execution, Google NNAPI converter
- Roadmap: multiple converters in a unified tool, published as a Python package

Slide graphic: NNEF Working Group participants.

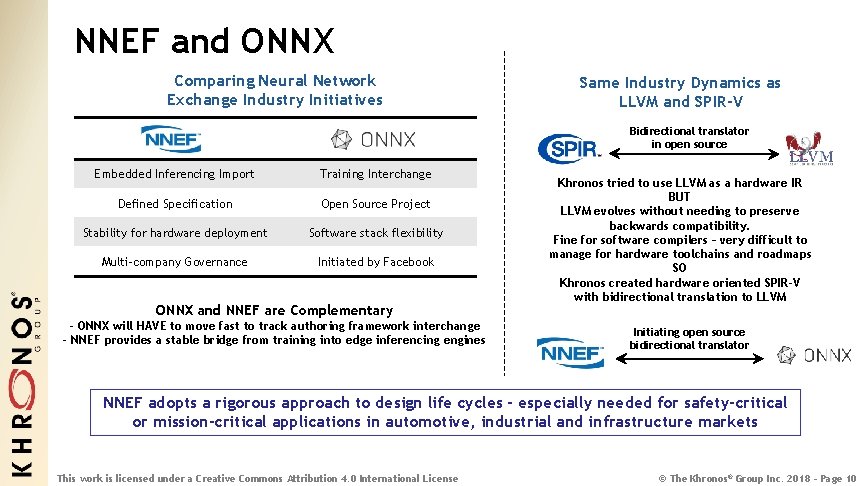

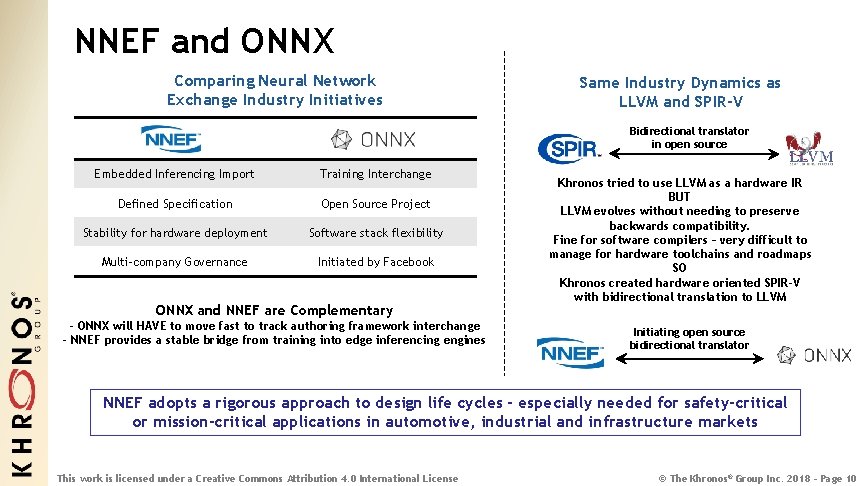

NNEF and ONNX: Comparing Neural Network Exchange Industry Initiatives

| NNEF | ONNX |
|---|---|
| Embedded inferencing import | Training interchange |
| Defined specification | Open source project |
| Stability for hardware deployment | Software stack flexibility |
| Multi-company governance | Initiated by Facebook |

ONNX and NNEF are complementary:
- ONNX will HAVE to move fast to track authoring framework interchange
- NNEF provides a stable bridge from training into edge inferencing engines
- Khronos is initiating an open source bidirectional translator between the two

Same industry dynamics as LLVM and SPIR-V: Khronos tried to use LLVM as a hardware IR, BUT LLVM evolves without needing to preserve backwards compatibility. That is fine for software compilers but very difficult to manage for hardware toolchains and roadmaps, SO Khronos created the hardware-oriented SPIR-V, with a bidirectional translator to LLVM in open source.

NNEF adopts a rigorous approach to design life cycles, especially needed for safety-critical or mission-critical applications in automotive, industrial and infrastructure markets.

Machine Learning Acceleration Training on Desktop and Cloud Deployment on Embedded Devices Training Data Sets Neural. Net Neural Net Training Frameworks Vision and Inferencing Applications Trained Networks OR Optimization Desktop and Cloud GPU/TPU Acceleration This work is licensed under a Creative Commons Attribution 4. 0 International License Live Data Vision and Inferencing Runtimes Diverse Inferencing Acceleration Hardware © The Khronos® Group Inc. 2018 - Page 11

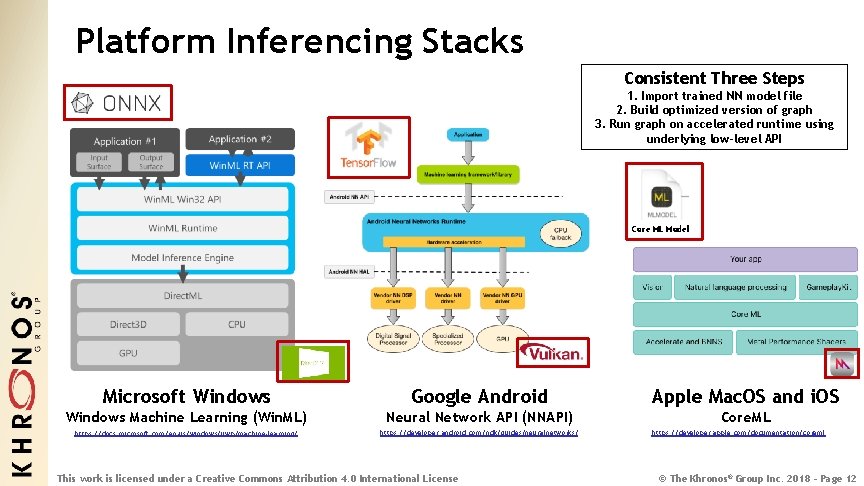

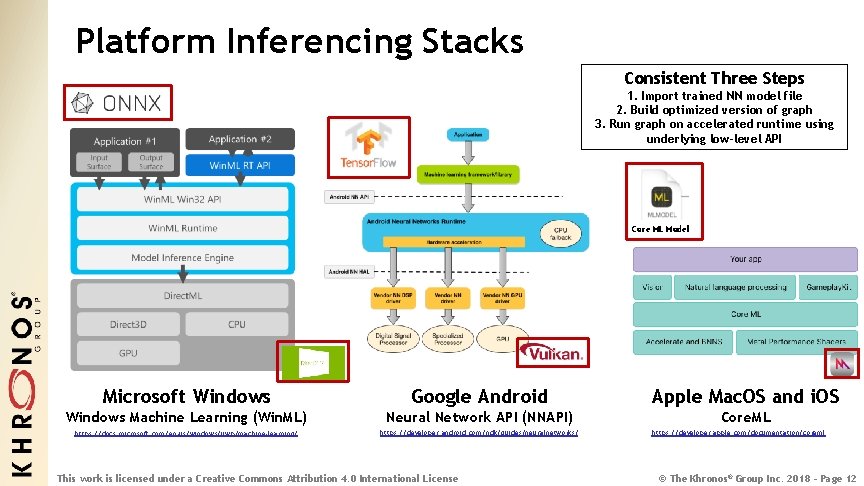

Platform Inferencing Stacks

Consistent three steps:
1. Import trained NN model file
2. Build optimized version of graph
3. Run graph on accelerated runtime using underlying low-level API

- Microsoft Windows: Windows Machine Learning (WinML), https://docs.microsoft.com/en-us/windows/uwp/machine-learning/
- Google Android: Neural Network API (NNAPI), https://developer.android.com/ndk/guides/neuralnetworks/
- Apple macOS and iOS: Core ML, https://developer.apple.com/documentation/coreml
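
The three steps shared by these platform stacks can be sketched in plain Python. This is a hypothetical illustration, not the API of WinML, NNAPI or Core ML: the "model file" is assumed to be a list of (op, parameter) pairs, the optimization pass merely merges consecutive scale ops, and Python lambdas stand in for the accelerated low-level API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical model format: an ordered list of (op_name, parameter) pairs.
Op = Tuple[str, Optional[float]]


@dataclass
class Graph:
    ops: List[Op] = field(default_factory=list)


def import_model(model_file: List[Op]) -> Graph:
    """Step 1: import the trained network description."""
    return Graph(list(model_file))


def optimize(graph: Graph) -> Graph:
    """Step 2: build an optimized graph. As a stand-in for real graph passes,
    merge consecutive 'scale' ops by multiplying their factors."""
    out: List[Op] = []
    for op, arg in graph.ops:
        if op == "scale" and out and out[-1][0] == "scale":
            out[-1] = ("scale", out[-1][1] * arg)
        else:
            out.append((op, arg))
    return Graph(out)


# Stand-in for the accelerated low-level API: plain Python kernels.
KERNELS: Dict[str, Callable[[List[float], Optional[float]], List[float]]] = {
    "scale": lambda xs, a: [x * a for x in xs],
    "bias": lambda xs, b: [x + b for x in xs],
    "relu": lambda xs, _: [max(0.0, x) for x in xs],
}


def run(graph: Graph, inputs: List[float]) -> List[float]:
    """Step 3: execute each op through the runtime's kernels, in order."""
    data = inputs
    for op, arg in graph.ops:
        data = KERNELS[op](data, arg)
    return data


if __name__ == "__main__":
    model = [("scale", 2.0), ("scale", 0.5), ("bias", -1.0), ("relu", None)]
    graph = optimize(import_model(model))
    print(graph.ops)                    # [('scale', 1.0), ('bias', -1.0), ('relu', None)]
    print(run(graph, [1.0, 2.0, 3.0]))  # [0.0, 1.0, 2.0]
```

Separating import, optimization and execution means the optimized graph can be built once and then run many times on live data.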

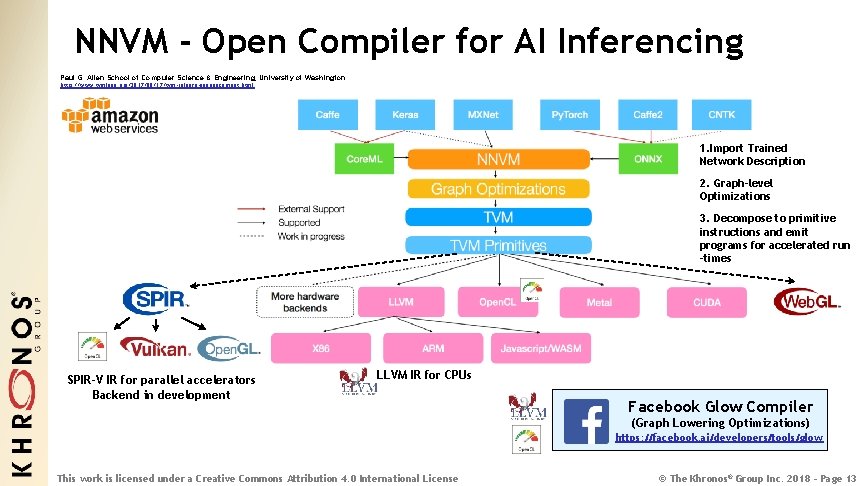

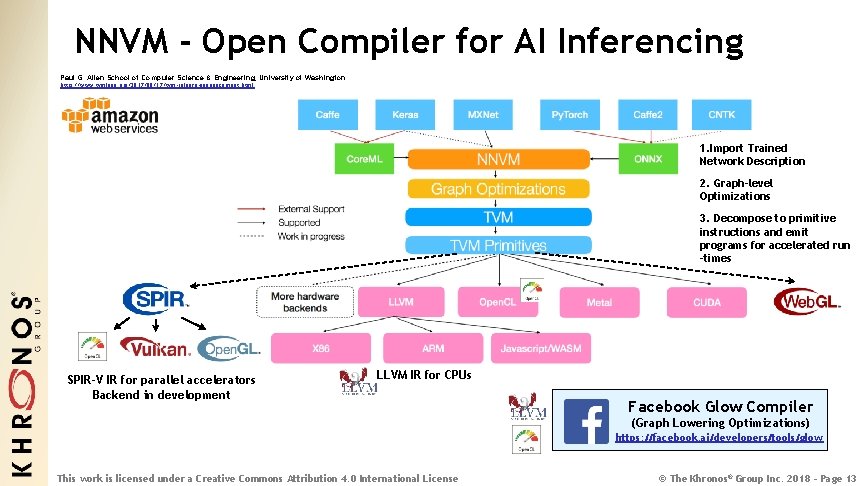

NNVM - Open Compiler for AI Inferencing
Paul G. Allen School of Computer Science & Engineering, University of Washington
http://www.tvmlang.org/2017/08/17/tvm-release-announcement.html

1. Import trained network description
2. Graph-level optimizations
3. Decompose to primitive instructions and emit programs for accelerated run-times
   - LLVM IR for CPUs
   - SPIR-V IR for parallel accelerators (backend in development)

Also: Facebook Glow Compiler (Graph Lowering Optimizations), https://facebook.ai/developers/tools/glow
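
Steps 2 and 3 can be illustrated with operator fusion, one common graph-level optimization in compilers of this kind. The slide does not say which passes NNVM applies, so this is a simplified sketch under stated assumptions: a linear chain of ops, a hypothetical set of "elementwise" ops that can be folded into the preceding kernel, and a textual stand-in for emitting a program per target.

```python
from typing import List

# Hypothetical op classification: cheap elementwise ops that can be folded
# into the kernel producing their input.
ELEMENTWISE = {"add", "relu", "scale"}


def fuse_elementwise(ops: List[str]) -> List[List[str]]:
    """Graph-level pass over a linear chain of ops: each elementwise op joins
    the kernel of the op before it, so its input never has to be written out
    and read back by a separate kernel launch."""
    kernels: List[List[str]] = []
    for op in ops:
        if op in ELEMENTWISE and kernels:
            kernels[-1].append(op)
        else:
            kernels.append([op])
    return kernels


def emit(kernels: List[List[str]], target: str) -> List[str]:
    """Lowering stand-in: emit one textual 'program' per fused kernel."""
    return [f"{target}_kernel_{i}: {' -> '.join(k)}" for i, k in enumerate(kernels)]


if __name__ == "__main__":
    network = ["conv2d", "add", "relu", "pool", "conv2d", "relu", "softmax"]
    fused = fuse_elementwise(network)
    for line in emit(fused, "spirv"):
        print(line)
```

Here seven ops become four kernels; a real compiler would emit LLVM IR or SPIR-V for each kernel instead of a text line.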

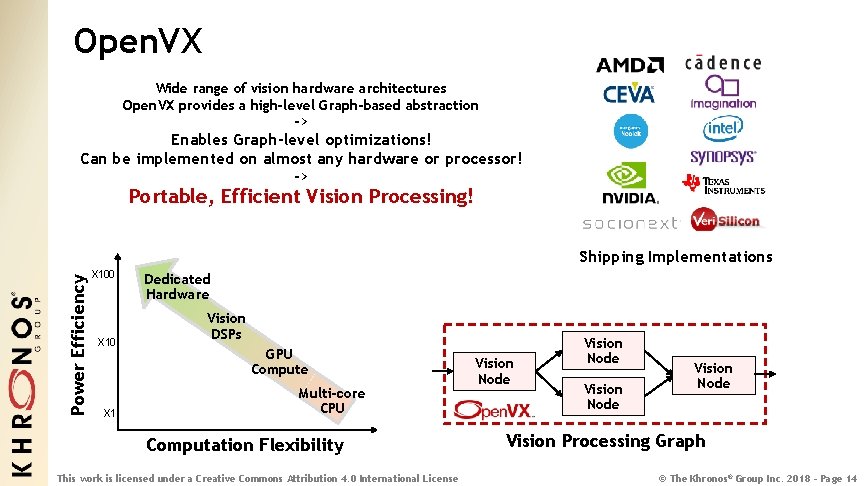

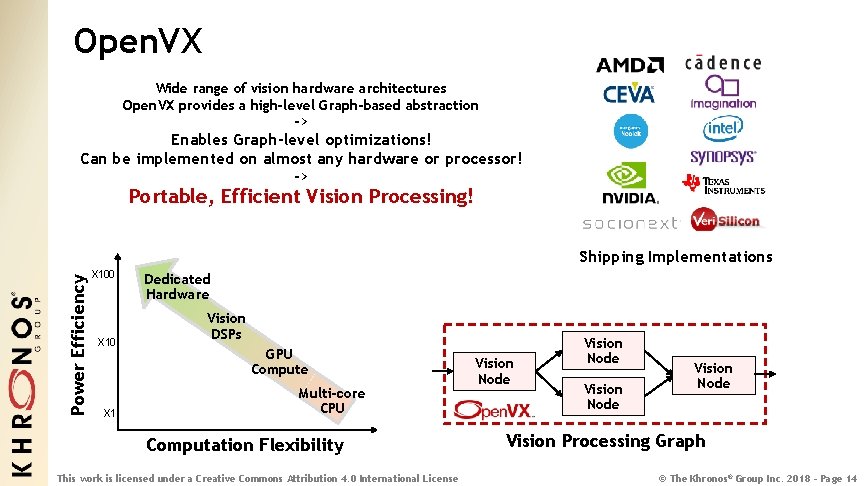

OpenVX
- OpenVX provides a high-level graph-based abstraction -> enables graph-level optimizations!
- Can be implemented on almost any hardware or processor -> portable, efficient vision processing!
- Shipping implementations cover a wide range of vision hardware architectures, from dedicated hardware and vision DSPs to GPU compute and multi-core CPUs, trading power efficiency (slide scale X1 to X100) against computation flexibility
- Applications express their work as a vision processing graph built from vision nodes
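
The C API builds such graphs with calls like vxCreateGraph, checks them once with vxVerifyGraph and executes them with vxProcessGraph. The Python below is only a hypothetical analogue of that build, verify, process pattern (class and method names are invented for illustration): because the whole graph is known before execution, the runtime can work out an execution order up front, which is the hook that graph-level optimizations rely on.

```python
from graphlib import TopologicalSorter  # Python 3.9+
from typing import Any, Callable, Dict, List, Tuple


class VisionGraph:
    """Hypothetical sketch: nodes are declared up front, the graph is
    verified once (execution order computed), then processed repeatedly."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Tuple[Callable[..., Any], Tuple[str, ...]]] = {}
        self.order: List[str] = []

    def add_node(self, output: str, fn: Callable[..., Any], *inputs: str) -> None:
        self.nodes[output] = (fn, inputs)

    def verify(self) -> None:
        # Dependencies only on other nodes; anything else is a graph input.
        deps = {out: {i for i in ins if i in self.nodes}
                for out, (_, ins) in self.nodes.items()}
        # static_order() raises graphlib.CycleError if the graph has a cycle.
        self.order = list(TopologicalSorter(deps).static_order())

    def process(self, **graph_inputs: Any) -> Dict[str, Any]:
        values = dict(graph_inputs)
        for out in self.order:
            fn, ins = self.nodes[out]
            values[out] = fn(*(values[name] for name in ins))
        return values


if __name__ == "__main__":
    g = VisionGraph()
    # Nodes may be added in any order; verify() works out the dependencies.
    g.add_node("count", lambda mask: sum(1 for v in mask if v), "mask")
    g.add_node("mask", lambda gray: [255 if v > 100 else 0 for v in gray], "gray")
    g.add_node("gray", lambda rgb: [sum(p) // 3 for p in rgb], "camera")
    g.verify()
    frame = [(255, 255, 255), (0, 0, 0), (120, 130, 140)]
    print(g.process(camera=frame)["count"])  # 2
```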

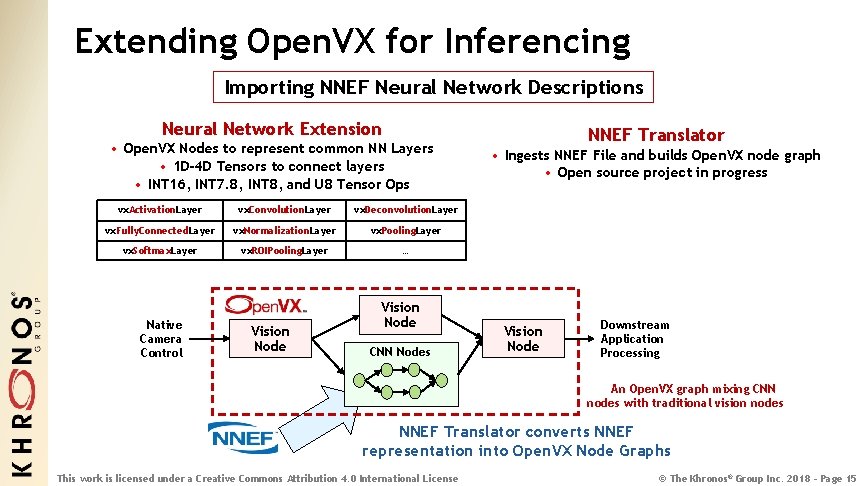

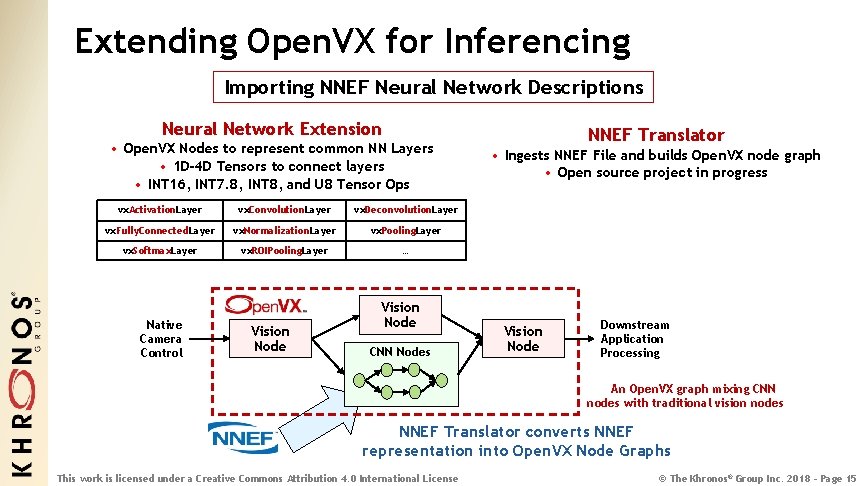

Extending OpenVX for Inferencing: Importing NNEF Neural Network Descriptions

Neural Network Extension
- OpenVX nodes to represent common NN layers: vxActivationLayer, vxConvolutionLayer, vxDeconvolutionLayer, vxFullyConnectedLayer, vxNormalizationLayer, vxPoolingLayer, vxSoftmaxLayer, vxROIPoolingLayer, …
- 1D-4D tensors to connect layers
- INT16, INT7.8, INT8, and U8 tensor ops

NNEF Translator
- Ingests an NNEF file and builds an OpenVX node graph
- Open source project in progress

Example: an OpenVX graph mixing CNN nodes with traditional vision nodes, running from native camera control through vision nodes and CNN nodes to downstream application processing.
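
The INT7.8 tensor type is a fixed-point format. A small sketch of its arithmetic, assuming INT7.8 means a signed 16-bit integer with 8 fractional bits (so real value = raw / 256, covering -128.0 to about 127.996); saturating rather than wrapping on overflow is also an assumption made here for illustration.

```python
FRACTION_BITS = 8                 # assumption: INT7.8 = 16-bit signed, 8 fraction bits
SCALE = 1 << FRACTION_BITS        # 256
INT16_MIN, INT16_MAX = -(1 << 15), (1 << 15) - 1


def saturate(raw: int) -> int:
    """Clamp to the representable 16-bit range instead of wrapping around."""
    return max(INT16_MIN, min(INT16_MAX, raw))


def to_q7_8(x: float) -> int:
    """Quantize a real value (Python's round() rounds halves to even)."""
    return saturate(round(x * SCALE))


def from_q7_8(raw: int) -> float:
    return raw / SCALE


def q7_8_mul(a: int, b: int) -> int:
    """The raw product carries 16 fraction bits; shift right by 8 to return to
    INT7.8 (an arithmetic shift, i.e. rounding toward minus infinity), then saturate."""
    return saturate((a * b) >> FRACTION_BITS)


if __name__ == "__main__":
    a, b = to_q7_8(1.5), to_q7_8(-2.25)
    print(a, b)                          # 384 -576
    print(from_q7_8(q7_8_mul(a, b)))     # -3.375
    print(from_q7_8(to_q7_8(200.0)))     # 127.99609375 (saturated)
```

The rescaling shift after each multiply is the main extra cost of fixed point compared with floating point; in exchange, tensors take half the memory of FP32 and integer hardware can do the math.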

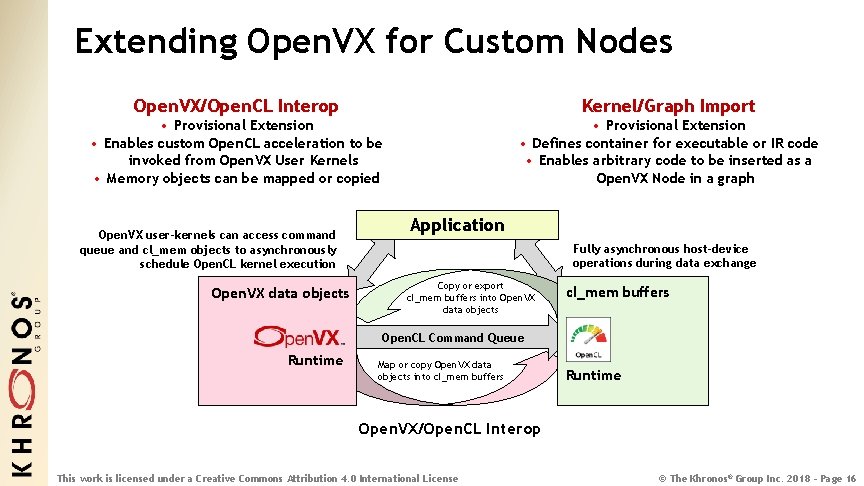

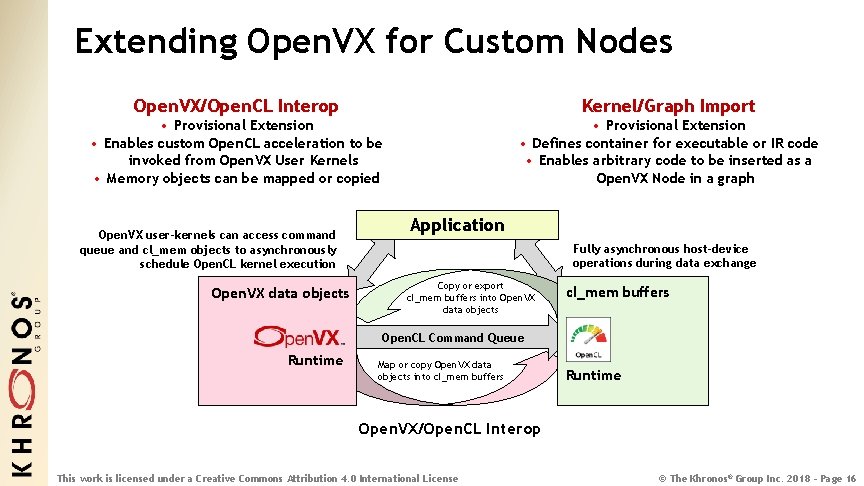

Extending OpenVX for Custom Nodes

OpenVX/OpenCL Interop
- Provisional extension
- Enables custom OpenCL acceleration to be invoked from OpenVX user kernels
- Memory objects can be mapped or copied
- OpenVX user kernels can access the command queue and cl_mem objects to asynchronously schedule OpenCL kernel execution
- The application can copy or export cl_mem buffers into OpenVX data objects, and the runtime can map or copy OpenVX data objects into cl_mem buffers, with fully asynchronous host-device operations during data exchange

Kernel/Graph Import
- Provisional extension
- Defines a container for executable or IR code
- Enables arbitrary code to be inserted as an OpenVX node in a graph
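
The interop extension lets memory objects be either mapped or copied, and the two differ in aliasing. A pure-Python analogy, assuming a bytearray as a stand-in for a device buffer (no OpenCL or OpenVX calls are made, and the helper names are hypothetical): a mapped view shares storage, so writes through it land in the buffer, whereas a copy is independent. In real OpenCL, mapping is done with calls such as clEnqueueMapBuffer and is ended with clEnqueueUnmapMemObject.

```python
def map_buffer(device_buf: bytearray) -> memoryview:
    """'Map': zero-copy access; writes through the view land in the buffer."""
    return memoryview(device_buf)


def copy_buffer(device_buf: bytearray) -> bytearray:
    """'Copy': an independent snapshot; later writes do not alias the buffer."""
    return bytearray(device_buf)


if __name__ == "__main__":
    cl_mem_standin = bytearray(4)        # hypothetical stand-in for a cl_mem buffer
    view = map_buffer(cl_mem_standin)
    view[0] = 7                          # visible in the buffer
    view.release()                       # analogous to unmapping
    snapshot = copy_buffer(cl_mem_standin)
    snapshot[1] = 9                      # not visible in the buffer
    print(list(cl_mem_standin), list(snapshot))  # [7, 0, 0, 0] [7, 9, 0, 0]
```

Mapping avoids a transfer when host and device can share memory; copying costs bandwidth but decouples the two sides, which suits asynchronous pipelines.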

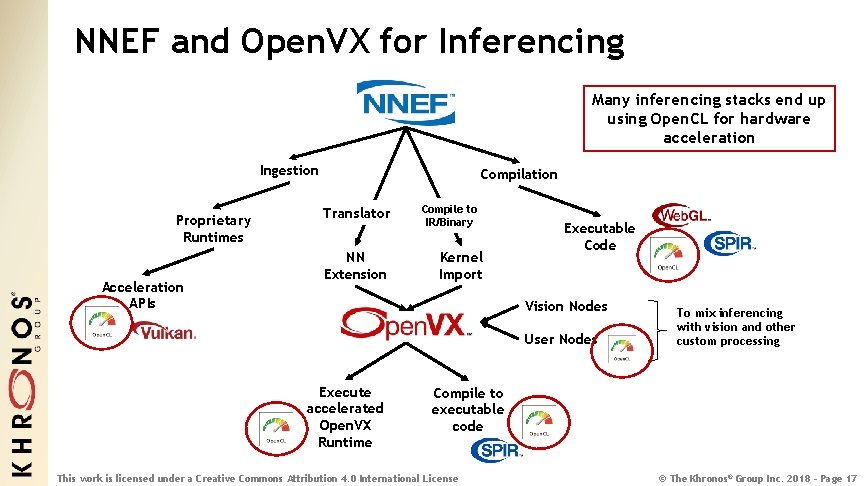

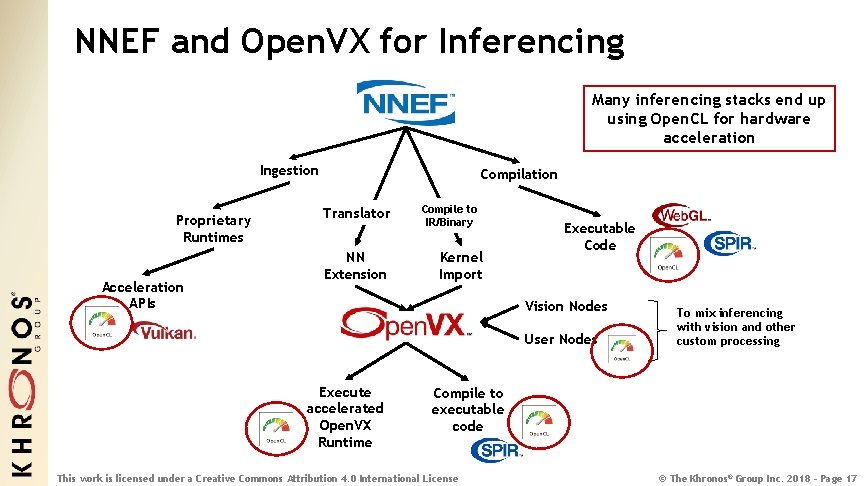

NNEF and OpenVX for Inferencing
- Ingestion: the NNEF translator feeds the OpenVX NN extension
- Compilation: compile to IR/binary, or compile to executable code brought in through kernel import
- Execution: the accelerated OpenVX runtime executes NN, vision and user nodes, to mix inferencing with vision and other custom processing
- Acceleration: proprietary runtimes and acceleration APIs; many inferencing stacks end up using OpenCL for hardware acceleration

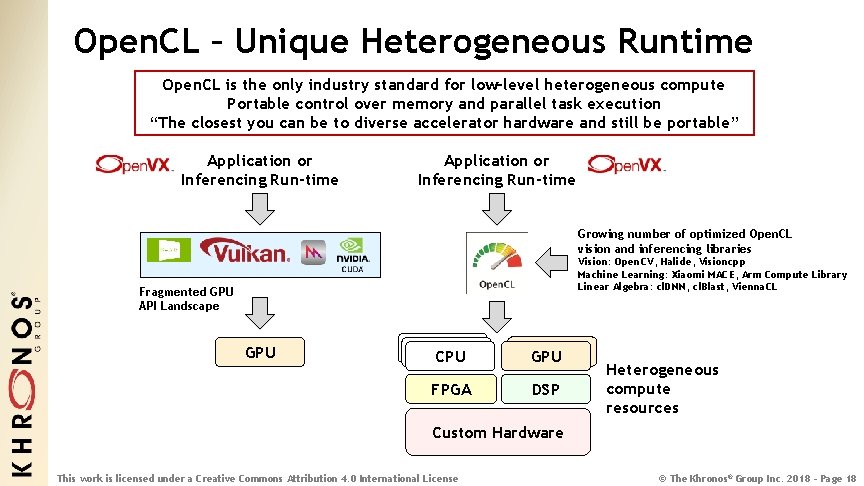

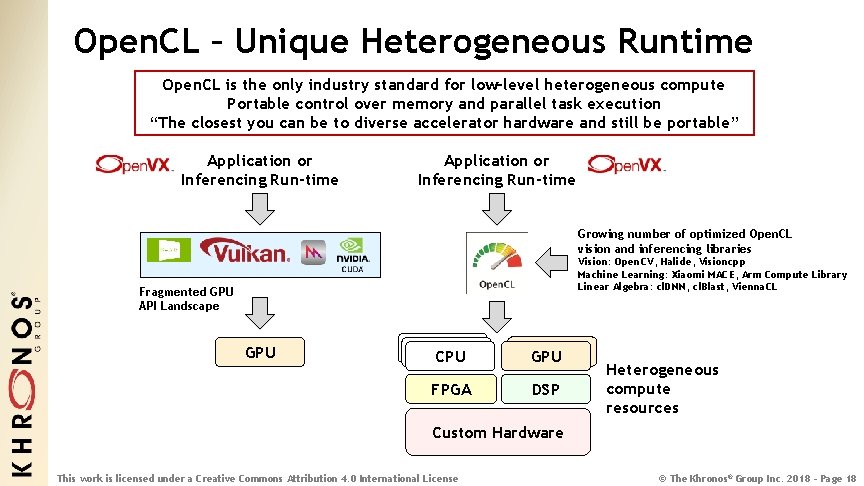

OpenCL – Unique Heterogeneous Runtime
- OpenCL is the only industry standard for low-level heterogeneous compute
- Portable control over memory and parallel task execution: "The closest you can be to diverse accelerator hardware and still be portable"
- An application or inferencing run-time can target heterogeneous compute resources (CPU, GPU, FPGA, DSP, custom hardware) through OpenCL, rather than a fragmented GPU API landscape
- Growing number of optimized OpenCL vision and inferencing libraries:
  - Vision: OpenCV, Halide, VisionCpp
  - Machine learning: Xiaomi MACE, Arm Compute Library
  - Linear algebra: clDNN, CLBlast, ViennaCL

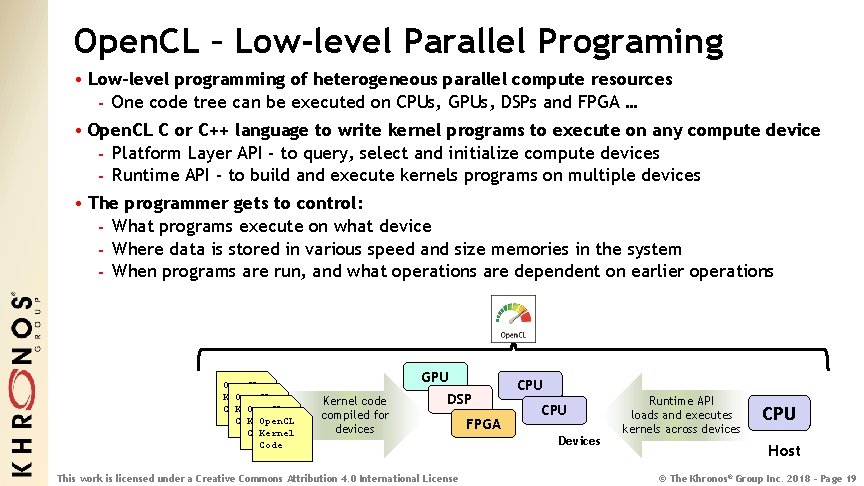

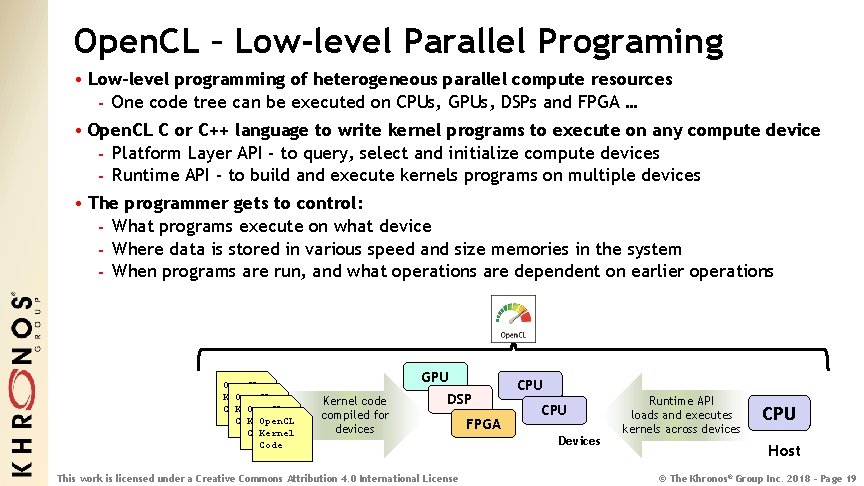

OpenCL – Low-level Parallel Programming
- Low-level programming of heterogeneous parallel compute resources
  - One code tree can be executed on CPUs, GPUs, DSPs and FPGAs …
- OpenCL C or C++ language to write kernel programs to execute on any compute device
  - Platform Layer API: to query, select and initialize compute devices
  - Runtime API: to build and execute kernel programs on multiple devices
- The programmer gets to control:
  - What programs execute on what device
  - Where data is stored in various speed and size memories in the system
  - When programs are run, and what operations are dependent on earlier operations

Diagram: OpenCL kernel code is compiled for each device (CPU, GPU, DSP, FPGA); on the CPU host, the Runtime API loads and executes kernels across the devices.
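
In OpenCL the host enqueues a kernel over an index space (for example with clEnqueueNDRangeKernel), and each work-item reads its own index with get_global_id(0). The pure-Python sketch below emulates that execution model sequentially for a vector addition; enqueue_nd_range is a hypothetical helper, not an OpenCL API, and a real device would run the work-items in parallel.

```python
from typing import Callable, List


def vector_add(gid: int, a: List[float], b: List[float], c: List[float]) -> None:
    """Kernel body run by each work-item; gid plays the role of get_global_id(0)."""
    c[gid] = a[gid] + b[gid]


def enqueue_nd_range(kernel: Callable[..., None], global_size: int, *args) -> None:
    """Sequential stand-in for launching global_size work-items. A device may run
    work-items in parallel and in any order, so kernels must not rely on order."""
    for gid in range(global_size):
        kernel(gid, *args)


if __name__ == "__main__":
    a = [1.0, 2.0, 3.0, 4.0]
    b = [10.0, 20.0, 30.0, 40.0]
    c = [0.0] * len(a)
    enqueue_nd_range(vector_add, len(a), a, b, c)
    print(c)  # [11.0, 22.0, 33.0, 44.0]
```

Writing the kernel as a per-index function, with no loop inside, is what lets the same source be scheduled across many cores on a CPU, GPU, DSP or FPGA.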

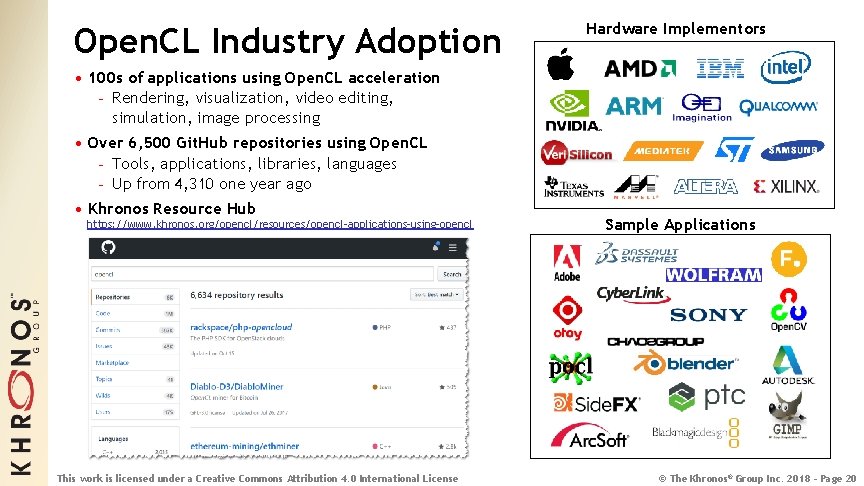

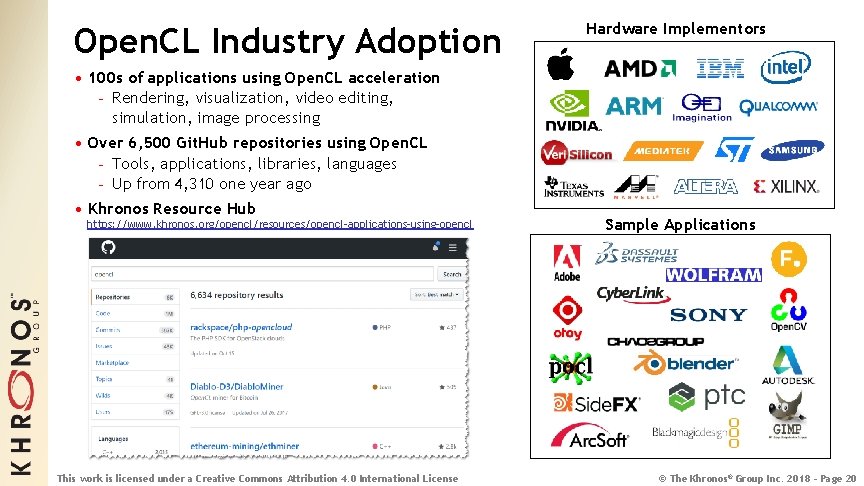

OpenCL Industry Adoption
- 100s of applications using OpenCL acceleration
  - Rendering, visualization, video editing, simulation, image processing
- Over 6,500 GitHub repositories using OpenCL
  - Tools, applications, libraries, languages
  - Up from 4,310 one year ago
- Khronos Resource Hub: https://www.khronos.org/opencl/resources/opencl-applications-using-opencl

Slide graphics: hardware implementors and sample applications.

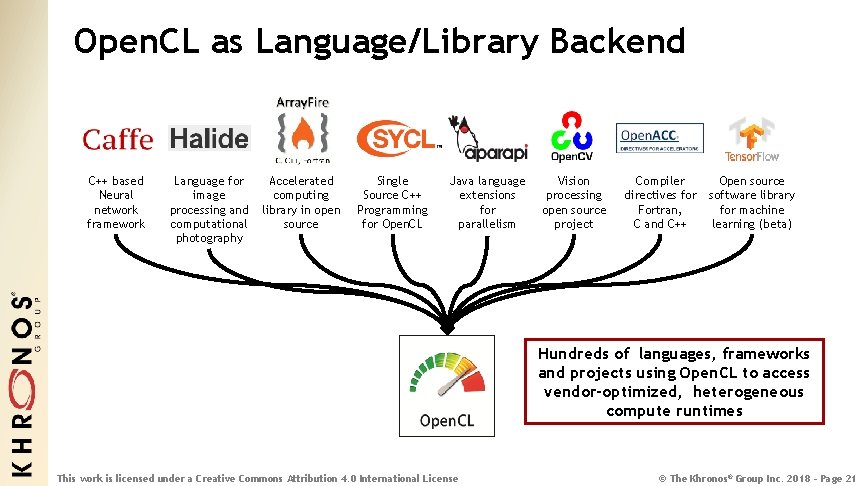

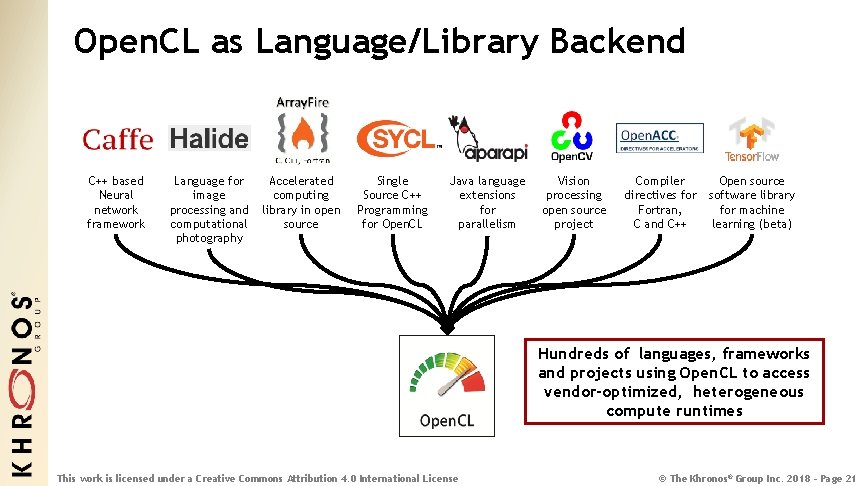

OpenCL as Language/Library Backend

Hundreds of languages, frameworks and projects use OpenCL to access vendor-optimized, heterogeneous compute runtimes. Examples shown on the slide:
- C++-based neural network framework
- Language for image processing and computational photography
- Accelerated computing library in open source
- Single-source C++ programming for OpenCL
- Java language extensions for parallelism
- Vision processing open source project
- Compiler directives for Fortran, C and C++
- Open source software library for machine learning (beta)

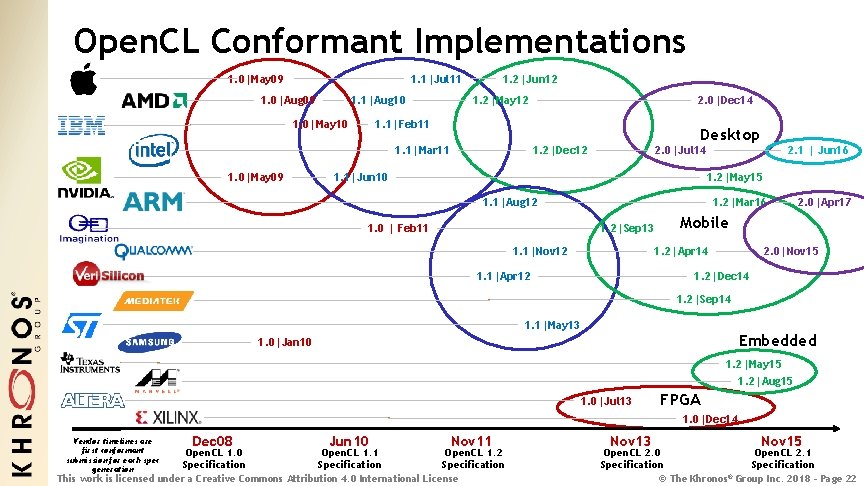

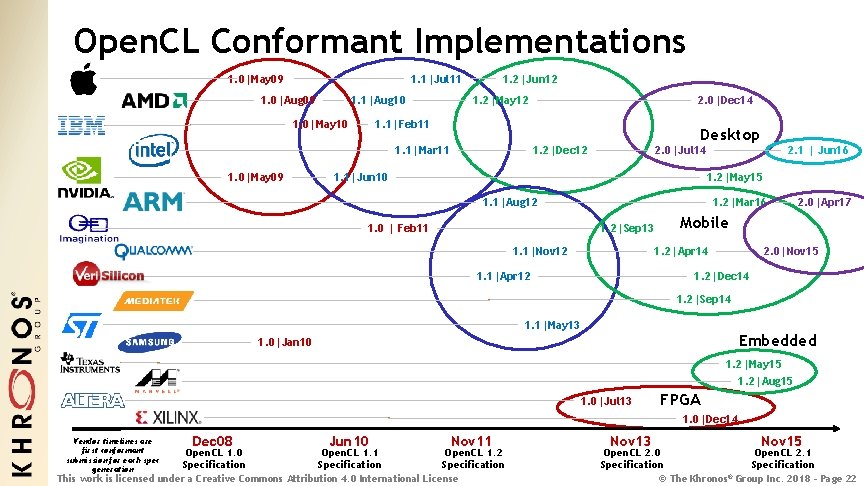

OpenCL Conformant Implementations

Specification releases: OpenCL 1.0 (Dec 2008), OpenCL 1.1 (Jun 2010), OpenCL 1.2 (Nov 2011), OpenCL 2.0 (Nov 2013), OpenCL 2.1 (Nov 2015).

Timeline chart: vendor implementations across desktop, mobile, embedded and FPGA markets. Vendor timelines show the first conformant submission for each spec generation; the earliest shown is OpenCL 1.0 in May 2009 and the latest is OpenCL 2.0 in April 2017.

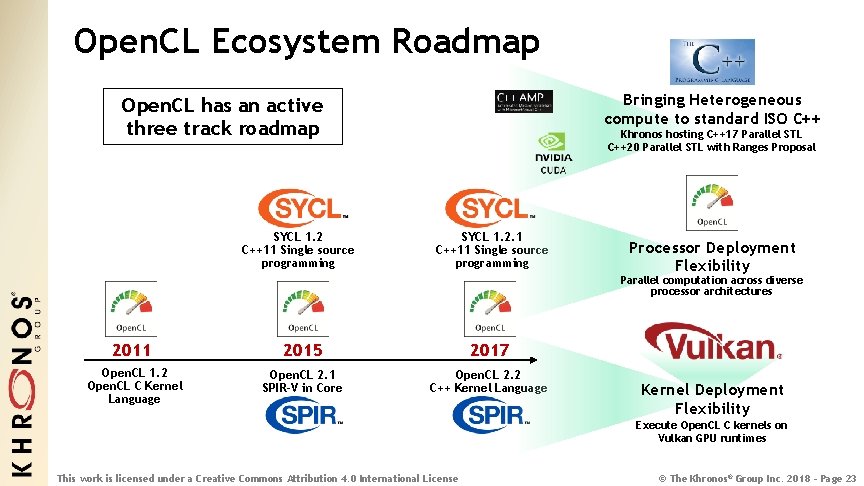

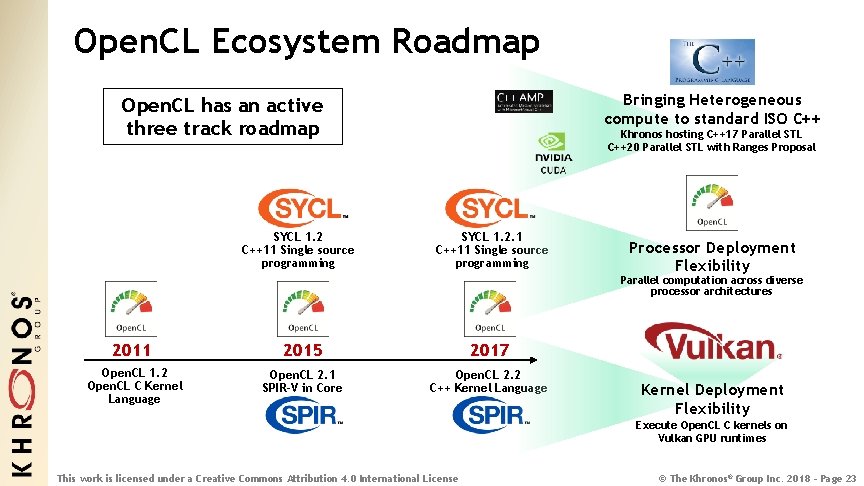

OpenCL Ecosystem Roadmap

OpenCL has an active three-track roadmap:
- Bringing heterogeneous compute to standard ISO C++: SYCL 1.2 (C++11 single-source programming), SYCL 1.2.1 (C++11 single-source programming), Khronos hosting C++17 Parallel STL, and a C++20 Parallel STL with Ranges proposal
- Processor deployment flexibility, i.e. parallel computation across diverse processor architectures: OpenCL 1.2 (2011, OpenCL C kernel language), OpenCL 2.1 (2015, SPIR-V in core), OpenCL 2.2 (2017, C++ kernel language)
- Kernel deployment flexibility: execute OpenCL C kernels on Vulkan GPU runtimes

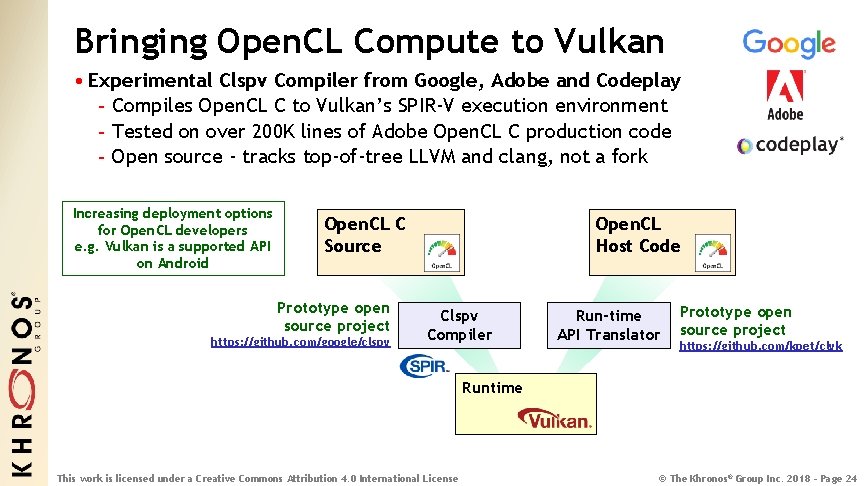

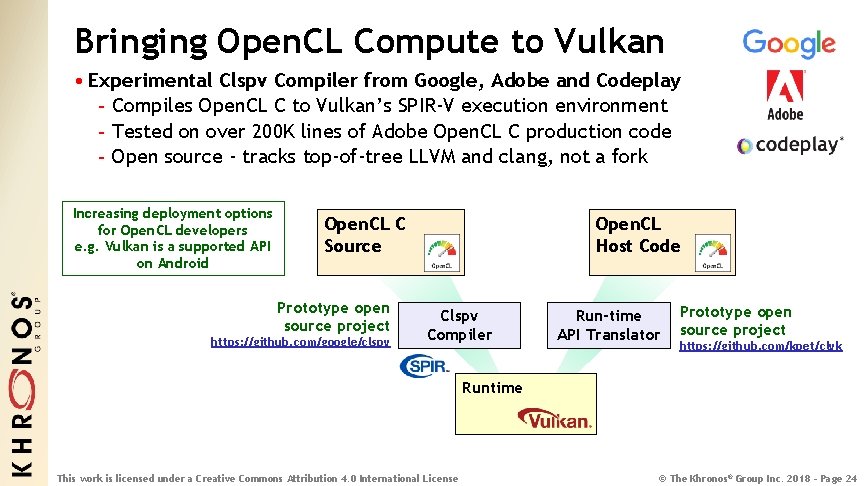

Bringing OpenCL Compute to Vulkan
- Experimental clspv compiler from Google, Adobe and Codeplay
  - Compiles OpenCL C to Vulkan's SPIR-V execution environment
  - Tested on over 200K lines of Adobe OpenCL C production code
  - Open source; tracks top-of-tree LLVM and clang, not a fork
- Increasing deployment options for OpenCL developers, e.g. Vulkan is a supported API on Android

Flow: OpenCL C source is compiled by the clspv compiler (prototype open source project, https://github.com/google/clspv), and OpenCL host code runs through a run-time API translator (prototype open source project, https://github.com/kpet/clvk) onto the Vulkan runtime.

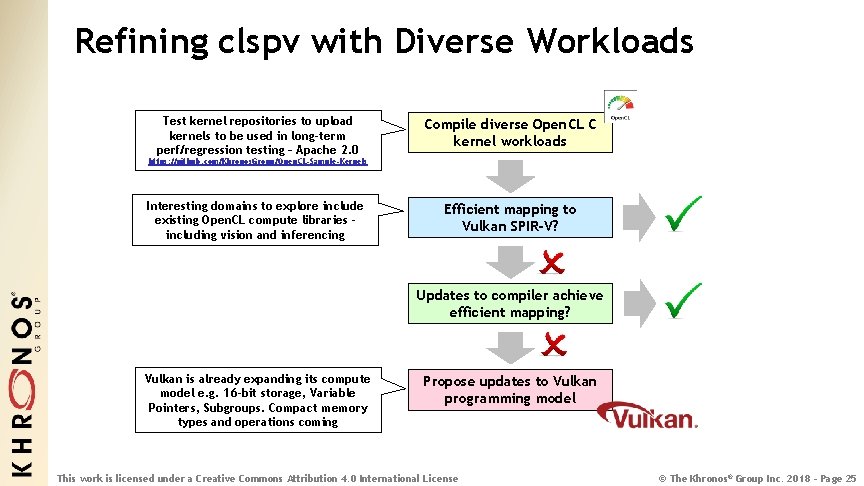

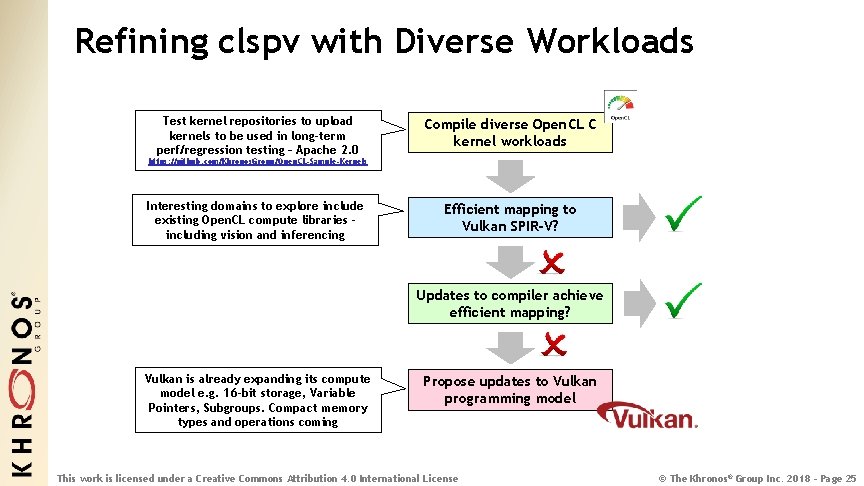

Refining clspv with Diverse Workloads
- Compile diverse OpenCL C kernel workloads
  - Test kernel repositories, to upload kernels to be used in long-term perf/regression testing (Apache 2.0): https://github.com/KhronosGroup/OpenCL-Sample-Kernels
  - Interesting domains to explore include existing OpenCL compute libraries, including vision and inferencing
- Efficient mapping to Vulkan SPIR-V? If updates to the compiler can achieve an efficient mapping, make them; otherwise, propose updates to the Vulkan programming model
- Vulkan is already expanding its compute model, e.g. 16-bit storage, variable pointers, subgroups; compact memory types and operations are coming

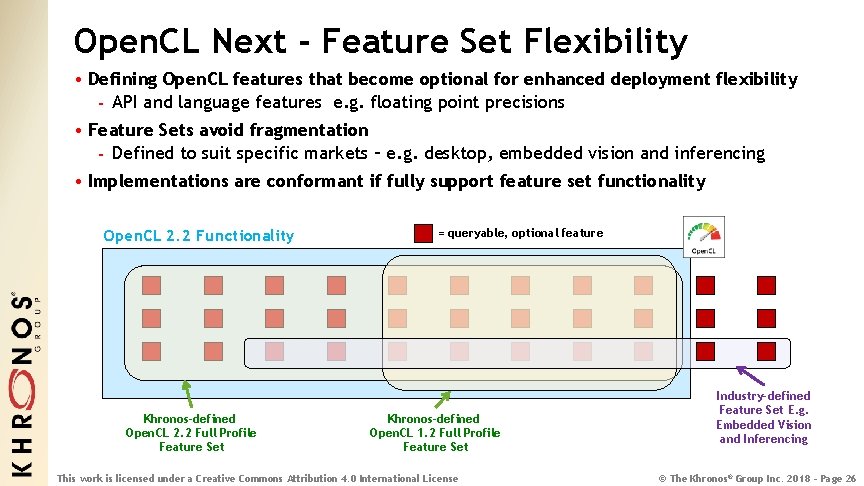

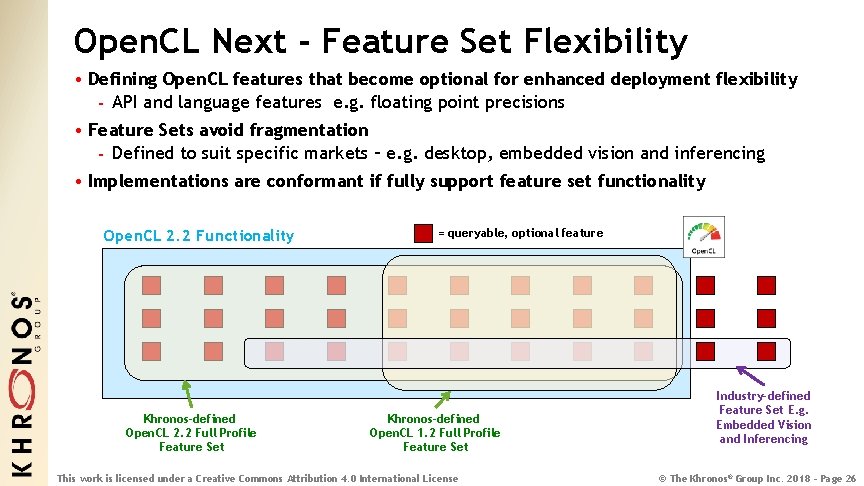

OpenCL Next - Feature Set Flexibility
- Defining OpenCL features that become optional for enhanced deployment flexibility
  - API and language features, e.g. floating-point precisions
- Feature sets avoid fragmentation
  - Defined to suit specific markets, e.g. desktop, embedded vision and inferencing
- Implementations are conformant if they fully support feature set functionality

Diagram: within the full OpenCL 2.2 functionality, queryable optional features are grouped into a Khronos-defined OpenCL 2.2 Full Profile feature set, a Khronos-defined OpenCL 1.2 Full Profile feature set, and industry-defined feature sets, e.g. embedded vision and inferencing.
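
The conformance rule on this slide amounts to a subset test: an implementation conforms to a feature set only if it supports every feature in it. A minimal sketch, assuming features can be modelled as plain strings; the feature names and both sets below are hypothetical, since the slide does not define the contents of any OpenCL Next feature set.

```python
from typing import FrozenSet, List, Set

# Hypothetical feature names and sets, for illustration only.
DESKTOP_SET: FrozenSet[str] = frozenset({"fp32", "fp64", "images"})
EMBEDDED_VISION_SET: FrozenSet[str] = frozenset({"fp32", "fp16", "int8"})


def is_conformant(supported: Set[str], feature_set: FrozenSet[str]) -> bool:
    """Conformant only if every feature in the set is fully supported."""
    return feature_set <= supported


def missing(supported: Set[str], feature_set: FrozenSet[str]) -> List[str]:
    """Features the implementation would still need for this set."""
    return sorted(feature_set - supported)


if __name__ == "__main__":
    # Features a device reports when queried (hypothetical).
    device = {"fp32", "fp16", "int8"}
    print(is_conformant(device, EMBEDDED_VISION_SET))  # True
    print(is_conformant(device, DESKTOP_SET))          # False
    print(missing(device, DESKTOP_SET))                # ['fp64', 'images']
```

Because each feature is individually queryable, an application can check for exactly what it needs, while named feature sets give vendors a fixed target to conform to.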

Thank You!
- Khronos is creating cutting-edge royalty-free open standards
  - For 3D, VR/AR, compute, vision and machine learning
- Please join Khronos!
  - We welcome members from Japan and Asia
  - Influence the direction of industry standards
  - Get early access to draft specifications
  - Network with industry-leading companies
- More information
  - www.khronos.org
  - ntrevett@nvidia.com
  - @neilt3d

Khronos Group Japan Local Member Meeting
Open to current and prospective members!
Thursday, 6 December 2018, 5:00 PM – 8:00 PM JST
Yurakucho Cafe & Dining, B1F, Tokyo International Forum, Chiyoda-ku, Tōkyō-to 100-0005
Please register for free!