StageNetSlice: A Reconfigurable Microarchitecture Building Block

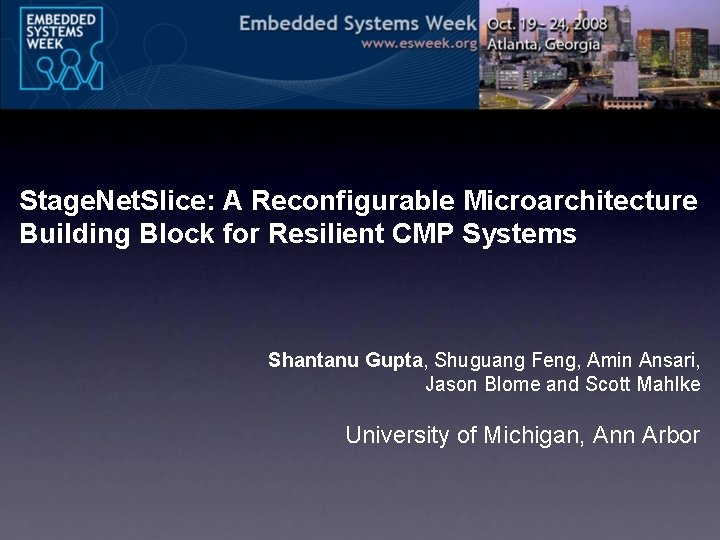

StageNetSlice: A Reconfigurable Microarchitecture Building Block for Resilient CMP Systems

Shantanu Gupta, Shuguang Feng, Amin Ansari, Jason Blome and Scott Mahlke

University of Michigan, Ann Arbor

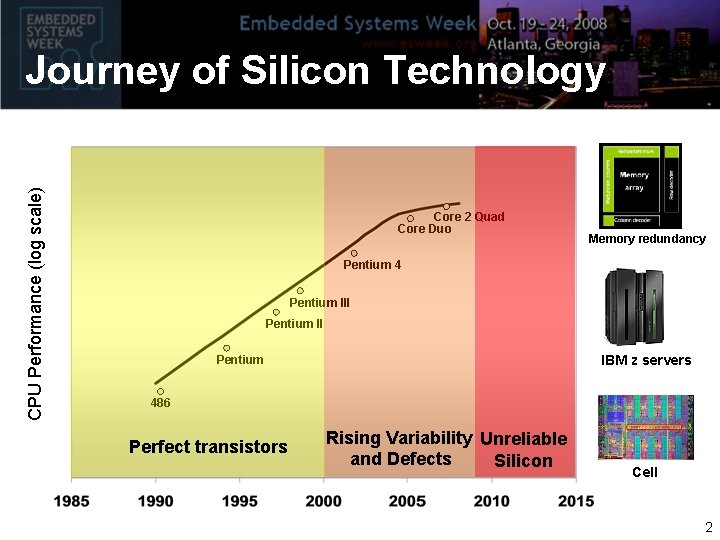

Journey of Silicon Technology

[Figure: CPU performance (log scale) across processor generations: 486, Pentium, Pentium II, Pentium III, Pentium 4, Core Duo, Core 2 Quad, Cell, and IBM z servers. Annotations on the figure: perfect transistors, memory redundancy, rising variability and defects, unreliable silicon.]

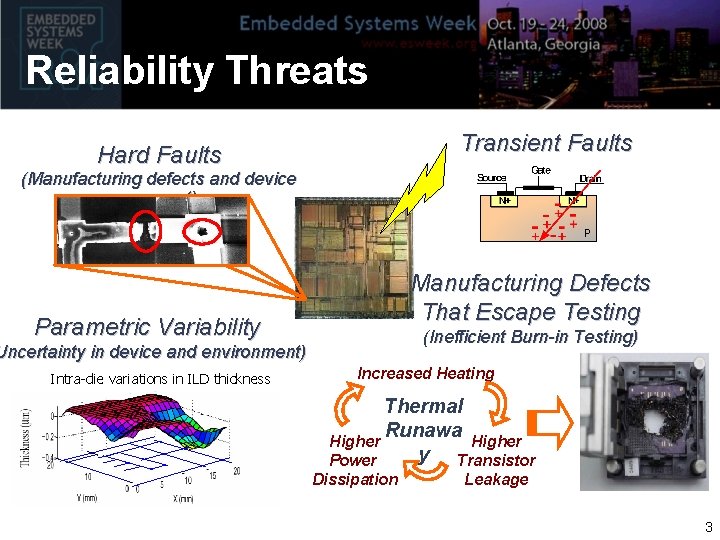

Reliability Threats

• Transient faults
• Hard faults (manufacturing defects and device wear-out)
• Manufacturing defects that escape testing (inefficient burn-in testing)
• Parametric variability (uncertainty in device and environment)
• Intra-die variations in ILD thickness
• Thermal runaway: increased heating, higher transistor leakage, higher power dissipation

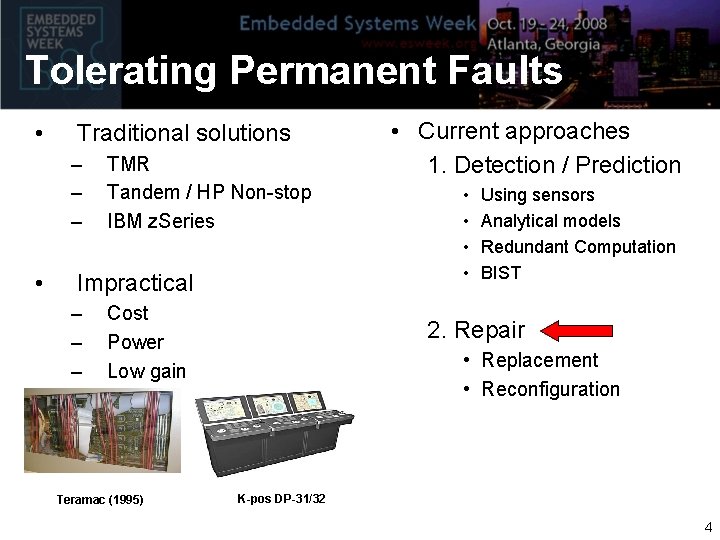

Tolerating Permanent Faults

• Traditional solutions
– TMR
– Tandem / HP NonStop
– IBM zSeries
• Impractical
– Cost
– Power
– Low gain
• Current approaches
1. Detection / Prediction
• Using sensors
• Analytical models
• Redundant computation
• BIST
2. Repair
• Replacement
• Reconfiguration

[Figure labels: Teramac (1995); K-pos DP-31/32]

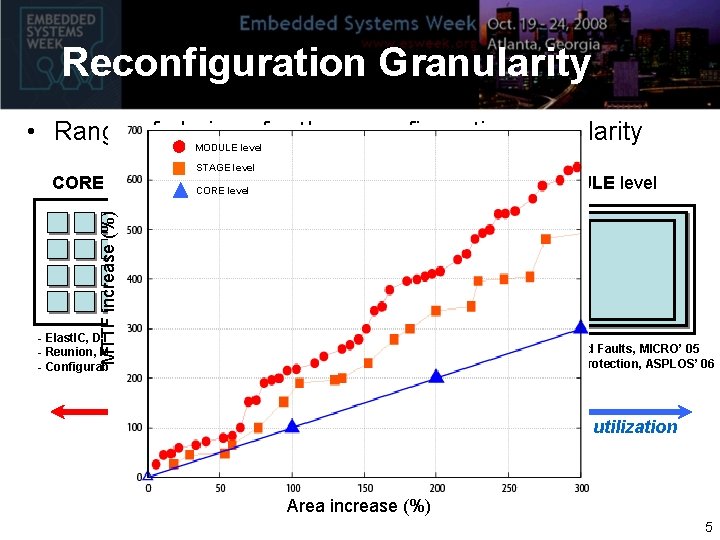

Reconfiguration Granularity

• Range of choices for the reconfiguration granularity: CORE level, STAGE level, MODULE level

[Figure: MTTF increase (%) versus area increase (%) for core-level, stage-level, and module-level reconfiguration of a FETCH, DEC, EXEC, MEM, WB pipeline. Arrows on the figure are labeled: lower design complexity, lower overheads, better resource utilization.]

Related work cited on the slide:
- ElastIC, DT'06
- Reunion, MICRO'06
- Configurable Isolation, ISCA'07
- Online Diagnosis of Hard Faults, MICRO'05
- Ultra Low-Cost Defect Protection, ASPLOS'06
- Detour, DSN'08

Goal of this Research

• Incremental redundancy solutions are not enough
• Design a computing substrate
– Highly reconfigurable
– Provides scalable fault tolerance
– Marginal overheads

A design that can enable CMPs capable of facing ~100s of faults while maintaining useful throughput

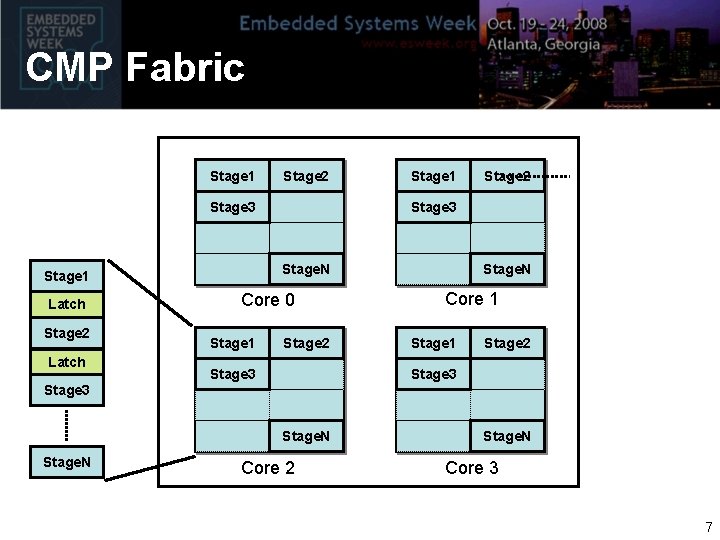

CMP Fabric

[Figure: a conventional CMP fabric with cores (Core 0, Core 2, and Core 3 are labeled), each a fixed pipeline of Stage 1 through Stage N with latches between stages.]

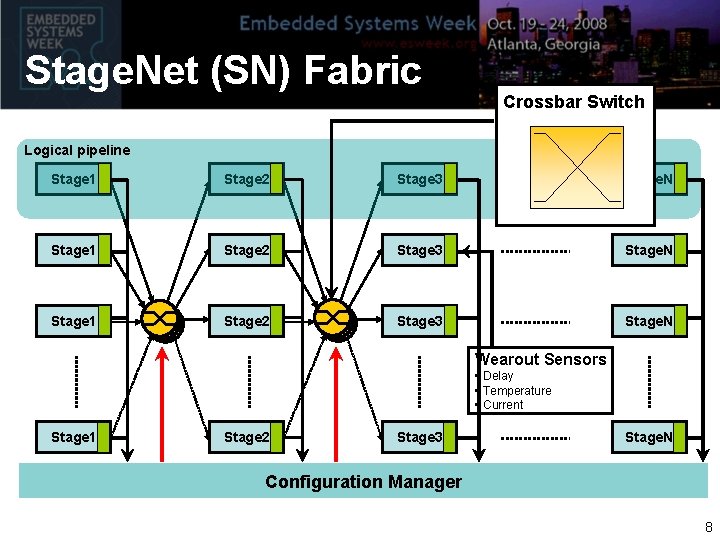

StageNet (SN) Fabric

[Figure: rows of Stage 1 through Stage N connected through crossbar switches, with one logical pipeline highlighted.]

• Crossbar switch
• Logical pipeline
• Wearout sensors
– Delay
– Temperature
– Current
• Configuration manager
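The role of the configuration manager can be illustrated with a minimal Python sketch. It assumes the manager already knows which stages of each type are healthy (for example from the wearout sensors) and that any healthy stage can be connected to any other through the crossbar. The stage names, the `build_logical_pipelines` function, and the pairing order are hypothetical and are not taken from the slides.

```python
from typing import Dict, List

# Hypothetical stage names for a four-stage slice.
STAGE_ORDER = ["fetch", "decode", "issue", "exmem"]


def build_logical_pipelines(healthy: Dict[str, List[int]]) -> List[Dict[str, int]]:
    """Group healthy stages into logical pipelines, one stage of each type per pipeline."""
    # Each pipeline needs one stage of every type, so the scarcest
    # stage type bounds the number of pipelines that can be formed.
    count = min(len(healthy[stage]) for stage in STAGE_ORDER)
    return [{stage: healthy[stage][i] for stage in STAGE_ORDER} for i in range(count)]


# Four physical stages of each type; one fetch, one decode, and one issue stage have failed.
healthy_stages = {
    "fetch": [0, 1, 3],
    "decode": [0, 2, 3],
    "issue": [1, 2, 3],
    "exmem": [0, 1, 2, 3],
}
pipelines = build_logical_pipelines(healthy_stages)
```

In this example the three faults sit in three different rows, yet three logical pipelines can still be formed because stages from different rows are combined.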

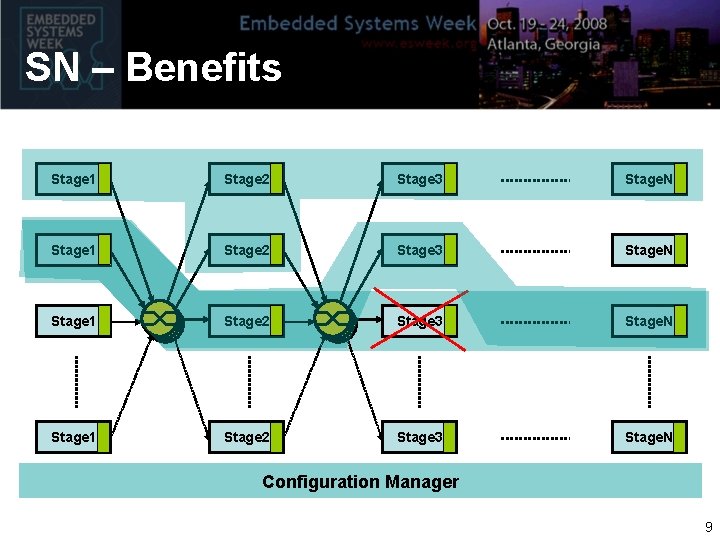

SN – Benefits

[Figure: StageNet fabric with Stage 1 through Stage N and the configuration manager.]

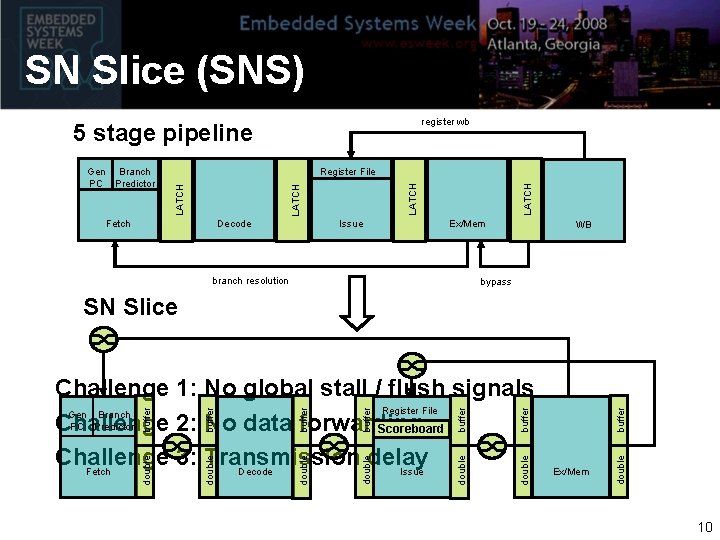

SN Slice (SNS)

[Figure: a 5-stage pipeline (Fetch, Decode, Issue, Ex/Mem, WB) with latches and register write-back, bypass, and branch resolution paths, shown next to an SN slice with double buffers between the stages. Blocks shown: Gen PC, Branch Predictor, Register File, Scoreboard.]

• Challenge 1: No global stall / flush signals
• Challenge 2: No data forwarding
• Challenge 3: Transmission delay

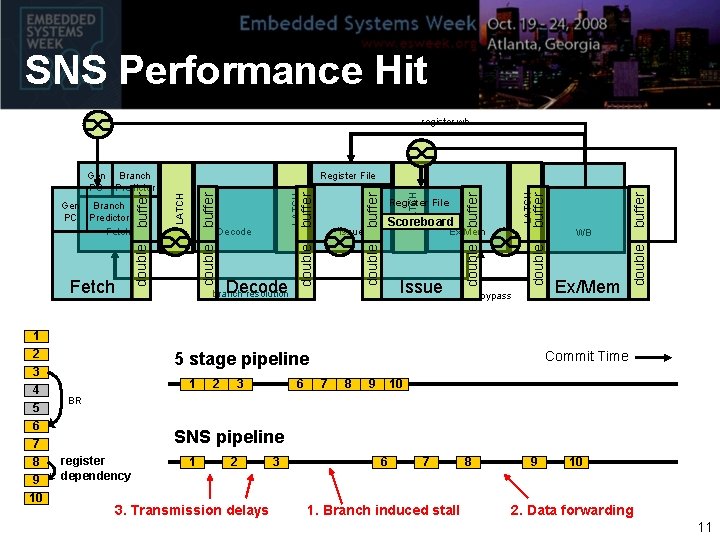

SNS Performance Hit

[Figure: commit timeline for instructions 1 to 10, including a branch (BR) and a register dependency, on the 5-stage pipeline and on the SNS pipeline.]

Annotations on the SNS timeline:
1. Branch induced stall
2. Data forwarding
3. Transmission delays

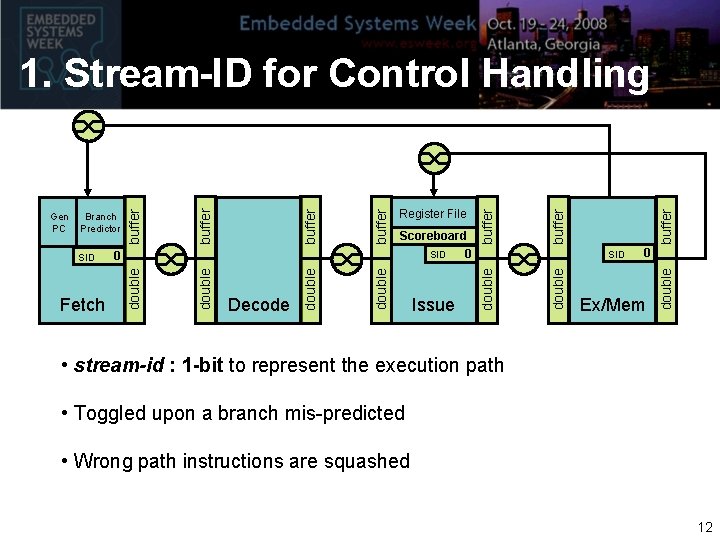

1. Stream-ID for Control Handling

• Stream-ID (SID): 1 bit to represent the execution path
• Toggled upon a branch misprediction
• Wrong-path instructions are squashed

[Figure: SNS pipeline with SID registers added.]

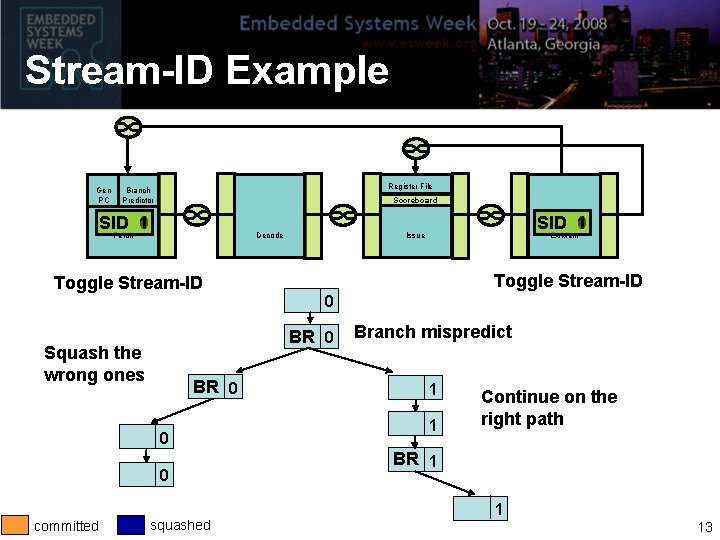

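Stream-ID Example

[Figure: SNS pipeline (Gen PC, Branch Predictor, Fetch, Decode, Register File, Scoreboard, Issue, Ex/Mem) with SID registers, stepping through a branch misprediction.]

• A branch (BR) tagged with stream-ID 0 is found to be mispredicted
• The stream-ID is toggled from 0 to 1
• Instructions still tagged with stream-ID 0 are on the wrong path and are squashed
• Execution continues on the right path with instructions tagged with stream-ID 1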
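The stream-ID mechanism can be sketched in a few lines of Python. This is an illustrative model only: it assumes the execute stage keeps its own 1-bit SID, squashes any instruction whose tag differs from it, and toggles the bit when a mispredicted branch resolves. The `Instr` and `ExMemStage` names are hypothetical, and the fetch-side redirect is represented only by the tags on the incoming instructions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Instr:
    name: str
    sid: int                    # stream-ID stamped on the instruction at fetch
    mispredicted: bool = False  # only meaningful for branches


@dataclass
class ExMemStage:
    sid: int = 0
    committed: List[str] = field(default_factory=list)
    squashed: List[str] = field(default_factory=list)

    def execute(self, instr: Instr) -> None:
        if instr.sid != self.sid:
            # Tag does not match the current execution path: wrong-path instruction.
            self.squashed.append(instr.name)
            return
        self.committed.append(instr.name)
        if instr.mispredicted:
            # Toggle the 1-bit stream-ID; older in-flight tags no longer match.
            self.sid ^= 1


stage = ExMemStage()
stream = [
    Instr("BR", 0, mispredicted=True),
    Instr("wrong1", 0),
    Instr("wrong2", 0),
    Instr("right1", 1),  # fetched after the redirect, tagged with the new SID
    Instr("right2", 1),
]
for instr in stream:
    stage.execute(instr)
```

No global flush signal is needed in this model: each stage decides locally, from the tag alone, whether an instruction belongs to the current path.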

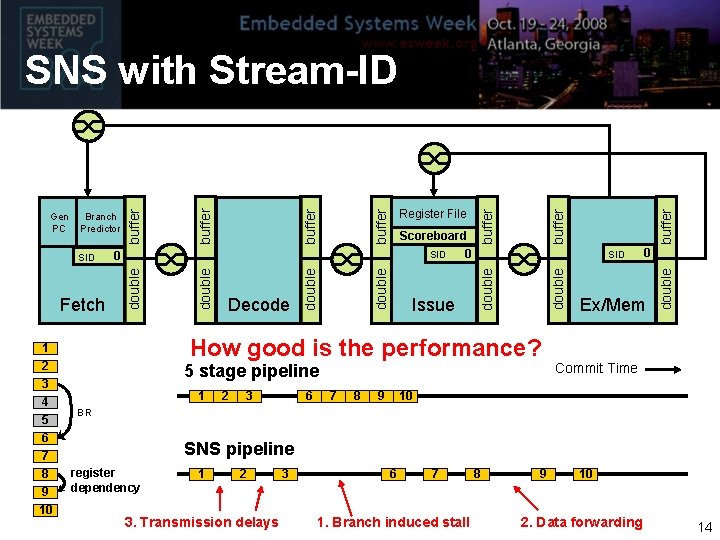

How good is the performance?

[Figure: commit timeline for instructions 1 to 10, including a branch (BR) and a register dependency, on the 5-stage pipeline and on SNS with Stream-ID. Annotations: 1. Branch induced stall, 2. Data forwarding, 3. Transmission delays.]

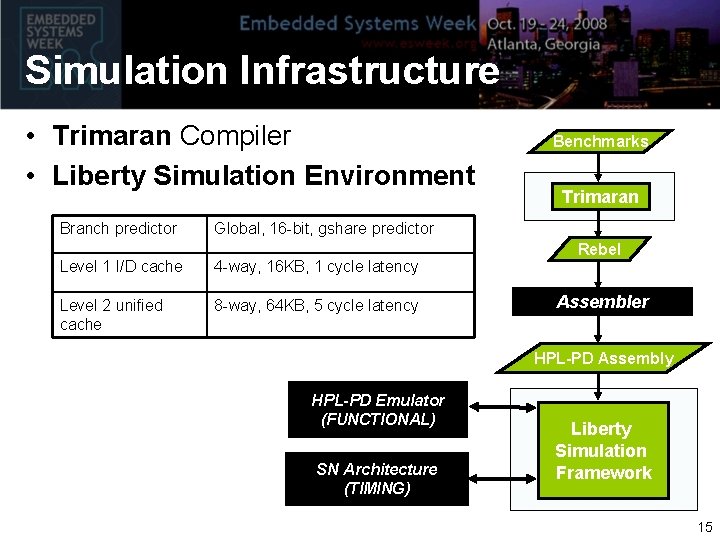

Simulation Infrastructure

• Trimaran Compiler
• Liberty Simulation Environment

| Parameter | Configuration |
| --- | --- |
| Branch predictor | Global, 16-bit, gshare predictor |
| Level 1 I/D cache | 4-way, 16 KB, 1 cycle latency |
| Level 2 unified cache | 8-way, 64 KB, 5 cycle latency |

[Figure: tool flow with the components Benchmarks, Trimaran, Rebel Assembler, HPL-PD Assembly, HPL-PD Emulator (functional), and SN Architecture (timing), within the Liberty Simulation Framework.]

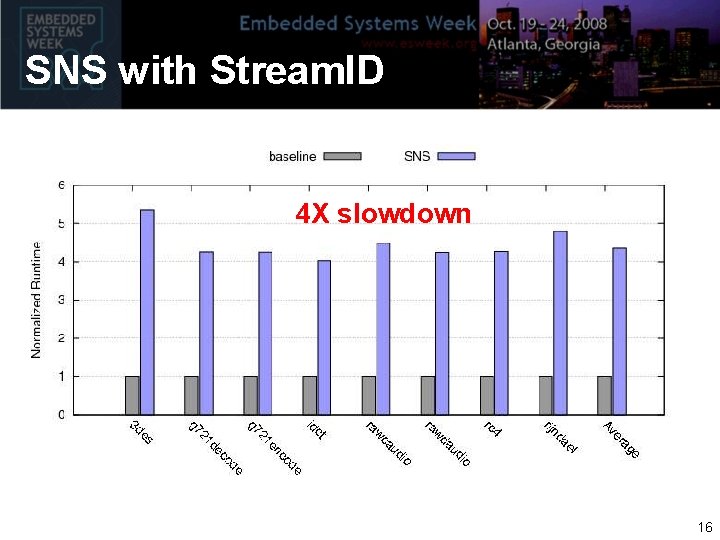

SNS with Stream-ID

4X slowdown

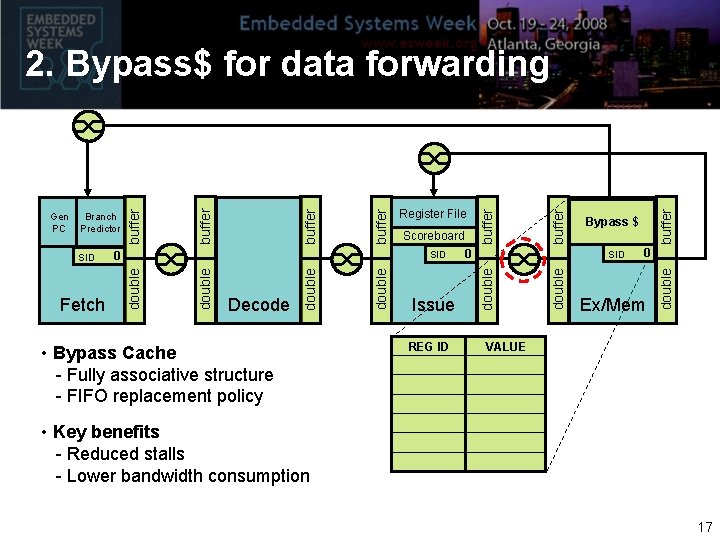

2. Bypass$ for data forwarding

• Bypass cache
– Fully associative structure
– FIFO replacement policy
– Entries hold REG ID and VALUE
• Key benefits
– Reduced stalls
– Lower bandwidth consumption

[Figure: SNS pipeline with the Bypass$ added at Ex/Mem.]
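A fully associative structure with FIFO replacement can be modeled as an insertion-ordered map from register ID to value. The sketch below is an assumption-laden illustration: the `BypassCache` class, its capacity, and the choice to update an existing entry in place without changing its age are all hypothetical, since the slide does not give these details.

```python
from collections import OrderedDict
from typing import Optional


class BypassCache:
    """Fully associative register-value cache with FIFO replacement (illustrative)."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self.entries: "OrderedDict[int, int]" = OrderedDict()

    def write(self, reg_id: int, value: int) -> None:
        if reg_id not in self.entries and len(self.entries) >= self.capacity:
            # FIFO: evict the entry that was inserted first.
            self.entries.popitem(last=False)
        # Assigning to an existing key keeps its original position (age).
        self.entries[reg_id] = value

    def read(self, reg_id: int) -> Optional[int]:
        # Any entry may hold any register, so lookup is by register ID only.
        return self.entries.get(reg_id)


cache = BypassCache(capacity=2)
cache.write(1, 10)
cache.write(2, 20)
hit_before_eviction = cache.read(1)
cache.write(3, 30)  # cache is full, so the oldest entry (register 1) is evicted
```

A hit supplies the operand locally in the execute stage; a miss (`None`) means the value has to come from the register file.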

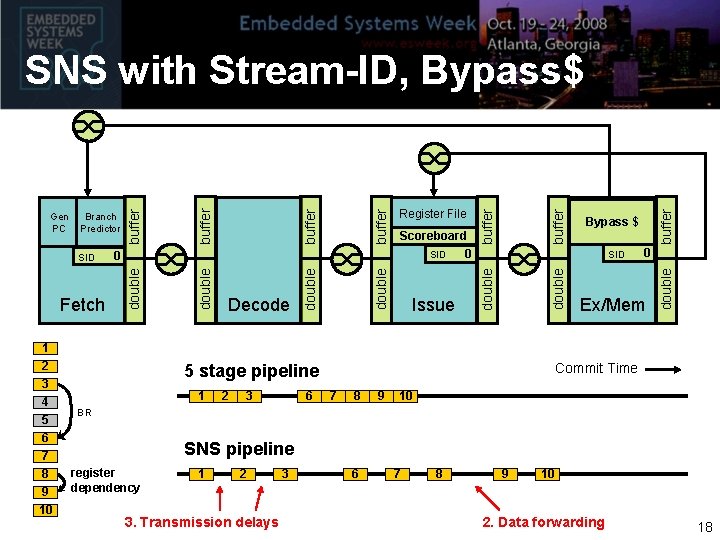

SNS with Stream-ID, Bypass$

[Figure: commit timeline for instructions 1 to 10, including a branch (BR) and a register dependency, on the 5-stage pipeline and on SNS with Stream-ID and Bypass$. Annotations: 2. Data forwarding, 3. Transmission delays.]

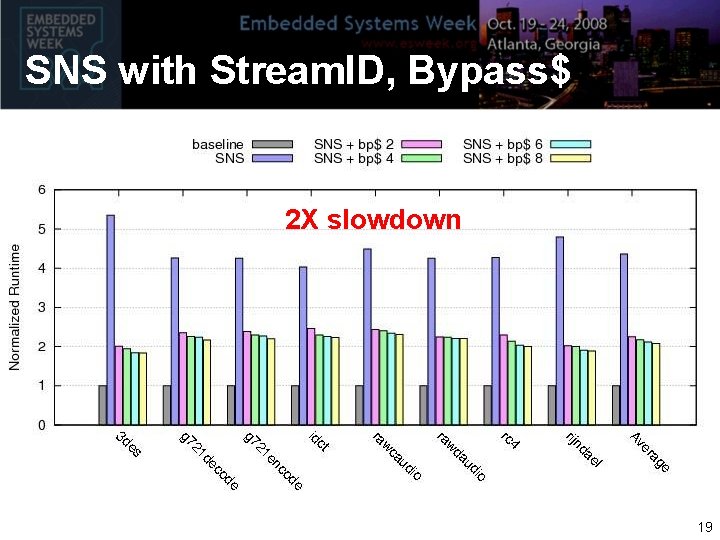

SNS with Stream-ID, Bypass$

2X slowdown

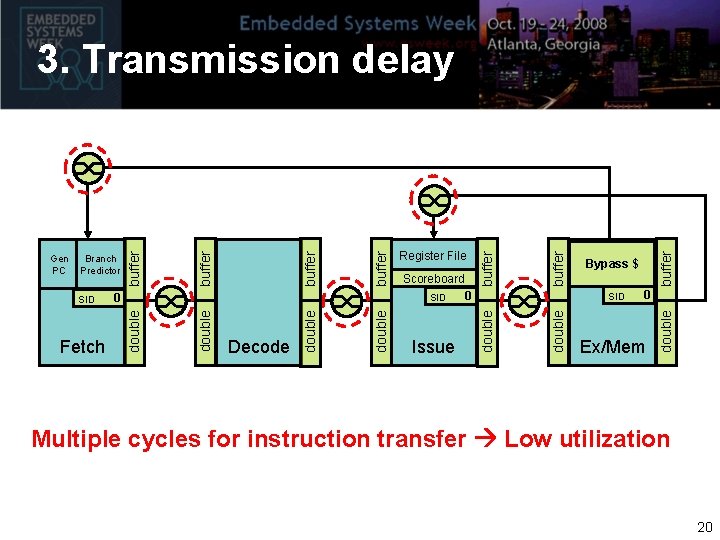

3. Transmission delay

• Multiple cycles for instruction transfer
• Low utilization

[Figure: SNS pipeline with SID registers and Bypass$.]

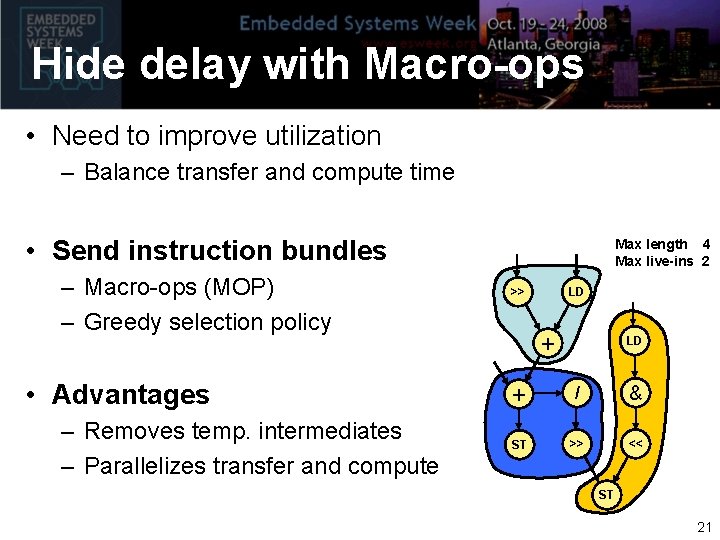

Hide delay with Macro-ops

• Need to improve utilization
– Balance transfer and compute time
• Send instruction bundles
– Macro-ops (MOP)
– Greedy selection policy
– Max length: 4
– Max live-ins: 2
• Advantages
– Removes temporary intermediates
– Parallelizes transfer and compute

[Figure: example instruction sequence (>>, LD, +, /, &, ST, >>, <<, ST) grouped into macro-ops.]
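One way to read the greedy selection policy with the two limits from the slide (at most 4 instructions and at most 2 live-ins per macro-op) is sketched below. This is an assumption about how the policy could work, not the packer's actual algorithm: instructions are scanned in program order and added to the current bundle until a limit would be exceeded. The instruction encoding and the names `live_ins` and `pack_macro_ops` are hypothetical.

```python
from typing import List, Optional, Set, Tuple

# (destination register or None, source registers); a hypothetical encoding.
Instr = Tuple[Optional[str], Tuple[str, ...]]

MAX_LENGTH = 4    # from the slide: max length 4
MAX_LIVE_INS = 2  # from the slide: max live-ins 2


def live_ins(bundle: List[Instr]) -> Set[str]:
    """Registers read by the bundle that are not produced earlier inside it."""
    defined: Set[str] = set()
    needed: Set[str] = set()
    for dest, srcs in bundle:
        needed.update(s for s in srcs if s not in defined)
        if dest is not None:
            defined.add(dest)
    return needed


def pack_macro_ops(program: List[Instr]) -> List[List[Instr]]:
    """Greedily extend the current bundle until a limit would be violated."""
    bundles: List[List[Instr]] = []
    current: List[Instr] = []
    for instr in program:
        candidate = current + [instr]
        too_long = len(candidate) > MAX_LENGTH
        too_many_inputs = len(live_ins(candidate)) > MAX_LIVE_INS
        if current and (too_long or too_many_inputs):
            bundles.append(current)
            current = [instr]  # a lone instruction always starts a new bundle
        else:
            current = candidate
    if current:
        bundles.append(current)
    return bundles


program: List[Instr] = [
    ("r3", ("r1",)),        # shift
    ("r4", ("r3",)),        # load
    ("r5", ("r4", "r2")),   # add
    (None, ("r5", "r1")),   # store
    ("r7", ("r6",)),        # shift
    ("r8", ("r7", "r9")),   # and
    ("r10", ("r8", "r11")), # add, would need a third live-in
]
bundles = pack_macro_ops(program)
```

In the first bundle the intermediates `r3`, `r4`, and `r5` never leave the bundle, which is the sense in which macro-ops remove temporary intermediates: only `r1` and `r2` have to be transferred in.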

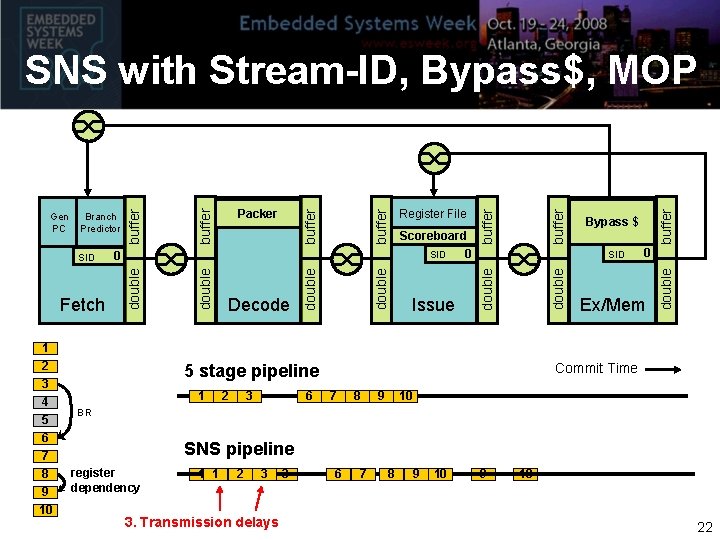

SNS with Stream-ID, Bypass$, MOP

[Figure: commit timeline for instructions 1 to 10, including a branch (BR) and a register dependency, on the 5-stage pipeline and on SNS with Stream-ID, Bypass$, and a Packer that forms macro-ops. Annotation: 3. Transmission delays.]

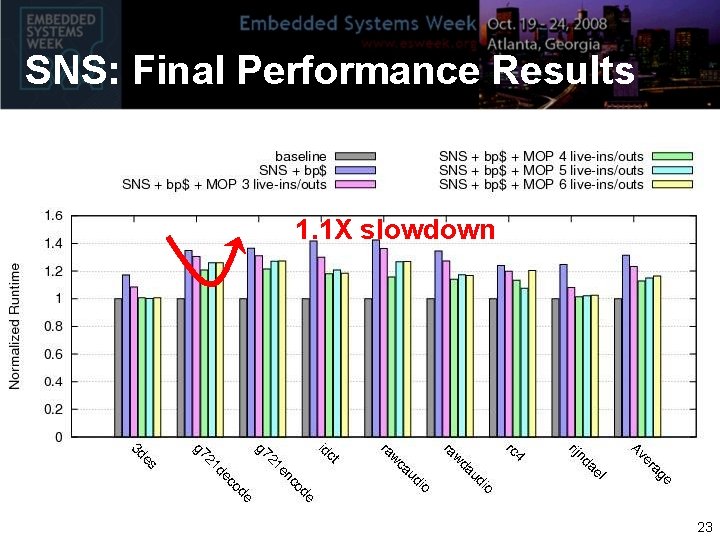

SNS: Final Performance Results

1.1X slowdown

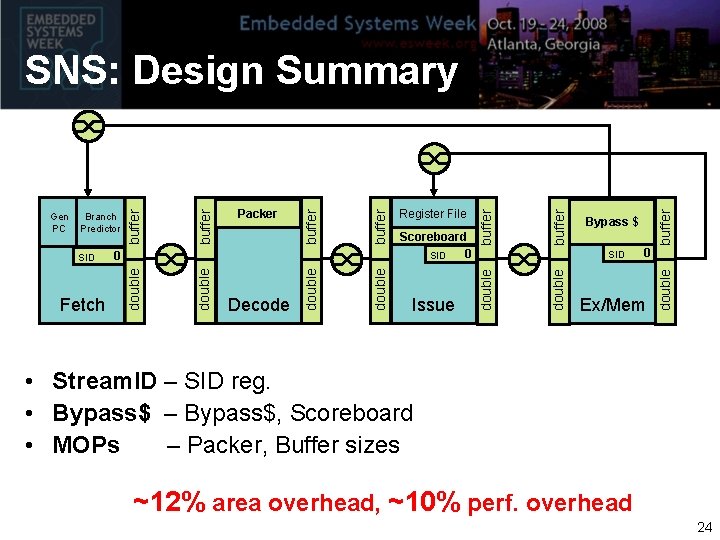

SNS: Design Summary

• Stream-ID
– SID reg.
• Bypass$
– Bypass$, Scoreboard
• MOPs
– Packer, buffer sizes

~12% area overhead, ~10% perf. overhead

[Figure: final SNS pipeline with SID registers, Bypass$, Scoreboard, Packer, and double buffers.]

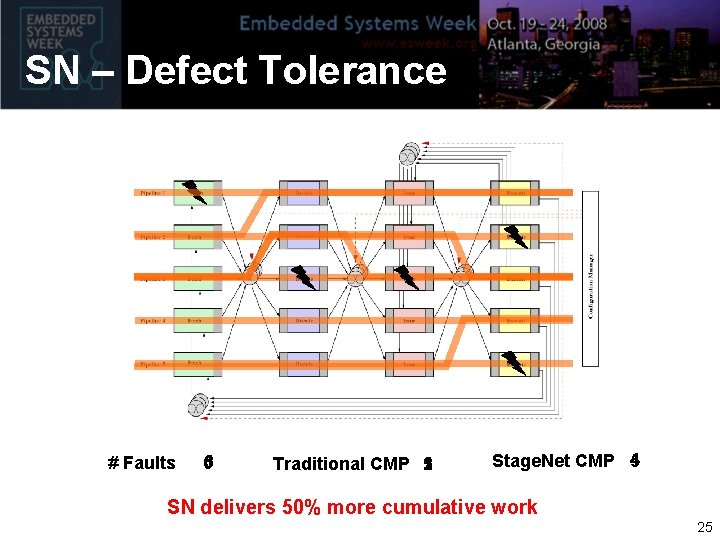

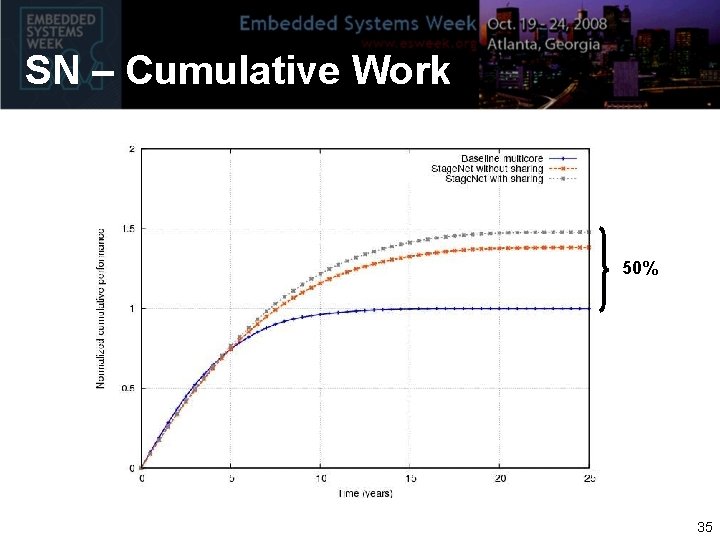

SN – Defect Tolerance

[Figure: a traditional CMP and a StageNet CMP subjected to an increasing number of faults.]

SN delivers 50% more cumulative work
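The intuition behind the cumulative-work comparison can be reproduced with a toy model. The Python sketch below assumes that a traditional core is lost as soon as any of its stages fails, that a StageNet fabric can combine any healthy stages of each type, that the interconnect itself never fails, and that each fault marks one unit of time. The function names and the fault sequence are hypothetical, and the resulting numbers are illustrative only; they are not the results reported on the slide.

```python
from typing import List, Tuple

Fault = Tuple[int, int]  # (core index, stage index) of a failed stage


def working_pipelines(faults: List[Fault], n_cores: int, n_stages: int, stagenet: bool) -> int:
    faulty = set(faults)
    if stagenet:
        # Stages are shared across rows: the scarcest stage type is the limit.
        return min(
            sum((c, s) not in faulty for c in range(n_cores))
            for s in range(n_stages)
        )
    # Traditional CMP: a core works only if all of its own stages work.
    return sum(
        all((c, s) not in faulty for s in range(n_stages))
        for c in range(n_cores)
    )


def cumulative_work(fault_order: List[Fault], n_cores: int, n_stages: int, stagenet: bool) -> int:
    """Sum the number of working pipelines over each interval between faults."""
    return sum(
        working_pipelines(fault_order[:k], n_cores, n_stages, stagenet)
        for k in range(len(fault_order) + 1)
    )


# Four cores with five stages each; every fault hits a different core and stage.
fault_order = [(0, 0), (1, 1), (2, 2), (3, 3)]
traditional_work = cumulative_work(fault_order, n_cores=4, n_stages=5, stagenet=False)
stagenet_work = cumulative_work(fault_order, n_cores=4, n_stages=5, stagenet=True)
```

With this fault pattern the traditional CMP loses one core per fault, while the StageNet fabric loses a pipeline only when a second stage of the same type fails.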

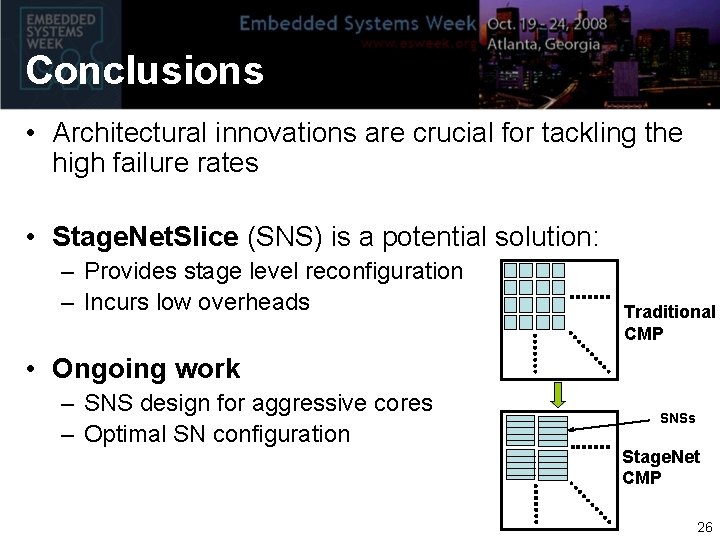

Conclusions

• Architectural innovations are crucial for tackling high failure rates
• StageNetSlice (SNS) is a potential solution:
– Provides stage-level reconfiguration
– Incurs low overheads
• Ongoing work
– SNS design for aggressive cores
– Optimal SN configuration

[Figure: a traditional CMP next to a StageNet CMP built from SNSs.]

Thank You

http://cccp.eecs.umich.edu

Backup

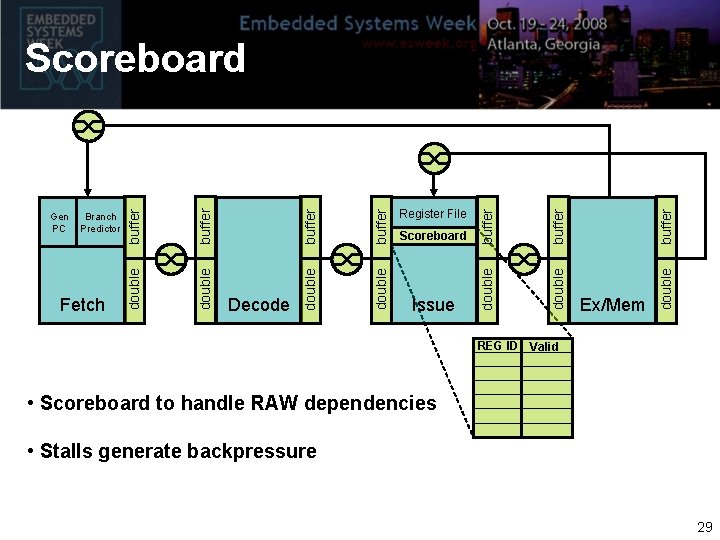

[Figure: SNS pipeline (Gen PC, Branch Predictor, Fetch, Decode, Register File, Scoreboard, Issue, Ex/Mem) with double buffers between stages. Scoreboard entries hold REG ID and Valid.]

• Scoreboard to handle RAW dependencies
• Stalls generate backpressure
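A scoreboard with one valid bit per register, as the REG ID and Valid labels suggest, can be sketched as follows. This is a simplified illustration under stated assumptions: the `Scoreboard` class and its methods are hypothetical, only read-after-write hazards are tracked, and backpressure is represented by `issue` returning `False`, which leaves the instruction waiting in the input buffer.

```python
from typing import List, Optional


class Scoreboard:
    """One valid bit per register; a cleared bit means a write is still pending."""

    def __init__(self, num_regs: int) -> None:
        self.valid: List[bool] = [True] * num_regs

    def issue(self, dest: Optional[int], srcs: List[int]) -> bool:
        # RAW check: every source must already hold its final value.
        if not all(self.valid[r] for r in srcs):
            return False  # stall; the instruction stays in the input buffer
        if dest is not None:
            self.valid[dest] = False  # result is pending until write-back
        return True

    def writeback(self, dest: int) -> None:
        self.valid[dest] = True


sb = Scoreboard(num_regs=8)
first_issued = sb.issue(dest=3, srcs=[1, 2])  # r3 = r1 op r2
dependent_issued = sb.issue(dest=4, srcs=[3])  # needs r3, which is still pending
sb.writeback(3)
retry_issued = sb.issue(dest=4, srcs=[3])
```

While the dependent instruction waits, the buffer in front of the issue stage fills up and upstream stages stop sending, which is how a local stall turns into backpressure without a global stall signal.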

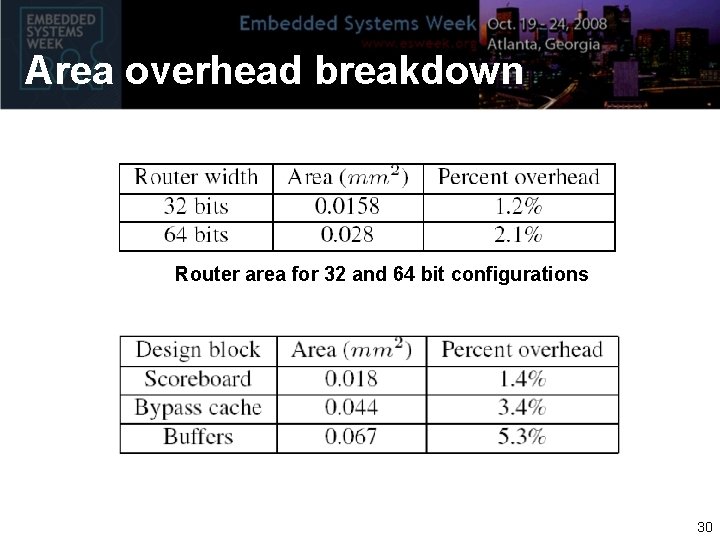

Area overhead breakdown

Router area for 32-bit and 64-bit configurations

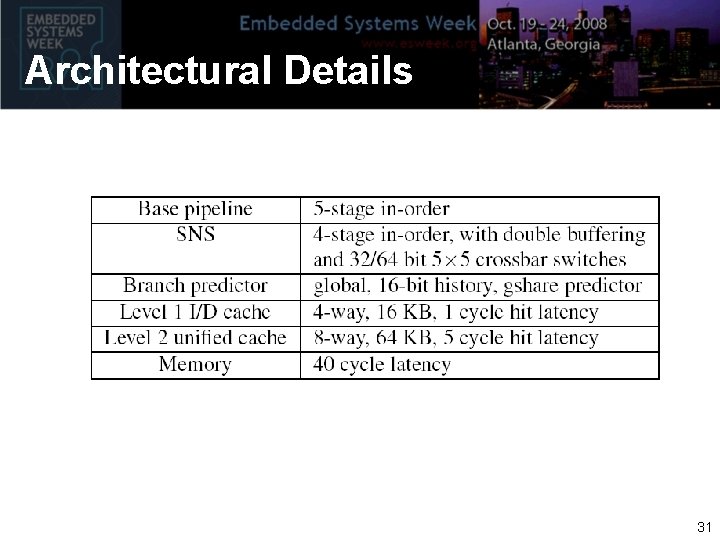

Architectural Details

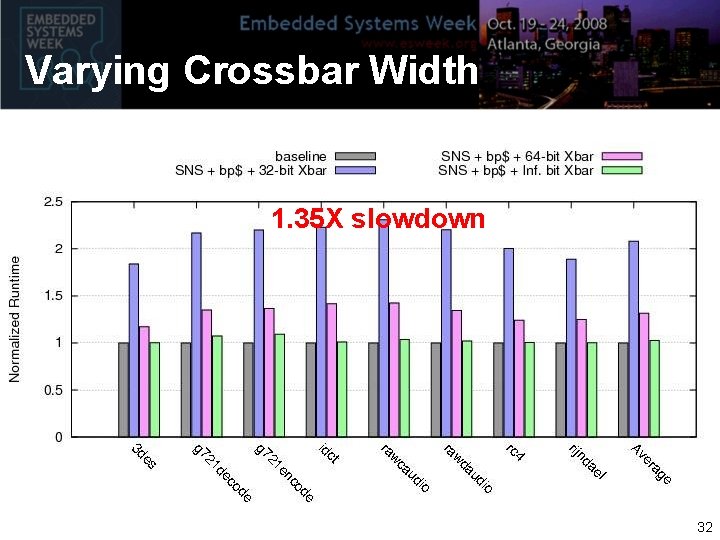

Varying Crossbar Width

1.35X slowdown

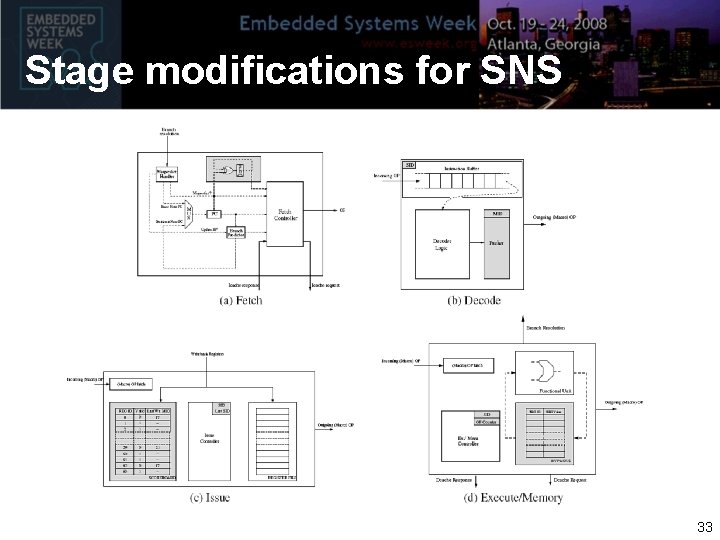

Stage modifications for SNS

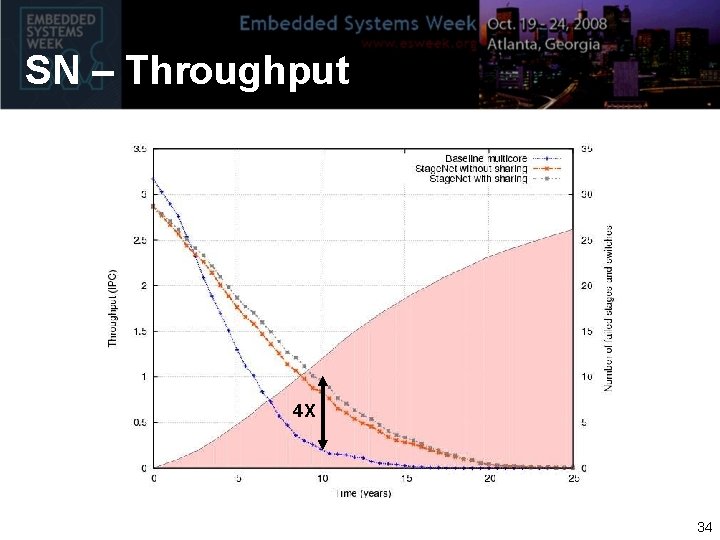

SN – Throughput

4X

SN – Cumulative Work

50%
