Stadium Hashing: Scalable and Flexible Hashing on GPUs

Farzad Khorasani, Mehmet E. Belviranli, Rajiv Gupta, Laxmi N. Bhuyan
University of California Riverside

What Stadium hashing addresses
- Out-of-core GPU hashing
  - How to reduce the rate of host memory accesses caused by collisions or multiple queries.
- Mixed operation concurrency in GPU hashing
  - How insertions and retrievals (lookups) can be performed safely at the same time.
- Warp execution efficiency for insertions
  - How to avoid thread starvation caused by rehashing.

Outline
- Background & Motivation
- Stadium Hashing (Stash)
  - Out-of-Core Scalability
  - Mixed Operation Concurrency Support
  - Operations and CUDA implementation
- Warp-Efficient Batched Insertions with cl. Stash
- Experimental Evaluation

Background: Cuckoo hashing
- Hashing is one of the most fundamental operations.
- Data consist of key-value pairs (KVPs).
- The most notable GPU hash table implementation, by Alcantara et al., is based on Cuckoo hashing.
- Cuckoo hashing uses multiple hash functions.
- Upon a collision, the resident KVP is swapped out for the new KVP. The swapped-out KVP is then rehashed with another hash function, which can trigger a chain of further evictions (chaining).
- For a retrieval, the hash functions are applied to the query key and each resulting location is checked.

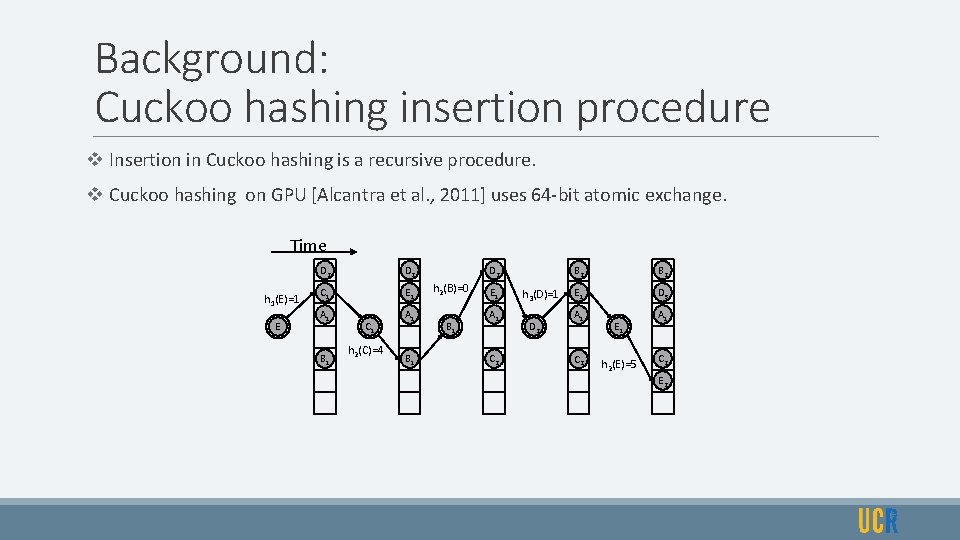

Background: Cuckoo hashing insertion procedure
- Insertion in Cuckoo hashing is a recursive procedure.
- Cuckoo hashing on GPU [Alcantara et al., 2011] uses a 64-bit atomic exchange.

Figure: insertion of key E over time, in which each collision evicts the resident KVP and rehashes it (hash evaluations in order: h1(E)=1, h2(C)=4, h2(B)=0, h3(D)=1, h2(E)=5).
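The insertion and retrieval procedures can be sketched sequentially in C++. This is an illustration, not the Cuckoo GPU source: the class name `CuckooTable`, the three affine hash functions and their constants are made up, `0xFFFFFFFF` is assumed to be a reserved empty-key marker, and `std::swap` stands in for the 64-bit atomic exchange. Packing a 32-bit key and a 32-bit value into one 64-bit slot is what ties the GPU design to 64-bit KVPs.

```cpp
#include <cassert>
#include <cstdint>
#include <optional>
#include <utility>
#include <vector>

// Sequential sketch of cuckoo insertion/lookup (hypothetical hashes and constants).
// Each slot packs key (high 32 bits) and value (low 32 bits) into one 64-bit word.
class CuckooTable {
 public:
  static constexpr uint32_t kEmptyKey = 0xFFFFFFFFu;  // reserved: marks an empty slot
  static constexpr int kNumHashes = 3;

  explicit CuckooTable(uint32_t num_slots) : slots_(num_slots, pack(kEmptyKey, 0)) {}

  // Returns false if the eviction chain grows longer than max_evictions.
  bool insert(uint32_t key, uint32_t value, int max_evictions = 64) {
    assert(key != kEmptyKey);
    uint64_t in_hand = pack(key, value);
    int fn = 0;  // hash function to apply to the KVP currently in hand
    for (int step = 0; step <= max_evictions; ++step) {
      const uint32_t slot = hash(fn, key_of(in_hand));
      std::swap(in_hand, slots_[slot]);  // atomic exchange on the GPU
      if (key_of(in_hand) == kEmptyKey) return true;  // landed in an empty slot
      // The evicted KVP is rehashed with the function after the one that placed it.
      fn = (hash_index(key_of(in_hand), slot) + 1) % kNumHashes;
    }
    return false;  // the KVP still in hand is dropped in this sketch
  }

  // Retrieval: apply every hash function and check each candidate location.
  std::optional<uint32_t> find(uint32_t key) const {
    for (int fn = 0; fn < kNumHashes; ++fn) {
      const uint64_t e = slots_[hash(fn, key)];
      if (key_of(e) == key) return value_of(e);
    }
    return std::nullopt;
  }

 private:
  static uint64_t pack(uint32_t k, uint32_t v) { return (uint64_t(k) << 32) | v; }
  static uint32_t key_of(uint64_t e) { return uint32_t(e >> 32); }
  static uint32_t value_of(uint64_t e) { return uint32_t(e); }

  // h_fn(k) = ((a * k + b) mod p) mod n, with made-up constants a, b.
  uint32_t hash(int fn, uint32_t k) const {
    static constexpr uint64_t kA[kNumHashes] = {1103515245u, 134775813u, 1664525u};
    static constexpr uint64_t kB[kNumHashes] = {12345u, 1u, 1013904223u};
    constexpr uint64_t kPrime = 4294967291u;  // largest prime below 2^32
    return uint32_t(((kA[fn] * k + kB[fn]) % kPrime) % slots_.size());
  }

  // Index of the first hash function that maps key k to the given slot.
  int hash_index(uint32_t k, uint32_t slot) const {
    for (int fn = 0; fn < kNumHashes; ++fn)
      if (hash(fn, k) == slot) return fn;
    return 0;  // not reached for a key that was placed in `slot`
  }

  std::vector<uint64_t> slots_;
};
```

When `insert` gives up, the KVP left in hand is dropped; a complete implementation would instead rebuild the table with fresh hash functions. Retrieval never needs more than three reads, one per hash function.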

Motivation: restrictions of Cuckoo hashing on GPU
- Does not support concurrent insertion and retrieval.
- Performs poorly for large table sizes.
- Does not use SIMD hardware efficiently.
- Restricts each KVP to 64 bits.
- Fails when the load factor is high.

Stadium hashing
- Stadium hashing (Stash) uses a split design:
  - A table for KVPs, which can be larger than 64 bits.
  - A compact auxiliary structure called the ticket-board.
- The ticket-board maintains one ticket per table bucket.
- A ticket contains:
  - A single availability bit, indicating the status of the bucket.
  - A small number of info bits, providing a clue about the key stored in the bucket.

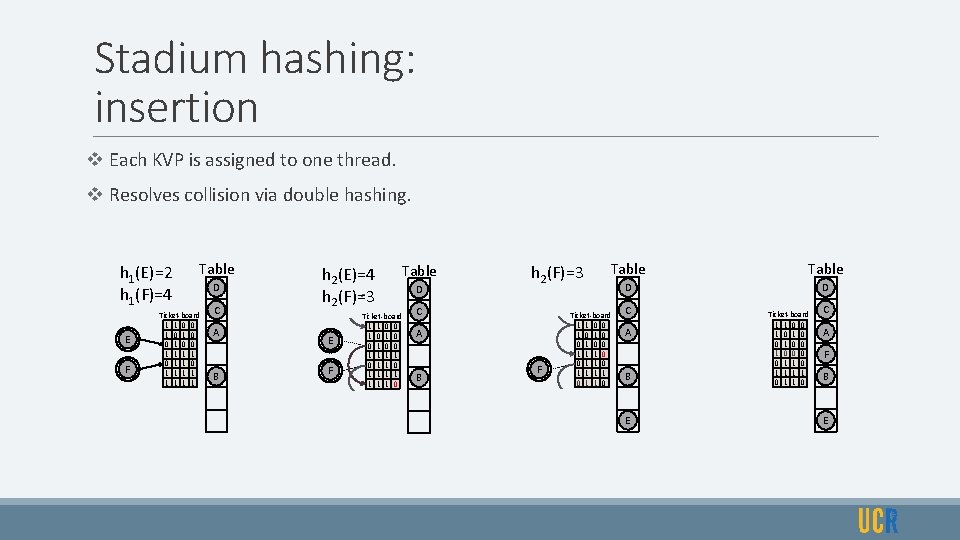
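The ticket-board can be sketched as tickets packed into 32-bit words. This C++ sketch assumes tickets of 1, 2, 4, or 8 bits with bit 0 as the availability bit (1 meaning the bucket is empty) and the remaining bits as info bits; that layout and the names `TicketBoard`, `bfe`, and `bfi` are illustrative assumptions, not the authors' definitions. `bfe` and `bfi` are portable stand-ins for the PTX `bfe.u32` and `bfi.b32` bit-field extract and insert instructions.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Portable stand-ins for PTX bfe.u32 (bit-field extract) and bfi.b32 (bit-field insert),
// restricted here to 0 < len < 32.
inline uint32_t bfe(uint32_t src, unsigned pos, unsigned len) {
  return (src >> pos) & ((1u << len) - 1u);
}
inline uint32_t bfi(uint32_t field, uint32_t base, unsigned pos, unsigned len) {
  const uint32_t mask = ((1u << len) - 1u) << pos;
  return (base & ~mask) | ((field << pos) & mask);
}

// Hypothetical ticket-board: one `ticket_bits`-wide ticket per bucket, packed into
// 32-bit words. Bit 0 of a ticket = availability (1 = empty); the rest are info bits.
class TicketBoard {
 public:
  TicketBoard(uint32_t num_buckets, unsigned ticket_bits)
      : bits_(ticket_bits), words_((uint64_t(num_buckets) * ticket_bits + 31) / 32, 0xFFFFFFFFu) {
    assert(ticket_bits == 1 || ticket_bits == 2 || ticket_bits == 4 || ticket_bits == 8);
  }
  uint32_t get(uint32_t b) const { return bfe(words_[word(b)], pos(b), bits_); }
  void set(uint32_t b, uint32_t ticket) {
    words_[word(b)] = bfi(ticket, words_[word(b)], pos(b), bits_);
  }
  bool available(uint32_t b) const { return (get(b) & 1u) != 0; }
  uint32_t info(uint32_t b) const { return get(b) >> 1; }  // always 0 when ticket_bits == 1

 private:
  uint32_t word(uint32_t b) const { return uint32_t(uint64_t(b) * bits_ / 32); }
  unsigned pos(uint32_t b) const { return unsigned(uint64_t(b) * bits_ % 32); }
  unsigned bits_;
  std::vector<uint32_t> words_;
};
```

With 4-bit tickets, eight buckets share one 32-bit word, so the ticket-board is at most 1/16 the size of a table of 64-bit KVPs.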

Stadium hashing: insertion
- Each KVP is assigned to one thread.
- Collisions are resolved via double hashing.

Figure: threads inserting E and F, showing the table and the ticket-board at each step (h1(E)=2, h1(F)=4, then h2(E)=4, h2(F)=3).

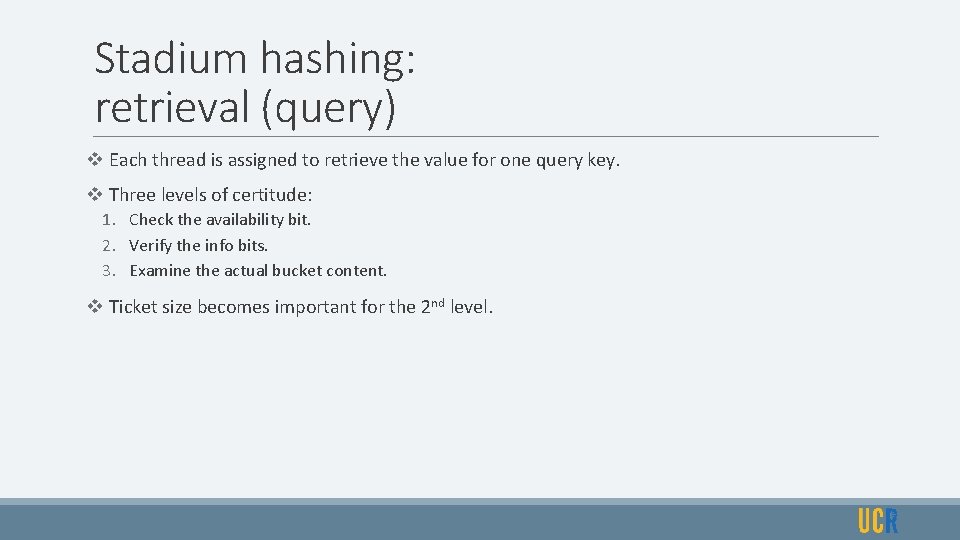
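The insertion steps can be sketched sequentially in C++. Assumptions, all hypothetical: 4-bit tickets (availability bit plus three info bits, initialized to all ones), MurmurHash3's `fmix32` finalizer as a stand-in for the paper's hash function, and the class name `StashSketch`. `std::atomic<uint32_t>::fetch_and` plays the role of CUDA's atomic AND: clearing the availability bit claims a bucket, and the returned old word tells the thread whether it won. Because the bucket count is prime and the probe step lies in [1, n - 1], the probe sequence visits every bucket, so insertion fails only when the table is full.

```cpp
#include <atomic>
#include <cassert>
#include <cstdint>
#include <optional>
#include <vector>

// Sequential sketch of Stash-style insertion; layout, hashes, and names are hypothetical.
// Ticket = 4 bits: bit 0 = availability (1 = empty), bits 1..3 = info bits of the key.
class StashSketch {
 public:
  struct Slot { uint32_t key = 0, value = 0; };
  static constexpr unsigned kTicketBits = 4, kInfoBits = kTicketBits - 1;
  static constexpr unsigned kPerWord = 32 / kTicketBits;  // tickets per 32-bit word

  explicit StashSketch(uint32_t prime_buckets)  // caller passes a prime bucket count
      : n_(prime_buckets), table_(prime_buckets),
        board_((prime_buckets + kPerWord - 1) / kPerWord) {
    assert(n_ >= 2);
    for (auto& w : board_) w.store(0xFFFFFFFFu);  // all available, info bits all ones
  }

  // Returns the claimed bucket, or nullopt once all n buckets have been probed.
  std::optional<uint32_t> insert(uint32_t key, uint32_t value) {
    uint64_t b = hash1(key);
    const uint32_t step = hash2(key);  // in [1, n-1]; n prime => full traversal
    for (uint32_t probe = 0; probe < n_; ++probe, b = (b + step) % n_) {
      const uint32_t word = uint32_t(b / kPerWord);
      const uint32_t pos = uint32_t(b % kPerWord) * kTicketBits;
      // Atomic AND clears the availability bit; the old word says whether we won it.
      const uint32_t old = board_[word].fetch_and(~(1u << pos));
      if (!(old & (1u << pos))) continue;  // bucket already taken: keep probing
      // Winner only: clear the info bits that should be 0 (they start as ones).
      const uint32_t zeros = ~info_of(key) & ((1u << kInfoBits) - 1u);
      board_[word].fetch_and(~(zeros << (pos + 1)));
      table_[b] = Slot{key, value};  // the single access to the KVP table
      return uint32_t(b);
    }
    return std::nullopt;
  }

  bool available(uint32_t b) const { return (ticket(b) & 1u) != 0; }
  uint32_t info(uint32_t b) const { return ticket(b) >> 1; }
  Slot slot(uint32_t b) const { return table_[b]; }
  static uint32_t info_of(uint32_t k) { return mix(k ^ 0x9e3779b9u) >> (32 - kInfoBits); }

 private:
  static uint32_t mix(uint32_t h) {  // MurmurHash3 fmix32 (stand-in hash function)
    h ^= h >> 16; h *= 0x85ebca6bu; h ^= h >> 13; h *= 0xc2b2ae35u; h ^= h >> 16;
    return h;
  }
  uint32_t hash1(uint32_t k) const { return mix(k) % n_; }
  uint32_t hash2(uint32_t k) const { return 1 + mix(k ^ 0x5bd1e995u) % (n_ - 1); }
  uint32_t ticket(uint32_t b) const {
    const uint32_t w = board_[b / kPerWord].load();
    return (w >> ((b % kPerWord) * kTicketBits)) & ((1u << kTicketBits) - 1u);
  }

  uint32_t n_;
  std::vector<Slot> table_;
  std::vector<std::atomic<uint32_t>> board_;
};
```

The inserting thread never reads the table: the ticket-board does all of the searching, and the KVP is written exactly once. The info bits are set by a second atomic AND, issued only by the winner, so a thread that loses the race for the availability bit cannot corrupt the winner's info bits.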

Stadium hashing: retrieval (query)
- Each thread is assigned to retrieve the value for one query key.
- Three levels of certitude:
  1. Check the availability bit.
  2. Verify the info bits.
  3. Examine the actual bucket content.
- Ticket size matters for the second level: more info bits let a thread rule out more non-matching buckets without reading the table.

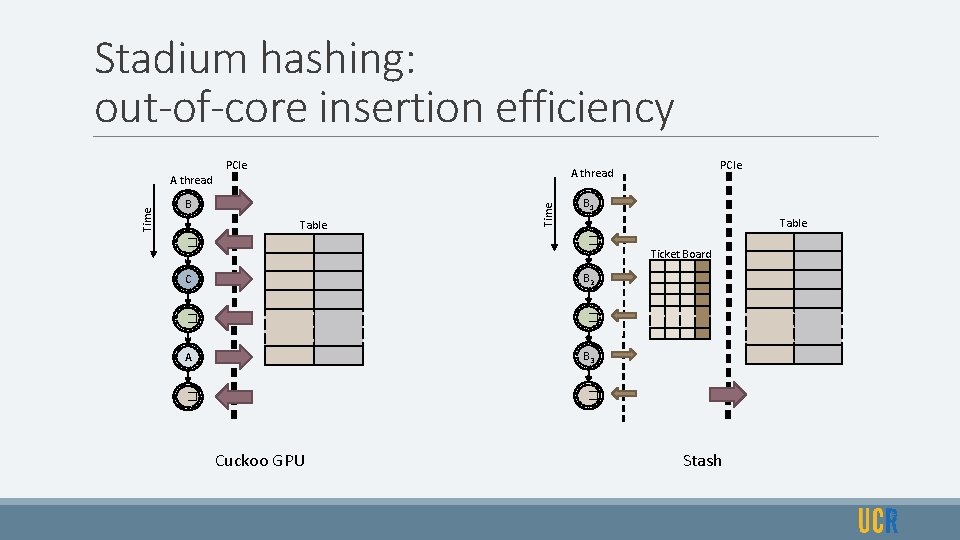
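The three levels can be sketched as a lookup that walks the same double-hashing probe sequence. Assumptions, all hypothetical: one ticket per byte (bit 0 = availability, 1 meaning empty; higher bits = info bits), no deletions (so reaching an empty bucket proves the key is absent), and probe parameters `h1`, `h2`, and `info` computed by the caller. The function `stash_find` also counts table reads, which is the quantity the ticket-board is meant to reduce.

```cpp
#include <cassert>
#include <cstdint>
#include <optional>
#include <vector>

// Sketch of Stash-style retrieval with three levels of certitude (hypothetical layout).
struct Slot { uint32_t key, value; };

struct LookupResult {
  std::optional<uint32_t> value;
  unsigned table_reads = 0;  // accesses to the (possibly host-resident) table
};

LookupResult stash_find(const std::vector<uint8_t>& tickets, const std::vector<Slot>& table,
                        uint32_t key, uint32_t h1, uint32_t h2, uint8_t info) {
  LookupResult r;
  const uint64_t n = tickets.size();
  uint64_t b = h1 % n;
  for (uint64_t probes = 0; probes < n; ++probes, b = (b + h2) % n) {
    const uint8_t t = tickets[b];
    if (t & 1u) break;               // Level 1: empty bucket, so the key is absent
    if ((t >> 1) != info) continue;  // Level 2: info bits rule this bucket out
    ++r.table_reads;                 // Level 3: examine the actual bucket content
    if (table[b].key == key) {
      r.value = table[b].value;
      break;
    }
  }
  return r;
}
```

For an absent key, a lookup can therefore finish without touching the table at all when the info bits rule out every occupied bucket on its probe path.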

Stadium hashing: out-of-core insertion efficiency

Figure: insertion timelines across PCIe for Cuckoo GPU (table only) and Stash (ticket-board plus table).

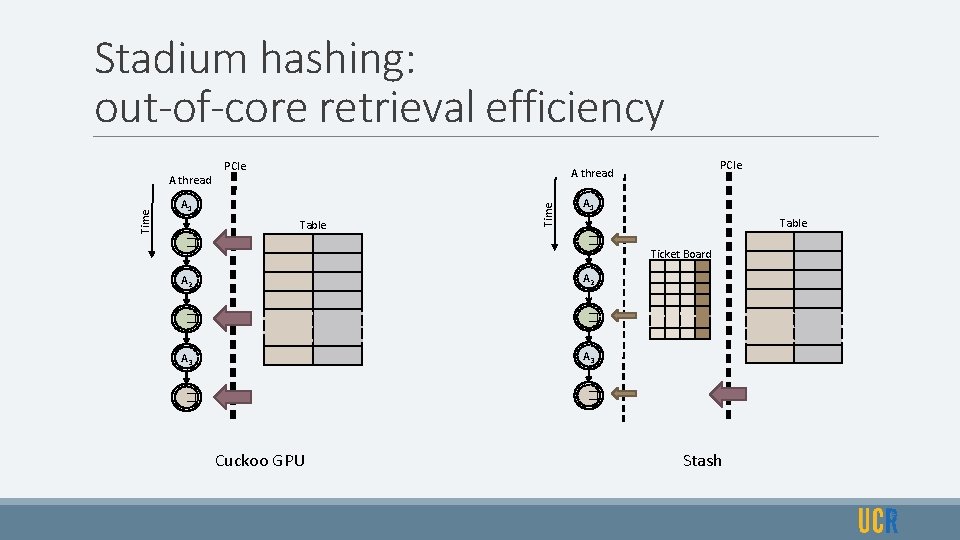

Stadium hashing: out-of-core retrieval efficiency

Figure: retrieval timelines across PCIe for Cuckoo GPU (table only) and Stash (ticket-board plus table).

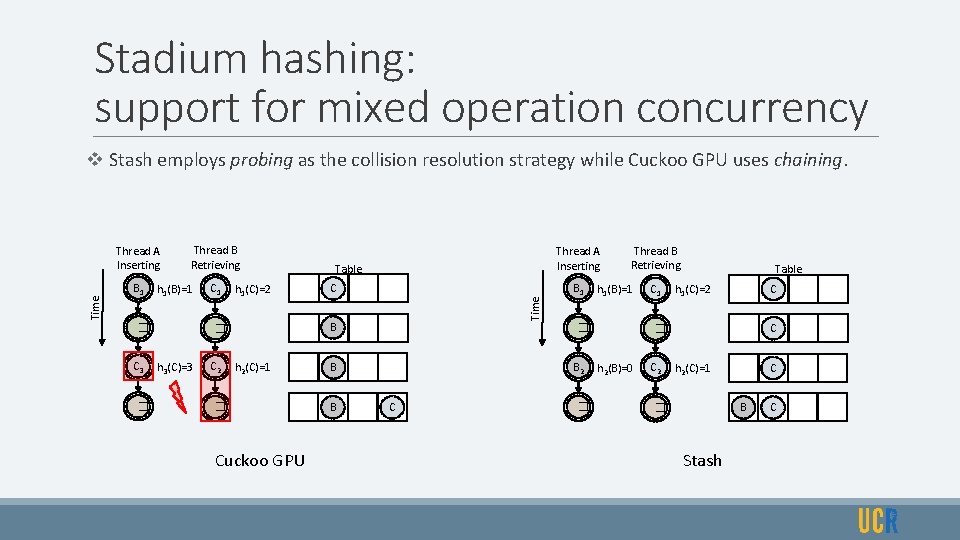
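A back-of-the-envelope count suggests why the ticket-board cuts host memory traffic. Assume, hypothetically, that the ticket-board stays in GPU memory while the table lives in host memory, that a ticket of T.S. bits holds one availability bit and i = T.S. - 1 info bits, and that the info bits of different keys behave like independent uniform random bits. A Cuckoo GPU lookup must read the table for every candidate bucket it checks; a Stash lookup never reads the table for an empty bucket and reads it for an occupied bucket holding a different key only when the info bits happen to match:

```latex
\mathbb{E}[\text{host reads per occupied, non-matching bucket}] =
\begin{cases}
  1 & \text{Cuckoo GPU} \\
  2^{-i} = 2^{-(\mathrm{T.S.} - 1)} & \text{Stash}
\end{cases}
\qquad \text{e.g. } \mathrm{T.S.} = 4 \;\Rightarrow\; 2^{-3} = 0.125
```

Likewise, on the insertion side, the availability bits let a Stash thread find an empty bucket without reading the table, so the table is written once, whereas each cuckoo eviction step is an exchange on the table itself.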

Stadium hashing: support for mixed operation concurrency
- Stash employs probing as its collision resolution strategy, while Cuckoo GPU uses chaining (eviction and rehashing).
- With chaining, an evicted KVP is temporarily absent from the table until it is rehashed, so a concurrent retrieval can miss it; with probing, a KVP never moves once it has been inserted.

Figure: timelines in which thread A inserts C while thread B retrieves B, for Cuckoo GPU and Stash.
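A toy interleaving in C++ makes the difference concrete. Everything here is hypothetical: 4-slot tables, hand-picked hash values (h1(B)=1, h2(B)=0, and C colliding with B at slot 1), and keys written as plain integers with 0 meaning empty. The cuckoo-style insert is split at the point where B has been evicted but not yet re-placed; a lookup running at that moment misses B. The probing-style insert only ever writes an empty bucket, so B stays where it is.

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <utility>

// Toy tables with 4 slots; 0 marks an empty slot (hypothetical setup).
using Table = std::array<uint32_t, 4>;

// Lookup that checks a fixed list of candidate slots for `key`.
bool lookup(const Table& t, uint32_t key, std::array<int, 2> candidates) {
  for (int s : candidates)
    if (t[s] == key) return true;
  return false;
}

// Cuckoo-style insert of C, split into its two halves; returns whether a lookup
// of B running between the halves (as a concurrent reader might) found B.
bool cuckoo_lookup_during_insert(uint32_t B, uint32_t C) {
  Table t{0, B, 0, 0};                // B resides at h1(B) = 1; h2(B) = 0
  uint32_t in_hand = C;
  std::swap(in_hand, t[1]);           // step 1: C evicts B from slot 1
  bool found = lookup(t, B, {1, 0});  // reader: B only exists in thread A's register
  t[0] = in_hand;                     // step 2: B is rehashed into h2(B) = 0
  assert(lookup(t, B, {1, 0}));       // once the insert completes, B is visible again
  return found;
}

// Probing-style insert of C: slot 1 is occupied, so C claims the empty slot 2.
bool stash_lookup_during_insert(uint32_t B, uint32_t C) {
  Table t{0, B, 0, 0};
  bool found = lookup(t, B, {1, 0});  // reader runs mid-insert: B has not moved
  t[2] = C;
  return found && lookup(t, B, {1, 0});
}
```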

Stadium hashing: CUDA implementation
- Uses a non-linear, reversible hash function with diffusion in both directions.
- Uses the __umulhi() intrinsic to map the hash outcome onto the table size.
- Slightly increases the table size to a prime number so that double hashing traverses every bucket.
- Uses atomic AND for ticket-board transactions.
- Uses the high-throughput bfe and bfi PTX instructions (bit-field extract and insert) during insertion and retrieval.

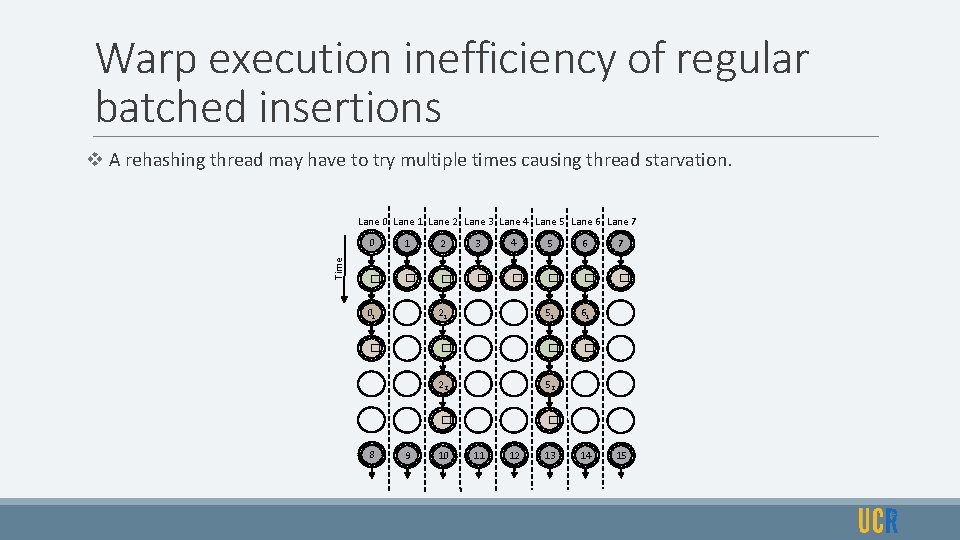
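The mapping and table-sizing steps can be checked on the host. `umulhi` below computes what CUDA's `__umulhi(h, n)` returns, the high 32 bits of the 64-bit product, which maps a 32-bit hash into [0, n) without a modulo. `next_prime` and `buckets_visited` are hypothetical helpers that show why the table size is bumped to a prime: a double-hashing step that shares a factor with a composite table size cycles through only part of the table.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Host equivalent of CUDA's __umulhi(h, n): high 32 bits of the 64-bit product,
// mapping a 32-bit hash h into [0, n).
inline uint32_t umulhi(uint32_t h, uint32_t n) {
  return uint32_t((uint64_t(h) * n) >> 32);
}

// Smallest prime >= n, by trial division (a one-time setup step).
uint32_t next_prime(uint32_t n) {
  auto is_prime = [](uint32_t x) {
    if (x < 2) return false;
    for (uint64_t d = 2; d * d <= x; ++d)
      if (x % d == 0) return false;
    return true;
  };
  while (!is_prime(n)) ++n;
  return n;
}

// Number of distinct buckets hit by n probes of (start + i * step) mod n.
uint32_t buckets_visited(uint32_t n, uint32_t start, uint32_t step) {
  std::vector<bool> seen(n, false);
  uint32_t count = 0;
  uint64_t b = start % n;
  for (uint32_t i = 0; i < n; ++i, b = (b + step) % n)
    if (!seen[b]) {
      seen[b] = true;
      ++count;
    }
  return count;
}
```

For example, `next_prime(1000)` is 1009; with step 250, a 1009-bucket table is fully traversed, while a 1000-bucket table is cycled through only 4 buckets because gcd(250, 1000) = 250.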

Warp execution inefficiency of regular batched insertions
- A rehashing thread may have to try multiple times, causing thread starvation: lanes that have already finished stay idle while the warp keeps executing for the rehashing lane.

Figure: per-lane timeline (lanes 0 to 7) of a regular batched insertion.

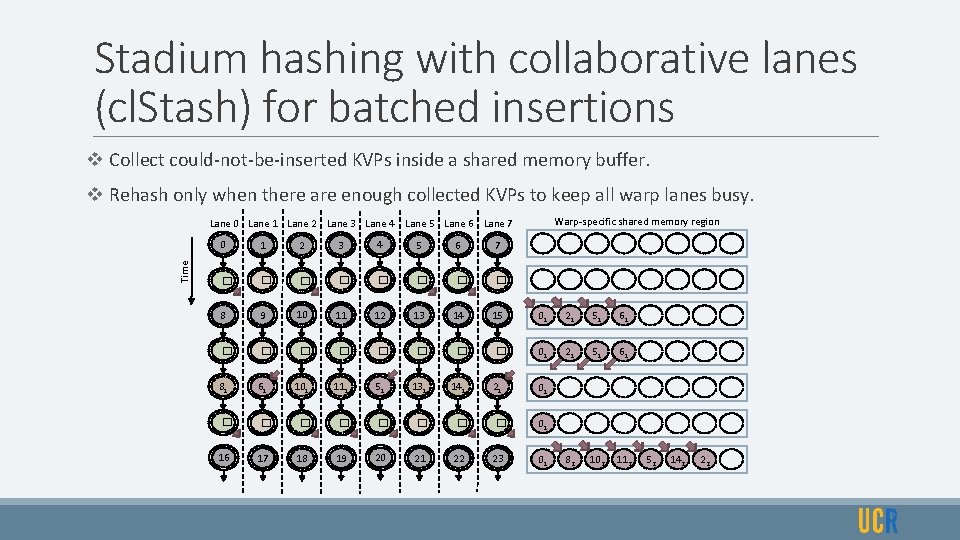

Stadium hashing with collaborative lanes (cl. Stash) for batched insertions
- Collect the KVPs that could not be inserted in a shared memory buffer.
- Rehash only when enough KVPs have been collected to keep all warp lanes busy.

Figure: per-lane timeline (lanes 0 to 7) with a warp-specific shared memory region holding the collected KVPs.
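A simplified model in C++ illustrates why buffering failed KVPs helps. It is an abstraction, not the cl. Stash kernel: `attempts[k]` (a hypothetical input) is how many tries KVP k needs before it finds a free bucket, each active lane makes one try per step, and efficiency is useful lane-steps divided by warp size times steps. In the regular scheme a warp's batch lasts as long as its slowest lane; in the collaborative scheme lanes take fresh KVPs until the buffer holds enough failed ones to fill the whole warp.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <deque>
#include <vector>

// Simplified model: attempts[k] = tries KVP k needs (each >= 1).
struct WarpStats { std::size_t steps; double efficiency; };

// Regular batching: each lane retries its own KVP, so a warp's batch lasts as
// long as its slowest lane while finished lanes sit idle.
WarpStats regular(const std::vector<int>& attempts, std::size_t warp) {
  assert(!attempts.empty());
  std::size_t steps = 0, work = 0;
  for (std::size_t i = 0; i < attempts.size(); i += warp) {
    const std::size_t end = std::min(attempts.size(), i + warp);
    steps += std::size_t(*std::max_element(attempts.begin() + i, attempts.begin() + end));
    for (std::size_t k = i; k < end; ++k) work += std::size_t(attempts[k]);
  }
  return {steps, double(work) / double(warp * steps)};
}

// Collaborative lanes: failed KVPs go to a per-warp buffer, which is drained only
// when it can fill every lane (or when no fresh KVPs remain).
WarpStats collaborative(const std::vector<int>& attempts, std::size_t warp) {
  assert(!attempts.empty());
  std::deque<int> fresh(attempts.begin(), attempts.end()), buffer;  // remaining tries
  std::size_t steps = 0, work = 0;
  while (!fresh.empty() || !buffer.empty()) {
    std::deque<int>& src = (buffer.size() >= warp || fresh.empty()) ? buffer : fresh;
    const std::size_t lanes = std::min(warp, src.size());
    std::vector<int> batch(src.begin(), src.begin() + lanes);
    src.erase(src.begin(), src.begin() + lanes);
    for (int remaining : batch)
      if (remaining > 1) buffer.push_back(remaining - 1);  // failed: collect for later
    ++steps;
    work += lanes;
  }
  return {steps, double(work) / double(warp * steps)};
}
```

For example, with a 4-lane warp and attempts `{2, 1, 1, 1, 1, 2, 1, 1, 1, 1, 1, 1}`, the regular scheme needs 5 steps (efficiency 14/20 = 0.7) while the collaborative one needs 4 (14/16 = 0.875).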

cl. Stash implementation
- Each thread's success at inserting its KVP into the table acts as the predicate.
- An intra-warp binary reduction over the predicate counts the successful threads.
- An intra-warp binary prefix sum over the predicate gives each failed thread its address in the shared memory buffer.
- Following the method of Harris et al., these operations use high-throughput CUDA intrinsics.

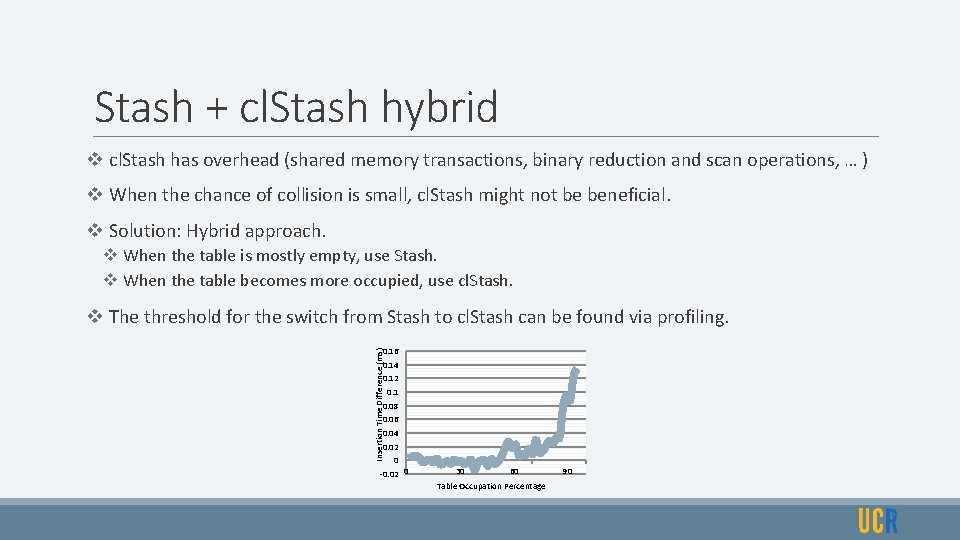
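The reduction and prefix sum map onto a ballot plus population counts, emulated below on the host in C++. The slide does not name the intrinsics; the mapping assumed here is the common one, where `ballot` stands in for `__ballot_sync(0xffffffff, succeeded)`, `popc` for `__popc`, and `lower` for the `%lanemask_lt` special register. The function name `collect_failures` is hypothetical. Because the predicate is success, each failed lane's buffer slot is its lane index minus the number of successful lanes below it.

```cpp
#include <bitset>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

constexpr int kWarpSize = 32;

// Host stand-in for CUDA's __popc (population count).
inline int popc(uint32_t x) { return int(std::bitset<32>(x).count()); }

// Emulates one warp-wide step of collecting failed KVPs into a shared-memory buffer.
// Returns the number of failed lanes; their KVPs are appended in lane order.
int collect_failures(const std::vector<uint64_t>& lane_kvp, const std::vector<bool>& succeeded,
                     std::vector<uint64_t>& buffer) {
  assert(lane_kvp.size() == std::size_t(kWarpSize));
  assert(succeeded.size() == std::size_t(kWarpSize));
  uint32_t ballot = 0;  // one predicate bit per lane
  for (int lane = 0; lane < kWarpSize; ++lane)
    if (succeeded[lane]) ballot |= 1u << lane;
  const int failures = kWarpSize - popc(ballot);  // binary reduction over the predicate
  const std::size_t base = buffer.size();
  buffer.resize(base + std::size_t(failures));
  for (int lane = 0; lane < kWarpSize; ++lane) {
    if (succeeded[lane]) continue;
    const uint32_t lower = (1u << lane) - 1u;  // mask of lanes below this one
    // Binary prefix sum: failed lanes below = lanes below - successful lanes below.
    const int offset = lane - popc(ballot & lower);
    buffer[base + std::size_t(offset)] = lane_kvp[lane];
  }
  return failures;
}
```

In this emulation, both the count and every offset come from one ballot and a few population counts, with no shared-memory atomics, and the buffer is filled densely in lane order.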

Stash + cl. Stash hybrid
- cl. Stash has overhead (shared memory transactions, binary reduction and scan operations, … ).
- When the chance of collision is small, cl. Stash might not be beneficial.
- Solution: a hybrid approach.
  - When the table is mostly empty, use Stash.
  - When the table becomes more occupied, use cl. Stash.
  - The threshold for switching from Stash to cl. Stash can be found via profiling.

Figure: insertion time difference (ms, from -0.02 to 0.16) versus table occupation percentage (0 to 90).

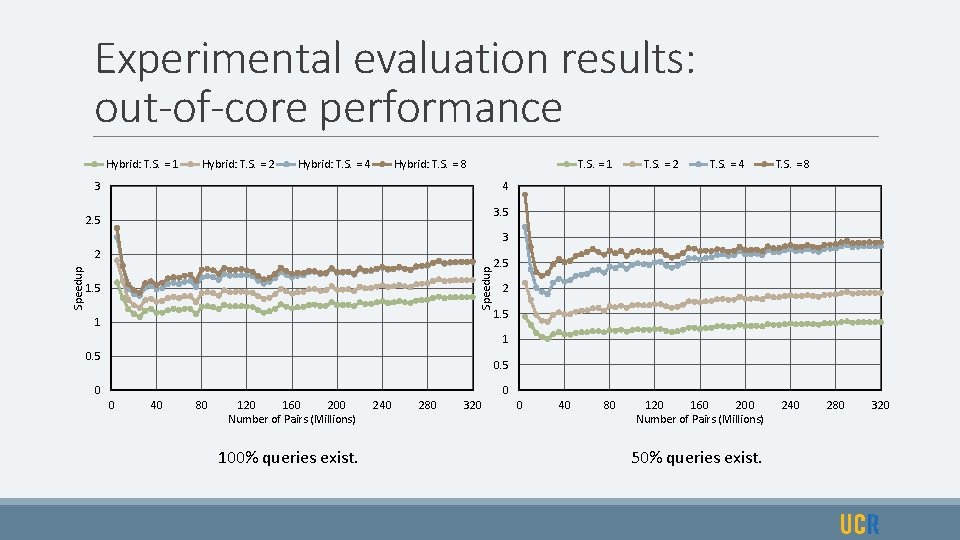

Experimental evaluation results: out-of-core performance

Figure: speedup versus number of pairs (millions, 0 to 320); series Hybrid: T.S. = 1, 2, 4, 8 and T.S. = 1, 2, 4, 8; panels: 100% of queries exist, 50% of queries exist.

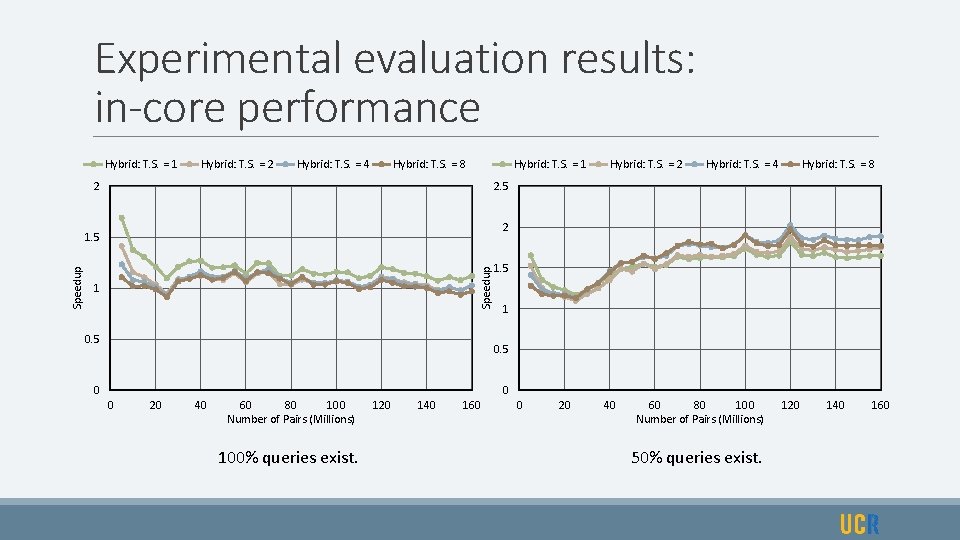

Experimental evaluation results: in-core performance

Figure: speedup versus number of pairs (millions, 0 to 160); series Hybrid: T.S. = 1, 2, 4, 8; panels: 100% of queries exist, 50% of queries exist.

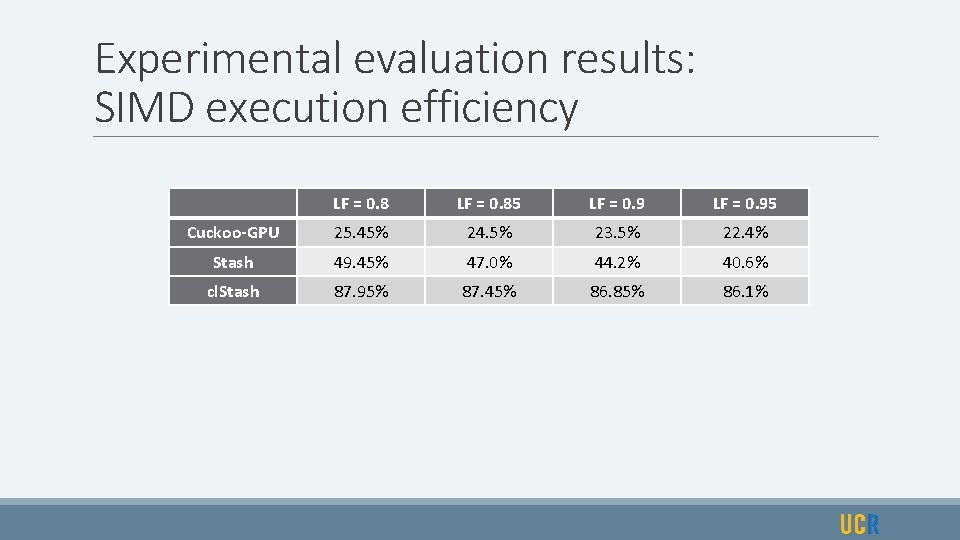

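Experimental evaluation results: SIMD execution efficiency

SIMD execution efficiency at four load factors, in chart order (recovered axis labels include LF = 0.85 and LF = 0.95):

| Method | SIMD execution efficiency (four load factors, in chart order) |
|---|---|
| Cuckoo-GPU | 25.45%, 24.5%, 23.5%, 22.4% |
| Stash | 49.45%, 47.0%, 44.2%, 40.6% |
| cl. Stash | 87.95%, 87.45%, 86.85%, 86.1% |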

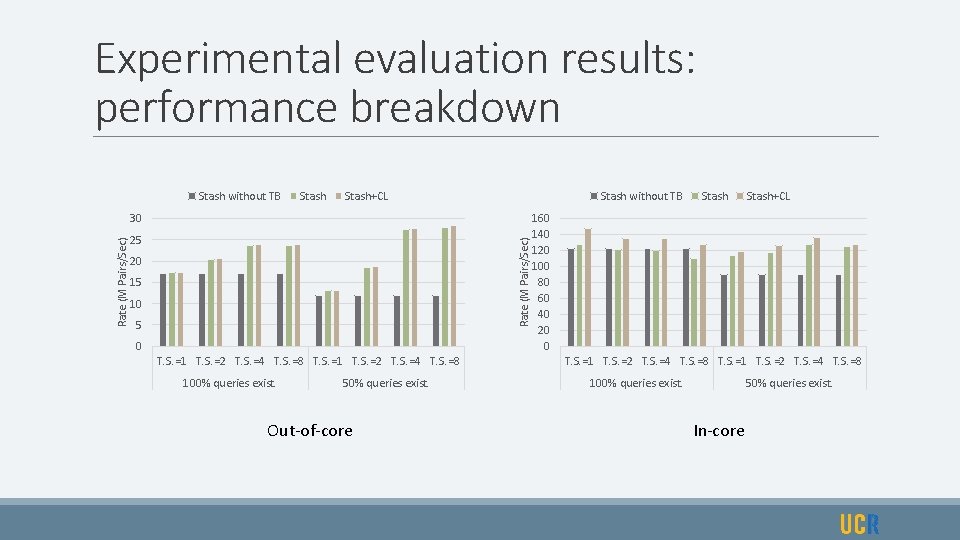

Experimental evaluation results: performance breakdown

Figure: rate (M pairs/sec) for T.S. = 1, 2, 4, 8 with 100% and 50% of queries existing; series include Stash without TB (ticket-board) and Stash+CL; out-of-core panel (0 to 30) and in-core panel (0 to 160).

Experimental evaluation results: insertion sensitivity analysis

Figure panels: in-core, out-of-core.

Experimental evaluation results: retrieval sensitivity analysis

Figure panels: in-core, out-of-core.

Summary
Stadium hashing (Stash) provides flexible and scalable GPU hashing. Unlike the most notable existing GPU hashing solution (Cuckoo hashing on GPU), Stash:
- Provides efficient performance on large out-of-core hash tables.
- Supports mixed operation concurrency.
- Enables efficient use of SIMT hardware with the collaborative lanes technique (cl. Stash).
- Supports large key-value pairs and load factors as high as 1.

- Slides: 24