S-Seer: A Selective Perception System for Multimodal Office Activity Recognition
Nuria Oliver & Eric Horvitz
Adaptive Systems & Interaction, Microsoft Research

Overview of the Talk
• Background: Seer system
• Value of information
• Selective Perception Policies
• Selective-Seer (S-Seer)
• Experiments and Video
• Summary and Future Directions

Background and Motivation
• Research area: Automatic recognition of human behavior from sensory observations
• Applications:
  • Multimodal human-computer interaction
  • Visual surveillance, office awareness, distributed teams
  • Accessibility, medical applications

Sensing in Multimodal Systems
• Multimodal sensing & reasoning in personal computing as central vs. peripheral
• Multimodal signal processing typically requires a large portion, if not nearly all, of the available computational resources
• Need for strategies to control allocation of resources for perception in multimodal systems
  • Design-time and/or real-time

Seer Office Awareness System (ICMI 2002; CVIU 2004, to appear)
• Seer: Prototype for performing real-time, multimodal, multi-scale office activity recognition
• Distinguishes among:
  • Phone conversation
  • Face-to-face conversation
  • Working on the computer
  • Presentation
  • Nobody around
  • Distant conversation
  • Other activities

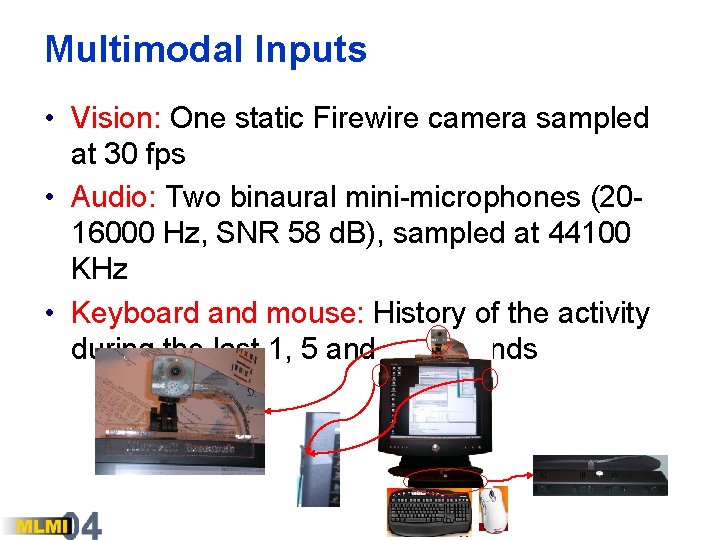

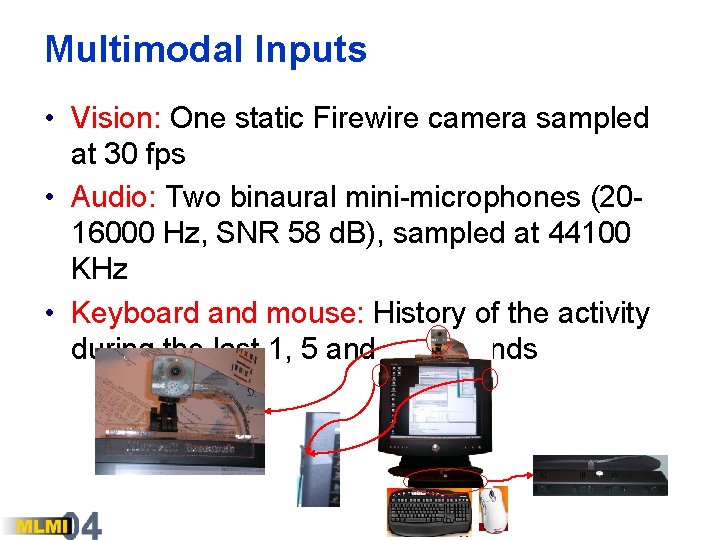

Multimodal Inputs
• Vision: One static Firewire camera sampled at 30 fps
• Audio: Two binaural mini-microphones (20 to 16000 Hz, SNR 58 dB), sampled at 44100 Hz
• Keyboard and mouse: History of the activity during the last 1, 5 and 60 seconds

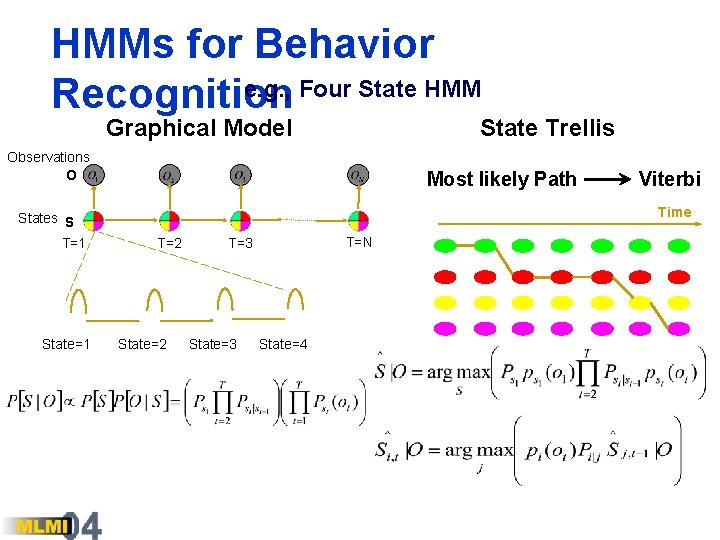

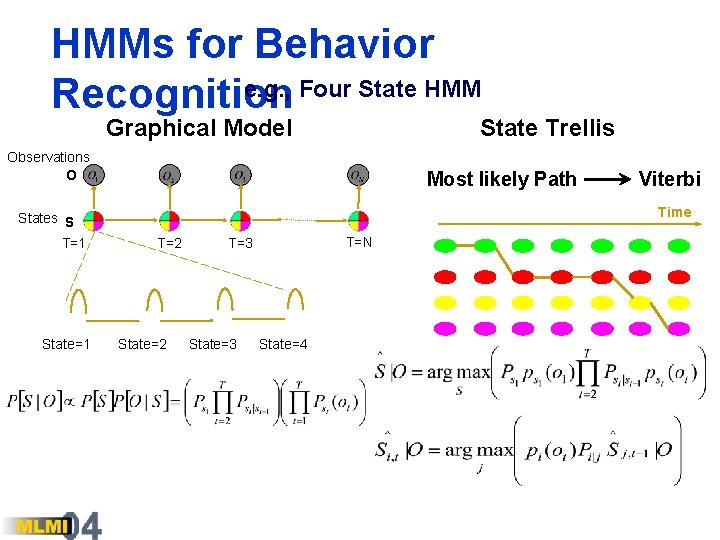

HMMs for Behavior Recognition
[Figure: graphical model of an HMM (states S, observations O) next to its state trellis over time (T=1 ... T=N), e.g., for a four-state HMM, with the most likely state path found by Viterbi decoding.]
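The most likely path through the state trellis mentioned on this slide can be illustrated with a small Viterbi decoder. This is a minimal sketch for a discrete-observation HMM; the function name `viterbi` and the toy parameters in the usage note are assumptions for illustration, not taken from Seer.

```python
import math


def viterbi(obs, pi, A, B):
    """Return the most likely state path for a discrete-observation HMM.

    obs: list of observation symbol indices
    pi:  initial state probabilities, pi[s]
    A:   transition probabilities, A[s][l] = P(next state l | state s)
    B:   emission probabilities, B[s][o] = P(symbol o | state s)
    """
    def log(p):
        return math.log(p) if p > 0 else float("-inf")

    n = len(pi)
    # delta[s]: best log-probability of any path that ends in state s
    delta = [log(pi[s]) + log(B[s][obs[0]]) for s in range(n)]
    back = []  # back[t][l]: best predecessor of state l at step t + 1
    for o in obs[1:]:
        prev, delta, ptr = delta, [], []
        for l in range(n):
            best = max(range(n), key=lambda s: prev[s] + log(A[s][l]))
            ptr.append(best)
            delta.append(prev[best] + log(A[best][l]) + log(B[l][o]))
        back.append(ptr)
    # Trace the best path backwards from the best final state
    state = max(range(n), key=lambda s: delta[s])
    path = [state]
    for ptr in reversed(back):
        state = ptr[state]
        path.append(state)
    return path[::-1]
```

For example, with two "sticky" states that each prefer their own symbol (hypothetical values), `viterbi([0, 0, 1, 1], [0.5, 0.5], [[0.9, 0.1], [0.1, 0.9]], [[0.9, 0.1], [0.1, 0.9]])` decodes a path that switches state once, when the observed symbol changes.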

Several Limitations of HMMs for Multimodal Reasoning
• First-order Markov assumption doesn't address long-term dependencies and multiple time granularities
• Assumes single-process dynamics, but signals may be generated by multiple processes
• Context limited to a single state variable; representing multiple processes with a Cartesian product HMM quickly becomes intractable
• Large parameter space, hence large training data needs
• Empirical experience: Representation sensitive to changes in the environment (lighting, background noise, etc.)

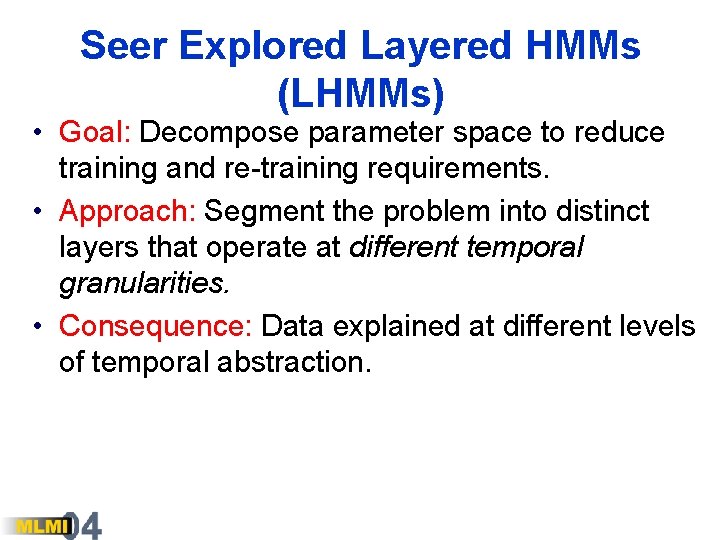

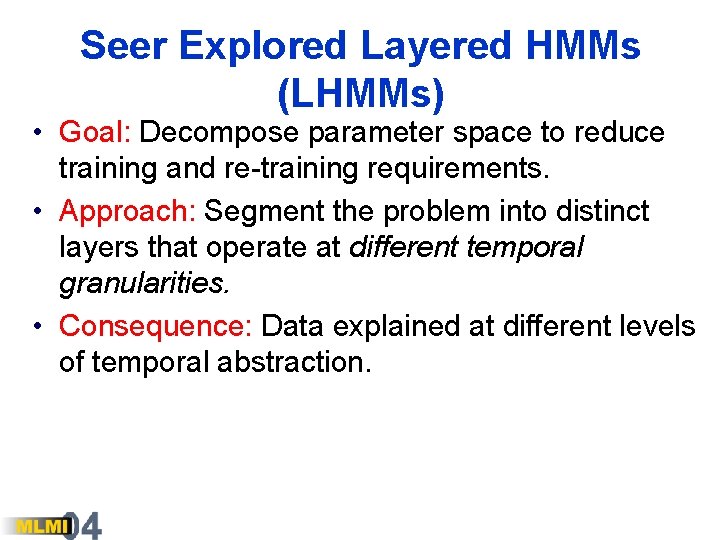

Seer Explored Layered HMMs (LHMMs)
• Goal: Decompose parameter space to reduce training and re-training requirements
• Approach: Segment the problem into distinct layers that operate at different temporal granularities
• Consequence: Data explained at different levels of temporal abstraction

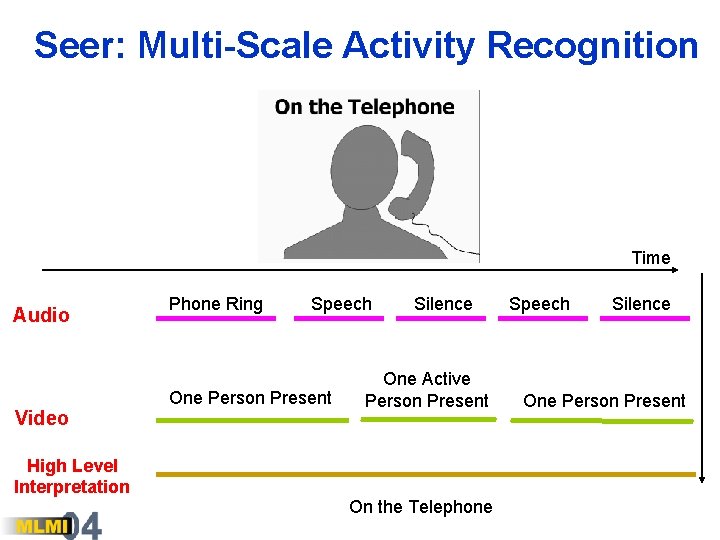

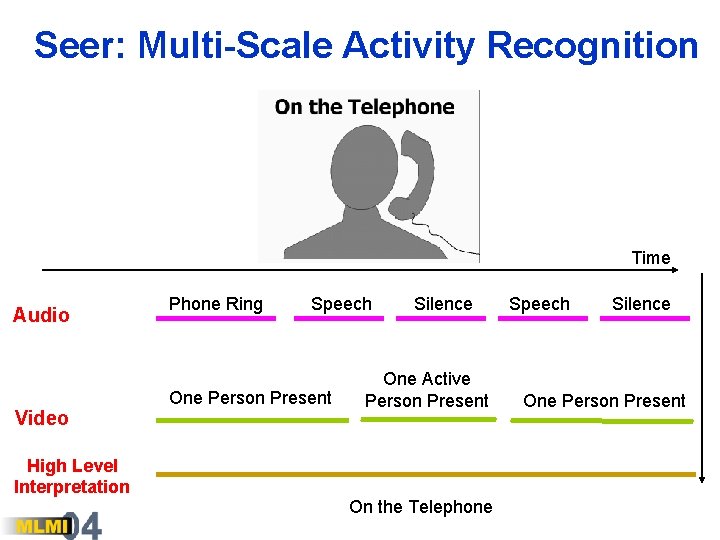

Seer: Multi-Scale Activity Recognition
[Figure: timeline showing audio labels (Phone Ring, Speech, Silence) and video labels (One Person Present, One Active Person Present) combined into a high-level interpretation (On the Telephone).]

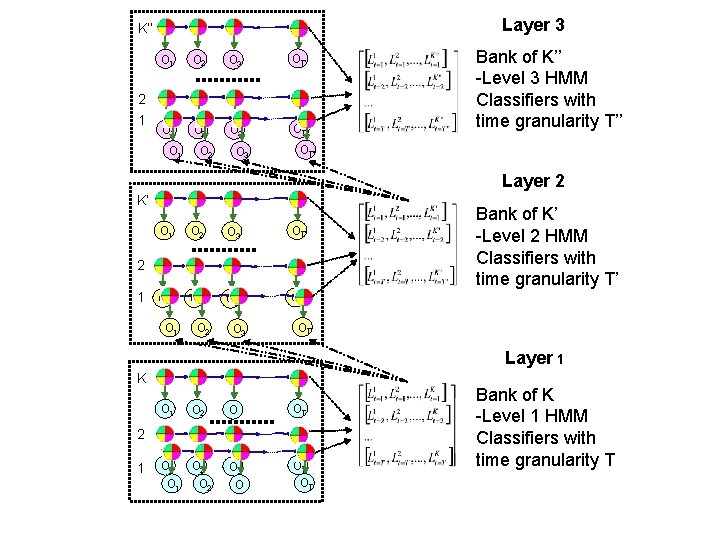

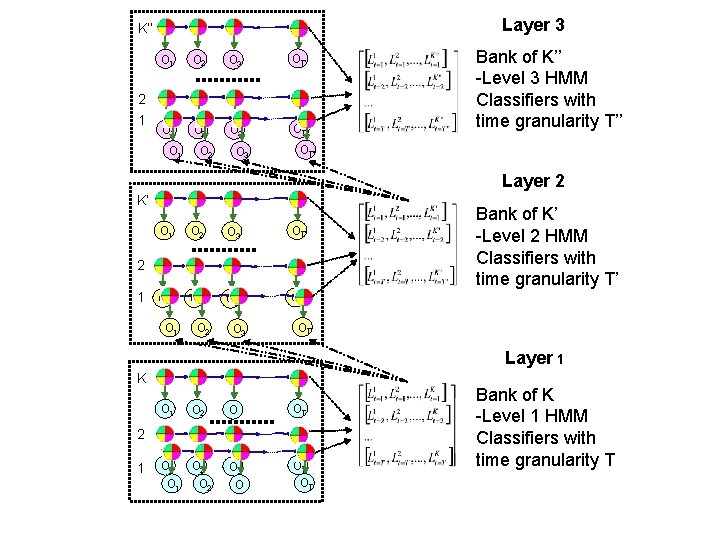

[Figure: layered HMM architecture. Layer 1: bank of K level-1 HMM classifiers with time granularity T. Layer 2: bank of K' level-2 HMM classifiers with time granularity T'. Layer 3: bank of K'' level-3 HMM classifiers with time granularity T''.]
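The layering idea, where a bank of HMMs classifies each window and the resulting labels become the observations of the next layer, can be sketched as follows. This is an illustrative sketch only: it assumes discrete observations, non-overlapping windows of length T, and a maximum-likelihood choice among the models in a bank. The names `log_likelihood`, `classify` and `layer_outputs` are hypothetical.

```python
import math


def log_likelihood(obs, pi, A, B):
    """Scaled forward algorithm: log P(obs | model) for a discrete HMM."""
    n = len(pi)
    alpha = [pi[s] * B[s][obs[0]] for s in range(n)]
    total = 0.0
    for t in range(len(obs)):
        if t > 0:
            alpha = [sum(alpha[s] * A[s][l] for s in range(n)) * B[l][obs[t]]
                     for l in range(n)]
        norm = sum(alpha)
        if norm == 0.0:
            return float("-inf")
        total += math.log(norm)
        alpha = [a / norm for a in alpha]  # rescale to avoid underflow
    return total


def classify(window, bank):
    """Index of the HMM in the bank that best explains the window."""
    return max(range(len(bank)),
               key=lambda k: log_likelihood(window, *bank[k]))


def layer_outputs(obs, bank, T):
    """Classify consecutive windows of length T.

    The returned label sequence is what the next layer would consume,
    at a coarser time granularity.
    """
    return [classify(obs[i:i + T], bank)
            for i in range(0, len(obs) - T + 1, T)]
```

Each model in `bank` is a `(pi, A, B)` tuple. Feeding `layer_outputs(...)` of one layer as `obs` to the next layer's bank gives the stacked structure in the figure.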

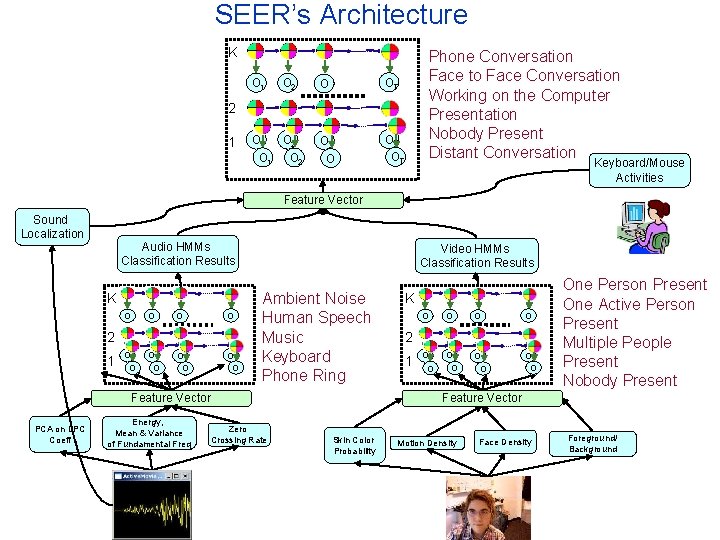

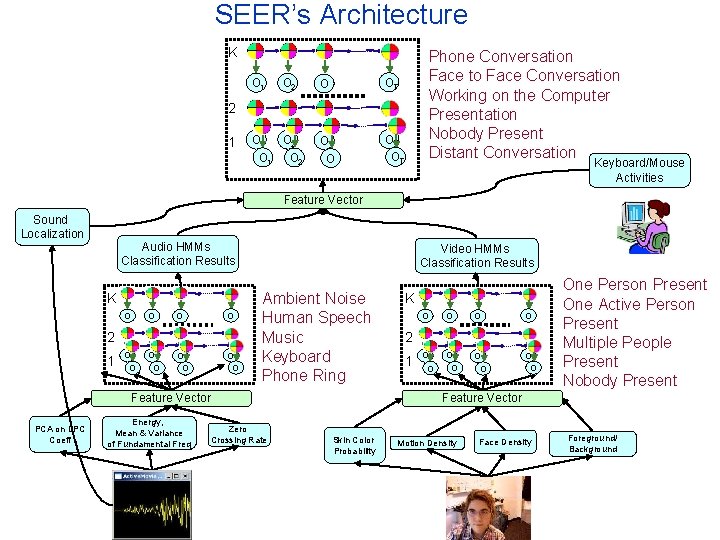

SEER's Architecture
[Figure: Seer's layered architecture.
• Top layer outputs: Phone Conversation, Face to Face Conversation, Working on the Computer, Presentation, Nobody Present, Distant Conversation
• Top layer inputs: Keyboard/Mouse Activities, Sound Localization, Audio HMMs Classification Results, Video HMMs Classification Results
• Audio HMMs classes: Ambient Noise, Human Speech, Music, Keyboard, Phone Ring
• Audio feature vector: PCA on LPC Coeff; Energy, Mean & Variance of Fundamental Freq; Zero Crossing Rate
• Video HMMs classes: One Person Present, One Active Person Present, Multiple People Present, Nobody Present
• Video feature vector: Skin Color Probability, Motion Density, Face Density, Foreground/Background]

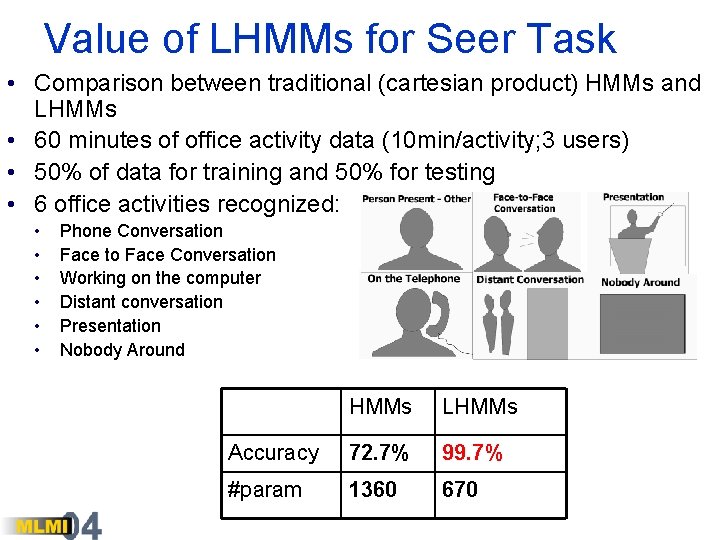

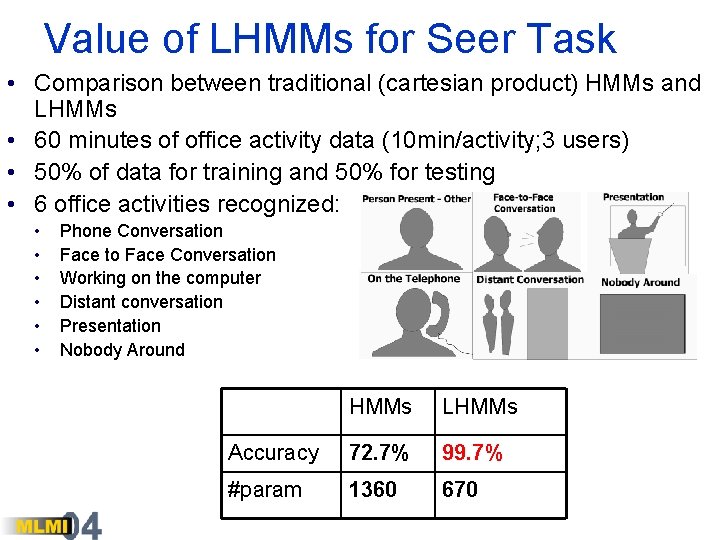

Value of LHMMs for Seer Task
• Comparison between traditional (Cartesian product) HMMs and LHMMs
• 60 minutes of office activity data (10 min/activity; 3 users)
• 50% of data for training and 50% for testing
• 6 office activities recognized: Phone Conversation, Face to Face Conversation, Working on the computer, Distant conversation, Presentation, Nobody Around

| | HMMs | LHMMs |
|---|---|---|
| Accuracy | 72.7% | 99.7% |
| #param | 1360 | 670 |

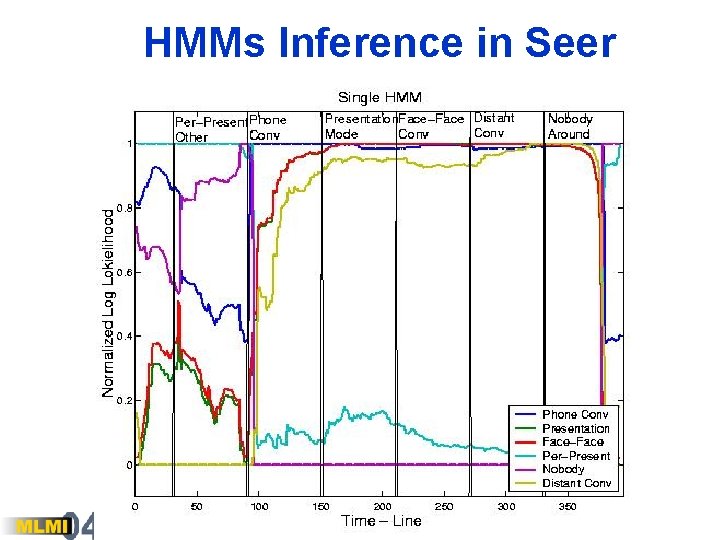

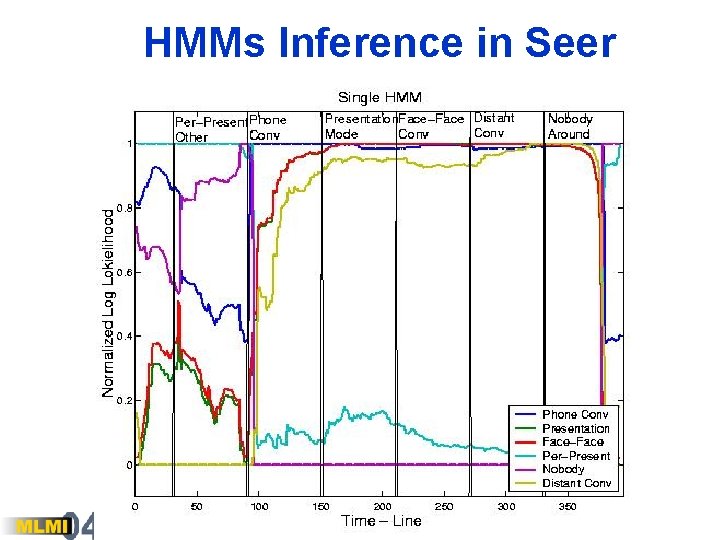

HMMs Inference in Seer

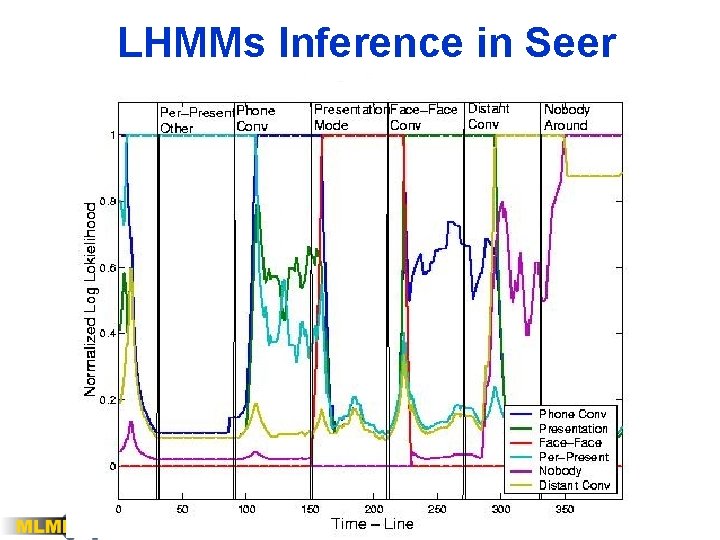

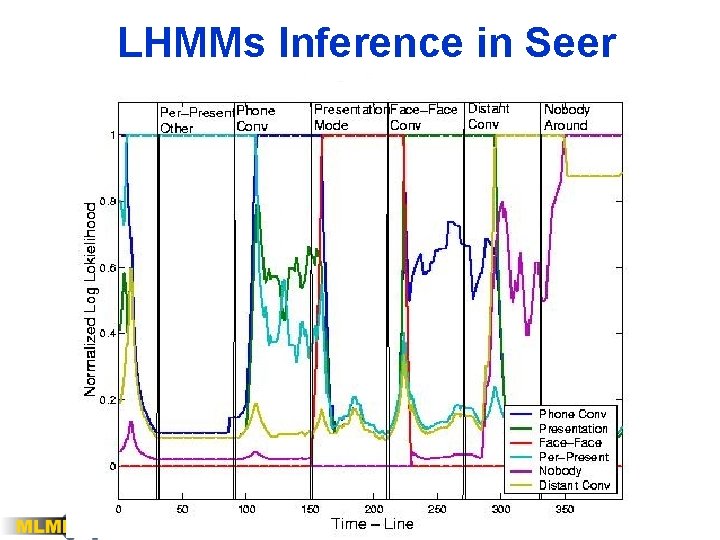

LHMMs Inference in Seer

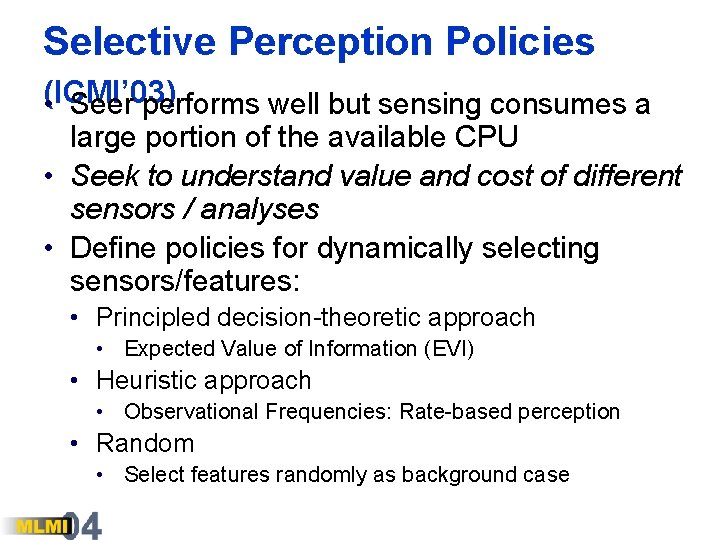

Selective Perception Policies (ICMI '03)
• Seer performs well, but sensing consumes a large portion of the available CPU
• Seek to understand value and cost of different sensors / analyses
• Define policies for dynamically selecting sensors/features:
  • Principled decision-theoretic approach
    • Expected Value of Information (EVI)
  • Heuristic approach
    • Observational frequencies: Rate-based perception
  • Random
    • Select features randomly as a baseline case

Related work
• Principles for Guiding Perception
  • Expected value of information (EVI) as core concept of decision analysis (Raiffa, Howard)
  • Value of information in probabilistic reasoning systems, use in sequential diagnosis (Gorry, 79; Ben-Bassat, 80; Horvitz et al., 89; Heckerman et al., 90)
  • Probability and utility to model the behavior of vision modules (Bolles, IJCAI '77), to score plans of perceptual actions (Garvey '76), reliability indicators to fuse vision modules (Toyama & Horvitz, 2000)
  • Growing interest in applying decision theory in perceptual applications in the area of active vision search tasks (Rimey '93)

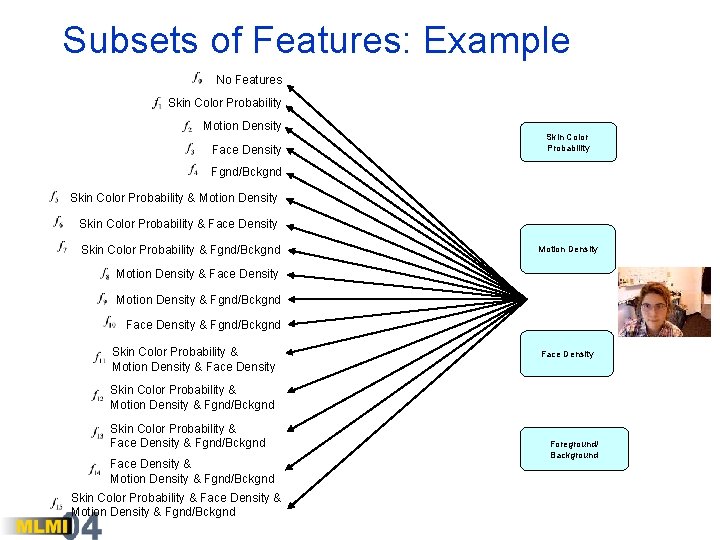

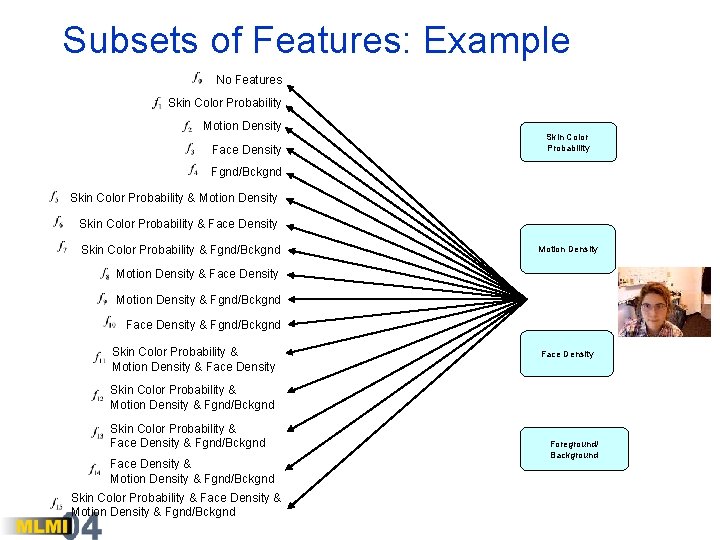

Policy 1: Expected Value of Information (EVI)
• Decision-theoretic principles to determine the value of observations
• EVI is computed by considering the value of eliminating uncertainty about the state of the observational features under consideration
• Example: Vision sensor (camera) features:
  • Motion density
  • Face density
  • Foreground density
  • Skin color density
• There are K=16 possible combinations of these features, representing plausible sets of observations

Subsets of Features: Example
• No features
• Single features: Skin Color Probability; Motion Density; Face Density; Fgnd/Bckgnd
• Pairs: Skin Color Probability & Motion Density; Skin Color Probability & Face Density; Skin Color Probability & Fgnd/Bckgnd; Motion Density & Face Density; Motion Density & Fgnd/Bckgnd; Face Density & Fgnd/Bckgnd
• Triples: Skin Color Probability & Motion Density & Face Density; Skin Color Probability & Motion Density & Fgnd/Bckgnd; Skin Color Probability & Face Density & Fgnd/Bckgnd; Face Density & Motion Density & Fgnd/Bckgnd
• All four: Skin Color Probability & Face Density & Motion Density & Fgnd/Bckgnd
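The 16 feature combinations for the vision sensor are simply all subsets of the four features, the empty set included. A short sketch that enumerates them (the helper name `feature_combinations` is an assumption for illustration):

```python
from itertools import combinations

FEATURES = [
    "Skin Color Probability",
    "Motion Density",
    "Face Density",
    "Fgnd/Bckgnd",
]


def feature_combinations(features):
    """All subsets of the features, from the empty set up to the full set."""
    return [combo
            for r in range(len(features) + 1)
            for combo in combinations(features, r)]


subsets = feature_combinations(FEATURES)
print(len(subsets))  # 2**4 = 16
```

With four features the count is 1 + 4 + 6 + 4 + 1 = 16, matching K=16 on the previous slide.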

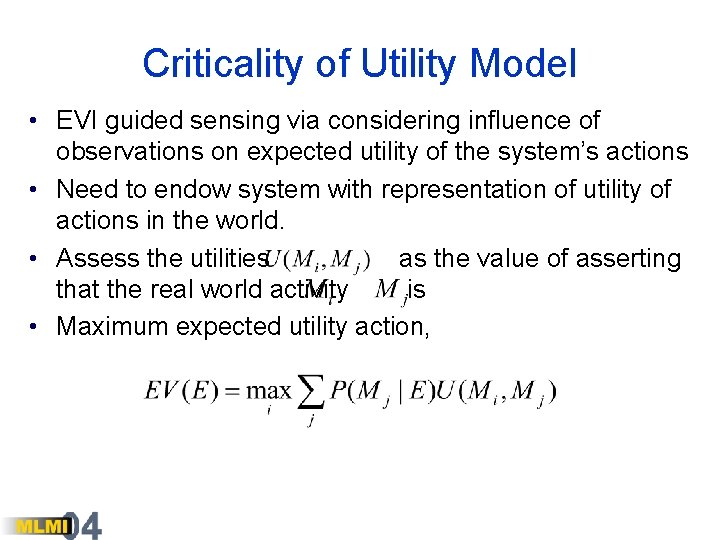

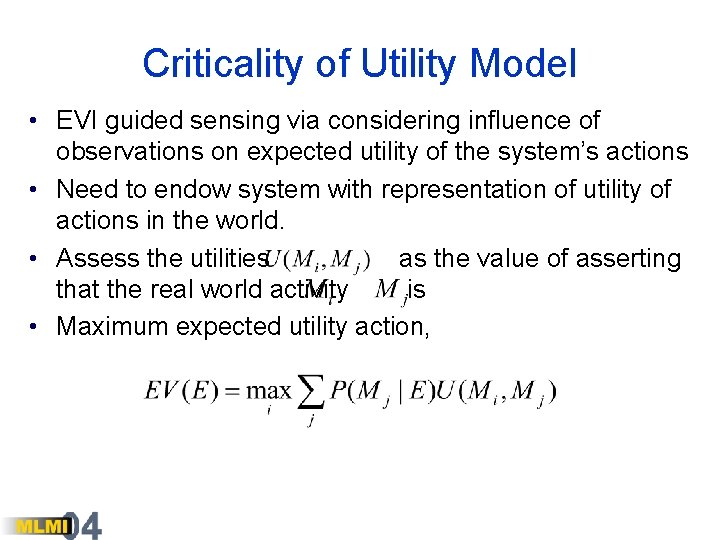

Criticality of Utility Model
• EVI guides sensing by considering the influence of observations on the expected utility of the system's actions
• Need to endow the system with a representation of the utility of actions in the world
• Assess the utilities $U(M_i, M_j)$ as the value of asserting that the real-world activity $M_j$ is $M_i$
• Maximum expected utility action: $A^* = \arg\max_i \sum_j P(M_j \mid E)\, U(M_i, M_j)$
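To make the maximum expected utility action concrete, here is a small sketch under stated assumptions: `posterior[j]` stands for the probability of activity j given the evidence, and `utility[i][j]` for the utility of asserting activity i when the true activity is j. Both names and the matrix layout are chosen for illustration.

```python
def expected_utility(posterior, utility, i):
    """Expected utility of asserting activity i under the posterior."""
    return sum(posterior[j] * utility[i][j] for j in range(len(posterior)))


def best_action(posterior, utility):
    """Return (index, expected utility) of the best assertion."""
    idx = max(range(len(utility)),
              key=lambda i: expected_utility(posterior, utility, i))
    return idx, expected_utility(posterior, utility, idx)


# With an identity utility matrix, the best action is simply the most
# probable activity, and its expected utility equals that probability.
identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
action, value = best_action([0.2, 0.7, 0.1], identity)
```

A non-identity matrix lets some confusions count as more costly than others, which can move the chosen assertion away from the most probable activity.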

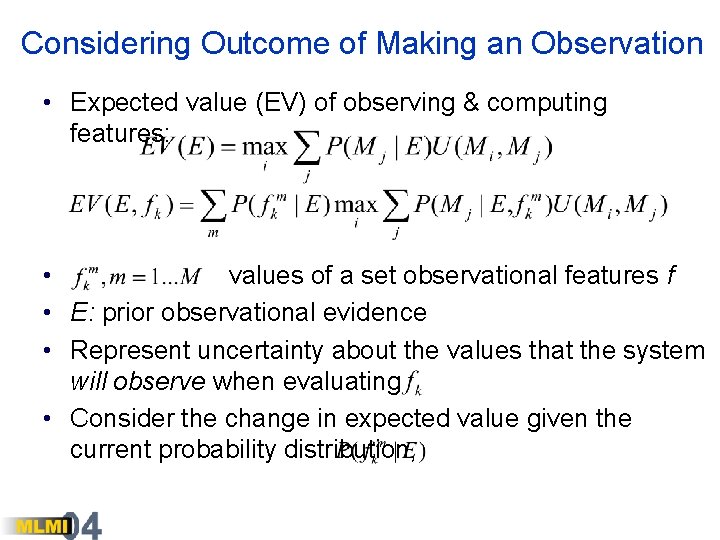

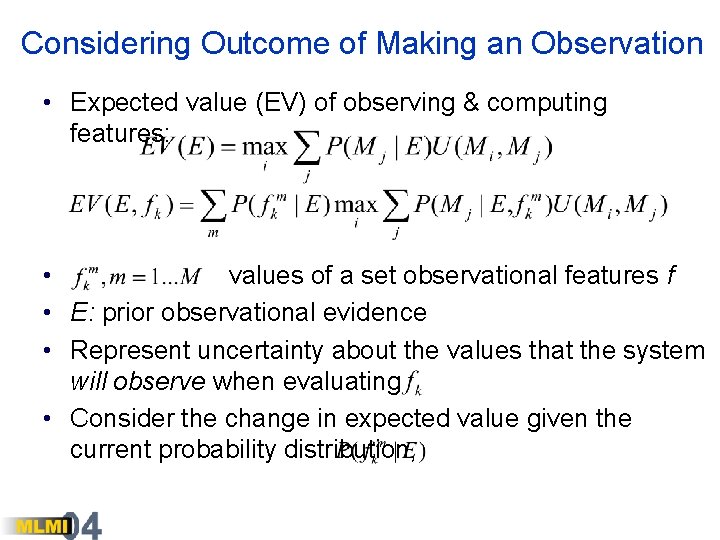

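Considering Outcome of Making an Observation
• Expected value (EV) of observing & computing features: $EV(f_k^m) = \max_i \sum_j P(M_j \mid E, f_k^m)\, U(M_i, M_j)$
  • $f_k^m$: values of a set of observational features $f_k$
  • E: prior observational evidence
• Represent uncertainty about the values that the system will observe when evaluating $f_k$
• Consider the change in expected value given the current probability distribution, $P(f_k^m \mid E)$: $EVI(f_k) = \int P(f_k^m \mid E)\, EV(f_k^m)\, df_k^m - \max_i \sum_j P(M_j \mid E)\, U(M_i, M_j)$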

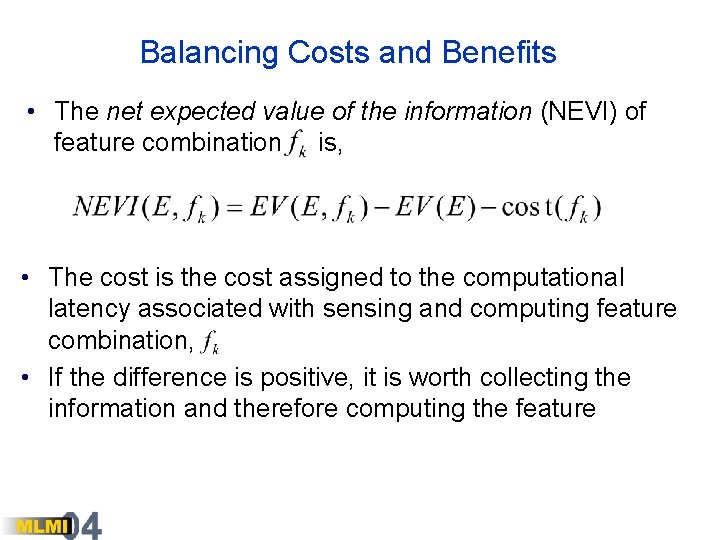

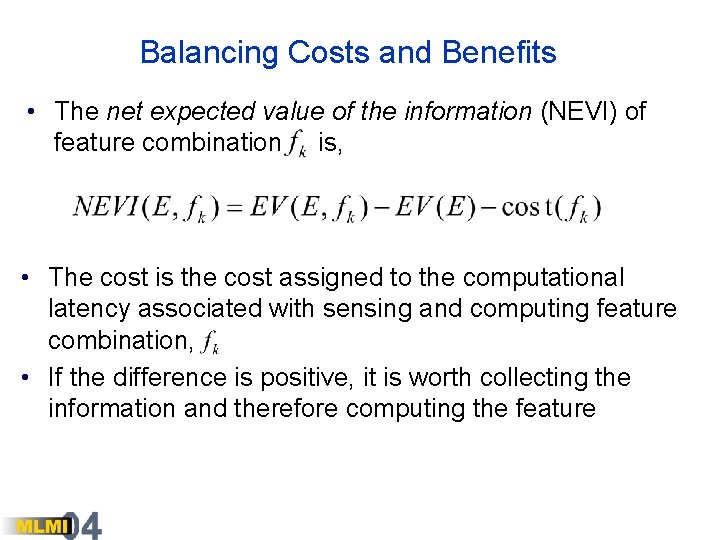

Balancing Costs and Benefits
• The net expected value of information (NEVI) of feature combination $f_k$ is $NEVI(f_k) = EVI(f_k) - cost(f_k)$
• The cost, $cost(f_k)$, is the cost assigned to the computational latency associated with sensing and computing feature combination $f_k$
• If the difference is positive, it is worth collecting the information and therefore computing the features
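The net value computation can be sketched for a discretized feature as follows. This is a generic Bayesian illustration, not the HMM-specific computation used in S-Seer. It assumes `prior[j]` is the current probability of activity j, `likelihood[j][m]` is the probability that the feature combination takes discrete value m under activity j, and `utility[i][j]` is the utility of asserting i when j is true. All names are hypothetical.

```python
def meu(posterior, utility):
    """Maximum expected utility over all possible assertions."""
    return max(sum(p * u for p, u in zip(posterior, row)) for row in utility)


def nevi(prior, likelihood, utility, cost):
    """Net expected value of observing one discretized feature combination."""
    n_values = len(likelihood[0])
    expected = 0.0
    for m in range(n_values):
        # Joint probability of activity j and observed value m
        joint = [prior[j] * likelihood[j][m] for j in range(len(prior))]
        p_m = sum(joint)
        if p_m > 0.0:
            posterior = [x / p_m for x in joint]
            expected += p_m * meu(posterior, utility)
    # Gain over acting now, minus the cost of sensing and computing
    return expected - meu(prior, utility) - cost
```

A perfectly informative feature on a 50/50 prior with identity utility gains 0.5 in expected utility, so it is worth computing whenever its cost is below 0.5. An uninformative feature gains nothing, so its net value is just the negative cost.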

Cost Models
• Distinct cost models
  • Measure of total computation usage
  • Cost associated with latencies that users will experience
• Costs of computation can be context dependent
  • Example: Expected cost model that takes into account the likelihood that the user will experience poor responsiveness, and the frustration if it is encountered

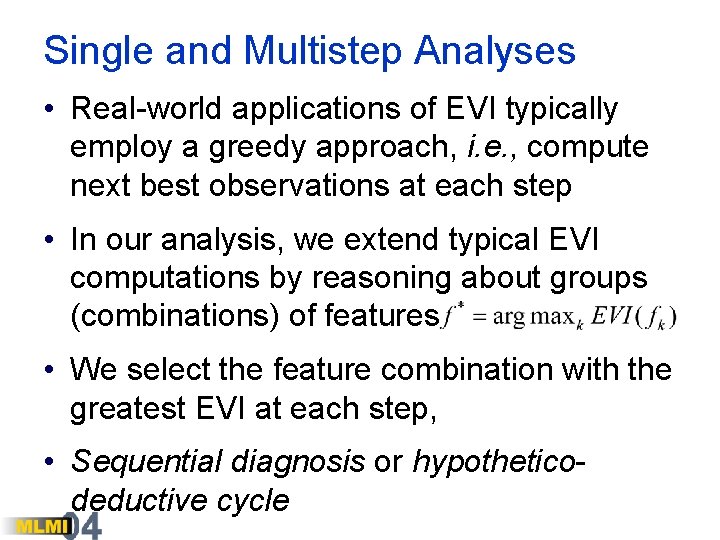

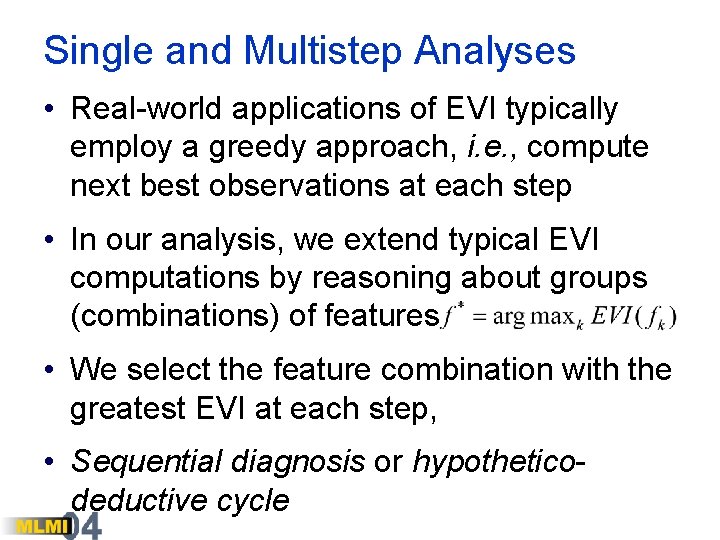

Single and Multistep Analyses
• Real-world applications of EVI typically employ a greedy approach, i.e., compute the next best observation at each step
• In our analysis, we extend typical EVI computations by reasoning about groups (combinations) of features
• We select the feature combination with the greatest EVI at each step, $f^* = \arg\max_k EVI(f_k)$
• Sequential diagnosis or hypothetico-deductive cycle

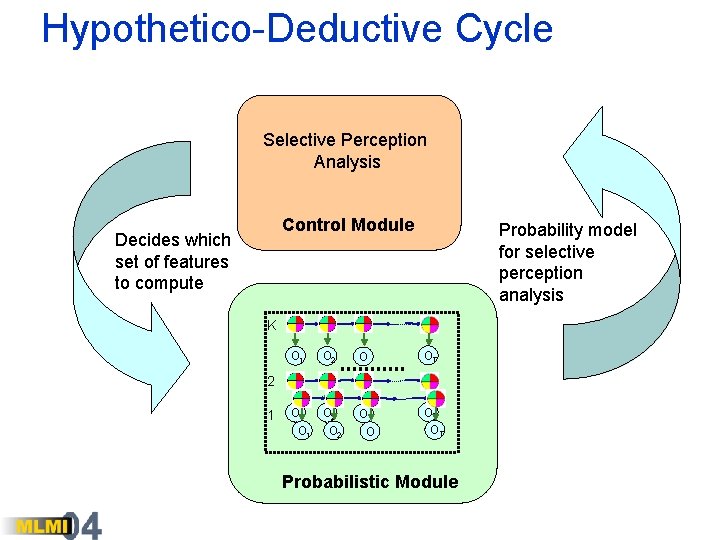

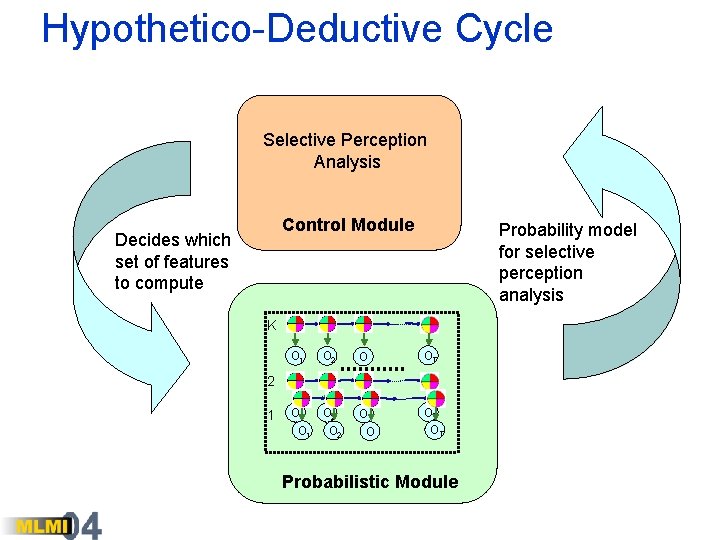

Hypothetico-Deductive Cycle
[Figure: a control module performs the selective perception analysis and decides which set of features to compute; a probabilistic module (bank of HMMs) provides the probability model for the selective perception analysis.]

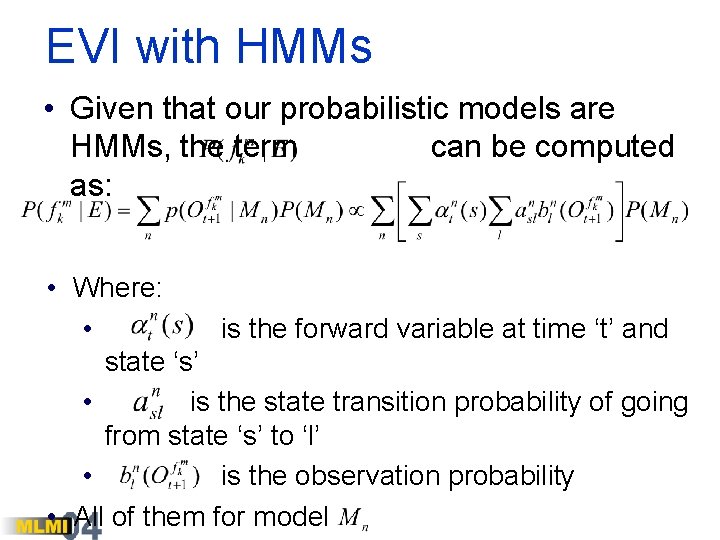

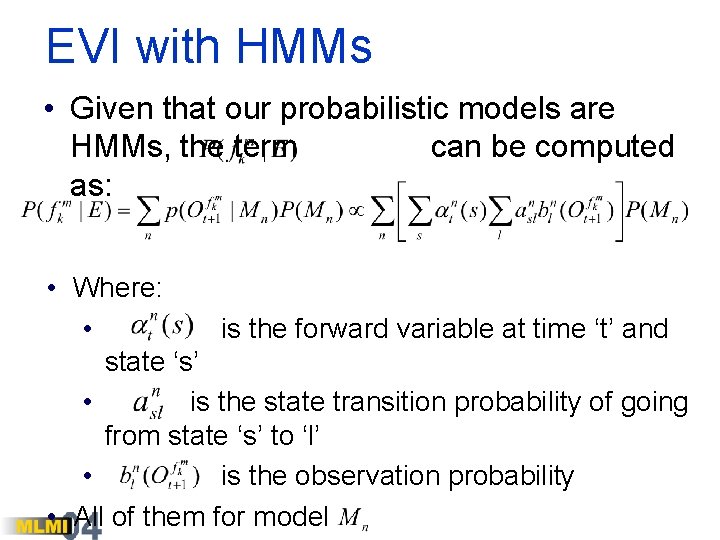

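EVI with HMMs
• Given that our probabilistic models are HMMs, the term $P(f_k^m \mid E)$ can be computed as: $P(f_k^m \mid E) \approx \sum_n P(M_n) \sum_s \alpha_t^n(s) \sum_l a_{sl}^n\, b_l^n(f_k^m)$
• Where:
  • $\alpha_t^n(s)$ is the forward variable at time t and state s
  • $a_{sl}^n$ is the state transition probability of going from state s to state l
  • $b_l^n(f_k^m)$ is the observation probability
  • All of them for model $M_n$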
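One way to read this slide is that the forward variable, the transition probabilities and the observation probabilities combine into a one-step prediction of what the system would observe next. The sketch below shows that prediction for a single discrete HMM. It normalizes by the sum of the forward variable, and the name `predictive_observation` is an assumption for illustration.

```python
def predictive_observation(alpha, A, B):
    """P(next observation = m | past observations) for one discrete HMM.

    alpha[s]: forward variable at the current time step
    A[s][l]:  transition probability from state s to state l
    B[l][m]:  probability of observing symbol m in state l
    """
    n, n_symbols = len(alpha), len(B[0])
    norm = sum(alpha)  # equals P(past observations | model)
    scores = [
        sum(alpha[s] * A[s][l] * B[l][m]
            for s in range(n) for l in range(n))
        for m in range(n_symbols)
    ]
    return [x / norm for x in scores]
```

With several HMMs, the per-model predictions would additionally be weighted by each model's probability before being used in the value-of-information computation.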

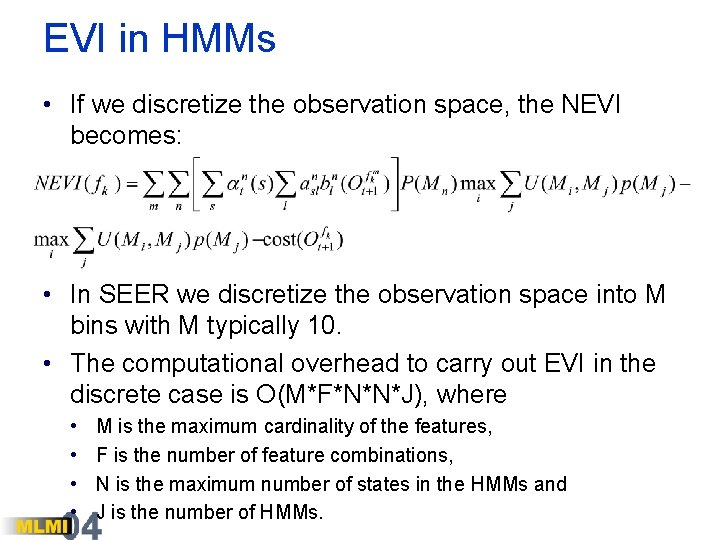

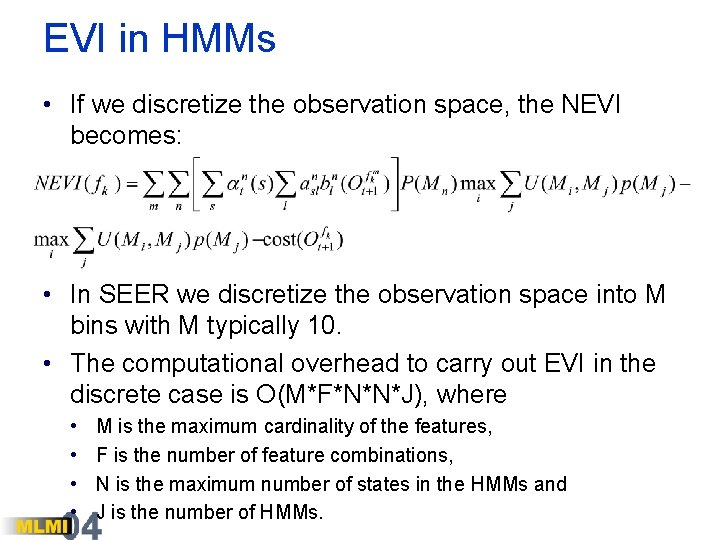

EVI in HMMs
• If we discretize the observation space, the NEVI becomes a sum over the discrete feature values
• In SEER we discretize the observation space into M bins, with M typically 10
• The computational overhead to carry out EVI in the discrete case is O(M*F*N*N*J), where
  • M is the maximum cardinality of the features
  • F is the number of feature combinations
  • N is the maximum number of states in the HMMs
  • J is the number of HMMs
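The slide does not say how the M bins are chosen. As a minimal sketch, assuming uniform-width bins over a known feature range (the function name `bin_index` and the range arguments are hypothetical), a continuous feature value can be mapped to one of M discrete symbols like this:

```python
def bin_index(value, lo, hi, m=10):
    """Map a continuous feature value to one of m uniform-width bins.

    Values outside [lo, hi] are clamped to the first or last bin.
    """
    if hi <= lo:
        raise ValueError("hi must be greater than lo")
    frac = (value - lo) / (hi - lo)
    return min(m - 1, max(0, int(frac * m)))
```

The resulting bin indices can serve as the discrete observation symbols over which the value-of-information sum runs, so the per-step cost grows linearly with M.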

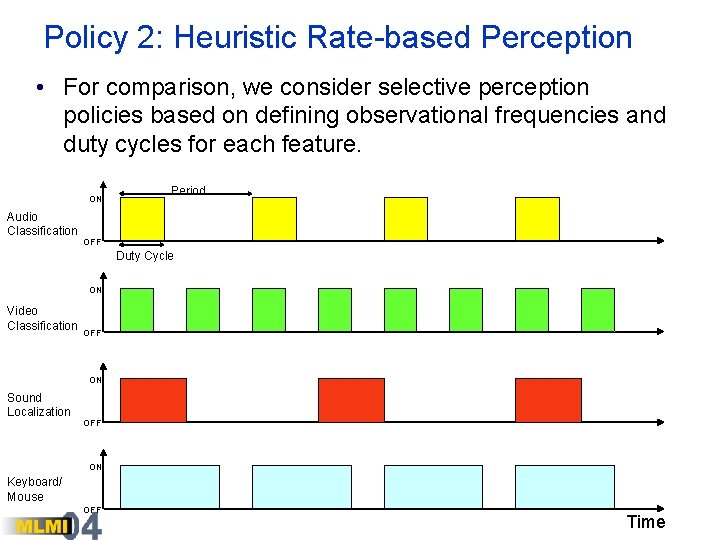

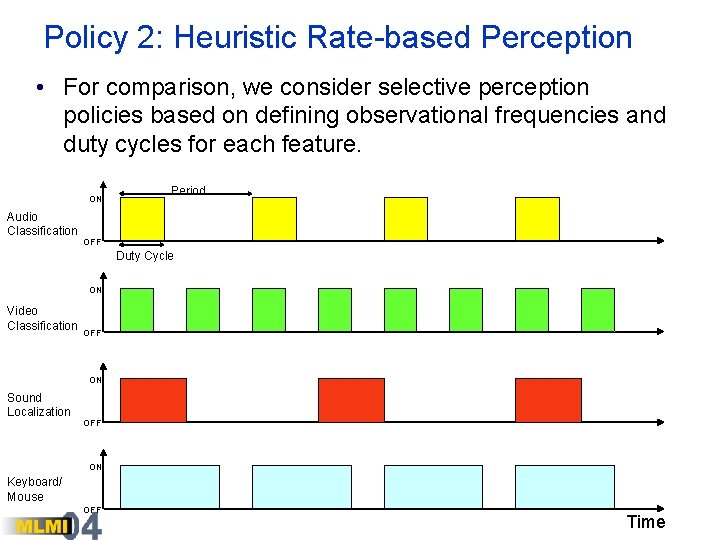

Policy 2: Heuristic Rate-based Perception
• For comparison, we consider selective perception policies based on defining observational frequencies and duty cycles for each feature
[Figure: ON/OFF timing diagram over time for Audio Classification, Video Classification, Sound Localization and Keyboard/Mouse, with the period and duty cycle marked.]
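A rate-based policy of this kind can be sketched as a fixed periodic schedule per sensor. The periods and ON durations below are made-up illustrative values, not the ones used in the experiments, and the names `is_on`, `SCHEDULE` and `active_sensors` are assumptions.

```python
def is_on(t, period, on_steps, offset=0):
    """True if a periodic sensor is in the ON part of its cycle at step t.

    The duty cycle is on_steps / period.
    """
    return (t - offset) % period < on_steps


# Hypothetical schedule: (period, on_steps) per sensor, in time steps.
SCHEDULE = {
    "audio": (10, 5),
    "video": (10, 2),
    "sound_localization": (20, 5),
    "keyboard_mouse": (1, 1),
}


def active_sensors(t, schedule=SCHEDULE):
    """Names of the sensors whose features are computed at time step t."""
    return [name for name, (period, on) in schedule.items()
            if is_on(t, period, on)]
```

Unlike the EVI policy, this schedule ignores the current evidence: the same sensors are switched on at the same steps whatever the system currently believes.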

Policy 3: Random Selection
• Baseline policy for comparisons
• Randomly select a feature combination from all possible combinations
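A minimal sketch of the random baseline, assuming every feature combination is equally likely (the slide does not state the distribution): including each feature independently with probability 0.5 draws uniformly from all subsets. The function name is hypothetical.

```python
import random


def random_feature_combination(features, rng=random):
    """Pick one of the 2**len(features) subsets uniformly at random."""
    return tuple(f for f in features if rng.random() < 0.5)


# Usage with a seeded generator for reproducible experiments
rng = random.Random(0)
combo = random_feature_combination(
    ["Skin Color Probability", "Motion Density", "Face Density", "Fgnd/Bckgnd"],
    rng,
)
```

The empty subset is a valid draw, corresponding to computing no features at that step.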

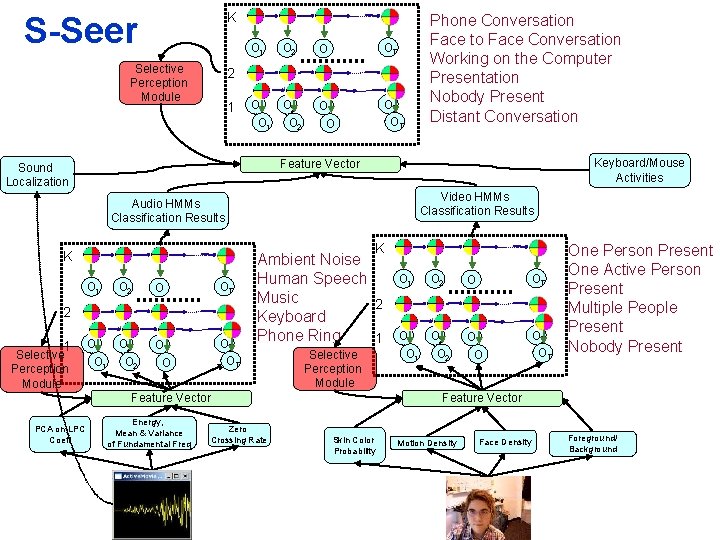

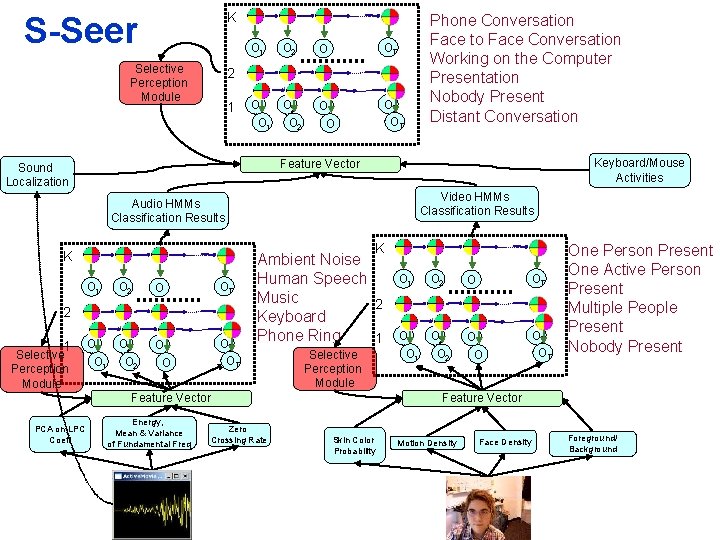

S-Seer
[Figure: S-Seer architecture, i.e., the Seer architecture with a Selective Perception Module added in front of each bank of HMMs.
• Top layer outputs: Phone Conversation, Face to Face Conversation, Working on the Computer, Presentation, Nobody Present, Distant Conversation
• Top layer inputs: Keyboard/Mouse Activities, Sound Localization, Audio HMMs Classification Results, Video HMMs Classification Results
• Audio HMMs classes: Ambient Noise, Human Speech, Music, Keyboard, Phone Ring
• Audio feature vector: PCA on LPC Coeff; Energy, Mean & Variance of Fundamental Freq; Zero Crossing Rate
• Video HMMs classes: One Person Present, One Active Person Present, Multiple People Present, Nobody Present
• Video feature vector: Skin Color Probability, Motion Density, Face Density, Foreground/Background]

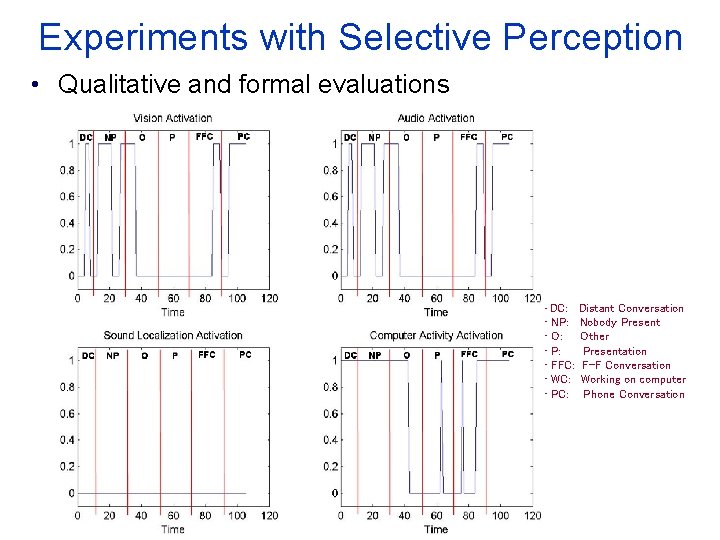

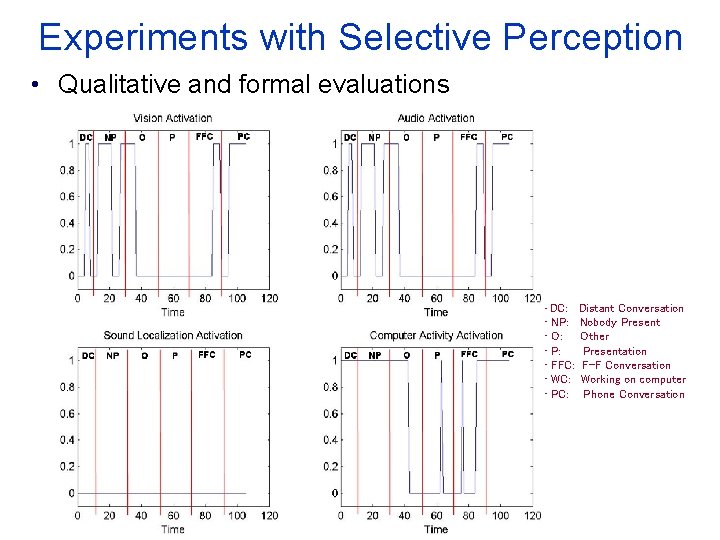

Experiments with Selective Perception
• Qualitative and formal evaluations
• DC: Distant Conversation
• NP: Nobody Present
• O: Other
• P: Presentation
• FFC: F-F Conversation
• WC: Working on computer
• PC: Phone Conversation

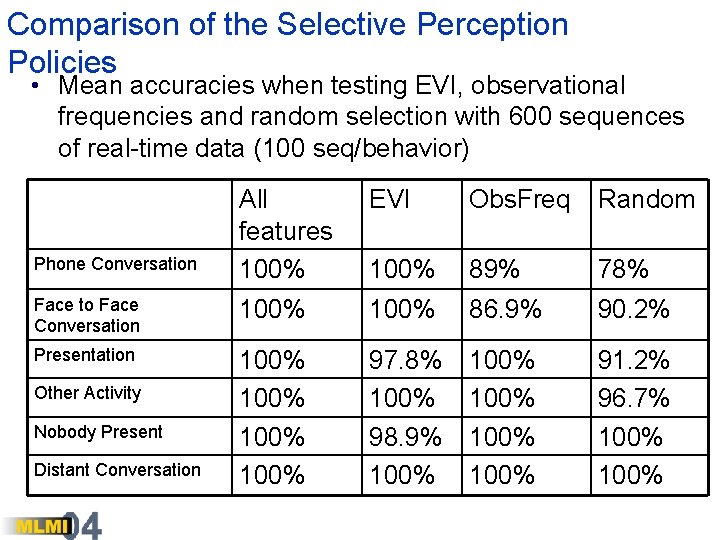

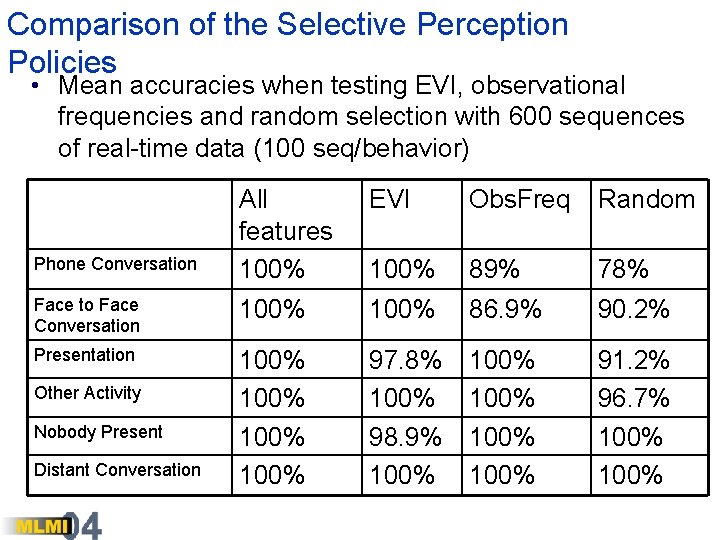

Comparison of the Selective Perception Policies
• Mean accuracies when testing EVI, observational frequencies and random selection with 600 sequences of real-time data (100 seq/behavior)
Columns: All features, EVI, Obs. Freq, Random
Phone Conversation 100% 100% 89% 78% Face to Face Conversation 100% 86.9% 90.2% Presentation 100% 97.8% 100% 98.9% 100% 100% 91.2% 96.7% 100% Other Activity Nobody Present Distant Conversation

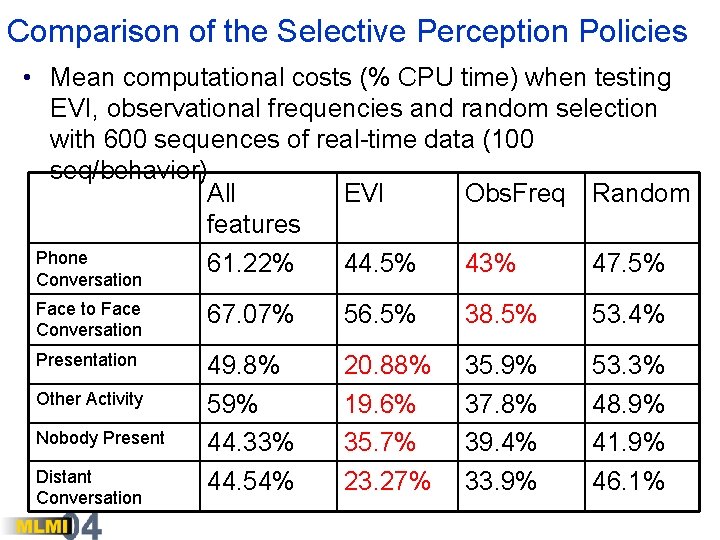

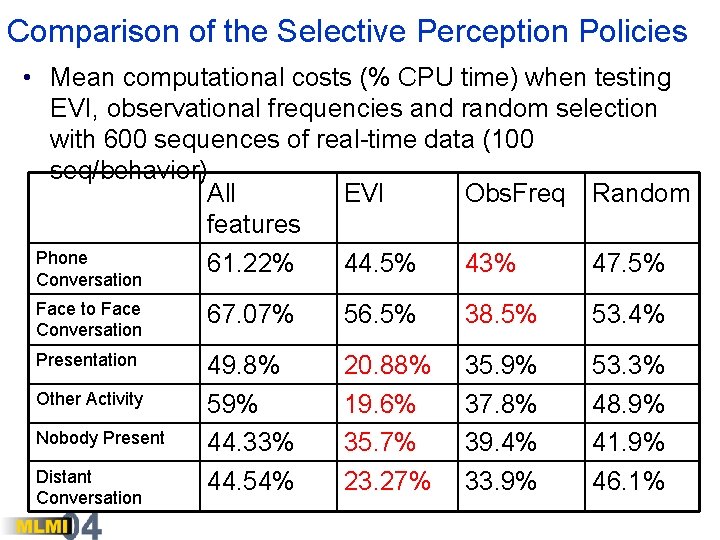

Comparison of the Selective Perception Policies
• Mean computational costs (% CPU time) when testing EVI, observational frequencies and random selection with 600 sequences of real-time data (100 seq/behavior)

| | All features | EVI | Obs. Freq | Random |
|---|---|---|---|---|
| Phone Conversation | 61.22% | 44.5% | 43% | 47.5% |
| Face to Face Conversation | 67.07% | 56.5% | 38.5% | 53.4% |
| Presentation | 49.8% | 20.88% | 35.9% | 53.3% |
| Other Activity | 59% | 19.6% | 37.8% | 48.9% |
| Nobody Present | 44.33% | 35.7% | 39.4% | 41.9% |
| Distant Conversation | 44.54% | 23.27% | 33.9% | 46.1% |

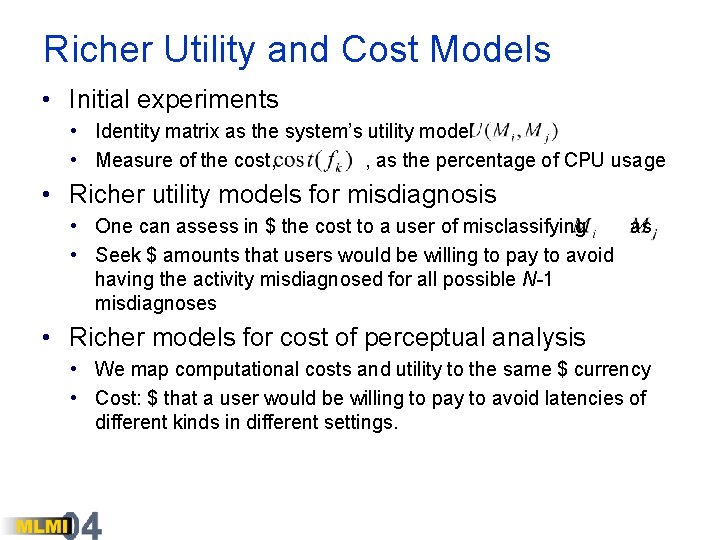

Richer Utility and Cost Models
• Initial experiments
  • Identity matrix as the system's utility model
  • Measure of the cost, $cost(f_k)$, as the percentage of CPU usage
• Richer utility models for misdiagnosis
  • One can assess in dollars the cost to a user of misclassifying $M_j$ as $M_i$
  • Seek dollar amounts that users would be willing to pay to avoid having the activity misdiagnosed, for all N-1 possible misdiagnoses
• Richer models for cost of perceptual analysis
  • We map computational costs and utility to the same dollar currency
  • Cost: dollar amount that a user would be willing to pay to avoid latencies of different kinds in different settings

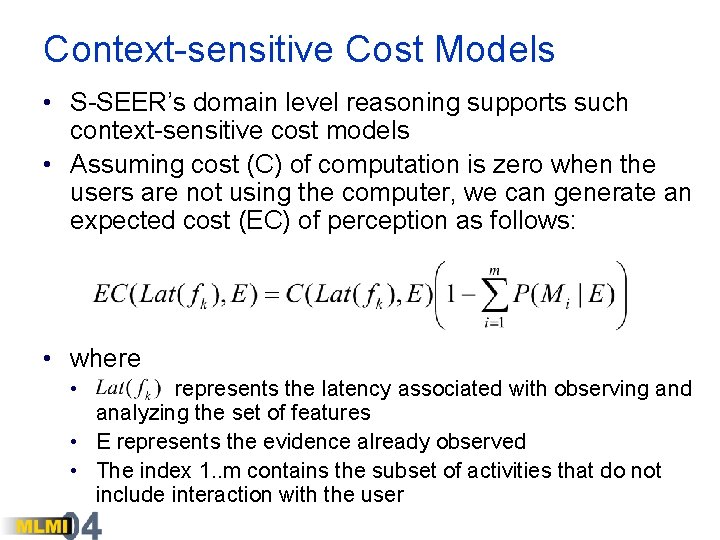

Context-sensitive Cost Models
• S-SEER's domain-level reasoning supports such context-sensitive cost models
• Assuming the cost (C) of computation is zero when the user is not using the computer, we can generate an expected cost (EC) of perception as follows: $EC(\Delta t(f_k), E) = C(\Delta t(f_k)) \left(1 - \sum_{i=1}^{m} P(M_i \mid E)\right)$
• where
  • $\Delta t(f_k)$ represents the latency associated with observing and analyzing the set of features $f_k$
  • E represents the evidence already observed
  • The index 1..m ranges over the subset of activities that do not include interaction with the user
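A small sketch of such an expected cost, assuming the latency cost is incurred only when the user is interacting with the computer and is zero otherwise. The function name, argument names and the numbers in the example are hypothetical.

```python
def expected_cost(latency_cost, posterior, non_interactive):
    """Expected cost of perception given the current activity beliefs.

    latency_cost:    cost of the latency if the user is at the computer
    posterior:       posterior[i] = P(activity i | evidence)
    non_interactive: indices of activities without user interaction,
                     for which the cost is assumed to be zero
    """
    p_non_interactive = sum(posterior[i] for i in non_interactive)
    return latency_cost * (1.0 - p_non_interactive)


# If the system is 90% sure that nobody is using the computer, the
# latency cost is discounted to 10% of its nominal value.
cost = expected_cost(0.4, [0.1, 0.6, 0.3], non_interactive=[1, 2])
```

Under this model, expensive analyses become cheap exactly when the system believes they will not degrade the user's experience, which shifts which feature combinations have a positive net value.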

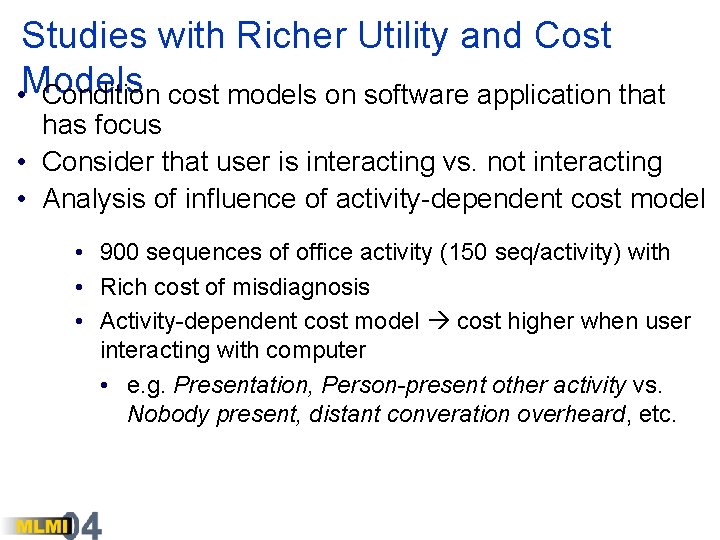

Studies with Richer Utility and Cost Models
• Condition cost models on the software application that has focus
• Consider whether the user is interacting vs. not interacting
• Analysis of influence of activity-dependent cost model
  • 900 sequences of office activity (150 seq/activity) with
    • Rich cost of misdiagnosis
    • Activity-dependent cost model: cost is higher when the user is interacting with the computer
    • e.g., Presentation, Person-present other activity vs. Nobody present, distant conversation overheard, etc.

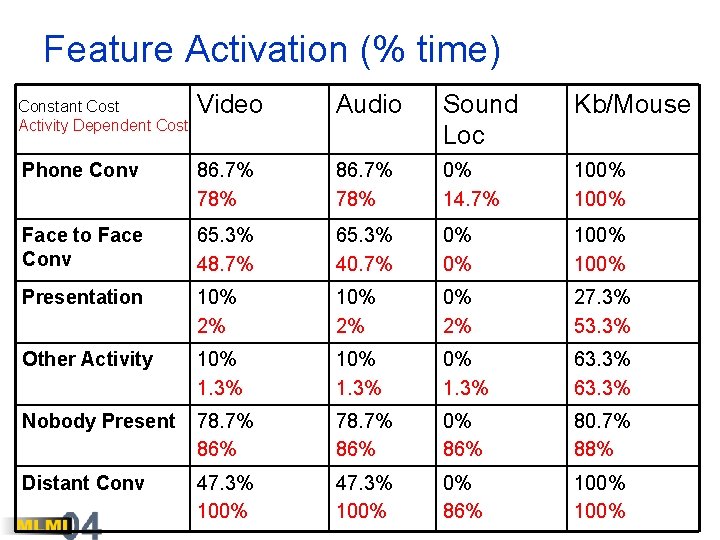

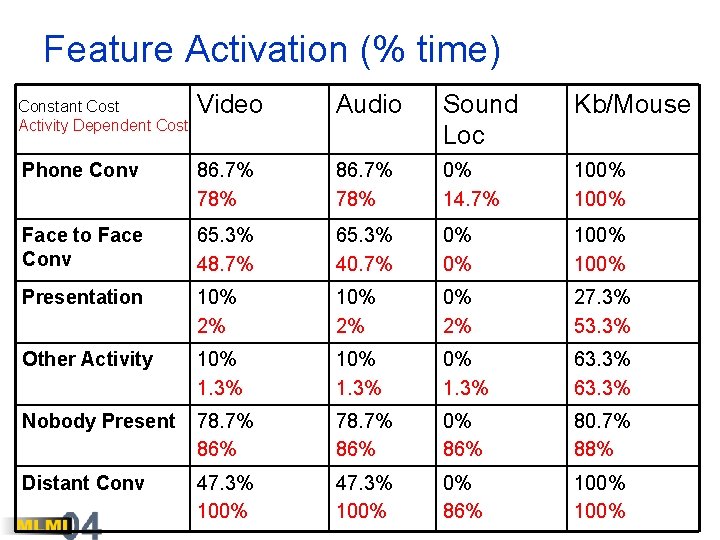

Feature Activation (% time)
Cost models: Constant Cost, Activity Dependent Cost. Sensors: Video, Audio, Sound Loc, Kb/Mouse
Phone Conv 86.7% 78% 0% 14.7% 100% Face to Face Conv 65.3% 48.7% 65.3% 40.7% 0% 0% 100% Presentation 10% 2% 27.3% 53.3% Other Activity 10% 1.3% 63.3% Nobody Present 78.7% 86% 0% 86% 80.7% 88% Distant Conv 47.3% 100% 0% 86% 100%

Video

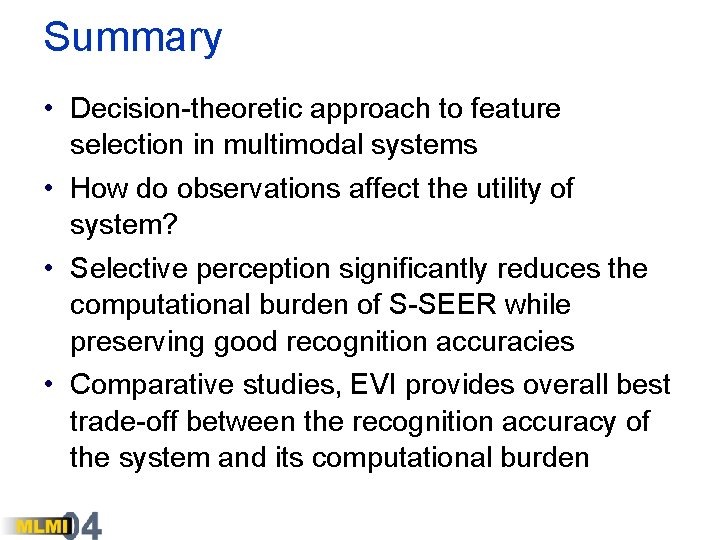

Summary
• Decision-theoretic approach to feature selection in multimodal systems
  • How do observations affect the utility of the system?
• Selective perception significantly reduces the computational burden of S-SEER while preserving good recognition accuracies
• In comparative studies, EVI provides the best overall trade-off between the recognition accuracy of the system and its computational burden

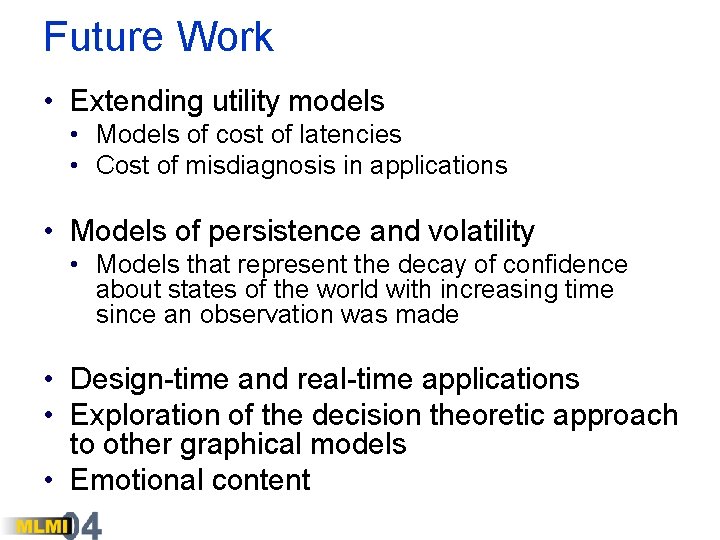

Future Work
• Extending utility models
  • Models of cost of latencies
  • Cost of misdiagnosis in applications
• Models of persistence and volatility
  • Models that represent the decay of confidence about states of the world with increasing time since an observation was made
• Design-time and real-time applications
• Exploration of the decision-theoretic approach with other graphical models
• Emotional content