SR-IOV Hands-on Lab
Rahul Shah, Clayne Robison

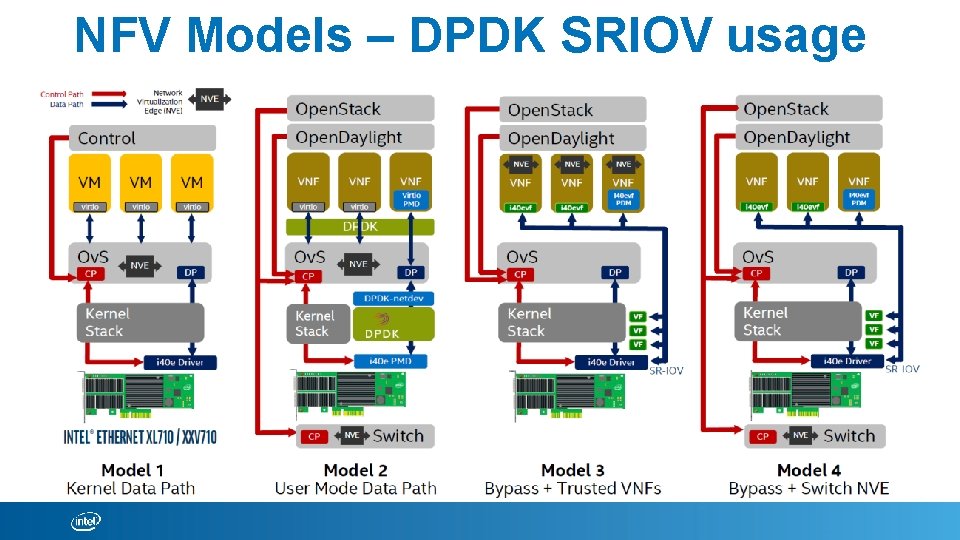

NFV Models – DPDK SRIOV usage

Intel® Ethernet XL710 Family (I/O Virtualization)

Hands-On Session (SRIOV)

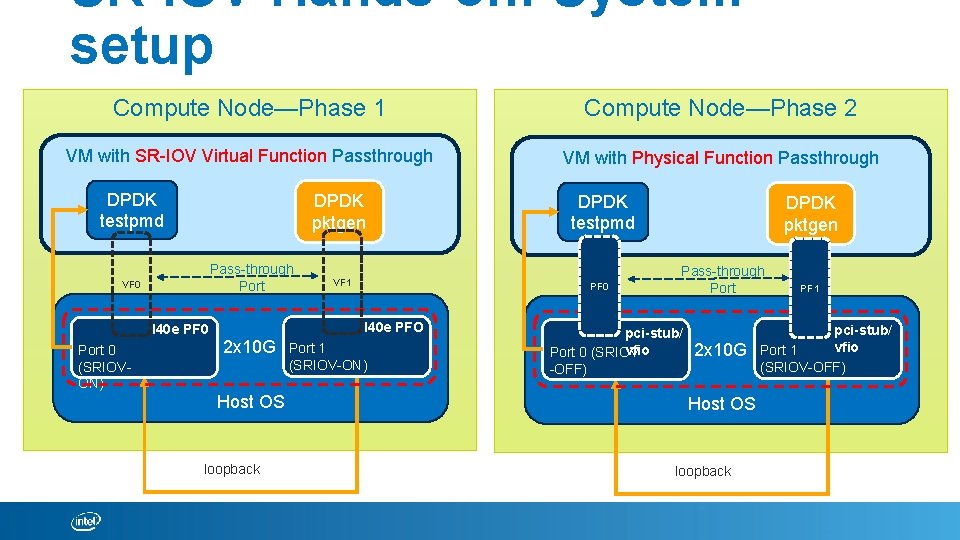

SR-IOV Hands-on: System setup

[Diagram: Compute Node, Phase 1: VM with SR-IOV Virtual Function passthrough. DPDK testpmd and DPDK pktgen in the VM each use a pass-through port backed by a VF (VF 0, VF 1) of the i40e PF. Port 0 and Port 1 have SR-IOV on. 2 x 10G host OS loopback.]

[Diagram: Compute Node, Phase 2: VM with Physical Function passthrough. DPDK testpmd and DPDK pktgen in the VM each use a pass-through port bound through pci-stub/vfio to a PF. Port 0 and Port 1 have SR-IOV off. 2 x 10G host OS loopback.]

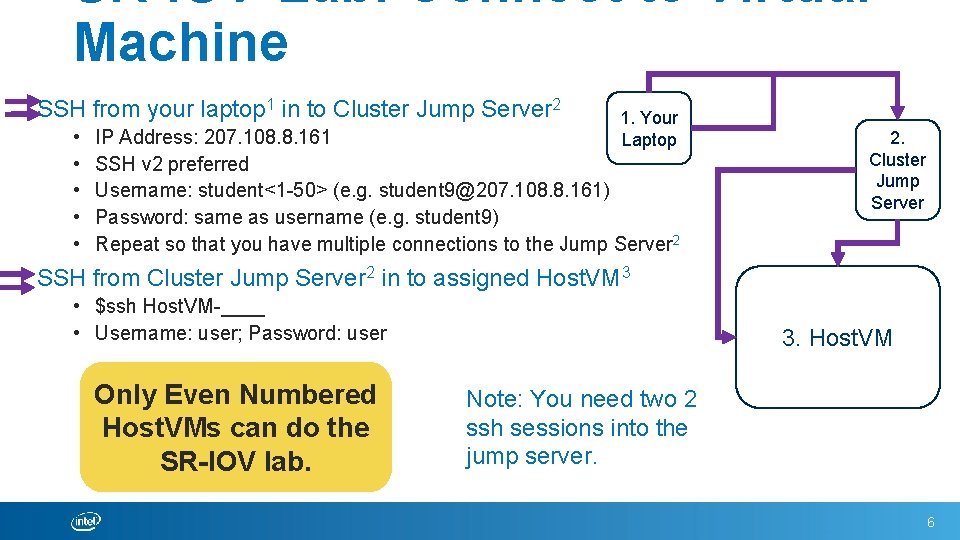

SR-IOV Lab: Connect to Virtual Machine

1. Your Laptop: SSH from your laptop into the Cluster Jump Server.
   • IP address: 207.108.8.161
   • SSH v2 preferred
   • Username: student<1-50> (e.g. student9@207.108.8.161)
   • Password: same as username (e.g. student9)
   • Repeat so that you have multiple connections to the Jump Server.
2. Cluster Jump Server: SSH from the Cluster Jump Server into your assigned HostVM.
       $ ssh HostVM-____
   • Username: user; Password: user
   • Only even-numbered HostVMs can do the SR-IOV lab.
3. HostVM

Note: You need two SSH sessions into the Jump Server.

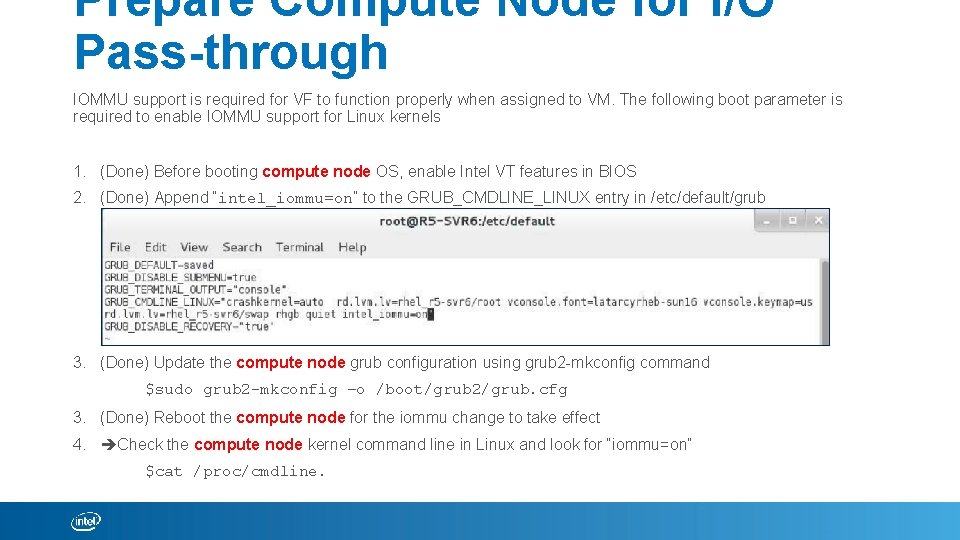

Prepare Compute Node for I/O Pass-through

IOMMU support is required for a VF to function properly when it is assigned to a VM. The following boot parameter is required to enable IOMMU support on Linux kernels.

1. (Done) Before booting the compute node OS, enable Intel VT features in the BIOS.
2. (Done) Append "intel_iommu=on" to the GRUB_CMDLINE_LINUX entry in /etc/default/grub.
3. (Done) Update the compute node grub configuration using the grub2-mkconfig command:
       $ sudo grub2-mkconfig -o /boot/grub2/grub.cfg
4. (Done) Reboot the compute node for the IOMMU change to take effect.
5. Check the compute node kernel command line in Linux and look for "intel_iommu=on":
       $ cat /proc/cmdline
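The last check can be scripted. This is a minimal sketch, assuming a POSIX-style shell on the compute node; the function name has_intel_iommu and its optional file argument are illustrative and not part of the lab scripts. By default it reads /proc/cmdline.

```shell
# Return 0 if the kernel command line contains the exact token intel_iommu=on.
# The optional argument (default /proc/cmdline) is an assumption added so the
# check can be tried against a saved copy of the command line.
has_intel_iommu() {
    local cmdline_file="${1:-/proc/cmdline}"
    # Put each kernel parameter on its own line, then match the whole line
    tr -s ' \t' '\n\n' < "$cmdline_file" | grep -qx 'intel_iommu=on'
}

# Usage:
#   has_intel_iommu && echo "intel_iommu=on is present"
```

Matching the whole token avoids a false positive from a different parameter that merely contains the same text.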

Create Virtual Functions

Linux does not create VFs by default. The X710 server adapter supports up to 32 VFs per port. The XL710 server adapter supports up to 64 VFs per port.

1. On the compute node, create the Virtual Functions.
   For kernel versions 3.8.x and above:
       # echo 4 > /sys/class/net/[INTERFACE NAME]/device/sriov_numvfs
   For kernel versions 3.7.x and below, to get 4 VFs per port:
       # modprobe i40e max_vfs=4,4
2. On the compute node, verify that the Virtual Functions were created:
       # lspci | grep 'X710 Virtual Function'
3. On the compute node, bring up the link on the virtual functions:
       # ip l set dev [INTERFACE NAME] up
4. You can assign a MAC address to each VF on the compute node:
       # ip l set dev enp6s0f0 vf 0 mac aa:bb:cc:dd:ee:00

Upon successful VF creation, the Linux operating system automatically loads the i40evf driver.
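The sysfs method in step 1 can be wrapped with a sanity check against the adapter's limit. This is a sketch under stated assumptions: create_vfs is a hypothetical helper, and its third argument (an alternate sysfs root) exists only so the logic can be tried without hardware. It relies on the standard sriov_totalvfs and sriov_numvfs attributes.

```shell
# Create COUNT virtual functions on IFACE after checking the device limit.
# Arguments: interface name, VF count, optional sysfs root (default /sys).
create_vfs() {
    local iface="$1" count="$2" sysfs_root="${3:-/sys}"
    local dev="$sysfs_root/class/net/$iface/device"
    local total
    total=$(cat "$dev/sriov_totalvfs") || return 1
    if [ "$count" -gt "$total" ]; then
        echo "requested $count VFs, but $iface supports at most $total" >&2
        return 1
    fi
    # A non-zero VF count cannot be changed directly, so reset to 0 first
    echo 0 > "$dev/sriov_numvfs" || return 1
    echo "$count" > "$dev/sriov_numvfs" || return 1
    # Print the count the kernel now reports
    cat "$dev/sriov_numvfs"
}

# Usage (as root on the compute node):
#   create_vfs [INTERFACE NAME] 4
```

Resetting to 0 before writing the new value means the helper can be rerun with a different count, at the cost of briefly removing any existing VFs.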

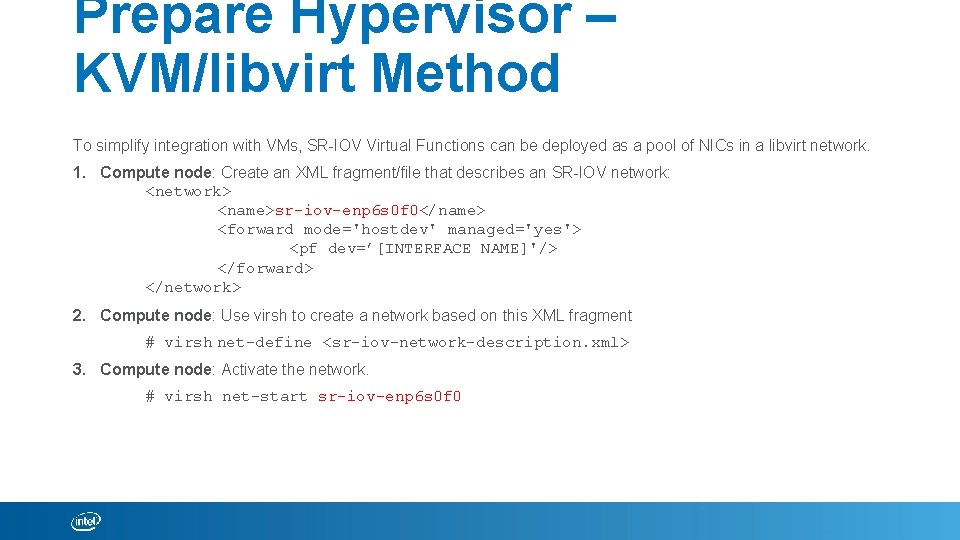

Prepare Hypervisor – KVM/libvirt Method

To simplify integration with VMs, SR-IOV Virtual Functions can be deployed as a pool of NICs in a libvirt network.

1. Compute node: Create an XML fragment/file that describes an SR-IOV network:
       <network>
         <name>sr-iov-enp6s0f0</name>
         <forward mode='hostdev' managed='yes'>
           <pf dev='[INTERFACE NAME]'/>
         </forward>
       </network>
2. Compute node: Use virsh to create a network based on this XML fragment:
       # virsh net-define <sr-iov-network-description.xml>
3. Compute node: Activate the network:
       # virsh net-start sr-iov-enp6s0f0
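The network description in step 1 can be generated per physical function rather than typed by hand. A minimal sketch follows; sriov_network_xml is a hypothetical helper, and naming the network sr-iov-<interface> simply follows the example name used above.

```shell
# Print a libvirt hostdev network definition for the given PF interface.
sriov_network_xml() {
    local pf="$1"
    cat <<EOF
<network>
  <name>sr-iov-$pf</name>
  <forward mode='hostdev' managed='yes'>
    <pf dev='$pf'/>
  </forward>
</network>
EOF
}

# Usage (on the compute node):
#   sriov_network_xml enp6s0f0 > sr-iov-enp6s0f0.xml
#   virsh net-define sr-iov-enp6s0f0.xml
#   virsh net-start sr-iov-enp6s0f0
```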

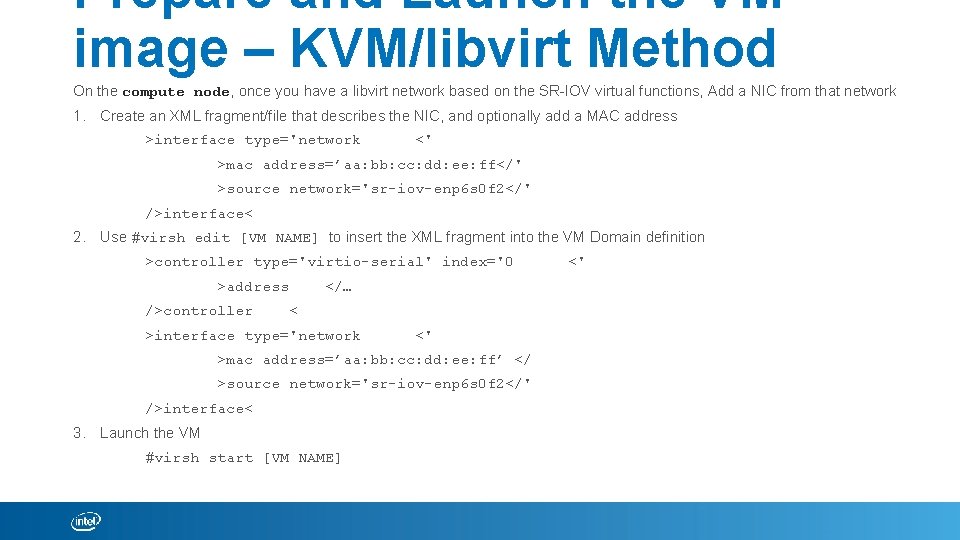

Prepare and Launch the VM image – KVM/libvirt Method

On the compute node, once you have a libvirt network based on the SR-IOV virtual functions, add a NIC from that network to the VM.

1. Create an XML fragment/file that describes the NIC, and optionally add a MAC address:
       <interface type='network'>
         <mac address='aa:bb:cc:dd:ee:ff'/>
         <source network='sr-iov-enp6s0f2'/>
       </interface>
2. Use virsh edit to insert the XML fragment into the VM domain definition:
       # virsh edit [VM NAME]
       <controller type='virtio-serial' index='0'>
         <address …/>
       </controller>
       <interface type='network'>
         <mac address='aa:bb:cc:dd:ee:ff'/>
         <source network='sr-iov-enp6s0f2'/>
       </interface>
3. Launch the VM:
       # virsh start [VM NAME]
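As an alternative to editing the domain by hand, the NIC fragment can be written to a file and attached with virsh attach-device. This is a swapped-in technique, not the method shown on the slide, and sriov_interface_xml is a hypothetical helper. The network name must match a libvirt network that has already been defined.

```shell
# Print an <interface> fragment that draws a VF from a libvirt SR-IOV network.
# Arguments: libvirt network name, optional MAC address.
sriov_interface_xml() {
    local network="$1" mac="${2:-}"
    echo "<interface type='network'>"
    # The MAC address element is optional
    if [ -n "$mac" ]; then
        echo "  <mac address='$mac'/>"
    fi
    echo "  <source network='$network'/>"
    echo "</interface>"
}

# Usage (on the compute node; vf-nic.xml is an arbitrary file name):
#   sriov_interface_xml sr-iov-enp6s0f2 aa:bb:cc:dd:ee:ff > vf-nic.xml
#   virsh attach-device [VM NAME] vf-nic.xml --config
#   virsh start [VM NAME]
```

With --config the change is written to the persistent domain definition, so it takes effect the next time the VM starts.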

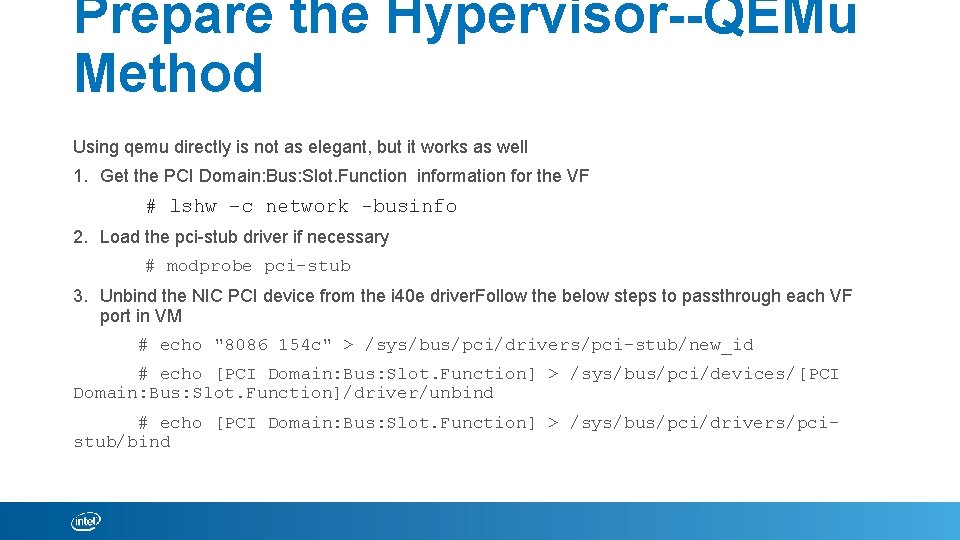

Prepare the Hypervisor – QEMU Method

Using QEMU directly is not as elegant, but it works as well.

1. Get the PCI Domain:Bus:Slot.Function information for the VF:
       # lshw -c network -businfo
2. Load the pci-stub driver if necessary:
       # modprobe pci-stub
3. Unbind the NIC PCI device from the i40e driver. Follow the steps below to pass each VF port through to the VM:
       # echo "8086 154c" > /sys/bus/pci/drivers/pci-stub/new_id
       # echo [PCI Domain:Bus:Slot.Function] > /sys/bus/pci/devices/[PCI Domain:Bus:Slot.Function]/driver/unbind
       # echo [PCI Domain:Bus:Slot.Function] > /sys/bus/pci/drivers/pci-stub/bind
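The three sysfs writes in step 3 have to be repeated for each VF, so a small wrapper helps avoid typos in the PCI address. A sketch, assuming the full Domain:Bus:Slot.Function form; bind_to_pci_stub is a hypothetical helper, and its second argument (an alternate sysfs root) is there only to let the logic be exercised without hardware.

```shell
# Move one VF from its current driver to pci-stub.
# Arguments: PCI address (e.g. 0000:06:02.0), optional sysfs root (default /sys).
bind_to_pci_stub() {
    local bdf="$1" sysfs_root="${2:-/sys}"
    # Reject anything that is not Domain:Bus:Slot.Function
    if ! printf '%s\n' "$bdf" | grep -Eq '^[0-9a-fA-F]{4}:[0-9a-fA-F]{2}:[0-9a-fA-F]{2}\.[0-7]$'; then
        echo "expected Domain:Bus:Slot.Function, got '$bdf'" >&2
        return 1
    fi
    local stub="$sysfs_root/bus/pci/drivers/pci-stub"
    local dev="$sysfs_root/bus/pci/devices/$bdf"
    # Tell pci-stub to accept the VF vendor/device ID used in this lab
    echo "8086 154c" > "$stub/new_id" || return 1
    # Only unbind when a driver currently owns the device
    if [ -e "$dev/driver/unbind" ]; then
        echo "$bdf" > "$dev/driver/unbind" || return 1
    fi
    echo "$bdf" > "$stub/bind"
}

# Usage (as root on the compute node, after modprobe pci-stub):
#   bind_to_pci_stub [PCI Domain:Bus:Slot.Function]
```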

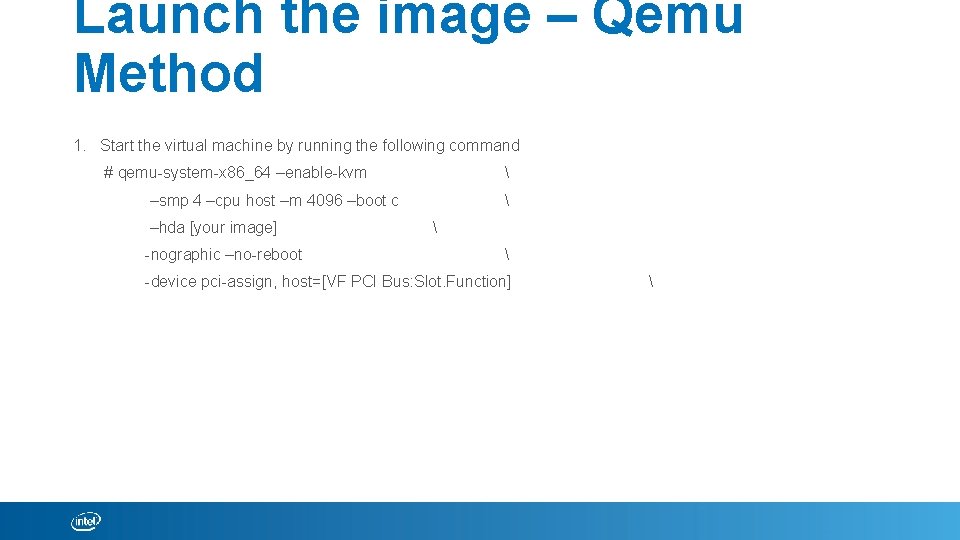

Launch the image – QEMU Method

1. Start the virtual machine by running the following command:
       # qemu-system-x86_64 -enable-kvm -smp 4 -cpu host -m 4096 -boot c -hda [your image] -nographic -no-reboot -device pci-assign,host=[VF PCI Bus:Slot.Function]

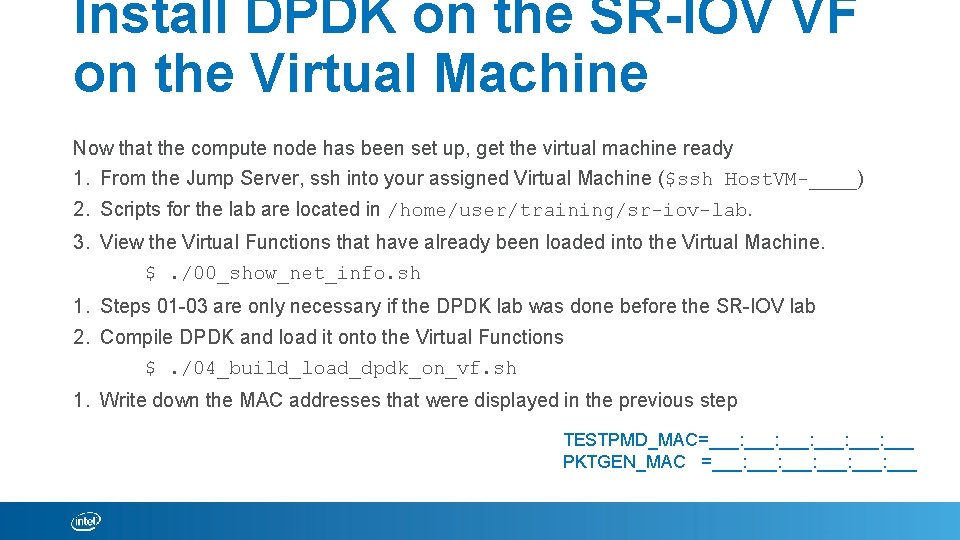

Install DPDK on the SR-IOV VF on the Virtual Machine

Now that the compute node has been set up, get the virtual machine ready.

1. From the Jump Server, ssh into your assigned Virtual Machine ($ ssh HostVM-____).
2. Scripts for the lab are located in /home/user/training/sr-iov-lab.
3. View the Virtual Functions that have already been loaded into the Virtual Machine:
       $ ./00_show_net_info.sh
4. Steps 01-03 are only necessary if the DPDK lab was done before the SR-IOV lab.
5. Compile DPDK and load it onto the Virtual Functions:
       $ ./04_build_load_dpdk_on_vf.sh
6. Write down the MAC addresses that were displayed in the previous step:
       TESTPMD_MAC=___:___:___
       PKTGEN_MAC=___:___:___

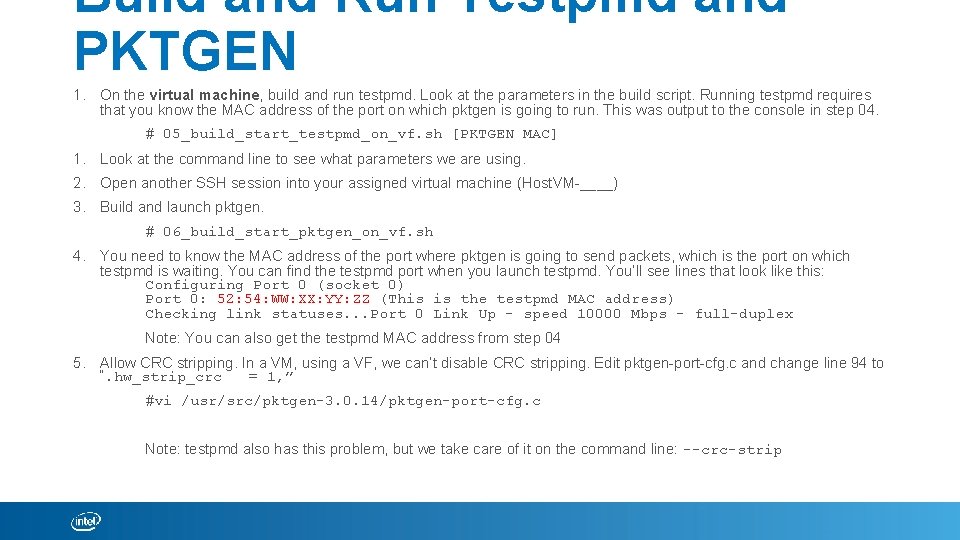

Build and Run Testpmd and PKTGEN

1. On the virtual machine, build and run testpmd. Look at the parameters in the build script. Running testpmd requires that you know the MAC address of the port on which pktgen is going to run. This was output to the console in step 04.
       # ./05_build_start_testpmd_on_vf.sh [PKTGEN MAC]
   Look at the command line to see what parameters we are using.
2. Open another SSH session into your assigned virtual machine (HostVM-____).
3. Build and launch pktgen:
       # ./06_build_start_pktgen_on_vf.sh
4. You need to know the MAC address of the port where pktgen is going to send packets, which is the port on which testpmd is waiting. You can find the testpmd port when you launch testpmd. You'll see lines that look like this:
       Configuring Port 0 (socket 0)
       Port 0: 52:54:WW:XX:YY:ZZ    (This is the testpmd MAC address)
       Checking link statuses...
       Port 0 Link Up - speed 10000 Mbps - full-duplex
   Note: You can also get the testpmd MAC address from step 04.
5. Allow CRC stripping. In a VM, using a VF, we can't disable CRC stripping. Edit pktgen-port-cfg.c and change line 94 to ".hw_strip_crc = 1,":
       # vi /usr/src/pktgen-3.0.14/pktgen-port-cfg.c
   Note: testpmd also has this problem, but we take care of it on the command line: --crc-strip
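If the testpmd startup output has been saved to a file, the MAC address line shown in step 4 can be pulled out with a short filter. This is a sketch that assumes the "Port N: XX:XX:XX:XX:XX:XX" format shown above; testpmd_port_mac and the file name testpmd.log are illustrative, not part of the lab scripts.

```shell
# Read testpmd startup output on stdin and print the MAC address of a port.
# Argument: port number (default 0).
testpmd_port_mac() {
    local port="${1:-0}"
    # Match only the "Port N: <mac>" line, not "Port N Link Up ..."
    grep -oE "Port ${port}: ([0-9A-Fa-f]{2}:){5}[0-9A-Fa-f]{2}" \
        | head -n 1 \
        | awk '{print $3}'
}

# Usage:
#   TESTPMD_MAC=$(testpmd_port_mac 0 < testpmd.log)
```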

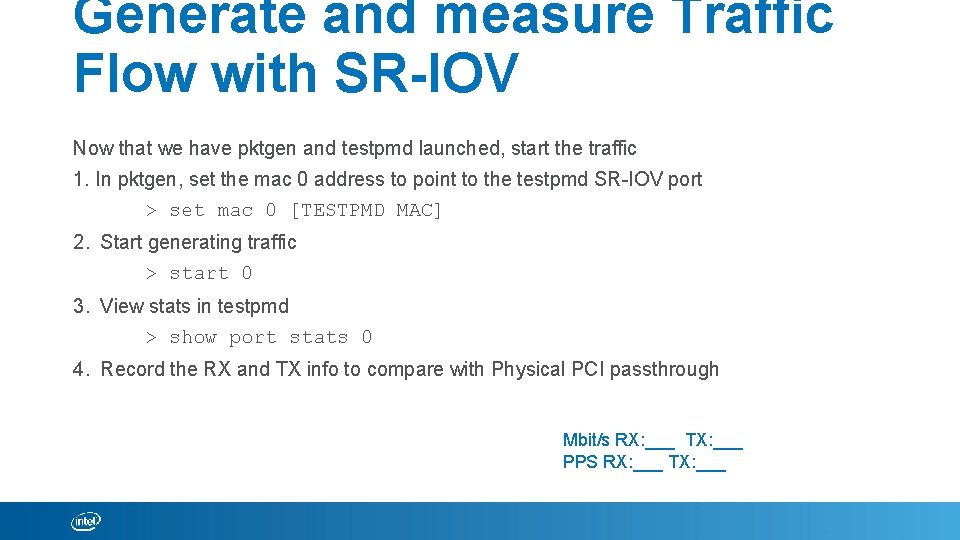

Generate and measure Traffic Flow with SR-IOV

Now that we have pktgen and testpmd launched, start the traffic.

1. In pktgen, set the mac 0 address to point to the testpmd SR-IOV port:
       > set mac 0 [TESTPMD MAC]
2. Start generating traffic:
       > start 0
3. View stats in testpmd:
       > show port stats 0
4. Record the RX and TX info to compare with Physical PCI passthrough:
       Mbit/s  RX: ___  TX: ___
       PPS     RX: ___  TX: ___
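To put the recorded PPS numbers in context, the theoretical packet rate of an Ethernet link can be worked out from the link speed and the frame size. The sketch below is an aid for interpreting results, not part of the lab scripts: max_pps is a hypothetical helper, and the frame size you pass in is an assumption about the traffic pktgen is sending.

```shell
# Print the theoretical maximum packets per second for an Ethernet link.
# Arguments: link speed in bits per second, frame size in bytes (including CRC).
max_pps() {
    local link_bps="$1" frame_bytes="$2"
    # Each frame also occupies 20 bytes on the wire:
    # 7 preamble + 1 start-of-frame delimiter + 12 inter-frame gap
    echo $(( $link_bps / (($frame_bytes + 20) * 8) ))
}

# Usage: 10 Gb/s link (the speed testpmd reports), 64-byte frames
#   max_pps 10000000000 64     # prints 14880952
```

Measured PPS well below this figure for the frame size in use points to a bottleneck somewhere other than the wire.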

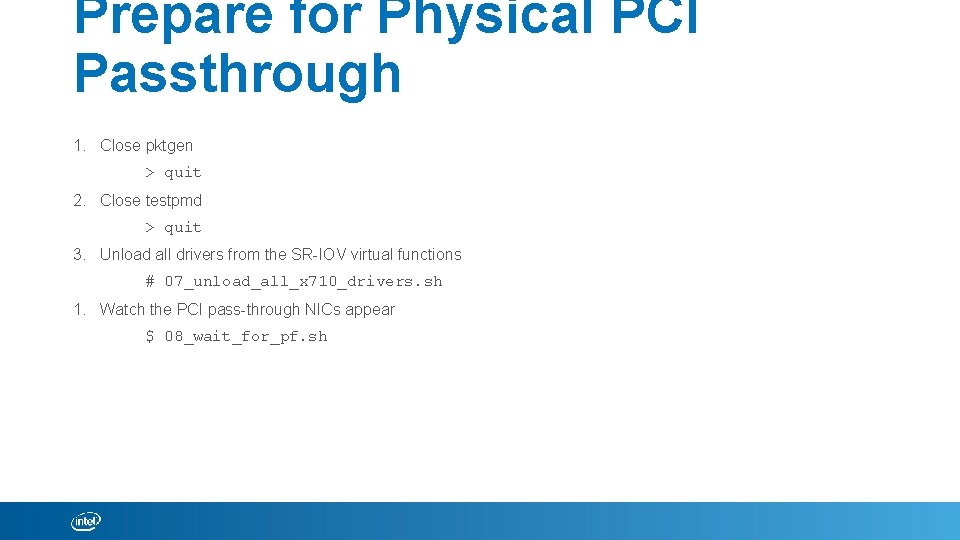

Prepare for Physical PCI Passthrough

1. Close pktgen:
       > quit
2. Close testpmd:
       > quit
3. Unload all drivers from the SR-IOV virtual functions:
       # ./07_unload_all_x710_drivers.sh
4. Watch the PCI pass-through NICs appear:
       $ ./08_wait_for_pf.sh

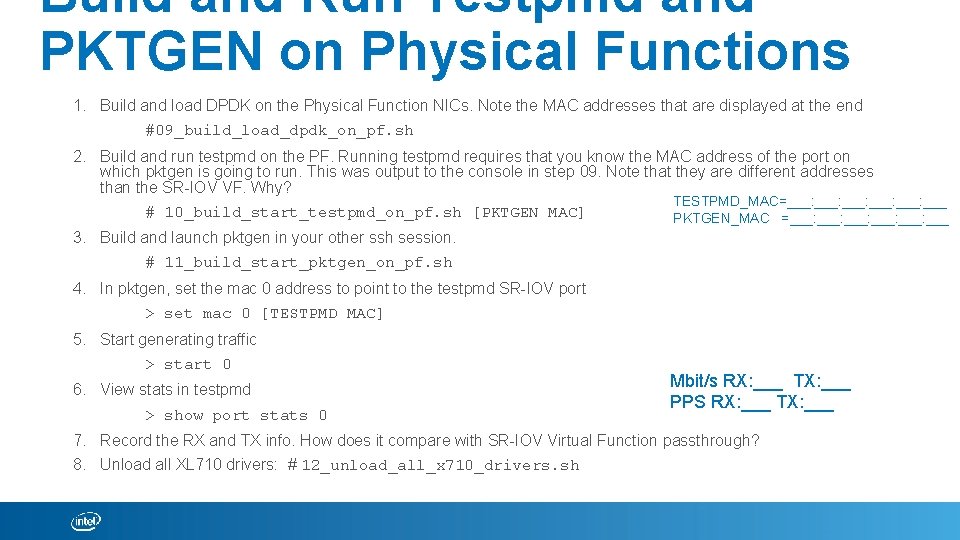

Build and Run Testpmd and PKTGEN on Physical Functions

1. Build and load DPDK on the Physical Function NICs. Note the MAC addresses that are displayed at the end.
       # ./09_build_load_dpdk_on_pf.sh
2. Build and run testpmd on the PF. Running testpmd requires that you know the MAC address of the port on which pktgen is going to run. This was output to the console in step 09. Note that they are different addresses than the SR-IOV VF. Why?
       TESTPMD_MAC=___:___:___
       PKTGEN_MAC=___:___:___
       # ./10_build_start_testpmd_on_pf.sh [PKTGEN MAC]
3. Build and launch pktgen in your other ssh session:
       # ./11_build_start_pktgen_on_pf.sh
4. In pktgen, set the mac 0 address to point to the testpmd Physical Function port:
       > set mac 0 [TESTPMD MAC]
5. Start generating traffic:
       > start 0
6. View stats in testpmd:
       > show port stats 0
7. Record the RX and TX info. How does it compare with SR-IOV Virtual Function passthrough?
       Mbit/s  RX: ___  TX: ___
       PPS     RX: ___  TX: ___
8. Unload all XL710 drivers:
       # ./12_unload_all_x710_drivers.sh

Backup information

• SR-IOV Pool (VF) x Queue
• PF-VF Mailbox Interface Initialization
• Mailbox Message support – DPDK IXGBE PMD vs Linux ixgbe Driver
• SR-IOV L2 Filters and Offloads

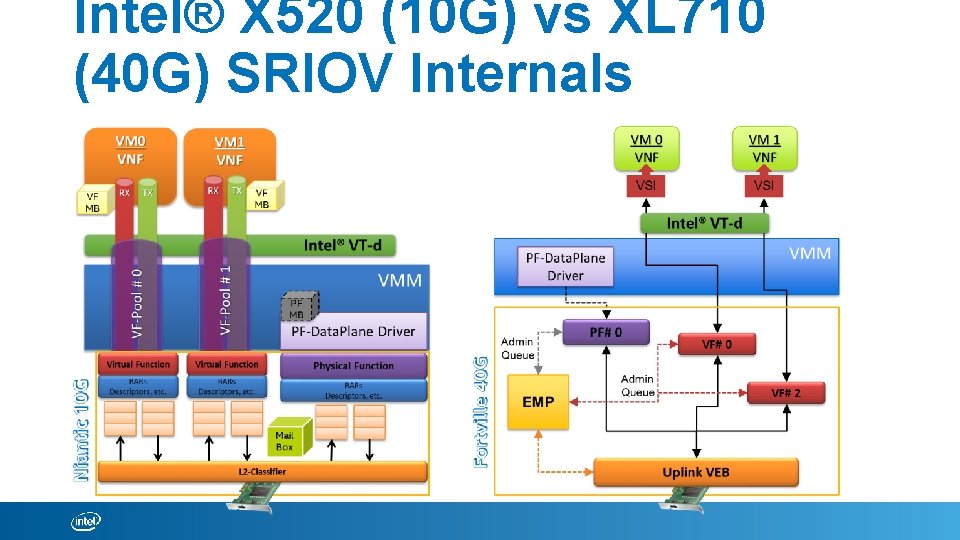

Intel® X520 (10G) vs XL710 (40G) SR-IOV Internals

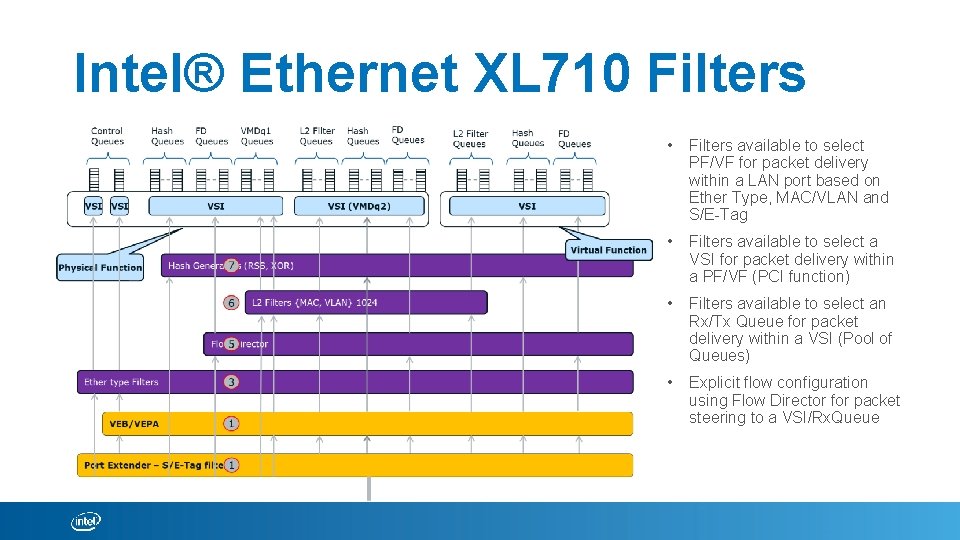

Intel® Ethernet XL710 Filters

• Filters available to select a PF/VF for packet delivery within a LAN port, based on EtherType, MAC/VLAN and S/E-Tag
• Filters available to select a VSI for packet delivery within a PF/VF (PCI function)
• Filters available to select an Rx/Tx Queue for packet delivery within a VSI (Pool of Queues)
• Explicit flow configuration using Flow Director for packet steering to a VSI/Rx Queue

Reference

• Intel® Network Builders University - https://networkbuilders.intel.com/university
   • Basic Training – Course 3: NFV Technologies
   • Basic Training – Course 5: The Road to Network Virtualization
   • Basic Training – Course 6: Single Root I/O Virtualization
   • DPDK – Course 1-5: DPDK API and PMDs
• Data Plane Development Kit – dpdk.org
• Intel Open Source Packet Processing - https://01.org/packet-processing
• Intel® Resource Director Technology (Intel® RDT)
• Intel® QAT Drivers

Questions..!?

Sessions coming up…

1. DPDK Performance Analysis with VTune Amplifier
2. DPDK Performance Benchmarking
3. DPDK Hashing Algo. Support

Creating VF – using DPDK PF driver (Backup)

1. To use the port for DPDK, you need to bind it to the igb_uio driver. You can check all the ports using the following command:
       # ./tools/dpdk_nic_bind.py --status
2. Bind the ports to the igb_uio driver:
       # ./tools/dpdk_nic_bind.py -b igb_uio 06:00.0
3. Create the VFs on the bound port:
       # echo 4 > /sys/bus/pci/devices/0000:bb:dd.ff/max_vfs
4. You can test the VFs created using the same lspci command as before.

DPDK PF driver – Host CP/DP (Backup)

1. Run the DPDK PF driver in the host using the testpmd application on a single PF port:
       # ./x86_64-native-linuxapp-gcc/app/testpmd -c 0xF -n 4 -- -i --portmask=0x1 --nb-cores=2
       testpmd> set fwd mac
       testpmd> start
       testpmd> show port stats 0    (optional)