FLYWAYS IN DATA CENTERS

Srikanth Kandula, Jitendra Padhye and Victor Bahl, Microsoft Research

Data center networking is a major cost of building large data centers

- Switches, routers, cabling complexity, management, ...
- Expensive equipment: aggregation switches cost > $250K
- Tradeoff: provide "good" connectivity at "low" cost
- Lot of recent interest from industry and academia

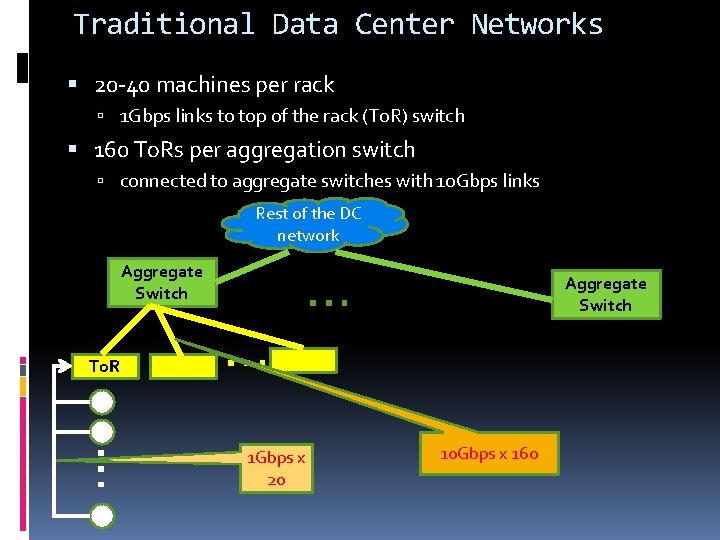

Traditional data center networks

- 20-40 machines per rack
- 1 Gbps links to the top-of-rack (ToR) switch
- ToRs connected to aggregation switches with 10 Gbps links
- 160 ToRs per aggregation switch

[Figure: servers connect to a ToR (1 Gbps x 20); ToRs connect to an aggregation switch (10 Gbps x 160); aggregation switches connect to the rest of the DC network.]

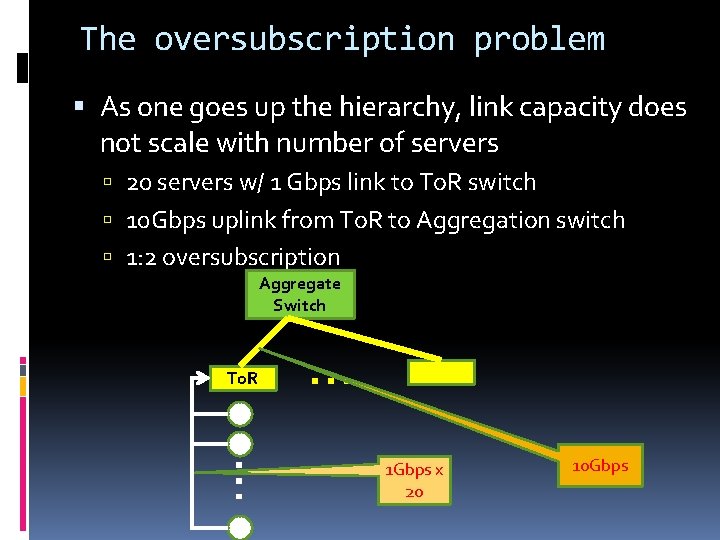


The oversubscription problem

- As one goes up the hierarchy, link capacity does not scale with the number of servers
- 20 servers with 1 Gbps links to the ToR switch (20 Gbps in total)
- 10 Gbps uplink from the ToR to the aggregation switch
- 1:2 oversubscription
- Implications: potential for congestion when communicating between racks, so applications minimize such communication
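The arithmetic behind the 1:2 figure can be sketched as follows. The function and variable names are illustrative and do not come from the slides; the numbers are the ones quoted above.

```python
def oversubscription(servers_per_rack, server_link_gbps, uplink_gbps):
    """Ratio of aggregate server-facing capacity to uplink capacity at one ToR."""
    return (servers_per_rack * server_link_gbps) / uplink_gbps


def worst_case_share_gbps(servers_per_rack, uplink_gbps):
    """Per-server bandwidth if every server in the rack sends off-rack at once."""
    return uplink_gbps / servers_per_rack


ratio = oversubscription(servers_per_rack=20, server_link_gbps=1.0, uplink_gbps=10.0)
share = worst_case_share_gbps(servers_per_rack=20, uplink_gbps=10.0)
print(ratio)  # 2.0: servers can offer twice what the uplink carries
print(share)  # 0.5: each server gets half of its 1 Gbps link in the worst case
```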

Possible solutions

- Fewer servers per ToR: more switches, higher cost
- Use higher-bandwidth links for ToR uplinks
  - Technological limitations: today, the largest practical link is 40 Gbps (4 x 10 Gbps)
  - Expensive: either use more aggregation switches, or build ones with sufficient backplane bandwidth
- Clos networks

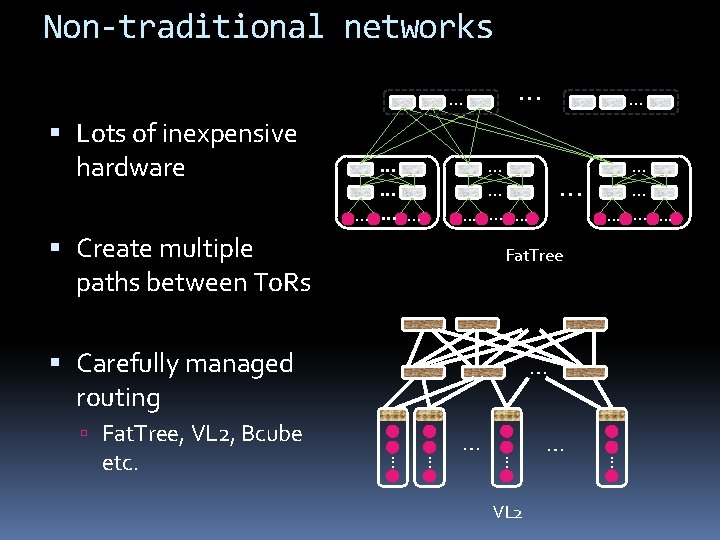

Non-traditional networks

- Lots of inexpensive hardware
- Create multiple paths between ToRs
- Carefully managed routing
- FatTree, VL2, BCube, etc.

[Figure: FatTree and VL2 topologies.]

Note that ...

- The key goal of all proposed solutions is to eliminate oversubscription (there are various other advantages as well)
- Why?
  - Need to move VMs anywhere in the network
  - Network is no longer a bottleneck
  - Needed for all-pairs-shuffle workload
- Is there an alternative to eliminating oversubscription?

1:1 oversubscription may not always be necessary

- Studied application demands from a production cluster
- Short-lived, localized congestion
- If we can add capacity to "hotspots" as they form, we may not need to eliminate oversubscription

Data set

- Production cluster of 1500 servers
- Data-mining workload
- 1:2 oversubscribed tree
  - 20 servers per rack (75 racks in total)
  - 1 Gbps links from server to ToR
  - 10 Gbps uplink from ToR to aggregation switch
- Socket-level traces over several weeks
- Demands computed by averaging traffic over 5-minute windows
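One way to turn such traces into per-window demand matrices is sketched below. The record layout `(timestamp_s, src_rack, dst_rack, nbytes)` is a hypothetical format chosen for illustration, and dropping intra-rack traffic is an assumption; the slides do not describe the actual trace schema or processing.

```python
from collections import defaultdict

WINDOW_S = 300  # 5-minute averaging window, as stated on the slide


def demand_matrices(records, window_s=WINDOW_S):
    """Return {window_index: {(src_rack, dst_rack): average rate in bits/s}}.

    `records` is an iterable of (timestamp_s, src_rack, dst_rack, nbytes);
    this layout is hypothetical, not the format of the original traces.
    """
    totals = defaultdict(lambda: defaultdict(int))
    for ts, src, dst, nbytes in records:
        if src == dst:
            continue  # assumption: intra-rack traffic never crosses the ToR uplink
        totals[int(ts // window_s)][(src, dst)] += nbytes
    return {
        window: {pair: 8 * total / window_s for pair, total in pairs.items()}
        for window, pairs in totals.items()
    }


records = [
    (10.0, 0, 1, 37_500_000_000),   # rack 0 -> rack 1 in window 0
    (310.0, 0, 1, 75_000_000_000),  # same pair in window 1
    (20.0, 2, 2, 999),              # intra-rack, ignored
]
matrices = demand_matrices(records)
print(matrices[0][(0, 1)])  # 1000000000.0, i.e. 1 Gbps averaged over the window
```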

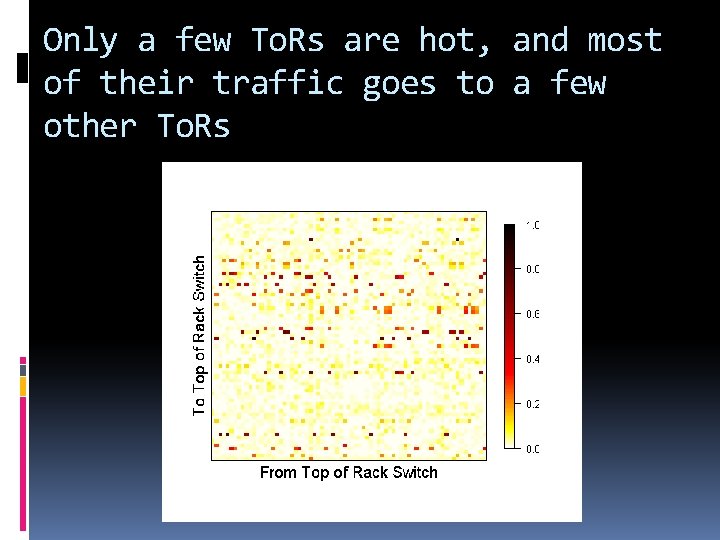

Only a few ToRs are hot, and most of their traffic goes to a few other ToRs
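A claim like this could be checked against a demand matrix roughly as follows. This is a sketch with made-up numbers, not the analysis used for the slides; `top_destination_share` is a hypothetical helper.

```python
def top_destination_share(demand, k):
    """For each source ToR, the fraction of its traffic sent to its k largest destinations."""
    by_src = {}
    for (src, _dst), volume in demand.items():
        by_src.setdefault(src, []).append(volume)
    return {
        src: sum(sorted(volumes, reverse=True)[:k]) / sum(volumes)
        for src, volumes in by_src.items()
        if sum(volumes) > 0
    }


# Toy demand matrix (arbitrary units): ToR 0 is "hot" and sends mostly to ToR 1.
demand = {(0, 1): 80, (0, 2): 15, (0, 3): 5, (1, 0): 10, (1, 2): 10}
print(top_destination_share(demand, k=1))  # {0: 0.8, 1: 0.5}
```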

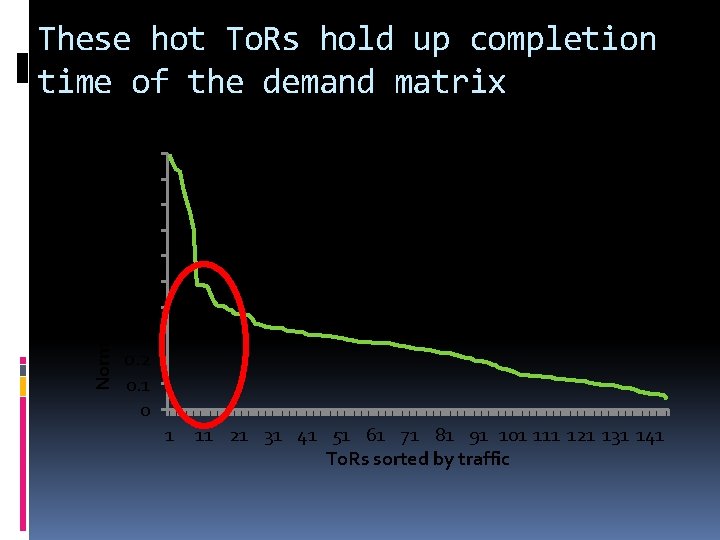

These hot ToRs hold up completion time of the demand matrix

[Figure: normalized completion time (y-axis, 0 to 1) vs. ToRs sorted by traffic (x-axis); annotation: "Potential Speedup".]

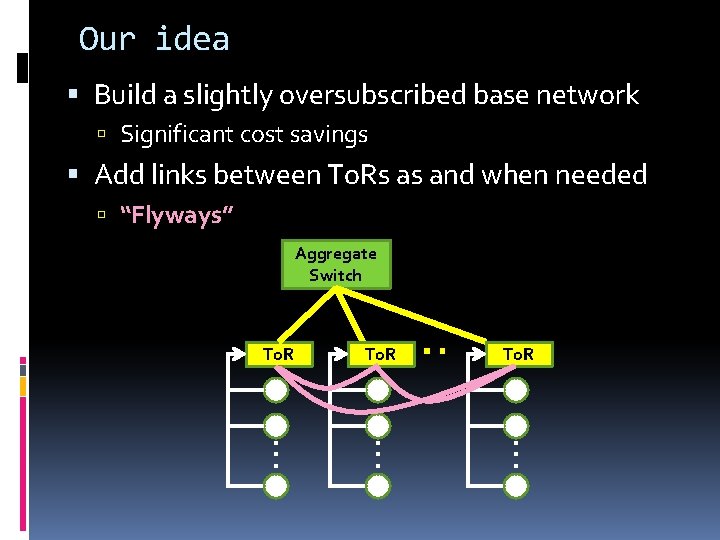

Our idea

- Build a slightly oversubscribed base network: significant cost savings
- Add links between ToRs as and when needed: "flyways"

[Figure: ToRs under an aggregation switch, with flyway links added directly between ToRs.]

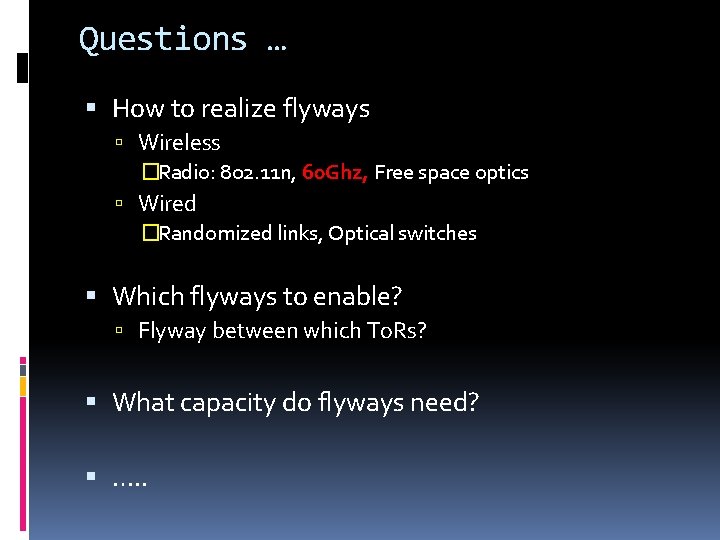

Questions ...

- How to realize flyways?
  - Wireless: radio (802.11n, 60 GHz), free-space optics
  - Wired: randomized links, optical switches
- Which flyways to enable? Flyway between which ToRs?
- What capacity do flyways need?
- ...

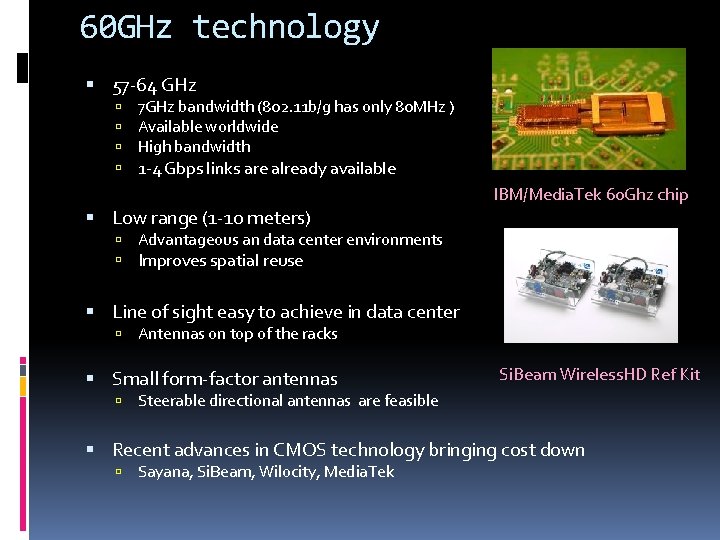

60 GHz technology

- 57-64 GHz
  - 7 GHz bandwidth (802.11b/g has only 80 MHz)
  - Available worldwide
- High bandwidth: 1-4 Gbps links are already available
- Low range (1-10 meters)
  - Advantageous in data center environments
  - Improves spatial reuse
- Line of sight is easy to achieve in a data center: antennas on top of the racks
- Small form-factor antennas
- Steerable directional antennas are feasible
- Recent advances in CMOS technology are bringing cost down: Sayana, SiBeam, Wilocity, MediaTek

[Images: IBM/MediaTek 60 GHz chip; SiBeam WirelessHD reference kit.]

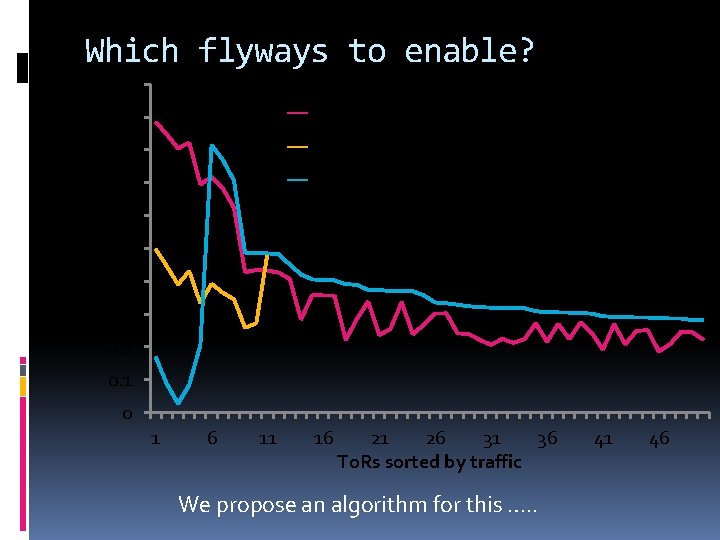

Which flyways to enable?

[Figure: remaining traffic at ToR (normalized, 0 to 1) vs. ToRs sorted by traffic; series: 1 flyway each for top 50 ToRs, 5 flyways each for top 10 ToRs, 10 flyways each for top 5 ToRs.]

We propose an algorithm for this ...

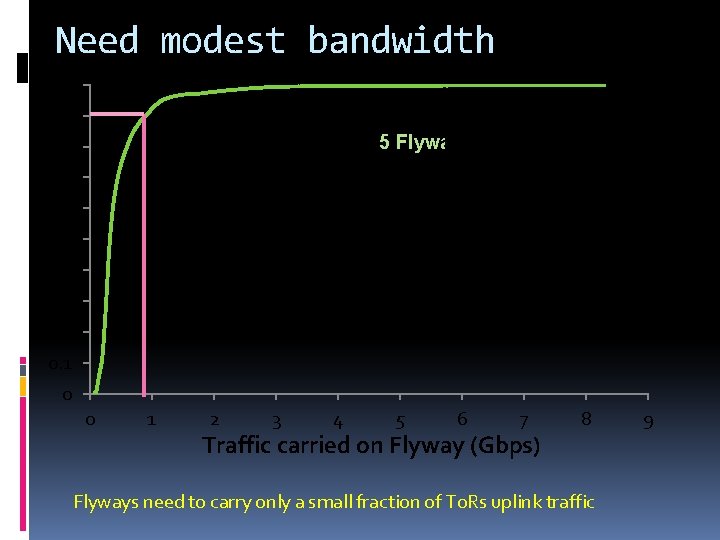

Need modest bandwidth

[Figure: y-axis from 0 to 1 vs. traffic carried on flyway (Gbps, 0 to 9); series: 5 flyways each for top 10 ToRs.]

Flyways need to carry only a small fraction of a ToR's uplink traffic

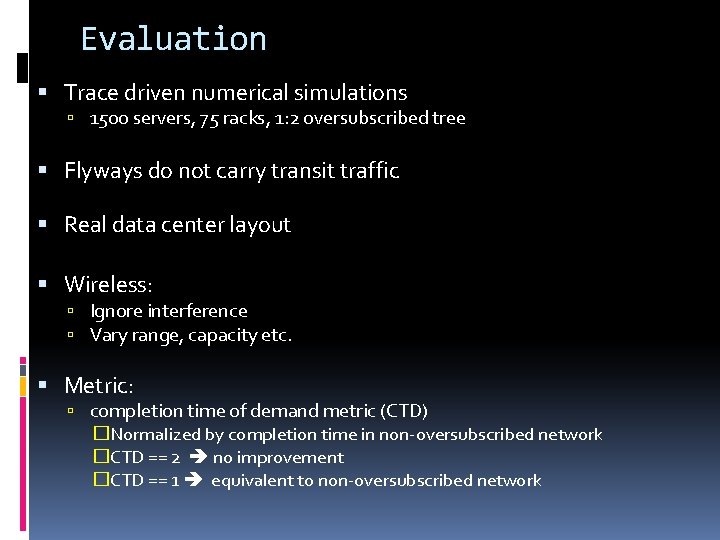

Evaluation

- Trace-driven numerical simulations
- 1500 servers, 75 racks, 1:2 oversubscribed tree
- Flyways do not carry transit traffic
- Real data center layout
- Wireless: ignore interference
- Vary range, capacity, etc.
- Metric: completion time of the demand matrix (CTD)
  - Normalized by completion time in a non-oversubscribed network
  - CTD == 2: no improvement
  - CTD == 1: equivalent to a non-oversubscribed network
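A minimal sketch of the normalized CTD metric follows, assuming ToR uplinks are the only bottleneck and are full duplex. The slides do not spell out the completion-time model, so `completion_time` is an illustrative simplification and the demand values are made up.

```python
def completion_time(demand_bits, uplink_bps):
    """Seconds until the busiest ToR uplink drains (assumed to be the only bottleneck)."""
    out_bits, in_bits = {}, {}
    for (src, dst), bits in demand_bits.items():
        out_bits[src] = out_bits.get(src, 0) + bits
        in_bits[dst] = in_bits.get(dst, 0) + bits
    busiest = max(list(out_bits.values()) + list(in_bits.values()), default=0)
    return busiest / uplink_bps


def normalized_ctd(demand_bits, uplink_bps, full_uplink_bps):
    """CTD divided by the completion time in a non-oversubscribed network."""
    baseline = completion_time(demand_bits, full_uplink_bps)
    return completion_time(demand_bits, uplink_bps) / baseline if baseline else 1.0


# Toy inter-rack demands in bits; 10 Gbps uplink vs. a 20 Gbps non-oversubscribed uplink.
demand_bits = {(0, 1): 40e9, (0, 2): 20e9, (3, 1): 10e9}
print(normalized_ctd(demand_bits, uplink_bps=10e9, full_uplink_bps=20e9))  # 2.0
```

With no flyways, the 1:2 oversubscribed tree gives CTD == 2 in this model, matching the "no improvement" case above.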

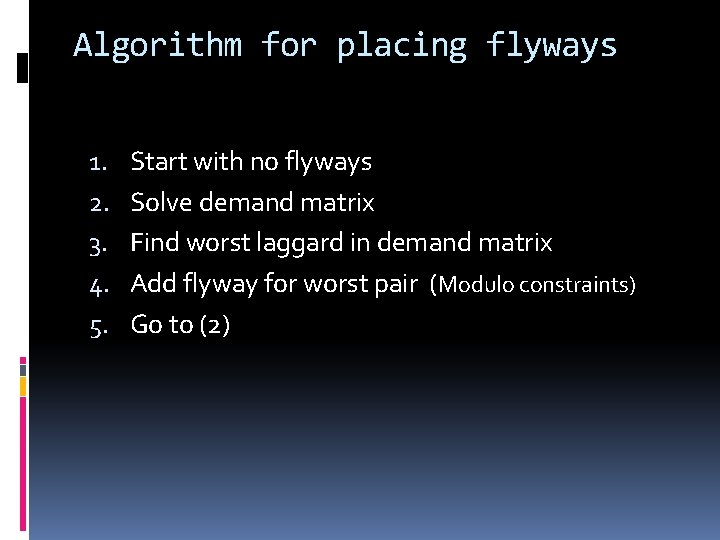

Algorithm for placing flyways

1. Start with no flyways
2. Solve demand matrix
3. Find worst laggard in demand matrix
4. Add flyway for worst pair (modulo constraints)
5. Go to (2)
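The loop above can be sketched as follows. The slides do not say how "solve demand matrix" is done, so this sketch assumes a simple model: flyways carry only direct traffic for their ToR pair, the remainder goes over full-duplex ToR uplinks, and the completion time is found by bisection. All function names, the `allowed` constraint hook, and the toy numbers are illustrative assumptions.

```python
def residual(demand, flyways, flyway_bps, t):
    """Bits each pair must still push over ToR uplinks if its flyways run for t seconds."""
    return {
        pair: max(0.0, bits - flyways.get(pair, 0) * flyway_bps * t)
        for pair, bits in demand.items()
    }


def uplink_loads(bits_by_pair):
    """Per-ToR uplink load; outgoing and incoming are tracked separately (full duplex)."""
    loads = {}
    for (src, dst), bits in bits_by_pair.items():
        loads[("out", src)] = loads.get(("out", src), 0.0) + bits
        loads[("in", dst)] = loads.get(("in", dst), 0.0) + bits
    return loads


def completion_time(demand, flyways, uplink_bps, flyway_bps):
    """Smallest t at which every uplink can drain its residual load (bisection)."""
    lo, hi = 0.0, max(uplink_loads(demand).values(), default=0.0) / uplink_bps
    for _ in range(60):  # fixed iteration count; hi always stays feasible
        mid = (lo + hi) / 2
        loads = uplink_loads(residual(demand, flyways, flyway_bps, mid))
        if all(load <= uplink_bps * mid for load in loads.values()):
            hi = mid
        else:
            lo = mid
    return hi


def place_flyways(demand, budget, uplink_bps, flyway_bps, allowed=lambda s, d: True):
    flyways = {}                                                      # 1. no flyways
    for _ in range(budget):                                           # 5. go to (2)
        t = completion_time(demand, flyways, uplink_bps, flyway_bps)  # 2. solve
        left = residual(demand, flyways, flyway_bps, t)
        loads = uplink_loads(left)
        if not loads:
            break
        direction, tor = max(loads, key=loads.get)                    # 3. worst laggard
        end = 0 if direction == "out" else 1
        candidates = [p for p in left if p[end] == tor and left[p] > 0 and allowed(*p)]
        if not candidates:
            break
        worst = max(candidates, key=left.get)                         # 4. worst pair
        flyways[worst] = flyways.get(worst, 0) + 1
    return flyways


demand = {(0, 1): 60e9, (0, 2): 20e9, (3, 1): 10e9}  # toy demands in bits
chosen = place_flyways(demand, budget=2, uplink_bps=10e9, flyway_bps=1e9)
print(chosen)  # {(0, 1): 2}
```

In this toy case both flyways go to the pair (0, 1), and the completion time drops from 8 s to 80/12 s. The `allowed` predicate is where range or hardware constraints would plug in.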

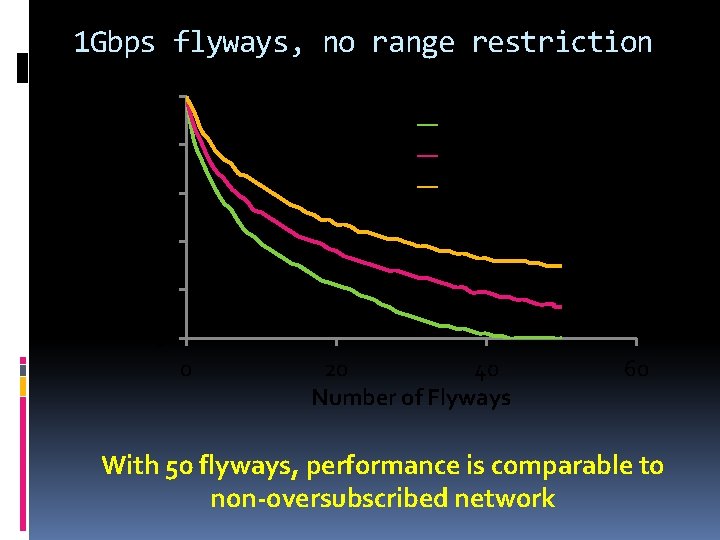

1 Gbps flyways, no range restriction

[Figure: normalized CTD (1 to 2) vs. number of flyways (0 to 60); series: 25th percentile, median, 75th percentile.]

With 50 flyways, performance is comparable to a non-oversubscribed network

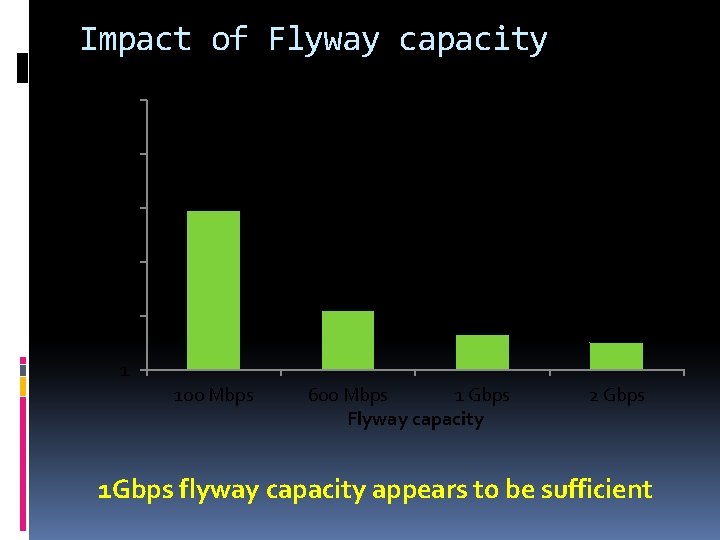

Impact of flyway capacity

[Figure: normalized CTD (1 to 2) vs. flyway capacity (100 Mbps, 600 Mbps, 1 Gbps, 2 Gbps).]

1 Gbps flyway capacity appears to be sufficient

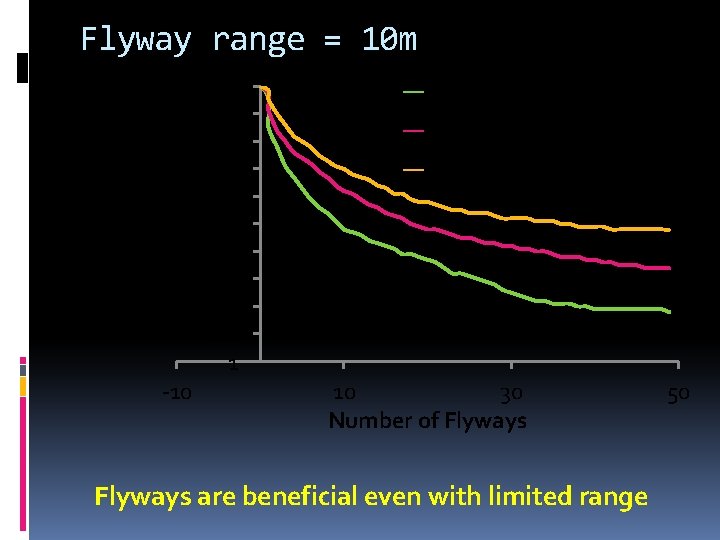

Flyway range = 10 m

[Figure: normalized CTD (1 to 2) vs. number of flyways (-10 to 50); series: 25th percentile, median, 75th percentile.]

Flyways are beneficial even with limited range
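A range restriction can be treated as a filter on which ToR pairs are eligible for a flyway. The sketch below assumes rack positions are known as 2-D coordinates in meters; the coordinates and the helper `pairs_in_range` are made up for illustration and do not reflect the layout used in the evaluation.

```python
import math
from itertools import combinations


def pairs_in_range(rack_pos_m, max_range_m=10.0):
    """Unordered rack pairs whose straight-line distance is within the flyway range."""
    return [
        (a, b)
        for a, b in combinations(sorted(rack_pos_m), 2)
        if math.dist(rack_pos_m[a], rack_pos_m[b]) <= max_range_m
    ]


# Hypothetical rack coordinates in meters.
rack_pos_m = {0: (0.0, 0.0), 1: (3.0, 4.0), 2: (30.0, 0.0)}
print(pairs_in_range(rack_pos_m))  # [(0, 1)]
```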

Conclusions

- A new paradigm for data center networks: a slightly oversubscribed base network with dynamic capacity addition using flyways
- Today, 60 GHz wireless appears to be a good choice for flyways

Backup

Bandwidth needs

- Flyways carry far less traffic than the uplink
- In our model, only ToR-to-ToR (1 hop)
- The 60 GHz band is 9x as wide as the 802.11b/g band
- With better encodings, significantly higher capacity is possible
