Speech Synthesis with Artificial Neural Networks April 2020

- Slides: 21

Speech Synthesis with Artificial Neural Networks April 2020 Vassilis Tsiaras

Introduction

- Generating natural speech from text (text-to-speech synthesis, TTS) remains a challenging task despite decades of investigation.
- Over time, different techniques have dominated the field.
- Concatenative synthesis with unit selection was the state of the art for many years.
- Statistical parametric speech synthesis (SPSS), which directly generates smooth trajectories of speech features to be synthesized by a vocoder, followed.
- From its inception (around 1995) until 2013, mainstream SPSS was based on HMMs.
- HMM-based speech synthesis solved many of the issues that concatenative synthesis had with boundary artifacts and with adaptation to new data.
- However, the audio produced by these systems often sounds muffled and unnatural.
- From 2013, researchers started to experiment with neural-network-based statistical models and managed to improve the quality of the synthesized speech.
- The big revolution in SPSS started around 2016 with the invention of neural network architectures that produce speech of close-to-human quality (e.g., WaveNet), as well as sequence-to-sequence architectures that translate and align from text to speech parameters.

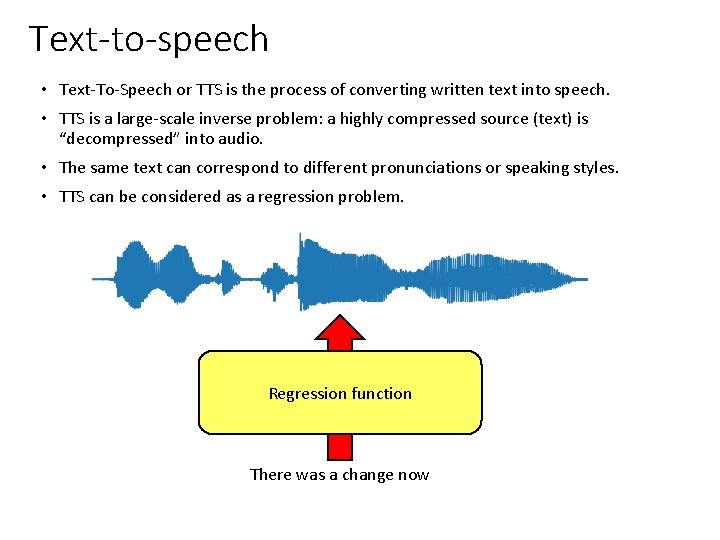

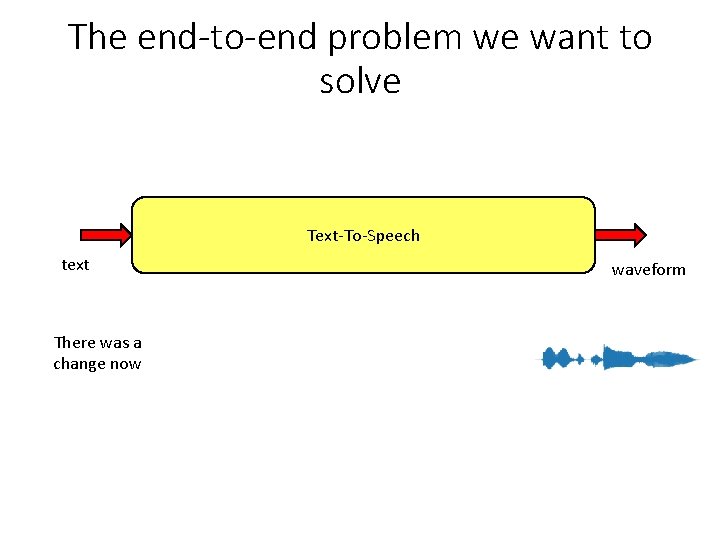

Text-to-speech

- Text-to-speech (TTS) is the process of converting written text into speech.
- TTS is a large-scale inverse problem: a highly compressed source (text) is "decompressed" into audio.
- The same text can correspond to different pronunciations or speaking styles.
- TTS can be considered a regression problem.

[Figure: a regression function applied to the text "There was a change now".]

The end-to-end problem we want to solve

[Figure: text-to-speech maps the text "There was a change now" to a waveform.]

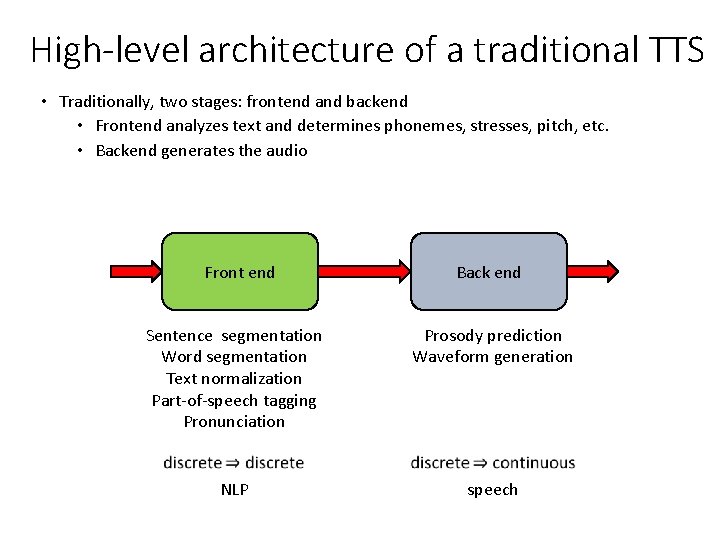

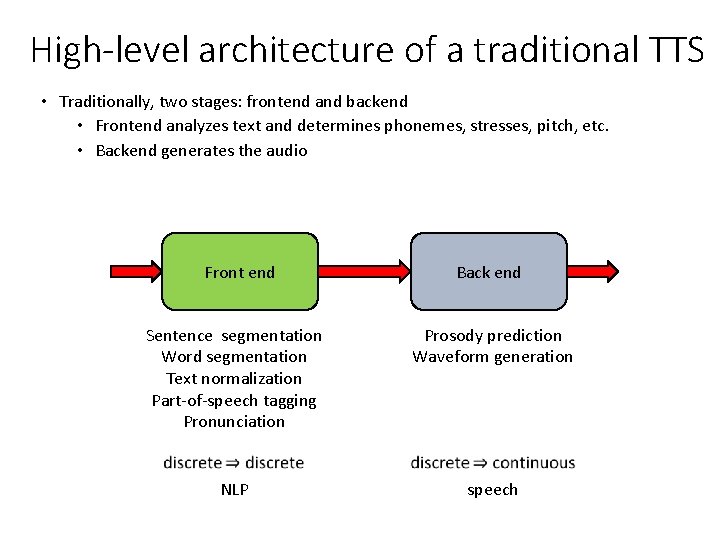

High-level architecture of a traditional TTS

- Traditionally, there are two stages: a front end and a back end.
- The front end analyzes the text and determines phonemes, stresses, pitch, etc.
- The back end generates the audio.

[Figure: front end (NLP): sentence segmentation, word segmentation, text normalization, part-of-speech tagging, pronunciation, prosody prediction. Back end (speech): waveform generation.]

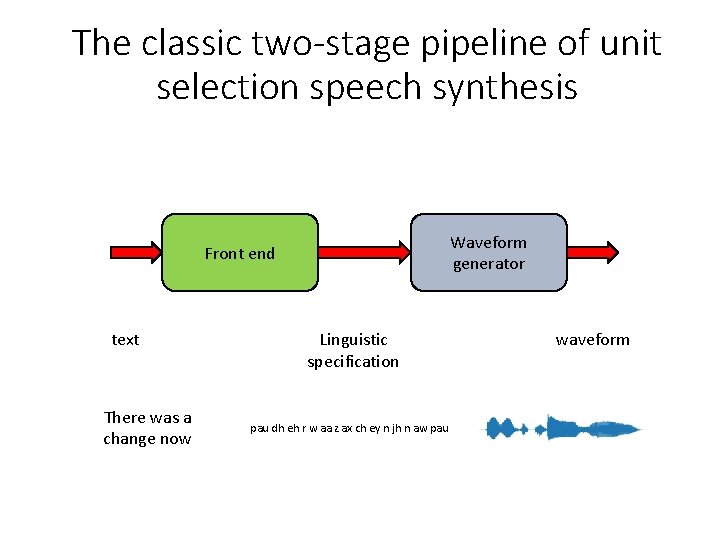

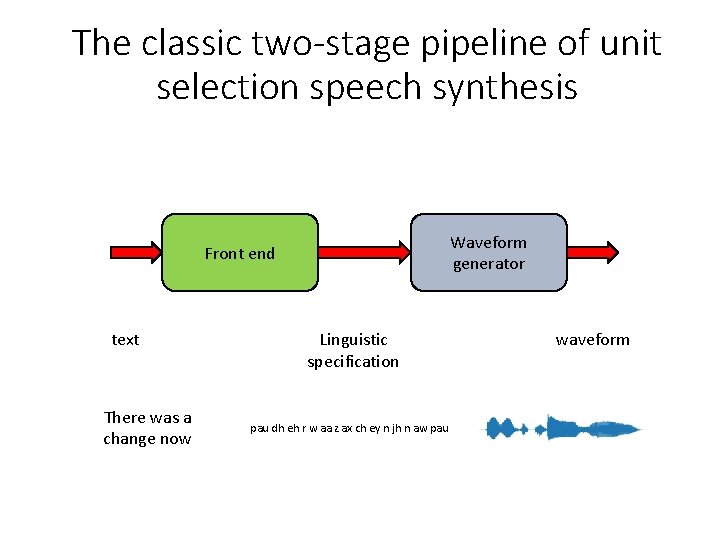

The classic two-stage pipeline of unit selection speech synthesis

[Figure: text ("There was a change now") → front end → linguistic specification (pau dh eh r w aa z ax ch ey n jh n aw pau) → waveform generator → waveform.]

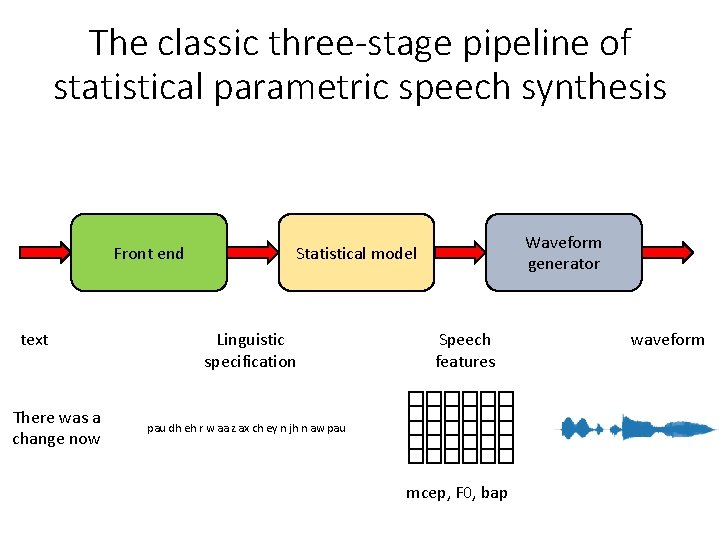

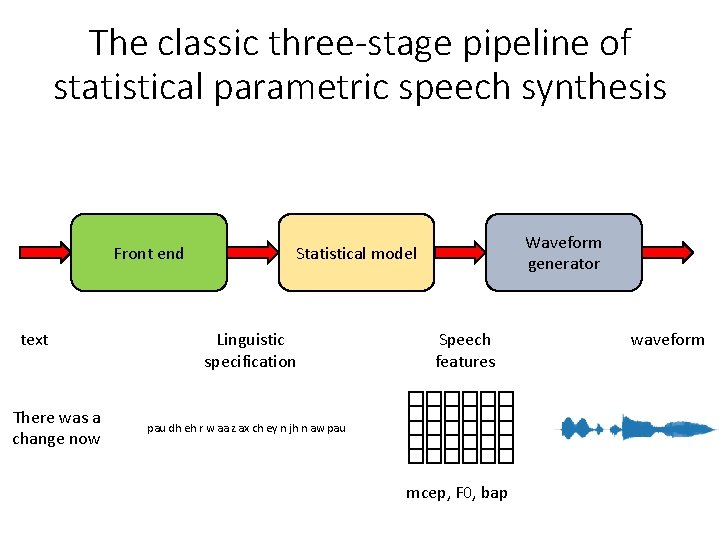

The classic three-stage pipeline of statistical parametric speech synthesis

[Figure: text ("There was a change now") → front end → linguistic specification (pau dh eh r w aa z ax ch ey n jh n aw pau) → statistical model → speech features (mcep, F0, bap) → waveform generator → waveform.]

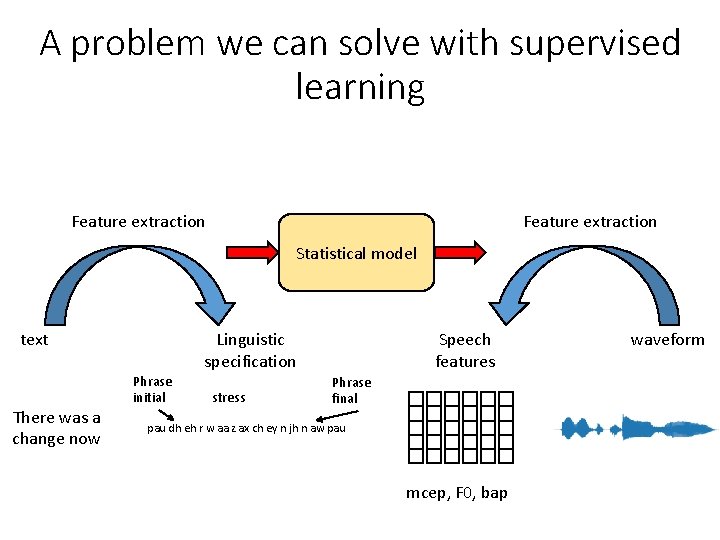

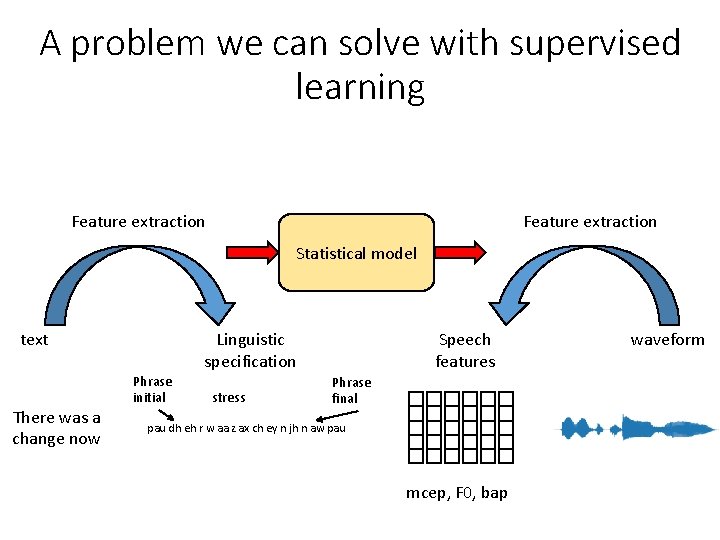

A problem we can solve with supervised learning

[Figure: the statistical model is learned from paired data. Input side: the text "There was a change now" and its linguistic specification (pau dh eh r w aa z ax ch ey n jh n aw pau, annotated with phrase initial, stress, phrase final). Output side: speech features (mcep, F0, bap) obtained from the waveform by feature extraction.]

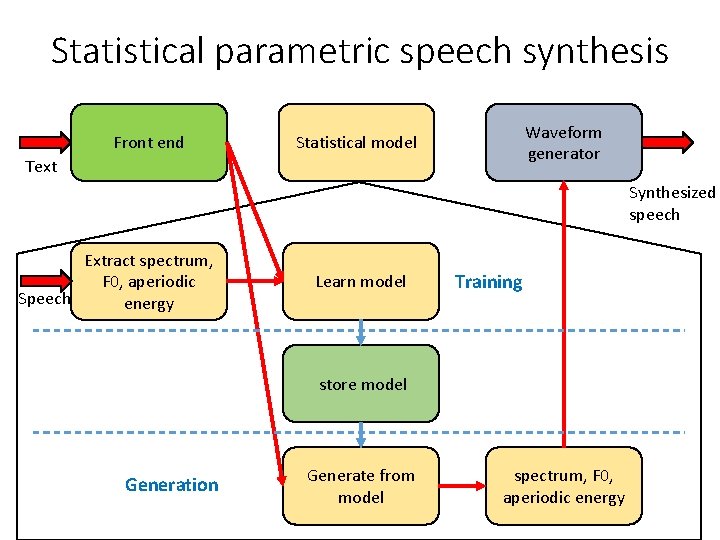

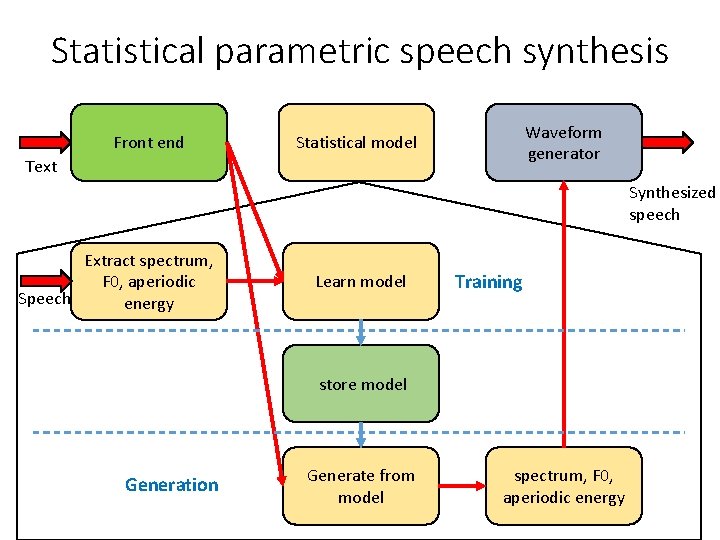

Statistical parametric speech synthesis

[Figure: Training: extract spectrum, F0, and aperiodic energy from speech, learn the model, and store it. Generation: text → front end → statistical model (generate spectrum, F0, and aperiodic energy from the model) → waveform generator → synthesized speech.]

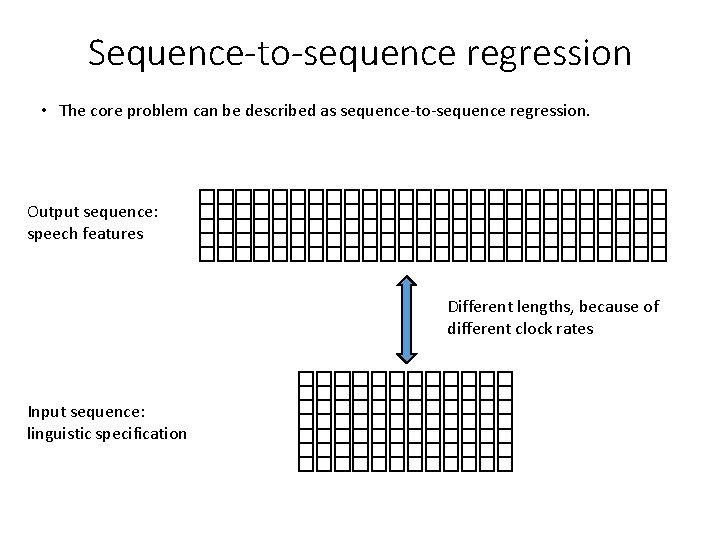

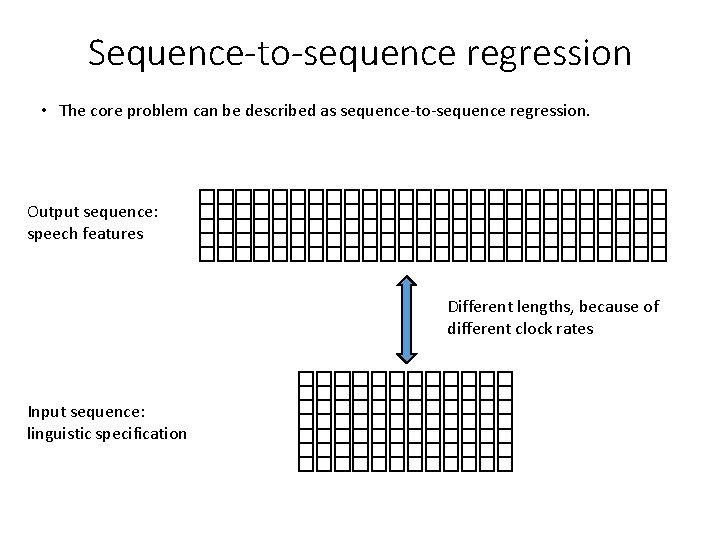

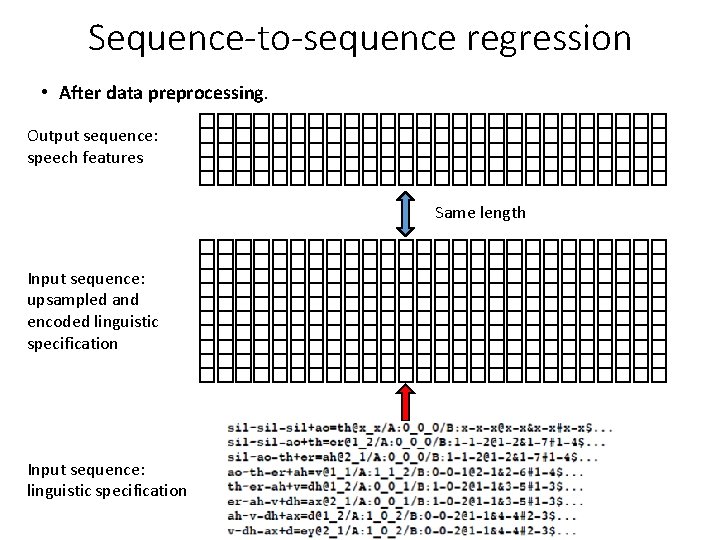

Sequence-to-sequence regression

- The core problem can be described as sequence-to-sequence regression.

[Figure: input sequence (linguistic specification) and output sequence (speech features). The two sequences have different lengths because of different clock rates.]

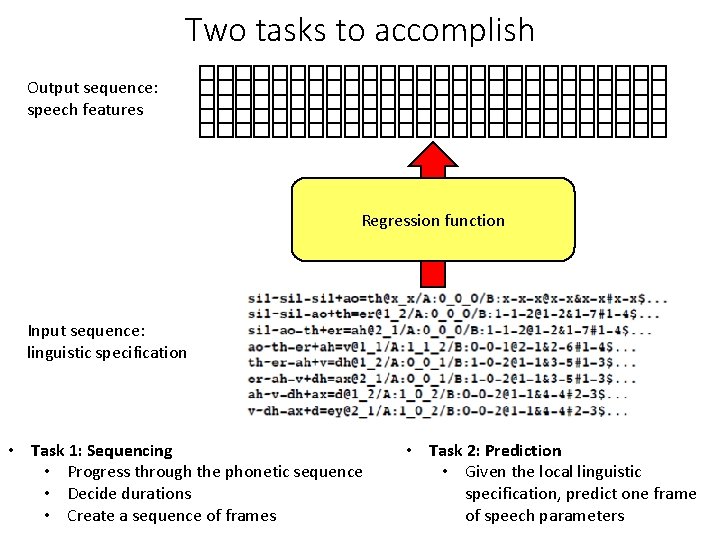

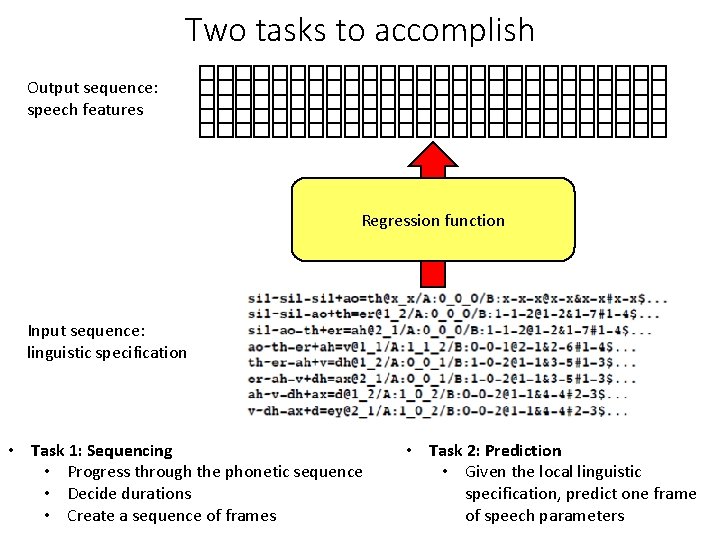

Two tasks to accomplish

- Task 1: Sequencing
  - Progress through the phonetic sequence
  - Decide durations
  - Create a sequence of frames
- Task 2: Prediction
  - Given the local linguistic specification, predict one frame of speech parameters

[Figure: a regression function maps the input sequence (linguistic specification) to the output sequence (speech features).]

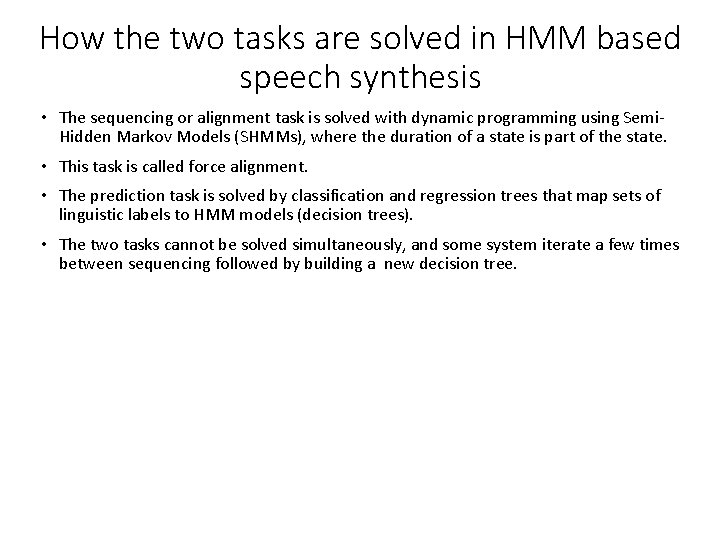

How the two tasks are solved in HMM-based speech synthesis

- The sequencing (alignment) task is solved with dynamic programming using hidden semi-Markov models (HSMMs), in which the duration of a state is modeled explicitly as part of the state.
- This task is called forced alignment.
- The prediction task is solved by classification and regression trees (decision trees) that map sets of linguistic labels to HMM models.
- The two tasks cannot be solved simultaneously, so some systems iterate a few times between sequencing and building a new decision tree.
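The dynamic-programming idea behind forced alignment can be illustrated with a minimal sketch. It assumes a plain left-to-right model without the explicit duration distributions an HSMM would add, and it takes a precomputed table `cost[t][s]` (for example, a negative log-likelihood of frame `t` under state `s`). The function names and the toy costs are hypothetical and are not taken from the slides.

```python
import math

def forced_align(cost):
    """Return the state index assigned to every frame.

    cost[t][s] is the cost of frame t under state s. The path starts in
    state 0, ends in the last state, and at each frame either stays in
    the current state or advances to the next one.
    """
    n_frames, n_states = len(cost), len(cost[0])
    if n_frames < n_states:
        raise ValueError("need at least one frame per state")
    best = [[math.inf] * n_states for _ in range(n_frames)]
    advanced = [[False] * n_states for _ in range(n_frames)]
    best[0][0] = cost[0][0]
    for t in range(1, n_frames):
        for s in range(n_states):
            stay = best[t - 1][s]
            move = best[t - 1][s - 1] if s > 0 else math.inf
            if move < stay:
                best[t][s] = move + cost[t][s]
                advanced[t][s] = True
            else:
                best[t][s] = stay + cost[t][s]
    # Backtrack from the last state at the last frame.
    path = [0] * n_frames
    s = n_states - 1
    for t in range(n_frames - 1, -1, -1):
        path[t] = s
        if advanced[t][s]:
            s -= 1
    return path

def durations(path, n_states):
    """Number of frames assigned to each state."""
    return [path.count(s) for s in range(n_states)]

# Toy example: 5 frames, 2 states (rows are frames, columns are states).
toy_cost = [[0, 1], [0, 1], [1, 0], [1, 0], [1, 0]]
toy_path = forced_align(toy_cost)
print(toy_path, durations(toy_path, 2))
```

The per-state frame counts returned by `durations` are the kind of alignment information that the later slides use to upsample the linguistic input.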

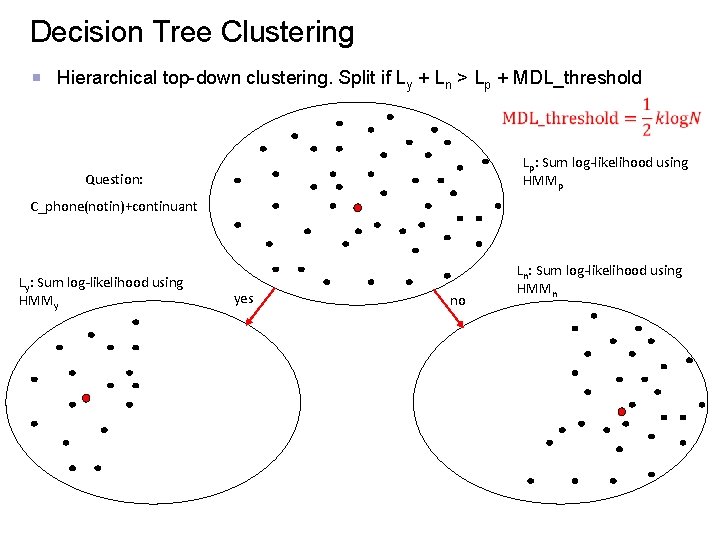

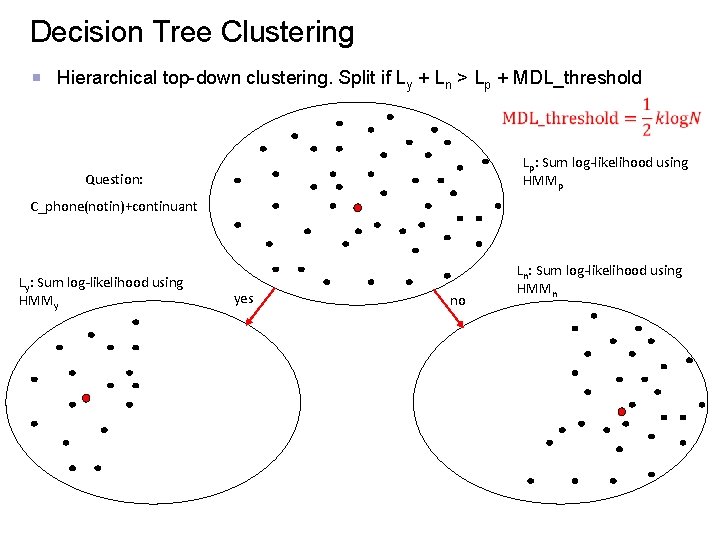

Decision Tree Clustering

Hierarchical top-down clustering. A node is split by a question (e.g., C_phone(notin)+continuant) if

Ly + Ln > Lp + MDL_threshold

where

- Lp: sum of log-likelihoods using HMMp (the node before the split)
- Ly: sum of log-likelihoods using HMMy (the "yes" branch)
- Ln: sum of log-likelihoods using HMMn (the "no" branch)
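The split rule can be written as a small sketch. It assumes the three log-likelihood sums have already been computed elsewhere; the function names, the question names, and the numbers are hypothetical and serve only to illustrate the inequality Ly + Ln > Lp + MDL_threshold.

```python
def should_split(loglik_parent, loglik_yes, loglik_no, mdl_threshold):
    """Split only if the children explain the data better than the parent
    by more than the MDL threshold."""
    return loglik_yes + loglik_no > loglik_parent + mdl_threshold

def best_question(loglik_parent, candidates, mdl_threshold):
    """Pick the question with the largest log-likelihood gain.

    candidates maps a question name to (loglik_yes, loglik_no).
    Returns None when no question passes the threshold.
    """
    best_name, best_gain = None, mdl_threshold
    for name, (loglik_yes, loglik_no) in candidates.items():
        gain = loglik_yes + loglik_no - loglik_parent
        if gain > best_gain:
            best_name, best_gain = name, gain
    return best_name

# Hypothetical log-likelihood sums for one node and two candidate questions.
questions = {
    "current_phone_is_continuant": (-450.0, -480.0),  # gain 70
    "current_phone_is_vowel": (-470.0, -490.0),       # gain 40
}
print(best_question(-1000.0, questions, mdl_threshold=50.0))
```

Applying `best_question` recursively to the resulting "yes" and "no" subsets, until it returns `None`, gives the top-down clustering described on the slide.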

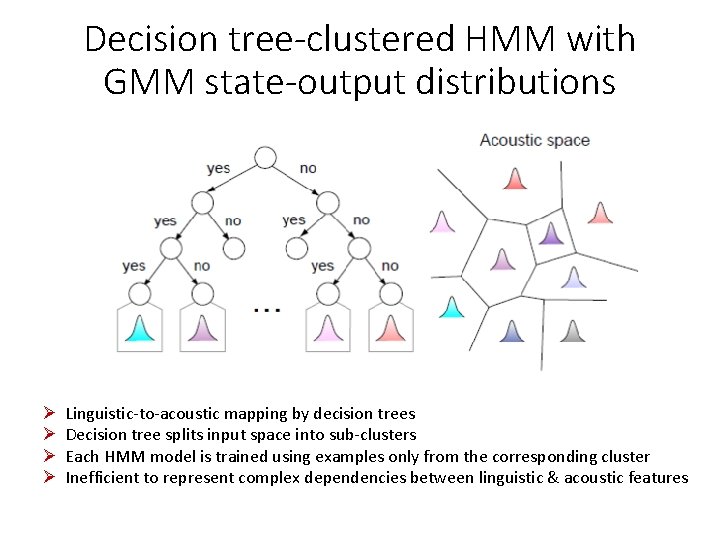

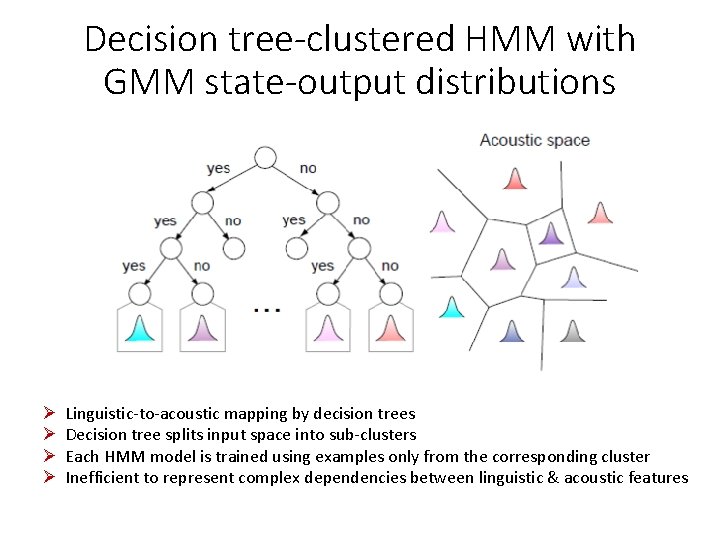

Decision tree-clustered HMM with GMM state-output distributions

- Linguistic-to-acoustic mapping by decision trees
- The decision tree splits the input space into sub-clusters.
- Each HMM model is trained using examples only from the corresponding cluster.
- Inefficient for representing complex dependencies between linguistic and acoustic features

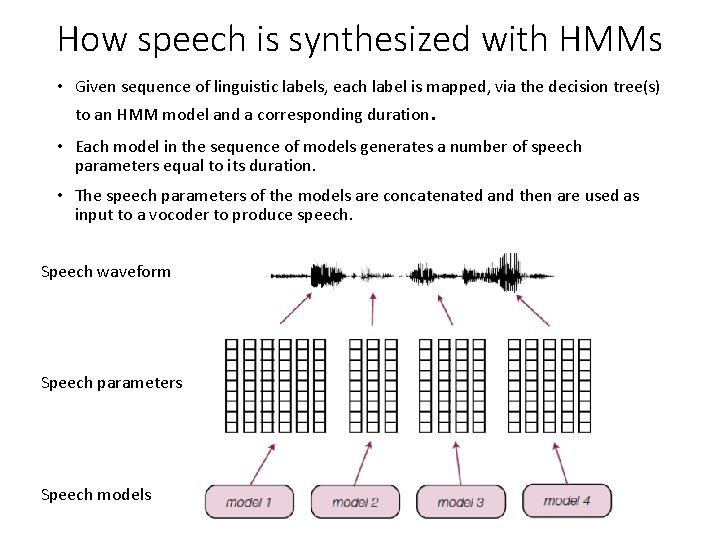

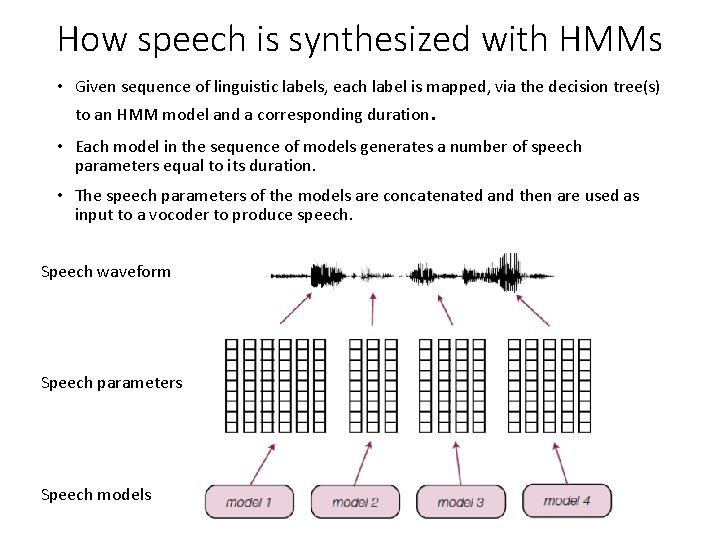

How speech is synthesized with HMMs

- Given a sequence of linguistic labels, each label is mapped, via the decision tree(s), to an HMM model and a corresponding duration.
- Each model in the sequence generates a number of speech parameter frames equal to its duration.
- The speech parameters of the models are concatenated and then used as input to a vocoder to produce speech.

[Figure: speech models → speech parameters → speech waveform.]
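The concatenation step can be sketched as follows. This is a deliberate simplification: each model is reduced to a single mean vector that is emitted once per frame of its duration, without the smoothing over dynamic features that a real system would typically apply. The function name and the toy values are hypothetical.

```python
def generate_parameters(model_sequence):
    """Concatenate per-model output frames.

    model_sequence is a list of (mean_vector, duration_in_frames) pairs,
    one per selected model. Each model contributes `duration` copies of
    its mean vector.
    """
    frames = []
    for mean, duration in model_sequence:
        frames.extend(list(mean) for _ in range(duration))
    return frames

# Two hypothetical models with 2-dimensional outputs.
sequence = [([1.0, 2.0], 2), ([3.0, 4.0], 3)]
trajectory = generate_parameters(sequence)
print(len(trajectory))  # 2 + 3 frames, ready to be passed to a vocoder
```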

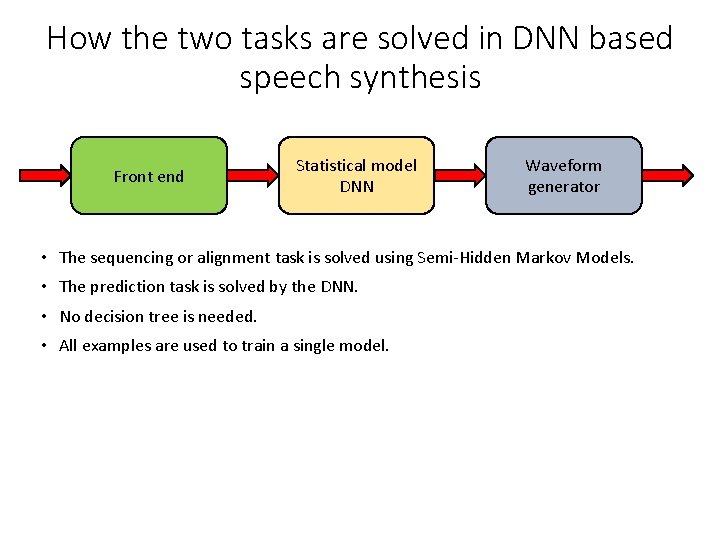

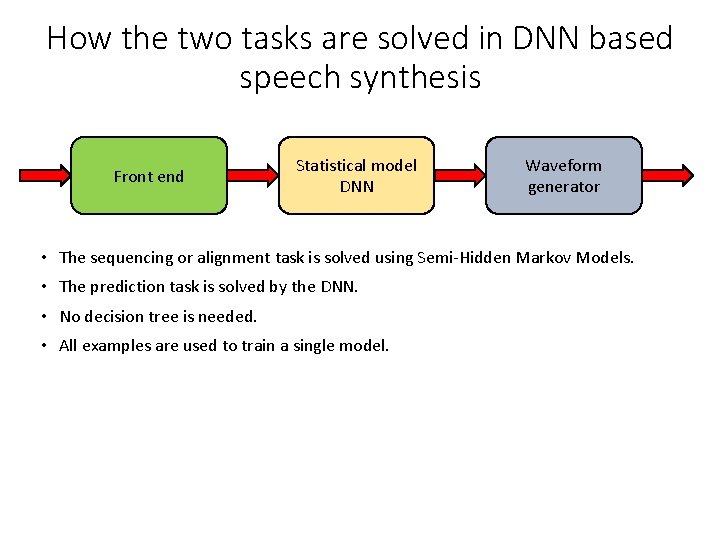

How the two tasks are solved in DNN-based speech synthesis

- The sequencing (alignment) task is solved using hidden semi-Markov models (HSMMs).
- The prediction task is solved by the DNN.
- No decision tree is needed.
- All examples are used to train a single model.

[Figure: front end → statistical model (DNN) → waveform generator.]

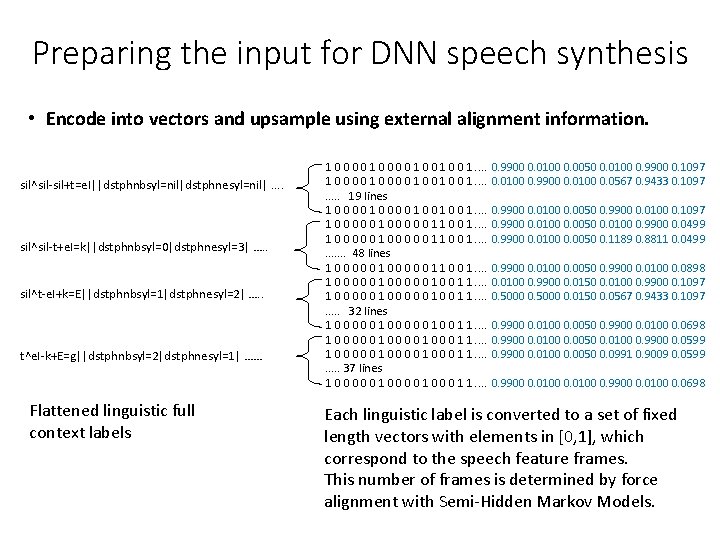

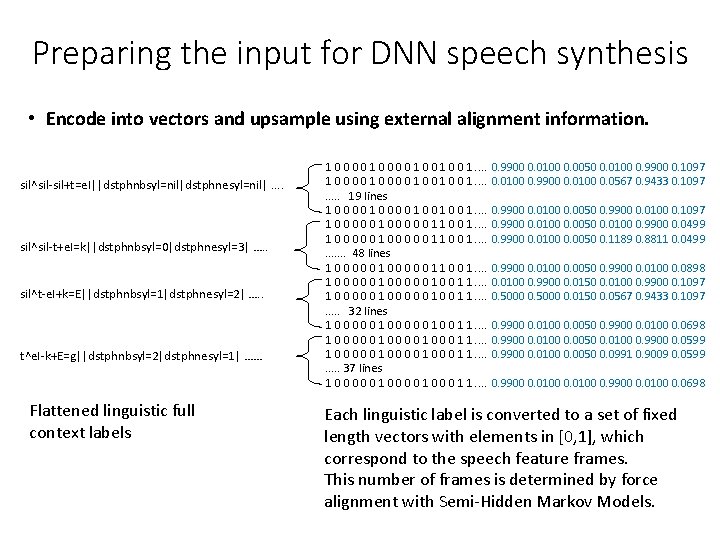

Preparing the input for DNN speech synthesis

- Encode into vectors and upsample using external alignment information.

Flattened linguistic full-context labels:

- sil^sil-sil+t=eI||dstphnbsyl=nil|dstphnesyl=nil|…
- sil^sil-t+eI=k||dstphnbsyl=0|dstphnesyl=3|…
- sil^t-eI+k=E||dstphnbsyl=1|dstphnesyl=2|…
- t^eI-k+E=g||dstphnbsyl=2|dstphnesyl=1|…

[Figure: each of the four labels is expanded into a block of binary rows (19, 48, 32, and 37 lines, respectively), together with numeric columns whose values lie in [0, 1], e.g., 0.9900, 0.0100, 0.0050, 0.1097.]

Each linguistic label is converted to a set of fixed-length vectors with elements in [0, 1], one for each speech feature frame that the label spans. The number of frames is determined by forced alignment with hidden semi-Markov models.
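A minimal sketch of the encode-and-upsample step, under simplifying assumptions: the only linguistic feature is the current phone (one-hot encoded over a tiny hypothetical inventory), and the only numeric feature is the relative position of the frame within the phone. Real full-context labels carry many more binary and numeric features; the names `PHONES`, `encode_phone`, and `upsample` are illustrative only.

```python
# Hypothetical phone inventory, taken from the phones visible in the labels.
PHONES = ["sil", "t", "eI", "k"]

def encode_phone(phone):
    """One-hot encode a phone as a fixed-length vector with values in [0, 1]."""
    return [1.0 if p == phone else 0.0 for p in PHONES]

def upsample(label_vectors, durations):
    """Repeat each label vector once per frame and append a position feature.

    durations[i] is the number of frames aligned to label i (for example,
    from forced alignment). The appended feature is the fraction of the
    label that has elapsed at that frame, which also lies in (0, 1].
    """
    frames = []
    for vector, duration in zip(label_vectors, durations):
        for i in range(duration):
            frames.append(vector + [(i + 1) / duration])
    return frames

inputs = upsample([encode_phone("t"), encode_phone("eI")], [2, 3])
for row in inputs:
    print(row)
```

After this step the input has one vector per frame, so it has the same length as the sequence of speech feature frames.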

Preparing the targets for DNN speech synthesis

- Output: the speech parameters, usually mcep, lf0, vuv, bap, and phi, are normalized to have zero mean and unit standard deviation.
- Optionally, delta and delta-delta features are also used.
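The target preparation can be sketched as below. Two assumptions are made that the slides do not state: constant dimensions (zero standard deviation) are left unscaled, and the delta features use the central-difference window 0.5 * (x[t+1] - x[t-1]) with edge frames repeated. Function names are hypothetical.

```python
import statistics

def normalize(frames):
    """Mean-variance normalize each dimension over all frames."""
    columns = list(zip(*frames))
    means = [statistics.fmean(c) for c in columns]
    # Fall back to 1.0 for constant dimensions to avoid division by zero.
    stds = [statistics.pstdev(c) or 1.0 for c in columns]
    normalized = [
        [(x - m) / s for x, m, s in zip(frame, means, stds)]
        for frame in frames
    ]
    return normalized, means, stds

def deltas(frames):
    """Central-difference delta features, repeating the edge frames."""
    last = len(frames) - 1
    result = []
    for t in range(len(frames)):
        previous = frames[max(t - 1, 0)]
        following = frames[min(t + 1, last)]
        result.append([0.5 * (b - a) for a, b in zip(previous, following)])
    return result

targets = [[1.0, 10.0], [2.0, 10.0], [3.0, 10.0]]
normalized, means, stds = normalize(targets)
print(means, stds)
print(deltas(targets))
```

Delta-delta features can be obtained by applying `deltas` to its own output. The stored `means` and `stds` are needed at synthesis time to map the network output back to the original scale.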

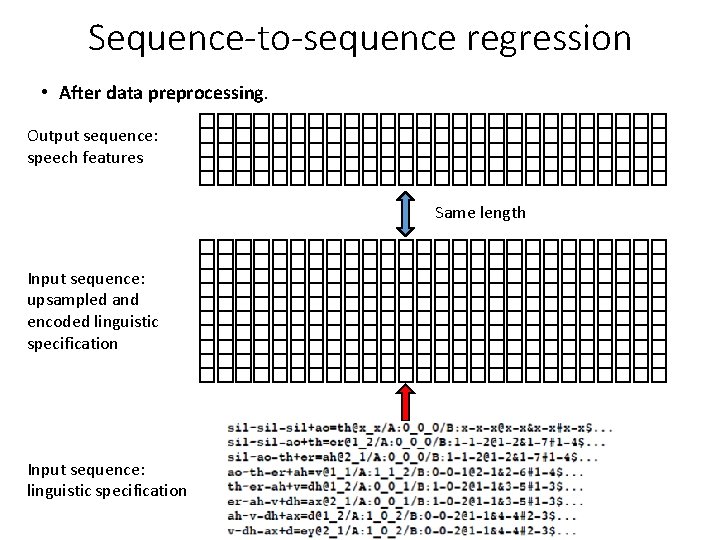

Sequence-to-sequence regression

- After data preprocessing.

[Figure: the input sequence (linguistic specification) is upsampled and encoded, so that the resulting input sequence has the same length as the output sequence (speech features).]

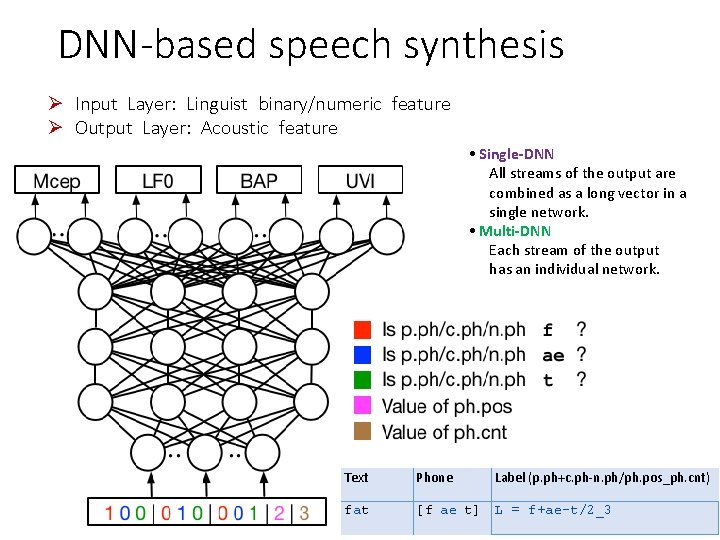

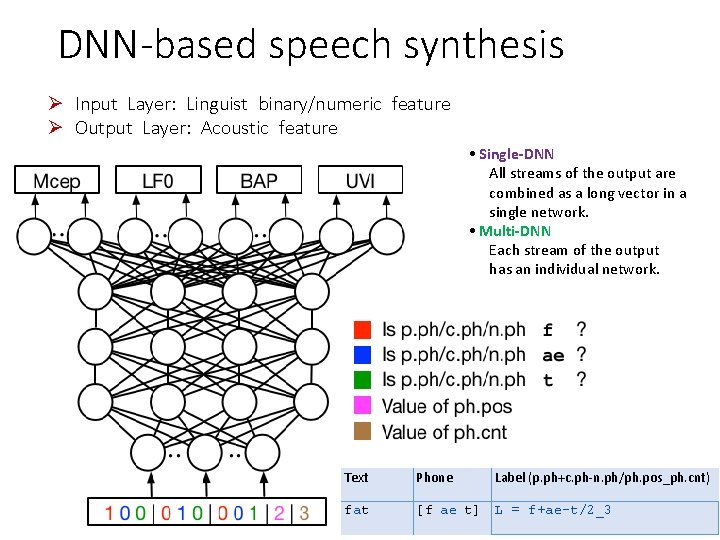

DNN-based speech synthesis

- Input layer: linguistic binary/numeric features
- Output layer: acoustic features
- Single-DNN: all streams of the output are combined as one long vector in a single network.
- Multi-DNN: each stream of the output has an individual network.

[Figure: text → phone → label, with label format p.ph+c.ph-n.ph/ph.pos_ph.cnt. Example: "fat" [f ae t] gives L = f+ae-t/2_3.]
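The label format p.ph+c.ph-n.ph/ph.pos_ph.cnt (previous phone, current phone, next phone, position of the phone, phone count) can be reproduced with a short sketch. The padding symbol used at the word boundaries is an assumption, since the slide only shows the label of the middle phone.

```python
def phone_labels(phones, pad="sil"):
    """Build one label per phone in the format prev+cur-next/pos_count.

    `pad` is a hypothetical placeholder for the missing neighbour at the
    first and last phone.
    """
    count = len(phones)
    labels = []
    for i, current in enumerate(phones):
        previous = phones[i - 1] if i > 0 else pad
        following = phones[i + 1] if i + 1 < count else pad
        labels.append(f"{previous}+{current}-{following}/{i + 1}_{count}")
    return labels

# The slide's example: "fat" is pronounced [f ae t].
for label in phone_labels(["f", "ae", "t"]):
    print(label)
```

The second label printed corresponds to the slide's example, L = f+ae-t/2_3.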

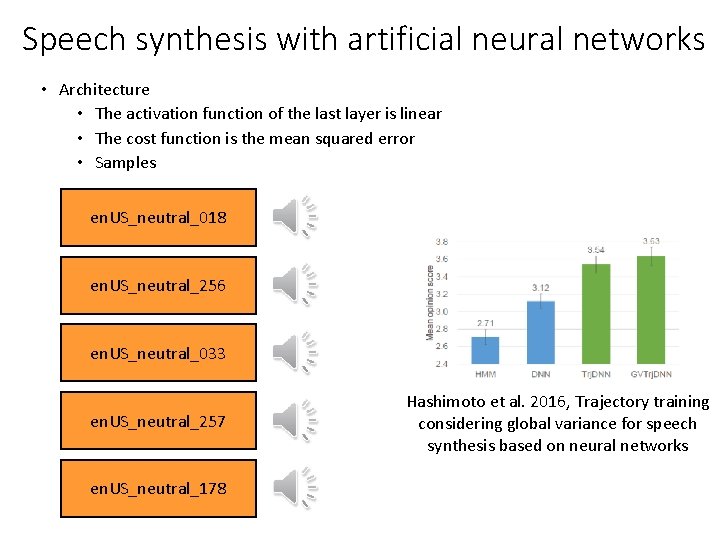

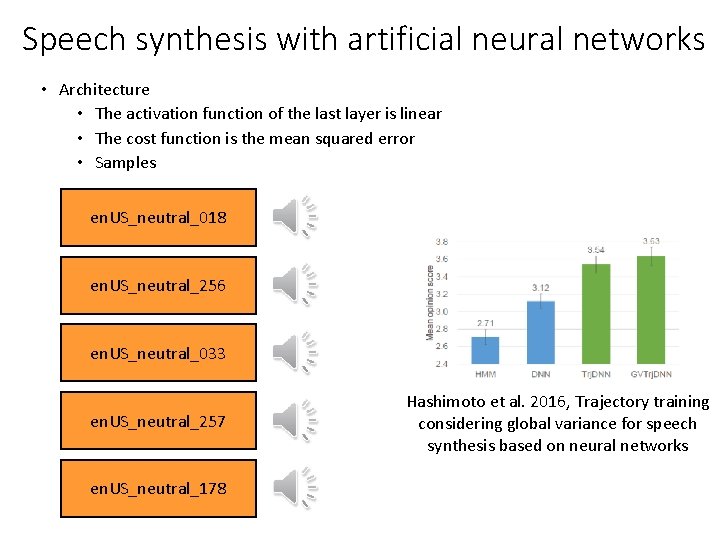

Speech synthesis with artificial neural networks

- Architecture
  - The activation function of the last layer is linear.
  - The cost function is the mean squared error.
- Samples: en. US_neutral_018, en. US_neutral_256, en. US_neutral_033, en. US_neutral_257, en. US_neutral_178

Hashimoto et al. 2016, "Trajectory training considering global variance for speech synthesis based on neural networks"
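The two architectural choices named on this slide, a linear last layer and a mean squared error cost, can be illustrated with a tiny feed-forward sketch in plain Python. The single tanh hidden layer, the layer sizes, and the weights are assumptions made for the example; the slides do not specify them.

```python
import math

def dense(x, weights, biases):
    """Affine layer: one output per weight row."""
    return [
        sum(w * xi for w, xi in zip(row, x)) + b
        for row, b in zip(weights, biases)
    ]

def forward(x, hidden_w, hidden_b, out_w, out_b):
    """One tanh hidden layer followed by a linear output layer."""
    hidden = [math.tanh(v) for v in dense(x, hidden_w, hidden_b)]
    # No activation on the last layer: outputs are unbounded, which suits
    # regression onto normalized acoustic features.
    return dense(hidden, out_w, out_b)

def mse(prediction, target):
    """Mean squared error over the output dimensions of one frame."""
    return sum((p - t) ** 2 for p, t in zip(prediction, target)) / len(target)

# Hypothetical 2-input, 2-hidden, 1-output network with zero hidden weights.
prediction = forward(
    [1.0, 0.0],
    hidden_w=[[0.0, 0.0], [0.0, 0.0]], hidden_b=[0.0, 0.0],
    out_w=[[1.0, 1.0]], out_b=[0.5],
)
print(prediction, mse(prediction, [1.5]))
```

In training, this loss would be averaged over all frames and minimized with gradient descent; that part is omitted here.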