
Speech Segregation
DeLiang Wang
Perception & Neurodynamics Lab
Ohio State University
http://www.cse.ohio-state.edu/pnl/
ICASSP'10 tutorial

Outline of presentation
I. Introduction: Speech segregation problem
II. Auditory scene analysis (ASA)
III. Speech enhancement
IV. Speech segregation by computational auditory scene analysis (CASA)
V. Segregation as binary classification
VI. Concluding remarks

Real-world audition
• What?
  – Speech: message; speaker age, gender, linguistic origin, mood, …
  – Music
  – Car passing by
• Where?
  – Left, right, up, down
  – How close?
• Channel characteristics
• Environment characteristics
  – Room reverberation
  – Ambient noise

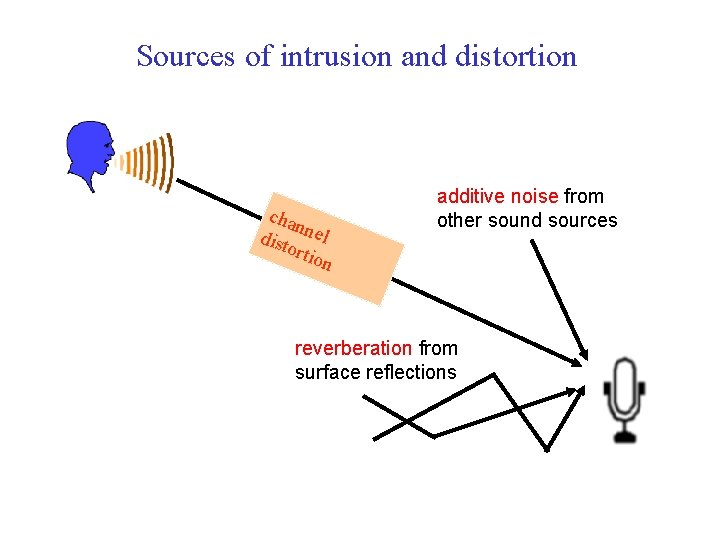

Sources of intrusion and distortion
• Additive noise from other sound sources
• Reverberation from surface reflections
• Channel distortion

Cocktail party problem
• Term coined by Cherry
  – “One of our most important faculties is our ability to listen to, and follow, one speaker in the presence of others. This is such a common experience that we may take it for granted; we may call it ‘the cocktail party problem’…” (Cherry, 1957)
  – “For ‘cocktail party’-like situations… when all voices are equally loud, speech remains intelligible for normal-hearing listeners even when there are as many as six interfering talkers” (Bronkhorst & Plomp, 1992)
• Ball-room problem by Helmholtz
  – “Complicated beyond conception” (Helmholtz, 1863)
• Speech segregation problem

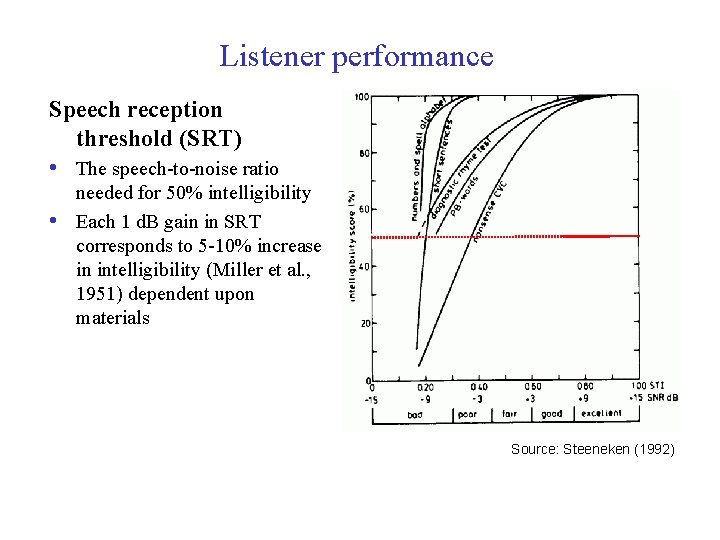

Listener performance
• Speech reception threshold (SRT)
  – The speech-to-noise ratio needed for 50% intelligibility
• Each 1 dB gain in SRT corresponds to a 5-10% increase in intelligibility (Miller et al., 1951), dependent upon materials
Source: Steeneken (1992)

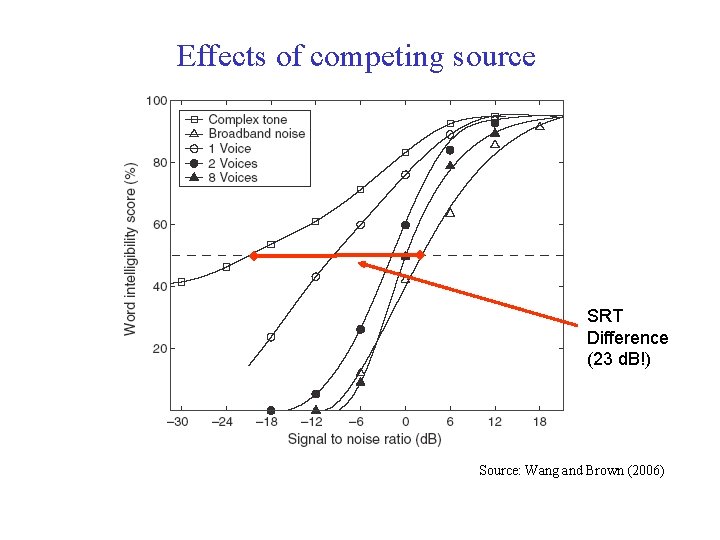

Effects of competing source
SRT difference (23 dB!)
Source: Wang and Brown (2006)

Some applications of speech segregation
• Robust automatic speech and speaker recognition
• Processor for hearing prosthesis
  – Hearing aids
  – Cochlear implants
• Audio information retrieval

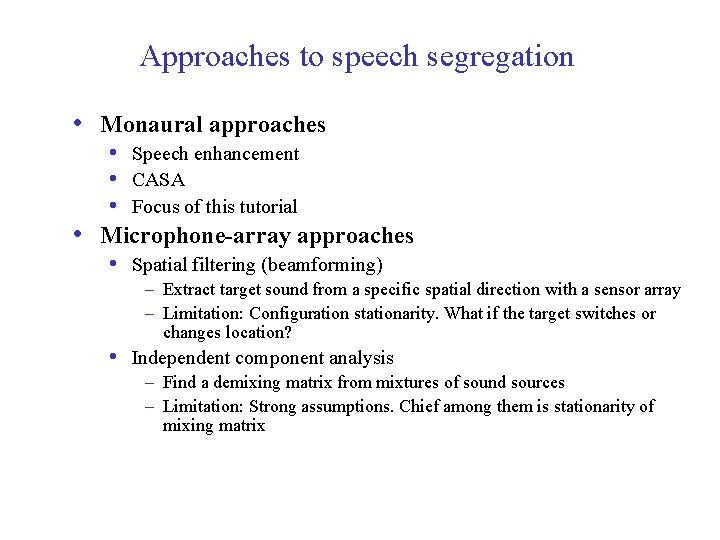

Approaches to speech segregation
• Monaural approaches
  – Speech enhancement
  – CASA
  – Focus of this tutorial
• Microphone-array approaches
  – Spatial filtering (beamforming)
    – Extract target sound from a specific spatial direction with a sensor array
    – Limitation: configuration stationarity. What if the target switches or changes location?
  – Independent component analysis
    – Find a demixing matrix from mixtures of sound sources
    – Limitation: strong assumptions, chief among them stationarity of the mixing matrix

Part II: Auditory scene analysis
• Human auditory system
• How does the human auditory system organize sound?
• Auditory scene analysis account

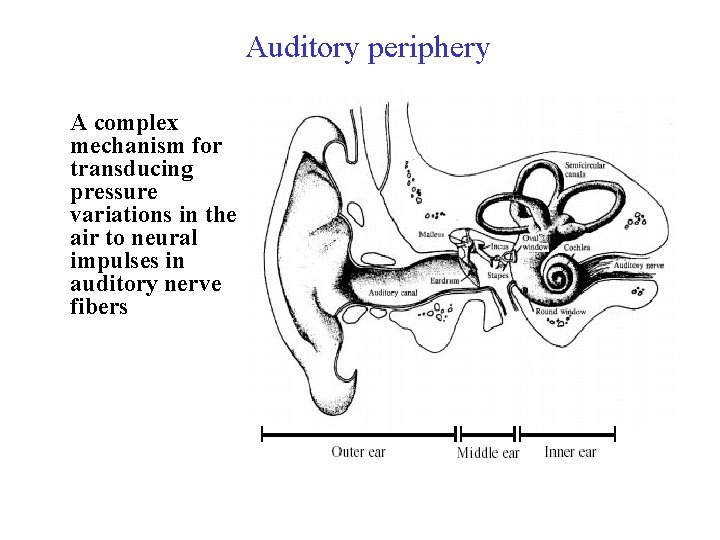

Auditory periphery
A complex mechanism for transducing pressure variations in the air to neural impulses in auditory nerve fibers

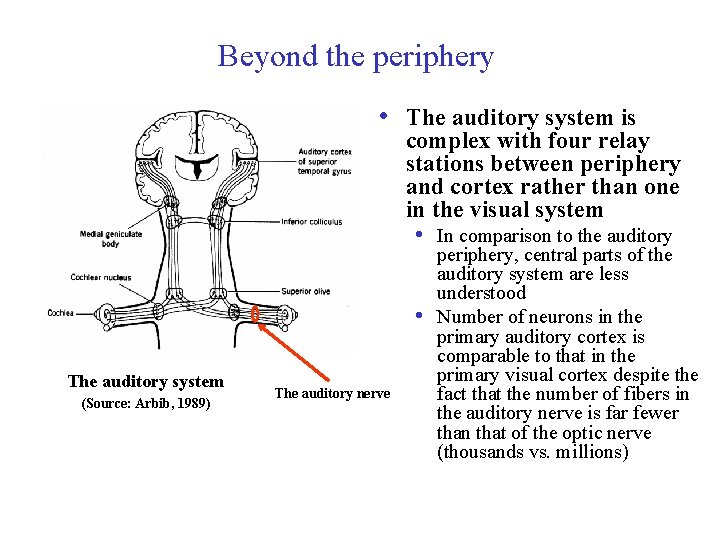

Beyond the periphery
• The auditory system is complex, with four relay stations between the periphery and the cortex, rather than one as in the visual system
• In comparison to the auditory periphery, central parts of the auditory system are less understood
• The number of neurons in the primary auditory cortex is comparable to that in the primary visual cortex, despite the fact that the number of fibers in the auditory nerve is far fewer than that of the optic nerve (thousands vs. millions)
Figure: the auditory system, with the auditory nerve labeled (Source: Arbib, 1989)

Auditory scene analysis
• Listeners are capable of parsing an acoustic scene (a sound mixture) to form a mental representation of each sound source, called a stream, in the perceptual process of auditory scene analysis (Bregman, 1990)
  – From acoustic events to perceptual streams
• Two conceptual processes of ASA:
  – Segmentation. Decompose the acoustic mixture into sensory elements (segments)
  – Grouping. Combine segments into streams, so that segments in the same stream originate from the same source

Simultaneous organization
Groups sound components that overlap in time. ASA cues for simultaneous organization:
• Proximity in frequency (spectral proximity)
• Common periodicity
  – Harmonicity
  – Temporal fine structure
• Common spatial location
• Common onset (and to a lesser degree, common offset)
• Common temporal modulation
  – Amplitude modulation (AM)
  – Frequency modulation (FM)
• Demo

Sequential organization
Groups sound components across time. ASA cues for sequential organization:
• Proximity in time and frequency
• Temporal and spectral continuity
• Common spatial location; more generally, spatial continuity
• Smooth pitch contour
• Smooth formant transition?
• Rhythmic structure
Demo: streaming in African xylophone music
  – Notes in pentatonic scale

Primitive versus schema-based organization
• Primitive grouping. Innate data-driven mechanisms, consistent with those described by Gestalt psychologists for visual perception; feature-based or bottom-up
  – It is domain-general, and exploits intrinsic structure of environmental sound
  – Grouping cues described earlier are primitive in nature
• Schema-driven grouping. Learned knowledge about speech, music and other environmental sounds; model-based or top-down
  – It is domain-specific, e.g. organization of speech sounds into syllables

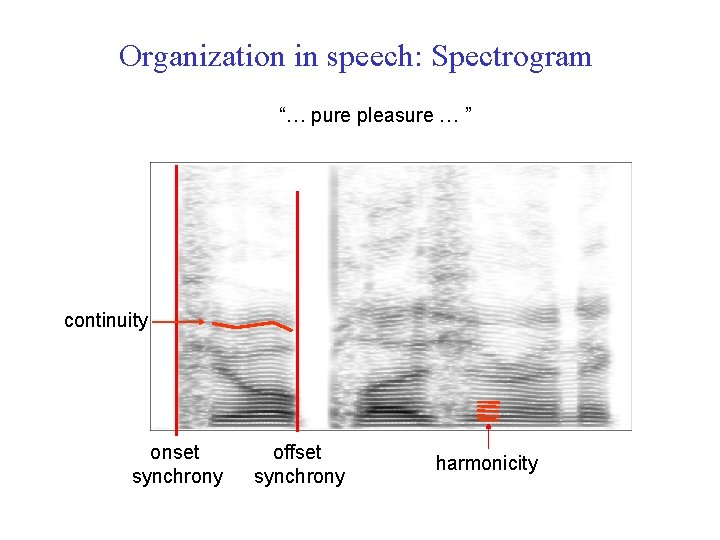

Organization in speech: Spectrogram
Figure: spectrogram of “… pure pleasure …”, annotated with continuity, onset synchrony, offset synchrony and harmonicity

Interim summary of ASA
• Auditory peripheral processing amounts to a decomposition of the acoustic signal
• ASA cues essentially reflect structural coherence of natural sound sources
• A subset of cues is believed to be strongly involved in ASA
  – Simultaneous organization: periodicity, temporal modulation, onset
  – Sequential organization: location, pitch contour and other source characteristics (e.g. vocal tract)

Part III. Speech enhancement
• Speech enhancement aims to remove or reduce background noise
  – Improve signal-to-noise ratio (SNR)
  – Assumes stationary noise, or at least that noise is more stationary than speech
  – A tradeoff between speech distortion and noise distortion (residual noise)
• Types of speech enhancement algorithms
  – Spectral subtraction
  – Wiener filtering
  – Minimum mean square error (MMSE) estimation
  – Subspace algorithms
• Material in this part is mainly based on Loizou (2007)

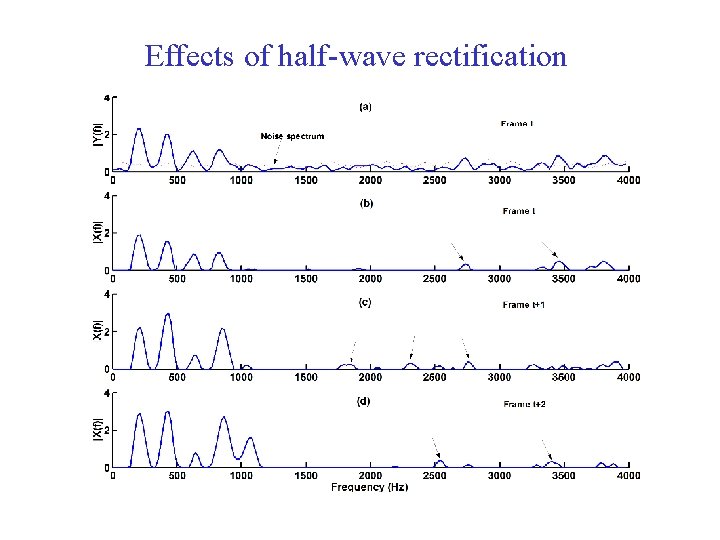

Spectral subtraction
• It is based on a simple principle: assuming additive noise, one can obtain an estimate of the clean signal spectrum by subtracting an estimate of the noise spectrum from the noisy speech spectrum
• The noise spectrum can be estimated (and updated) during periods when the speech signal is absent or when only noise is present
  – It requires voice activity detection or speech pause detection

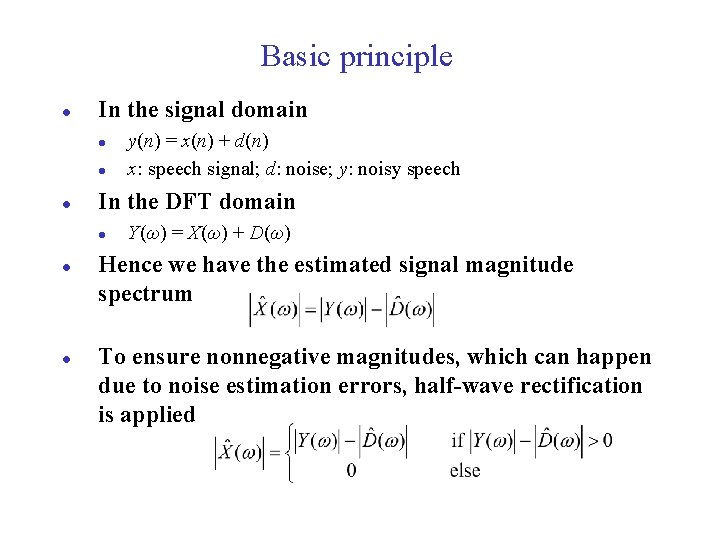

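Basic principle
• In the signal domain
  – $y(n) = x(n) + d(n)$
  – x: speech signal; d: noise; y: noisy speech
• In the DFT domain
  – $Y(\omega) = X(\omega) + D(\omega)$
  – Hence we have the estimated signal magnitude spectrum $|\hat{X}(\omega)| = |Y(\omega)| - |\hat{D}(\omega)|$, where $|\hat{D}(\omega)|$ is the estimated noise magnitude spectrum
  – Because noise estimation errors can make this difference negative, half-wave rectification is applied to ensure nonnegative magnitudes: $|\hat{X}(\omega)| = \max\big(|Y(\omega)| - |\hat{D}(\omega)|,\ 0\big)$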

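Basic principle (cont.)
• Assuming that speech and noise are uncorrelated, we have the estimated signal power spectrum $|\hat{X}(\omega)|^2 = |Y(\omega)|^2 - |\hat{D}(\omega)|^2$
• In general, $|\hat{X}(\omega)|^p = |Y(\omega)|^p - |\hat{D}(\omega)|^p$, where p = 1 gives magnitude subtraction and p = 2 power subtraction
  – Again, half-wave rectification needs to be applied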

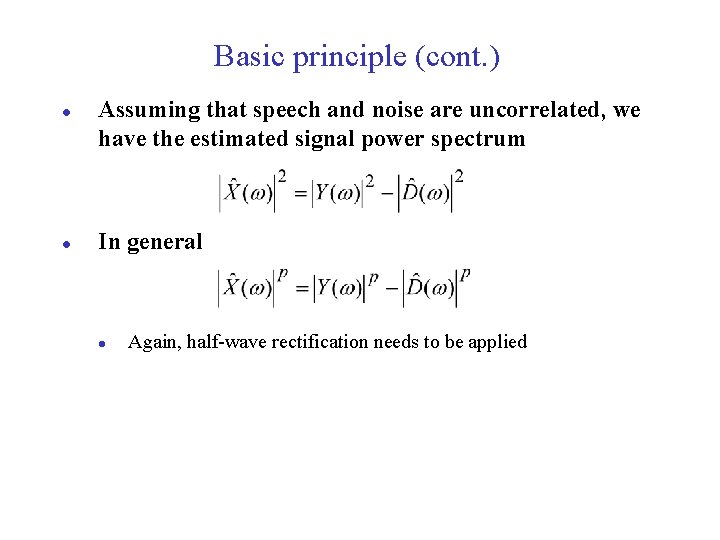

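Flow diagram
Figure: noisy speech passes through an FFT; the noise estimate (from noise estimation/update) is subtracted at the summing node (+); the result is combined with the phase information and passed through an IFFT to produce enhanced speech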

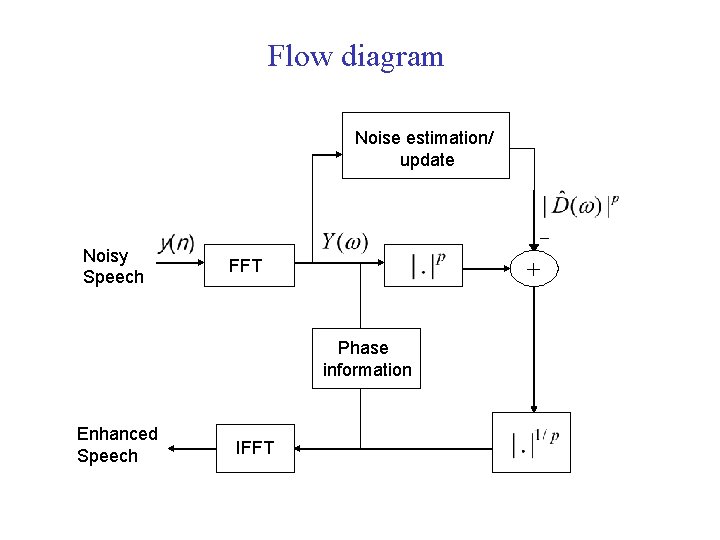
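The subtraction rule can be sketched per frame in Python, following the flow diagram: take the magnitude of each noisy DFT bin, subtract the noise estimate, half-wave rectify, and reattach the noisy phase. This is a minimal sketch, assuming the frame is already a list of complex DFT bins and that `noise_mag` comes from a separate noise estimator (not shown); `subtract_spectrum` and `enhance_frame` are hypothetical names. The exponent `p` selects magnitude (p = 1) or power (p = 2) subtraction.

```python
import cmath


def subtract_spectrum(noisy_mag, noise_mag, p=2.0):
    """Return |X_hat| per bin from |X_hat|^p = |Y|^p - |D_hat|^p.

    Negative differences (caused by noise over-estimation) are set to
    zero, i.e. half-wave rectification.
    """
    clean = []
    for y, d in zip(noisy_mag, noise_mag):
        diff = y ** p - d ** p
        clean.append(max(diff, 0.0) ** (1.0 / p))
    return clean


def enhance_frame(noisy_spec, noise_mag, p=2.0):
    """Subtract the noise estimate and recombine with the noisy phase.

    The result would then go through an IFFT (not shown) to obtain the
    enhanced time-domain frame.
    """
    mags = subtract_spectrum([abs(y) for y in noisy_spec], noise_mag, p)
    return [m * cmath.exp(1j * cmath.phase(y)) for m, y in zip(mags, noisy_spec)]


# A 3+4j bin (magnitude 5) with noise magnitude 3 keeps magnitude 4 and
# its phase; the second bin is rectified to zero.
print(enhance_frame([3 + 4j, 1 + 0j], [3.0, 2.0]))
```

Reusing the noisy phase keeps the sketch aligned with the diagram, where only the magnitude spectrum is modified.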

Effects of half-wave rectification

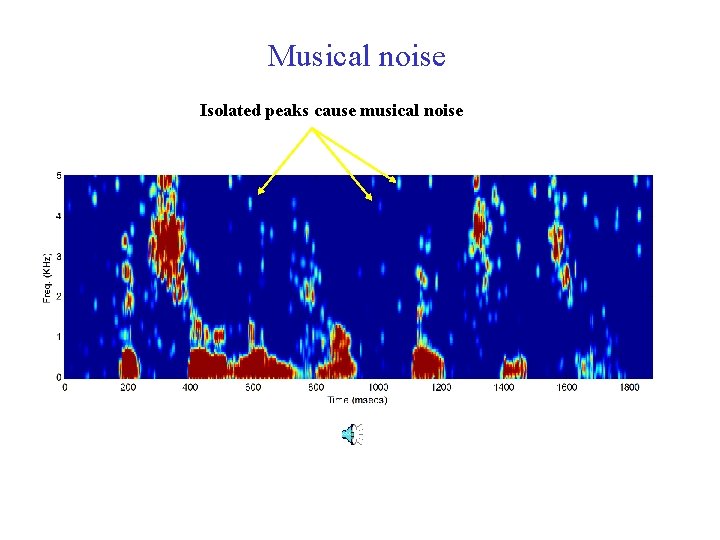

Musical noise
Isolated peaks cause musical noise

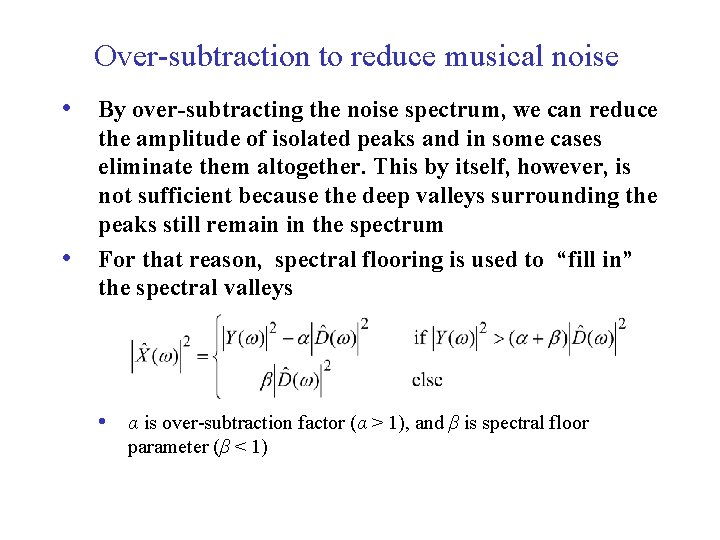

Over-subtraction to reduce musical noise
• By over-subtracting the noise spectrum, we can reduce the amplitude of isolated peaks and in some cases eliminate them altogether. This by itself, however, is not sufficient, because the deep valleys surrounding the peaks still remain in the spectrum
• For that reason, spectral flooring is used to “fill in” the spectral valleys:
  $|\hat{X}(\omega)|^2 = |Y(\omega)|^2 - \alpha|\hat{D}(\omega)|^2$ if $|Y(\omega)|^2 > (\alpha + \beta)|\hat{D}(\omega)|^2$, and $|\hat{X}(\omega)|^2 = \beta|\hat{D}(\omega)|^2$ otherwise
• α is the over-subtraction factor (α > 1), and β is the spectral floor parameter (β < 1)
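The over-subtraction rule with a spectral floor can be sketched on power spectra. This is a minimal sketch, assuming per-bin lists of noisy power $|Y(\omega)|^2$ and estimated noise power $|\hat{D}(\omega)|^2$; the function name and default values are illustrative. Setting α = 1, β = 0 reduces it to plain power subtraction with half-wave rectification, the first setting in the sound demo.

```python
def oversubtract(noisy_pow, noise_pow, alpha=3.0, beta=0.01):
    """Power spectral subtraction with over-subtraction and a spectral floor.

    noisy_pow: |Y(w)|^2 per bin; noise_pow: estimated |D(w)|^2 per bin.
    alpha: over-subtraction factor; beta: spectral floor parameter.
    """
    out = []
    for y2, d2 in zip(noisy_pow, noise_pow):
        if y2 > (alpha + beta) * d2:
            out.append(y2 - alpha * d2)  # subtract an inflated noise estimate
        else:
            out.append(beta * d2)  # fill the valley with a scaled noise floor
    return out


# Compare the parameter settings used in the sound demo on the same bins.
for alpha, beta in [(1, 0), (3, 0), (8, 0.1), (8, 1), (15, 0)]:
    print(alpha, beta, oversubtract([10.0, 2.0, 0.5], [1.0, 1.0, 1.0], alpha, beta))
```

Larger α removes more of each isolated peak, while a nonzero β keeps the floor from dropping to zero between peaks.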

Effects of parameters: Sound demo
• Half-wave rectification: α = 1, β = 0
• α = 3, β = 0
• α = 8, β = 0.1
• α = 8, β = 1
• α = 15, β = 0
• Noisy sentence (+5 dB SNR)
• Original (clean) sentence

Wiener filter
• Aim: to find the optimal filter that minimizes the mean square error between the desired signal (clean signal) and the estimated output
• Input to this filter: noisy speech
• Output of this filter: enhanced speech

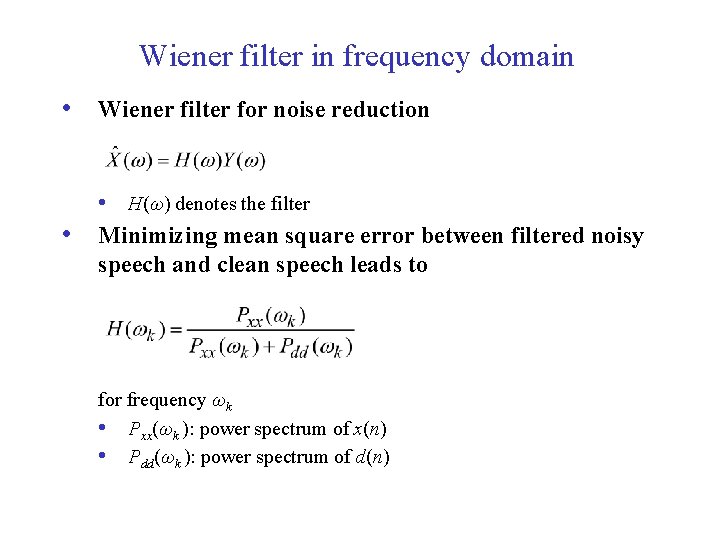

Wiener filter in frequency domain
• Wiener filter for noise reduction: $\hat{X}(\omega) = H(\omega)\,Y(\omega)$
  – H(ω) denotes the filter
• Minimizing the mean square error between the filtered noisy speech and the clean speech leads to
  $H(\omega_k) = \frac{P_{xx}(\omega_k)}{P_{xx}(\omega_k) + P_{dd}(\omega_k)}$ for frequency ω_k
  – $P_{xx}(\omega_k)$: power spectrum of x(n)
  – $P_{dd}(\omega_k)$: power spectrum of d(n)

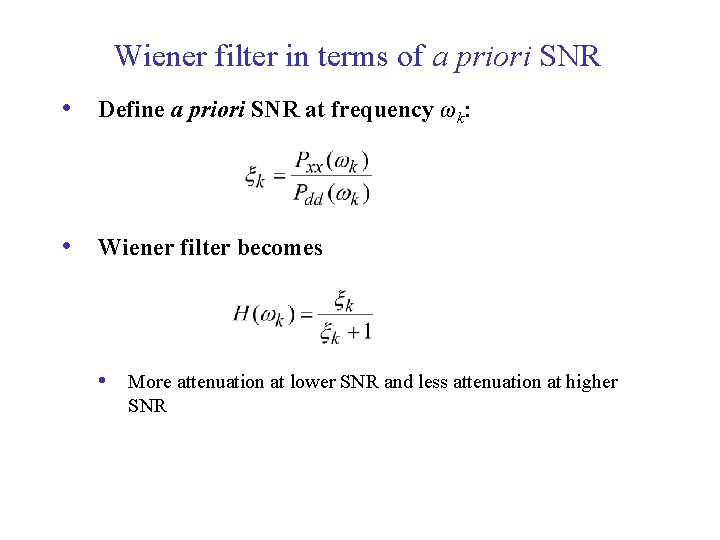

Wiener filter in terms of a priori SNR
• Define the a priori SNR at frequency ω_k: $\xi_k = \frac{P_{xx}(\omega_k)}{P_{dd}(\omega_k)}$
• The Wiener filter becomes $H(\omega_k) = \frac{\xi_k}{\xi_k + 1}$
• More attenuation at lower SNR and less attenuation at higher SNR

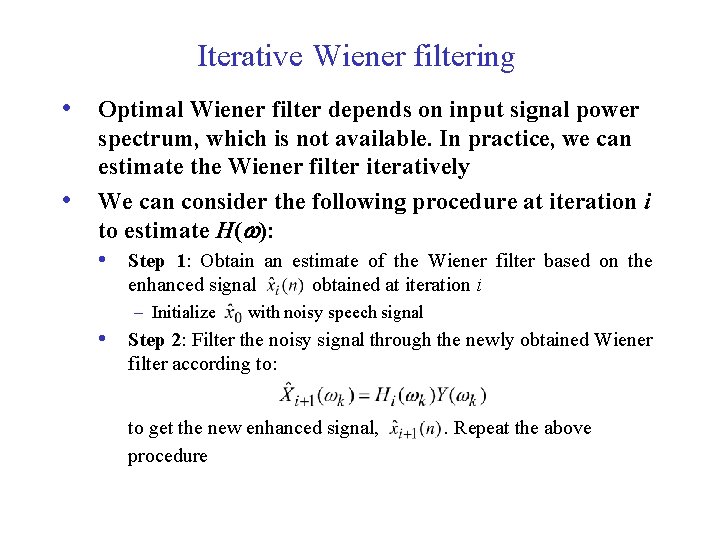
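Both forms of the Wiener gain can be checked numerically. This is a minimal sketch with hypothetical function names; the loop prints the attenuation in dB at a priori SNRs of -10, 0 and 10 dB, illustrating more attenuation at lower SNR.

```python
import math


def wiener_gain(pxx, pdd):
    """Frequency-domain Wiener gain H = Pxx / (Pxx + Pdd) for one bin."""
    return pxx / (pxx + pdd)


def wiener_gain_from_snr(xi):
    """The same gain written in terms of the a priori SNR xi = Pxx / Pdd."""
    return xi / (xi + 1.0)


# Attenuation in dB at a few a priori SNRs.
for snr_db in (-10, 0, 10):
    xi = 10 ** (snr_db / 10)
    print(snr_db, round(20 * math.log10(wiener_gain_from_snr(xi)), 2))
```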

Iterative Wiener filtering
• The optimal Wiener filter depends on the input signal power spectrum, which is not available. In practice, we can estimate the Wiener filter iteratively
• We can consider the following procedure at iteration i to estimate $H(\omega)$:
  – Step 1: Obtain an estimate of the Wiener filter $H_i(\omega)$ based on the enhanced signal $\hat{x}_i(n)$ obtained at iteration i. Initialize with the noisy speech signal, $\hat{x}_0(n) = y(n)$
  – Step 2: Filter the noisy signal through the newly obtained Wiener filter according to $\hat{X}_{i+1}(\omega) = H_i(\omega)\,Y(\omega)$ to get the new enhanced signal $\hat{x}_{i+1}(n)$. Repeat the above procedure

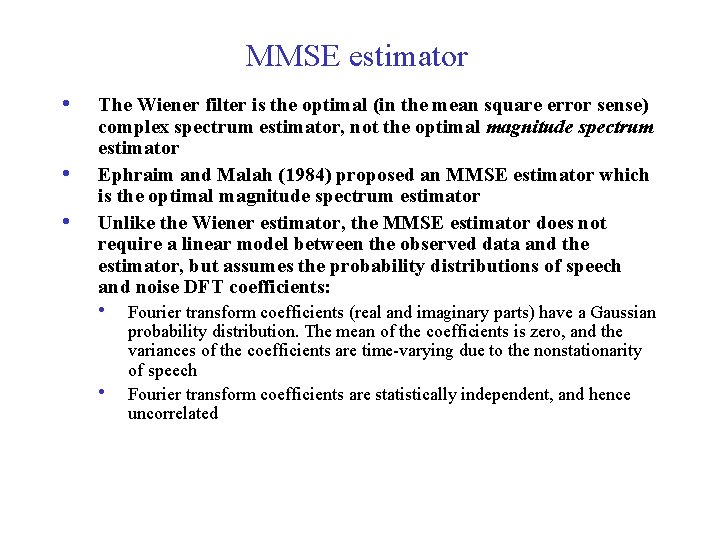

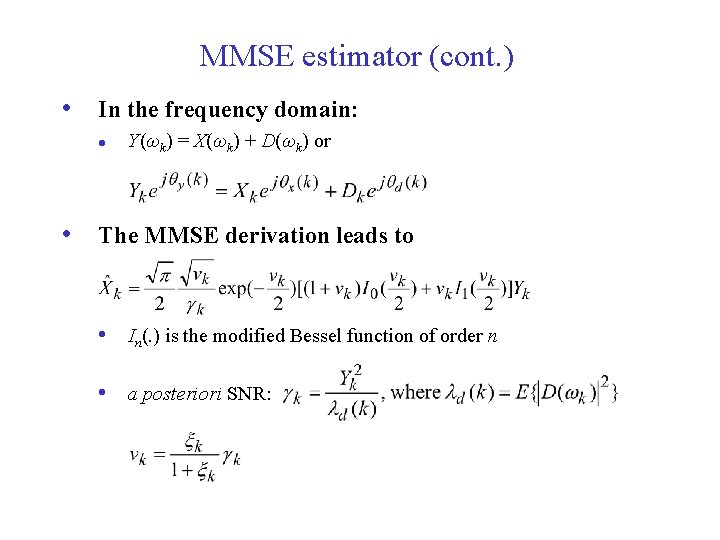
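The two steps can be sketched per DFT bin. This is a simplified sketch, assuming a known noise power spectrum and taking the clean power spectrum at iteration i as the raw $|\hat{X}_i(\omega)|^2$ of the current estimate; practical versions usually estimate a smoothed (e.g. all-pole) spectrum instead. The function name is hypothetical.

```python
def iterative_wiener(noisy_spec, noise_pow, iterations=3):
    """Per-bin iterative Wiener filtering (simplified sketch).

    noisy_spec: complex DFT bins Y(w) of one frame.
    noise_pow: known noise power spectrum Pdd(w) per bin (assumed > 0).
    Iteration 0 uses the noisy spectrum as the enhanced estimate.
    """
    estimate = list(noisy_spec)
    for _ in range(iterations):
        # Step 1: Wiener filter from the current enhanced estimate.
        gains = [abs(x) ** 2 / (abs(x) ** 2 + d) for x, d in zip(estimate, noise_pow)]
        # Step 2: filter the original noisy spectrum with the new filter.
        estimate = [h * y for h, y in zip(gains, noisy_spec)]
    return estimate


print(iterative_wiener([2 + 0j, 0.5 + 0.5j], [1.0, 1.0], iterations=2))
```

Each pass filters the noisy spectrum Y(ω) rather than the previous output, as in Step 2. In this raw-periodogram form the estimate shrinks monotonically from one iteration to the next.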

MMSE estimator
• The Wiener filter is the optimal (in the mean square error sense) complex spectrum estimator, not the optimal magnitude spectrum estimator
• Ephraim and Malah (1984) proposed an MMSE estimator which is the optimal magnitude spectrum estimator
• Unlike the Wiener estimator, the MMSE estimator does not require a linear model between the observed data and the estimator, but assumes probability distributions of speech and noise DFT coefficients:
  – Fourier transform coefficients (real and imaginary parts) have a Gaussian probability distribution. The mean of the coefficients is zero, and the variances of the coefficients are time-varying due to the nonstationarity of speech
  – Fourier transform coefficients are statistically independent, and hence uncorrelated

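MMSE estimator (cont.)
• In the frequency domain: $Y(\omega_k) = X(\omega_k) + D(\omega_k)$, or in polar form $Y_k e^{j\theta_y(k)} = X_k e^{j\theta_x(k)} + D_k e^{j\theta_d(k)}$
• The MMSE derivation leads to
  $\hat{X}_k = \frac{\sqrt{\pi}}{2}\,\frac{\sqrt{v_k}}{\gamma_k}\,\exp\!\left(-\frac{v_k}{2}\right)\left[(1 + v_k)\,I_0\!\left(\frac{v_k}{2}\right) + v_k\,I_1\!\left(\frac{v_k}{2}\right)\right] Y_k$, with $v_k = \frac{\xi_k}{1 + \xi_k}\,\gamma_k$
• $I_n(\cdot)$ is the modified Bessel function of order n
• a posteriori SNR: $\gamma_k = \frac{Y_k^2}{\lambda_d(k)}$, where $\lambda_d(k) = E[D_k^2]$ is the noise variance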

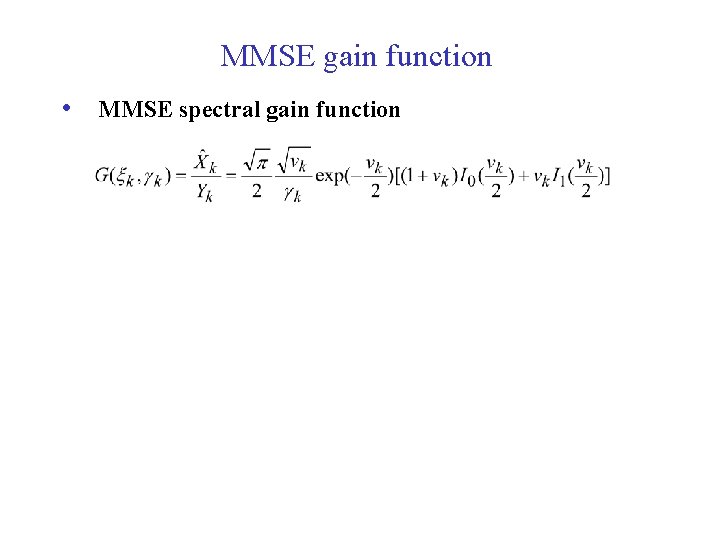

MMSE gain function
• MMSE spectral gain function: $G(\xi_k, \gamma_k) = \hat{X}_k / Y_k$

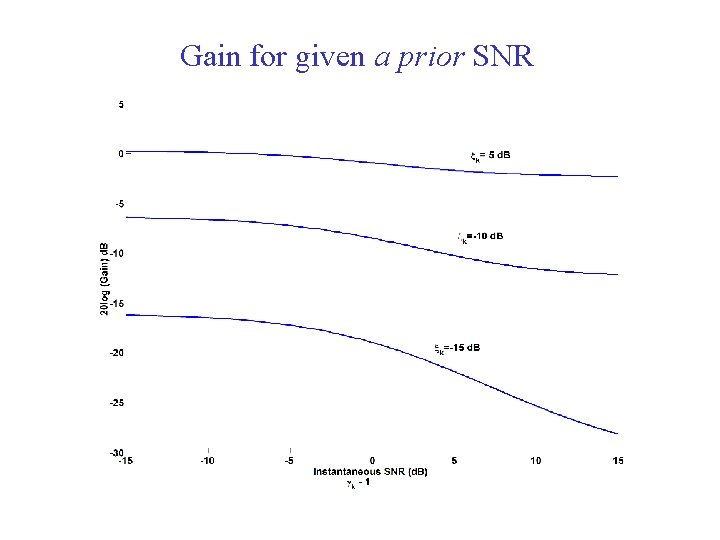
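The gain function can be evaluated directly from the Ephraim-Malah formula. This is a minimal sketch using only the Python standard library, so the modified Bessel functions are computed from their power series; `bessel_i` and `mmse_stsa_gain` are hypothetical names. The series and the unscaled `exp` are adequate for moderate SNRs only; a production version would more likely use exponentially scaled Bessel functions from a numerical library.

```python
import math


def bessel_i(n, x, max_terms=500):
    """Modified Bessel function I_n(x) from its power series (small integer n)."""
    term = (x / 2.0) ** n / math.factorial(n)
    total = 0.0
    for k in range(max_terms):
        total += term
        # Ratio of consecutive series terms: (x/2)^2 / ((k+1)(k+1+n)).
        term *= (x / 2.0) ** 2 / ((k + 1) * (k + 1 + n))
        if term <= 1e-17 * total:
            break
    return total


def mmse_stsa_gain(xi, gamma):
    """MMSE magnitude gain G(xi, gamma) = X_hat_k / Y_k.

    xi: a priori SNR; gamma: a posteriori SNR (both linear, > 0).
    Intended for moderate SNRs: the series and exp overflow for very large v.
    """
    v = xi * gamma / (1.0 + xi)
    half = v / 2.0
    bracket = (1.0 + v) * bessel_i(0, half) + v * bessel_i(1, half)
    return (math.sqrt(math.pi) / 2.0) * (math.sqrt(v) / gamma) * math.exp(-half) * bracket


# Gain versus a priori SNR at a fixed a posteriori SNR of 1 (0 dB).
for xi_db in (-10, 0, 10):
    print(xi_db, round(mmse_stsa_gain(10 ** (xi_db / 10), 1.0), 3))
```

At high SNR the gain approaches the Wiener gain ξ/(1+ξ); for example, `mmse_stsa_gain(100.0, 101.0)` is within 0.01 of 100/101.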

Gain for a given a priori SNR

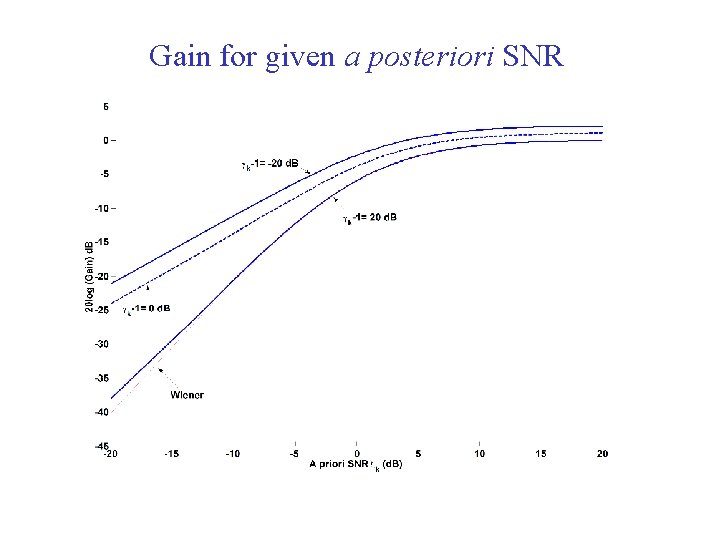

Gain for a given a posteriori SNR

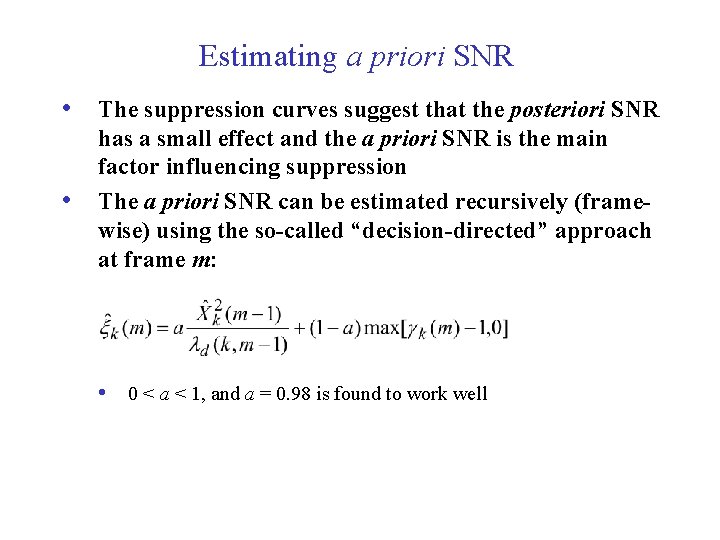

Estimating a priori SNR
• The suppression curves suggest that the a posteriori SNR has a small effect, and the a priori SNR is the main factor influencing suppression
• The a priori SNR can be estimated recursively (framewise) using the so-called “decision-directed” approach at frame m:
  $\hat{\xi}_k(m) = a\,\frac{\hat{X}_k^2(m-1)}{\lambda_d(k, m-1)} + (1 - a)\,\max[\gamma_k(m) - 1,\ 0]$
  – 0 < a < 1, and a = 0.98 is found to work well
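The decision-directed recursion can be sketched frame by frame. This is a minimal sketch under several assumptions that are not from the slides: the noise variance per bin is known and fixed, $\hat{X}_k(-1) = 0$ before the first frame, and ξ is floored at a hypothetical `xi_min`. The decision-directed approach was proposed together with the MMSE estimator, but for brevity this sketch computes $\hat{X}_k(m)$ with the Wiener gain ξ/(1+ξ) instead of the MMSE gain.

```python
def decision_directed_xi(noisy_pow_frames, noise_pow, a=0.98, xi_min=1e-3):
    """Frame-by-frame a priori SNR estimates with the decision-directed rule.

    noisy_pow_frames: list of frames, each a list of |Y_k(m)|^2 per bin.
    noise_pow: noise variance lambda_d(k) per bin, assumed fixed here.
    Returns the per-frame lists of a priori SNR estimates.
    """
    prev_clean_pow = [0.0] * len(noise_pow)  # |X_hat_k(m-1)|^2, zero before frame 0
    history = []
    for frame in noisy_pow_frames:
        xis = []
        for k, (y2, lam) in enumerate(zip(frame, noise_pow)):
            gamma = y2 / lam  # a posteriori SNR
            xi = a * prev_clean_pow[k] / lam + (1.0 - a) * max(gamma - 1.0, 0.0)
            xi = max(xi, xi_min)  # assumed floor to avoid zero gains
            gain = xi / (1.0 + xi)  # Wiener gain used for brevity
            prev_clean_pow[k] = (gain ** 2) * y2  # becomes |X_hat_k(m-1)|^2 next frame
            xis.append(xi)
        history.append(xis)
    return history


# One bin, three frames: the estimate rises gradually rather than jumping.
print(decision_directed_xi([[11.0], [11.0], [11.0]], [1.0]))
```

With a close to 1, the estimate changes slowly from frame to frame, which is the smoothing that the next remark credits with avoiding musical noise.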

Other remarks and sound demo
• It is noted that when the a priori SNR is estimated using the “decision-directed” approach, the enhanced speech has no “musical noise”
• A log-MMSE estimator also exists, which might be perceptually more meaningful
• Sound demo:
  – Noisy sentence (5 dB SNR)
  – MMSE estimator
  – Log-MMSE estimator

Subspace-based algorithms
• This class of algorithms is based on singular value decomposition (SVD) or eigenvalue decomposition of either data matrices or covariance matrices
• The basic idea behind the SVD approach is that the singular vectors corresponding to the largest singular values contain speech information, while the remaining singular vectors contain noise information
• Noise reduction is therefore accomplished by discarding the singular vectors corresponding to the smallest singular values

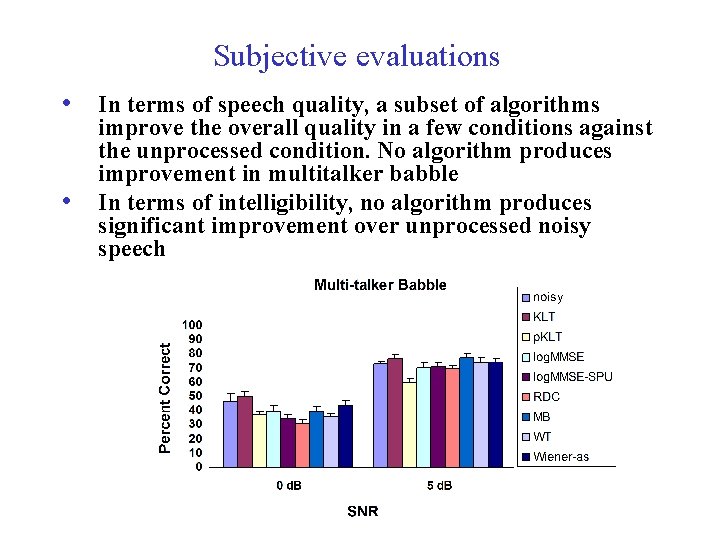

Subjective evaluations
• In terms of speech quality, a subset of algorithms improves the overall quality in a few conditions relative to the unprocessed condition. No algorithm produces improvement in multitalker babble
• In terms of intelligibility, no algorithm produces significant improvement over unprocessed noisy speech

Interim summary on speech enhancement
• Algorithms are derived analytically
  – Optimization theory
• Noise estimation is key
  – These algorithms are particularly needed for highly non-stationary environments
• Speech enhancement algorithms cannot deal with multitalker mixtures
• Inability to improve speech intelligibility

Part IV. CASA-based speech segregation
• Fundamentals of CASA for monaural mixtures
• CASA for speech segregation
  – Feature-based algorithms
  – Model-based algorithms

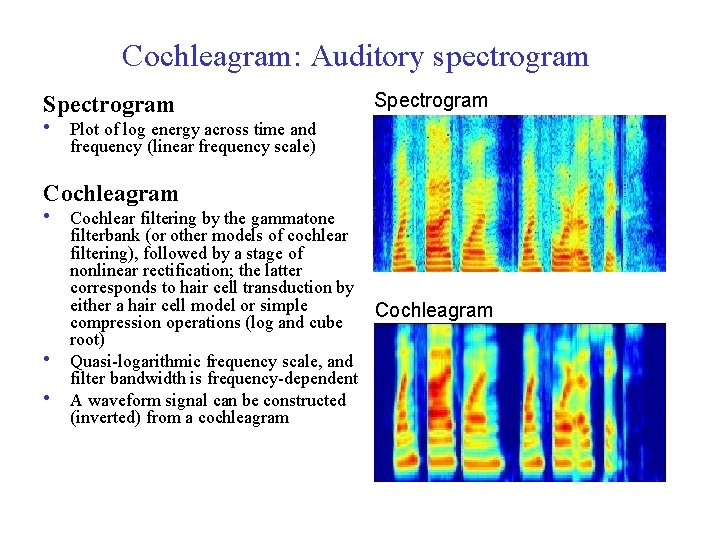

Cochleagram: Auditory spectrogram
• Spectrogram
  – Plot of log energy across time and frequency (linear frequency scale)
• Cochleagram
  – Cochlear filtering by the gammatone filterbank (or other models of cochlear filtering), followed by a stage of nonlinear rectification; the latter corresponds to hair cell transduction by either a hair cell model or simple compression operations (log and cube root)
  – Quasi-logarithmic frequency scale, and filter bandwidth is frequency-dependent
  – A waveform signal can be constructed (inverted) from a cochleagram

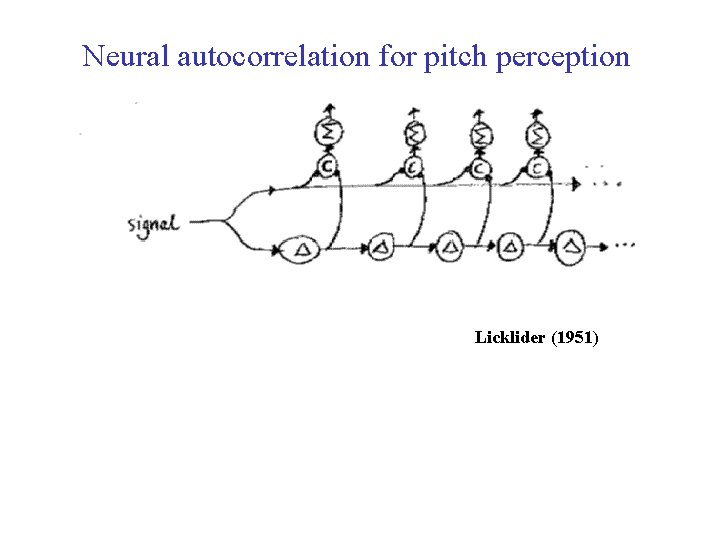

Neural autocorrelation for pitch perception
Licklider (1951)

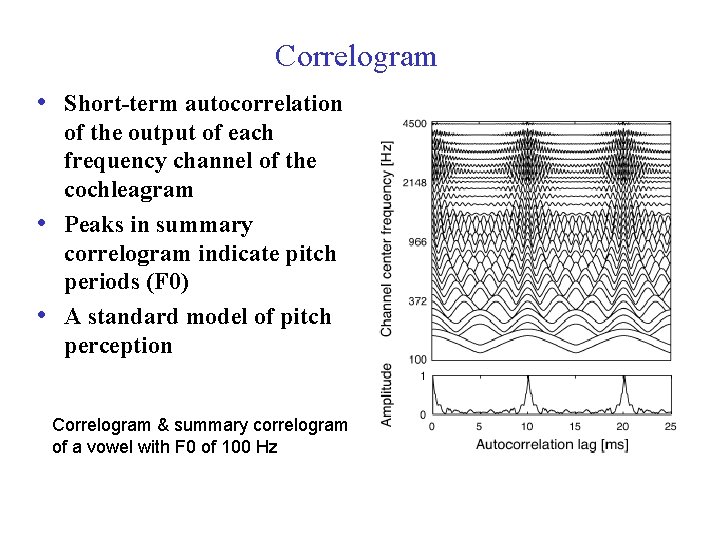

Correlogram
• Short-term autocorrelation of the output of each frequency channel of the cochleagram
• Peaks in the summary correlogram indicate pitch periods (F0)
• A standard model of pitch perception
Figure: correlogram and summary correlogram of a vowel with F0 of 100 Hz

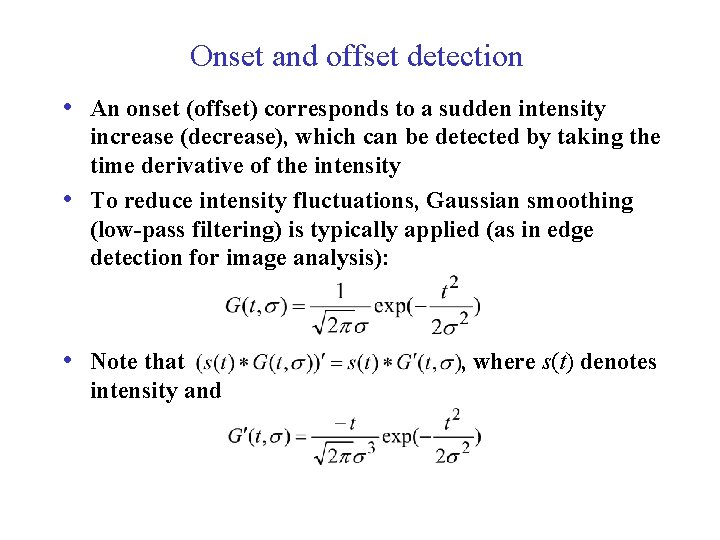
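The summary-correlogram idea can be illustrated without a full gammatone front end. This is a minimal sketch, assuming each channel output is already available; here two hypothetical channels each pass one resolved harmonic (200 Hz and 300 Hz) of a 100 Hz source, sampled at 8 kHz over a 100 ms frame (longer than a typical analysis frame, so that the lag range is covered). Each channel alone would favor the period of its own harmonic, while the summed autocorrelation peaks at the common period of 80 samples.

```python
import math


def autocorr(x, max_lag):
    """Short-term autocorrelation sum_n x[n] x[n + lag] for lag = 0..max_lag."""
    n = len(x)
    return [sum(x[i] * x[i + lag] for i in range(n - lag)) for lag in range(max_lag + 1)]


def summary_correlogram(channels, max_lag):
    """Sum the per-channel autocorrelations across channels."""
    acfs = [autocorr(ch, max_lag) for ch in channels]
    return [sum(vals) for vals in zip(*acfs)]


def estimate_f0(channels, fs, min_lag, max_lag):
    """Take the summary-correlogram peak in [min_lag, max_lag] as the pitch period."""
    summary = summary_correlogram(channels, max_lag)
    period = max(range(min_lag, max_lag + 1), key=lambda lag: summary[lag])
    return fs / period


fs = 8000
n = 800  # 100 ms frame
# Hypothetical channel outputs, one resolved harmonic each.
channels = [[math.cos(2 * math.pi * f * t / fs) for t in range(n)] for f in (200, 300)]
print(estimate_f0(channels, fs, min_lag=20, max_lag=200))
```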

Onset and offset detection
• An onset (offset) corresponds to a sudden intensity increase (decrease), which can be detected by taking the time derivative of the intensity
• To reduce intensity fluctuations, Gaussian smoothing (low-pass filtering) is typically applied (as in edge detection for image analysis):
  $G(t) = \frac{1}{\sqrt{2\pi}\,\sigma}\exp\!\left(-\frac{t^2}{2\sigma^2}\right)$
• Note that $\frac{d}{dt}\big[s(t) * G(t)\big] = s(t) * G'(t)$, where s(t) denotes intensity and G'(t) is the derivative of the Gaussian

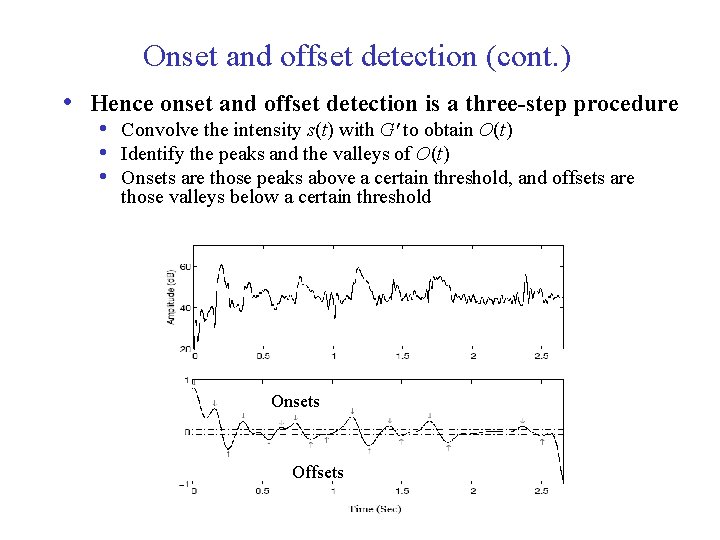

Onset and offset detection (cont.)
• Hence onset and offset detection is a three-step procedure:
  – Convolve the intensity s(t) with G' to obtain O(t)
  – Identify the peaks and the valleys of O(t)
  – Onsets are those peaks above a certain threshold, and offsets are those valleys below a certain threshold
Figure: detected onsets and offsets

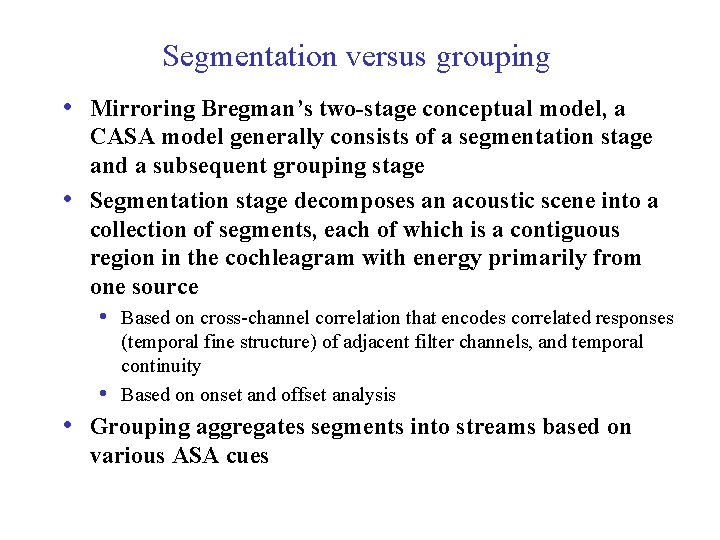

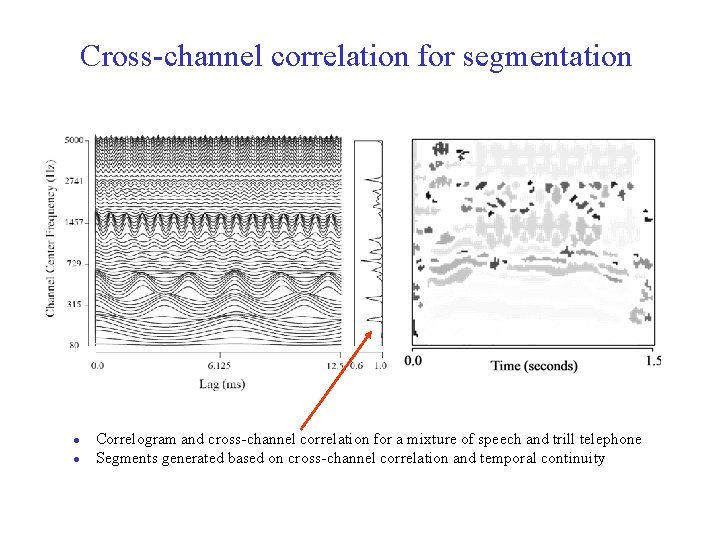
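The three-step procedure can be sketched on a frame-level intensity sequence. This is a minimal sketch, assuming a sampled Gaussian derivative truncated at ±4σ and hypothetical thresholds of ±0.1; the function names are illustrative. In this discrete version an abrupt step produces a flat two-sample peak, and the detector reports its first sample.

```python
import math


def gaussian_derivative(sigma, half_width):
    """Sampled G'(t) for a zero-mean Gaussian with standard deviation sigma."""
    norm = math.sqrt(2.0 * math.pi) * sigma ** 3
    return [-t / norm * math.exp(-t * t / (2.0 * sigma * sigma))
            for t in range(-half_width, half_width + 1)]


def convolve_same(s, kernel):
    """Discrete convolution (s * kernel)[n], zero-padded, same length as s."""
    half = len(kernel) // 2
    out = []
    for n in range(len(s)):
        acc = 0.0
        for j, k_val in enumerate(kernel):
            idx = n - (j - half)  # kernel index j corresponds to t = j - half
            if 0 <= idx < len(s):
                acc += k_val * s[idx]
        out.append(acc)
    return out


def detect_onsets_offsets(s, sigma=2.0, on_thresh=0.1, off_thresh=-0.1):
    """O = s * G', then keep thresholded peaks (onsets) and valleys (offsets)."""
    o = convolve_same(s, gaussian_derivative(sigma, int(4 * sigma)))
    onsets = [n for n in range(1, len(o) - 1)
              if o[n] > o[n - 1] and o[n] >= o[n + 1] and o[n] > on_thresh]
    offsets = [n for n in range(1, len(o) - 1)
               if o[n] < o[n - 1] and o[n] <= o[n + 1] and o[n] < off_thresh]
    return onsets, offsets


# Intensity that switches on at frame 20 and off after frame 39.
intensity = [0.0] * 20 + [1.0] * 20 + [0.0] * 20
print(detect_onsets_offsets(intensity))
```

A larger σ suppresses more intensity fluctuation at the cost of blurring the onset and offset times.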

Segmentation versus grouping
• Mirroring Bregman’s two-stage conceptual model, a CASA model generally consists of a segmentation stage and a subsequent grouping stage
• The segmentation stage decomposes an acoustic scene into a collection of segments, each of which is a contiguous region in the cochleagram with energy primarily from one source
  – Based on cross-channel correlation, which encodes correlated responses (temporal fine structure) of adjacent filter channels, and temporal continuity
  – Based on onset and offset analysis
• Grouping aggregates segments into streams based on various ASA cues

Cross-channel correlation for segmentation
• Correlogram and cross-channel correlation for a mixture of speech and a trill telephone
• Segments generated based on cross-channel correlation and temporal continuity

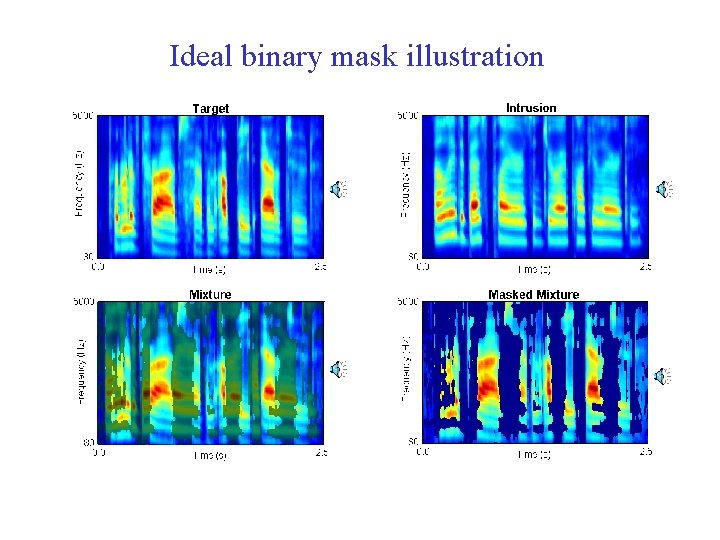
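Cross-channel correlation compares the autocorrelation patterns of adjacent channels; a high value suggests that both channels respond to the same source. This is a minimal sketch, assuming each channel's autocorrelation for the current frame is already available as a list, and using a hypothetical threshold of 0.95 that is not taken from the slides. Each autocorrelation is normalized to zero mean and unit norm, so the inner product lies in [-1, 1].

```python
import math


def _normalize(v):
    """Zero-mean, unit-norm copy of v (all zeros if v is constant)."""
    mean = sum(v) / len(v)
    centered = [x - mean for x in v]
    norm = math.sqrt(sum(x * x for x in centered))
    return [x / norm for x in centered] if norm > 0 else centered


def cross_channel_correlation(acf_a, acf_b):
    """Correlation between two channels' normalized autocorrelation functions."""
    a, b = _normalize(acf_a), _normalize(acf_b)
    return sum(x * y for x, y in zip(a, b))


def correlated_neighbors(acfs, threshold=0.95):
    """Indices c for which channels c and c+1 are highly correlated in this frame.

    Runs of such channels, extended over time by temporal continuity
    (not shown), would form candidate segments.
    """
    return [c for c in range(len(acfs) - 1)
            if cross_channel_correlation(acfs[c], acfs[c + 1]) > threshold]
```

Normalizing away the mean and scale means that two channels with different energy but the same periodicity still count as correlated.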

Ideal binary mask
• A main CASA goal is to retain the parts of a mixture where the target sound is stronger than the acoustic background (i.e. to mask interference by the target), and discard the other parts (Hu & Wang, 2001; 2004)
  – What counts as the target depends on intention, attention, etc.
• In other words, the goal is to identify the ideal binary mask (IBM), which is 1 for a time-frequency (T-F) unit if the SNR within the unit exceeds a threshold, and 0 otherwise
  – It does not actually separate the mixture!
  – More discussion on the IBM in Part V

Ideal binary mask illustration

CASA for speech segregation
• Monaural CASA systems for speech segregation are based on harmonicity, onset/offset, AM/FM, and trained models (Weintraub, 1985; Brown & Cooke, 1994; Ellis, 1996; Hu & Wang, 2004; Barker et al., 2005; Radfar et al., 2007)
• The Hu-Wang and Barker et al. models will be explained as representatives of feature-based and model-based methods, respectively

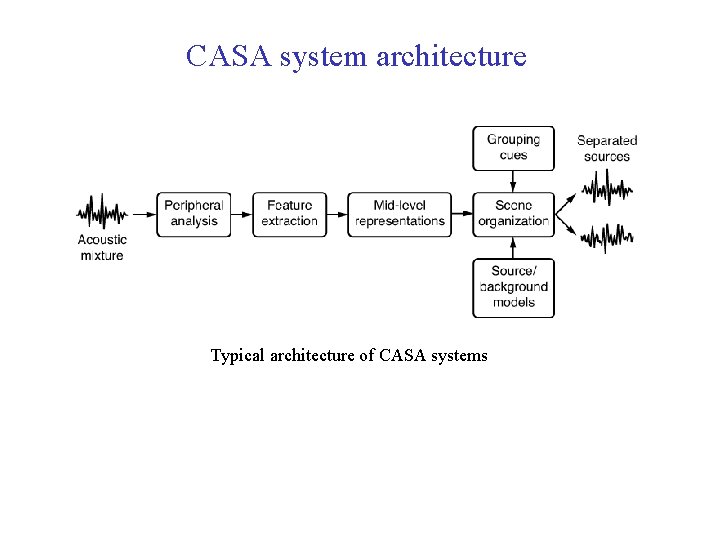

CASA system architecture
Typical architecture of CASA systems

Voiced speech segregation
• For voiced speech, lower harmonics are resolved while higher harmonics are not
• For unresolved harmonics, the envelopes of filter responses fluctuate at the fundamental frequency of speech
• A voiced speech segregation model by Hu and Wang (2004) applies different grouping mechanisms for low-frequency and high-frequency signals:
  – Low-frequency signals are grouped based on periodicity and temporal continuity
  – High-frequency signals are grouped based on amplitude modulation and temporal continuity

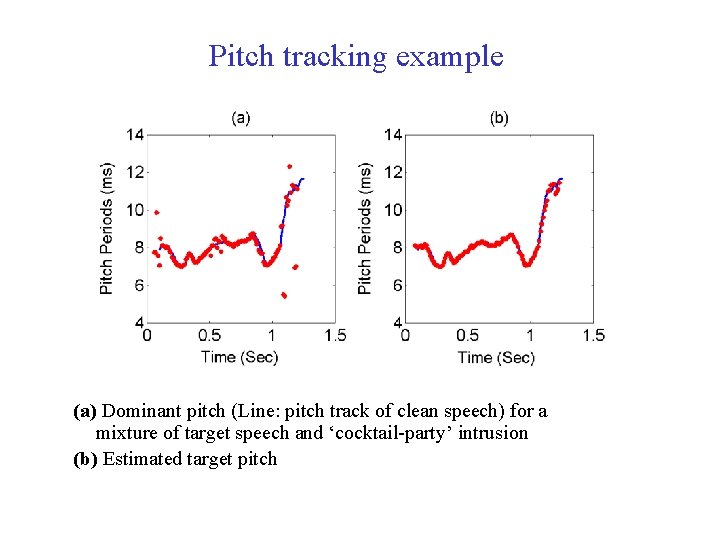

Pitch tracking
• Pitch periods of target speech are estimated from an initially segregated speech stream based on the dominant pitch within each frame
• Estimated pitch periods are checked and re-estimated using two psychoacoustically motivated constraints:
  – Target pitch should agree with the periodicity of the T-F units in the initial speech stream
  – Pitch periods change smoothly, thus allowing for verification and interpolation

Pitch tracking example
(a) Dominant pitch (line: pitch track of clean speech) for a mixture of target speech and ‘cocktail-party’ intrusion
(b) Estimated target pitch

T-F unit labeling
• In the low-frequency range:
  – A T-F unit is labeled by comparing the periodicity of its autocorrelation with the estimated target pitch
• In the high-frequency range:
  – Due to their wide bandwidths, high-frequency filters respond to multiple harmonics. These responses are amplitude modulated due to beats and combination tones (Helmholtz, 1863)
  – A T-F unit in the high-frequency range is labeled by comparing its AM rate with the estimated target pitch

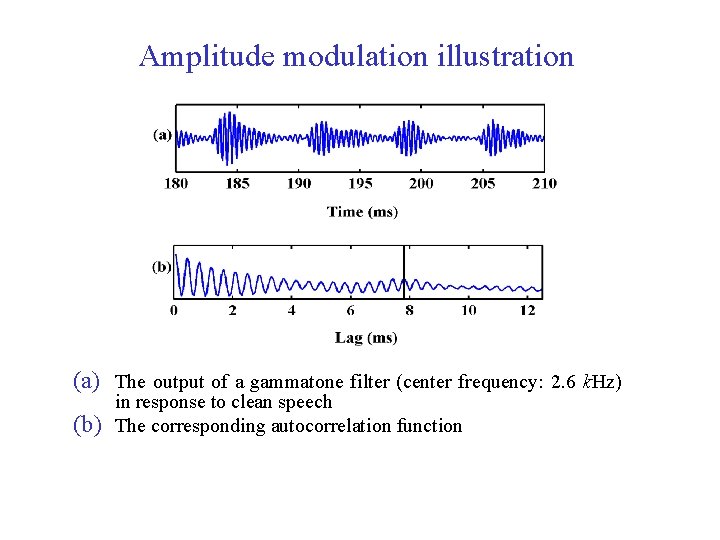
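For a resolved (low-frequency) unit, one way to express the comparison with the target pitch is the ratio of the unit's autocorrelation at the estimated pitch lag to its value at lag zero. This is a minimal sketch, assuming a per-lag normalized autocorrelation and a hypothetical threshold of 0.85; the function names and the threshold are illustrative assumptions, not values taken from the slides.

```python
import math


def normalized_acf(x, lag):
    """Autocorrelation at one lag, normalized by the energies of the overlapping parts."""
    a = x[: len(x) - lag]
    b = x[lag:]
    num = sum(p * q for p, q in zip(a, b))
    den = math.sqrt(sum(p * p for p in a) * sum(q * q for q in b))
    return num / den if den > 0 else 0.0


def label_resolved_unit(unit_response, pitch_lag, threshold=0.85):
    """Return 1 if the unit's periodicity agrees with the target pitch, else 0."""
    zero_lag = normalized_acf(unit_response, 0)
    if zero_lag == 0.0:
        return 0  # silent unit: no periodicity to compare
    ratio = normalized_acf(unit_response, pitch_lag) / zero_lag
    return 1 if ratio > threshold else 0


fs = 8000
pitch_lag = 80  # target F0 of 100 Hz at 8 kHz
unit = [math.cos(2 * math.pi * 200 * t / fs) for t in range(400)]  # 2nd harmonic
print(label_resolved_unit(unit, pitch_lag))
```

A unit dominated by any harmonic of the target F0 repeats after one pitch period, so its ratio is close to 1; a unit dominated by an unrelated frequency generally is not.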

Amplitude modulation illustration
(a) The output of a gammatone filter (center frequency: 2.6 kHz) in response to clean speech
(b) The corresponding autocorrelation function

Final segregation
• New segments corresponding to unresolved harmonics are formed based on temporal continuity and cross-channel correlation of response envelopes (i.e. common AM). Then they are grouped into the foreground stream according to AM rates
• Other units are grouped according to temporal and spectral continuity

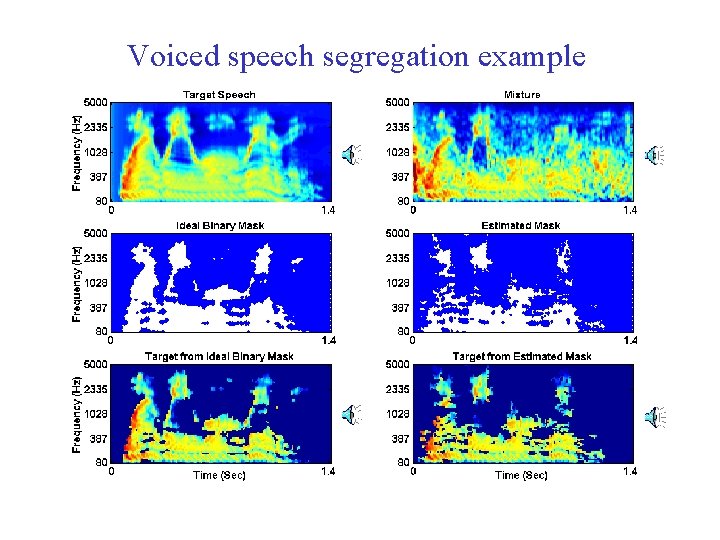

Voiced speech segregation example

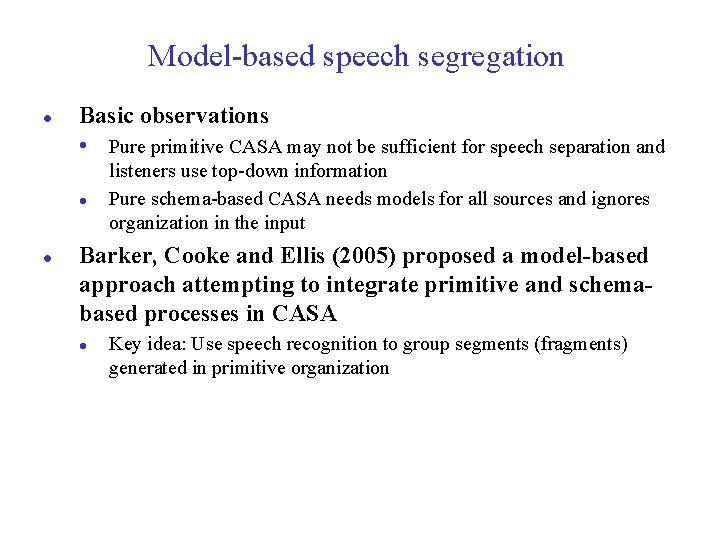

Model-based speech segregation
• Basic observations
  – Pure primitive CASA may not be sufficient for speech separation, and listeners use top-down information
  – Pure schema-based CASA needs models for all sources and ignores organization in the input
• Barker, Cooke and Ellis (2005) proposed a model-based approach attempting to integrate primitive and schema-based processes in CASA
  – Key idea: use speech recognition to group segments (fragments) generated in primitive organization

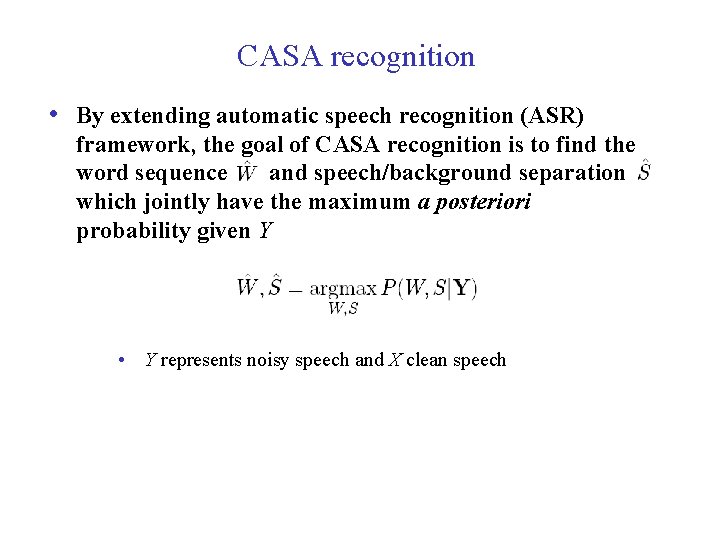

CASA recognition
• By extending the automatic speech recognition (ASR) framework, the goal of CASA recognition is to find the word sequence W and speech/background separation S which jointly have the maximum a posteriori probability given Y: $(\hat{W}, \hat{S}) = \arg\max_{W,S} P(W, S \mid Y)$
• Y represents noisy speech and X clean speech

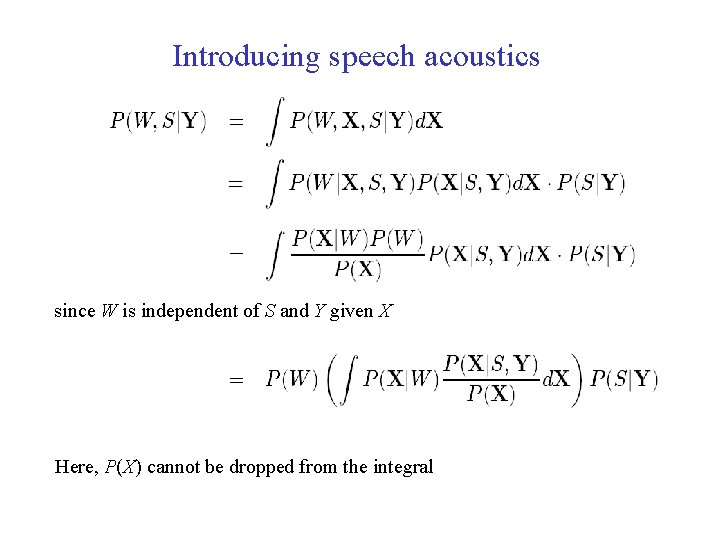

Introducing speech acoustics
$P(W, S \mid Y) = P(W)\,P(S \mid Y) \int P(X \mid W)\,\frac{P(X \mid S, Y)}{P(X)}\,dX$
since W is independent of S and Y given X. Here, P(X) cannot be dropped from the integral

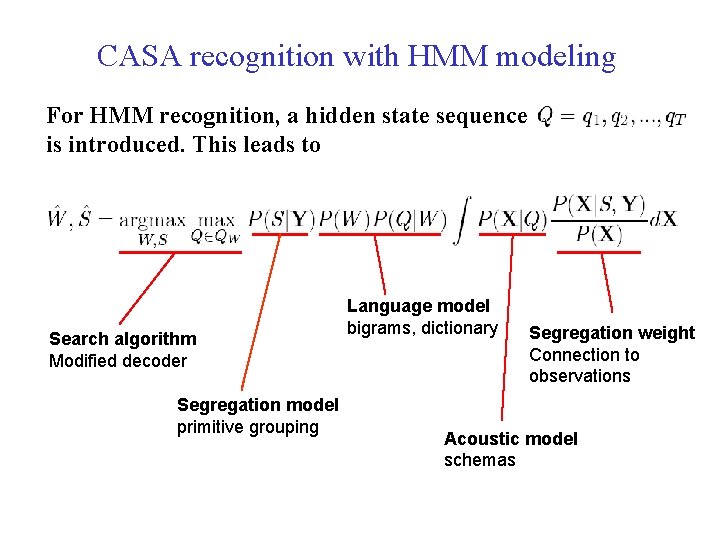

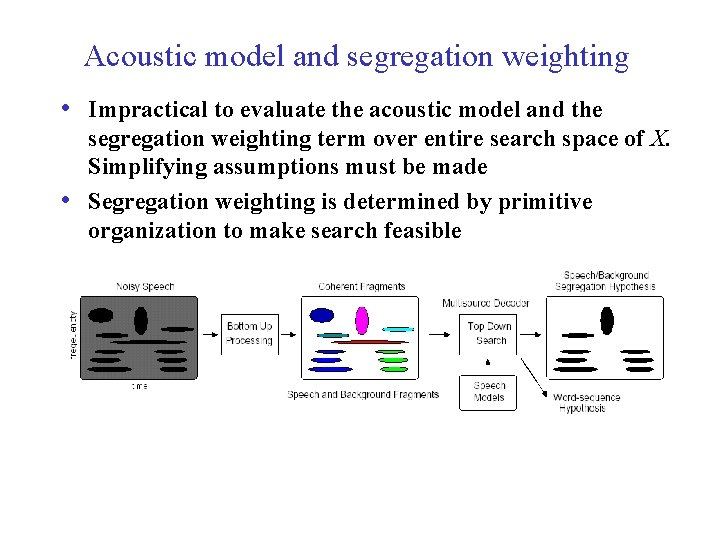

CASA recognition with HMM modeling
• For HMM recognition, a hidden state sequence is introduced. This leads to an expression whose terms are annotated as follows:
  – Search algorithm: modified decoder
  – Segregation model: primitive grouping
  – Language model: bigrams, dictionary
  – Segregation weight: connection to observations
  – Acoustic model: schemas

Acoustic model and segregation weighting
• It is impractical to evaluate the acoustic model and the segregation weighting term over the entire search space of X, so simplifying assumptions must be made
• Segregation weighting is determined by primitive organization to make search feasible

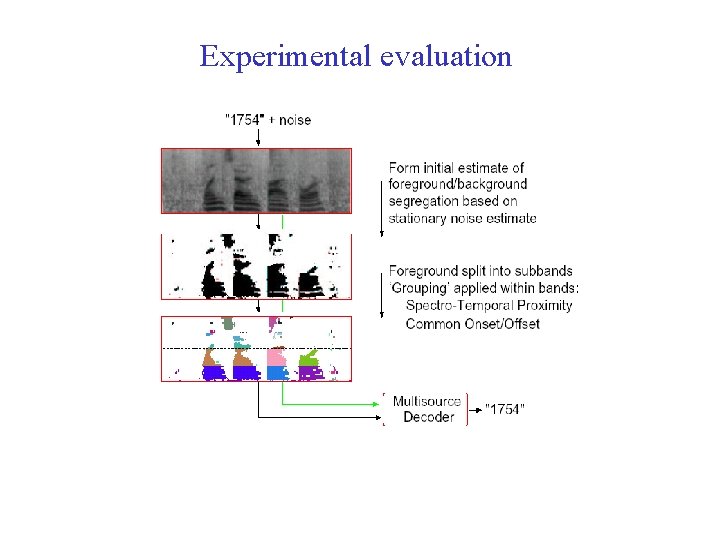

Experimental evaluation

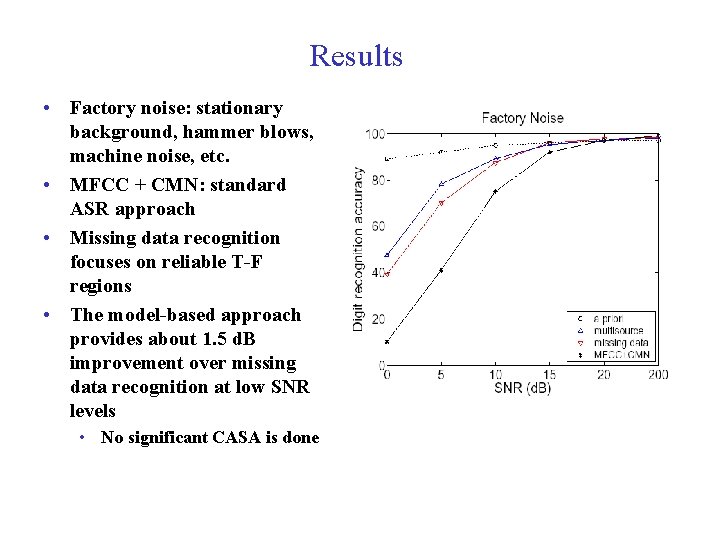

Results
• Factory noise: stationary background, hammer blows, machine noise, etc.
• MFCC + CMN: standard ASR approach
• Missing data recognition focuses on reliable T-F regions
• The model-based approach provides about 1.5 dB improvement over missing data recognition at low SNR levels
• No significant CASA is done

Interim summary on CASA
• Main progress occurs in voiced speech segregation based on pitch tracking and model-based grouping
• Recent work starts to address unvoiced speech separation (e.g. Hu & Wang, 2008)
• Integration of feature-based and model-based segregation is promising
• Substantial effort attempts to incorporate speaker models into segregation (e.g. Shao & Wang, 2006)

Part V. Segregation as binary classification
• What is the goal of speech segregation?
  – Ideal binary mask
  – Speech intelligibility tests
• Classification approach to speech segregation
  – An MLP-based algorithm to separate reverberant speech
  – A GMM-based algorithm to improve intelligibility

What is the goal of speech segregation?
• From the perspective of perceptual information processing, the analysis of the computational goal is critically important (Marr, 1982)
  – Computational theory analysis
• What is the goal of audition?
  – By analogy to vision (Marr, 1982), the purpose of audition is to produce an auditory description of the environment for the listener
• What is the goal of CASA?
  – The goal of ASA is to segregate sound mixtures into separate perceptual representations (or auditory streams), each of which corresponds to an acoustic event (Bregman, 1990)
  – By extrapolation, the goal of CASA is to develop computational systems that extract individual streams from sound mixtures

Computational-theory analysis of ASA
• To form a stream, a sound must be audible on its own
• The number of streams that can be computed at a time is limited
  – Magical number 4 for simple sounds such as tones and vowels (Cowan, 2001)?
  – 1+1, or figure-ground segregation, in noisy environments such as a cocktail party?
• Auditory masking further constrains the ASA output
  – Within a critical band, a stronger signal masks a weaker one

Computational-theory analysis of ASA (cont.)
• ASA outcome depends on sound types (overall SNR is 0 dB)
  – Noise-Noise: pink, white, pink+white
  – Speech-Speech
  – Noise-Speech
  – Tone-Speech

Some alternative goals
• Extract all underlying sound sources or the target sound source (the gold standard)
  – Implicit in speech enhancement and spatial filtering
  – Segregating all sources is implausible, and probably unrealistic with one or two microphones
• Enhance ASR
  – Close coupling with a primary motivation of speech segregation
  – Perceiving is more than recognizing (Treisman, 1999)
• Enhance human listening
  – Advantage: close coupling with auditory perception
  – There are applications that involve no human listening

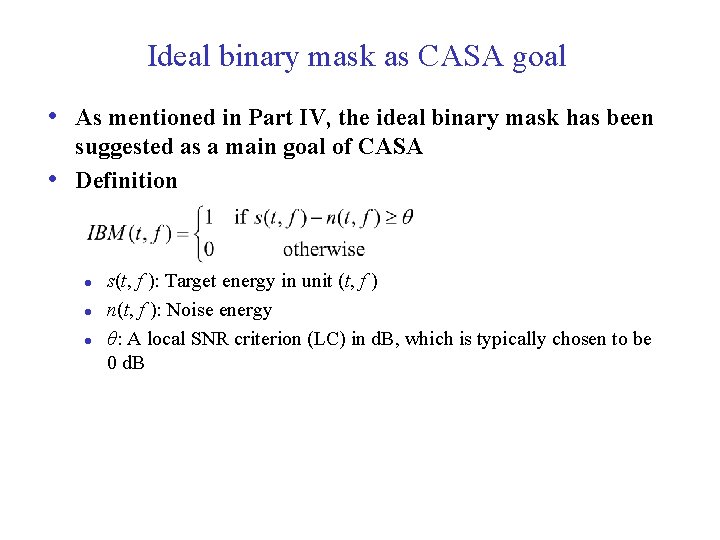

Ideal binary mask as CASA goal
• As mentioned in Part IV, the ideal binary mask has been suggested as a main goal of CASA
• Definition:
  $IBM(t, f) = 1$ if $10\log_{10}\frac{s(t, f)}{n(t, f)} > \theta$, and $IBM(t, f) = 0$ otherwise
  – s(t, f): target energy in unit (t, f)
  – n(t, f): noise energy
  – θ: a local SNR criterion (LC) in dB, which is typically chosen to be 0 dB
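The definition translates almost directly into code. This is a minimal sketch, assuming the target and noise energies are given as lists of frames, each a list of per-channel energies; `ideal_binary_mask` and `apply_mask` are hypothetical names. Comparing $s > n \cdot 10^{\theta/10}$ is equivalent to the dB test for positive energies and avoids dividing by zero.

```python
def ideal_binary_mask(target_energy, noise_energy, lc_db=0.0):
    """IBM(t, f) = 1 if 10*log10(s/n) > lc_db, else 0.

    Uses s > n * 10**(lc_db/10): a unit with n = 0 and s > 0 gets 1,
    and a unit with s = 0 gets 0.
    """
    factor = 10.0 ** (lc_db / 10.0)
    return [[1 if s > n * factor else 0 for s, n in zip(s_row, n_row)]
            for s_row, n_row in zip(target_energy, noise_energy)]


def apply_mask(mixture_units, mask):
    """Keep mixture T-F units where the mask is 1 and zero out the rest."""
    return [[u * m for u, m in zip(u_row, m_row)]
            for u_row, m_row in zip(mixture_units, mask)]


# One frame, two channels: target stronger in the first, noise in the second.
mask = ideal_binary_mask([[4.0, 1.0]], [[1.0, 4.0]])
print(mask, apply_mask([[5.0, 6.0]], mask))
```

Lowering `lc_db` below 0 dB retains more units, which is the direction of the negative-LC conditions discussed at the end of the tutorial.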

Properties of IBM
• The IBM notion is consistent with the computational-theory analysis of ASA
  – Audibility and capacity
  – Auditory masking
  – Effects of target and noise types (spectral overlap)
• Optimality: under certain conditions, the IBM with θ = 0 dB is the optimal binary mask from the perspective of SNR gain (Li & Wang, 2009)

Subject tests of IBM
• Recent studies found large speech intelligibility improvements by applying ideal binary masking for normal-hearing (Brungart et al., 2006; Li & Loizou, 2008) and hearing-impaired (Anzalone et al., 2006; Wang et al., 2009) listeners
  – Improvement for stationary noise is above 7 dB for normal-hearing (NH) listeners, and above 9 dB for hearing-impaired (HI) listeners
  – Improvement for modulated noise is significantly larger than for stationary noise

Test conditions of Wang et al. ’09
• SSN: unprocessed monaural mixtures of speech-shaped noise (SSN) and Dantale II sentences (examples at 0 dB and -10 dB)
• CAFÉ: unprocessed monaural mixtures of cafeteria noise (CAFÉ) and Dantale II sentences (examples at 0 dB and -10 dB)
• SSN-IBM: IBM applied to SSN (examples at 0 dB, -10 dB and -20 dB)
• CAFÉ-IBM: IBM applied to CAFÉ (examples at 0 dB, -10 dB and -20 dB)
• Intelligibility results are measured in terms of SRT

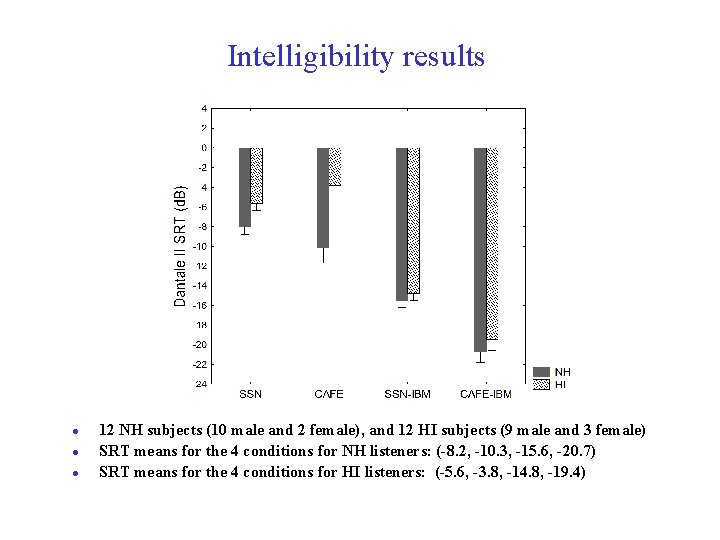

Intelligibility results
• 12 NH subjects (10 male and 2 female), and 12 HI subjects (9 male and 3 female)
• SRT means for the 4 conditions for NH listeners: (-8.2, -10.3, -15.6, -20.7) dB
• SRT means for the 4 conditions for HI listeners: (-5.6, -3.8, -14.8, -19.4) dB

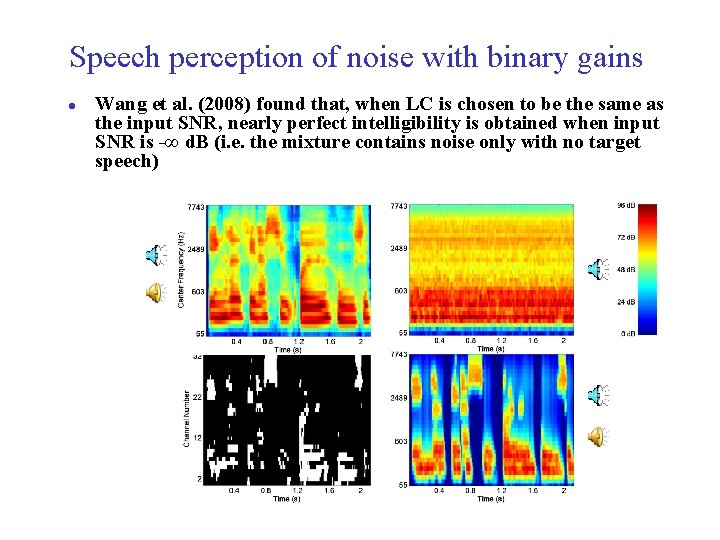
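The stationary-noise improvements quoted for IBM processing can be checked against these means. The short computation below takes the IBM benefit as the SRT drop from each unprocessed condition to its IBM counterpart, assuming the 4 conditions are listed in the order SSN, CAFÉ, SSN-IBM, CAFÉ-IBM used on the test-conditions slide; the names are hypothetical.

```python
# SRT means in dB, assumed in the order SSN, CAFE, SSN-IBM, CAFE-IBM.
SRT_MEANS = {
    "NH": {"SSN": -8.2, "CAFE": -10.3, "SSN-IBM": -15.6, "CAFE-IBM": -20.7},
    "HI": {"SSN": -5.6, "CAFE": -3.8, "SSN-IBM": -14.8, "CAFE-IBM": -19.4},
}


def ibm_benefit(srts, noise):
    """SRT improvement in dB (positive = IBM processing needs a lower SNR)."""
    return round(srts[noise] - srts[noise + "-IBM"], 1)


for group, srts in SRT_MEANS.items():
    print(group, {noise: ibm_benefit(srts, noise) for noise in ("SSN", "CAFE")})
```

The SSN differences (7.4 dB for NH, 9.2 dB for HI) match the "above 7 dB" and "above 9 dB" figures, and the cafeteria-noise differences are larger, consistent with the larger benefit for modulated noise.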

Speech perception of noise with binary gains
• Wang et al. (2008) found that, when LC is chosen to be the same as the input SNR, nearly perfect intelligibility is obtained even when the input SNR is -∞ dB (i.e. the mixture contains noise only, with no target speech)

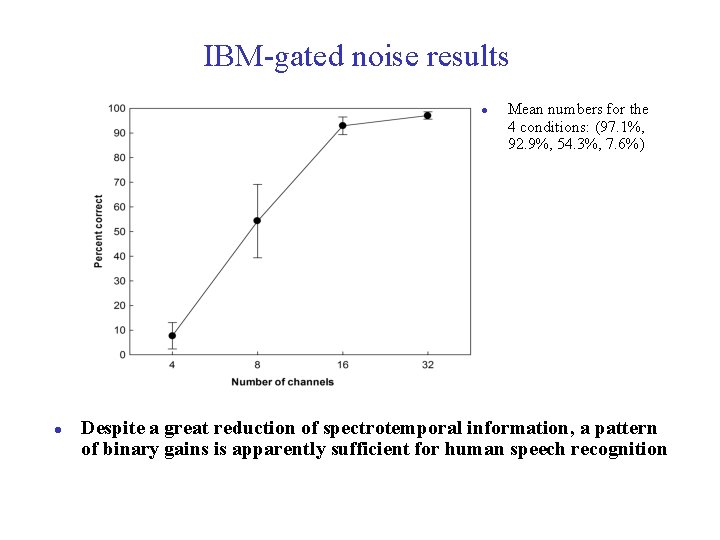

IBM-gated noise results
• Mean scores for the 4 conditions: 97.1%, 92.9%, 54.3%, 7.6%
• Despite a great reduction of spectrotemporal information, a pattern of binary gains is apparently sufficient for human speech recognition

Main points
• Speech intelligibility results support the IBM as an appropriate goal of CASA in general, and of speech segregation in particular
• Hence solving the speech segregation problem would amount to binary classification
  – This is a strong claim with major consequences
  – This formulation opens the problem to a variety of pattern classification methods

Segregation of reverberant speech
• Room reverberation poses an additional challenge to the problem of speech segregation
  – Segregation performance drops significantly in reverberation
  – A common approach to dealing with reverberation is inverse filtering, which is sensitive to different room configurations
• Jin and Wang (2009) proposed a model to segregate reverberant voiced speech based on classification

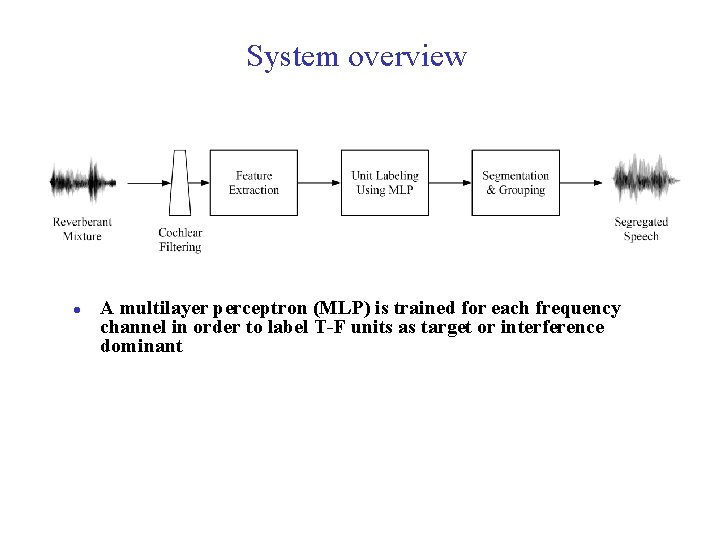

System overview
• A multilayer perceptron (MLP) is trained for each frequency channel in order to label T-F units as target-dominant or interference-dominant

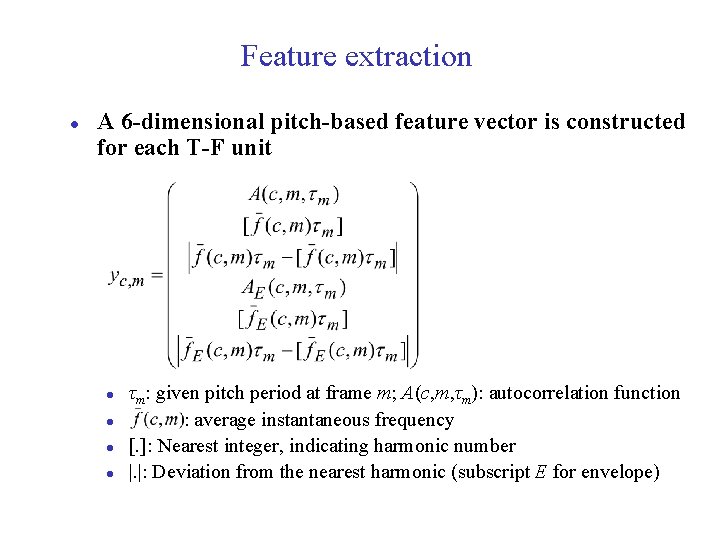

Feature extraction
• A 6-dimensional pitch-based feature vector is constructed for each T-F unit
  – τ_m: given pitch period at frame m
  – A(c, m, τ_m): autocorrelation function
  – Average instantaneous frequency
  – [·]: nearest integer, indicating harmonic number
  – |·|: deviation from the nearest harmonic (subscript E for envelope)

MLP learning
• The objective function is to maximize SNR, with
  – d: desired output; a: actual output; E: energy
• Generalized mean-square-error criterion: each squared error is weighted by normalized energy (i.e. cost-sensitive learning)

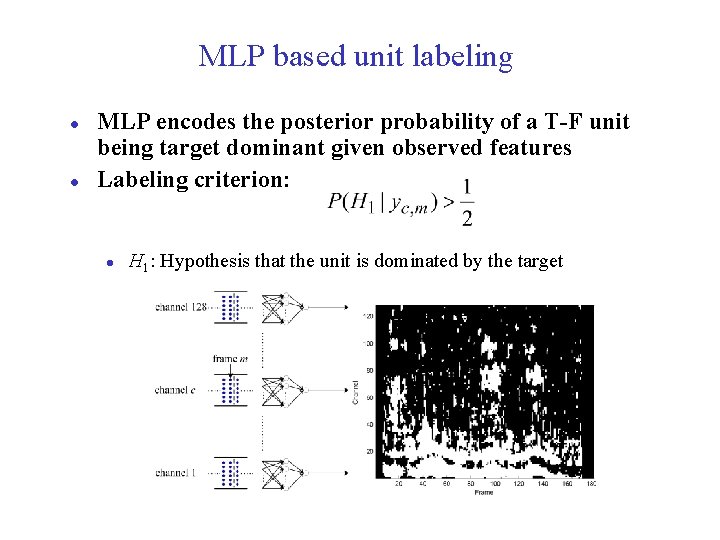
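The generalized mean-square-error criterion weights each unit's squared error by its normalized energy, so that mistakes on high-energy units cost more. This is a minimal sketch of one plausible form, assuming the weights are the unit energies divided by their total; the exact normalization used by Jin and Wang (2009) is not given on the slide, and the function name is hypothetical.

```python
def energy_weighted_mse(desired, actual, energy):
    """Sum of squared errors, each weighted by the unit's share of total energy.

    desired: target outputs d (e.g. 0/1 labels); actual: MLP outputs a;
    energy: per-unit energies E. Normalizing by total energy is an
    assumption for illustration.
    """
    total = sum(energy)
    return sum((e / total) * (d - a) ** 2 for d, a, e in zip(desired, actual, energy))


# Missing a high-energy target unit costs more than missing a low-energy one.
print(energy_weighted_mse([1, 0], [0.0, 0.0], [3.0, 1.0]))
print(energy_weighted_mse([0, 1], [0.0, 0.0], [3.0, 1.0]))
```

With equal energies this reduces to the ordinary mean squared error.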

MLP-based unit labeling
• The MLP encodes the posterior probability of a T-F unit being target-dominant given the observed features x
• Labeling criterion: a unit is labeled target-dominant if $P(H_1 \mid x) > 0.5$
  – H1: hypothesis that the unit is dominated by the target

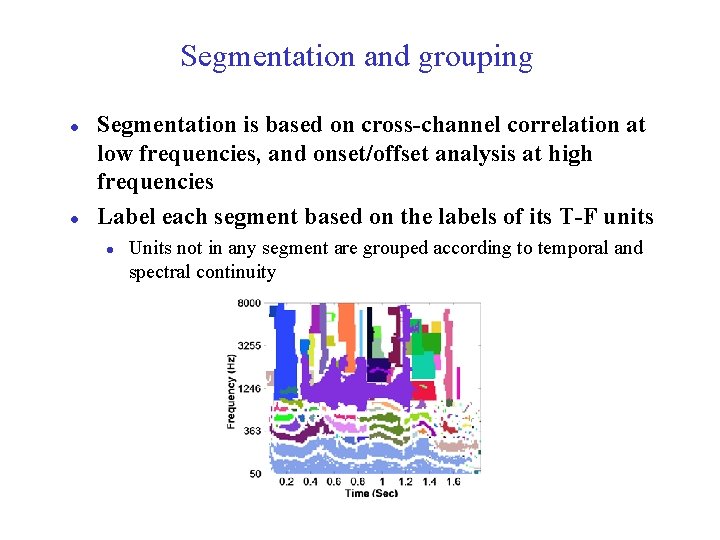

Segmentation and grouping
• Segmentation is based on cross-channel correlation at low frequencies, and onset/offset analysis at high frequencies
• Each segment is labeled based on the labels of its T-F units
  – Units not in any segment are grouped according to temporal and spectral continuity

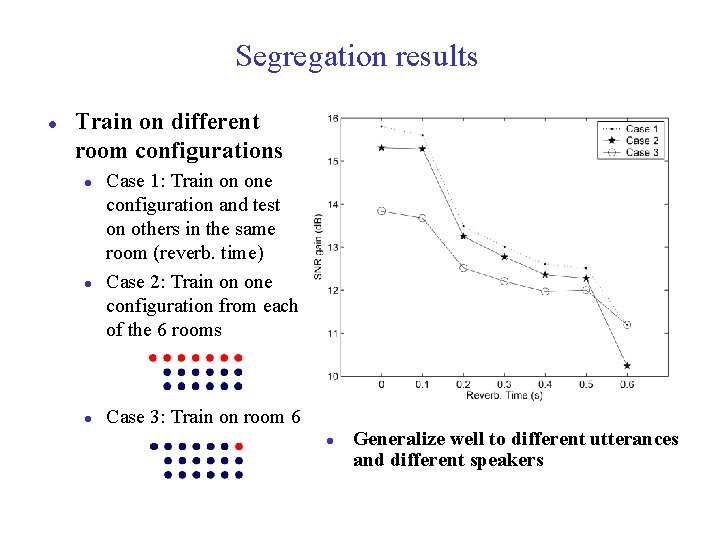

Segregation results
• Train on different room configurations
  – Case 1: train on one configuration and test on others in the same room (i.e. same reverberation time)
  – Case 2: train on one configuration from each of the 6 rooms
  – Case 3: train on room 6
• Generalizes well to different utterances and different speakers

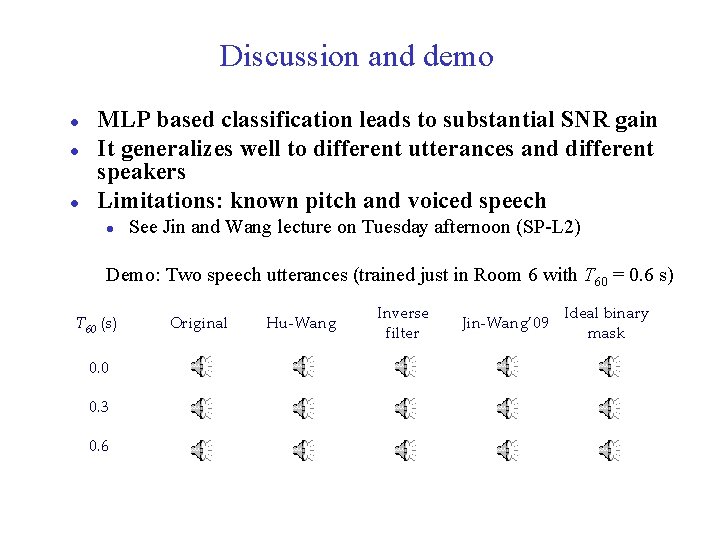

Discussion and demo
• MLP-based classification leads to substantial SNR gain
• It generalizes well to different utterances and different speakers
• Limitations: known pitch and voiced speech
  – See Jin and Wang lecture on Tuesday afternoon (SP-L2)
• Demo: two speech utterances (trained only in Room 6, with T60 = 0.6 s) at T60 = 0.0, 0.3 and 0.6 s, comparing the original, Hu-Wang, inverse filter, Jin-Wang ’09 and ideal binary mask outputs

GMM-based classification
• Instead of treating voiced speech and unvoiced speech separately, a simpler approach is to perform classification on noisy speech regardless of voicing
• A classification model by Kim, Lu, Hu, and Loizou (2009) deals with speech segregation in a speaker- and masker-dependent way:
  – AM spectrum (AMS) features are used
  – Classification is based on Gaussian mixture models (GMM)
  – Speech intelligibility evaluation is performed with normal-hearing listeners

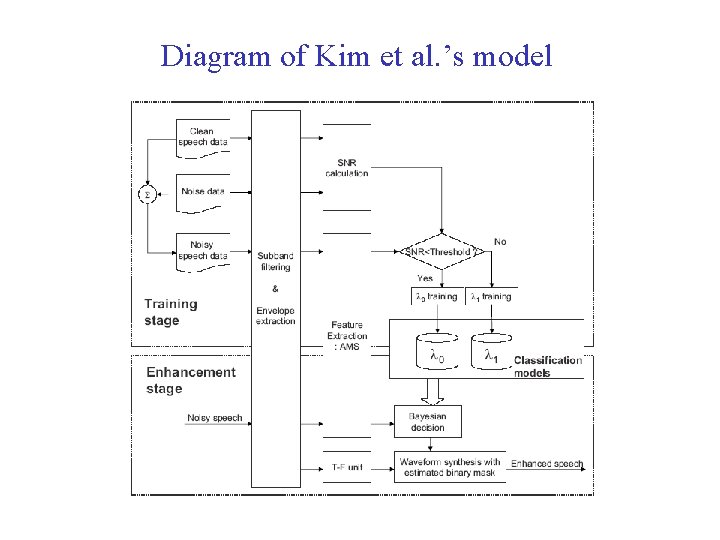

Diagram of Kim et al.’s model

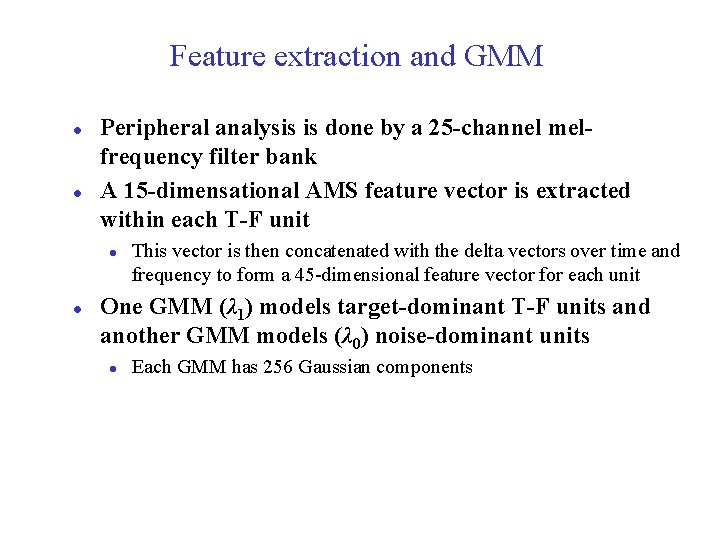

Feature extraction and GMM
• Peripheral analysis is done by a 25-channel mel-frequency filter bank
• A 15-dimensional AMS feature vector is extracted within each T-F unit
  – This vector is then concatenated with the delta vectors over time and frequency to form a 45-dimensional feature vector for each unit
• One GMM (λ1) models target-dominant T-F units and another GMM (λ0) models noise-dominant units
  – Each GMM has 256 Gaussian components

Training and classification
• To improve efficiency, each GMM is subdivided into two models during training: one for relatively high local SNRs and one for relatively low local SNRs
• With the 4 trained GMMs, segregation comes down to Bayesian classification, with prior probabilities P(λ0) and P(λ1) estimated from the training data
• The training and test data are mixtures of IEEE sentences and 3 masking noises: babble, factory, and speech-shaped noise
  – Separate GMMs are trained for each speaker (a male and a female) and each masker

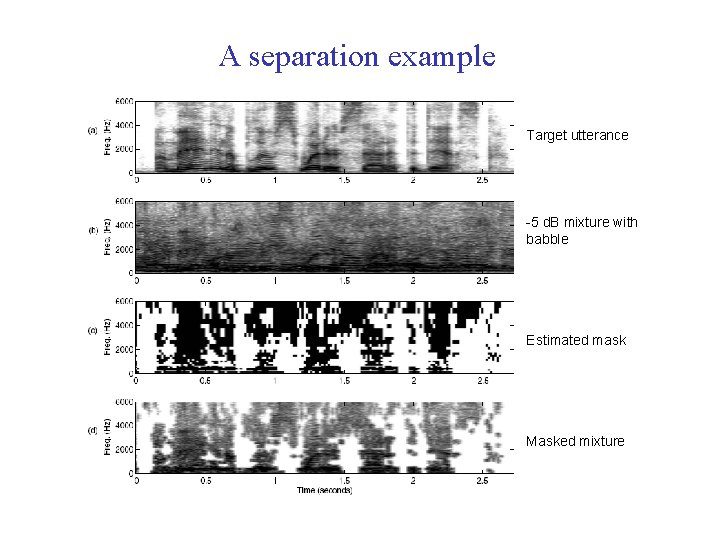

A separation example
Panels: target utterance; -5 dB mixture with babble; estimated mask; masked mixture
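A mixture such as the -5 dB babble mixture above is formed by scaling the masker so that the overall target-to-masker energy ratio equals the desired SNR. A minimal sketch of that scaling, assuming both signals share one sampling rate and the noise is at least as long as the target; `mix_at_snr` is a hypothetical name, and the original study's procedure may differ (for example, in how silent portions are treated).

```python
import numpy as np


def mix_at_snr(target, noise, snr_db):
    """Scale `noise` so that 10*log10(P_target / P_noise) equals snr_db, then add it to `target`.

    Returns (mixture, scaled_noise). Assumes len(noise) >= len(target).
    """
    target = np.asarray(target, dtype=float)
    noise = np.asarray(noise, dtype=float)[: target.size]   # trim the masker to the target length
    p_target = np.mean(target ** 2)
    p_noise = np.mean(noise ** 2)
    # Choose gain so that p_target / (gain^2 * p_noise) = 10^(snr_db / 10)
    gain = np.sqrt(p_target / (p_noise * 10.0 ** (snr_db / 10.0)))
    scaled_noise = gain * noise
    return target + scaled_noise, scaled_noise
```

Keeping the scaled masker alongside the mixture is convenient because the premixed target and masker are exactly what an ideal binary mask is computed from.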

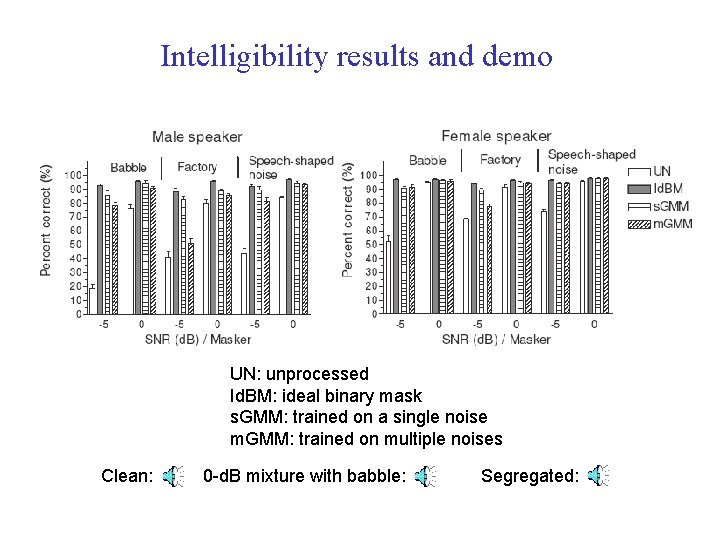

Intelligibility results and demo
• UN: unprocessed
• IdBM: ideal binary mask
• sGMM: trained on a single noise
• mGMM: trained on multiple noises
Demo (audio): clean; 0-dB mixture with babble; segregated

Discussion
• The GMM classifier achieves a hit (HIT) rate (the percentage of target-dominant units correctly classified) higher than 80% in most cases, while keeping the false-alarm (FA) rate relatively low
  • As expected, mGMM results are worse than sGMM results
  • HIT - FA correlates well with intelligibility
• This is the first monaural separation algorithm to achieve significant improvements in speech intelligibility
• The main limitation is speaker and masker dependency
  • Also, AMS features would not be applicable to multitalker mixtures
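HIT and FA compare an estimated mask with the ideal binary mask unit by unit: HIT is the share of target-dominant units (IBM = 1) that the estimate labels 1, and FA is the share of noise-dominant units (IBM = 0) that it wrongly labels 1. A minimal sketch, assuming both masks are arrays of equal shape and that the IBM contains units of both kinds; `hit_fa` is a hypothetical name.

```python
import numpy as np


def hit_fa(estimated_mask, ideal_mask):
    """Return (HIT, FA, HIT - FA) in percent for a binary mask scored against the IBM.

    Assumes the IBM has at least one 1 and at least one 0.
    """
    est = np.asarray(estimated_mask, dtype=bool)
    ibm = np.asarray(ideal_mask, dtype=bool)
    hit = 100.0 * np.sum(est & ibm) / np.sum(ibm)     # target-dominant units kept
    fa = 100.0 * np.sum(est & ~ibm) / np.sum(~ibm)    # noise-dominant units wrongly kept
    return hit, fa, hit - fa


if __name__ == "__main__":
    ibm = np.array([[1, 1, 0, 0], [1, 0, 0, 0]])
    est = np.array([[1, 0, 1, 0], [1, 0, 0, 0]])
    hit, fa, diff = hit_fa(est, ibm)
    print(f"HIT = {hit:.1f}%, FA = {fa:.1f}%, HIT - FA = {diff:.1f}%")
    # HIT = 66.7%, FA = 20.0%, HIT - FA = 46.7%
```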

Concluding remarks
• Monaural speech segregation is of fundamental importance
  • Compared to beamforming, it does not assume a stationary spatial configuration of sources and microphones, and is hence more versatile in applications
  • It may hold the key to robustness against room reverberation
• Speech enhancement algorithms are based on statistical analysis of general signal properties
  • For example, the uncorrelatedness of speech and noise
• CASA-based speech segregation is based on analysis of perceptual and speech properties
  • For example, heavy use of pitch and of model training

Concluding remarks (cont.)
• Speech enhancement aims to increase the SNR of noisy speech
  • This is reasonable for improving speech quality, but has been unsuccessful at improving speech intelligibility
• Binary masking is a key concept of CASA, and has led to a new formulation of segregation as binary classification
  • Classification-based segregation shows promising results for improving speech intelligibility

Remarks on SNR and intelligibility
• SNR measures signal similarity to clean speech
• Recent intelligibility studies provide evidence that lower SNR can result in higher intelligibility!
  • For the ideal binary mask, a local SNR criterion (LC) of 0 dB is best for SNR gain (Li & Wang, 2009)
  • However, intelligibility results in Brungart et al. (2006), Wang et al. (2009), and Kim et al. (2009) indicate that negative LC values produce higher intelligibility
• Hence the pursuit of increased SNR could be misdirected
• It is important to identify appropriate computational goals and to design evaluation metrics accordingly
  • This way, one can avoid spending effort in the wrong directions
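LC enters through the definition of the ideal binary mask: a T-F unit is labeled 1 when its local SNR exceeds LC. A minimal sketch, assuming per-unit target and noise energies have already been computed from the premixed signals (for example, by a filter bank); `ideal_binary_mask` is a hypothetical name.

```python
import numpy as np


def ideal_binary_mask(target_energy, noise_energy, lc_db=0.0):
    """IBM: 1 where a unit's local SNR (dB) exceeds the local criterion LC, else 0."""
    target_energy = np.asarray(target_energy, dtype=float)
    noise_energy = np.asarray(noise_energy, dtype=float)
    eps = np.finfo(float).tiny                                   # avoids log of zero in silent units
    local_snr_db = 10.0 * np.log10((target_energy + eps) / (noise_energy + eps))
    return (local_snr_db > lc_db).astype(int)


if __name__ == "__main__":
    target = np.array([[1.0, 1.0, 1.0]])
    noise = np.array([[0.5, 2.0, 8.0]])                          # local SNRs of about 3, -3, -9 dB
    for lc in (0.0, -6.0, -12.0):
        print(lc, ideal_binary_mask(target, noise, lc).tolist())
```

Lowering LC below 0 dB also admits units in which the target is weaker than the noise; this is the regime that the intelligibility studies cited above favor even though LC = 0 dB is best for SNR gain.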

Review of presentation
I. Introduction: Speech segregation problem
II. Auditory scene analysis (ASA)
III. Speech enhancement
IV. Speech segregation by computational auditory scene analysis (CASA)
V. Segregation as binary classification
VI. Concluding remarks

Further information
• This tutorial is partly drawn from two books (Loizou, 2007; Wang & Brown, 2006)

Bibliography
Anzalone et al. (2006) Ear & Hearing 27: 480-492.
Arbib (1989) The Metaphorical Brain 2. Wiley.
Barker, Cooke, & Ellis (2005) Speech Comm. 45: 5-25.
Bregman (1990) Auditory Scene Analysis. MIT Press.
Bronkhorst & Plomp (1992) JASA 92: 3132-3139.
Brown & Cooke (1994) Comp. Speech & Lang. 8: 297-336.
Brungart et al. (2006) JASA 120: 4007-4018.
Cherry (1957) On Human Communication. Wiley.
Cowan (2001) Beh. & Brain Sci. 24: 87-185.
Ellis (1996) Ph.D. thesis, MIT.
Ephraim & Malah (1984) IEEE T-ASSP 32: 1109-1121.
Helmholtz (1863) On the Sensations of Tone. Dover.
Hu & Wang (2001) WASPAA.
Hu & Wang (2004) IEEE T-NN 15: 1135-1150.
Hu & Wang (2008) JASA 124: 1306-1319.
Jin & Wang (2009) IEEE T-ASLP 17: 625-638.
Kim et al. (2009) JASA 126: 1486-1494.
Li N & Loizou (2008) JASA 123: 1673-1682.
Li Y & Wang (2009) Speech Comm. 51: 230-239.
Licklider (1951) Experientia 7: 128-134.
Loizou (2007) Speech Enhancement: Theory and Practice. CRC.
Marr (1982) Vision. Freeman.
Miller, Heise, & Lichten (1951) JEP 41: 329-335.
Radfar, Dansereau, & Sayadiyan (2007) EURASIP JASMP: Article 84186.
Shao & Wang (2006) IEEE T-ASLP 14: 289-298.
Steeneken (1992) Ph.D. thesis, Univ. of Amsterdam.
Treisman (1999) Neuron 24: 105-110.
Wang & Brown, Eds. (2006) Computational Auditory Scene Analysis. Wiley & IEEE Press.
Wang et al. (2008) JASA 124: 2303-2307.
Wang et al. (2009) JASA 125: 2336-2347.
Weintraub (1985) Ph.D. thesis, Stanford.

Resources and acknowledgments
• Source programs for some of the studies referred to in this tutorial are available at the OSU Perception & Neurodynamics Lab's website: http://www.cse.ohio-state.edu/pnl
• Bregman and Ahad (1995) produced a CD that accompanies Bregman's 1990 book; it can be ordered from MIT Press
• Houtsma, Rossing, and Wagenaars (1987) produced a CD that demonstrates basic auditory perception phenomena; it can be ordered from the Acoustical Society of America
• Thanks to M. Cooke and P. Loizou for making their slides available, some of which are incorporated in this tutorial
